Using Texture Synthesis for Non-Photorealistic Shading from Paint Samples

- Slides: 1

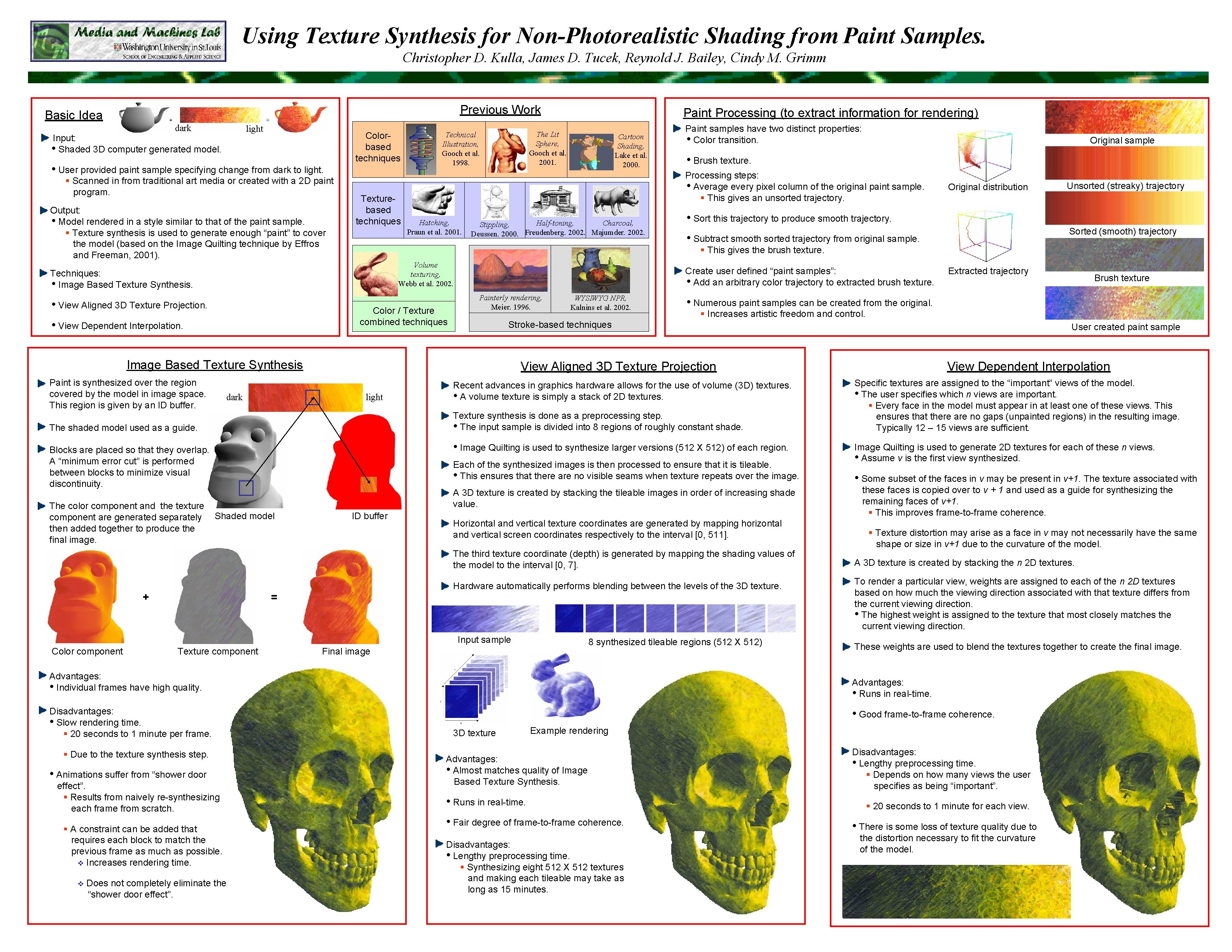

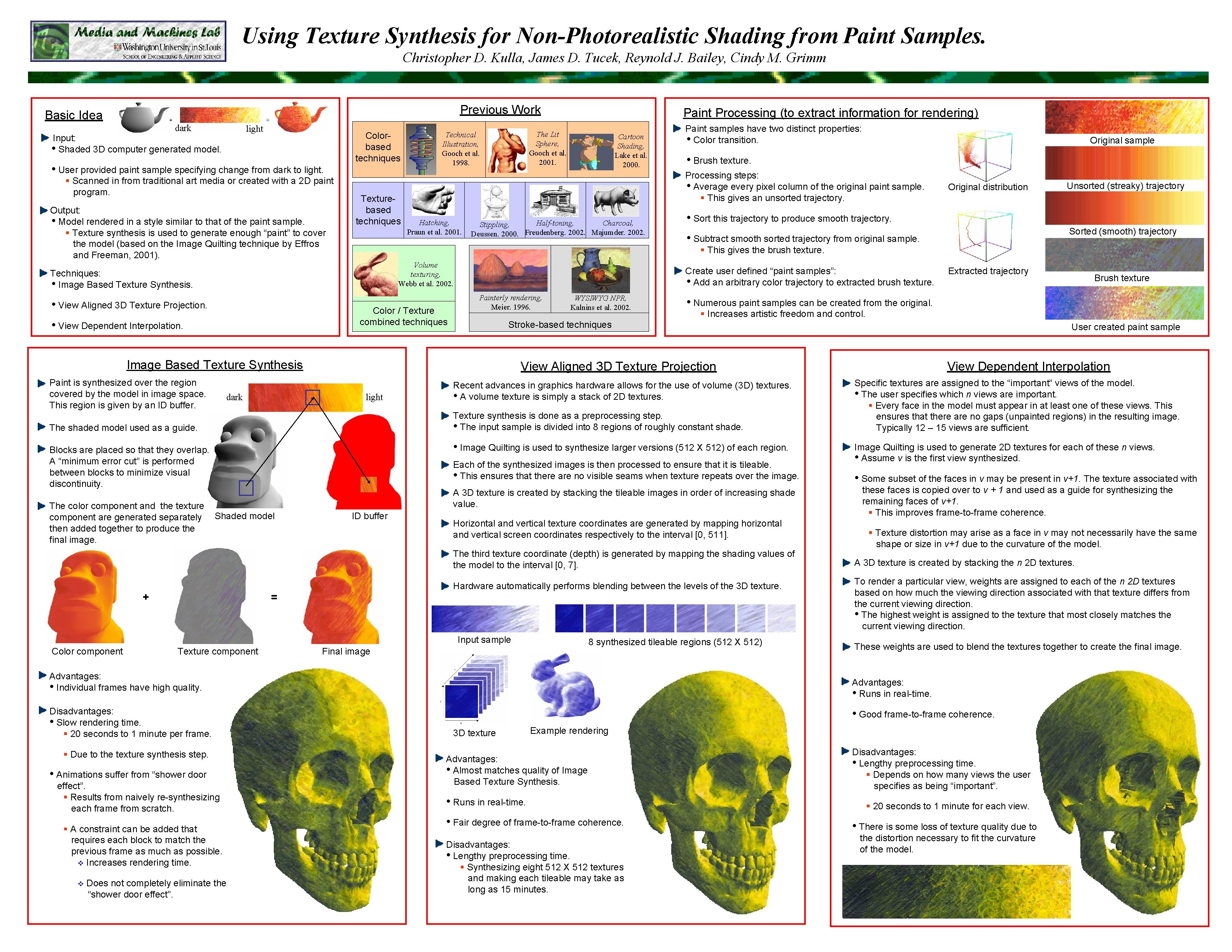

Christopher D. Kulla, James D. Tucek, Reynold J. Bailey, Cindy M. Grimm

Basic Idea

Input:

- Shaded 3D computer-generated model.
- User-provided paint sample specifying the change from dark to light.
  - Scanned in from traditional art media or created with a 2D paint program.

Output:

- Model rendered in a style similar to that of the paint sample.
  - Texture synthesis is used to generate enough "paint" to cover the model (based on the Image Quilting technique by Efros and Freeman, 2001).

(Figure labels: shaded model; paint sample, dark to light.)

Previous Work

Categories covered: color-based techniques, texture-based techniques, color/texture combined techniques, stroke-based techniques.

- The Lit Sphere, Gooch et al. 2001.
- Technical Illustration, Gooch et al. 1998.
- Cartoon Shading, Lake et al. 2000.
- Hatching, Praun et al. 2001.
- Volume texturing, Webb et al. 2002.
- Half-toning, Charcoal, Stippling: Deussen 2000; Freudenberg 2002; Majumder 2002.
- Painterly rendering, Meier 1996.
- WYSIWYG NPR, Kalnins et al. 2002.

Paint Processing (to extract information for rendering)

Paint samples have two distinct properties:

- Color transition.
- Brush texture.

Processing steps:

- Average every pixel column of the original paint sample.
  - This gives an unsorted trajectory.
- Sort this trajectory to produce a smooth trajectory.
- Subtract the smooth sorted trajectory from the original sample.
  - This gives the brush texture.

Create user-defined "paint samples":

- Add an arbitrary color trajectory to the extracted brush texture.
  - Increases artistic freedom and control.
- Numerous paint samples can be created from the original.

(Figure labels: original sample; original distribution; unsorted (streaky) trajectory; sorted (smooth) trajectory; extracted trajectory; brush texture; user-created paint sample.)

Techniques:

- Image Based Texture Synthesis.
- View Aligned 3D Texture Projection.
- View Dependent Interpolation.

The color component and the texture component are generated separately, then added together to produce the final image.

(Figure labels: input sample; color component; texture component; final image.)

Image Based Texture Synthesis

Paint is synthesized over the region covered by the model in image space. This region is given by an ID buffer.

(Figure labels: ID buffer; the shaded model used as a guide.)

Advantages:

- Individual frames have high quality.

Disadvantages:

- Slow rendering time.
  - 20 seconds to 1 minute per frame.
  - Due to the texture synthesis step.
- Animations suffer from the "shower door effect".
  - Results from naively re-synthesizing each frame from scratch.
  - A constraint can be added that requires each block to match the previous frame as much as possible.
    - Increases rendering time.
    - Does not completely eliminate the "shower door effect".

View Aligned 3D Texture Projection

Texture synthesis is done as a preprocessing step.

- The input sample is divided into 8 regions of roughly constant shade.
- Image Quilting is used to synthesize larger versions (512 × 512) of each region. Blocks are placed so that they overlap. A "minimum error cut" is performed between blocks to minimize visual discontinuity.

Each of the synthesized images is then processed to ensure that it is tileable.

- This ensures that there are no visible seams when the texture repeats over the image.

Recent advances in graphics hardware allow for the use of volume (3D) textures.

- A volume texture is simply a stack of 2D textures.

A 3D texture is created by stacking the tileable images in order of increasing shade value.

Horizontal and vertical texture coordinates are generated by mapping horizontal and vertical screen coordinates respectively to the interval [0, 511]. The third texture coordinate (depth) is generated by mapping the shading values of the model to the interval [0, 7]. Hardware automatically performs blending between the levels of the 3D texture.

(Figure labels: 8 synthesized tileable regions (512 × 512); 3D texture; example rendering.)

Advantages:

- Almost matches the quality of Image Based Texture Synthesis.
- Runs in real time.
- Fair degree of frame-to-frame coherence.

Disadvantages:

- Lengthy preprocessing time.
  - Synthesizing eight 512 × 512 textures and making each tileable may take as long as 15 minutes.

View Dependent Interpolation

Specific textures are assigned to the "important" views of the model.

- The user specifies which n views are important.
  - Every face in the model must appear in at least one of these views. This ensures that there are no gaps (unpainted regions) in the resulting image. Typically 12 to 15 views are sufficient.

Image Quilting is used to generate 2D textures for each of these n views.

- Assume v is the first view synthesized.
- Some subset of the faces in v may be present in v+1. The texture associated with these faces is copied over to v+1 and used as a guide for synthesizing the remaining faces of v+1.
  - This improves frame-to-frame coherence.
  - Texture distortion may arise, as a face in v may not necessarily have the same shape or size in v+1 due to the curvature of the model.

A 3D texture is created by stacking the n 2D textures.

To render a particular view, weights are assigned to each of the n 2D textures based on how much the viewing direction associated with that texture differs from the current viewing direction.

- The highest weight is assigned to the texture that most closely matches the current viewing direction.

These weights are used to blend the textures together to create the final image.

Advantages:

- Runs in real time.
- Good frame-to-frame coherence.

Disadvantages:

- Lengthy preprocessing time.
  - Depends on how many views the user specifies as being "important".
  - 20 seconds to 1 minute for each view.
- There is some loss of texture quality due to the distortion necessary to fit the curvature of the model.
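The paint processing steps (average each pixel column, sort the result, subtract it from the sample) can be sketched in a few lines of NumPy. This is only an illustration under stated assumptions: the sample is treated as a grayscale array with values in [0, 1], and the function names `split_paint_sample` and `recolor` are hypothetical, not taken from the poster.

```python
import numpy as np

def split_paint_sample(sample):
    """Split a grayscale paint sample of shape (H, W) into a smooth
    trajectory and a brush texture. Grayscale input is an assumption."""
    sample = np.asarray(sample, dtype=float)
    # Average every pixel column: one value per column (unsorted trajectory).
    unsorted_traj = sample.mean(axis=0)
    # Sort the trajectory to obtain a smooth dark-to-light ramp.
    sorted_traj = np.sort(unsorted_traj)
    # Subtract the smooth trajectory from the original sample: brush texture.
    brush = sample - sorted_traj[np.newaxis, :]
    return unsorted_traj, sorted_traj, brush

def recolor(brush, trajectory):
    """Build a new paint sample by adding an arbitrary trajectory
    (one value per column) to an extracted brush texture."""
    return brush + np.asarray(trajectory, dtype=float)[np.newaxis, :]

sample = np.array([[0.9, 0.1, 0.5],
                   [0.7, 0.3, 0.5]])
unsorted_traj, sorted_traj, brush = split_paint_sample(sample)
new_sample = recolor(brush, np.linspace(0.0, 1.0, sample.shape[1]))
```

Adding the sorted trajectory back to the brush texture reproduces the original sample, which is a quick sanity check on the decomposition.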
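The "minimum error cut" between overlapping blocks comes from Image Quilting, where it is found by dynamic programming over the squared difference of the two overlap strips. The sketch below assumes grayscale strips of equal shape and a vertical seam; `minimum_error_cut` and `stitch` are illustrative names, and the poster's own implementation may differ.

```python
import numpy as np

def minimum_error_cut(overlap_a, overlap_b):
    """Return, for each row, the column where a vertical seam through the
    overlap region has the lowest accumulated squared error."""
    a = np.asarray(overlap_a, dtype=float)
    b = np.asarray(overlap_b, dtype=float)
    cost = (a - b) ** 2            # error surface, accumulated in place below
    height, width = cost.shape
    for i in range(1, height):
        for j in range(width):
            lo, hi = max(j - 1, 0), min(j + 2, width)
            cost[i, j] += cost[i - 1, lo:hi].min()
    # Trace the cheapest path back from the last row to the first.
    seam = np.empty(height, dtype=int)
    seam[-1] = int(np.argmin(cost[-1]))
    for i in range(height - 2, -1, -1):
        j = seam[i + 1]
        lo, hi = max(j - 1, 0), min(j + 2, width)
        seam[i] = lo + int(np.argmin(cost[i, lo:hi]))
    return seam

def stitch(overlap_a, overlap_b, seam):
    """Take pixels left of the seam from block A and the rest from block B."""
    a = np.asarray(overlap_a, dtype=float)
    b = np.asarray(overlap_b, dtype=float)
    cols = np.arange(a.shape[1])
    return np.where(cols[np.newaxis, :] < seam[:, np.newaxis], a, b)

a = np.zeros((3, 3))
b = np.array([[1.0, 0.0, 1.0],
              [1.0, 0.0, 1.0],
              [1.0, 1.0, 0.0]])
seam = minimum_error_cut(a, b)
merged = stitch(a, b, seam)
```

The seam is constrained to move at most one column between adjacent rows, which keeps the cut connected.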
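For View Aligned 3D Texture Projection, the mapping of screen position to [0, 511] and shade to [0, 7], followed by blending between adjacent levels, can be emulated in software as follows. This is a rough sketch: it assumes shade values in [0, 1], uses nearest-neighbor lookup within a level, and interpolates only along the depth axis, whereas the hardware described on the poster does this blending automatically. The names `texture_coords` and `sample_volume` are hypothetical.

```python
import numpy as np

def texture_coords(x, y, shade, width, height, size=512, levels=8):
    """Map a screen pixel (x, y) and its shade (assumed in [0, 1]) to
    3D texture coordinates in [0, size-1] x [0, size-1] x [0, levels-1]."""
    u = x / (width - 1) * (size - 1)
    v = y / (height - 1) * (size - 1)
    w = np.clip(shade, 0.0, 1.0) * (levels - 1)
    return u, v, w

def sample_volume(volume, u, v, w):
    """Sample a (levels, size, size) stack: nearest texel in-plane,
    linear blend between the two neighboring shade levels."""
    levels = volume.shape[0]
    lo = int(np.floor(w))
    hi = min(lo + 1, levels - 1)
    t = w - lo
    iu, iv = int(round(u)), int(round(v))
    return (1.0 - t) * volume[lo, iv, iu] + t * volume[hi, iv, iu]

# Toy volume: level k is filled with the constant value k.
volume = np.arange(8.0).reshape(8, 1, 1) * np.ones((8, 4, 4))
value = sample_volume(volume, 1, 1, 2.5)
```

With the toy volume, a depth coordinate halfway between levels 2 and 3 yields a value halfway between the two levels.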
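The poster does not give the weighting formula used in View Dependent Interpolation, only that the texture whose viewing direction is closest to the current one gets the highest weight. One plausible choice, shown here purely as an assumption, is a clamped cosine raised to a power and normalized to sum to one. The function names and the `sharpness` parameter are hypothetical.

```python
import numpy as np

def view_weights(view_dirs, current_dir, sharpness=8.0):
    """Weight each stored view by how closely its direction matches the
    current viewing direction (assumed formula: clamped cosine ** sharpness)."""
    dirs = np.asarray(view_dirs, dtype=float)
    dirs = dirs / np.linalg.norm(dirs, axis=1, keepdims=True)
    cur = np.asarray(current_dir, dtype=float)
    cur = cur / np.linalg.norm(cur)
    cos = dirs @ cur
    weights = np.clip(cos, 0.0, None) ** sharpness
    total = weights.sum()
    if total == 0.0:
        # No view faces the camera: fall back to the single closest view.
        weights = np.zeros_like(cos)
        weights[np.argmax(cos)] = 1.0
        total = 1.0
    return weights / total

def blend_views(textures, weights):
    """Blend a stack of n textures, shape (n, H, W), with the given weights."""
    return np.tensordot(weights, np.asarray(textures, dtype=float), axes=1)

views = [[0, 0, 1], [1, 0, 0], [0, 1, 0]]
textures = np.stack([np.full((2, 2), k) for k in (0.0, 1.0, 2.0)])
weights = view_weights(views, [1, 0, 1])
image = blend_views(textures, weights)
```

A larger `sharpness` concentrates the blend on the closest view, trading smoother transitions for less mixing of textures.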