Using Stork Barcelona, 2006 Condor Project Computer Sciences Department University of Wisconsin-Madison condor-admin@cs.wisc.edu http://www.cs.wisc.edu/condor 1

Meet Friedrich* Friedrich is a scientist with a BIG problem. *Frieda’s twin brother 2

I have a lot of data to process. 3

Friedrich's problem … Friedrich has many large data sets to process. For each data set: 1. stage the data in from a remote server 2. run a job to process the data 3. stage the data out to a remote server 4

The Classic Data Transfer Job #!/bin/sh globus-url-copy source dest Scripts often work fine for short, simple data transfers, but… 5

Many things can go wrong! These errors are more likely with large data sets: § The network is down. § The data server is unavailable. § The transferred data is corrupt. § The workflow does not know that the data was bad. 6
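One way a workflow can learn that transferred data is bad is to compare a checksum of the local copy against a digest published by the source. The sketch below illustrates that idea only. The helper names and the assumption that a SHA-256 digest is available from the data server are hypothetical, and nothing here is part of Stork itself.

```python
import hashlib
import os
import tempfile


def sha256_of(path, chunk_size=1 << 20):
    """Return the hex SHA-256 digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def transfer_is_intact(path, expected_digest):
    """True when the local copy matches the digest published by the source."""
    return sha256_of(path) == expected_digest.lower()


# Demo on a throwaway file that stands in for a staged-in data set.
with tempfile.NamedTemporaryFile(delete=False) as tmp:
    tmp.write(b"friedrich's data")
    demo_path = tmp.name

good_digest = hashlib.sha256(b"friedrich's data").hexdigest()
intact = transfer_is_intact(demo_path, good_digest)
corrupt_detected = not transfer_is_intact(demo_path, "0" * 64)
os.remove(demo_path)
```

A plain shell script would need a check like this after every copy, plus logic to act on the result, which is the kind of bookkeeping the following slides hand over to Stork.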

Stork Solves Problems § Creates the concept of the data placement job § Managed and scheduled the same as any Condor job § Friedrich’s jobs benefit from built-in fault tolerance 7

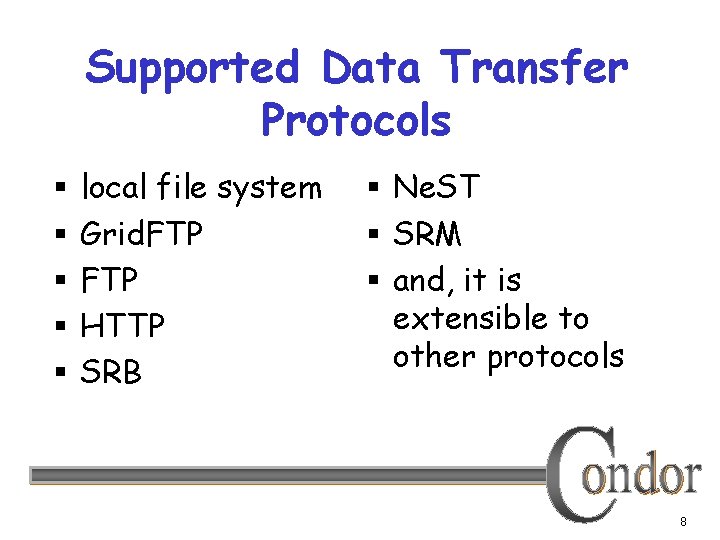

Supported Data Transfer Protocols § local file system § GridFTP § HTTP § SRB § NeST § SRM § and it is extensible to other protocols 8

Fault Tolerance § Retries failed jobs § Can also retry a failed data transfer job using an alternate protocol. For example, first try GridFTP, then try FTP § Retries stuck jobs § Configurable fault responses 9
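The retry-then-fall-back behavior described above can be sketched in a few lines of Python. This is an illustration of the idea rather than Stork's implementation: `transfer_with_fallback`, the `transfer` callable, and the fake transfer used in the demo are all hypothetical names introduced here.

```python
def transfer_with_fallback(src_urls, dest_url, transfer, max_attempts=3):
    """Try each source URL in order, retrying each up to max_attempts times.

    `transfer(src, dest)` is a caller-supplied callable that raises an
    exception on failure. Returns the (url, attempt) pair that succeeded.
    """
    errors = []
    for src in src_urls:
        for attempt in range(1, max_attempts + 1):
            try:
                transfer(src, dest_url)
                return src, attempt
            except Exception as exc:
                # Remember the failure and move on to the next attempt.
                errors.append((src, attempt, str(exc)))
    raise RuntimeError(f"all transfers failed: {errors}")


# Demo with a fake transfer in which the gsiftp server is always down.
def fake_transfer(src, dest):
    if src.startswith("gsiftp://"):
        raise ConnectionError("data server unavailable")


winner = transfer_with_fallback(
    ["gsiftp://server/path", "ftp://server/path"],
    "file:///dir/file",
    fake_transfer,
)
```

In the demo, the three GridFTP attempts fail and the first FTP attempt succeeds, so `winner` names the FTP URL.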

Getting Stork § Stork is part of Condor, so get Condor... § Available as a free download from http://www.cs.wisc.edu/condor § Currently available for Linux platforms 10

Personal Condor works well with Stork § This is Condor/Stork on your own workstation, no root access required, no system administrator intervention needed § After installation, Friedrich submits his jobs to his Personal Stork… 11

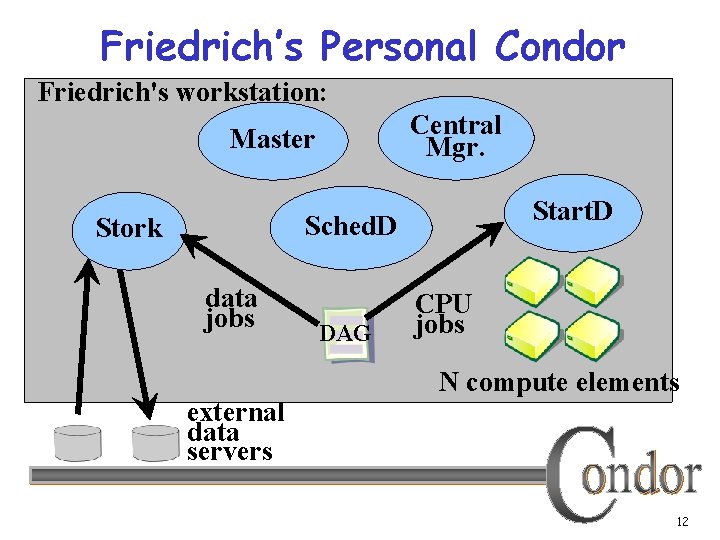

Friedrich’s Personal Condor [Diagram labels: Friedrich's workstation running Central Mgr., Master, StartD, SchedD, and Stork; DAG; data jobs; external data servers; CPU jobs; N compute elements] 12

Stork will... § Keep an eye on data placement jobs, and it will keep you posted on their progress § Throttle the maximum number of jobs running § Keep a log of job activities § Add fault tolerance to all jobs § Detect and retry failed data placement jobs 13

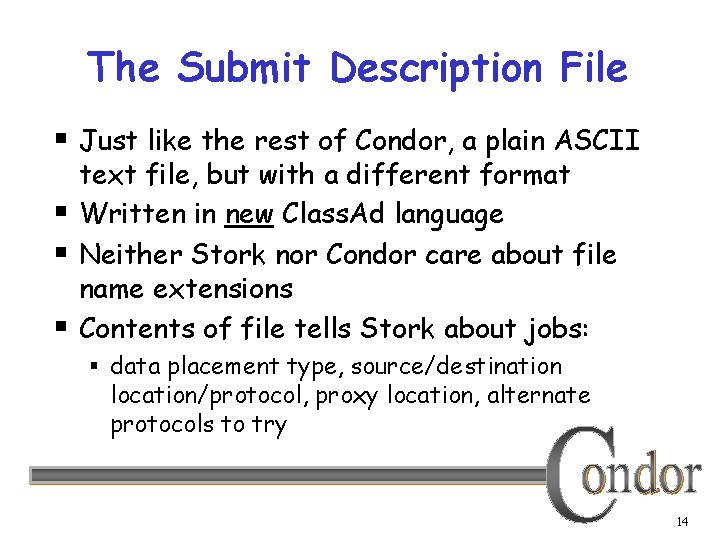

The Submit Description File § Just like the rest of Condor, a plain ASCII text file, but with a different format § Written in the new ClassAd language § Neither Stork nor Condor cares about file name extensions § Contents of the file tell Stork about jobs: § data placement type, source/destination location/protocol, proxy location, alternate protocols to try 14

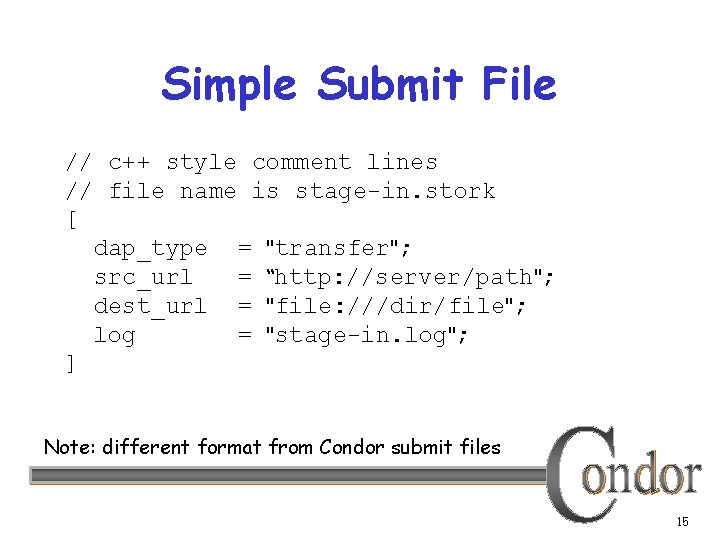

Simple Submit File // c++ style comment lines // file name is stage-in.stork [ dap_type = "transfer"; src_url = "http://server/path"; dest_url = "file:///dir/file"; log = "stage-in.log"; ] Note: different format from Condor submit files 15

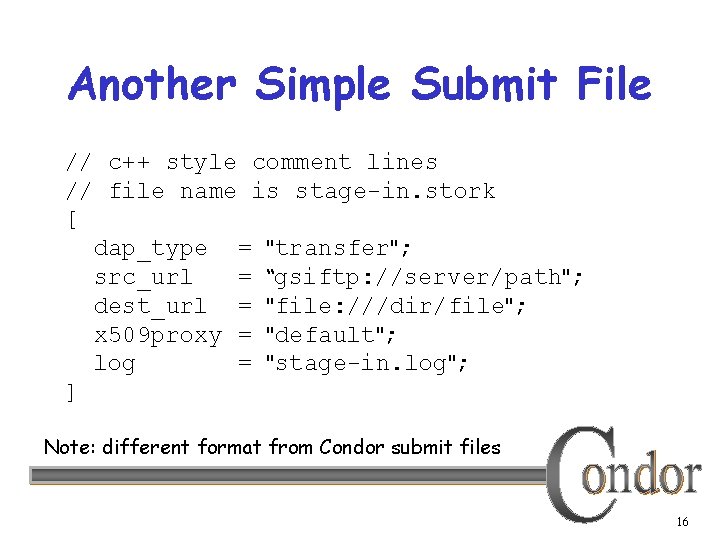

Another Simple Submit File // c++ style comment lines // file name is stage-in.stork [ dap_type = "transfer"; src_url = "gsiftp://server/path"; dest_url = "file:///dir/file"; x509proxy = "default"; log = "stage-in.log"; ] Note: different format from Condor submit files 16
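When many data sets need near-identical submit files, generating them from a template avoids copy-and-paste mistakes. The following Python sketch renders the bracketed attribute list shown on the slides, one attribute per line. `render_stork_submit` is a hypothetical helper written for this example, and it treats every value as a quoted string, which is all the slides show.

```python
def render_stork_submit(attrs, comment=None):
    """Render a dict of string attributes as a bracketed submit description."""
    lines = []
    if comment:
        lines.append(f"// {comment}")
    lines.append("[")
    for name, value in attrs.items():
        text = str(value)
        # Quoting rules are outside the scope of this sketch, so refuse
        # values that would need escaping instead of guessing.
        if '"' in text or "\n" in text:
            raise ValueError(f"unsupported character in {name!r}")
        lines.append(f'    {name} = "{text}";')
    lines.append("]")
    return "\n".join(lines) + "\n"


stage_in = render_stork_submit(
    {
        "dap_type": "transfer",
        "src_url": "gsiftp://server/path",
        "dest_url": "file:///dir/file",
        "x509proxy": "default",
        "log": "stage-in.log",
    },
    comment="file name is stage-in.stork",
)
print(stage_in)
```

Writing `stage_in` to a file named `stage-in.stork` would give a submit file with the same attributes as the slide above.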

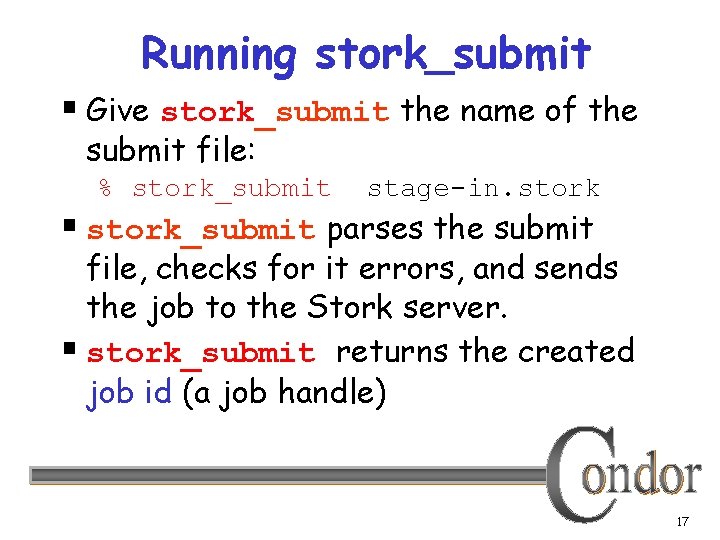

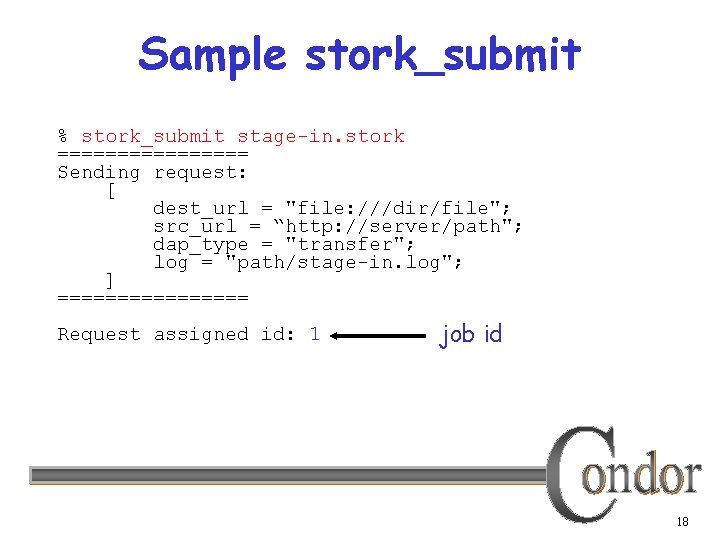

Running stork_submit § Give stork_submit the name of the submit file: % stork_submit stage-in.stork § stork_submit parses the submit file, checks it for errors, and sends the job to the Stork server. § stork_submit returns the created job id (a job handle) 17

Sample stork_submit % stork_submit stage-in.stork ======== Sending request: [ dest_url = "file:///dir/file"; src_url = "http://server/path"; dap_type = "transfer"; log = "path/stage-in.log"; ] ======== Request assigned id: 1 (1 is the job id) 18
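A script that submits jobs usually needs the job id so it can query or remove the job later. The sketch below pulls the id out of text shaped like the sample output above. It assumes the "Request assigned id:" line appears as on the slide, which may differ between Stork versions, and `parse_job_id` is a hypothetical helper.

```python
import re

# Pattern taken from the sample output on the slide (an assumption about
# the real tool's output format).
_JOB_ID = re.compile(r"Request assigned id:\s*(\d+)")


def parse_job_id(output):
    """Return the integer job id found in stork_submit output text."""
    match = _JOB_ID.search(output)
    if match is None:
        raise ValueError("no job id found in stork_submit output")
    return int(match.group(1))


sample_output = """\
================
Sending request:
[
    dest_url = "file:///dir/file";
    src_url = "http://server/path";
    dap_type = "transfer";
    log = "path/stage-in.log";
]
================
Request assigned id: 1
"""
job_id = parse_job_id(sample_output)
```

The resulting `job_id` is the value that would be passed on the command line to `stork_status` or `stork_rm`.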

The Job Queue § stork_submit sends the job to the Stork server § The Stork server manages the local job queue § View the queue with stork_q, or stork_status 19

Job Status § stork_q queries all active jobs % stork_q § stork_status queries the given job id, which may be active, or complete % stork_status 12 20

Removing jobs § To remove a data placement job from the queue, use stork_rm § You may only remove jobs that you own (Unix root may remove anyone’s jobs) § Give a specific job ID % stork_rm 21 removes a single job 21

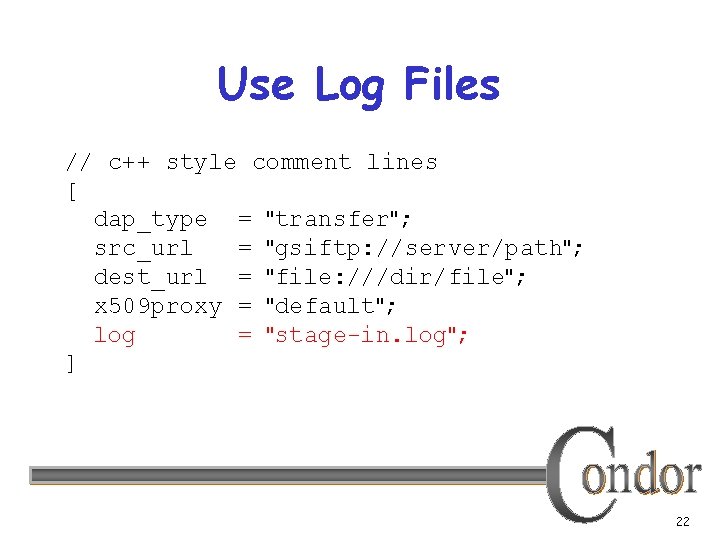

Use Log Files // c++ style comment lines [ dap_type = "transfer"; src_url = "gsiftp://server/path"; dest_url = "file:///dir/file"; x509proxy = "default"; log = "stage-in.log"; ] 22

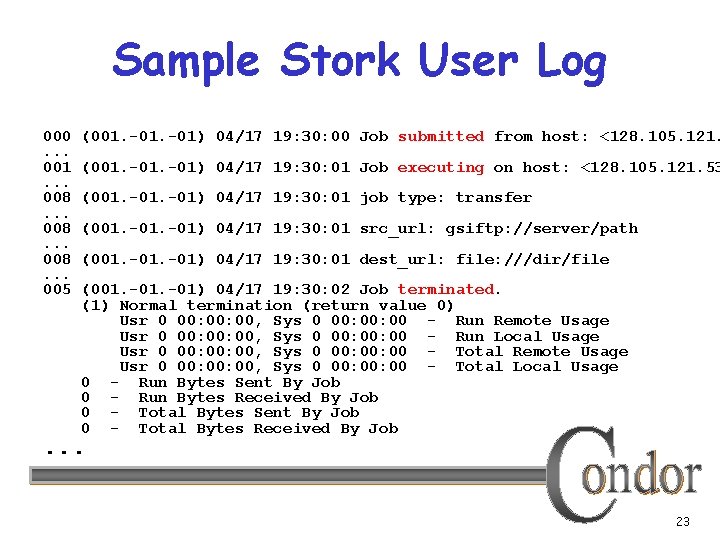

Sample Stork User Log 000... 001... 008... 005 (001.-01) 04/17 19:30:00 Job submitted from host: <128.105.121. (001.-01) 04/17 19:30:01 Job executing on host: <128.105.121.53 (001.-01) 04/17 19:30:01 job type: transfer (001.-01) 04/17 19:30:01 src_url: gsiftp://server/path (001.-01) 04/17 19:30:01 dest_url: file:///dir/file (001.-01) 04/17 19:30:02 Job terminated. (1) Normal termination (return value 0) Usr 0 00:00, Sys 0 00:00 - Run Remote Usage Usr 0 00:00, Sys 0 00:00 - Run Local Usage Usr 0 00:00, Sys 0 00:00 - Total Remote Usage Usr 0 00:00, Sys 0 00:00 - Total Local Usage 0 - Run Bytes Sent By Job 0 - Run Bytes Received By Job 0 - Total Bytes Sent By Job 0 - Total Bytes Received By Job ... 23

Stork and DAGMan Data placement jobs are integrated with Condor’s DAGMan, and Friedrich benefits 24

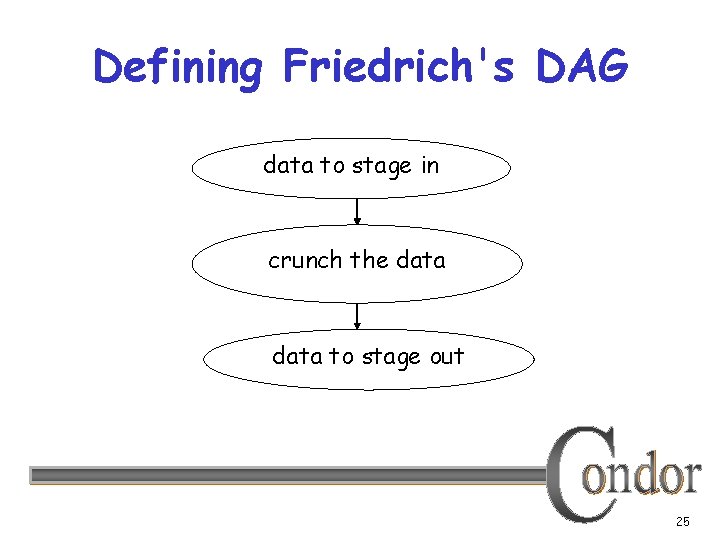

Defining Friedrich's DAG data to stage in crunch the data to stage out 25

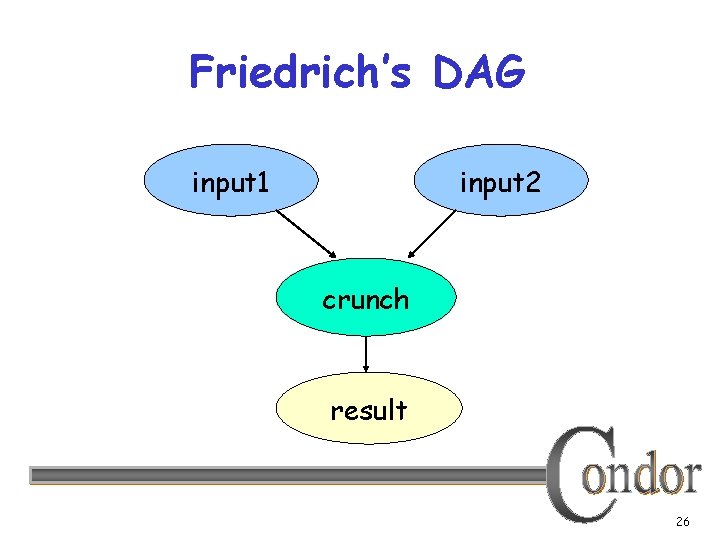

Friedrich’s DAG [Diagram: nodes input1 and input2 feed crunch, which feeds result] 26

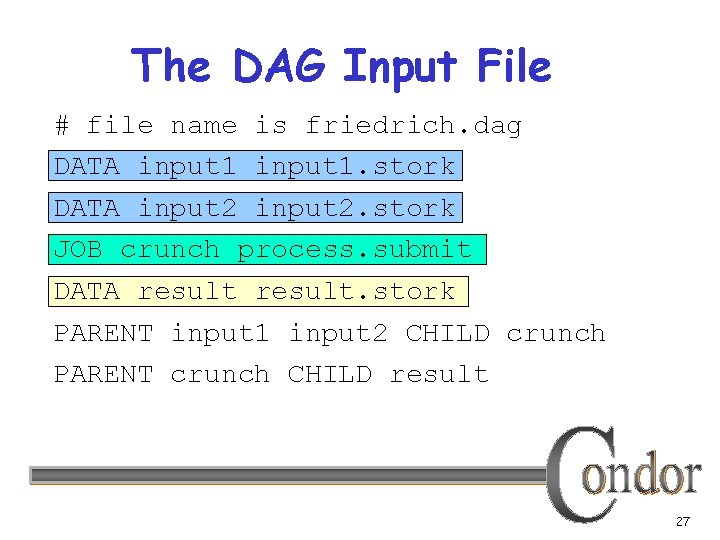

The DAG Input File # file name is friedrich.dag DATA input1 input1.stork DATA input2 input2.stork JOB crunch process.submit DATA result result.stork PARENT input1 input2 CHILD crunch PARENT crunch CHILD result 27
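To see what the PARENT/CHILD lines imply, the sketch below reads a DAG description and computes an order in which the nodes could run. It is a toy reader written for this example, not DAGMan's parser: it handles only the JOB, DATA, and PARENT ... CHILD keywords, and it assumes each JOB or DATA line names its node before the submit file.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+


def parse_dependencies(dag_text):
    """Map each node to the set of parent nodes it must wait for."""
    waits_for = {}
    for line in dag_text.splitlines():
        words = line.split()
        if not words or words[0].startswith("#"):
            continue  # skip blank lines and comments
        keyword = words[0].upper()
        if keyword in ("JOB", "DATA"):
            waits_for.setdefault(words[1], set())
        elif keyword == "PARENT":
            split = [w.upper() for w in words].index("CHILD")
            parents, children = words[1:split], words[split + 1:]
            for parent in parents:
                waits_for.setdefault(parent, set())
            for child in children:
                waits_for.setdefault(child, set()).update(parents)
    return waits_for


dag_text = """\
# file name is friedrich.dag
DATA input1 input1.stork
DATA input2 input2.stork
JOB crunch process.submit
DATA result result.stork
PARENT input1 input2 CHILD crunch
PARENT crunch CHILD result
"""
order = list(TopologicalSorter(parse_dependencies(dag_text)).static_order())
print(order)
```

Both stage-in nodes come first in either order, then `crunch`, then `result`, which matches the stage-in, process, stage-out pattern of Friedrich's problem.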

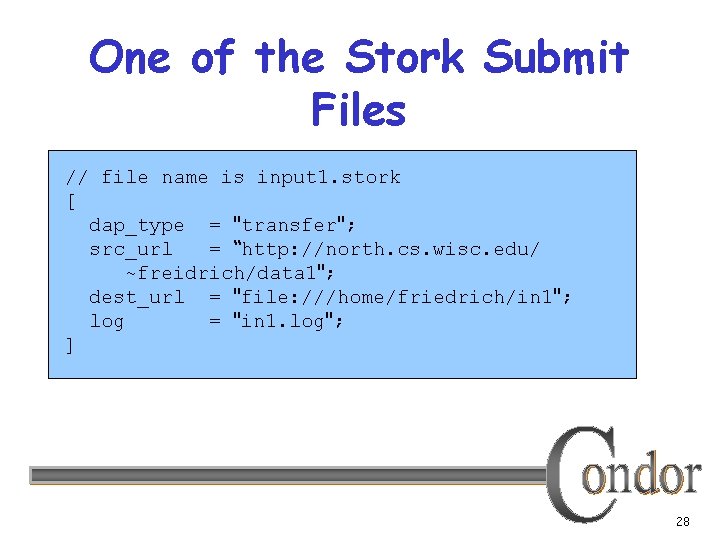

One of the Stork Submit Files // file name is input1.stork [ dap_type = "transfer"; src_url = "http://north.cs.wisc.edu/~friedrich/data1"; dest_url = "file:///home/friedrich/in1"; log = "in1.log"; ] 28

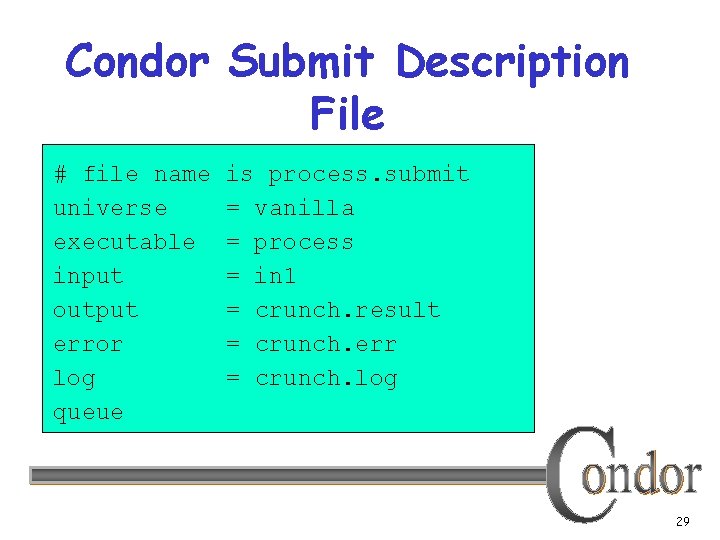

Condor Submit Description File # file name is process.submit universe = vanilla executable = process input = in1 output = crunch.result error = crunch.err log = crunch.log queue 29

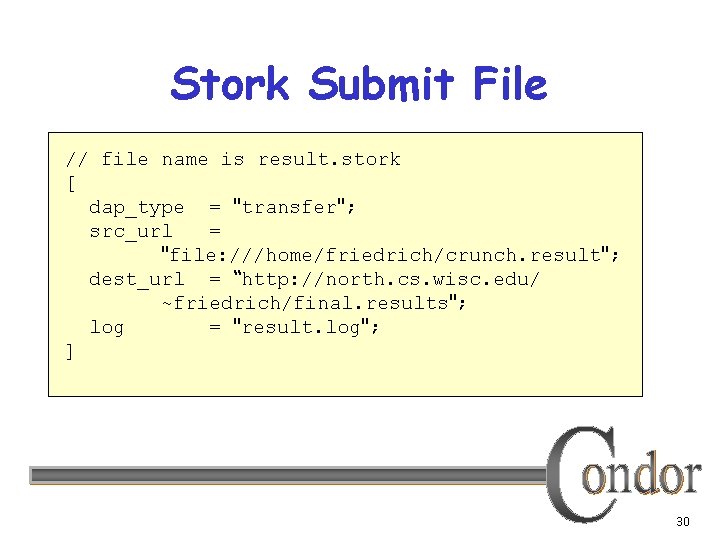

Stork Submit File // file name is result.stork [ dap_type = "transfer"; src_url = "file:///home/friedrich/crunch.result"; dest_url = "http://north.cs.wisc.edu/~friedrich/final.results"; log = "result.log"; ] 30

Friedrich Submits the DAG • While Friedrich’s current working directory is /home/friedrich % condor_submit_dag friedrich.dag 31

In Review With Stork, Friedrich can now… § Submit data processing jobs and go home, because Stork manages the data transfers, including fault detection and retry § Condor DAGMan manages dependencies. 32

Additional Resources § http://www.cs.wisc.edu/condor/stork/ § Condor Manual, Stork section § stork-announce@cs.wisc.edu list § stork-discuss@cs.wisc.edu list 33

Additional Slides 34

Important Parameters § STORK_MAX_NUM_JOBS limits number of active jobs § STORK_MAX_RETRY limits job attempts, before job marked as failed § STORK_MAXDELAY_INMINUTES specifies “hung job” threshold 35
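The effect of a job limit and an attempt limit can be illustrated with a toy simulation. This sketch is not how Stork schedules jobs. It assumes `max_num_jobs` caps how many jobs are active per round and `max_retry` caps the total attempts per job, loosely mirroring STORK_MAX_NUM_JOBS and STORK_MAX_RETRY. The hung-job threshold is not modeled, and all function and variable names are hypothetical.

```python
from collections import deque


def run_queue(jobs, attempt, max_num_jobs=2, max_retry=3):
    """Process jobs in rounds of at most max_num_jobs active jobs.

    `attempt(name, try_number)` returns True on success. A job that has
    failed max_retry times is marked as failed.
    """
    pending = deque((name, 1) for name in jobs)
    done, failed, peak_active = [], [], 0
    while pending:
        batch_size = min(max_num_jobs, len(pending))
        active = [pending.popleft() for _ in range(batch_size)]
        peak_active = max(peak_active, len(active))
        for name, try_number in active:
            if attempt(name, try_number):
                done.append(name)
            elif try_number < max_retry:
                pending.append((name, try_number + 1))  # queue a retry
            else:
                failed.append(name)
    return done, failed, peak_active


# "flaky" succeeds on its second try, "bad" never succeeds.
def demo_attempt(name, try_number):
    if name == "bad":
        return False
    if name == "flaky":
        return try_number >= 2
    return True


done, failed, peak_active = run_queue(["ok", "flaky", "bad"], demo_attempt)
```

With the defaults used here, the flaky job completes after one retry, the bad job is given up on after three attempts, and no more than two jobs are ever active at once.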

Current Restrictions § Currently, best suited for “Personal Stork” mode § Local file paths must be valid on Stork server, including submit directory. § To share data, successive jobs in DAG must use shared filesystem 36

Future Work § Enhance multi-user fair share § Enhance support for DAGs without shared file system § Enhance scheduling with configurable job requirements and rank § Add DAP job matchmaking § Additional platform ports 37

- Slides: 37