Using Condor: An Introduction
Condor Week 2009
http://www.cs.wisc.edu/condor

The Condor Project (Established '85)
Distributed High Throughput Computing research performed by a team of ~35 faculty, full-time staff and students who:
› face software engineering challenges in a distributed UNIX/Linux/NT environment,
› are involved in national and international grid collaborations,
› actively interact with academic and commercial entities and users,
› maintain and support large distributed production environments,
› and educate and train students.

Some free software produced by the Condor Project
› Condor System
› VDT
› Metronome
› ClassAd Library
› DAGMan
› Hawkeye
› GCB & CCB
› MW
› NeST / LotMan
› And others… all as open source

Full featured system
› Flexible scheduling policy engine via ClassAds
  • Preemption, suspension, requirements, preferences, groups, quotas, settable fair-share, system hold…
› Facilities to manage BOTH dedicated CPUs (clusters) and non-dedicated resources (desktops)
› Transparent Checkpoint/Migration for many types of serial jobs
› No shared file system required
› Federate clusters with a wide array of Grid Middleware

Full featured system
› Workflow management (inter-dependencies)
› Support for many job types: serial, parallel, etc.
› Fault-tolerant: can survive crashes, network outages, no single point of failure
› Development APIs: via SOAP / web services, DRMAA (C), Perl package, GAHP, flexible command-line tools, MW
› Platforms: Linux i386/IA64, Windows 2k/XP/Vista, MacOS, FreeBSD, Solaris, IRIX, HP-UX, Compaq Tru64, … lots.
  • IRIX and Tru64 are no longer supported by current releases of Condor

The Problem
Our esteemed scientist needs to run a "small" simulation.

The Simulation
Run a simulation of the evolution of the cosmos with various properties.

The Simulation Details
Vary the values of:
› G (the gravitational constant): 100 values
› Λ (the cosmological constant): 100 values
› c (the speed of light): 100 values
100 × 100 × 100 = 1,000,000 jobs

Running the Simulation
Each simulation:
› Requires up to 4 GB of RAM
› Requires 20 MB of input
› Requires 2–500 hours of computing time
› Produces up to 10 GB of output
Estimated total: 15,000,000 hours!
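
The job count and the worst-case data volume follow from simple arithmetic. A minimal Python sketch, assuming the per-job figures above (20 MB of input, up to 10 GB of output) and decimal units; `sweep_size` and `worst_case_totals` are hypothetical names used only for illustration:

```python
import math
from itertools import islice, product

# Number of values swept for each parameter (from the slide above).
PARAM_COUNTS = {"G": 100, "Lambda": 100, "c": 100}  # Lambda = cosmological constant

def sweep_size(counts):
    """Every combination of parameter values is one independent job."""
    return math.prod(counts.values())

def worst_case_totals(n_jobs, input_mb=20, output_gb=10):
    """Total input in TB and worst-case total output in PB (decimal units)."""
    total_input_tb = n_jobs * input_mb / 1_000_000
    total_output_pb = n_jobs * output_gb / 1_000_000
    return total_input_tb, total_output_pb

n = sweep_size(PARAM_COUNTS)
print(n)                     # 1000000
print(worst_case_totals(n))  # (20.0, 10.0)

# The parameter-index combinations can be generated lazily, one per job.
first_three = list(islice(product(range(100), repeat=3), 3))
print(first_three)           # [(0, 0, 0), (0, 0, 1), (0, 0, 2)]
```

Up to 10 PB of output is one reason the later slides put each run in its own directory and let Condor manage the files.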

NSF won't fund the Blue Gene that I requested.

While sharing a beverage with some colleagues, Carl asks, "Have you tried Condor? It's free, already installed for you, and you can use our pool."

Are my Jobs Safe?
› No worries… Condor will take care of your jobs for you
  • Jobs are queued in a safe way (more details later)
  • Condor will make sure that your jobs run, return output, etc.
  • You can specify what defines "OK"

Condor will...
› Keep an eye on your jobs and keep you posted on their progress
› Implement your policy on the execution order of the jobs
› Keep a log of your job activities
› Add fault tolerance to your jobs
› Implement your policy on when the jobs can run on your workstation

Condor Doesn't Play Dice with My Jobs!

Definitions
› Job: the Condor representation of your work
› Machine: the Condor representation of computers that can perform the work (a "resource")
› Match Making: matching a job with a machine
› Central Manager: central repository for the whole pool; performs job / machine matching, etc.

Job
Jobs state their requirements and preferences:
› I require a Linux/x86 platform
› I prefer the machine with the most memory
› I prefer a machine in the chemistry department

Machines state their requirements and preferences:
› Require that jobs run only when there is no keyboard activity
› I prefer to run Albert's jobs
› I am a machine in the physics department
› Never run jobs belonging to Dr. Heisenberg

The Magic of Matchmaking
› Jobs and machines state their requirements and preferences
› Condor matches jobs with machines
  • Based on requirements
  • And preferences ("Rank")

Getting Started: Submitting Jobs to Condor
› Overview:
  • Choose a "Universe" for your job
  • Make your job "batch-ready"
  • Create a submit description file
  • Run condor_submit to put your job in the queue

1. Choose the "Universe"
› Controls how Condor handles jobs
› Choices include:
  • Vanilla
  • Standard
  • Grid
  • Java
  • Parallel
  • VM
  • Todd's Universe

Using the Vanilla Universe
• The Vanilla Universe:
  – Allows running almost any "serial" job
  – Provides automatic file transfer, etc.
  – Like vanilla ice cream: can be used in just about any situation

2. Make your job batch-ready
Must be able to run in the background:
• No interactive input
• No GUI/window clicks (who needs 'em anyway?)
• No music ;^)

Make your job batch-ready (details)…
› Job can still use STDIN, STDOUT, and STDERR (the keyboard and the screen), but files are used for these instead of the actual devices
› Similar to UNIX shell:

    $ ./myprogram <input.txt >output.txt
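
Running a program with files in place of the keyboard and screen is exactly what the shell line does. A minimal Python sketch of the same redirection, assuming a stand-in program (a one-line Python filter, hypothetical) instead of a real `myprogram`; `run_batch` is likewise a hypothetical helper:

```python
import subprocess
import sys
import tempfile
from pathlib import Path

def run_batch(cmd, input_path, output_path):
    """Equivalent of `cmd <input_path >output_path`: no terminal is involved."""
    with open(input_path, "rb") as stdin, open(output_path, "wb") as stdout:
        result = subprocess.run(cmd, stdin=stdin, stdout=stdout, check=False)
    return result.returncode

# Hypothetical stand-in for ./myprogram: upper-cases whatever arrives on stdin.
FILTER = [sys.executable, "-c", "import sys; sys.stdout.write(sys.stdin.read().upper())"]

with tempfile.TemporaryDirectory() as tmp:
    inp, out = Path(tmp, "input.txt"), Path(tmp, "output.txt")
    inp.write_text("hello condor\n")
    status = run_batch(FILTER, inp, out)
    captured = out.read_text()

print(status, captured, end="")  # prints: 0 HELLO CONDOR
```

If a program runs correctly this way, with nothing typed and nothing clicked, it is a good candidate for the vanilla universe.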

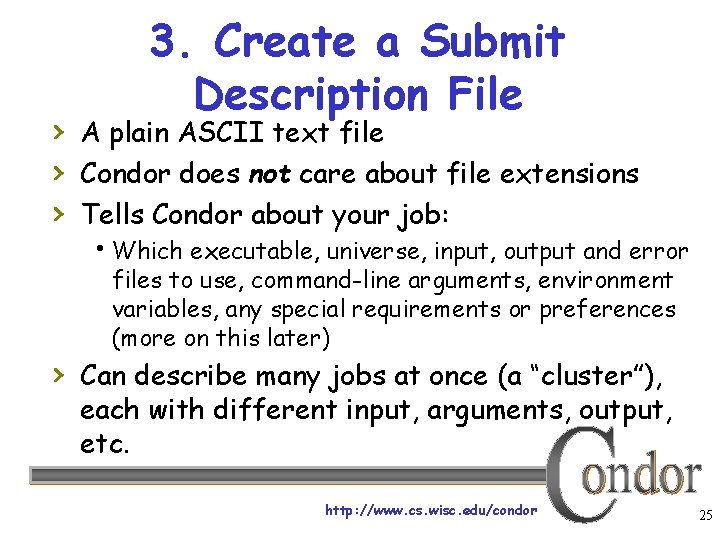

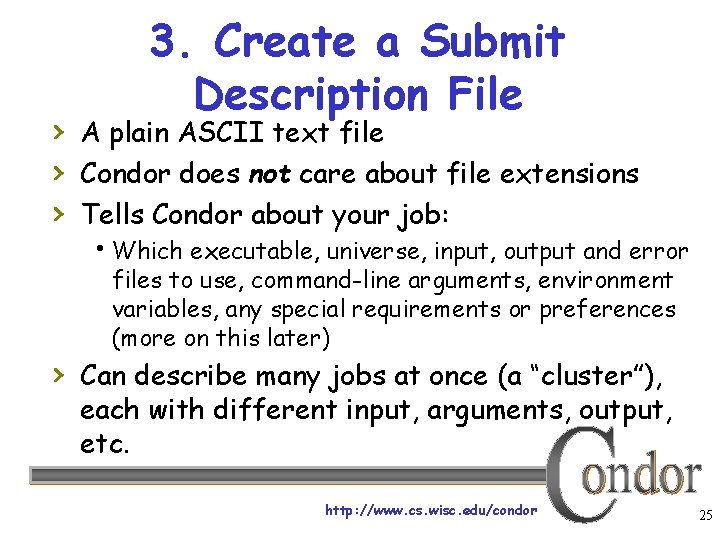

3. Create a Submit Description File
› A plain ASCII text file
› Condor does not care about file extensions
› Tells Condor about your job:
  • Which executable, universe, input, output and error files to use, command-line arguments, environment variables, any special requirements or preferences (more on this later)
› Can describe many jobs at once (a "cluster"), each with different input, arguments, output, etc.

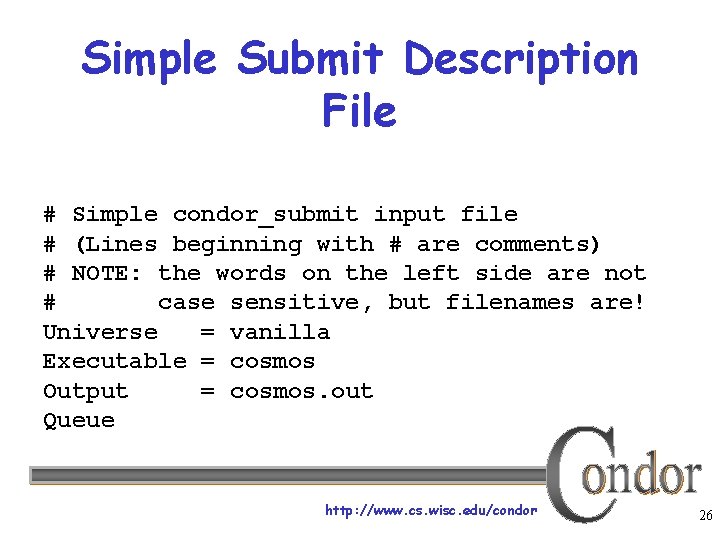

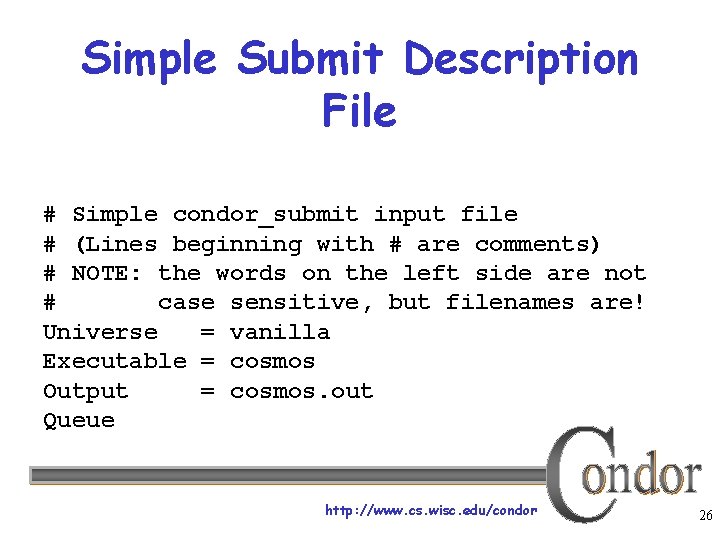

Simple Submit Description File

    # Simple condor_submit input file
    # (Lines beginning with # are comments)
    # NOTE: the words on the left side are not
    #       case sensitive, but filenames are!
    Universe   = vanilla
    Executable = cosmos
    Output     = cosmos.out
    Queue

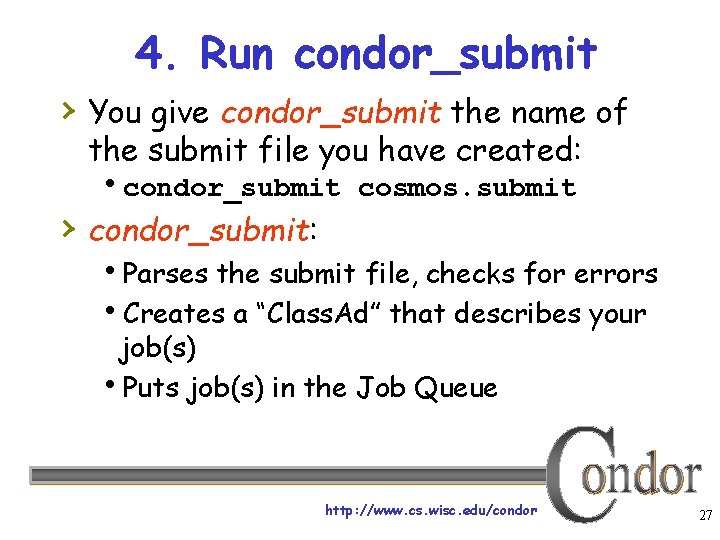

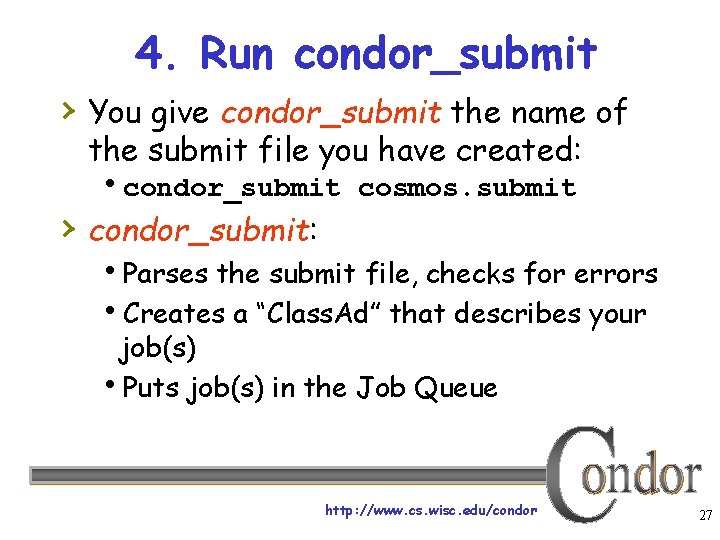

4. Run condor_submit
› You give condor_submit the name of the submit file you have created:

    condor_submit cosmos.submit

› condor_submit:
  • Parses the submit file, checks for errors
  • Creates a "ClassAd" that describes your job(s)
  • Puts job(s) in the Job Queue

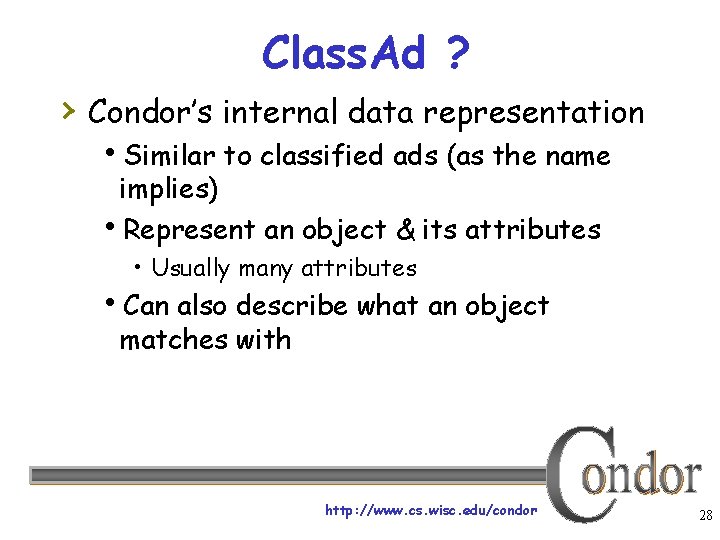

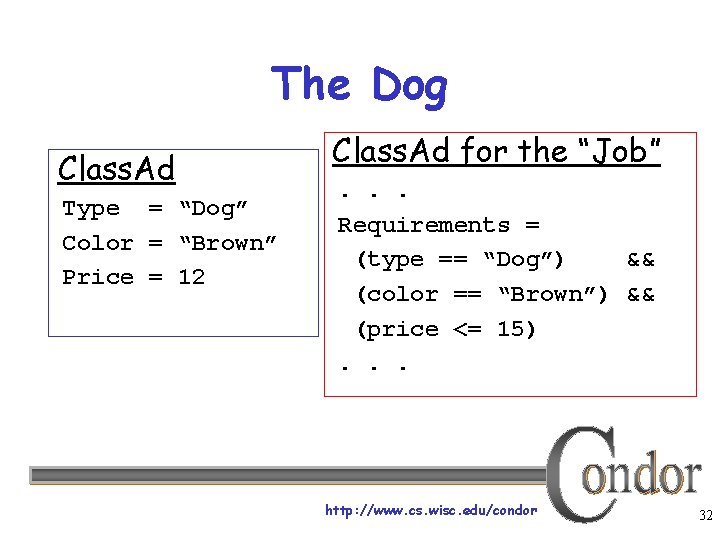

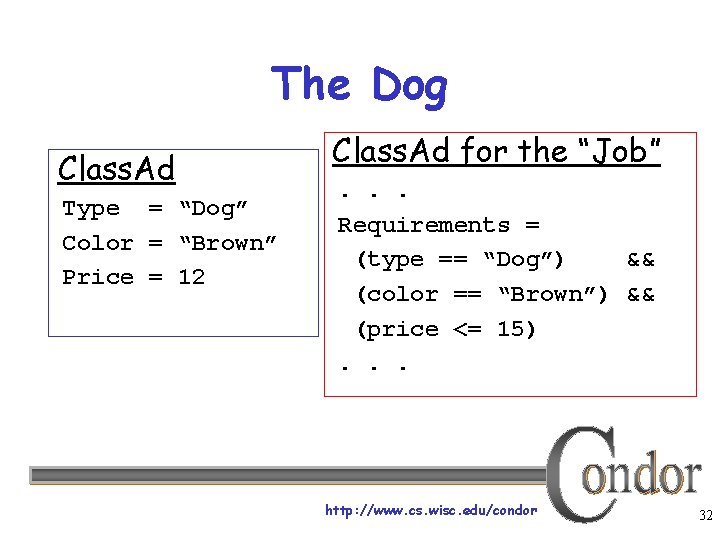

ClassAd?
› Condor's internal data representation
  • Similar to classified ads (as the name implies)
  • Represent an object & its attributes (usually many attributes)
  • Can also describe what an object matches with

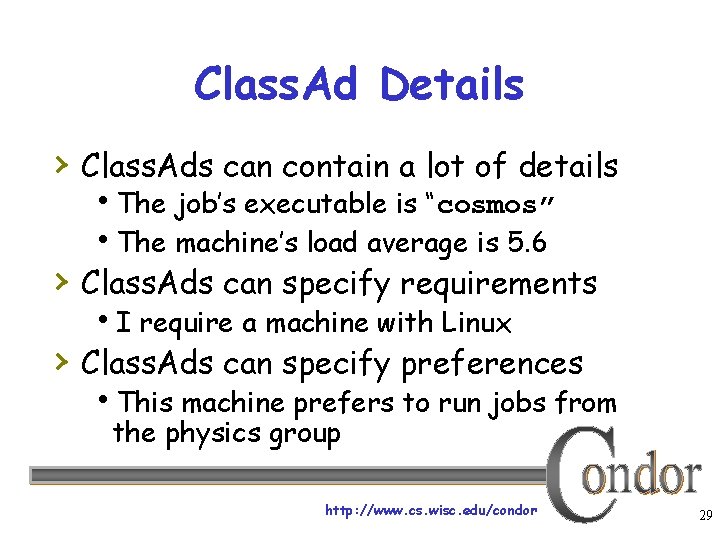

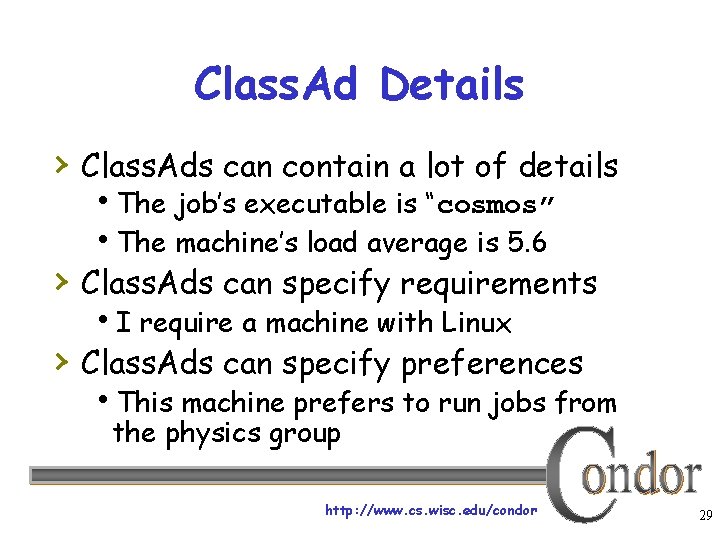

ClassAd Details
› ClassAds can contain a lot of details
  • The job's executable is "cosmos"
  • The machine's load average is 5.6
› ClassAds can specify requirements
  • I require a machine with Linux
› ClassAds can specify preferences
  • This machine prefers to run jobs from the physics group

ClassAd Details (continued)
› ClassAds are:
  • semi-structured
  • user-extensible
  • schema-free
  • Attribute = Expression

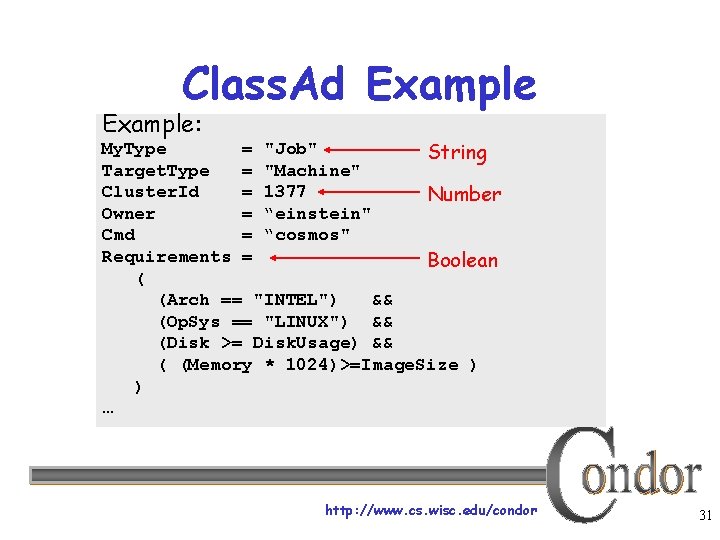

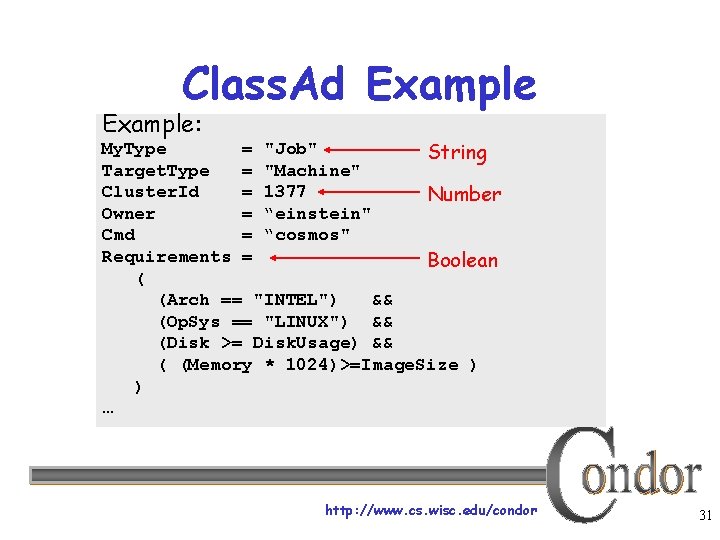

ClassAd Example:

    MyType       = "Job"
    TargetType   = "Machine"
    ClusterId    = 1377
    Owner        = "einstein"
    Cmd          = "cosmos"
    Requirements = ( (Arch == "INTEL") && (OpSys == "LINUX") && (Disk >= DiskUsage) && ( (Memory * 1024) >= ImageSize ) )
    …

Values can be strings (MyType, Owner, Cmd), numbers (ClusterId) or Boolean expressions (Requirements).

The Dog ClassAd

    Type  = "Dog"
    Color = "Brown"
    Price = 12

ClassAd for the "Job":

    …
    Requirements = (type == "Dog") && (color == "Brown") && (price <= 15)
    …
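
The dog example can be mimicked with plain dictionaries to show how a Requirements expression is checked against another ad. A minimal Python sketch, assuming ads are dicts and that attribute names are looked up case-insensitively, as ClassAd attribute names are (so the job's `type` finds the dog's `Type`); string values are compared exactly here, a simplification. `lookup` and `job_requirements` are hypothetical names, and this is not the real ClassAd evaluator:

```python
def lookup(ad, name):
    """Case-insensitive attribute lookup; None stands in for a missing attribute."""
    for key, value in ad.items():
        if key.lower() == name.lower():
            return value
    return None

dog_ad = {"Type": "Dog", "Color": "Brown", "Price": 12}

def job_requirements(target):
    """(type == "Dog") && (color == "Brown") && (price <= 15)"""
    price = lookup(target, "price")
    return (
        lookup(target, "type") == "Dog"
        and lookup(target, "color") == "Brown"
        and price is not None
        and price <= 15
    )

print(job_requirements(dog_ad))                   # True
print(job_requirements({**dog_ad, "Price": 20}))  # False: too expensive
```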

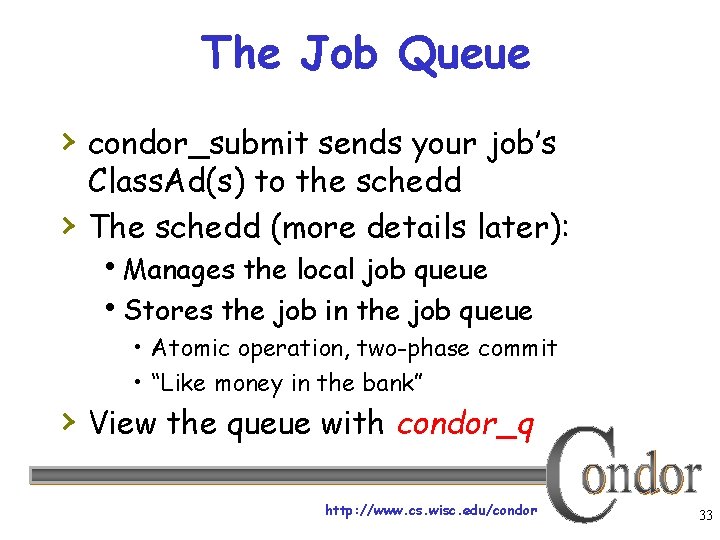

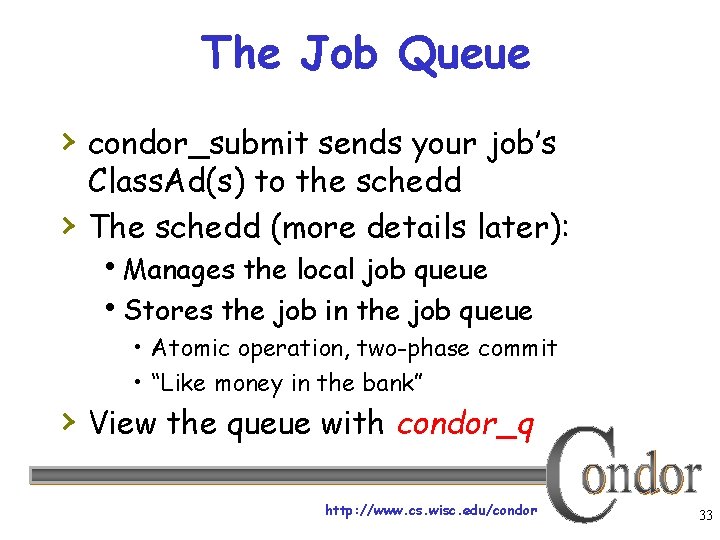

The Job Queue
› condor_submit sends your job's ClassAd(s) to the schedd
› The schedd (more details later):
  • Manages the local job queue
  • Stores the job in the job queue
    – Atomic operation, two-phase commit
    – "Like money in the bank"
› View the queue with condor_q

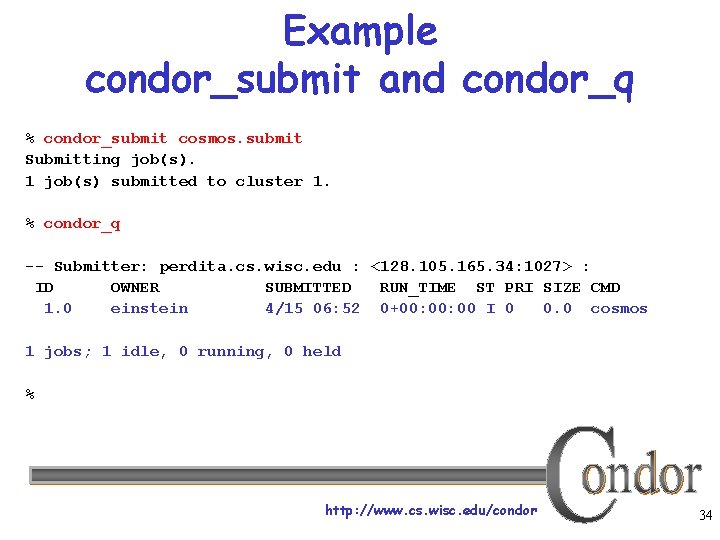

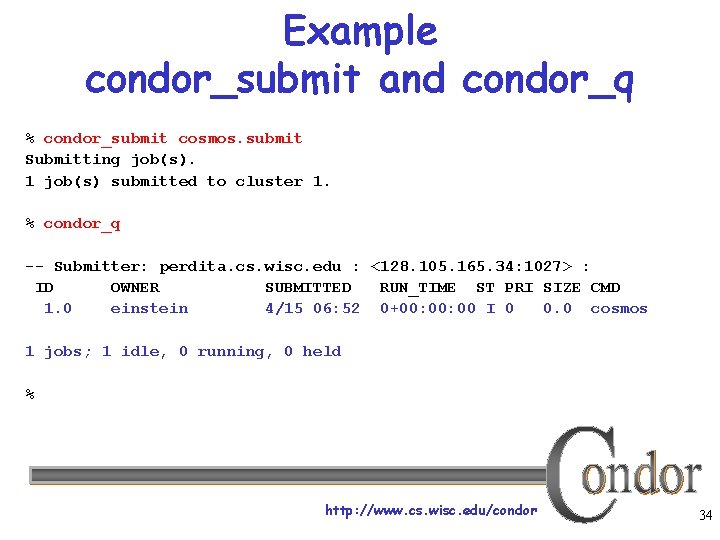

Example condor_submit and condor_q

    % condor_submit cosmos.submit
    Submitting job(s).
    1 job(s) submitted to cluster 1.

    % condor_q

    -- Submitter: perdita.cs.wisc.edu : <128.105.165.34:1027> :
     ID      OWNER     SUBMITTED    RUN_TIME ST PRI SIZE CMD
     1.0     einstein  4/15 06:52  0+00:00  I  0   0.0  cosmos

    1 jobs; 1 idle, 0 running, 0 held
    %

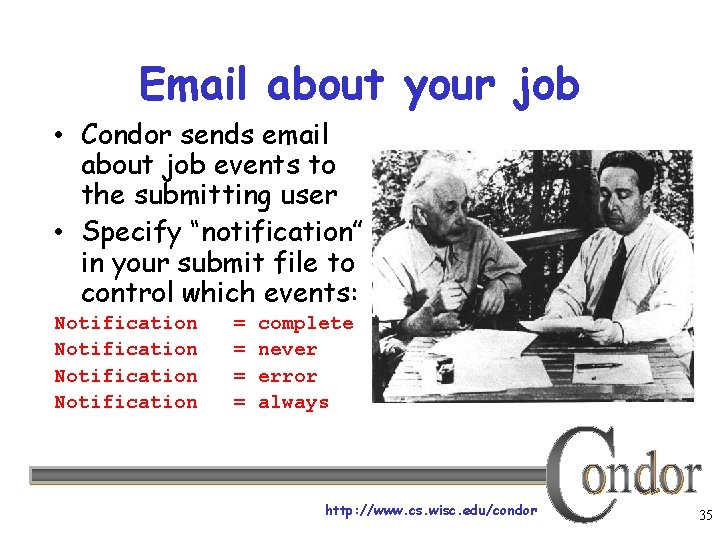

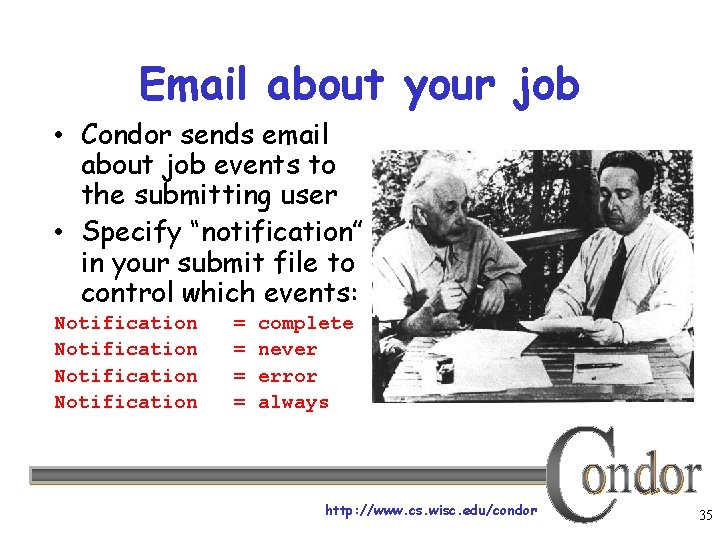

Email about your job
• Condor sends email about job events to the submitting user
• Specify "notification" in your submit file to control which events:

    Notification = complete    (the default)
    Notification = never
    Notification = error
    Notification = always

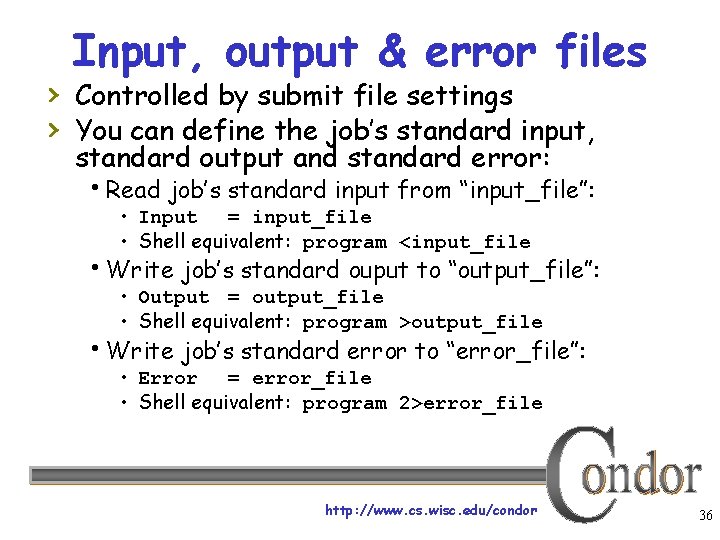

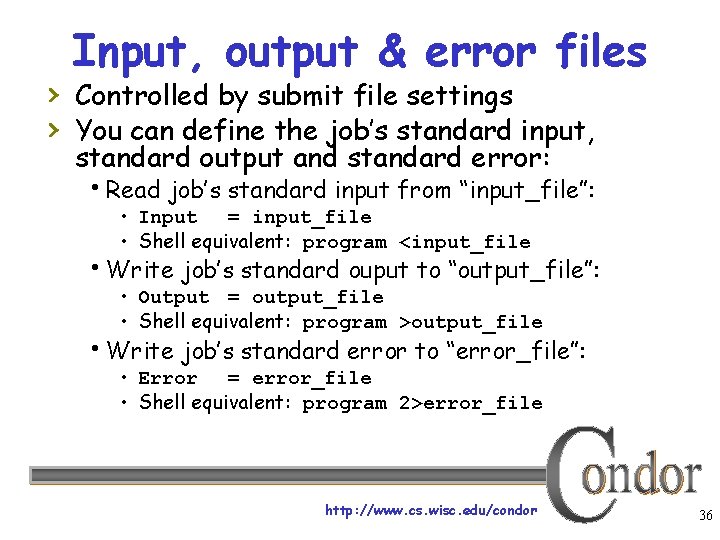

Input, output & error files
› Controlled by submit file settings
› You can define the job's standard input, standard output and standard error:
  • Read job's standard input from "input_file":
      Input = input_file
      Shell equivalent: program <input_file
  • Write job's standard output to "output_file":
      Output = output_file
      Shell equivalent: program >output_file
  • Write job's standard error to "error_file":
      Error = error_file
      Shell equivalent: program 2>error_file

Feedback on your job
› Create a log of job events
› Add to submit description file:

    log = cosmos.log

› Becomes the Life Story of a Job
  • Shows all events in the life of a job
  • Always have a log file

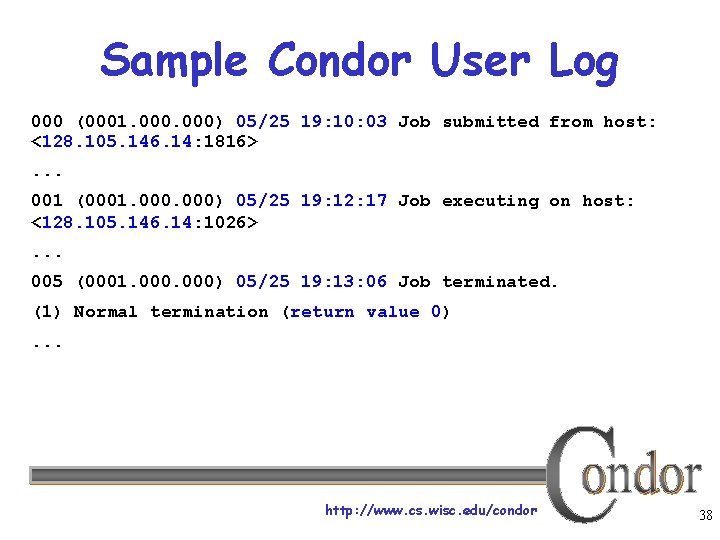

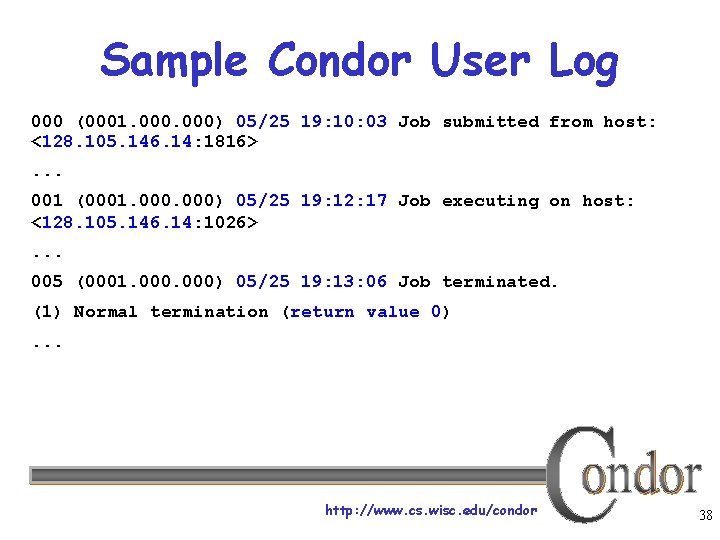

Sample Condor User Log

    000 (0001.000) 05/25 19:10:03 Job submitted from host: <128.105.146.14:1816>
    ...
    001 (0001.000) 05/25 19:12:17 Job executing on host: <128.105.146.14:1026>
    ...
    005 (0001.000) 05/25 19:13:06 Job terminated.
            (1) Normal termination (return value 0)
    ...
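
Each event in the sample starts with a three-digit event code, the job ID in parentheses and a timestamp, and ends with a line of `...`. A minimal Python sketch that extracts those header fields, assuming only the layout shown in the sample (it is not a full parser of the user log format, and it also tolerates an optional third ID component); `parse_events` is a hypothetical name:

```python
import re

# Event header: code, (cluster.proc[.subproc]), MM/DD HH:MM:SS, then free text.
HEADER = re.compile(
    r"^(?P<code>\d{3}) \((?P<cluster>\d+)\.(?P<proc>\d+)(?:\.\d+)?\) "
    r"(?P<date>\d\d/\d\d) (?P<time>\d\d:\d\d:\d\d) (?P<text>.*)$"
)

def parse_events(log_text):
    """Return (code, cluster, proc, date, time, text) for each event header line."""
    events = []
    for line in log_text.splitlines():
        m = HEADER.match(line)
        if m:  # detail lines and "..." separators are skipped
            events.append((int(m["code"]), int(m["cluster"]), int(m["proc"]),
                           m["date"], m["time"], m["text"]))
    return events

SAMPLE = """\
000 (0001.000) 05/25 19:10:03 Job submitted from host: <128.105.146.14:1816>
...
001 (0001.000) 05/25 19:12:17 Job executing on host: <128.105.146.14:1026>
...
005 (0001.000) 05/25 19:13:06 Job terminated.
\t(1) Normal termination (return value 0)
...
"""

for event in parse_events(SAMPLE):
    print(event)
```

In the sample, codes 000, 001 and 005 mark submission, execution and termination.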

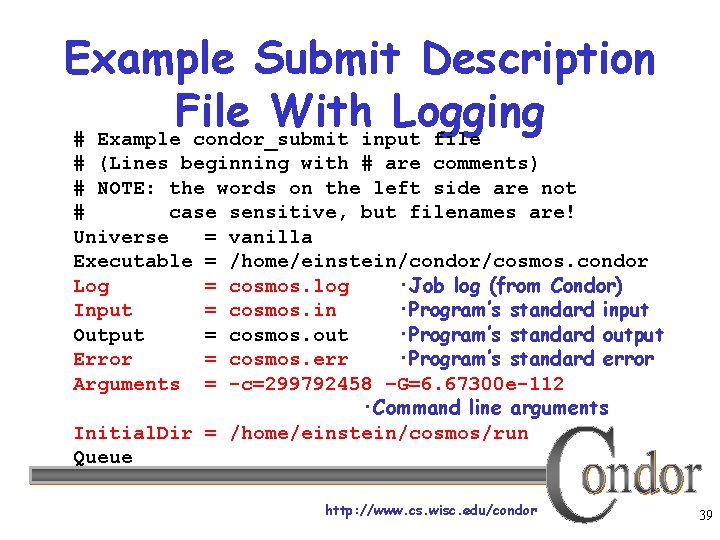

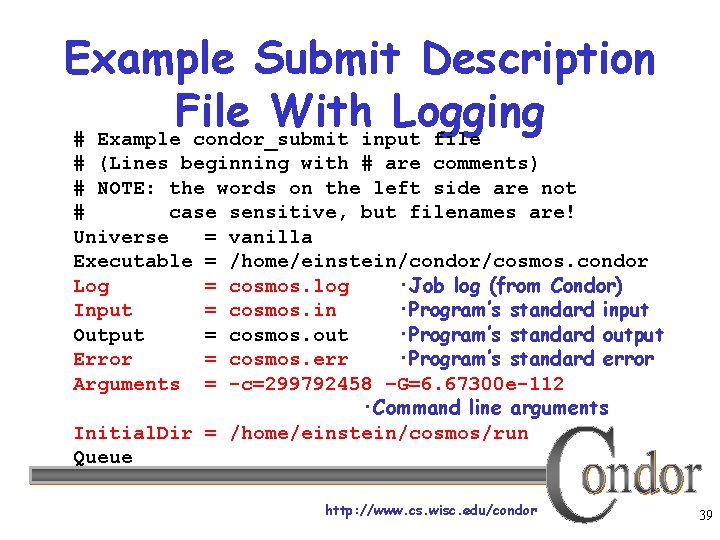

Example Submit Description File With Logging

    # Example condor_submit input file
    # (Lines beginning with # are comments)
    # NOTE: the words on the left side are not
    #       case sensitive, but filenames are!
    Universe   = vanilla
    Executable = /home/einstein/condor/cosmos.condor
    # Job log (from Condor)
    Log        = cosmos.log
    # Program's standard input
    Input      = cosmos.in
    # Program's standard output
    Output     = cosmos.out
    # Program's standard error
    Error      = cosmos.err
    # Command line arguments
    Arguments  = -c=299792458 -G=6.67300e-11
    InitialDir = /home/einstein/cosmos/run
    Queue

"Clusters" and "Processes"
› If your submit file describes multiple jobs, we call this a "cluster"
› Each cluster has a unique "cluster number"
› Each job in a cluster is called a "process"
  • Process numbers always start at zero
› A Condor "Job ID" is the cluster number, a period, and the process number (e.g., 2.1)
  • A cluster can have a single process
      Job ID = 20.0 (cluster 20, process 0)
  • Or, a cluster can have more than one process
      Job IDs: 21.0, 21.1, 21.2 (cluster 21, processes 0, 1, 2)

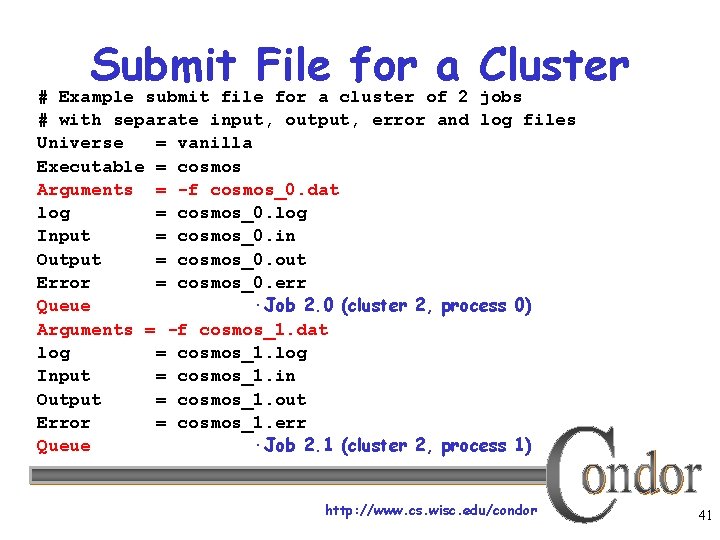

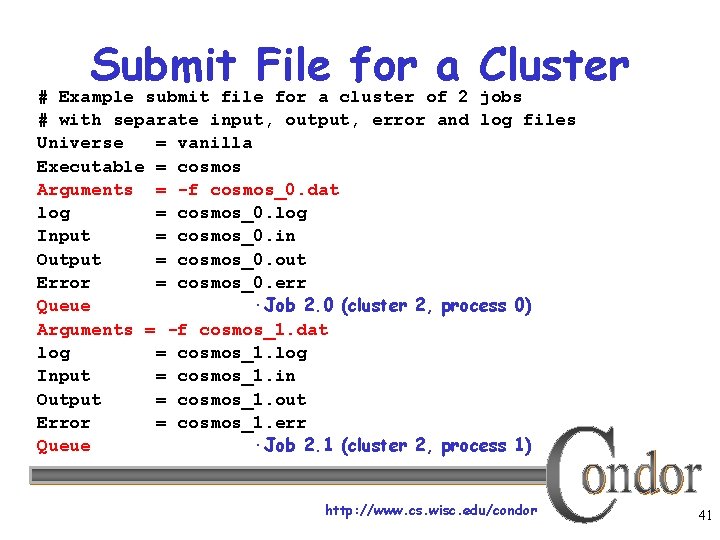

Submit File for a Cluster

    # Example submit file for a cluster of 2 jobs
    # with separate input, output, error and log files
    Universe   = vanilla
    Executable = cosmos

    # Job 2.0 (cluster 2, process 0)
    Arguments  = -f cosmos_0.dat
    log        = cosmos_0.log
    Input      = cosmos_0.in
    Output     = cosmos_0.out
    Error      = cosmos_0.err
    Queue

    # Job 2.1 (cluster 2, process 1)
    Arguments  = -f cosmos_1.dat
    log        = cosmos_1.log
    Input      = cosmos_1.in
    Output     = cosmos_1.out
    Error      = cosmos_1.err
    Queue

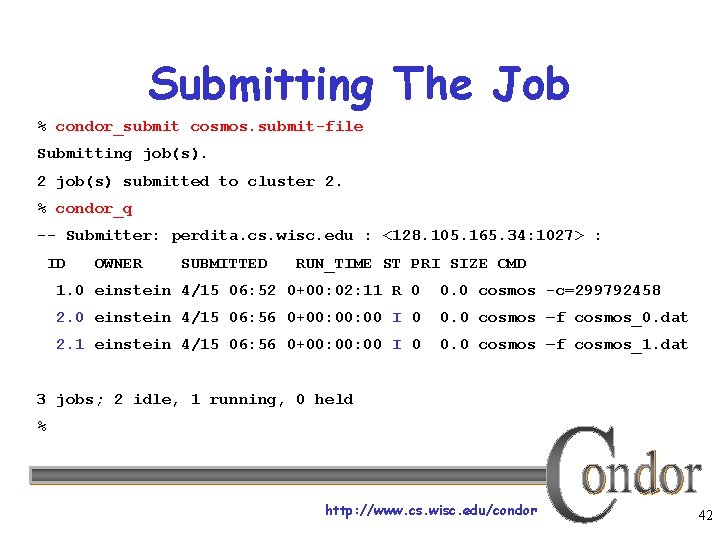

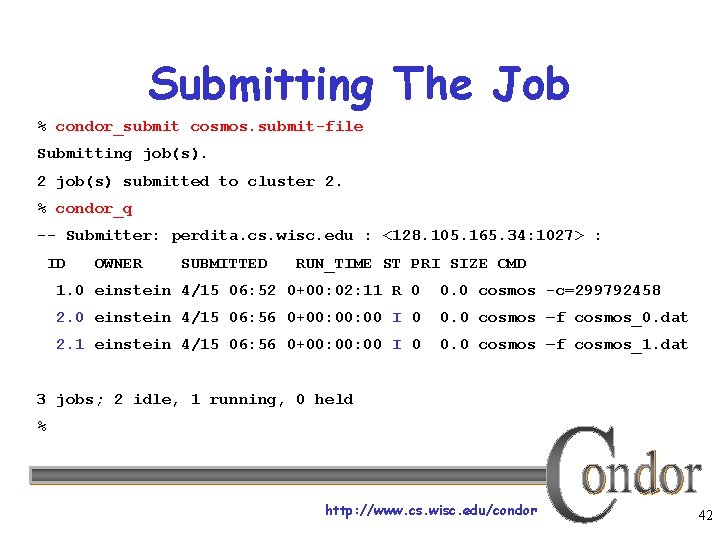

Submitting The Job

    % condor_submit cosmos.submit-file
    Submitting job(s).
    2 job(s) submitted to cluster 2.

    % condor_q

    -- Submitter: perdita.cs.wisc.edu : <128.105.165.34:1027> :
     ID      OWNER     SUBMITTED    RUN_TIME   ST PRI SIZE CMD
     1.0     einstein  4/15 06:52  0+00:02:11 R  0   0.0  cosmos -c=299792458
     2.0     einstein  4/15 06:56  0+00:00    I  0   0.0  cosmos -f cosmos_0.dat
     2.1     einstein  4/15 06:56  0+00:00    I  0   0.0  cosmos -f cosmos_1.dat

    3 jobs; 2 idle, 1 running, 0 held
    %

Back to our 1,000,000 jobs…
› We could put all input, output, error & log files in the one directory
  • One of each type for each job
  • That'd be 4,000,000 files (4 files × 1,000,000 jobs)
  • Difficult (at best) to sort through
› Better: create a subdirectory for each run

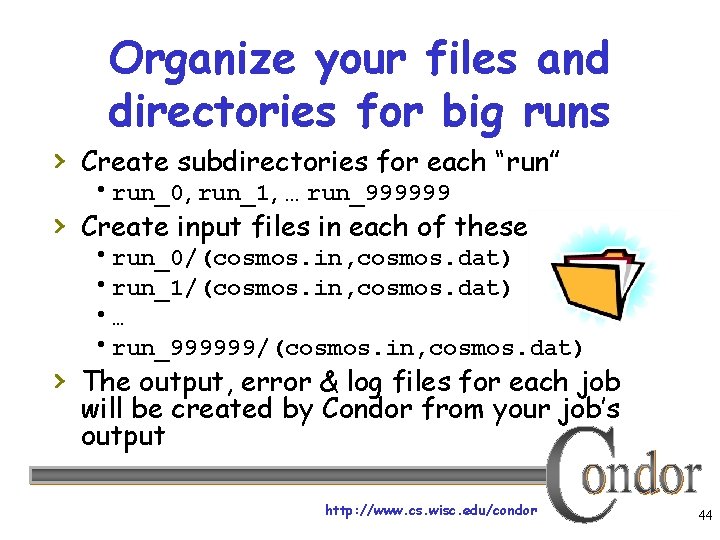

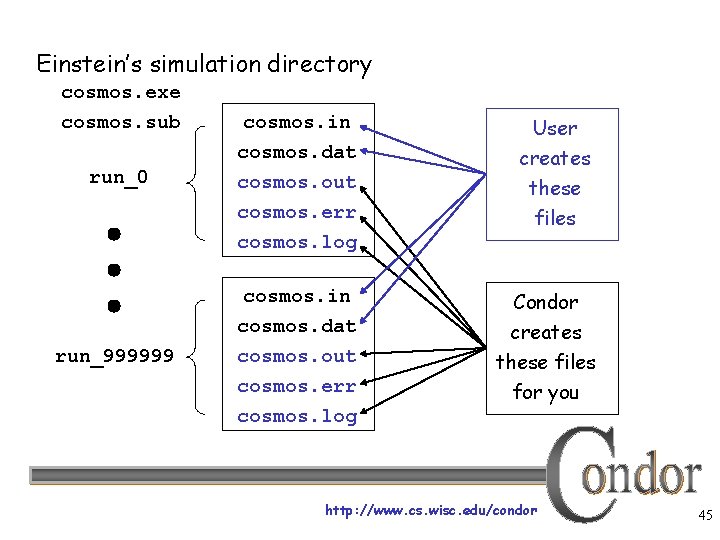

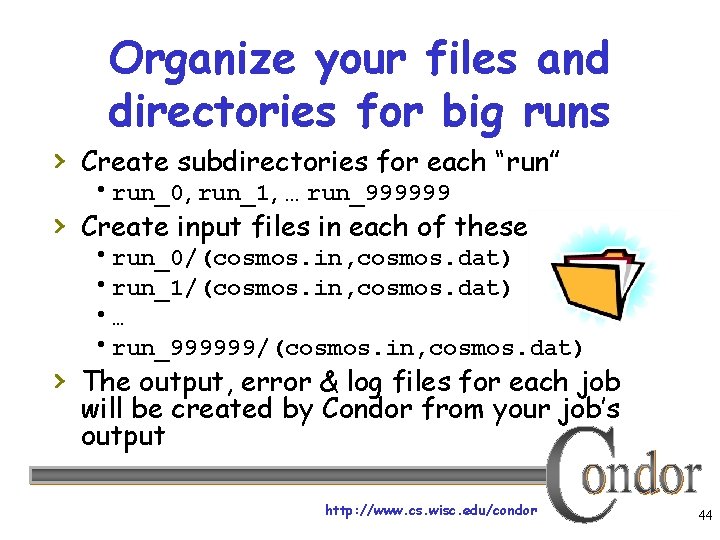

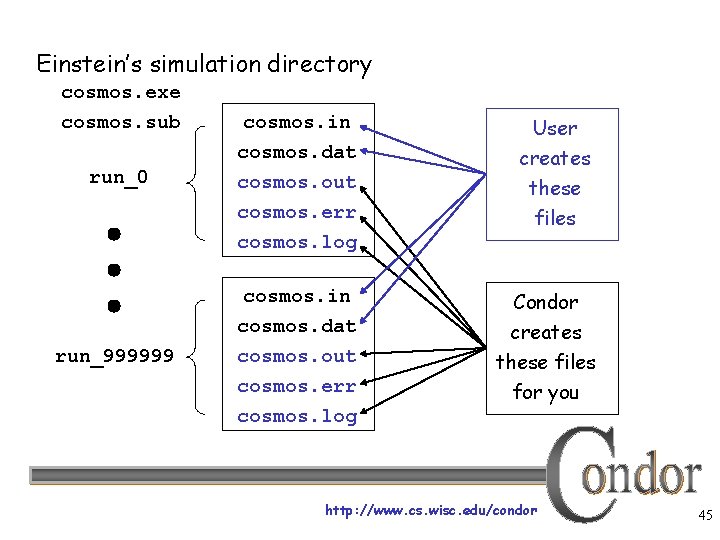

Organize your files and directories for big runs
› Create subdirectories for each "run"
  • run_0, run_1, … run_999999
› Create input files in each of these
  • run_0/(cosmos.in, cosmos.dat)
  • run_1/(cosmos.in, cosmos.dat)
  • …
  • run_999999/(cosmos.in, cosmos.dat)
› The output, error & log files for each job will be created by Condor from your job's output

Einstein's simulation directory

    cosmos.exe
    cosmos.sub
    run_0/
        cosmos.in     (created by the user)
        cosmos.dat    (created by the user)
        cosmos.out    (created by Condor)
        cosmos.err    (created by Condor)
        cosmos.log    (created by Condor)
    …
    run_999999/
        (the same five files)
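
Creating a million run directories by hand is not practical, so a small script usually does it. A minimal Python sketch that lays out `run_0` … `run_<N-1>` with the two user-created input files in each; the file contents written here are placeholders (the real `cosmos.in` and `cosmos.dat` depend on the simulation), and `make_run_dirs` and `placeholder_inputs` are hypothetical helpers:

```python
import tempfile
from pathlib import Path

def make_run_dirs(base, n_runs, make_inputs):
    """Create base/run_<i> for i in 0..n_runs-1 and write that run's input files.

    make_inputs(i) must return a dict {filename: text} for run i.
    """
    base = Path(base)
    for i in range(n_runs):
        run_dir = base / f"run_{i}"
        run_dir.mkdir(parents=True, exist_ok=True)
        for name, text in make_inputs(i).items():
            (run_dir / name).write_text(text)
    return base

def placeholder_inputs(i):
    # Hypothetical contents: just record which parameter set this run uses.
    return {"cosmos.in": f"run {i}\n", "cosmos.dat": f"params {i}\n"}

# Small demonstration with 3 runs in a scratch directory.
with tempfile.TemporaryDirectory() as tmp:
    make_run_dirs(tmp, 3, placeholder_inputs)
    created = sorted(p.relative_to(tmp).as_posix()
                     for p in Path(tmp).rglob("*") if p.is_file())

print(created)
```

For the full sweep, `n_runs` would be 1,000,000; Condor then writes `cosmos.out`, `cosmos.err` and `cosmos.log` into each directory.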

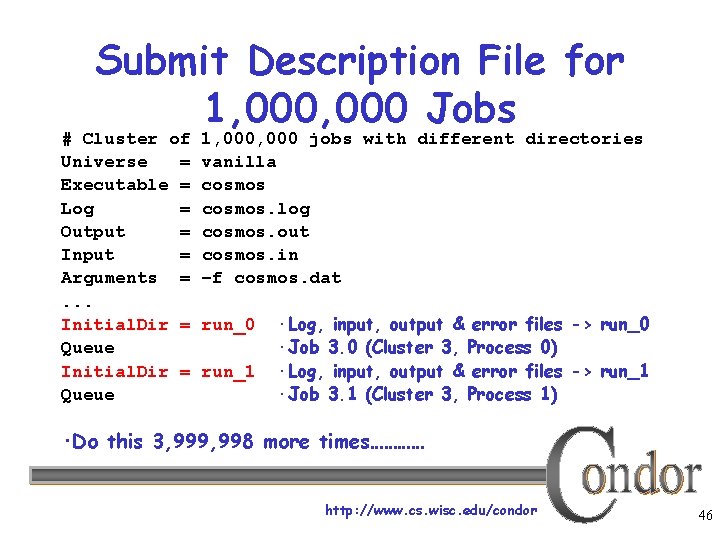

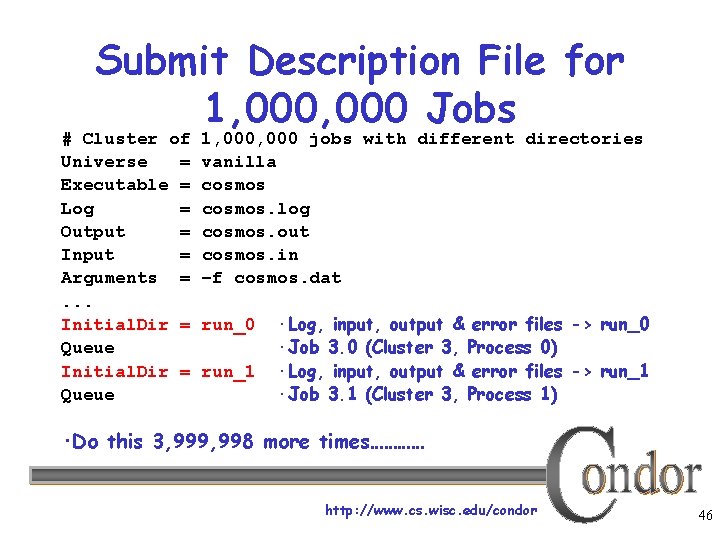

Submit Description File for 1,000,000 Jobs

    # Cluster of 1,000,000 jobs with different directories
    Universe   = vanilla
    Executable = cosmos
    Log        = cosmos.log
    Output     = cosmos.out
    Input      = cosmos.in
    Arguments  = -f cosmos.dat
    ...

    # Log, input, output & error files -> run_0
    InitialDir = run_0
    # Job 3.0 (Cluster 3, Process 0)
    Queue

    # Log, input, output & error files -> run_1
    InitialDir = run_1
    # Job 3.1 (Cluster 3, Process 1)
    Queue

    # Do this 999,998 more times…

Submit File for a Big Cluster of Jobs
› We just submitted 1 cluster with 1,000,000 processes
› All the input/output files will be in different directories
› The submit file is pretty unwieldy (millions of lines!)
› Isn't there a better way?

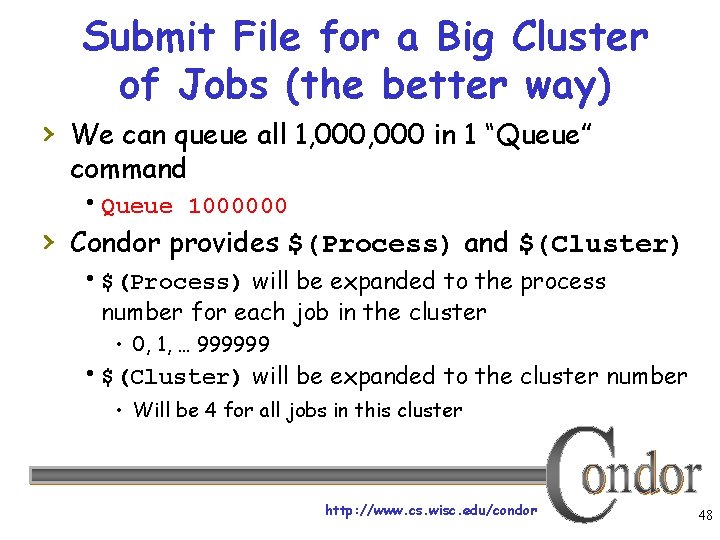

Submit File for a Big Cluster of Jobs (the better way)
› We can queue all 1,000,000 in 1 "Queue" command:

    Queue 1000000

› Condor provides $(Process) and $(Cluster)
  • $(Process) will be expanded to the process number for each job in the cluster: 0, 1, … 999999
  • $(Cluster) will be expanded to the cluster number: it will be 4 for all jobs in this cluster

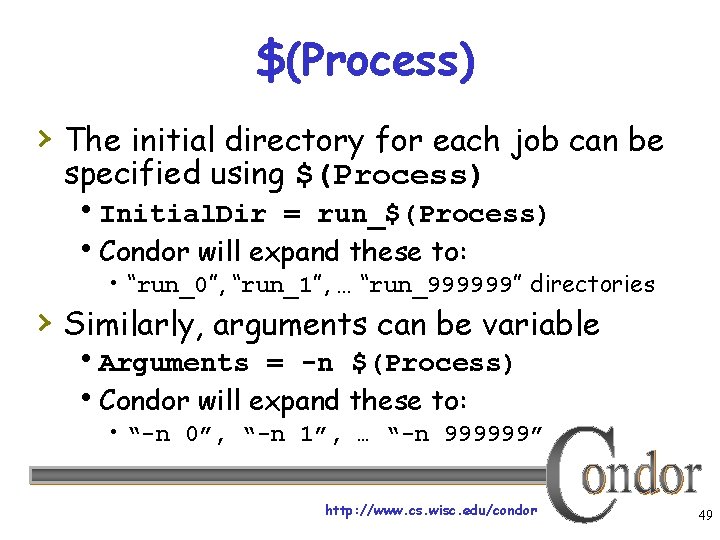

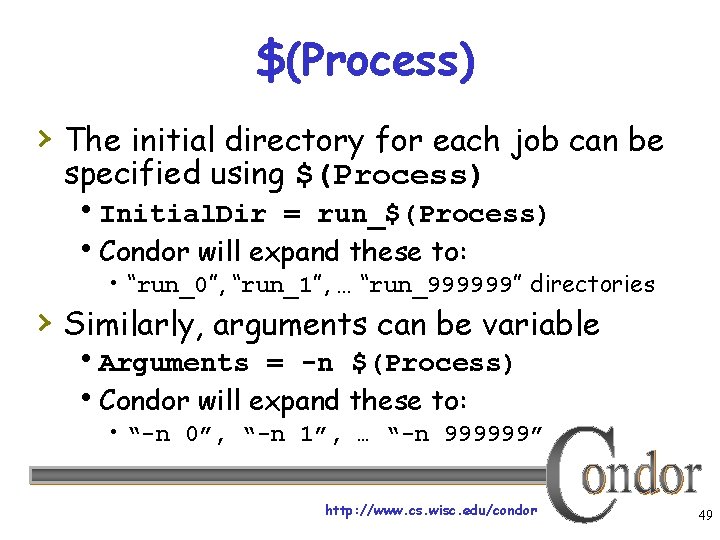

$(Process)
› The initial directory for each job can be specified using $(Process):

    InitialDir = run_$(Process)

  • Condor will expand these to the "run_0", "run_1", … "run_999999" directories
› Similarly, arguments can be variable:

    Arguments = -n $(Process)

  • Condor will expand these to "-n 0", "-n 1", … "-n 999999"
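
The expansion can be pictured as plain text substitution, done once per process. A minimal Python sketch, assuming only `$(Process)` and `$(Cluster)` need expanding (condor_submit understands many more macros, and this is not its implementation); `expand` is a hypothetical name:

```python
def expand(template, cluster, process):
    """Replace the $(Cluster) and $(Process) macros in one submit-file value."""
    return (template
            .replace("$(Cluster)", str(cluster))
            .replace("$(Process)", str(process)))

# Cluster 4, first three processes, as in the example above.
for proc in range(3):
    print(expand("InitialDir = run_$(Process)", 4, proc),
          "|", expand("Arguments = -n $(Process)", 4, proc))
```

Every process gets the same submit description; only the macro values differ, which is why one `Queue 1000000` line can replace millions of hand-written lines.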

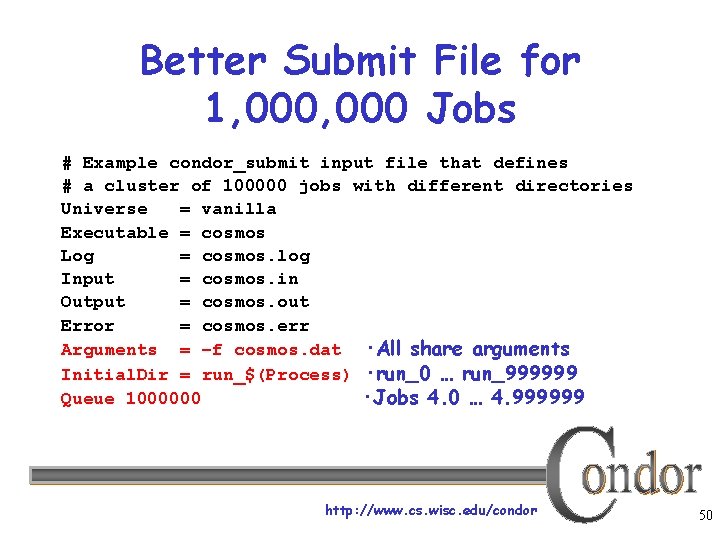

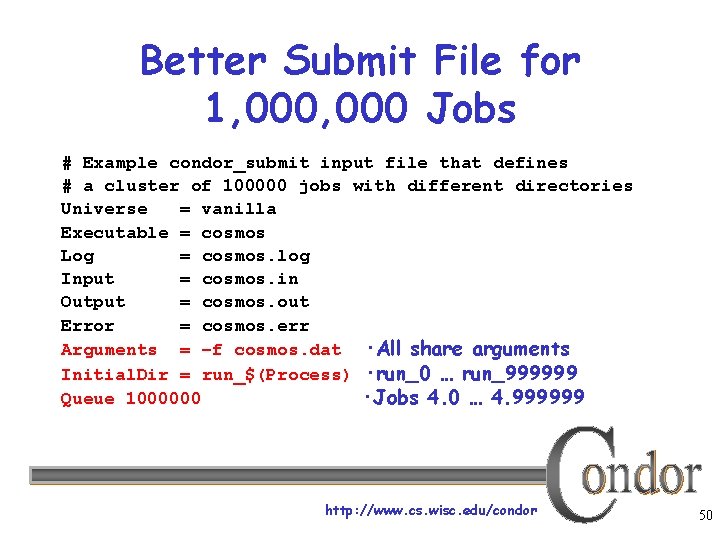

Better Submit File for 1,000,000 Jobs

    # Example condor_submit input file that defines
    # a cluster of 1,000,000 jobs with different directories
    Universe   = vanilla
    Executable = cosmos
    Log        = cosmos.log
    Input      = cosmos.in
    Output     = cosmos.out
    Error      = cosmos.err
    # All jobs share the same arguments
    Arguments  = -f cosmos.dat
    # run_0 … run_999999
    InitialDir = run_$(Process)
    # Jobs 4.0 … 4.999999
    Queue 1000000

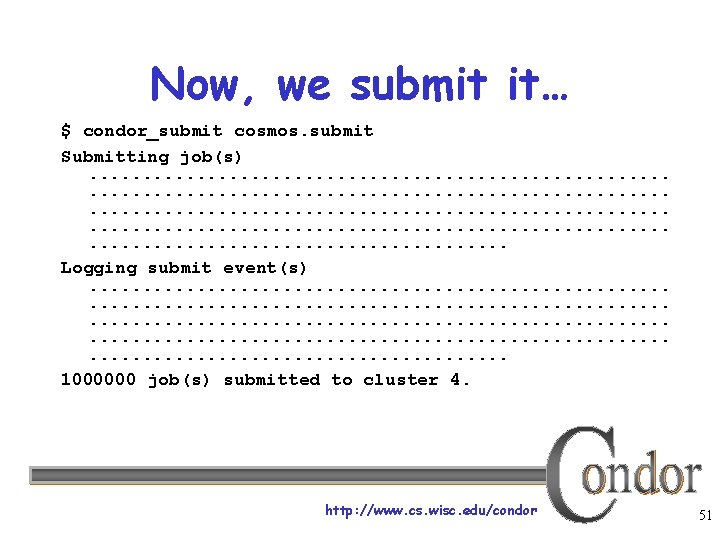

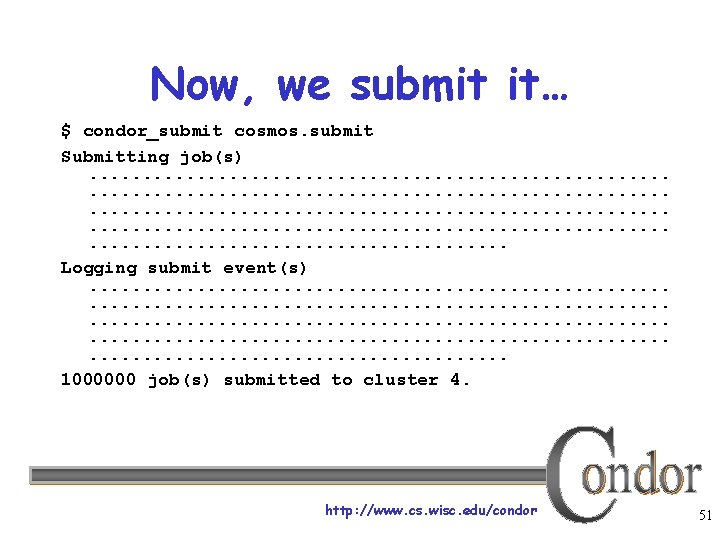

Now, we submit it…

    $ condor_submit cosmos.submit
    Submitting job(s)................
    Logging submit event(s)................
    1000000 job(s) submitted to cluster 4.

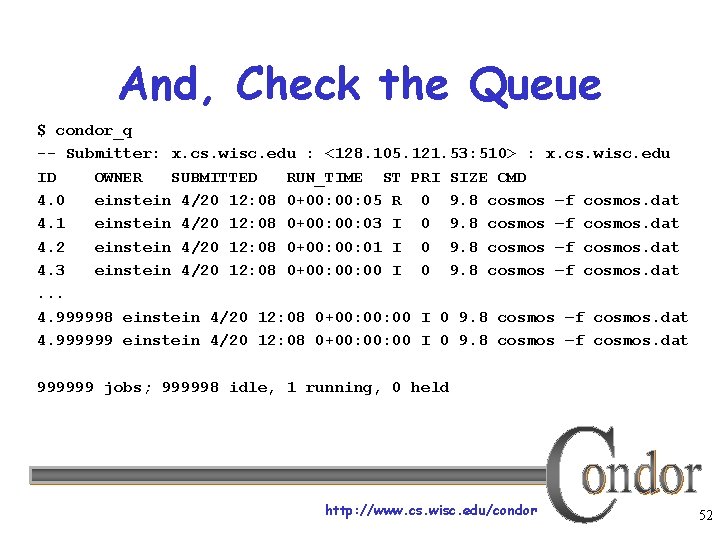

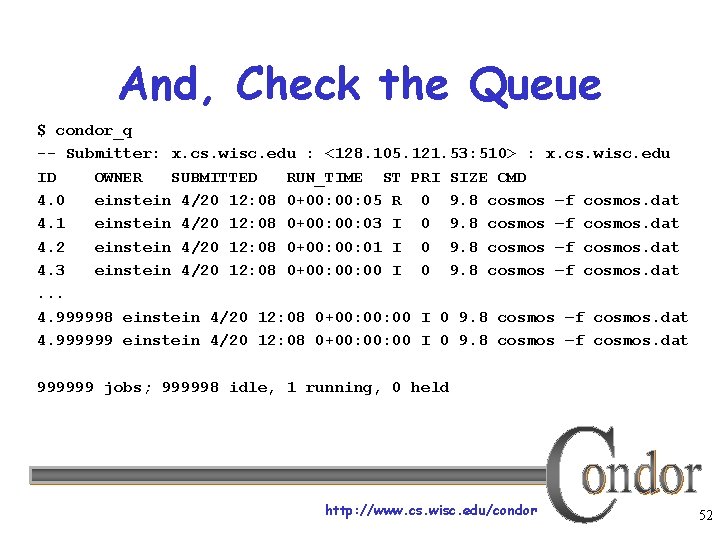

And, Check the Queue

    $ condor_q

    -- Submitter: x.cs.wisc.edu : <128.105.121.53:510> : x.cs.wisc.edu
     ID         OWNER     SUBMITTED    RUN_TIME ST PRI SIZE CMD
     4.0        einstein  4/20 12:08  0+00:05  R  0   9.8  cosmos -f cosmos.dat
     4.1        einstein  4/20 12:08  0+00:03  I  0   9.8  cosmos -f cosmos.dat
     4.2        einstein  4/20 12:08  0+00:01  I  0   9.8  cosmos -f cosmos.dat
     4.3        einstein  4/20 12:08  0+00:00  I  0   9.8  cosmos -f cosmos.dat
     ...
     4.999998   einstein  4/20 12:08  0+00:00  I  0   9.8  cosmos -f cosmos.dat
     4.999999   einstein  4/20 12:08  0+00:00  I  0   9.8  cosmos -f cosmos.dat

    1000000 jobs; 999999 idle, 1 running, 0 held

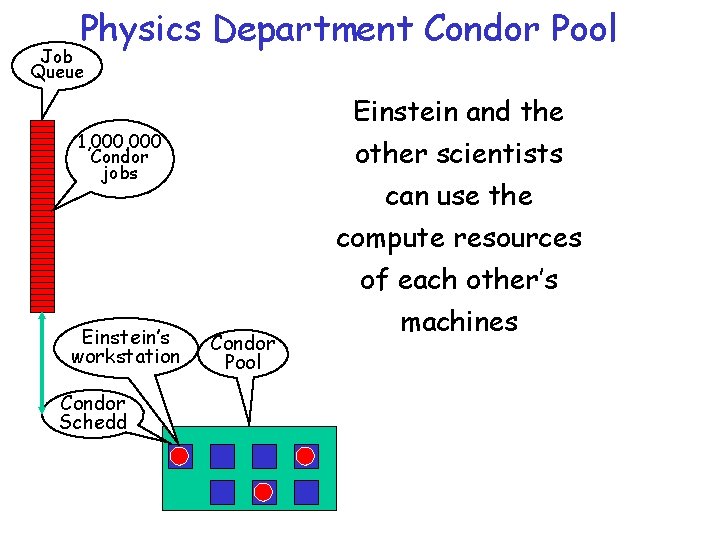

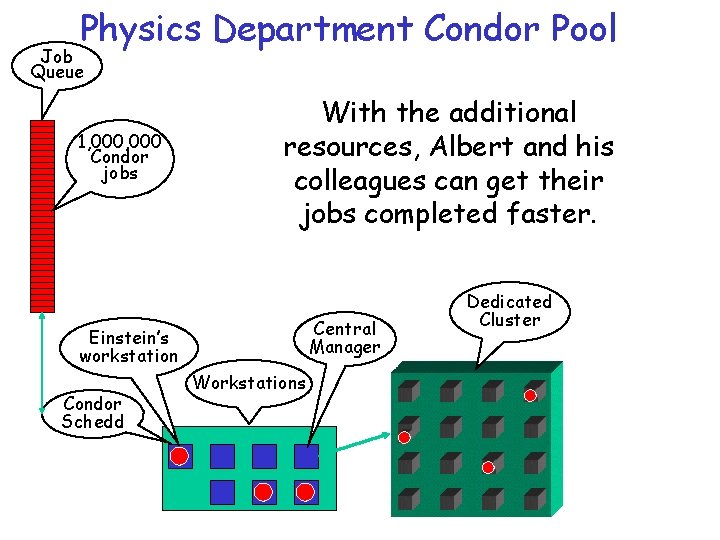

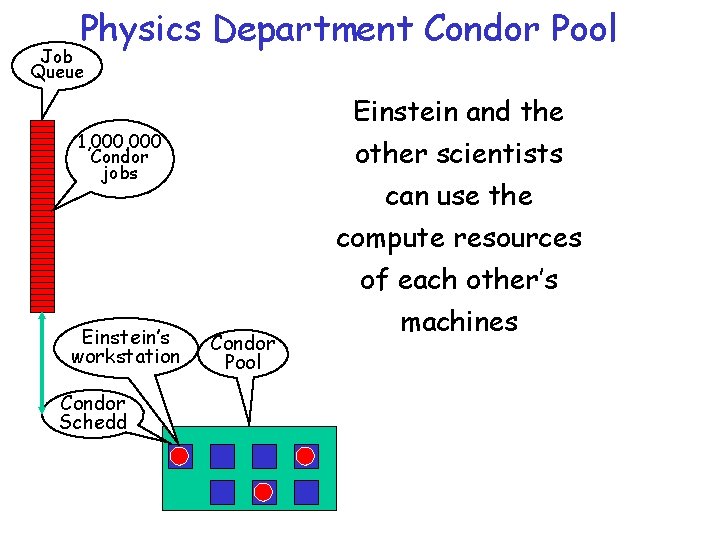

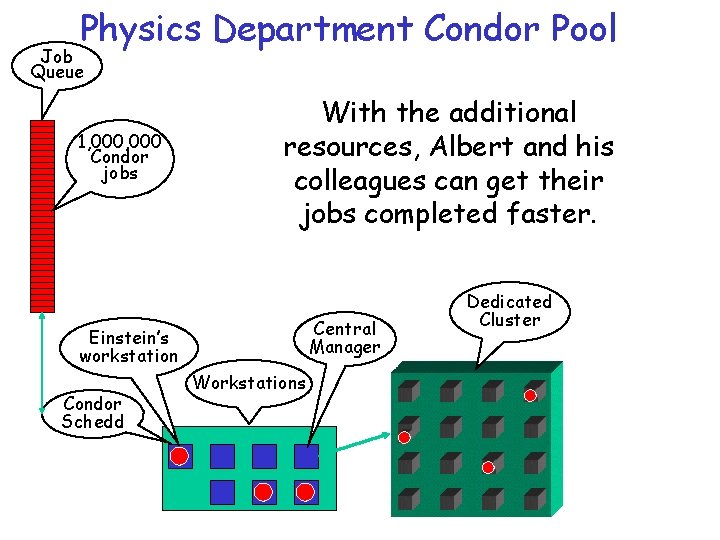

Physics Department Condor Pool
[Figure: Einstein's workstation runs a Condor schedd holding the job queue of 1,000,000 Condor jobs. In the pool, Einstein and the other scientists can use the compute resources of each other's machines.]

My Biggest Blunder Ever
› Albert removes Λ (the cosmological constant) from his equations, and needs to remove his running jobs
› We'll just ignore that modern cosmologists may have re-introduced Λ (so-called "dark energy")

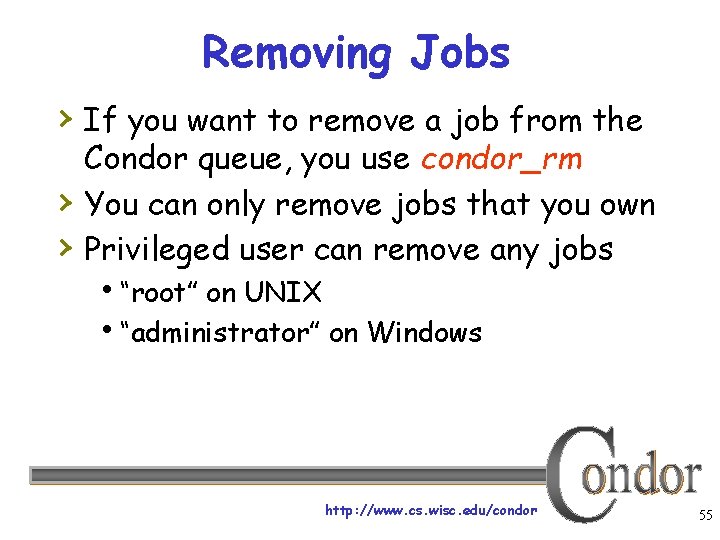

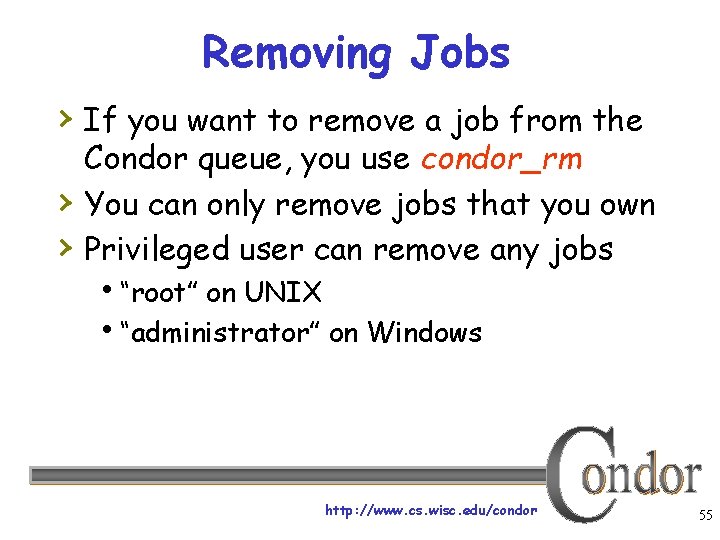

Removing Jobs
› If you want to remove a job from the Condor queue, you use condor_rm
› You can only remove jobs that you own
› A privileged user can remove any jobs
  • "root" on UNIX
  • "administrator" on Windows

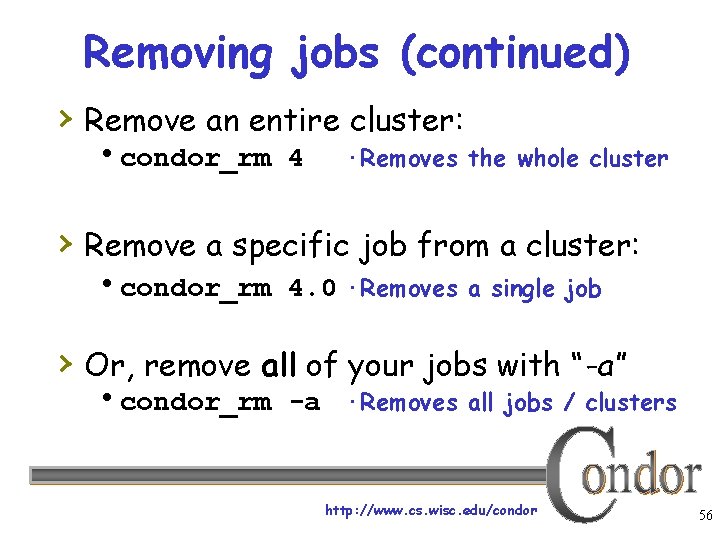

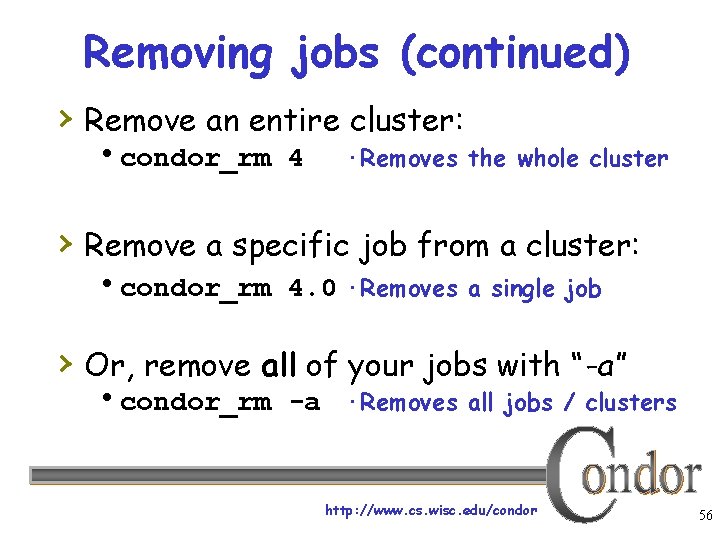

Removing jobs (continued)
› Remove an entire cluster:

    condor_rm 4

› Remove a specific job from a cluster (removes a single job):

    condor_rm 4.0

› Or, remove all of your jobs / clusters with "-a":

    condor_rm -a
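
The difference between `condor_rm 4` and `condor_rm 4.0` is which job IDs the argument covers. A minimal Python sketch of that selection rule, assuming job IDs are written as `cluster.process` strings; `parse_job_id` and `selects` are hypothetical helpers, not part of Condor, and ownership checks are left out:

```python
def parse_job_id(job_id):
    """'4.0' -> (4, 0)"""
    cluster, process = job_id.split(".")
    return int(cluster), int(process)

def selects(target, job_id):
    """Would `condor_rm <target>` cover this job? 'N' = whole cluster, 'N.M' = one job."""
    cluster, process = parse_job_id(job_id)
    if "." in target:
        return parse_job_id(target) == (cluster, process)
    return int(target) == cluster

queue = ["4.0", "4.1", "4.2", "5.0"]
print([j for j in queue if selects("4", j)])    # ['4.0', '4.1', '4.2']
print([j for j in queue if selects("4.0", j)])  # ['4.0']
```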

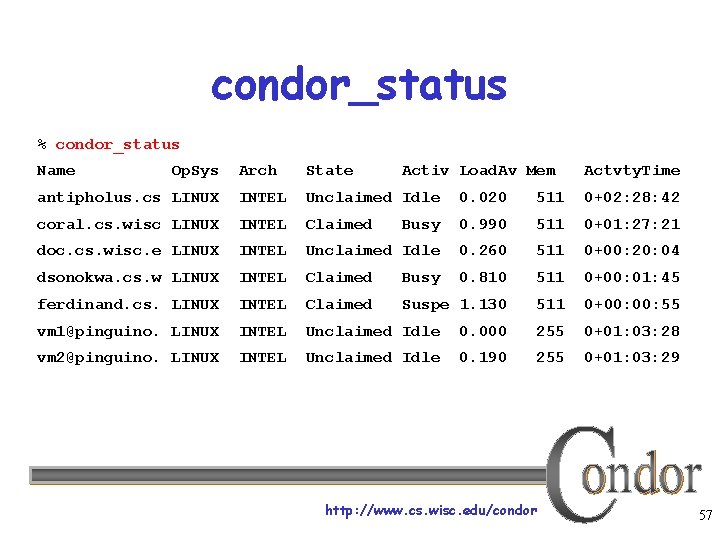

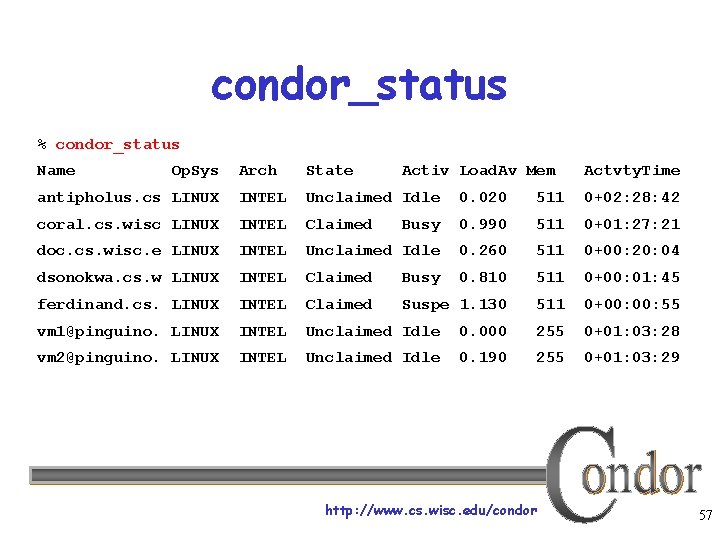

condor_status

    % condor_status

    Name          OpSys  Arch   State     Activ LoadAv Mem  ActvtyTime

    antipholus.cs LINUX  INTEL  Unclaimed Idle  0.020  511  0+02:28:42
    coral.cs.wisc LINUX  INTEL  Claimed   Busy  0.990  511  0+01:27:21
    doc.cs.wisc.e LINUX  INTEL  Unclaimed Idle  0.260  511  0+00:20:04
    dsonokwa.cs.w LINUX  INTEL  Claimed   Busy  0.810  511  0+00:01:45
    ferdinand.cs. LINUX  INTEL  Claimed   Suspe 1.130  511  0+00:55
    vm1@pinguino. LINUX  INTEL  Unclaimed Idle  0.000  255  0+01:03:28
    vm2@pinguino. LINUX  INTEL  Unclaimed Idle  0.190  255  0+01:03:29

How can my jobs access their data files?

Access to Data in Condor
› Use shared filesystem if available
› No shared filesystem?
  • Condor can transfer files
    – Can automatically send back changed files
    – Atomic transfer of multiple files
    – Can be encrypted over the wire
  • Remote I/O Socket (parrot)
  • Standard Universe can use remote system calls (more on this later)

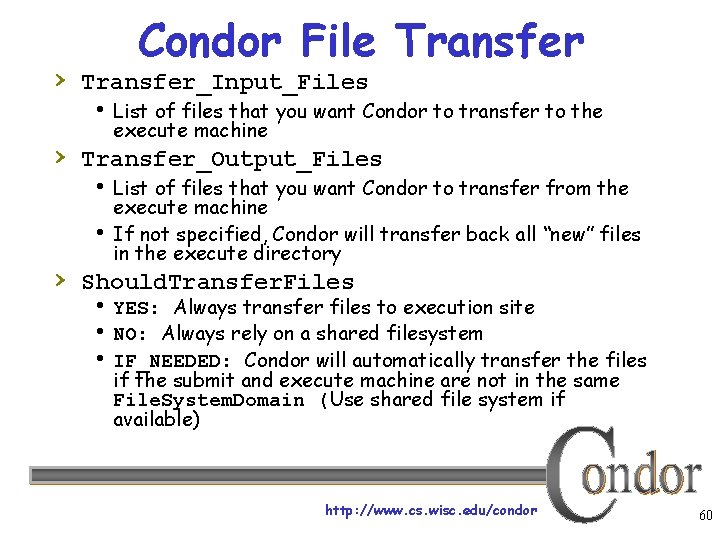

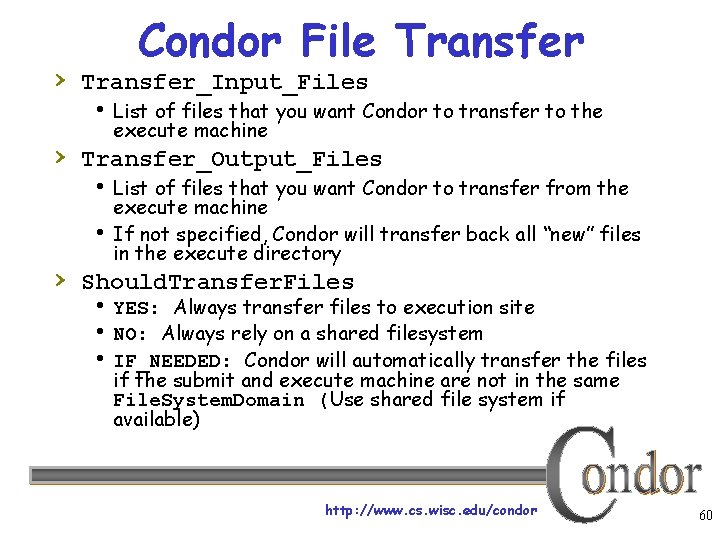

Condor File Transfer
› Transfer_Input_Files
  • List of files that you want Condor to transfer to the execute machine
› Transfer_Output_Files
  • List of files that you want Condor to transfer from the execute machine
  • If not specified, Condor will transfer back all "new" files in the execute directory
› ShouldTransferFiles
  • YES: Always transfer files to execution site
  • NO: Always rely on a shared filesystem
  • IF_NEEDED: Condor will automatically transfer the files if the submit and execute machines are not in the same FileSystemDomain (use the shared file system if available)
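
The three ShouldTransferFiles settings boil down to a small decision. A minimal Python sketch of it, assuming the only inputs are the setting and the two machines' FileSystemDomain strings (the real decision is made inside Condor, and `transfers_files` is a hypothetical name):

```python
def transfers_files(should_transfer, submit_fs_domain, execute_fs_domain):
    """Does Condor move the job's files for this submit/execute pair?"""
    setting = should_transfer.upper()  # submit-file values are case-insensitive
    if setting == "YES":
        return True                    # always transfer
    if setting == "NO":
        return False                   # always rely on a shared filesystem
    if setting == "IF_NEEDED":
        # Same FileSystemDomain: assume the shared filesystem; otherwise transfer.
        return submit_fs_domain != execute_fs_domain
    raise ValueError(f"unknown ShouldTransferFiles value: {should_transfer!r}")

print(transfers_files("IF_NEEDED", "cs.wisc.edu", "cs.wisc.edu"))       # False
print(transfers_files("IF_NEEDED", "cs.wisc.edu", "physics.wisc.edu"))  # True
```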

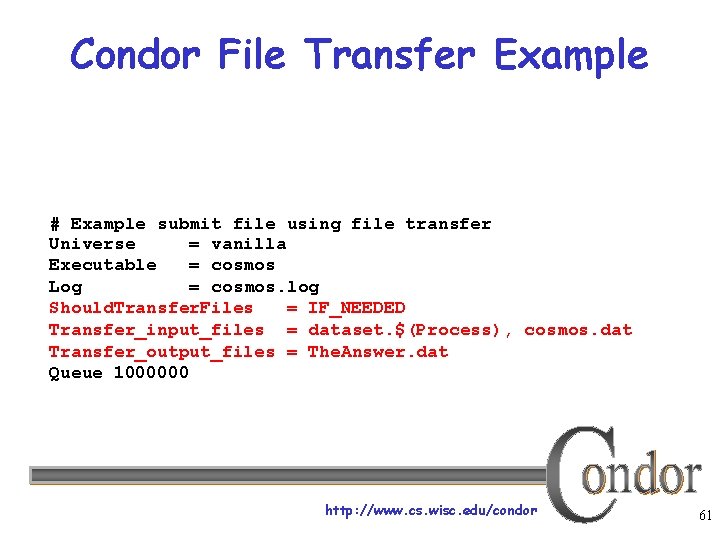

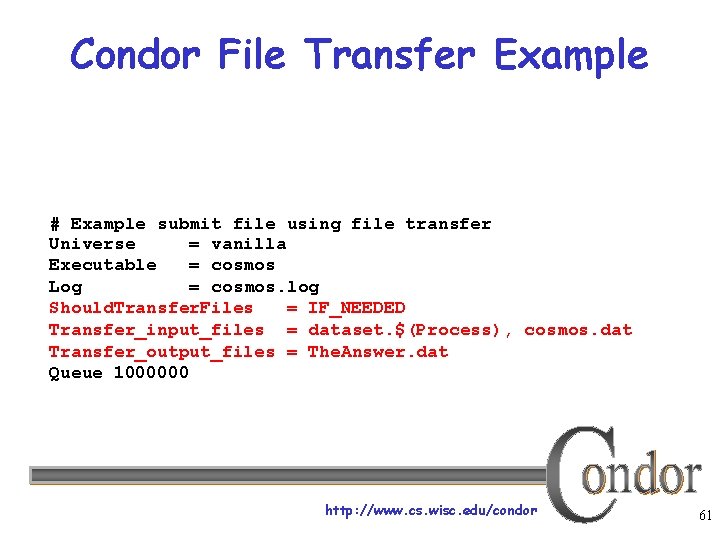

Condor File Transfer Example

    # Example submit file using file transfer
    Universe              = vanilla
    Executable            = cosmos
    Log                   = cosmos.log
    ShouldTransferFiles   = IF_NEEDED
    Transfer_input_files  = dataset.$(Process), cosmos.dat
    Transfer_output_files = TheAnswer.dat
    Queue 1000000

We Always Want More
Condor is managing and running our jobs, but:
§ Our CPU requirements are greater than our resources
§ Jobs are preempted more often than we like

Happy Day! The Physics Department purchased a dedicated cluster!
• The administrator installs Condor on all these new dedicated cluster nodes

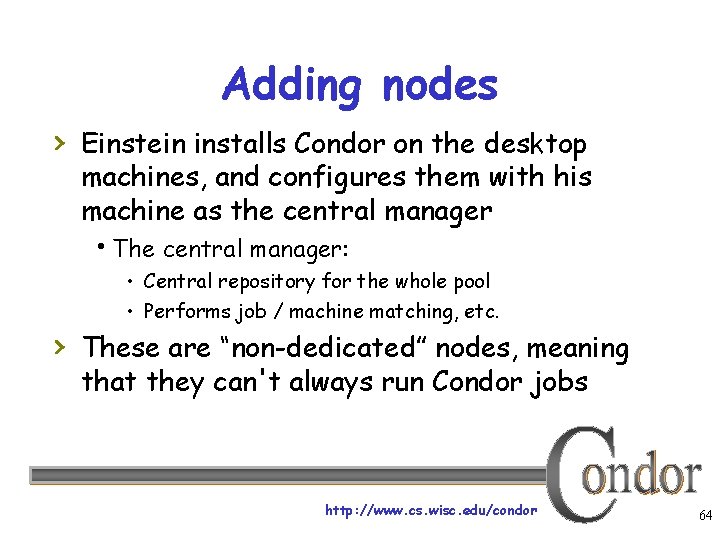

Adding nodes
› Einstein installs Condor on the desktop machines, and configures them with his machine as the central manager
  • The central manager:
    – Central repository for the whole pool
    – Performs job / machine matching, etc.
› These are "non-dedicated" nodes, meaning that they can't always run Condor jobs

Physics Department Condor Pool
With the additional resources, Albert and his colleagues can get their jobs completed faster.
[Figure: the pool now contains the Central Manager, Einstein's workstation with its Condor schedd and job queue of 1,000,000 Condor jobs, the dedicated cluster, and the desktop workstations.]

Some Good Questions…
• What are all of these Condor Daemons running on my machine?
• What do they do?

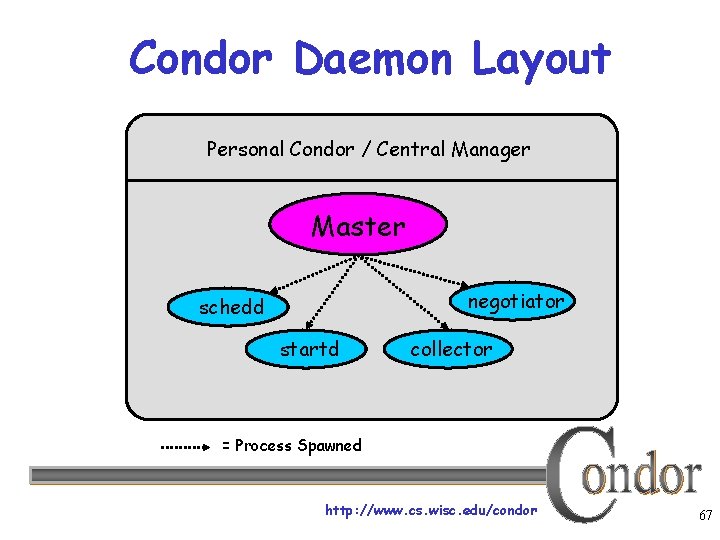

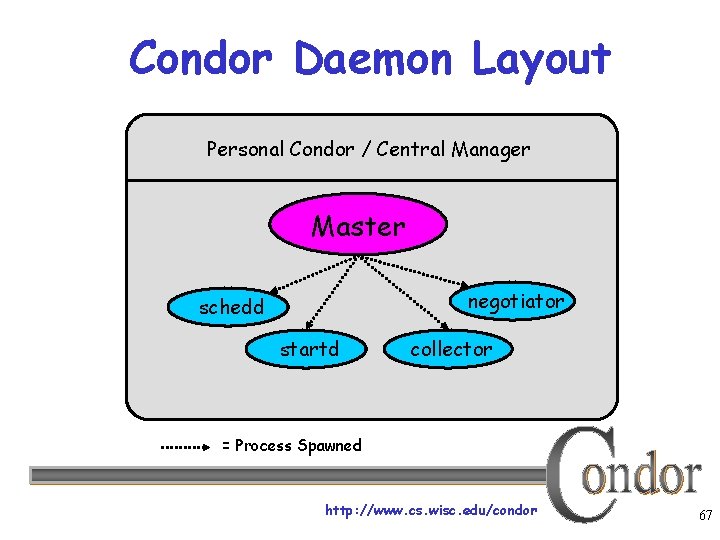

Condor Daemon Layout
[Figure: on a Personal Condor or Central Manager host, the master spawns the collector, negotiator, schedd and startd processes.]

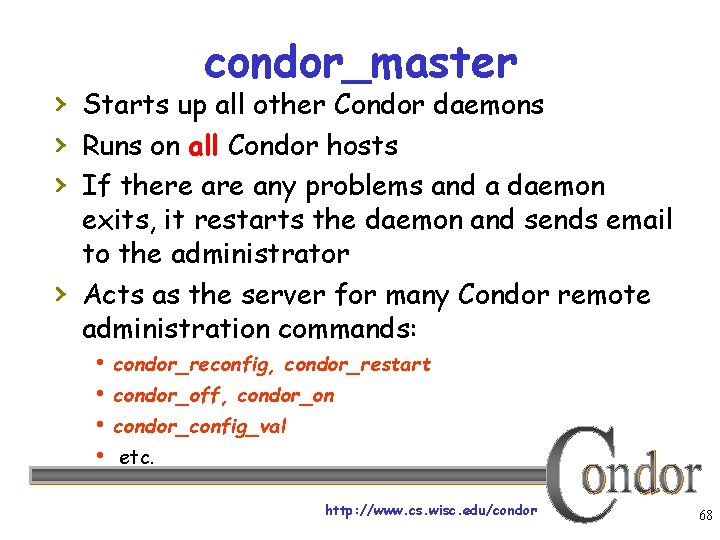

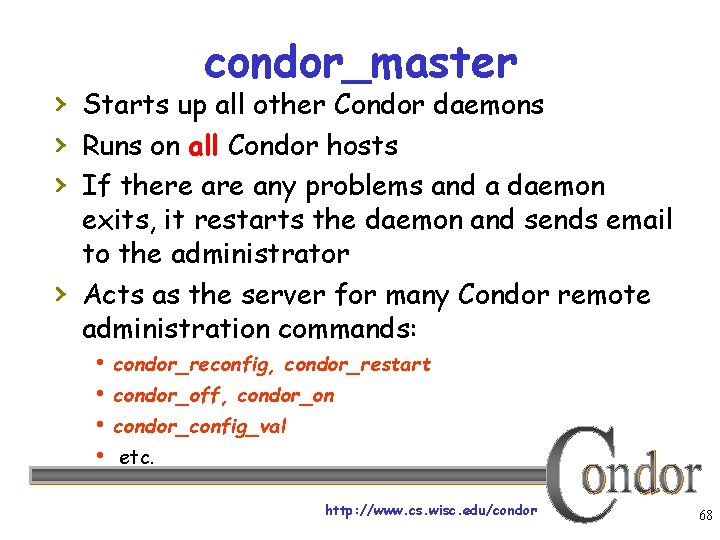

condor_master
› Starts up all other Condor daemons
› Runs on all Condor hosts
› If there are any problems and a daemon exits, it restarts the daemon and sends email to the administrator
› Acts as the server for many Condor remote administration commands:
  • condor_reconfig, condor_restart
  • condor_off, condor_on
  • condor_config_val
  • etc.

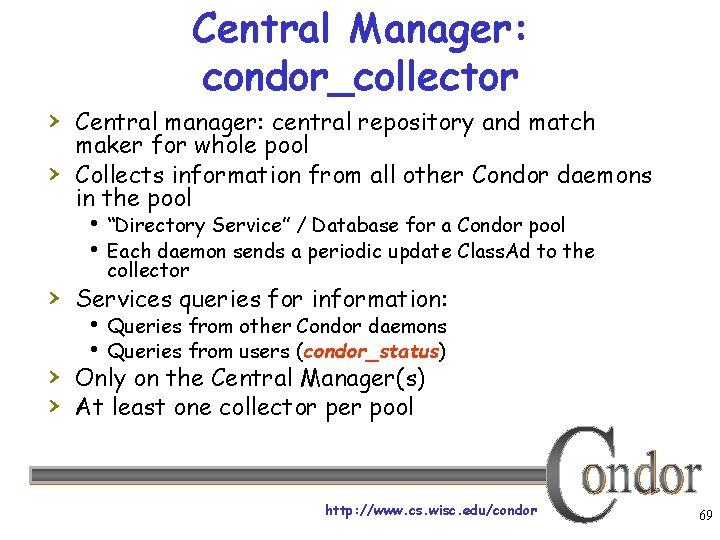

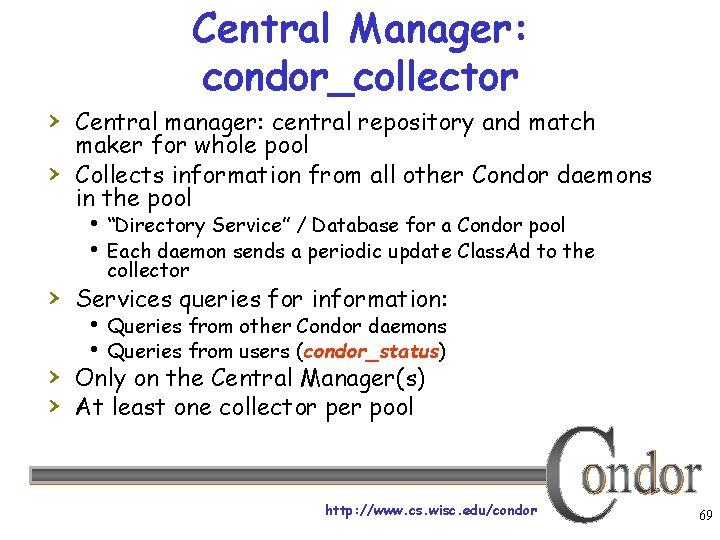

Central Manager: condor_collector
› Central manager: central repository and match maker for the whole pool
› Collects information from all other Condor daemons in the pool
  • "Directory Service" / Database for a Condor pool
  • Each daemon sends a periodic update ClassAd to the collector
› Services queries for information:
  • Queries from other Condor daemons
  • Queries from users (condor_status)
› Only on the Central Manager(s)
› At least one collector per pool

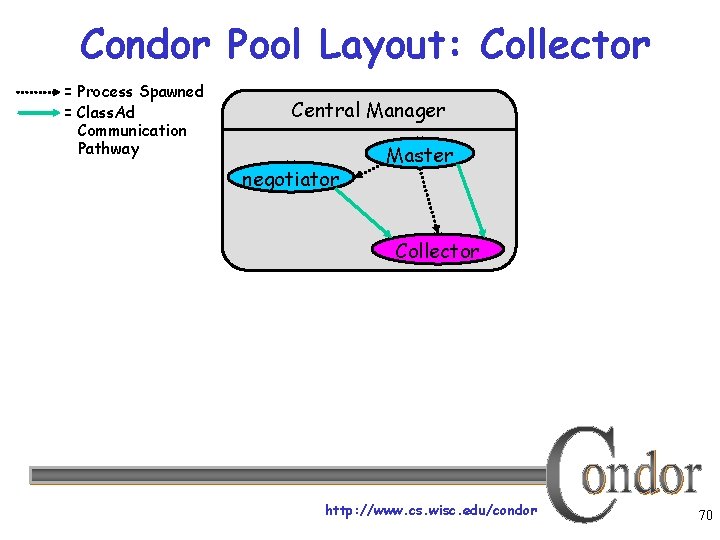

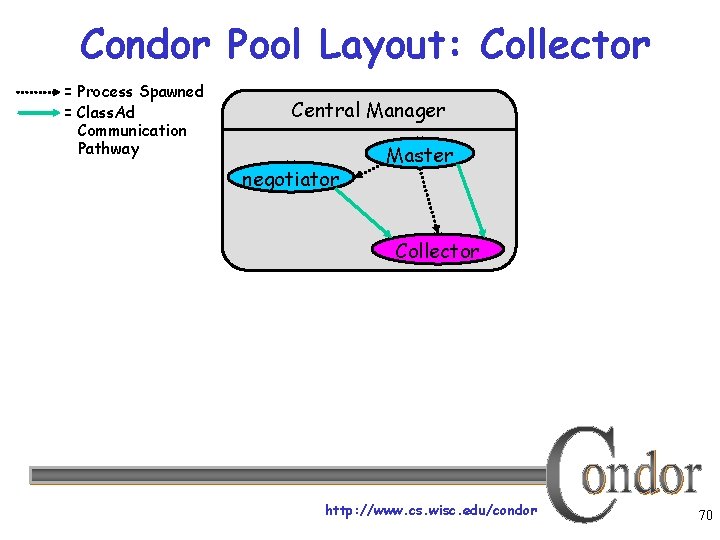

Condor Pool Layout: Collector
[Figure: the Central Manager, where the master spawns the collector and the negotiator. Legend: process spawned; ClassAd communication pathway.]

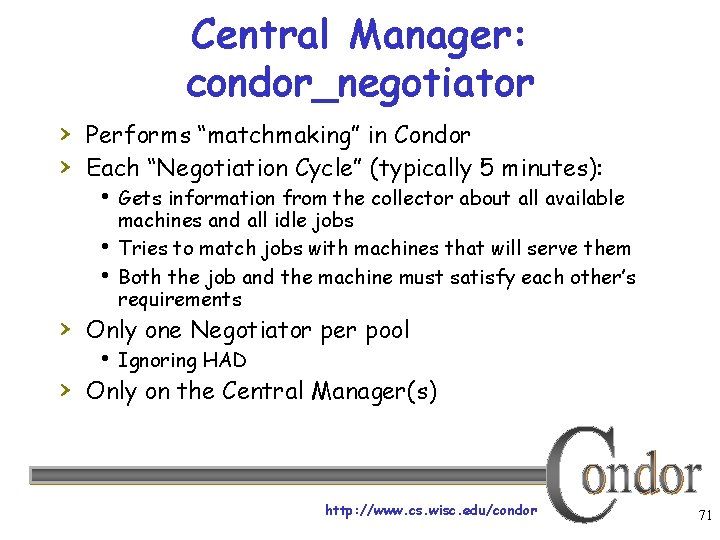

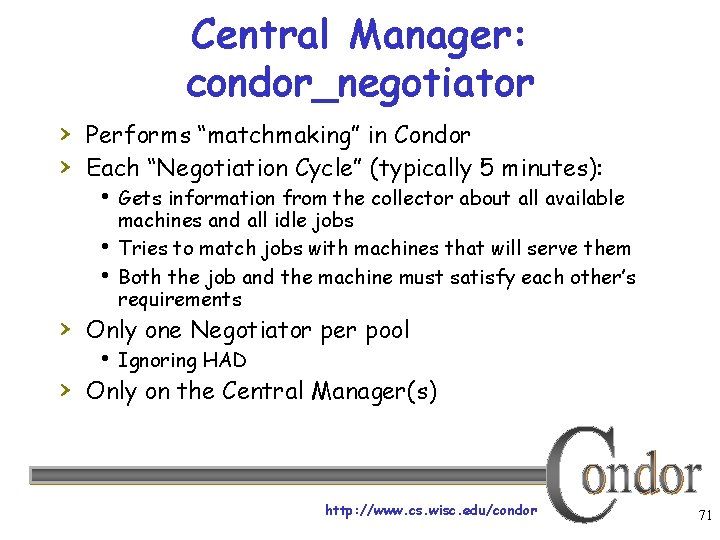

Central Manager: condor_negotiator
› Performs "matchmaking" in Condor
› Each "Negotiation Cycle" (typically 5 minutes):
  • Gets information from the collector about all available machines and all idle jobs
  • Tries to match jobs with machines that will serve them
  • Both the job and the machine must satisfy each other's requirements
› Only one Negotiator per pool
  • Ignoring HAD (the high availability daemon)
› Only on the Central Manager(s)
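
The "both sides must agree" rule is the heart of a negotiation cycle. A greatly simplified Python sketch of one cycle, assuming each Requirements expression is a Python predicate over (my ad, other ad) and using a greedy first-fit pairing; the real negotiator also weighs Rank, user priorities and more, and `Party`, `mutual_match` and `negotiation_cycle` are hypothetical names:

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

Ad = Dict[str, object]

@dataclass
class Party:
    """A job or a machine: its ClassAd plus its Requirements predicate."""
    name: str
    ad: Ad
    requirements: Callable[[Ad, Ad], bool]  # (my_ad, target_ad) -> bool

def mutual_match(job: Party, machine: Party) -> bool:
    """Both sides' Requirements must hold against the other side's ad."""
    return job.requirements(job.ad, machine.ad) and machine.requirements(machine.ad, job.ad)

def negotiation_cycle(idle_jobs: List[Party], machines: List[Party]) -> List[Tuple[str, str]]:
    """Greedy pass: each idle job takes the first still-free machine it mutually matches."""
    free = list(machines)
    matches = []
    for job in idle_jobs:
        for machine in free:
            if mutual_match(job, machine):
                matches.append((job.name, machine.name))
                free.remove(machine)
                break  # stop scanning after removing from the list
    return matches

def needs_4gb(me: Ad, other: Ad) -> bool:
    return other.get("Memory", 0) >= 4096

def no_heisenberg(me: Ad, other: Ad) -> bool:
    return other.get("Owner") != "heisenberg"

def anything(me: Ad, other: Ad) -> bool:
    return True

jobs = [Party("1.0", {"Owner": "heisenberg"}, needs_4gb),
        Party("2.0", {"Owner": "einstein"}, needs_4gb)]
machines = [Party("slot_a", {"Memory": 8192}, no_heisenberg),
            Party("slot_b", {"Memory": 2048}, anything)]
print(negotiation_cycle(jobs, machines))  # [('2.0', 'slot_a')]
```

Job 1.0 stays idle: slot_a refuses its owner, and slot_b has too little memory for it.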

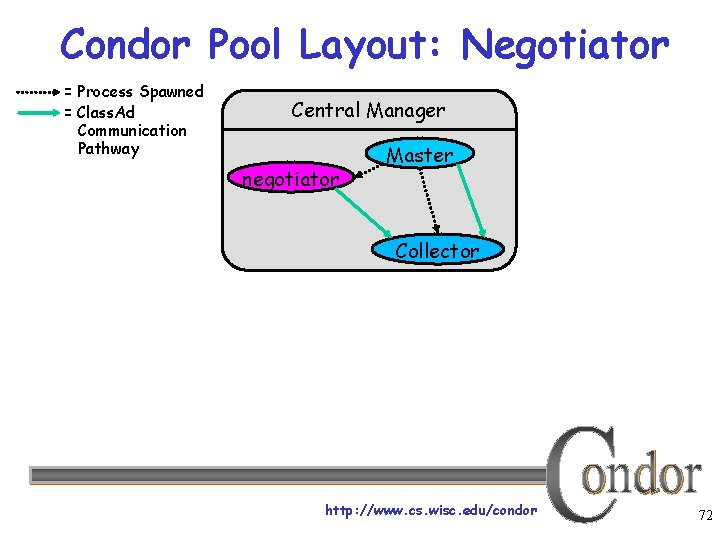

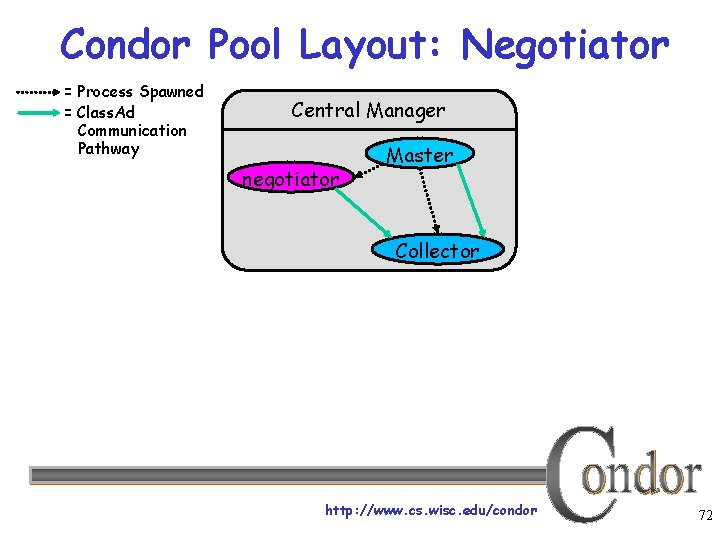

Condor Pool Layout: Negotiator
[Figure: the Central Manager, where the master spawns the negotiator and the collector; the negotiator is highlighted. Legend: process spawned; ClassAd communication pathway.]

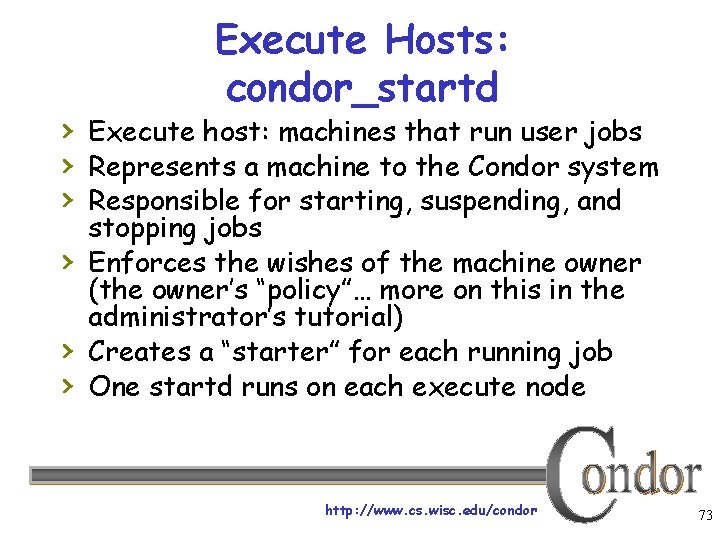

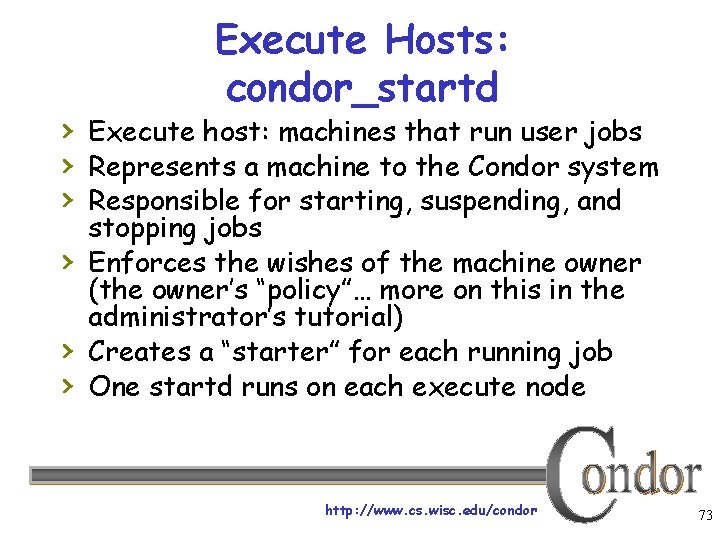

Execute Hosts: condor_startd
› Execute host: machines that run user jobs
› Represents a machine to the Condor system
› Responsible for starting, suspending, and stopping jobs
› Enforces the wishes of the machine owner (the owner's "policy"… more on this in the administrator's tutorial)
› Creates a "starter" for each running job
› One startd runs on each execute node

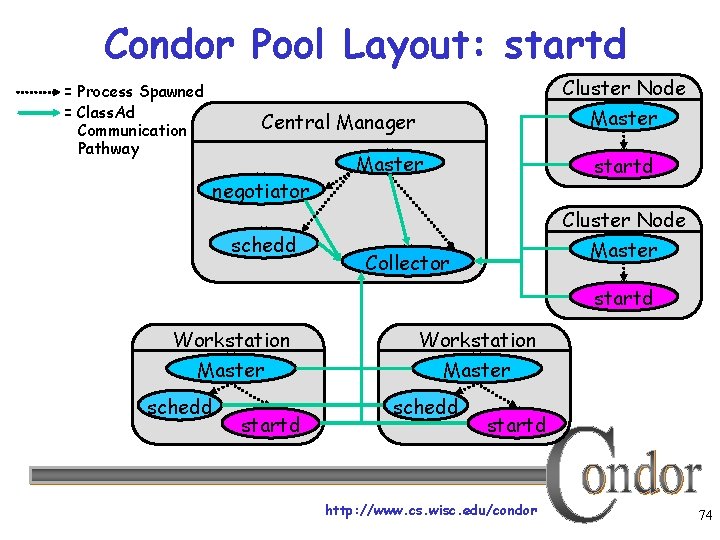

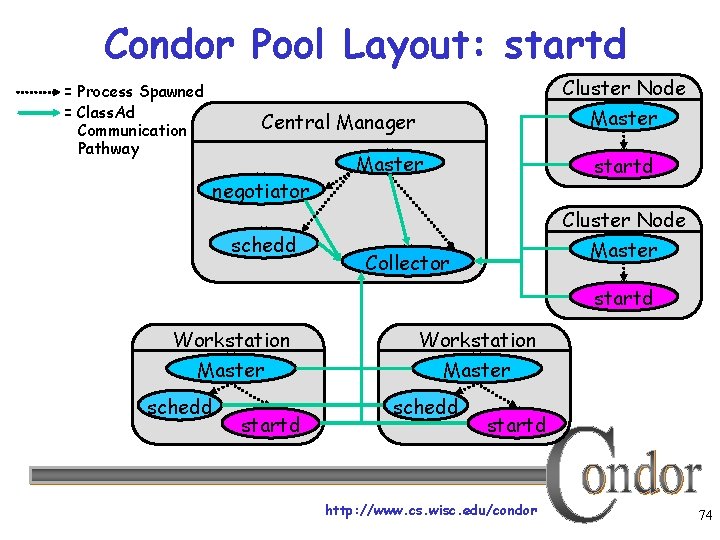

Condor Pool Layout: startd
[Figure: a pool with a Central Manager, cluster nodes and a workstation. Every host runs a master; the Central Manager runs the collector and negotiator, each cluster node runs a startd, and the workstation runs a schedd and a startd; the startds are highlighted. Legend: process spawned; ClassAd communication pathway.]

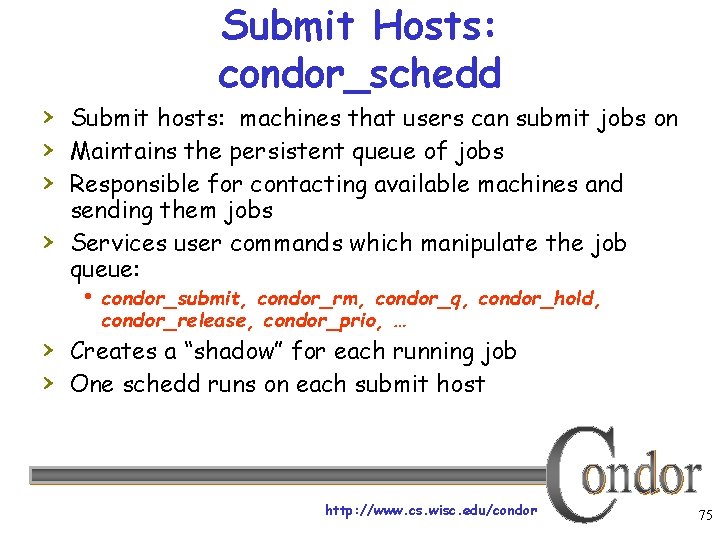

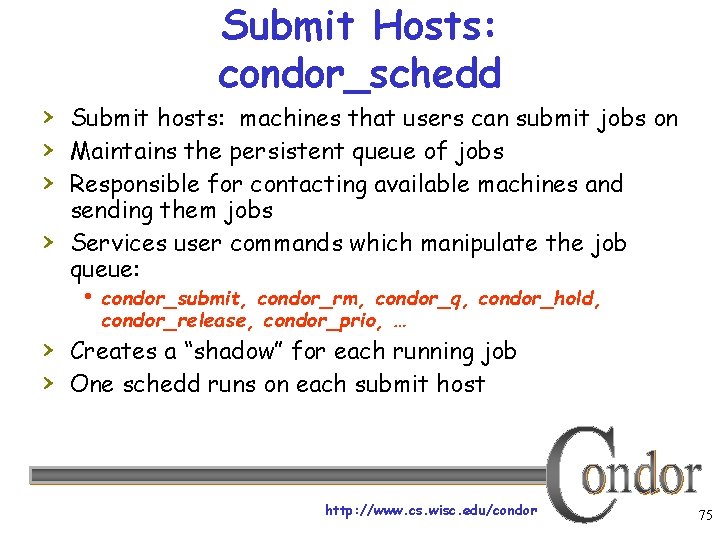

Submit Hosts: condor_schedd
› Submit hosts: machines that users can submit jobs on
› Maintains the persistent queue of jobs
› Responsible for contacting available machines and sending them jobs
› Services user commands which manipulate the job queue:
  • condor_submit, condor_rm, condor_q, condor_hold, condor_release, condor_prio, …
› Creates a "shadow" for each running job
› One schedd runs on each submit host

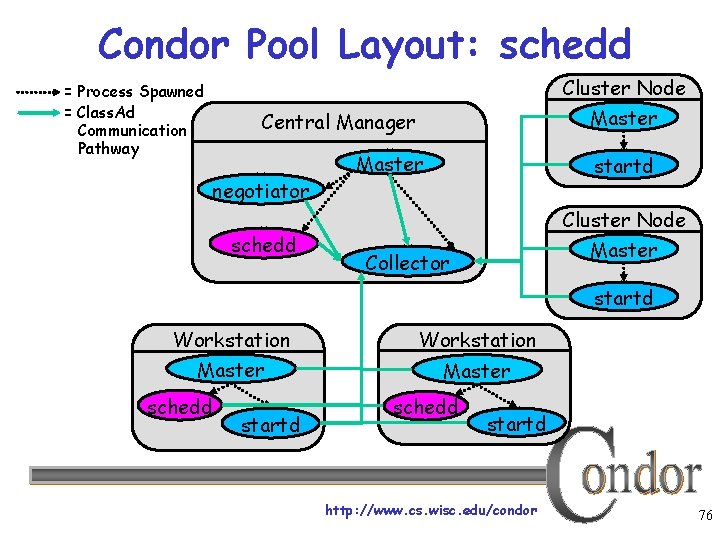

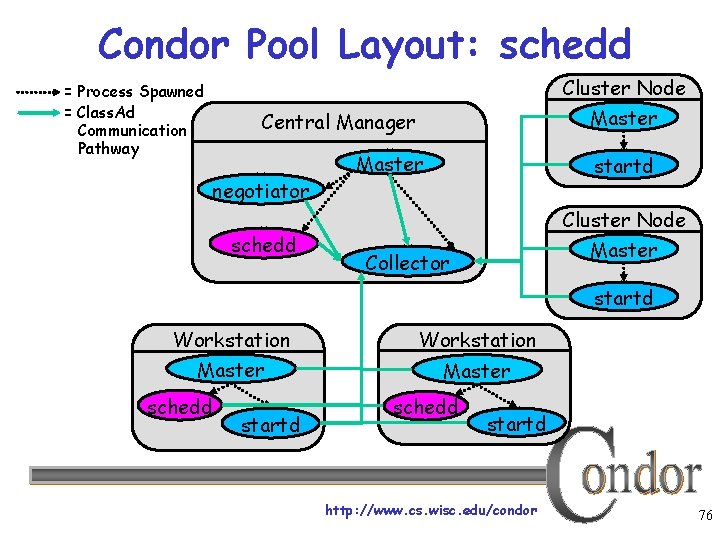

Condor Pool Layout: schedd
[Figure: the same pool of Central Manager, cluster nodes and workstation, with the schedd processes highlighted. Legend: process spawned; ClassAd communication pathway.]

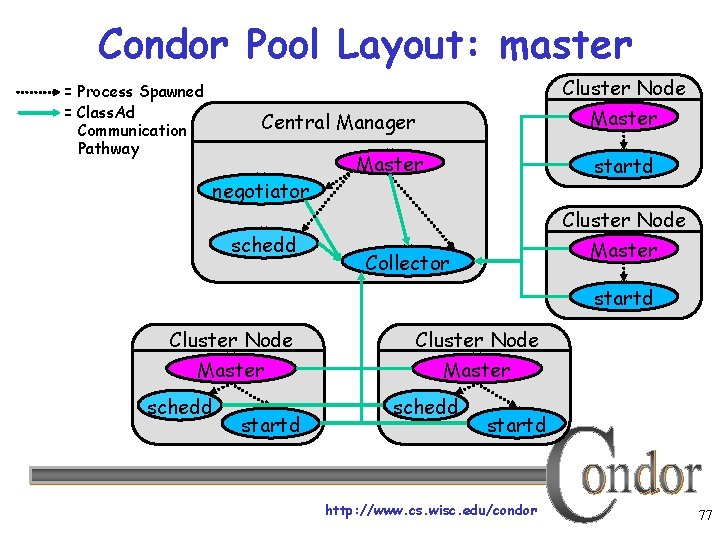

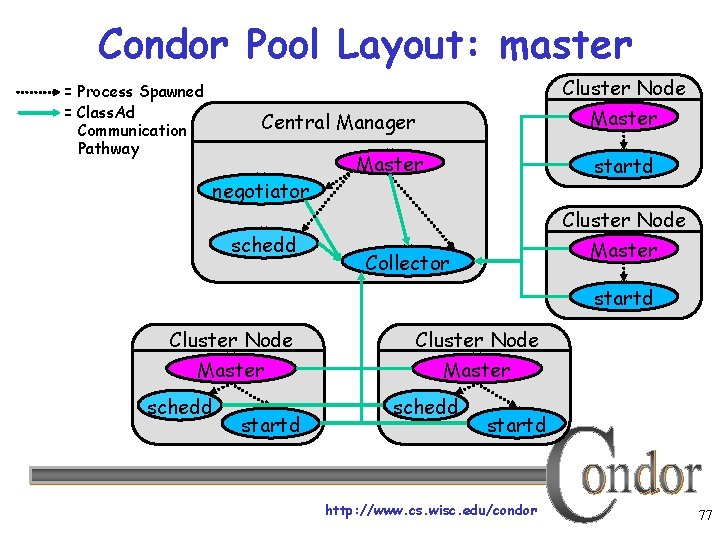

Condor Pool Layout: master
[Figure: the Central Manager and cluster nodes, each running a master that spawns that host's other daemons (collector, negotiator, schedd, startd as appropriate); the masters are highlighted. Legend: process spawned; ClassAd communication pathway.]

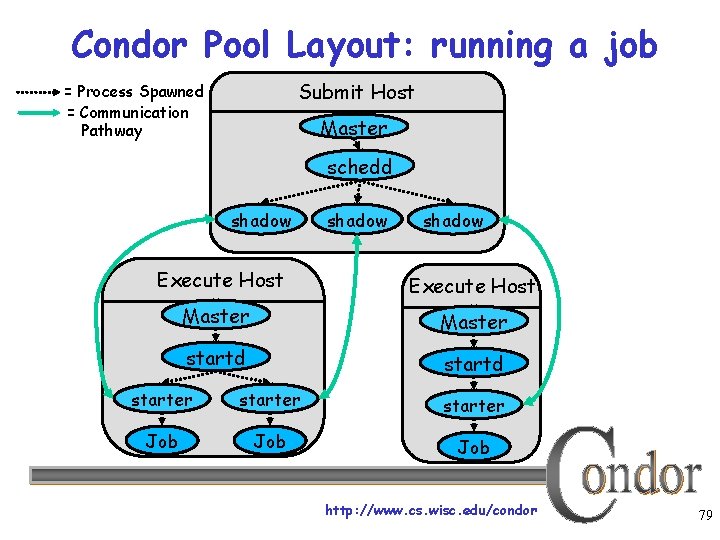

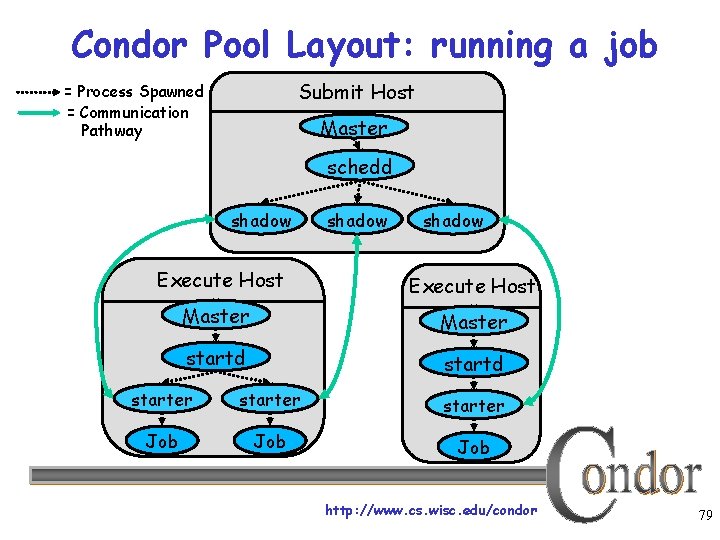

What about these "condor_shadow" processes?
› The Shadow processes are Condor's local representation of your running job
  • One is started for each job
› Similarly, on the "execute" machine, a condor_starter is run for each job

Condor Pool Layout: running a job
[Figure: on the submit host, the master spawns the schedd, which spawns a shadow for the job; on the execute host, the master spawns the startd, which spawns a starter that runs the job. The shadow and the starter communicate with each other.]

Now what?
› Some of the machines in the pool can't run my jobs
  • Not enough RAM
  • Not enough scratch disk space
  • Required software not installed
  • Etc.

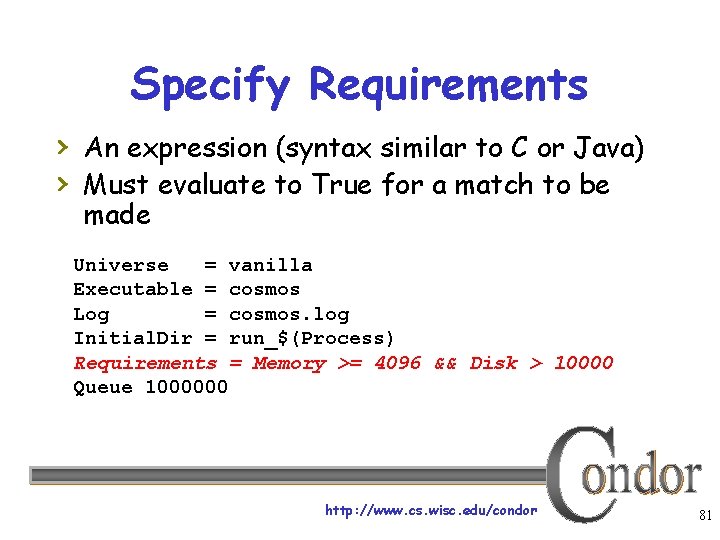

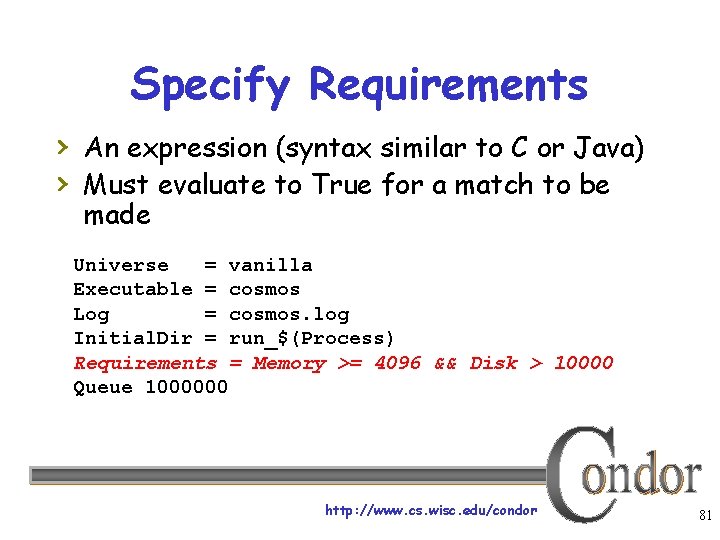

Specify Requirements
› An expression (syntax similar to C or Java)
› Must evaluate to True for a match to be made

    Universe     = vanilla
    Executable   = cosmos
    Log          = cosmos.log
    InitialDir   = run_$(Process)
    Requirements = Memory >= 4096 && Disk > 10000
    Queue 1000000

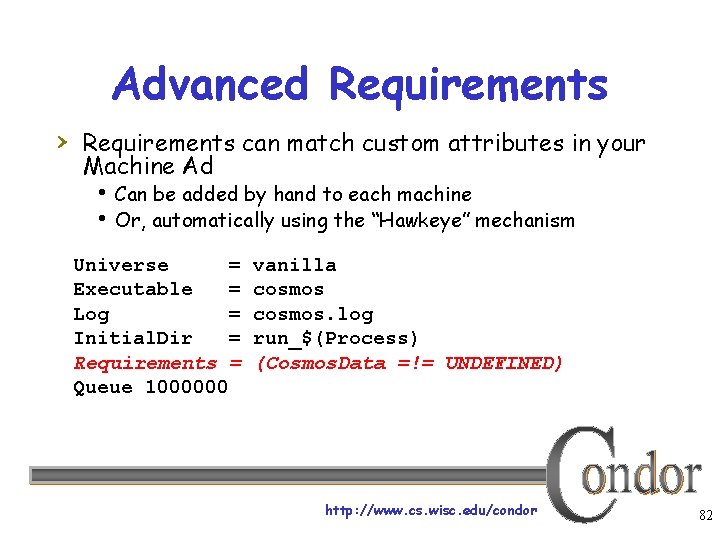

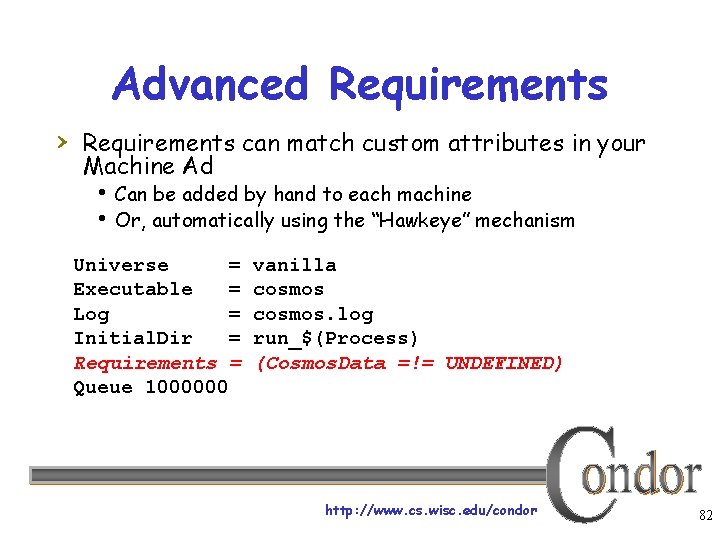

Advanced Requirements
› Requirements can match custom attributes in your Machine Ad
  • Can be added by hand to each machine
  • Or, automatically using the "Hawkeye" mechanism

    Universe     = vanilla
    Executable   = cosmos
    Log          = cosmos.log
    InitialDir   = run_$(Process)
    Requirements = (CosmosData =!= UNDEFINED)
    Queue 1000000

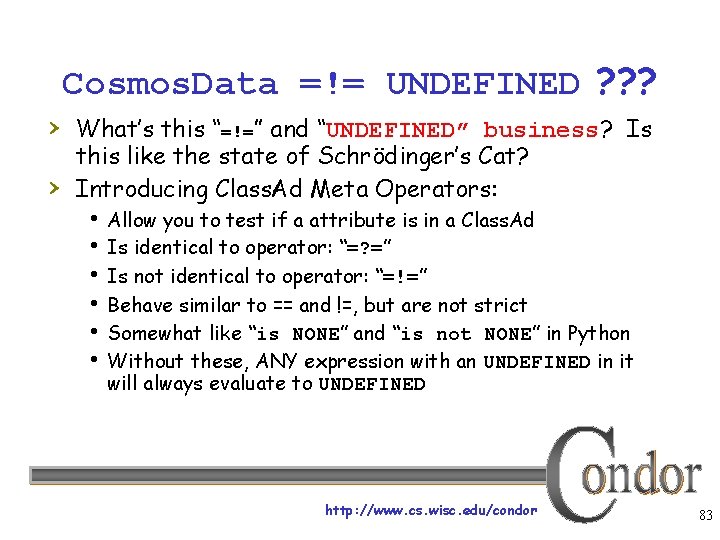

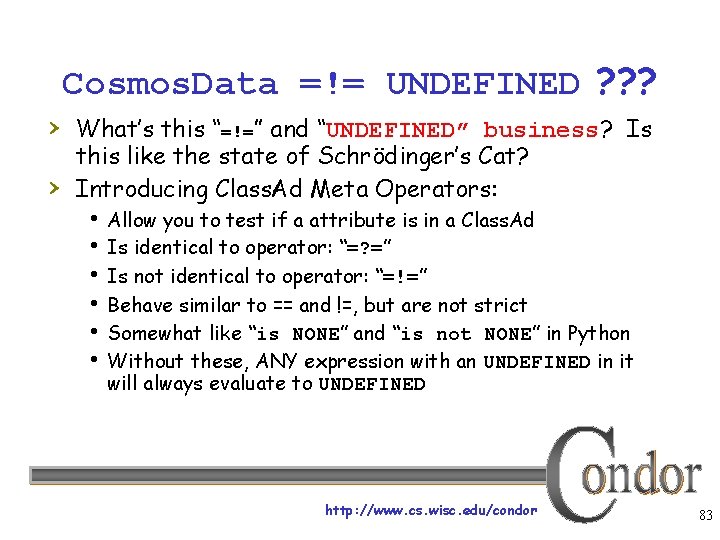

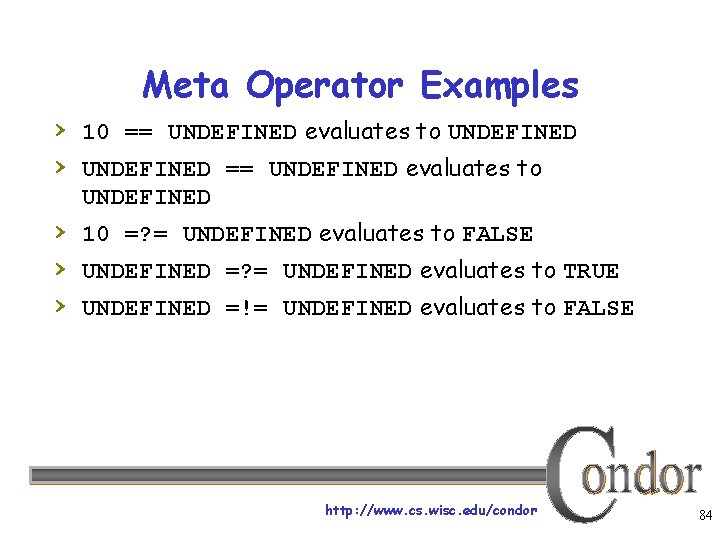

CosmosData =!= UNDEFINED ???
› What's this "=!=" and "UNDEFINED" business?
  • Is this like the state of Schrödinger's Cat?
› Introducing ClassAd Meta Operators:
  • Allow you to test if an attribute is in a ClassAd
  • Is identical to operator: "=?="
  • Is not identical to operator: "=!="
  • Behave similar to == and !=, but are not strict
  • Somewhat like "is None" and "is not None" in Python
  • Without these, a comparison such as == or != that involves UNDEFINED evaluates to UNDEFINED

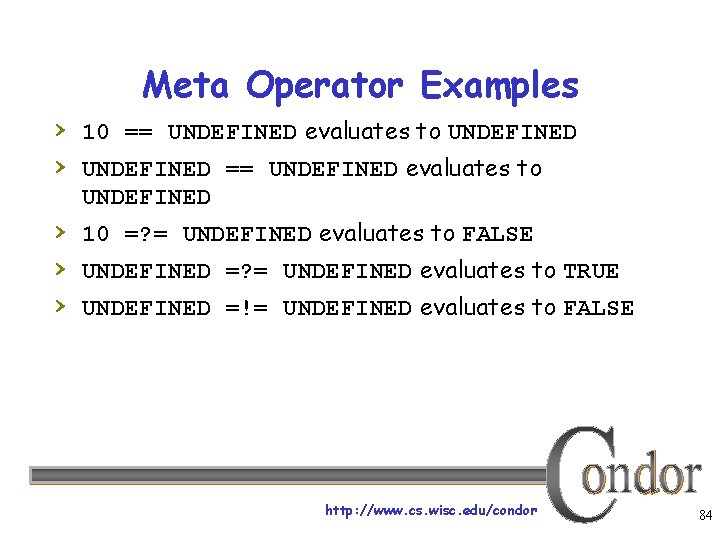

Meta Operator Examples
› 10 == UNDEFINED evaluates to UNDEFINED
› UNDEFINED == UNDEFINED evaluates to UNDEFINED
› 10 =?= UNDEFINED evaluates to FALSE
› UNDEFINED =?= UNDEFINED evaluates to TRUE
› UNDEFINED =!= UNDEFINED evaluates to FALSE

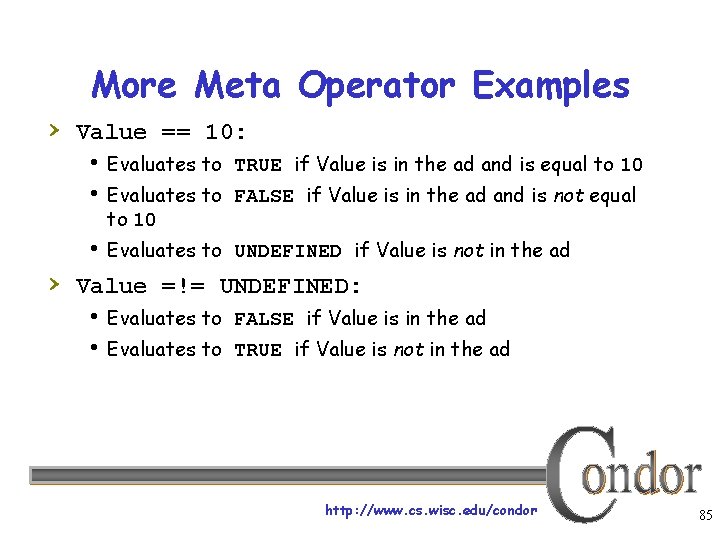

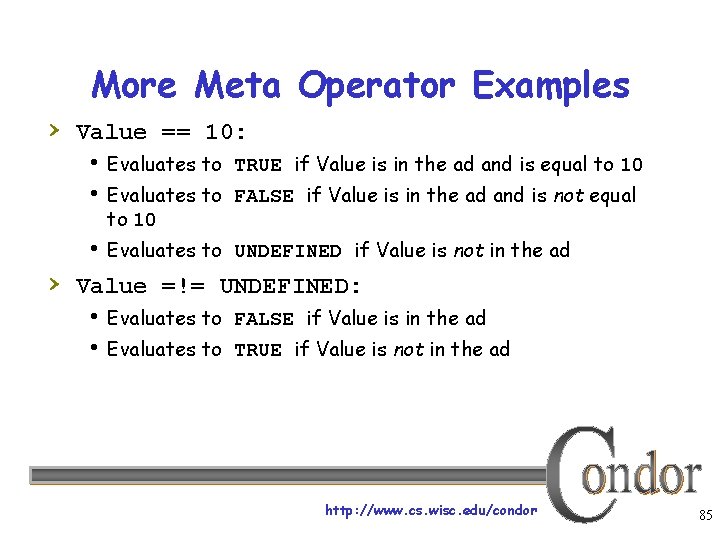

More Meta Operator Examples
› Value == 10:
  • Evaluates to TRUE if Value is in the ad and is equal to 10
  • Evaluates to FALSE if Value is in the ad and is not equal to 10
  • Evaluates to UNDEFINED if Value is not in the ad
› Value =!= UNDEFINED:
  • Evaluates to TRUE if Value is in the ad
  • Evaluates to FALSE if Value is not in the ad

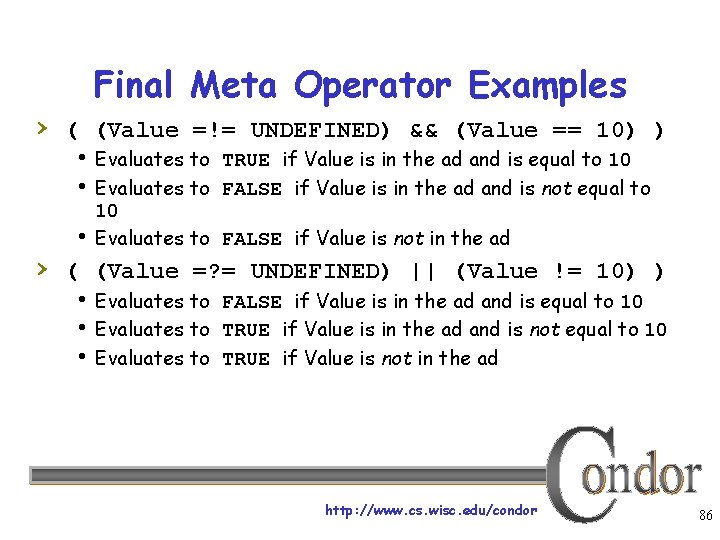

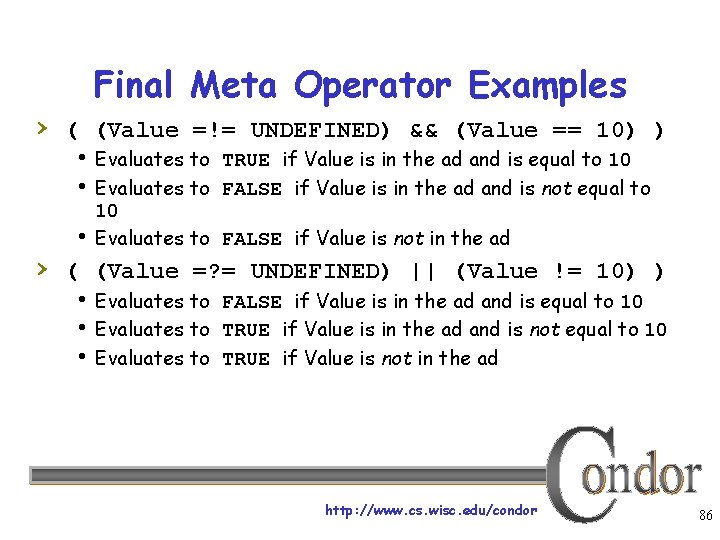

Final Meta Operator Examples
› ( (Value =!= UNDEFINED) && (Value == 10) )
  • Evaluates to TRUE if Value is in the ad and is equal to 10
  • Evaluates to FALSE if Value is in the ad and is not equal to 10
  • Evaluates to FALSE if Value is not in the ad
› ( (Value =?= UNDEFINED) || (Value != 10) )
  • Evaluates to FALSE if Value is in the ad and is equal to 10
  • Evaluates to TRUE if Value is in the ad and is not equal to 10
  • Evaluates to TRUE if Value is not in the ad
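
The tables above follow from three-valued logic: strict operators propagate UNDEFINED, the meta operators never return it, `&&` lets FALSE win over UNDEFINED, and `||` lets TRUE win. A minimal Python sketch of those rules, assuming integer and Boolean values only (it ignores ClassAd's ERROR value and its case-insensitive string comparison for `==`); all function names are hypothetical:

```python
class _Undefined:
    """Singleton standing in for the ClassAd UNDEFINED value."""
    def __repr__(self):
        return "UNDEFINED"

UNDEFINED = _Undefined()

def eq(a, b):
    """Strict ==: UNDEFINED if either side is UNDEFINED."""
    if a is UNDEFINED or b is UNDEFINED:
        return UNDEFINED
    return a == b

def ne(a, b):
    """Strict !=."""
    r = eq(a, b)
    return r if r is UNDEFINED else not r

def is_identical(a, b):
    """Meta operator =?= : always TRUE or FALSE, never UNDEFINED."""
    if a is UNDEFINED or b is UNDEFINED:
        return a is b
    return a == b

def is_not_identical(a, b):
    """Meta operator =!= ."""
    return not is_identical(a, b)

def and_(a, b):
    """&&: FALSE wins over UNDEFINED."""
    if a is False or b is False:
        return False
    if a is UNDEFINED or b is UNDEFINED:
        return UNDEFINED
    return True

def or_(a, b):
    """||: TRUE wins over UNDEFINED."""
    if a is True or b is True:
        return True
    if a is UNDEFINED or b is UNDEFINED:
        return UNDEFINED
    return False

def attr(ad, name):
    """A missing attribute evaluates to UNDEFINED."""
    return ad.get(name, UNDEFINED)

for ad in ({"Value": 10}, {"Value": 7}, {}):
    v = attr(ad, "Value")
    guarded = and_(is_not_identical(v, UNDEFINED), eq(v, 10))
    print(ad, eq(v, 10), guarded)
```

For the empty ad, `Value == 10` is UNDEFINED on its own, but the guarded form is a clean FALSE, which is why Requirements expressions often test `=!= UNDEFINED` first.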

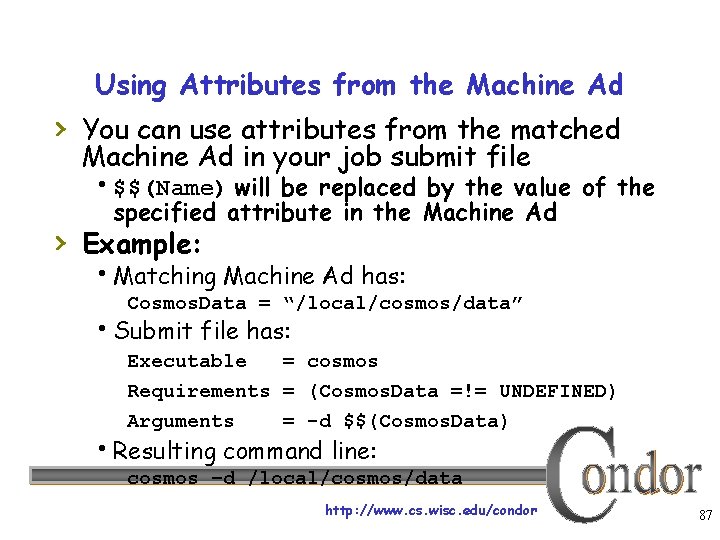

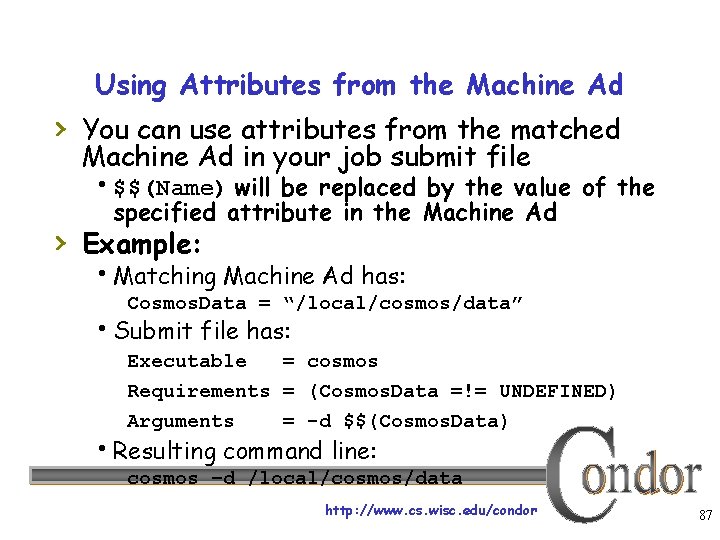

Using Attributes from the Machine Ad
› You can use attributes from the matched Machine Ad in your job submit file
  • $$(AttributeName) will be replaced by the value of the specified attribute in the Machine Ad
› Example:
  • Matching Machine Ad has:

    CosmosData = "/local/cosmos/data"

  • Submit file has:

    Executable   = cosmos
    Requirements = (CosmosData =!= UNDEFINED)
    Arguments    = -d $$(CosmosData)

  • Resulting command line:

    cosmos -d /local/cosmos/data
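
The `$$()` substitution happens only after a match, because the value comes from the chosen machine's ad. A minimal Python sketch of the substitution step, assuming case-sensitive attribute names and raising an error when the attribute is missing (what Condor itself does in that case is not covered here); `expand_machine_attrs` is a hypothetical name:

```python
import re

MACHINE_MACRO = re.compile(r"\$\$\((\w+)\)")

def expand_machine_attrs(template, machine_ad):
    """Replace each $$(Attr) with the matched machine's value for Attr."""
    def substitute(m):
        name = m.group(1)
        if name not in machine_ad:
            raise KeyError(f"machine ad has no attribute {name!r}")
        return str(machine_ad[name])
    return MACHINE_MACRO.sub(substitute, template)

machine_ad = {"Name": "vm1@tonic", "CosmosData": "/local/cosmos/data"}
print("cosmos " + expand_machine_attrs("-d $$(CosmosData)", machine_ad))
# prints: cosmos -d /local/cosmos/data
```

Pairing `$$(CosmosData)` with the `CosmosData =!= UNDEFINED` requirement guarantees that every matched machine actually has the attribute.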

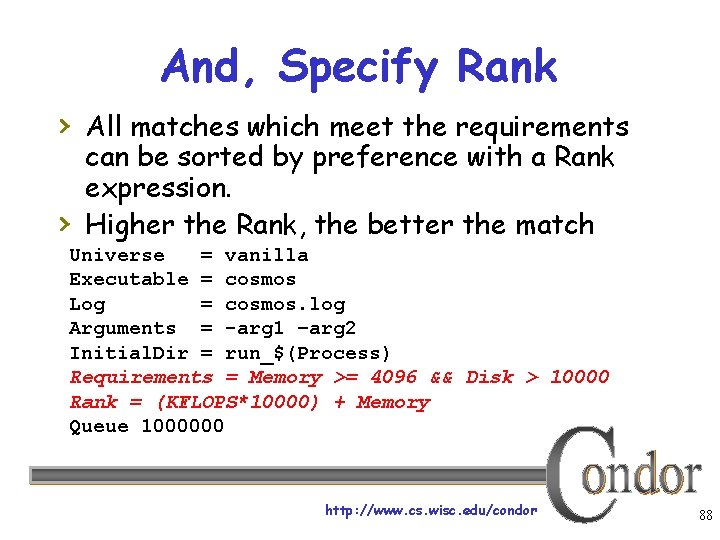

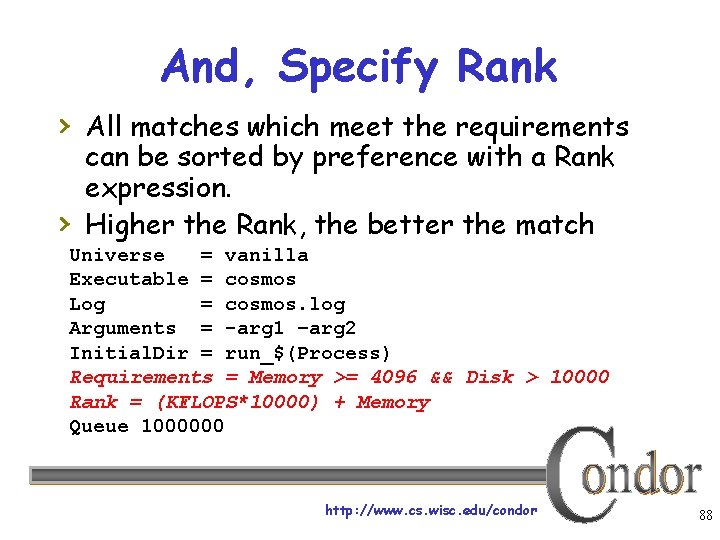

And, Specify Rank
› All matches which meet the requirements can be sorted by preference with a Rank expression
› The higher the Rank, the better the match

    Universe     = vanilla
    Executable   = cosmos
    Log          = cosmos.log
    Arguments    = -arg1 -arg2
    InitialDir   = run_$(Process)
    Requirements = Memory >= 4096 && Disk > 10000
    Rank         = (KFLOPS*10000) + Memory
    Queue 1000000
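
Requirements filter and Rank orders. A minimal Python sketch of that two-step choice for the submit file above, assuming machine ads are dicts with `Memory`, `Disk` and `KFLOPS` keys; the three machines and their numbers are hypothetical, and `best_matches` is not a Condor function:

```python
def requirements(m):
    # Requirements = Memory >= 4096 && Disk > 10000
    return m["Memory"] >= 4096 and m["Disk"] > 10000

def rank(m):
    # Rank = (KFLOPS*10000) + Memory
    return m["KFLOPS"] * 10000 + m["Memory"]

def best_matches(machines):
    """Machines that satisfy Requirements, highest Rank first."""
    eligible = [m for m in machines if requirements(m)]
    return sorted(eligible, key=rank, reverse=True)

machines = [  # hypothetical machine ads
    {"Name": "slot1@a", "Memory": 8192, "Disk": 50000, "KFLOPS": 900000},
    {"Name": "slot1@b", "Memory": 4096, "Disk": 20000, "KFLOPS": 1200000},
    {"Name": "slot1@c", "Memory": 2048, "Disk": 90000, "KFLOPS": 2000000},
]
print([m["Name"] for m in best_matches(machines)])  # ['slot1@b', 'slot1@a']
```

With KFLOPS weighted by 10000, speed dominates the ordering and Memory mostly breaks ties; slot1@c is never ranked because it fails Requirements.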

My jobs aren't running!!

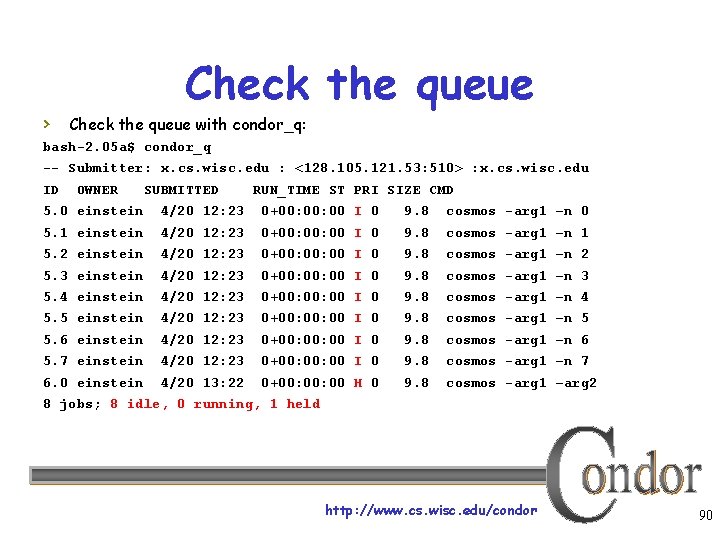

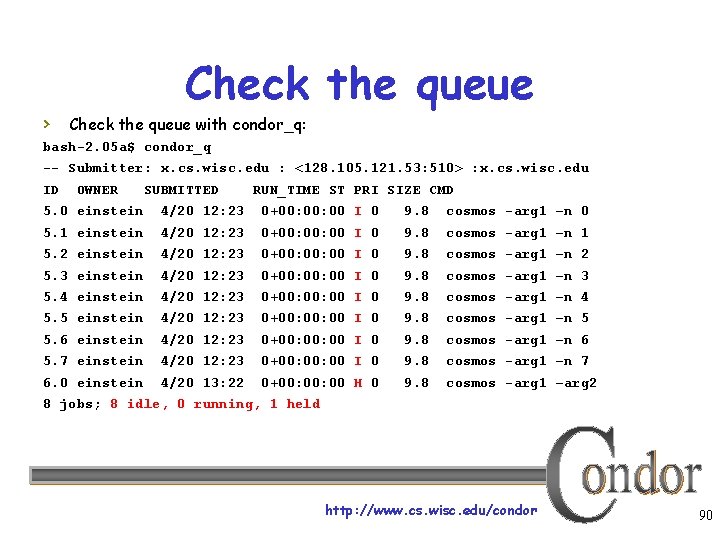

Check the queue
› Check the queue with condor_q:

    bash-2.05a$ condor_q

    -- Submitter: x.cs.wisc.edu : <128.105.121.53:510> : x.cs.wisc.edu
     ID      OWNER     SUBMITTED    RUN_TIME ST PRI SIZE CMD
     5.0     einstein  4/20 12:23  0+00:00  I  0   9.8  cosmos -arg1 -n 0
     5.1     einstein  4/20 12:23  0+00:00  I  0   9.8  cosmos -arg1 -n 1
     5.2     einstein  4/20 12:23  0+00:00  I  0   9.8  cosmos -arg1 -n 2
     5.3     einstein  4/20 12:23  0+00:00  I  0   9.8  cosmos -arg1 -n 3
     5.4     einstein  4/20 12:23  0+00:00  I  0   9.8  cosmos -arg1 -n 4
     5.5     einstein  4/20 12:23  0+00:00  I  0   9.8  cosmos -arg1 -n 5
     5.6     einstein  4/20 12:23  0+00:00  I  0   9.8  cosmos -arg1 -n 6
     5.7     einstein  4/20 12:23  0+00:00  I  0   9.8  cosmos -arg1 -n 7
     6.0     einstein  4/20 13:22  0+00:00  H  0   9.8  cosmos -arg1 -arg2

    9 jobs; 8 idle, 0 running, 1 held

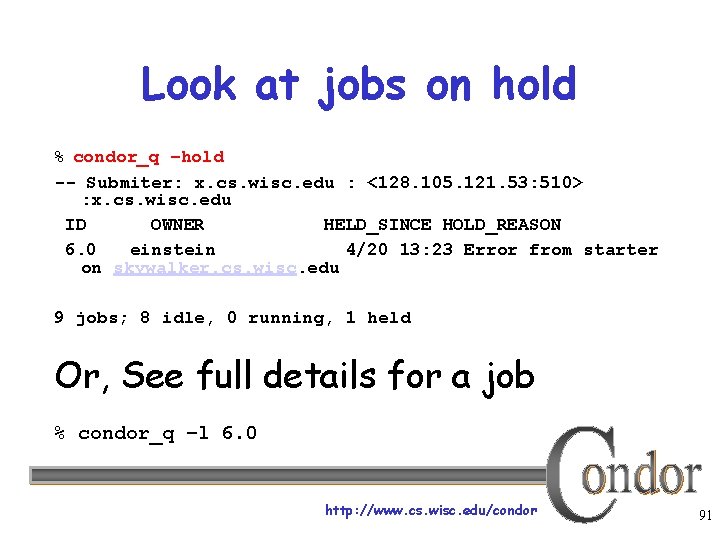

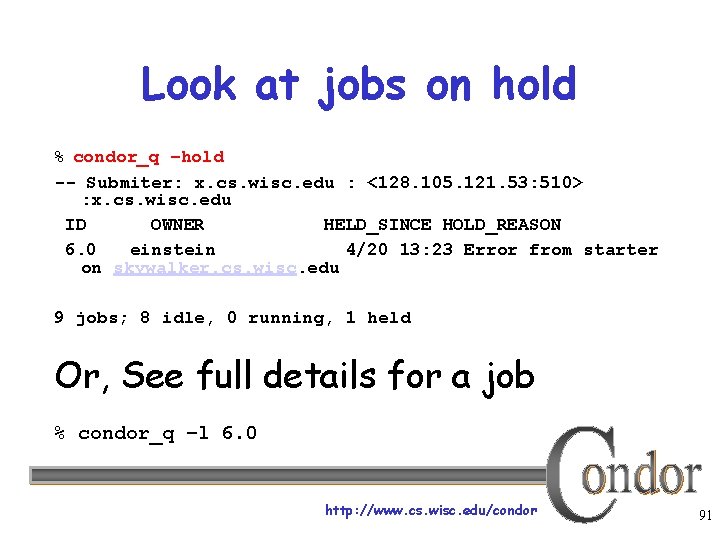

Look at jobs on hold

    % condor_q -hold

    -- Submitter: x.cs.wisc.edu : <128.105.121.53:510> : x.cs.wisc.edu
     ID      OWNER     HELD_SINCE  HOLD_REASON
     6.0     einstein  4/20 13:23  Error from starter on skywalker.cs.wisc.edu

    9 jobs; 8 idle, 0 running, 1 held

Or, see full details for a job:

    % condor_q -l 6.0

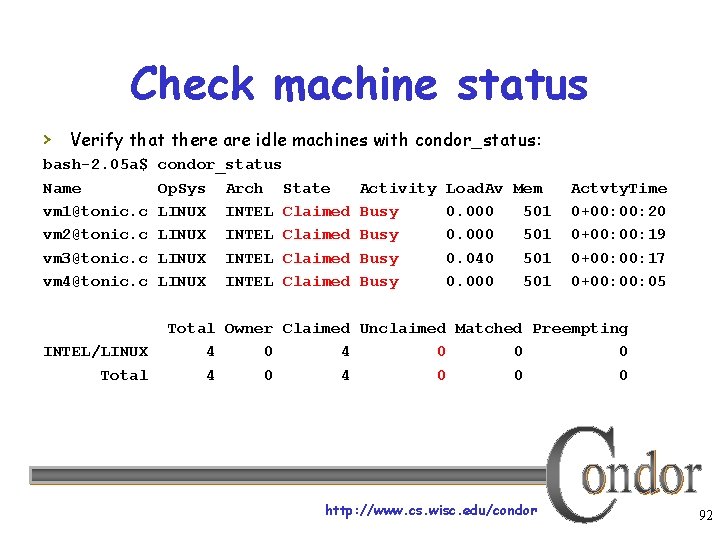

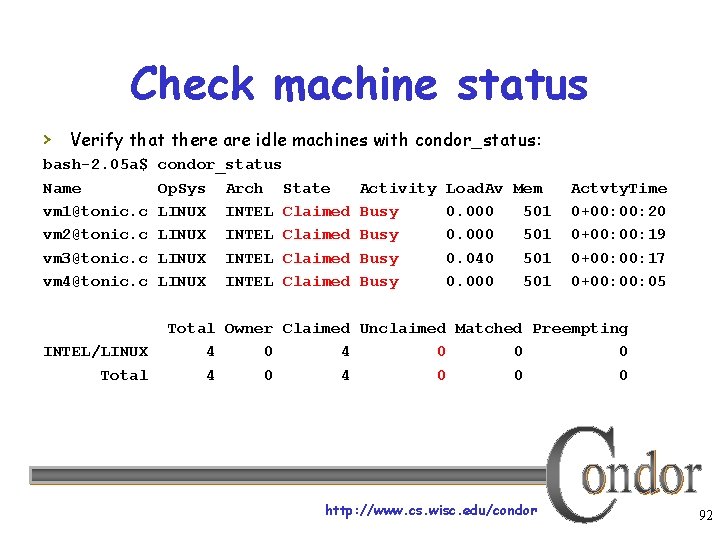

Check machine status
› Verify that there are idle machines with condor_status:

    bash-2.05a$ condor_status

    Name        OpSys  Arch   State    Activity LoadAv Mem  ActvtyTime

    vm1@tonic.c LINUX  INTEL  Claimed  Busy     0.000  501  0+00:20
    vm2@tonic.c LINUX  INTEL  Claimed  Busy     0.040  501  0+00:19
    vm3@tonic.c LINUX  INTEL  Claimed  Busy     0.000  501  0+00:17
    vm4@tonic.c LINUX  INTEL  Claimed  Busy     …      …    0+00:05

                  Total Owner Claimed Unclaimed Matched Preempting
    INTEL/LINUX       4     0       4         0       0          0
          Total       4     0       4         0       0          0

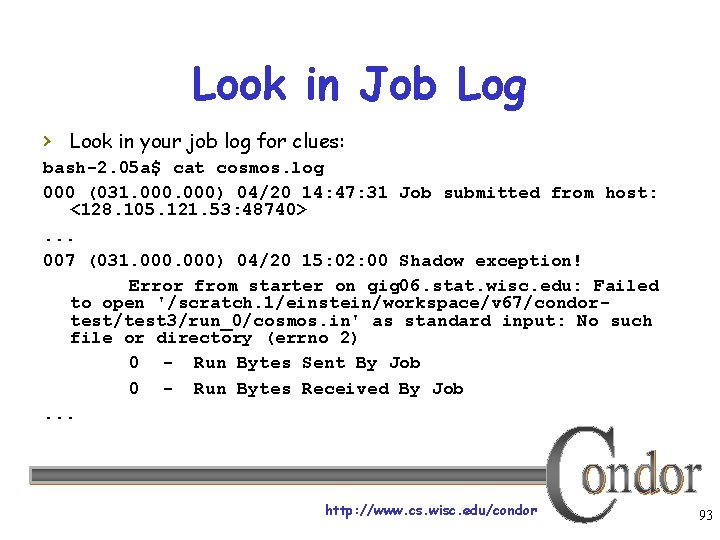

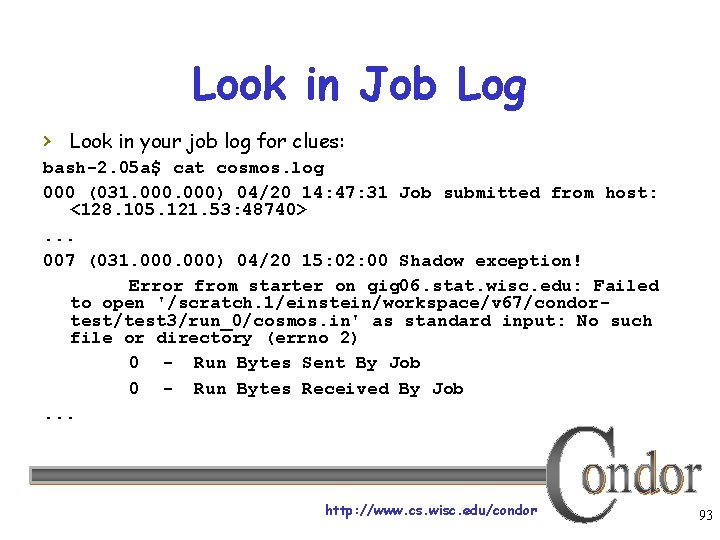

Look in Job Log
› Look in your job log for clues:

    bash-2.05a$ cat cosmos.log
    000 (031.000) 04/20 14:47:31 Job submitted from host: <128.105.121.53:48740>
    ...
    007 (031.000) 04/20 15:02:00 Shadow exception!
            Error from starter on gig06.stat.wisc.edu: Failed to open '/scratch.1/einstein/workspace/v67/condortest/test3/run_0/cosmos.in' as standard input: No such file or directory (errno 2)
            0  -  Run Bytes Sent By Job
            0  -  Run Bytes Received By Job
    ...

Still not running? Exercise a little patience › On a busy pool, it can take a while to match and start your jobs › Wait at least a negotiation cycle or two (typically a few minutes)

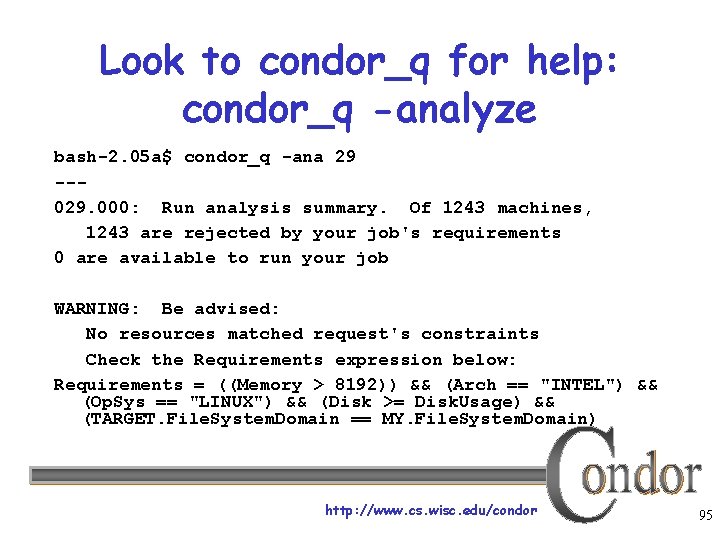

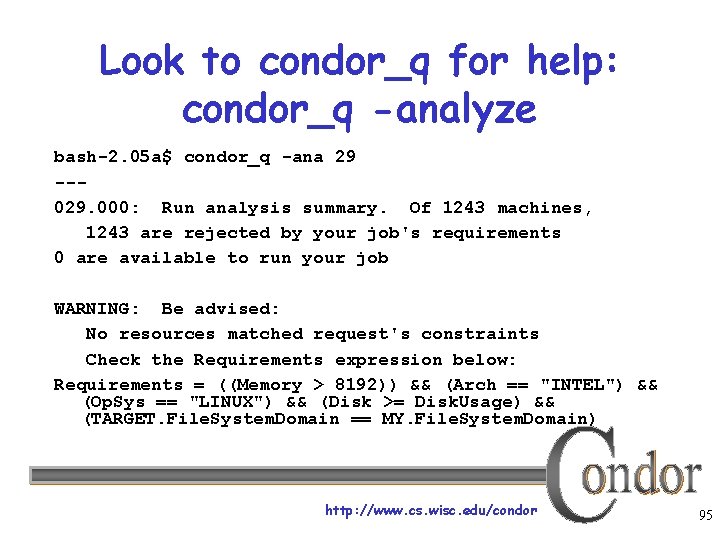

Look to condor_q for help: condor_q -analyze

bash-2.05a$ condor_q -ana 29
029.000: Run analysis summary. Of 1243 machines,
1243 are rejected by your job's requirements
0 are available to run your job
WARNING: Be advised: No resources matched request's constraints
Check the Requirements expression below:
Requirements = ((Memory > 8192)) && (Arch == "INTEL") && (OpSys == "LINUX") && (Disk >= DiskUsage) && (TARGET.FileSystemDomain == MY.FileSystemDomain)
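What -analyze reports boils down to counting how many machine ads satisfy the job's Requirements expression. A rough Python analogue, assuming machine ads are plain dictionaries and hand-translating the Requirements expression above into a Python predicate (this is not ClassAd evaluation, and the two machines below are hypothetical):

```python
def requirements(machine, job):
    """The job's Requirements expression from the slide, written as a Python predicate."""
    return (machine["Memory"] > 8192
            and machine["Arch"] == "INTEL"
            and machine["OpSys"] == "LINUX"
            and machine["Disk"] >= job["DiskUsage"]
            and machine["FileSystemDomain"] == job["FileSystemDomain"])


def analyze(machines, job):
    """Return (rejected, available) counts, like the condor_q -analyze summary."""
    matched = sum(1 for m in machines if requirements(m, job))
    return len(machines) - matched, matched


# Hypothetical pool in which no machine advertises more than 8192 MB of memory
job = {"DiskUsage": 13, "FileSystemDomain": "cs.wisc.edu"}
machines = [
    {"Memory": 501, "Arch": "INTEL", "OpSys": "LINUX",
     "Disk": 1000000, "FileSystemDomain": "cs.wisc.edu"},
    {"Memory": 7988, "Arch": "X86_64", "OpSys": "LINUX",
     "Disk": 1000000, "FileSystemDomain": "cs.wisc.edu"},
]
rejected, available = analyze(machines, job)
print(f"Of {len(machines)} machines, {rejected} are rejected by your job's requirements")
print(f"{available} are available to run your job")
```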

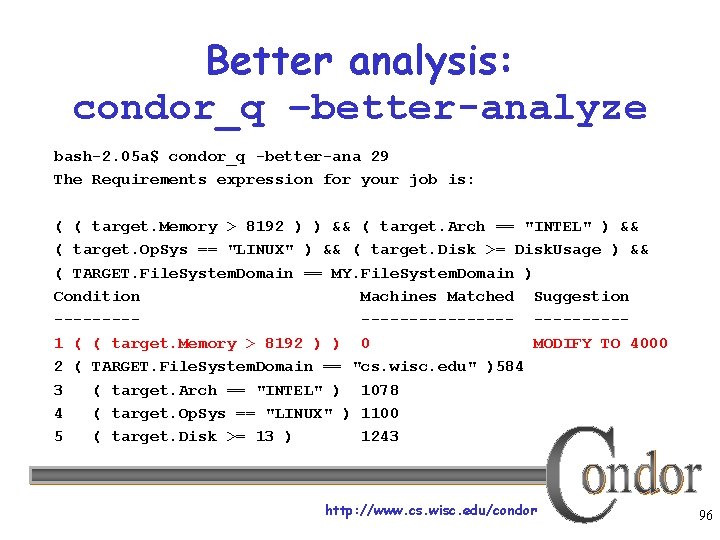

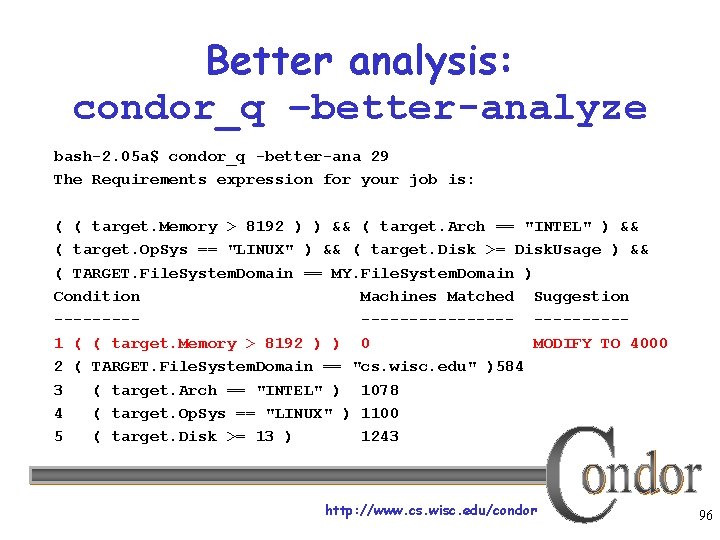

Better analysis: condor_q -better-analyze

bash-2.05a$ condor_q -better-ana 29

The Requirements expression for your job is:
( ( target.Memory > 8192 ) ) && ( target.Arch == "INTEL" ) && ( target.OpSys == "LINUX" ) && ( target.Disk >= DiskUsage ) && ( TARGET.FileSystemDomain == MY.FileSystemDomain )

| | Condition | Machines Matched | Suggestion |
|---|---|---|---|
| 1 | ( ( target.Memory > 8192 ) ) | 0 | MODIFY TO 4000 |
| 2 | ( TARGET.FileSystemDomain == "cs.wisc.edu" ) | 584 | |
| 3 | ( target.Arch == "INTEL" ) | 1078 | |
| 4 | ( target.OpSys == "LINUX" ) | 1100 | |
| 5 | ( target.Disk >= 13 ) | 1243 | |
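-better-analyze goes a step further by counting matches for each clause on its own, which is how it singles out the Memory clause as the one no machine satisfies. A Python sketch of that per-clause idea, with each clause written as a hypothetical (label, predicate) pair over made-up machine dictionaries; it does not attempt to reproduce Condor's "MODIFY TO" suggestion logic:

```python
def clause_report(machines, clauses):
    """For each (label, predicate) clause, count the machines that satisfy it alone."""
    return [(label, sum(1 for m in machines if pred(m))) for label, pred in clauses]


clauses = [
    ("target.Memory > 8192", lambda m: m["Memory"] > 8192),
    ('target.Arch == "INTEL"', lambda m: m["Arch"] == "INTEL"),
    ('target.OpSys == "LINUX"', lambda m: m["OpSys"] == "LINUX"),
]
machines = [
    {"Memory": 501, "Arch": "INTEL", "OpSys": "LINUX"},
    {"Memory": 5980, "Arch": "X86_64", "OpSys": "LINUX"},
    {"Memory": 7988, "Arch": "X86_64", "OpSys": "LINUX"},
]
for label, count in clause_report(machines, clauses):
    # A clause that matches zero machines is the one to relax first
    flag = "  <- no machine satisfies this clause" if count == 0 else ""
    print(f"{label:28} {count}{flag}")
```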

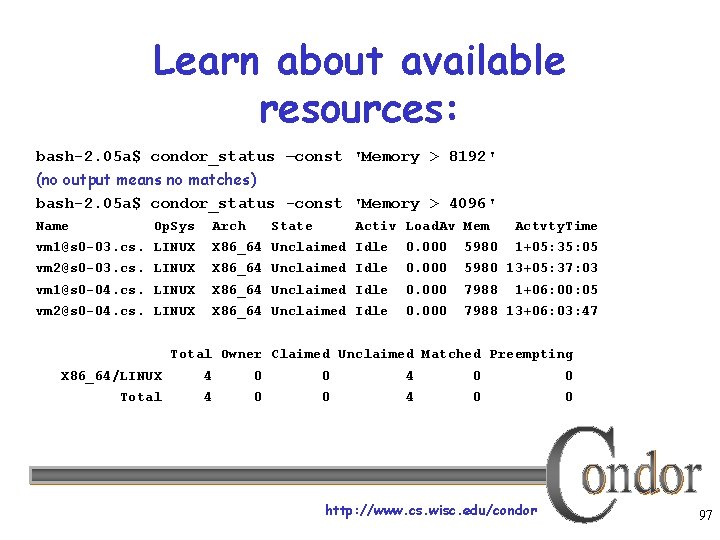

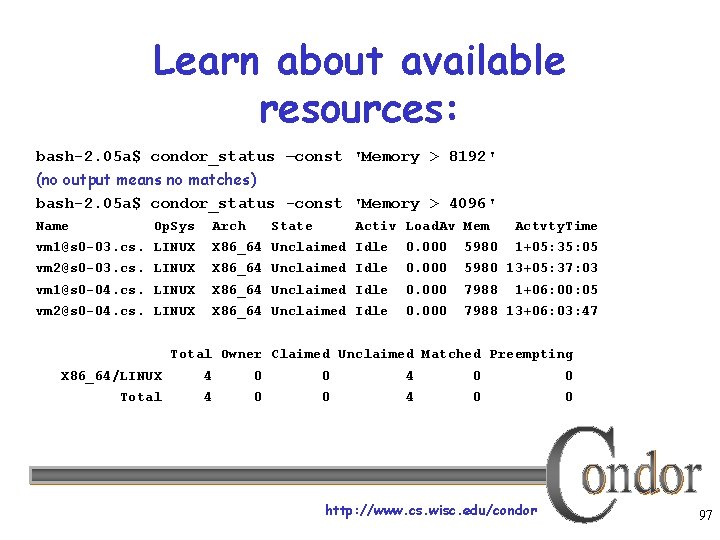

Learn about available resources:

bash-2.05a$ condor_status -const 'Memory > 8192'
(no output means no matches)

bash-2.05a$ condor_status -const 'Memory > 4096'

| Name | OpSys | Arch | State | Activ | LoadAv | Mem | ActvtyTime |
|---|---|---|---|---|---|---|---|
| vm1@s0-03.cs. | LINUX | X86_64 | Unclaimed | Idle | 0.000 | 5980 | 1+05:35:05 |
| vm2@s0-03.cs. | LINUX | X86_64 | Unclaimed | Idle | 0.000 | 5980 | 13+05:37:03 |
| vm1@s0-04.cs. | LINUX | X86_64 | Unclaimed | Idle | 0.000 | 7988 | 13+06:03:47 |
| vm2@s0-04.cs. | LINUX | X86_64 | Unclaimed | Idle | 0.000 | 7988 | 1+06:00:05 |

| | Total | Owner | Claimed | Unclaimed | Matched | Preempting |
|---|---|---|---|---|---|---|
| X86_64/LINUX | 4 | 0 | 0 | 4 | 0 | 0 |
| Total | 4 | 0 | 0 | 4 | 0 | 0 |

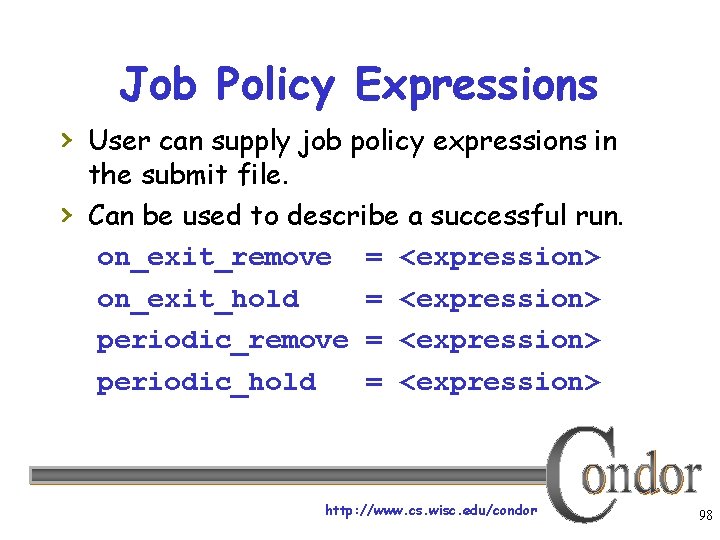

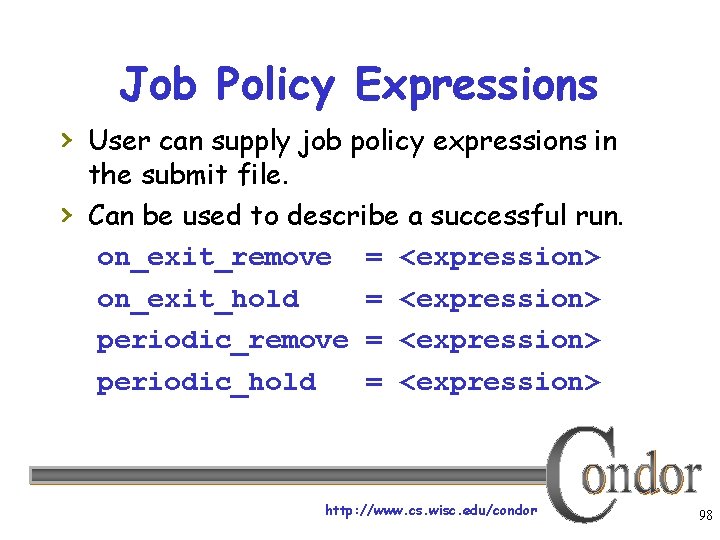

Job Policy Expressions › Users can supply job policy expressions in the submit file › These can be used to describe a successful run:
on_exit_remove = <expression>
on_exit_hold = <expression>
periodic_remove = <expression>
periodic_hold = <expression>

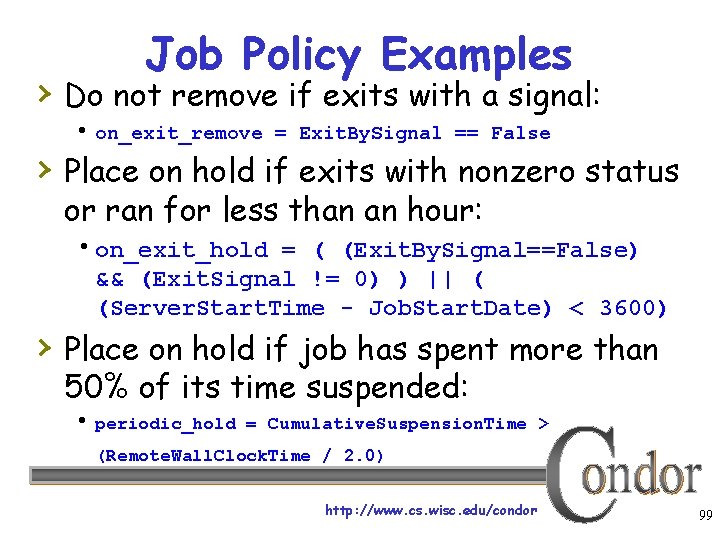

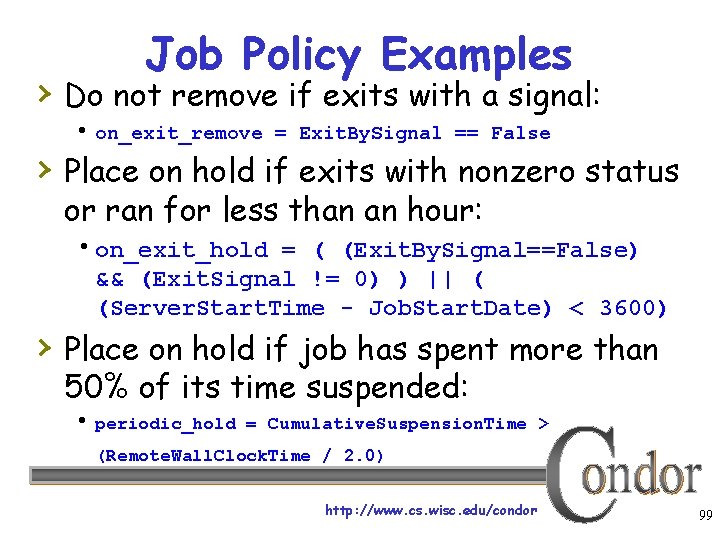

Job Policy Examples › Do not remove if the job exits with a signal: on_exit_remove = ExitBySignal == False › Place on hold if the job exits with a nonzero status or ran for less than an hour: on_exit_hold = ( (ExitBySignal == False) && (ExitCode != 0) ) || ( (ServerStartTime - JobStartDate) < 3600) › Place on hold if the job has spent more than 50% of its time suspended: periodic_hold = CumulativeSuspensionTime > (RemoteWallClockTime / 2.0)
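To see how these three expressions behave, here is a Python sketch that evaluates them against a job ad represented as a dictionary. The attribute names come from the examples above, with the nonzero-status test reading ExitCode (the attribute that holds a normal exit status); the sample values are made up, and Condor itself evaluates these as ClassAd expressions, not Python.

```python
def on_exit_remove(ad):
    # Remove the job from the queue only if it did not exit because of a signal
    return not ad["ExitBySignal"]


def on_exit_hold(ad):
    # Hold if the job exited normally with a nonzero exit code,
    # or if it ran for less than an hour (3600 seconds)
    nonzero_exit = not ad["ExitBySignal"] and ad["ExitCode"] != 0
    too_short = (ad["ServerStartTime"] - ad["JobStartDate"]) < 3600
    return nonzero_exit or too_short


def periodic_hold(ad):
    # Hold if more than half of the wall-clock time was spent suspended
    return ad["CumulativeSuspensionTime"] > ad["RemoteWallClockTime"] / 2.0


# Made-up job ad: normal exit with code 1 after two hours, 10 minutes suspended
job = {
    "ExitBySignal": False,
    "ExitCode": 1,
    "JobStartDate": 1240000000,
    "ServerStartTime": 1240000000 + 7200,
    "CumulativeSuspensionTime": 600,
    "RemoteWallClockTime": 7200,
}
print(on_exit_remove(job), on_exit_hold(job), periodic_hold(job))  # True True False
```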

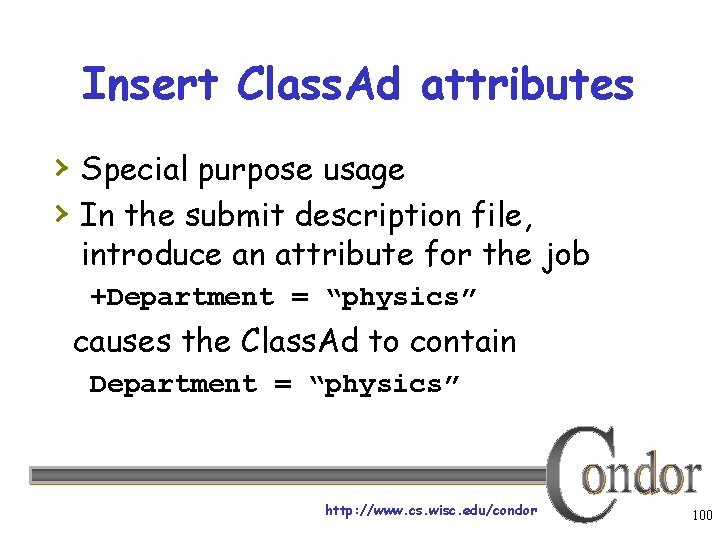

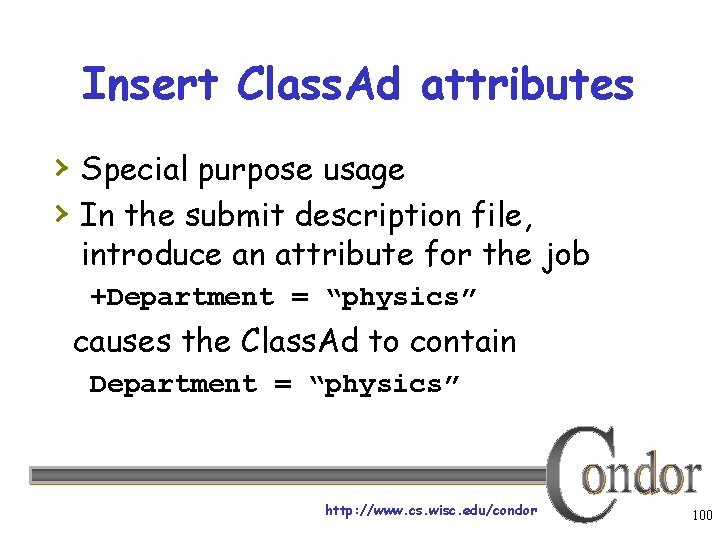

Insert ClassAd attributes › Special purpose usage › In the submit description file, introduce an attribute for the job: +Department = "physics" causes the ClassAd to contain Department = "physics"
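The effect of the leading + can be sketched in Python: a submit-file line that starts with + is copied into the job ad under the same name with the + stripped. This toy parser (`extra_attributes` is a hypothetical helper) only handles simple `name = value` lines and ignores every other submit command.

```python
def extra_attributes(submit_text):
    """Collect '+Name = value' lines from a submit description into a dict."""
    attrs = {}
    for line in submit_text.splitlines():
        line = line.strip()
        if line.startswith("+") and "=" in line:
            name, value = line[1:].split("=", 1)  # drop the '+' and split once
            attrs[name.strip()] = value.strip()
    return attrs


submit = """
universe   = vanilla
executable = cosmos
+Department = "physics"
queue
"""
print(extra_attributes(submit))  # {'Department': '"physics"'}
```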

We’ve seen how Condor can: › Keep an eye on your jobs › Keep you posted on their progress › Implement your policy on the execution order of the jobs › Keep a log of your job activities

Getting Condor › Available as a free download from http://www.cs.wisc.edu/condor › Download Condor for your operating system › Available for most UNIX platforms (including Linux and Apple’s OS X) › Also for Windows XP / 2003 / Vista

Condor Releases › Stable / Developer Releases › Version numbering scheme similar to that of the (pre-2.6) Linux kernels … › Major.minor.release › Minor is even (a.b.c): Stable • Examples: 7.0.5, 7.2.2 (7.2.2 just released) • Very stable, mostly bug fixes › Minor is odd (a.b.c): Developer • New features, may have some bugs • Examples: 7.1.5, 7.3.1 (7.3.1 “real soon now”)
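The even/odd rule can be stated as a one-line check on the minor number. A small Python sketch (`release_series` is a hypothetical helper name), using the example versions from the slide:

```python
def release_series(version):
    """Return 'stable' for an even minor number, 'developer' for an odd one."""
    _major, minor, _release = (int(part) for part in version.split("."))
    return "stable" if minor % 2 == 0 else "developer"


for v in ["7.0.5", "7.2.2", "7.1.5", "7.3.1"]:
    print(v, release_series(v))
```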

Albert installs a “Personal Condor” on his laptop… › What do we mean by a “Personal” Condor? Condor on your own workstation; no root / administrator access required; no system administrator intervention needed › His Personal Condor can join the local Condor pool: he can now access the pool’s resources (machines) › Can also be “Stand Alone”: he can still run his own jobs, maintain a job queue, etc., and can even access grid resources if needed!

Multiple Universes

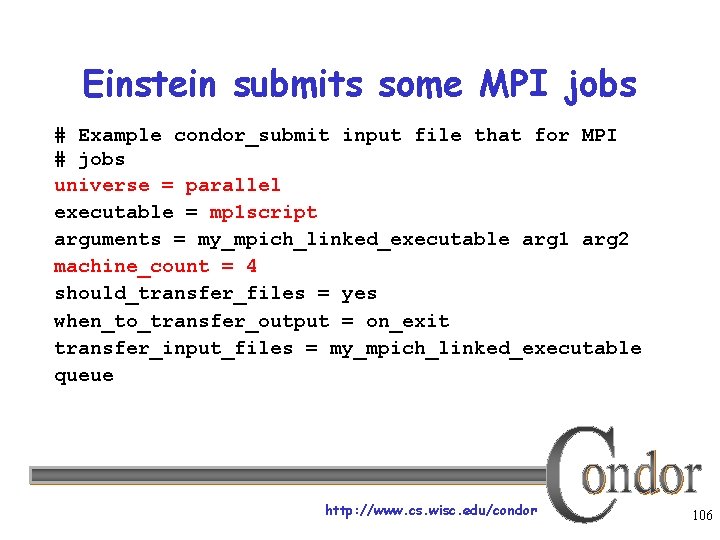

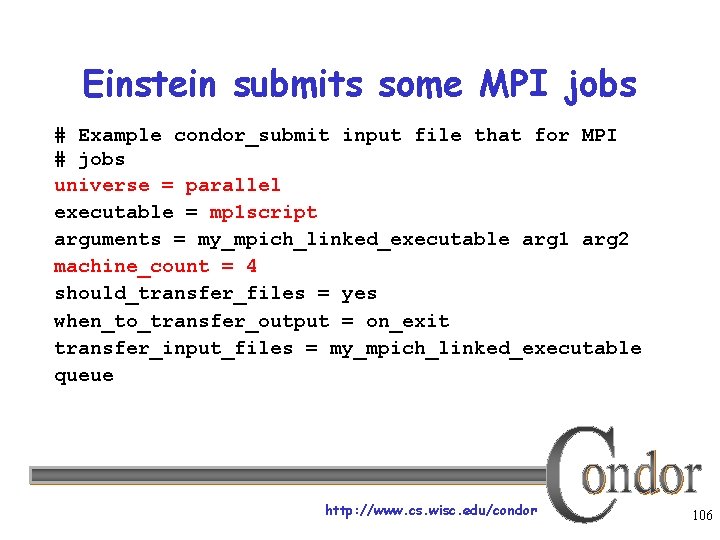

Einstein submits some MPI jobs › Example condor_submit input file for MPI jobs:
universe = parallel
executable = mp1script
arguments = my_mpich_linked_executable arg1 arg2
machine_count = 4
should_transfer_files = yes
when_to_transfer_output = on_exit
transfer_input_files = my_mpich_linked_executable
queue

My new jobs can run for 20 days… • What happens when a job is forced off its CPU? › Preempted by a higher priority user or job › Vacated because of user activity • How can I add fault tolerance to my jobs?

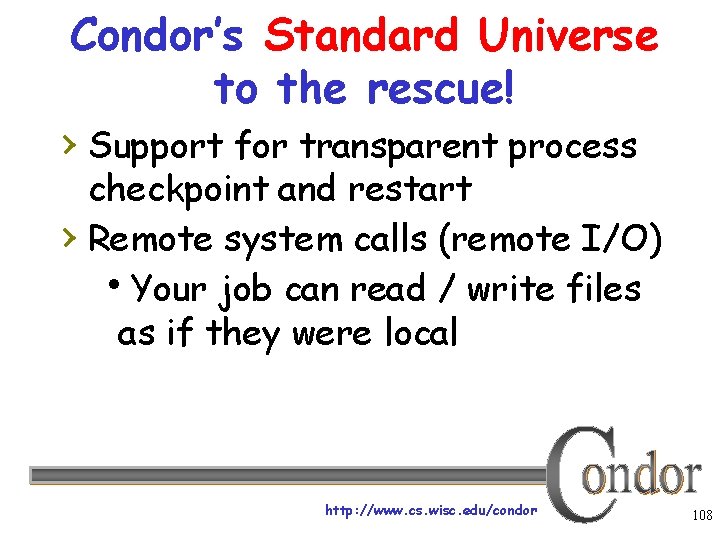

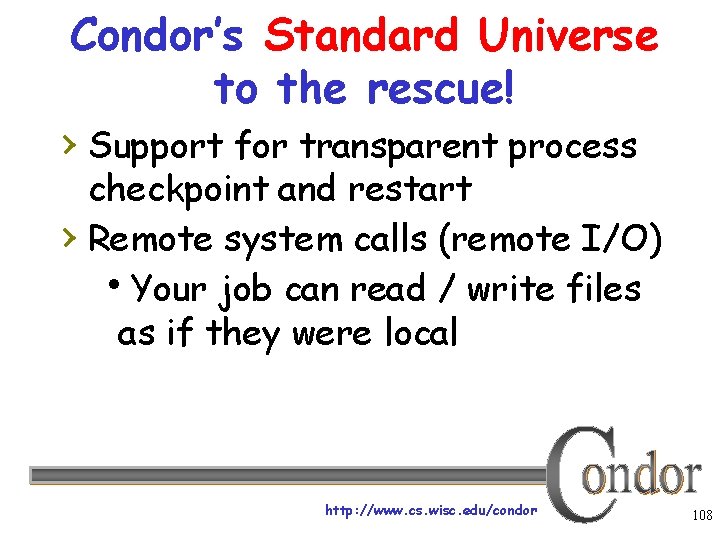

Condor’s Standard Universe to the rescue! › Support for transparent process checkpoint and restart › Remote system calls (remote I/O): your job can read / write files as if they were local

Remote System Calls in the Standard Universe › I/O system calls are trapped and sent back to the submit machine (examples: open a file, write to a file) › No source code changes typically required › Programming language independent

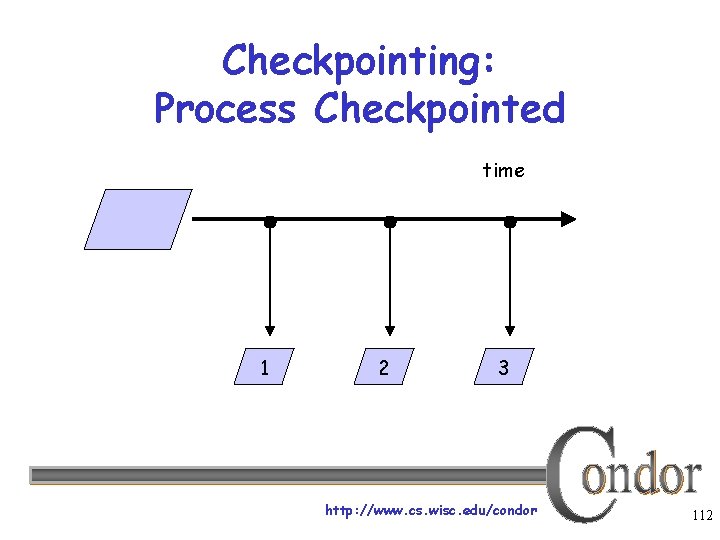

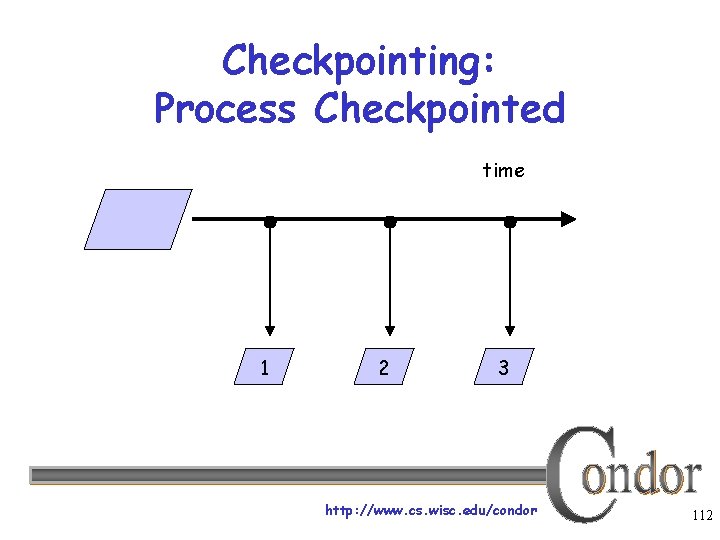

Process Checkpointing in the Standard Universe › Condor’s process checkpointing provides a mechanism to automatically save the state of a job › The process can then be restarted from right where it was checkpointed, after preemption, crash, etc.

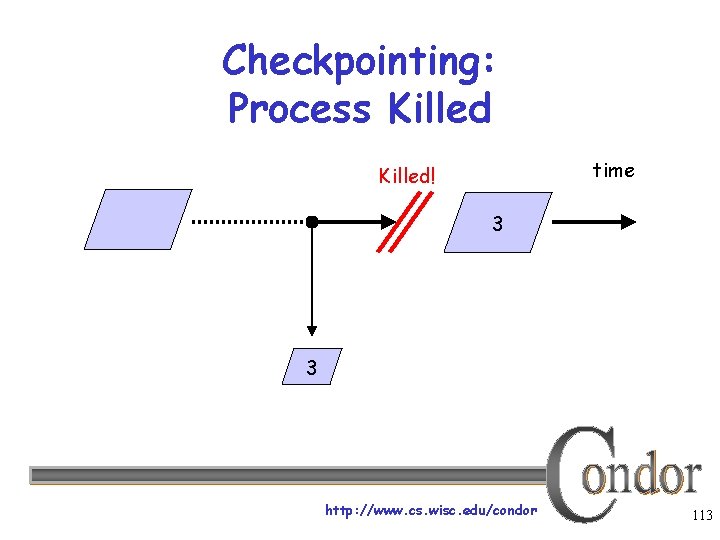

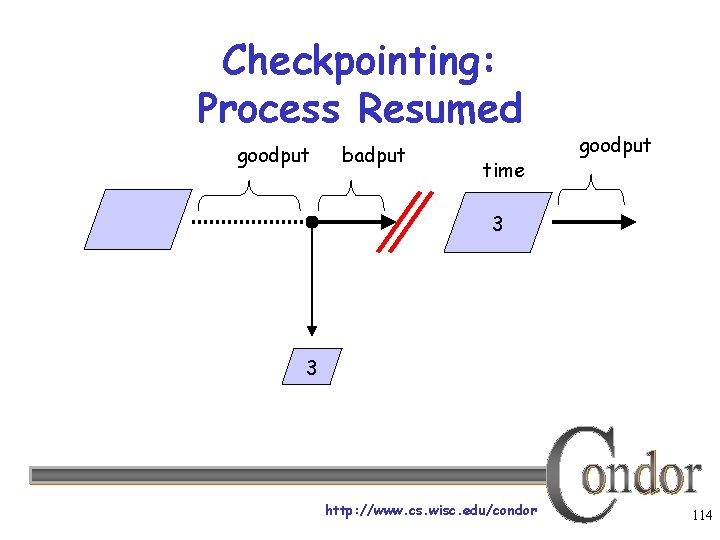

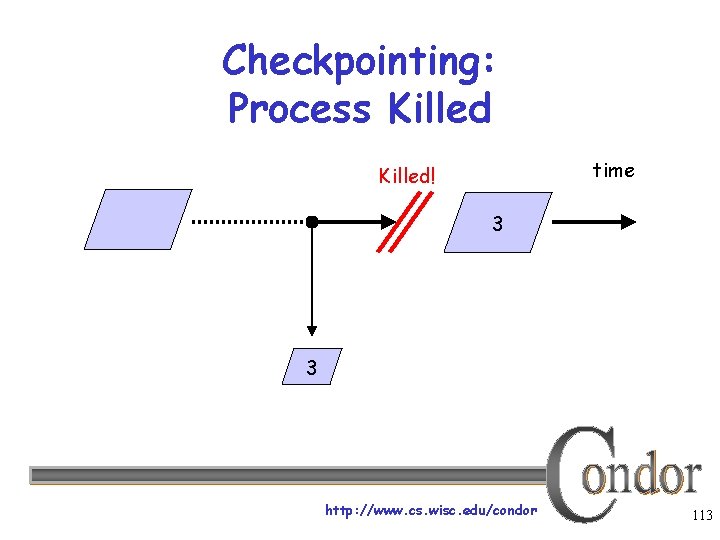

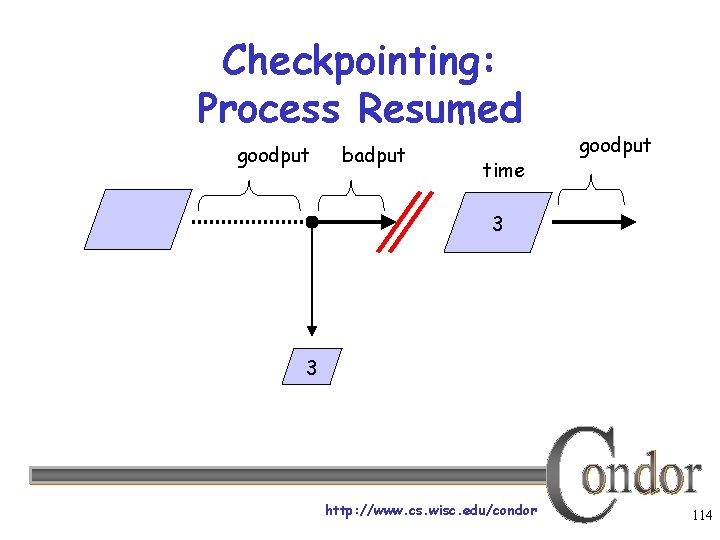

Checkpointing: Process Starts › Checkpoint: the entire state of a program, saved in a file › CPU registers, memory image, I/O (figure: process timeline)

Checkpointing: Process Checkpointed (figure: process timeline with checkpoints 1, 2 and 3)

Checkpointing: Process Killed (figure: process timeline; the process is killed after checkpoint 3)

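Checkpointing: Process Resumed (figure: process timeline; work saved up to checkpoint 3 is goodput, work done after checkpoint 3 and lost when the process was killed is badput, and the resumed process continues from checkpoint 3, adding more goodput)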
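Condor's Standard Universe checkpoints transparently, without changes to the program. Purely for intuition, the same save, kill, resume cycle can be imitated at the application level in Python: the loop below writes its state to a file after every step and, when restarted, resumes from the last saved step instead of from zero. The `run` function and the checkpoint file name are hypothetical, and this is not how condor_compile'd jobs work internally. Because it checkpoints after every step, nothing is lost here; with less frequent checkpoints, the work done since the last one would be badput.

```python
import json
import os
import tempfile


def run(total_steps, ckpt_path, kill_at=None):
    """Sum 0..total_steps-1, checkpointing the loop state after every step.

    If kill_at is given, the run stops when it reaches that step, simulating
    a process that is killed. Returns the final sum, or None if killed.
    """
    state = {"step": 0, "total": 0}
    if os.path.exists(ckpt_path):
        with open(ckpt_path) as f:
            state = json.load(f)  # resume from the last checkpoint
    while state["step"] < total_steps:
        if kill_at is not None and state["step"] == kill_at:
            return None  # killed: anything not yet checkpointed would be lost
        state["total"] += state["step"]  # one unit of "work"
        state["step"] += 1
        with open(ckpt_path, "w") as f:
            json.dump(state, f)  # checkpoint
    return state["total"]


ckpt = os.path.join(tempfile.mkdtemp(), "job.ckpt")
print(run(10, ckpt, kill_at=3))  # None: killed after three steps were checkpointed
print(run(10, ckpt))             # 45: resumes at step 3 instead of starting over
```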

When will Condor checkpoint your job? › Periodically, if desired, for fault tolerance › When your job is preempted by a higher priority job › When your job is vacated because the execution machine becomes busy › When you explicitly run the condor_checkpoint, condor_vacate, condor_off or condor_restart commands

Making the Standard Universe Work › The job must be relinked with Condor’s standard universe support library › To relink, place condor_compile in front of the command used to link the job: % condor_compile gcc -o myjob myjob.c (or) % condor_compile f77 -o myjob filea.f fileb.f (or) % condor_compile make -f MyMakefile

Limitations of the Standard Universe › Condor’s checkpointing is not at the kernel level. In the Standard Universe, the job may not: • fork() • Use kernel threads • Use some forms of IPC, such as pipes and shared memory › Must have access to source code to relink › Many typical scientific jobs are OK

Death of the Standard Universe

DMTCP & Parrot › DMTCP (Checkpointing): “Distributed MultiThreaded Checkpointing”, developed at Northeastern University, http://dmtcp.sourceforge.net/ (see Pete’s talk tomorrow @ 11:40) › Parrot (Remote I/O): “Parrot is a tool for attaching existing programs to remote I/O system”, developed by Doug Thain (now at Notre Dame), http://www.cse.nd.edu/~ccl/software/parrot/, dthain@nd.edu

Connecting Condors • Albert knows people with their own Condor pools, and gets permission to use their computing resources… • How can Condor help him do this?

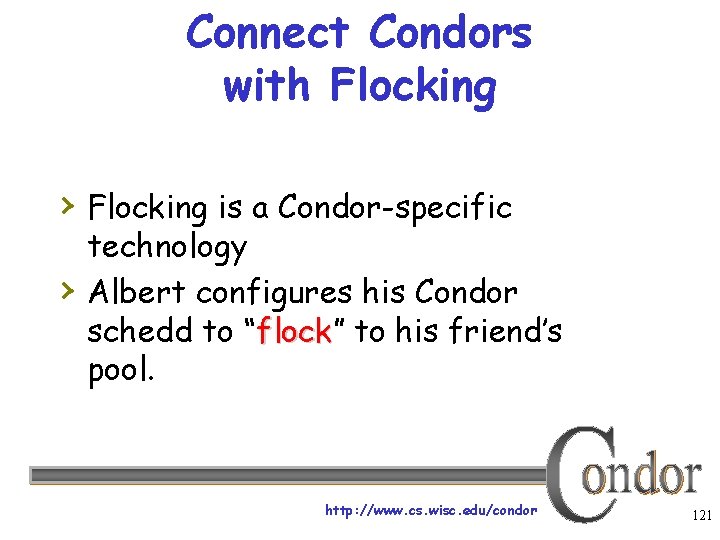

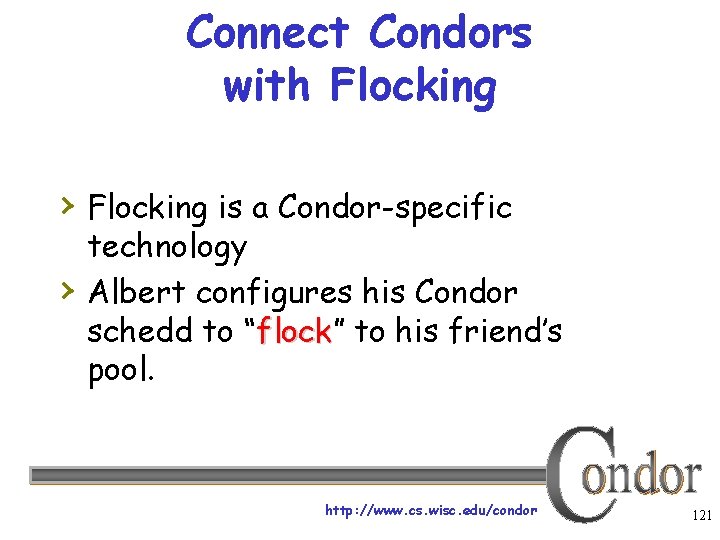

Connect Condors with Flocking › Flocking is a Condor-specific technology › Albert configures his Condor schedd to “flock” to his friend’s pool.

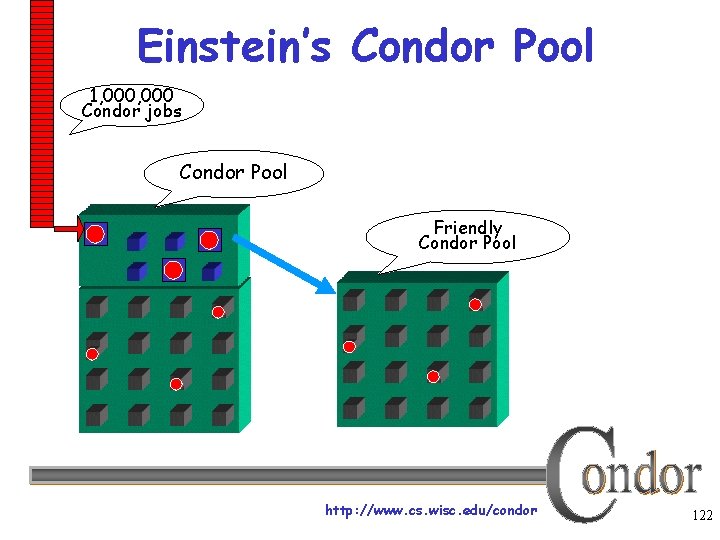

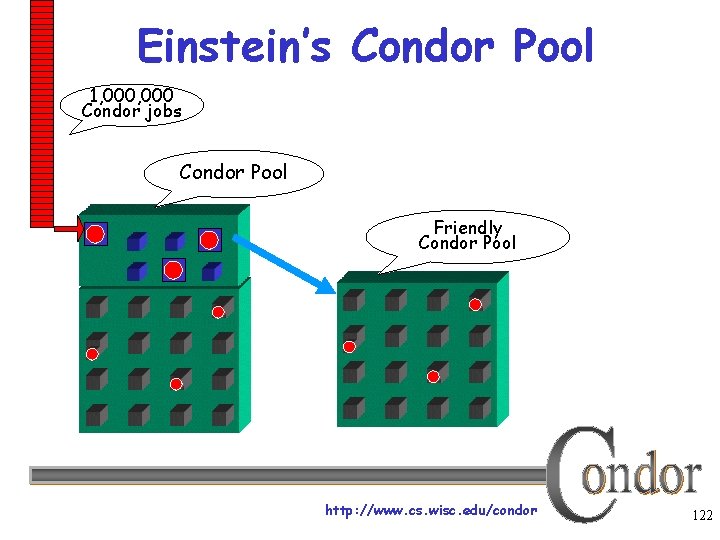

(figure: Einstein’s Condor pool with 1,000 Condor jobs, flocking to a friendly Condor pool)

Albert meets The Grid › Albert also has access to grid resources he wants to use: he has certificates and access to Globus or other resources at remote institutions › But Albert wants Condor’s queue management features for his jobs! › He installs Condor so he can submit “Grid Universe” jobs to Condor

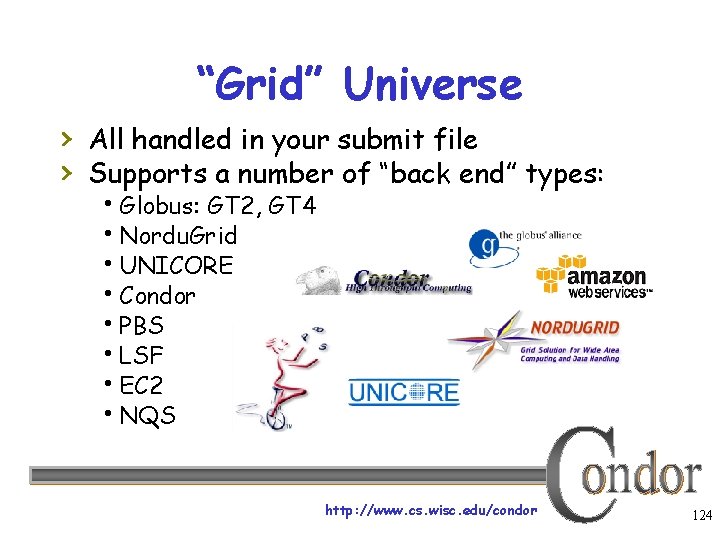

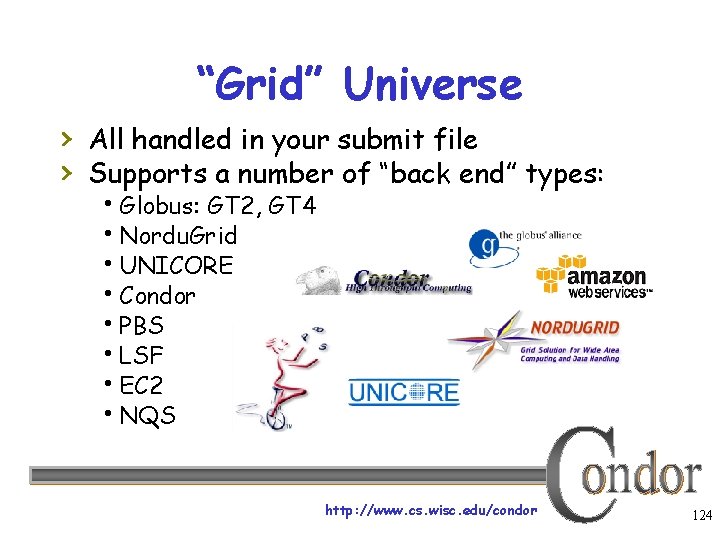

“Grid” Universe › All handled in your submit file › Supports a number of “back end” types: Globus (GT2, GT4), NorduGrid, UNICORE, Condor, PBS, LSF, EC2, NQS

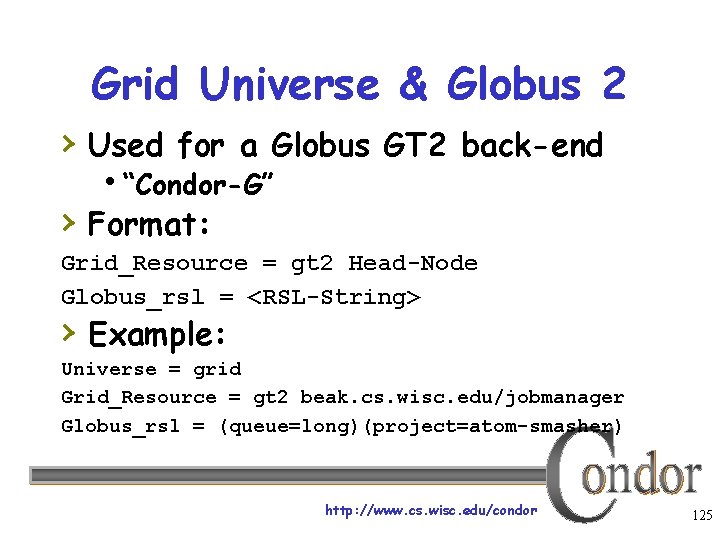

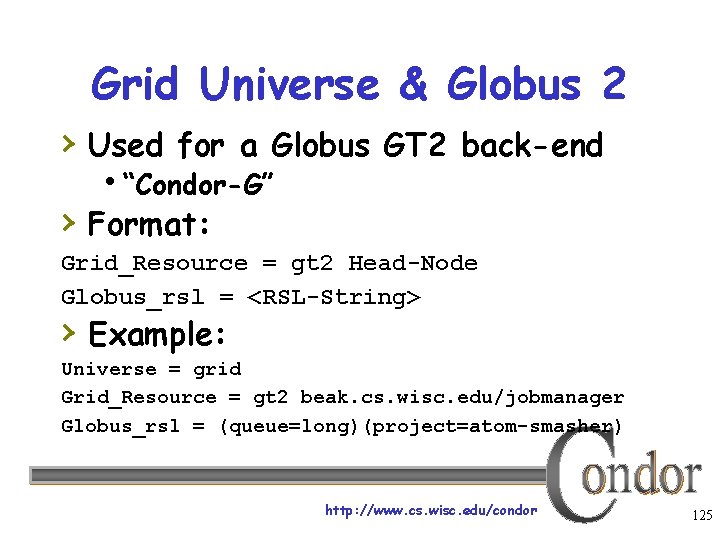

Grid Universe & Globus 2 › Used for a Globus GT2 back-end (“Condor-G”) › Format:
Grid_Resource = gt2 <Head-Node>
Globus_rsl = <RSL-String>
› Example:
Universe = grid
Grid_Resource = gt2 beak.cs.wisc.edu/jobmanager
Globus_rsl = (queue=long)(project=atom-smasher)

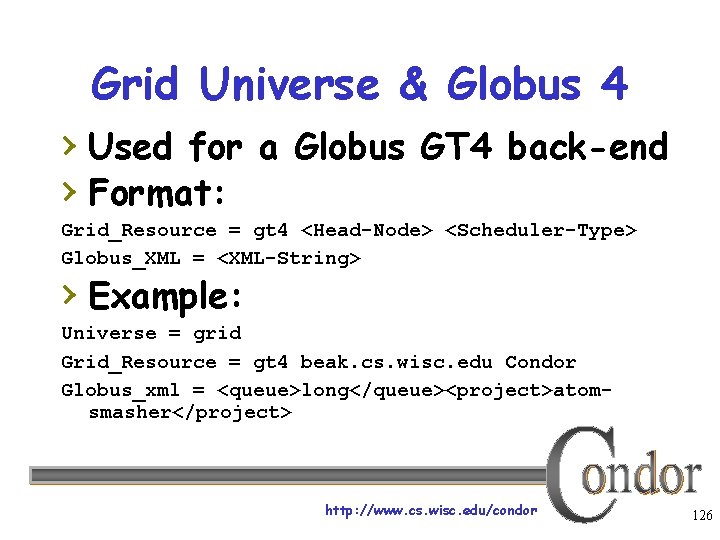

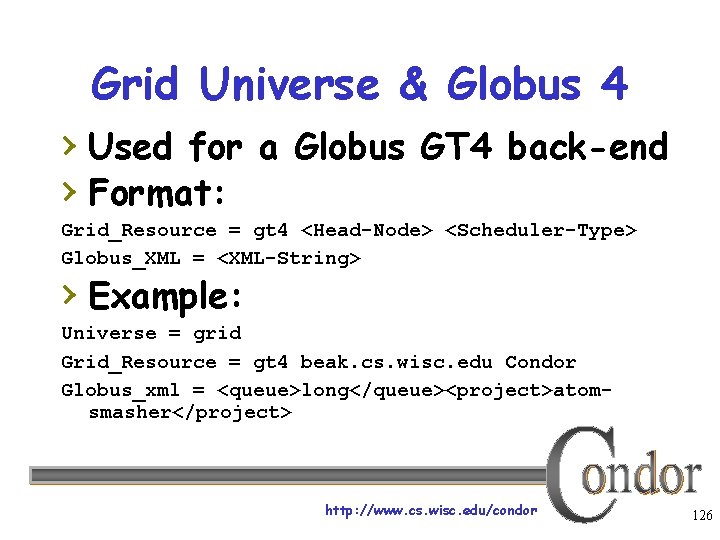

Grid Universe & Globus 4 › Used for a Globus GT4 back-end › Format:
Grid_Resource = gt4 <Head-Node> <Scheduler-Type>
Globus_XML = <XML-String>
› Example:
Universe = grid
Grid_Resource = gt4 beak.cs.wisc.edu Condor
Globus_xml = <queue>long</queue><project>atomsmasher</project>

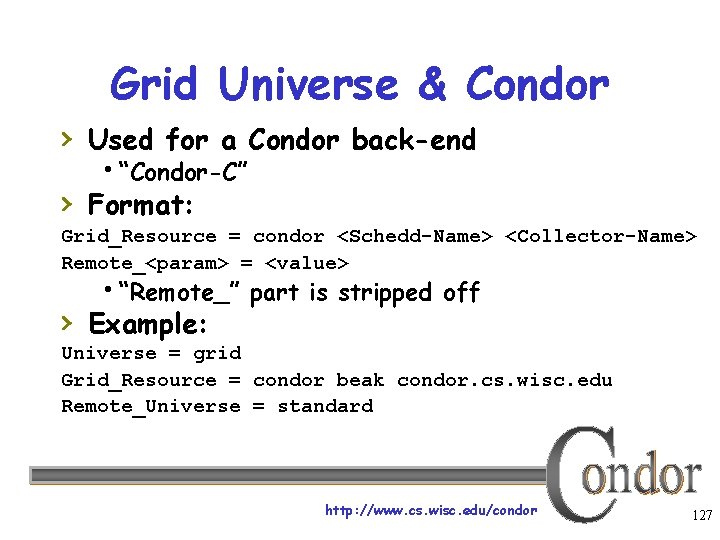

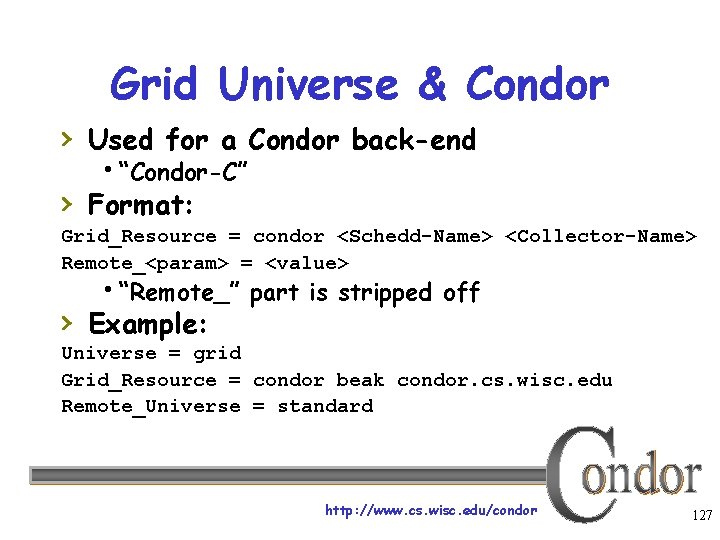

Grid Universe & Condor › Used for a Condor back-end (“Condor-C”) › Format:
Grid_Resource = condor <Schedd-Name> <Collector-Name>
Remote_<param> = <value>
› The “Remote_” part is stripped off › Example:
Universe = grid
Grid_Resource = condor beak condor.cs.wisc.edu
Remote_Universe = standard

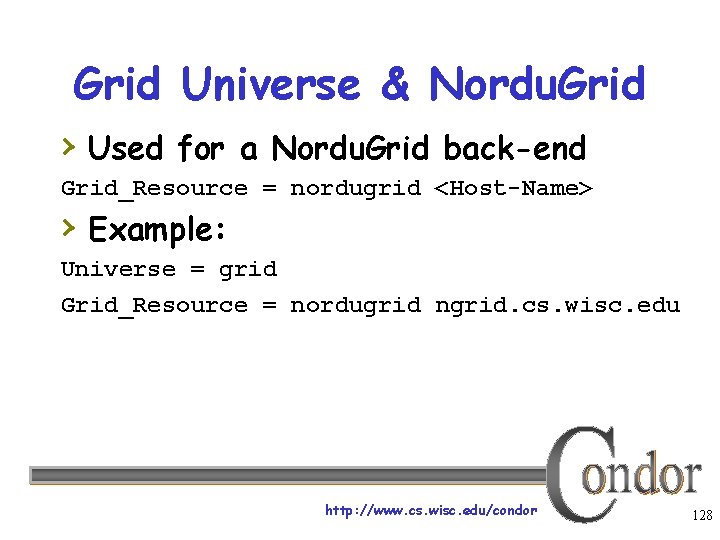

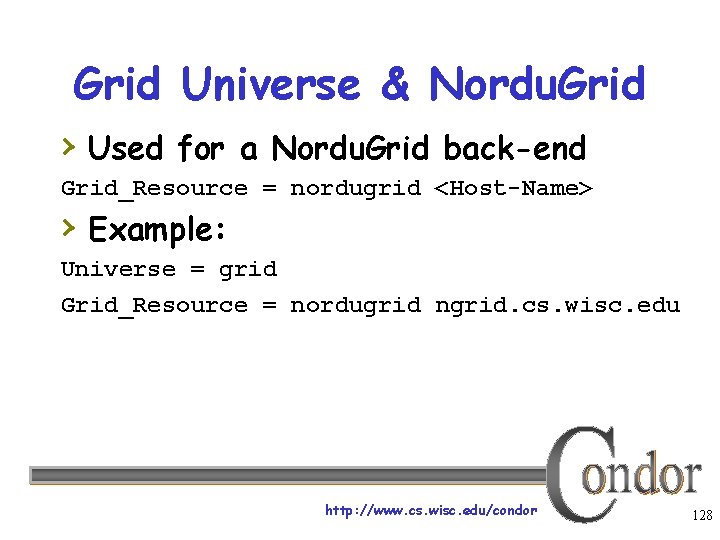

Grid Universe & NorduGrid › Used for a NorduGrid back-end › Format:
Grid_Resource = nordugrid <Host-Name>
› Example:
Universe = grid
Grid_Resource = nordugrid ngrid.cs.wisc.edu

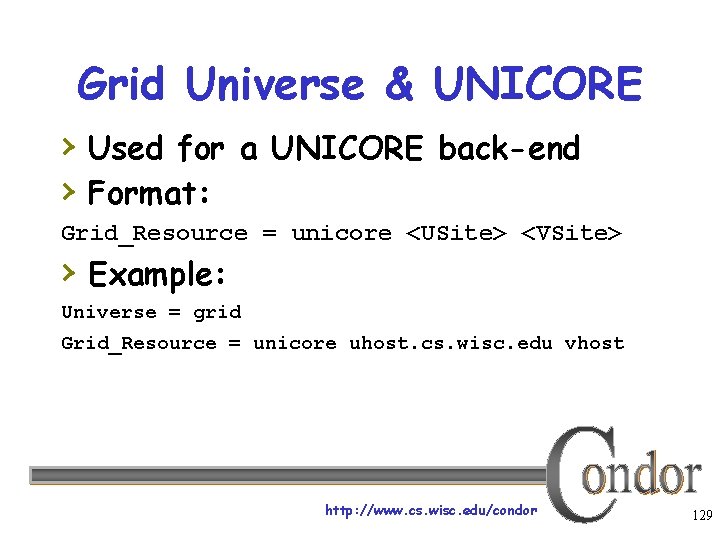

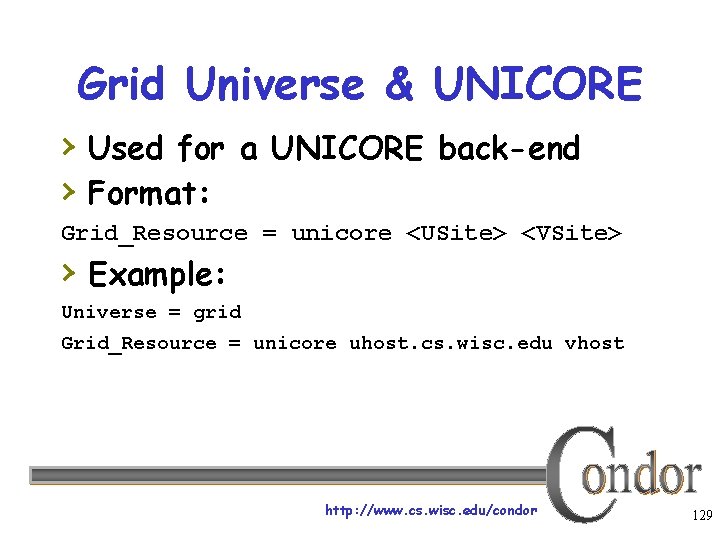

Grid Universe & UNICORE › Used for a UNICORE back-end › Format:
Grid_Resource = unicore <USite> <VSite>
› Example:
Universe = grid
Grid_Resource = unicore uhost.cs.wisc.edu vhost

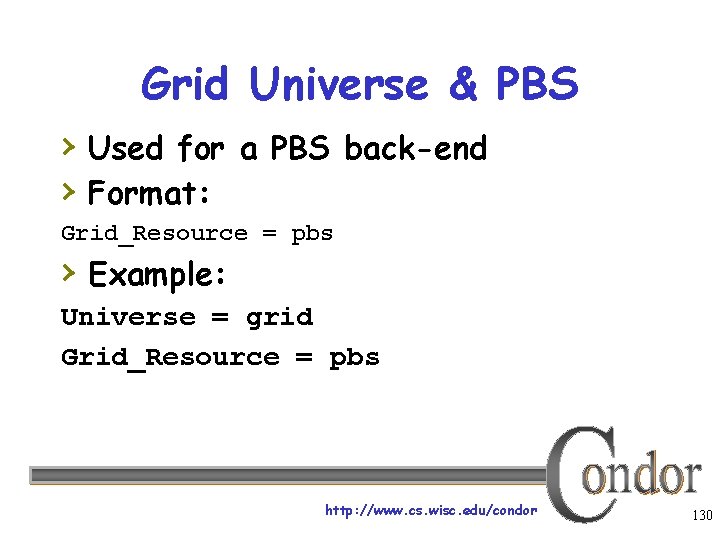

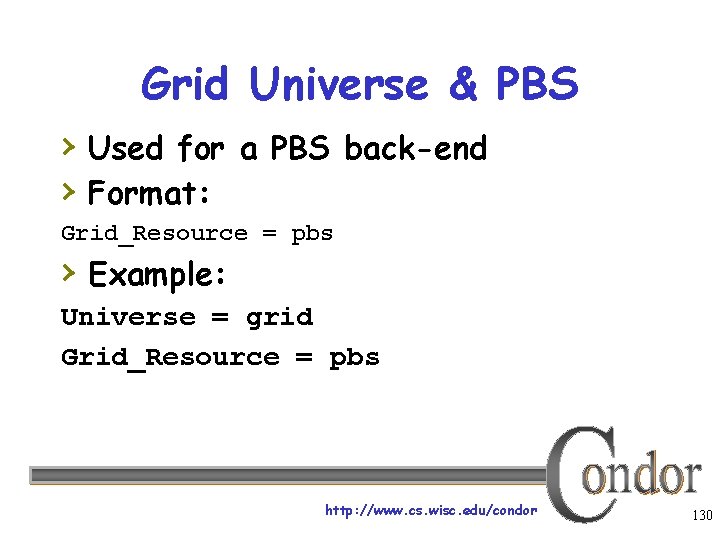

Grid Universe & PBS › Used for a PBS back-end › Format:
Grid_Resource = pbs
› Example:
Universe = grid
Grid_Resource = pbs

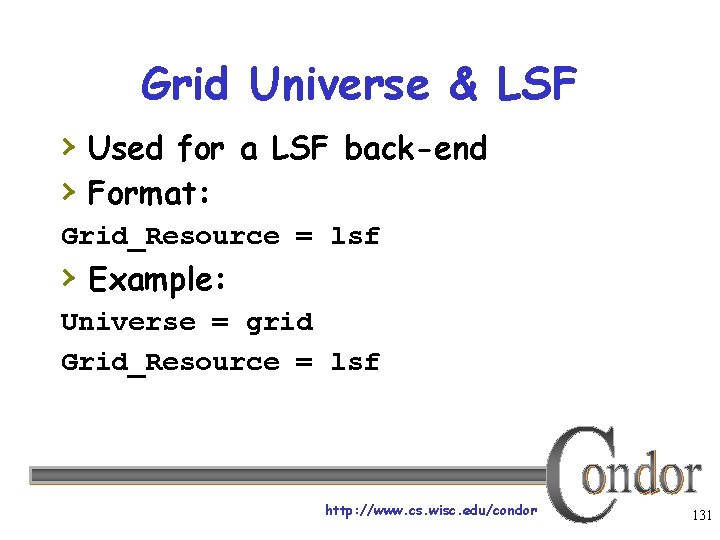

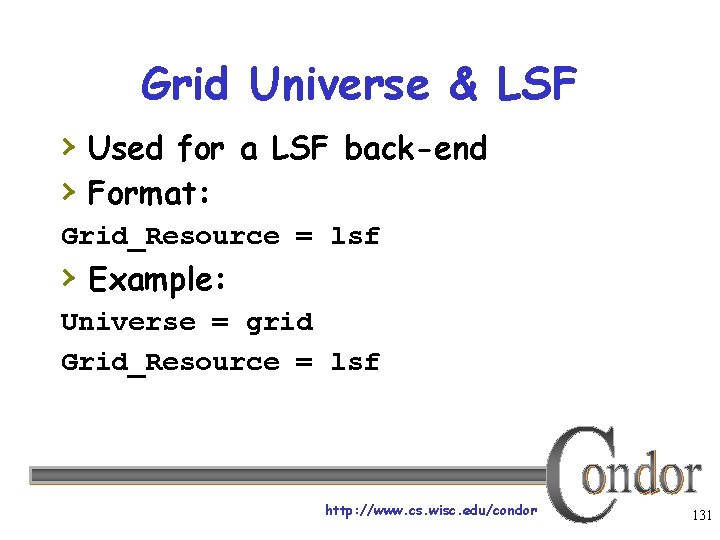

Grid Universe & LSF › Used for an LSF back-end › Format:
Grid_Resource = lsf
› Example:
Universe = grid
Grid_Resource = lsf
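Since every back-end differs only in the Grid_Resource value, the "Format:" lines on these slides can be captured in a small lookup table. A Python sketch that generates a minimal grid-universe submit description from them; the `grid_submit` helper, the `executable` line and the `cosmos` program name are assumptions for illustration (the slide examples omit the executable), and submit commands are written in lowercase:

```python
# Grid_Resource templates, following the "Format:" lines on the slides above
GRID_RESOURCE_FORMATS = {
    "gt2": "gt2 {head_node}",
    "gt4": "gt4 {head_node} {scheduler_type}",
    "condor": "condor {schedd_name} {collector_name}",
    "nordugrid": "nordugrid {host_name}",
    "unicore": "unicore {usite} {vsite}",
    "pbs": "pbs",
    "lsf": "lsf",
}


def grid_submit(backend, executable, extra=None, **params):
    """Return a minimal grid-universe submit description as a string."""
    lines = [
        "universe = grid",
        f"executable = {executable}",
        "grid_resource = " + GRID_RESOURCE_FORMATS[backend].format(**params),
    ]
    for key, value in (extra or {}).items():
        lines.append(f"{key} = {value}")  # e.g. globus_rsl, remote_universe
    lines.append("queue")
    return "\n".join(lines)


print(grid_submit("gt2", "cosmos",
                  head_node="beak.cs.wisc.edu/jobmanager",
                  extra={"globus_rsl": "(queue=long)(project=atom-smasher)"}))
```

A missing parameter for the chosen back-end raises a KeyError from `str.format`, which is a cheap way to catch an incomplete Grid_Resource line before submitting.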

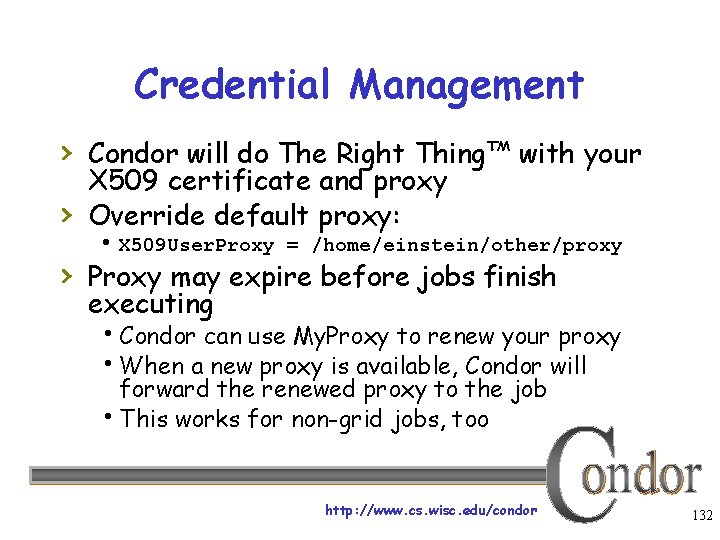

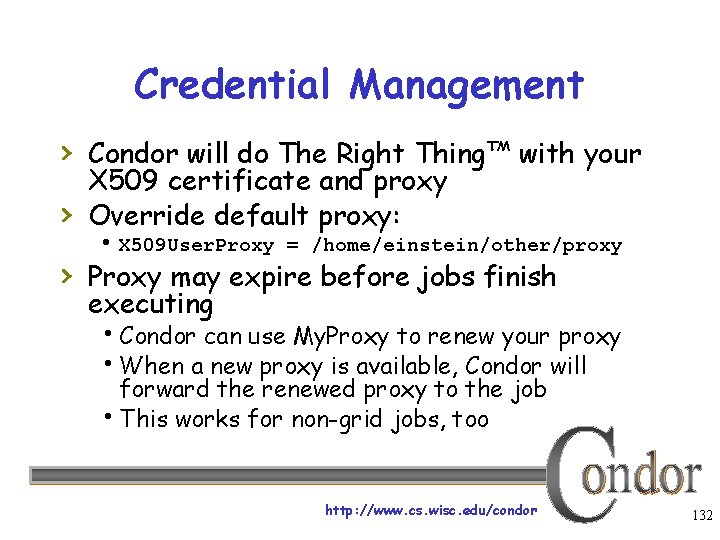

Credential Management › Condor will do The Right Thing™ with your X509 certificate and proxy › Override default proxy: X509UserProxy = /home/einstein/other/proxy › Proxy may expire before jobs finish executing: Condor can use MyProxy to renew your proxy, and when a new proxy is available, Condor will forward the renewed proxy to the job › This works for non-grid jobs, too

Condor Universes: Scheduler and Local › Scheduler Universe: plug in a meta-scheduler; similar to Globus’s fork job manager; developed for DAGMan (next slide) › Local: very similar to vanilla, but jobs run on the local host; has more control over jobs than the scheduler universe

My jobs have dependencies… Can Condor help solve my dependency problems?

Einstein learns DAGMan › Directed Acyclic Graph Manager › DAGMan allows you to specify the dependencies between your Condor jobs, so it can manage them automatically for you. E.g.: “Don’t run job ‘B’ until job ‘A’ has completed successfully.”

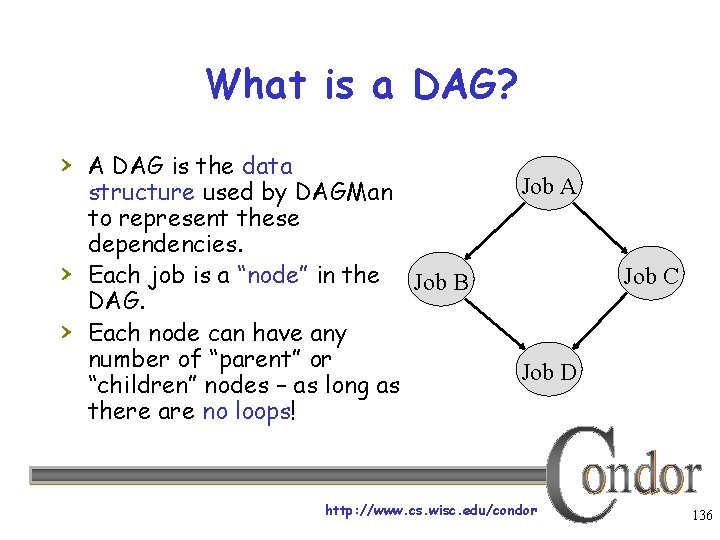

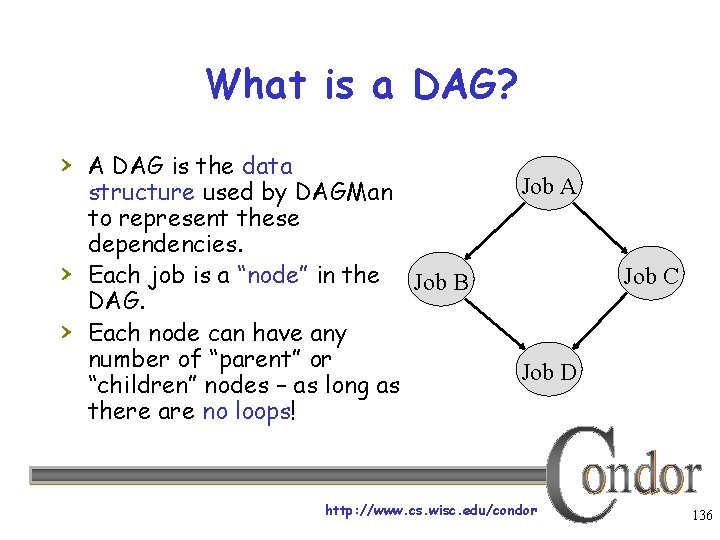

What is a DAG? › A DAG is the data structure used by DAGMan to represent these dependencies. › Each job is a “node” in the DAG. › Each node can have any number of “parent” or “children” nodes, as long as there are no loops! (figure: Job A is the parent of Jobs B and C, which are both parents of Job D)

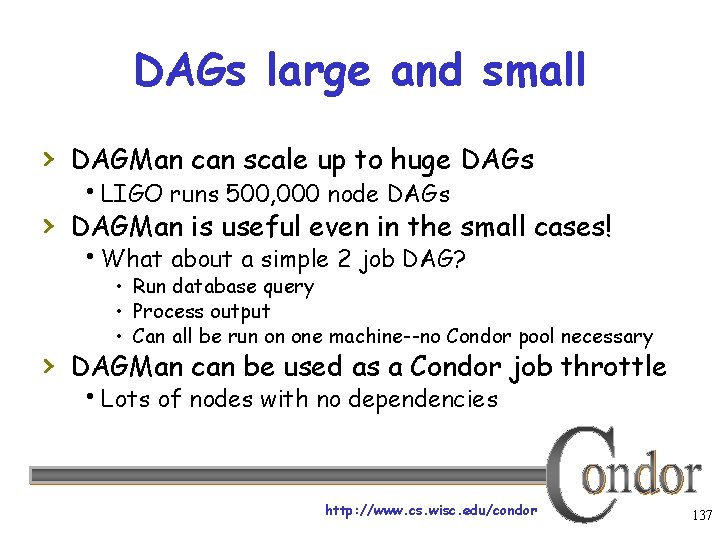

DAGs large and small › DAGMan can scale up to huge DAGs: LIGO runs 500,000-node DAGs › DAGMan is useful even in the small cases! What about a simple 2-job DAG? • Run database query • Process output • Can all be run on one machine; no Condor pool necessary › DAGMan can be used as a Condor job throttle: lots of nodes with no dependencies

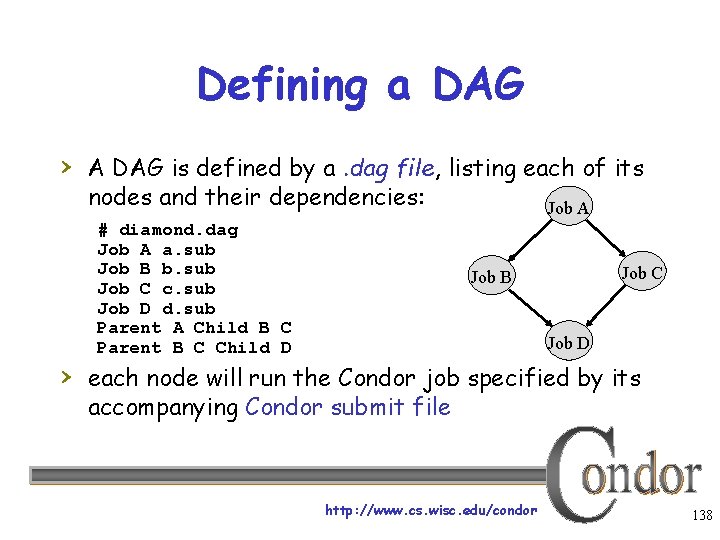

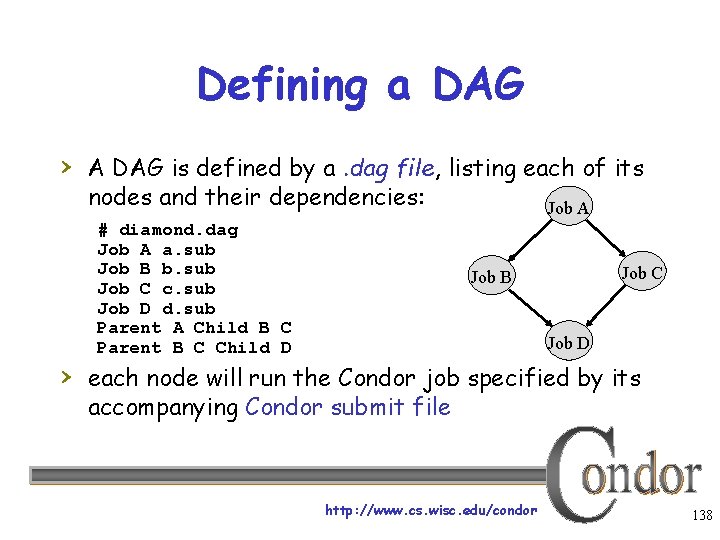

Defining a DAG › A DAG is defined by a .dag file, listing each of its nodes and their dependencies, for example diamond.dag:
Job A a.sub
Job B b.sub
Job C c.sub
Job D d.sub
Parent A Child B C
Parent B C Child D
(figure: diamond-shaped DAG with Job A on top, Jobs B and C in the middle, Job D at the bottom) › Each node will run the Condor job specified by its accompanying Condor submit file
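To make the .dag syntax concrete, here is a Python sketch that parses the JOB and PARENT ... CHILD lines of the diamond example and computes one valid run order with Kahn's topological sort, rejecting files that contain a loop. This is an illustration only: the helper names are hypothetical, other .dag keywords are ignored, and DAGMan itself does not run nodes one at a time (B and C may run concurrently once A has finished).

```python
from collections import defaultdict, deque


def parse_dag(text):
    """Parse JOB and PARENT ... CHILD ... lines of a .dag file (other lines ignored)."""
    jobs, children = {}, defaultdict(set)
    for raw in text.splitlines():
        words = raw.split()
        if not words or words[0].startswith("#"):
            continue
        keyword = words[0].upper()
        if keyword == "JOB":
            jobs[words[1]] = words[2]  # node name -> submit file
        elif keyword == "PARENT":
            split = [w.upper() for w in words].index("CHILD")
            for parent in words[1:split]:
                for child in words[split + 1:]:
                    children[parent].add(child)
    return jobs, children


def run_order(jobs, children):
    """Topological order (Kahn's algorithm); raises ValueError on a cycle."""
    indegree = {name: 0 for name in jobs}
    for kids in children.values():
        for kid in kids:
            indegree[kid] += 1
    ready = deque(sorted(n for n, d in indegree.items() if d == 0))
    order = []
    while ready:
        node = ready.popleft()
        order.append(node)
        for kid in sorted(children[node]):
            indegree[kid] -= 1
            if indegree[kid] == 0:  # all parents done
                ready.append(kid)
    if len(order) != len(jobs):
        raise ValueError("DAG contains a cycle")
    return order


diamond = """
# diamond.dag
Job A a.sub
Job B b.sub
Job C c.sub
Job D d.sub
Parent A Child B C
Parent B C Child D
"""
jobs, children = parse_dag(diamond)
print(run_order(jobs, children))  # ['A', 'B', 'C', 'D']
```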

Submitting a DAG › To start your DAG, just run condor_submit_dag with your .dag file, and Condor will start a personal DAGMan daemon to begin running your jobs: % condor_submit_dag diamond.dag › condor_dagman is then run by the schedd; thus the DAGMan daemon itself is “watched” by Condor, so you don’t have to

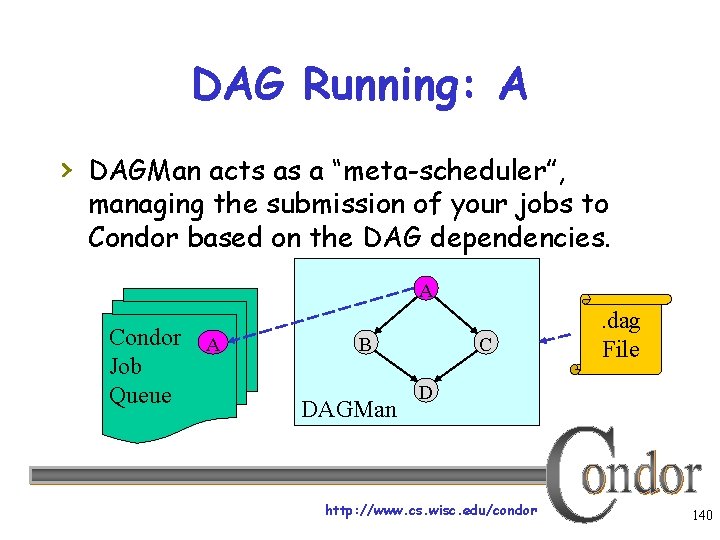

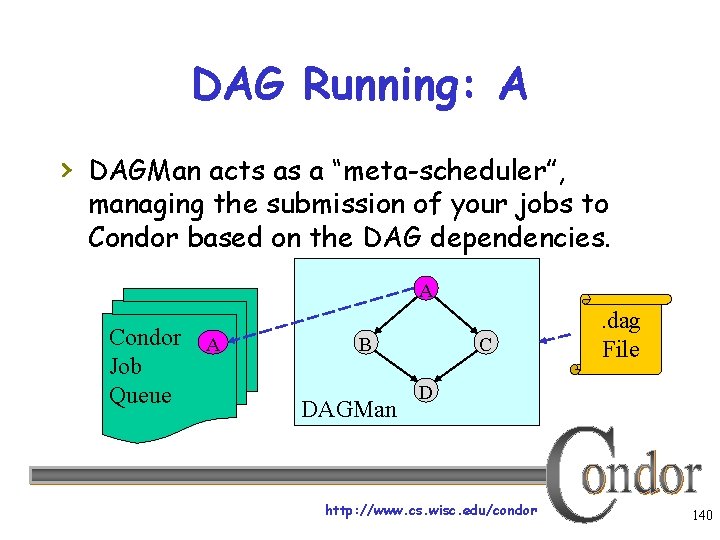

DAG Running: A › DAGMan acts as a “meta-scheduler”, managing the submission of your jobs to Condor based on the DAG dependencies. (figure: DAGMan reads the .dag file and places node A in the Condor job queue; B, C and D wait)

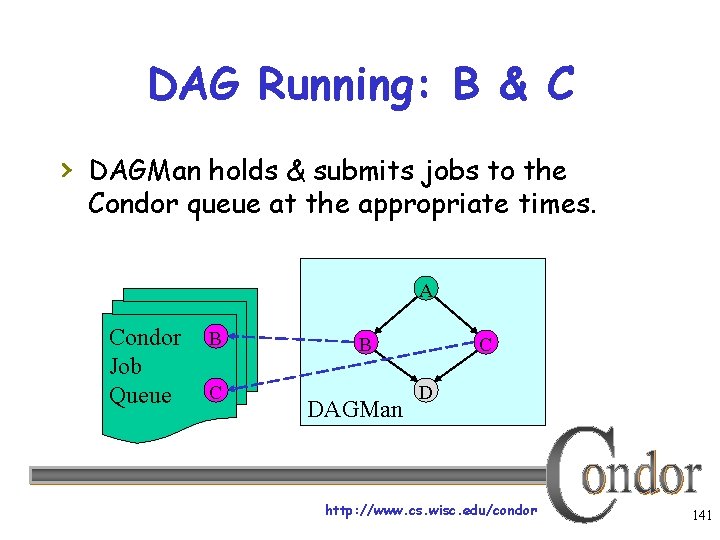

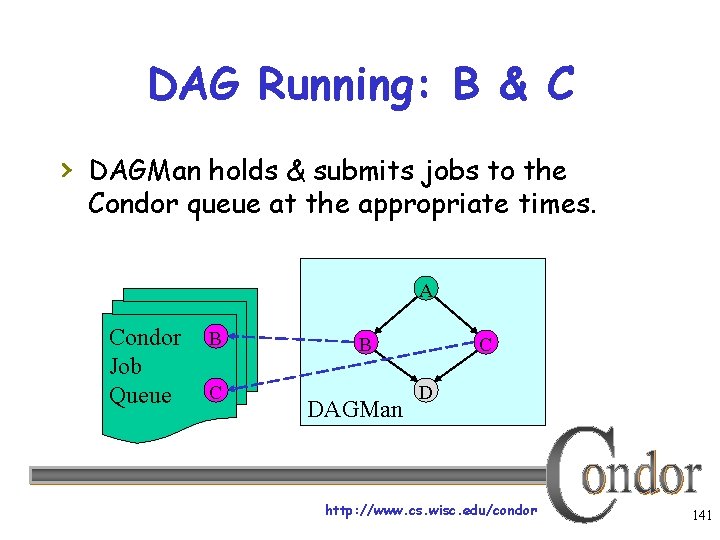

DAG Running: B & C › DAGMan holds & submits jobs to the Condor queue at the appropriate times. (figure: A is done; B and C are in the Condor job queue; D waits)

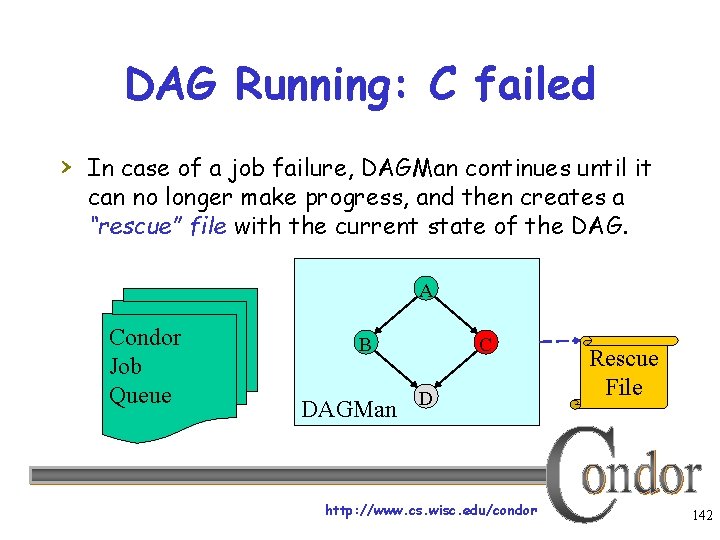

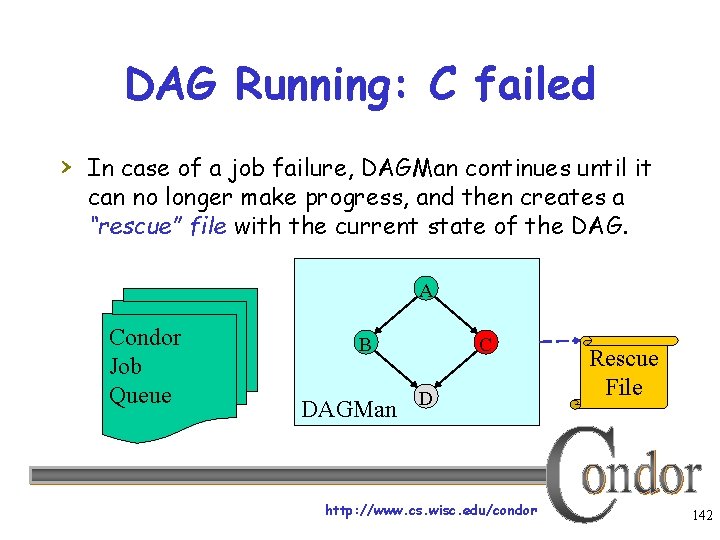

DAG Running: C failed › In case of a job failure, DAGMan continues until it can no longer make progress, and then creates a “rescue” file with the current state of the DAG. (figure: node C has failed, D cannot run, and DAGMan writes a rescue file)

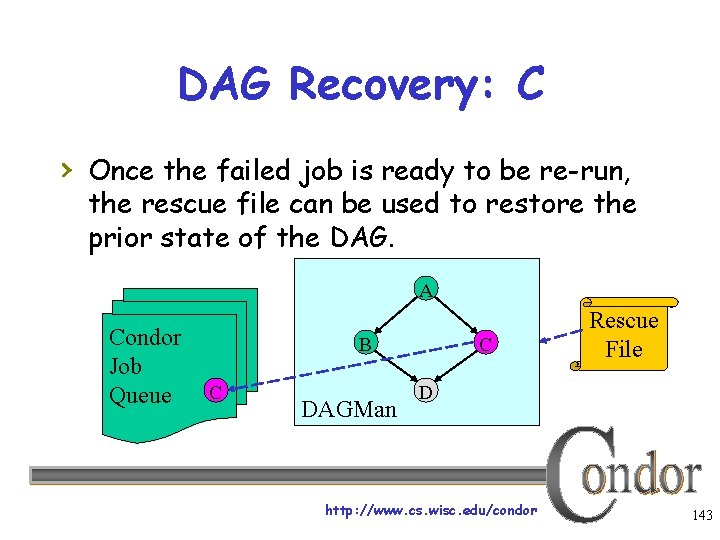

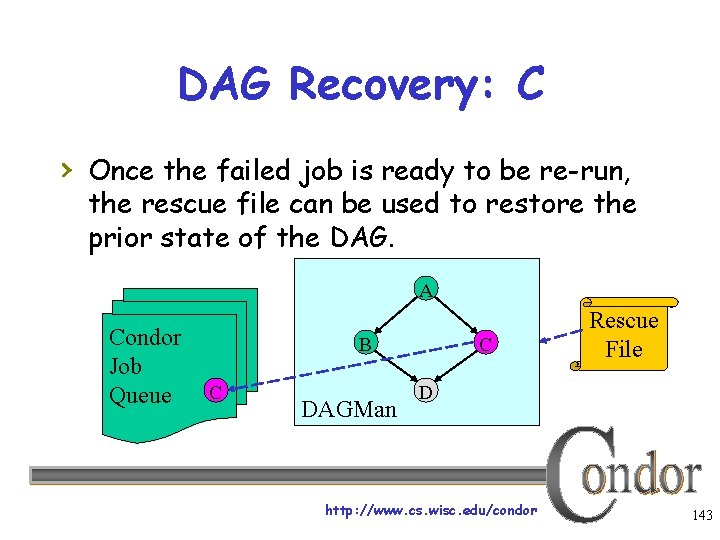

DAG Recovery: C › Once the failed job is ready to be re-run, the rescue file can be used to restore the prior state of the DAG. (figure: DAGMan reads the rescue file and resubmits C to the Condor job queue)

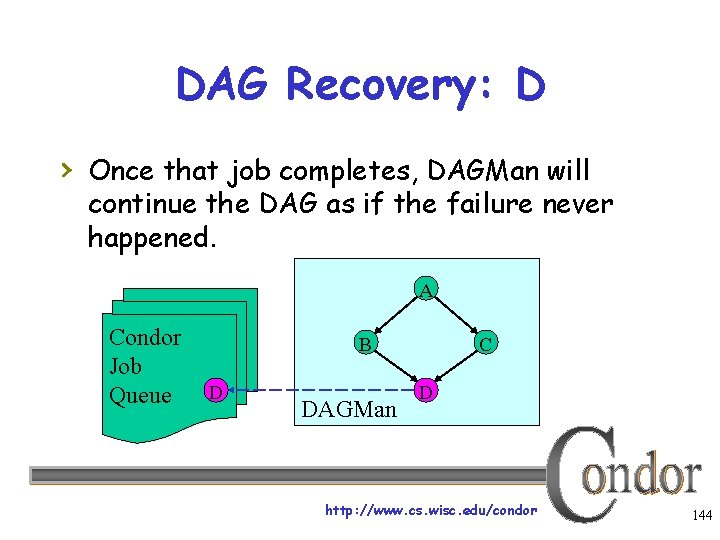

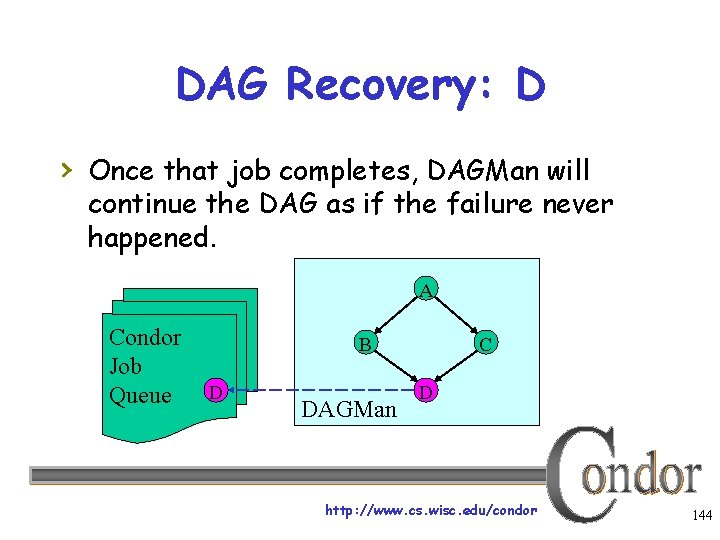

DAG Recovery: D › Once that job completes, DAGMan will continue the DAG as if the failure never happened. (figure: C is done and DAGMan submits D to the Condor job queue)
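The fail, rescue and resume sequence can be modeled in a few lines of Python. In this sketch (hypothetical names throughout), `run_dag` keeps running nodes whose parents have all succeeded until no further progress is possible, and the set of completed nodes it returns plays the role of the rescue file; passing that set back in skips the nodes that already finished. Real DAGMan writes its rescue information to disk and submits independent nodes concurrently, neither of which is modeled here.

```python
def run_dag(parents, run_node, done=()):
    """Run every node whose parents have all succeeded, skipping nodes in `done`.

    parents maps each node to the set of its parent nodes; run_node(name)
    returns True on success. Returns the set of completed nodes (the "rescue" state).
    """
    done, failed = set(done), set()
    progress = True
    while progress:  # stop once no more progress is possible
        progress = False
        for node in sorted(parents):
            if node in done or node in failed or not parents[node] <= done:
                continue
            if run_node(node):
                done.add(node)
            else:
                failed.add(node)
            progress = True
    return done


diamond = {"A": set(), "B": {"A"}, "C": {"A"}, "D": {"B", "C"}}
attempts = []


def first_try(node):
    attempts.append(node)
    return node != "C"  # simulate node C failing


rescue = run_dag(diamond, first_try)
print(sorted(rescue))  # ['A', 'B']: D never ran because C failed


def second_try(node):
    attempts.append(node)
    return True  # the problem with C has been fixed


print(sorted(run_dag(diamond, second_try, done=rescue)))  # ['A', 'B', 'C', 'D']
print(attempts)  # ['A', 'B', 'C', 'C', 'D']: A and B were not re-run
```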

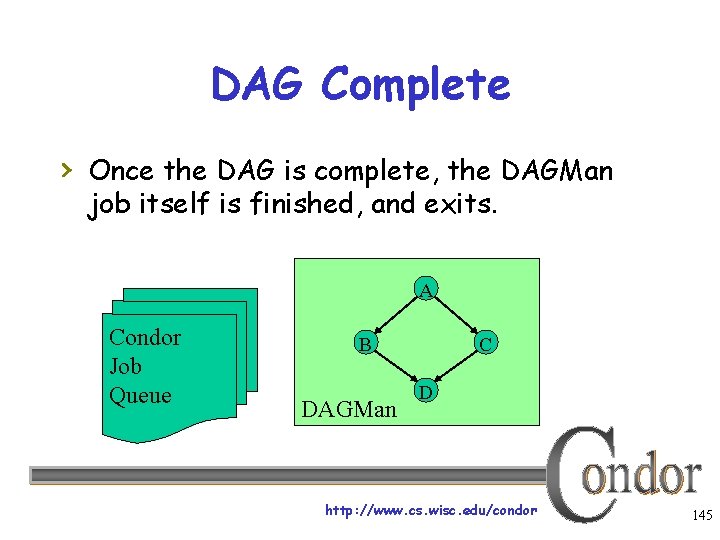

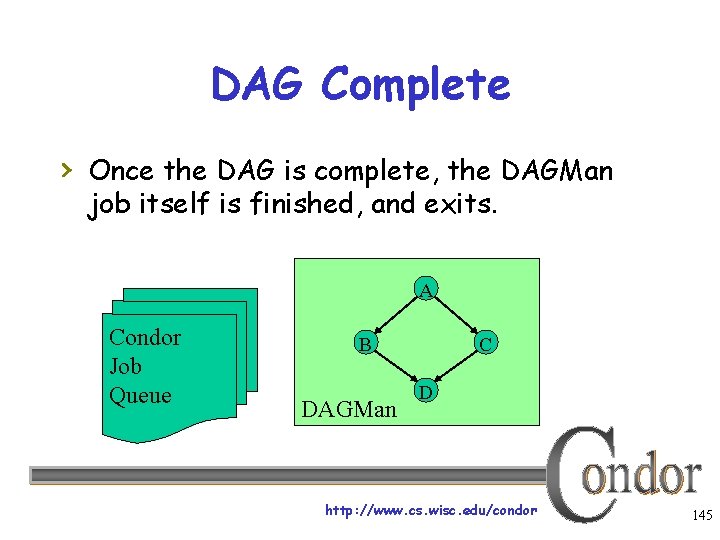

DAG Complete › Once the DAG is complete, the DAGMan job itself is finished, and exits. (figure: nodes A through D are all done)

Additional DAGMan Features › Provides other handy features for job management: nodes can have PRE & POST scripts; failed nodes can be automatically retried a configurable number of times; job submission can be “throttled”
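Retries and throttling are easy to picture with a small simulation. In DAGMan they are configured declaratively (RETRY lines in the .dag file and the -maxjobs option of condor_submit_dag); the Python below only imitates the behavior, assuming independent nodes and a hypothetical `flaky_job` that fails once. A thread pool with `max_workers=max_jobs` caps how many nodes are in flight at once, and each node is attempted up to 1 + retries times.

```python
import threading
import time
from concurrent.futures import ThreadPoolExecutor


def run_node(name, attempt_fn, retries):
    """Try a node up to 1 + retries times; return True as soon as one attempt succeeds."""
    for attempt in range(1 + retries):
        if attempt_fn(name, attempt):
            return True
    return False


def run_throttled(nodes, attempt_fn, retries=2, max_jobs=2):
    """Run independent nodes with at most max_jobs of them in flight at once."""
    with ThreadPoolExecutor(max_workers=max_jobs) as pool:
        results = list(pool.map(lambda n: run_node(n, attempt_fn, retries), nodes))
    return dict(zip(nodes, results))


lock = threading.Lock()
in_flight = 0  # attempts currently "running"
peak = 0       # highest value in_flight ever reached


def flaky_job(name, attempt):
    """Hypothetical job: node 'n3' fails on its first attempt and succeeds on a retry."""
    global in_flight, peak
    with lock:
        in_flight += 1
        peak = max(peak, in_flight)
    time.sleep(0.01)  # pretend to do some work
    with lock:
        in_flight -= 1
    return not (name == "n3" and attempt == 0)


nodes = ["n1", "n2", "n3", "n4", "n5"]
print(run_throttled(nodes, flaky_job))  # every node succeeds; n3 needed one retry
print("peak concurrency:", peak)        # never more than max_jobs = 2
```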

Want more from DAGMan? › What if: you want to define workflows that can flexibly take advantage of different grid resources? You want to register data products in a way that makes them available to others? You want to use the grid without a full Condor installation?

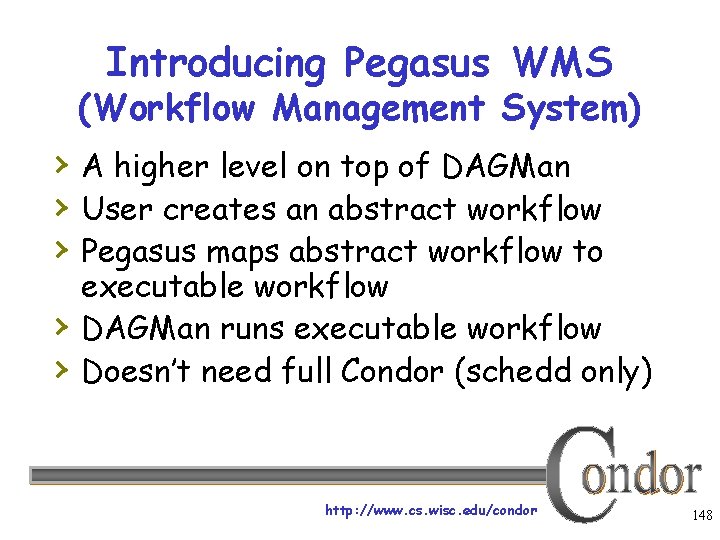

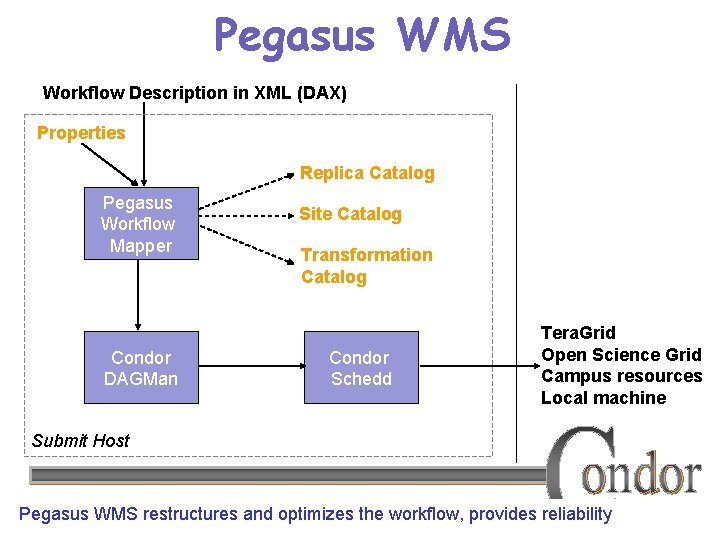

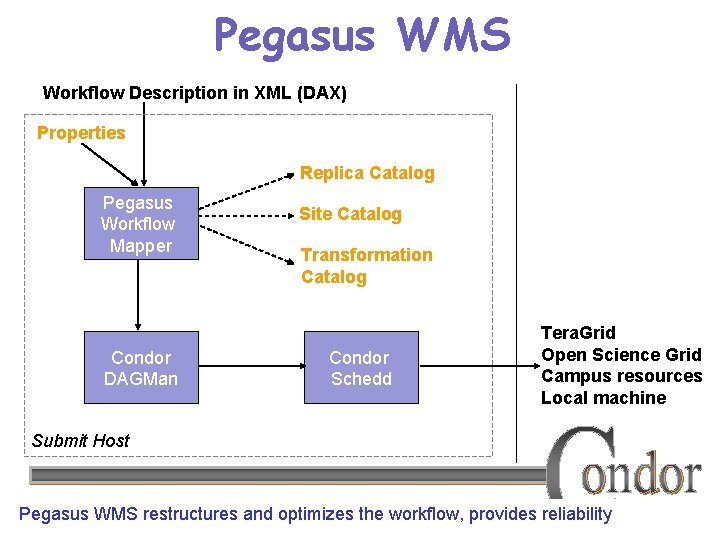

Introducing Pegasus WMS (Workflow Management System) › A higher level on top of DAGMan › User creates an abstract workflow › Pegasus maps the abstract workflow to an executable workflow › DAGMan runs the executable workflow › Doesn’t need full Condor (schedd only)

Pegasus Features › Workflow has inter-job dependencies (similar to DAGMan) › Pegasus can map jobs to grid sites › Pegasus handles discovery and registration of data products › Pegasus handles data transfer to/from grid sites

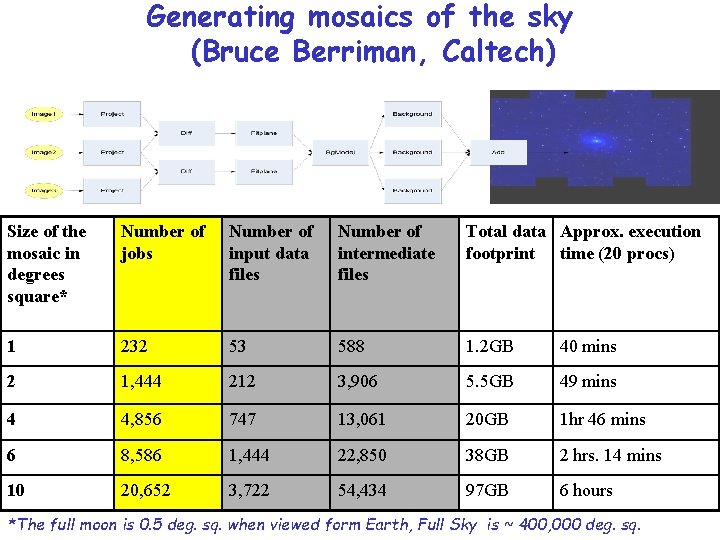

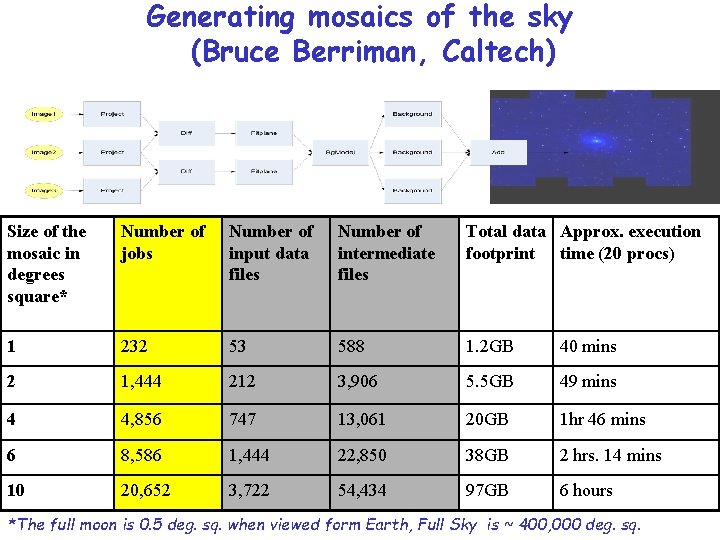

Generating mosaics of the sky (Bruce Berriman, Caltech)

| Size of the mosaic in degrees square* | Number of jobs | Number of input data files | Number of intermediate files | Total data footprint | Approx. execution time (20 procs) |
|---|---|---|---|---|---|
| 1 | 232 | 53 | 588 | 1.2 GB | 40 mins |
| 2 | 1,444 | 212 | 3,906 | 5.5 GB | 49 mins |
| 4 | 4,856 | 747 | 13,061 | 20 GB | 1 hr 46 mins |
| 6 | 8,586 | 1,444 | 22,850 | 38 GB | 2 hrs 14 mins |
| 10 | 20,652 | 3,722 | 54,434 | 97 GB | 6 hours |

*The full moon is about 0.5 degrees across when viewed from Earth; the full sky is ~41,000 deg. sq.

Abstract Workflow (DAX) › Pegasus workflow description: DAX › In XML • Java API to generate XML for you › Workflow “high-level language” › Devoid of resource descriptions › Devoid of data locations › Refers to codes as logical transformations › Refers to data as logical files › Specifies dependencies • Akin to a high-level DAG file

Basic Workflow Mapping › Mapping is translating an abstract DAX into an executable DAG › Select where to run the computations: change task nodes into nodes with executable descriptions › Select which data to access: add stage-in and stage-out nodes to move data › Add nodes that register the newly-created data products › Add nodes to create an execution directory on a remote site › Write out the workflow in a form understandable by a workflow engine, including provenance capture steps

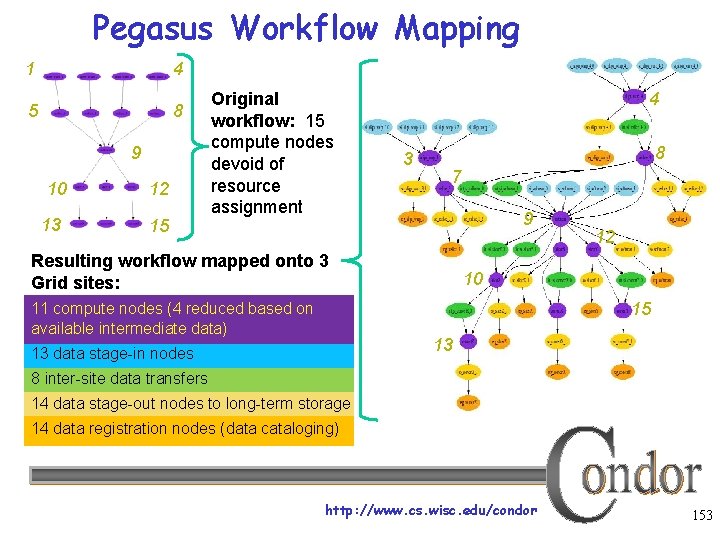

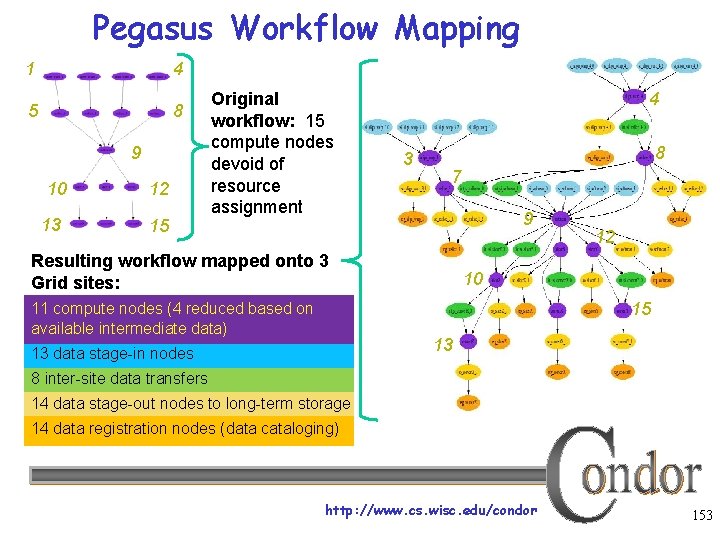

Pegasus Workflow Mapping (figure: an original workflow of 15 compute nodes devoid of resource assignment, and the resulting workflow mapped onto 3 Grid sites) › Resulting workflow: 11 compute nodes (4 reduced based on available intermediate data) › 13 data stage-in nodes › 8 inter-site data transfers › 14 data stage-out nodes to long-term storage › 14 data registration nodes (data cataloging)
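The "4 reduced based on available intermediate data" step can be illustrated with a deliberately simplified rule: drop any compute task whose outputs are all already registered somewhere. The Python sketch below applies only that rule; it is not Pegasus's actual reduction algorithm, it does not add the stage-in nodes that the remaining tasks would then need, and the task and file names are hypothetical.

```python
def reduce_workflow(tasks, available):
    """Drop compute tasks whose output files are all already available.

    tasks maps a task name to the set of logical file names it produces;
    available is the set of logical files already registered somewhere.
    """
    return {
        name: outputs
        for name, outputs in tasks.items()
        if not (outputs and outputs <= available)  # keep tasks with missing outputs
    }


# Hypothetical four-task workflow; two intermediate files already exist
tasks = {
    "project_1": {"p1.fits"},
    "project_2": {"p2.fits"},
    "diff_12": {"d12.fits"},
    "add": {"mosaic.fits"},
}
reduced = reduce_workflow(tasks, available={"p1.fits", "p2.fits"})
print(sorted(reduced))  # ['add', 'diff_12']
```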

Pegasus WMS (figure: components on the submit host include the workflow description in XML (DAX), properties, the Replica Catalog, Site Catalog and Transformation Catalog, the Pegasus Workflow Mapper, Condor DAGMan and the Condor schedd; target resources are TeraGrid, Open Science Grid, campus resources and the local machine) › Pegasus WMS restructures and optimizes the workflow, and provides reliability

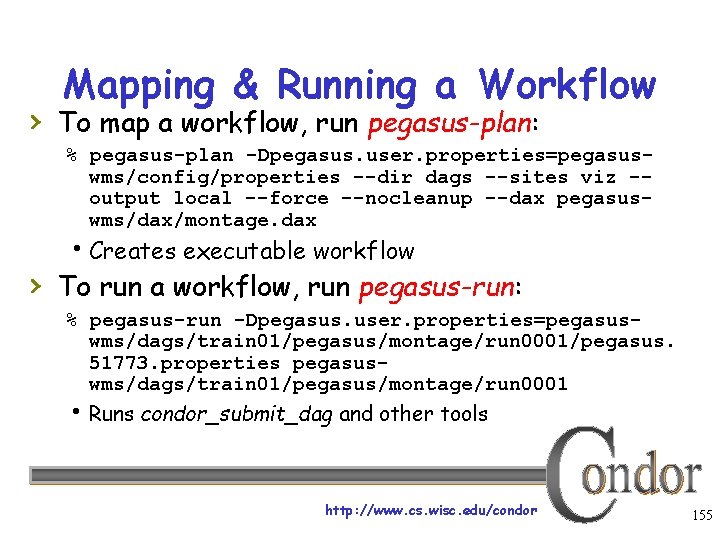

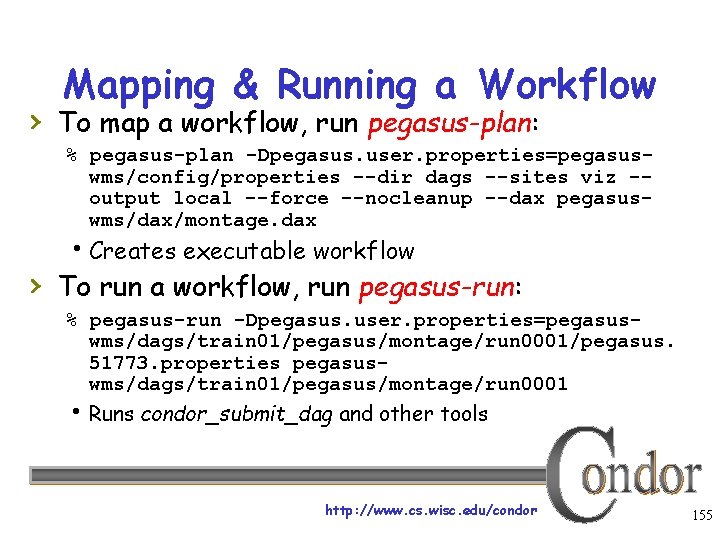

Mapping & Running a Workflow › To map a workflow, run pegasus-plan: % pegasus-plan -Dpegasus.user.properties=pegasuswms/config/properties --dir dags --sites viz -output local --force --nocleanup --dax pegasuswms/dax/montage.dax › This creates the executable workflow › To run a workflow, run pegasus-run: % pegasus-run -Dpegasus.user.properties=pegasuswms/dags/train01/pegasus/montage/run0001/pegasus.51773.properties pegasuswms/dags/train01/pegasus/montage/run0001 › This runs condor_submit_dag and other tools

There’s much more… › We’ve only scratched the surface of Pegasus’s capabilities › Resources: Pegasus home page: pegasus.isi.edu; tutorial materials available at: http://pegasus.isi.edu/tutorials.php; questions: pegasus@isi.edu › Also, talk to Kent Wenger (Condor Team) • wenger@cs.wisc.edu

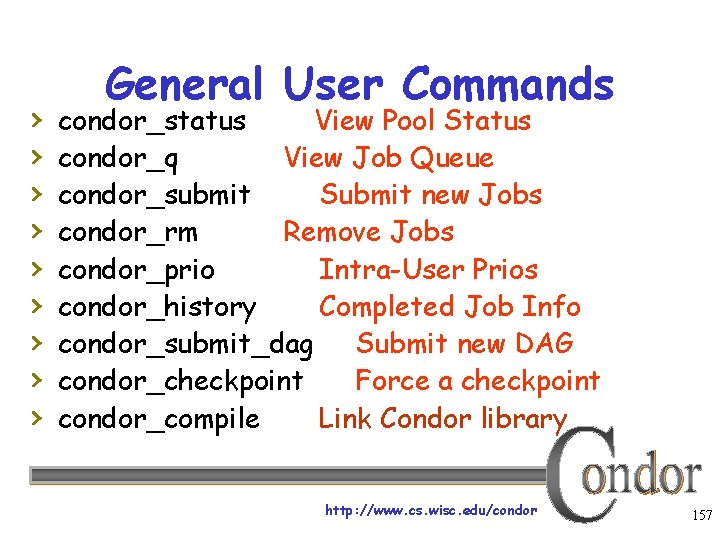

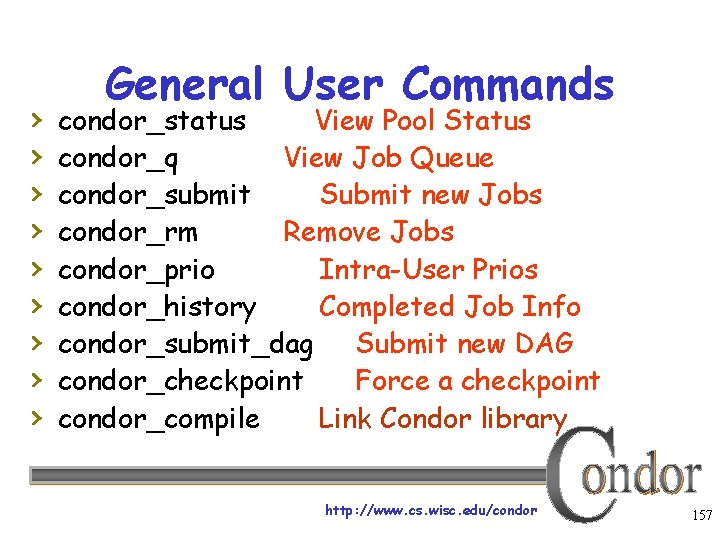

General User Commands

| Command | Purpose |
|---|---|
| condor_status | View Pool Status |
| condor_q | View Job Queue |
| condor_submit | Submit new Jobs |
| condor_rm | Remove Jobs |
| condor_prio | Intra-User Prios |
| condor_history | Completed Job Info |
| condor_submit_dag | Submit new DAG |
| condor_checkpoint | Force a checkpoint |
| condor_compile | Link Condor library |

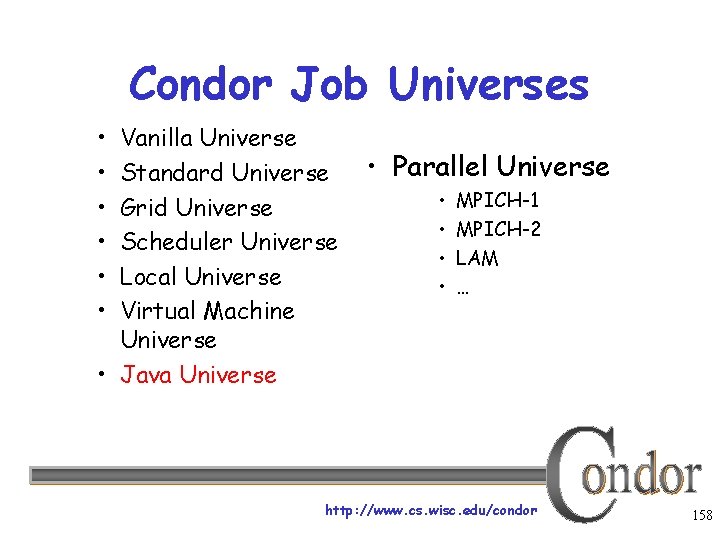

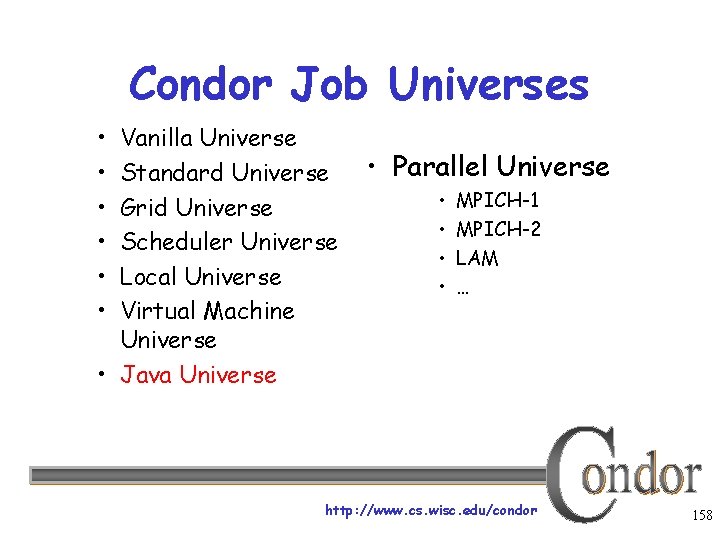

Condor Job Universes • Vanilla Universe • Standard Universe • Grid Universe • Scheduler Universe • Local Universe • Virtual Machine Universe • Java Universe • Parallel Universe (MPICH-1, MPICH-2, LAM, …)

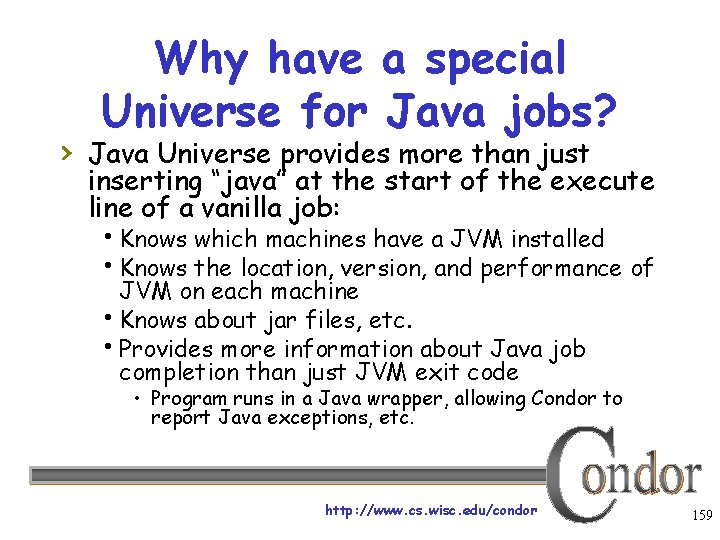

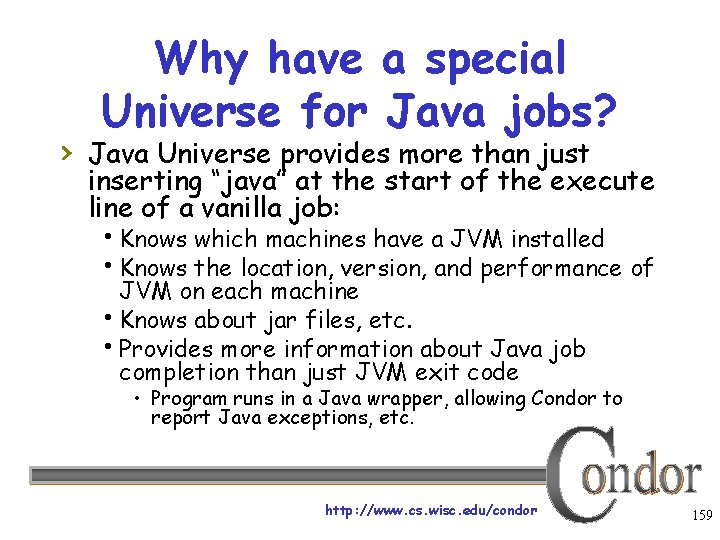

Why have a special Universe for Java jobs? › Java Universe provides more than just inserting “java” at the start of the execute line of a vanilla job: it knows which machines have a JVM installed; knows the location, version, and performance of the JVM on each machine; knows about jar files, etc.; provides more information about Java job completion than just the JVM exit code • The program runs in a Java wrapper, allowing Condor to report Java exceptions, etc.

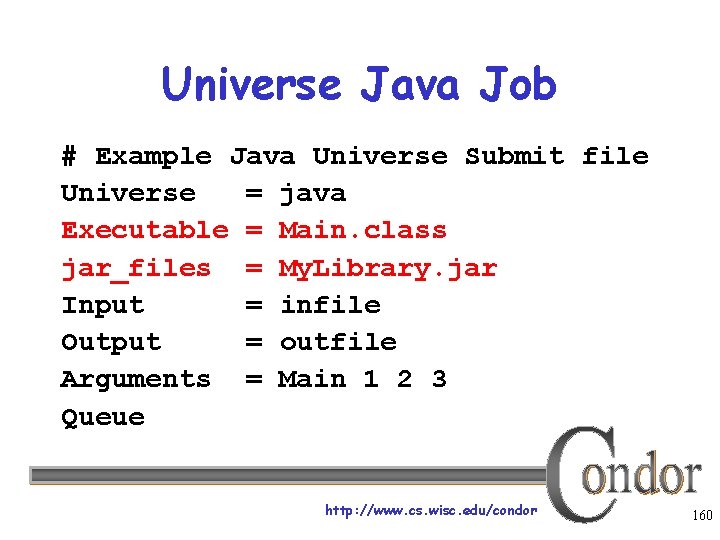

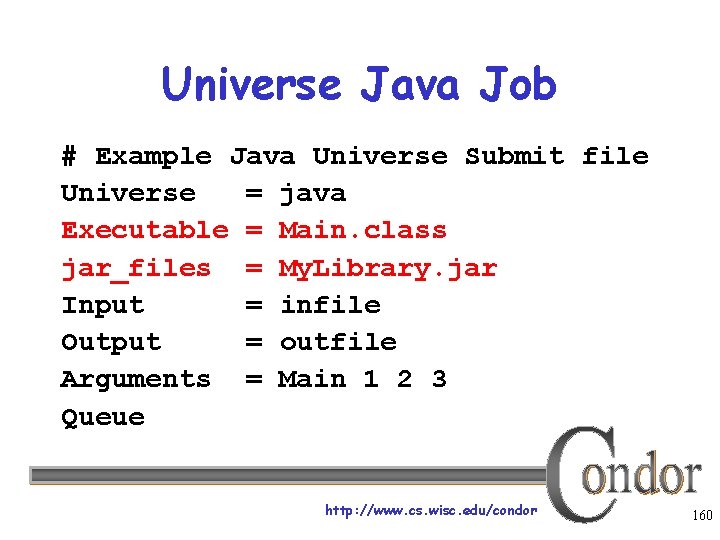

Universe Java Job › Example Java Universe submit file:
Universe = java
Executable = Main.class
jar_files = MyLibrary.jar
Input = infile
Output = outfile
Arguments = Main 1 2 3
Queue

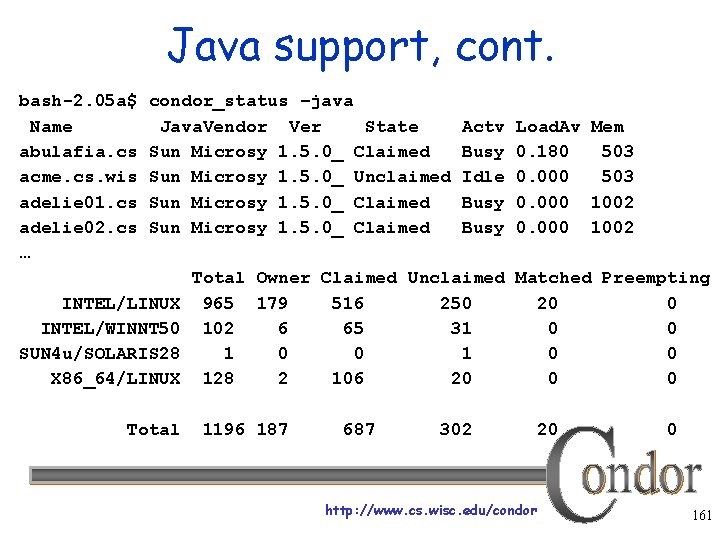

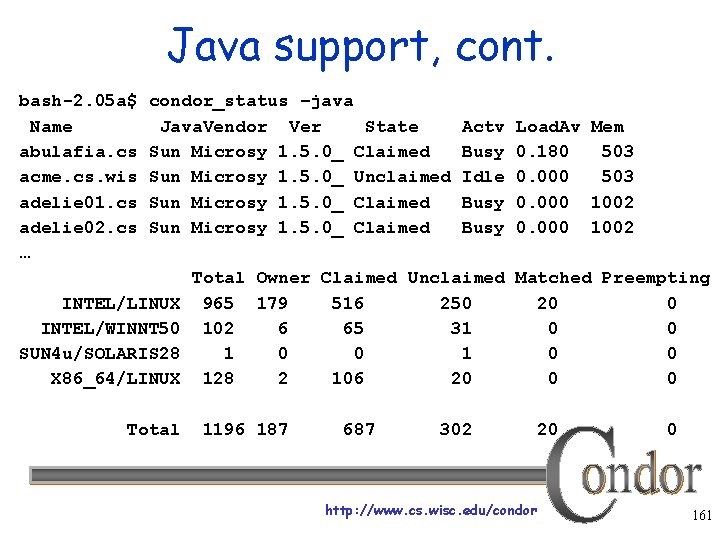

Java support, cont.

bash-2.05a$ condor_status -java

| Name | JavaVendor | Ver | State | Actv | LoadAv | Mem |
|---|---|---|---|---|---|---|
| abulafia.cs | Sun Microsy | 1.5.0_ | Claimed | Busy | 0.180 | 503 |
| acme.cs.wis | Sun Microsy | 1.5.0_ | Unclaimed | Idle | 0.000 | 1002 |
| adelie01.cs | Sun Microsy | 1.5.0_ | Claimed | Busy | | |
| adelie02.cs | | | | | | |
| … | | | | | | |

| | Total | Owner | Claimed | Unclaimed | Matched | Preempting |
|---|---|---|---|---|---|---|
| INTEL/LINUX | 965 | 179 | 516 | 250 | 20 | 0 |
| INTEL/WINNT50 | 102 | 6 | 65 | 31 | 0 | 0 |
| SUN4u/SOLARIS28 | 128 | 2 | 106 | 20 | 0 | 0 |
| X86_64/LINUX | 1 | 0 | 0 | 1 | 0 | 0 |
| Total | 1196 | 187 | 687 | 302 | 20 | 0 |

Albert wants Condor features on remote resources › He wants to run standard universe jobs on Grid-managed resources: for matchmaking and dynamic scheduling of jobs, for job checkpointing and migration, and for remote system calls

Condor GlideIn › Albert can use the Grid Universe to run Condor daemons on Grid resources › When the resources run these GlideIn jobs, they will temporarily join his Condor Pool › He can then submit Standard, Vanilla, PVM, or MPI Universe jobs and they will be matched and run on the remote resources › Currently only supports Globus GT2; we hope to fix this limitation

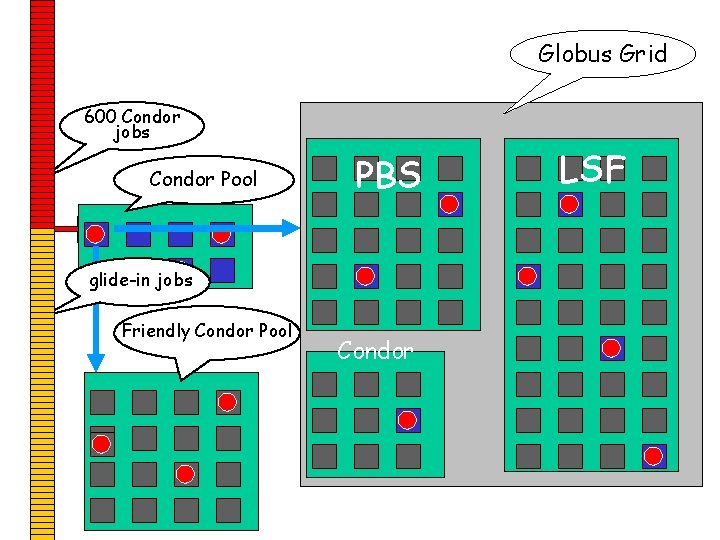

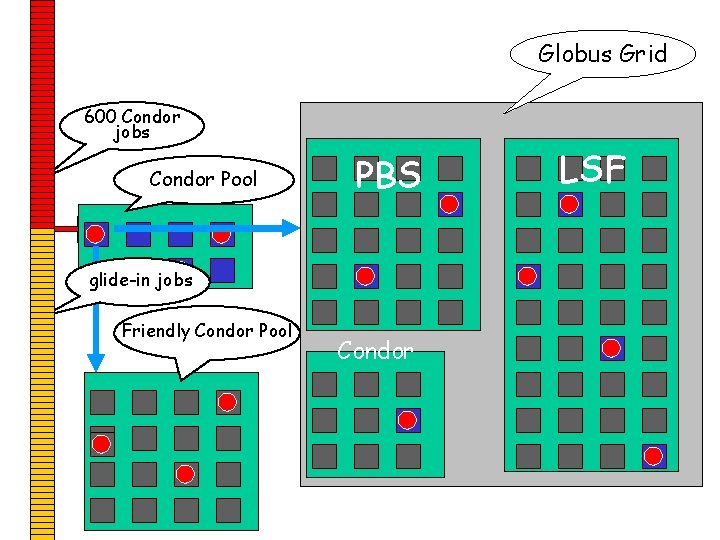

(figure: 600 Condor jobs from the personal Condor on your workstation run in your pool, in a friendly Condor pool, and as glide-in jobs on Globus Grid resources managed by Condor, PBS and LSF)

GlideIn Concerns › What if the remote resource kills my GlideIn job? That resource will disappear from your pool and your jobs will be rescheduled on other machines; Standard universe jobs will resume from their last checkpoint like usual › What if all my jobs are completed before a GlideIn job runs? If a GlideIn Condor daemon is not matched with a job in 10 minutes, it terminates, freeing the resource

In Review › With Condor’s help, Albert can: › Manage his compute job workload › Access local machines › Access remote Condor Pools via flocking › Access remote compute resources on the Grid via “Grid Universe” jobs › Carve out his own personal Condor Pool from the Grid with GlideIn technology

Administrator Commands

| Command | Purpose |
|---|---|
| condor_vacate | Leave a machine now |
| condor_on | Start Condor |
| condor_off | Stop Condor |
| condor_reconfig | Reconfig on-the-fly |
| condor_config_val | View/set config |
| condor_userprio | User Priorities |
| condor_stats | View detailed usage accounting stats |

My boss wants to watch what Condor is doing

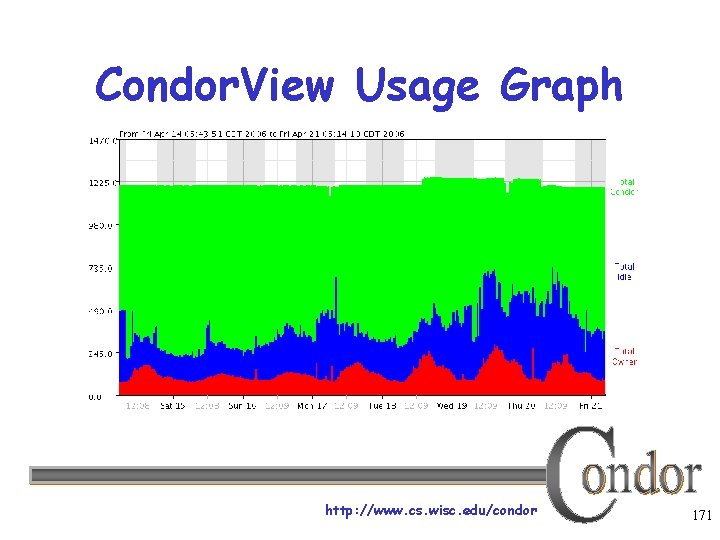

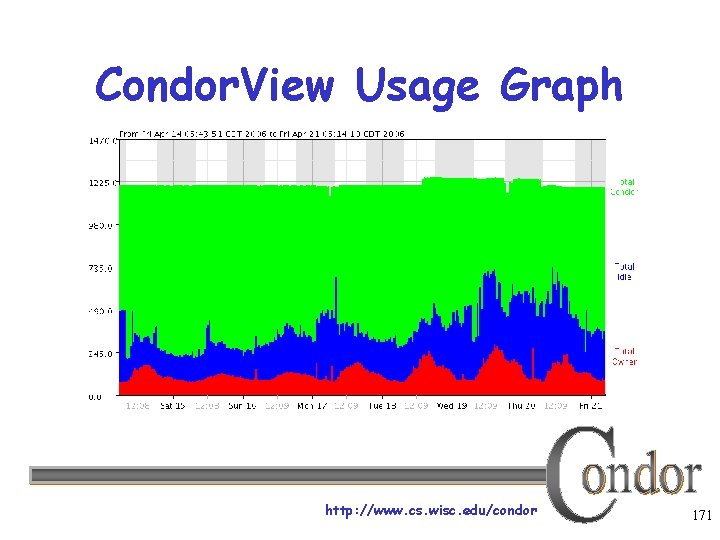

Use CondorView! › Provides visual graphs of current and past utilization › Data is derived from Condor's own accounting statistics › Interactive Java applet › Quickly and easily view: how much Condor is being used; how many cycles are being delivered; who is using them; utilization by machine platform or by user

CondorView Usage Graph

I could also talk lots about… › GCB: Living with firewalls & private networks › Federated Grids/Clusters › APIs and Portals › MW › Database Support (Quill) › High Availability Fail-over › Compute On-Demand (COD) › Dynamic Pool Creation (“Glide-in”) › Role-based prioritization and accounting › Strong security, incl. privilege separation › Data movement scheduling in workflows › …

Thank you! Check us out on the Web: http://www.condorproject.org Email: condor-admin@cs.wisc.edu