User-friendly Parallelization of GAUDI Applications with Python (CHEP 09)



User-friendly Parallelization of GAUDI Applications with Python

CHEP 09, 22-27 March 2009, Prague

Pere Mato (CERN), Eoin Smith (CERN)

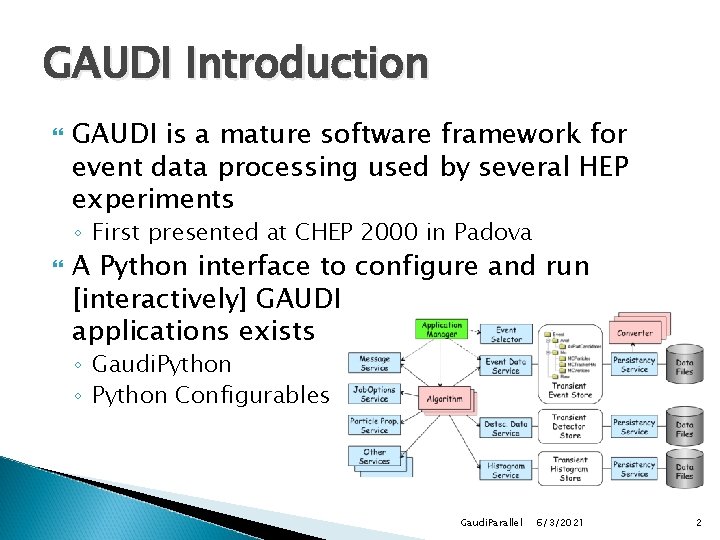

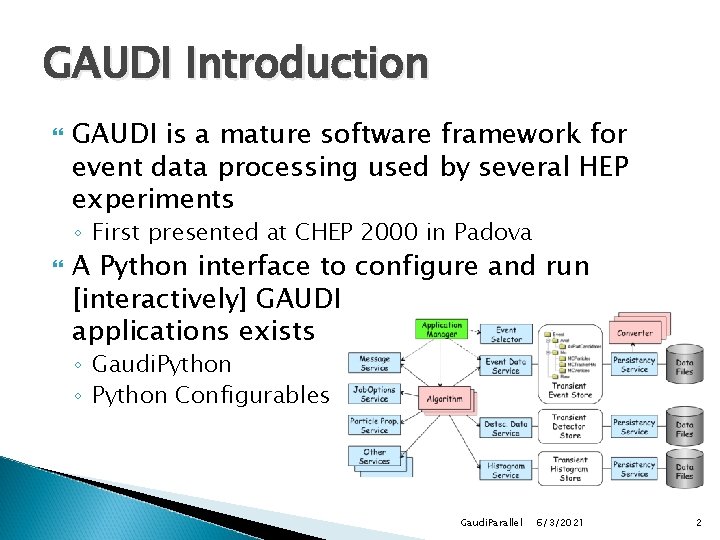

GAUDI Introduction

- GAUDI is a mature software framework for event data processing used by several HEP experiments
  - First presented at CHEP 2000 in Padova
- A Python interface to configure and run GAUDI applications [interactively] exists
  - GaudiPython
  - Python Configurables

GaudiParallel 6/3/2021 2

Motivation

- Work done in the context of the R&D project to exploit multi-core architectures
- Main goal
  - Get quicker responses when having 4-8 core machines at your disposal
  - Minimal (and understandable) changes to the Python scripts driving the program
- Leverage existing third-party Python modules
  - processing module, parallel python (pp) module
- Optimization of memory usage

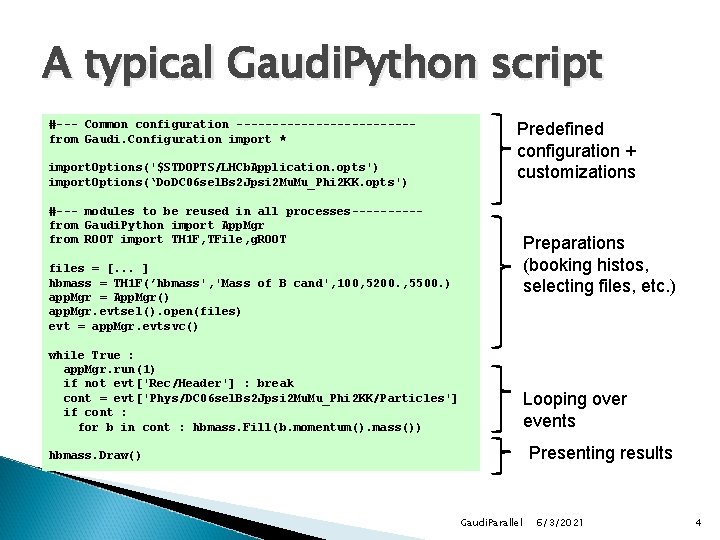

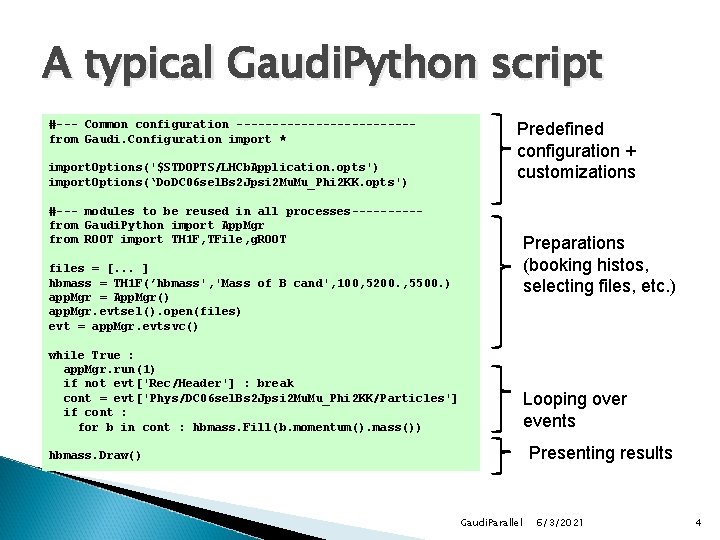

A typical GaudiPython script

    #--- Common configuration -------------
    # Predefined configuration + customizations
    from Gaudi.Configuration import *
    importOptions('$STDOPTS/LHCbApplication.opts')
    importOptions('DoDC06selBs2Jpsi2MuMu_Phi2KK.opts')

    #--- modules to be reused in all processes ------
    from GaudiPython import AppMgr
    from ROOT import TH1F, TFile, gROOT

    # Preparations (booking histos, selecting files, etc.)
    files = [...]
    hbmass = TH1F('hbmass', 'Mass of B cand', 100, 5200., 5500.)

    # Looping over events
    appMgr = AppMgr()
    appMgr.evtsel().open(files)
    evt = appMgr.evtsvc()
    while True:
        appMgr.run(1)
        if not evt['Rec/Header']:
            break
        cont = evt['Phys/DC06selBs2Jpsi2MuMu_Phi2KK/Particles']
        if cont:
            for b in cont:
                hbmass.Fill(b.momentum().mass())

    # Presenting results
    hbmass.Draw()

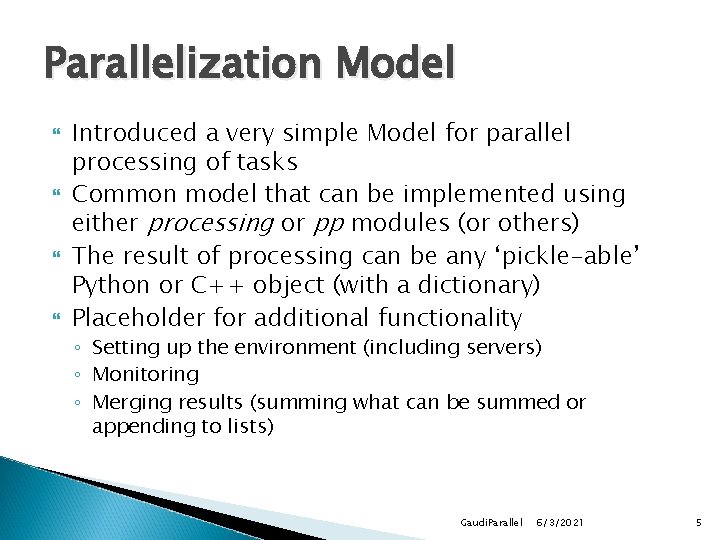

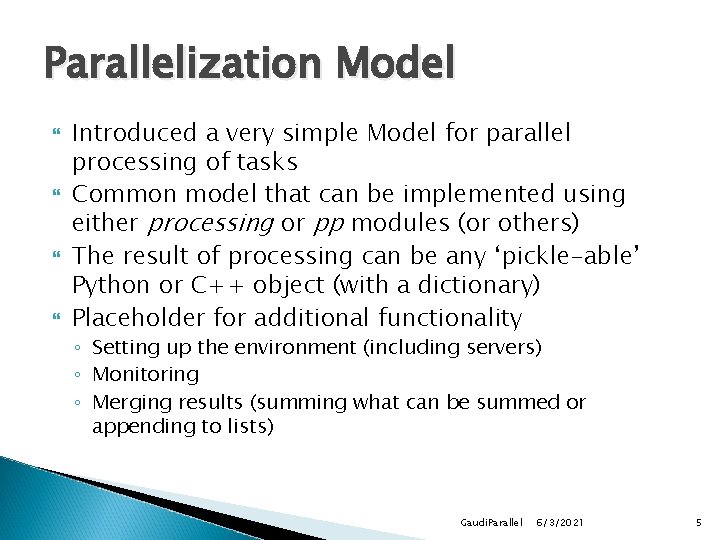

Parallelization Model

- Introduced a very simple model for parallel processing of tasks
- Common model that can be implemented using either the processing or pp modules (or others)
- The result of processing can be any 'pickle-able' Python or C++ object (with a dictionary)
- Placeholder for additional functionality
  - Setting up the environment (including servers)
  - Monitoring
  - Merging results (summing what can be summed or appending to lists)
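The merging rule ("summing what can be summed or appending to lists") can be illustrated with a small sketch. The function name `merge` and the dict/list/number dispatch are assumptions made here for illustration; the slides do not show how the actual merging is implemented, and ROOT histograms (which would be combined differently) are not covered.

```python
def merge(total, partial):
    """Merge one worker result into the running total (illustrative only)."""
    if total is None:
        # Nothing accumulated yet: the first partial result becomes the total.
        return partial
    if isinstance(total, dict):
        # Merge dictionaries key by key, recursing into the values.
        merged = dict(total)
        for key, value in partial.items():
            merged[key] = merge(merged.get(key), value)
        return merged
    if isinstance(total, list):
        # Lists are appended to rather than summed element-wise.
        return total + partial
    # Numbers (or any object defining __add__) are summed.
    return total + partial


# Two hypothetical worker results combined into one.
combined = merge({'nEvents': 10, 'files': ['a.dst']},
                 {'nEvents': 5, 'files': ['b.dst'], 'nErrors': 1})
print(combined)
```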

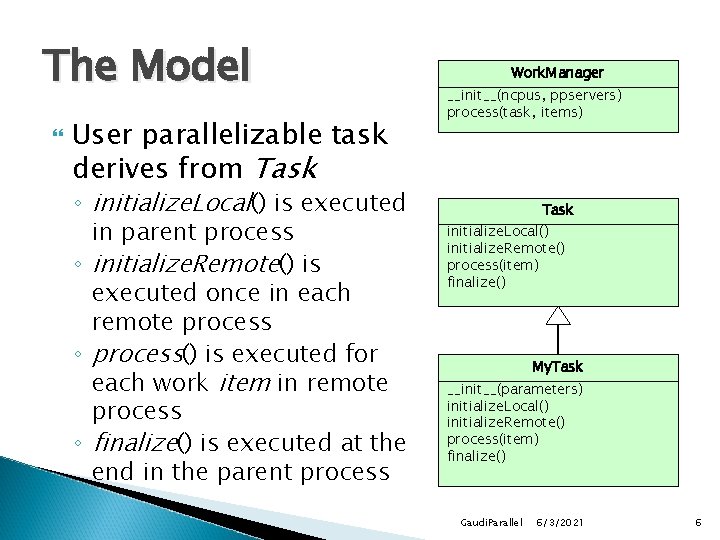

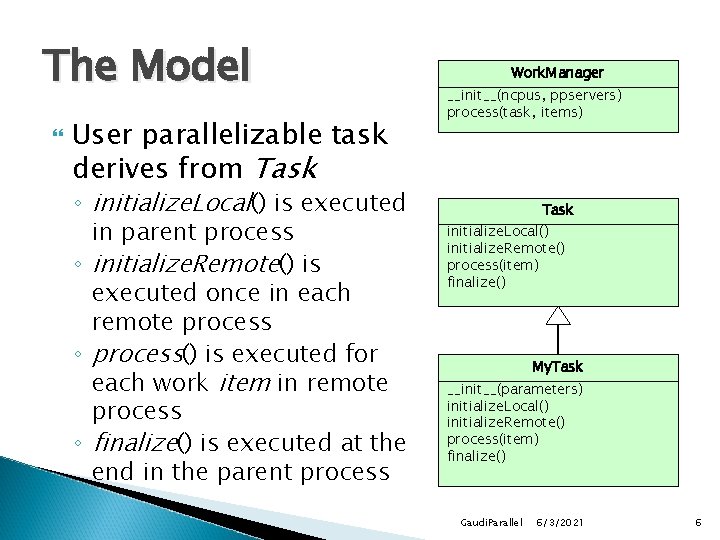

The Model

- A user's parallelizable task derives from Task
  - initializeLocal() is executed in the parent process
  - initializeRemote() is executed once in each remote process
  - process() is executed for each work item in a remote process
  - finalize() is executed at the end in the parent process

Class diagram:

- WorkManager: `__init__(ncpus, ppservers)`, process(task, items)
- Task: initializeLocal(), initializeRemote(), process(item), finalize()
- MyTask (derives from Task): `__init__(parameters)`, initializeLocal(), initializeRemote(), process(item), finalize()
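The order in which the four hooks are called can be sketched without GAUDI. This is not the GaudiPython.Parallel implementation: `SerialWorkManager` and `SumSquares` are hypothetical names, everything runs in a single process, and `copy.deepcopy` stands in for the pickling that would ship the task to real worker processes. Results are assumed to support `+` for merging.

```python
import copy


class Task:
    """Stand-in for the Task base class described above."""
    def initializeLocal(self): pass
    def initializeRemote(self): pass
    def process(self, item): raise NotImplementedError
    def finalize(self): pass


class SerialWorkManager:
    """Emulates the hook order in one process; no real parallelism."""
    def __init__(self, ncpus=2):
        self.ncpus = ncpus

    def process(self, task, items):
        task.initializeLocal()                      # once, in the parent
        # Each emulated worker receives its own copy of the task.
        workers = [copy.deepcopy(task) for _ in range(self.ncpus)]
        for worker in workers:
            worker.initializeRemote()               # once per worker
        for i, item in enumerate(items):
            workers[i % self.ncpus].process(item)   # once per work item
        for worker in workers:
            task.output = task.output + worker.output  # merge partial results
        task.finalize()                             # once, in the parent


class SumSquares(Task):
    def initializeLocal(self):
        self.output = 0
    def process(self, item):
        self.output += item * item
    def finalize(self):
        self.result = self.output


task = SumSquares()
SerialWorkManager(ncpus=3).process(task, range(10))
print(task.result)
```

Keeping the merge in the manager rather than in the task is what lets the same user class run unchanged on a different backend.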

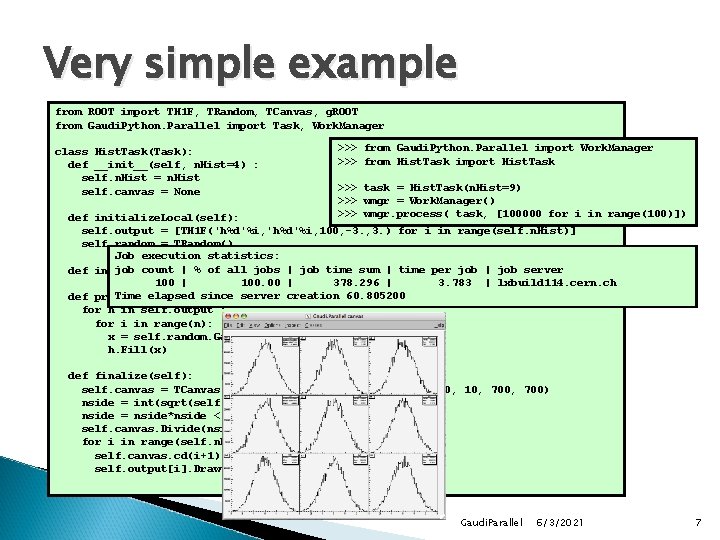

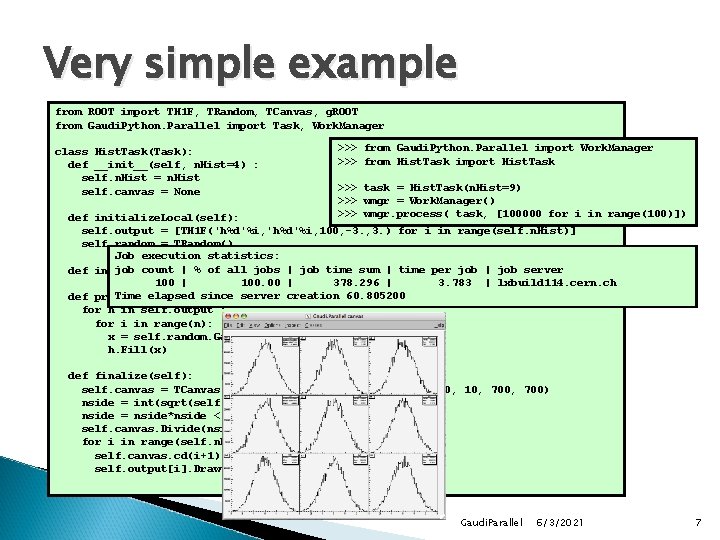

Very simple example

    from math import sqrt
    from ROOT import TH1F, TRandom, TCanvas, gROOT
    from GaudiPython.Parallel import Task, WorkManager

    class HistTask(Task):
        def __init__(self, nHist=4):
            self.nHist = nHist
            self.canvas = None

        def initializeLocal(self):
            # Book the histograms in the parent process
            self.output = [TH1F('h%d' % i, 'h%d' % i, 100, -3., 3.) for i in range(self.nHist)]
            self.random = TRandom()

        def initializeRemote(self):
            pass

        def process(self, n):
            # Fill every histogram with n Gaussian random numbers
            for h in self.output:
                for i in range(n):
                    x = self.random.Gaus(0., 1.)
                    h.Fill(x)

        def finalize(self):
            # Draw the merged histograms on a square grid of pads
            self.canvas = TCanvas('c1', 'GaudiParallel canvas', 200, 10, 700, 700)
            nside = int(sqrt(self.nHist))
            nside = nside*nside < self.nHist and nside + 1 or nside
            self.canvas.Divide(nside, nside, 0, 0)
            for i in range(self.nHist):
                self.canvas.cd(i+1)
                self.output[i].Draw()

Interactive session and the job statistics it prints:

    >>> from GaudiPython.Parallel import WorkManager
    >>> from HistTask import HistTask
    >>> task = HistTask(nHist=9)
    >>> wmgr = WorkManager()
    >>> wmgr.process(task, [100000 for i in range(100)])
    Job execution statistics:
     job count | % of all jobs | job time sum | time per job | job server
           100 |        100.00 |      378.296 |        3.783 | lxbuild114.cern.ch
    Time elapsed since server creation 60.805200

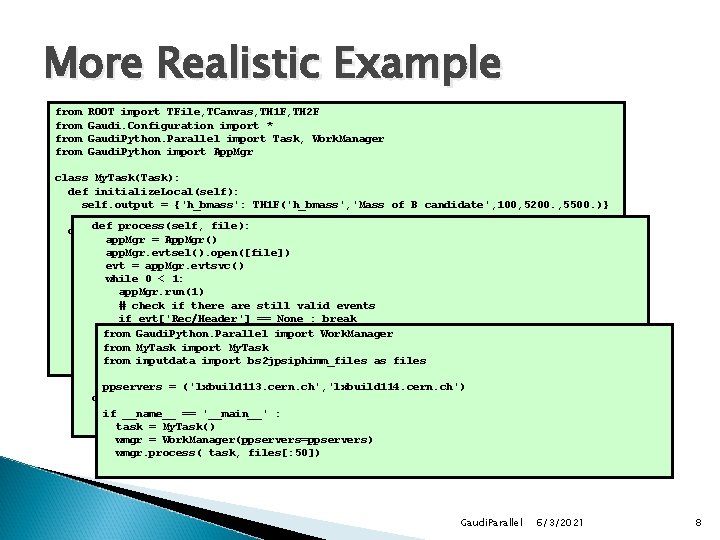

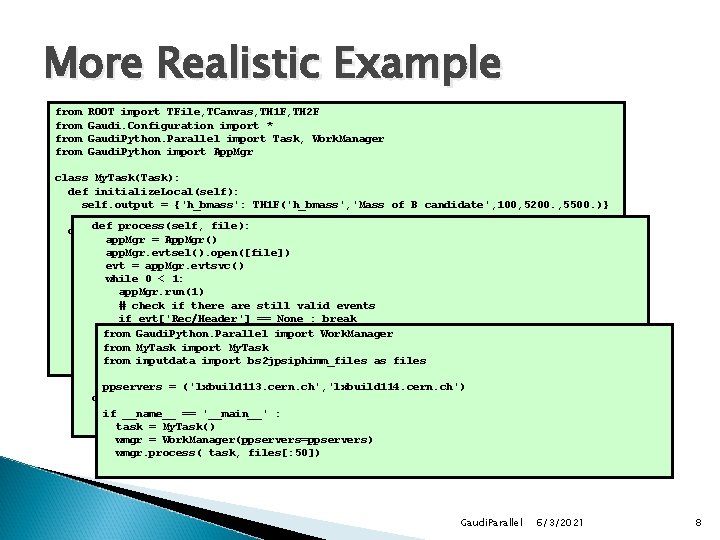

More Realistic Example

The task (MyTask.py):

    from ROOT import TFile, TCanvas, TH1F, TH2F
    from Gaudi.Configuration import *
    from GaudiPython.Parallel import Task, WorkManager
    from GaudiPython import AppMgr

    class MyTask(Task):
        def initializeLocal(self):
            self.output = {'h_bmass': TH1F('h_bmass', 'Mass of B candidate', 100, 5200., 5500.)}

        def initializeRemote(self):
            #--- Common configuration --------------------------
            importOptions('printoff.opts')
            importOptions('$STDOPTS/LHCbApplication.opts')
            importOptions('$STDOPTS/DstDicts.opts')
            importOptions('$DDDBROOT/options/DC06.opts')
            importOptions('$PANORAMIXROOT/options/Panoramix_DaVinci.opts')
            importOptions('$BS2JPSIPHIROOT/options/DoDC06selBs2Jpsi2MuMu_Phi2KK.opts')
            appConf = ApplicationMgr(OutputLevel = 999, AppName = 'Ex2_multicore')
            appConf.ExtSvc += ['DataOnDemandSvc', 'ParticlePropertySvc']

        def process(self, file):
            appMgr = AppMgr()
            appMgr.evtsel().open([file])
            evt = appMgr.evtsvc()
            while 0 < 1:
                appMgr.run(1)
                # check if there are still valid events
                if evt['Rec/Header'] == None:
                    break
                cont = evt['Phys/DC06selBs2Jpsi2MuMu_Phi2KK/Particles']
                if cont != None:
                    for b in cont:
                        success = self.output['h_bmass'].Fill(b.momentum().mass())

        def finalize(self):
            self.output['h_bmass'].Draw()
            print('Bs found: ', self.output['h_bmass'].GetEntries())

The main script:

    from GaudiPython.Parallel import WorkManager
    from MyTask import MyTask
    from inputdata import bs2jpsiphimm_files as files

    ppservers = ('lxbuild113.cern.ch', 'lxbuild114.cern.ch')

    if __name__ == '__main__':
        task = MyTask()
        wmgr = WorkManager(ppservers=ppservers)
        wmgr.process(task, files[:50])

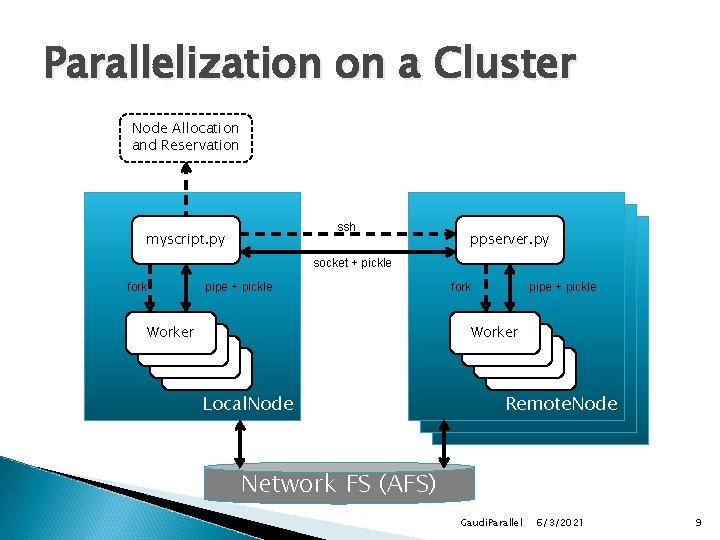

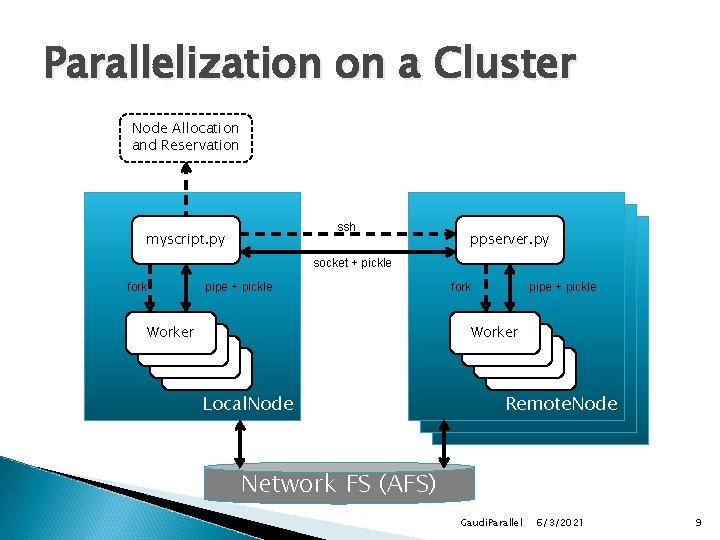

Parallelization on a Cluster

[Diagram: myscript.py on the LocalNode and ppserver.py on the RemoteNode (started via ssh), each forking Worker processes. Labels: Node Allocation and Reservation, socket + pickle, fork, pipe + pickle, Network FS (AFS).]

![Cluster Parallelization Details: the [pp]server is created/destroyed by the user script; requires ssh](https://slidetodoc.com/presentation_image_h2/50355fc0c33572919422ac189a71353c/image-10.jpg)

Cluster Parallelization Details

- The [pp]server is created/destroyed by the user script
  - Requires ssh to be properly set up (no password required)
- Ultra-lightweight setup
  - Single user, proper authentication, etc.
- The 'parent' user environment is cloned to the server
  - Requires a shared file system
- Automatic cleanup on crashes/aborts

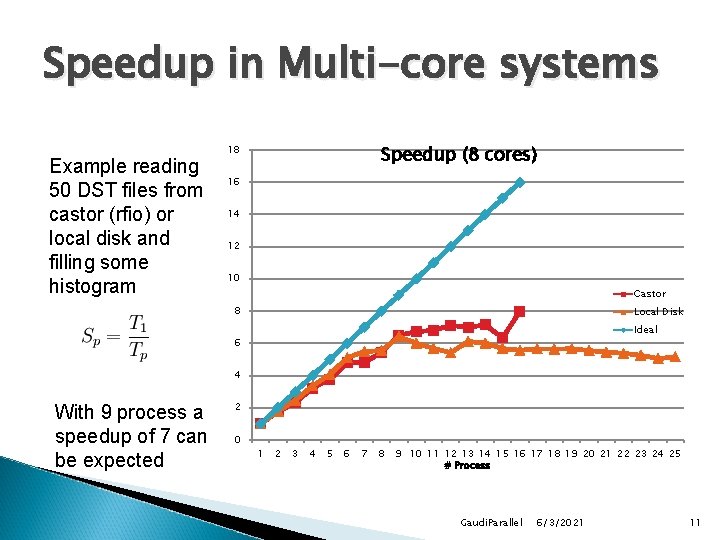

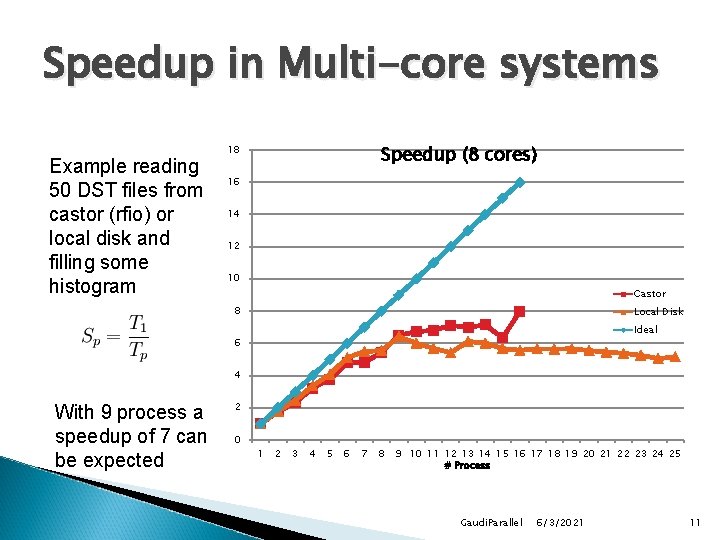

Speedup in Multi-core systems

Example reading 50 DST files from castor (rfio) or local disk and filling some histogram.

[Plot: Speedup (8 cores) versus number of processes (1 to 25); curves: Castor, Local Disk, Ideal.]

With 9 processes a speedup of 7 can be expected.
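As a reading aid, the quoted figure can be put into the usual definitions of speedup and parallel efficiency. The symbols $T_1$, $T_N$, $S$ and $E$ are notation introduced here, not taken from the slides, and the efficiency is computed relative to the 8 physical cores.

```latex
S(N) = \frac{T_1}{T_N}, \qquad
E = \frac{S(N)}{N_{\mathrm{cores}}}, \qquad
S(9) \approx 7,\; N_{\mathrm{cores}} = 8
\;\Rightarrow\; E \approx \frac{7}{8} \approx 0.88
```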

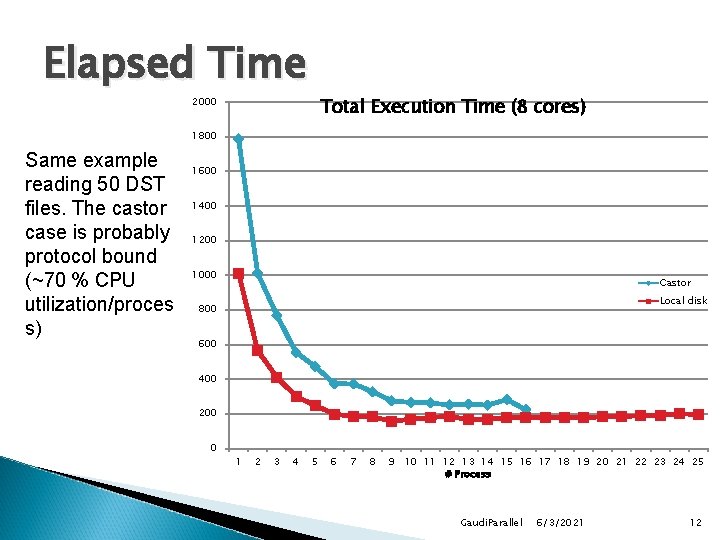

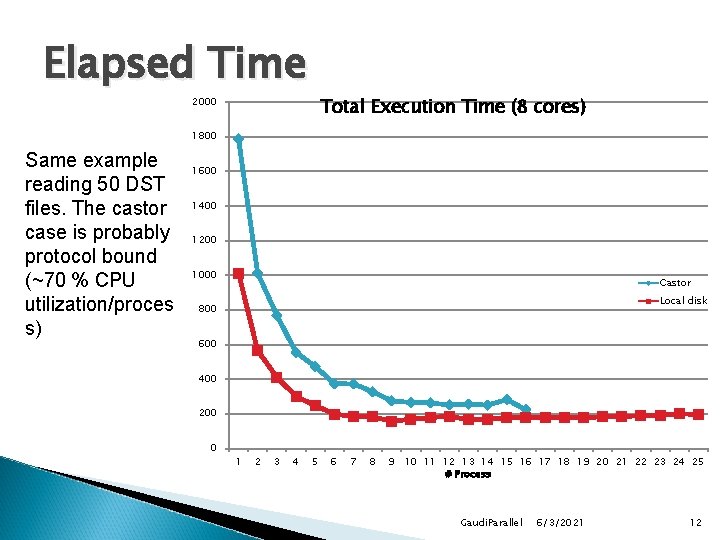

Elapsed Time

Same example reading 50 DST files. The castor case is probably protocol bound (~70% CPU utilization per process).

[Plot: Total Execution Time (8 cores) versus number of processes (1 to 25); curves: Castor, Local disk.]

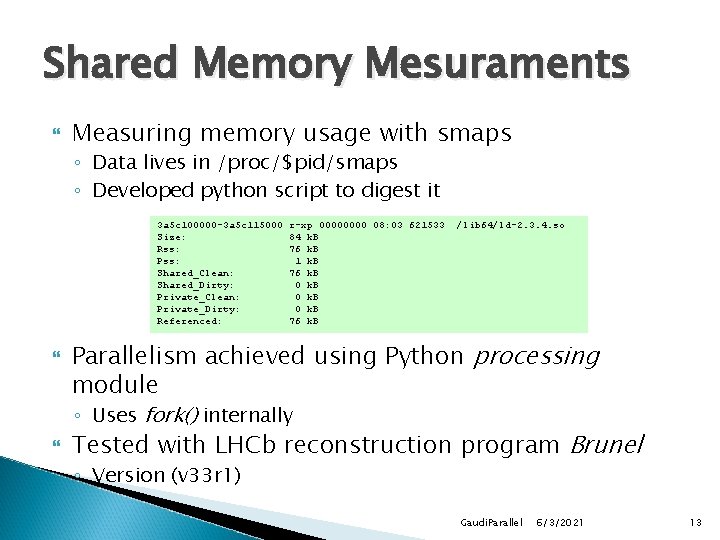

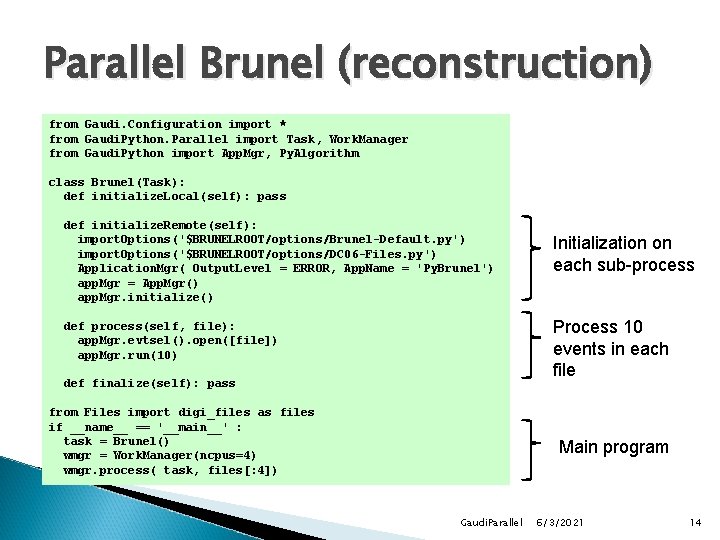

Shared Memory Measurements

- Measuring memory usage with smaps
  - Data lives in /proc/$pid/smaps
  - Developed a Python script to digest it
- Parallelism achieved using the Python processing module
  - Uses fork() internally
- Tested with the LHCb reconstruction program Brunel
  - Version v33r1

Example smaps entry:

    3a5c100000-3a5c115000 r-xp 00000000 08:03 621533    /lib64/ld-2.3.4.so
    Size:            84 kB
    Rss:             76 kB
    Pss:              1 kB
    Shared_Clean:    76 kB
    Shared_Dirty:     0 kB
    Private_Clean:    0 kB
    Private_Dirty:    0 kB
    Referenced:      76 kB
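The digesting script itself is not shown in the slides. A minimal sketch of what such a script might do, summing the per-mapping fields over all mappings of a process, could look like this. The function names `digest_smaps` and `read_smaps` are hypothetical, and the second mapping in the sample text is made up for the example.

```python
import re
from collections import defaultdict

# Fields of interest; other smaps lines are ignored.
FIELDS = ('Size', 'Rss', 'Pss', 'Shared_Clean', 'Shared_Dirty',
          'Private_Clean', 'Private_Dirty', 'Referenced')
LINE = re.compile(r'^(\w+):\s+(\d+)\s+kB$')


def digest_smaps(text):
    """Sum each field (in kB) over all mappings found in smaps text."""
    totals = defaultdict(int)
    for line in text.splitlines():
        match = LINE.match(line.strip())
        if match and match.group(1) in FIELDS:
            totals[match.group(1)] += int(match.group(2))
    return dict(totals)


def read_smaps(pid='self'):
    """Digest /proc/<pid>/smaps (Linux only)."""
    with open('/proc/%s/smaps' % pid) as handle:
        return digest_smaps(handle.read())


SAMPLE = """\
3a5c100000-3a5c115000 r-xp 00000000 08:03 621533    /lib64/ld-2.3.4.so
Size:            84 kB
Rss:             76 kB
Pss:              1 kB
Shared_Clean:    76 kB
Shared_Dirty:     0 kB
Private_Clean:    0 kB
Private_Dirty:    0 kB
Referenced:      76 kB
00601000-00623000 rw-p 00000000 00:00 0    [heap]
Size:           136 kB
Rss:             12 kB
Pss:             12 kB
Shared_Clean:     0 kB
Shared_Dirty:     0 kB
Private_Clean:    0 kB
Private_Dirty:   12 kB
Referenced:      12 kB
"""

totals = digest_smaps(SAMPLE)
shared = totals['Shared_Clean'] + totals['Shared_Dirty']
private = totals['Private_Clean'] + totals['Private_Dirty']
print('shared %d kB, private %d kB' % (shared, private))
```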

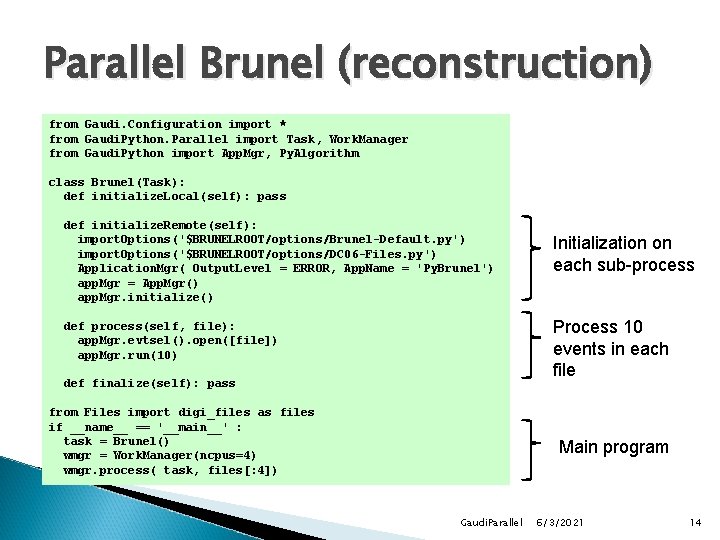

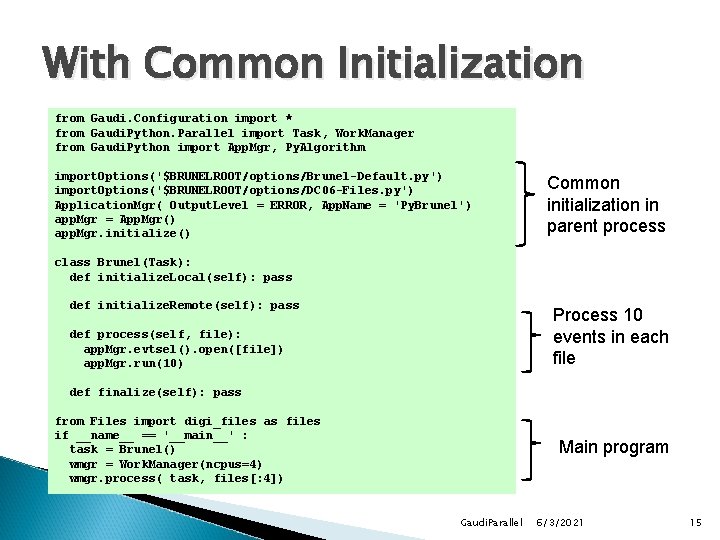

Parallel Brunel (reconstruction)

    from Gaudi.Configuration import *
    from GaudiPython.Parallel import Task, WorkManager
    from GaudiPython import AppMgr, PyAlgorithm

    class Brunel(Task):
        def initializeLocal(self):
            pass

        def initializeRemote(self):
            # Initialization on each sub-process
            global appMgr  # make the application manager visible to process()
            importOptions('$BRUNELROOT/options/Brunel-Default.py')
            importOptions('$BRUNELROOT/options/DC06-Files.py')
            ApplicationMgr(OutputLevel = ERROR, AppName = 'PyBrunel')
            appMgr = AppMgr()
            appMgr.initialize()

        def process(self, file):
            # Process 10 events in each file
            appMgr.evtsel().open([file])
            appMgr.run(10)

        def finalize(self):
            pass

    # Main program
    from Files import digi_files as files
    if __name__ == '__main__':
        task = Brunel()
        wmgr = WorkManager(ncpus=4)
        wmgr.process(task, files[:4])

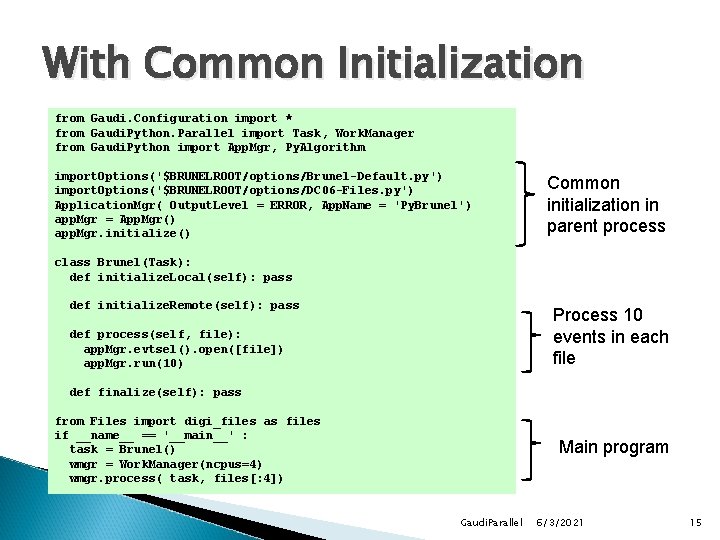

With Common Initialization

    from Gaudi.Configuration import *
    from GaudiPython.Parallel import Task, WorkManager
    from GaudiPython import AppMgr, PyAlgorithm

    # Common initialization in parent process
    importOptions('$BRUNELROOT/options/Brunel-Default.py')
    importOptions('$BRUNELROOT/options/DC06-Files.py')
    ApplicationMgr(OutputLevel = ERROR, AppName = 'PyBrunel')
    appMgr = AppMgr()
    appMgr.initialize()

    class Brunel(Task):
        def initializeLocal(self):
            pass

        def initializeRemote(self):
            pass

        def process(self, file):
            # Process 10 events in each file
            appMgr.evtsel().open([file])
            appMgr.run(10)

        def finalize(self):
            pass

    # Main program
    from Files import digi_files as files
    if __name__ == '__main__':
        task = Brunel()
        wmgr = WorkManager(ncpus=4)
        wmgr.process(task, files[:4])

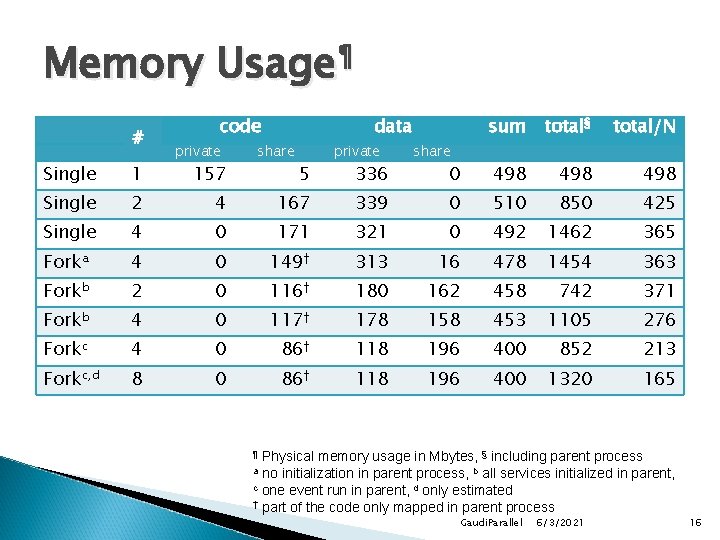

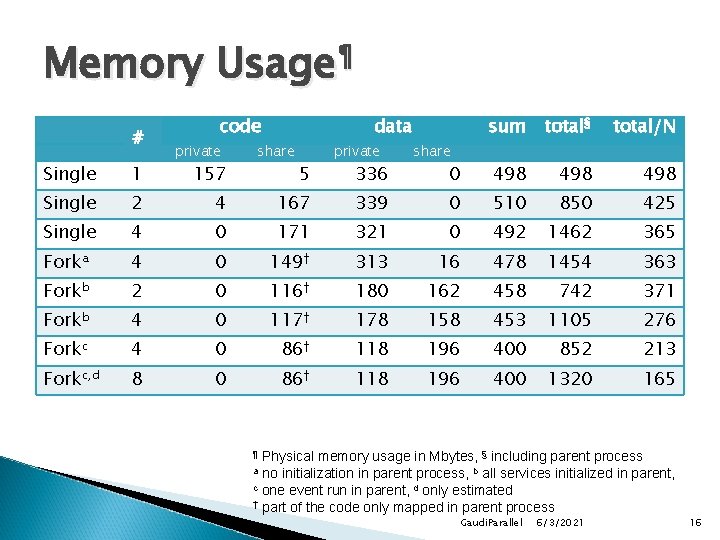

Memory Usage¶

| Mode | # | code private | code share | data private | data share | sum | total§ | total/N |
|---|---|---|---|---|---|---|---|---|
| Single | 1 | 157 | 5 | 336 | 0 | 498 | 498 | 498 |
| Single | 2 | 4 | 167 | 339 | 0 | 510 | 850 | 425 |
| Single | 4 | 0 | 171 | 321 | 0 | 492 | 1462 | 365 |
| Fork a | 4 | 0 | 149† | 313 | 16 | 478 | 1454 | 363 |
| Fork b | 2 | 0 | 116† | 180 | 162 | 458 | 742 | 371 |
| Fork b | 4 | 0 | 117† | 178 | 158 | 453 | 1105 | 276 |
| Fork c | 4 | 0 | 86† | 118 | 196 | 400 | 852 | 213 |
| Fork c, d | 8 | 0 | 86† | 118 | 196 | 400 | 1320 | 165 |

- ¶ Physical memory usage in Mbytes
- § Including parent process
- a: no initialization in parent process
- b: all services initialized in parent
- c: one event run in parent
- d: only estimated
- † Part of the code only mapped in parent process

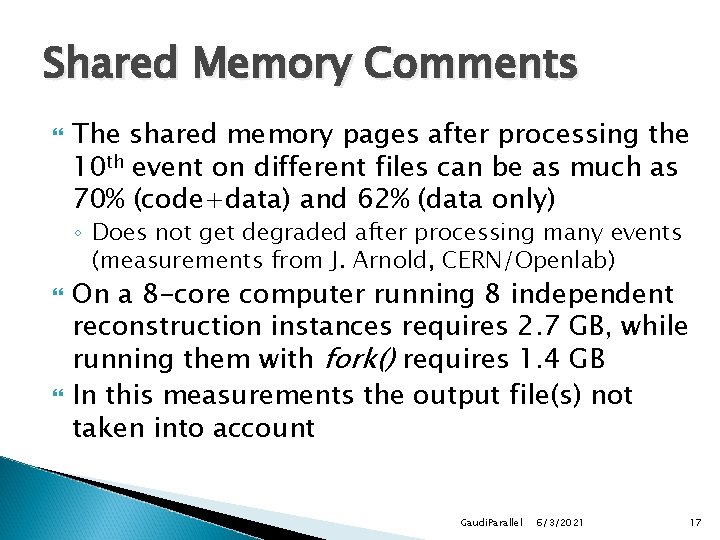

Shared Memory Comments

- The shared memory pages after processing the 10th event on different files can be as much as 70% (code+data) and 62% (data only)
  - Does not get degraded after processing many events (measurements from J. Arnold, CERN/Openlab)
- On an 8-core computer, running 8 independent reconstruction instances requires 2.7 GB, while running them with fork() requires 1.4 GB
- In these measurements the output file(s) are not taken into account

Model fails for "Event" output

- For a program producing large output data, the current model is not adequate
  - Data production programs: simulation, reconstruction, etc.
- Two options:
  - Each sub-process writes a different file and the parent process merges the files
    - Merging files is non-trivial when "references" exist
  - Event data is collected by the parent process and written into a single output stream
    - Some additional communication overhead

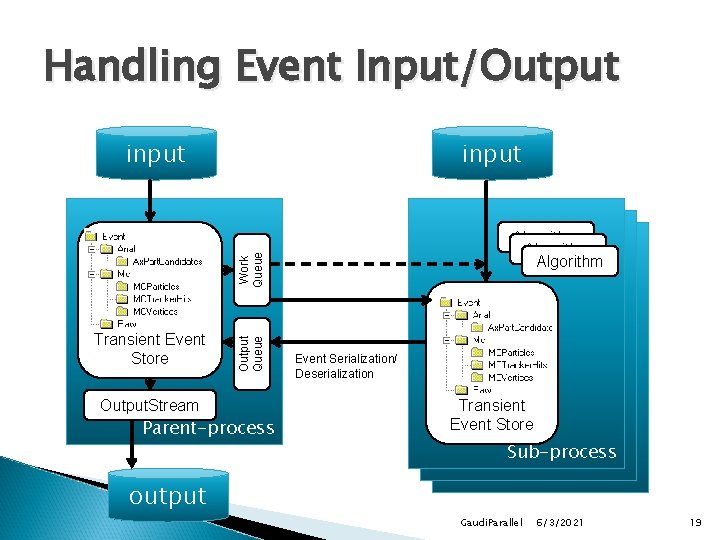

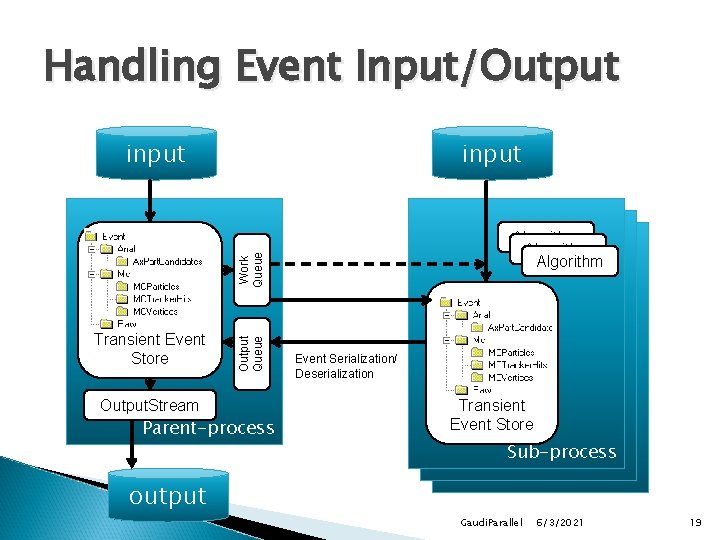

Handling Event Input/Output

[Diagram: the parent process (input, Transient Event Store, OutputStream, output) is connected through a Work Queue and an Output Queue, with event serialization/deserialization, to a sub-process (Transient Event Store, Algorithm).]

Handling Input/Output

- Developed event serialization/deserialization (TESSerializer)
  - The entire transient event store (or parts) can be moved from/to sub-processes
  - Implemented by pickling a TBufferFile object
  - Links and references must be maintained
- Modifying the 'processing' Python module to add input and output queues with an additional thread
- Extending 'Task' with a new method processLocal() to process in the parent process each event [fragment] coming from workers
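The queue arrangement can be illustrated with a small emulation. This is only a sketch under several assumptions: threads stand in for the sub-processes, plain dictionaries pickled with `pickle` stand in for the serialized transient event store, and the names `worker`, `run`, `hits` and `nTracks` are hypothetical. It does not use TESSerializer or the modified 'processing' module.

```python
import pickle
import queue
import threading


def worker(work_q, out_q):
    """Emulated sub-process: deserialize, run an 'algorithm', serialize back."""
    while True:
        blob = work_q.get()
        if blob is None:              # sentinel: no more events
            out_q.put(None)
            break
        event = pickle.loads(blob)
        event['nTracks'] = len(event['hits']) // 2   # placeholder algorithm
        out_q.put(pickle.dumps(event))


def run(events, nworkers=2):
    work_q, out_q = queue.Queue(), queue.Queue()
    threads = [threading.Thread(target=worker, args=(work_q, out_q))
               for _ in range(nworkers)]
    for thread in threads:
        thread.start()
    for event in events:              # parent serializes the input events
        work_q.put(pickle.dumps(event))
    for _ in threads:                 # one sentinel per worker
        work_q.put(None)
    written, finished = [], 0
    while finished < nworkers:        # parent collects into a single stream
        blob = out_q.get()
        if blob is None:
            finished += 1
        else:
            written.append(pickle.loads(blob))  # role played by processLocal()
    for thread in threads:
        thread.join()
    return sorted(written, key=lambda e: e['id'])


events = [{'id': i, 'hits': list(range(2 * i))} for i in range(5)]
result = run(events)
print([e['nTracks'] for e in result])
```

Events come back in arbitrary order, which is why the sketch sorts them by `id` before returning.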

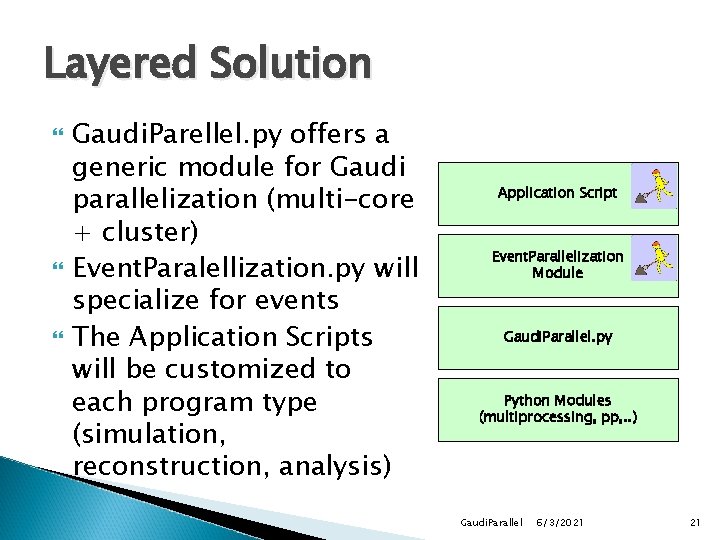

Layered Solution

- GaudiParallel.py offers a generic module for Gaudi parallelization (multi-core + cluster)
- EventParallelization.py will specialize for events
- The application scripts will be customized to each program type (simulation, reconstruction, analysis)

Layers, from top to bottom:

- Application Script
- EventParallelization Module
- GaudiParallel.py
- Python Modules (multiprocessing, pp, ...)

Summary

- Python offers very high-level control of applications based on GAUDI
- Very simple adaptation of user scripts may lead to large gains in latency (multi-core)
- Gains in memory usage are also important
- Several schemas and scheduling strategies can be tested without changes in the C++ code
  - E.g. local/remote initialization, task granularity (file, event, chunk), sync or async mode, queues, etc.