Usable Security: Part 2
Dr. Kasia Muldner

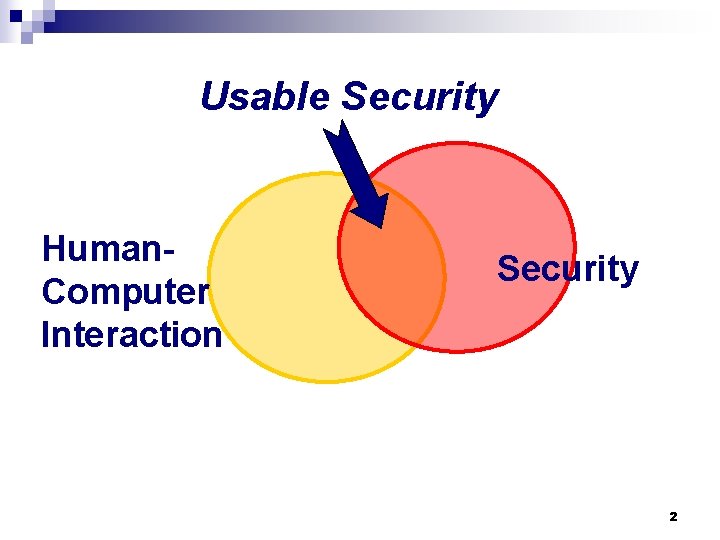

Usable Security: Human-Computer Interaction + Security

Who is the weakest link?

Some thoughts…
- "To err is human, to really foul things up you need a computer." - Paul Ehrlich
- "Don't make me think." - Steve Krug
- "Everything should be made as simple as possible, but not simpler." - Albert Einstein

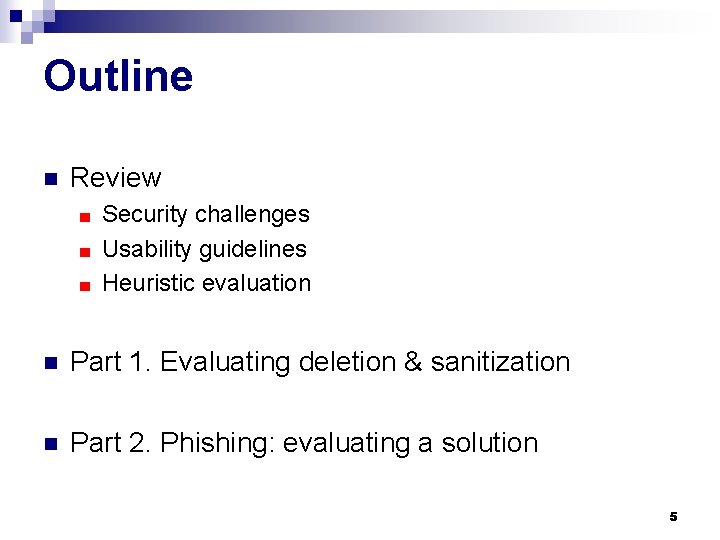

Outline
- Review
  - Security challenges
  - Usability guidelines
  - Heuristic evaluation
- Part 1. Evaluating deletion & sanitization
- Part 2. Phishing: evaluating a solution

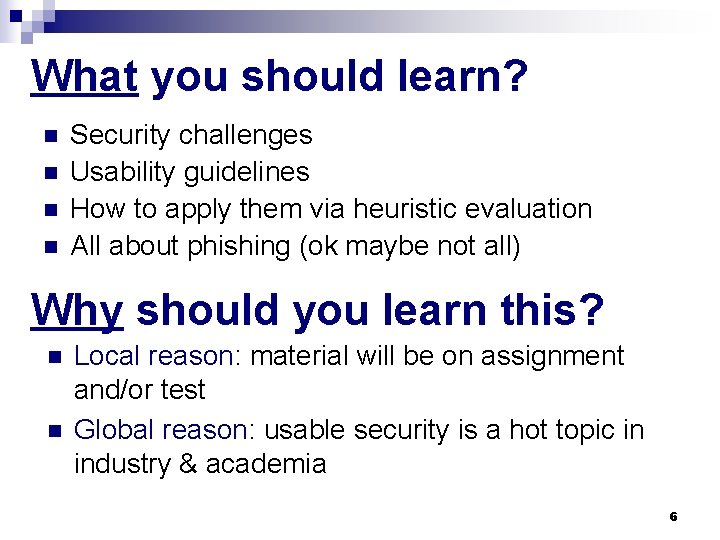

What should you learn?
- Security challenges
- Usability guidelines
- How to apply them via heuristic evaluation
- All about phishing (ok, maybe not all)

Why should you learn this?
- Local reason: material will be on assignment and/or test
- Global reason: usable security is a hot topic in industry & academia

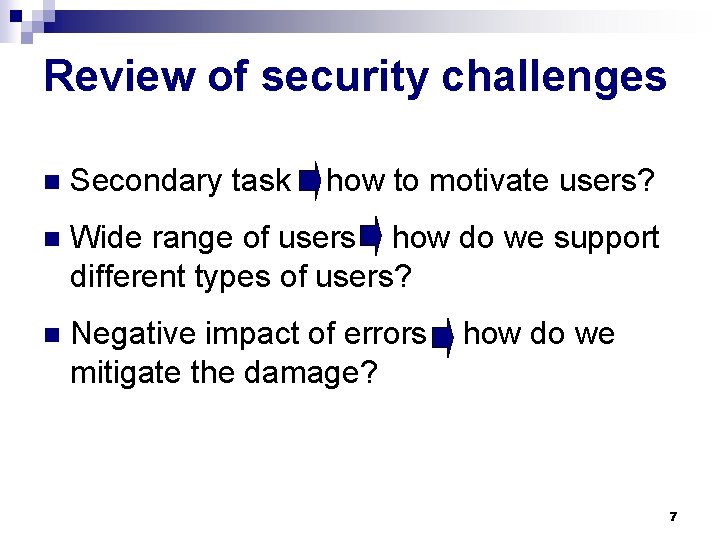

Review of security challenges
- Secondary task: how to motivate users?
- Wide range of users: how do we support different types of users?
- Negative impact of errors: how do we mitigate the damage?

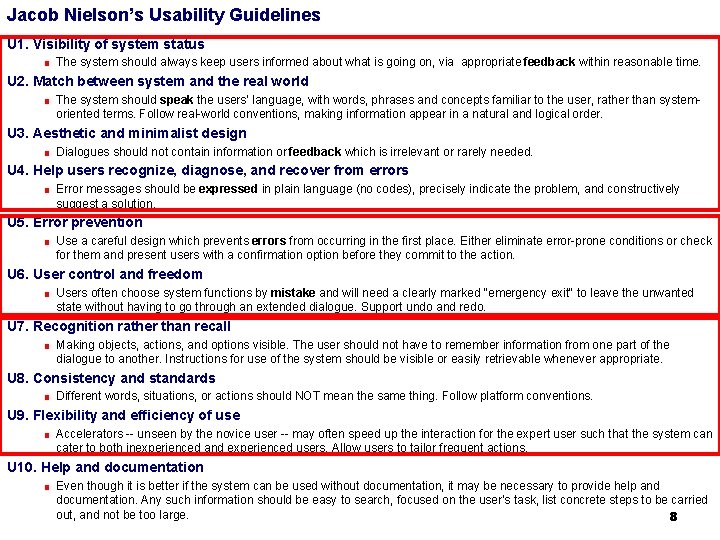

Jakob Nielsen’s Usability Guidelines
- U1. Visibility of system status
  - The system should always keep users informed about what is going on, via appropriate feedback within reasonable time.
- U2. Match between system and the real world
  - The system should speak the users' language, with words, phrases and concepts familiar to the user, rather than system-oriented terms. Follow real-world conventions, making information appear in a natural and logical order.
- U3. Aesthetic and minimalist design
  - Dialogues should not contain information or feedback which is irrelevant or rarely needed.
- U4. Help users recognize, diagnose, and recover from errors
  - Error messages should be expressed in plain language (no codes), precisely indicate the problem, and constructively suggest a solution.
- U5. Error prevention
  - Use a careful design which prevents errors from occurring in the first place. Either eliminate error-prone conditions or check for them and present users with a confirmation option before they commit to the action.
- U6. User control and freedom
  - Users often choose system functions by mistake and will need a clearly marked "emergency exit" to leave the unwanted state without having to go through an extended dialogue. Support undo and redo.
- U7. Recognition rather than recall
  - Make objects, actions, and options visible. The user should not have to remember information from one part of the dialogue to another. Instructions for use of the system should be visible or easily retrievable whenever appropriate.
- U8. Consistency and standards
  - Users should not have to wonder whether different words, situations, or actions mean the same thing. Follow platform conventions.
- U9. Flexibility and efficiency of use
  - Accelerators -- unseen by the novice user -- may often speed up the interaction for the expert user such that the system can cater to both inexperienced and experienced users. Allow users to tailor frequent actions.
- U10. Help and documentation
  - Even though it is better if the system can be used without documentation, it may be necessary to provide help and documentation. Any such information should be easy to search, focused on the user's task, list concrete steps to be carried out, and not be too large.

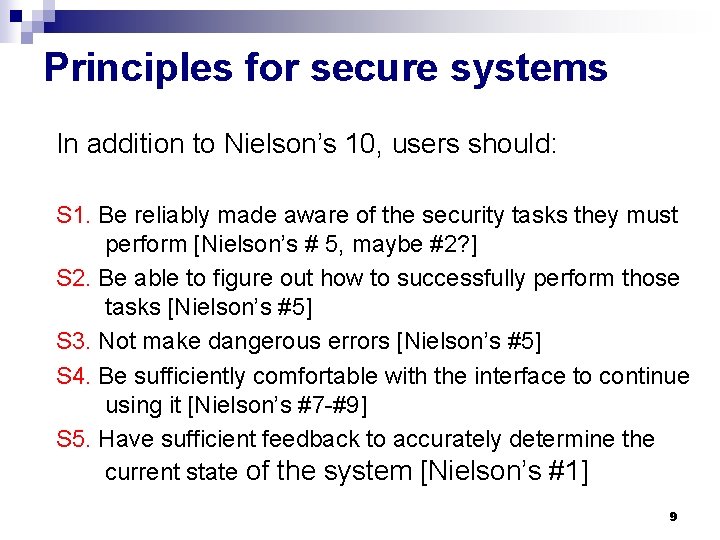

Principles for secure systems

In addition to Nielsen’s 10, users should:
- S1. Be reliably made aware of the security tasks they must perform [Nielsen’s #5, maybe #2?]
- S2. Be able to figure out how to successfully perform those tasks [Nielsen’s #5]
- S3. Not make dangerous errors [Nielsen’s #5]
- S4. Be sufficiently comfortable with the interface to continue using it [Nielsen’s #7-#9]
- S5. Have sufficient feedback to accurately determine the current state of the system [Nielsen’s #1]

Principles for secure systems (2)
- Path of Least Resistance
  - Match the most comfortable way to do tasks with the least granting of authority.
- Active Authorization
  - Grant authority to others in accordance with user actions indicating consent.
- Revocability
  - Offer the user ways to reduce others' authority to access the user's resources.
- Visibility
  - Maintain accurate awareness of others' authority as relevant to user decisions.
- Self-Awareness
  - Maintain accurate awareness of the user's own authority to access resources.
- Trusted Path
  - Protect the user's channels to agents that manipulate authority on the user's behalf.
- Expressiveness
  - Enable the user to express safe security policies in terms that fit the user's task.
- Relevant Boundaries
  - Draw distinctions among objects and actions along boundaries relevant to the task.
- Identifiability
  - Present objects and actions using distinguishable, truthful appearances.
- Foresight
  - Indicate clearly the consequences of decisions that the user is expected to make.

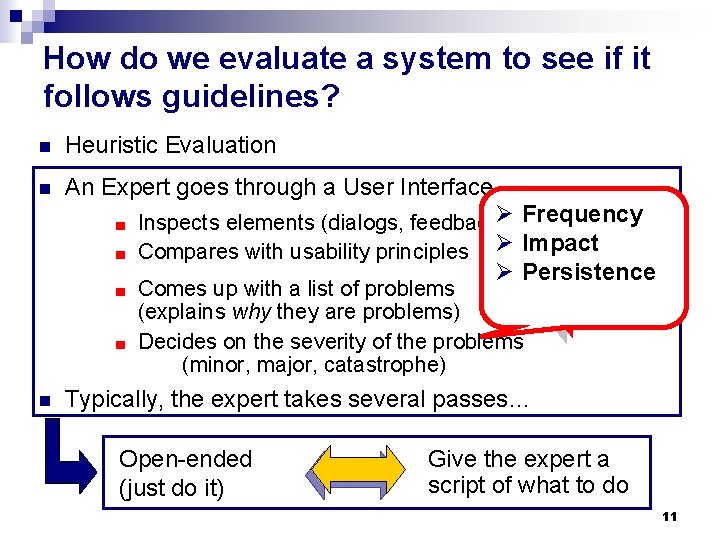

How do we evaluate a system to see if it follows guidelines?
- Heuristic evaluation
- An expert goes through a user interface
  - Inspects elements (dialogs, feedback, etc.)
  - Compares with usability principles
  - Comes up with a list of problems (explains why they are problems)
  - Decides on the severity of the problems (minor, major, catastrophe), considering:
    - Frequency
    - Impact
    - Persistence
- Typically, the expert takes several passes…
  - Open-ended (just do it)
  - Give the expert a script of what to do
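The output of a heuristic evaluation is essentially a list of findings, each tied to a guideline and given a severity. The sketch below is one possible way to record that list; the `Finding` fields, the sample entries, and the numeric ordering of the severity levels are illustrative assumptions, not part of the lecture.

```python
from dataclasses import dataclass
from enum import IntEnum


class Severity(IntEnum):
    # The three levels named on the slide; the numeric order is an assumption.
    MINOR = 1
    MAJOR = 2
    CATASTROPHE = 3


@dataclass
class Finding:
    element: str       # UI element that was inspected
    heuristic: str     # guideline it was compared against, e.g. "U6" or "S3"
    explanation: str   # why the expert considers it a problem
    severity: Severity


def report(findings):
    """Return the findings ordered from most to least severe."""
    return sorted(findings, key=lambda f: f.severity, reverse=True)


# Hypothetical findings for a desktop "delete" interaction.
sample_findings = [
    Finding("Trash icon", "U1", "Full/empty state is easy to miss", Severity.MINOR),
    Finding("Empty Trash dialog", "U6", "No undo once confirmed", Severity.MAJOR),
]

for finding in report(sample_findings):
    print(finding.severity.name, finding.heuristic, finding.element)
```

Keeping the explanation next to each finding mirrors the requirement that the expert says why something is a problem, not only that it is one.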

Heuristic evaluation
- Pros (√):
  - Quick & dirty (do not need to design an experiment, get users, etc.)
  - Good for finding obvious usability flaws
- Cons (x):
  - Experts are not the “typical” user!

Outline
- Review
  - Security challenges
  - Usability guidelines
  - Heuristic evaluation
- Part 1. Evaluating deletion & sanitization
- Part 2. Phishing: evaluating a solution

Next up: don’t lie to the user!
- Focus: deletion & sanitization

Slides for this portion of the lecture are based on the “Best Practices for Usable Security in Desktop Software” presentation by Simson L. Garfinkel

Deletion and sanitization
- Why study deletion?
  - Affects everybody: we all have private or security-critical information that needs to be deleted.
  - Lots of lore, not a lot of good academic research.

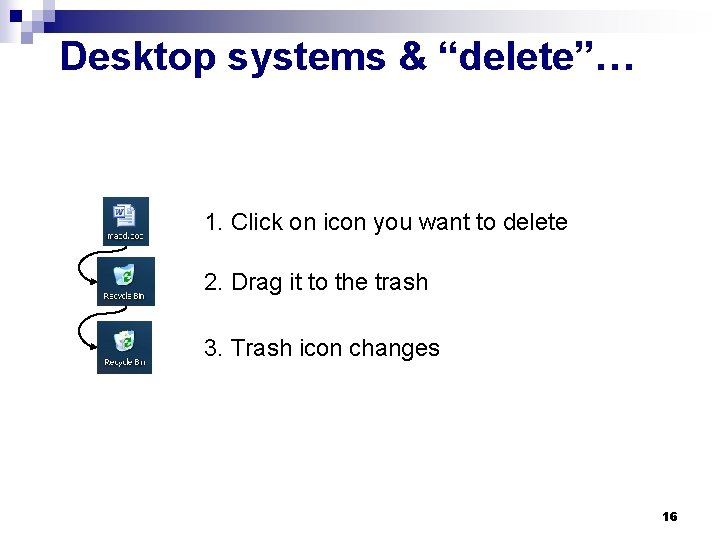

Desktop systems & “delete”…
1. Click on the icon you want to delete
2. Drag it to the trash
3. Trash icon changes

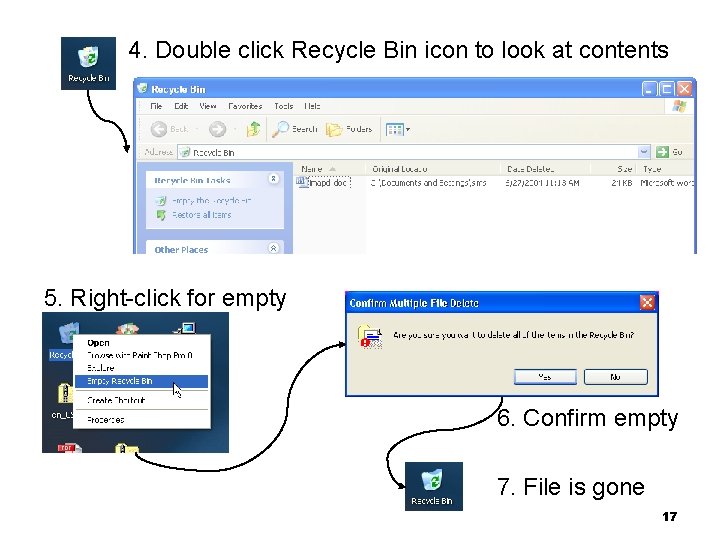

4. Double-click the Recycle Bin icon to look at contents
5. Right-click for empty
6. Confirm empty
7. File is gone

Group Activity: Heuristic Evaluation
- An expert (you) goes through a user interface
  - Inspect elements (dialogs, feedback, etc.)
  - Compare with usability principles
  - Evaluate pros and cons of delete functionality according to guidelines
  - [optional] Decide on the severity of the problems (minor, major, catastrophe)

Recovery after confirmation…

Can you get back a file after you empty the trash? Sure!

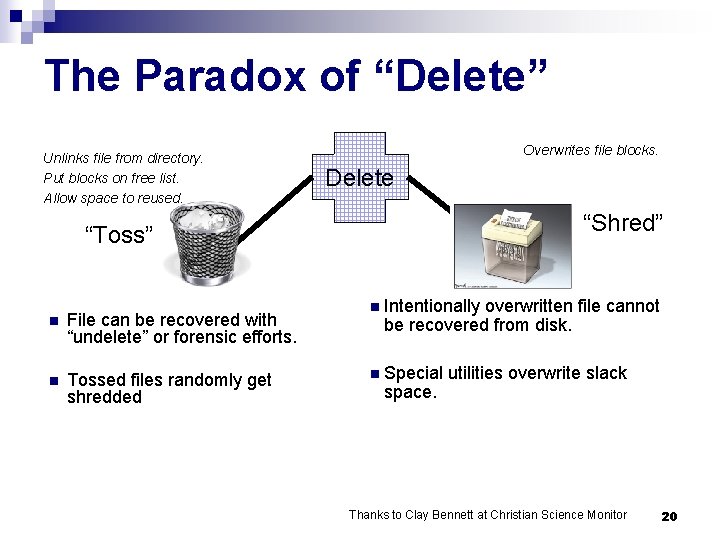

The Paradox of “Delete”

“Delete” can mean two different things:
- “Toss”: unlinks the file from its directory, puts its blocks on the free list, and allows the space to be reused.
  - File can be recovered with “undelete” or forensic efforts.
  - Tossed files randomly get shredded.
- “Shred”: overwrites the file blocks.
  - Intentionally overwritten file cannot be recovered from disk.
  - Special utilities overwrite slack space.

Thanks to Clay Bennett at Christian Science Monitor
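The difference between the two meanings can be sketched at the file-system API level. This is a minimal illustration, not a real sanitization tool: the function names `toss`, `overwrite`, and `shred` are chosen here for the example, the overwrite is a single pass of zero bytes, and it assumes the file system rewrites blocks in place (journaling or copy-on-write file systems and flash storage may keep old copies elsewhere). It also does nothing about slack space.

```python
import os


def toss(path):
    """'Toss': remove the directory entry only; the data blocks stay on disk."""
    os.remove(path)


def overwrite(path):
    """Replace every byte of the file with zeros, in place."""
    size = os.path.getsize(path)
    with open(path, "r+b") as f:
        f.seek(0)
        f.write(b"\x00" * size)   # fine for a sketch; chunk this for large files
        f.flush()
        os.fsync(f.fileno())      # ask the OS to push the zeros to the device


def shred(path):
    """'Shred': overwrite the contents first, then remove the directory entry."""
    overwrite(path)
    os.remove(path)
```

From the user's point of view both calls make the file disappear, which is exactly why an interface that offers only "delete" hides a security-relevant choice.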

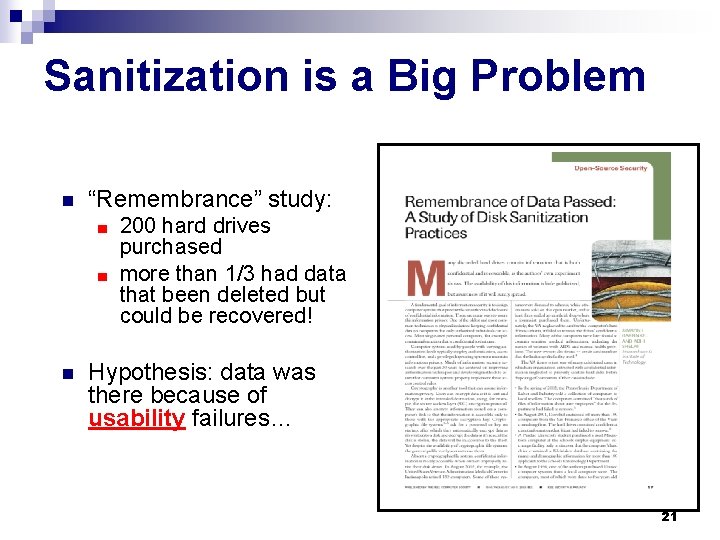

Sanitization is a Big Problem
- “Remembrance” study:
  - 200 hard drives purchased
  - more than 1/3 had data that had been deleted but could be recovered!
- Hypothesis: data was there because of usability failures…

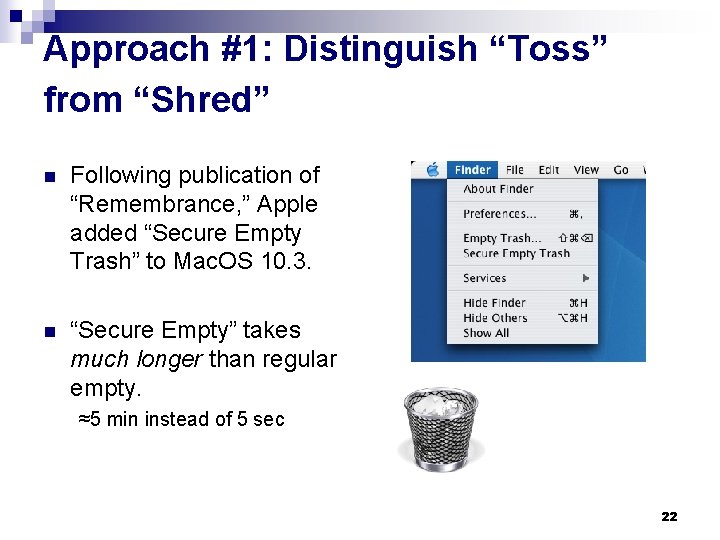

Approach #1: Distinguish “Toss” from “Shred”
- Following publication of “Remembrance,” Apple added “Secure Empty Trash” to Mac OS 10.3.
- “Secure Empty” takes much longer than regular empty.
  - ≈5 min instead of 5 sec

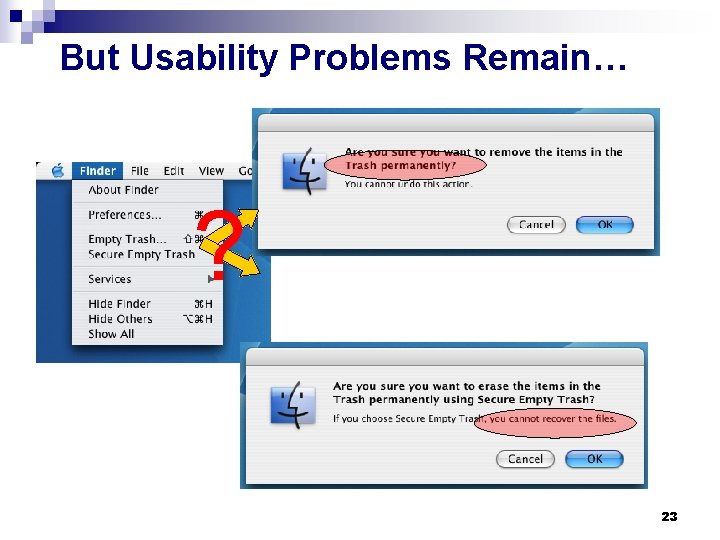

But Usability Problems Remain…?

Other Problems…
- What is a “Secure Empty Trash”???
- Users may not know what “Secure Empty Trash” means…

Redesign the interaction (simulation)
- Make “shred” an explicit operation at the interface.

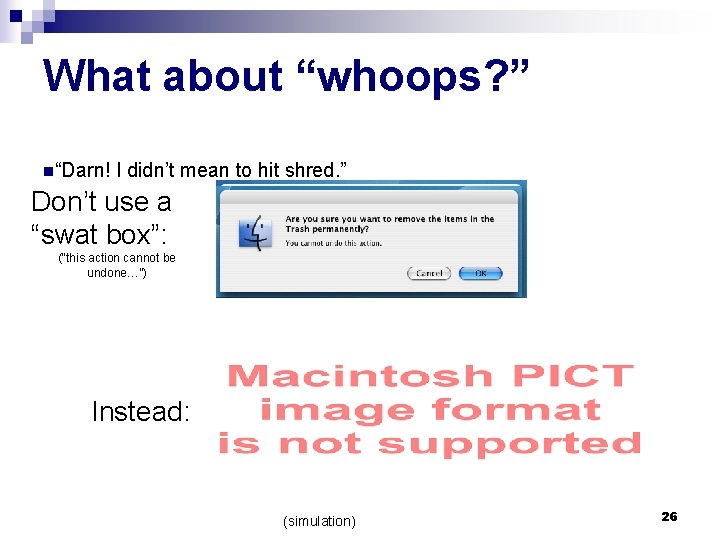

What about “whoops?”
- “Darn! I didn’t mean to hit shred.”
- Don’t use a “swat box” (“this action cannot be undone…”)
- Instead: (simulation)

Best Practices
- Distinguish “toss” from “shred” (toss ≠ shred).
- Don’t use a “swat box” to confirm an action that can’t be undone!
  - It’s easier to beg forgiveness than ask for permission
  - Let people change their minds.
  - “Polite Software Is Self-Confident” (Cooper, p. 167)
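One way to let people change their minds is to defer the irreversible step: a "shred" request is queued and stays undoable until it is actually carried out. The sketch below illustrates that idea only. It is not the design shown in the slide's simulation, and the `ShredQueue` class and its method names are hypothetical.

```python
class ShredQueue:
    """Holds shred requests so they can be undone before they are carried out."""

    def __init__(self):
        self._pending = []

    def request_shred(self, name):
        # No confirmation dialog: accept the request, but do nothing destructive yet.
        self._pending.append(name)

    def undo(self):
        """Cancel the most recent request; return its name, or None if there is none."""
        return self._pending.pop() if self._pending else None

    def commit(self, shred_fn):
        """Carry out all pending requests (e.g. after a grace period or at logout)."""
        done = []
        while self._pending:
            name = self._pending.pop(0)
            shred_fn(name)
            done.append(name)
        return done
```

When `commit` runs is the real design decision: a longer delay gives more room to recover from a slip, but the data stays on disk for that long.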

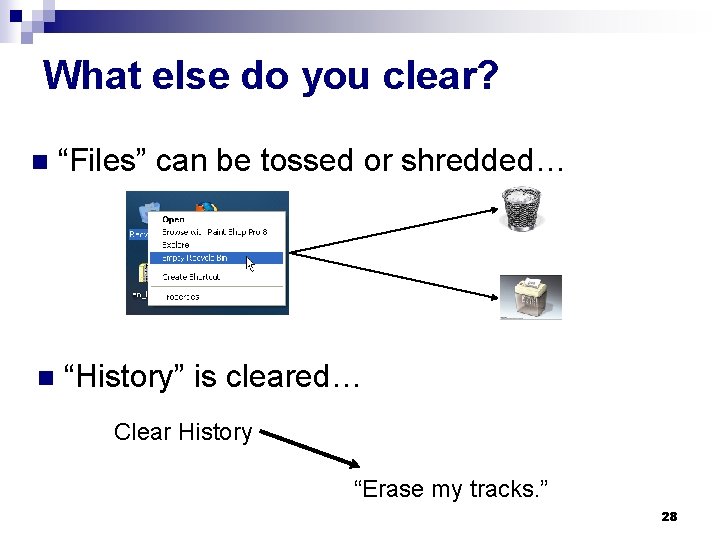

What else do you clear?
- “Files” can be tossed or shredded…
- “History” is cleared…
  - Clear History: “Erase my tracks.”

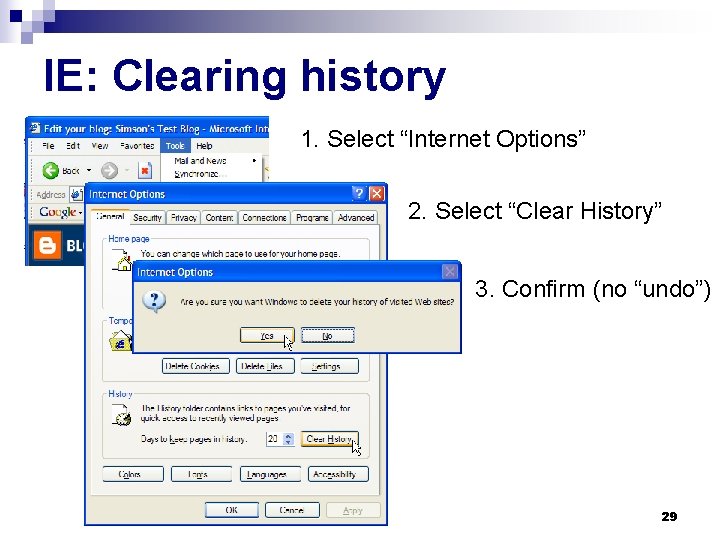

IE: Clearing history
1. Select “Internet Options”
2. Select “Clear History”
3. Confirm (no “undo”)

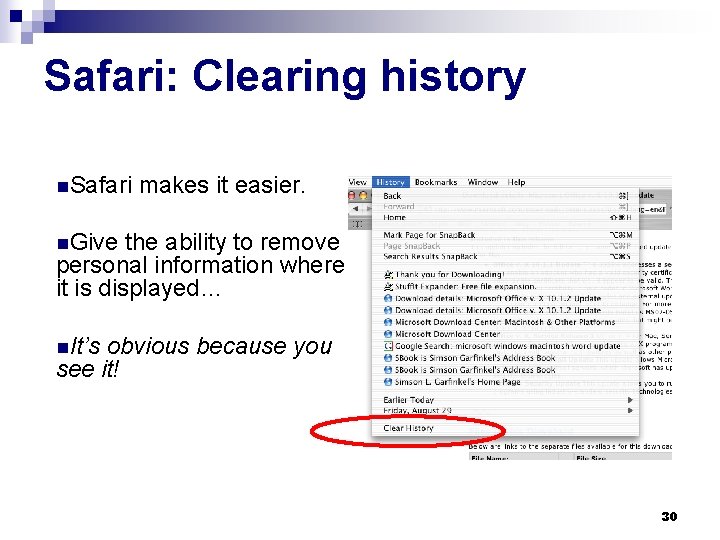

Safari: Clearing history
- Safari makes it easier.
- Give the ability to remove personal information where it is displayed…
- It’s obvious because you see it!

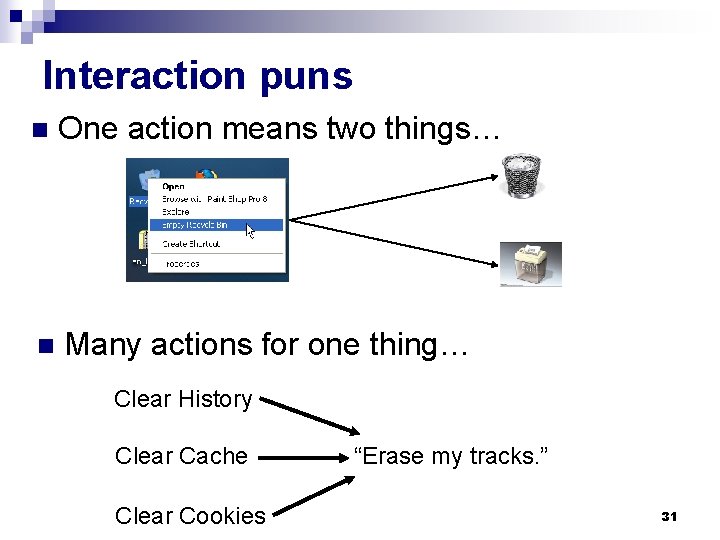

Interaction puns
- One action means two things…
- Many actions for one thing…
  - Clear History, Clear Cache, Clear Cookies: “Erase my tracks.”

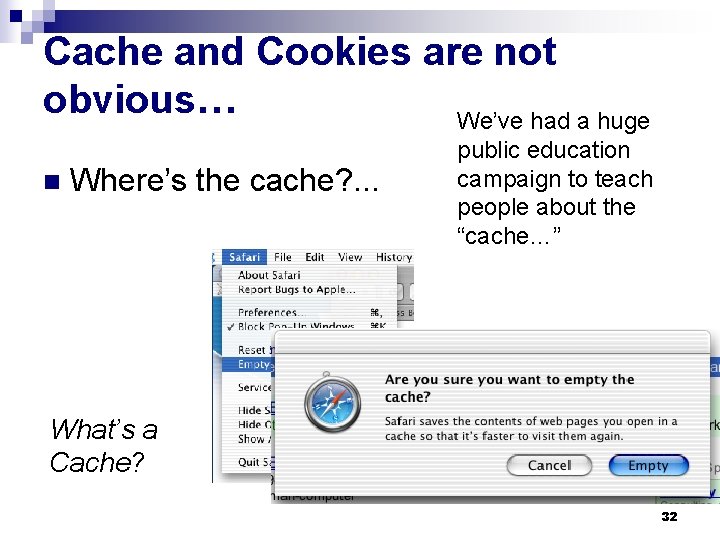

Cache and Cookies are not obvious…
- Where’s the cache?
- We’ve had a huge public education campaign to teach people about the “cache”…
- What’s a cache?

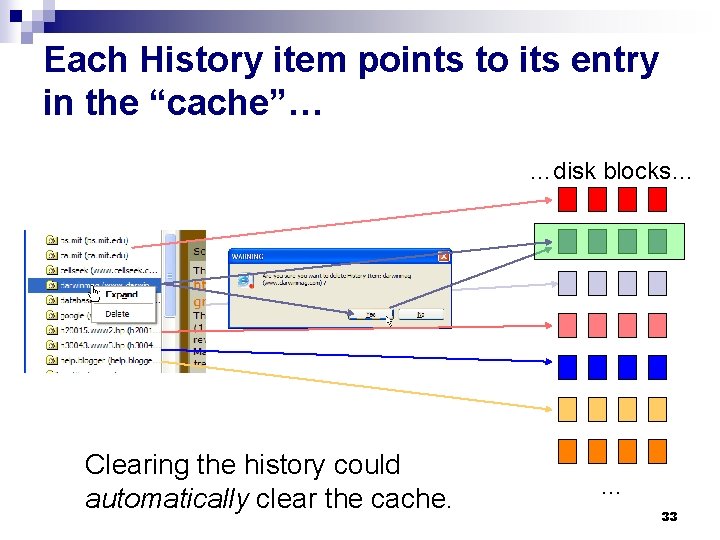

- Each History item points to its entry in the “cache”, and from there to disk blocks…
- Clearing the history could automatically clear the cache…
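The idea that one user action ("erase my tracks") should cover both structures can be sketched with a toy model in which every history item holds the key of its cache entry. The `Browser` class and its methods are hypothetical and do not describe how any real browser stores its data. Note also that removing entries this way is still a "toss", not a "shred": nothing is overwritten.

```python
import itertools


class Browser:
    """Toy model: each history item points to its entry in the cache."""

    def __init__(self):
        self._ids = itertools.count()
        self.history = {}   # url -> cache key
        self.cache = {}     # cache key -> cached content

    def visit(self, url, content):
        self.forget(url)                 # drop any stale entry for this URL
        key = next(self._ids)
        self.cache[key] = content
        self.history[url] = key

    def forget(self, url):
        """Remove one history item together with the cache entry it points to."""
        key = self.history.pop(url, None)
        if key is not None:
            self.cache.pop(key, None)

    def clear_history(self):
        """One action for one intention: clearing history also clears the cache."""
        for url in list(self.history):
            self.forget(url)
```

With this coupling the user never needs to know that a cache exists, which sidesteps the "What's a cache?" problem.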

But what about “Secure Empty Trash?”
- “Clear History,” “Clear Cache” and “Reset Browser” don’t sanitize!
- The privacy-protecting features give a false sense of security.

Best Practices

Follow the guidelines; help users to:
- S2. Be able to figure out how to successfully perform those tasks (if you mean delete, delete!)
- S3. Not make dangerous errors
- S5. Have sufficient feedback to accurately determine the current state of the system

Outline
- Review
  - Security challenges
  - Usability guidelines
  - Heuristic evaluation
- Part 1. Evaluating deletion & sanitization
- Part 2. Phishing: evaluating a solution

Next up: A class of security attacks that target end users rather than computer systems themselves.
- Some slides are based on existing ones; credit on the bottom

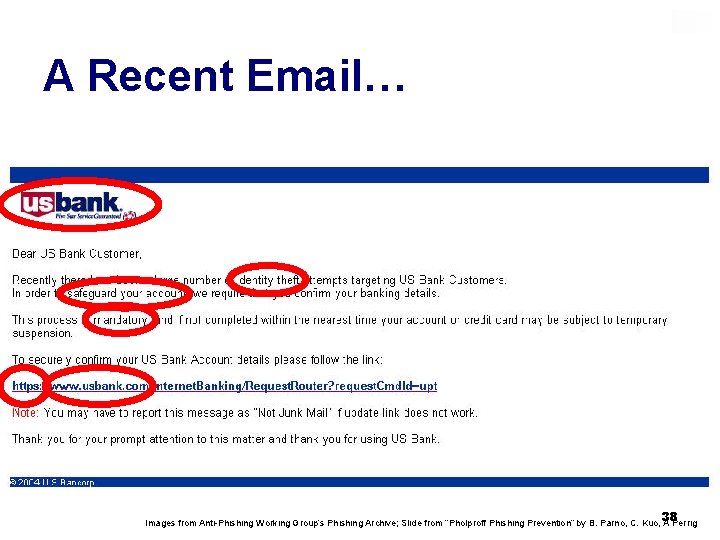

A Recent Email…

Images from Anti-Phishing Working Group’s Phishing Archive; slide from “Phoolproof Phishing Prevention” by B. Parno, C. Kuo, A. Perrig

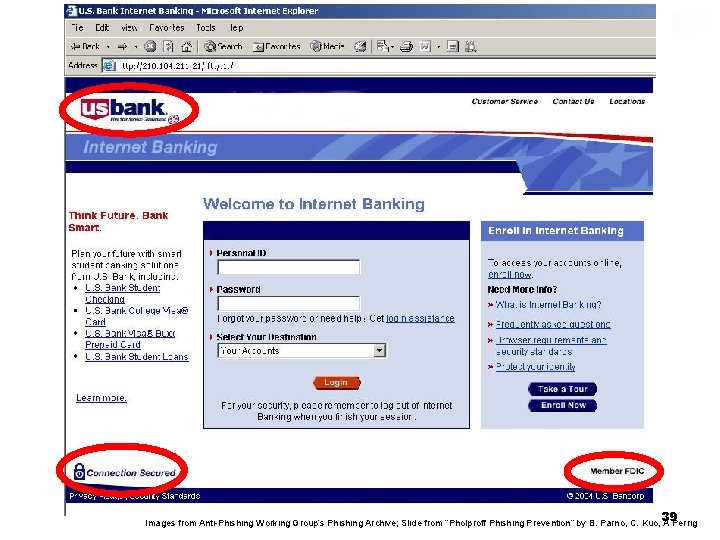

Images from Anti-Phishing Working Group’s Phishing Archive; slide from “Phoolproof Phishing Prevention” by B. Parno, C. Kuo, A. Perrig

The next page requests:
- Name
- Address
- Telephone
- Credit Card Number, Expiration Date, Security Code
- PIN
- Account Number
- Personal ID
- Password

Slide from “Phoolproof Phishing Prevention” by B. Parno, C. Kuo, A. Perrig

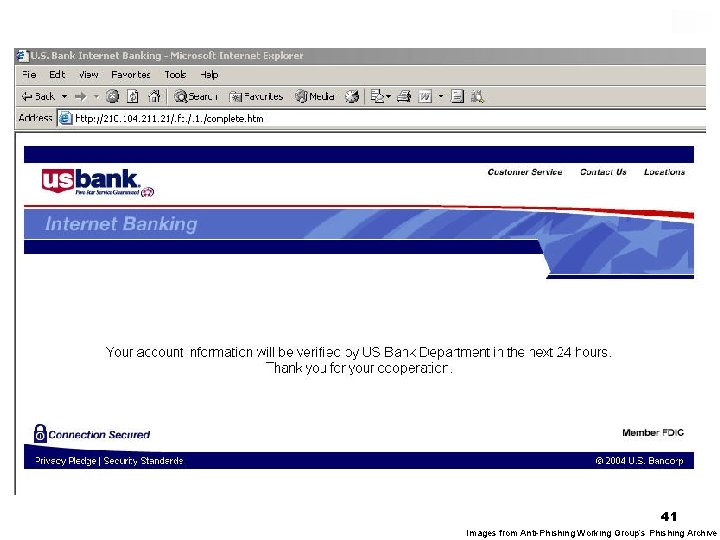

Images from Anti-Phishing Working Group’s Phishing Archive

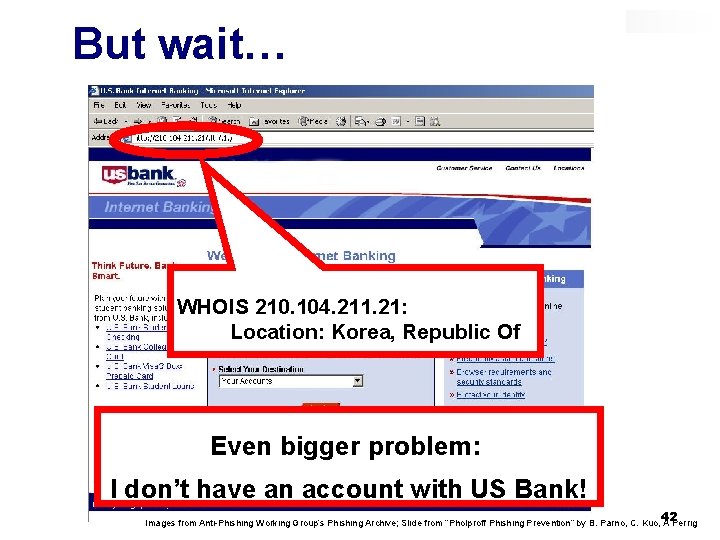

But wait…
- WHOIS 210.104.211.21: Location: Korea, Republic Of
- Even bigger problem: I don’t have an account with US Bank!

Images from Anti-Phishing Working Group’s Phishing Archive; slide from “Phoolproof Phishing Prevention” by B. Parno, C. Kuo, A. Perrig

Phishing

They demand authentication from us… but do we also want authentication from them?

What is phishing?

Phishing attacks use both social engineering and technical subterfuge to steal consumers' personal identity data and financial account credentials (http://www.antiphishing.org)

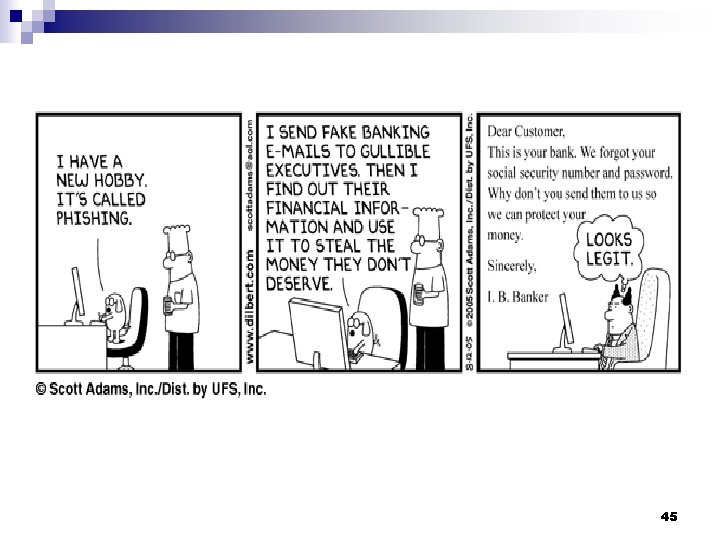


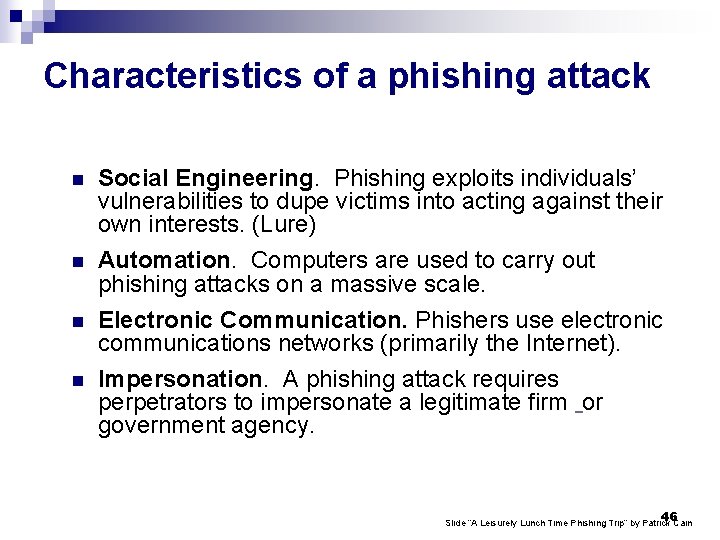

Characteristics of a phishing attack
- Social Engineering. Phishing exploits individuals’ vulnerabilities to dupe victims into acting against their own interests. (Lure)
- Automation. Computers are used to carry out phishing attacks on a massive scale.
- Electronic Communication. Phishers use electronic communications networks (primarily the Internet).
- Impersonation. A phishing attack requires perpetrators to impersonate a legitimate firm or government agency.

Slide from “A Leisurely Lunch Time Phishing Trip” by Patrick Cain

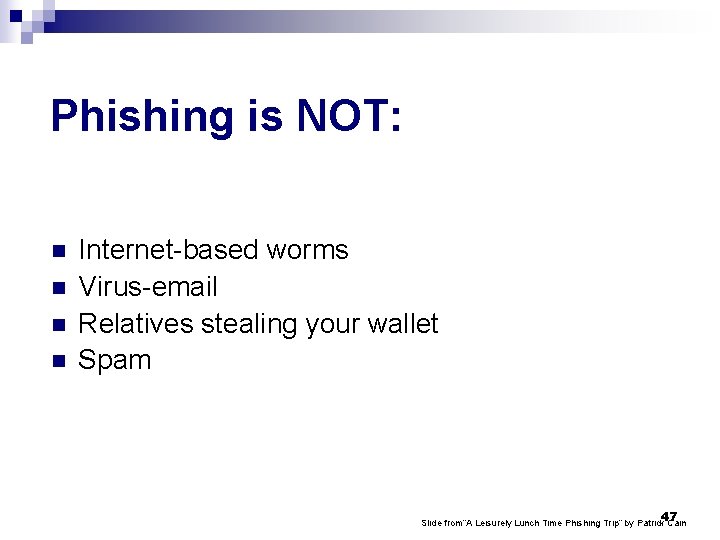

Phishing is NOT:
- Internet-based worms
- Virus e-mail
- Relatives stealing your wallet
- Spam

Slide from “A Leisurely Lunch Time Phishing Trip” by Patrick Cain

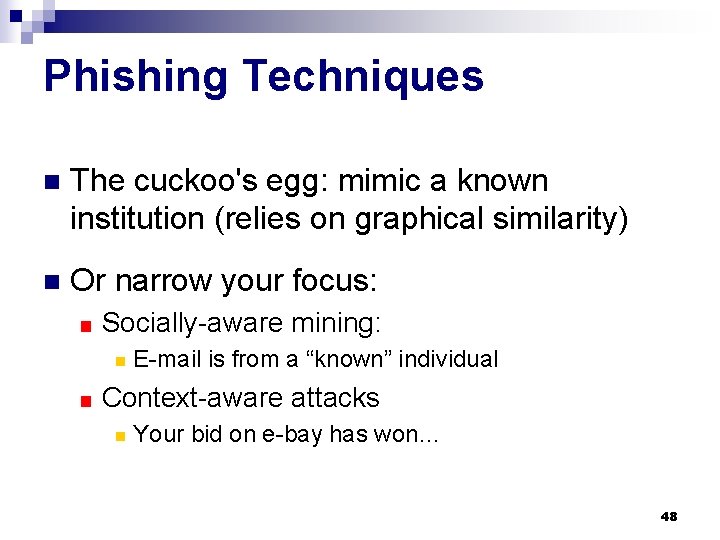

Phishing Techniques
- The cuckoo's egg: mimic a known institution (relies on graphical similarity)
- Or narrow your focus:
  - Socially-aware mining: e-mail is from a “known” individual
  - Context-aware attacks: “Your bid on eBay has won…”

Why is Phishing Successful?
- Some users trust too readily
- Users cannot parse URLs, domain names or PKI certificates
- Users are inundated with requests, warnings and popups

Slide based on one in “Phoolproof Phishing Prevention” by B. Parno, C. Kuo, A. Perrig
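To see why parsing URLs by eye is hard, it helps to look at what software extracts from one. The sketch below uses Python's standard `urllib.parse` and `ipaddress` modules; the `describe_url` helper and the sample URLs are hypothetical, with only the IP address taken from the WHOIS slide earlier in the deck. It flags two things users rarely notice: a raw IP address in place of a domain name, and a misleading "user@" prefix in front of the real host.

```python
import ipaddress
from urllib.parse import urlsplit


def describe_url(url):
    """Return the facts a user would need in order to judge where a URL really leads."""
    parts = urlsplit(url)
    host = parts.hostname or ""
    try:
        ipaddress.ip_address(host)
        is_ip = True
    except ValueError:
        is_ip = False
    return {
        "host": host,                                 # the machine actually contacted
        "is_ip_literal": is_ip,                       # no domain name at all
        "has_userinfo": parts.username is not None,   # text before "@" is not the host
    }


# Hypothetical examples; the paths and the "user" part are made up.
print(describe_url("http://210.104.211.21/login"))
print(describe_url("http://www.example.com@210.104.211.21/login"))
```

In the second URL the familiar-looking name appears first, yet the browser connects to the address after the "@". Checks like these are a heuristic aid, not a phishing detector.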

Impact of Phishing
- Hundreds of millions of $$$ cost to U.S. economy (e.g., $2.4 billion in bank-related fraud alone)
- Affects 1+ million Internet users in the U.S. alone
- What about privacy!
- The problem is growing… the number of phishing attacks doubled from 2004 to 2005 (from 16,000 to 32,000)

Slide based on one in “iTrustPage: Pretty Good Phishing Protection” by S. Saroiu, T. Ronda, and A. Wolman

What can we do?
- Good user interface design (usability guidelines)
- Help users make good decisions rather than presenting dilemmas

Slide based on one in “iTrustPage: Pretty Good Phishing Protection” by S. Saroiu, T. Ronda, and A. Wolman

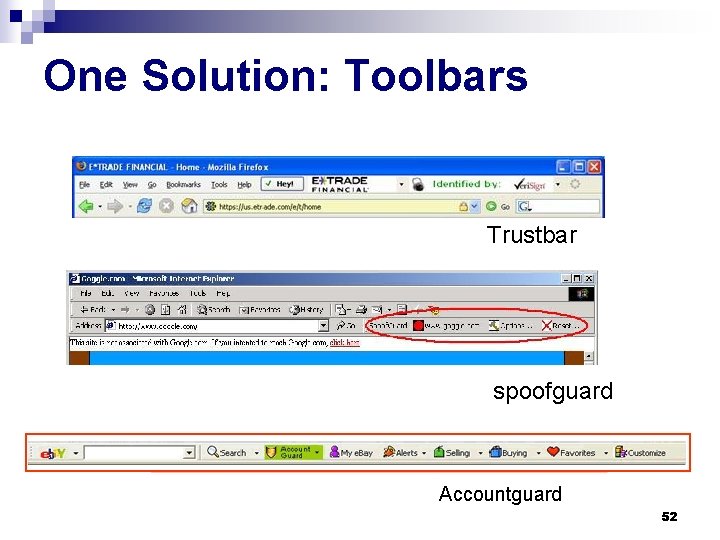

One Solution: Toolbars
- TrustBar
- SpoofGuard
- Account Guard

Let’s try it…

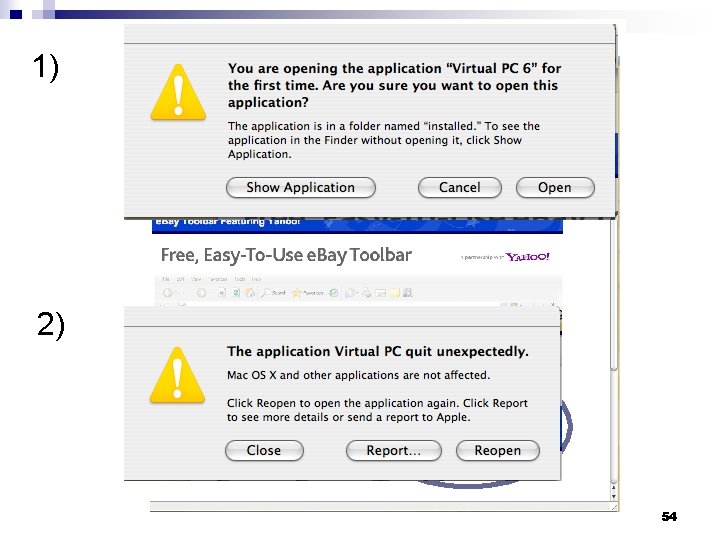

1) 2)

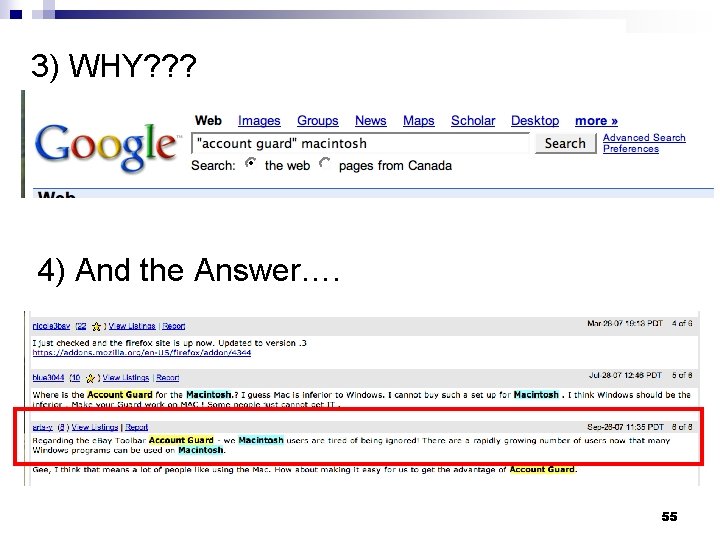

3) WHY???
4) And the answer…

So, back to Account Guard…

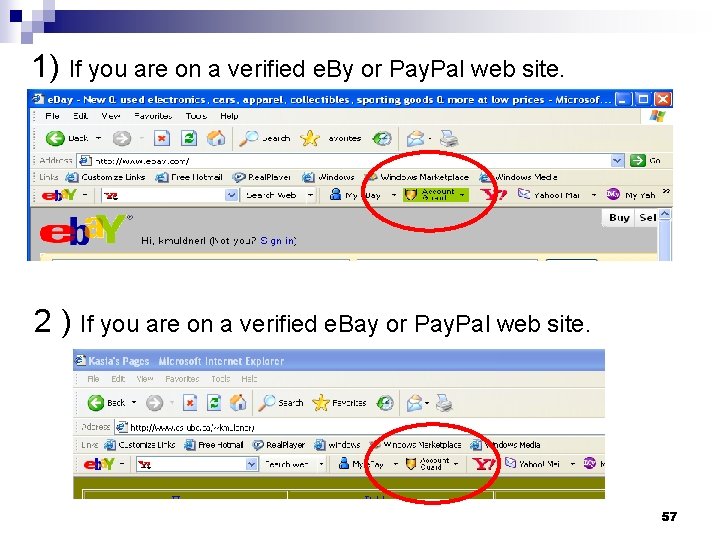

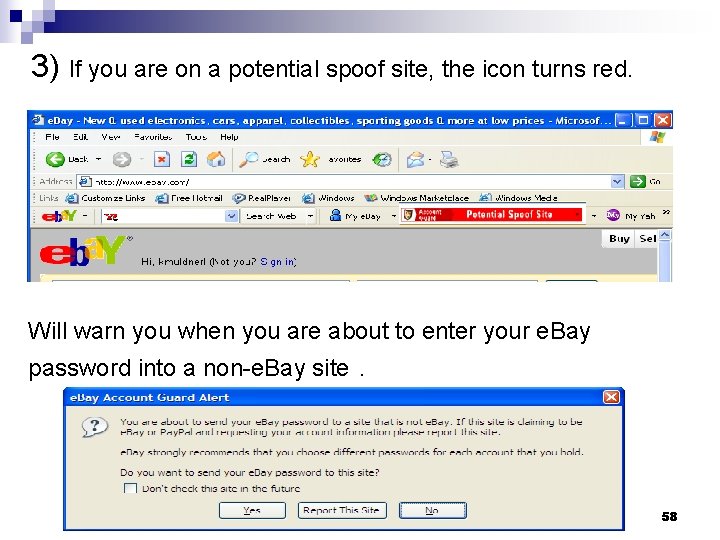

1) If you are on a verified eBay or PayPal web site.
2) If you are on a verified eBay or PayPal web site.

3) If you are on a potential spoof site, the icon turns red.

Will warn you when you are about to enter your eBay password into a non-eBay site.
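The behaviour described on these slides amounts to two checks: classify the current site, and warn before a password is typed into a site that is not verified. The sketch below only illustrates that logic and is not eBay's implementation. The suffix list, the blocklist entry, and the "green"/"gray" colours are assumptions; only the red icon for a potential spoof site comes from the slide.

```python
from urllib.parse import urlsplit

VERIFIED_SUFFIXES = ("ebay.com", "paypal.com")   # assumption for illustration
KNOWN_SPOOF_HOSTS = {"ebay-login.example"}       # hypothetical blocklist entry


def _host(url):
    return (urlsplit(url).hostname or "").lower()


def is_verified(url):
    """True only if the host is a verified domain or one of its subdomains."""
    host = _host(url)
    return any(host == s or host.endswith("." + s) for s in VERIFIED_SUFFIXES)


def icon_color(url):
    if is_verified(url):
        return "green"   # assumed colour for a verified site
    if _host(url) in KNOWN_SPOOF_HOSTS:
        return "red"     # potential spoof site, as on the slide
    return "gray"        # assumed colour for any other site


def should_warn_on_password(url):
    """Warn when the eBay password is about to be entered on a non-verified site."""
    return not is_verified(url)
```

Matching on the end of the host name, not on a substring, matters: `ebay.com.evil.example` contains "ebay.com" but is not an eBay site. A real tool would also need to consider the scheme and certificate, which this sketch ignores.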

Interlude 2:
- What is good about Account Guard?
- What is bad?

Wrap-up

Revisiting “What you should learn”…
- Security challenges
- Usability guidelines
- How to apply them via heuristic evaluation
- All about phishing (ok, maybe not all)

Where Do We Go From Here?
- How does usability relate to security?
  - Bad usability: bad security?
  - Good usability: bad security?
- Who is to blame: end-users or designers?

Slide based on a presentation by R. Biddle

Thank you for your attention!
