Usability and Security

Overview

• Limits of Usability for Security
• 10 basic principles (Ka-Ping Yee)
• Psychological foundations
• Key Management
• Bad Usability, or why Johnny can't encrypt
• User Intentions
• Authority Reduction
• Testing UIs

Limits of Usability

• Usability cannot answer security questions that offer no real alternatives
• Usability cannot reduce a system's ability to do damage
• Pushing security problems to users is NOT usability!

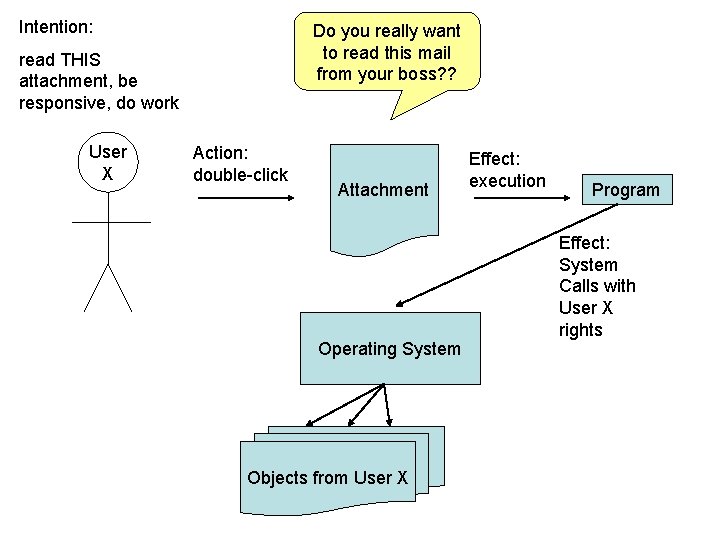

Do you really want to read this mail from your boss?

• Intention (User X): read THIS attachment, be responsive, do work
• Action: double-click on the attachment
• Operating system: objects from User X
• Effect: program execution
• Effect: system calls with User X's rights

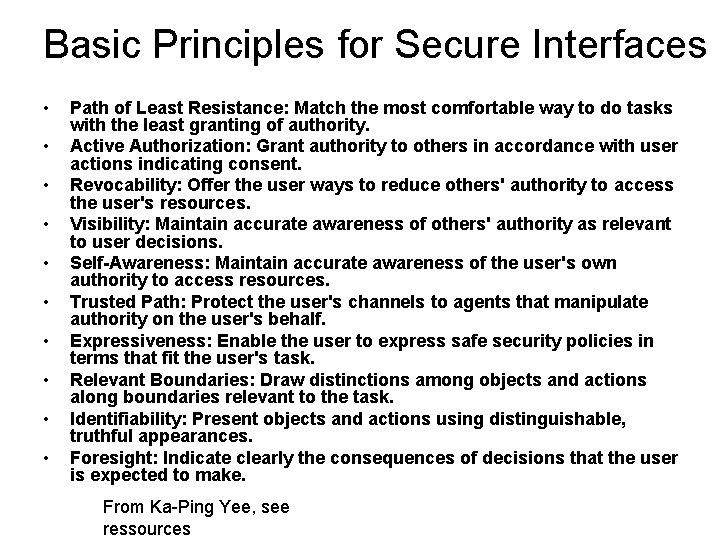

Basic Principles for Secure Interfaces

• Path of Least Resistance: Match the most comfortable way to do tasks with the least granting of authority.
• Active Authorization: Grant authority to others in accordance with user actions indicating consent.
• Revocability: Offer the user ways to reduce others' authority to access the user's resources.
• Visibility: Maintain accurate awareness of others' authority as relevant to user decisions.
• Self-Awareness: Maintain accurate awareness of the user's own authority to access resources.
• Trusted Path: Protect the user's channels to agents that manipulate authority on the user's behalf.
• Expressiveness: Enable the user to express safe security policies in terms that fit the user's task.
• Relevant Boundaries: Draw distinctions among objects and actions along boundaries relevant to the task.
• Identifiability: Present objects and actions using distinguishable, truthful appearances.
• Foresight: Indicate clearly the consequences of decisions that the user is expected to make.

From Ka-Ping Yee, see Resources

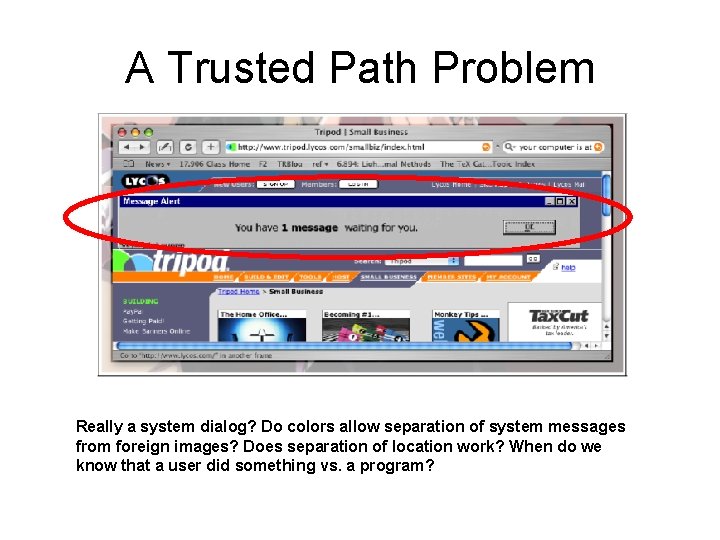

A Trusted Path Problem

• Really a system dialog?
• Do colors allow separation of system messages from foreign images?
• Does separation of location work?
• When do we know that a user did something vs. a program?

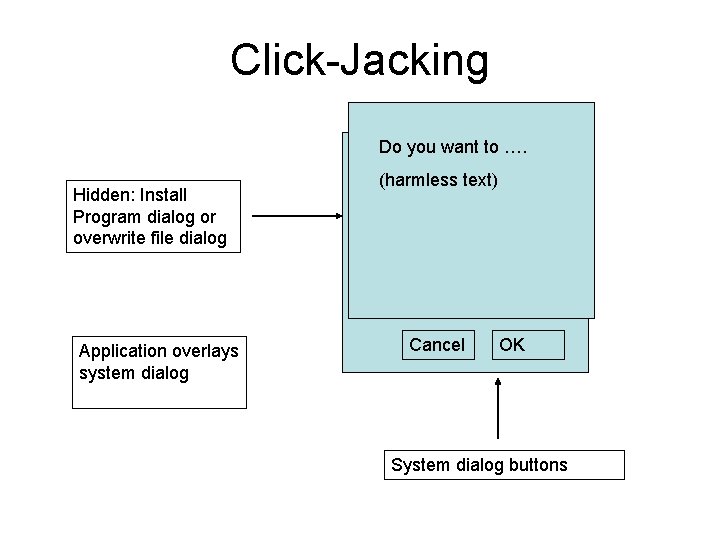

Click-Jacking

• Visible: the application overlays the system dialog with harmless text ("Do you want to …")
• Hidden: "Install Program" dialog or "overwrite file" dialog
• System dialog buttons: Cancel, OK

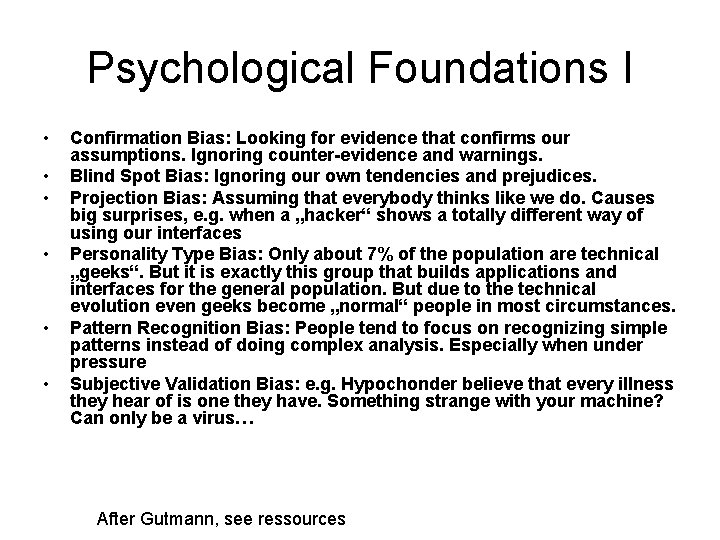

Psychological Foundations I

• Confirmation Bias: Looking for evidence that confirms our assumptions. Ignoring counter-evidence and warnings.
• Blind Spot Bias: Ignoring our own tendencies and prejudices.
• Projection Bias: Assuming that everybody thinks like we do. Causes big surprises, e.g. when a "hacker" shows a totally different way of using our interfaces.
• Personality Type Bias: Only about 7% of the population are technical "geeks", but it is exactly this group that builds applications and interfaces for the general population. Due to technical evolution, however, even geeks become "normal" people in most circumstances.
• Pattern Recognition Bias: People tend to focus on recognizing simple patterns instead of doing complex analysis, especially when under pressure.
• Subjective Validation Bias: e.g. hypochondriacs believe that every illness they hear of is one they have. Something strange with your machine? Can only be a virus…

After Gutmann, see Resources

Psychological Foundations II

• Rationalisation: We invent seemingly rational explanations even when we should recognize that something critical and abnormal is going on. Explaining things away.
• Emotional Bias: Emotions like depression or optimism dominate decisions. They can override logical considerations.
• Inattentional Blindness: Not seeing things which are slightly outside of our regular focus, like car drivers not seeing motorbike riders. This makes "Simon Says" a bad strategy.
• Zero-Risk Bias in security experts: By accepting only 100% secure solutions (which are hardly ever possible), they tend to ignore security mechanisms that would work in most cases and are much simpler to implement (certificates vs. local history).

After Gutmann, see Resources

Social Foundations

• "Forced" trust within groups (exchange of passwords)
• Social cohesion through sharing secrets
• Traditions, expectations etc. (politeness)

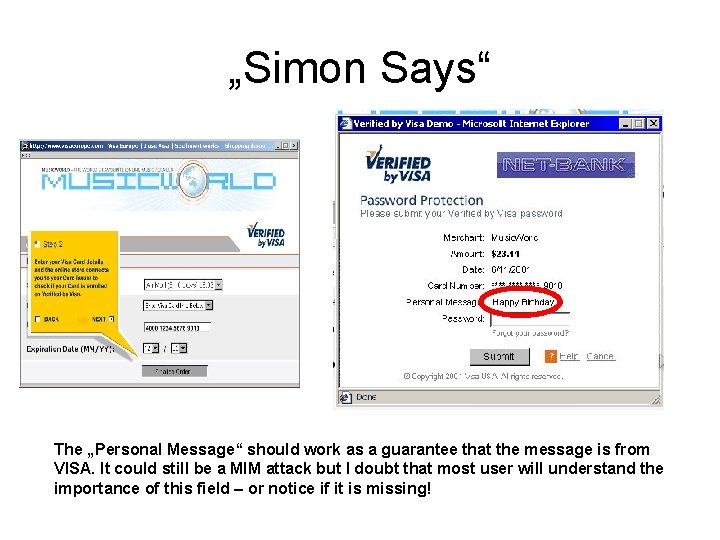

"Simon Says"

The "Personal Message" is supposed to guarantee that the message is from VISA. It could still be a man-in-the-middle (MITM) attack, but I doubt that most users will understand the importance of this field, or notice if it is missing!

Key Management and Identities

• Keeping Keys and Petnames constant
• Offer only Petnames
• Distribute Keys automatically
• Use Proxies for key handling

Remember: some security is better than none!

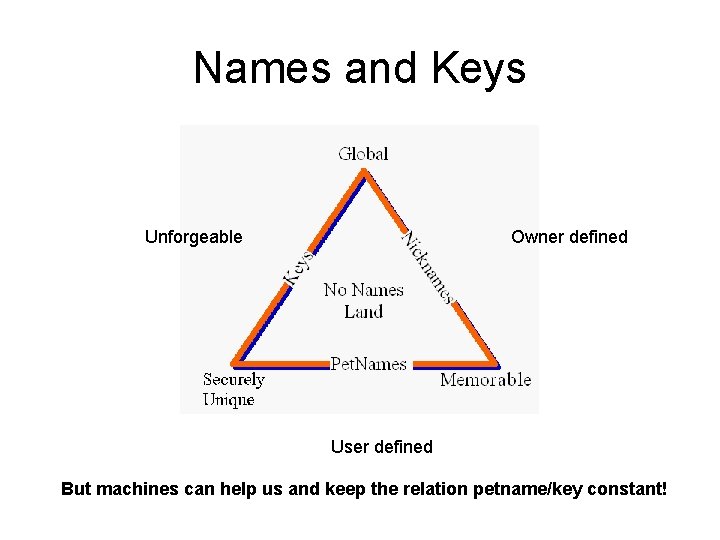

Names and Keys

Diagram labels: Unforgeable · Owner defined · User defined

But machines can help us and keep the relation petname/key constant!
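How a machine might keep the petname/key relation constant can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the class `PetnameStore`, its methods, and the use of a SHA-256 fingerprint as the key identifier are hypothetical and not taken from the slides. The distinction between an owner-chosen "nickname" and a user-chosen "petname" follows the terminology of Stiegler's petname introduction.

```python
import hashlib


class PetnameStore:
    """Hypothetical local table: key fingerprint -> user-chosen petname."""

    def __init__(self):
        self._by_fingerprint = {}

    @staticmethod
    def fingerprint(public_key: bytes) -> str:
        # Assumption: a SHA-256 digest stands in for the unforgeable key identity.
        return hashlib.sha256(public_key).hexdigest()

    def assign(self, public_key: bytes, petname: str) -> None:
        """Bind a petname to a key; a petname may name only one key."""
        fp = self.fingerprint(public_key)
        for other_fp, name in self._by_fingerprint.items():
            if name == petname and other_fp != fp:
                raise ValueError(f"petname {petname!r} already names another key")
        self._by_fingerprint[fp] = petname

    def display_name(self, public_key: bytes, nickname: str) -> str:
        """Return the text a UI would show for this key.

        The owner-defined nickname is never shown as if it were trusted;
        only the user's own petname is.
        """
        petname = self._by_fingerprint.get(self.fingerprint(public_key))
        if petname is not None:
            return petname
        return f"unknown (claims to be {nickname!r})"
```

With such a table, a site that merely claims the name "My Bank" but presents a different key is displayed as unknown, because the lookup goes through the key and not through the claimed name.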

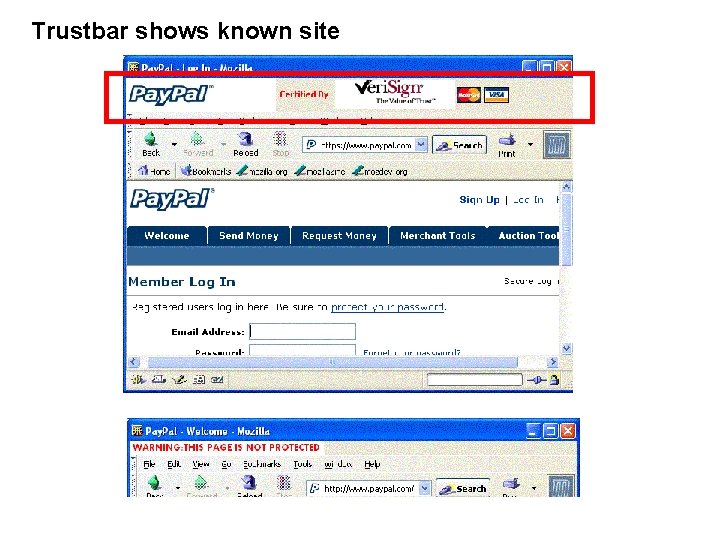

Trustbar shows known site

Why Johnny Can't Encrypt

• Most mail is sent unencrypted and unsigned
• Few client certificates are registered
• Many websites use unknown or self-signed certificates
• Users do not understand the concepts behind Certificate Authorities
• Certificate exchange before communication starts does not work
• PKI with Certificate Authorities does not work for a user: what is a name? Renewal? Revoking? Key Management?
• Most programs do not help with security ("Verified by Visa" example)
• Most programs lie to the user with respect to their security and privacy (lock icons, a delete that is no real delete, etc.)

From: Simson Garfinkel's thesis on usable security. The book contains lots of empirical tests and data on the above issues.

Usability Guidelines that can help Johnny: good security now

• Create Keys When Needed: Ensure that cryptographic protocols that can use keys will have access to keys, even if those keys were not signed by the private key of a well-known Certificate Authority.
• Key Continuity Management: Use digital certificates that are self-signed or signed by unknown CAs for some purpose that furthers secure usability, rather than ignoring them entirely. This, in turn, makes possible the use of automatically created self-signed certificates created by individuals or organizations that are unable or unwilling to obtain certificates from well-known Certification Authorities.
• Track Received Keys: Make it possible for the user to know if this is the first time that a key has been received, if the key has been used just a few times, or if it is used frequently.
• Track Recipients: Ensure that cryptographically protected email can be appropriately processed by the intended recipient.
• Migrate and Backup Keys: Prevent users from losing their valuable secret keys.

From: [S. GARFINKEL, Thesis]
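The "Key Continuity Management" and "Track Received Keys" guidelines can be illustrated with a small Python sketch. It is not Garfinkel's implementation; the names `KeyContinuityTracker`, `observe`, and `accept_change`, and the three status strings, are hypothetical. Fingerprints are treated as opaque strings, so no real cryptography is involved.

```python
from dataclasses import dataclass


@dataclass
class KeyRecord:
    fingerprint: str
    times_seen: int = 0


class KeyContinuityTracker:
    """Hypothetical local history of which key each sender has used."""

    def __init__(self):
        self._records = {}

    def observe(self, sender: str, fingerprint: str) -> str:
        """Record a received key and classify it for the user interface."""
        record = self._records.get(sender)
        if record is None:
            # First contact: remember the key, even if it is self-signed.
            self._records[sender] = KeyRecord(fingerprint, 1)
            return "first-seen"
        if record.fingerprint != fingerprint:
            # Never overwrite silently: a changed key is the case to warn about.
            return "changed"
        record.times_seen += 1
        return "known"

    def times_seen(self, sender: str) -> int:
        record = self._records.get(sender)
        return record.times_seen if record else 0

    def accept_change(self, sender: str, fingerprint: str) -> None:
        """Called only after the user explicitly confirmed the new key."""
        self._records[sender] = KeyRecord(fingerprint, 1)
```

A mail client could show "first-seen" keys neutrally, "known" keys together with `times_seen`, and interrupt the user only for "changed", which keeps warnings rare enough to be noticed.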

Security Mediation: Local vs. Foreign

Local:
• Petnames allow the use of local (well known) names
• Local object names are well known too
• Communication history is known locally as well
• The history of a key
• Spatial order of local objects
• Private actions to cause some action
• Least Privilege principle used when commands are executed
• Getting to know somebody via friends

Foreign:
• Certificates which relate keys with non-unique names
• Certificate Authorities whose root certificates establish huge sets of trust relations
• External judgements on sites via color coding or trust statements
• Signed Code to download software
• Programs which define the user interface and the names used (window titles etc.)

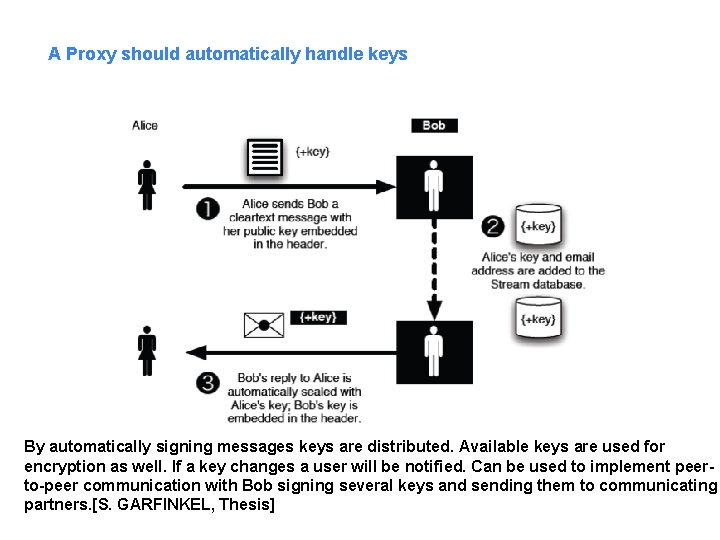

A Proxy should automatically handle keys

Keys are distributed by automatically signing messages. Available keys are used for encryption as well. If a key changes, the user is notified. This can be used to implement peer-to-peer communication, with Bob signing several keys and sending them to his communication partners. [S. GARFINKEL, Thesis]
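The outgoing side of such a proxy might decide per message as in the following Python sketch. The function `plan_outgoing` and the shape of its result are hypothetical, and the sketch only makes the decision; actual signing and encryption are left out.

```python
def plan_outgoing(recipients, known_keys):
    """Decide what a hypothetical mail proxy does with an outgoing message.

    recipients: list of addresses
    known_keys: dict mapping address -> key fingerprint learned earlier
    """
    missing = [r for r in recipients if r not in known_keys]
    return {
        # Always sign: the signature carries our key to the recipient.
        "sign": True,
        # Encrypt only if a key is available for every recipient.
        "encrypt": bool(recipients) and not missing,
        "missing_keys": missing,
    }
```

The user is never asked about keys here: the message goes out signed in every case and is encrypted whenever that is possible, in the spirit of "some security is better than none".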

Capturing User Intentions

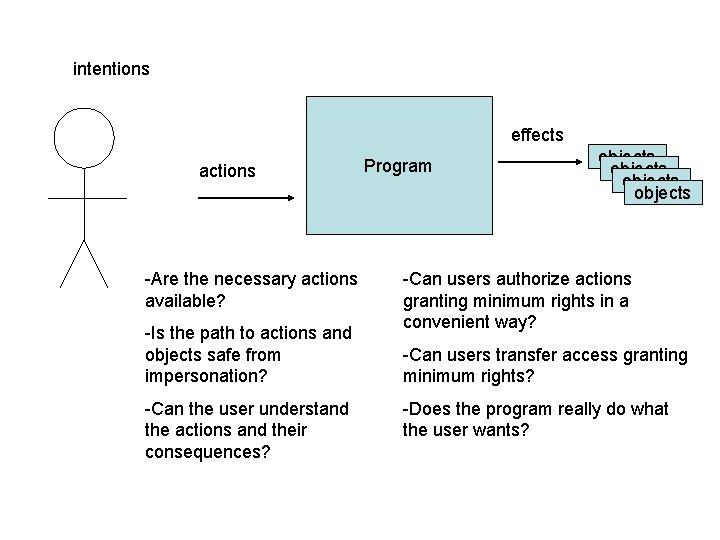

Diagram: intentions, actions, effects (between the user and the program objects)

- Are the necessary actions available?
- Is the path to actions and objects safe from impersonation?
- Can the user understand the actions and their consequences?
- Can users authorize actions granting minimum rights in a convenient way?
- Can users transfer access granting minimum rights?
- Does the program really do what the user wants?

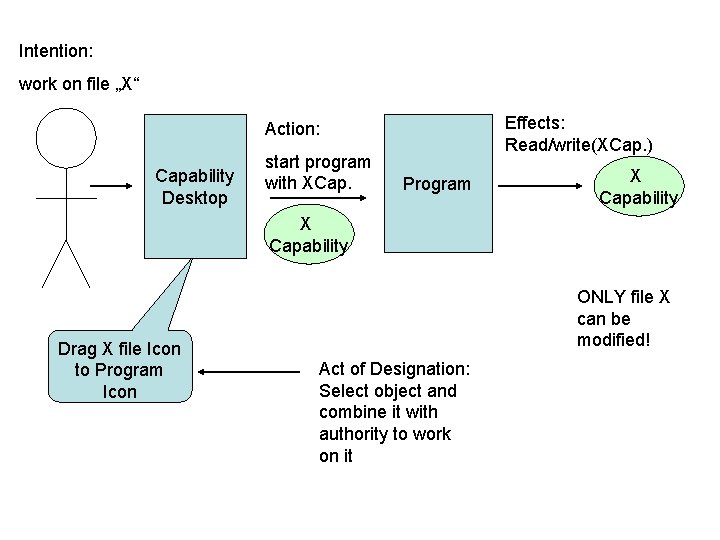

Capability Desktop

• Intention: work on file "X"
• Action: drag the file X icon onto the program icon
• Capability desktop: starts the program with XCap (the capability for X)
• Effects: read/write (XCap)
• ONLY file X can be modified!

Act of Designation: select an object and combine it with the authority to work on it
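The act of designation can be approximated in Python by handing a program an already opened file object instead of a path. The names `powerbox_open` and `editor_program` are hypothetical. Note the limits of the sketch: Python itself does not confine `editor_program` (it could still call `open()` on other paths, and the handle exposes its file name), so this shows the calling convention only. A real capability system would start the program without any ambient file system authority.

```python
def powerbox_open(designated_path):
    """Trusted desktop side: turn the user's designation into one open handle."""
    return open(designated_path, "r+", encoding="utf-8")


def editor_program(file_cap):
    """Program side: receives a handle for file X only, never a directory."""
    text = file_cap.read()
    file_cap.seek(0)
    file_cap.write(text.upper())  # the program's "work" on file X
    file_cap.truncate()
```

Usage: `with powerbox_open(path_the_user_dragged) as cap: editor_program(cap)`. The user's drag gesture selects the object and grants the authority in one step, so no separate permission dialog is needed.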

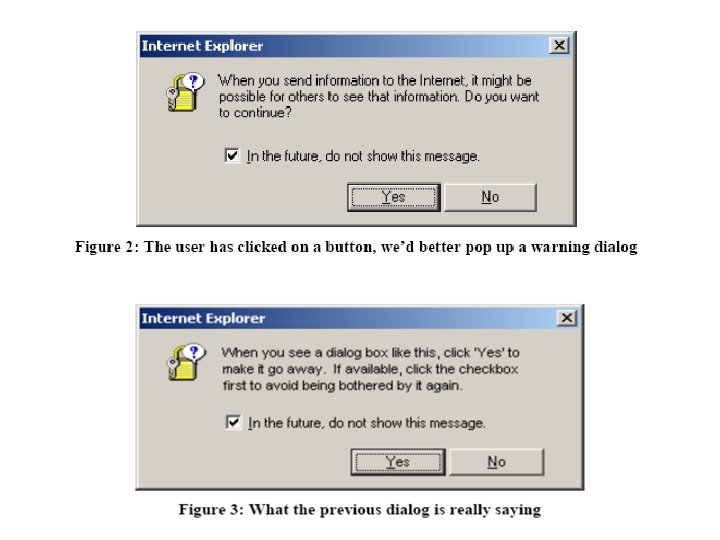

Bad Usability gives bad security

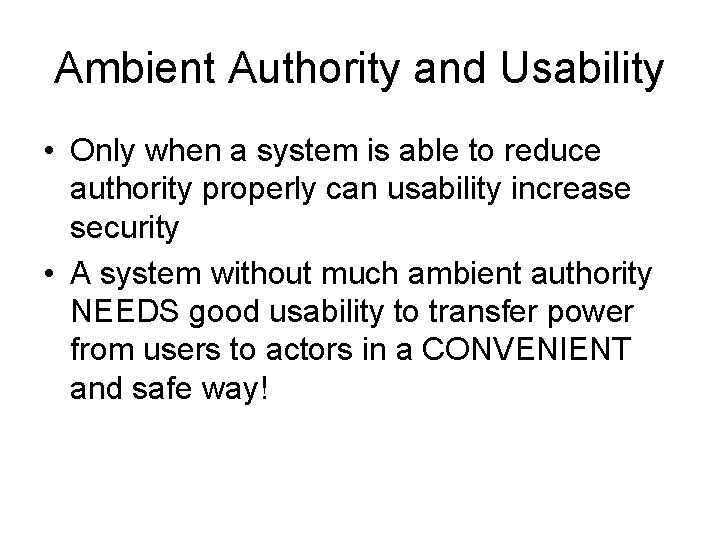

Ambient Authority and Usability

• Only when a system is able to reduce authority properly can usability increase security
• A system without much ambient authority NEEDS good usability to transfer power from users to actors in a CONVENIENT and safe way!

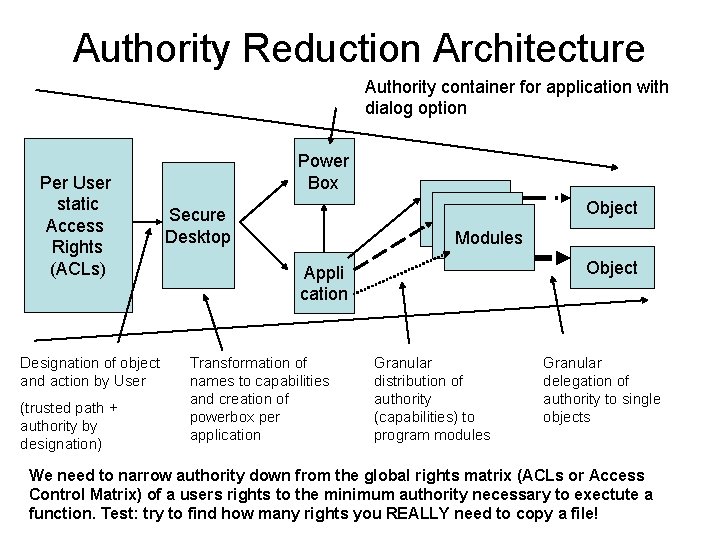

Authority Reduction Architecture

Diagram labels:
• Per-user static access rights (ACLs)
• Designation of object and action by the user (trusted path + authority by designation)
• Secure desktop: transformation of names to capabilities and creation of a powerbox per application
• Power box: authority container for the application, with dialog option
• Application modules: granular distribution of authority (capabilities) to program modules
• Objects: granular delegation of authority to single objects

We need to narrow authority down from the global rights matrix (ACLs or Access Control Matrix) of a user's rights to the minimum authority necessary to execute a function. Test: try to find how many rights you REALLY need to copy a file!
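One possible answer to the copy test, as a Python sketch: the copy function below needs exactly two rights, the right to read one source and the right to write one destination. The name `copy_stream` and the chunk size are assumptions made for this illustration.

```python
def copy_stream(source, destination, chunk_size=64 * 1024):
    """Copy bytes using only a readable and a writable object.

    The function receives no paths, so it needs no right to list
    directories, open other files, or use the network.
    Returns the number of bytes copied.
    """
    total = 0
    while True:
        chunk = source.read(chunk_size)
        if not chunk:
            break
        destination.write(chunk)
        total += len(chunk)
    return total
```

A conventional `cp`, by contrast, runs with all of the user's rights and is merely trusted to touch only the two files named on its command line.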

Testing the UI

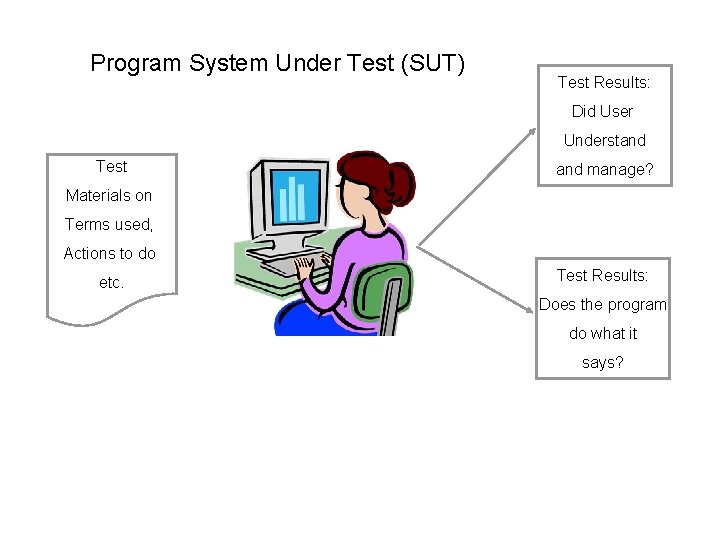

Diagram labels:
• System Under Test (SUT): the program
• Test materials on terms used, actions to do, etc.
• Test result: did the user understand the test and manage it?
• Test result: does the program do what it says?

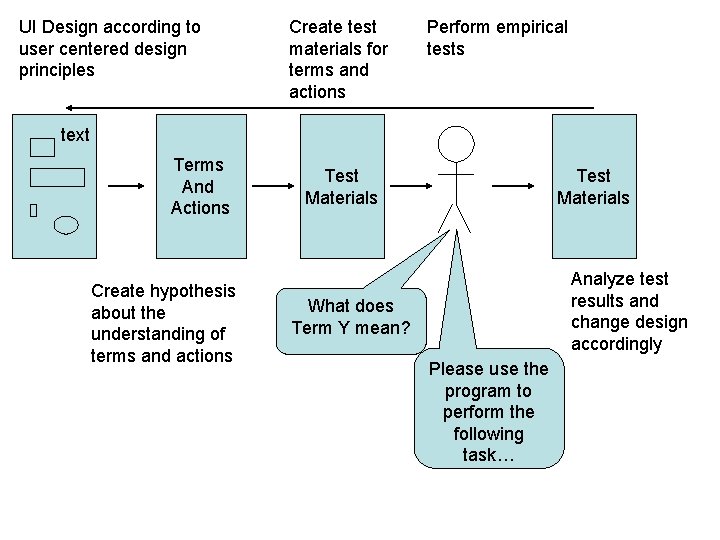

• UI design according to user-centered design principles
• Create hypotheses about the understanding of terms and actions
• Create test materials for terms and actions
• Perform empirical tests
• Analyze test results and change the design accordingly

Example test materials: "What does Term Y mean?" and "Please use the program to perform the following task…"

Resources

• [Gutm] Peter Gutmann, Security Usability Fundamentals, Draft 2008, http://www.cs.auckland.ac.nz/~pgut001/pubs/usability.pdf
• [KS] W. Kriha, R. Schmitz, Usability und Security, in KES 03/2007, Zeitschrift des Bundesamtes für Sicherheit in der Informationstechnik, http://www.bsi.bund.de/literat/forumkes/kes0307.pdf
• [GM] S. Garfinkel, R. Miller, Johnny 2: A User Test of Key Continuity Management with S/MIME and Outlook Express, presented at the Symposium on Usable Privacy and Security (SOUPS 2005), July 6-8, 2005, Pittsburgh, PA, online at http://www.simson.net/cv/pubs.php
• [Sti1] M. Stiegler, An Introduction to Petname Systems, http://www.skyhunter.com/marcs/petnames/IntroPetNames.html
• [Sti2] M. Stiegler, The Skynet Virus: Why it is unstoppable; How to stop it (Video), http://www.skyhunter.com/marcs/skynet.wmv
• [VISA] Verified by Visa concept, see http://usa.visa.com/personal/security/visa_security_program/vbv/how_it_works.html
• [WT] A. Whitten, J. D. Tygar, Why Johnny Can't Encrypt, Chapter 34 in [CG] (originally in Proc. 8th USENIX Security Symposium, Washington, D.C., 1999).
• [Yee02a] K. P. Yee, User Interaction Design for Secure Systems, Berkeley University Tech Report CSD-02-1184, 2002. Online at http://www.sims.berkeley.edu/~ping/sid/uidss-may-28.pdf