Usability and Human Factors: Usability Evaluation Methods, Lecture a

This material (Comp 15 Unit 5) was developed by Columbia University, funded by the Department of Health and Human Services, Office of the National Coordinator for Health Information Technology under Award Number 1U24OC000003. This material was updated by The University of Texas Health Science Center at Houston under Award Number 90WT0006. This work is licensed under the Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License. To view a copy of this license, visit http://creativecommons.org/licenses/by-nc-sa/4.0/.

Usability Evaluation Methods Lecture a – Learning Objectives
• Describe the importance of usability in relation to health information technologies (Lecture a)
• List and describe usability evaluation methods (Lecture a)
• Given a situation and set of goals, determine which usability evaluation method would be most appropriate and effective (Lecture a)
• Conduct a cognitive walkthrough (Lecture b)
• Design appropriate tasks for a usability test (Lecture b)
• Describe the usability testing environment, required equipment, logistics, and materials (Lecture b)

Usability
• Quality of a user's experience when interacting with a product or system
• Factors that affect the user's experience:
  – Ease of learning / learnability
  – Efficiency of use
  – Support for cognitive tasks
  – Memorability
  – Error frequency and severity
  – Esthetics
  – Subjective satisfaction

Why Usability Matters: The Clinical Story
• Over half of all information system projects fail in some way (Kaplan and Harris-Salamone, 2009)
• The Healthcare Information and Management Systems Society (HIMSS) considers poor usability of clinical information systems possibly the most important factor hindering adoption
• Poor usability leads to problems with adoption, productivity and patient safety

Why Usability Matters: The Story from Patients and Consumers
• eHealth interventions offer significant promise to bridge the digital divide
  – Problems with usability and poor design disproportionately affect users with lower computer literacy
  – These problems can exacerbate the digital divide and possibly increase health disparities
• Chronically ill and aging populations have special needs
  – They are more susceptible to usability problems
• Consequences of poor design:
  – Lack of empowerment, reduced enthusiasm, limited adoption and nonproductive use of patient-centered systems

Usability Evaluation: Why It Is Important
"Evaluation is a critical element in the success of any project, both for developers and buyers."
– National Center for Cognitive Informatics & Decision Making in Healthcare

Usability Evaluation Methods
• Interviews/Focus groups
• Questionnaires/Surveys
• Ethnographic Observations
• Usability Inspection Methods
• Usability Testing
• Controlled Cognitive Experiments

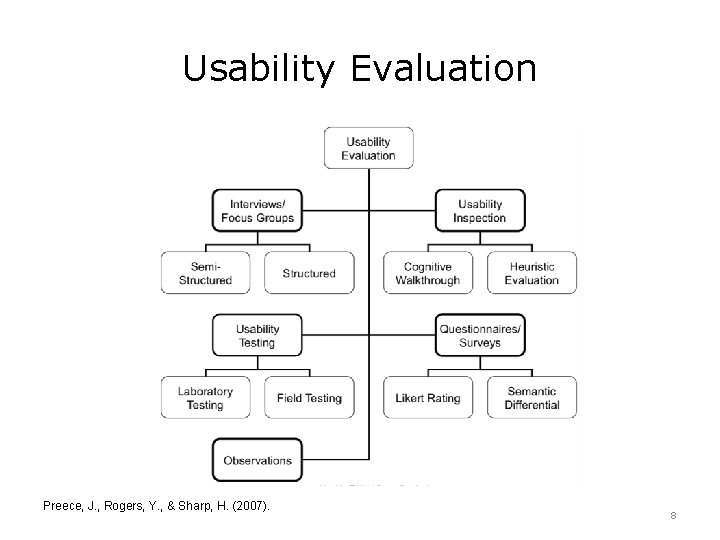

Usability Evaluation
Preece, J., Rogers, Y., & Sharp, H. (2007).

Interviews: Four Main Types
1. Unstructured Interview
• Exploratory
• Open-ended questions
  – What are the advantages of accessing clinical information on mobile devices?
• Useful in the early phases of design
2. Structured Interview
• Ask predetermined questions (similar to a questionnaire)
• Questions are closed: responses come from a limited set of alternatives
  – Do you visit this website: 1) daily, 2) weekly or 3) monthly?
• Useful when goals are clearly understood

Interviews: Four Main Types (Cont’d – 1)
3. Semi-Structured Interview
• Most widely used
• Starts with preplanned questions
• Then probes the interviewee to elaborate on emergent themes
  – “Can you tell me a little more about your thoughts about redesigning this site?”
4. Group Interviews/Focus groups
All interviews and focus groups are typically audio recorded and transcribed.

Phases of the Interview
• Introduction:
  – The interviewer introduces themselves and explains the purpose of the interview
• Warm-up session:
  – Simple questions, including demographics
• Main session:
  – Questions are presented in a logical sequence, with the more probing questions towards the end
• Closing session:
  – The interviewer thanks the interviewee and signals the end of the interview

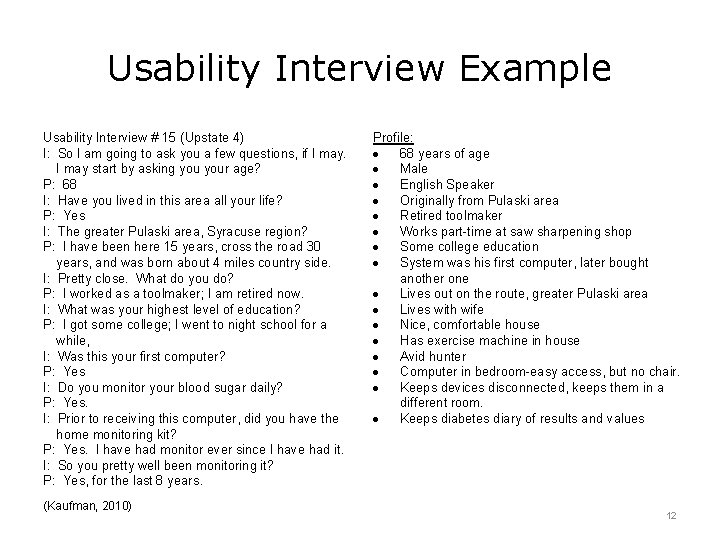

Usability Interview Example
Usability Interview # 15 (Upstate 4)
I: So I am going to ask you a few questions, if I may start by asking your age?
P: 68
I: Have you lived in this area all your life?
P: Yes
I: The greater Pulaski area, Syracuse region?
P: I have been here 15 years, cross the road 30 years, and was born about 4 miles country side.
I: Pretty close. What do you do?
P: I worked as a toolmaker; I am retired now.
I: What was your highest level of education?
P: I got some college; I went to night school for a while.
I: Was this your first computer?
P: Yes
I: Do you monitor your blood sugar daily?
P: Yes.
I: Prior to receiving this computer, did you have the home monitoring kit?
P: Yes. I have had monitor ever since I have had it.
I: So you pretty well been monitoring it?
P: Yes, for the last 8 years.
(Kaufman, 2010)

Profile:
• 68 years of age
• Male
• English speaker
• Originally from Pulaski area
• Retired toolmaker
• Works part-time at saw sharpening shop
• Some college education
• System was his first computer; later bought another one
• Lives out on the route, greater Pulaski area
• Lives with wife
• Nice, comfortable house
• Has exercise machine in house
• Avid hunter
• Computer in bedroom: easy access, but no chair
• Keeps devices disconnected, keeps them in a different room
• Keeps diabetes diary of results and values

Focus Groups
• Commonly used method in marketing and social science research
• Typically 3 to 10 participants representative of the sample population
  – For example: attending physicians, residents, nurses, pharmacists & administrators
• Discussion led by trained facilitator
• Preset agenda to guide discussion
• Sufficient flexibility to pursue emergent issues
• Facilitator guides and prompts discussion
  – Encourages less talkative participants to speak up
  – Discourages more verbose participants from dominating

Questionnaires / Surveys
• Common method for collecting user demographic data and soliciting opinions
• Can reach a large number of people
• Questions are typically closed, though open comments may be solicited

Rating Scales: Likert
• Most commonly used rating scale
• Measure opinions, attitudes and beliefs
• Widely used for evaluating user satisfaction
• Statements represent a range of opinions
  – e.g., "I found the system to be very easy to use"
• Respondents rate each statement on a continuum from one extreme (e.g., highest or strongest agreement) to the other (lowest or strongest disagreement)
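As a rough illustration of how responses to a single Likert statement might be tabulated, the sketch below assumes a 5-point scale coded 1 (strongly disagree) to 5 (strongly agree) and uses hypothetical ratings. Because Likert responses are ordinal, it reports the response distribution, the median, and the share of agreeing responses rather than relying on a mean. The function name `summarize_likert` and the scale labels are illustrative assumptions, not part of the lecture.

```python
from collections import Counter
from statistics import median

# Assumed 5-point coding; the lecture does not fix the number of scale points.
SCALE = {
    1: "Strongly disagree",
    2: "Disagree",
    3: "Neutral",
    4: "Agree",
    5: "Strongly agree",
}


def summarize_likert(responses: list[int]) -> dict:
    """Summarize ratings for one Likert statement, e.g.
    'I found the system to be very easy to use'."""
    if not responses:
        raise ValueError("no responses to summarize")
    invalid = [r for r in responses if r not in SCALE]
    if invalid:
        raise ValueError(f"ratings outside the scale: {invalid}")
    counts = Counter(responses)
    agree = counts[4] + counts[5]  # "Agree" plus "Strongly agree"
    return {
        "n": len(responses),
        "counts": {label: counts[code] for code, label in SCALE.items()},
        "median": median(responses),
        "percent_agree": round(100 * agree / len(responses), 1),
    }


if __name__ == "__main__":
    # Hypothetical ratings from eight participants.
    print(summarize_likert([5, 4, 4, 3, 5, 2, 4, 4]))
```

With these hypothetical ratings the median is 4 ("Agree") and 75% of participants agree or strongly agree.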

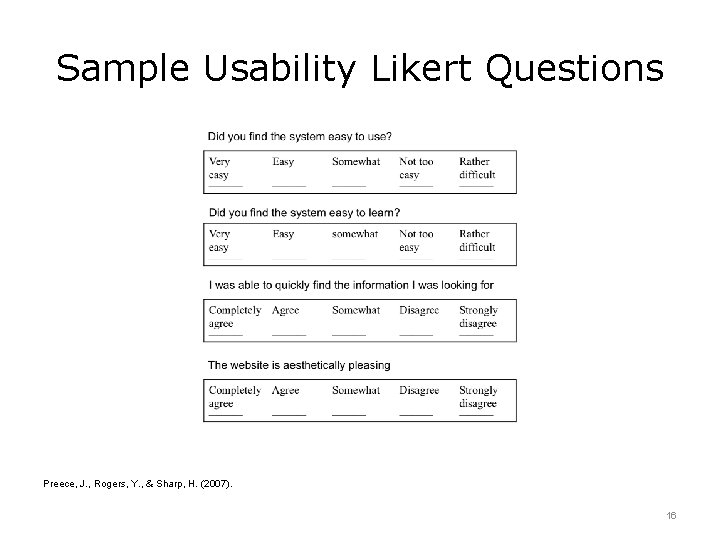

Sample Usability Likert Questions
Preece, J., Rogers, Y., & Sharp, H. (2007).

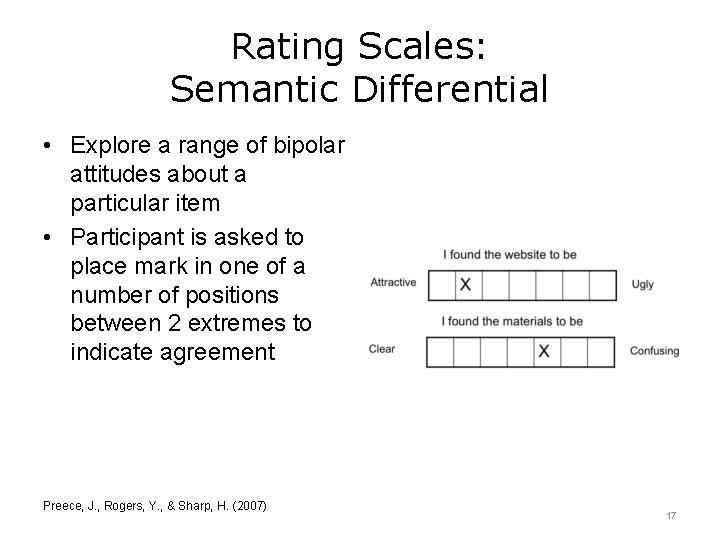

Rating Scales: Semantic Differential
• Explore a range of bipolar attitudes about a particular item
• Participants are asked to place a mark in one of a number of positions between two opposite extremes to indicate which extreme they agree with, and how strongly
Preece, J., Rogers, Y., & Sharp, H. (2007).
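Semantic differential marks can be turned into a simple attitude profile. This sketch assumes a 7-position scale and three hypothetical adjective pairs, and it allows some pairs to place the positive pole on the right (a layout some designers use so respondents do not simply mark one column throughout). Marks are re-coded so a higher number is always more positive before averaging across participants. All names (`PAIRS`, `normalize`, `profile`) are hypothetical.

```python
from statistics import mean

POINTS = 7  # assumed number of positions between the two extremes

# Hypothetical bipolar pairs: (left adjective, right adjective, positive pole on the left?)
PAIRS = [
    ("Clear", "Confusing", True),
    ("Slow", "Fast", False),
    ("Attractive", "Unattractive", True),
]


def normalize(position: int, positive_on_left: bool) -> int:
    """Re-code a mark (1 = leftmost position) so a higher score is always more positive."""
    if not 1 <= position <= POINTS:
        raise ValueError(f"position {position} is outside 1..{POINTS}")
    return POINTS + 1 - position if positive_on_left else position


def profile(marks: list[list[int]]) -> dict[str, float]:
    """Average normalized score per adjective pair.

    Each row holds one participant's marks, in the same order as PAIRS.
    """
    result = {}
    for i, (left, right, positive_on_left) in enumerate(PAIRS):
        scores = [normalize(row[i], positive_on_left) for row in marks]
        result[f"{left}/{right}"] = round(float(mean(scores)), 2)
    return result


if __name__ == "__main__":
    # Hypothetical marks from three participants.
    print(profile([[2, 6, 4], [1, 5, 3], [3, 7, 2]]))
```

For these hypothetical marks, "Clear/Confusing" and "Slow/Fast" both average 6.0 and "Attractive/Unattractive" averages 5.0 on the re-coded 1–7 scale.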

Online Surveys: Two Types
Web-based:
• Can be interactive and can include check boxes, pull-down menus, etc.
• Branching logic tailors questions to specific populations
• Answers written to a database
Email-based:
• Electronic version of paper survey
• Options are limited
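The branching logic and database storage of web-based surveys can be sketched in a few lines. This is a simulation under stated assumptions: the question IDs, wording, and routing rule are hypothetical; one respondent's answers are supplied up front instead of collected interactively; and an in-memory SQLite table stands in for the survey's database. Real survey tools implement this differently.

```python
import sqlite3

# Hypothetical questions. "text" is what a respondent would see; each "next"
# function picks the follow-up question from the answer given (None ends the survey).
QUESTIONS = {
    "role": {
        "text": "What is your role?",
        "options": ["physician", "nurse", "patient"],
        "next": lambda answer: "portal_use" if answer == "patient" else "ehr_years",
    },
    "ehr_years": {
        "text": "How many years have you used an electronic health record?",
        "options": ["<1", "1-5", ">5"],
        "next": lambda answer: None,
    },
    "portal_use": {
        "text": "How often do you use the patient portal?",
        "options": ["daily", "weekly", "monthly"],
        "next": lambda answer: None,
    },
}


def run_survey(answers: dict[str, str], start: str = "role") -> dict[str, str]:
    """Follow the branching logic using pre-supplied answers (a simulated
    respondent) and return only the questions that respondent would have seen."""
    record = {}
    qid = start
    while qid is not None:
        question = QUESTIONS[qid]
        answer = answers[qid]
        if answer not in question["options"]:
            raise ValueError(f"{answer!r} is not an option for {qid!r}")
        record[qid] = answer
        qid = question["next"](answer)
    return record


def save_answers(conn: sqlite3.Connection, respondent: str, record: dict[str, str]) -> None:
    """Write one row per answered question to an 'answers' table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS answers (respondent TEXT, question TEXT, answer TEXT)"
    )
    conn.executemany(
        "INSERT INTO answers VALUES (?, ?, ?)",
        [(respondent, qid, answer) for qid, answer in record.items()],
    )
    conn.commit()


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    save_answers(conn, "p01", run_survey({"role": "patient", "portal_use": "weekly"}))
    save_answers(conn, "p02", run_survey({"role": "nurse", "ehr_years": "1-5"}))
    for row in conn.execute("SELECT * FROM answers ORDER BY rowid"):
        print(row)
```

A patient is routed to the portal question and a nurse to the EHR question, so neither sees a question irrelevant to their group; this is the "tailoring" that email-based surveys cannot easily provide.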

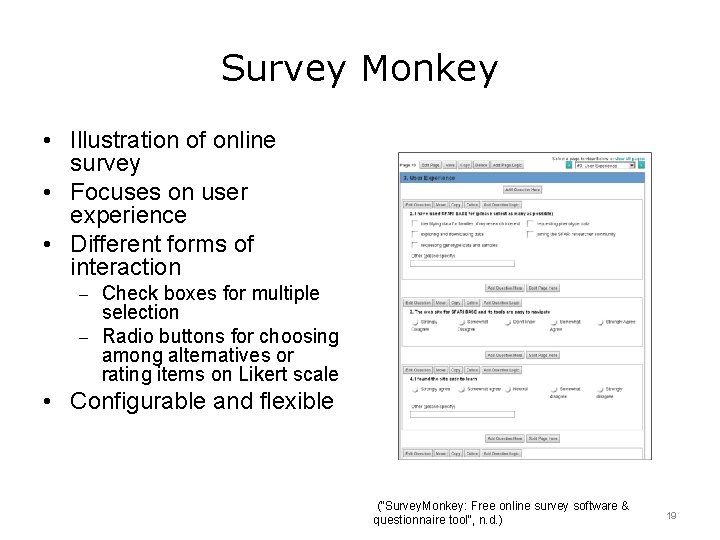

SurveyMonkey
• Illustration of an online survey
• Focuses on user experience
• Different forms of interaction
  – Check boxes for multiple selection
  – Radio buttons for choosing among alternatives or rating items on a Likert scale
• Configurable and flexible
("SurveyMonkey: Free online survey software & questionnaire tool", n.d.)

Usability Inspection Methods: Expert Reviews
• Methods for examining a user interface
  – Performed by analysts or evaluators
  – Rely on expert judgment as the source of feedback
• Cost-effective
• A usability expert (or team of experts) methodically steps through the system
  – Noting usability problems and violations of usability principles
  – Noting the severity of problems
• Involves assessing the usability of a system for carrying out specific tasks (i.e., scenarios)
• Heuristic Evaluation:
  – Display based
• Cognitive Walkthrough:
  – Task analytic

Usability Principles (Nielsen, 1993)
• Visibility of system status
• Match between system and the real world
• User control and freedom
• Consistency and standards
• Help users recognize, diagnose, and recover from errors
• Minimize memory load
  – Emphasize recognition rather than recall
• Flexibility and efficiency
• Motivation and engagement

Heuristic Evaluation
• Developed by Jakob Nielsen and Rolf Molich
• Method for structuring the critique of a system using simple & general heuristics
• Several analysts critique a system to identify & diagnose usability problems
• Display based
• Each evaluator assesses the system & notes violations of usability principles
• In a second pass, analysts rate the severity of the problems
• Can be used in all phases of design, including storyboards, prototypes and fully functioning systems

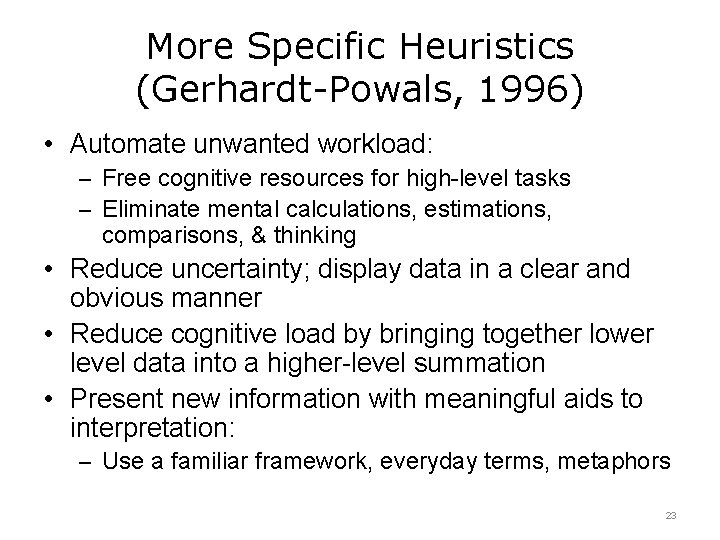

More Specific Heuristics (Gerhardt-Powals, 1996)
• Automate unwanted workload:
  – Free cognitive resources for high-level tasks
  – Eliminate mental calculations, estimations, comparisons, & unnecessary thinking
• Reduce uncertainty; display data in a clear and obvious manner
• Reduce cognitive load by bringing together lower-level data into a higher-level summation
• Present new information with meaningful aids to interpretation:
  – Use a familiar framework, everyday terms, metaphors

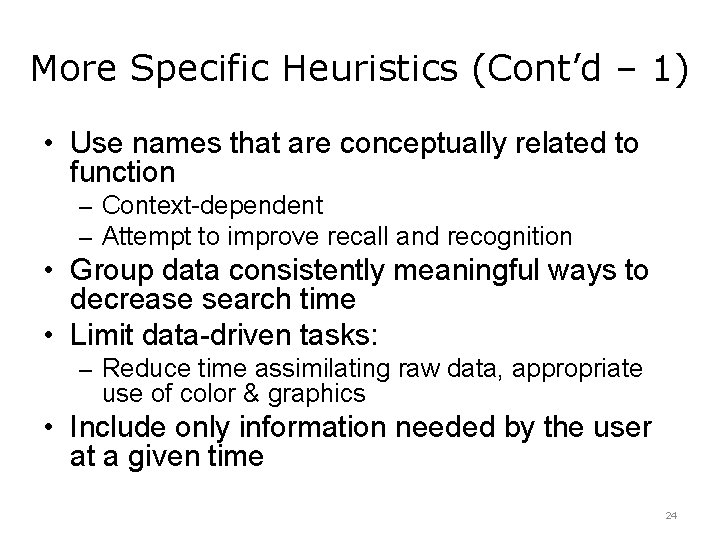

More Specific Heuristics (Cont’d – 1)
• Use names that are conceptually related to function
  – Context-dependent
  – Attempt to improve recall and recognition
• Group data in consistently meaningful ways to decrease search time
• Limit data-driven tasks:
  – Reduce time spent assimilating raw data; make appropriate use of color & graphics
• Include only information needed by the user at a given time

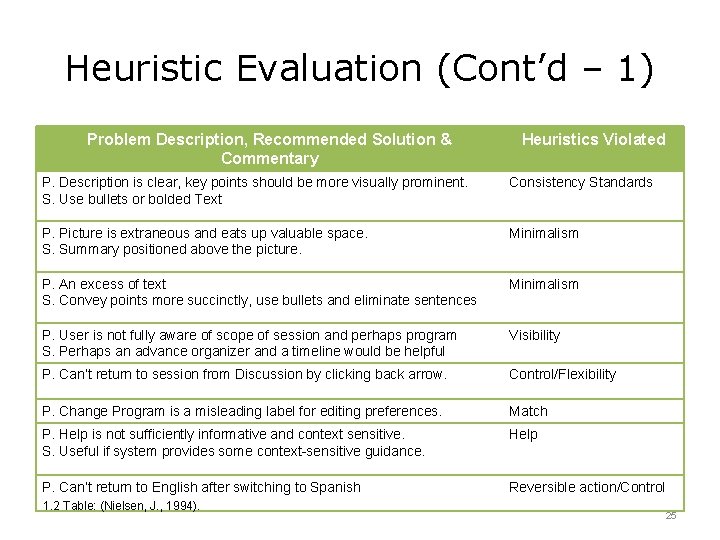

Heuristic Evaluation (Cont’d – 1)
Problem description (P), recommended solution (S) & commentary, and heuristics violated:
1. P: Description is clear, but key points should be more visually prominent. S: Use bullets or bolded text. Heuristics violated: Consistency/Standards
2. P: Picture is extraneous and eats up valuable space. S: Summary positioned above the picture. Heuristics violated: Minimalism
3. P: An excess of text. S: Convey points more succinctly, use bullets and eliminate sentences. Heuristics violated: Minimalism
4. P: User is not fully aware of scope of session and perhaps program. S: Perhaps an advance organizer and a timeline would be helpful. Heuristics violated: Visibility
5. P: Can’t return to session from Discussion by clicking back arrow. Heuristics violated: Control/Flexibility
6. P: Change Program is a misleading label for editing preferences. Heuristics violated: Match
7. P: Help is not sufficiently informative and context sensitive. S: Useful if system provides some context-sensitive guidance. Heuristics violated: Help
8. P: Can’t return to English after switching to Spanish. Heuristics violated: Reversible action/Control
Table 1.2 (Nielsen, J., 1994).
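Findings like those in the table can be recorded in a structured form and tallied by heuristic, which makes it easy to see which principles a design violates most often. This is a minimal sketch: the `Finding` record and `tally_heuristics` function are hypothetical, and the problem summaries are abbreviated from the table's entries.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Finding:
    problem: str
    solution: Optional[str]  # None where no solution was recorded
    heuristics: tuple[str, ...]


# Problems abbreviated from the heuristic evaluation table (Nielsen, 1994).
FINDINGS = [
    Finding("Key points not visually prominent", "Use bullets or bolded text", ("Consistency/Standards",)),
    Finding("Picture is extraneous", "Summary positioned above the picture", ("Minimalism",)),
    Finding("An excess of text", "Convey points more succinctly", ("Minimalism",)),
    Finding("User not aware of scope of session", "Advance organizer and timeline", ("Visibility",)),
    Finding("Back arrow does not return to session", None, ("Control/Flexibility",)),
    Finding("'Change Program' is a misleading label", None, ("Match",)),
    Finding("Help is not context sensitive", "Provide context-sensitive guidance", ("Help",)),
    Finding("Can't return to English after switching to Spanish", None, ("Reversible action/Control",)),
]


def tally_heuristics(findings: list[Finding]) -> Counter:
    """Count how many findings cite each heuristic (a finding may cite several)."""
    return Counter(h for f in findings for h in f.heuristics)


if __name__ == "__main__":
    for heuristic, count in tally_heuristics(FINDINGS).most_common():
        print(f"{heuristic}: {count}")
```

Here "Minimalism" is cited twice and every other heuristic once, suggesting that trimming content would address the most frequently violated principle.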

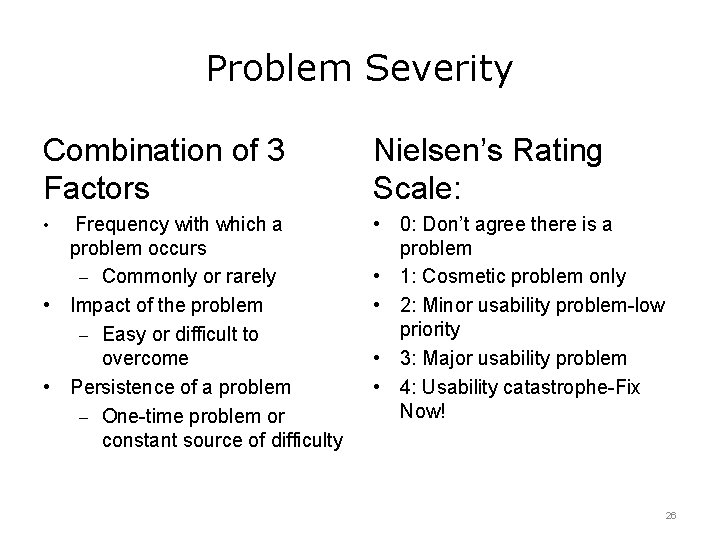

Problem Severity
Combination of 3 factors:
• Frequency with which a problem occurs
  – Commonly or rarely
• Impact of the problem
  – Easy or difficult to overcome
• Persistence of a problem
  – One-time problem or constant source of difficulty
Nielsen’s Rating Scale:
• 0: Don’t agree there is a problem
• 1: Cosmetic problem only
• 2: Minor usability problem – low priority
• 3: Major usability problem
• 4: Usability catastrophe – fix now!
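Because a single evaluator's severity judgments can be unreliable, ratings are often collected independently from several evaluators and averaged. The sketch below assumes each evaluator has already weighed frequency, impact, and persistence into one 0–4 rating on Nielsen's scale. It averages the ratings per problem, maps each mean to the nearest scale label (rounding halves up), and sorts problems from most to least severe. The function name `prioritize`, the problem names, and the ratings are hypothetical.

```python
from statistics import mean

# Nielsen's 0-4 severity scale as listed on the slide.
SEVERITY_LABELS = {
    0: "Don't agree there is a problem",
    1: "Cosmetic problem only",
    2: "Minor usability problem (low priority)",
    3: "Major usability problem",
    4: "Usability catastrophe (fix now)",
}


def prioritize(ratings: dict[str, list[int]]) -> list[tuple[str, float, str]]:
    """Average each problem's independent ratings and sort from most to least severe."""
    results = []
    for problem, scores in ratings.items():
        if not scores or any(s not in SEVERITY_LABELS for s in scores):
            raise ValueError(f"invalid ratings for {problem!r}: {scores}")
        avg = float(mean(scores))
        nearest = int(avg + 0.5)  # round halves up; built-in round() rounds halves to even
        results.append((problem, round(avg, 2), SEVERITY_LABELS[nearest]))
    return sorted(results, key=lambda r: r[1], reverse=True)


if __name__ == "__main__":
    # Hypothetical ratings from three evaluators.
    ratings = {
        "Picture is extraneous": [1, 2, 1],
        "Help is not context sensitive": [3, 2, 3],
        "Can't return to English after switching to Spanish": [4, 3, 4],
    }
    for problem, avg, label in prioritize(ratings):
        print(f"{avg:.2f}  {label}: {problem}")
```

With these ratings the language-switching problem averages 3.67 and maps to "Usability catastrophe", so it would be fixed first; the extraneous picture averages 1.33 and is treated as cosmetic.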

Online Tutorials from NCCD
• Expert Review, Heuristic Evaluation: Definition and History
• Expert Review, Heuristic Evaluation: Principles
• Expert Review, Heuristic Evaluation: Methods
• User Testing: Setting up the Testing
• User Testing: Role of the Moderator
• User Testing: Conducting the Test and Reporting the Results

Usability Evaluation Methods Summary – Lecture a
• Why usability matters
• Usability evaluation methods
  – Interviews, focus groups and surveys
  – Usability inspection methods: heuristic evaluation
• What’s next?
  – Cognitive walkthrough
  – Usability testing

Usability Evaluation Methods References – Lecture a

References
1. Healthcare Information and Management Systems Society. (2009). Defining and Testing EMR Usability: Principles and Proposed Methods of EMR Usability Evaluation and Rating. http://www.himss.org/defining-and-testing-emr-usability-principles-and-proposed-methods-emr-usability-evaluation
2. HIMSS white paper: 'Usability' is critical to adoption of EMRs. (2009). Healthcare IT News. Retrieved 23 June 2016, from http://www.healthcareitnews.com/news/himss-white-paper-usability-critical-adoption-emrs
3. Nielsen, J. (1994). Heuristic evaluation. In Nielsen, J., and Mack, R. L. (Eds.), Usability Inspection Methods. John Wiley & Sons, New York, NY.
4. Kaplan, B., & Harris-Salamone, K. D. (2009). Health IT Success and Failure: Recommendations from Literature and an AMIA Workshop. Journal of the American Medical Informatics Association: JAMIA, 16(3), 291–299. http://doi.org/10.1197/jamia.M2997
5. Kaufman, D. R., Patel, V. L., Hilliman, C., Morin, P. C., Pevzner, J., Weinstock, Goland, R., Shea, S., & Starren, J. (2003). Usability in the real world: Assessing medical information technologies in patients’ homes. Journal of Biomedical Informatics, 36, 45–60.
6. Kaufman, D. (2010). Usability interview example. Department of Biomedical Informatics, Columbia University, New York, NY.
7. Gerhardt-Powals, J. (1996). Cognitive engineering principles for enhancing human-computer performance. International Journal of Human-Computer Interaction, 8, 189–211.
8. (2016). Retrieved 23 June 2016, from https://sbmi.uth.edu/nccd/educational-tutorials/

Images
Slide 7, 15 & 16: Preece, J., Rogers, Y., & Sharp, H. (2007). Interaction Design: Beyond Human-Computer Interaction (2nd ed.). West Sussex, England: Wiley.
Slide 18: SurveyMonkey: Free online survey software & questionnaire tool. Surveymonkey.com. Retrieved 23 June 2016, from https://www.surveymonkey.com/

Charts, Tables, & Figures
1.2 Table: Nielsen, J. (1994). Heuristic evaluation. In Nielsen, J., and Mack, R. L. (Eds.), Usability Inspection Methods. John Wiley & Sons, New York, NY.

