
Usability and Human Factors: Electronic Health Records and Usability, Lecture c

This material (Comp 15 Unit 6) was developed by Columbia University, funded by the Department of Health and Human Services, Office of the National Coordinator for Health Information Technology under Award Number 1U24OC000003. This material was updated by The University of Texas Health Science Center at Houston under Award Number 90WT0006. This work is licensed under the Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License. To view a copy of this license, visit http://creativecommons.org/licenses/by-nc-sa/4.0/.

Electronic Health Records and Usability, Lecture c – Learning Objectives • Explain how user-centered design can enhance adoption of EHRs (Lecture c) • Discuss the role of usability testing, training and implementation of electronic health records (Lecture c) • Describe Web 2.0 and novel concepts in system design (Lecture c) • Identify potential methods of assessing and rating EHR usability when selecting an appropriate EHR system (Lecture c) 2

Usability Challenges in Healthcare • Complex needs, time pressure • Needs vary from setting to setting and by specialty – 50 specialties, plus other professions (OT, PT, pharmacists, respiratory therapists, etc.) • Team-based work • Clinician mobility; primary focus is (or should be) on the patient • Legal, ethical, and error concerns; high stakes • Multiple standards, evidence-based medicine • Hard to get clinician feedback • Confidentiality makes in situ observation and testing difficult • Cost of change (time, vendor, consensus, cost) • Interruptions, time pressure, institutional policies 3

More Issues • Hold harmless – Clauses mean the institution is liable • Learned intermediary – Clinician is responsible even with no choice of software and no access to its internals • Patient safety – 25% of medication errors (2006) involved computer technology – 82% of these involved CPOE/data entry 4

Basic Minimums • Usability = efficiency of use + usefulness + ease of use? • For EHRs, we want to: – Minimize the likelihood of user error – Provide cognitive support: guide interaction to foster good work and avoid errors • Avoid: – Errors of commission: e.g., opening the wrong patient’s chart – Errors of omission: e.g., failing to notice an abnormal result – Failure to complete a task (due to an interruption the system does not handle) 5

Evidence-based Usability/Cognitive Support • Usability is not just screen or software design – Affected by workflow, time pressure, physical space layout, lighting, policies for use, and even user experience during implementation • Design needs to be evidence based, and evidence is available (Karsh, 2010) 6

Evidence-based Usability/Cognitive Support (Cont’d – 1) • Fact: – Healthcare is complex, training will be required • Myth: – No training needed with good usability 7

Evidence-based Usability/Cognitive Support (Cont’d – 2) • Fact: – Users not trained in science of usability and cognition – What they want may be wrong, mis-specified, or inarticulate • Myth: – User-centered design = give users what they want 8

Evidence-based Usability/Cognitive Support (Cont’d – 3) • Myth: – Health IT should integrate into workflow • Fact: – Healthcare workflow is emergent – what is needed is more flexible availability of data 9

Evidence-based Usability/Cognitive Support (Cont’d – 4) • Usability is not a fixed target • Don’t assume we already have the answers • Ongoing research is needed (Karsh, 2010) 10

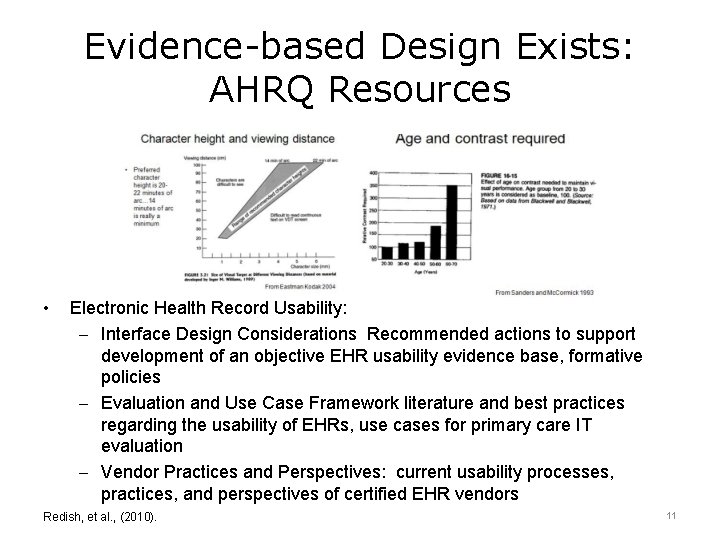

Evidence-based Design Exists: AHRQ Resources • Electronic Health Record Usability: – Interface Design Considerations: recommended actions to support development of an objective EHR usability evidence base, formative policies – Evaluation and Use Case Framework: literature and best practices regarding the usability of EHRs, use cases for primary care IT evaluation – Vendor Practices and Perspectives: current usability processes, practices, and perspectives of certified EHR vendors (Redish et al., 2010) 11

Usability Testing: Subjective vs. Objective • “In routine handling, the Sorrento feels responsive in corners, with nicely weighted, quick steering… The gated zigzag shifter is awkward to use” • “It posted a commendable speed through our avoidance maneuver. Avoidance maneuver, max. spd: 51.5 mph. 0 to 60 mph: 7.6 sec” • Two Voices in Consumer Reports Car Reviews 12

Subjective v. Objective • Subjectivist/Qualitative (“art criticism”) – Not everything of importance can be quantified – Differences of opinion are okay – The value is in the “thick description” – Rigorous methods exist (one is formal criticism) 13

Subjective v. Objective (Cont’d – 1) • Objectivist/Quantitative – Believable knowledge derives from measurement of attributes that are inherent in objects – All observers should agree on measurement results (inter-subjectivity) – and on what result is “better” (polarity): o Accuracy o Response time o Time to identify / time spent searching o Eye gaze 14
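To make the objectivist idea concrete, the Python sketch below computes two of the measures listed above, accuracy and response time, for two hypothetical screen designs. Each metric carries an explicit polarity, so any observer who applies it reaches the same verdict about which design is "better". The trial data, the design names, and the `Metric` structure are invented for illustration and do not come from any study.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class Metric:
    name: str
    higher_is_better: bool  # the metric's polarity


# Hypothetical trials per design: (task completed correctly?, response time in seconds)
TRIALS = {
    "design_a": [(True, 12.0), (True, 9.5), (True, 20.0), (True, 11.5)],
    "design_b": [(True, 8.0), (True, 7.5), (False, 9.0), (True, 14.5)],
}


def accuracy(trials):
    """Share of trials completed correctly."""
    return sum(ok for ok, _ in trials) / len(trials)


def mean_response_time(trials):
    """Average response time in seconds."""
    return mean(t for _, t in trials)


METRICS = {
    Metric("accuracy", higher_is_better=True): accuracy,
    Metric("mean response time (s)", higher_is_better=False): mean_response_time,
}


def better_design(metric, scores):
    """Pick the better design, using the metric's polarity."""
    pick = max if metric.higher_is_better else min
    return pick(scores, key=scores.get)


for metric, fn in METRICS.items():
    scores = {design: fn(trials) for design, trials in TRIALS.items()}
    print(metric.name, scores, "->", better_design(metric, scores))
```

With this made-up data, design_a wins on accuracy and design_b wins on response time. Stating polarity explicitly keeps that trade-off from depending on who reads the numbers.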

Heuristic Evaluation • Expert(s) evaluate the system according to heuristics (Nielsen) • 10 main axes: – 1. Visibility of system status – 2. Match between system and the real world – 3. User control and freedom – 4. Consistency and standards – 5. Error prevention – 6. Recognition rather than recall – 7. Flexibility and efficiency of use – 8. Aesthetic and minimalist design – 9. Help users recognize, diagnose, and recover from errors – 10. Help and documentation 15
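When several experts run a heuristic evaluation, their findings are usually merged so that problems found independently by more than one evaluator stand out. The minimal Python sketch below assumes each finding is logged as (evaluator, heuristic number, problem), using the numbering of the 10 axes above. The evaluators and problems are hypothetical.

```python
from collections import defaultdict

HEURISTICS = {
    1: "Visibility of system status",
    2: "Match between system and the real world",
    3: "User control and freedom",
    4: "Consistency and standards",
    5: "Error prevention",
    6: "Recognition rather than recall",
    7: "Flexibility and efficiency of use",
    8: "Aesthetic and minimalist design",
    9: "Help users recognize, diagnose, and recover from errors",
    10: "Help and documentation",
}

# Hypothetical findings: (evaluator, heuristic number, problem description)
FINDINGS = [
    ("expert_1", 1, "No indicator while lab results load"),
    ("expert_2", 1, "No indicator while lab results load"),
    ("expert_1", 5, "Dose field accepts 10x typical value without warning"),
    ("expert_3", 5, "Dose field accepts 10x typical value without warning"),
    ("expert_3", 4, "Save button moves between order screens"),
]


def merge_findings(findings):
    """Map each (heuristic, problem) pair to the set of evaluators who reported it."""
    merged = defaultdict(set)
    for evaluator, heuristic, problem in findings:
        if heuristic not in HEURISTICS:
            raise ValueError(f"unknown heuristic {heuristic}")
        merged[(heuristic, problem)].add(evaluator)
    return merged


merged = merge_findings(FINDINGS)
# List problems reported by more evaluators first, then by heuristic number
for (h, problem), who in sorted(merged.items(), key=lambda kv: (-len(kv[1]), kv[0][0])):
    print(f"H{h} {HEURISTICS[h]}: {problem} ({len(who)} evaluator(s))")
```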

Focus Groups • Use for formative evaluation – Find out information designers can use – What should the system do? – What are users’ worst problems? – What is their workflow? – How do they do the task now? • Use for summative evaluation (after the system is built) • Method: get typical users to view and discuss the system and related ideas – Group similar users together – Don’t put supervisors with staff (people should feel free to speak) 16

Focus Groups (Cont’d – 1) • Prepare open-ended questions, not yes/no • … fixed time, f… • Get permission in writing – Institutional Review Board • Define a privacy policy – e.g., participants will be anonymous in any publications or talks • Compensate users – Clinicians are busy and highly paid – Compensate appropriately, e.g., $100/hour 17

Focus Groups (Cont’d – 2) • Record meeting (two digital or tape recorders); obtain permission of subjects • Transcribe and code thematically 18

Usability Testing: Think Aloud, In-Lab • Subject (typical user) uses the system in a quiet office; software captures video of their screen actions and voice (and face, if desired) • User is told to think aloud while using the software for typical tasks • Video is coded and analyzed for themes, action patterns, problems, time for tasks… • Morae software is a common tool – Requires a webcam with sound; can do remote testing • Video can be edited for administrators, clients, decision-makers, programmers 19

Field Usability Testing • Examine users’ interactions in their normal workplace setting • Field observation – Important method to establish conditions of work and workflow • Answer questions such as – What are the time constraints, interruptions, noise, information sharing with colleagues, information flow, information sources, needs, team members? • Observation, log file analysis, user interviews, and perhaps remote Morae testing will be the main methods • Problems not detected in a laboratory test are likely to come to light after deployment • Monitor usage closely soon after deployment 20
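Log file analysis can recover some of the quantities that field observation asks about, such as time on task and interruptions. The Python sketch below assumes a hypothetical single-user log with one "timestamp user event" entry per line. The event names `task_start`, `switch_away`, `switch_back`, and `task_end` are invented for illustration, and real EHR audit logs differ by vendor.

```python
from datetime import datetime

# Hypothetical log for one user: ISO timestamp, user, event
LOG = """\
2024-03-04T09:00:00 nurse1 task_start
2024-03-04T09:01:30 nurse1 switch_away
2024-03-04T09:03:00 nurse1 switch_back
2024-03-04T09:05:00 nurse1 task_end
2024-03-04T09:10:00 nurse1 task_start
2024-03-04T09:12:00 nurse1 task_end
"""


def summarize_tasks(log_text):
    """Return (duration in seconds, number of interruptions) for each completed task.

    Assumes a single user per log; 'switch_back' events are ignored.
    """
    tasks, start, interruptions = [], None, 0
    for line in log_text.splitlines():
        stamp, _user, event = line.split()
        when = datetime.fromisoformat(stamp)
        if event == "task_start":
            start, interruptions = when, 0
        elif event == "switch_away" and start is not None:
            interruptions += 1
        elif event == "task_end" and start is not None:
            tasks.append(((when - start).total_seconds(), interruptions))
            start = None
    return tasks


print(summarize_tasks(LOG))  # [(300.0, 1), (120.0, 0)]
```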

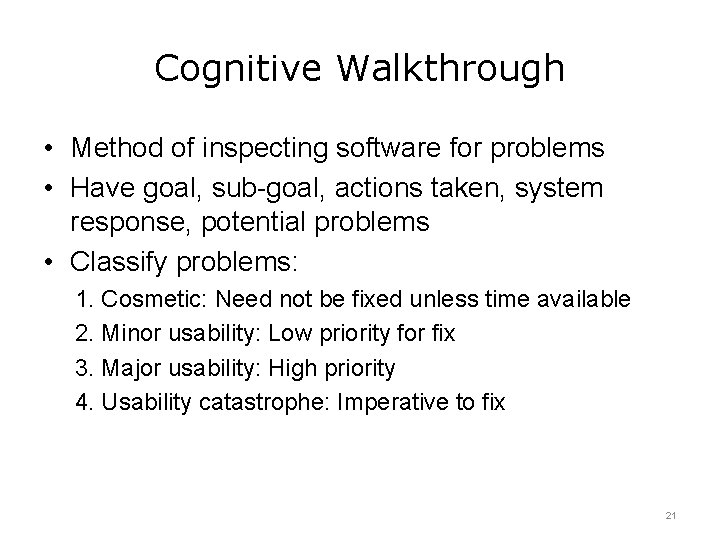

Cognitive Walkthrough • Method of inspecting software for problems • For each step, record the goal, sub-goal, actions taken, system response, and potential problems • Classify problems: 1. Cosmetic: need not be fixed unless time is available 2. Minor usability: low priority for fix 3. Major usability: high priority 4. Usability catastrophe: imperative to fix 21
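The four-level classification above maps naturally onto an ordered scale, which makes it easy to sort a fix list. The Python sketch below encodes the slide's levels as an `IntEnum`. The problems and their ratings are hypothetical. The cut-off that treats "major" and "catastrophe" as must-fix is an assumed team policy and is not part of the method itself.

```python
from enum import IntEnum


class Severity(IntEnum):
    """The walkthrough problem classification, ordered from least to most severe."""
    COSMETIC = 1     # need not be fixed unless time is available
    MINOR = 2        # low priority for fix
    MAJOR = 3        # high priority
    CATASTROPHE = 4  # imperative to fix


# Hypothetical problems with hypothetical ratings
PROBLEMS = [
    ("Label text slightly misaligned", Severity.COSMETIC),
    ("Duration shown in an inappropriate unit", Severity.MAJOR),
    ("Extra click needed to reach vital signs", Severity.MINOR),
    ("Order can be placed on the wrong patient", Severity.CATASTROPHE),
]


def fix_queue(problems, must_fix_at=Severity.MAJOR):
    """Sort problems from most to least severe and flag those at or above must_fix_at."""
    ranked = sorted(problems, key=lambda p: p[1], reverse=True)
    return [(desc, sev.name, sev >= must_fix_at) for desc, sev in ranked]


for row in fix_queue(PROBLEMS):
    print(row)
```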

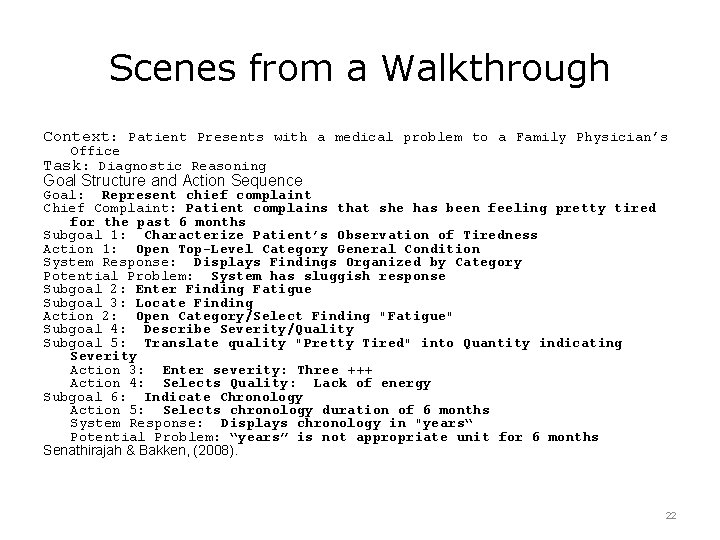

Scenes from a Walkthrough • Context: Patient presents with a medical problem to a family physician’s office • Task: Diagnostic reasoning • Goal Structure and Action Sequence • Goal: Represent chief complaint • Chief complaint: Patient complains that she has been feeling pretty tired for the past 6 months • Subgoal 1: Characterize patient’s observation of tiredness – Action 1: Open top-level category "General Condition" – System response: Displays findings organized by category – Potential problem: System has sluggish response • Subgoal 2: Enter finding "Fatigue" • Subgoal 3: Locate finding – Action 2: Open category/select finding "Fatigue" • Subgoal 4: Describe severity/quality • Subgoal 5: Translate quality "pretty tired" into a quantity indicating severity – Action 3: Enter severity: three (+++) – Action 4: Select quality: lack of energy • Subgoal 6: Indicate chronology – Action 5: Select chronology: duration of 6 months – System response: Displays chronology in "years" – Potential problem: "years" is not an appropriate unit for 6 months (Senathirajah & Bakken, 2008) 22
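The last potential problem in this walkthrough, a 6-month duration displayed in years, is a unit-selection issue. The Python sketch below picks a display unit in proportion to the duration. The day thresholds and the average month and year lengths are assumptions chosen for illustration, not a clinical standard. `years_only` is a hypothetical contrast case.

```python
def describe_duration(days: int) -> str:
    """Express a duration in a unit proportionate to its size (illustrative thresholds)."""
    if days < 14:
        value, unit = days, "day"
    elif days < 90:
        value, unit = round(days / 7), "week"
    elif days < 730:
        value, unit = round(days / 30.44), "month"  # average month length in days
    else:
        value, unit = round(days / 365.25), "year"  # average year length in days
    return f"{value} {unit}{'' if value == 1 else 's'}"


def years_only(days: int) -> str:
    """Hypothetical contrast: always report the duration in years."""
    return f"{days / 365.25:.1f} years"


print(years_only(183))         # 0.5 years
print(describe_duration(183))  # 6 months
```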

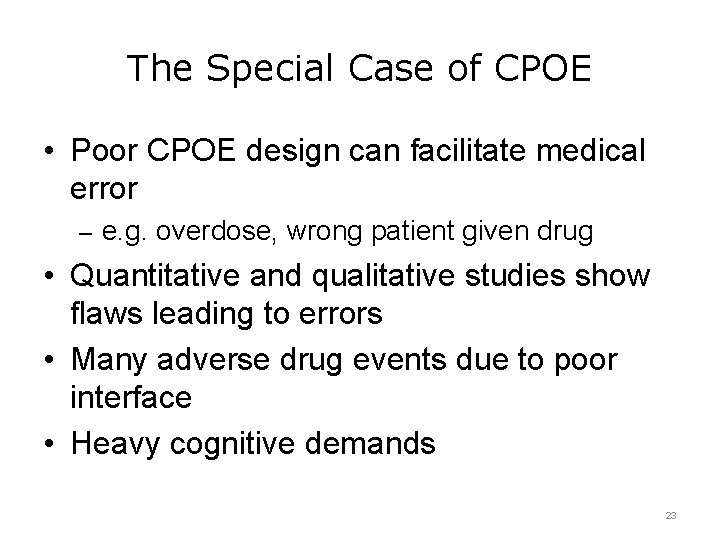

The Special Case of CPOE • Poor CPOE design can facilitate medical error – e.g., overdose, wrong patient given drug • Quantitative and qualitative studies show flaws leading to errors • Many adverse drug events due to poor interface • Heavy cognitive demands 23

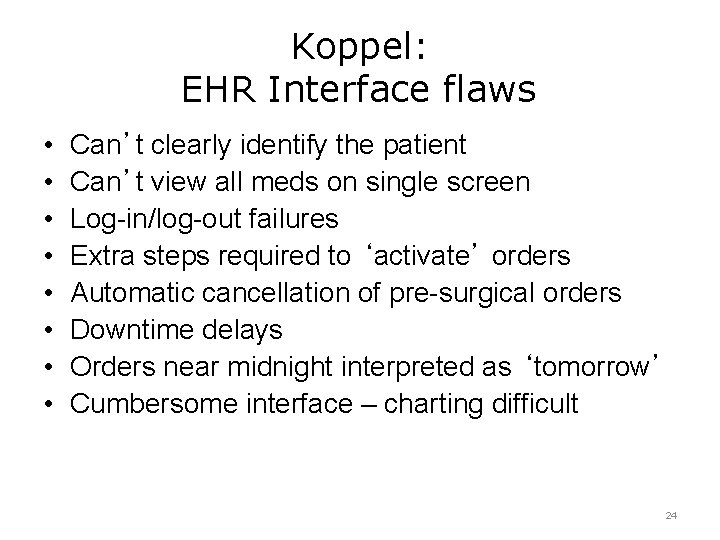

Koppel: EHR Interface Flaws • Can’t clearly identify the patient • Can’t view all meds on a single screen • Log-in/log-out failures • Extra steps required to ‘activate’ orders • Automatic cancellation of pre-surgical orders • Downtime delays • Orders near midnight interpreted as ‘tomorrow’ • Cumbersome interface; charting difficult 24
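One way a "tomorrow" flaw can arise is when a relative day is resolved against the clock at the moment of entry. The Python sketch below is hypothetical and does not describe any particular CPOE product. Two orders entered four minutes apart, on either side of midnight, resolve "tomorrow 08:00" to different dates. Showing the explicit date avoids this ambiguity.

```python
from datetime import datetime, timedelta


def resolve_relative_day(entered_at: datetime, days_ahead: int) -> datetime:
    """Naively resolve 'tomorrow' (days_ahead=1) against the entry time's calendar day.

    Hypothetical helper that reproduces the ambiguous behavior; not a recommendation.
    """
    start_of_day = entered_at.replace(hour=0, minute=0, second=0, microsecond=0)
    return start_of_day + timedelta(days=days_ahead)


def explicit_label(moment: datetime) -> str:
    """Render a timestamp with an explicit date instead of a relative word."""
    return moment.strftime("%Y-%m-%d %H:%M")


before = datetime(2024, 3, 4, 23, 58)  # two minutes before midnight
after = datetime(2024, 3, 5, 0, 2)     # four minutes later
for entered in (before, after):
    start = resolve_relative_day(entered, 1).replace(hour=8)
    print(f"entered {explicit_label(entered)}: 'tomorrow 08:00' = {explicit_label(start)}")
```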

CPOE-Related Usability Problems • Avoid: – Deep navigational structures requiring multiple clicks – Screen elements too close together, leading to selection errors – Same color for data entry fields and other elements – Long drop-down menus requiring scrolling – Documentation templates that differ from the clinician’s cognitive model of ordering – Use of string-sensitive data fields for abbreviations – Excessive alerts, or alerts that do not appear at the appropriate time (when the clinician would look for that information) – Excessive screen density, or too many screens – Obscure hierarchies of orders or order sets 25

CPOE Design Recommendations • View complete medication record on one screen, avoid scrolling/other screens/fragmentation • Avoid subtle differences in layout, forms, labels for large functional differences • Explicit time indications • Map interface to ordering workflow • Maximum 3 layers of screens • Use consistent terms, organize elements into logical groups, separated by space, alignment • Distinguish active and passive elements • Consistent, sparing color 26
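The "maximum 3 layers of screens" recommendation can be checked mechanically against a navigation map. The Python sketch below assumes the screen hierarchy is available as a simple tree and counts the top-level screen as layer 1. The `Screen` class and the screen names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Screen:
    """One screen in a hypothetical CPOE navigation tree."""
    name: str
    children: list["Screen"] = field(default_factory=list)


def too_deep(screen, max_layers=3, depth=1, path=()):
    """Return navigation paths that reach a screen deeper than max_layers.

    The top-level screen counts as layer 1; each violating path is reported once.
    """
    path = path + (screen.name,)
    if depth > max_layers:
        return [" > ".join(path)]
    violations = []
    for child in screen.children:
        violations.extend(too_deep(child, max_layers, depth + 1, path))
    return violations


ROOT = Screen("Orders", [
    Screen("Medications", [
        Screen("Antibiotics", [Screen("Vancomycin dosing")]),
        Screen("Analgesics"),
    ]),
    Screen("Labs"),
])

print(too_deep(ROOT))  # ['Orders > Medications > Antibiotics > Vancomycin dosing']
```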

Technology Effects • Effects with technology – How performance changes when one uses a system • Effects of technology – Longer-term effects of technology on cognition, even when the technology is no longer being used 27

Information Gathering Strategies • Hypothesis-driven strategy: requests for information guided by the clinician’s hypothesis, independent of the screen displays • Screen-driven strategy: guided by the ordered sequence of information on the computer screen • With experience, the novice changed from hypothesis-driven to screen-driven strategies 28
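One way to make the contrast between the two strategies concrete is to score how closely a clinician's sequence of information requests follows the order of items on the screen. The Python sketch below uses a hypothetical "screen-order adherence" measure invented for illustration, not the method used in the cited study. It returns the fraction of consecutive requests that move to a later item on the screen. The screen layout and request sequences are also hypothetical.

```python
def screen_order_adherence(requests, screen_order):
    """Fraction of consecutive requests that move to a later item in screen order.

    1.0 means the requests follow the screen exactly (screen-driven). Lower values
    suggest the clinician jumps around, as a hypothesis-driven search might.
    """
    position = {item: i for i, item in enumerate(screen_order)}
    # Only count steps where both items actually appear on the screen
    steps = [(a, b) for a, b in zip(requests, requests[1:])
             if a in position and b in position]
    if not steps:
        return 0.0
    forward = sum(1 for a, b in steps if position[b] > position[a])
    return forward / len(steps)


SCREEN = ["history", "medications", "allergies", "vitals", "labs"]
screen_driven = ["history", "medications", "allergies", "vitals", "labs"]
hypothesis_driven = ["labs", "history", "vitals", "medications"]

print(screen_order_adherence(screen_driven, SCREEN))  # 1.0
print(screen_order_adherence(hypothesis_driven, SCREEN))
```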

Study Results • Paper-based records – Narrative form, with connected and linked text and sentences • EMRs – More info on patient’s past medical history, lifestyle, and primary diagnosis – Information entered in point form, not linked in narrative – Followed structure and sequence of system – Time course not adequately captured • Post-EMR paper-based record – Closely resembled EMR in structure and format – No connecting narrative – Limited info on time course 29

Web 2.0 and Modern Approaches • ‘Web 2.0’ is a change in internet approaches – Give the user more control • Both philosophical approaches & technical approaches • Social networking applications – e.g., Facebook • Crowdsourcing: – Obtain information or judgment from a large group of users 30

Web 2.0 and EHRs • Facilitate user control, a better user experience, new forms of interactive information display, and social networking • Address problems of clinician collaboration, optimal design of EHRs, flexibility to meet rapid change, and other problems 31

Electronic Health Records and Usability Summary – Lecture c • EHR usability is a complex area in which we do not yet have standards, but best practices are being intensively studied • Usability is one of the most important factors affecting adoption, satisfaction, and optimal use of EHRs • Usability should be an important factor in selection and deployment of a system • Much research continues to be done in this area; it is important to keep abreast of developments 32

Electronic Health Records and Usability References – Lecture c • 1. Karsh BT. Health IT design and usability: myths and realities. AHRQ/NIST EHR Usability Conference; 2010; Washington, DC. • 2. Koppel R, Metlay JP, Cohen A, Abaluck B, et al. Role of computerized physician order entry systems in facilitating medication errors. JAMA. 2005;293(10):1197-1203. • 3. Patel V, Kushniruk A. Cognitive and usability engineering methods for the evaluation of clinical information systems. Journal of Biomedical Informatics. 2004;37(1):56-76. • 4. Patel VL, Kushniruk AW, Yang S, Yale JF. Impact of a computerized patient record system on medical data collection, organization and reasoning. Journal of the American Medical Informatics Association. 2000;7(6):569-85. • 5. Shabot M. Ten commandments for implementing clinical information systems. Proc (Bayl Univ Med Cent). 2004;17(3):265-9. • 6. Staggers N, Mills ME. Nurse-computer interaction: staff performance outcomes. Nursing Research. 1994;43(3):144-50. • 7. Zhu X, Gold SA, Lai A, Hripcsak G, Cimino J. Using timeline displays to improve medication reconciliation. 2009 International Conference on eHealth, Telemedicine, and Social Medicine; 2009. • 8. Belden J. EHR usability: an illustrated guide. AHRQ/NIST EHR Usability Conference; 2010; Washington, DC: National Institute of Standards and Technology. • 9. Friedman C. Usability in health IT: technical strategy, research, and implementation: summary workshop. National Institute of Standards and Technology, U.S. Department of Commerce; 2010. Retrieved September 4, 2012, from http://www.nist.gov/itl/hit/upload/U-HIT_Workshop_Report_Publication_Version.pdf • 10. Khajouei R, Jaspers MWM. CPOE system design aspects and their qualitative effect on usability. eHealth Beyond the Horizon. 33

Electronic Health Records and Usability References – Lecture c (Cont’d – 1) • Images • Slide 11: Redish JG, Lowry SZ, Locke G, Gallagher PD, Friedman C. Usability in health IT: technical strategy, research, and implementation: summary workshop. National Institute of Standards and Technology, U.S. Department of Commerce; 2010. Retrieved September 4, 2012, from http://www.nist.gov/itl/hit/upload/U-HIT_Workshop_Report_Publication_Version.pdf • Slide 21: Senathirajah Y, Bakken S. A user-configurable EHR using Web 2.0 approaches. AMIA Spring 2008; Phoenix, AZ: AMIA. 34

