Update Propagation with Variable Connectivity

Prasun Dewan, Department of Computer Science, University of North Carolina, dewan@unc.edu

Evolution of wireless networks

- Early days: disconnected computing (Coda '91)
  - Laptops plugged in at home or office
  - No wireless network
- Later: weakly connected computing (Coda '95)
  - Assume a wireless network is available, but
    - Performance may be poor
    - Cost may be high
    - Energy consumption may be high
    - Intermittent disconnectivity causes involuntary breaks

Issues raised by Coda '91

- Considers strong connection and disconnection
  - Weak connection? Hoarding, emulation, or something else?

Design Principles for Weak Connectivity

- Don't make strongly connected clients suffer
  - E.g., waiting for a weakly connected client to give up a token
- Weak connection should be better than disconnection
- Do communication tasks in the background if possible
  - User patience threshold
- In weakly connected mode, communicate the more important information
  - What is important?
  - When in doubt, ask the user
- Should be able to work without user advice

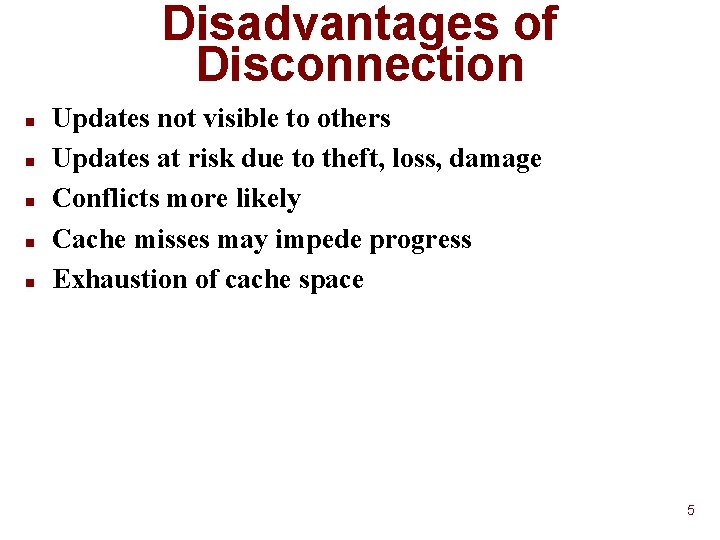

Disadvantages of Disconnection

- Updates not visible to others
- Updates at risk due to theft, loss, damage
- Conflicts more likely
- Cache misses may impede progress
- Exhaustion of cache space

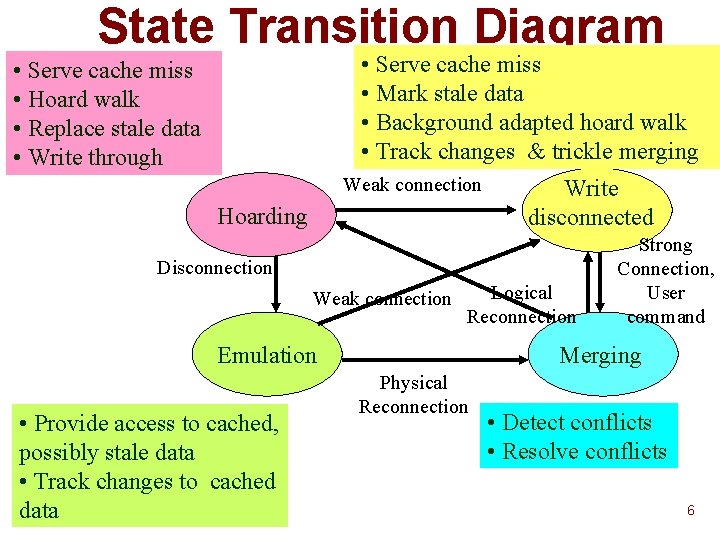

State Transition Diagram

- Hoarding
  - Serve cache miss
  - Hoard walk
  - Replace stale data
  - Write through
- Write disconnected
  - Serve cache miss
  - Mark stale data
  - Background adapted hoard walk
  - Track changes & trickle merging
- Emulation
  - Provide access to cached, possibly stale data
  - Track changes to cached data
- Merging
  - Detect conflicts
  - Resolve conflicts
- Transition labels: weak connection; disconnection; logical reconnection; physical reconnection; strong connection, user command

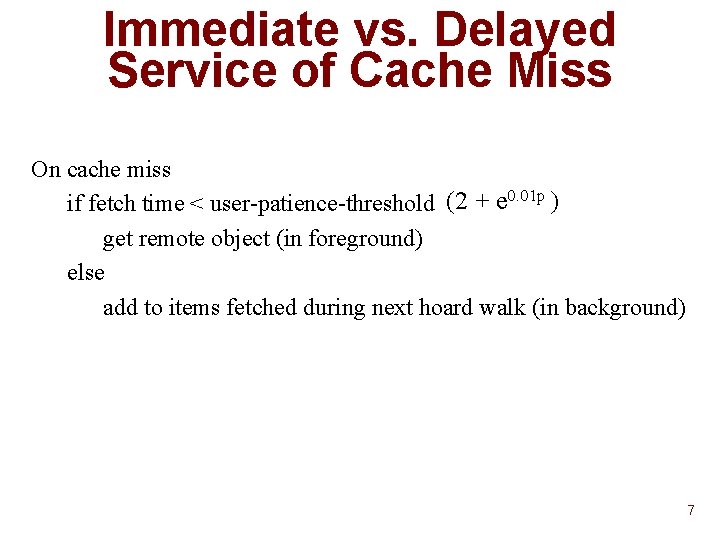

Immediate vs. Delayed Service of Cache Miss

- On cache miss:
  - If fetch time < user patience threshold ($2 + e^{0.01p}$): get remote object (in foreground)
  - Else: add to the items fetched during the next hoard walk (in background)
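The cache-miss rule above can be sketched in a few lines. This is only an illustration under stated assumptions: the slide does not define `p` (it is treated here as a per-object priority), the threshold is taken to be in seconds, and the fetch time is estimated as size divided by bandwidth. The function names are hypothetical.

```python
import math


def patience_threshold(p: float) -> float:
    """User patience threshold from the slide: 2 + e^(0.01 p).

    Assumption: p is a per-object priority and the result is in seconds.
    """
    return 2.0 + math.exp(0.01 * p)


def service_cache_miss(size_bytes: int, bandwidth_bps: float, p: float) -> str:
    """Decide between a foreground fetch and deferral to the next hoard walk.

    Assumption: fetch time is estimated as object size / current bandwidth.
    """
    fetch_time_s = size_bytes * 8 / bandwidth_bps
    if fetch_time_s < patience_threshold(p):
        return "fetch_now"            # get remote object in foreground
    return "defer_to_hoard_walk"      # fetched in background later
```

With this shape, a higher `p` raises the threshold exponentially, so the same slow fetch that is deferred for a low-priority object is served immediately for a high-priority one.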

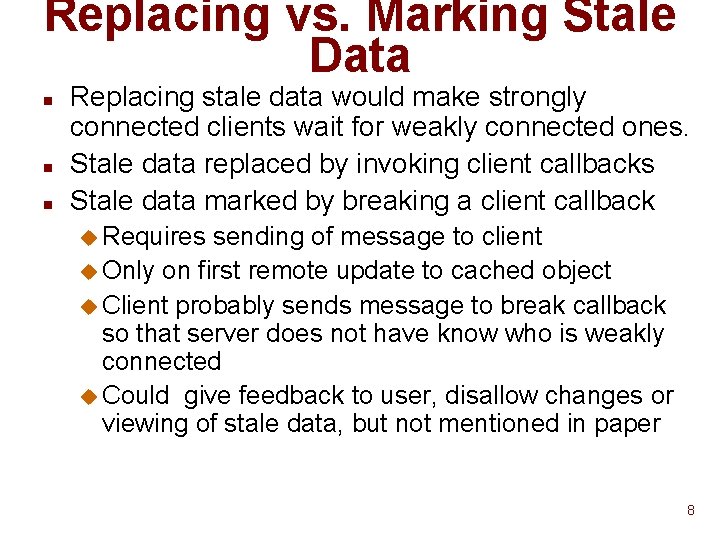

Replacing vs. Marking Stale Data

- Replacing stale data would make strongly connected clients wait for weakly connected ones
- Stale data is replaced by invoking client callbacks
- Stale data is marked by breaking a client callback
  - Requires sending a message to the client
  - Only on the first remote update to a cached object
  - Client probably sends a message to break the callback, so that the server does not have to know who is weakly connected
  - Could give feedback to the user, or disallow changes to or viewing of stale data, but this is not mentioned in the paper

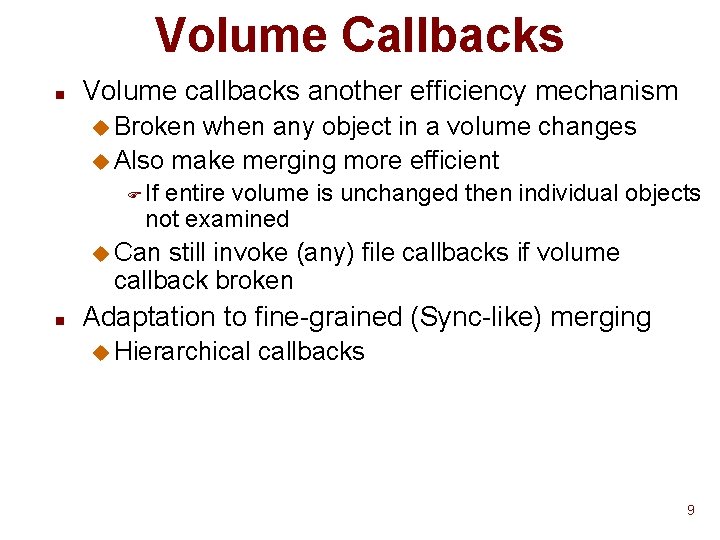

Volume Callbacks

- Volume callbacks are another efficiency mechanism
  - Broken when any object in a volume changes
  - Also make merging more efficient
    - If an entire volume is unchanged, individual objects are not examined
  - Can still invoke (any) file callbacks if the volume callback is broken
- Adaptation to fine-grained (Sync-like) merging
  - Hierarchical callbacks

Hoard Walk

- Done every h time units (10 minutes)
  - or on user request
- Not the same as a connected hoard walk
- Stale small files are automatically included
- User has the option to mark large files to be fetched

Trickle Merging

- Do not propagate local changes immediately
  - Reduces number of messages sent
  - Reduces size of data sent
    - Buffering changes allows log compression
    - Important because the whole file is sent
    - What if small (Sync-like) changes are sent?

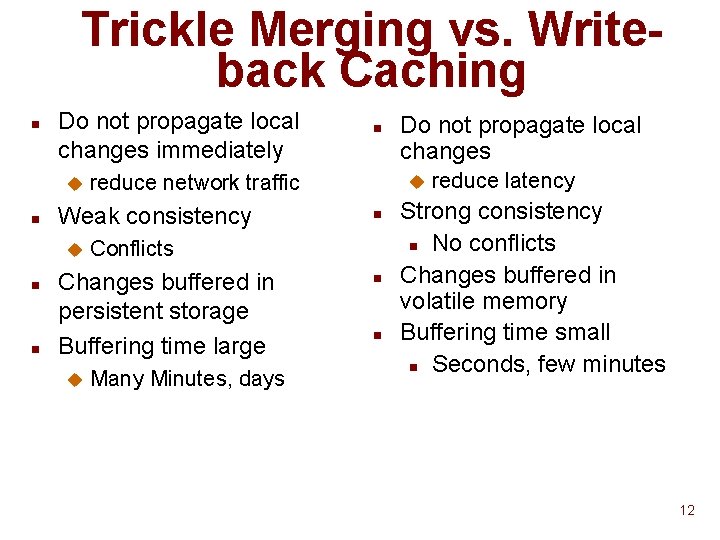

Trickle Merging vs. Writeback Caching

| | Trickle merging | Writeback caching |
|---|---|---|
| Why local changes are not propagated immediately | Reduce network traffic | Reduce latency |
| Consistency | Weak (conflicts) | Strong (no conflicts) |
| Where changes are buffered | Persistent storage | Volatile memory |
| Buffering time | Large (many minutes, days) | Small (seconds, few minutes) |

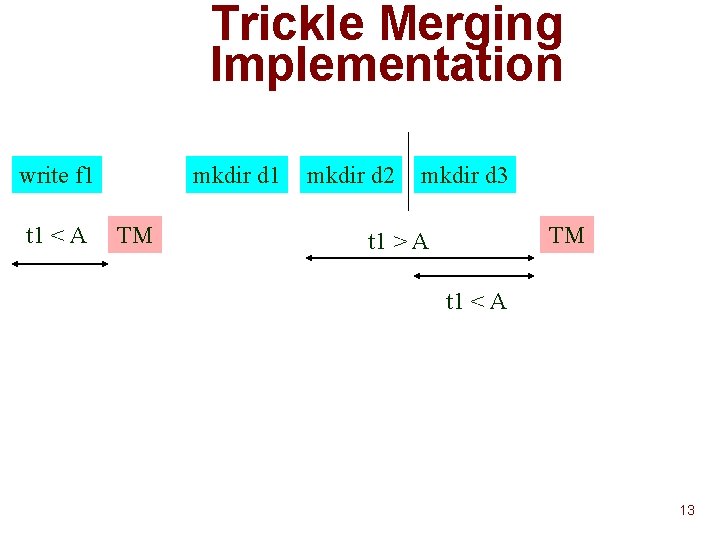

Trickle Merging Implementation

(Diagram labels: log records write f1, mkdir d1, mkdir d2, mkdir d3; TM markers; age annotations t1 > A and t1 < A.)

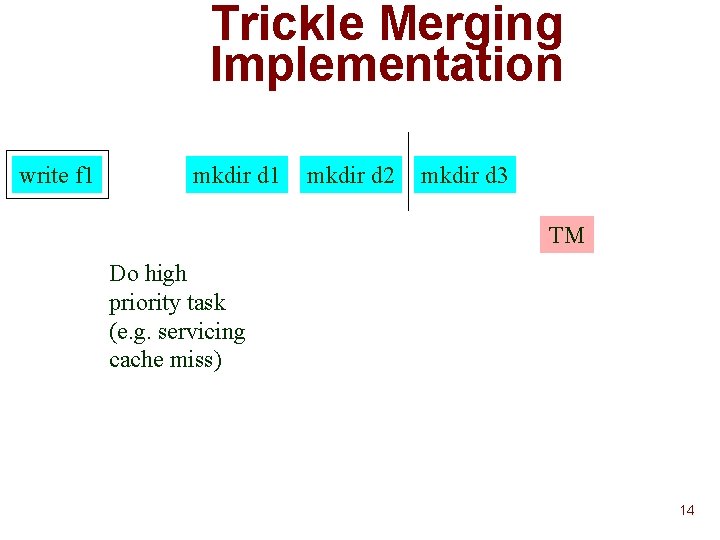

Trickle Merging Implementation

(Diagram labels: log records write f1, mkdir d2, mkdir d3; TM marker.)

- Do high-priority task (e.g., servicing a cache miss)

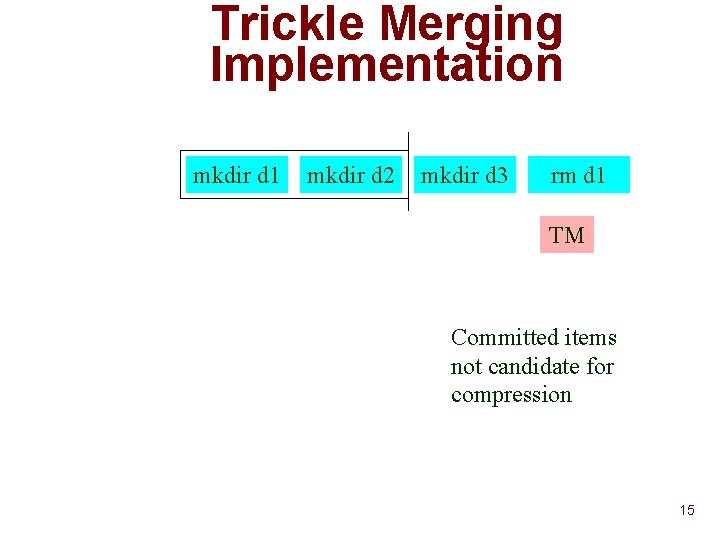

Trickle Merging Implementation

(Diagram labels: log records mkdir d1, mkdir d2, mkdir d3, rm d1; TM marker.)

- Committed items are not candidates for compression

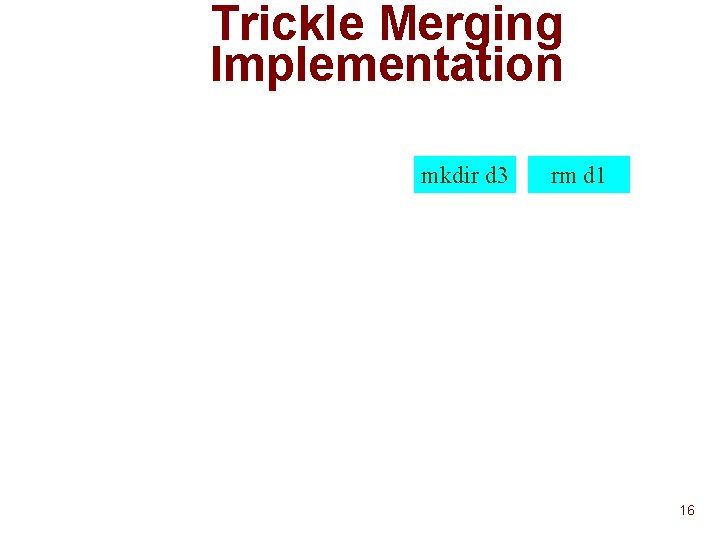

Trickle Merging Implementation

(Diagram labels: log records mkdir d3, rm d1.)
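One way to read the last two diagrams is that `rm d1` cannot cancel `mkdir d1` once `mkdir d1` has been committed, so the remaining log is `mkdir d3, rm d1`. The sketch below illustrates that reading only. It is a deliberately simplified compressor that cancels nothing but `mkdir`/`rm` pairs on the same name; the record format and the `committed` count are assumptions, not Coda's actual log structure.

```python
from typing import List, Tuple

Record = Tuple[str, str]  # (operation, target), e.g. ("mkdir", "d1")


def compress(log: List[Record], committed: int) -> List[Record]:
    """Return the log that remains after the first `committed` records are sent.

    Only uncommitted records are candidates for compression: an "rm" cancels
    an earlier uncommitted "mkdir" of the same target. Committed records have
    already been sent, so a later "rm" must itself be kept and sent.
    """
    pending: List[Record] = []
    for op, target in log[committed:]:
        if op == "rm" and ("mkdir", target) in pending:
            pending.remove(("mkdir", target))  # the pair cancels out
        else:
            pending.append((op, target))
    return pending


log = [("mkdir", "d1"), ("mkdir", "d2"), ("mkdir", "d3"), ("rm", "d1")]
remaining = compress(log, committed=2)    # d1 and d2 already committed
uncommitted_case = compress(log, committed=0)
```

Here `remaining` keeps both `mkdir d3` and `rm d1`, while in `uncommitted_case` the `mkdir d1`/`rm d1` pair disappears entirely, which is the saving that letting changes age is meant to make possible.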

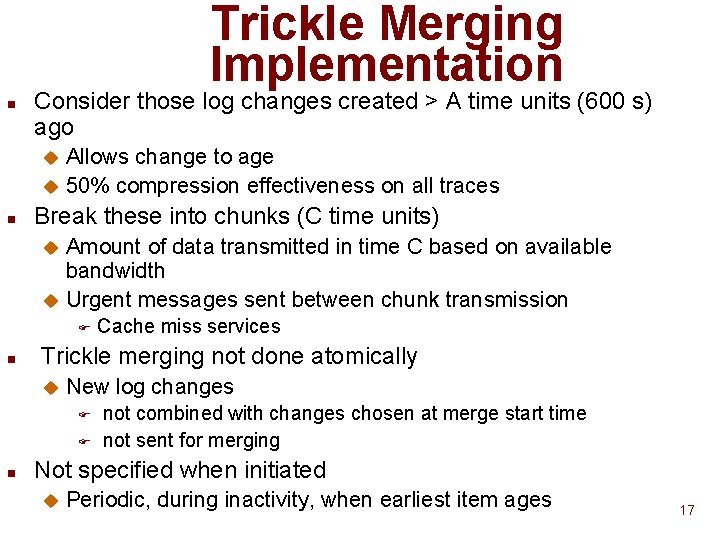

Trickle Merging Implementation

- Consider those log changes created more than A time units (600 s) ago
  - Allows changes to age
  - 50% compression effectiveness on all traces
- Break these into chunks (C time units)
  - Amount of data transmitted in time C, based on available bandwidth
  - Urgent messages sent between chunk transmissions
    - Cache miss services
- Trickle merging not done atomically
  - New log changes
    - not combined with changes chosen at merge start time
    - not sent for merging
- Not specified when initiated
  - Periodic, during inactivity, when earliest item ages
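The selection and chunking steps above can be sketched as follows. This is a minimal illustration, not Coda's implementation: the `LogRecord` type, the byte-based budget, and the greedy packing are assumptions; only the aging window A = 600 s and the idea of sizing a chunk by what the current bandwidth can carry in time C come from the slide.

```python
from dataclasses import dataclass
from typing import List

AGING_WINDOW_S = 600.0  # A: records younger than this are left to age


@dataclass
class LogRecord:
    created_at: float  # seconds
    size_bytes: int


def aged_records(log: List[LogRecord], now: float,
                 aging_window: float = AGING_WINDOW_S) -> List[LogRecord]:
    """Records created more than `aging_window` seconds ago."""
    return [r for r in log if now - r.created_at > aging_window]


def chunk_budget_bytes(bandwidth_bytes_per_s: float, chunk_time_s: float) -> int:
    """Data that fits in one chunk of duration C at the current bandwidth."""
    return int(bandwidth_bytes_per_s * chunk_time_s)


def split_into_chunks(records: List[LogRecord], budget: int) -> List[List[LogRecord]]:
    """Greedily pack records into chunks of at most `budget` bytes.

    A record larger than the budget gets a chunk of its own. Urgent work
    (e.g. a cache-miss fetch) could be serviced between chunks.
    """
    chunks: List[List[LogRecord]] = []
    current: List[LogRecord] = []
    used = 0
    for r in records:
        if current and used + r.size_bytes > budget:
            chunks.append(current)
            current, used = [], 0
        current.append(r)
        used += r.size_bytes
    if current:
        chunks.append(current)
    return chunks
```

Because the set of aged records is fixed when the merge starts, records appended to the log afterwards are simply not in `records`, which matches the non-atomic behavior described above.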

Merging

- Times chosen to get the desired level of compression
- Desired level of consistency?

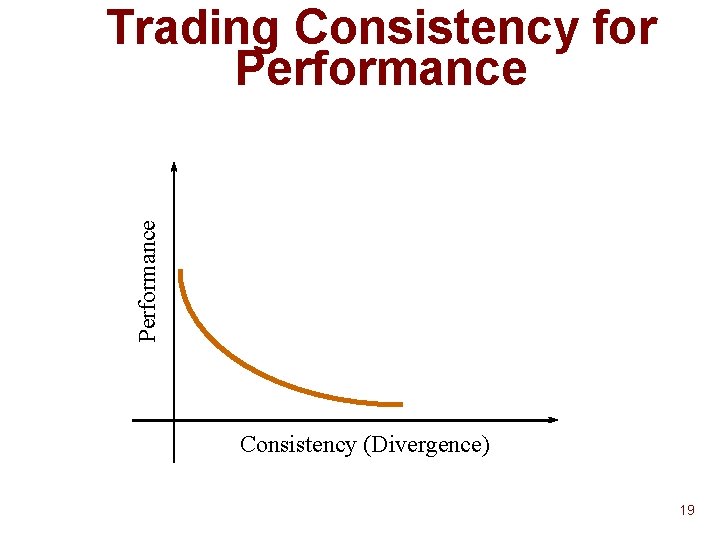

Trading Consistency for Performance

(Graph with axes labeled Performance and Consistency (Divergence).)

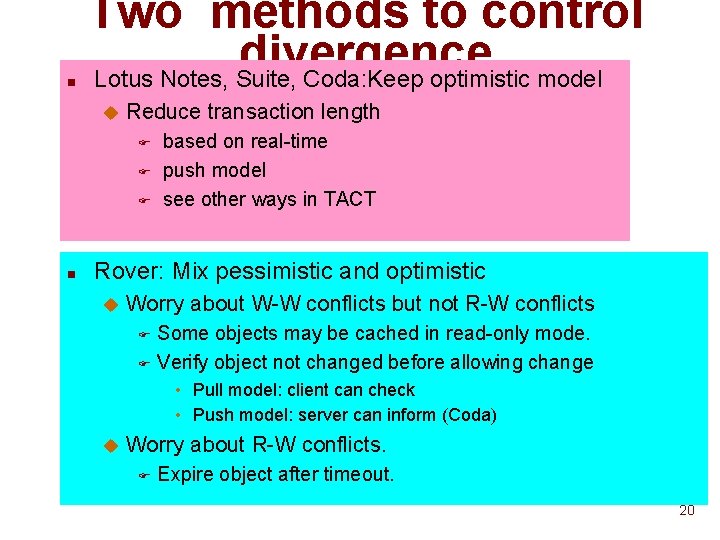

Two methods to control divergence

- Lotus Notes, Suite, Coda: keep optimistic model
  - Reduce transaction length
    - based on real time
    - push model
    - see other ways in TACT
- Rover: mix pessimistic and optimistic
  - Worry about W-W conflicts but not R-W conflicts
    - Some objects may be cached in read-only mode
    - Verify that the object has not changed before allowing a change
      - Pull model: client can check
      - Push model: server can inform (Coda)
  - Worry about R-W conflicts
    - Expire object after timeout

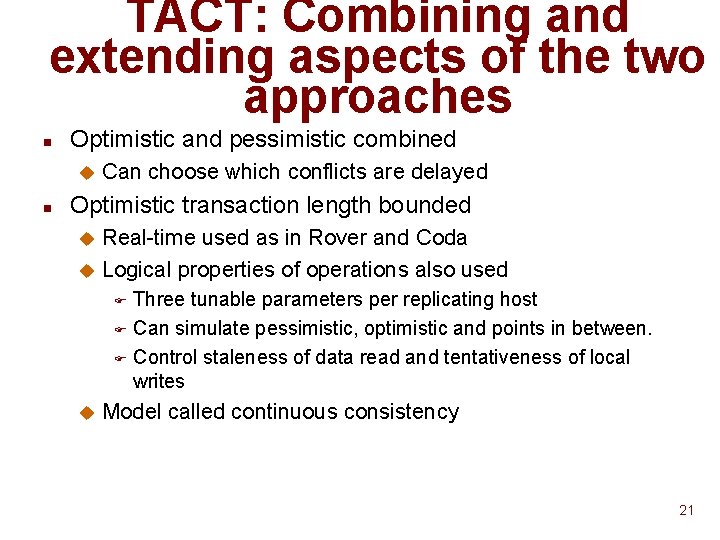

TACT: Combining and extending aspects of the two approaches

- Optimistic and pessimistic combined
  - Can choose which conflicts are delayed
- Optimistic transaction length bounded
  - Real time used, as in Rover and Coda
  - Logical properties of operations also used
  - Three tunable parameters per replicating host
    - Can simulate pessimistic, optimistic, and points in between
    - Control staleness of data read and tentativeness of local writes
  - Model called continuous consistency

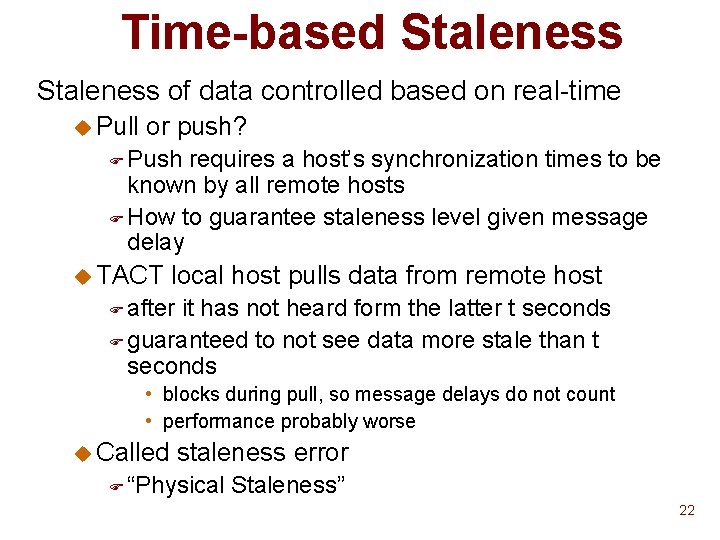

Time-based

- Staleness of data controlled based on real time
  - Pull or push?
    - Push requires a host's synchronization times to be known by all remote hosts
    - How to guarantee staleness level given message delay?
  - TACT local host pulls data from remote host
    - after it has not heard from the latter for t seconds
    - guaranteed not to see data more stale than t seconds
      - blocks during pull, so message delays do not count
      - performance probably worse
  - Called staleness error
    - "Physical staleness"
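The pull rule above amounts to a per-host timer check. The sketch below is an illustration under assumptions: the `last_heard` map and the function name are hypothetical, and the comparison is taken as "at least t seconds" so that t = 0 forces a pull from every host on every check.

```python
from typing import Dict, List


def hosts_to_pull(last_heard: Dict[str, float], now: float, t: float) -> List[str]:
    """Remote hosts the local host has not heard from for at least t seconds.

    The local host would block while pulling from these hosts before serving
    a read, so the data it returns is never more than t seconds stale.
    """
    return sorted(h for h, ts in last_heard.items() if now - ts >= t)
```

For example, with `last_heard = {"B": 95.0, "C": 80.0}` and `now = 100.0`, a bound of `t = 10` selects only host C, while `t = float("inf")` never pulls.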

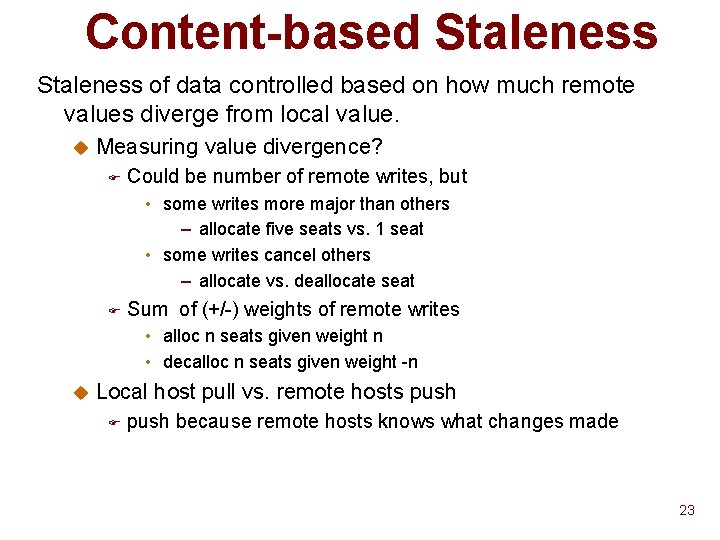

Content-based

- Staleness of data controlled based on how much remote values diverge from the local value
  - Measuring value divergence?
    - Could be the number of remote writes, but
      - some writes are more major than others: allocate five seats vs. one seat
      - some writes cancel others: allocate vs. deallocate seat
    - Sum of (+/-) weights of remote writes
      - alloc n seats given weight n
      - dealloc n seats given weight -n
  - Local host pull vs. remote hosts push
    - push, because the remote host knows what changes were made

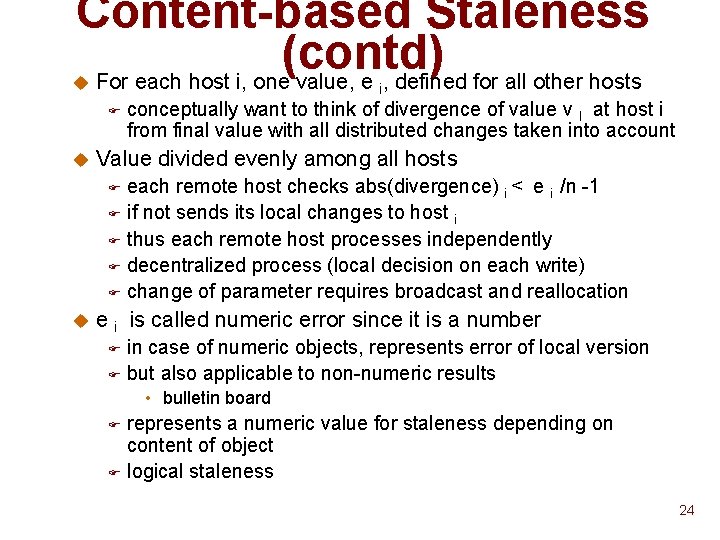

Content-based Staleness (contd)

- For each host i, one value, $e_i$, defined for all other hosts
  - conceptually, want to think of the divergence of value $v_i$ at host i from the final value with all distributed changes taken into account
- Value divided evenly among all hosts
  - each remote host checks $|\text{divergence}_i| < e_i/(n-1)$
  - if not, it sends its local changes to host i
  - thus each remote host processes independently
  - decentralized process (local decision on each write)
  - change of parameter requires broadcast and reallocation
- $e_i$ is called numeric error
  - since it is a number
  - in the case of numeric objects, represents the error of the local version
  - but also applicable to non-numeric results
    - bulletin board
  - represents a numeric value for staleness depending on the content of the object
  - logical staleness
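The per-write check each remote host makes can be sketched as below. This is an illustration of the rule stated above, not TACT's code: the function name and the list-of-weights representation are assumptions, and `n` is taken to be the total number of hosts, so each of the `n - 1` remote hosts gets an equal share of host i's bound.

```python
from typing import List


def must_push(unseen_weights: List[float], e_i: float, n: int) -> bool:
    """Should this remote host push its writes to host i?

    `unseen_weights` are the signed weights of this host's writes that host i
    has not yet seen (e.g. +k for allocating k seats, -k for deallocating).
    The host stays silent only while |sum of weights| < e_i / (n - 1).
    """
    if not unseen_weights:
        return False               # nothing to send
    share = e_i / (n - 1)          # this host's slice of host i's bound
    return not abs(sum(unseen_weights)) < share
```

Since the decision uses only the host's own unseen writes and its fixed share, no coordination is needed per write; the cost shows up only when `e_i` changes and the shares have to be redistributed.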

Tentativeness of Local Writes

- Local writes are tentative until ordered with respect to remote writes
- Parameter determines how many local writes are buffered until the resolution process occurs
  - a subset of remote peers may be sent the writes
    - primary server
    - quorum
- Called order error
  - assumes the merge process simply orders the writes
    - other approaches possible
      - taking avg, min
      - discarding
      - order not important for commuting operations
  - In the case of list insertions, causes insertions to be out of order
    - bulletin board example

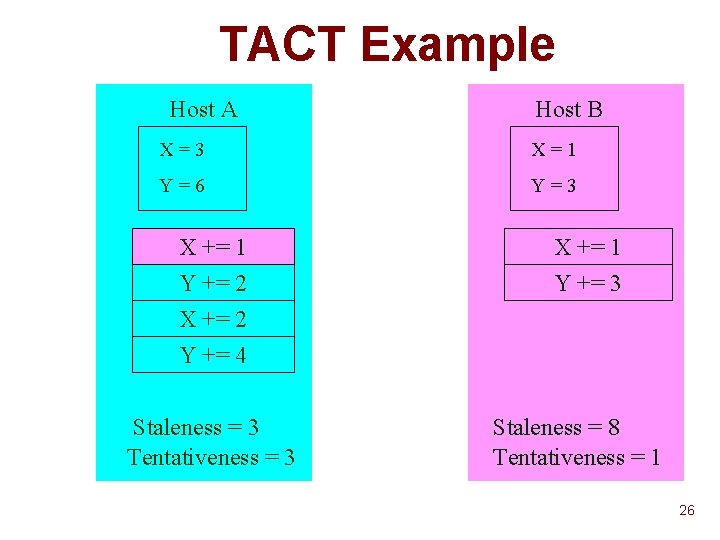

TACT Example

| | Host A | Host B |
|---|---|---|
| X | 3 | 1 |
| Y | 6 | 3 |
| Staleness | 3 | 8 |
| Tentativeness | 3 | 1 |

Writes shown: X += 1, Y += 2, X += 2, Y += 4, Y += 3
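The numbers in this example can be reproduced under one plausible reading of the figure, which the flattened slide does not state explicitly: X and Y start at 0, `X += 1` is committed and seen by both hosts, Host A has issued `Y += 2`, `X += 2`, `Y += 4` as tentative writes, and Host B has issued `Y += 3`. The sketch below encodes that assumed reading, using each write's increment as its weight.

```python
# Assumed reading of the figure (not stated on the slide):
committed = [("X", 1)]                                   # seen by both hosts
local = {
    "A": [("Y", 2), ("X", 2), ("Y", 4)],                 # A's tentative writes
    "B": [("Y", 3)],                                     # B's tentative writes
}


def view(host: str) -> dict:
    """Values a host sees: committed writes plus its own tentative writes."""
    vals = {"X": 0, "Y": 0}
    for var, weight in committed + local[host]:
        vals[var] += weight
    return vals


def staleness(host: str) -> int:
    """Sum of weights of remote writes this host has not seen."""
    return abs(sum(w for other, writes in local.items()
                   if other != host for _, w in writes))


def tentativeness(host: str) -> int:
    """Number of local writes not yet ordered against remote writes."""
    return len(local[host])
```

Under these assumptions Host A misses only B's write of weight 3, while Host B misses A's three writes with total weight 2 + 2 + 4 = 8, which agrees with the staleness and tentativeness values in the example.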

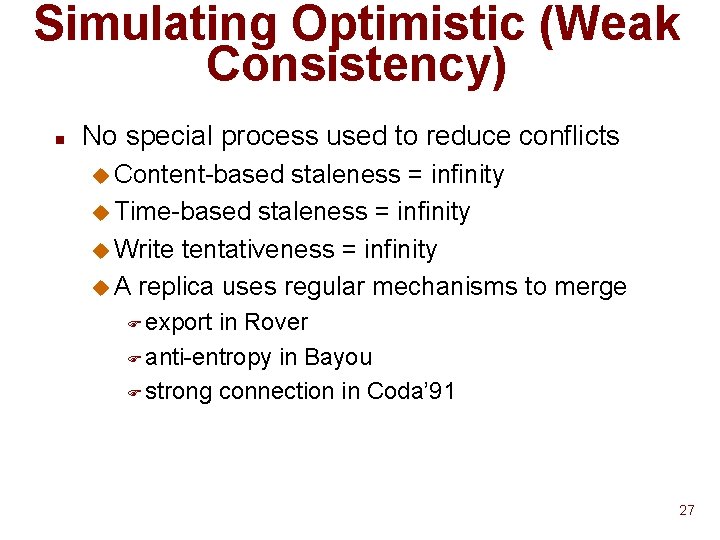

Simulating Optimistic (Weak Consistency)

- No special process used to reduce conflicts
  - Content-based staleness = infinity
  - Time-based staleness = infinity
  - Write tentativeness = infinity
  - A replica uses regular mechanisms to merge
    - export in Rover
    - anti-entropy in Bayou
    - strong connection in Coda '91

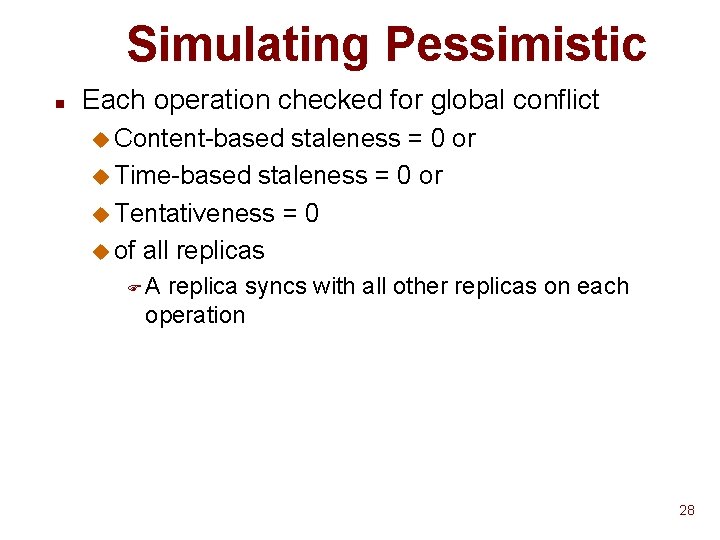

Simulating Pessimistic

- Each operation checked for global conflict
  - Content-based staleness = 0, or
  - Time-based staleness = 0, or
  - Tentativeness = 0
  - of all replicas
    - A replica syncs with all other replicas on each operation

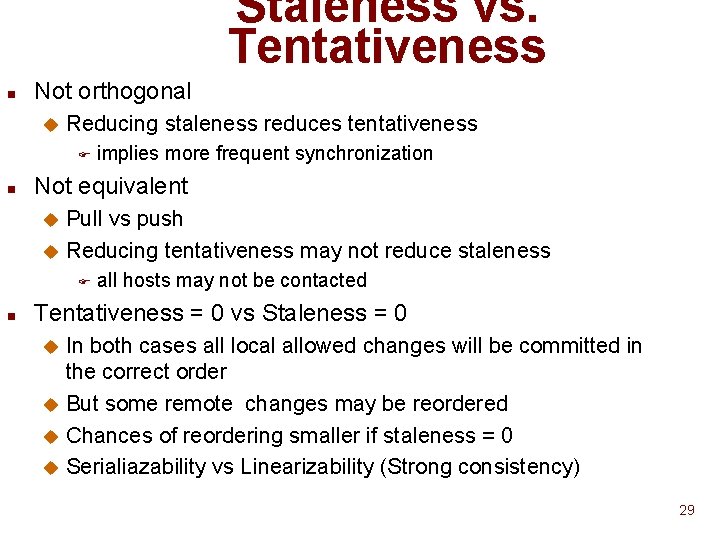

Staleness vs. Tentativeness

- Not orthogonal
  - Reducing staleness reduces tentativeness
    - implies more frequent synchronization
- Not equivalent
  - Pull vs. push
  - Reducing tentativeness may not reduce staleness
    - all hosts may not be contacted
- Tentativeness = 0 vs. staleness = 0
  - In both cases, all allowed local changes will be committed in the correct order
  - But some remote changes may be reordered
  - Chances of reordering smaller if staleness = 0
  - Serializability vs. linearizability (strong consistency)

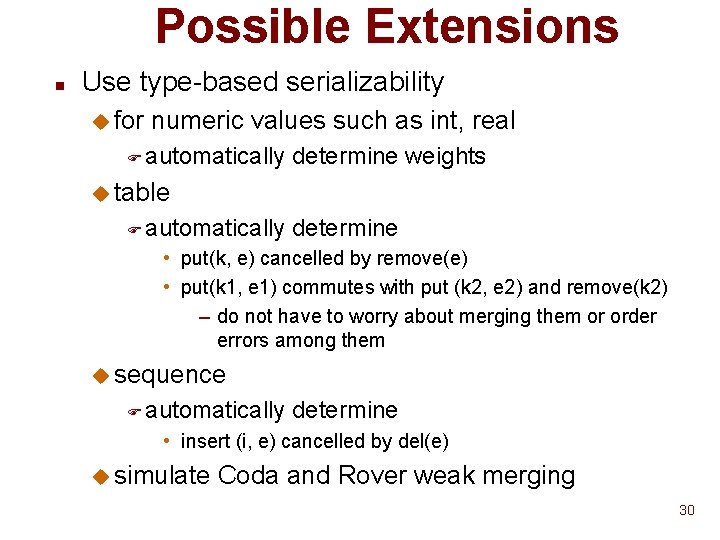

Possible Extensions

- Use type-based serializability
  - for numeric values such as int, real
    - automatically determine weights
  - table
    - automatically determine
      - put(k, e) cancelled by remove(e)
      - put(k1, e1) commutes with put(k2, e2) and remove(k2)
        - do not have to worry about merging them or order errors among them
  - sequence
    - automatically determine
      - insert(i, e) cancelled by del(e)
  - simulate Coda and Rover weak merging
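For the table case, a type-based rule of this kind could be as simple as comparing keys. The sketch below is hypothetical: it assumes operations are tuples whose second element is the key, and it treats any two operations on different keys as commuting, which is the situation the put(k1, e1) / put(k2, e2) / remove(k2) bullet describes when k1 and k2 differ.

```python
from typing import Tuple

Op = Tuple  # ("put", key, value) or ("remove", key); format is an assumption


def commutes(op1: Op, op2: Op) -> bool:
    """Table operations on different keys commute, so their relative
    order need not count toward order error."""
    return op1[1] != op2[1]


def order_sensitive_pairs(ops: list) -> int:
    """Count pairs of operations whose relative order actually matters."""
    return sum(1 for i in range(len(ops)) for j in range(i + 1, len(ops))
               if not commutes(ops[i], ops[j]))
```

A system using such a rule could charge tentativeness only for the order-sensitive pairs instead of for every buffered write.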

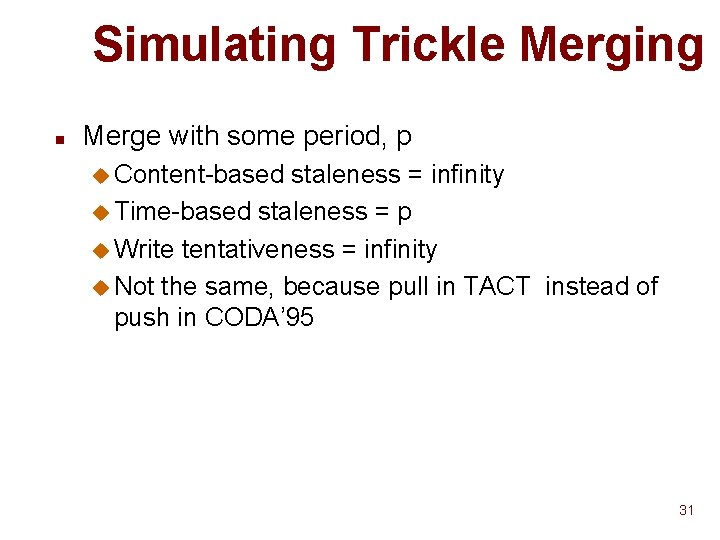

Simulating Trickle Merging

- Merge with some period, p
  - Content-based staleness = infinity
  - Time-based staleness = p
  - Write tentativeness = infinity
  - Not the same, because of pull in TACT instead of push in Coda '95
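The parameter settings used to simulate optimistic replication, pessimistic replication, and trickle merging can be collected as presets. The `Bounds` type and its field names below are illustrative assumptions, not TACT's API; the values are the ones given on the slides.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Bounds:
    content_staleness: float   # numeric error bound
    time_staleness_s: float    # staleness bound, in seconds
    tentativeness: float       # order error bound


# Optimistic (weak consistency): nothing is bounded.
OPTIMISTIC = Bounds(math.inf, math.inf, math.inf)

# Pessimistic: any one bound set to 0 on all replicas is enough;
# time-based staleness = 0 is chosen here as one example.
PESSIMISTIC = Bounds(math.inf, 0.0, math.inf)


def trickle_merging(period_s: float) -> Bounds:
    """Approximate trickle merging: only time-based staleness is bounded."""
    return Bounds(math.inf, period_s, math.inf)


def is_pessimistic(b: Bounds) -> bool:
    """True if at least one bound is 0, forcing a sync on each operation."""
    return 0 in (b.content_staleness, b.time_staleness_s, b.tentativeness)
```

As noted above, the trickle-merging preset is only an approximation, since TACT enforces the time bound by pulling whereas Coda '95 pushes changes.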
