Unix Scheduling Multilevel feedback queues 128 priority queues

Unix Scheduling
• Multilevel feedback queues
  – 128 priority queues (values 0-127)
  – Round robin within each priority queue
• At every scheduling event, the scheduler picks the non-empty queue with the lowest priority value (i.e. the highest priority) and runs its jobs round-robin
• Scheduling events (when to schedule):
  – Clock interrupt
  – Process makes a system call
  – Process gives up the CPU, e.g. to do I/O
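The queue selection described on this slide can be sketched in a few lines of Python. This is a minimal illustration, not the actual Unix implementation: the class and method names (`MultiQueueScheduler`, `add`, `remove`, `pick_next`) are hypothetical, and it assumes the traditional Unix convention that a lower priority value means a higher priority.

```python
from collections import deque

NUM_QUEUES = 128  # priority values 0-127, as on the slide


class MultiQueueScheduler:
    """Hypothetical sketch: one round-robin queue per priority value."""

    def __init__(self):
        self.queues = [deque() for _ in range(NUM_QUEUES)]

    def add(self, job, priority):
        """Make a job runnable at the given priority value."""
        self.queues[priority].append(job)

    def remove(self, job, priority):
        """Take a job out of its queue (e.g. it blocked or exited)."""
        self.queues[priority].remove(job)

    def pick_next(self):
        """Called at each scheduling event.

        Scans from priority value 0 upward (assumption: lower value means
        higher priority) and returns (job, priority) from the first
        non-empty queue, or None if nothing is runnable.
        """
        for priority, queue in enumerate(self.queues):
            if queue:
                job = queue.popleft()
                queue.append(job)  # round robin: go to the back of the line
                return job, priority
        return None


if __name__ == "__main__":
    sched = MultiQueueScheduler()
    sched.add("editor", 50)
    sched.add("compiler", 50)
    sched.add("disk-waiter", 20)
    print(sched.pick_next())  # the job in queue 20 runs before queue 50
```

Jobs in queue 50 only run once queue 20 is empty, and then they alternate in round-robin order.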

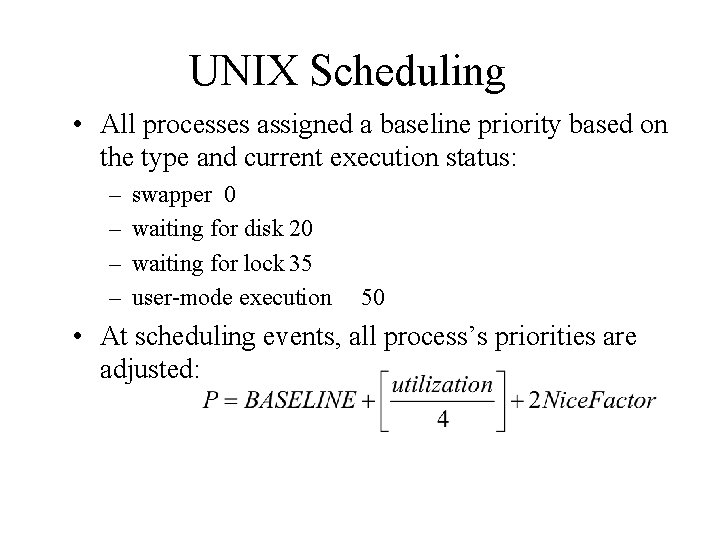

UNIX Scheduling
• All processes are assigned a baseline priority based on their type and current execution status:
  – swapper: 0
  – waiting for disk: 20
  – waiting for lock: 35
  – user-mode execution: 50
• At scheduling events, all processes' priorities are adjusted:

Multiprocessor Scheduling
• Targeted applications: single-process jobs, multi-threaded jobs.
• Multiprogrammed vs. dedicated: are several applications running concurrently?
• Granularity of parallelism.
• Scheduling policy?

Granularity of Parallelism
• Independent parallelism: independent processes. No need to synchronize.
• Coarse-grained parallelism: relatively independent processes with occasional synchronization.
• Medium-grained parallelism: e.g. multiple threads that synchronize frequently.
• Fine-grained parallelism: synchronization every few instructions.

Scheduling Challenges in Multiprogrammed Environments
• Why multiprogramming?
  – Improve system utilization
• Applications with independent tasks or coarse-grained parallelism
  – The regular OS scheduling policy is sufficient.
• Applications with medium-grained parallelism
  – A special scheduling policy is needed, e.g. one that considers a set of cooperating threads collectively.

Assigning Processes to Processors
• Master/slave approach: single scheduler. One processor is dedicated to processor assignment.
  – Good: centralized decision making
  – But: single point of failure, not scalable
• Peer assignment: the OS can run on any processor. Each processor does self-scheduling.

Scheduler Organization in Peer Assignment
• Single global ready queue with locks. CPUs schedule jobs from this queue.
  – Use FCFS or other scheduling policies.
  – Limited scalability, but reasonable.
• One ready queue per CPU. Each CPU schedules its own processes.
  – Static process assignment: cache friendly (affinity), but difficult to balance load.
  – Dynamic assignment with job stealing.
    • May need process migration.
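The per-CPU queue organization with job stealing can be illustrated with a small single-threaded sketch. The names (`PerCpuQueues`, `assign`, `next_job`) are hypothetical, and the policy of stealing from the tail of the longest queue is an assumption chosen for illustration; a real kernel would also need per-queue locking and a migration mechanism.

```python
from collections import deque


class PerCpuQueues:
    """Hypothetical sketch: one ready queue per CPU, with job stealing."""

    def __init__(self, num_cpus):
        self.ready = [deque() for _ in range(num_cpus)]

    def assign(self, cpu, job):
        """Place a job on a specific CPU's ready queue (affinity)."""
        self.ready[cpu].append(job)

    def next_job(self, cpu):
        """Return the next job for `cpu`, stealing if its queue is empty."""
        if self.ready[cpu]:
            return self.ready[cpu].popleft()
        # Local queue is empty: look for the most loaded CPU (assumed policy).
        victim = max(range(len(self.ready)), key=lambda c: len(self.ready[c]))
        if self.ready[victim]:
            # Steal from the tail; this is where process migration happens.
            return self.ready[victim].pop()
        return None  # nothing runnable anywhere


if __name__ == "__main__":
    cpus = PerCpuQueues(2)
    for job in ("a", "b", "c"):
        cpus.assign(0, job)
    print(cpus.next_job(1))  # CPU 1 is idle, so it steals from CPU 0
    print(cpus.next_job(0))  # CPU 0 keeps running its own jobs in order
```

Stealing only when the local queue is empty preserves cache affinity in the common case while still letting idle CPUs pick up work.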

Self Scheduling vs. Gang Scheduling
• Self-scheduling (load sharing): pool of threads, pool of processors.
  – Better utilization.
  – Cooperative threads may not run in parallel.
• Gang scheduling (co-scheduling): schedule related threads/processes at once on different CPUs.
  – Maximum concurrency for parallel applications.
  – Minimizes intra-task communication latency.
  – More resource waste.

Other Issues
• Time sharing and space sharing:
  – Space sharing: should processors be statically or dynamically divided among applications?
  – Time sharing: should a processor be multiprogrammed?
• Resource allocation:
  – How many processors should be assigned to a process (a user job)? How can the OS know the resource requirements of a job (process)?
    • In Solaris, a user needs to declare threads to be scheduled in system scope.
  – Should the resource allocation be dynamically adjusted? A job may not utilize all processors assigned to it.

Lottery Scheduling for Resource Share Allocation
• Carl A. Waldspurger and William E. Weihl. Lottery Scheduling: Flexible Proportional-Share Resource Management. In OSDI '94.
• Probabilistic approach to proportional resource sharing.
  – Assign a number of "lottery tickets" to each process.
  – The OS selects a "winning ticket" at random.
  – Tickets are transferable among processes for share adjustment.
  – The number of tickets a process owns, relative to the total, represents its share of the resource.
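The ticket draw can be sketched as follows. This is a minimal illustration of the idea on the slide, not the implementation from the paper: the function names (`hold_lottery`, `transfer_tickets`) and the dictionary-based ticket table are assumptions made for the example.

```python
import random


def hold_lottery(tickets, rng=random):
    """Pick a winning process with probability proportional to its tickets.

    `tickets` maps a process name to its ticket count (hypothetical layout).
    `rng` is any object with a randrange() method, e.g. random.Random(seed).
    """
    total = sum(tickets.values())
    if total <= 0:
        raise ValueError("no tickets in the lottery")
    winner = rng.randrange(total)  # winning ticket number in [0, total)
    for process, count in tickets.items():
        if winner < count:
            return process
        winner -= count  # skip past this process's block of tickets


def transfer_tickets(tickets, src, dst, amount):
    """Move tickets from one process to another to adjust their shares."""
    if amount < 0 or tickets[src] < amount:
        raise ValueError("invalid ticket transfer")
    tickets[src] -= amount
    tickets[dst] = tickets.get(dst, 0) + amount


if __name__ == "__main__":
    table = {"A": 75, "B": 25}
    rng = random.Random(0)
    wins = sum(hold_lottery(table, rng) == "A" for _ in range(10_000))
    print(f"A won {wins / 10_000:.1%} of the draws")  # close to 75% on average
```

Over many draws each process wins roughly in proportion to its ticket count, so transferring tickets shifts the expected share without changing the selection logic.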
