University of Pennsylvania Distributed Systems By Ilmin Kim

Distributed Systems: Overview

Introduction to Distributed Systems

Why do we develop distributed systems?

- Mainframes were very expensive, so one computer served many users.
- Powerful yet cheap microprocessors (PCs, workstations) and continuing advances in communication technology made it practical for multiple computers to serve one user.

What is a distributed system?

A distributed system is a collection of independent computers that appears to its users as a single system.

Examples:

- Network of workstations
- Distributed manufacturing system (e.g., an automated assembly line)
- Network of branch office computers

11/14/00 CSE 380

Advantages of Distributed Systems over Centralized Systems

- Economics: a collection of microprocessors offers better price/performance than a mainframe, which makes it a cost-effective way to increase computing power.
- Speed: a distributed system may have more total computing power than a mainframe. For example, 10,000 CPU chips, each running at 50 MIPS, deliver 500,000 MIPS in total. A single 500,000 MIPS processor cannot be built, since it would require a 0.002 nsec instruction cycle. Load distribution enhances performance further.
- Inherent distribution: some applications are inherently distributed, e.g., a supermarket chain.
- Reliability: if one machine crashes, the system as a whole can still survive, giving higher availability and improved reliability.
- Incremental growth: computing power can be added in small increments (modular expandability).
- Another driving force: the existence of a large number of personal computers, and the need for people to collaborate and share information.
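The speed example is plain arithmetic and can be checked in a few lines. The sketch below is only a worked calculation; the function names are illustrative and do not come from the slides.

```python
def total_mips(num_cpus: int, mips_per_cpu: float) -> float:
    """Aggregate instruction rate of num_cpus processors, in MIPS."""
    return num_cpus * mips_per_cpu


def cycle_time_ns(mips: float) -> float:
    """Time per instruction, in nanoseconds, for a processor rated at `mips`."""
    instructions_per_second = mips * 1e6
    return 1e9 / instructions_per_second


aggregate = total_mips(10_000, 50)   # 10,000 chips at 50 MIPS each
print(aggregate)                     # total MIPS of the collection
print(cycle_time_ns(aggregate))      # cycle time one CPU would need, in ns
print(cycle_time_ns(50))             # cycle time of one 50 MIPS chip, in ns
```

A single 50 MIPS chip only needs a 20 nsec cycle, while one processor matching the whole collection would need roughly 0.002 nsec, which is why the work is spread over many chips.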

Advantages of Distributed Systems over Independent PCs

- Data sharing: allow many users to access a common database
- Resource sharing: share expensive peripherals such as color printers
- Communication: enhance human-to-human communication, e.g., email, chat
- Flexibility: spread the workload over the available machines

Disadvantages of Distributed Systems

- Software: it is difficult to develop software for distributed systems
- Network: saturation, lossy transmissions
- Security: easy access also applies to secret data

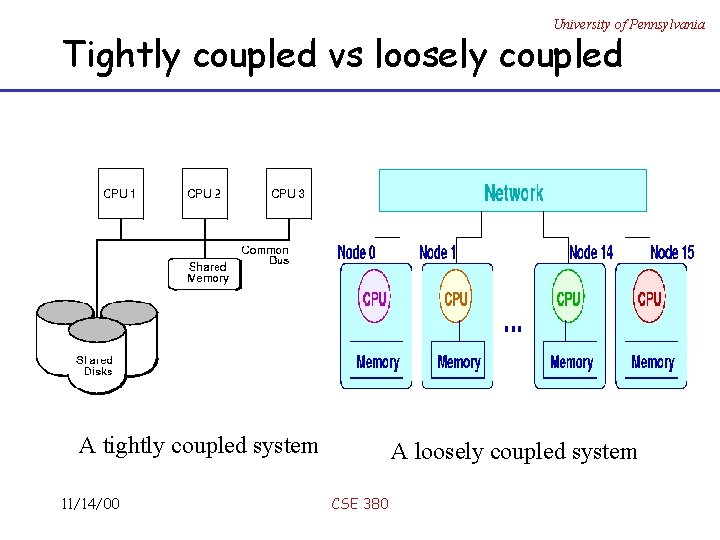

Hardware Concepts

MIMD (Multiple-Instruction Multiple-Data): tightly coupled versus loosely coupled

- Tightly coupled systems (multiprocessors)
  - shared memory with bus
  - inter-machine delay short, data rate high
- Loosely coupled systems (multicomputers)
  - private memory
  - computers are connected with networks
  - inter-machine delay long, data rate low

Tightly coupled vs loosely coupled (figure: a tightly coupled system and a loosely coupled system)

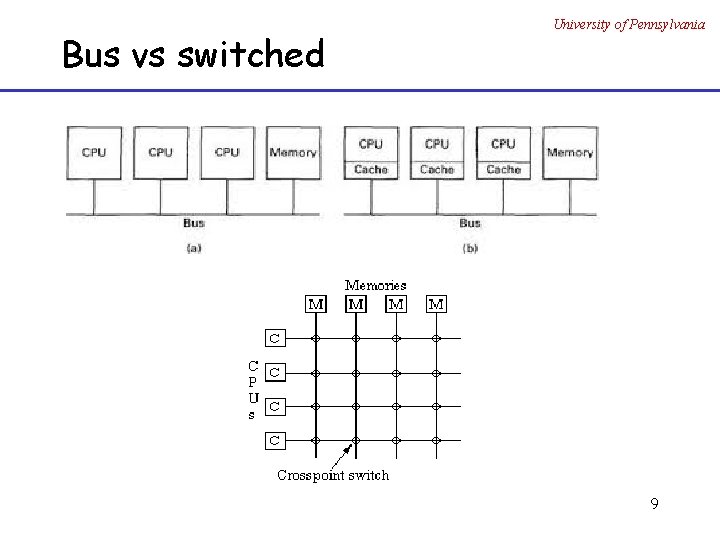

Bus versus Switched MIMD

- Bus: a single network, backplane, bus, cable, or other medium that connects all machines, e.g., cable TV
- Switched: individual wires from machine to machine, with many different wiring patterns in use

Both organizations apply to both machine classes:

- Multiprocessors (shared memory): bus or switched
- Multicomputers (private memory): bus or switched

Bus vs switched (figure)

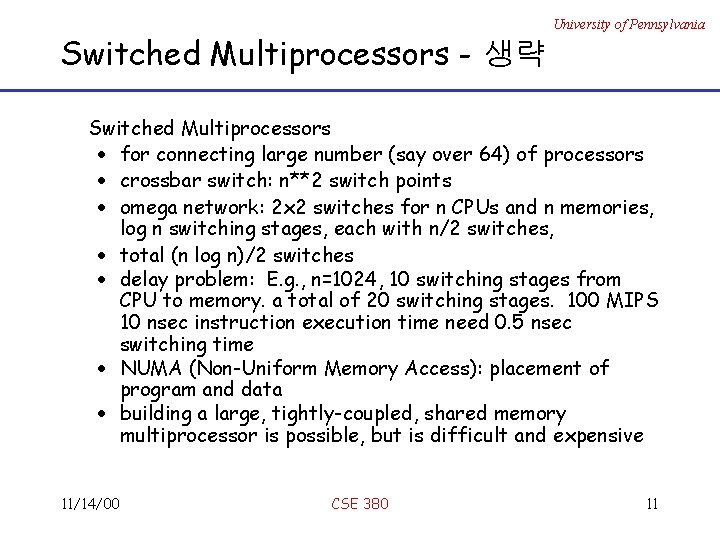

Switched Multiprocessors (omitted)

- For connecting a large number (say, over 64) of processors
- Crossbar switch: n**2 switch points
- Omega network: 2 x 2 switches for n CPUs and n memories; log2 n switching stages, each with n/2 switches, for a total of (n log2 n)/2 switches
- Delay problem: e.g., with n = 1024 there are 10 switching stages from CPU to memory, so a round trip crosses a total of 20 switching stages. At 100 MIPS (10 nsec instruction execution time), this requires a 0.5 nsec switching time.
- NUMA (Non-Uniform Memory Access): placement of program and data matters
- Building a large, tightly coupled, shared-memory multiprocessor is possible, but it is difficult and expensive
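The switch counts and the delay estimate can be reproduced with a short calculation. This is a minimal sketch that assumes n is a power of two and that a memory access (request out, reply back) must fit within one instruction time; the function names are illustrative.

```python
def crossbar_switch_points(n: int) -> int:
    """A crossbar connecting n CPUs to n memories needs n * n crosspoints."""
    return n * n


def omega_stages(n: int) -> int:
    """Switching stages in an omega network: log2(n), for n a power of two."""
    return n.bit_length() - 1


def omega_switches(n: int) -> int:
    """Total 2x2 switches: log2(n) stages with n/2 switches each."""
    return omega_stages(n) * n // 2


def required_switch_time_ns(n: int, mips: float) -> float:
    """Per-stage switching time so a round trip fits in one instruction time."""
    instruction_time_ns = 1e3 / mips          # e.g. 100 MIPS -> 10 ns
    round_trip_stages = 2 * omega_stages(n)   # to memory and back
    return instruction_time_ns / round_trip_stages


n = 1024
print(crossbar_switch_points(n))        # crosspoints in a crossbar
print(omega_switches(n))                # 2x2 switches in an omega network
print(required_switch_time_ns(n, 100))  # switching time per stage, in ns
```

For n = 1024 the omega network needs 5,120 switches instead of 1,048,576 crosspoints, but each of the 20 stages on a round trip has to switch in 0.5 nsec.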

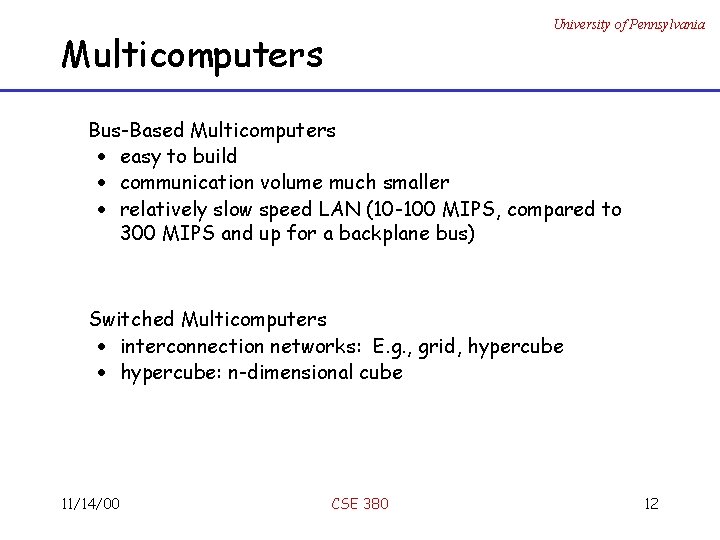

Multicomputers

Bus-based multicomputers

- easy to build
- communication volume much smaller than the CPU-to-memory traffic of a multiprocessor
- relatively slow LAN (10-100 Mbps, compared to 300 Mbps and up for a backplane bus)

Switched multicomputers

- interconnection networks, e.g., grid, hypercube
- hypercube: n-dimensional cube
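One common way to picture a hypercube interconnect is to number the 2**n nodes in binary and link nodes whose addresses differ in exactly one bit. The slides only say "n-dimensional cube", so this addressing scheme and the function names below are assumptions made for illustration.

```python
def hypercube_neighbors(node: int, dimensions: int) -> list:
    """Neighbors of `node`: flip each of its `dimensions` address bits once."""
    return [node ^ (1 << bit) for bit in range(dimensions)]


def hop_distance(a: int, b: int) -> int:
    """Minimum hops between two nodes: the number of differing address bits."""
    return bin(a ^ b).count("1")


# A 3-dimensional hypercube has 8 nodes, each with 3 neighbors.
print(hypercube_neighbors(0b000, 3))
print(hop_distance(0b000, 0b111))
```

Under this numbering each node has n links and no two nodes are more than n hops apart, so wiring per node grows only logarithmically with the number of machines.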

Software Concepts

- Software is more important for users
- Three types:
  1. Network operating systems
  2. (True) distributed systems
  3. Multiprocessor time sharing

Network Operating Systems

- Loosely coupled software on loosely coupled hardware
- A network of workstations connected by a LAN
- Each machine has a high degree of autonomy
  - rlogin machine
  - rcp machine1:file1 machine2:file2 (remote copy)
- File servers: client and server model
- Clients mount directories exported by file servers
- Best-known network OS:
  - Sun's NFS (Network File System) for shared file systems
- A few system-wide requirements: the format and meaning of all the messages exchanged

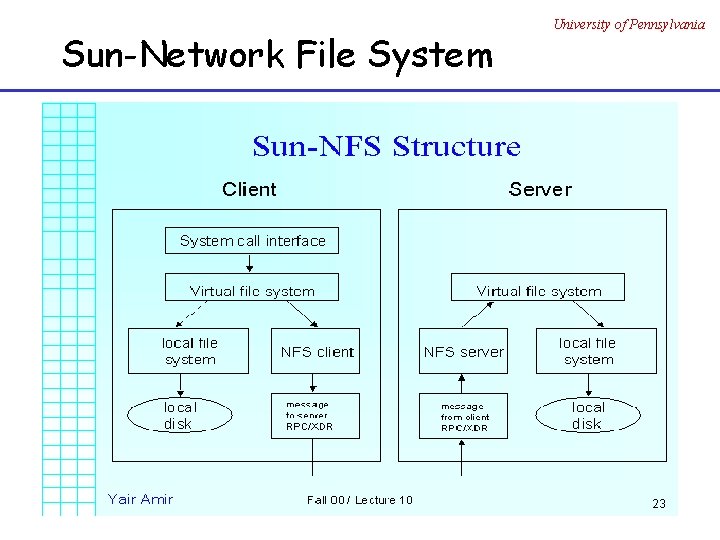

Sun Network File System

NFS Architecture

- Server exports directories
- Clients mount exported directories

NFS Protocols

- For handling mounting
- For read/write: no open/close, stateless
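The idea of a stateless read/write protocol with no open or close can be sketched as a toy server in which every request carries everything needed to serve it. This is not the NFS wire protocol; the class, method names, and the use of a file name as the handle are hypothetical.

```python
class StatelessFileServer:
    """Toy server that keeps no per-client state between requests."""

    def __init__(self, files: dict):
        # Maps a file handle (here simply a name) to the file's bytes.
        self._files = files

    def read(self, handle: str, offset: int, count: int) -> bytes:
        # The request names the file and the position itself, so the server
        # has no open-file table and no current offset to remember.
        return self._files[handle][offset:offset + count]

    def write(self, handle: str, offset: int, data: bytes) -> int:
        old = self._files.get(handle, b"")
        padded = old.ljust(offset, b"\0")   # zero-fill if writing past the end
        self._files[handle] = padded[:offset] + data + padded[offset + len(data):]
        return len(data)


server = StatelessFileServer({"notes.txt": b"hello world"})
print(server.read("notes.txt", 0, 5))    # client tracks its own offset
server.write("notes.txt", 6, b"there")
print(server.read("notes.txt", 0, 11))
```

Because the client supplies the handle and offset each time, a request can simply be retried after a server restart; the price is that every message is a little larger.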

(True) Distributed Systems

- Tightly coupled software on loosely coupled hardware
- Provide a single-system image or a virtual uniprocessor
- A single, global interprocess communication mechanism, process management, and file system; the same system call interface everywhere
- Ideal definition: "A distributed system runs on a collection of computers that do not have shared memory, yet looks like a single computer to its users."

Multiprocessor Operating Systems

- Tightly coupled software on tightly coupled hardware
- Examples: high-performance servers
- Shared memory
- Single run queue
- Traditional file system as on a single-processor system: central block cache
- Modern MS Windows belongs to this type
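The single run queue in shared memory can be illustrated with worker threads standing in for CPUs. This is only an analogy under that assumption, not how any particular kernel schedules; the function name is hypothetical.

```python
import queue
import threading


def run_on_shared_queue(tasks, num_cpus: int) -> list:
    """Run callables from one shared run queue using num_cpus worker threads."""
    run_queue = queue.Queue()   # the single run queue, visible to every "CPU"
    for task in tasks:
        run_queue.put(task)

    results = []
    results_lock = threading.Lock()

    def cpu_loop():
        while True:
            try:
                task = run_queue.get_nowait()   # an idle "CPU" takes the next task
            except queue.Empty:
                return                          # nothing left to run
            value = task()
            with results_lock:
                results.append(value)

    workers = [threading.Thread(target=cpu_loop) for _ in range(num_cpus)]
    for w in workers:
        w.start()
    for w in workers:
        w.join()
    return results


squares = run_on_shared_queue([lambda i=i: i * i for i in range(10)], num_cpus=4)
print(sorted(squares))
```

Since every worker pulls from the same queue, load balances itself: no task waits on a busy worker while another worker is idle.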

Design Issues of Distributed Systems

- Transparency
- Flexibility
- Reliability
- Performance
- Scalability

1. Transparency: hide distribution

- How to achieve the single-system image, i.e., how to make a collection of computers appear as a single computer.
- Distribution can be hidden from the users as well as from the application programs, at two levels:
  1. hide the distribution from users
  2. at a lower level, make the system look transparent to programs
- Both levels require uniform interfaces, such as for file access and communication.

Types of transparency

- Location transparency: users cannot tell where hardware and software resources such as CPUs, printers, files, and databases are located.
- Migration transparency: resources must be free to move from one location to another without their names changing. E.g., /usr/lee should not have to become /central/usr/lee after a move.
- Replication transparency: the OS can make additional copies of files and resources without users noticing.
- Concurrency transparency: users are not aware of the existence of other users. Multiple users must be able to access the same resource concurrently; lock and unlock provide mutual exclusion.
- Parallelism transparency: automatic use of parallelism without having to program it explicitly. This is the holy grail (an unattainable goal) for distributed and parallel system designers.

Users do not always want complete transparency, e.g., when output is sent to a fancy printer 1000 miles away.
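Lock and unlock for mutual exclusion, as mentioned under concurrency transparency, can be sketched with threads sharing one resource. The counter and function names are hypothetical, and threads on one machine stand in for users of a distributed system.

```python
import threading


class SharedCounter:
    """A resource that several users update concurrently."""

    def __init__(self):
        self.value = 0
        self._lock = threading.Lock()

    def increment(self):
        with self._lock:        # lock on entry, unlock on exit
            self.value += 1     # only one user at a time runs this update


def run_users(num_users: int, updates_per_user: int) -> int:
    """Let num_users threads update one counter and return the final value."""
    counter = SharedCounter()

    def user():
        for _ in range(updates_per_user):
            counter.increment()

    threads = [threading.Thread(target=user) for _ in range(num_users)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return counter.value


print(run_users(num_users=4, updates_per_user=1000))
```

Each user calls `increment()` as if it were alone; the lock serializes the updates so none is lost, which is the effect concurrency transparency aims for.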

2. Flexibility

- Make it easier to change
- Monolithic kernel: system calls are trapped and executed by the kernel. All system calls are served by the kernel, e.g., UNIX (in contrast to a microkernel).
- Microkernel: provides minimal services:
  1. IPC
  2. some memory management
  3. some low-level process management and scheduling
  4. low-level I/O
- E.g., Mach can support multiple file systems and multiple system interfaces.

3. Reliability

- A distributed system should be more reliable than a single system. Example: with 3 machines, each up with probability 0.95, the probability that at least one of them is up is 1 - 0.05**3 = 0.999875.
- Availability: fraction of time the system is usable. Redundancy improves it. (low MTBF)
- Need to maintain consistency
- Need to be secure
- Fault tolerance: need to mask (hide) failures and recover from errors.
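The availability example works out as follows, assuming machine failures are independent and the system counts as up whenever at least one machine is up. The second function is an added contrast, not from the slides: it shows the case where every machine is needed.

```python
def availability_any_up(p_up: float, machines: int) -> float:
    """Probability that at least one of `machines` independent replicas is up."""
    p_down = 1.0 - p_up
    return 1.0 - p_down ** machines


def availability_all_up(p_up: float, machines: int) -> float:
    """Probability that every machine is up (no redundancy, all are required)."""
    return p_up ** machines


print(availability_any_up(0.95, 3))   # replicated service
print(availability_all_up(0.95, 3))   # service that needs all three machines
```

With replication the three machines give about 0.999875, but if the service depends on all three at once the figure drops to about 0.857, below that of a single machine. Redundancy, not distribution by itself, is what improves availability.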

4. Performance

- Without a gain here, why bother with distributed systems?
- Performance gain from the multiple networked CPUs
- Performance loss due to communication delays:
  - fine-grain parallelism: high degree of interaction
  - coarse-grain parallelism
- Performance loss due to making the system fault tolerant

5. Scalability

- Systems grow with time or become obsolete.
- Techniques whose resource requirements grow linearly with the size of the system are not scalable, e.g., a broadcast-based query won't work for large distributed systems.
- Examples of bottlenecks:
  - Centralized components: a single mail server
  - Centralized tables: a single URL address book
  - Centralized algorithms: routing based on complete information
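A rough message count shows why broadcast-based queries stop working as the system grows. The model below is an assumption for illustration: each query is sent once to every other node, and every node issues the same number of queries.

```python
def messages_per_broadcast_query(nodes: int) -> int:
    """One broadcast query reaches every other node: cost grows linearly."""
    return nodes - 1


def total_broadcast_messages(nodes: int, queries_per_node: int = 1) -> int:
    """Messages when every node issues queries_per_node broadcast queries."""
    return nodes * queries_per_node * messages_per_broadcast_query(nodes)


for n in (10, 100, 1000):
    print(n, messages_per_broadcast_query(n), total_broadcast_messages(n))
```

The per-query cost grows linearly with the number of nodes, so the total traffic grows roughly with its square: 100 times more nodes means about 10,000 times more messages.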

Distributed system challenges (text)

- Heterogeneity
- Openness
- Security
- Scalability
- Failure handling
- Concurrency
- Transparency
- Quality of service
