
University of Belgrade, School of Electrical Engineering, Chair of Computer Engineering and Information Theory

Data Mining and Semantic Web

Neural Networks: Backpropagation algorithm

Miroslav Tišma, tisma.etf@gmail.com

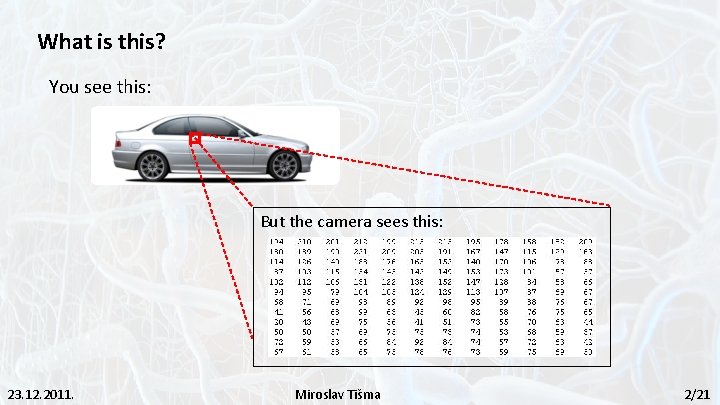

What is this? You see this: But the camera sees this: 23. 12. 2011. Miroslav Tišma 2/21

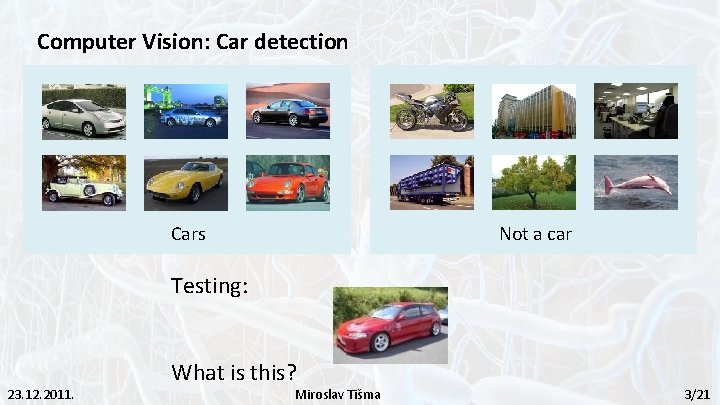

Computer Vision: Car detection
- Cars
- Not a car
- Testing: What is this?

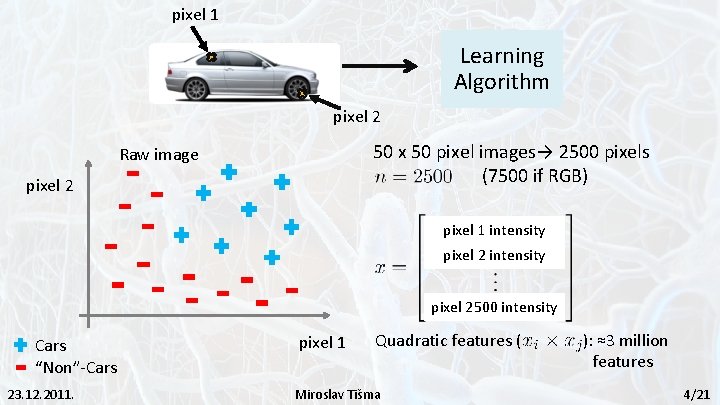

Raw image → Learning Algorithm

50 x 50 pixel images → 2500 pixels (7500 if RGB)

$x = [\text{pixel 1 intensity}, \text{pixel 2 intensity}, \dots, \text{pixel 2500 intensity}]^T$

Plot of pixel 1 intensity against pixel 2 intensity: Cars and "Non"-Cars.

Quadratic features ($x_i \times x_j$): ≈3 million features
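
The "≈3 million" figure can be checked with a short calculation. This is a minimal sketch, assuming that "quadratic features" means all distinct pairwise products of the 2500 pixel intensities; the function name and the `include_squares` switch are illustrative only.

```python
def count_quadratic_features(n: int, include_squares: bool = True) -> int:
    """Number of distinct products x_i * x_j over n raw features."""
    pairs = n * (n - 1) // 2              # products with i < j
    return pairs + n if include_squares else pairs


n_pixels = 50 * 50                        # grayscale 50 x 50 image
print(count_quadratic_features(n_pixels))          # 3126250
print(count_quadratic_features(n_pixels, False))   # 3123750
```

Either way of counting gives roughly 3 million features, which is why adding hand-made polynomial features to raw pixels does not scale.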

Neural Networks
- Origins: algorithms that try to mimic the brain
- Were very widely used in the 1980s and early 1990s; popularity diminished in the late 1990s
- Recent resurgence: state-of-the-art technique for many applications

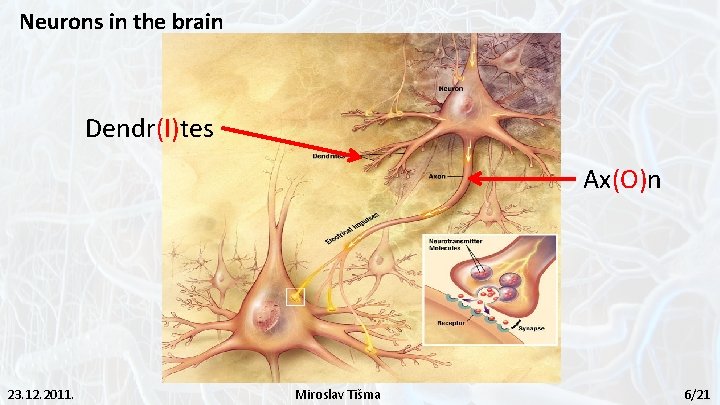

Neurons in the brain
- Dendrites (Input)
- Axon (Output)

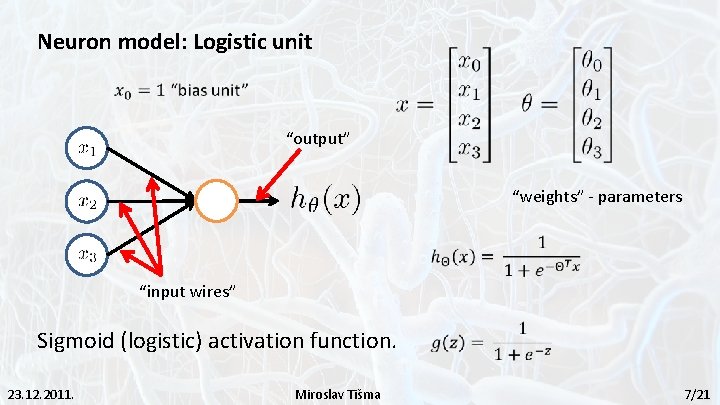

Neuron model: Logistic unit
- "input wires": the inputs $x$
- "weights" (parameters): $\theta$
- "output": $h_\theta(x) = g(\theta^T x)$
- Sigmoid (logistic) activation function: $g(z) = \frac{1}{1 + e^{-z}}$
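
A minimal sketch of one logistic unit, assuming the usual sigmoid $g(z) = 1 / (1 + e^{-z})$ and a bias input fixed at 1 in front of the real inputs. The function names are illustrative, not taken from the slides.

```python
import math


def sigmoid(z: float) -> float:
    """Logistic function g(z) = 1 / (1 + e^(-z))."""
    return 1.0 / (1.0 + math.exp(-z))


def logistic_unit(weights: list[float], inputs: list[float]) -> float:
    """Output g(theta^T x) of one unit; weights[0] multiplies the bias input 1."""
    x = [1.0] + list(inputs)              # prepend the bias input
    return sigmoid(sum(w * x_j for w, x_j in zip(weights, x)))


print(logistic_unit([0.0, 0.0, 0.0], [5.0, 7.0]))   # 0.5, since g(0) = 0.5
```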

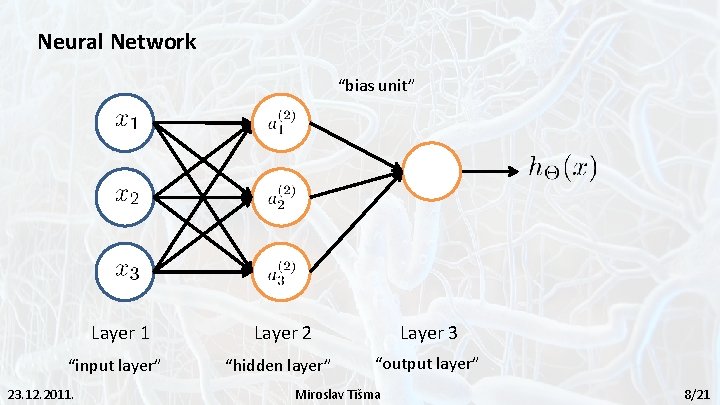

Neural Network
- Layer 1: "input layer"
- Layer 2: "hidden layer"
- Layer 3: "output layer"
- "bias unit": an extra unit in a layer whose value is always 1

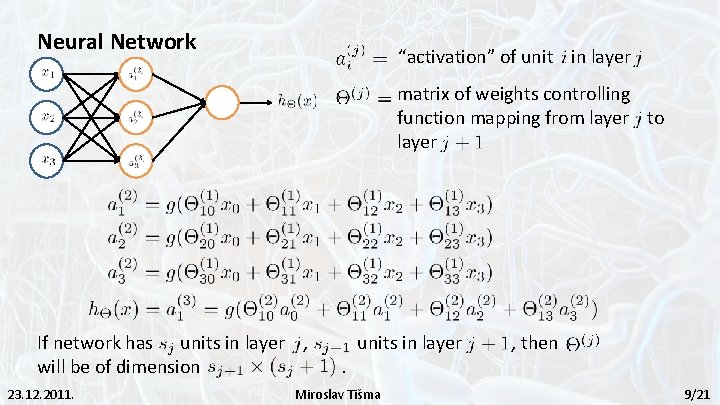

Neural Network
- $a_i^{(j)}$ = "activation" of unit $i$ in layer $j$
- $\Theta^{(j)}$ = matrix of weights controlling function mapping from layer $j$ to layer $j+1$

If network has $s_j$ units in layer $j$, $s_{j+1}$ units in layer $j+1$, then $\Theta^{(j)}$ will be of dimension $s_{j+1} \times (s_j + 1)$.
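
The dimension rule can be sketched in code. This assumes the usual convention that the weight matrix from layer $j$ to layer $j+1$ has one row per unit in layer $j+1$ and one column per unit in layer $j$ plus one for the bias unit; `weight_shapes` is a hypothetical helper.

```python
def weight_shapes(layer_sizes: list[int]) -> list[tuple[int, int]]:
    """Shape of each weight matrix: s_(j+1) rows by (s_j + 1) columns."""
    return [(s_next, s_cur + 1)
            for s_cur, s_next in zip(layer_sizes, layer_sizes[1:])]


# 2 input units, 4 hidden units, 1 output unit (bias units not counted)
print(weight_shapes([2, 4, 1]))   # [(4, 3), (1, 5)]
```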

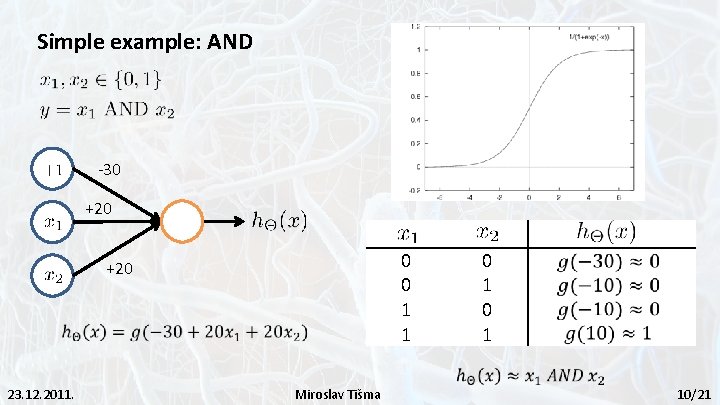

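Simple example: AND

Weights: -30 (bias unit), +20 ($x_1$), +20 ($x_2$), so $h_\Theta(x) = g(-30 + 20x_1 + 20x_2)$

| $x_1$ | $x_2$ | $h_\Theta(x)$ |
|---|---|---|
| 0 | 0 | $g(-30) \approx 0$ |
| 0 | 1 | $g(-10) \approx 0$ |
| 1 | 0 | $g(-10) \approx 0$ |
| 1 | 1 | $g(10) \approx 1$ |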

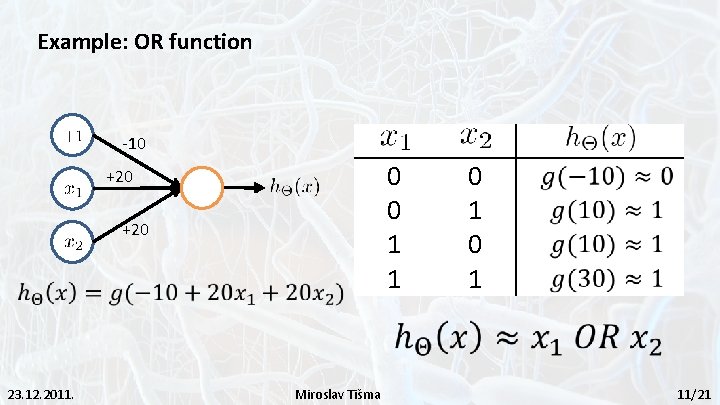

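Example: OR function

Weights: -10 (bias unit), +20 ($x_1$), +20 ($x_2$), so $h_\Theta(x) = g(-10 + 20x_1 + 20x_2)$

| $x_1$ | $x_2$ | $h_\Theta(x)$ |
|---|---|---|
| 0 | 0 | $g(-10) \approx 0$ |
| 0 | 1 | $g(10) \approx 1$ |
| 1 | 0 | $g(10) \approx 1$ |
| 1 | 1 | $g(30) \approx 1$ |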
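
Both examples can be checked numerically with a single sigmoid unit. This is a sketch under one assumption: the OR unit uses +20 on both inputs, like the AND unit. The names `unit`, `AND_WEIGHTS` and `OR_WEIGHTS` are illustrative.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def unit(weights: tuple[float, float, float], x1: int, x2: int) -> float:
    """One sigmoid unit with weights (bias, w1, w2)."""
    bias, w1, w2 = weights
    return sigmoid(bias + w1 * x1 + w2 * x2)


AND_WEIGHTS = (-30.0, 20.0, 20.0)
OR_WEIGHTS = (-10.0, 20.0, 20.0)   # assumption: +20 on both inputs

# Columns: x1, x2, rounded AND output, rounded OR output
for x1 in (0, 1):
    for x2 in (0, 1):
        print(x1, x2,
              round(unit(AND_WEIGHTS, x1, x2)),
              round(unit(OR_WEIGHTS, x1, x2)))
```

The large weights push the sigmoid into its flat regions, so each output is very close to 0 or 1 and rounding recovers the logical value.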

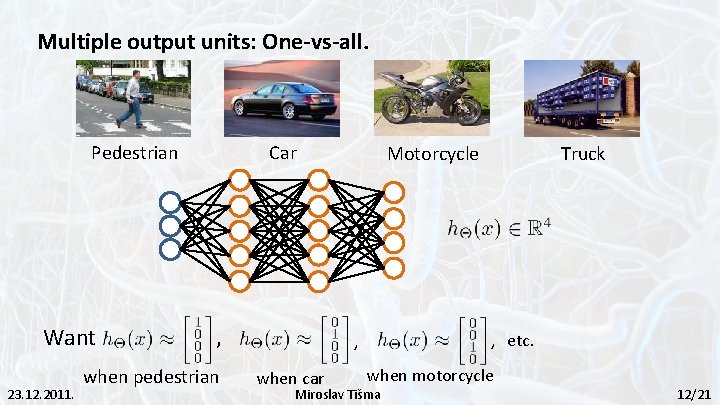

Multiple output units: One-vs-all.

Classes: Pedestrian, Car, Motorcycle, Truck

Want $h_\Theta(x) \approx [1, 0, 0, 0]^T$ when pedestrian, $h_\Theta(x) \approx [0, 1, 0, 0]^T$ when car, $h_\Theta(x) \approx [0, 0, 1, 0]^T$ when motorcycle, etc.
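
A small sketch of the one-vs-all encoding, assuming the class order pedestrian, car, motorcycle, truck. Picking the largest output unit as the prediction is a common convention and an assumption here; `one_hot` and `predict` are illustrative names.

```python
CLASSES = ["pedestrian", "car", "motorcycle", "truck"]


def one_hot(label: str) -> list[int]:
    """Target vector y with a 1 in the position of the given class."""
    return [1 if c == label else 0 for c in CLASSES]


def predict(outputs: list[float]) -> str:
    """Pick the class whose output unit has the largest value."""
    best = max(range(len(outputs)), key=outputs.__getitem__)
    return CLASSES[best]


print(one_hot("car"))                     # [0, 1, 0, 0]
print(predict([0.1, 0.2, 0.9, 0.05]))     # motorcycle
```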

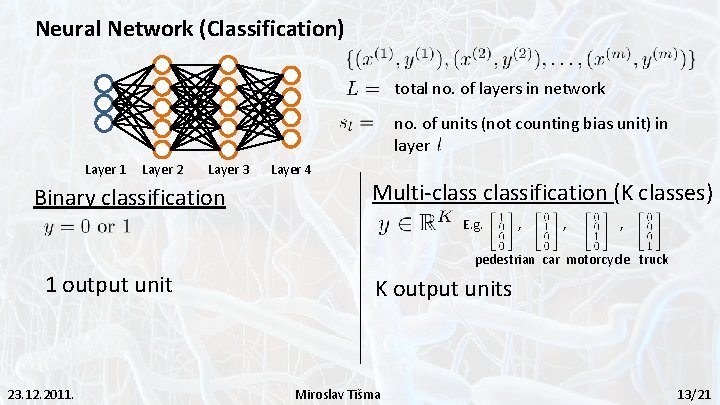

Neural Network (Classification)

Example network: Layer 1, Layer 2, Layer 3, Layer 4
- $L$ = total no. of layers in network
- $s_l$ = no. of units (not counting bias unit) in layer $l$

Binary classification: $y \in \{0, 1\}$, 1 output unit

Multi-class classification ($K$ classes): $y \in \mathbb{R}^K$, e.g. $[1, 0, 0, 0]^T$ (pedestrian), $[0, 1, 0, 0]^T$ (car), $[0, 0, 1, 0]^T$ (motorcycle), $[0, 0, 0, 1]^T$ (truck); $K$ output units

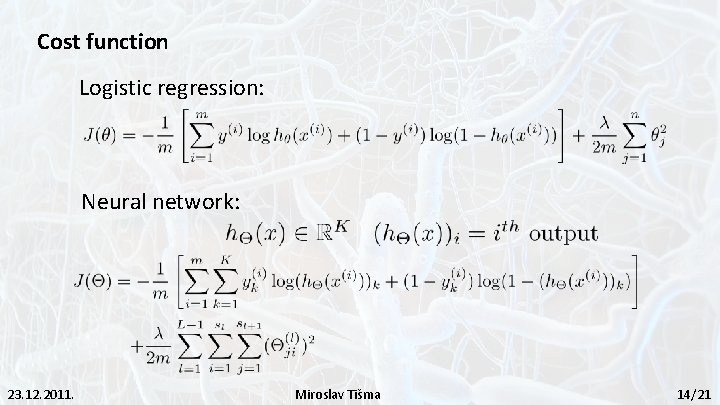

Cost function
- Logistic regression: $J(\theta)$
- Neural network: $J(\Theta)$
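
A sketch of the two costs named on this slide, assuming the usual regularized cross-entropy form with $m$ training examples, $n$ features, $K$ output units and regularization parameter $\lambda$ (bias weights are not regularized):

```latex
% Logistic regression
J(\theta) = -\frac{1}{m} \sum_{i=1}^{m} \Big[ y^{(i)} \log h_\theta(x^{(i)})
          + (1 - y^{(i)}) \log\big(1 - h_\theta(x^{(i)})\big) \Big]
          + \frac{\lambda}{2m} \sum_{j=1}^{n} \theta_j^2

% Neural network with K output units, (h_\Theta(x))_k = k-th output
J(\Theta) = -\frac{1}{m} \sum_{i=1}^{m} \sum_{k=1}^{K} \Big[ y_k^{(i)} \log \big(h_\Theta(x^{(i)})\big)_k
          + (1 - y_k^{(i)}) \log\big(1 - (h_\Theta(x^{(i)}))_k\big) \Big]
          + \frac{\lambda}{2m} \sum_{l=1}^{L-1} \sum_{i=1}^{s_l} \sum_{j=1}^{s_{l+1}} \big(\Theta_{ji}^{(l)}\big)^2
```

The neural network cost is the logistic regression cost summed over all $K$ output units, with the penalty extended to every non-bias weight in every layer.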

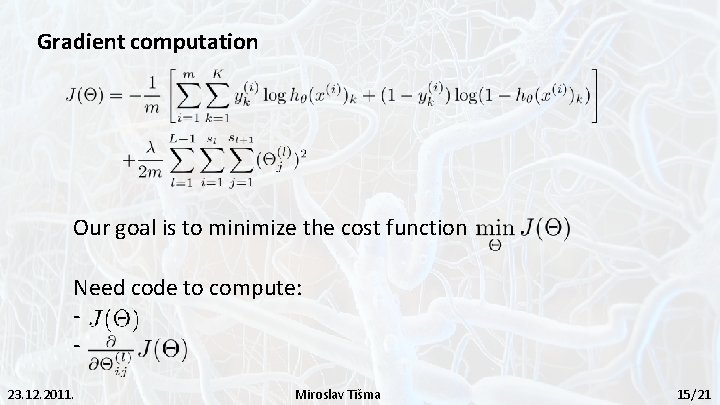

Gradient computation

Our goal is to minimize the cost function $J(\Theta)$. Need code to compute:
- $J(\Theta)$
- $\frac{\partial}{\partial \Theta_{ij}^{(l)}} J(\Theta)$
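
The first of the two quantities, the cost, could be computed as follows. This is a minimal sketch assuming a regularized cross-entropy cost and network outputs that are already available as lists; the function signature is illustrative. Outputs of exactly 0 or 1 would make `math.log` fail, which a real implementation would need to guard against.

```python
import math


def cost(predictions, labels, thetas, lam=0.0):
    """Regularized cross-entropy cost.

    predictions, labels: one list of K values per training example.
    thetas: list of weight matrices (lists of rows); column 0 of each
            matrix holds the bias weights, which are not regularized.
    lam: regularization parameter lambda.
    """
    m = len(predictions)
    data_term = 0.0
    for h, y in zip(predictions, labels):
        for h_k, y_k in zip(h, y):
            data_term -= y_k * math.log(h_k) + (1 - y_k) * math.log(1 - h_k)
    reg_term = sum(w * w for theta in thetas for row in theta for w in row[1:])
    return data_term / m + lam * reg_term / (2 * m)


# One example, one output unit predicting 0.5 for a positive label: cost = ln 2
print(cost([[0.5]], [[1]], thetas=[]))
```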

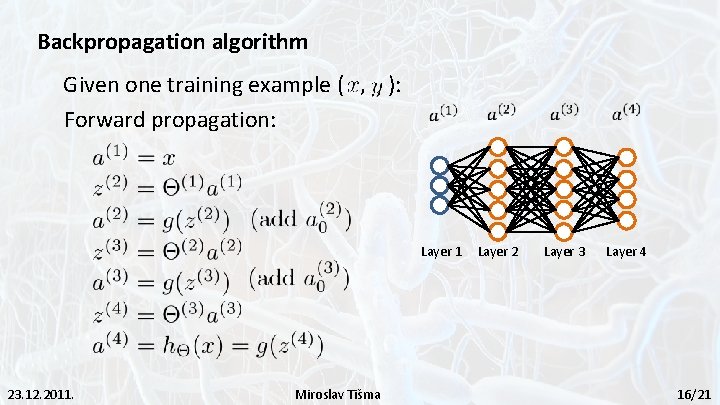

Backpropagation algorithm

Given one training example $(x, y)$, forward propagation computes the activations layer by layer: Layer 1, Layer 2, Layer 3, Layer 4.
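
Forward propagation for one example can be sketched as below. The assumptions are sigmoid units in every layer, a bias unit of 1 prepended to each layer's activations, and weight matrices stored as lists of rows with the bias weight in column 0. The helper names are illustrative.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def forward(thetas, x):
    """Return the activations [a1, a2, ..., aL], each without its bias unit."""
    activations = [list(x)]                       # a(1) = x
    for theta in thetas:
        a = [1.0] + activations[-1]               # add the bias unit
        z = [sum(w * a_j for w, a_j in zip(row, a)) for row in theta]
        activations.append([sigmoid(z_i) for z_i in z])
    return activations


# Hypothetical 4-layer network: 2 inputs, two hidden layers of 2 units, 1 output
thetas = [
    [[0.1, 0.2, -0.3], [0.0, -0.1, 0.4]],
    [[0.3, -0.2, 0.1], [-0.4, 0.2, 0.2]],
    [[0.05, 0.6, -0.5]],
]
for layer, a in enumerate(forward(thetas, [1.0, 0.0]), start=1):
    print("Layer", layer, a)
```

The last list returned is the network output $h_\Theta(x)$; the intermediate activations are kept because backpropagation needs them.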

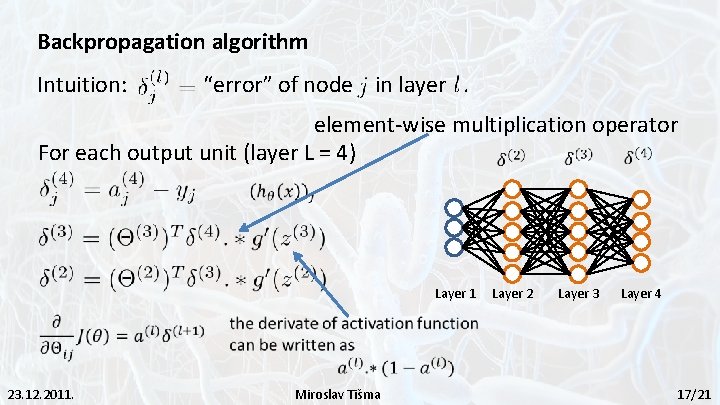

Backpropagation algorithm

Intuition: $\delta_j^{(l)}$ = "error" of node $j$ in layer $l$.

The error is computed first for each output unit (layer $L = 4$) of the network with Layer 1, Layer 2, Layer 3 and Layer 4. In the formulas, $.*$ is the element-wise multiplication operator.
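
A sketch of the error terms for the 4-layer network, assuming sigmoid activations and the cross-entropy cost, in which case the output error is simply the difference between activation and label:

```latex
\delta^{(4)} = a^{(4)} - y

\delta^{(3)} = (\Theta^{(3)})^T \delta^{(4)} \;.\!*\; g'(z^{(3)}),
\qquad g'(z^{(3)}) = a^{(3)} \;.\!*\; (1 - a^{(3)})

\delta^{(2)} = (\Theta^{(2)})^T \delta^{(3)} \;.\!*\; g'(z^{(2)}),
\qquad g'(z^{(2)}) = a^{(2)} \;.\!*\; (1 - a^{(2)})
```

There is no $\delta^{(1)}$, because Layer 1 holds the inputs and has no error to attribute. The component of each product that belongs to a bias unit is discarded before moving one layer back.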

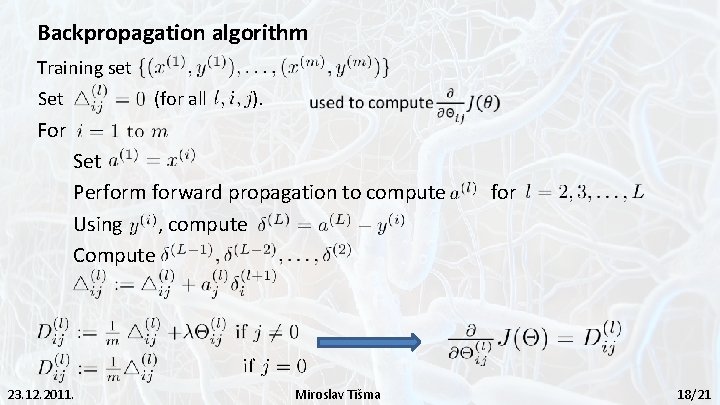

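Backpropagation algorithm

Training set $\{(x^{(1)}, y^{(1)}), \dots, (x^{(m)}, y^{(m)})\}$

Set $\Delta_{ij}^{(l)} = 0$ (for all $l, i, j$).

For $i = 1$ to $m$:
- Set $a^{(1)} = x^{(i)}$
- Perform forward propagation to compute $a^{(l)}$ for $l = 2, 3, \dots, L$
- Using $y^{(i)}$, compute $\delta^{(L)} = a^{(L)} - y^{(i)}$
- Compute $\delta^{(L-1)}, \delta^{(L-2)}, \dots, \delta^{(2)}$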
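
The loop above can be sketched in plain Python. Assumptions, none of which are spelled out on the slide: sigmoid units, the cross-entropy cost, the accumulation step $\Delta_{ij}^{(l)} := \Delta_{ij}^{(l)} + a_j^{(l)} \delta_i^{(l+1)}$ after each example, and no regularization term in the final gradient. `forward` and `backprop` are illustrative names.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def forward(thetas, x):
    """Activations of every layer (without bias units), a(1) = x first."""
    activations = [list(x)]
    for theta in thetas:
        a = [1.0] + activations[-1]
        activations.append(
            [sigmoid(sum(w * a_j for w, a_j in zip(row, a))) for row in theta])
    return activations


def backprop(thetas, examples):
    """Average, unregularized gradient of the cost for each weight matrix."""
    m = len(examples)
    # Set Delta = 0 for all l, i, j
    Delta = [[[0.0] * len(row) for row in theta] for theta in thetas]
    for x, y in examples:
        acts = forward(thetas, x)
        delta = [a - t for a, t in zip(acts[-1], y)]      # output-layer error
        for l in range(len(thetas) - 1, -1, -1):
            a_prev = [1.0] + acts[l]                      # includes bias unit
            for i, d in enumerate(delta):
                for j, a in enumerate(a_prev):
                    Delta[l][i][j] += d * a               # accumulate
            if l > 0:
                # Error of the previous layer; column 0 (bias) is skipped
                delta = [
                    sum(thetas[l][i][j + 1] * delta[i]
                        for i in range(len(delta))) * a * (1.0 - a)
                    for j, a in enumerate(acts[l])
                ]
    return [[[v / m for v in row] for row in D] for D in Delta]


# Hypothetical tiny network: 2 inputs, 2 hidden units, 1 output
thetas = [[[0.1, -0.2, 0.3], [0.4, 0.1, -0.5]], [[0.2, -0.3, 0.1]]]
examples = [([0.0, 1.0], [1.0]), ([1.0, 1.0], [0.0])]
for grad in backprop(thetas, examples):
    print(grad)
```

The returned matrices have the same shapes as the weight matrices and can be passed to gradient descent or another optimizer.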

Advantages:
- Relatively simple implementation
- Standard method that generally works well
- Many practical applications:
  * handwriting recognition, autonomous driving

Disadvantages:
- Slow and inefficient
- Can get stuck in local minima, resulting in sub-optimal solutions

Literature:
- http://en.wikipedia.org/wiki/Backpropagation
- http://www.ml-class.org
- http://home.agh.edu.pl/~vlsi/AI/backp_t_en/backprop.html

Thank you for your attention!
