
UNIT – II SOFTWARE MEASUREMENTS

Learning outcomes
1. Able to measure internal product attributes.
2. Able to measure the size of a product.
3. Able to measure the structure of a product.
4. Able to measure software quality.
5. Able to classify and determine size, structure and software quality.
6. Benefits

Software Size
Simple measures of size are often rejected because they do not adequately reflect:
- Effort: they fail to take account of redundancy and complexity.
- Productivity: they fail to take account of true functionality and effort.
- Cost: they fail to take account of complexity and reuse.


Aspects of Software Size
Software size can be described with four attributes:
i. Length: the physical size of the product.
ii. Functionality: the amount of function supplied by the product.
iii. Complexity: interpreted in different ways.
iv. Reuse: the extent to which the product was copied or modified from a previous version of an existing product.

2. LENGTH
We want to measure the size of code, design and specification.
2.1 CODE: produced in a programming language
2.1.1 Code measures:
A) LOC: LOC = CLOC + NCLOC, where CLOC counts comment lines and NCLOC counts non-comment lines.
B) Textual code: a counting rule has to state how it treats
i. blank lines
ii. comments
iii. data declarations
iv. lines that contain several separate instructions
C) Non-textual code: includes the GUI of a system
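The LOC relation above can be sketched as a small counter. This is a minimal illustration, not a standard tool: it assumes `#` line comments, counts a line with a trailing comment as code, and reports blank lines separately. The function name `count_lines` and the sample text are hypothetical.

```python
def count_lines(source):
    """Return (ncloc, cloc, blank) for source text that uses '#' line comments."""
    ncloc = cloc = blank = 0
    for line in source.splitlines():
        stripped = line.strip()
        if not stripped:
            blank += 1          # blank line: counted separately
        elif stripped.startswith("#"):
            cloc += 1           # comment-only line
        else:
            ncloc += 1          # anything else is treated as code
    return ncloc, cloc, blank


# Hypothetical sample: one comment line, one blank line, three code lines.
sample = "# add two numbers\n\nx = 1\ny = 2  # trailing comment\nprint(x + y)\n"
ncloc, cloc, blank = count_lines(sample)
loc = cloc + ncloc  # LOC = CLOC + NCLOC
```

A different counting rule (for example, counting a line with a trailing comment in both CLOC and NCLOC) would give a different LOC, which is why the rule has to be stated before sizes are compared.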

2. LENGTH (Contd.)
2.2 Specification & Design
- A specification or design consists of text, graphs, symbols and mathematical diagrams.
- When measuring code size, we identify atomic objects to count: lines of code, executable statements, instructions, objects and methods.
- For specifications and designs, the atomic objects to count are sequential pages (text and diagrams together form sequential pages) as well as the different types of diagrams and symbols.
- Length can be viewed as a composite measure: text length and diagram length.
- Atomic objects for well-known methods:
1. For data flow diagrams (DFD): processes (bubble nodes), external entities (boxes), data stores and data flows (arcs).
2. For algebraic specifications: sorts, functions, operations and axioms.
3. For Z schemas: the various lines appearing in the specification.

2. LENGTH (Contd.)
2.3 Predicting length
- Predict length as early as possible.
- Length may be predicted from the median expansion ratio from design length to code length on similar projects.
- Use the size of the design to predict the size of the resulting code.
- Design-to-code expansion ratio = (size of design) / (size of code)
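A minimal sketch of the prediction step, assuming the ratio is taken as design size divided by code size and that past projects are comparable. The history data, units and the function name `predict_code_length` are hypothetical.

```python
from statistics import median


def predict_code_length(design_size, past_projects):
    """Predict code size from design size via the median design-to-code ratio.

    past_projects: list of (design_size, code_size) pairs from similar projects.
    """
    ratios = [design / code for design, code in past_projects]
    return design_size / median(ratios)


# Hypothetical history: (design pages, code size in KLOC).
history = [(100, 20.0), (150, 25.0), (80, 20.0)]  # ratios 5, 6 and 4
predicted = predict_code_length(120, history)      # 120 / 5
```

The median is used rather than the mean so that one unusual past project does not dominate the prediction.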

3. REUSE
- Reuse improves productivity and quality.
- A unit of code may be reused with or without modification:
  - Reused verbatim: the code in the unit is reused without any changes.
  - Slightly modified: fewer than 25% of the LOC in the unit are modified.
  - Extensively modified: 25% or more of the LOC are modified.
  - New: newly developed code.
Hewlett-Packard considers three levels of code:
1. New code
2. Reused code: used without any modification
3. Leveraged code: a modification of existing code
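The four reuse categories can be expressed as a small classifier. This is a sketch under the thresholds listed above; the function name `classify_reuse` and its parameters are hypothetical.

```python
def classify_reuse(total_loc, modified_loc, is_new=False):
    """Classify a unit of code by how much of it was modified on reuse."""
    if is_new:
        return "new"
    if modified_loc == 0:
        return "reused verbatim"
    fraction = modified_loc / total_loc
    # Fewer than 25% of lines changed counts as a slight modification.
    return "slightly modified" if fraction < 0.25 else "extensively modified"


examples = [
    classify_reuse(200, 0),           # untouched unit
    classify_reuse(200, 40),          # 20% modified
    classify_reuse(200, 50),          # exactly 25% modified
    classify_reuse(200, 0, is_new=True),
]
```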

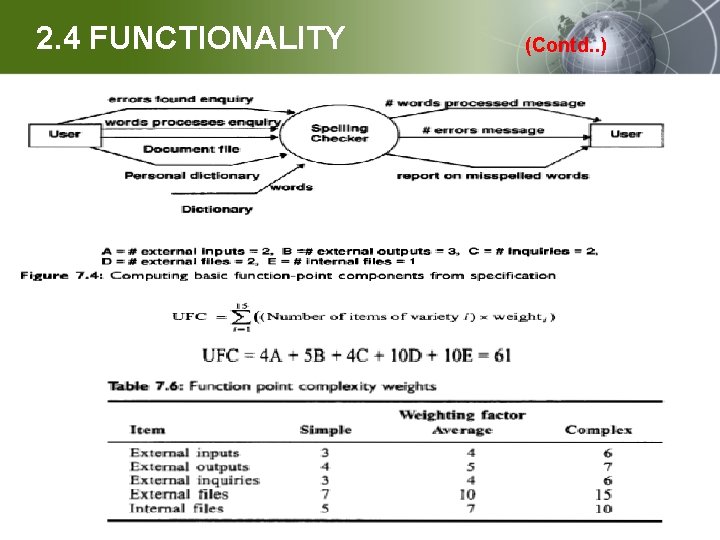

2.4 FUNCTIONALITY
2.4.1 Albrecht's function point approach: effort estimation based on FP
- The approach is based on function points (FP).
- FP measures the amount of functionality described by a specification.
- UFC (unadjusted function point count): from some representation of the software, we determine the number of items of the following types:
1. External inputs: items provided by the user that describe distinct application-oriented data (file names, menu selections); inquiries are not included.
2. External outputs: items provided to the user that generate application-oriented data, e.g. reports.
3. External inquiries: inputs requiring a response.
4. External files: machine-readable interfaces to other systems.
5. Internal files: logical master files in the system.
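A sketch of how a UFC could be computed from the five item counts. The weight table is an assumption: it uses the commonly quoted Albrecht weights, which are not written out in this section, and the example counts are hypothetical.

```python
# Assumed weights (commonly quoted Albrecht values), keyed by item type and complexity.
WEIGHTS = {
    "external_inputs":    {"simple": 3, "average": 4,  "complex": 6},
    "external_outputs":   {"simple": 4, "average": 5,  "complex": 7},
    "external_inquiries": {"simple": 3, "average": 4,  "complex": 6},
    "external_files":     {"simple": 5, "average": 7,  "complex": 10},
    "internal_files":     {"simple": 7, "average": 10, "complex": 15},
}


def unadjusted_function_count(counts):
    """Sum weight * count over {(item_type, complexity): count}."""
    return sum(WEIGHTS[item][level] * n for (item, level), n in counts.items())


# Hypothetical specification: 2 average inputs, 3 average outputs, 1 simple internal file.
counts = {
    ("external_inputs", "average"): 2,
    ("external_outputs", "average"): 3,
    ("internal_files", "simple"): 1,
}
ufc = unadjusted_function_count(counts)  # 2*4 + 3*5 + 1*7
```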

2. 4 FUNCTIONALITY (Contd. . )

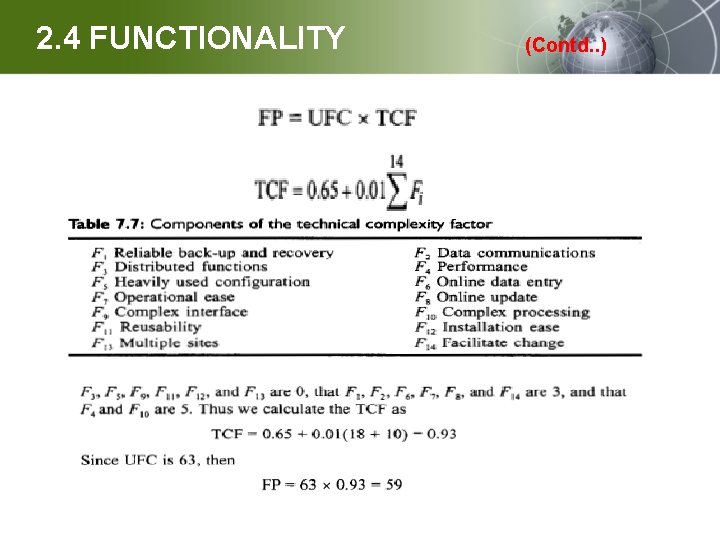


2.4 FUNCTIONALITY (Contd.)
LIMITATIONS OF ALBRECHT'S FP:
1. Problem with subjectivity in the technology factor: the TCF may range from 0.65 to 1.35, so the UFC can be changed by ±35%.
2. Problem with double counting: internal complexity can be accounted for twice, once in weighting the inputs for the UFC and again in the TCF.
3. Problem with counter-intuitive values: when each Fi is "average" and rated 3, we would expect the TCF to be 1; instead the formula yields 1.07.
4. Problem with accuracy: the TCF does not significantly improve resource estimates and does not seem useful in increasing the accuracy of prediction.
5. Problem with early life-cycle use: FP calculation requires a full software specification; a user requirements document is not sufficient.

2.4 FUNCTIONALITY (Contd.)
6. Problem with changing requirements: the number and complexity of inputs, outputs, enquiries and other FP-related data will be underestimated in a specification because they are not well understood early in a project.
7. Problem with differentiating specified items: calculation of FP from a specification cannot be completely automated.
8. Problem with technology dependence: the counting rules for FP may require adjustment to the particular method being used.
9. Problem with application domain: the use of FP in real-time and scientific applications is controversial.
10. Problem with subjective weighting: the weights for calculating the UFC were determined subjectively from IBM experience; these values may not be appropriate in other development environments.
11. Problem with measurement theory:
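The values quoted in limitations 1 and 3 (0.65, 1.35 and 1.07) are consistent with the usual technical complexity factor formula, TCF = 0.65 + 0.01 × (sum of 14 factors Fi, each rated 0 to 5). That formula is not written out in this section, so the sketch below treats it as an assumption; the function name is hypothetical.

```python
def technical_complexity_factor(ratings):
    """TCF = 0.65 + 0.01 * sum(F_i), assuming 14 factors each rated 0..5."""
    if len(ratings) != 14 or any(not 0 <= f <= 5 for f in ratings):
        raise ValueError("expected 14 ratings, each between 0 and 5")
    return 0.65 + 0.01 * sum(ratings)


tcf_min = technical_complexity_factor([0] * 14)  # every factor irrelevant
tcf_avg = technical_complexity_factor([3] * 14)  # every factor rated "average"
tcf_max = technical_complexity_factor([5] * 14)  # every factor essential
```

Under this formula the adjusted count is FP = UFC × TCF, so an all-average system is still scaled up by about 7%, which is the counter-intuitive value noted in limitation 3.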

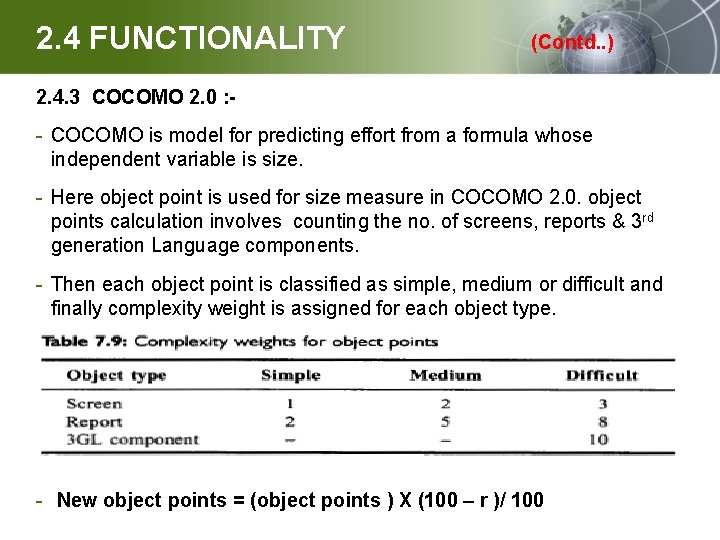

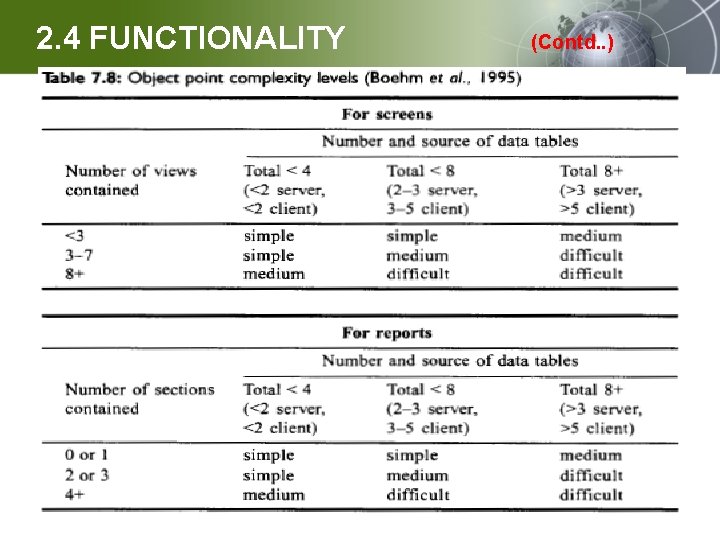

2.4 FUNCTIONALITY (Contd.)
2.4.3 COCOMO 2.0:
- COCOMO is a model for predicting effort from a formula whose independent variable is size.
- COCOMO 2.0 uses object points as the size measure. Calculating object points involves counting the number of screens, reports and third-generation language (3GL) components.
- Each object is classified as simple, medium or difficult, and a complexity weight is assigned to each object type.
- With r the percentage of reuse: New object points = (object points) × (100 - r) / 100
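A sketch of the object point calculation and the reuse adjustment. The weight table is an assumption based on the commonly quoted COCOMO 2.0 values and is not given in this section; the object counts and the reuse percentage are hypothetical.

```python
# Assumed complexity weights per object type (commonly quoted values).
OBJECT_WEIGHTS = {
    "screen": {"simple": 1, "medium": 2, "difficult": 3},
    "report": {"simple": 2, "medium": 5, "difficult": 8},
    "3gl_component": {"difficult": 10},
}


def object_points(objects):
    """Sum weight * count over (object_type, complexity, count) triples."""
    return sum(OBJECT_WEIGHTS[kind][level] * count for kind, level, count in objects)


def new_object_points(points, reuse_percent):
    """Apply the reuse adjustment: points * (100 - r) / 100."""
    return points * (100 - reuse_percent) / 100


# Hypothetical system: 4 simple screens, 2 medium reports, 1 3GL component.
objects = [("screen", "simple", 4), ("report", "medium", 2), ("3gl_component", "difficult", 1)]
total = object_points(objects)           # 4*1 + 2*5 + 1*10
adjusted = new_object_points(total, 25)  # 25% of the objects are reused
```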


2.4 FUNCTIONALITY (Contd.)
3. DeMarco's approach
- DeMarco proposed a functionality measure based on structured analysis and design notation.
- The approach involves bang metrics (specification weight metrics).
- There are two bang measures:
1. Function-strong systems: based on the number of functional primitives (lowest-level bubbles) in the DFD. The basic functional primitive count is weighted according to the type of functional primitive and the number of data tokens it uses.
2. Data-strong systems: based on the number of entities in the ER model. The basic entity count is weighted according to the number of relationships involving each entity.

2.5 COMPLEXITY
Two aspects of complexity, by resource:
1. Time complexity: the resource is computer time.
2. Space complexity: the resource is computer memory.
Interpretations of complexity:
a. Problem complexity: measures the complexity of the underlying (basic) problem.
b. Algorithmic complexity: measures the efficiency of the software.
c. Structural complexity: measures the structure of the software used to implement the algorithm.
d. Cognitive complexity: measures the effort required to understand the software.

2.5 COMPLEXITY (Contd.)
2.5.1 Measuring algorithmic efficiency
- Determine the time and memory an algorithm needs. Ex: for the binary search algorithm, the maximum number of comparisons is an internal attribute of the algorithm.
- Measuring efficiency: time efficiency can be measured by running the program through a particular compiler on a particular machine with a particular input and measuring the actual processing time.
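The binary search example can be made concrete by counting how many elements the algorithm examines, which depends only on the algorithm and the input size, not on the machine. This is a minimal sketch; the function name `binary_search_probes` is hypothetical.

```python
def binary_search_probes(sorted_items, target):
    """Return (index or -1, number of elements examined)."""
    lo, hi, probes = 0, len(sorted_items) - 1, 0
    while lo <= hi:
        mid = (lo + hi) // 2
        probes += 1                      # one element examined per iteration
        if sorted_items[mid] == target:
            return mid, probes
        if sorted_items[mid] < target:
            lo = mid + 1
        else:
            hi = mid - 1
    return -1, probes


data = list(range(1000))
# Worst case over every present value and two absent ones.
worst = max(binary_search_probes(data, t)[1] for t in range(-1, 1001))
bound = len(data).bit_length()  # floor(log2(n)) + 1 for n >= 1
```

Timing the same function on a particular machine would instead give an external, environment-dependent measurement of efficiency.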

2.5 COMPLEXITY (Contd.)
Big-O notation
- Big-O notation is the language we use to describe how an algorithm's running time grows. It is how we compare the efficiency of different approaches to a problem.
- It expresses the efficiency of an algorithm as the time the algorithm takes to run as a function of the input size.

MEASURING INTERNAL PRODUCT ATTRIBUTES : STRUCTURE

2.6.1 Types of Structural Measure
- Size tells us about the effort needed to create a product.
- The structure of the product also plays a part, not only in the required development effort but also in how the product is maintained.
- Structure has at least three parts:
  - Control flow structure
  - Data flow structure
  - Data structure

2.7 CONTROL FLOW STRUCTURE
- Control flow structure: the sequence in which instructions are executed. It also reflects the iterative and looping nature of a program.
- Data flow structure: the behavior of data as it interacts with the program. It keeps track of a data item as it is created or handled by the program.
- Data structure: the organization of the data itself, independent of the program. Sometimes a program is complex because of a complex data structure rather than complex control or data flow.

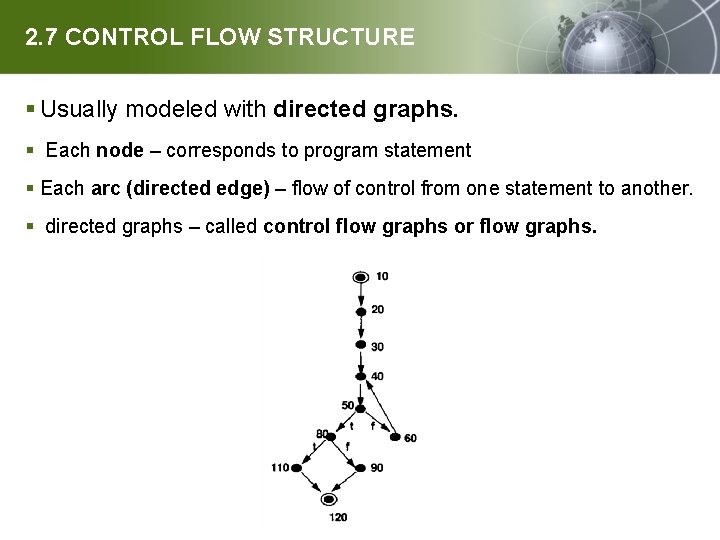

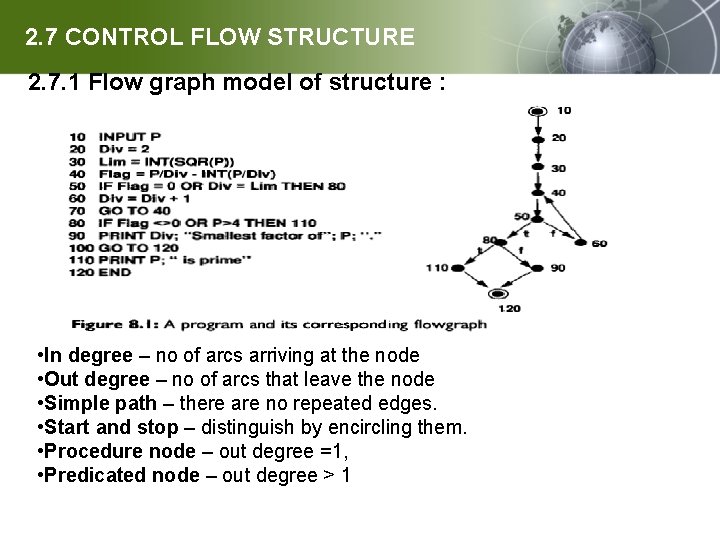

2.7 CONTROL FLOW STRUCTURE
- Control flow is usually modeled with directed graphs.
- Each node corresponds to a program statement.
- Each arc (directed edge) indicates the flow of control from one statement to another.
- Such directed graphs are called control flow graphs or flowgraphs.

2.7 CONTROL FLOW STRUCTURE
2.7.1 Flowgraph model of structure:
- In-degree: the number of arcs arriving at a node.
- Out-degree: the number of arcs leaving a node.
- Simple path: a path with no repeated edges.
- Start and stop nodes: distinguished by encircling them.
- Procedure node: a node with out-degree = 1.
- Predicate node: a node with out-degree > 1.
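The flowgraph terms above can be illustrated with a flowgraph stored as a list of arcs. This is a sketch; the if-then-else flowgraph and the function name `classify_nodes` are hypothetical.

```python
from collections import Counter


def classify_nodes(arcs):
    """Return in-degrees, out-degrees and a node kind for each node of a flowgraph."""
    out_degree = Counter(src for src, _ in arcs)
    in_degree = Counter(dst for _, dst in arcs)
    nodes = {node for arc in arcs for node in arc}
    kinds = {}
    for node in nodes:
        if out_degree[node] == 1:
            kinds[node] = "procedure"
        elif out_degree[node] > 1:
            kinds[node] = "predicate"
        else:
            kinds[node] = "stop"   # no outgoing arcs
    return in_degree, out_degree, kinds


# Hypothetical flowgraph of an if-then-else statement.
arcs = [("start", "test"), ("test", "then"), ("test", "else"),
        ("then", "stop"), ("else", "stop")]
in_degree, out_degree, kinds = classify_nodes(arcs)
```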

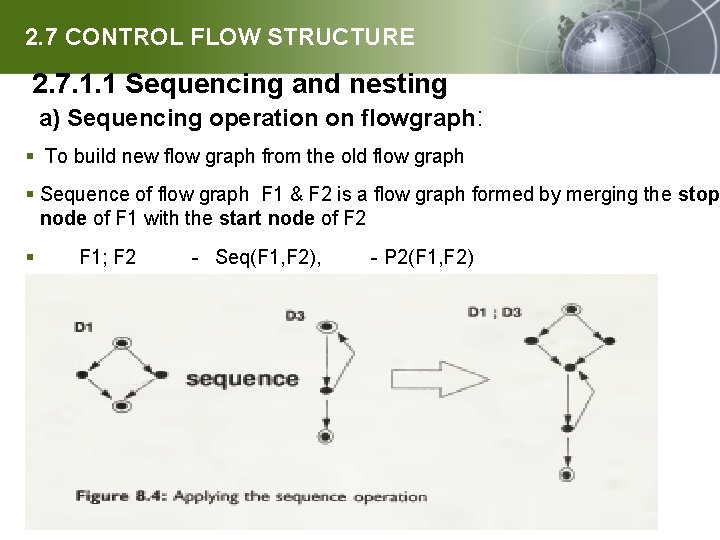

2.7 CONTROL FLOW STRUCTURE
2.7.1.1 Sequencing and nesting
a) Sequencing operation on flowgraphs:
- Sequencing builds a new flowgraph from existing flowgraphs.
- The sequence of flowgraphs F1 and F2 is the flowgraph formed by merging the stop node of F1 with the start node of F2.
- Notations: F1; F2, Seq(F1, F2) or P2(F1, F2)

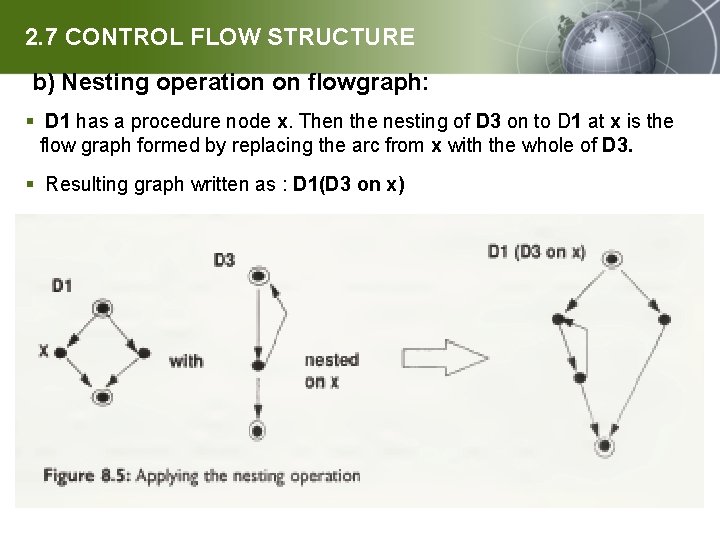

2.7 CONTROL FLOW STRUCTURE
b) Nesting operation on flowgraphs:
- Suppose D1 has a procedure node x. The nesting of D3 onto D1 at x is the flowgraph formed by replacing the arc from x with the whole of D3.
- The resulting graph is written D1(D3 on x).

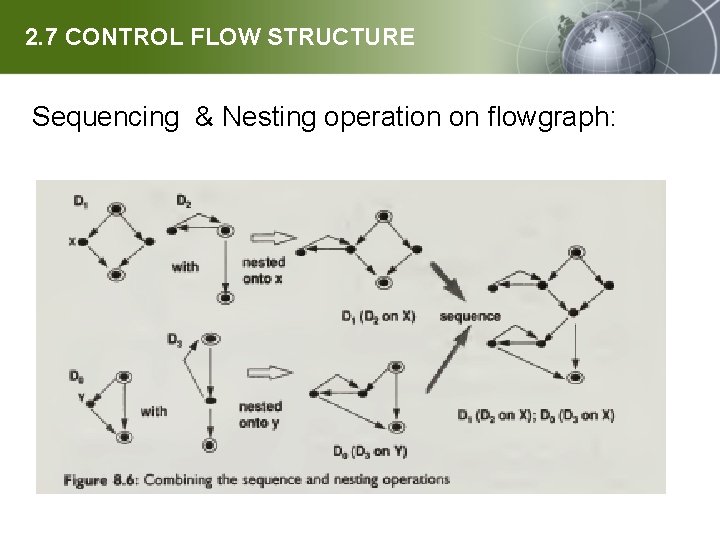

2. 7 CONTROL FLOW STRUCTURE Sequencing & Nesting operation on flowgraph:

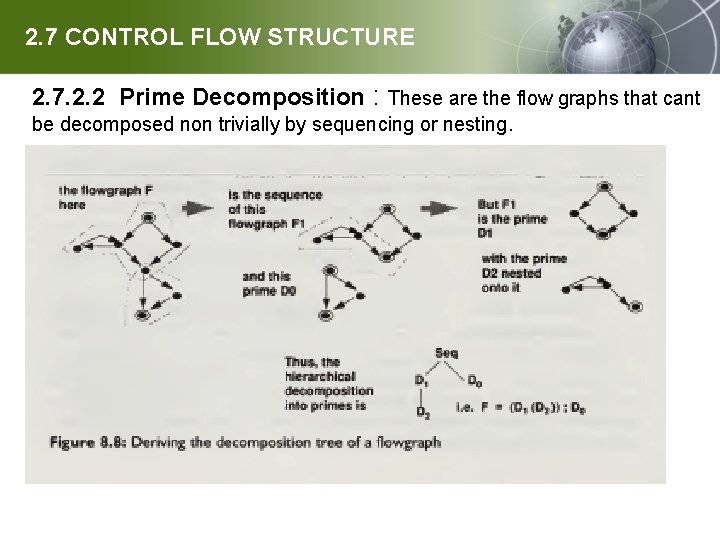

2.7 CONTROL FLOW STRUCTURE
2.7.2.2 Prime Decomposition: primes are the flowgraphs that cannot be decomposed non-trivially by sequencing or nesting. Prime decomposition expresses a flowgraph in terms of these primes.

2.7 CONTROL FLOW STRUCTURE
2.7.2 Hierarchical Measures:
2.7.2.1 McCabe's cyclomatic complexity measure:
- McCabe proposed that program complexity be measured by the cyclomatic number of the program's flowgraph:
v(F) = e - n + 2
where F has e arcs and n nodes.
- The cyclomatic number measures the number of linearly independent paths through F.
- Equivalently, v(F) = 1 + d, where d is the number of predicate nodes in F.
Thus, if v is a measure of "complexity", it follows that:
1. The complexity of primes depends on the number of predicates in them.
2. The complexity of a sequence is equal to the sum of the complexities of the components minus the number of components plus one.
3. The complexity of nesting components on a prime F is equal to the complexity of F plus the sum of the complexities of the components minus the number of components.

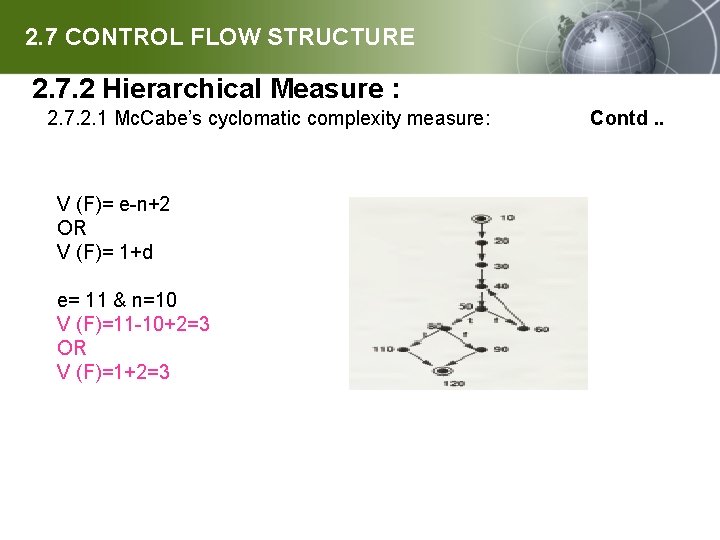

2.7 CONTROL FLOW STRUCTURE
2.7.2 Hierarchical Measures:
2.7.2.1 McCabe's cyclomatic complexity measure (Contd.)
v(F) = e - n + 2 or v(F) = 1 + d
With e = 11, n = 10 and d = 2:
v(F) = 11 - 10 + 2 = 3 or v(F) = 1 + 2 = 3
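Both formulas can be checked on a small flowgraph. The figure for the worked example is not reproduced here, so the graph below is a hypothetical one chosen to have the same counts (11 arcs, 10 nodes, 2 predicate nodes). The 1 + d form is applied under the assumption that every predicate node has exactly two outgoing arcs.

```python
from collections import Counter


def cyclomatic_from_counts(arcs):
    """v(F) = e - n + 2 for a flowgraph given as (source, target) arcs."""
    nodes = {node for arc in arcs for node in arc}
    return len(arcs) - len(nodes) + 2


def cyclomatic_from_predicates(arcs):
    """v(F) = 1 + d, where d is the number of nodes with out-degree > 1."""
    out_degree = Counter(src for src, _ in arcs)
    return 1 + sum(1 for degree in out_degree.values() if degree > 1)


# Hypothetical flowgraph: a chain 1 -> 2 -> ... -> 10 (9 arcs),
# plus a forward branch 3 -> 5 and a loop back 8 -> 6.
arcs = [(i, i + 1) for i in range(1, 10)] + [(3, 5), (8, 6)]
v_counts = cyclomatic_from_counts(arcs)
v_predicates = cyclomatic_from_predicates(arcs)
```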

2.7 CONTROL FLOW STRUCTURE
2.7.2 Hierarchical Measures:
2.7.2.2 McCabe's essential complexity measure:
- McCabe also proposed a measure to capture the overall level of structuredness in a program.
- For a program with flowgraph F, the essential complexity ev is defined as
ev(F) = v(F) - m
where m is the number of subflowgraphs of F that are D-structured primes.

2.7 CONTROL FLOW STRUCTURE
2.7.3 Test Coverage Measures:
- The structure of a module is related to the difficulty of testing it.
- Given a program P produced for a specification S, we test P with input i and check whether the output for i satisfies the specification.
- We define test cases as pairs (i, S(i)).
Ex: P is a program for the following specification S of exam scores:
- For a score under 45, the program outputs "fail".
- For a score between 45 and 80, the program outputs "pass".
- For a score above 80, the program outputs "pass with distinction".
- Any input other than a numerical value produces the error message "invalid input".
Test cases are: (40, "fail"), (60, "pass"), (90, "pass with distinction"), (fifty, "invalid input")
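The exam-score specification and its four test cases can be run directly. This is a sketch of one possible program P; treating 45 and 80 as part of the "pass" band is an assumption, since the specification only says "between", and the function name `grade` is hypothetical.

```python
def grade(score):
    """One possible program P for the exam-score specification S."""
    # Booleans are excluded explicitly because Python treats them as integers.
    if isinstance(score, bool) or not isinstance(score, (int, float)):
        return "invalid input"
    if score < 45:
        return "fail"
    if score <= 80:          # assumption: the boundaries 45 and 80 both pass
        return "pass"
    return "pass with distinction"


# Test cases as pairs (i, S(i)).
test_cases = [
    (40, "fail"),
    (60, "pass"),
    (90, "pass with distinction"),
    ("fifty", "invalid input"),
]
results = [grade(i) == expected for i, expected in test_cases]
```

These four cases are black-box tests: they come from the specification alone and say nothing about which paths inside `grade` were exercised.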

2.7 CONTROL FLOW STRUCTURE
2.7.3 Test Coverage Measures (Contd.)
Test strategies:
1. Black-box (closed-box) testing: test cases are derived from the specification or requirements without reference to the code or its structure.
2. White-box (open-box) testing: test cases are based on knowledge of the internal program structure.
Limitations of white-box testing:
1. Infeasible paths: an infeasible path is a program path that cannot be executed for any input.
2. Satisfying a white-box strategy does not guarantee adequate software testing.
3. Knowing the set of paths that satisfies a strategy does not tell us how to create test cases to match the paths.
Minimum number of test cases:
- It is important to know the minimum number of test cases needed to satisfy a strategy.
- It helps in planning the testing.
- It helps in generating data for each test case.
- It helps in understanding the time testing will take.

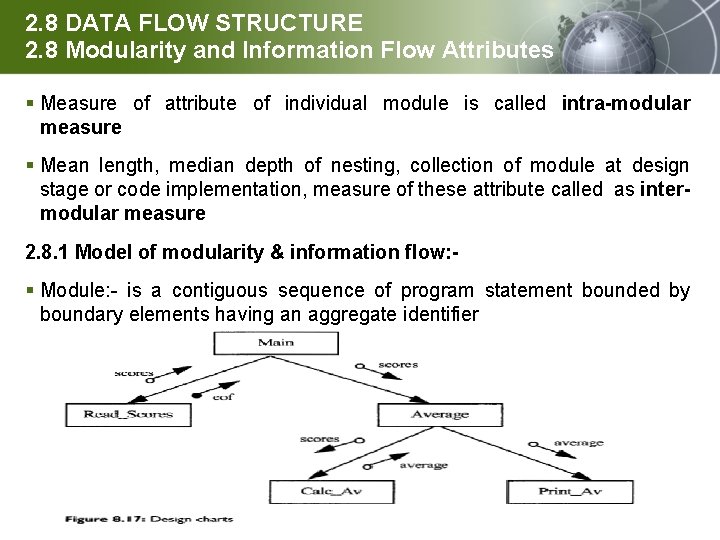

2.8 DATA FLOW STRUCTURE
2.8 Modularity and Information Flow Attributes
- A measure of an attribute of an individual module is called an intra-modular measure.
- Measures of attributes of a collection of modules, at the design stage or in the implemented code (for example mean length or median depth of nesting), are called inter-modular measures.
2.8.1 Model of modularity & information flow:
- Module: a contiguous sequence of program statements, bounded by boundary elements, having an aggregate identifier.

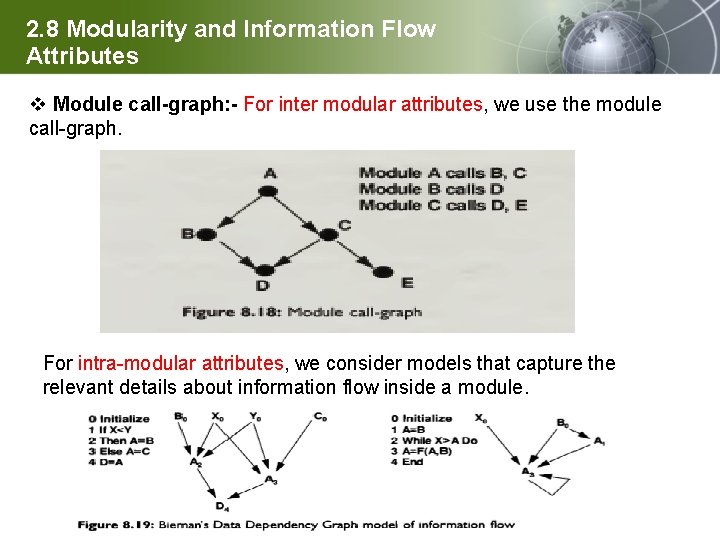

2.8 Modularity and Information Flow Attributes
- Module call-graph: for inter-modular attributes, we use the module call-graph. For intra-modular attributes, we consider models that capture the relevant details about information flow inside a module.

2.8 Modularity and Information Flow Attributes
2.8.2 Global modularity:
Global modularity is difficult to define because there are different views of what modularity means. For example, we can take average module length as a measure of global modularity, found by examining the mean length of all modules in the system. Hausen suggests describing global modularity in terms of several specific views of modularity, such as:
M1 = modules/procedures
M2 = modules/variables
Both Hausen's and Boehm's observations suggest that we focus first on specific aspects of modularity and then construct more general models from them.

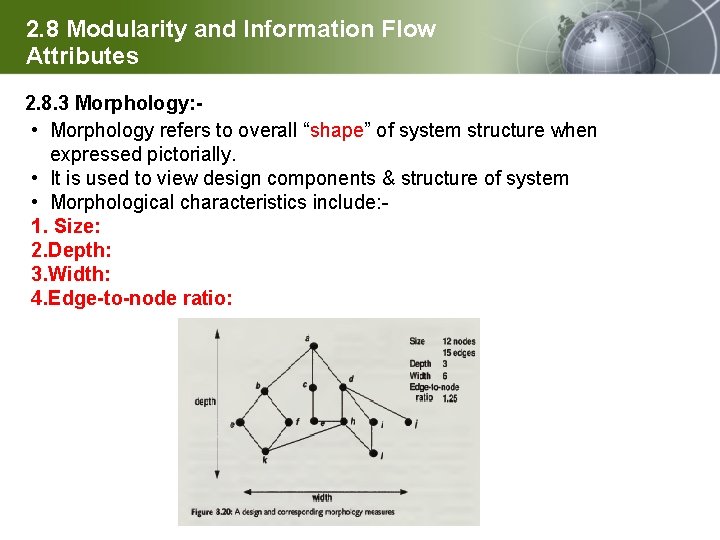

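2.8 Modularity and Information Flow Attributes
2.8.3 Morphology:
- Morphology refers to the overall "shape" of the system structure when expressed pictorially.
- It is used to view the design components and structure of a system.
- Morphological characteristics include:
1. Size: measured as the number of nodes, the number of edges, or a combination of these.
2. Depth: the length of the longest path from the root node to a leaf node.
3. Width: the maximum number of nodes at any one level.
4. Edge-to-node ratio: a measure of connectivity density.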
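The morphological characteristics can be computed from a module call-graph. This is a sketch under simplifying assumptions: the graph is acyclic, and a module's level is its breadth-first distance from the root, which matches the longest-path depth only for tree-shaped or cleanly layered designs. The call graph and the function name `morphology` are hypothetical.

```python
from collections import Counter, deque


def morphology(call_graph, root):
    """Size, depth, width and edge-to-node ratio of a call graph reachable from root."""
    level = {root: 0}
    queue = deque([root])
    while queue:
        module = queue.popleft()
        for callee in call_graph.get(module, []):
            if callee not in level:          # first visit fixes the level
                level[callee] = level[module] + 1
                queue.append(callee)
    nodes = len(level)
    edges = sum(len(callees) for module, callees in call_graph.items() if module in level)
    return {
        "nodes": nodes,
        "edges": edges,
        "depth": max(level.values()),
        "width": max(Counter(level.values()).values()),
        "edge_to_node": edges / nodes,
    }


# Hypothetical design: main calls three modules; two of them share "log".
graph = {"main": ["read", "calc", "print"], "calc": ["sqrt", "log"], "print": ["log"]}
shape = morphology(graph, "main")
```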

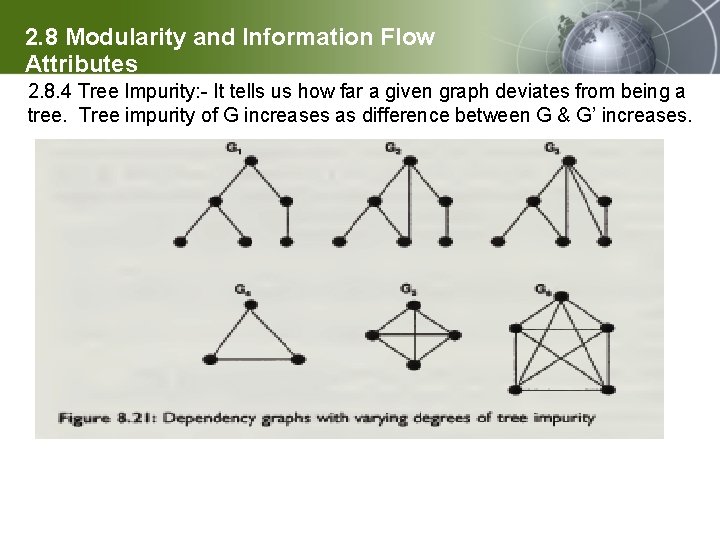

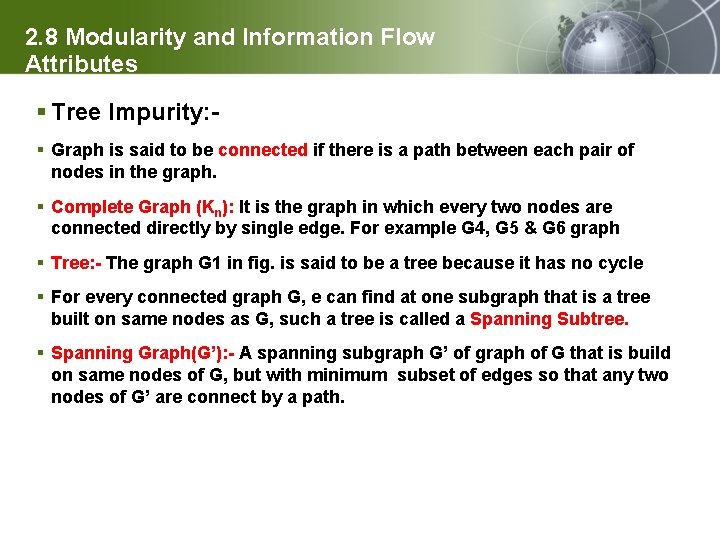

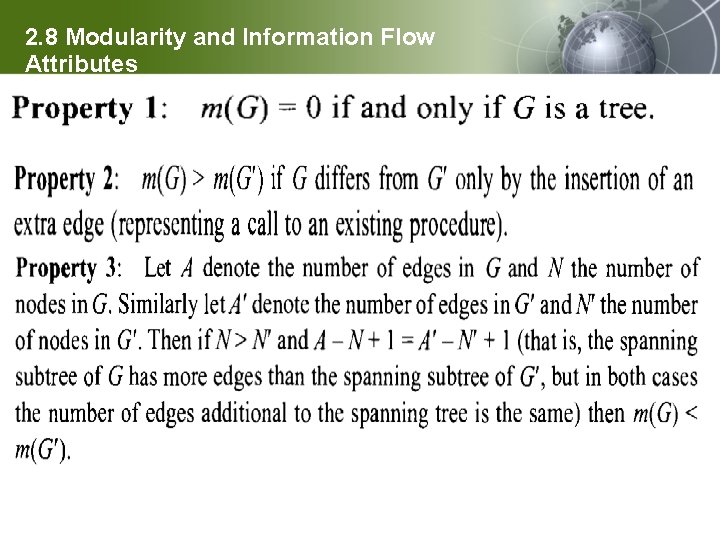

2.8 Modularity and Information Flow Attributes
2.8.4 Tree Impurity: tree impurity tells us how far a given graph G deviates from being a tree. The tree impurity of G increases as the difference between G and its spanning subtree G' increases.

2.8 Modularity and Information Flow Attributes
Tree Impurity:
- A graph is connected if there is a path between each pair of nodes in the graph.
- Complete graph (Kn): a graph in which every two nodes are connected directly by a single edge, for example the graphs G4, G5 and G6.
- Tree: a connected graph with no cycles. The graph G1 in the figure is a tree.
- For every connected graph G, we can find at least one subgraph that is a tree built on the same nodes as G; such a tree is called a spanning subtree.
- Spanning subgraph (G'): a spanning subgraph G' of a graph G is built on the same nodes as G, but with a minimum subset of edges so that any two nodes of G' are connected by a path.

2. 8 Modularity and Information Flow Attributes

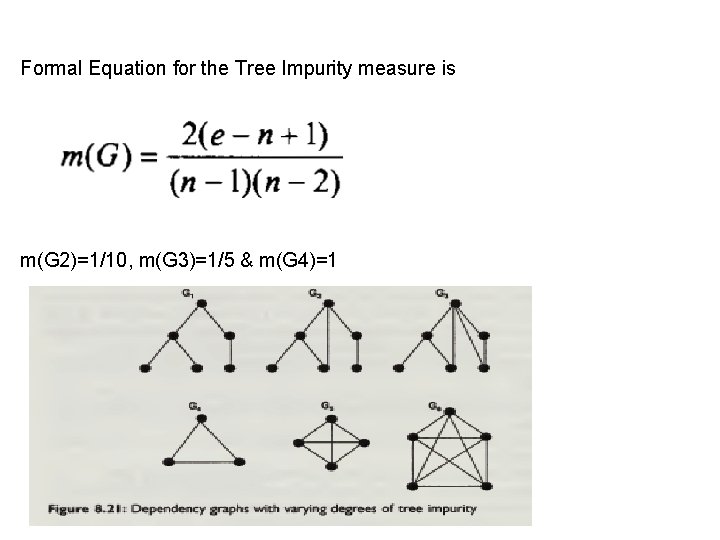

Applying the formal equation for the tree impurity measure gives m(G2) = 1/10, m(G3) = 1/5 and m(G4) = 1.
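The equation itself is not reproduced in the text. A commonly used form of the tree impurity measure is m(G) = 2(e - n + 1) / ((n - 1)(n - 2)), which is 0 for a tree and 1 for a complete graph. The sketch below assumes that form; the node and edge counts are hypothetical values chosen to reproduce the quoted results, not taken from the figures.

```python
from fractions import Fraction


def tree_impurity(nodes, edges):
    """m(G) = 2(e - n + 1) / ((n - 1)(n - 2)) for a connected graph with n >= 3."""
    if nodes < 3:
        raise ValueError("the measure needs at least three nodes")
    return Fraction(2 * (edges - nodes + 1), (nodes - 1) * (nodes - 2))


# Hypothetical six-node graphs.
m_tree = tree_impurity(6, 5)       # spanning tree: n - 1 edges
m_one_extra = tree_impurity(6, 6)  # one edge beyond a tree
m_two_extra = tree_impurity(6, 7)  # two edges beyond a tree
m_complete = tree_impurity(6, 15)  # complete graph K6: n(n - 1)/2 edges
```

The numerator counts the edges beyond a spanning subtree, and the denominator is the largest value that count can take, reached by the complete graph.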

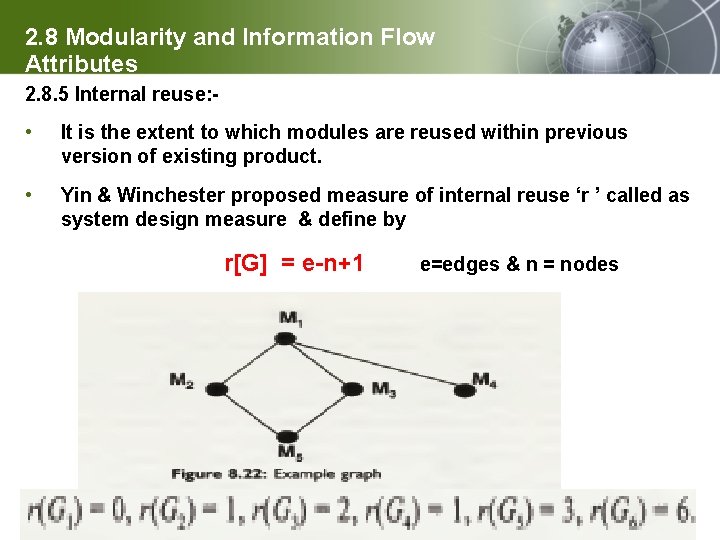

2.8 Modularity and Information Flow Attributes
2.8.5 Internal reuse:
- Internal reuse is the extent to which modules within a product are used multiple times within the same product.
- Yin and Winchester proposed a measure of internal reuse r, called the system design measure, defined by
r(G) = e - n + 1
where e is the number of edges and n the number of nodes of the module call-graph G.

2.8 Modularity and Information Flow Attributes
2.8.6 Coupling:
- Coupling is the degree of interdependence between modules.
- The classification defines six relations on the set of pairs of modules x and y:
1. No coupling relation R0: x and y have no communication.
2. Data coupling relation R1: x and y communicate by parameters.
3. Stamp coupling relation R2: x and y accept the same record type as a parameter.
4. Control coupling relation R3: x passes a parameter to y to control its behavior.
5. Common coupling relation R4: x and y refer to the same global data.
6. Content coupling relation R5: x refers to the inside of y.

2.8 Modularity and Information Flow Attributes
2.8.7 Cohesion:
- Definition: cohesion is the extent to which the individual components of a module are needed to perform the same task.
- Types of cohesion:
1. Functional: the module performs a single function.
2. Sequential: the module performs more than one function, in sequential order.
3. Communicational: the module performs multiple functions on the same body of data.
4. Procedural: the module performs more than one function, related to a general procedure.
5. Temporal: the functions are related only by the fact that they must occur within the same timespan.
6. Logical: the functions are related only logically.
7. Coincidental: the module performs multiple functions that are unrelated.

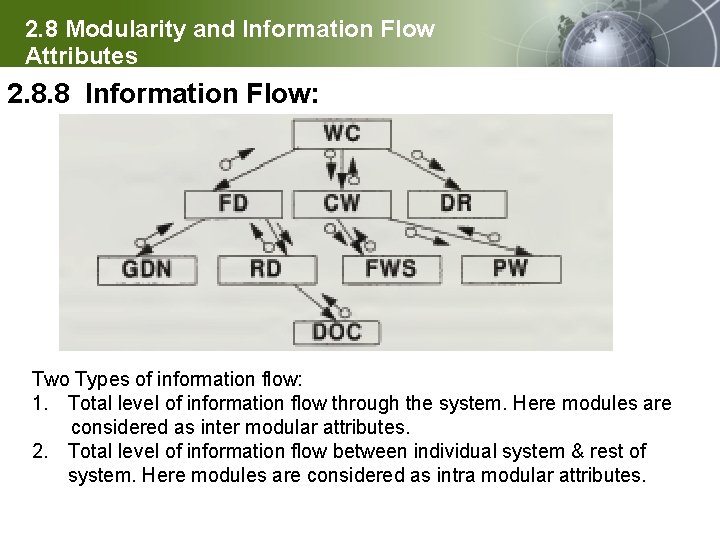

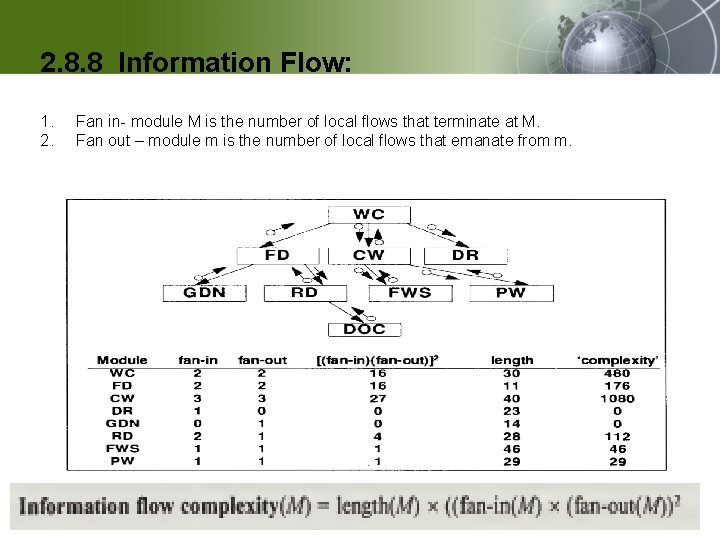

2.8 Modularity and Information Flow Attributes
2.8.8 Information Flow:
Two types of information flow:
1. The total level of information flow through the system, where modules are viewed as atomic components (an inter-modular attribute).
2. The total level of information flow between individual modules and the rest of the system (an intra-modular attribute).

2.8.8 Information Flow: two particular attributes of information flow:
1. Fan-in of a module M: the number of local flows that terminate at M.
2. Fan-out of a module M: the number of local flows that emanate from M.

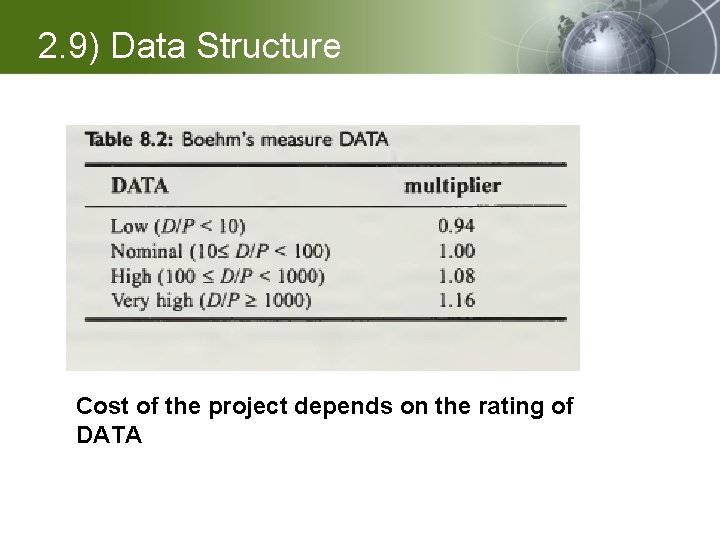

2.9 Data Structure
Local measures:
- Local measures capture the amount of structure in each data item.
- Elliott uses a graph-theoretic approach to analyze and measure properties of data structures.
- Data structure measures can be defined hierarchically in terms of values for the primes and values associated with the various operations.
- Van den Berg uses the same kind of approach to measure the structure of function definitions in functional programming languages.
Global measures:
- We can measure the amount of data for a given system by counting the total number of variables.
- Boehm found several difficulties and problems when using the COCOMO model.
- D/P = (database size in bytes or characters) / (program size in DSI)
- Using this measure, Boehm derived an ordinal scale measure, DATA, to measure the amount of data.

2.9 Data Structure
- The cost of the project depends on the rating of DATA.

External Attribute of Product: Quality
Models:
1) McCall Quality Model
2) Boehm's Quality Model
3) ISO 9126 Standard Quality Model

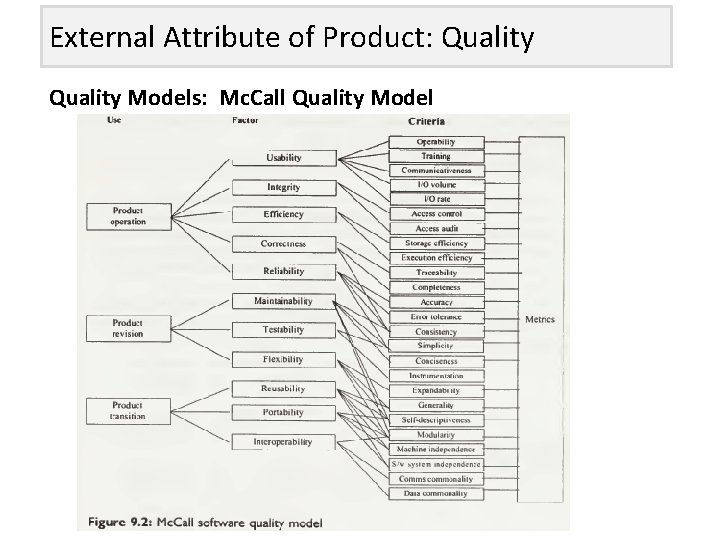

External Attribute of Product: Quality Models: McCall Quality Model

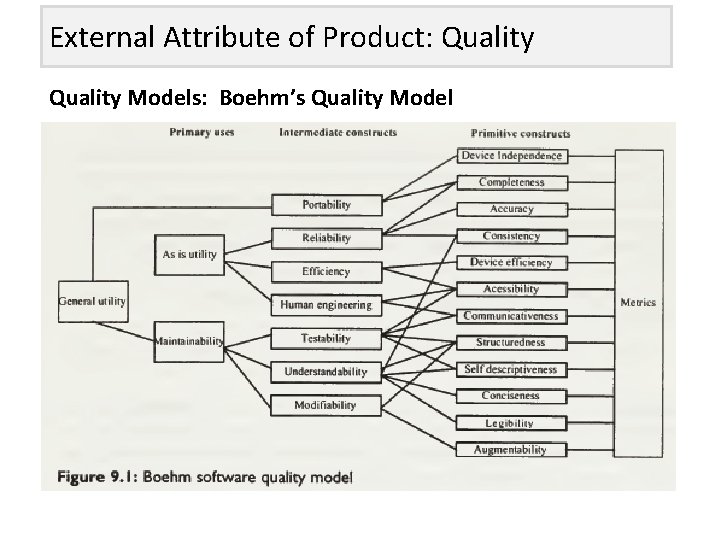

External Attribute of Product: Quality Models: Boehm’s Quality Model

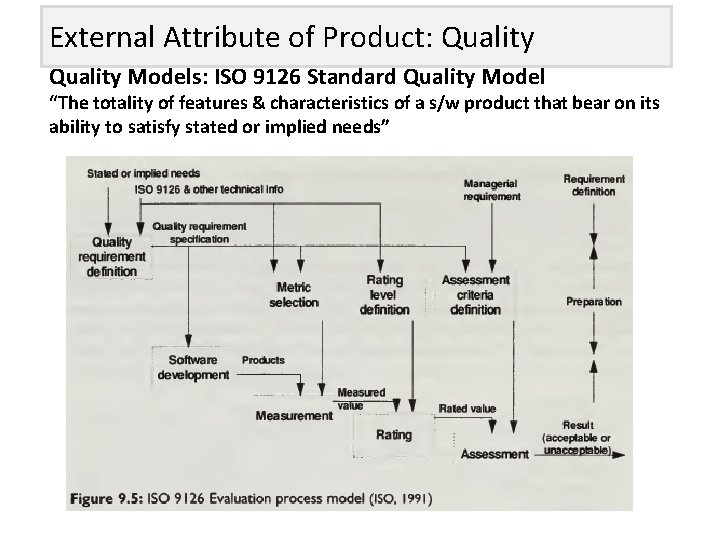

External Attribute of Product: Quality Models: ISO 9126 Standard Quality Model
Quality is defined as "the totality of features and characteristics of a software product that bear on its ability to satisfy stated or implied needs".

External Attribute of Product: Quality Measuring Aspects of Quality: 1) Defect based Quality Measure 2) Usability Measures 3) Maintainability Measures

External Attribute of Product: Quality
Measuring Aspects of Quality:
1) Defect-based quality measure: for any product, two types of defects are possible:
- Known defects
- Latent defects
Defect density = (number of known defects) / (product size)
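The defect density formula is a single division once the size unit is fixed. A minimal sketch, assuming size is measured in KLOC; the figures and the function name are hypothetical.

```python
def defect_density(known_defects, size_kloc):
    """Defect density = number of known defects / product size (here in KLOC)."""
    if size_kloc <= 0:
        raise ValueError("product size must be positive")
    return known_defects / size_kloc


# Hypothetical product: 30 known defects in 12 KLOC.
density = defect_density(30, 12.0)  # defects per KLOC
```

Only known defects enter the numerator, so a low density can reflect either good quality or weak testing that has left many defects latent.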

External Attribute of Product: Quality
Measuring Aspects of Quality:
2) Usability measures: the usability of a software product is the extent to which the product is convenient and practical to use. Measurable attributes of usability are:
- Entry level: experience, age, etc.
- Learnability: speed of learning
- Handling ability: speed of working when trained, or errors made when working at normal speed

External Attribute of Product: Quality
Measuring Aspects of Quality:
3) Maintainability measures: software is said to be maintainable when it is easy to understand, correct or enhance. Types of maintenance are:
- Corrective maintenance
- Adaptive maintenance
- Preventive maintenance
- Perfective maintenance

Information flow
- A local direct flow exists if:
1. a module invokes a second module and passes information to it, or
2. the invoked module returns a result to the caller.
- A local indirect flow exists if:
1. the invoked module returns information that is subsequently passed to a second invoked module.
- Global flow exists

- Fan-in of a module M: the number of local flows that terminate at M, plus the number of data structures from which information is retrieved by M.
- Fan-out of a module M: the number of local flows that emanate from M, plus the number of data structures updated by M.
Shepperd proposed some refinements:
1. Recursive calls of a module should be treated as normal calls.
2. Any variable shared by two or more modules should be treated as a global data structure.
3. Compiler and library modules should be ignored.
4. Indirect local flows should be counted across only one hierarchical level.
5. No attempt should be made to include dynamic analysis of module calls.
6. Duplicate flows should be ignored.
7. Module length should be disregarded, as it is a separate attribute.
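Fan-in and fan-out can be counted from a set of flows between modules. The sketch below covers local flows only and ignores data structures. The complexity formula is not given in this section: the code assumes the commonly cited Henry-Kafura form with the module length factor dropped, (fan-in × fan-out)², in line with refinement 7. The flows and function names are hypothetical.

```python
from collections import Counter


def fan_in_out(flows):
    """Count flows terminating at and emanating from each module."""
    fan_in = Counter(dst for _, dst in flows)
    fan_out = Counter(src for src, _ in flows)
    return fan_in, fan_out


def information_flow_complexity(fan_in, fan_out, module):
    """Assumed measure: (fan-in * fan-out) squared, with module length left out."""
    return (fan_in[module] * fan_out[module]) ** 2


# Hypothetical local flows (source, destination); a set, so duplicate flows are ignored.
flows = {("A", "B"), ("A", "C"), ("B", "D"), ("C", "D"), ("D", "E")}
fan_in, fan_out = fan_in_out(flows)
complexity_d = information_flow_complexity(fan_in, fan_out, "D")
complexity_a = information_flow_complexity(fan_in, fan_out, "A")
```

A module with no incoming or no outgoing flows scores zero, which is one reason the measure is usually read alongside other attributes rather than alone.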

DATA STRUCTURES
- Measuring data structure should be straightforward.
- Measure the amount of structure in each data item.
- Elliott uses a graph-theoretic approach to measure data structure.
- Data structure measures can be defined hierarchically in terms of primes and operations.
- Boehm's ordinal scale measure for the amount of data is called DATA.

DIFFICULTY WITH GENERAL COMPLEXITY MEASURES
- The goal is to generate a single, comprehensive measure to express complexity.
- This single measure should be a powerful indicator of comprehensibility, correctness, maintainability, reliability, testability and ease of implementation.
- Such a measure is also known as a "quality" measure.
- But some of the required properties are contradictory.
- Weyuker has given nine properties (numbered 1 to 9) for software complexity metrics.
- Some properties:
1. Adding code to a program cannot decrease its complexity, so low comprehensibility is not a key factor in complexity.
2. Two program bodies of equal complexity, when each is joined to the same third program, can yield programs of different complexity.

MEASURING EXTERNAL PRODUCT ATTRIBUTES
Modeling software quality
- Early models:
- McCall and Boehm describe quality using a decomposition approach.
- These models focus on the final product and identify key attributes of quality from the user's perspective.
- The key attributes are called quality factors: high-level external attributes such as reliability, usability and maintainability.

- Directly measurable attributes are called quality metrics.
- Two ways to monitor software quality:
1. Fixed model approach
2. Define-your-own-model approach
- The ISO 9126 standard quality model decomposes quality into six factors:
1. Functionality
2. Reliability
3. Efficiency
4. Usability
5. Maintainability
6. Portability

MEASURING ASPECTS OF QUALITY
Defect-based quality measures
- Two types of defects:
1. Known defects: discovered through testing, inspection and other techniques.
2. Latent defects: present in the system but not yet discovered.
- Defect density relates the number of known defects to product size, for example size in LOC.
- Defect density is an acceptable measure to apply to a project and provides useful information.

- Defect counts may include:
1. Post-release failures
2. Residual faults (faults discovered after release)
3. All known faults
4. The set of faults discovered after a fixed point in the software life cycle (ex: after unit testing)
- Defect rate
- Defect density

- Other quality measures based on defect counts:
- Defect density is not the only useful defect-based quality measure.
- Japanese companies defined quality in terms of spoilage.
- Such measures require careful data collection.
Usability measures
- Features that support usability include:
- Well-structured manuals
- Good use of menus and graphics
- Informative error messages
- Help functions
- Consistent interfaces

- External view of usability
- Gilb's approach: usability is assessed by users of a particular type of product.
- Entry level: measured in terms of experience.
- Learnability: measured as speed of learning.
- Handling ability: measured as speed of working when trained.
Ex: COQUAMO provides automated support for setting targets for measuring usability; it provides templates for usability.

- Internal attributes affecting usability
Maintainability measures
- Software measurement remains valuable after delivery of the product, because maintenance involves changes.
- Software is maintainable if it is easy to understand, enhance or correct.
- Measurement can guide maintenance.
- Four types of maintenance:
1. Corrective maintenance
2. Adaptive maintenance
3. Preventive maintenance
4. Perfective maintenance

- Internal attributes affecting maintainability:
- Complexity: describes the level of maintenance effort.
- Structural measures: used for risk avoidance with respect to maintainability.
- The cyclomatic number and similar measures are insufficient as indicators of maintainability, as they capture only a limited view of software structure.


1.2.1 Neglect of Measurement in Software Engineering
- It is difficult to imagine electrical, mechanical and civil engineering without a central role for measurement. Ex: Ohm's law, the relationship between resistance, current and voltage.
- Neither effective nor practical
- For most development projects:
1. We fail to set measurable targets for our software products.
2. We fail to understand and quantify the cost of software products.
3. We cannot quantify the quality of the products we produce.
4. We cannot quantify the improvements in our product development.
