Understanding unstructured texts via Latent Dirichlet Allocation

- Slides: 29

Raphael Cohen, DSaaS, EMC IT, June 2015

Motivation

Let’s start small – one label per document EMC CONFIDENTIAL—INTERNAL USE ONLY. 4

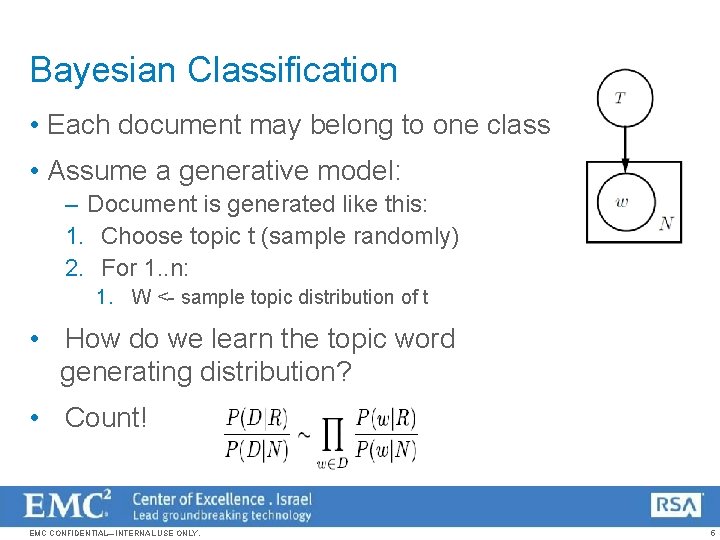

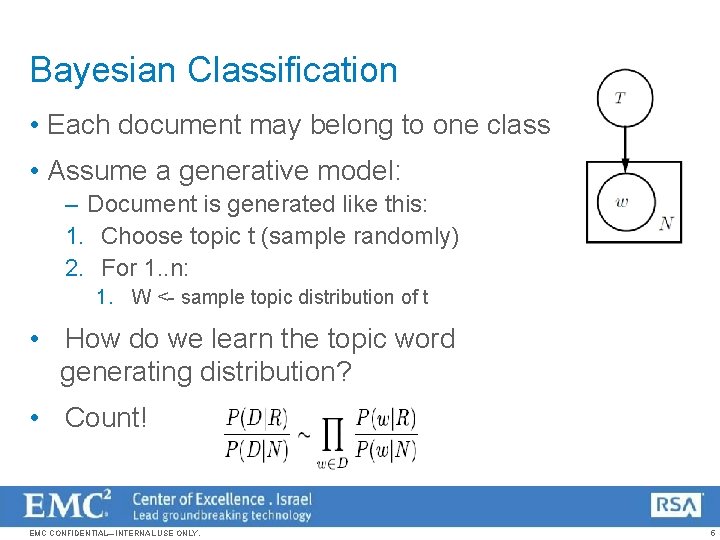

Bayesian Classification

- Each document may belong to one class
- Assume a generative model in which a document is generated like this:
  1. Choose topic t (sample randomly)
  2. For each word position 1..n:
     1. W <- sample from the word distribution of topic t
- How do we learn each topic's word-generating distribution?
- Count!

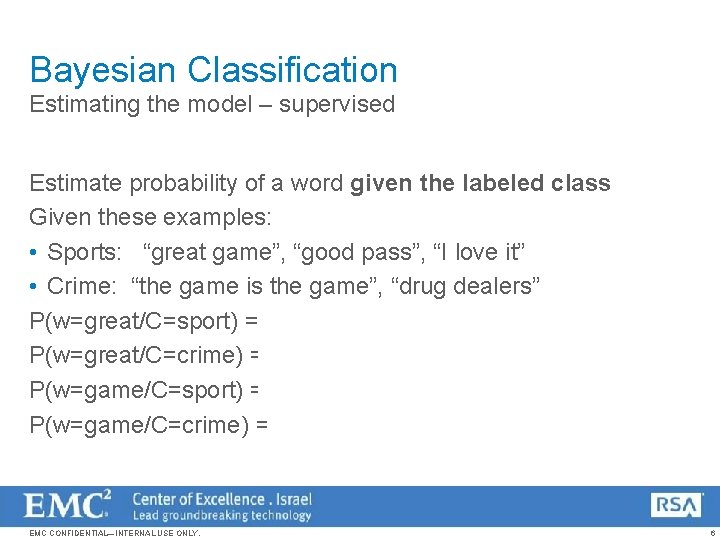

Bayesian Classification: estimating the model (supervised)

Estimate the probability of a word given the labeled class. Given these examples:

- Sports: “great game”, “good pass”, “I love it”
- Crime: “the game is the game”, “drug dealers”

Each class contains 7 word tokens, so counting gives:

- P(w=great | C=sports) = 1/7
- P(w=great | C=crime) = 0/7
- P(w=game | C=sports) = 1/7
- P(w=game | C=crime) = 2/7 (“game” occurs twice in the crime examples)
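The counting step can be sketched in a few lines of Python. This is a minimal illustration using the slide's toy examples; the function and variable names are invented here, and tokenization is plain lowercase whitespace splitting, which is an assumption. Note that "game" occurs twice in the crime examples, so token counting gives 2/7 for that entry.

```python
from collections import Counter
from fractions import Fraction

# Toy training set from the slide: class label -> example documents.
labeled_docs = {
    "sports": ["great game", "good pass", "I love it"],
    "crime": ["the game is the game", "drug dealers"],
}

def word_probabilities(docs):
    """Maximum-likelihood estimate: count of a word / total tokens in the class."""
    tokens = [w for doc in docs for w in doc.lower().split()]
    counts = Counter(tokens)
    total = len(tokens)
    return {w: Fraction(c, total) for w, c in counts.items()}

model = {label: word_probabilities(docs) for label, docs in labeled_docs.items()}

for label in model:
    for word in ("great", "game"):
        # Words never seen in a class get probability 0 under plain counting.
        print(label, word, model[label].get(word, Fraction(0)))
```

Plain counting assigns probability zero to unseen words, which is why practical classifiers usually add smoothing.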

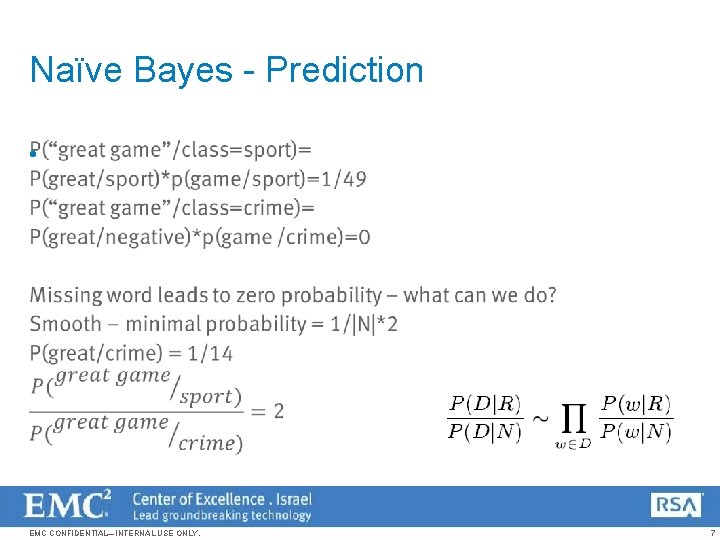

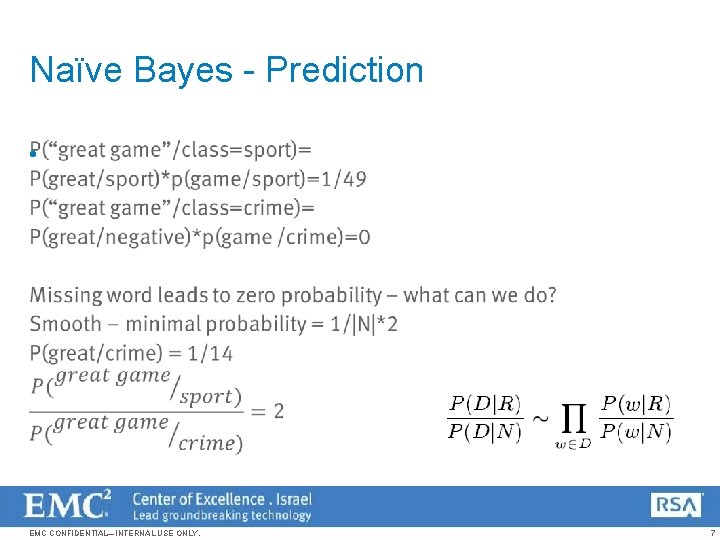

Naïve Bayes - Prediction

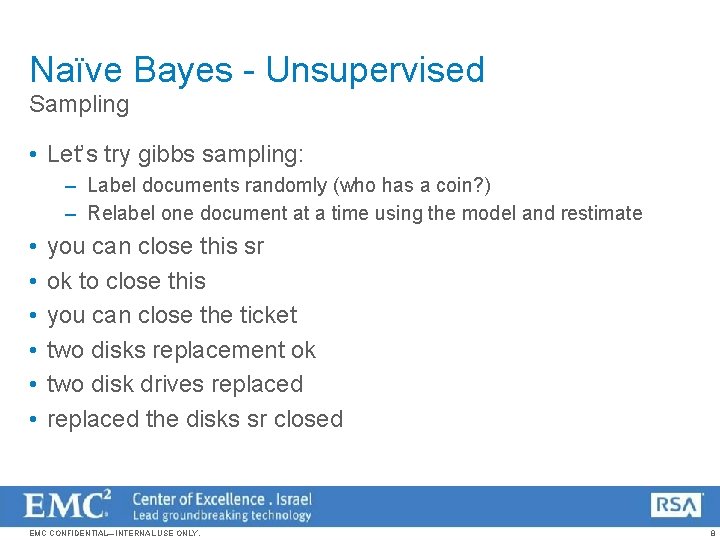
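As a rough sketch of prediction, the usual naïve Bayes rule picks the class c that maximizes log P(c) + Σ log P(w_i | c). The code below applies it to the toy sports/crime examples. Add-one smoothing and skipping words that never appeared in training are assumptions made here so that the logarithms stay finite; they are not taken from the slides, and all names are illustrative.

```python
import math
from collections import Counter

labeled_docs = {
    "sports": ["great game", "good pass", "I love it"],
    "crime": ["the game is the game", "drug dealers"],
}

def train(labeled_docs):
    """Collect per-class token counts, per-class document counts and the vocabulary."""
    word_counts = {c: Counter(w for d in docs for w in d.lower().split())
                   for c, docs in labeled_docs.items()}
    doc_counts = {c: len(docs) for c, docs in labeled_docs.items()}
    vocab = {w for counts in word_counts.values() for w in counts}
    return word_counts, doc_counts, vocab

def predict(text, word_counts, doc_counts, vocab, smoothing=1.0):
    """Return the class maximizing log P(c) + sum_i log P(w_i | c), plus all scores."""
    n_docs = sum(doc_counts.values())
    scores = {}
    for c, counts in word_counts.items():
        total = sum(counts.values()) + smoothing * len(vocab)
        score = math.log(doc_counts[c] / n_docs)
        for w in text.lower().split():
            if w in vocab:  # assumption: words unseen in training are ignored
                score += math.log((counts[w] + smoothing) / total)
        scores[c] = score
    return max(scores, key=scores.get), scores

params = train(labeled_docs)
print(predict("good game", *params)[0])
print(predict("drug game", *params)[0])
```

With these five training snippets, "good game" is scored higher under sports and "drug game" under crime.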

Naïve Bayes - Unsupervised Sampling

- Let’s try Gibbs sampling:
  - Label documents randomly (who has a coin?)
  - Relabel one document at a time using the model, then re-estimate

Example documents: you can close this sr ok to close this you can close the ticket two disks replacement ok two disk drives replaced the disks sr closed
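The relabeling loop can be sketched as a collapsed Gibbs sampler for a one-label-per-document model. This is an illustration under stated assumptions, not the presenter's code: the `tickets` list is one possible split of the example text into seven snippets (the boundaries are a guess), and the smoothing constants `alpha` and `beta`, the number of sweeps and the seed are arbitrary choices.

```python
import math
import random
from collections import Counter

def gibbs_mixture_of_unigrams(docs, n_classes=2, sweeps=50, alpha=1.0, beta=0.5, seed=0):
    rng = random.Random(seed)
    tokenized = [d.lower().split() for d in docs]
    vocab_size = len({w for doc in tokenized for w in doc})
    labels = [rng.randrange(n_classes) for _ in tokenized]  # the "coin flip"
    class_docs = [0] * n_classes
    class_words = [Counter() for _ in range(n_classes)]
    class_tokens = [0] * n_classes
    for doc, c in zip(tokenized, labels):
        class_docs[c] += 1
        class_words[c].update(doc)
        class_tokens[c] += len(doc)

    for _ in range(sweeps):
        for i, doc in enumerate(tokenized):
            old = labels[i]
            # Remove document i so the model is estimated from all other documents.
            class_docs[old] -= 1
            class_words[old].subtract(doc)
            class_tokens[old] -= len(doc)
            # Log-weight of each class: class size times the probability of the words.
            log_weights = []
            for c in range(n_classes):
                lw = math.log(class_docs[c] + alpha)
                seen = Counter()
                for pos, w in enumerate(doc):
                    lw += math.log((class_words[c][w] + seen[w] + beta)
                                   / (class_tokens[c] + pos + beta * vocab_size))
                    seen[w] += 1
                log_weights.append(lw)
            top = max(log_weights)
            weights = [math.exp(lw - top) for lw in log_weights]
            new = rng.choices(range(n_classes), weights=weights)[0]
            # Relabel and re-estimate by adding the document back under its new label.
            labels[i] = new
            class_docs[new] += 1
            class_words[new].update(doc)
            class_tokens[new] += len(doc)
    return labels

# Hypothetical segmentation of the slide's example text.
tickets = [
    "you can close this sr", "ok to close this", "you can close the ticket",
    "two disks replacement ok", "two disk drives", "replaced the disks", "sr closed",
]
labels = gibbs_mixture_of_unigrams(tickets)
print(list(zip(labels, tickets)))
```

The labels are samples rather than a fixed answer, so different seeds can give different groupings, especially on a corpus this small.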

But that assumed one topic per document…

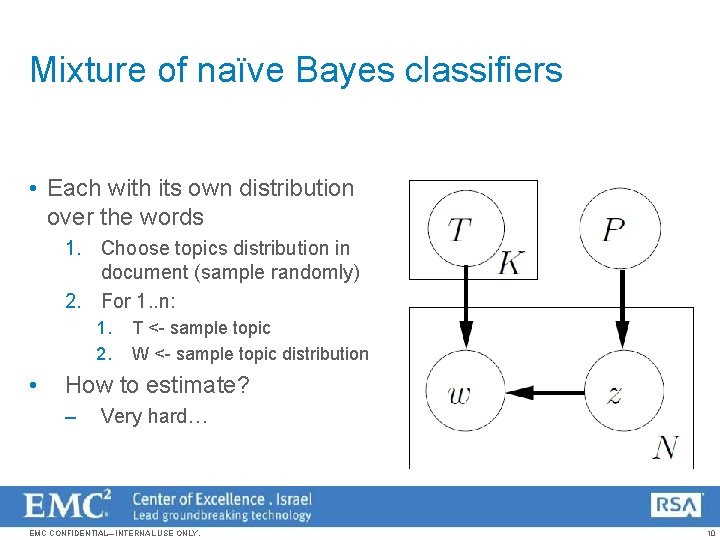

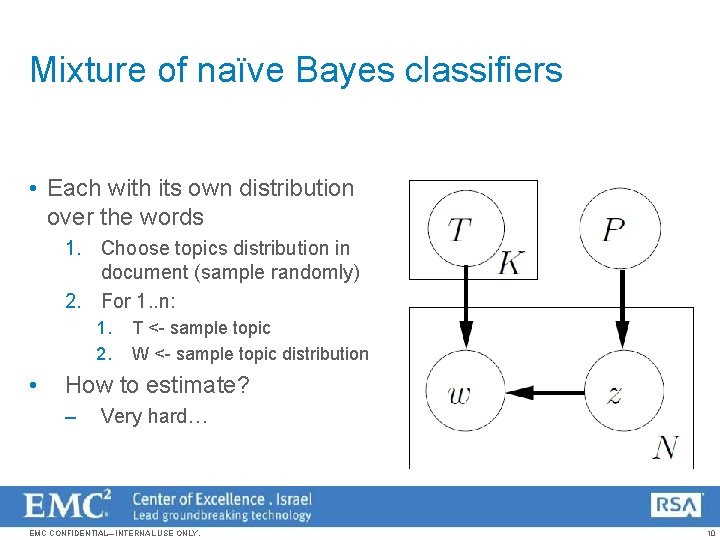

Mixture of naïve Bayes classifiers

- Each with its own distribution over the words
  1. Choose the topic distribution of the document (sample randomly)
  2. For each word position 1..n:
     1. T <- sample a topic from the document's topic distribution
     2. W <- sample a word from the word distribution of topic T
- How to estimate?
  - Very hard…
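The generative steps above can be sketched as a small sampler. The two topics, their word probabilities and the way the document's topic mixture is drawn (normalized Gamma(1, 1) draws, i.e. a flat Dirichlet) are all made up for illustration; the slide only says "sample randomly".

```python
import random

# Hypothetical topics, each with its own distribution over words.
topic_word_probs = {
    "police": {"arrest": 0.5, "officer": 0.3, "court": 0.2},
    "celebrities": {"actor": 0.6, "premiere": 0.3, "court": 0.1},
}

def sample_topic_mixture(topics, rng):
    """Step 1: draw the document's topic distribution (flat Dirichlet, an assumption)."""
    raw = [rng.gammavariate(1.0, 1.0) for _ in topics]
    total = sum(raw)
    return {t: x / total for t, x in zip(topics, raw)}

def generate_document(topic_word_probs, mixture, n_words, rng):
    """Step 2: for each position, sample a topic T, then a word W from topic T."""
    topics = list(topic_word_probs)
    words = []
    for _ in range(n_words):
        t = rng.choices(topics, weights=[mixture[k] for k in topics])[0]
        vocab = list(topic_word_probs[t])
        w = rng.choices(vocab, weights=[topic_word_probs[t][v] for v in vocab])[0]
        words.append((t, w))
    return words

rng = random.Random(42)
mixture = sample_topic_mixture(list(topic_word_probs), rng)
doc = generate_document(topic_word_probs, mixture, n_words=8, rng=rng)
print(mixture)
print(doc)
```

Estimation is the reverse problem: only the words are observed, and both the per-word topics and the distributions have to be inferred.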

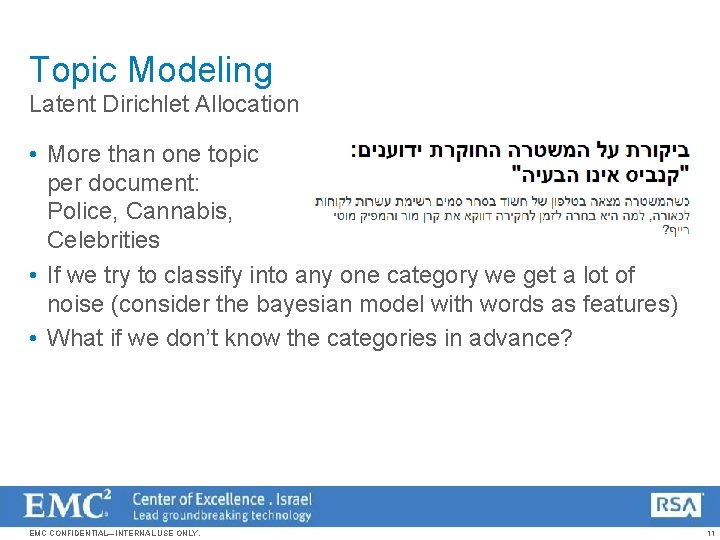

Topic Modeling: Latent Dirichlet Allocation

- More than one topic per document: Police, Cannabis, Celebrities
- If we try to classify into any single category we get a lot of noise (consider the Bayesian model with words as features)
- What if we don’t know the categories in advance?

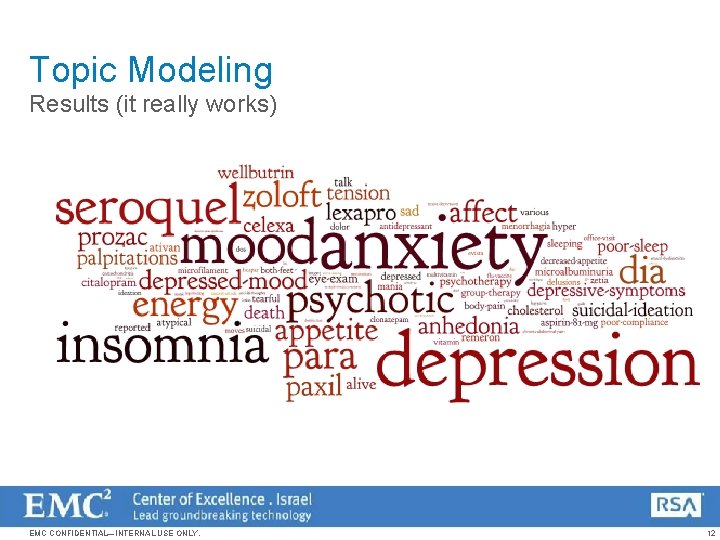

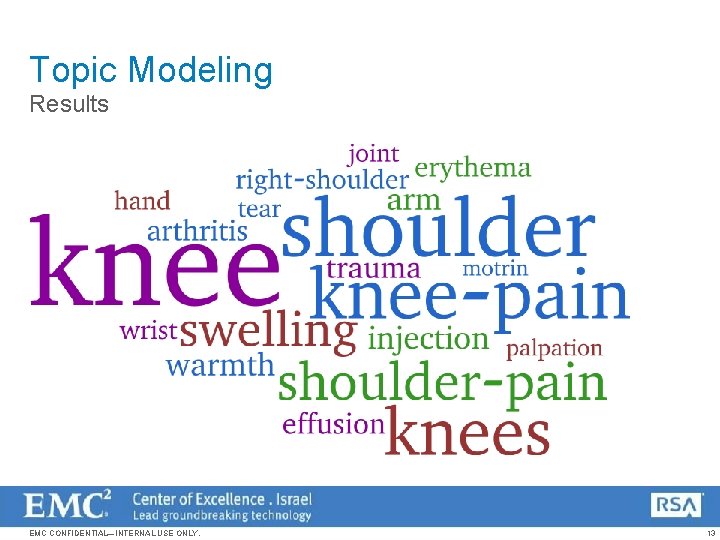

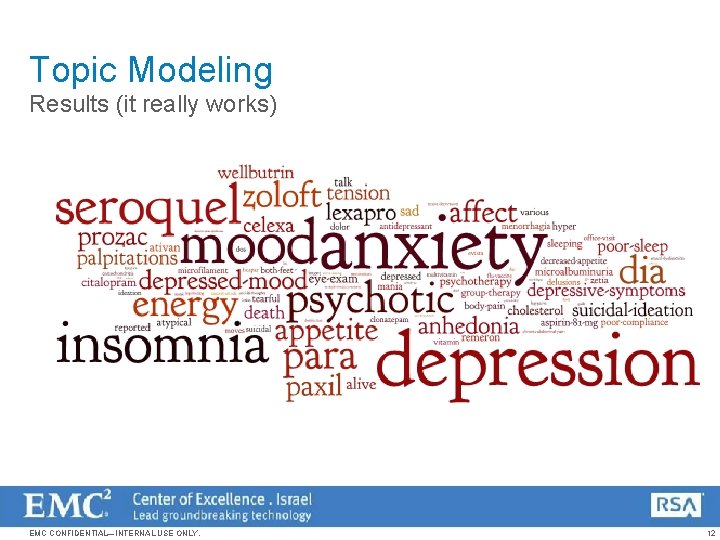

Topic Modeling Results (it really works)

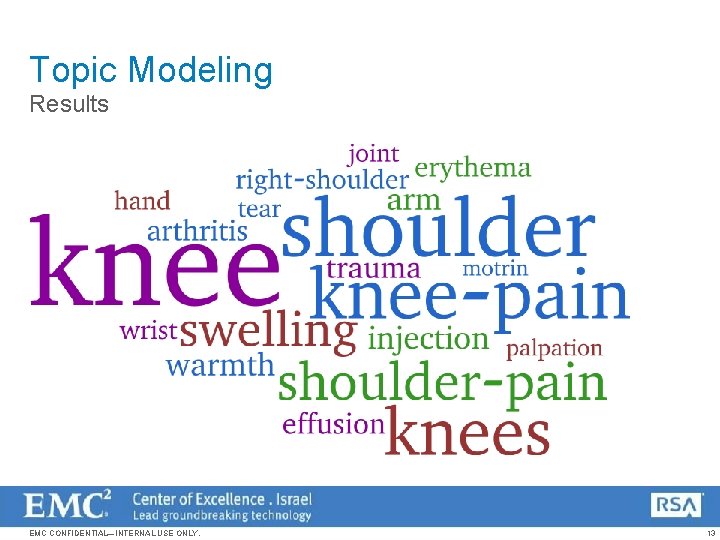

Topic Modeling Results

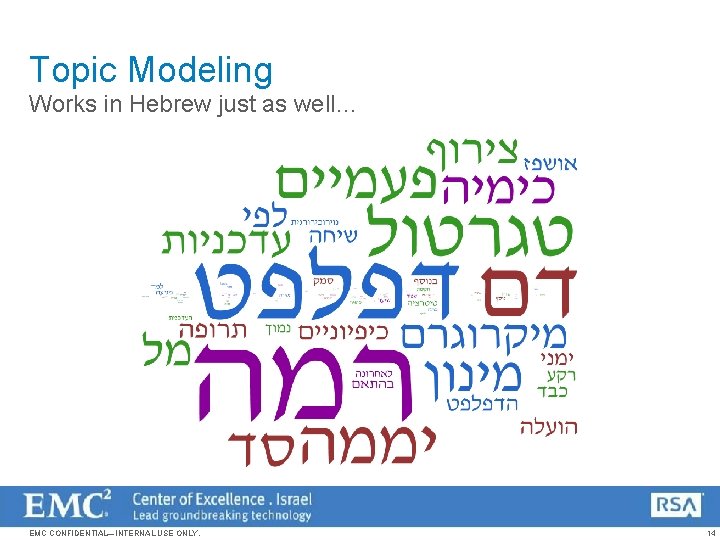

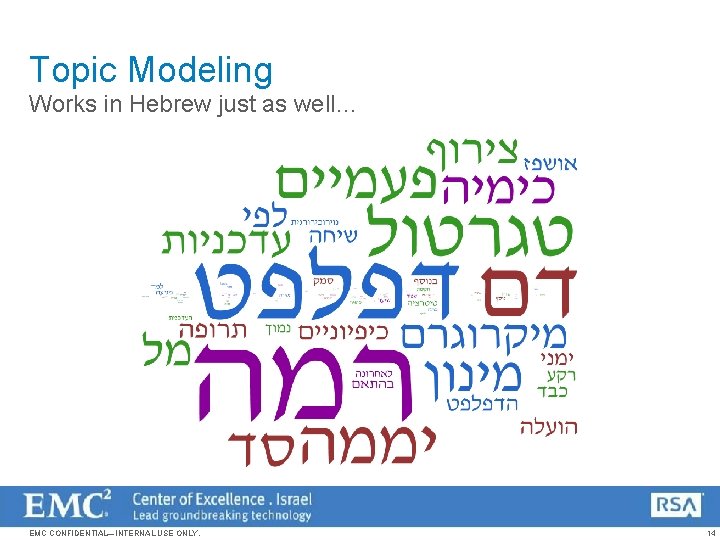

Topic Modeling Works in Hebrew just as well…

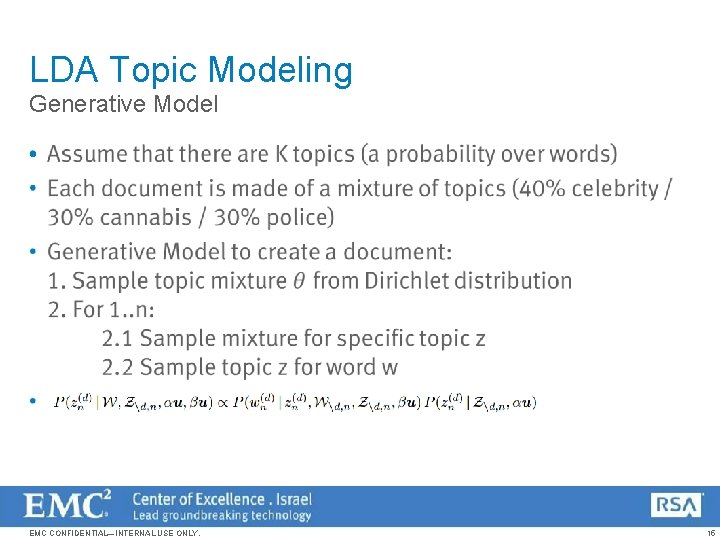

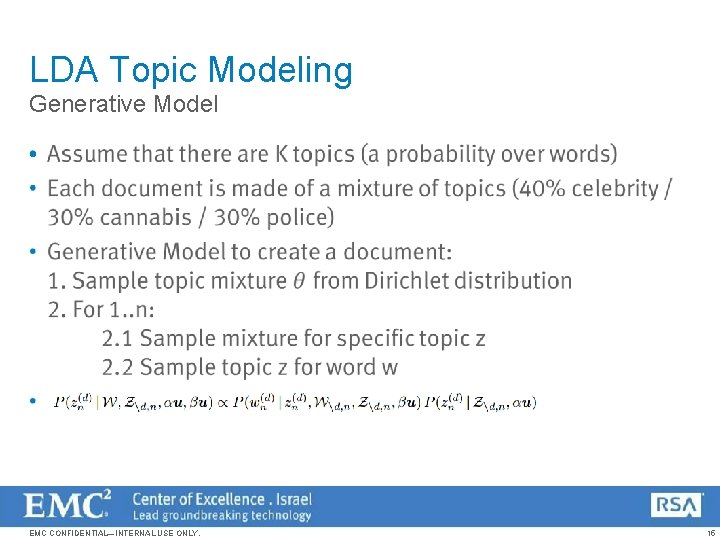

LDA Topic Modeling Generative Model
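For reference, the generative process of LDA is commonly written as below. The notation is the usual textbook one and is assumed here rather than taken from the slide: K topics, D documents, N_d words in document d, Dirichlet hyperparameters α and β, per-topic word distributions φ_k, per-document topic mixtures θ_d, topic tags z and words w.

```latex
\begin{align*}
\phi_k &\sim \mathrm{Dirichlet}(\beta), & k &= 1,\dots,K \\
\theta_d &\sim \mathrm{Dirichlet}(\alpha), & d &= 1,\dots,D \\
z_{d,i} \mid \theta_d &\sim \mathrm{Categorical}(\theta_d), & i &= 1,\dots,N_d \\
w_{d,i} \mid z_{d,i}, \phi &\sim \mathrm{Categorical}(\phi_{z_{d,i}}), & i &= 1,\dots,N_d
\end{align*}
```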

Topic Modeling: Sampling

- So we have a generative model; how does that help?
- We can learn the parameters of the model:
  - The probability of each word in each topic
  - The probability of the topic itself (in some LDA variants)
- Gibbs sampling:
  - Start with random topic tags for all words
  - At each step, remove one tag and resample it based on the topic mixture in the same document and the topic probability of the word in the corpus
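A minimal collapsed Gibbs sampler for LDA along these lines might look as follows. It is a sketch under stated assumptions: symmetric priors `alpha` and `beta`, a fixed number of sweeps, a toy pre-tokenized corpus invented for the example, and no burn-in or averaging of samples.

```python
import random

def lda_gibbs(docs, n_topics=2, sweeps=200, alpha=0.5, beta=0.1, seed=0):
    rng = random.Random(seed)
    vocab = sorted({w for doc in docs for w in doc})
    word_id = {w: i for i, w in enumerate(vocab)}
    V = len(vocab)
    doc_topic = [[0] * n_topics for _ in docs]       # topic counts per document
    topic_word = [[0] * V for _ in range(n_topics)]  # word counts per topic
    topic_total = [0] * n_topics                     # tokens per topic
    tags = []
    # Start with random topic tags for all words.
    for d, doc in enumerate(docs):
        doc_tags = []
        for w in doc:
            t = rng.randrange(n_topics)
            doc_tags.append(t)
            doc_topic[d][t] += 1
            topic_word[t][word_id[w]] += 1
            topic_total[t] += 1
        tags.append(doc_tags)
    for _ in range(sweeps):
        for d, doc in enumerate(docs):
            for i, w in enumerate(doc):
                v = word_id[w]
                old = tags[d][i]
                # Remove one tag from all counts.
                doc_topic[d][old] -= 1
                topic_word[old][v] -= 1
                topic_total[old] -= 1
                # Weight = (topic mixture in this document) x (word probability in topic).
                weights = [
                    (doc_topic[d][t] + alpha)
                    * (topic_word[t][v] + beta) / (topic_total[t] + V * beta)
                    for t in range(n_topics)
                ]
                new = rng.choices(range(n_topics), weights=weights)[0]
                tags[d][i] = new
                doc_topic[d][new] += 1
                topic_word[new][v] += 1
                topic_total[new] += 1
    return tags, doc_topic, topic_word, vocab

# Hypothetical toy corpus, already tokenized.
corpus = [
    ["close", "ticket", "sr", "close"],
    ["disk", "drive", "replaced", "disk"],
    ["close", "sr", "disk", "replaced"],
]
tags, doc_topic, topic_word, vocab = lda_gibbs(corpus)
print(doc_topic)
```

The per-document rows of `doc_topic` play the role of the topic mixture, and the rows of `topic_word` give the unnormalized word distribution of each topic.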

Topic Modeling: Sampling

- Let’s try Gibbs sampling…
  - With LDA (uniform priors)

Example documents: you can close this sr ok to close this you can close the ticket two disks replacement ok two disk drives replaced the disks sr closed

Topic Modeling: Application in Data Science

- Data exploration of free text
- Summarization: state of the art uses topics to abstract words
- Text classification: use the topics as features instead of the words
- Domain adaptation: supervised learning on a small annotated sample, then generalize with topics
- Go beyond text: cluster customers by their latent needs / preferences based on their installed applications

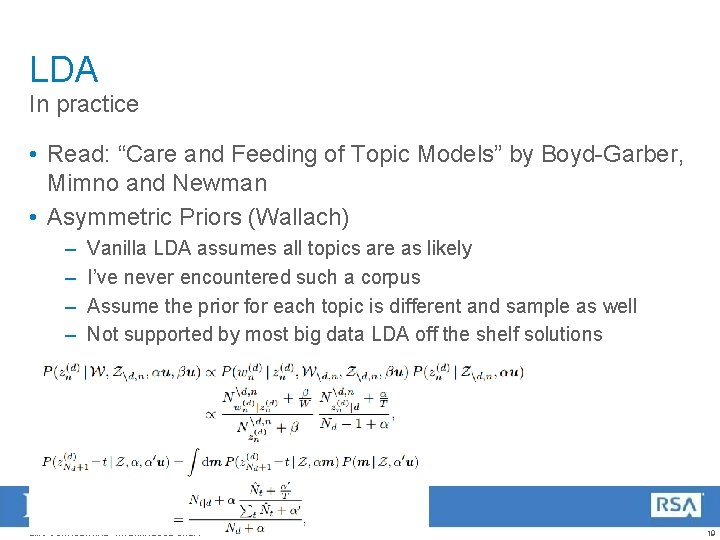

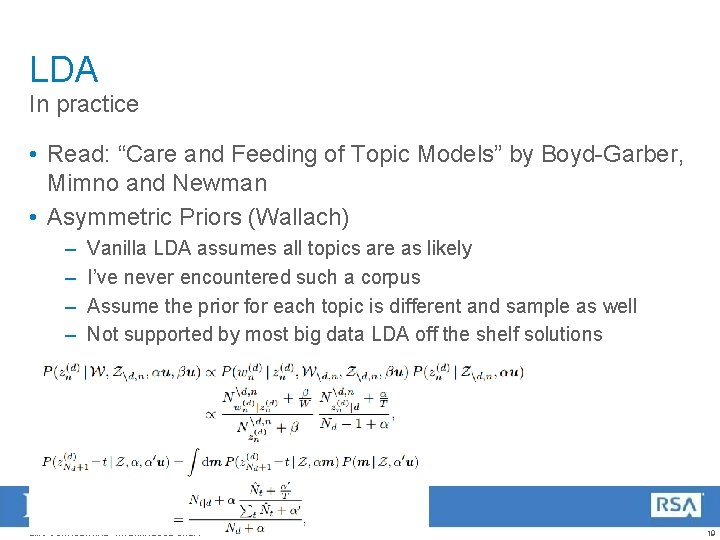

LDA in practice

- Read: “Care and Feeding of Topic Models” by Boyd-Graber, Mimno and Newman
- Asymmetric priors (Wallach):
  - Vanilla LDA assumes all topics are equally likely
  - I’ve never encountered such a corpus
  - Assume the prior for each topic is different, and sample it as well
  - Not supported by most off-the-shelf big data LDA solutions
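To see what the prior encodes, the sketch below draws document topic mixtures from a symmetric and an asymmetric Dirichlet and averages them. It only illustrates the effect of the prior; it does not implement the hyperparameter sampling Wallach describes, and the alpha values are arbitrary.

```python
import random

def sample_dirichlet(alpha, rng):
    """Draw from Dirichlet(alpha) by normalizing independent Gamma(alpha_k, 1) draws."""
    draws = [rng.gammavariate(a, 1.0) for a in alpha]
    total = sum(draws)
    return [x / total for x in draws]

def mean_topic_mixture(alpha, n_docs=5000, seed=0):
    """Average topic share over many simulated documents."""
    rng = random.Random(seed)
    sums = [0.0] * len(alpha)
    for _ in range(n_docs):
        for k, p in enumerate(sample_dirichlet(alpha, rng)):
            sums[k] += p
    return [s / n_docs for s in sums]

symmetric = mean_topic_mixture([0.5, 0.5, 0.5])    # every topic equally likely a priori
asymmetric = mean_topic_mixture([2.0, 0.5, 0.1])   # topic 0 expected to dominate
print([round(p, 2) for p in symmetric])
print([round(p, 2) for p in asymmetric])
```

Under the symmetric prior each topic's average share is close to 1/3; under the asymmetric one the shares follow alpha_k divided by the sum of alphas, so some topics are expected to be common and others rare.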

Redundancy and LDA

- In real life we encounter templates / copy-paste and document duplication
- A rare word that appears in a copied document will bias the topics:
  - It will get a higher weight in the topic
  - Noisy topics (two topics mixed together)
- Solution:
  - Remove redundant documents
  - Create a more complex model
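One simple way to remove redundant documents is to compare word shingles with Jaccard similarity and keep only the first document of each near-duplicate group. The slides do not say which method was used, so this is an assumed stand-in; the shingle size, the threshold and the sample tickets are illustrative.

```python
def shingles(text, k=3):
    """Set of k-word shingles; short texts become a single shingle."""
    tokens = text.lower().split()
    if len(tokens) < k:
        return {tuple(tokens)}
    return {tuple(tokens[i:i + k]) for i in range(len(tokens) - k + 1)}

def jaccard(a, b):
    union = a | b
    return len(a & b) / len(union) if union else 1.0

def drop_near_duplicates(docs, threshold=0.8, k=3):
    """Keep a document only if it is not too similar to any document kept so far."""
    kept, kept_shingles = [], []
    for doc in docs:
        s = shingles(doc, k)
        if all(jaccard(s, other) < threshold for other in kept_shingles):
            kept.append(doc)
            kept_shingles.append(s)
    return kept

docs = [
    "please restart the backup server and verify the nightly job completed",
    "please restart the backup server and verify the nightly job completed today",
    "customer asked for a license renewal quote",
]
unique_docs = drop_near_duplicates(docs)
print(unique_docs)
```

The pairwise comparison is quadratic in the number of documents, so a large corpus would need an approximate scheme such as MinHash instead.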

Text Analytics System Topic modeling is not enough…

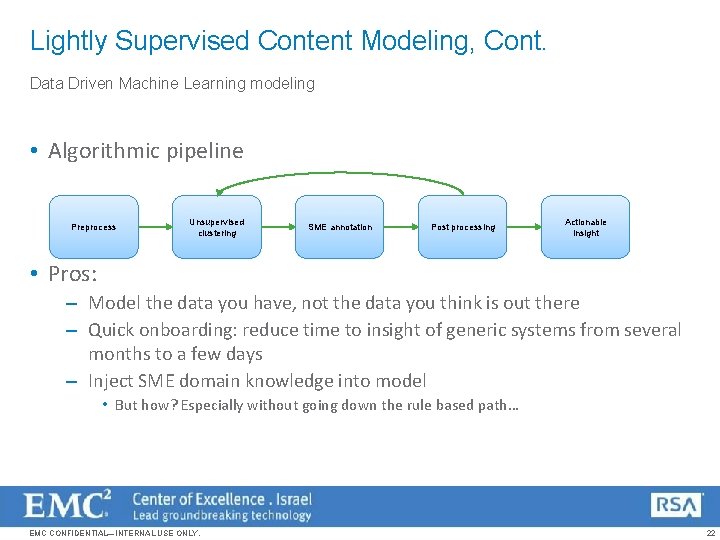

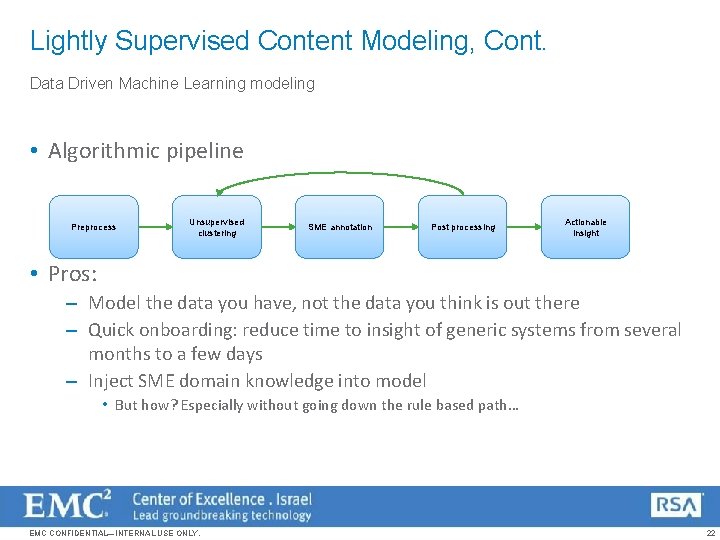

Lightly Supervised Content Modeling, Cont.

Data-driven machine learning modeling

- Algorithmic pipeline: Preprocess → Unsupervised clustering → SME annotation → Post processing → Actionable insight
- Pros:
  - Model the data you have, not the data you think is out there
  - Quick onboarding: reduce time to insight of generic systems from several months to a few days
  - Inject SME domain knowledge into the model
- But how? Especially without going down the rule-based path…

Preprocessing tricks

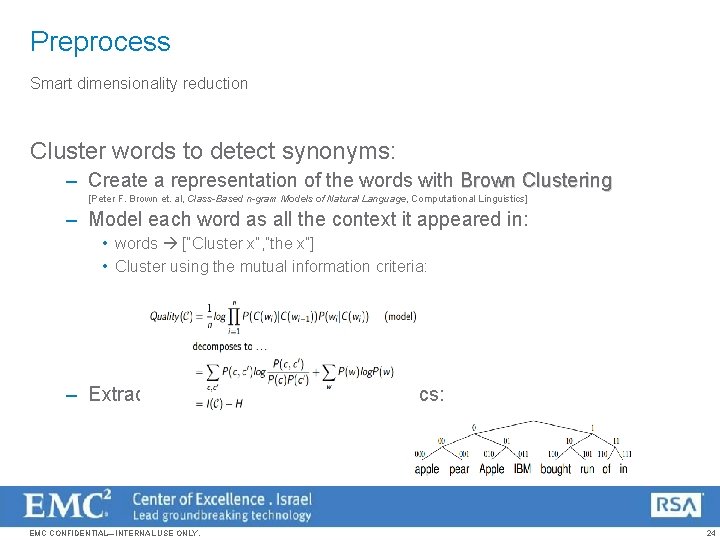

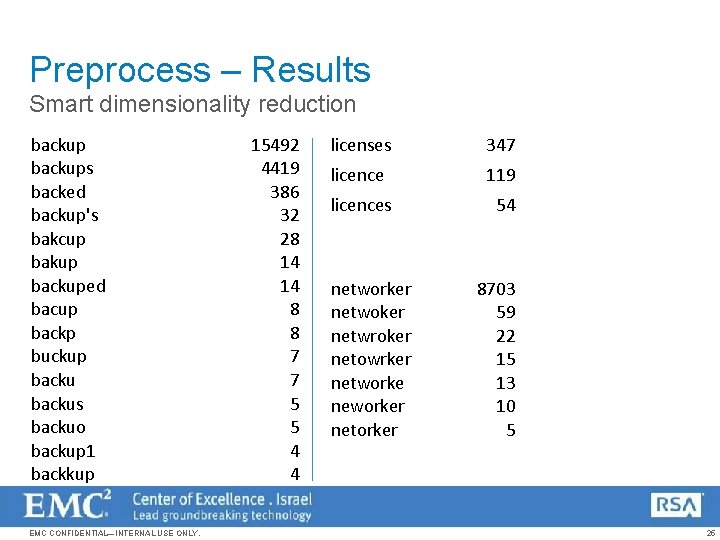

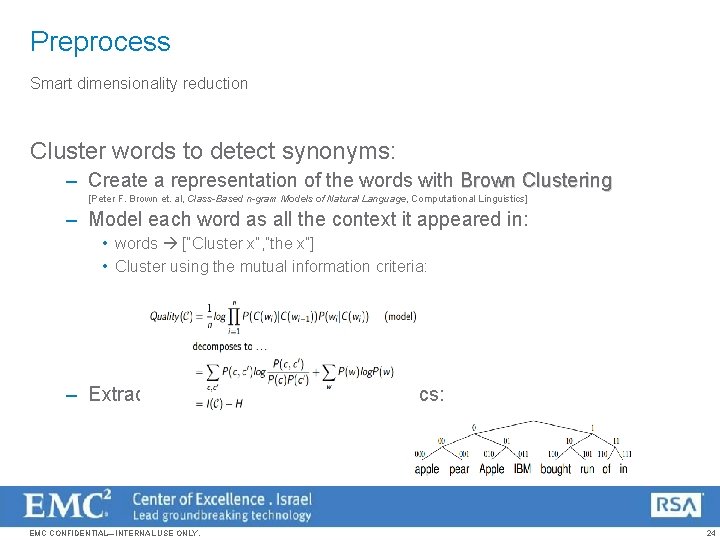

Preprocess: smart dimensionality reduction

Cluster words to detect synonyms:

- Create a representation of the words with Brown clustering [Peter F. Brown et al., Class-Based n-gram Models of Natural Language, Computational Linguistics]
- Model each word as all the contexts it appeared in:
  - words [“Cluster x”, “the x”]
  - Cluster using the mutual information criterion:
- Extract likely synonyms using heuristics:
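The idea of modeling a word by the contexts it appears in can be sketched with simple neighbor counts. This is not Brown clustering: it swaps in left/right neighbor vectors compared by cosine similarity, purely to illustrate why a misspelling ends up close to the correctly spelled word. The sentences are invented.

```python
import math
from collections import Counter, defaultdict

def context_vectors(sentences):
    """Map each word to counts of its left ('L') and right ('R') neighbors."""
    vectors = defaultdict(Counter)
    for sentence in sentences:
        tokens = ["<s>"] + sentence.lower().split() + ["</s>"]
        for i in range(1, len(tokens) - 1):
            vectors[tokens[i]][("L", tokens[i - 1])] += 1
            vectors[tokens[i]][("R", tokens[i + 1])] += 1
    return vectors

def cosine(a, b):
    dot = sum(a[k] * b[k] for k in a if k in b)
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0

sentences = ["the backup failed", "the bakcup failed", "the license expired"]
vectors = context_vectors(sentences)
same_context = cosine(vectors["backup"], vectors["bakcup"])
other_context = cosine(vectors["backup"], vectors["license"])
print(same_context, other_context)
```

Words that share all of their contexts get similarity 1, while "backup" and "license" only share the preceding "the" and score lower.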

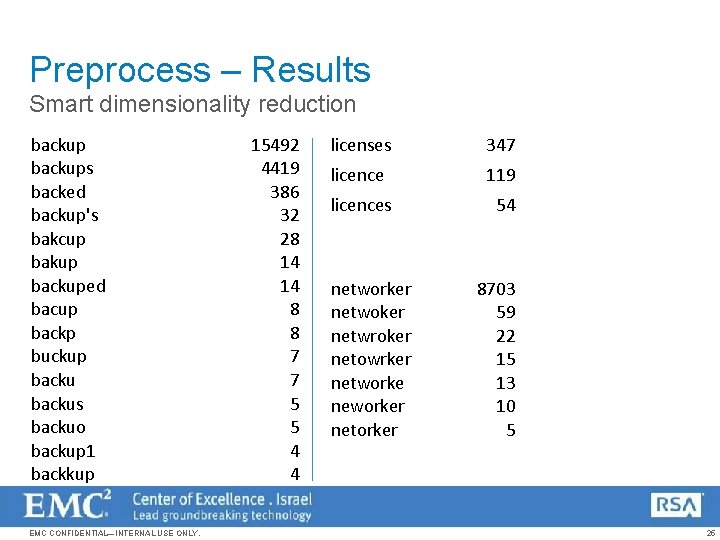

Preprocess – Results

Smart dimensionality reduction

- backup variants: backups, backed, backup's, bakcup, bakup, backuped, bacup, backp, buckup, backus, backuo, backup 1, backkup
- counts for the backup group, in slide order: 15492, 4419, 386, 32, 28, 14, 14, 8, 8, 7, 7, 5, 5, 4, 4
- licenses 347, licence 119, licences 54
- networker 8703, netwoker 59, netwroker 22, netowrker 15, networke 13, neworker 10, netorker 5
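Once variants are grouped, the vocabulary can be shrunk by rewriting every variant to one canonical form. The sketch below uses the networker and licenses counts from the slide; choosing the most frequent variant as the canonical form is an assumption, as are the function names.

```python
# Variant groups with corpus counts, taken from the slide.
variant_groups = [
    {"networker": 8703, "netwoker": 59, "netwroker": 22, "netowrker": 15,
     "networke": 13, "neworker": 10, "netorker": 5},
    {"licenses": 347, "licence": 119, "licences": 54},
]

def build_normalizer(groups):
    """Map every variant to the most frequent member of its group."""
    mapping = {}
    for group in groups:
        canonical = max(group, key=group.get)
        for variant in group:
            mapping[variant] = canonical
    return mapping

def normalize(text, mapping):
    """Replace known variants token by token; unknown tokens pass through."""
    return " ".join(mapping.get(tok, tok) for tok in text.lower().split())

mapping = build_normalizer(variant_groups)
print(normalize("netwoker licence expired", mapping))
```

After this step the ten variants above collapse into two vocabulary entries, which is the dimensionality reduction the slide refers to.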

Injecting SME knowledge into a Machine Learning Model

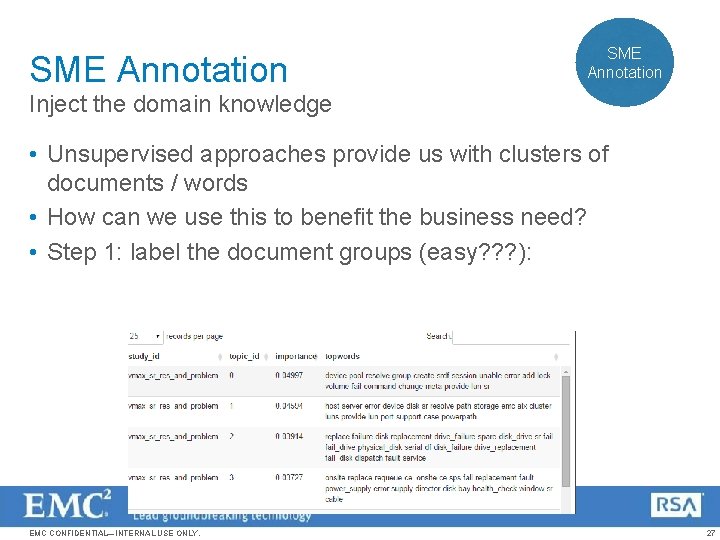

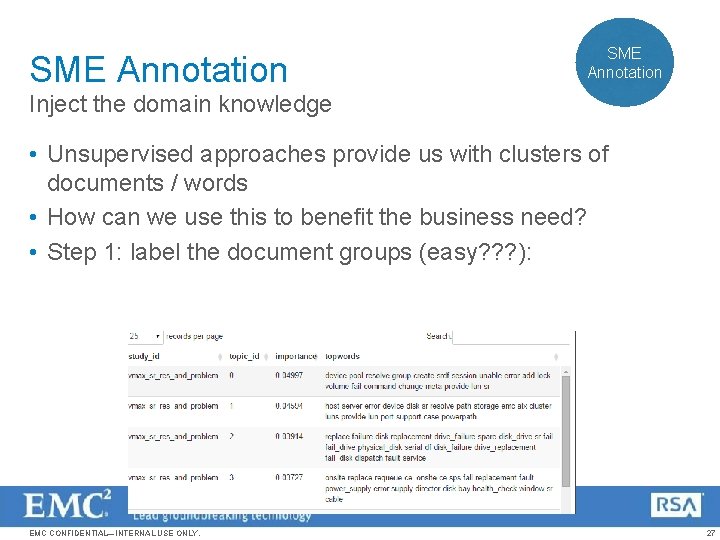

SME Annotation: inject the domain knowledge

- Unsupervised approaches provide us with clusters of documents / words
- How can we use this to benefit the business need?
- Step 1: label the document groups (easy???):

SME Annotation: inject the domain knowledge

Provide as many layers of information as possible to make it easy:

- Single-word information
- Bigram (two-word) relevant information:
  - Remove stop words
  - Choose statistically interesting words (not just two common words that are likely to appear together by sheer abundance)
- Whole-sentence extraction, using statistical summarization
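One common way to pick statistically interesting bigrams is pointwise mutual information (PMI), which compares how often two words co-occur with how often they would co-occur by chance. The slides do not name the statistic, so PMI is an assumption here, and the stop-word list, the minimum count and the sample documents are illustrative.

```python
import math
from collections import Counter

STOP_WORDS = {"the", "a", "to", "is", "of", "and", "this", "you", "can"}  # illustrative

def interesting_bigrams(docs, min_count=2, top_n=5):
    """Rank bigrams (formed after stop-word removal) by pointwise mutual information."""
    unigrams, bigrams = Counter(), Counter()
    for doc in docs:
        # Stop words are dropped first, so remaining neighbors form the bigrams.
        tokens = [t for t in doc.lower().split() if t not in STOP_WORDS]
        unigrams.update(tokens)
        bigrams.update(zip(tokens, tokens[1:]))
    n_uni = sum(unigrams.values())
    n_bi = sum(bigrams.values())
    scored = []
    for (a, b), count in bigrams.items():
        if count < min_count:  # rare pairs give unreliable PMI estimates
            continue
        p_pair = count / n_bi
        p_chance = (unigrams[a] / n_uni) * (unigrams[b] / n_uni)
        scored.append(((a, b), math.log(p_pair / p_chance)))
    return sorted(scored, key=lambda item: item[1], reverse=True)[:top_n]

docs = ["disk drive replaced", "disk drive failed", "backup failed", "the backup is ok"]
top = interesting_bigrams(docs)
print(top)
```

A positive PMI means the pair appears together more often than its individual word frequencies would predict, which filters out pairs that co-occur only because both words are abundant.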

Model Tuning

SME Annotation: inject the domain knowledge

Is it enough?

- No, business users require high accuracy

Solution:

- Allow the SME to tune the model
- Make it easy and simple, much simpler than making up rules
- The result should be optimized for input to an accurate supervised machine learning model