Understanding Reproducibility and Characteristics of Flaky Tests Through Test Reruns in Java Projects

Wing Lam, Stefan Winter, Angello Astorga, Victoria Stodden, Darko Marinov

winglam2@illinois.edu

CCF-1763788, OAC-1839010

Flaky tests

A test is flaky if it can pass and fail when run in the same test scenario

[Figure: code under test and test code, with tests T1, T2, … whose outcomes are uncertain]

● Failures may not be due to faults in the current changes; this reduces trust in testing
● Wastes time: developers may manually debug a nonexistent fault in their changes

Flaky test – Example

Example flaky test from https://github.com/zalando/riptide:

Line 4 launches a server, which takes some time:
• If some other tests run before this test, the test takes ~1000 ms
• Without the other tests, the test takes ~1500 ms and may fail due to the timeout (Line 1)

Flaky test – Example failure rates

Example flaky test from https://github.com/zalando/riptide:

When the test is run with other tests in different test orders, its failure rate is between 0% and 20%
• Failure rate: ratio of # failed runs to # total runs for a test order

To understand flaky tests, prior work categorized flaky tests
• What category would this test be?

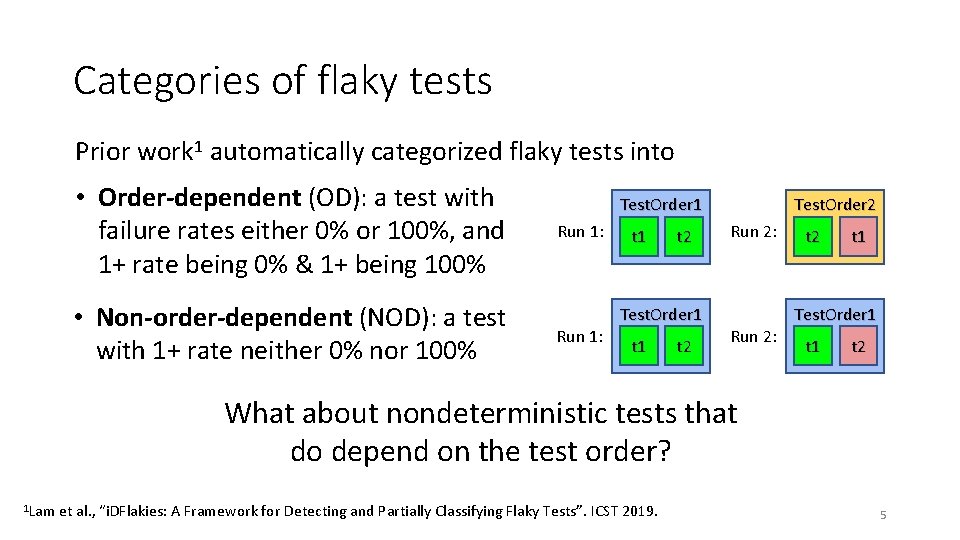

Categories of flaky tests

Prior work¹ automatically categorized flaky tests into
• Order-dependent (OD): a test whose failure rates are each either 0% or 100%, with 1+ rate being 0% and 1+ rate being 100%
• Non-order-dependent (NOD): a test with 1+ rate that is neither 0% nor 100%

[Figure: runs of tests t1 and t2 in Test Order 1 (t1, t2) and Test Order 2 (t2, t1)]

What about nondeterministic tests that do depend on the test order?

¹ Lam et al., “iDFlakies: A Framework for Detecting and Partially Classifying Flaky Tests”. ICST 2019.
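The OD/NOD rule above can be sketched in a few lines of Python. This is only an illustration of the definition on this slide, not the iDFlakies implementation; the function names, the `True` = pass encoding, and the two extra labels for tests that never fail or always fail are assumptions made for the example.

```python
from typing import Dict, List


def failure_rate(results: List[bool]) -> float:
    """Ratio of # failed runs to # total runs for one test order (True = pass)."""
    if not results:
        raise ValueError("need at least one run per test order")
    return results.count(False) / len(results)


def categorize(runs_by_order: Dict[str, List[bool]]) -> str:
    """Apply the OD/NOD rule to the per-order failure rates of a single test."""
    rates = [failure_rate(results) for results in runs_by_order.values()]
    if all(rate == 0.0 for rate in rates):
        return "no failures observed"   # hypothetical label: not flaky in these runs
    if all(rate == 1.0 for rate in rates):
        return "always fails"           # hypothetical label: consistently failing
    if all(rate in (0.0, 1.0) for rate in rates):
        return "OD"                     # 1+ rate is 0% and 1+ rate is 100%
    return "NOD"                        # 1+ rate is neither 0% nor 100%
```

For instance, a test that always passes in one order and always fails in another is labeled OD, while a test that fails in 1 of 5 runs of some order is labeled NOD.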

Refining the categorization of NOD tests

We propose that NOD tests should be refined into
• Non-Deterministic Order-Dependent (NDOD): a test with 1+ failure rate that significantly* differs from its other rates
• Non-Deterministic Order-Independent (NDOI): a test with no failure rate that significantly* differs from its other rates

*Significance can be determined with statistical tests

Our flaky test example is NDOD. A χ² statistical test with the # of failures and # of runs for various test orders shows p-value = 2.2E-16 (p-value < 0.05 suggests a test is likely NDOD)
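A minimal sketch of how such a χ² test could be computed from (# failures, # runs) pairs, one pair per test order. It assumes a standard χ² test of homogeneity on a failures/passes table; the slide does not say which exact variant or tool the authors used, and the function names here are hypothetical. The p-value is computed with only the standard library, using the exact recurrence for the regularized upper incomplete gamma function.

```python
import math
from typing import List, Tuple


def chi2_sf(stat: float, df: int) -> float:
    """P(X >= stat) for a chi-squared variable with df degrees of freedom."""
    x = stat / 2.0
    if df % 2 == 1:
        a, q = 0.5, math.erfc(math.sqrt(x))   # Q(1/2, x)
    else:
        a, q = 1.0, math.exp(-x)              # Q(1, x)
    # Recurrence: Q(a + 1, x) = Q(a, x) + x^a * e^(-x) / Gamma(a + 1)
    while a < df / 2.0:
        q += x ** a * math.exp(-x) / math.gamma(a + 1.0)
        a += 1.0
    return q


def order_dependence_p_value(counts: List[Tuple[int, int]]) -> float:
    """counts holds one (failures, runs) pair per test order, with runs > 0."""
    if any(runs <= 0 or not 0 <= fails <= runs for fails, runs in counts):
        raise ValueError("each order needs runs > 0 and 0 <= failures <= runs")
    total_fail = sum(fails for fails, _ in counts)
    total_runs = sum(runs for _, runs in counts)
    total_pass = total_runs - total_fail
    if len(counts) < 2 or total_fail == 0 or total_pass == 0:
        return 1.0  # nothing to compare: no evidence of order dependence
    stat = 0.0
    for fails, runs in counts:
        expected_fail = runs * total_fail / total_runs
        expected_pass = runs * total_pass / total_runs
        stat += (fails - expected_fail) ** 2 / expected_fail
        stat += ((runs - fails) - expected_pass) ** 2 / expected_pass
    return chi2_sf(stat, len(counts) - 1)


def likely_ndod(counts: List[Tuple[int, int]], alpha: float = 0.05) -> bool:
    """p-value < alpha suggests the test is likely NDOD."""
    return order_dependence_p_value(counts) < alpha
```

With made-up counts such as `[(0, 200), (40, 200), (0, 200)]` the failures are concentrated in one order and `likely_ndod` returns `True`; with identical counts in every order the statistic is 0 and the p-value is 1.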

Our contributions

We empirically study NOD tests and refine their categorization

From our experiments with 44 test suites, each run 4000 times, we distill guidelines on how one should:
• G1: run test suites to detect flaky tests using a limited # of runs
• G2: use reruns to check if test failures are from flaky tests
• G3: reproduce flaky-test failures observed from test-suite runs
• G4: prioritize debugging of flaky tests

Study setup

Goal: study NOD tests by many runs of many test orders
• 44 test suites from Maven-based Java projects available in a public dataset collected in our prior work¹
• Each test suite is run in ~20 test-class orders (TCOs)
• The test suite is run 100+ times for each TCO
• In total, each test suite is run exactly 4000 times in ~20 TCOs
• Our runs find 107 flaky tests that we use for our study
• We inspected 21 NOD tests in detail (see paper)

¹ Lam et al., “iDFlakies: A Framework for Detecting and Partially Classifying Flaky Tests”. ICST 2019.

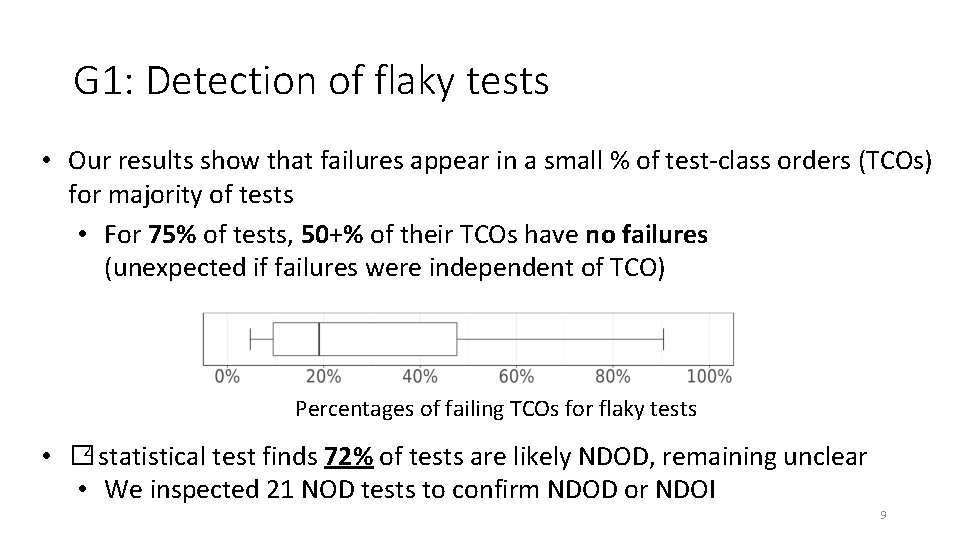

G1: Detection of flaky tests
• Our results show that failures appear in a small % of test-class orders (TCOs) for the majority of tests
• For 75% of tests, 50+% of their TCOs have no failures (unexpected if failures were independent of TCO)
• The χ² statistical test finds that 72% of tests are likely NDOD; the remaining tests are unclear
• We inspected 21 NOD tests to confirm NDOD or NDOI

[Figure: Percentages of failing TCOs for flaky tests]

G1: Detection of flaky tests (takeaway)

We find that many NOD tests are likely NDOD; to detect whether a test suite contains flaky tests, it is better to run test suites in more test orders with fewer times each than in fewer test orders with more times each.
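To see why this guideline is plausible, consider a toy model (an assumption made for this sketch, not the study's analysis): a flaky test can fail only in a fraction `failing_order_fraction` of test orders, and in such an order it fails independently with probability `failure_rate` per run. Orders are assumed to be drawn independently at random, and a fixed run budget is split evenly across them. All names and numbers below are hypothetical.

```python
def detection_probability(num_orders: int, budget: int,
                          failing_order_fraction: float,
                          failure_rate: float) -> float:
    """Chance of seeing 1+ failure when `budget` runs are split over `num_orders` orders."""
    runs_per_order = budget // num_orders
    # Probability that one randomly drawn order exposes a failure within its runs.
    per_order = failing_order_fraction * (1.0 - (1.0 - failure_rate) ** runs_per_order)
    return 1.0 - (1.0 - per_order) ** num_orders


# Same budget of 100 runs, split two ways (illustrative parameters only).
few_orders = detection_probability(2, 100, failing_order_fraction=0.1, failure_rate=0.2)
many_orders = detection_probability(20, 100, failing_order_fraction=0.1, failure_rate=0.2)
```

Under these assumptions `many_orders` is larger than `few_orders`: once an order has been run a handful of times, extra runs of that same order add little, whereas each new order is another chance to hit one in which the test can fail.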

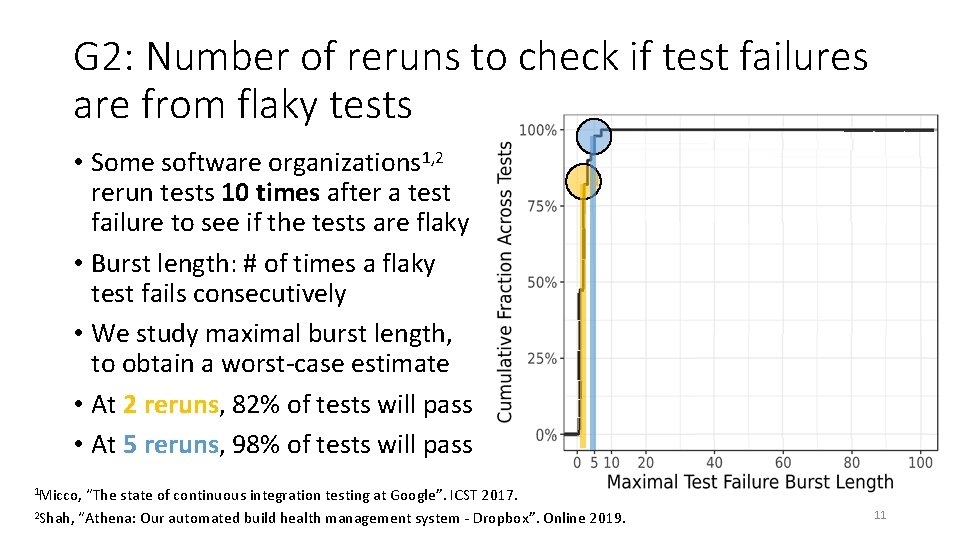

G2: Number of reruns to check if test failures are from flaky tests
• Some software organizations¹ ² rerun tests 10 times after a test failure to see if the tests are flaky
• Burst length: # of times a flaky test fails consecutively
• We study maximal burst length, to obtain a worst-case estimate
• At 2 reruns, 82% of tests will pass
• At 5 reruns, 98% of tests will pass

[Figure: panels for Test-suite ordering (TSO) and Isolated ordering (ISO)]

¹ Micco, “The state of continuous integration testing at Google”. ICST 2017.
² Shah, “Athena: Our automated build health management system - Dropbox”. Online 2019.

G2: Number of reruns to check if test failures are from flaky tests (takeaway)

To check if test failures are due to flaky tests, one can use 2–5 reruns (50+% fewer than some SW organization’s default 10 reruns) and still check correctly most of the time.
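The burst-length measure behind this guideline can be sketched as follows. The function names and the `True` = pass encoding are assumptions for the example. The second function also assumes that the failure a developer observes is the first one of a burst, so that a number of reruns equal to the maximal burst length is enough to see at least one pass; the slides do not spell out this detail.

```python
from typing import List


def max_burst_length(results: List[bool]) -> int:
    """Longest run of consecutive failures in a test's run history (True = pass)."""
    longest = current = 0
    for passed in results:
        current = 0 if passed else current + 1
        longest = max(longest, current)
    return longest


def fraction_passing_within(histories: List[List[bool]], reruns: int) -> float:
    """Fraction of tests whose maximal burst length is at most `reruns`."""
    if not histories:
        raise ValueError("need at least one test history")
    covered = sum(1 for history in histories if max_burst_length(history) <= reruns)
    return covered / len(histories)
```

Using the maximal burst gives a worst-case estimate: a test whose longest streak of failures is 2 will show a pass within 2 reruns even if the first observed failure starts that streak.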

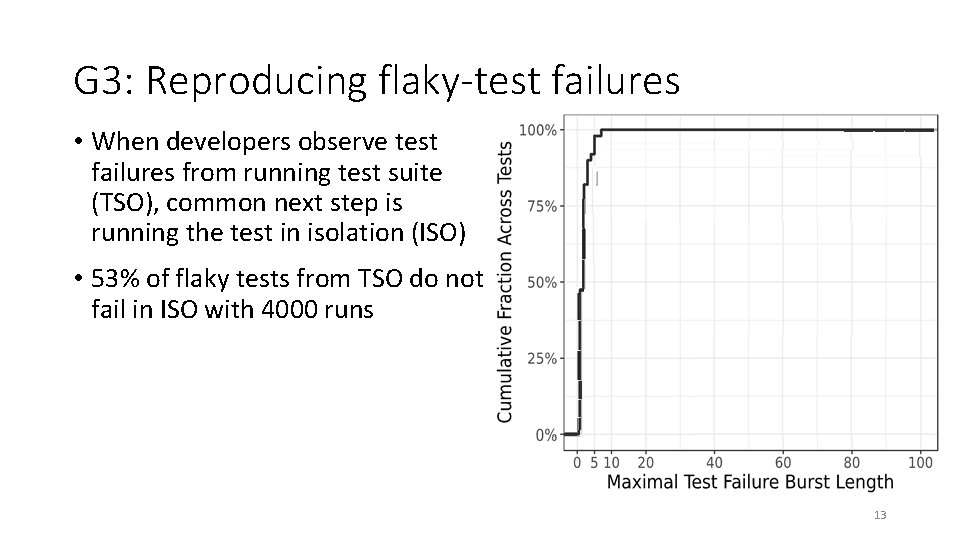

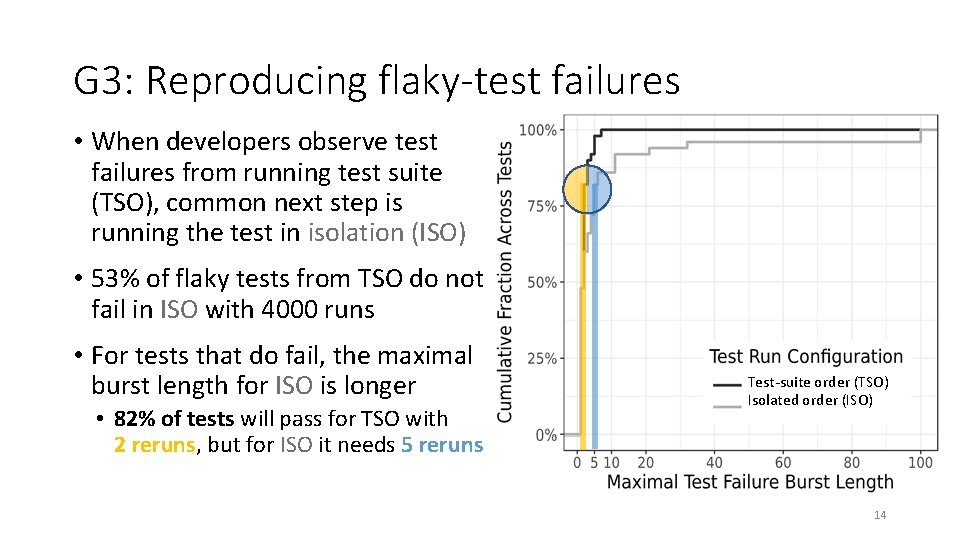

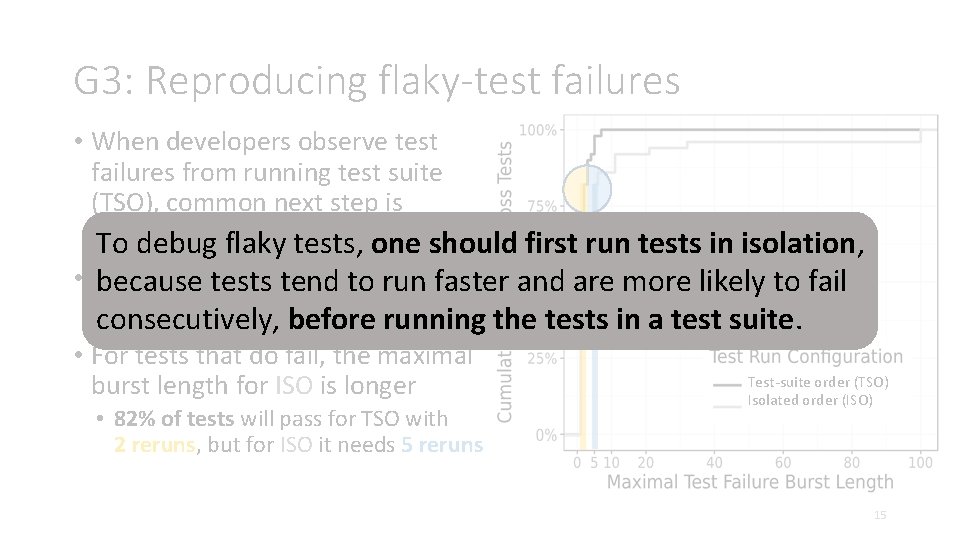

G3: Reproducing flaky-test failures
• When developers observe test failures from running a test suite (TSO), a common next step is running the test in isolation (ISO)
• 53% of flaky tests from TSO do not fail in ISO with 4000 runs
• For tests that do fail, the maximal burst length for ISO is longer
• 82% of tests will pass for TSO with 2 reruns, but ISO needs 5 reruns to reach the same percentage

[Figure: panels for Test-suite order (TSO) and Isolated order (ISO)]

G3: Reproducing flaky-test failures (takeaway)

To debug flaky tests, one should first run tests in isolation, because tests tend to run faster and are more likely to fail consecutively, before running the tests in a test suite.

G4: Prioritizing the debugging of flaky tests
• Even 1 flaky-test failure in a test-suite run (TSR) could prevent developers from merging their code changes
• Our experiments show that a TSR failure due to flaky test(s) happens 5.4% of the time on average
• By studying how often flaky tests fail together, we find that up to 57% of test suites have flaky tests that are likely related!
  • E.g., two flaky tests that both pass or both fail depending on whether a network port is available
• Flaky-test management systems could present historical related flaky-test failures to help developers prioritize which tests to fix

G4: Prioritizing the debugging of flaky tests (takeaway)

To prioritize debugging, flaky-test management systems could present related flaky-test failures (as many test suites have flaky tests that are likely related) to help developers prioritize which tests to fix, e.g., first fix flaky tests that are related.
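One simple way a flaky-test management system might surface related failures is sketched below. It uses a deliberately strict notion of "related" chosen for this example (two tests that failed in exactly the same test-suite runs); the study's own criterion may be different, and all names here are hypothetical.

```python
from collections import Counter
from itertools import combinations
from typing import List, Set, Tuple


def tsr_failure_rate(failed_per_run: List[Set[str]]) -> float:
    """Fraction of test-suite runs (TSRs) with 1+ failing test."""
    if not failed_per_run:
        raise ValueError("need at least one test-suite run")
    return sum(1 for failed in failed_per_run if failed) / len(failed_per_run)


def always_fail_together(failed_per_run: List[Set[str]]) -> List[Tuple[str, str]]:
    """Pairs of tests that failed at least once and always failed in the same runs."""
    fail_counts: Counter = Counter()
    pair_counts: Counter = Counter()
    for failed in failed_per_run:
        fail_counts.update(failed)
        pair_counts.update(combinations(sorted(failed), 2))
    return sorted(
        pair for pair, together in pair_counts.items()
        if together == fail_counts[pair[0]] == fail_counts[pair[1]]
    )


# Hypothetical history: the set of failed test names for each TSR.
history = [set(), {"testA", "testB"}, {"testC"}, {"testA", "testB", "testC"}, set()]
related = always_fail_together(history)
```

In this made-up history `testA` and `testB` always fail together, so a system could present them as one group and suggest fixing their likely shared cause first.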

Conclusion

We refine the categorization of non-order-dependent (NOD) flaky tests into:
• Non-Deterministic Order-Dependent (NDOD)
• Non-Deterministic Order-Independent (NDOI)

We provide the following guidelines:
• To detect flaky tests, one should run test suites in more test orders with fewer times each, because many NOD tests are likely NDOD
• To check if test failures are due to flaky tests, one can use 2–5 reruns (50+% fewer than some SW organization’s 10 reruns) and still check correctly most of the time
• To debug flaky tests, one should first run tests in isolation and then in a test suite, because tests tend to run faster and are more likely to fail consecutively in isolation
• To prioritize debugging, flaky-test management systems could present related flaky-test failures, as many test suites have flaky tests that are likely related

Email: winglam2@illinois.edu
