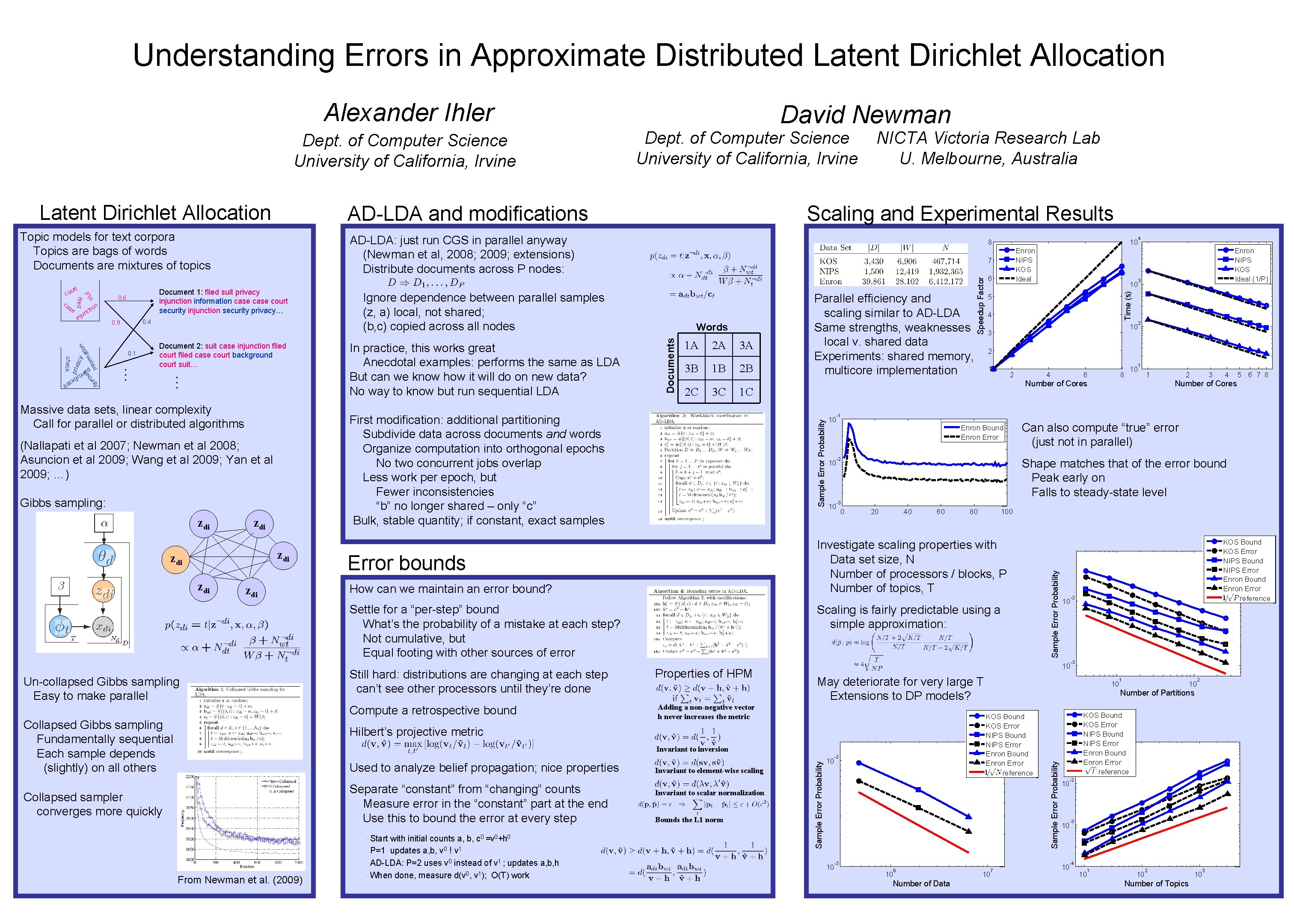

Understanding Errors in Approximate Distributed Latent Dirichlet Allocation

Alexander Ihler, Dept. of Computer Science, University of California, Irvine
David Newman, Dept. of Computer Science, University of California, Irvine, and NICTA Victoria Research Lab, U. Melbourne, Australia

Latent Dirichlet Allocation

- Topic models for text corpora
  - Topics are bags of words
  - Documents are mixtures of topics
- Example documents
  - Document 1: filed suit privacy injunction information case court security injunction security privacy…
  - Document 2: suit case injunction filed court filed case court background court suit…
  - [Figure: example topics shown as word clouds, e.g. information, privacy, security, background, check … and injunction, suit, case, filed, court]
- Massive data sets, linear complexity
  - Call for parallel or distributed algorithms (Nallapati et al 2007; Newman et al 2008; Asuncion et al 2009; Wang et al 2009; Yan et al 2009; …)
- Gibbs sampling: resample each topic assignment z_di
  - Un-collapsed Gibbs sampling: easy to make parallel
  - Collapsed Gibbs sampling: fundamentally sequential; each sample depends (slightly) on all others
  - Collapsed sampler converges more quickly (from Newman et al. (2009))

AD-LDA and modifications

- AD-LDA: just run CGS in parallel anyway (Newman et al, 2008; 2009; extensions)
  - Distribute documents across P nodes
  - Ignore dependence between parallel samples
  - (z, a) local, not shared; (b, c) copied across all nodes
- In practice, this works great
  - Anecdotal examples: performs the same as LDA
  - But can we know how it will do on new data?
  - No way to know but run sequential LDA
- First modification: additional partitioning
  - Subdivide data across documents and words
  - Organize computation into orthogonal epochs; no two concurrent jobs overlap
  - Less work per epoch, but fewer inconsistencies
  - “b” no longer shared; only “c”
  - “c” is a bulk, stable quantity; if constant, exact samples
  - [Figure: Documents by Words partition grid with blocks 1A, 2A, 3A, 3B, 1B, 2B, 2C, 3C, 1C; local v. shared data]
- Parallel efficiency and scaling similar to AD-LDA
  - Same strengths, weaknesses
- Experiments: shared memory, multicore implementation
  - [Figure: Speedup Factor vs. Number of Cores; series: Enron, NIPS, KOS, Ideal]

Error bounds

- How can we maintain an error bound?
  - Still hard: distributions are changing at each step
  - Can’t see other processors until they’re done
- Settle for a “per-step” bound
  - What’s the probability of a mistake at each step?
  - Not cumulative, but on equal footing with other sources of error
- Compute a retrospective bound
  - Separate “constant” from “changing” counts
  - Measure error in the “constant” part at the end
  - Use this to bound the error at every step
- Procedure
  - Start with initial counts a, b, c0 = v0 + h0
  - P=1 updates a, b, v0 → v1
  - AD-LDA: P=2 uses v0 instead of v1; updates a, b, h
  - When done, measure d(v0, v1); O(T) work
- Hilbert’s projective metric (HPM)
  - Used to analyze belief propagation; nice properties
- Properties of HPM
  - Invariant to scalar normalization
  - Invariant to element-wise scaling
  - Invariant to inversion
  - Adding a non-negative vector h never increases the metric
  - Bounds the L1 norm

Scaling and Experimental Results

- Investigate scaling properties with
  - Data set size, N
  - Number of processors / blocks, P
  - Number of topics, T
- Can also compute “true” error (just not in parallel)
  - Shape matches that of the error bound
  - Peak early on
  - Falls to steady-state level
- Scaling is fairly predictable using a simple approximation
- May deteriorate for very large T
- Extensions to DP models?
- [Figures: Sample Error Probability plotted against Number of Data, Number of Partitions, Number of Topics, and Number of Cores; series: KOS Bound, KOS Error, NIPS Bound, NIPS Error, Enron Bound, Enron Error, reference]
- [Figure: Time (s); series: Enron, NIPS, KOS, Ideal (1/P)]
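The poster names Hilbert's projective metric and lists its properties but does not spell out the formula. The sketch below assumes the standard definition for strictly positive vectors, d(x, y) = max_i log(x_i / y_i) - min_i log(x_i / y_i). The function names and the count vectors `v0`, `v1`, `h` are hypothetical and chosen only for illustration; they are not taken from the authors' implementation. The demo uses count vectors with equal totals, and the L1 check uses the simple bound ||p - q||_1 <= exp(d) - 1 for normalized vectors, which may be looser than the bound the poster has in mind.

```python
import math


def hilbert_projective_metric(x, y):
    """Assumed standard definition: max_i log(x_i/y_i) - min_i log(x_i/y_i).

    Requires strictly positive entries (real counts would need smoothing
    before being passed in; that step is not shown here).
    """
    if len(x) != len(y):
        raise ValueError("x and y must have the same length")
    if any(v <= 0 for v in x) or any(v <= 0 for v in y):
        raise ValueError("all entries must be strictly positive")
    log_ratios = [math.log(xi) - math.log(yi) for xi, yi in zip(x, y)]
    return max(log_ratios) - min(log_ratios)


def l1_distance_normalized(x, y):
    """L1 distance between x and y after scaling each to sum to one."""
    sx, sy = sum(x), sum(y)
    return sum(abs(xi / sx - yi / sy) for xi, yi in zip(x, y))


# Hypothetical topic counts (T = 3) with equal totals:
# v0 is the stale copy, v1 the updated one, h the part that did not change.
v0 = [40.0, 25.0, 35.0]
v1 = [38.0, 28.0, 34.0]
h = [500.0, 300.0, 200.0]

d = hilbert_projective_metric(v0, v1)

# Adding the same non-negative vector h to both arguments.
d_with_h = hilbert_projective_metric(
    [a + b for a, b in zip(v0, h)],
    [a + b for a, b in zip(v1, h)],
)

# Scalar normalization, element-wise scaling, and inversion.
d_scaled = hilbert_projective_metric([3 * a for a in v0], [7 * b for b in v1])
s = [2.0, 0.5, 10.0]
d_elementwise = hilbert_projective_metric(
    [si * a for si, a in zip(s, v0)],
    [si * b for si, b in zip(s, v1)],
)
d_inverted = hilbert_projective_metric([1 / a for a in v0], [1 / b for b in v1])

print("d(v0, v1)         =", d)
print("d(v0 + h, v1 + h) =", d_with_h)
print("L1 (normalized)   =", l1_distance_normalized(v0, v1))
```

Computing the metric is a single pass over the T entries, which is consistent with the O(T) cost noted for measuring d(v0, v1) at the end of a sweep.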
