
Umbra: Efficient and Scalable Memory Shadowing
Qin Zhao (MIT), Derek Bruening (VMware), Saman Amarasinghe (MIT)
CGO 2010, Toronto, Canada, April 26, 2010

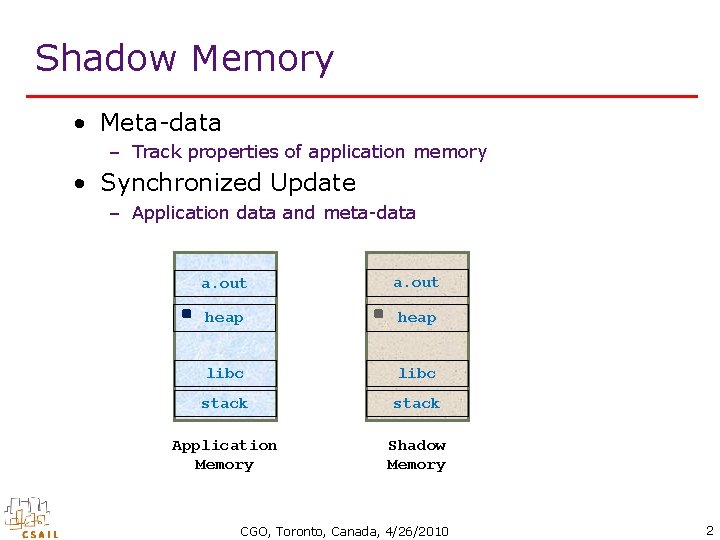

Shadow Memory
• Meta-data
  – Track properties of application memory
• Synchronized Update
  – Application data and meta-data
Figure: application memory (a.out, heap, libc, stack) alongside its shadow memory.


Examples
• Memory Error Detection
  – MemCheck [VEE'07]
  – Purify [USENIX'92]
  – Dr. Memory
  – MemTracker [HPCA'07]
• Dynamic Information Flow Tracking
  – LIFT [MICRO'39]
  – TaintTrace [ISCC'06]
• Multi-threaded Debugging
  – Eraser [TCS'97]
  – Helgrind
• Others
  – Redux [TCS'03]
  – Software Watchpoint [CC'08]


Issues
• Performance
  – Runtime overhead
    • Example: MemCheck 25x [VEE'07]
• Scalability
  – 64-bit architecture
• Dependence
  – OS
  – Hardware
• Development
  – Implemented with specific analysis
  – Lack of a general framework

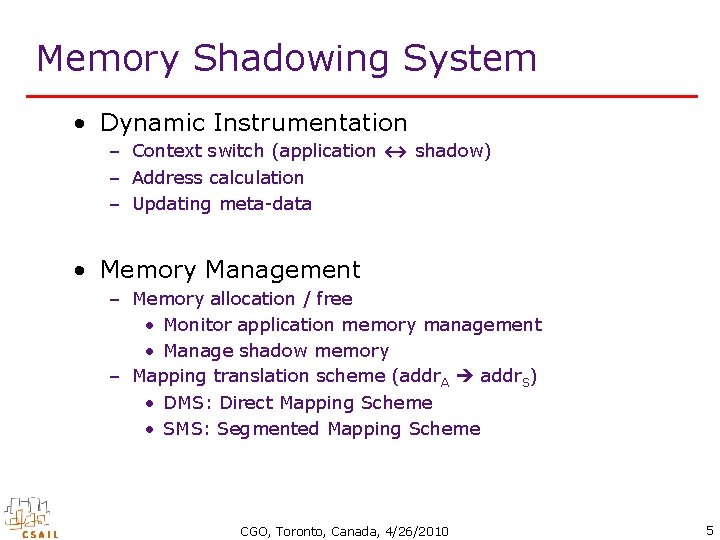

Memory Shadowing System
• Dynamic Instrumentation
  – Context switch (application ↔ shadow)
  – Address calculation
  – Updating meta-data
• Memory Management
  – Memory allocation / free
    • Monitor application memory management
    • Manage shadow memory
  – Mapping translation scheme (addrA → addrS)
    • DMS: Direct Mapping Scheme
    • SMS: Segmented Mapping Scheme

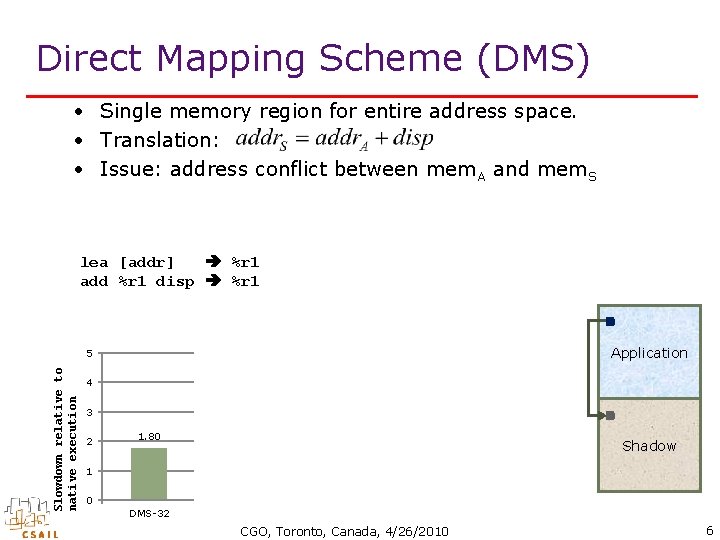

Direct Mapping Scheme (DMS)
• Single memory region for entire address space
• Translation:
    lea [addr] → %r1
    add %r1, disp → %r1
• Issue: address conflict between memA and memS
Figure: application and shadow regions. Chart: slowdown relative to native execution (y-axis 0 to 5) for DMS-32, SMS-32, DMS-64 and SMS-64; values shown: 4.67, 2.40, 1.80.
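The DMS translation on this slide amounts to adding one constant displacement to every application address. A minimal C sketch of that idea follows; the displacement value `DMS_DISP` and the function name `dms_translate` are hypothetical, since the slide does not give a concrete displacement.

```c
#include <stdint.h>

/* Hypothetical displacement; a real system derives it from the
 * address-space layout so that shadow and application regions
 * do not overlap. */
#define DMS_DISP ((uintptr_t)0x20000000u)

/* Direct mapping: addrS = addrA + disp, mirroring the lea/add pair. */
static inline uintptr_t dms_translate(uintptr_t addr_a)
{
    return addr_a + DMS_DISP;
}
```

The appeal of this scheme is that translation is a single add; the cost is that one displacement has to work for the whole address space.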

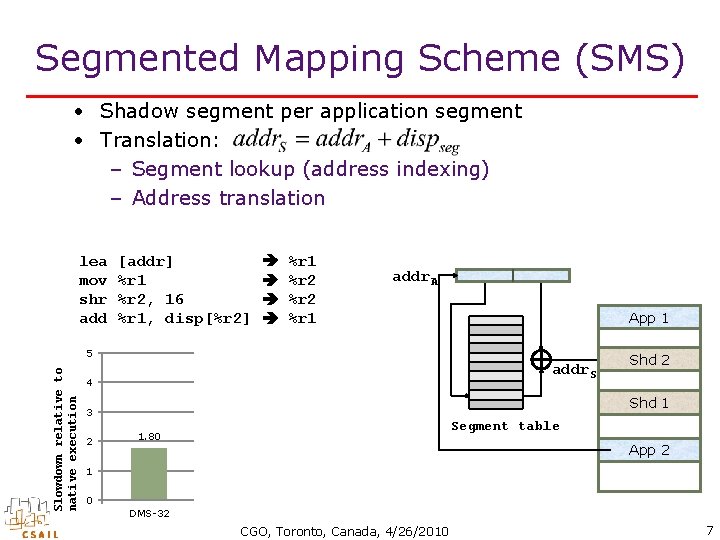

Segmented Mapping Scheme (SMS)
• Shadow segment per application segment
• Translation:
  – Segment lookup (address indexing)
  – Address translation
    lea [addr] → %r1
    mov %r1 → %r2
    shr %r2, 16 → %r2
    add %r1, disp[%r2] → %r1
Figure: addrA indexes the segment table, which maps App 1 and App 2 to Shd 1 and Shd 2 to produce addrS. Chart: slowdown relative to native execution (y-axis 0 to 5) for DMS-32, SMS-32, DMS-64 and SMS-64; values shown: 4.67, 2.40, 1.80.
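The four-instruction SMS sequence can be read as a table lookup keyed by the upper address bits, followed by an add. The sketch below assumes a 32-bit address space and 64 KB segments (from the 16-bit shift); the names `disp_table` and `sms_translate` are illustrative, not taken from Umbra.

```c
#include <stdint.h>

#define SEG_SHIFT 16                        /* matches "shr %r2, 16" */
#define SEG_COUNT (1u << (32 - SEG_SHIFT))  /* 65,536 entries for 32 bits */

/* disp_table[i] holds the displacement for application segment i. */
static int64_t disp_table[SEG_COUNT];

/* Segment lookup by address indexing, then address translation. */
static uint32_t sms_translate(uint32_t addr_a)
{
    uint32_t seg = addr_a >> SEG_SHIFT;
    return (uint32_t)((int64_t)addr_a + disp_table[seg]);
}
```

Because each segment has its own displacement, shadow segments can be placed wherever free space exists, at the price of one extra memory load per translation.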

Umbra
• Mapping Scheme
  – Segmented mapping
  – Scale with actual memory usage
• Implementation
  – DynamoRIO
• Optimization
  – Translation optimization
  – Instrumentation optimization
• Client API
• Experimental Results
  – Performance evaluation
  – Statistics collection

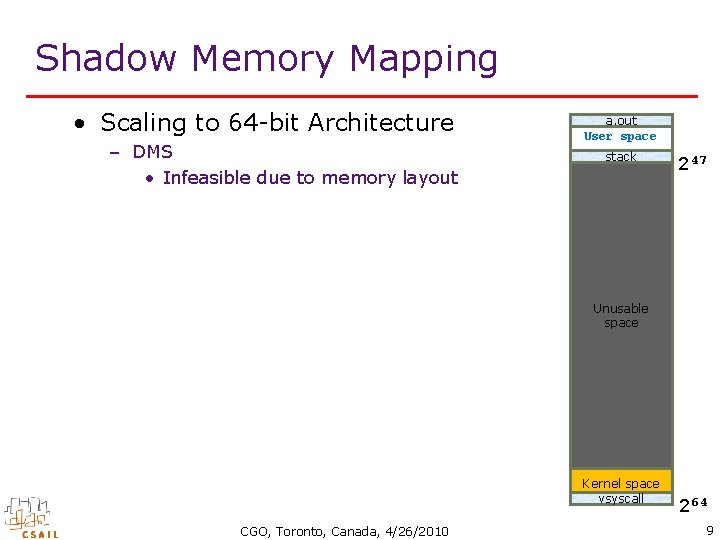

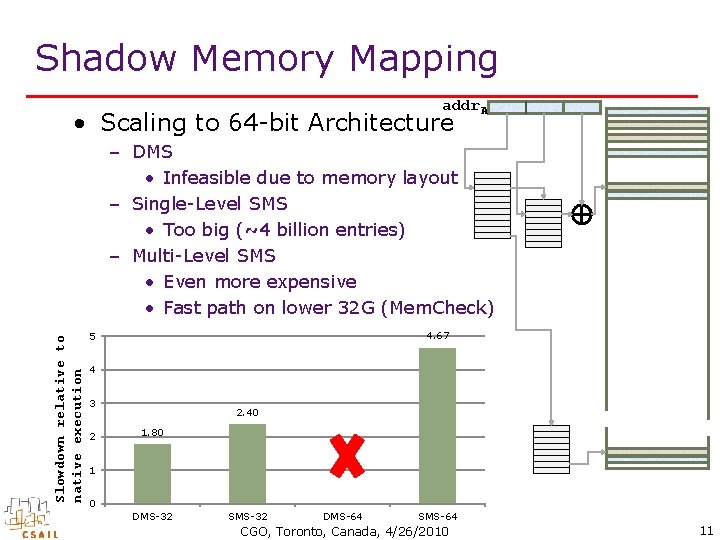

Shadow Memory Mapping
• Scaling to 64-bit Architecture
  – DMS
    • Infeasible due to memory layout
Figure: 64-bit address-space layout with user space (a.out, stack) below 2^47, then unusable space, then kernel space (vsyscall) up to 2^64.

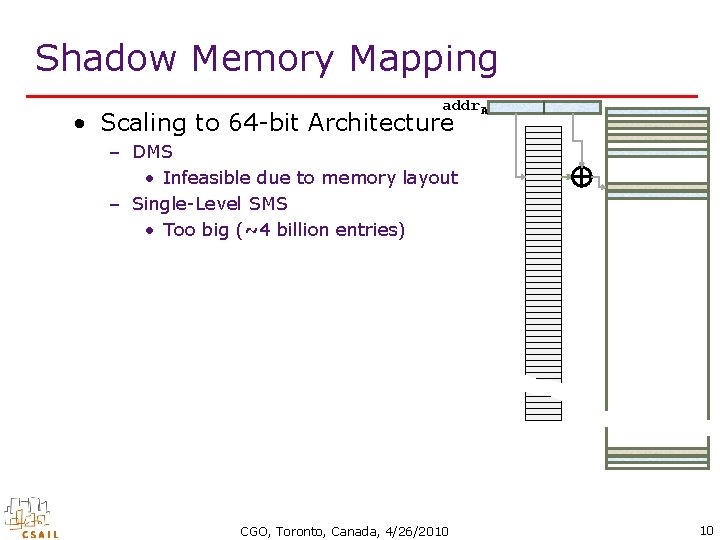

Shadow Memory Mapping
• Scaling to 64-bit Architecture
  – DMS
    • Infeasible due to memory layout
  – Single-Level SMS
    • Too big (~4 billion entries)
Figure label: addrA.

Shadow Memory Mapping
• Scaling to 64-bit Architecture
  – DMS
    • Infeasible due to memory layout
  – Single-Level SMS
    • Too big (~4 billion entries)
  – Multi-Level SMS
    • Even more expensive
    • Fast path on lower 32 GB (MemCheck)
Figure label: addrA. Chart: slowdown relative to native execution (y-axis 0 to 5) for DMS-32, SMS-32, DMS-64 and SMS-64; values shown: 4.67, 2.40, 1.80.
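The "~4 billion entries" figure is consistent with a flat table indexed by the upper address bits. As a rough check, assuming 48-bit virtual addresses and the 64 KB segment size implied by the 16-bit shift in the SMS translation (both are assumptions; the slides do not state them):

$$
\frac{2^{48}\ \text{bytes}}{2^{16}\ \text{bytes per segment}} = 2^{32} \approx 4.3 \times 10^{9}\ \text{entries}
$$

Almost all of those entries would describe address ranges the application never maps, which is why the table is described as too big.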

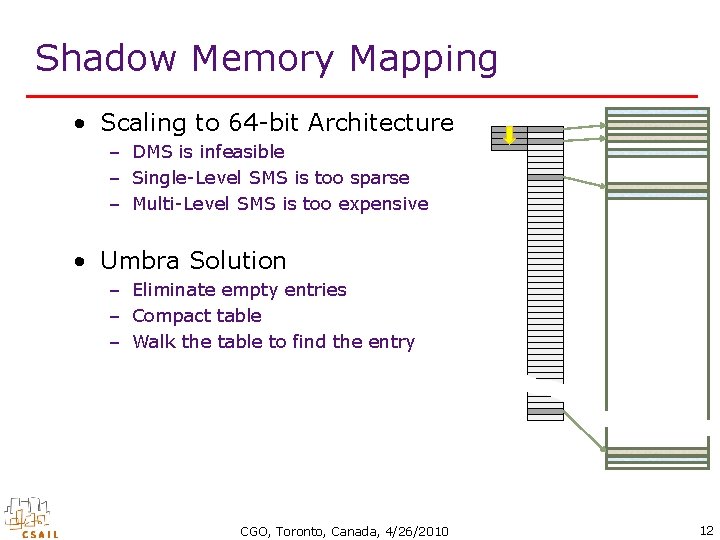

Shadow Memory Mapping
• Scaling to 64-bit Architecture
  – DMS is infeasible
  – Single-Level SMS is too sparse
  – Multi-Level SMS is too expensive
• Umbra Solution
  – Eliminate empty entries
  – Compact table
  – Walk the table to find the entry
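One way to picture the compact table is as an array holding only the application units that actually exist, searched linearly. The following is a sketch under that assumption; the type `map_entry_t` and the functions `table_walk` and `table_translate` are hypothetical names, and Umbra's real entry layout may differ.

```c
#include <stddef.h>
#include <stdint.h>

/* One entry per application memory unit that actually exists. */
typedef struct {
    uintptr_t app_base;  /* first application address in the unit */
    uintptr_t app_end;   /* one past the last application address */
    intptr_t  disp;      /* shadow address = application address + disp */
} map_entry_t;

/* Walk the compact table; return NULL if no unit contains addr_a. */
static const map_entry_t *table_walk(const map_entry_t *table, size_t n,
                                     uintptr_t addr_a)
{
    for (size_t i = 0; i < n; i++) {
        if (addr_a >= table[i].app_base && addr_a < table[i].app_end)
            return &table[i];
    }
    return NULL;
}

/* Translate addr_a; returns 0 when the address is not covered. */
static uintptr_t table_translate(const map_entry_t *table, size_t n,
                                 uintptr_t addr_a)
{
    const map_entry_t *e = table_walk(table, n, addr_a);
    return e ? addr_a + (uintptr_t)e->disp : 0;
}
```

The table size now tracks the number of mapped units rather than the size of the address space, but every lookup is a walk, which is what the later translation optimizations aim to avoid.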

Umbra
• Mapping Scheme √
  – Segmented mapping
  – Scale with actual memory usage
• Implementation
  – DynamoRIO
• Optimization
  – Translation optimization
  – Instrumentation optimization
• Client API
• Experimental Results
  – Performance evaluation
  – Statistics collection

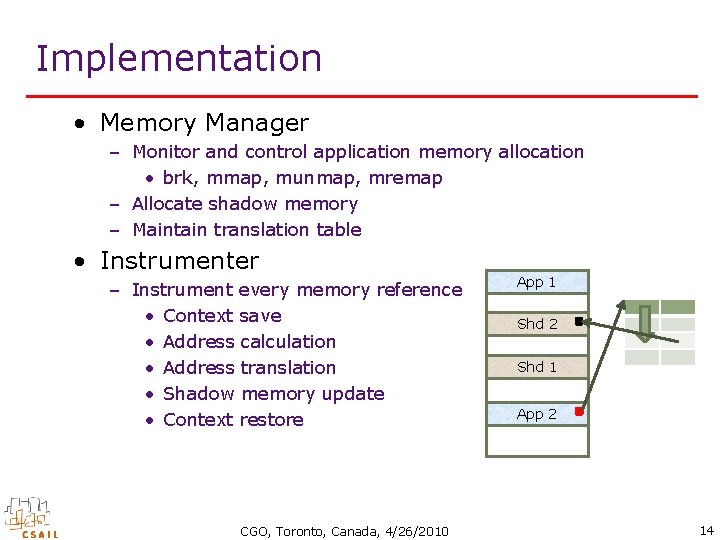

Implementation
• Memory Manager
  – Monitor and control application memory allocation
    • brk, mmap, munmap, mremap
  – Allocate shadow memory
  – Maintain translation table
• Instrumenter
  – Instrument every memory reference
    • Context save
    • Address calculation
    • Address translation
    • Shadow memory update
    • Context restore
Figure: application segments App 1 and App 2 with their shadow segments Shd 1 and Shd 2.

Umbra
• Mapping Scheme √
  – Segmented mapping
  – Scale with actual memory usage
• Implementation √
  – DynamoRIO
• Optimization
  – Translation optimization
  – Instrumentation optimization
• Client API
• Experimental Results
  – Performance evaluation
  – Statistics collection

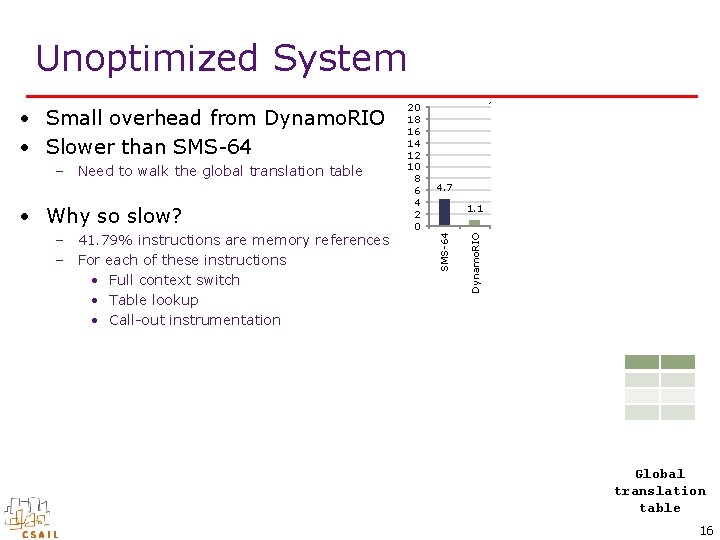

Unoptimized System
• Small overhead from DynamoRIO
• Slower than SMS-64
  – Need to walk the global translation table
• Why so slow?
  – 41.79% instructions are memory references
  – For each of these instructions
    • Full context switch
    • Table lookup
    • Call-out instrumentation
Figure: global translation table. Bar chart (y-axis 0 to 20) with bars SMS-64, DynamoRIO, Unoptimized, Local Translation Table, Hash Table, Memoization Check, Reference Cache, Context Switch Reduction and Reference Grouping; values shown, in descending order: ~100 (100.0), 15.8, 15.2, 12.0, 8.3, 4.7, 3.1, 2.5, 1.1.

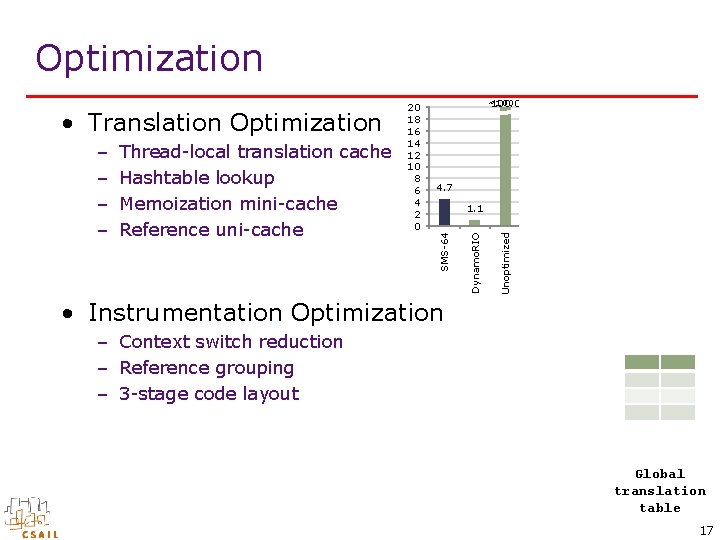

Optimization
• Translation Optimization
  – Thread-local translation cache
  – Hashtable lookup
  – Memoization mini-cache
  – Reference uni-cache
• Instrumentation Optimization
  – Context switch reduction
  – Reference grouping
  – 3-stage code layout
Figure: global translation table. Chart: same bars as on the Unoptimized System slide.

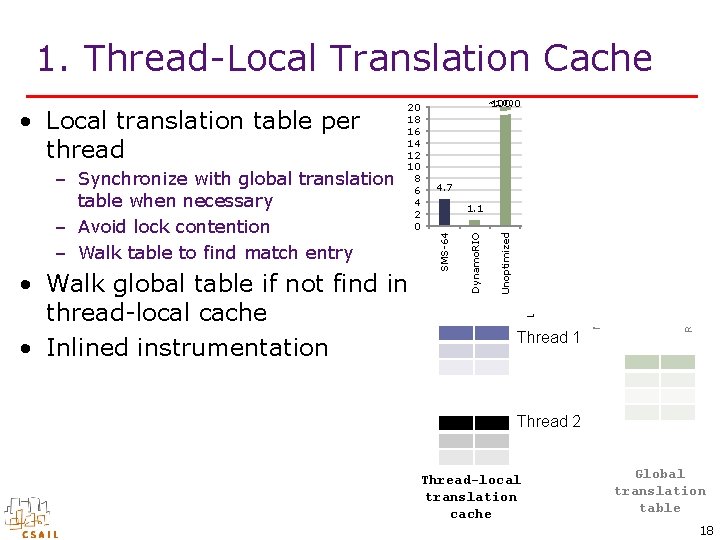

1. Thread-Local Translation Cache
• Local translation table per thread
  – Synchronize with global translation table when necessary
  – Avoid lock contention
  – Walk table to find matching entry
• Walk global table if not found in thread-local cache
• Inlined instrumentation
Figure: Thread 1 and Thread 2, each with a thread-local translation cache, above the shared global translation table. Chart: same bars as on the Unoptimized System slide.
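The lookup order described here (local table first, global table on a miss) might look roughly like the sketch below. It is a simplification: the local cache is passed explicitly instead of living in thread-local storage, the global table is accessed without the lock a real system would need, and all names (`unit_t`, `local_cache_t`, `local_lookup`) are hypothetical.

```c
#include <stddef.h>
#include <stdint.h>

#define LOCAL_MAX 64

typedef struct { uintptr_t base, end; intptr_t disp; } unit_t;

typedef struct {          /* one instance per thread, so no locking needed */
    unit_t units[LOCAL_MAX];
    size_t count;
} local_cache_t;

/* Hypothetical global table; a real system would guard it with a lock. */
static unit_t global_table[LOCAL_MAX];
static size_t global_count;

static const unit_t *walk(const unit_t *t, size_t n, uintptr_t a)
{
    for (size_t i = 0; i < n; i++)
        if (a >= t[i].base && a < t[i].end)
            return &t[i];
    return NULL;
}

/* Look in the thread-local cache first; on a miss, walk the global
 * table and copy the matching entry into the local cache. */
static const unit_t *local_lookup(local_cache_t *lc, uintptr_t a)
{
    const unit_t *u = walk(lc->units, lc->count, a);
    if (u != NULL)
        return u;
    u = walk(global_table, global_count, a);   /* slow path */
    if (u != NULL && lc->count < LOCAL_MAX) {
        lc->units[lc->count] = *u;
        return &lc->units[lc->count++];
    }
    return u;
}
```

After the first miss, repeated references to the same unit are served from the thread's own copy, so threads contend on the global table only when they touch a unit for the first time.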

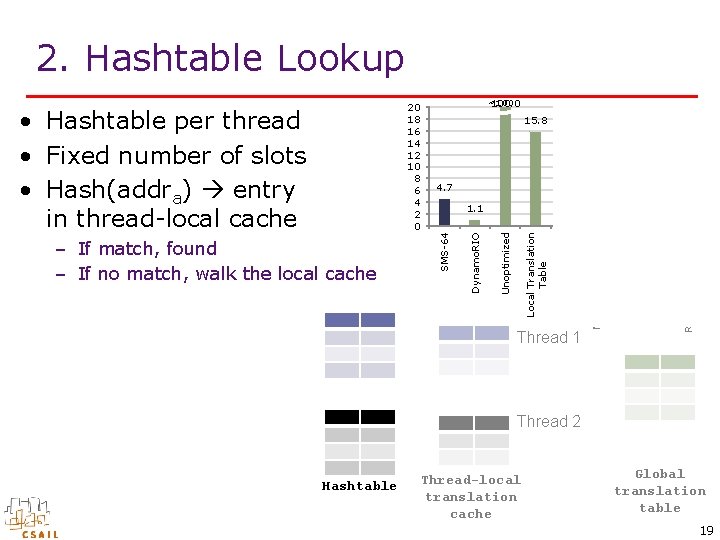

2. Hashtable Lookup
• Hashtable per thread
• Fixed number of slots
• Hash(addrA) → entry in thread-local cache
  – If match, found
  – If no match, walk the local cache
Figure: Thread 1 and Thread 2, each with a hashtable in front of its thread-local translation cache, above the global translation table. Chart: same bars as on the Unoptimized System slide.
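A fixed-size hashtable whose slots point at thread-local cache entries could be sketched as follows. The slot count, the hash function (upper address bits modulo the slot count) and the names `hashtable_t`, `hash_lookup` and `hash_update` are assumptions for illustration; the slide specifies none of them.

```c
#include <stddef.h>
#include <stdint.h>

#define HASH_SLOTS 256   /* fixed number of slots (assumed size) */
#define UNIT_SHIFT 16    /* hash on the 64 KB-aligned part of the address */

typedef struct { uintptr_t base, end; intptr_t disp; } unit_t;

typedef struct {
    const unit_t *slot[HASH_SLOTS];  /* each slot points into the local cache */
} hashtable_t;

static size_t hash_addr(uintptr_t a)
{
    return (size_t)(a >> UNIT_SHIFT) % HASH_SLOTS;
}

/* Return the cached unit if the hashed slot matches, else NULL
 * (the caller then walks the thread-local cache). */
static const unit_t *hash_lookup(const hashtable_t *ht, uintptr_t a)
{
    const unit_t *u = ht->slot[hash_addr(a)];
    if (u != NULL && a >= u->base && a < u->end)
        return u;
    return NULL;
}

/* Remember the unit found by the slower walk for next time. */
static void hash_update(hashtable_t *ht, uintptr_t a, const unit_t *u)
{
    ht->slot[hash_addr(a)] = u;
}
```

A slot miss does not mean the address is unmapped; it only means this shortcut failed and the walk is still required.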

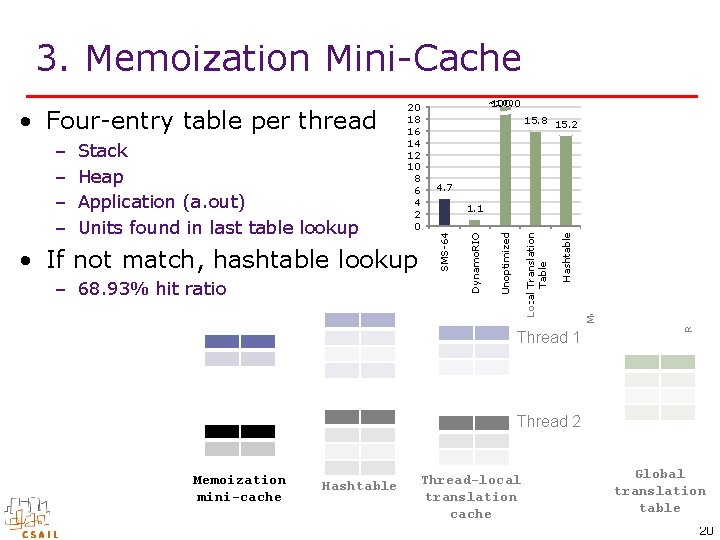

3. Memoization Mini-Cache
• Four-entry table per thread
  – Stack, Heap, Application (a.out)
  – Units found in last table lookup
• If not match, hashtable lookup
  – 68.93% hit ratio
Figure: Thread 1 and Thread 2, each with a memoization mini-cache, hashtable and thread-local translation cache, above the global translation table. Chart: same bars as on the Unoptimized System slide.
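A four-entry memoization table can be checked with a handful of compares before any hashing happens. The sketch below assumes one slot each for the stack, heap and application image plus one slot for the most recently found unit; the enum and function names are hypothetical.

```c
#include <stddef.h>
#include <stdint.h>

typedef struct { uintptr_t base, end; intptr_t disp; } unit_t;

enum { MEMO_STACK, MEMO_HEAP, MEMO_APP, MEMO_LAST, MEMO_SIZE };

typedef struct {
    unit_t entry[MEMO_SIZE];     /* four entries per thread */
} memo_cache_t;

/* Check the four entries; on a hit store the shadow address and return 1.
 * Zero-initialized entries (base == end == 0) never match. */
static int memo_lookup(const memo_cache_t *mc, uintptr_t a, uintptr_t *shadow)
{
    for (int i = 0; i < MEMO_SIZE; i++) {
        const unit_t *u = &mc->entry[i];
        if (a >= u->base && a < u->end) {
            *shadow = a + (uintptr_t)u->disp;
            return 1;
        }
    }
    return 0;                    /* miss: fall back to the hashtable lookup */
}

/* After a slower lookup succeeds, remember that unit in the last slot. */
static void memo_record_last(memo_cache_t *mc, const unit_t *found)
{
    mc->entry[MEMO_LAST] = *found;
}
```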

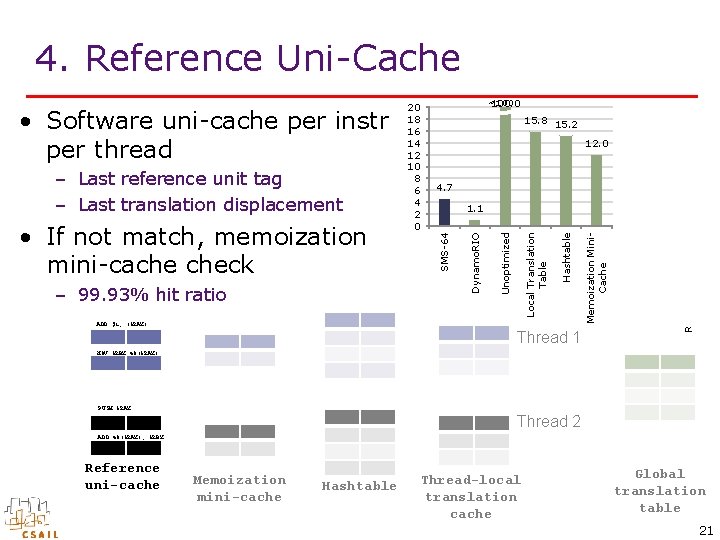

4. Reference Uni-Cache
• Software uni-cache per instr per thread
  – Last reference unit tag
  – Last translation displacement
• If not match, memoization mini-cache check
  – 99.93% hit ratio
Figure: example instructions ADD $1, (%RAX); MOV %RBX, 48(%RAX); PUSH %RAX; ADD 40(%RAX), %RBX, each backed per thread by a reference uni-cache, memoization mini-cache, hashtable and thread-local translation cache, above the global translation table. Chart: same bars as on the Unoptimized System slide.
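The uni-cache is a one-entry cache attached to a single instruction: a tag identifying the unit it touched last time, and the displacement that worked then. In the sketch below the tag is simply the address shifted right by 16 bits, which is an assumption about granularity, and the names `uni_cache_t`, `uni_lookup` and `uni_fill` are hypothetical.

```c
#include <stdint.h>

#define UNIT_SHIFT 16   /* assumed 64 KB unit granularity */

/* One of these per instrumented instruction, per thread. */
typedef struct {
    uintptr_t tag;      /* last reference unit tag */
    intptr_t  disp;     /* last translation displacement */
} uni_cache_t;

/* A tag no shifted address can equal, so a fresh cache never hits. */
#define UNI_EMPTY {UINTPTR_MAX, 0}

/* Fast path: if the address falls in the same unit as last time,
 * reuse the cached displacement. Returns 1 on a hit. */
static int uni_lookup(const uni_cache_t *uc, uintptr_t a, uintptr_t *shadow)
{
    if ((a >> UNIT_SHIFT) != uc->tag)
        return 0;       /* miss: go to the memoization mini-cache check */
    *shadow = a + (uintptr_t)uc->disp;
    return 1;
}

/* Refill after a miss has been resolved by a slower lookup. */
static void uni_fill(uni_cache_t *uc, uintptr_t a, intptr_t disp)
{
    uc->tag = a >> UNIT_SHIFT;
    uc->disp = disp;
}
```

The reported 99.93% hit ratio suggests that a given instruction nearly always touches the same unit it touched on its previous execution, which is why a single entry is enough.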

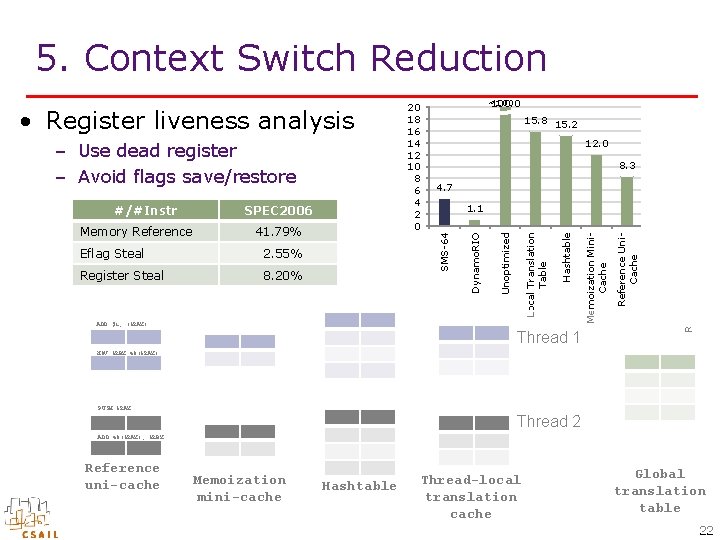

5. Context Switch Reduction
• Register liveness analysis
  – Use dead register
  – Avoid flags save/restore

| #/#Instr | SPEC 2006 |
|---|---|
| Memory Reference | 41.79% |
| Eflag Steal | 2.55% |
| Register Steal | 8.20% |

Figure: example instructions ADD $1, (%RAX); MOV %RBX, 48(%RAX); PUSH %RAX; ADD 40(%RAX), %RBX with the per-thread lookup hierarchy (reference uni-cache, memoization mini-cache, hashtable, thread-local translation cache) above the global translation table. Chart: same bars as on the Unoptimized System slide.
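Finding a dead register is a standard backward liveness scan over a basic block. The toy model below represents each instruction by the sets of registers it reads and writes; it is a generic illustration of the technique, not Umbra's or DynamoRIO's actual analysis, and all names are hypothetical.

```c
#include <stdint.h>

#define NUM_REGS 16

/* Toy instruction: bit r of reads/writes is set if register r is
 * read/written by the instruction. */
typedef struct { uint16_t reads, writes; } toy_instr_t;

/* Return the mask of registers that are dead just before instruction
 * `pos`: registers overwritten later in the block without being read
 * first. Registers never touched are conservatively treated as live. */
static uint16_t dead_regs_before(const toy_instr_t *block, int n, int pos)
{
    uint16_t dead = 0;                      /* liveness unknown at block exit */
    for (int i = n - 1; i >= pos; i--) {
        dead |= block[i].writes;            /* a write kills the old value */
        dead &= (uint16_t)~block[i].reads;  /* a read makes it live again */
    }
    return dead;
}

/* Pick one dead register to steal, or -1 if a spill is needed. */
static int pick_dead_reg(uint16_t dead)
{
    for (int r = 0; r < NUM_REGS; r++)
        if (dead & (1u << r))
            return r;
    return -1;
}
```

When a dead register exists, the instrumentation can use it as scratch space without saving and restoring it; the same reasoning applied to the flags register avoids the flags save/restore.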

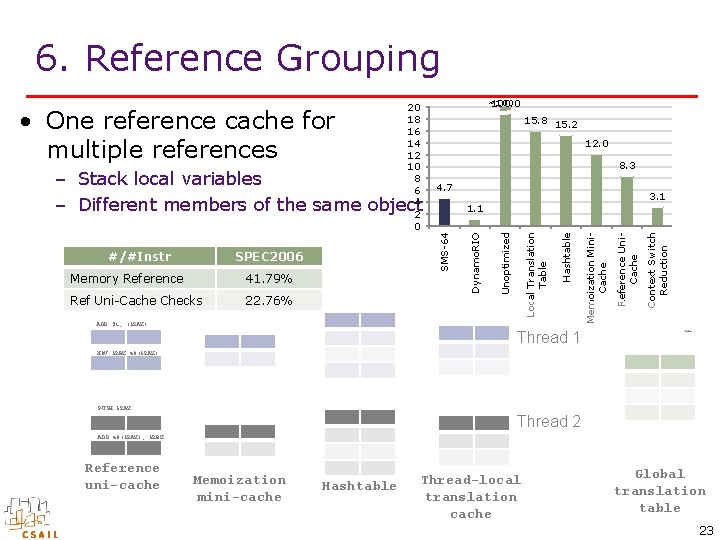

6. Reference Grouping
• One reference cache for multiple references
  – Stack local variables
  – Different members of the same object

| #/#Instr | SPEC 2006 |
|---|---|
| Memory Reference | 41.79% |
| Ref Uni-Cache Checks | 22.76% |

Figure: example instructions ADD $1, (%RAX); MOV %RBX, 48(%RAX); PUSH %RAX; ADD 40(%RAX), %RBX with the per-thread lookup hierarchy (reference uni-cache, memoization mini-cache, hashtable, thread-local translation cache) above the global translation table. Chart: same bars as on the Unoptimized System slide.
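Grouping can be illustrated by assigning references that share a base register to one uni-cache check. The sketch assumes the base register is not modified within the block and that the displacements are small enough to stay inside one unit; the slides do not spell out Umbra's exact grouping rule, and `toy_ref_t` and `group_refs` are hypothetical names.

```c
#include <stddef.h>

/* Toy memory reference: base register number plus displacement. */
typedef struct { int base_reg; long disp; int group; } toy_ref_t;

/* Assign a group id to each reference; references with the same base
 * register share a group. Returns the number of groups, i.e. the
 * number of uni-cache checks needed for the block. */
static int group_refs(toy_ref_t *refs, size_t n)
{
    int groups = 0;
    for (size_t i = 0; i < n; i++) {
        refs[i].group = -1;
        for (size_t j = 0; j < i; j++) {
            if (refs[j].base_reg == refs[i].base_reg) {
                refs[i].group = refs[j].group;
                break;
            }
        }
        if (refs[i].group < 0)
            refs[i].group = groups++;
    }
    return groups;
}
```

Applied to the four example instructions, with register 0 standing for %RAX and register 4 for the stack pointer used by PUSH, the three %RAX-based references fall into one group and the push into another, so two checks replace four.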

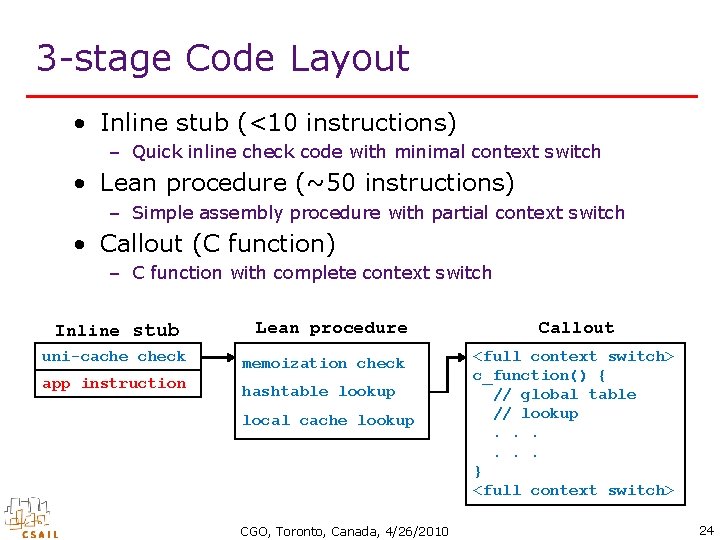

3-stage Code Layout
• Inline stub (<10 instructions)
  – Quick inline check code with minimal context switch
• Lean procedure (~50 instructions)
  – Simple assembly procedure with partial context switch
• Callout (C function)
  – C function with complete context switch
Figure: the inline stub (uni-cache check, app instruction) falls back to the lean procedure (memoization check, hashtable lookup, local cache lookup), which falls back to the callout (full context switch, c_function() performing the global table lookup, full context switch).
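The three stages form a fall-through chain in which each stage is more expensive and less frequently reached than the one before. The toy model below only tracks which stage resolves an address; the two tags stand in for the real uni-cache and for the lean procedure's lookups, and every name in it is hypothetical.

```c
#include <stdint.h>

typedef enum { STAGE_INLINE, STAGE_LEAN, STAGE_CALLOUT } stage_t;

typedef struct {
    uintptr_t uni_tag;       /* checked by the inline stub */
    uintptr_t memo_tag;      /* stands in for the lean procedure's lookups */
} toy_state_t;

#define UNIT(a) ((uintptr_t)(a) >> 16)

/* Report which stage resolves the address, refilling the faster
 * caches on the way back so the next reference hits earlier. */
static stage_t resolve(toy_state_t *st, uintptr_t a)
{
    if (UNIT(a) == st->uni_tag)
        return STAGE_INLINE;             /* minimal context switch */
    if (UNIT(a) == st->memo_tag) {
        st->uni_tag = UNIT(a);
        return STAGE_LEAN;               /* partial context switch */
    }
    st->memo_tag = UNIT(a);              /* full context switch, C callout */
    st->uni_tag = UNIT(a);
    return STAGE_CALLOUT;
}
```

Keeping the common case in a stub of fewer than ten instructions is what lets it be inlined at every memory reference without bloating the code cache.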

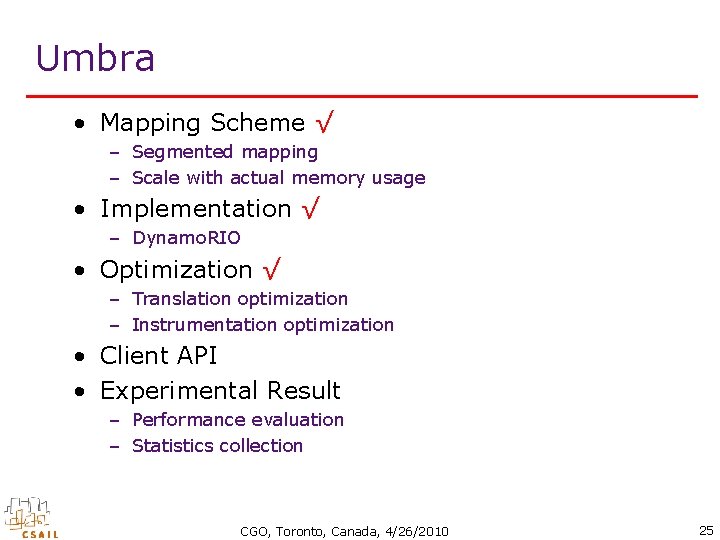

Umbra
• Mapping Scheme √
  – Segmented mapping
  – Scale with actual memory usage
• Implementation √
  – DynamoRIO
• Optimization √
  – Translation optimization
  – Instrumentation optimization
• Client API
• Experimental Results
  – Performance evaluation
  – Statistics collection

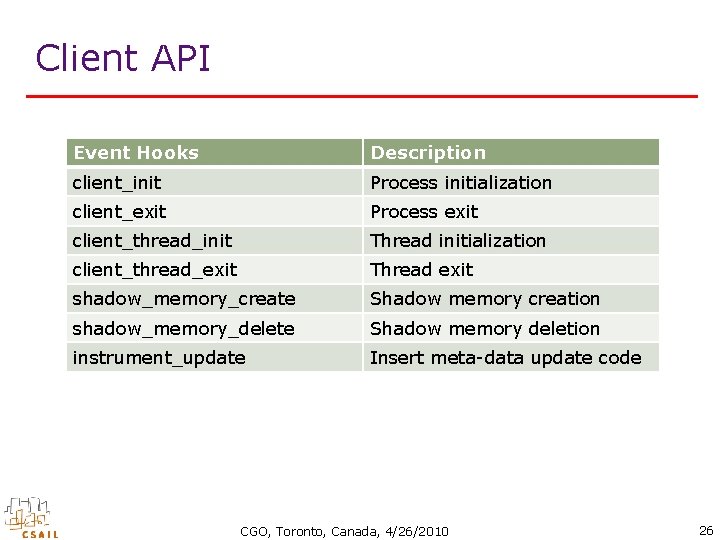

Client API

| Event Hook | Description |
|---|---|
| client_init | Process initialization |
| client_exit | Process exit |
| client_thread_init | Thread initialization |
| client_thread_exit | Thread exit |
| shadow_memory_create | Shadow memory creation |
| shadow_memory_delete | Shadow memory deletion |
| instrument_update | Insert meta-data update code |

Umbra Client: Shared Memory Detection
• Meta-data maintains a bit map to store which threads access the associated memory

    static void
    instrument_update(void *drcontext, umbra_info_t *umbra_info,
                      mem_ref_t *ref, instrlist_t *ilist, instr_t *where)
    {
        …
        /* lock or [%r1], tid_map → [%r1] */
        opnd1 = OPND_CREATE_MEM32(umbra_info->reg, 0, OPSZ_4);
        opnd2 = OPND_CREATE_INT32(client_tls_data->tid_map);
        instr = INSTR_CREATE_or(drcontext, opnd1, opnd2);
        LOCK(instr);
        instrlist_meta_preinsert(ilist, label, instr);
    }
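The effect of the inserted `lock or` can be modelled in plain C11 with an atomic OR on the shadow word. This is a stand-alone sketch of the meta-data update only, not DynamoRIO client code; the one-bit-per-thread layout and the names `record_access` and `is_shared` are assumptions.

```c
#include <stdatomic.h>
#include <stdint.h>

/* One 32-bit bitmap of accessing threads per shadowed unit (assumed layout). */
typedef _Atomic(uint32_t) shadow_word_t;

/* Equivalent of "lock or [shadow], tid_map": set this thread's bit. */
static void record_access(shadow_word_t *shadow, uint32_t tid_map)
{
    atomic_fetch_or(shadow, tid_map);
}

/* Memory is shared if more than one thread bit is set. */
static int is_shared(shadow_word_t *shadow)
{
    uint32_t bits = atomic_load(shadow);
    return (bits & (bits - 1)) != 0;
}
```

Because the update is a single atomic OR, repeated accesses by the same thread are idempotent and concurrent accesses by different threads cannot lose a bit.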

Umbra
• Mapping Scheme √
  – Segmented mapping
  – Scale with actual memory usage
• Implementation √
  – DynamoRIO
• Optimization √
  – Translation optimization
  – Instrumentation optimization
• Client API √
• Experimental Results
  – Performance evaluation
  – Statistics collection

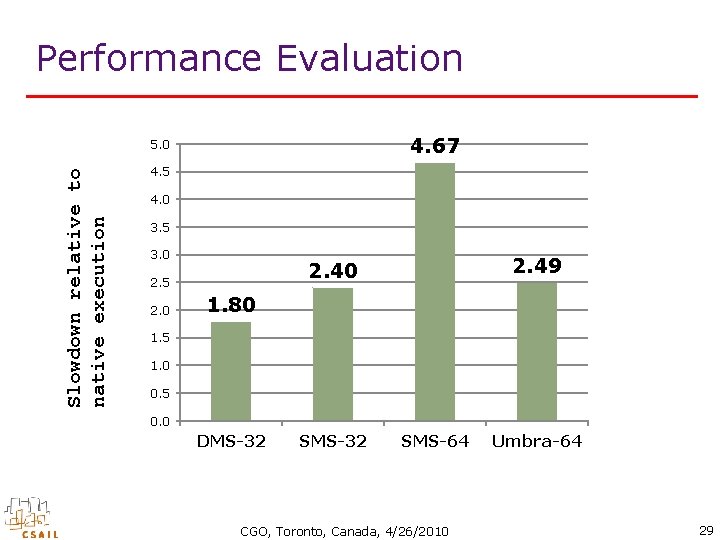

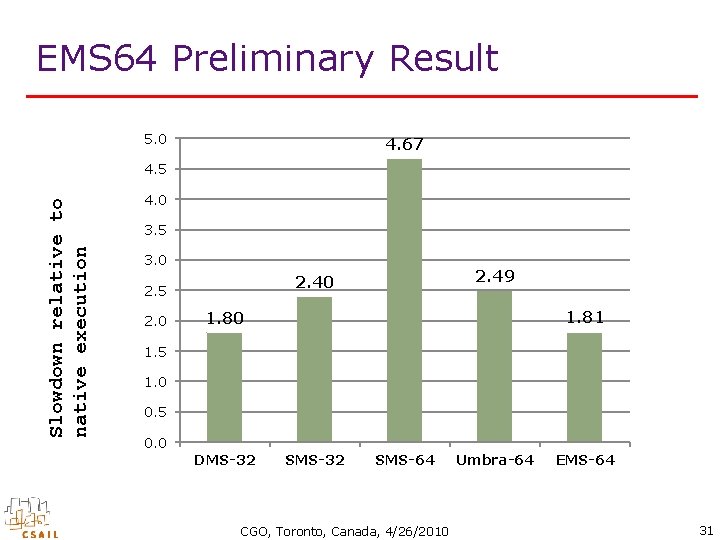

Performance Evaluation
Figure: slowdown relative to native execution (y-axis 0.0 to 5.0) for DMS-32, SMS-64 and Umbra-64; values shown on the chart: 4.67, 2.49, 2.40, 1.80.

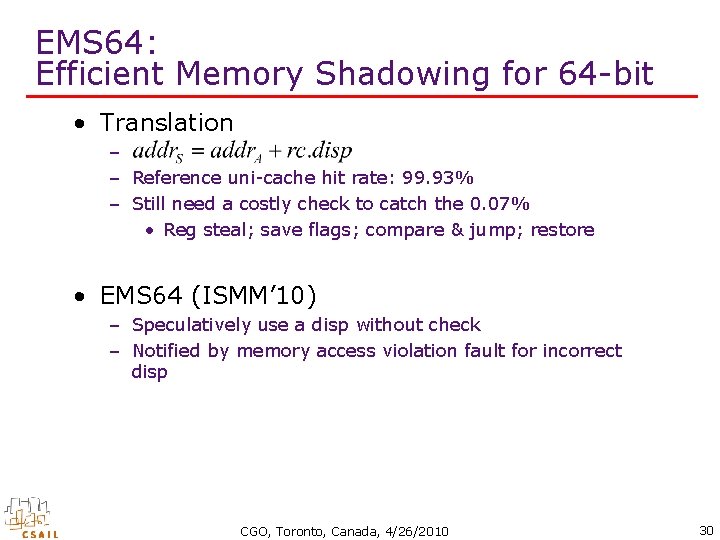

EMS64: Efficient Memory Shadowing for 64-bit
• Translation
  – Reference uni-cache hit rate: 99.93%
  – Still need a costly check to catch the 0.07%
    • Reg steal; save flags; compare & jump; restore
• EMS64 (ISMM'10)
  – Speculatively use a disp without check
  – Notified by memory access violation fault for incorrect disp

EMS64 Preliminary Result
Figure: slowdown relative to native execution (y-axis 0.0 to 5.0) for DMS-32, SMS-64, Umbra-64 and EMS-64; values shown on the chart: 4.67, 2.49, 2.40, 1.81, 1.80.

Thanks
• Download
  – http://people.csail.mit.edu/qin_zhao/umbra/
• Q&A
