UKI-SouthGrid Overview, GridPP 27

Pete Gronbech, SouthGrid Technical Coordinator. CERN, September 2011

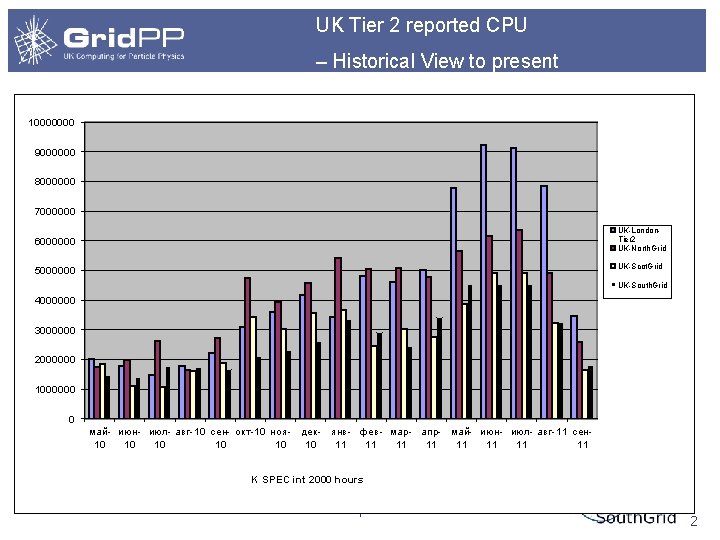

Figure: UK Tier 2 reported CPU, historical view to present. Series: UK-London Tier 2, UK-NorthGrid, UK-ScotGrid, UK-SouthGrid. Horizontal axis: months from May 2010 to September 2011. Vertical axis: K SPECint2000 hours, 0 to 10,000,000.

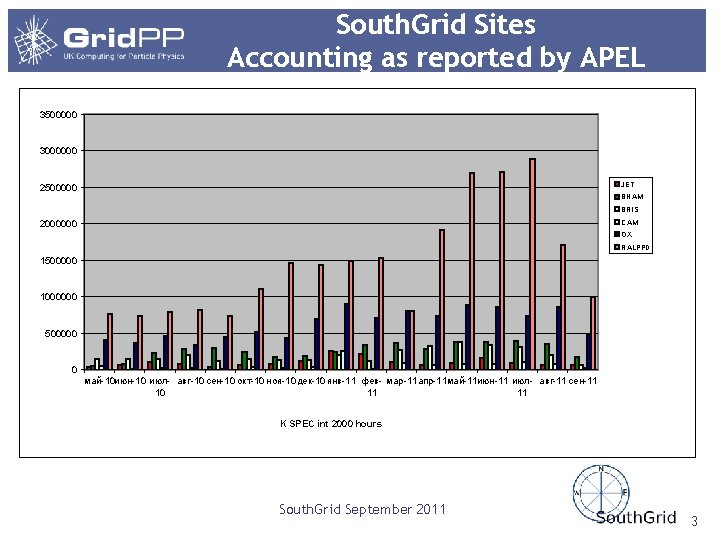

Figure: SouthGrid sites accounting as reported by APEL. Series: JET, BHAM, BRIS, CAM, OX, RALPPD. Horizontal axis: months from May 2010 to September 2011. Vertical axis: K SPECint2000 hours, 0 to 3,500,000.

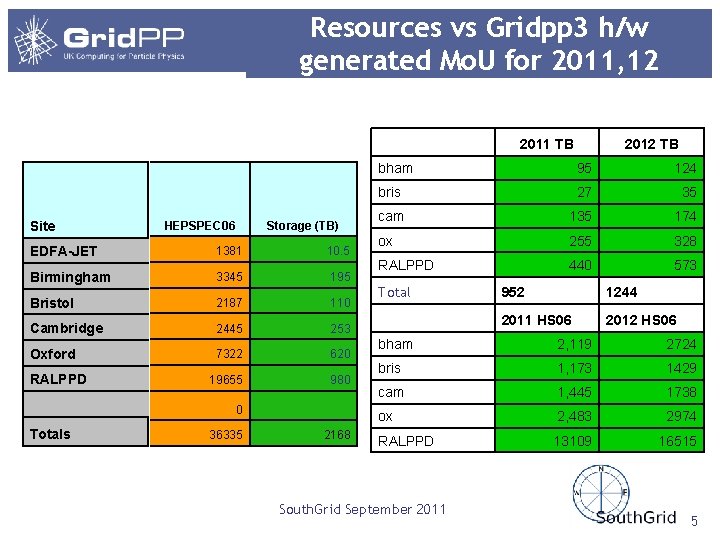

Resources vs GridPP3 h/w generated MoU for 2011 and 2012

| Site | HEPSPEC06 | Storage (TB) |
|---|---|---|
| EFDA-JET | 1381 | 10.5 |
| Birmingham | 3345 | 195 |
| Bristol | 2187 | 110 |
| Cambridge | 2445 | 253 |
| Oxford | 7322 | 620 |
| RALPPD | 19655 | 980 |
| Totals | 36335 | 2168 |

| Site | 2011 TB | 2012 TB |
|---|---|---|
| bham | 95 | 124 |
| bris | 27 | 35 |
| cam | 135 | 174 |
| ox | 255 | 328 |
| RALPPD | 440 | 573 |
| Total | 952 | 1244 |

| Site | 2011 HS06 | 2012 HS06 |
|---|---|---|
| bham | 2,119 | 2724 |
| bris | 1,173 | 1429 |
| cam | 1,445 | 1738 |
| ox | 2,483 | 2974 |
| RALPPD | 13109 | 16515 |
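As a rough cross-check of the tables above, the following sketch compares each site's reported resources with its 2011 MoU figures. It assumes that the lower-case MoU keys (bham, bris, cam, ox) refer to Birmingham, Bristol, Cambridge and Oxford; the function name `mou_ratio` is illustrative only. JET is left out because no MoU figure is listed for it.

```python
# Figures transcribed from the slide's tables; keys follow the MoU table.
delivered = {  # site: (HEPSPEC06, storage in TB)
    "bham": (3345, 195),
    "bris": (2187, 110),
    "cam": (2445, 253),
    "ox": (7322, 620),
    "RALPPD": (19655, 980),
}
mou_2011 = {  # site: (HS06, TB) from the 2011 MoU columns
    "bham": (2119, 95),
    "bris": (1173, 27),
    "cam": (1445, 135),
    "ox": (2483, 255),
    "RALPPD": (13109, 440),
}


def mou_ratio(site):
    """Return (cpu_ratio, storage_ratio) of reported resources to the 2011 MoU."""
    hs06, tb = delivered[site]
    mou_hs06, mou_tb = mou_2011[site]
    return hs06 / mou_hs06, tb / mou_tb


for site in delivered:
    cpu, disk = mou_ratio(site)
    print(f"{site:7s} CPU x{cpu:.2f}  storage x{disk:.2f}")
```

On these numbers every listed site exceeds both its CPU and storage MoU for 2011.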

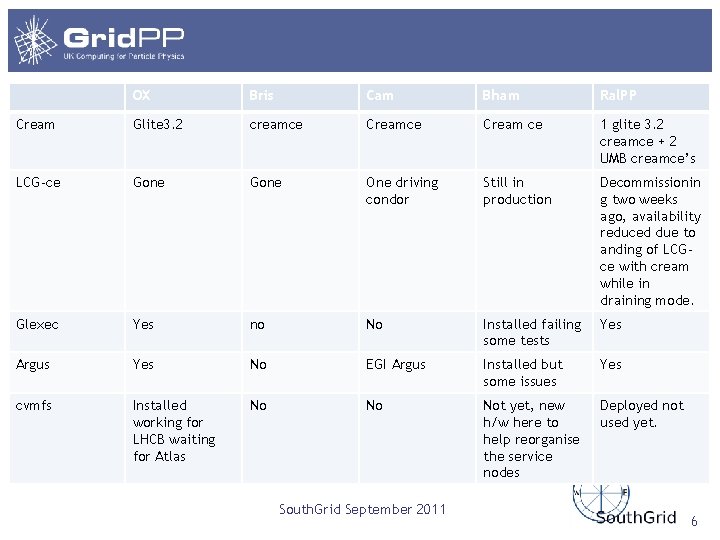

Sites (columns): OX | Bris | Cam | Bham | RALPP

- Cream: gLite 3.2 creamce | Cream ce | 1 gLite 3.2 creamce + 2 UMD creamce's
- LCG-ce: Gone | One driving Condor | Still in production | Decommissioned two weeks ago; availability reduced due to ANDing of LCG-ce with CREAM while in draining mode
- Glexec: Yes | No | No | Installed, failing some tests | Yes
- Argus: Yes | No | EGI Argus | Installed but some issues | Yes
- cvmfs: Installed, working for LHCb, waiting for ATLAS | No | No | Not yet, new h/w here to help reorganise the service nodes | Deployed, not used yet

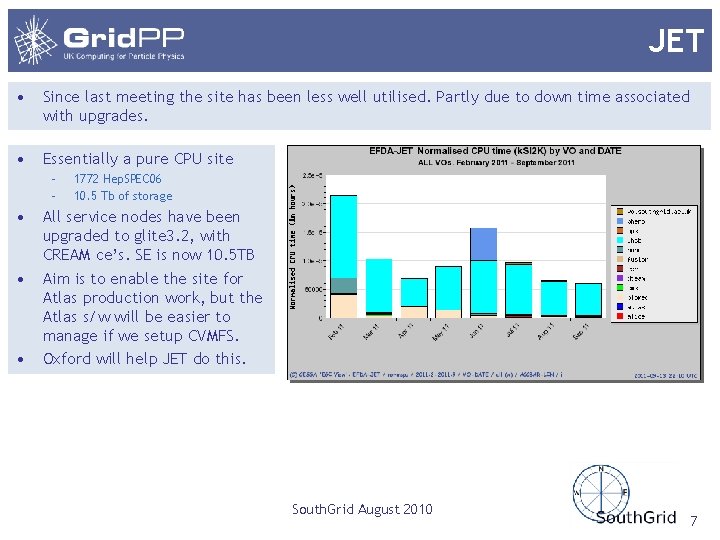

JET

- Since the last meeting the site has been less well utilised, partly due to downtime associated with upgrades.
- Essentially a pure CPU site
  - 1772 HEPSPEC06
  - 10.5 TB of storage
- All service nodes have been upgraded to gLite 3.2, with CREAM CEs.
- SE is now 10.5 TB.
- Aim is to enable the site for ATLAS production work, but the ATLAS s/w will be easier to manage if we set up CVMFS. Oxford will help JET do this.

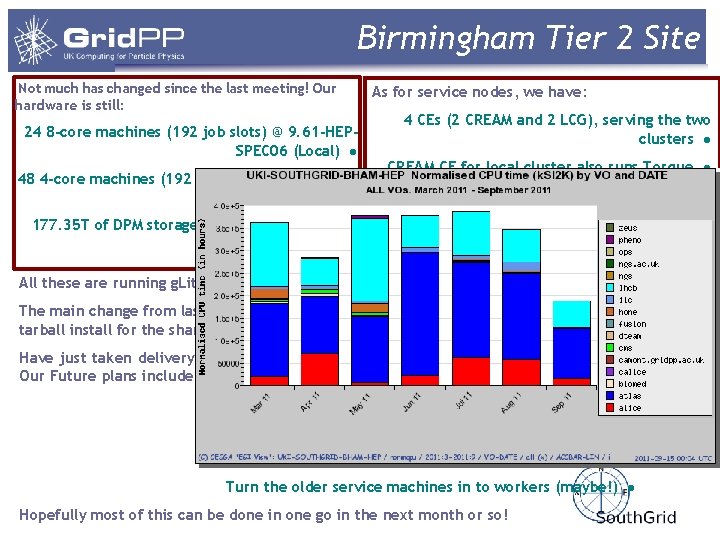

Birmingham Tier 2 Site

Not much has changed since the last meeting! Our hardware is still:

- 24 8-core machines (192 job slots) @ 9.61 HEPSPEC06 (Local)
- 48 4-core machines (192 job slots) @ 7.93 HEPSPEC06 (Shared)
- 177.35 TB of DPM storage across 4 pool nodes

As for service nodes, we have:

- 4 CEs (2 CREAM and 2 LCG), serving the two clusters
- CREAM CE for local cluster also runs Torque
- 2 ALICE VO boxes, 1 for each cluster
- An ARGUS server for the local cluster
- Usual BDII, APEL and DPM MySQL server nodes

All these are running gLite 3.2 on SL5, with the exception of the LCG CEs.

The main change from last time is that we have deployed glexec on the local cluster; we are still waiting on a tarball install for the shared cluster.

Have just taken delivery of 2 new 8-core systems to replace the 4 quad-core service machines.

Our future plans include:

- Decommission the LCG CEs
- Consolidate service nodes on to new machines
- Split the Torque server and CREAM CE
- Deploy CVMFS
- Turn the older service machines into workers (maybe!)

Hopefully most of this can be done in one go in the next month or so!
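The hardware list above can be turned into an approximate site capacity. This is a minimal sketch that assumes the quoted HEPSPEC06 values (9.61 and 7.93) are per job slot, which the slide does not state explicitly; the names used are illustrative.

```python
# (machines, cores per machine, HEPSPEC06 per job slot), from the hardware list.
# Assumption: one job slot per core and the HS06 figure is per slot.
clusters = {
    "local": (24, 8, 9.61),
    "shared": (48, 4, 7.93),
}


def cluster_capacity(machines, cores, hs06_per_slot):
    """Return (job_slots, total_hs06) for one cluster."""
    slots = machines * cores
    return slots, slots * hs06_per_slot


total_slots = 0
total_hs06 = 0.0
for name, spec in clusters.items():
    slots, hs06 = cluster_capacity(*spec)
    total_slots += slots
    total_hs06 += hs06
    print(f"{name}: {slots} slots, {hs06:.2f} HS06")

print(f"total: {total_slots} slots, {total_hs06:.2f} HS06")
```

Under that assumption the two clusters come to roughly 3368 HS06, close to the 3345 HEPSPEC06 listed for Birmingham in the resources table.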

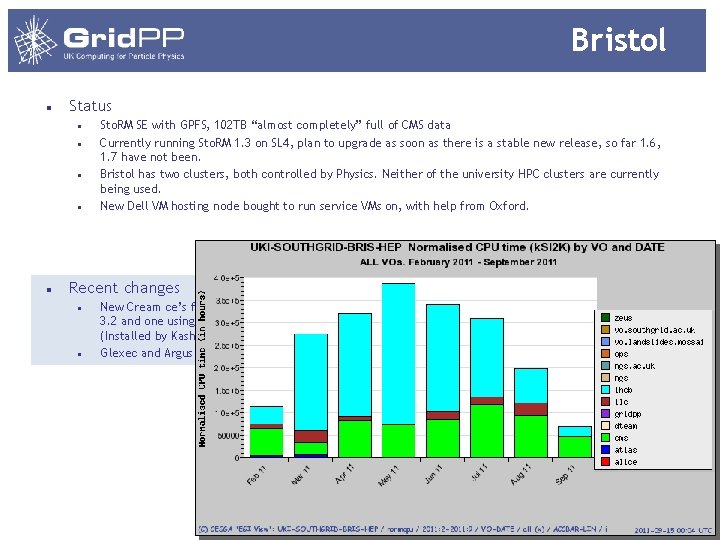

Bristol

- Status
  - StoRM SE with GPFS, 102 TB, "almost completely" full of CMS data.
  - Currently running StoRM 1.3 on SL4; plan to upgrade as soon as there is a stable new release. So far 1.6 and 1.7 have not been stable.
  - Bristol has two clusters, both controlled by Physics. Neither of the university HPC clusters is currently being used.
  - New Dell VM hosting node bought to run service VMs on, with help from Oxford.
- Recent changes
  - New CREAM CEs front each cluster, one gLite 3.2 and one using the new UMD release (installed by Kashif).
  - Glexec and Argus have not yet been installed.

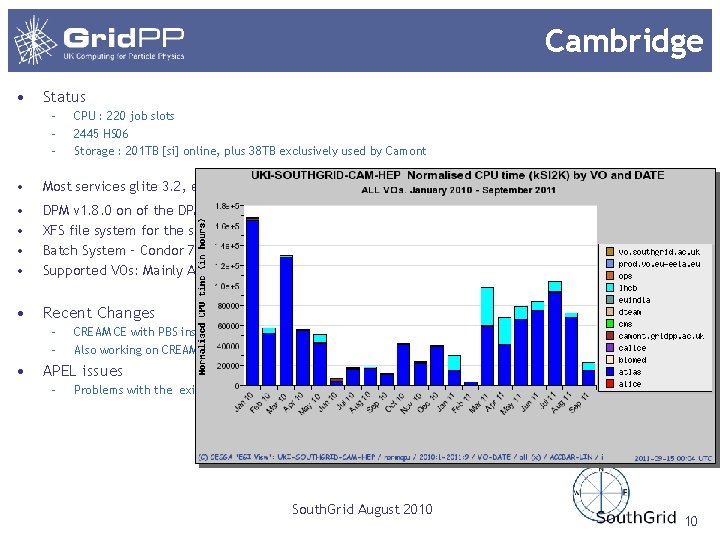

Cambridge

- Status
  - CPU: 220 job slots, 2445 HS06
  - Storage: 201 TB [SI] online, plus 38 TB exclusively used by Camont
- Most services are gLite 3.2; the exceptions are the DPM head node and the LCG-CE for the Condor cluster.
- DPM v1.8.0 on DPM disk servers; SL5, XFS file system for the storage.
- Batch system: Condor 7.4.4, Torque 2.3.13
- Supported VOs: mainly ATLAS, LHCb and Camont
- Recent changes
  - CREAM CE with PBS installed
  - Also working on CREAM-Condor in parallel
- APEL issues
  - Problems with the existing APEL implementation for Condor

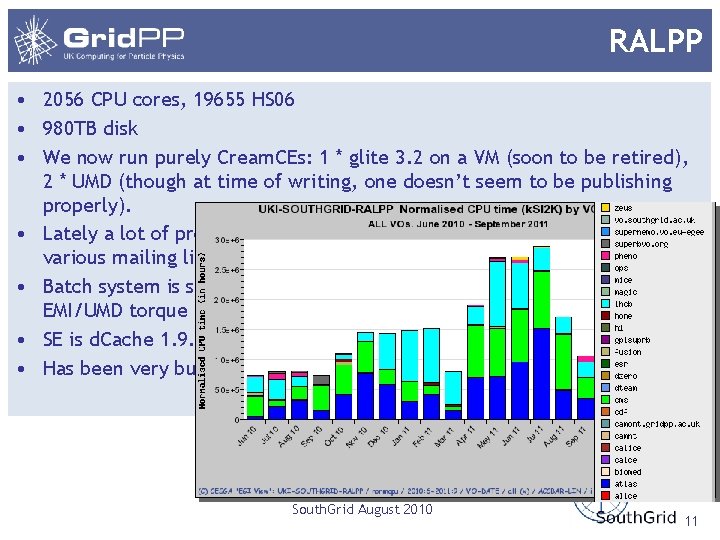

RALPP

- 2056 CPU cores, 19655 HS06
- 980 TB disk
- We now run purely CREAM CEs: 1 × gLite 3.2 on a VM (soon to be retired) and 2 × UMD (though at the time of writing, one doesn't seem to be publishing properly).
- Lately a lot of problems with CE stability, as per discussions on the various mailing lists.
- Batch system is still Torque from gLite 3.1, but we will soon bring up an EMI/UMD Torque to replace it (currently installed for test).
- SE is dCache 1.9.5; planning to upgrade to 1.9.12 in the near future.
- Has been very busy over recent months.

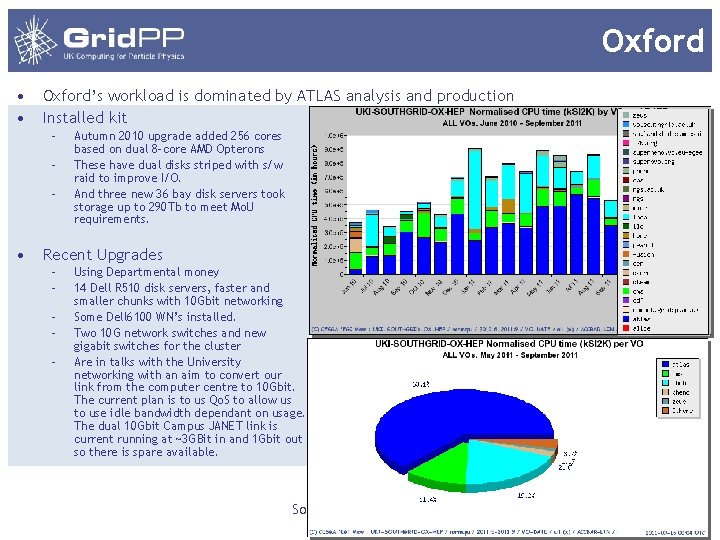

Oxford

- Oxford's workload is dominated by ATLAS analysis and production.
- Installed kit
  - Autumn 2010 upgrade added 256 cores based on dual 8-core AMD Opterons.
  - These have dual disks striped with s/w RAID to improve I/O.
  - Three new 36-bay disk servers took storage up to 290 TB to meet MoU requirements.
- Recent upgrades
  - Using departmental money: 14 Dell R510 disk servers, faster and smaller chunks with 10 Gbit networking.
  - Some Dell 6100 WNs installed.
  - Two 10G network switches and new gigabit switches for the cluster.
- We are in talks with University networking with the aim of converting our link from the computer centre to 10 Gbit. The current plan is to use QoS to allow us to use idle bandwidth dependent on usage. The dual 10 Gbit campus JANET link is currently running at ~3 Gbit in and 1 Gbit out, so there is spare capacity available.

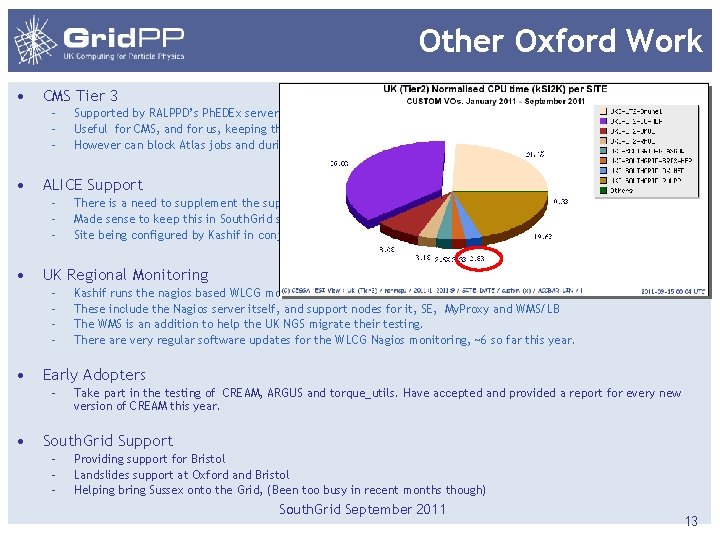

Other Oxford Work

- CMS Tier 3
  - Supported by RALPPD's PhEDEx server.
  - Useful for CMS, and for us, keeping the site busy in quiet times.
  - However, it can block ATLAS jobs, which is not so desirable during an accounting period.
- ALICE Support
  - There is a need to supplement the support given to ALICE by Birmingham.
  - It made sense to keep this in SouthGrid, so Oxford have deployed an ALICE VO box.
  - Site being configured by Kashif in conjunction with ALICE support.
- UK Regional Monitoring
  - Kashif runs the Nagios-based WLCG monitoring on the servers at Oxford.
  - These include the Nagios server itself, and support nodes for it: SE, MyProxy and WMS/LB.
  - The WMS is an addition to help the UK NGS migrate their testing.
  - There are very regular software updates for the WLCG Nagios monitoring, ~6 so far this year.
- Early Adopters
  - Take part in the testing of CREAM, ARGUS and torque_utils. Have accepted and provided a report for every new version of CREAM this year.
- SouthGrid Support
  - Providing support for Bristol.
  - Landslides support at Oxford and Bristol.
  - Helping bring Sussex onto the Grid (been too busy in recent months though).

Sussex

- Sussex has a significant local ATLAS group; their system is designed for the high I/O bandwidth patterns that ATLAS analysis can generate.
- Up and running as a Tier 3, with the Feynman sub-cluster for Particle Physics and the Apollo sub-cluster used by the rest of the University.
- Feynman: 8 nodes, each with 2 Intel Xeon X5650 @ 2.67 GHz, measured at ~15.67 HEPSPEC06 per core; total of 96 cores; 48 GB RAM per node.
- Apollo currently has 38 nodes totalling 464 cores.
- The plan is to merge the 2 sub-clusters in the next 6 months.
- 81 TB of Lustre storage shared by both sub-clusters.
- Everything fully interconnected with InfiniBand.
- Cluster is Dell hardware, using three R510 disk servers each with two external disk shelves (each with its own RAID controller).
- CVMFS installed and working, being used by the ATLAS group at Sussex.
- In process of installing and configuring grid services to become a Tier 2 site (UKI-SOUTHGRID-SUSX) for SouthGrid.
  - We have registered the service nodes and got grid certificates for them.
  - 4 machines are set up ready for BDII, CREAM CE, APEL and SE.
  - BDII and APEL done, working on CE and SE.
  - Hoping to be fully up and running within 2 months.
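The Feynman figures above can be checked with a little arithmetic. This sketch assumes 6 cores per Xeon X5650 socket, which is not stated on the slide but is consistent with the 96 cores quoted; the variable names are illustrative.

```python
# Feynman sub-cluster figures from the slide.
nodes = 8
sockets_per_node = 2
cores_per_socket = 6      # assumption: X5650 is a six-core part
hs06_per_core = 15.67     # measured value quoted on the slide (approximate)

feynman_cores = nodes * sockets_per_node * cores_per_socket
feynman_hs06 = feynman_cores * hs06_per_core

# Apollo core count from the slide; the merged total is a simple sum.
apollo_cores = 464
merged_cores = feynman_cores + apollo_cores

print(f"Feynman: {feynman_cores} cores, about {feynman_hs06:.0f} HS06")
print(f"Merged cluster: {merged_cores} cores")
```

On these assumptions Feynman alone provides roughly 1500 HS06, and the planned merge of the two sub-clusters would give 560 cores in total.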

Conclusions

- SouthGrid sites' utilisation is generally improving, but some sites are small compared with others.
- Birmingham is supporting ATLAS, ALICE and LHCb.
- Bristol: need to get a new version of StoRM working if they hope to be a CMS Tier 2 site.
- Cambridge: only partly using PBS, so APEL still reports low. The Condor part does not report correctly into APEL. Accounting metrics come direct from ATLAS, so this is less critical for that VO.
- Could enable JET for ATLAS production as they now have enough disk, but ATLAS say they would prefer them to use CVMFS, so we have to help them do that.
- Oxford has been upgraded to be optimised for ATLAS analysis, and is involved in many other areas.
- RALPPD are at full strength, leading the way.
- Sussex: need some small effort/support to bring them online.
