Two Approaches to Bayesian Network Structure Learning

Yael Kinderman & Tali Goren

Goal: Compare an algorithm that learns a BN tree structure (TAN) with an algorithm that learns a constraint-free structure (Build-BN).

Problem Definition: Finding an exact BN structure for complete discrete data.
- Known to be NP-hard.
- A maximization problem over a defined score.

Build-BN Algorithm

Algorithm’s Attributes:
- No structural constraints.
- Straightforward approach: it does not skip any computation.
- Feasible only for small networks (<30 variables).

Crucial facts at the core of the algorithm:
- Some scoring functions decompose into local scores (we used BIC for the algorithm).
- Every DAG has at least one node with no outgoing arcs (a sink).

Implementation Note: Build-BN requires a lot of memory, so the implementation relies heavily on the file system.

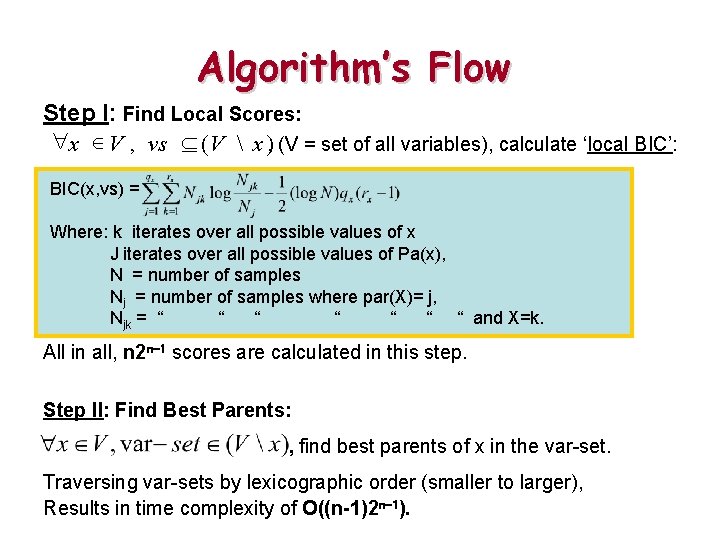

Algorithm’s Flow

Step I: Find Local Scores: For every $x \in V$ and every var-set $vs \subseteq V \setminus \{x\}$ ($V$ = the set of all variables), calculate the ‘local BIC’ score BIC(x, vs), where:
- $k$ iterates over all possible values of x,
- $j$ iterates over all possible values of Pa(x),
- $N$ = the number of samples,
- $N_j$ = the number of samples where Pa(x) = j,
- $N_{jk}$ = the number of samples where Pa(x) = j and x = k.

All in all, $n \cdot 2^{n-1}$ scores are calculated in this step.

Step II: Find Best Parents: For every $x \in V$ and every var-set $vs \subseteq V \setminus \{x\}$, find the best parents of x in the var-set. Traversing the var-sets in lexicographic order (smaller to larger) results in a time complexity of $O((n-1) 2^{n-1})$.
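To make Step I concrete, here is a minimal sketch of a local BIC computation. It assumes the commonly used form of the score, $\sum_j \sum_k N_{jk} \log(N_{jk}/N_j) - \frac{\log N}{2}\, q\,(r-1)$, where $q$ (number of parent configurations) and $r$ (number of values of x) are names introduced here for illustration. The function name `local_bic`, the row-tuple data layout, and the `arity` dictionary are likewise assumptions, not part of the original implementation.

```python
import math
from collections import Counter

def local_bic(data, x, parents, arity):
    """Local BIC of column x given the parent columns (hypothetical helper).

    data:    list of tuples of discrete values, one tuple per sample
    parents: list of column indices used as Pa(x)
    arity:   dict column index -> number of possible values (assumed known)
    """
    n_samples = len(data)
    n_jk = Counter()  # (parent configuration j, value k of x) -> N_jk
    n_j = Counter()   # parent configuration j -> N_j
    for row in data:
        j = tuple(row[p] for p in parents)
        n_jk[(j, row[x])] += 1
        n_j[j] += 1
    # Log-likelihood term: sum over j, k of N_jk * log(N_jk / N_j).
    log_lik = sum(c * math.log(c / n_j[j]) for (j, _k), c in n_jk.items())
    # Penalty term (assumed standard BIC form): (log N / 2) * q * (r - 1).
    q = math.prod(arity[p] for p in parents)
    penalty = 0.5 * math.log(n_samples) * q * (arity[x] - 1)
    return log_lik - penalty
```

Calling this once per variable and per candidate parent set gives the $n \cdot 2^{n-1}$ local scores mentioned in Step I.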

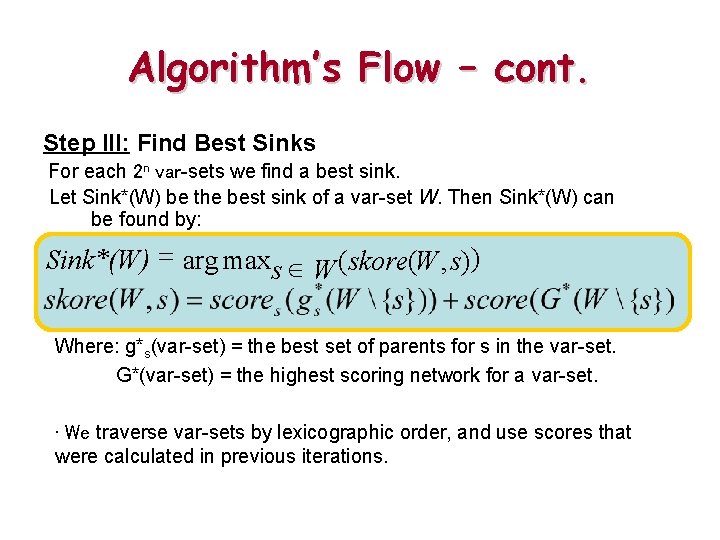
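Step II can be sketched as a small dynamic program over var-sets. This is only an illustration under stated assumptions: var-sets are encoded as bitmasks, they are visited in increasing integer order (so every subset is handled before its supersets, which stands in for the lexicographic traversal), and `best_parents` and `local_score` are hypothetical names.

```python
def best_parents(n, x, local_score):
    """For every candidate set C not containing x, find the best parent subset of C.

    n:           number of variables
    x:           index of the variable whose parents are chosen
    local_score: dict parent-set bitmask -> local score of x (assumed precomputed)
    Returns a dict: candidate bitmask -> (best score, best parent bitmask).
    """
    best = {}
    for mask in range(1 << n):
        if mask >> x & 1:
            continue  # x cannot be its own parent
        # Option 1: use the whole candidate set as the parent set.
        champion = (local_score[mask], mask)
        # Option 2: reuse the best result of a candidate set one variable smaller.
        for v in range(n):
            if mask >> v & 1:
                sub = best[mask & ~(1 << v)]
                if sub[0] > champion[0]:
                    champion = sub
        best[mask] = champion
    return best
```

Each candidate set needs at most n-1 lookups into earlier results, which is where the per-variable cost quoted in Step II comes from.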

Algorithm’s Flow (cont.)

Step III: Find Best Sinks: For each of the $2^n$ var-sets we find a best sink. Let Sink*(W) be the best sink of a var-set W. Then Sink*(W) can be found by:

$$\mathrm{Sink}^*(W) = \arg\max_{s \in W} \mathrm{skore}(W, s)$$

Where:
- $g^*_s(\text{var-set})$ = the best set of parents for s in the var-set.
- $G^*(\text{var-set})$ = the highest-scoring network for a var-set.

We traverse the var-sets in lexicographic order and reuse scores that were calculated in previous iterations.

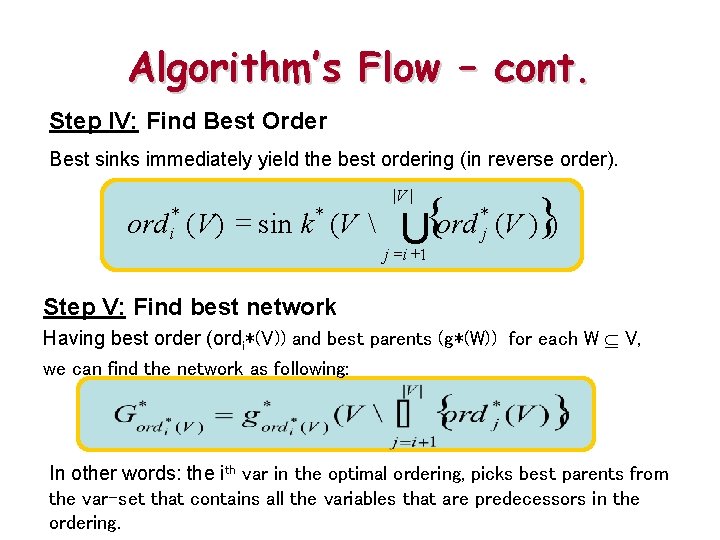
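The best-sink search of Step III might look like the sketch below. It assumes that skore(W, s) is the score of the best network over W without s plus the score of the best parents of s chosen from W without s, as in the Silander and Myllymaki paper cited in the references. The bitmask encoding and the names `best_sinks` and `bps_score` are illustrative assumptions.

```python
def best_sinks(n, bps_score):
    """Find a best sink and the best network score for every var-set.

    n:         number of variables
    bps_score: bps_score[s][mask] = score of the best parents of s chosen
               from the var-set `mask` (assumed output of the previous step)
    Returns (sink, net_score), both indexed by var-set bitmask.
    """
    net_score = [0.0] * (1 << n)  # the empty var-set scores 0
    sink = [-1] * (1 << n)        # -1 marks "no sink" for the empty var-set
    for w in range(1, 1 << n):    # increasing order: subsets come first
        best = None
        for s in range(n):
            if w >> s & 1:
                rest = w & ~(1 << s)
                # Assumed definition of skore(W, s); see lead-in.
                skore = net_score[rest] + bps_score[s][rest]
                if best is None or skore > best:
                    best, sink[w] = skore, s
        net_score[w] = best
    return sink, net_score
```

Because `net_score[rest]` was filled in an earlier iteration, each var-set costs only one pass over its members.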

Algorithm’s Flow (cont.)

Step IV: Find Best Order: The best sinks immediately yield the best ordering (in reverse order):

$$\mathrm{ord}^*_i(V) = \mathrm{sink}^*\Big(V \setminus \bigcup_{j=i+1}^{|V|} \{\mathrm{ord}^*_j(V)\}\Big)$$

Step V: Find Best Network: Having the best order ($\mathrm{ord}^*_i(V)$) and the best parents ($g^*(W)$) for each $W \subseteq V$, we can find the network as follows: the i-th variable in the optimal ordering picks its best parents from the var-set that contains all the variables preceding it in the ordering.
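Steps IV and V can be sketched together. The code assumes a `sink` table indexed by var-set bitmask and a `bps_set` table holding the best parent set of each variable for each candidate set; both names, and the bitmask encoding, are assumptions made for this illustration.

```python
def best_order(n, sink):
    """Peel best sinks off the full variable set; return the order, first to last."""
    order = []
    w = (1 << n) - 1          # start from the full set V
    while w:
        s = sink[w]           # best sink of the remaining variables
        order.append(s)
        w &= ~(1 << s)        # remove it and continue with the rest
    order.reverse()           # sinks were found last-to-first
    return order

def best_network(order, bps_set):
    """Pick, for each variable, its best parents among its predecessors.

    bps_set[x][mask] = best parent set (bitmask) of x chosen from `mask`.
    Returns a dict: variable -> parent-set bitmask.
    """
    parents = {}
    preds = 0                 # bitmask of the variables placed so far
    for x in order:
        parents[x] = bps_set[x][preds]
        preds |= 1 << x
    return parents
```

For two variables where variable 0 is the best sink of the full set, `best_order` places variable 1 first, and `best_network` then lets variable 0 choose its parents from {1}.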

Using the BN for Prediction
- 5-fold cross-validation: 80% of the data is used for building the structure and CPDs, and 20% is used for label prediction.
- Predicting the label ‘C’ of a given sample is done using:

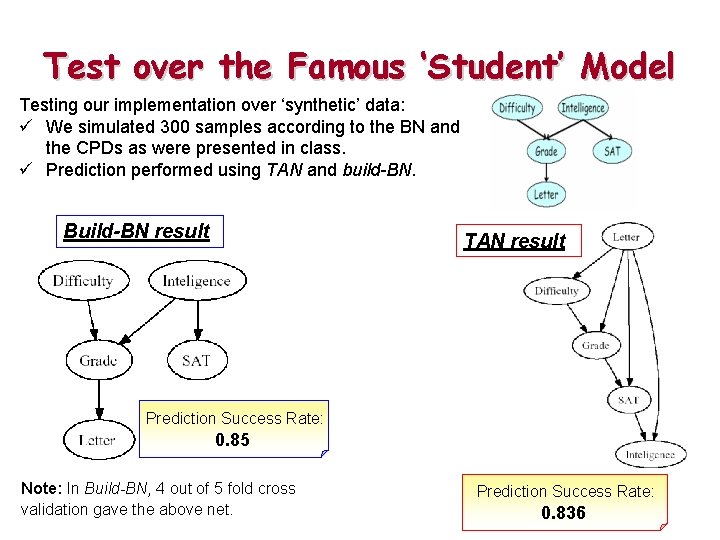
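One common way to predict a label with a learned BN is to pick the label value that maximizes the joint probability of the completed sample, which factorizes into a product of CPD entries. The sketch below assumes that rule; the name `predict_label`, the dictionary-based CPD layout, and the requirement that all CPD entries are strictly positive (for example, after smoothing) are assumptions for illustration.

```python
import math

def predict_label(sample, label, label_values, parents, cpds):
    """Return the label value with the highest joint probability.

    sample:       dict variable -> observed value (label entry, if any, is ignored)
    label:        name of the label variable
    label_values: iterable of possible label values
    parents:      dict variable -> tuple of parent variables
    cpds:         dict variable -> {(parent values tuple, value): probability}
    """
    def log_joint(assignment):
        # Sum of log CPD entries = log of the factorized joint probability.
        total = 0.0
        for var, pa in parents.items():
            key = (tuple(assignment[p] for p in pa), assignment[var])
            total += math.log(cpds[var][key])  # assumes probability > 0
        return total

    return max(label_values, key=lambda c: log_joint({**sample, label: c}))
```

Working in log space avoids numerical underflow when the network has many variables.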

Test over the Famous ‘Student’ Model

Testing our implementation over synthetic data:
- We simulated 300 samples according to the BN and the CPDs as presented in class.
- Prediction was performed using TAN and Build-BN.

Prediction success rate:
- Build-BN result: 0.85 (Note: in Build-BN, 4 out of the 5 cross-validation folds gave the net shown.)
- TAN result: 0.836

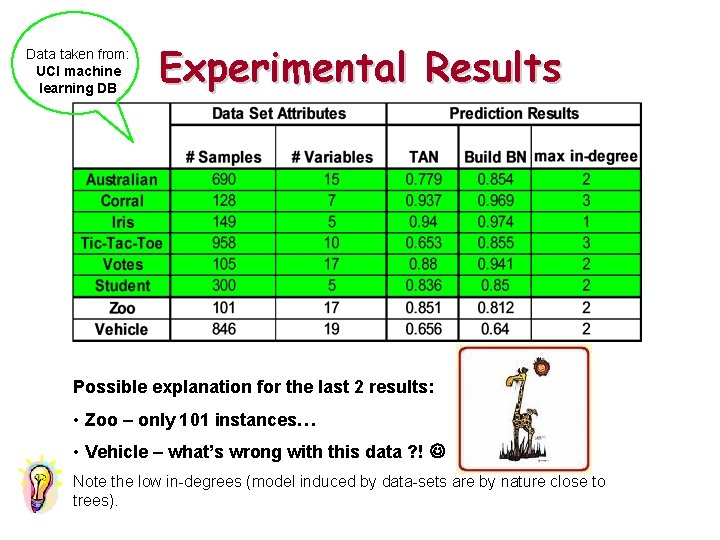

Experimental Results

Data taken from: UCI machine learning DB.

Possible explanations for the last 2 results:
- Zoo: only 101 instances.
- Vehicle: what’s wrong with this data?!

Note the low in-degrees (models induced by the data sets are by nature close to trees).

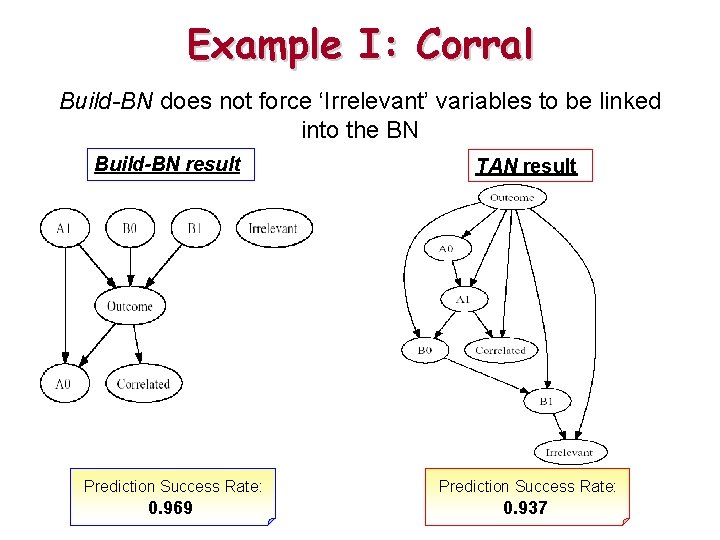

Example I: Corral

Build-BN does not force ‘irrelevant’ variables to be linked into the BN.

Prediction success rate:
- Build-BN result: 0.969
- TAN result: 0.937

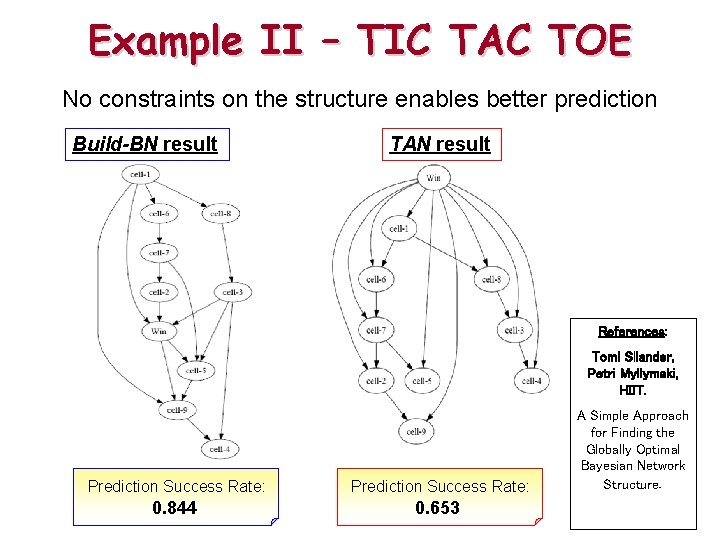

Example II: Tic-Tac-Toe

Having no constraints on the structure enables better prediction.

Prediction success rate:
- Build-BN result: 0.844
- TAN result: 0.653

References: Tomi Silander, Petri Myllymaki (HIIT). A Simple Approach for Finding the Globally Optimal Bayesian Network Structure.
