
TWAREN Annual Training: Cloud Network Planning and Design
Bruce Wang, bruce_wang@ringline.com.tw, RingLine Corp.

10G/40G/100G Ethernet

DC Facilities: Top of Mind
§ Complexity, Cost, Power, Cooling
§ Increased Efficiency, Standards Compliance
§ Simpler Operations
§ Reliability, Availability
§ Scalability, Flexibility
§ Management, Security
§ Technology Adoption, Future Proofing
§ Modularity, Mobility

10 Gigabit Ethernet to the Server: Impacting DC Access Layer Cabling Architecture
§ Multi-core CPU architectures
§ Virtual machines driving increased I/O bandwidth per server and increased business agility
§ Increased network bandwidth demands
§ Consolidation of networks: Unified Fabrics / UIO
§ Future proofing: network, cable plant, 10G/40G/100G

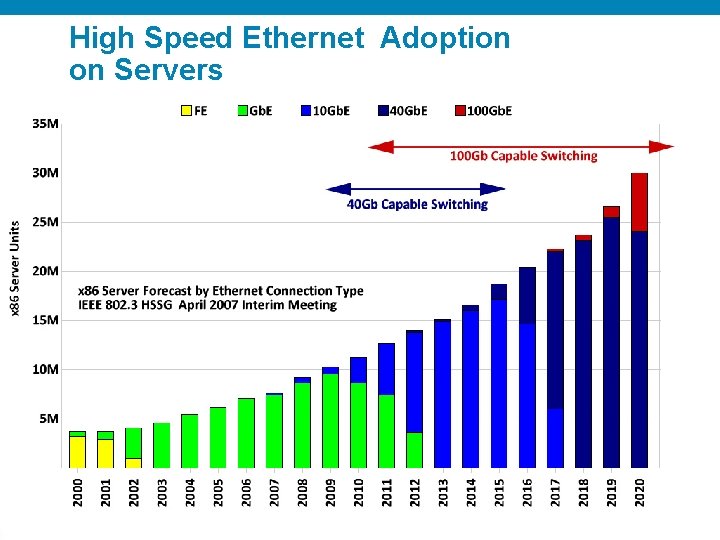

High Speed Ethernet Adoption on Servers

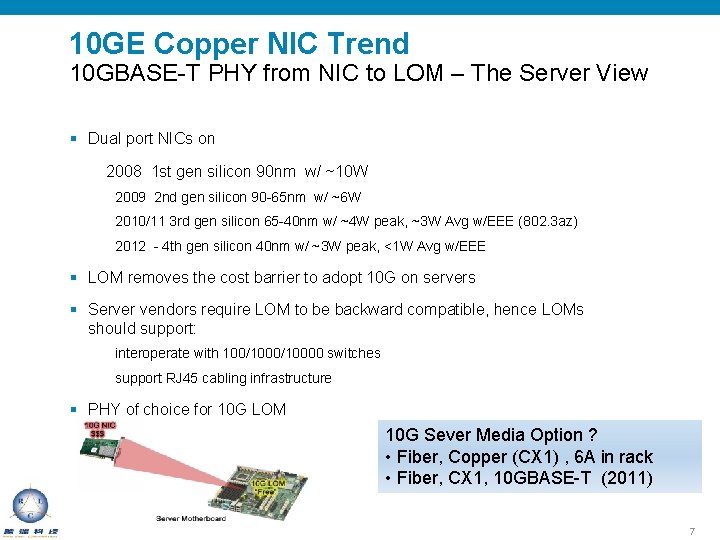

10GE Copper NIC Trend: 10GBASE-T PHY from NIC to LOM (The Server View)
§ Dual-port NICs by silicon generation:
• 2008: 1st gen silicon, 90 nm, ~10 W
• 2009: 2nd gen silicon, 90-65 nm, ~6 W
• 2010/11: 3rd gen silicon, 65-40 nm, ~4 W peak, ~3 W average with EEE (802.3az)
• 2012: 4th gen silicon, 40 nm, ~3 W peak, <1 W average with EEE
§ LOM removes the cost barrier to adopting 10G on servers
§ Server vendors require LOM to be backward compatible, hence LOMs should:
• interoperate with 100/1000 switches
• support RJ45 cabling infrastructure
§ 10GBASE-T: PHY of choice for 10G LOM
10G server media options:
• Fiber, copper (CX1), Cat 6A in rack
• Fiber, CX1, 10GBASE-T (2011)

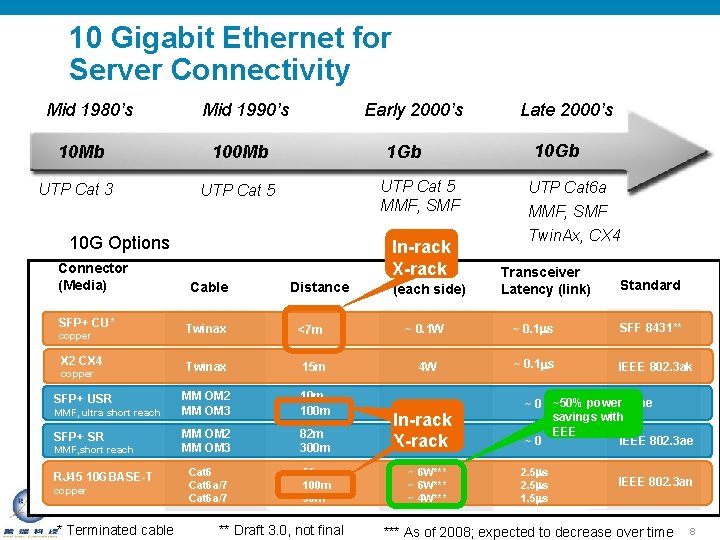

10 Gigabit Ethernet for Server Connectivity
Server connectivity by era:
§ Mid 1980s: 10 Mb, UTP Cat 3
§ Mid 1990s: 100 Mb, UTP Cat 5
§ Early 2000s: 1 Gb, UTP Cat 5, MMF, SMF
§ Late 2000s: 10 Gb, UTP Cat 6a, MMF, SMF, TwinAx, CX4
10G options (connector and media; cable and distance; power per side; transceiver link latency; standard):
§ SFP+ CU* copper: Twinax, <7 m (in-rack, cross-rack); ~0.1 W; ~0.1 µs; SFF 8431**
§ X2 CX4 copper: Twinax, 15 m; 4 W; ~0.1 µs; IEEE 802.3ak
§ SFP+ USR (MMF, ultra short reach): 10 m on MM OM2, 100 m on MM OM3; 1 W; ~0; no standard
§ SFP+ SR (MMF, short reach): 82 m on MM OM2, 300 m on MM OM3; 1 W; ~0; IEEE 802.3ae
§ RJ45 10GBASE-T copper: Cat 6a/7, 55 m, 100 m or 30 m; ~6 W*** to ~4 W***; 2.5 µs to 1.5 µs; IEEE 802.3an; ~50% power savings with EEE
* Terminated cable
** Draft 3.0, not final
*** As of 2008; expected to decrease over time

10 Gigabit Transmissions
§ Different standards
• 10GBASE-T (IEEE 802.3an)
• 10GBASE-CX4 (IEEE 802.3ak)
• 10GBASE-R (IEEE 802.3xx): LRM (802.3aq); LR, ER, SR (802.3ae)
• SFF 8431 (SFP+ fiber and copper)
§ Applications
• Server interconnects
• Aggregation of network links
• Switch-to-switch links
• Storage Area Networks (SAN)

10GBASE-T
§ IEEE 802.3an
§ Full-duplex transmission
§ 100 meters on Class F (shielded) cabling
§ 30-55 meters on Class E/Category 6 cabling
§ 100 meters on Class EA/Category 6 augmented copper cabling
§ Alien crosstalk suppression up to 500 MHz
§ Cat 6 parameters extrapolated up to 500 MHz
§ Cat 7 insertion loss characteristics

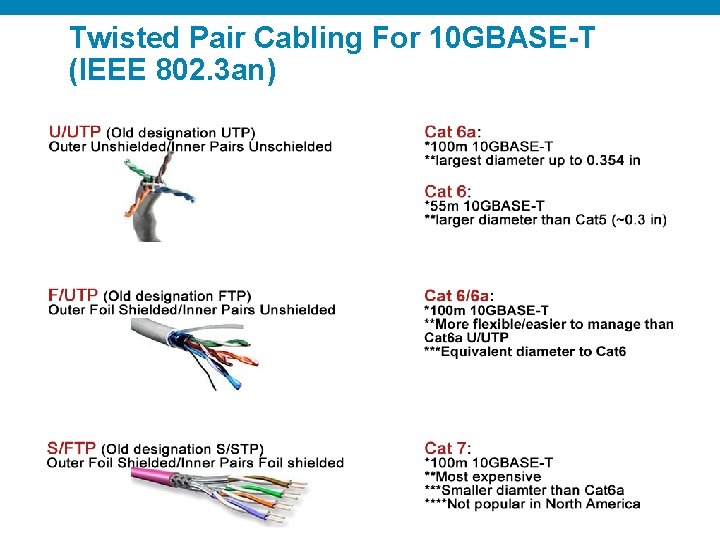

Twisted Pair Cabling for 10GBASE-T (IEEE 802.3an)

10G Copper InfiniBand: 10GBASE-CX4
10G copper on twin-axial cable
§ IEEE 802.3ak
§ Supports 10G up to 15 meters
§ Quad 100 ohm twinax, InfiniBand cable and connector
§ Primarily for rack-to-rack links
§ Low latency
§ Used in InfiniBand environments

10G SFP+ Cu
§ SFF 8431
§ Supports 10GE passive direct attach up to 7 meters
§ Twinax with directly attached SFP+
§ Primarily for in-rack and rack-to-rack links
§ Low latency, low cost, low power

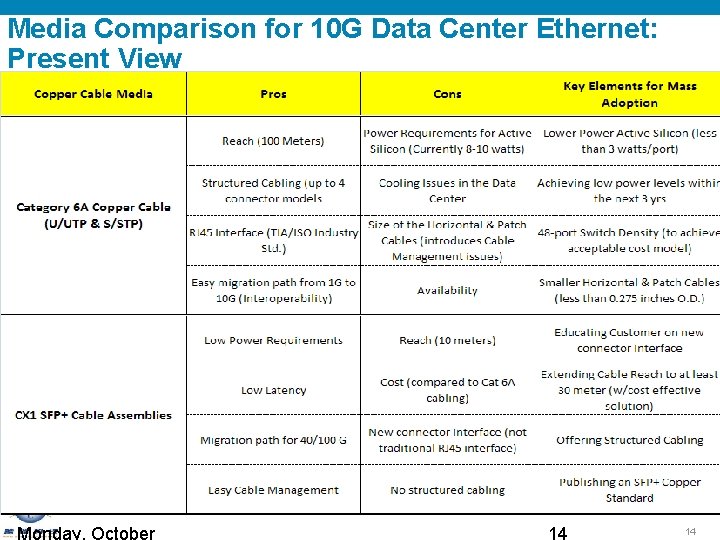

Media Comparison for 10G Data Center Ethernet: Present View

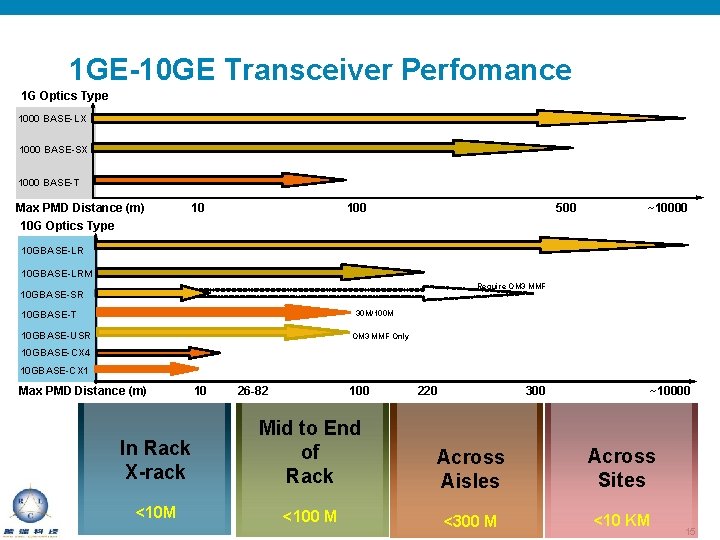

1GE-10GE Transceiver Performance
§ 1G optics types: 1000BASE-LX, 1000BASE-SX, 1000BASE-T; max PMD distance scale (m): 10, 100, 500, ~10000
§ 10G optics types: 10GBASE-LRM, 10GBASE-SR (requires OM3 MMF), 10GBASE-T (30 m/100 m), 10GBASE-USR (OM3 MMF only), 10GBASE-CX4, 10GBASE-CX1; max PMD distance scale (m): 10, 26-82, 100, 220, 300, ~10000
§ Reach classes: in rack and cross-rack (<10 m), mid to end of rack (<100 m), across aisles (<300 m), across sites (<10 km)

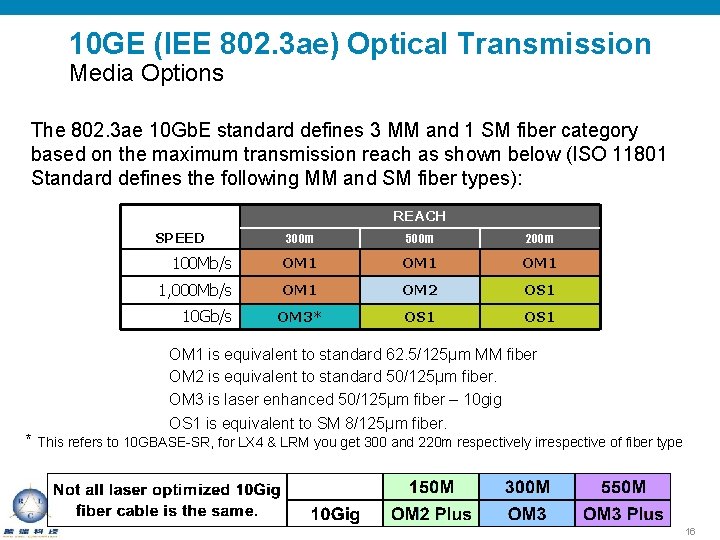

10GE (IEEE 802.3ae) Optical Transmission Media Options
The 802.3ae 10GbE standard defines three multimode (MM) and one single-mode (SM) fiber categories based on maximum transmission reach. The ISO 11801 standard defines the following MM and SM fiber types by speed and reach:
Speed        300 m   500 m   2,000 m
100 Mb/s     OM1     OM1     OM1
1,000 Mb/s   OM1     OM2     OS1
10 Gb/s      OM3*    OS1     OS1
§ OM1 is equivalent to standard 62.5/125 µm MM fiber
§ OM2 is equivalent to standard 50/125 µm fiber
§ OM3 is laser-enhanced 50/125 µm fiber for 10 gigabit
§ OS1 is equivalent to SM 8/125 µm fiber
* This refers to 10GBASE-SR; LX4 and LRM reach 300 m and 220 m respectively, irrespective of fiber type

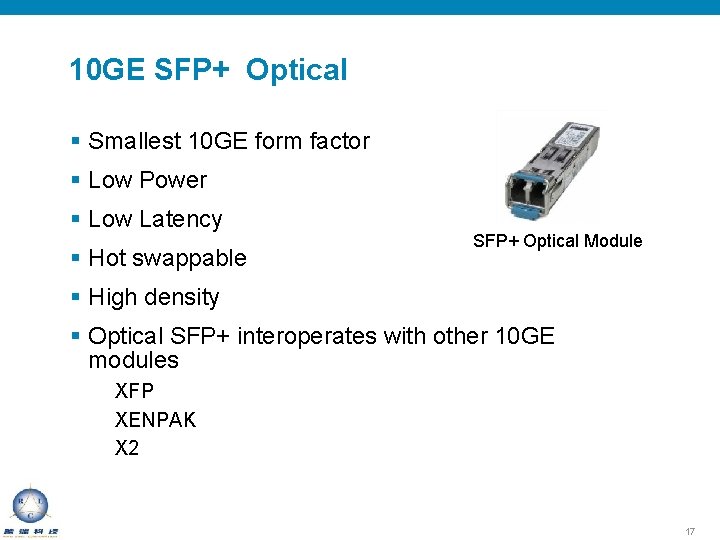

10GE SFP+ Optical
§ Smallest 10GE form factor
§ Low power
§ Low latency
§ Hot-swappable SFP+ optical module
§ High density
§ Optical SFP+ interoperates with other 10GE modules: XFP, XENPAK, X2

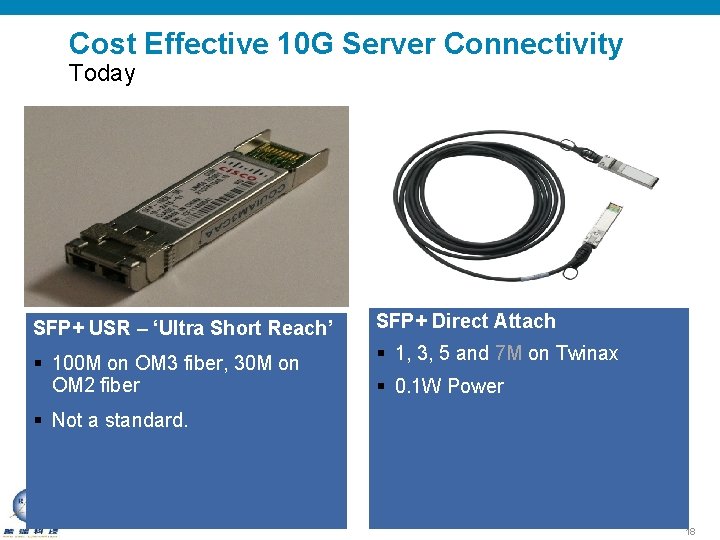

Cost Effective 10G Server Connectivity Today
SFP+ USR ("Ultra Short Reach"):
§ 100 m on OM3 fiber, 30 m on OM2 fiber
§ Not a standard
SFP+ Direct Attach:
§ 1, 3, 5 and 7 m on Twinax
§ 0.1 W power

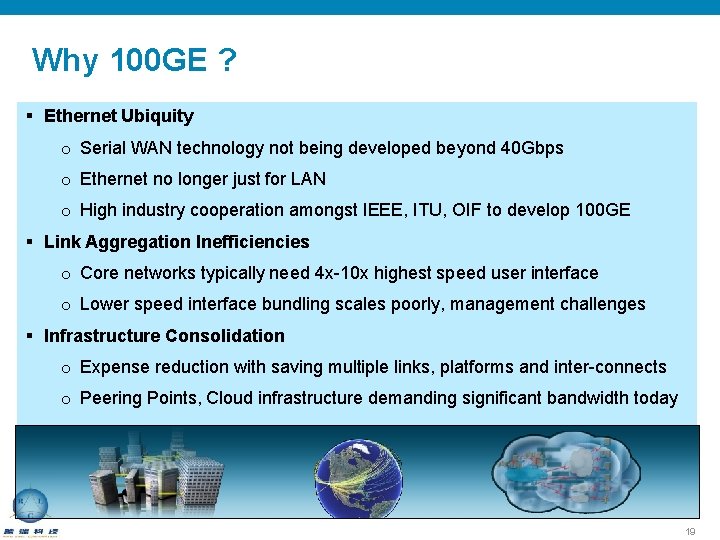

Why 100GE?
§ Ethernet ubiquity
o Serial WAN technology is not being developed beyond 40 Gbps
o Ethernet is no longer just for the LAN
o High industry cooperation among IEEE, ITU and OIF to develop 100GE
§ Link aggregation inefficiencies
o Core networks typically need 4x-10x the highest-speed user interface
o Bundling lower-speed interfaces scales poorly and creates management challenges
§ Infrastructure consolidation
o Expense reduction by saving multiple links, platforms and interconnects
o Peering points and cloud infrastructure demand significant bandwidth today

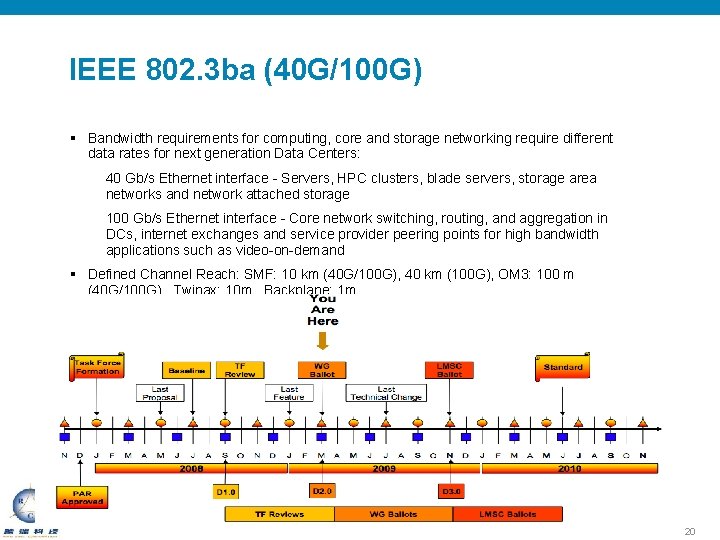

IEEE 802.3ba (40G/100G)
§ Bandwidth requirements for computing, core and storage networking call for different data rates in next-generation data centers:
• 40 Gb/s Ethernet interface: servers, HPC clusters, blade servers, storage area networks and network attached storage
• 100 Gb/s Ethernet interface: core network switching, routing and aggregation in DCs, internet exchanges and service provider peering points for high-bandwidth applications such as video-on-demand
§ Defined channel reach:
• SMF: 10 km (40G/100G), 40 km (100G)
• OM3: 100 m (40G/100G)
• Twinax: 10 m
• Backplane: 1 m

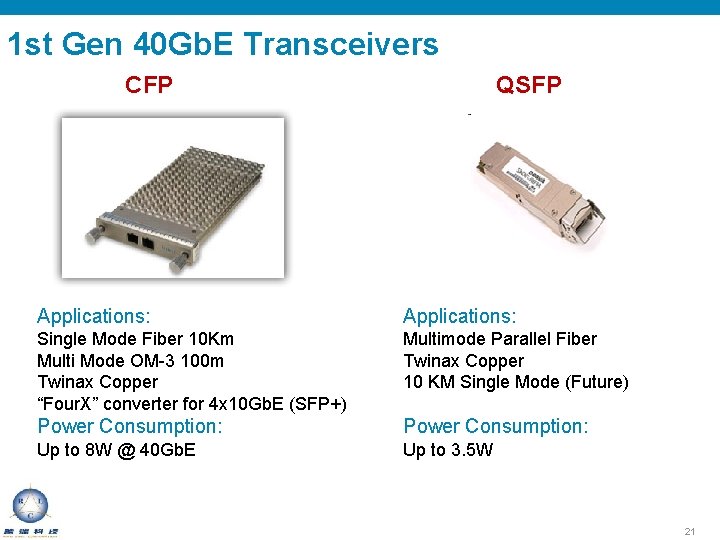

1st Gen 40GbE Transceivers
CFP
§ Applications: single-mode fiber 10 km; multimode OM3 100 m; Twinax copper; "FourX" converter for 4 x 10GbE (SFP+)
§ Power consumption: up to 8 W at 40GbE
QSFP
§ Applications: multimode parallel fiber; Twinax copper; 10 km single mode (future)
§ Power consumption: up to 3.5 W

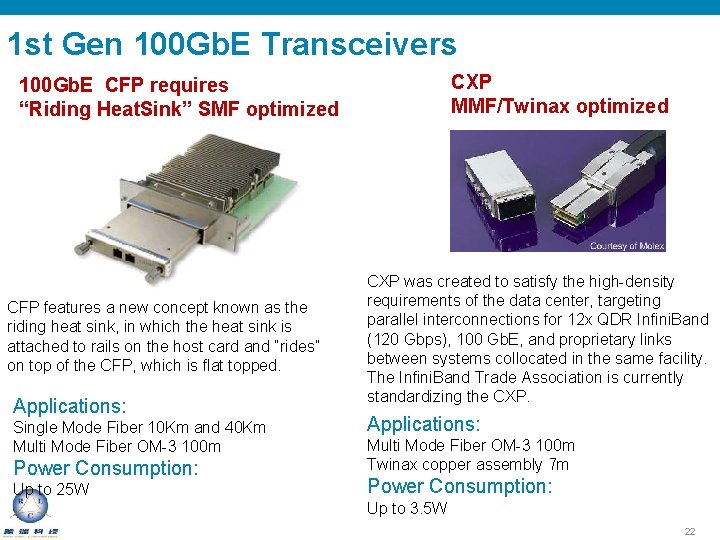

1st Gen 100GbE Transceivers
CFP (SMF optimized; requires a "riding heat sink")
§ CFP features a new concept known as the riding heat sink: the heat sink is attached to rails on the host card and "rides" on top of the flat-topped CFP
§ Applications: single-mode fiber 10 km and 40 km; multimode fiber OM3 100 m
§ Power consumption: up to 25 W
CXP (MMF/Twinax optimized)
§ CXP was created to satisfy the high-density requirements of the data center, targeting parallel interconnections for 12x QDR InfiniBand (120 Gbps), 100GbE, and proprietary links between systems collocated in the same facility
§ The InfiniBand Trade Association is currently standardizing the CXP
§ Applications: multimode fiber OM3 100 m; Twinax copper assembly 7 m
§ Power consumption: up to 3.5 W

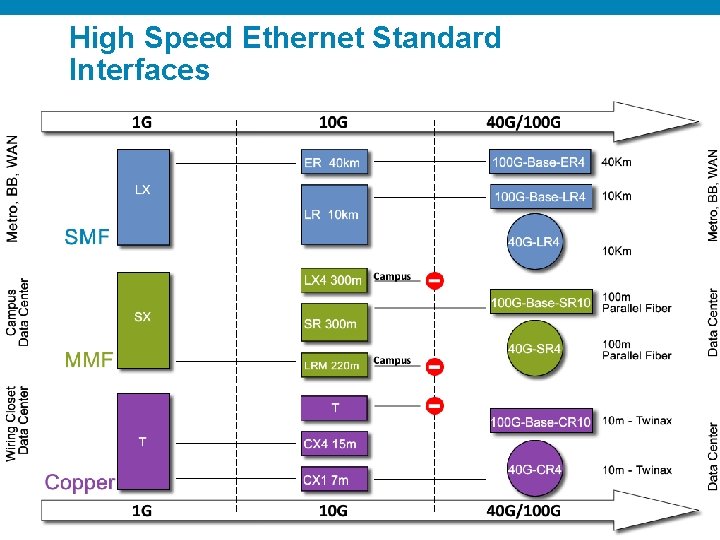

High Speed Ethernet Standard Interfaces

Multi-Link Interconnect Switching Technology: FabricPath/TRILL

L2 Provides Flexibility in the Data Center
§ Layer 2 is still required by some data center applications
§ With Layer 2:
• Server mobility does not require interaction between network and server teams
• No physical constraint on server location
§ Layer 2 is Layer 3 agnostic
§ Layer 2 is "plug and play"

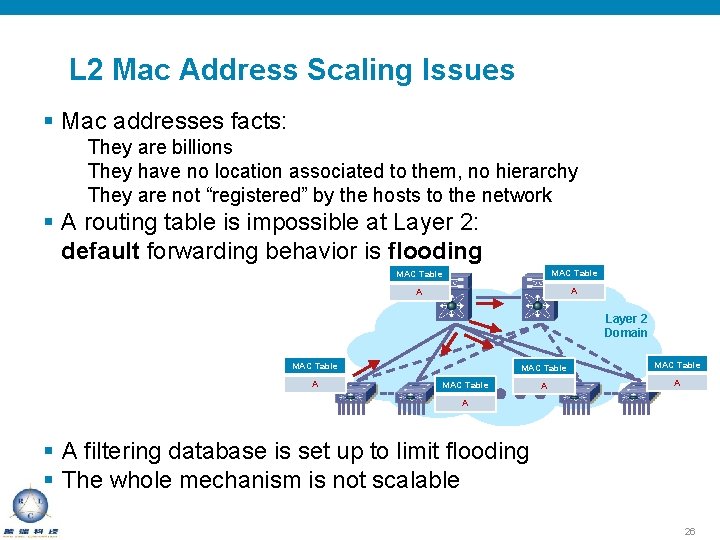

L2 MAC Address Scaling Issues
§ MAC address facts:
• There are billions of them
• They have no location associated with them and no hierarchy
• They are not "registered" by the hosts with the network
§ A routing table is impossible at Layer 2: the default forwarding behavior is flooding
§ A filtering database is set up to limit flooding
§ The whole mechanism is not scalable
(Diagram: a Layer 2 domain in which each switch's MAC table holds an entry for host A)

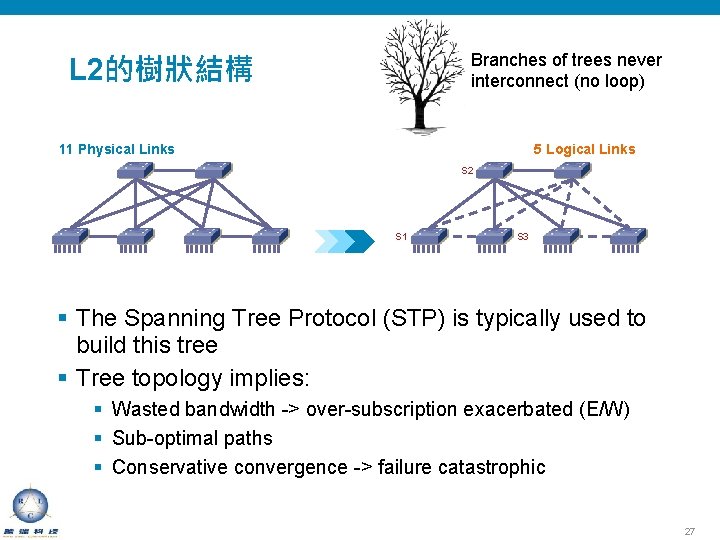

Layer 2 Tree Topology
§ Branches of trees never interconnect (no loop)
§ The Spanning Tree Protocol (STP) is typically used to build this tree
§ Tree topology implies:
• Wasted bandwidth -> over-subscription exacerbated (east/west)
• Sub-optimal paths
• Conservative convergence -> failure is catastrophic
(Diagram: switches S1, S2, S3; 11 physical links, 5 logical links)

RFC 5556 TRILL
§ IETF standard for Layer 2 multipathing
§ Driven by multiple vendors, including Cisco
§ Base protocol RFC ready for standardization but waiting on dependent standards
§ Control-plane protocol RFCs still in process
§ Target for standard completion is early CY 2011
http://datatracker.ietf.org/wg/trill/

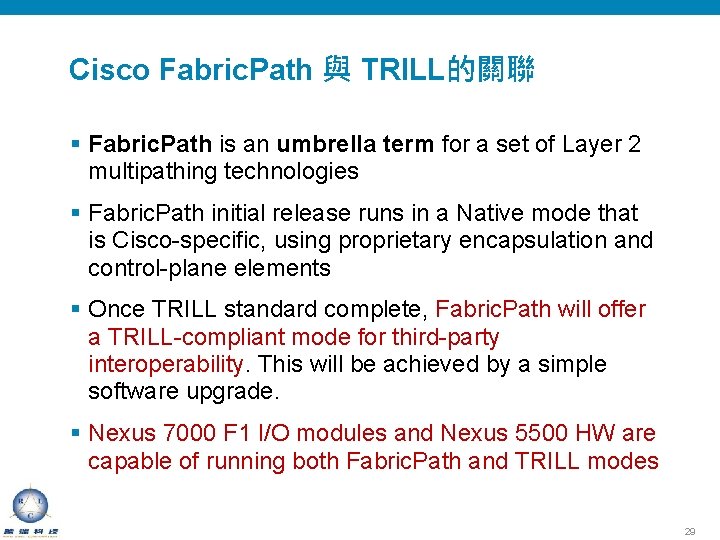

Relationship Between Cisco FabricPath and TRILL
§ FabricPath is an umbrella term for a set of Layer 2 multipathing technologies
§ The initial FabricPath release runs in a native mode that is Cisco-specific, using proprietary encapsulation and control-plane elements
§ Once the TRILL standard is complete, FabricPath will offer a TRILL-compliant mode for third-party interoperability, delivered as a simple software upgrade
§ Nexus 7000 F1 I/O modules and Nexus 5500 hardware are capable of running both FabricPath and TRILL modes

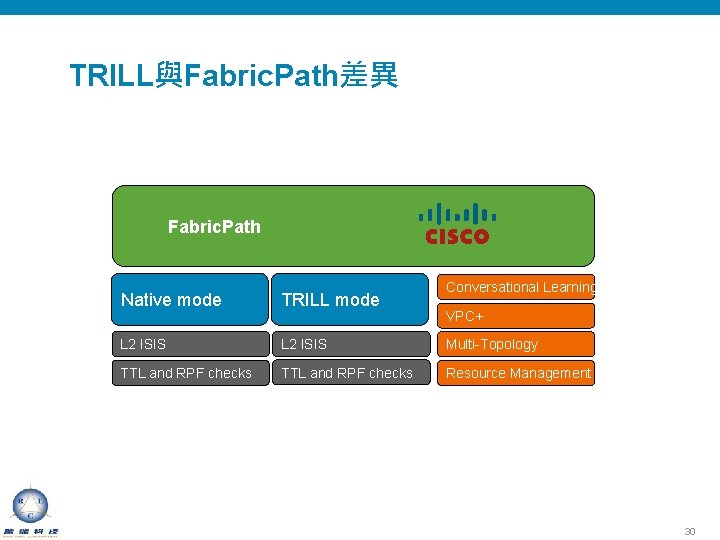

Differences Between TRILL and FabricPath
(Diagram labels: FabricPath, native mode, TRILL mode, conversational learning, L2 IS-IS, multi-topology, TTL and RPF checks, resource management, vPC+)

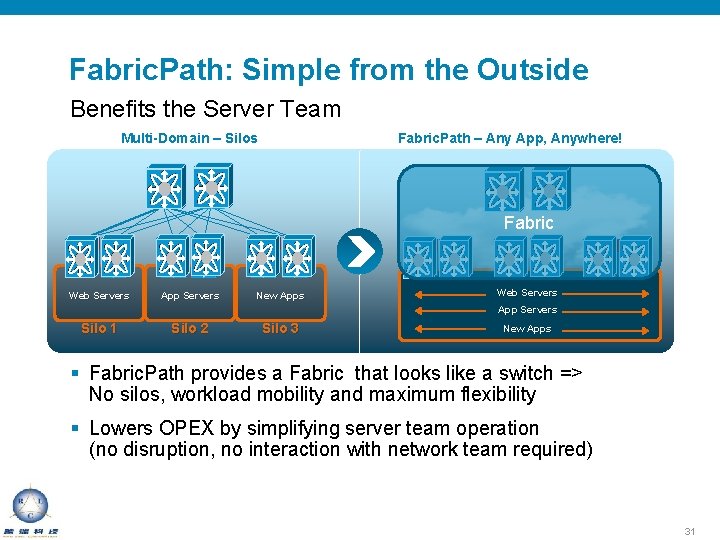

FabricPath: Simple from the Outside (Benefits the Server Team)
§ FabricPath provides a fabric that looks like a switch => no silos, workload mobility and maximum flexibility
§ Lowers OPEX by simplifying server team operation (no disruption, no interaction with the network team required)
(Diagram: "Multi-Domain: Silos", with web servers, app servers and new apps split across Silo 1, Silo 2 and Silo 3, versus "FabricPath: Any App, Anywhere!", with web servers, app servers and new apps on one fabric)

FabricPath: Simple from the Inside (Benefits the Network Team)
§ Reduces the number of switches required (higher port density possible without increasing oversubscription)
§ Isolates the network from the users
• No topology change propagation between inside and outside
• Fabric can be upgraded or reconfigured live
§ Open protocol, no secret sauce
• Operates on a single control protocol (unicast, multicast, pruning)
• Maintenance tools equivalent to those of L3 networks (ping, traceroute)
• Little configuration (auto addressing)

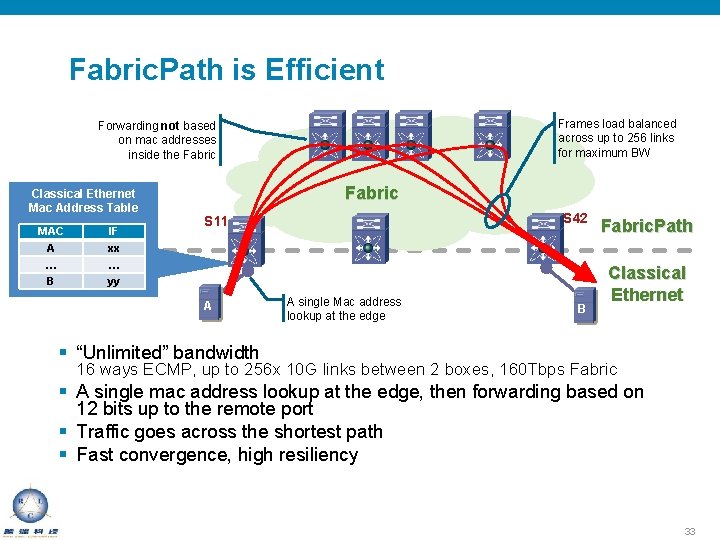

FabricPath is Efficient
§ "Unlimited" bandwidth: 16-way ECMP, up to 256 x 10G links between 2 boxes, 160 Tbps fabric
§ A single MAC address lookup at the edge, then forwarding based on 12 bits up to the remote port
§ Traffic goes across the shortest path
§ Fast convergence, high resiliency
(Diagram labels: Classical Ethernet, FabricPath, edge switches S11 and S42, hosts A and B, MAC address table with MAC and IF columns. Callouts: frames are load balanced across up to 256 links for maximum bandwidth; forwarding inside the fabric is not based on MAC addresses; a single MAC address lookup happens at the edge.)

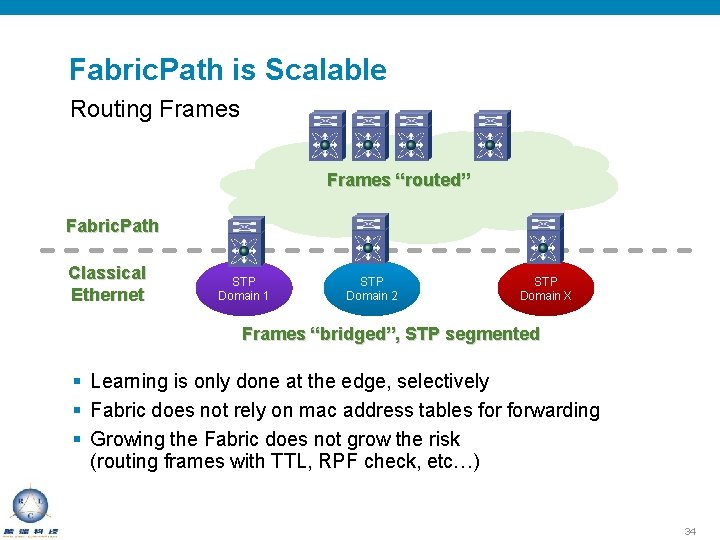

FabricPath is Scalable
§ Learning is only done at the edge, selectively
§ The fabric does not rely on MAC address tables for forwarding
§ Growing the fabric does not grow the risk (routing frames with TTL, RPF check, etc.)
(Diagram: frames are "routed" in FabricPath; frames are "bridged" in Classical Ethernet, which is segmented into STP Domain 1, STP Domain 2, ... STP Domain X)

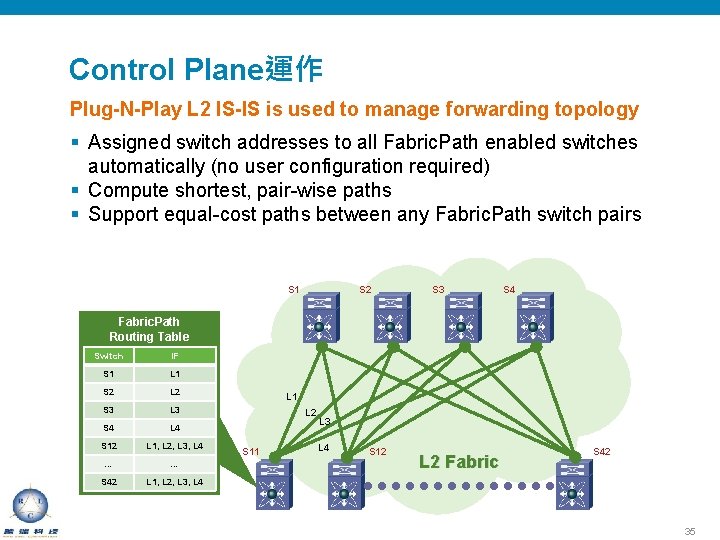

Control Plane Operation
Plug-and-play L2 IS-IS is used to manage the forwarding topology
§ Assigns switch addresses to all FabricPath-enabled switches automatically (no user configuration required)
§ Computes shortest, pair-wise paths
§ Supports equal-cost paths between any pair of FabricPath switches
FabricPath routing table (in the diagram, shown at edge switch S11):
Switch   IF
S1       L1
S2       L2
S3       L3
S4       L4
S12      L1, L2, L3, L4
…        …
S42      L1, L2, L3, L4
(Diagram: spine switches S1-S4 and edge switches S11, S12 and S42 in the L2 fabric, joined by links L1-L4)
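The routing table on this slide can be reproduced with a small shortest-path computation. The sketch below is not IS-IS; it is a plain breadth-first search that assumes every link has cost 1 and that the topology is the spine/edge mesh drawn on the slide. The function name `ecmp_next_hops` and the `links` list are hypothetical names chosen for illustration.

```python
from collections import deque

def ecmp_next_hops(links, source):
    """Return {destination: sorted list of equal-cost first-hop neighbors}."""
    # Build an undirected adjacency map; every link costs 1 (an assumption).
    adj = {}
    for a, b in links:
        adj.setdefault(a, set()).add(b)
        adj.setdefault(b, set()).add(a)

    dist = {source: 0}
    first_hops = {source: set()}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for nbr in sorted(adj[node]):
            # A direct neighbor of the source is its own first hop;
            # farther nodes inherit the first hops of their predecessor.
            hops = {nbr} if node == source else first_hops[node]
            if nbr not in dist:
                dist[nbr] = dist[node] + 1
                first_hops[nbr] = set(hops)
                queue.append(nbr)
            elif dist[nbr] == dist[node] + 1:
                # Another shortest path of equal cost: merge its first hops.
                first_hops[nbr] |= hops
    return {dst: sorted(h) for dst, h in first_hops.items() if dst != source}

# Topology assumed from the slide: every edge switch connects to every spine.
spines = ["S1", "S2", "S3", "S4"]
edges = ["S11", "S12", "S42"]
links = [(e, s) for e in edges for s in spines]
table = ecmp_next_hops(links, "S11")
```

Here `table["S42"]` lists all four spines as equal-cost next hops, which corresponds to the "S42: L1, L2, L3, L4" row, while each spine is reached over its own single link.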

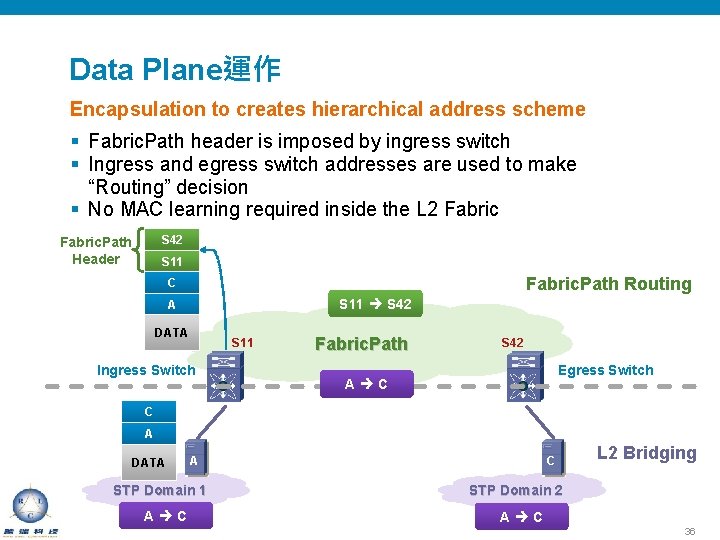

Data Plane Operation
Encapsulation creates a hierarchical address scheme
§ The FabricPath header is imposed by the ingress switch
§ Ingress and egress switch addresses are used to make the "routing" decision
§ No MAC learning is required inside the L2 fabric
(Diagram: host A in STP Domain 1 sends a frame to host C in STP Domain 2. Ingress switch S11 adds a FabricPath header carrying S11 and S42; FabricPath routing carries the frame across the FabricPath domain to egress switch S42; L2 bridging applies inside the STP domains.)

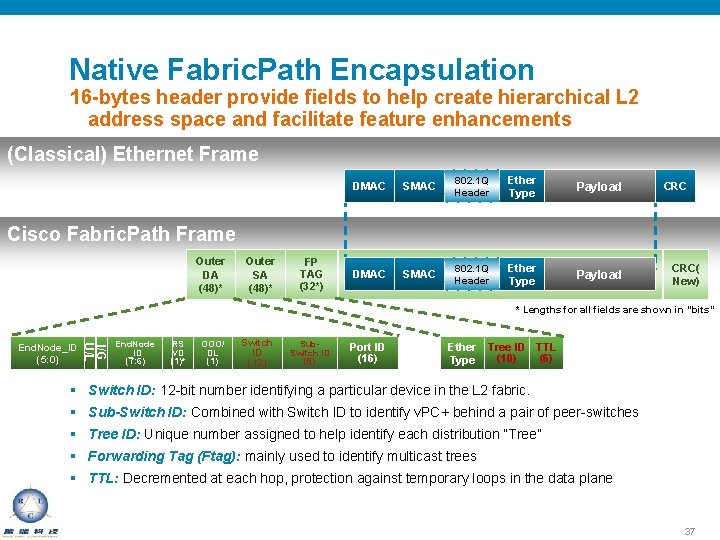

Native FabricPath Encapsulation
A 16-byte header provides fields that help create a hierarchical L2 address space and facilitate feature enhancements
(Classical) Ethernet frame: DMAC | SMAC | 802.1Q Header | EtherType | Payload | CRC
Cisco FabricPath frame: Outer DA (48)* | Outer SA (48)* | FP Tag (32)* | original Ethernet frame | CRC (new)
Outer address fields: I/G | U/L | EndNode_ID (5:0) | EndNode_ID (7:6) | RSVD (1) | OOO/DL (1) | Switch ID (12) | Sub-Switch ID (8) | Port ID (16)
FP Tag fields: EtherType | Tree ID (10) | TTL (6)
* Lengths for all fields are shown in bits
§ Switch ID: 12-bit number identifying a particular device in the L2 fabric
§ Sub-Switch ID: combined with Switch ID to identify a vPC+ behind a pair of peer switches
§ Tree ID: unique number assigned to help identify each distribution "tree"
§ Forwarding Tag (Ftag): mainly used to identify multicast trees
§ TTL: decremented at each hop; protection against temporary loops in the data plane
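To make the 32-bit FP Tag concrete, here is a minimal packing sketch. It assumes the field order shown on the slide (EtherType, then the 10-bit tree/forwarding tag, then the 6-bit TTL) and that the EtherType takes the remaining 16 bits. The function names are hypothetical, and the EtherType value 0x8903 used in the example is an assumption that should be checked against vendor documentation.

```python
import struct

def pack_fp_tag(ethertype, ftag, ttl):
    """Pack EtherType (16 bits, assumed), Ftag (10 bits) and TTL (6 bits)."""
    if not (0 <= ethertype < 1 << 16 and 0 <= ftag < 1 << 10 and 0 <= ttl < 1 << 6):
        raise ValueError("field out of range")
    # Network byte order, one unsigned 32-bit word.
    return struct.pack("!I", (ethertype << 16) | (ftag << 6) | ttl)

def unpack_fp_tag(data):
    """Inverse of pack_fp_tag: return (ethertype, ftag, ttl)."""
    (word,) = struct.unpack("!I", data)
    return word >> 16, (word >> 6) & 0x3FF, word & 0x3F

# Example: tree 1, TTL 32; the EtherType value is an assumption.
tag = pack_fp_tag(0x8903, 1, 32)
fields = unpack_fp_tag(tag)
```

A 6-bit TTL caps the hop count at 63, and a 10-bit tag allows 1,024 distinct tree identifiers.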

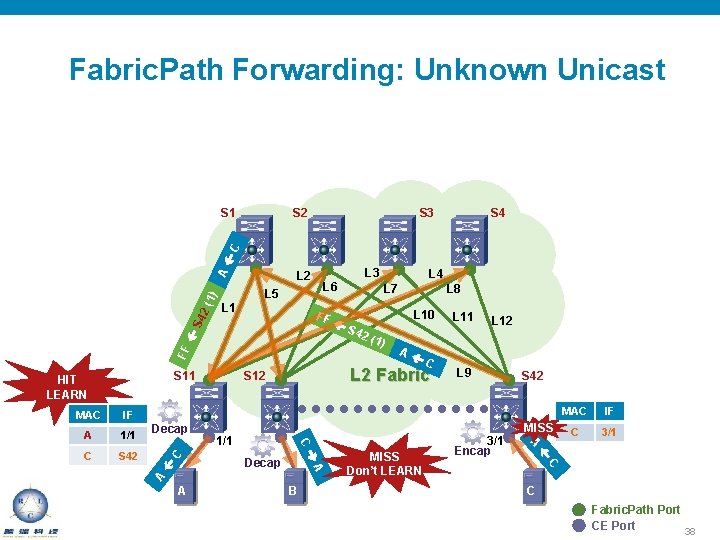

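FabricPath Forwarding: Unknown Unicast
(Diagram: host A (port 1/1) sends a frame to host C (port 3/1) across the L2 fabric of spine switches S1-S4 and edge switches S11, S12 and S42, joined by links L1-L12. Callouts: the ingress lookup for C misses, so the frame is encapsulated and flooded; an edge switch whose lookup for C misses does not learn A; the edge switch whose lookup for C hits learns A and decapsulates the frame. Legend: FabricPath port, CE port.)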
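The "HIT: learn" and "MISS: don't learn" callouts describe conversational learning at the fabric edge. The sketch below models that rule only: an edge switch learns a remote source MAC when the destination MAC is already known on one of its local ports. The class and method names are hypothetical, and flooding, aging and encapsulation are left out.

```python
class EdgeSwitch:
    """Toy model of conversational MAC learning on a FabricPath edge switch."""

    def __init__(self, name):
        self.name = name
        # MAC -> ("local", port) or ("remote", switch_id)
        self.mac_table = {}

    def receive_from_host(self, port, src_mac):
        # Classical Ethernet ports learn source MACs unconditionally.
        self.mac_table[src_mac] = ("local", port)

    def receive_from_fabric(self, src_switch, src_mac, dst_mac):
        """Return True if the frame is delivered locally (destination lookup hit)."""
        entry = self.mac_table.get(dst_mac)
        if entry is not None and entry[0] == "local":
            # HIT: the destination is a local host, so learn the remote source.
            self.mac_table[src_mac] = ("remote", src_switch)
            return True
        # MISS: not part of this conversation, do not learn the source.
        return False

# Host C sits on port 3/1 of S42; S12 has no interest in the A-to-C conversation.
s42 = EdgeSwitch("S42")
s12 = EdgeSwitch("S12")
s42.receive_from_host("3/1", "C")
hit_s42 = s42.receive_from_fabric("S11", "A", "C")
hit_s12 = s12.receive_from_fabric("S11", "A", "C")
```

After the flood, only S42 holds an entry for A, which keeps edge MAC tables limited to hosts that actually talk to local hosts.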

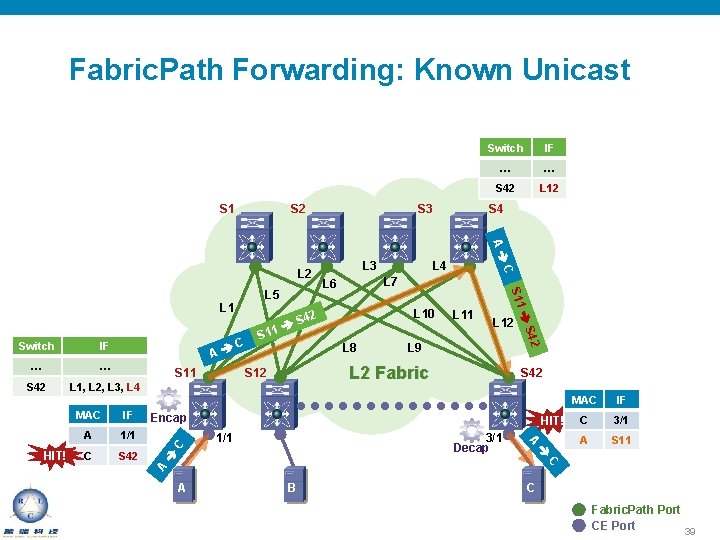

FabricPath Forwarding: Known Unicast
(Diagram: the same fabric of spine switches S1-S4 and edge switches S11, S12 and S42, joined by links L1-L12. Callouts: the lookup for C at S11 hits (C is behind S42), so the frame is encapsulated with S11 and S42 and forwarded using the routing table entry S42 -> L1, L2, L3, L4; at S42 the lookup for C hits on port 3/1 and the frame is decapsulated; S42's MAC table also lists A behind S11. Legend: FabricPath port, CE port.)

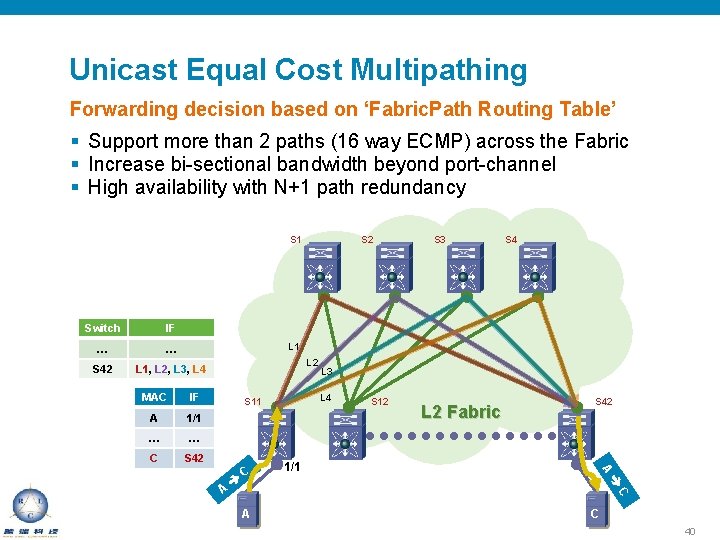

Unicast Equal Cost Multipathing
Forwarding decision based on the "FabricPath Routing Table"
§ Supports more than 2 paths (16-way ECMP) across the fabric
§ Increases bisectional bandwidth beyond port-channel
§ High availability with N+1 path redundancy
(Diagram: edge switch S11 holds the MAC table A -> 1/1, C -> S42 and the routing table S42 -> L1, L2, L3, L4; spine switches S1-S4; edge switches S11, S12 and S42 in the L2 fabric)

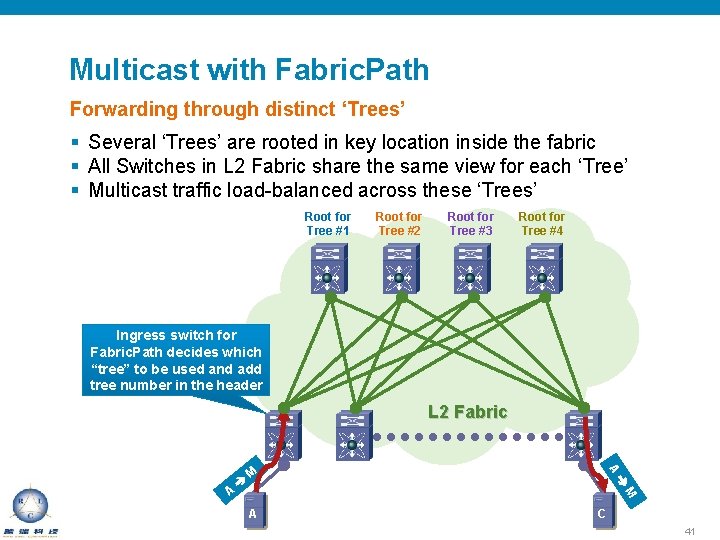

Multicast with FabricPath
Forwarding through distinct "trees"
§ Several trees are rooted in key locations inside the fabric
§ All switches in the L2 fabric share the same view of each tree
§ Multicast traffic is load-balanced across these trees
§ The ingress switch for FabricPath decides which tree to use and adds the tree number to the header
(Diagram: roots for Tree #1 through Tree #4 inside the L2 fabric)

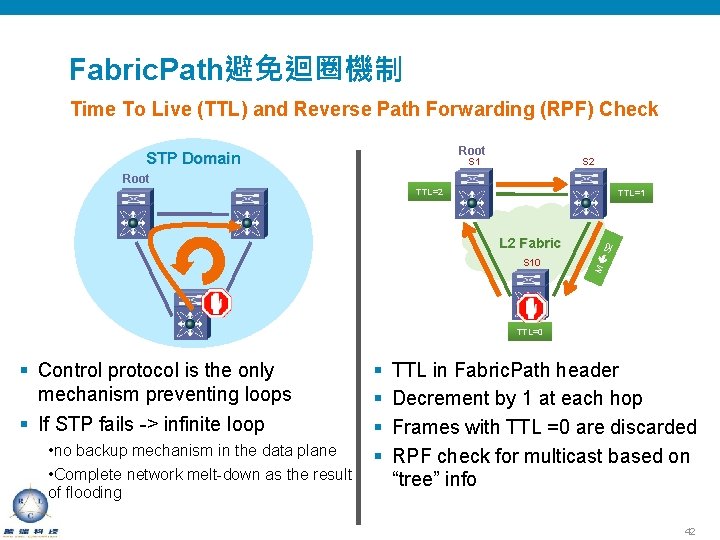

FabricPath Loop Avoidance Mechanisms
Time To Live (TTL) and Reverse Path Forwarding (RPF) check
STP domain:
§ The control protocol is the only mechanism preventing loops
§ If STP fails -> infinite loop
• No backup mechanism in the data plane
• Complete network meltdown as a result of flooding
L2 fabric:
§ TTL in the FabricPath header
§ Decremented by 1 at each hop
§ Frames with TTL = 0 are discarded
§ RPF check for multicast based on "tree" information
(Diagram: a looping frame in the L2 fabric with TTL=3, TTL=2, TTL=1, TTL=0)
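The TTL countdown in the diagram can be traced with a few lines of code. This is a minimal sketch that assumes a frame is caught in a forwarding loop, that each switch decrements the TTL by 1 when it forwards, and that a frame arriving with TTL 0 is discarded. The function name `trace_in_loop` and the three-switch loop are hypothetical.

```python
from itertools import cycle

def trace_in_loop(loop, initial_ttl):
    """Return the (switch, ttl_on_arrival) pairs a looping frame visits."""
    visited = []
    ttl = initial_ttl
    for switch in cycle(loop):
        if ttl == 0:
            break  # a frame arriving with TTL 0 is discarded
        visited.append((switch, ttl))
        ttl -= 1  # decremented by 1 at each hop
    return visited

# A frame with TTL 3 stuck in a three-switch loop.
path = trace_in_loop(["S1", "S2", "S10"], 3)
```

However long the loop persists in the control plane, the frame is forwarded at most `initial_ttl` times, which is the data-plane safety net that STP lacks.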

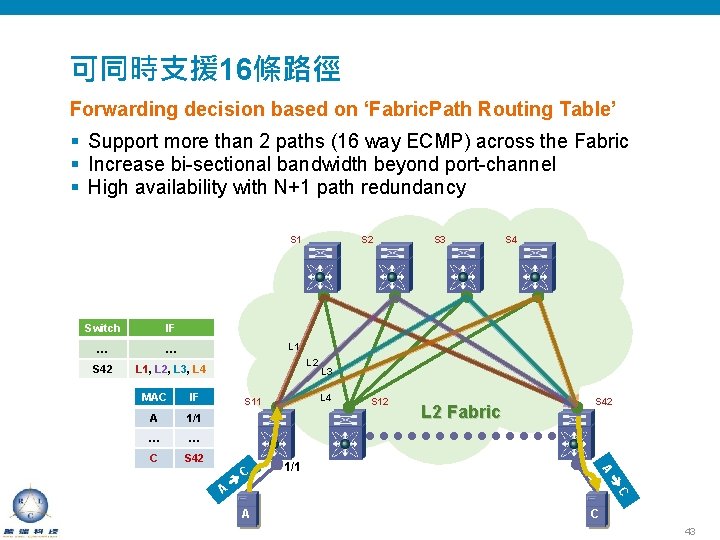

Support for Up to 16 Simultaneous Paths
Forwarding decision based on the "FabricPath Routing Table"
§ Supports more than 2 paths (16-way ECMP) across the fabric
§ Increases bisectional bandwidth beyond port-channel
§ High availability with N+1 path redundancy
(Diagram: edge switch S11 holds the MAC table A -> 1/1, C -> S42 and the routing table S42 -> L1, L2, L3, L4; spine switches S1-S4; edge switches S11, S12 and S42 in the L2 fabric)

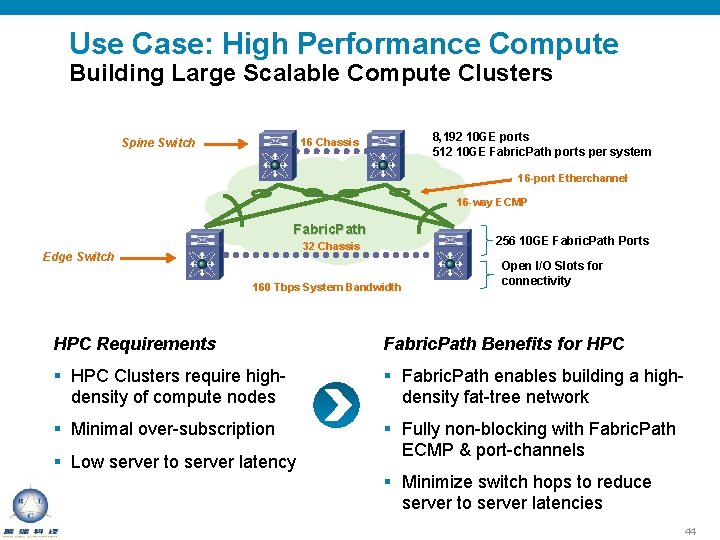

Use Case: High Performance Compute
Building large scalable compute clusters
HPC requirements:
§ HPC clusters require a high density of compute nodes
§ Minimal over-subscription
§ Low server-to-server latency
FabricPath benefits for HPC:
§ FabricPath enables building a high-density fat-tree network
§ Fully non-blocking with FabricPath ECMP and port-channels
§ Minimizes switch hops to reduce server-to-server latency
(Diagram: 16-chassis spine layer with 512 10GE FabricPath ports per system; 32-chassis edge layer with 256 10GE FabricPath ports per system and open I/O slots for connectivity; 16-way ECMP over 16-port EtherChannels; 8,192 10GE ports; 160 Tbps system bandwidth)
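The port counts on this slide follow from the chassis counts and the 16-port EtherChannel width. The arithmetic below is a sketch that assumes every edge chassis runs one 16-port bundle to every spine chassis and that host-facing ports match uplinks 1:1 (non-blocking). Reading the 160 Tbps figure as a rounded full-duplex total is also an assumption, and the variable names are hypothetical.

```python
SPINE_CHASSIS = 16
EDGE_CHASSIS = 32
PORTCHANNEL_WIDTH = 16   # ports per EtherChannel toward each spine
LINK_GBPS = 10

# Each edge chassis: one 16-port bundle to each of 16 spines (16-way ECMP).
uplinks_per_edge = SPINE_CHASSIS * PORTCHANNEL_WIDTH

# Each spine chassis: one 16-port bundle from each of 32 edge chassis.
fabric_ports_per_spine = EDGE_CHASSIS * PORTCHANNEL_WIDTH

# Non-blocking design assumed: as many host ports as uplinks on every edge.
host_ports = EDGE_CHASSIS * uplinks_per_edge

# Aggregate host bandwidth, counted in both directions (an assumption).
full_duplex_tbps = host_ports * LINK_GBPS * 2 / 1000
```

Under these assumptions the values come out to 256 uplinks per edge chassis, 512 fabric ports per spine chassis and 8,192 host ports, and the full-duplex total of about 164 Tbps is in line with the 160 Tbps quoted.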

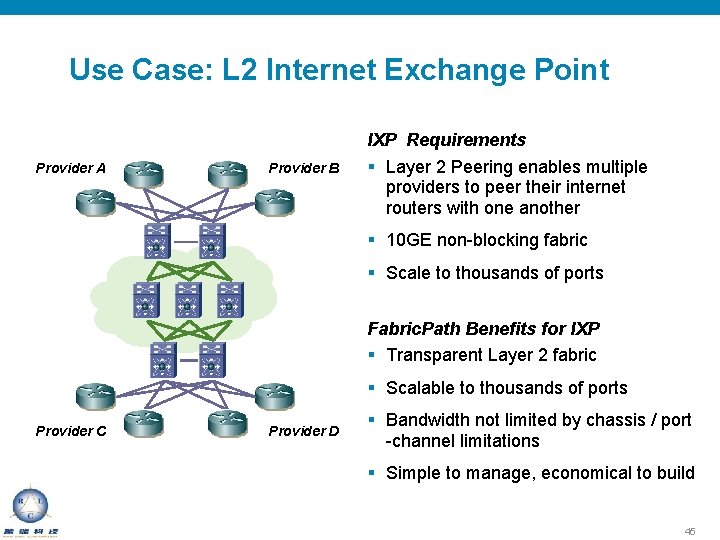

Use Case: L2 Internet Exchange Point
IXP requirements:
§ Layer 2 peering enables multiple providers to peer their internet routers with one another
§ 10GE non-blocking fabric
§ Scale to thousands of ports
FabricPath benefits for IXP:
§ Transparent Layer 2 fabric
§ Scalable to thousands of ports
§ Bandwidth not limited by chassis or port-channel limitations
§ Simple to manage, economical to build
(Diagram: Provider A, Provider B, Provider C and Provider D peering across the fabric)

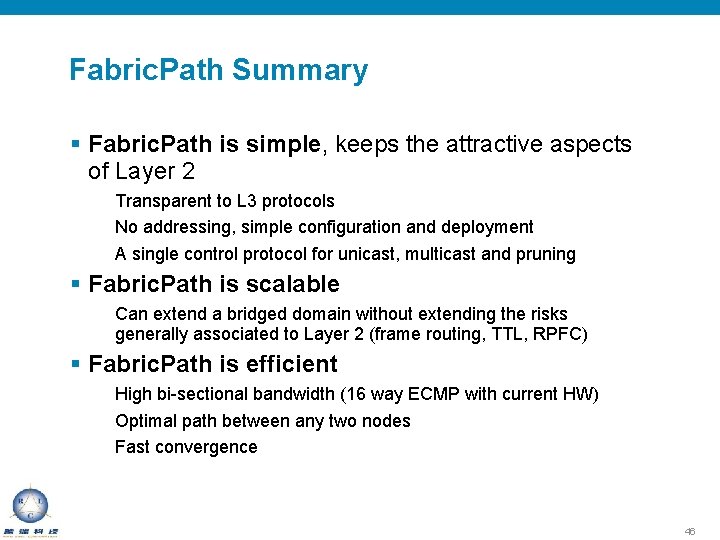

FabricPath Summary
§ FabricPath is simple and keeps the attractive aspects of Layer 2
• Transparent to L3 protocols
• No addressing, simple configuration and deployment
• A single control protocol for unicast, multicast and pruning
§ FabricPath is scalable
• Can extend a bridged domain without extending the risks generally associated with Layer 2 (frame routing, TTL, RPF check)
§ FabricPath is efficient
• High bisectional bandwidth (16-way ECMP with current hardware)
• Optimal path between any two nodes
• Fast convergence

Converged Network Transport Technology: Fibre Channel over Ethernet

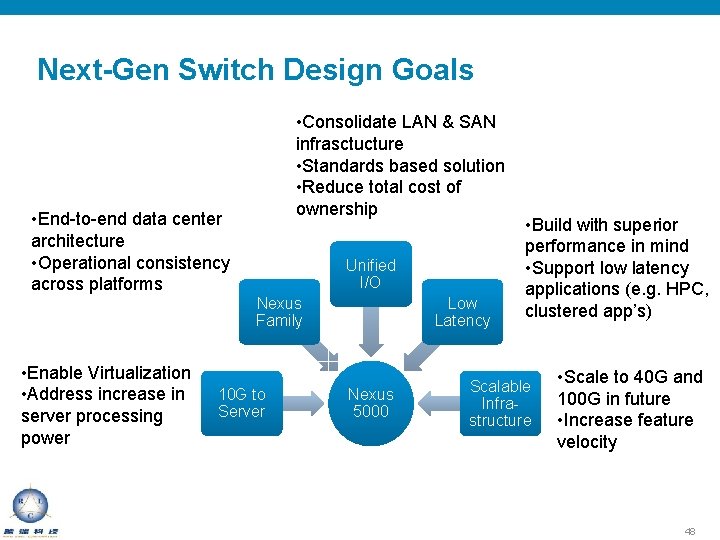

Next-Gen Switch Design Goals
• Consolidate LAN and SAN infrastructure
• Standards-based solution
• Reduce total cost of ownership
• End-to-end data center architecture
• Operational consistency across platforms
• Enable virtualization
• Address the increase in server processing power
• Build with superior performance in mind
• Support low-latency applications (e.g. HPC, clustered apps)
• Scale to 40G and 100G in the future
• Increase feature velocity
(Diagram labels: Unified I/O, Nexus Family, 10G to Server, Low Latency, Scalable Infrastructure, Nexus 5000)

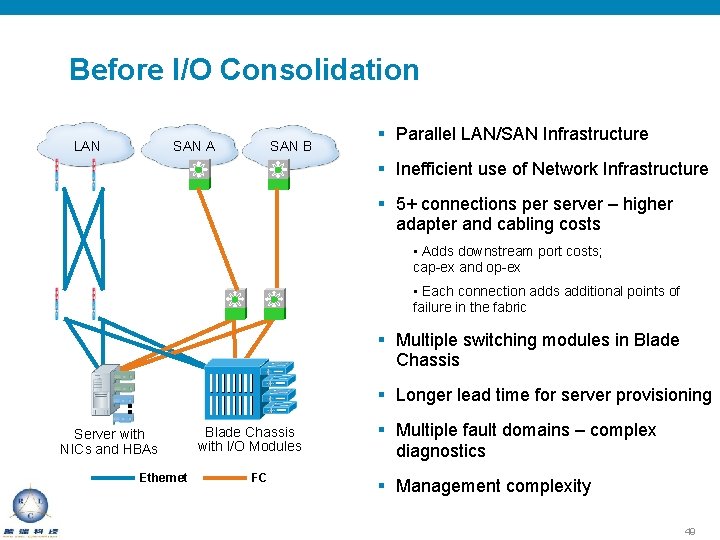

Before I/O Consolidation
§ Parallel LAN/SAN infrastructure
§ Inefficient use of network infrastructure
§ 5+ connections per server: higher adapter and cabling costs
• Adds downstream port costs; cap-ex and op-ex
• Each connection adds additional points of failure in the fabric
§ Multiple switching modules in blade chassis
§ Longer lead time for server provisioning
§ Multiple fault domains: complex diagnostics
§ Management complexity
(Diagram: servers with NICs and HBAs and a blade chassis with I/O modules, connected over Ethernet to the LAN and over FC to SAN A and SAN B)

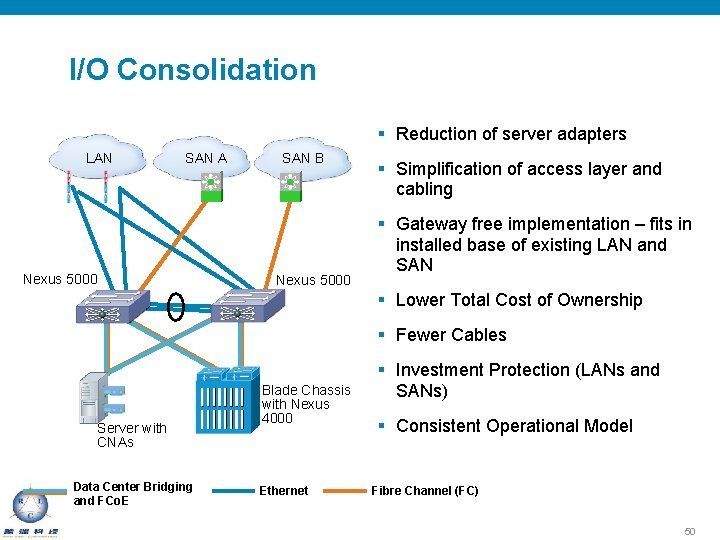

I/O Consolidation
§ Reduction of server adapters
§ Simplification of access layer and cabling
§ Gateway-free implementation: fits into the installed base of existing LANs and SANs
§ Lower total cost of ownership
§ Fewer cables
§ Investment protection (LANs and SANs)
§ Consistent operational model
(Diagram: servers with CNAs and a blade chassis with Nexus 4000 connect over Data Center Bridging and FCoE to a pair of Nexus 5000 switches, which connect over Ethernet to the LAN and over Fibre Channel (FC) to SAN A and SAN B)

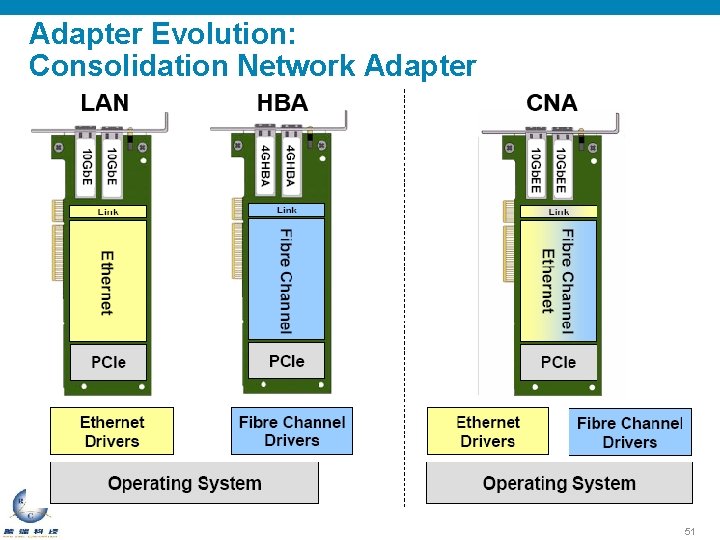

Adapter Evolution: Consolidation Network Adapter

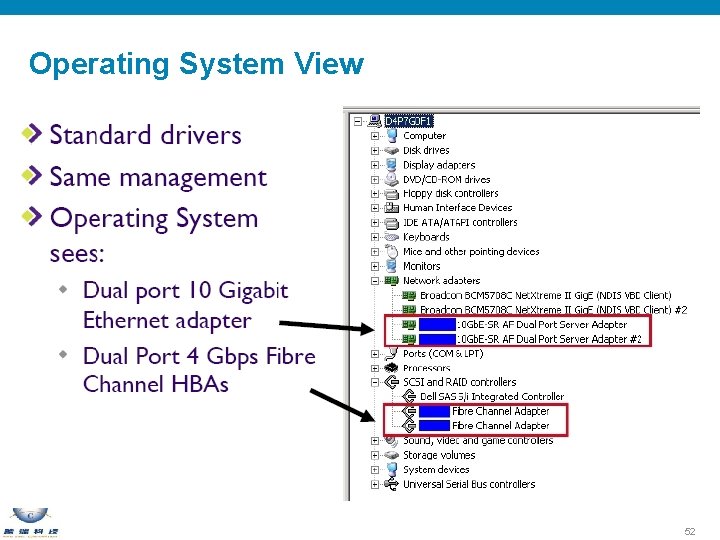

Operating System View

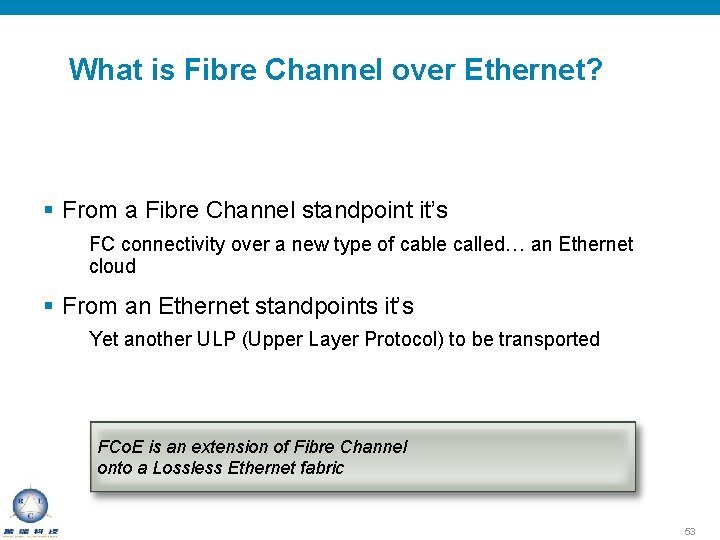

What is Fibre Channel over Ethernet?
§ From a Fibre Channel standpoint, it is FC connectivity over a new type of cable called an Ethernet cloud
§ From an Ethernet standpoint, it is yet another ULP (Upper Layer Protocol) to be transported
FCoE is an extension of Fibre Channel onto a lossless Ethernet fabric

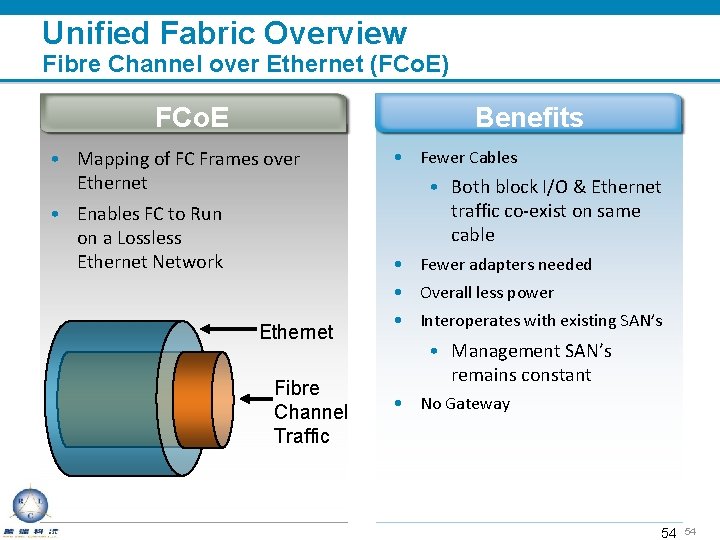

Unified Fabric Overview: Fibre Channel over Ethernet (FCoE)
FCoE:
• Mapping of FC frames over Ethernet
• Enables FC to run on a lossless Ethernet network
Benefits:
• Fewer cables
• Both block I/O and Ethernet traffic coexist on the same cable
• Fewer adapters needed
• Less power overall
• Interoperates with existing SANs
• Management of SANs remains constant
• No gateway
(Diagram: Fibre Channel traffic carried over Ethernet)

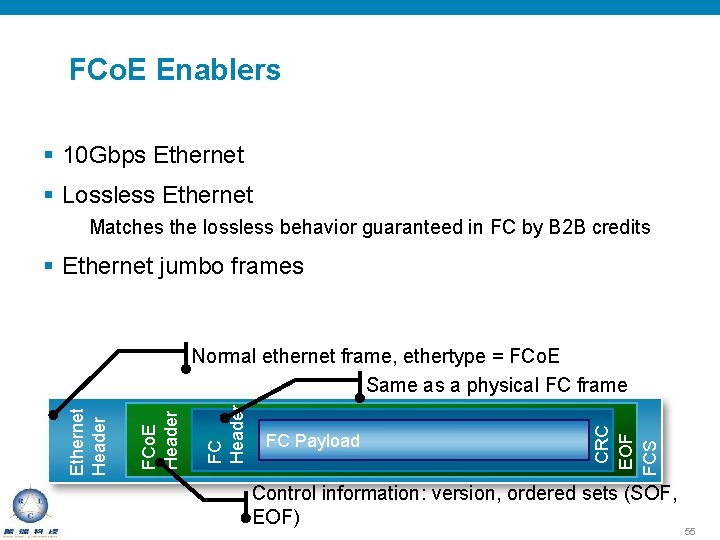

FCoE Enablers
§ 10 Gbps Ethernet
§ Lossless Ethernet: matches the lossless behavior guaranteed in FC by B2B credits
§ Ethernet jumbo frames
FCoE frame layout: Ethernet Header | FCoE Header | FC Header | FC Payload | CRC | EOF | FCS
§ Ethernet header: normal Ethernet frame, ethertype = FCoE
§ FCoE header: control information (version, ordered sets SOF and EOF)
§ FC header, FC payload and CRC: same as a physical FC frame
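The need for jumbo (or "baby jumbo") frames follows from simple byte counting. The sketch below adds up a maximum-size FCoE frame using field sizes as commonly cited for FC and FC-BB-5; treat each constant as an assumption to verify against the standard rather than as an authoritative figure.

```python
# Field sizes in bytes (assumptions, as commonly cited for FC-BB-5 framing).
ETH_HEADER_TAGGED = 18   # destination MAC, source MAC, 802.1Q tag, ethertype
FCOE_HEADER = 14         # version, reserved bits, SOF
FC_HEADER = 24
FC_MAX_PAYLOAD = 2112
FC_CRC = 4
FCOE_TRAILER = 4         # EOF plus reserved bytes
ETH_FCS = 4

STANDARD_ETH_MAX = 1518  # largest untagged standard Ethernet frame

max_fcoe_frame = (ETH_HEADER_TAGGED + FCOE_HEADER + FC_HEADER
                  + FC_MAX_PAYLOAD + FC_CRC + FCOE_TRAILER + ETH_FCS)
needs_jumbo = max_fcoe_frame > STANDARD_ETH_MAX
```

With these sizes a full FC frame cannot fit in a standard Ethernet frame, which is why an FCoE-capable link must carry frames of roughly 2.2 KB without fragmentation.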

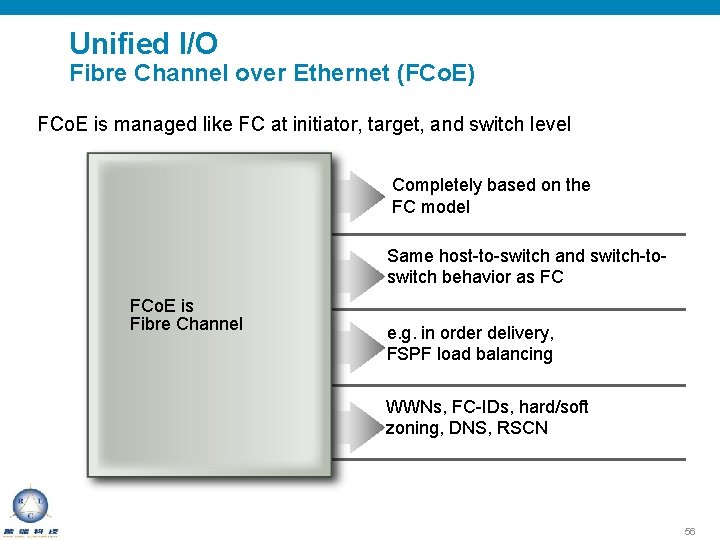

Unified I/O: Fibre Channel over Ethernet (FCoE)
FCoE is managed like FC at the initiator, target and switch level
§ FCoE is Fibre Channel (easy to understand)
• Completely based on the FC model
• Same host-to-switch and switch-to-switch behavior as FC
§ Same operational model
• Same techniques of traffic management, e.g. in-order delivery, FSPF load balancing
• Same management and security models: WWNs, FC-IDs, hard/soft zoning, DNS, RSCN

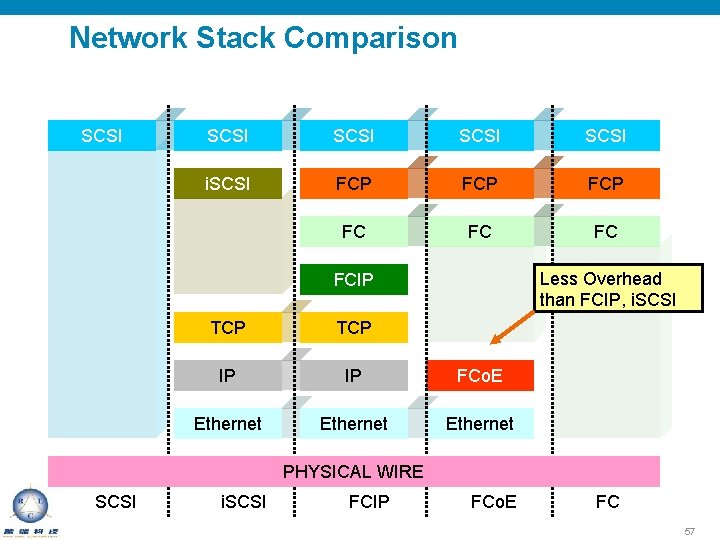

Network Stack Comparison
§ iSCSI: SCSI / iSCSI / TCP / IP / Ethernet
§ FCIP: SCSI / FCP / FC / FCIP / TCP / IP / Ethernet
§ FCoE: SCSI / FCP / FC / FCoE / Ethernet
§ FC: SCSI / FCP / FC
§ FCoE has less overhead than FCIP and iSCSI
All stacks sit on the physical wire.

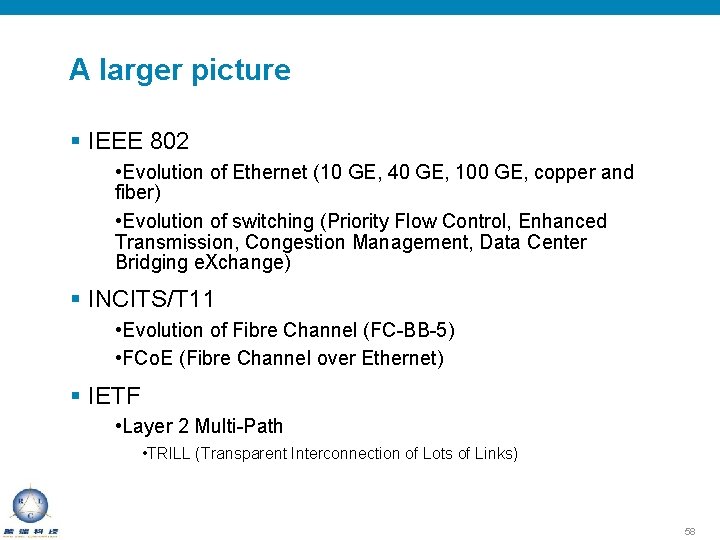

A Larger Picture
§ IEEE 802
• Evolution of Ethernet (10GE, 40GE, 100GE, copper and fiber)
• Evolution of switching (Priority Flow Control, Enhanced Transmission Selection, Congestion Management, Data Center Bridging eXchange)
§ INCITS/T11
• Evolution of Fibre Channel (FC-BB-5)
• FCoE (Fibre Channel over Ethernet)
§ IETF
• Layer 2 multi-path
• TRILL (Transparent Interconnection of Lots of Links)

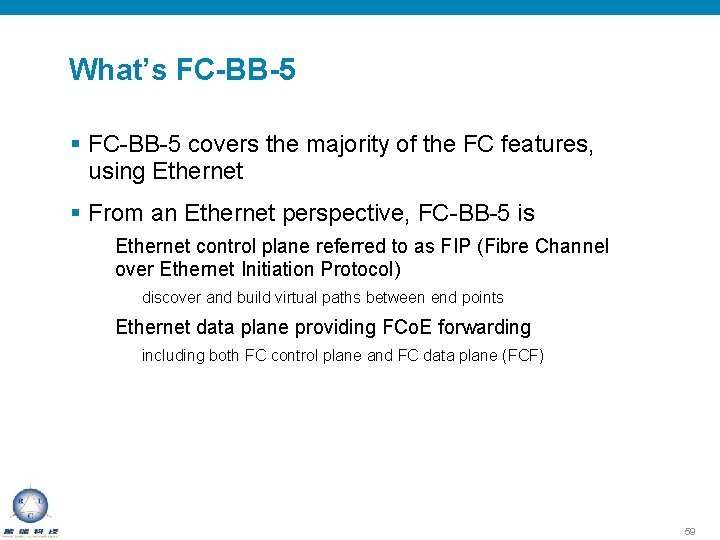

What is FC-BB-5?
§ FC-BB-5 covers the majority of the FC features, using Ethernet
§ From an Ethernet perspective, FC-BB-5 is:
• An Ethernet control plane, referred to as FIP (Fibre Channel over Ethernet Initialization Protocol), which discovers and builds virtual paths between end points
• An Ethernet data plane providing FCoE forwarding, including both the FC control plane and the FC data plane (FCF)

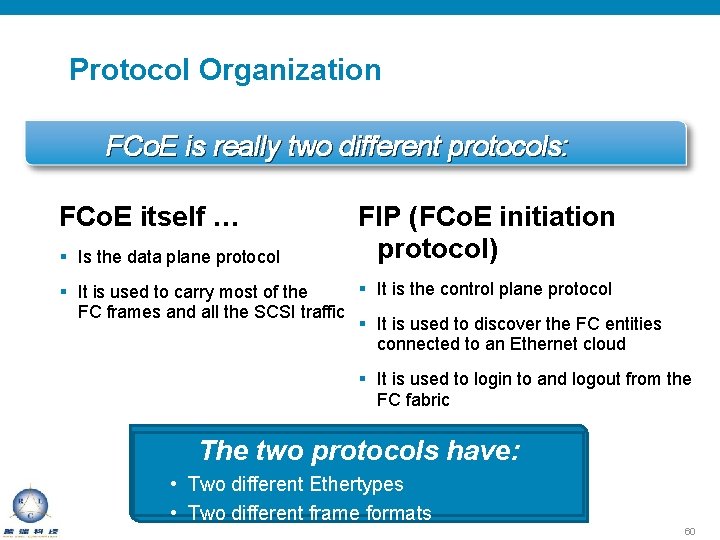

Protocol Organization
FCoE is really two different protocols:
FCoE itself
§ Is the data plane protocol
§ Is used to carry most of the FC frames and all the SCSI traffic
FIP (FCoE Initialization Protocol)
§ Is the control plane protocol
§ Is used to discover the FC entities connected to an Ethernet cloud
§ Is used to log in to and log out from the FC fabric
The two protocols have:
• Two different Ethertypes
• Two different frame formats
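Because the two protocols use different Ethertypes, a receiver can separate them by inspecting one header field. The sketch below classifies a raw Ethernet frame using the registered Ethertype values 0x8906 (FCoE) and 0x8914 (FIP) and skips a single optional 802.1Q tag; the function name `classify` and the hand-built test frames are hypothetical.

```python
import struct

ETHERTYPE_VLAN = 0x8100
ETHERTYPE_FCOE = 0x8906
ETHERTYPE_FIP = 0x8914

def classify(frame: bytes) -> str:
    """Return which protocol an Ethernet frame carries, based on its Ethertype."""
    if len(frame) < 14:
        raise ValueError("frame shorter than an Ethernet header")
    (ethertype,) = struct.unpack_from("!H", frame, 12)
    if ethertype == ETHERTYPE_VLAN:
        # Skip one 802.1Q tag (4 bytes) and read the inner Ethertype.
        if len(frame) < 18:
            raise ValueError("truncated 802.1Q header")
        (ethertype,) = struct.unpack_from("!H", frame, 16)
    if ethertype == ETHERTYPE_FCOE:
        return "FCoE (data plane)"
    if ethertype == ETHERTYPE_FIP:
        return "FIP (control plane)"
    return "other"

# Minimal frames: zeroed MAC addresses followed by the type fields.
fip_frame = bytes(12) + struct.pack("!H", ETHERTYPE_FIP)
tagged_fcoe = bytes(12) + struct.pack("!HHH", ETHERTYPE_VLAN, 3 << 13, ETHERTYPE_FCOE)
```

The tagged example places the FCoE frame in 802.1p priority 3, a common choice for storage traffic, although that priority value is an assumption here.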

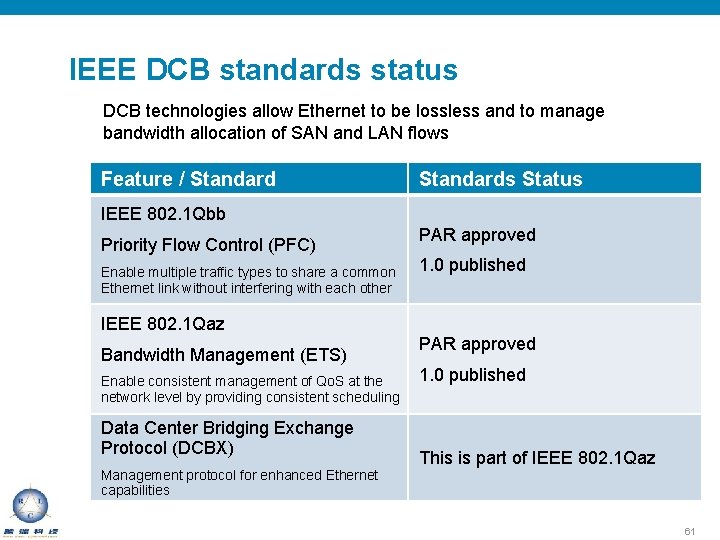

IEEE DCB Standards Status
DCB technologies allow Ethernet to be lossless and to manage bandwidth allocation of SAN and LAN flows
§ IEEE 802.1Qbb Priority Flow Control (PFC): enables multiple traffic types to share a common Ethernet link without interfering with each other. Status: PAR approved, 1.0 published
§ IEEE 802.1Qaz Bandwidth Management (ETS): enables consistent management of QoS at the network level by providing consistent scheduling. Status: PAR approved, 1.0 published
§ Data Center Bridging Exchange Protocol (DCBX): management protocol for enhanced Ethernet capabilities. Status: part of IEEE 802.1Qaz

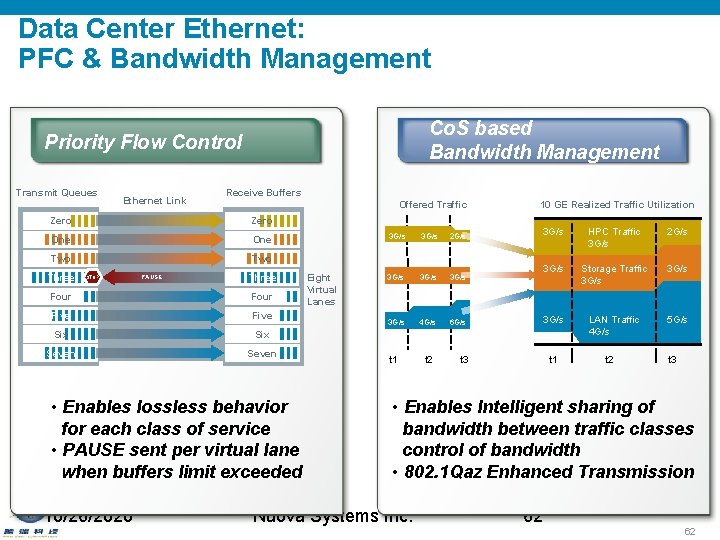

Data Center Ethernet: PFC and Bandwidth Management
Priority Flow Control
• Enables lossless behavior for each class of service
• PAUSE is sent per virtual lane when the buffer limit is exceeded
(Diagram: transmit queues and receive buffers on an Ethernet link with eight virtual lanes, numbered zero through seven; a STOP/PAUSE is applied to lane three only)
CoS-based Bandwidth Management
• Enables intelligent sharing of bandwidth between traffic classes and control of bandwidth
• 802.1Qaz Enhanced Transmission Selection
(Diagram: offered traffic versus realized traffic utilization on a 10GE link at times t1, t2, t3. Offered: HPC 3, 3, 2 G/s; storage 3, 3, 3 G/s; LAN 3, 4, 6 G/s. Realized: HPC 3, 3, 2 G/s; storage 3, 3, 3 G/s; LAN 3, 4, 5 G/s.)
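The offered-versus-realized chart can be approximated with a small allocation routine. The sketch below is not the 802.1Qaz scheduler; it assumes each class has a guaranteed percentage of the link and that unused bandwidth is handed to classes with unmet demand in proportion to their shares. The 30/30/40 split, the function name `ets_allocate` and the requirement that all shares be positive are assumptions made for illustration.

```python
def ets_allocate(offered, share_pct, link_gbps=10.0):
    """Split link bandwidth by guaranteed shares, then redistribute the spare."""
    guaranteed = {c: link_gbps * share_pct[c] / 100 for c in offered}
    # Every class first gets its demand, capped at its guarantee.
    alloc = {c: min(offered[c], guaranteed[c]) for c in offered}
    spare = link_gbps - sum(alloc.values())
    while spare > 1e-9:
        hungry = [c for c in offered if offered[c] - alloc[c] > 1e-9]
        if not hungry:
            break
        weight = sum(share_pct[c] for c in hungry)
        granted = 0.0
        for c in hungry:
            # Spare bandwidth is split among hungry classes by relative share.
            extra = min(offered[c] - alloc[c], spare * share_pct[c] / weight)
            alloc[c] += extra
            granted += extra
        spare -= granted
    return alloc

# Offered load at t3 on the slide; the 30/30/40 shares are assumed.
shares = {"HPC": 30, "Storage": 30, "LAN": 40}
realized = ets_allocate({"HPC": 2, "Storage": 3, "LAN": 6}, shares)
```

With the t3 load, HPC leaves 1 G/s of its guarantee unused and LAN absorbs it, so LAN is served 5 G/s of the 6 G/s it offered, which matches the realized-traffic side of the chart.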

DCBX Overview
§ Auto-negotiation of capability and configuration
• Priority Flow Control capability and associated CoS values
§ Allows one link peer to push configuration to the other link peer
• Link partners can choose supported features and willingness to accept
§ Discovers FCoE capabilities
§ Responsible for logical link up/down signaling of Ethernet and FC
§ DCBX negotiation failures will result in:
• vfc not coming up
• Per-priority pause not enabled on CoS values with PFC configuration
http://download.intel.com/technology/eedc/dcb_cep_spec.pdf
http://www.ieee802.org/1/files/public/docs2008/

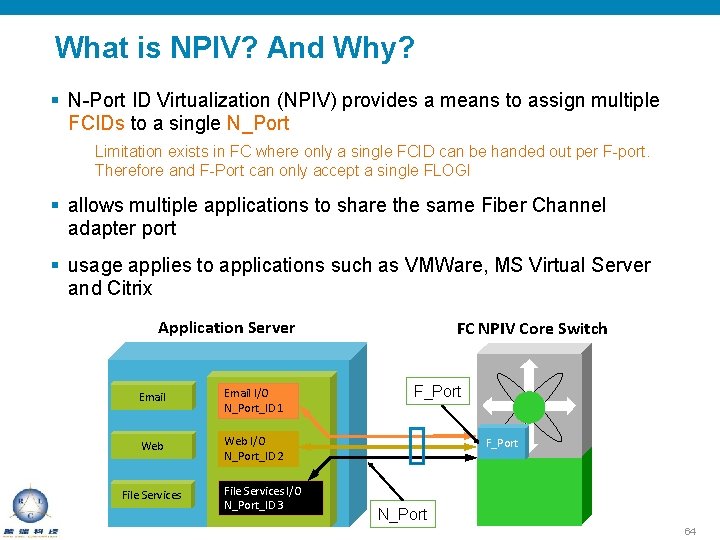

What is NPIV? And Why?
§ N-Port ID Virtualization (NPIV) provides a means to assign multiple FCIDs to a single N_Port
• Without it, FC hands out only a single FCID per F_Port, so an F_Port can accept only a single FLOGI
§ Allows multiple applications to share the same Fibre Channel adapter port
§ Applies to platforms such as VMware, MS Virtual Server and Citrix
(Diagram: an application server's N_Port logs in to the F_Port of an FC NPIV core switch with separate IDs per application: email I/O as N_Port_ID 1, web I/O as N_Port_ID 2, file services I/O as N_Port_ID 3)

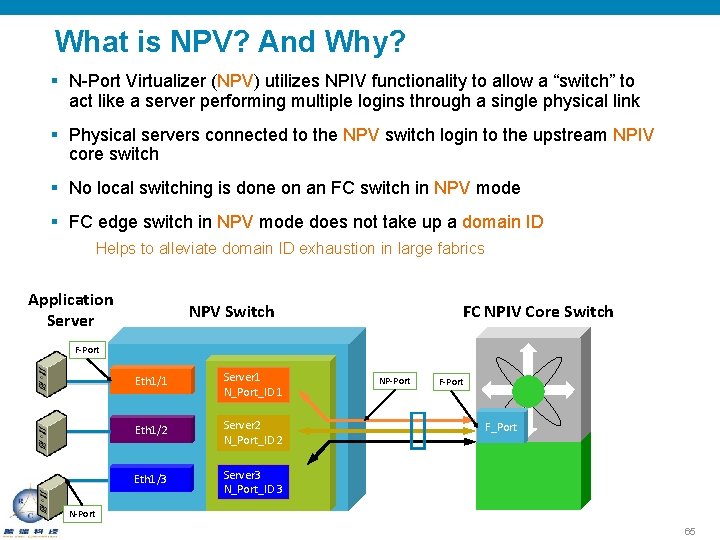

What is NPV? And Why?
§ N-Port Virtualizer (NPV) uses NPIV functionality to allow a "switch" to act like a server performing multiple logins through a single physical link
§ Physical servers connected to the NPV switch log in to the upstream NPIV core switch
§ No local switching is done on an FC switch in NPV mode
§ An FC edge switch in NPV mode does not take up a domain ID
• Helps to alleviate domain ID exhaustion in large fabrics
(Diagram: application servers attach to F-Ports on the NPV switch: Server 1 on Eth 1/1 as N_Port_ID 1, Server 2 on Eth 1/2 as N_Port_ID 2, Server 3 on Eth 1/3 as N_Port_ID 3; the NPV switch's NP-Port connects to an F_Port on the FC NPIV core switch)

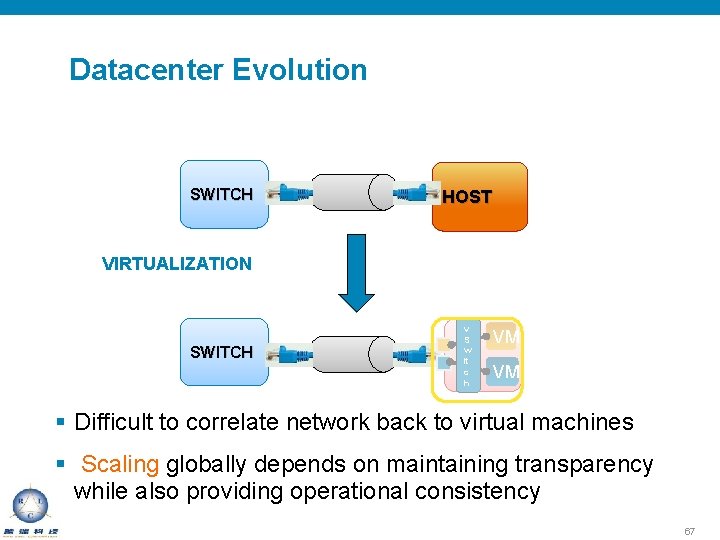

Datacenter Evolution
§ Difficult to correlate the network back to virtual machines
§ Scaling globally depends on maintaining transparency while also providing operational consistency
(Diagram: a physical switch connected to a host; with virtualization, a physical switch connected to a vSwitch serving multiple VMs)

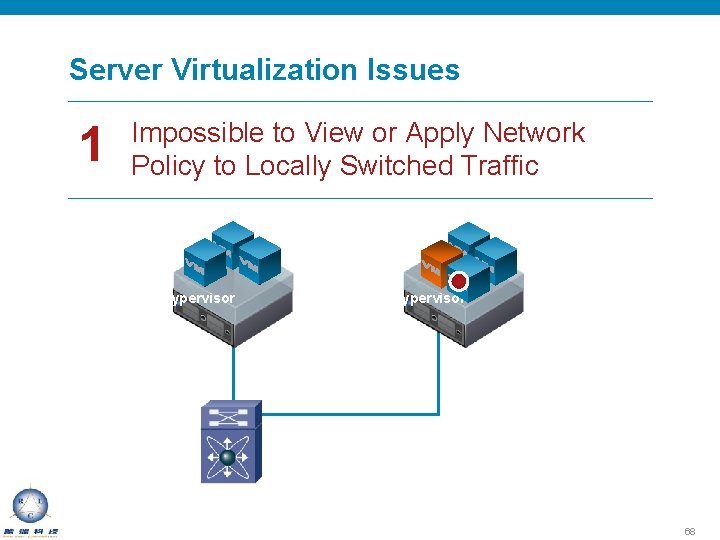

Server Virtualization Issues 1
Impossible to view or apply network policy to locally switched traffic
(Diagram: hypervisor)

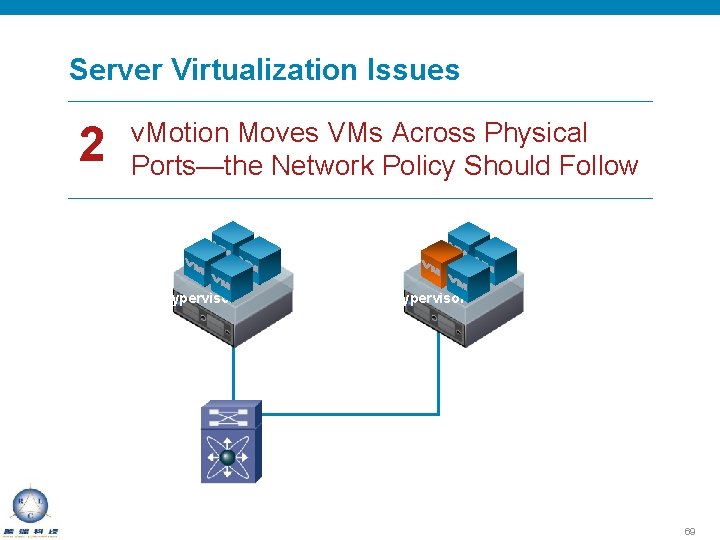

Server Virtualization Issues 2
vMotion moves VMs across physical ports; the network policy should follow
(Diagram: hypervisor)

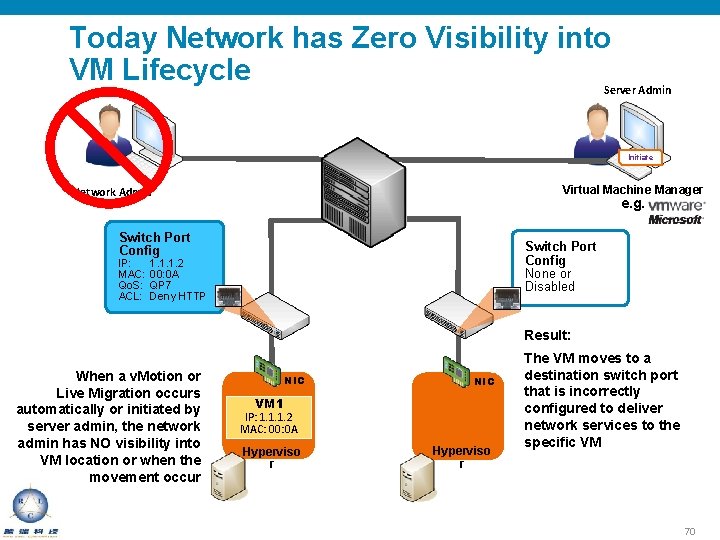

Today the Network Has Zero Visibility into the VM Lifecycle
§ Result: when a vMotion or live migration occurs, automatically or initiated by the server admin, the network admin has NO visibility into the VM's location or into when the movement occurs
§ The VM moves to a destination switch port that is incorrectly configured to deliver network services to that specific VM
(Diagram: the server admin initiates the move through the virtual machine manager. VM 1 has IP 1.1.1.2 and MAC 00:0A. The network admin's source switch port config, for example: IP 1.1.1.2, MAC 00:0A, QoS QP7, ACL Deny HTTP. Destination switch port config: none or disabled. Other labels: NIC, hypervisor.)

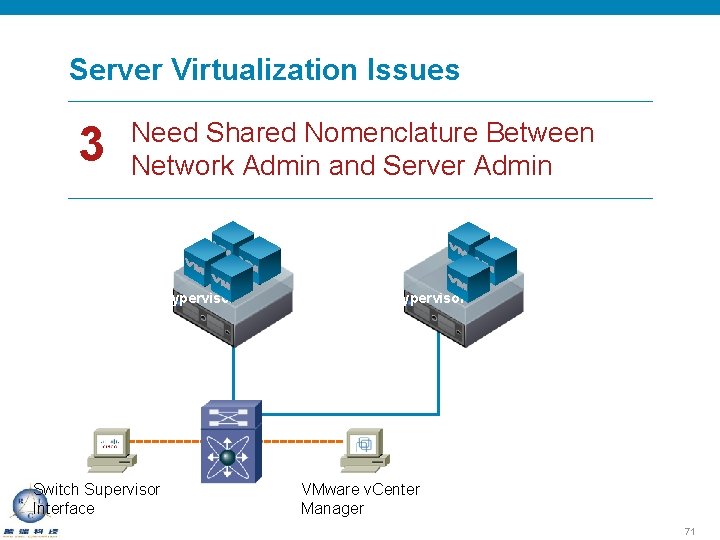

Server Virtualization Issues 3
Need shared nomenclature between the network admin and the server admin
(Diagram labels: hypervisor, switch supervisor interface, VMware vCenter manager)

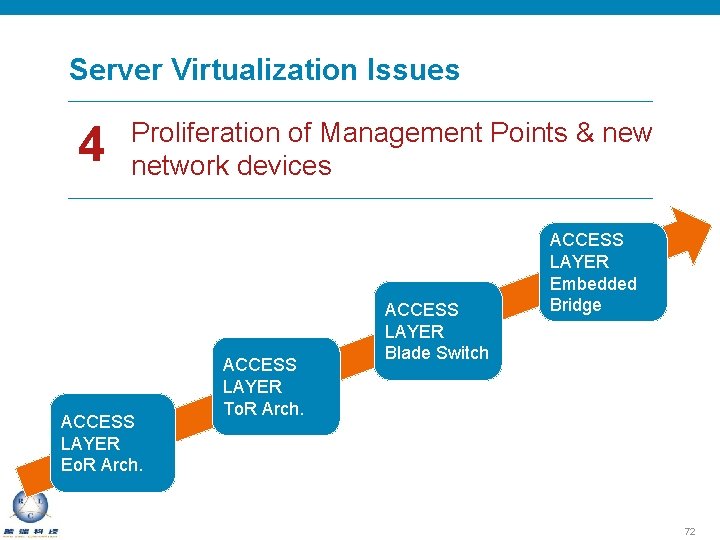

Server Virtualization Issues 4
Proliferation of management points and new network devices
(Diagram: four access layer variants: EoR architecture, ToR architecture, blade switch, embedded bridge)

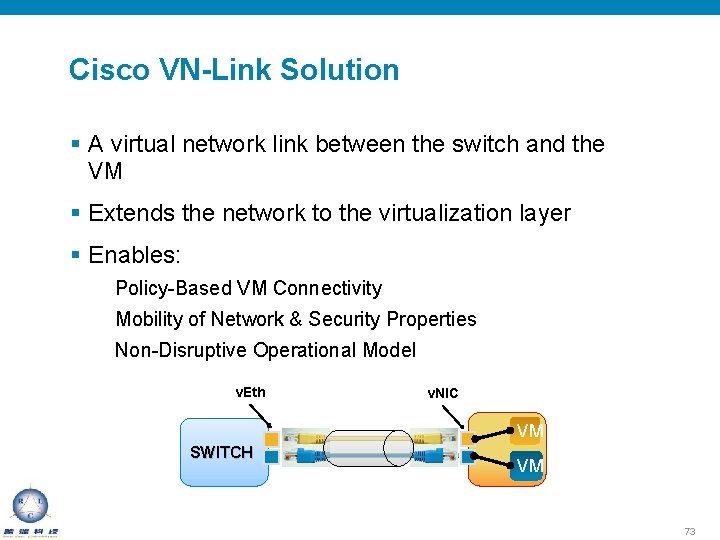

Cisco VN-Link Solution
§ A virtual network link between the switch and the VM
§ Extends the network to the virtualization layer
§ Enables:
• Policy-based VM connectivity
• Mobility of network and security properties
• Non-disruptive operational model
(Diagram: a vEth interface on the switch linked to the vNIC of a VM)

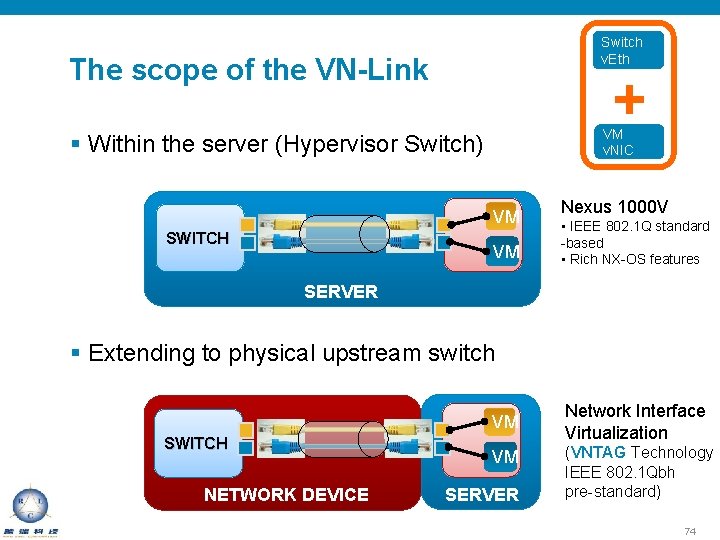

The Scope of VN-Link
§ Within the server (hypervisor switch): Nexus 1000V
• IEEE 802.1Q standard-based
• Rich NX-OS features
§ Extending to the physical upstream switch: Network Interface Virtualization (VNTAG technology, IEEE 802.1Qbh pre-standard)
(Diagram: VN-Link spans the switch vEth and the VM vNIC; in the first case the switch sits inside the server next to the VMs, in the second the switch is a network device outside the server)

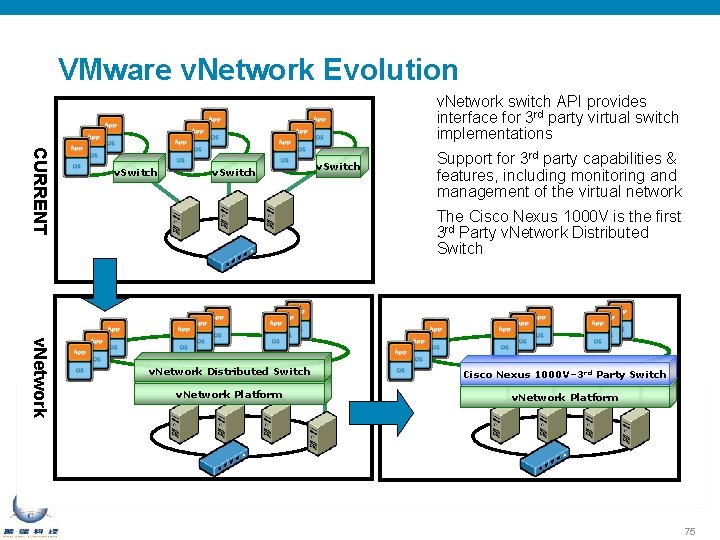

VMware vNetwork Evolution
§ The vNetwork switch API provides an interface for 3rd-party virtual switch implementations
§ Support for 3rd-party capabilities and features, including monitoring and management of the virtual network
§ The Cisco Nexus 1000V is the first 3rd-party vNetwork Distributed Switch
(Diagram: the current vSwitch and the Cisco Nexus 1000V as a 3rd-party switch on the vNetwork platform)

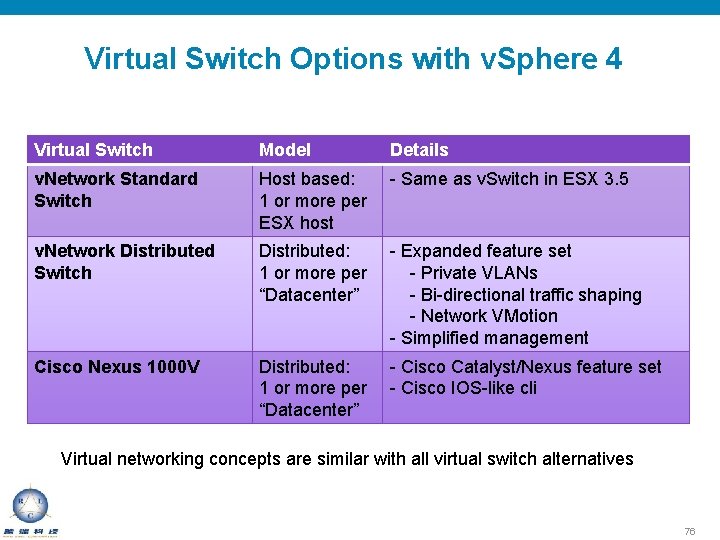

Virtual Switch Options with vSphere 4
Virtual switch, model, details:
§ vNetwork Standard Switch. Host based: 1 or more per ESX host. Same as vSwitch in ESX 3.5
§ vNetwork Distributed Switch. Distributed: 1 or more per "Datacenter". Expanded feature set (private VLANs, bi-directional traffic shaping, network VMotion); simplified management
§ Cisco Nexus 1000V. Distributed: 1 or more per "Datacenter". Cisco Catalyst/Nexus feature set; Cisco IOS-like CLI
Virtual networking concepts are similar across all virtual switch alternatives

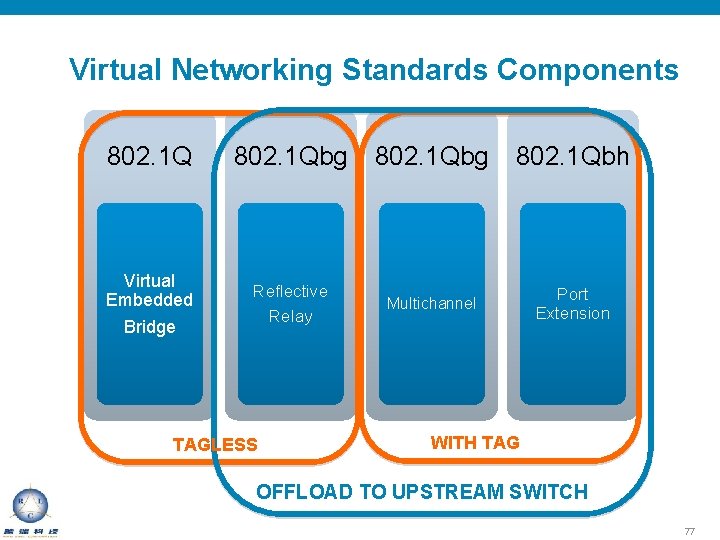

Virtual Networking Standards Components
(Diagram: components under 802.1Qbg and 802.1Qbh: Virtual Embedded Bridge, Reflective Relay, Multichannel, Port Extension. Labels: tagless, with tag, offload to upstream switch.)

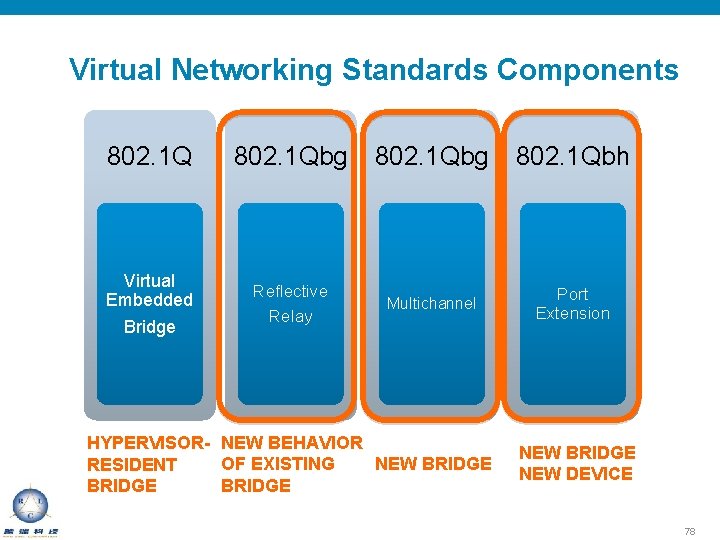

Virtual Networking Standards Components
(Diagram: components under 802.1Qbg and 802.1Qbh: Virtual Embedded Bridge, Reflective Relay, Multichannel, Port Extension. Labels: hypervisor-resident bridge, new behavior of existing bridge, new bridge, new device.)

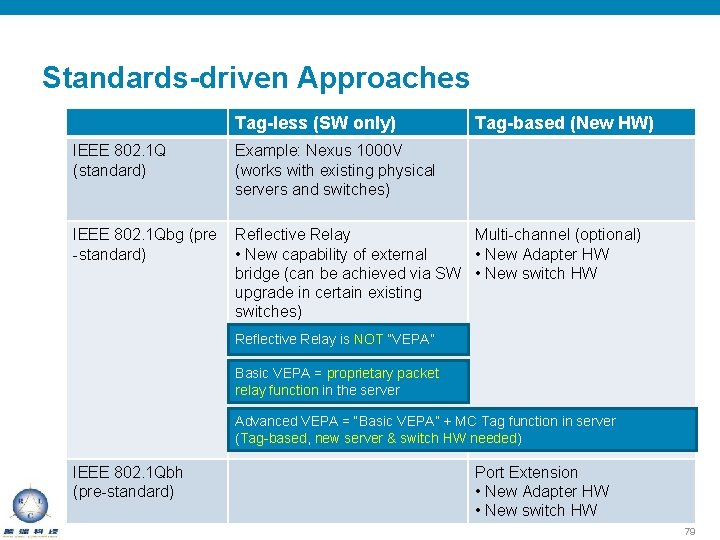

Standards-driven Approaches
Tag-less (software only)
§ IEEE 802.1Q (standard). Example: Nexus 1000V (works with existing physical servers and switches)
§ IEEE 802.1Qbg (pre-standard) Reflective Relay
• New capability of the external bridge (can be achieved via software upgrade in certain existing switches)
Tag-based (new hardware)
§ IEEE 802.1Qbg (pre-standard) Multi-channel (optional)
• New adapter hardware
• New switch hardware
§ IEEE 802.1Qbh (pre-standard) Port Extension
• New adapter hardware
• New switch hardware
Reflective Relay is NOT "VEPA"
§ Basic VEPA = proprietary packet relay function in the server
§ Advanced VEPA = "Basic VEPA" + MC tag function in the server (tag-based; new server and switch hardware needed)

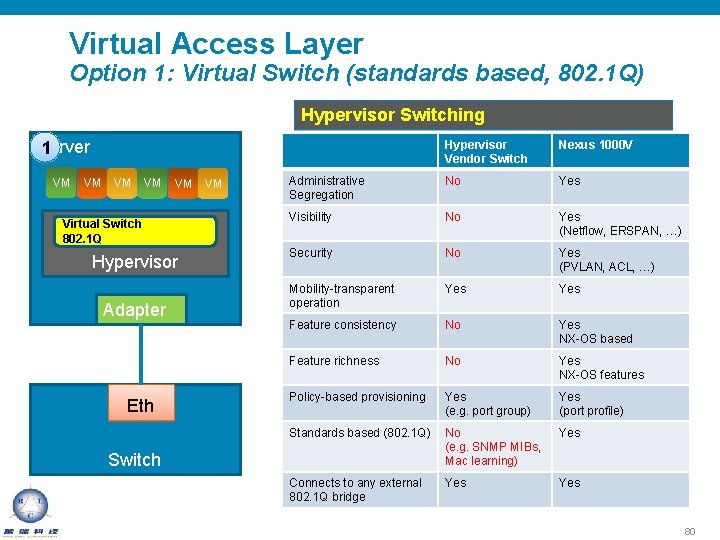

Virtual Access Layer Option 1: Virtual Switch (standards based, 802.1Q)
Hypervisor switching
Comparison (hypervisor vendor switch / Nexus 1000V):
§ Administrative segregation: No / Yes
§ Visibility: No / Yes (NetFlow, ERSPAN, …)
§ Security: No / Yes (PVLAN, ACL, …)
§ Mobility-transparent operation: Yes
§ Feature consistency: No / Yes (NX-OS based)
§ Feature richness: No / Yes (NX-OS features)
§ Policy-based provisioning: Yes (e.g. port group) / Yes (port profile)
§ Standards based (802.1Q): No (e.g. SNMP MIBs, MAC learning) / Yes
§ Connects to any external 802.1Q bridge: Yes
(Diagram: Server 1 with VMs on a virtual switch in the hypervisor, connected through the adapter over 802.1Q Ethernet to the switch)

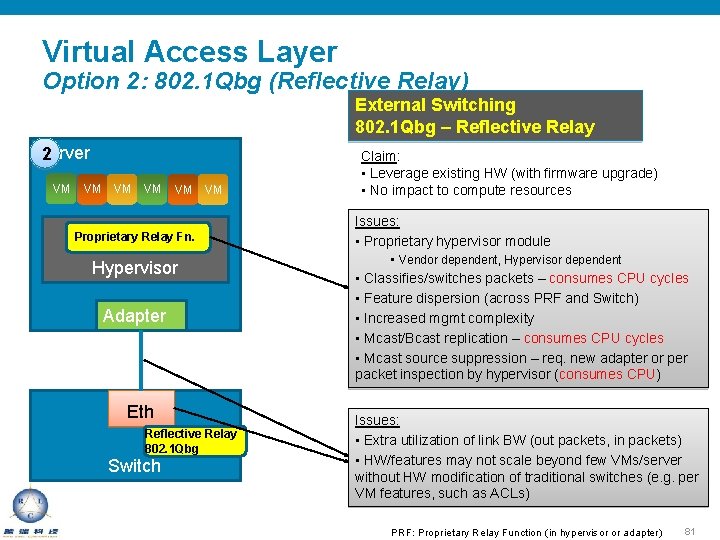

Virtual Access Layer Option 2: 802.1Qbg (Reflective Relay)
External switching
Claim:
• Leverages existing hardware (with firmware upgrade)
• No impact on compute resources
Issues on the server side:
• Proprietary hypervisor module
• Vendor dependent, hypervisor dependent
• Classifies and switches packets, which consumes CPU cycles
• Feature dispersion (across PRF and switch)
• Increased management complexity
• Multicast/broadcast replication, which consumes CPU cycles
• Multicast source suppression requires a new adapter or per-packet inspection by the hypervisor (consumes CPU)
Issues on the switch side:
• Extra utilization of link bandwidth (out packets, in packets)
• Hardware/features may not scale beyond a few VMs per server without hardware modification of traditional switches (e.g. per-VM features such as ACLs)
PRF: Proprietary Relay Function (in hypervisor or adapter)
(Diagram: Server 2 with VMs on a proprietary relay function in the hypervisor, connected through the adapter over Ethernet to an 802.1Qbg switch performing reflective relay)

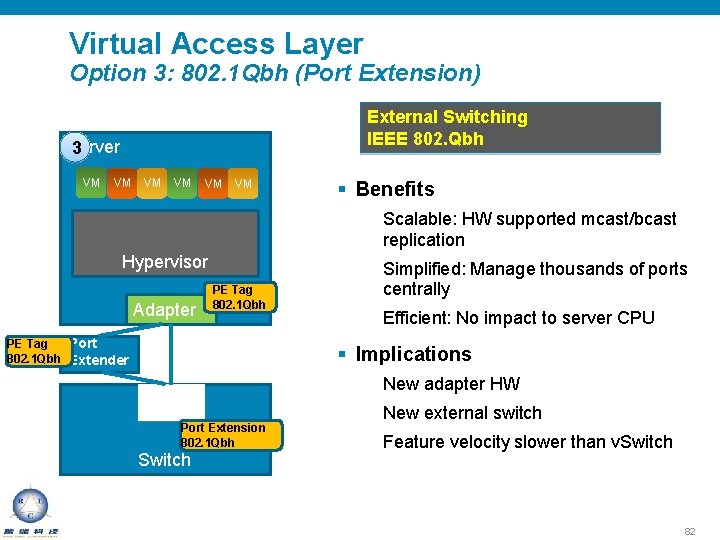

Virtual Access Layer Option 3: 802.1Qbh (Port Extension)
External switching, IEEE 802.1Qbh
§ Benefits
• Scalable: hardware-supported multicast/broadcast replication
• Simplified: manage thousands of ports centrally
• Efficient: no impact on server CPU
§ Implications
• New adapter hardware
• New external switch
• Feature velocity slower than vSwitch
(Diagram: Server 3 with VMs on a hypervisor whose adapter acts as an 802.1Qbh port extender, applying a PE tag on the Ethernet link to an 802.1Qbh switch that performs port extension)

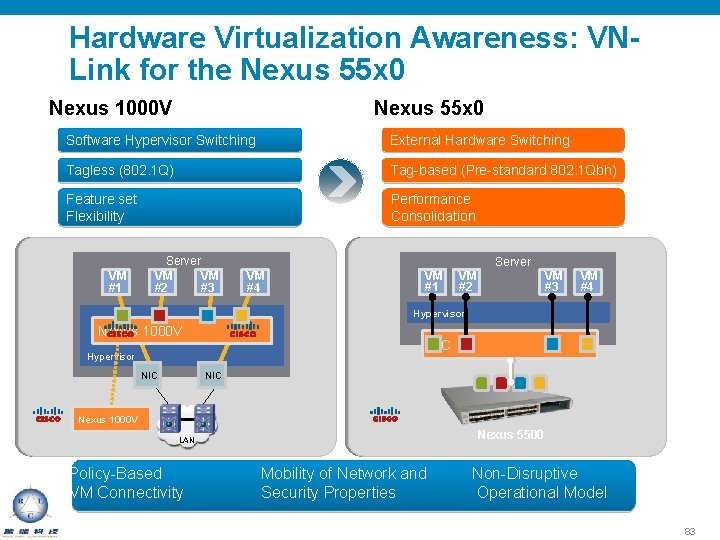

Hardware Virtualization Awareness: VN-Link for the Nexus 55x0
§ Nexus 1000V: software hypervisor switching; tagless (802.1Q); feature set, flexibility
§ Nexus 55x0: external hardware switching; tag-based (pre-standard 802.1Qbh); performance, consolidation
§ Both provide: policy-based VM connectivity, mobility of network and security properties, non-disruptive operational model
(Diagram: two servers with VMs #1-#4; one runs the Nexus 1000V in the hypervisor with a NIC, the other uses a VIC; both connect through the Nexus 5500 to the LAN)

- Slides: 84