
Trust and Semantic attacks
Ponnurangam Kumaraguru (PK)
Usable Privacy and Security, Mar 17, 2008
CMU Usable Privacy and Security Laboratory
http://cups.cmu.edu/

Who am I?
• Ph.D. candidate in the Computation, Organizations, and Society program in the School of Computer Science
• Research interests: privacy, security, trust, human-computer interaction, and learning science
CMU Usable Privacy and Security Laboratory • http://www.cs.cmu.edu/~ponguru

Outline
• Trust
• Semantic attacks - Phishing
• User education
• Learning science
• Evaluating embedded training
• Ongoing work
• Conclusion

What is trust?
• No single definition
• Depends on the situation and the problem
• Many models developed
• Very few models evaluated

Trust in literature
• Economics (how trust affects transactions)
  - Reputation
• Marketing (how to build trust)
  - Persuasion
• HCI (what affects trust)
  - Design
• Psychology (positive theory)
  - Intimacy

Trust Models
• Positive antecedents
  - Benevolence
  - Comprehensive information
  - Credibility
  - Familiarity
  - Good feedback
  - Propensity
  - Reliability
  - Usability
  - Willingness to transact
  - …
• Negative antecedents
  - Risk
  - Transaction cost
  - Uncertainty
  - …

How do users make decisions?
• Interview design, 25 participants (11 experts and 14 non-experts)
• Measured the strategies and decision processes of users in online situations
• Results
  - Non-experts wanted advice to help them make better trust decisions
  - Non-experts used significantly fewer meaningful signals than experts
P. Kumaraguru, A. Acquisti, and L. Cranor. Trust modeling for online transactions: A phishing scenario. In Privacy Security Trust, Oct 30 - Nov 1, 2006, Ontario, Canada.

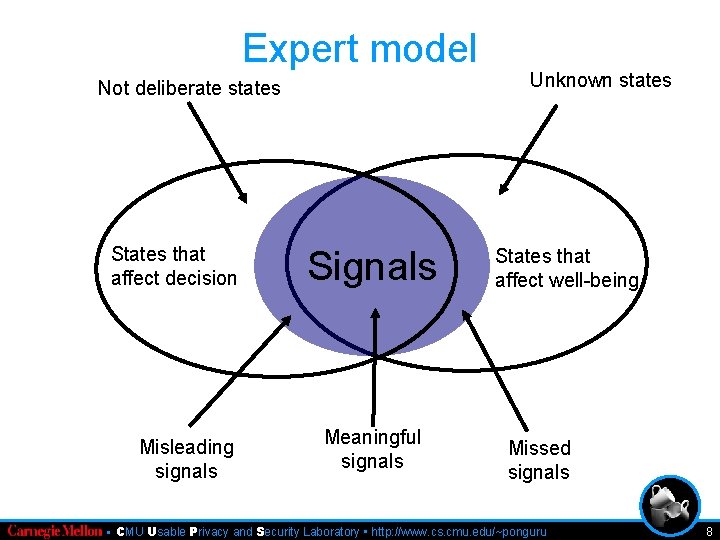

Expert model (diagram labels: Signals, Meaningful signals, Misleading signals, Missed signals, States that affect decision, States that affect well-being, Unknown states, Not deliberate states)

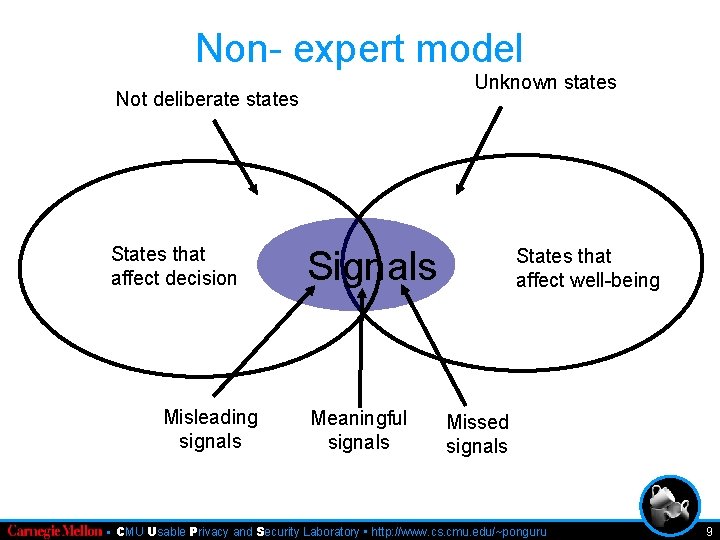

Non-expert model (diagram labels: Signals, Meaningful signals, Misleading signals, Missed signals, States that affect decision, States that affect well-being, Unknown states, Not deliberate states)


Security Attacks: Waves
• Physical: attack the computers, wires, and electronics
  - E.g. physically cutting the network cable
• Syntactic: attack the operating logic of the computers and networks
  - E.g. buffer overflows, DDoS
• Semantic: attack the user, not the computers
  - E.g. phishing
http://www.schneier.com/essay-035.html

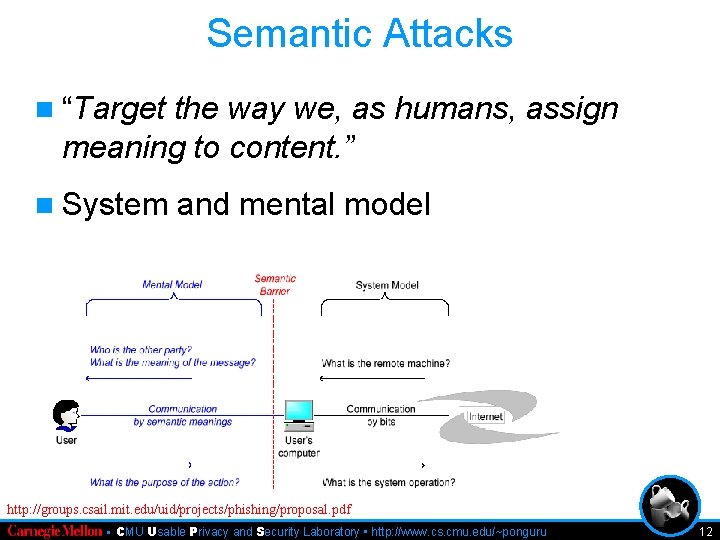

Semantic Attacks
• “Target the way we, as humans, assign meaning to content.”
• System and mental model
http://groups.csail.mit.edu/uid/projects/phishing/proposal.pdf

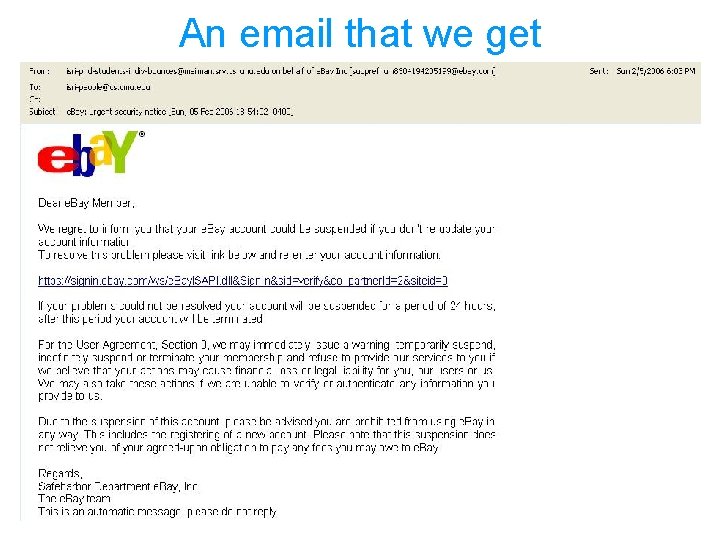

An email that we get

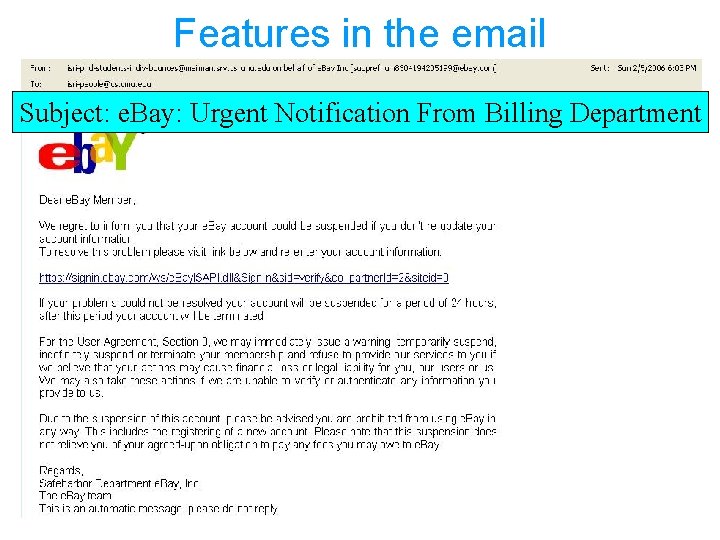

Features in the email
Subject: eBay: Urgent Notification From Billing Department

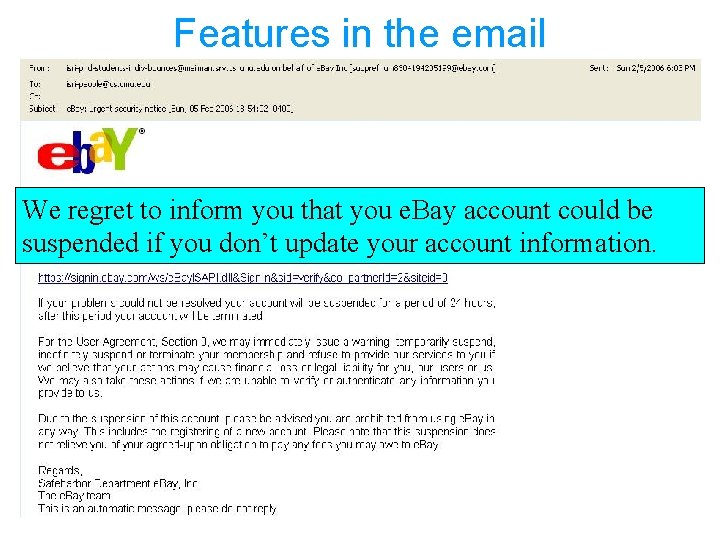

Features in the email
“We regret to inform you that you eBay account could be suspended if you don’t update your account information.”

Features in the email
Link shown: https://signin.ebay.com/ws/eBayISAPI.dll?SignIn&sid=verify&co_partnerid=2&sidteid=0

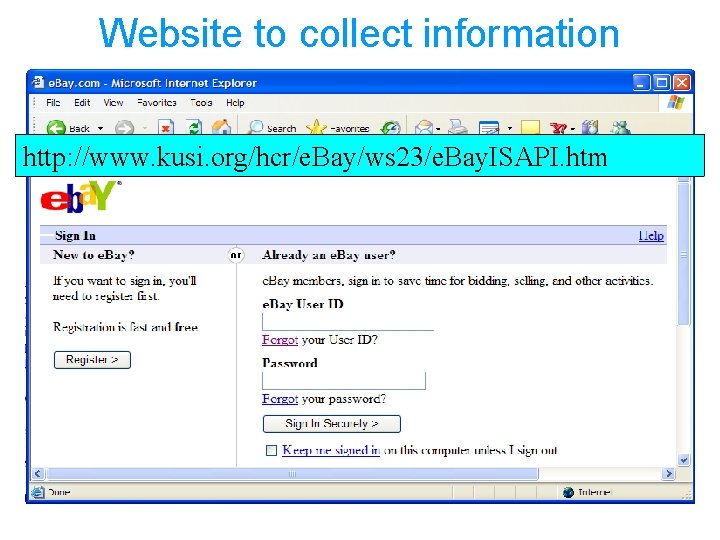

Website to collect information
http://www.kusi.org/hcr/eBay/ws23/eBayISAPI.htm
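The two slides above contrast the eBay address displayed in the email with the unrelated site that actually collects the information. As a rough illustration of that mismatch, here is a minimal Python sketch that compares the host in the visible link text with the host of the real target. The function names are hypothetical and this is not one of the tools described in this talk; it only shows the idea, and a real filter would need many more checks.

```python
from urllib.parse import urlsplit


def hostname(url: str) -> str:
    """Return the lower-cased host of a URL, or '' if none can be parsed."""
    return (urlsplit(url.strip()).hostname or "").lower()


def link_is_deceptive(displayed_text: str, actual_href: str) -> bool:
    """Flag a link whose visible text names one host but whose target is another.

    Hypothetical helper for illustration only. Link text that is not a URL
    (for example "Click here") is not flagged.
    """
    shown = hostname(displayed_text)
    target = hostname(actual_href)
    return bool(shown) and shown != target


# Shortened forms of the addresses from the example email
shown_in_email = "https://signin.ebay.com/ws/eBayISAPI.dll"
real_target = "http://www.kusi.org/hcr/eBay/ws23/eBayISAPI.htm"
print(link_is_deceptive(shown_in_email, real_target))
```

A check like this only catches one cue; the talk's point is that users rarely inspect the real target at all.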

What is phishing?
Phishing is “a broadly launched social engineering attack in which an electronic identity is misrepresented in an attempt to trick individuals into revealing personal credentials that can be used fraudulently against them.”
Financial Services Technology Consortium. Understanding and countering the phishing threat: A financial service industry perspective. 2005.

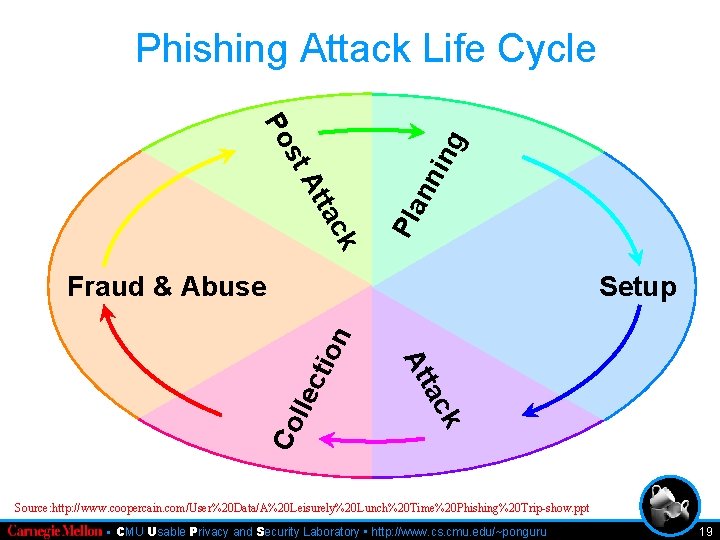

Phishing Attack Life Cycle (diagram stages: Planning, Setup, Attack, Collection, Fraud & Abuse, Post Attack)
Source: http://www.coopercain.com/User%20Data/A%20Leisurely%20Lunch%20Time%20Phishing%20Trip-show.ppt

A few statistics on phishing
• 73 million US adults received more than 50 phishing emails each in the year 2005
• Gartner in 2006 found that 30% of users changed their online banking behavior because of attacks like phishing
• Gartner in 2006 predicted a $2.8 billion loss due to phishing in that year

Why is phishing a hard problem?
• Semantic attacks take advantage of the way humans interact with computers
• Phishing is one type of semantic attack
• Phishers make use of the trust that users have in legitimate organizations

Three strategies for usable privacy and security
• Invisible strategy
  - Regulatory solution
  - Detecting and deleting the emails
• User interface based
  - Toolbars
• Training users

Our Multi-Pronged Approach
• Human side
  - Interviews to understand decision-making
  - PhishGuru embedded training
  - Anti-Phishing Phil game
  - Understanding effectiveness of browser warnings
• Computer side
  - PILFER email anti-phishing filter
  - CANTINA web anti-phishing algorithm
Automate where possible, support where necessary


Why is user education hard?
• Security is a secondary task
• Users are not motivated to take time for education
• No effective method exists

To address the open questions
• Embedded training methodology
  - Make the training part of the primary task
  - Create motivation among users
• Learning science
  - Principles for designing training interventions

Approaches for training
• Posting articles
  - FTC, …
• Phishing IQ tests
  - MailFrontier, …
• Classroom training (Robila et al.)
• Sending security notices
http://www.ftc.gov/bcp/conline/pubs/alerts/phishingalrt.htm
http://www.sonicwall.com/phishing/
http://pages.ebay.com/education/spooftutorial/

Security notices
• How to spot an email
• How to report spoof email
• Five ways to protect yourself from identity theft


Why learning science?
• Research on how people gain knowledge and learn new skills
• ACT-R theory of cognition and learning
  - Declarative knowledge (knowing that)
  - Procedural knowledge (knowing how)
• Learning science principles

Learning science principles
• Learning-by-doing
  - More practice leads to better performance
• Story-based agent
  - Using agents in story-based content enhances user learning
• Immediate feedback
  - Feedback during the learning phase results in efficient learning
Clark, R. C., and Mayer, R. E. E-Learning and the science of instruction: proven guidelines for consumers and designers of multimedia learning. John Wiley & Sons, Inc., USA, 2002.

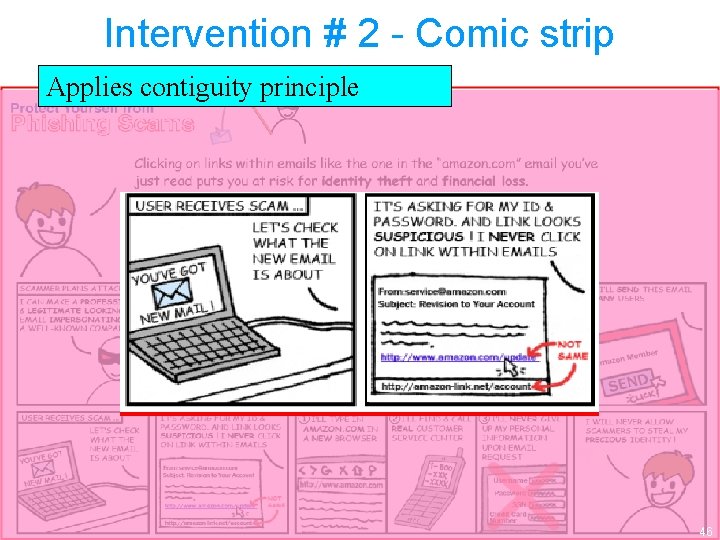

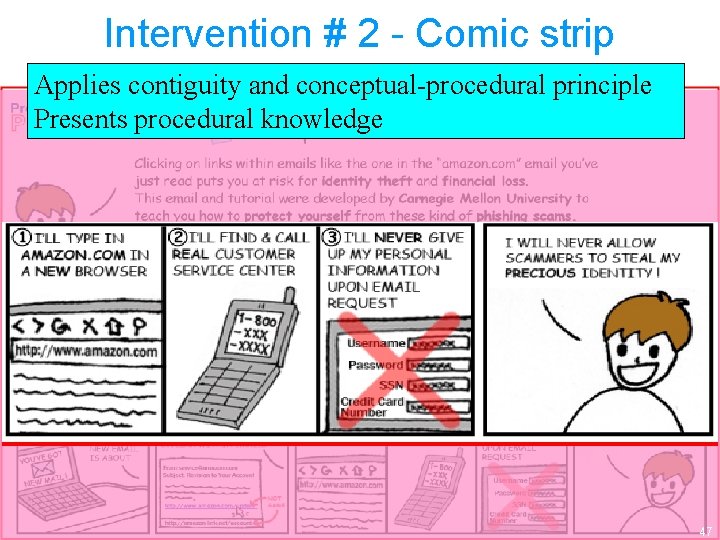

Learning science principles
• Conceptual-procedural
  - Presenting procedural materials in between conceptual materials helps learning
• Contiguity
  - Learning increases when words and pictures are presented contiguously rather than in isolation
• Personalization
  - Using a conversational style rather than a formal style enhances learning
(Clark and Mayer, 2002)


Design constraints
• People don’t proactively read the training materials on the web
• People can learn from web-based training materials, if only we could get people to read them! (Kumaraguru et al.)
P. Kumaraguru, S. Sheng, A. Acquisti, L. Cranor, and J. Hong. Teaching Johnny Not to Fall for Phish. Tech. rep., Carnegie Mellon University, 2007. http://www.cylab.cmu.edu/files/cmucylab07003.pdf

Embedded training
• We know people fall for phishing emails
• So make the training available through the phishing emails
• Training materials are presented when users actually fall for phishing emails
• Makes training part of the primary task
• Creates motivation among users
• Applies the learning-by-doing and immediate feedback principles
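The flow described on this slide can be sketched in a few lines of Python: the training message is shown only at the moment a user clicks a link in a simulated phishing email. All names here (`Email`, `is_training`, `on_link_click`) are hypothetical and chosen for illustration; this is not the PhishGuru implementation, only a sketch of the idea under the assumption that training emails are marked by the sender.

```python
from dataclasses import dataclass


@dataclass
class Email:
    subject: str
    # Assumption: True for a simulated phishing email sent by the trainer
    is_training: bool


def on_link_click(email: Email) -> str:
    """Decide what the user sees after clicking a link in an email."""
    if email.is_training:
        # The user fell for the simulated phish, so the training material
        # is shown immediately (learning-by-doing, immediate feedback).
        return "show_intervention"
    # Any other email behaves normally.
    return "open_link"


training_mail = Email("Revision to Your Amazon.com Information", is_training=True)
print(on_link_click(training_mail))
```

Because the intervention is tied to the click, users who never fall for the simulated email are never interrupted.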

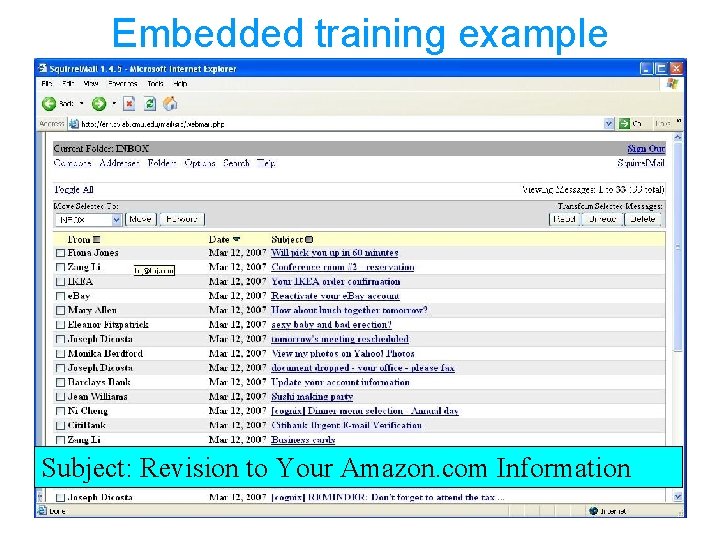

Embedded training example
Subject: Revision to Your Amazon.com Information

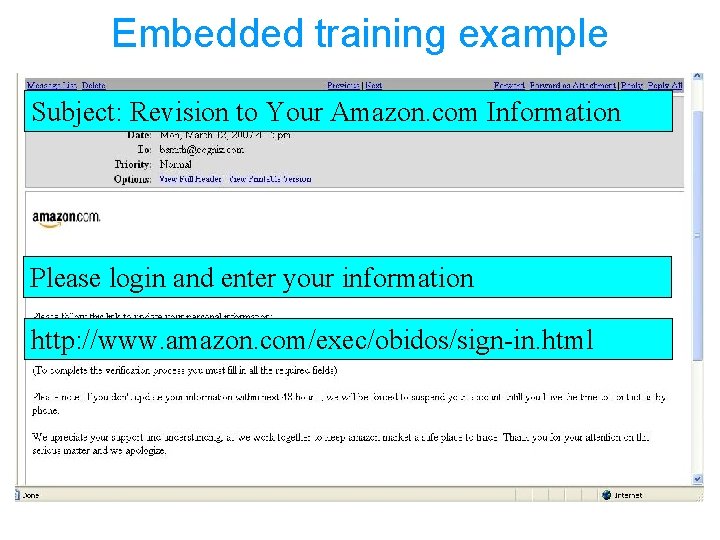

Embedded training example
Subject: Revision to Your Amazon.com Information
Please login and enter your information
http://www.amazon.com/exec/obidos/sign-in.html

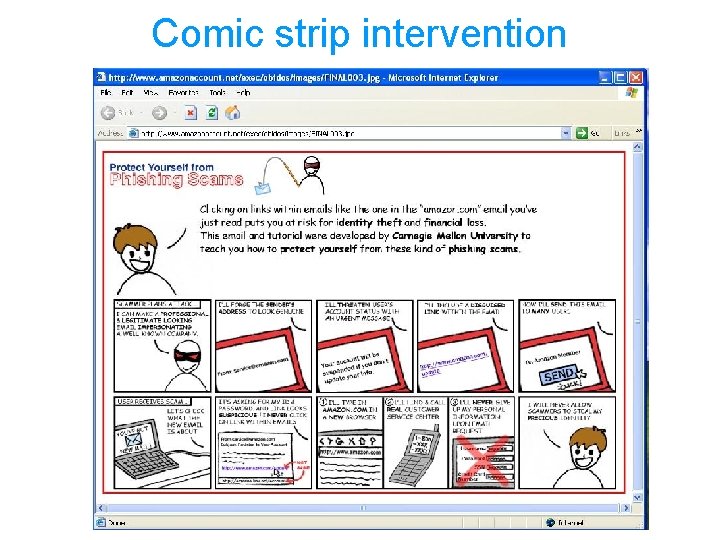

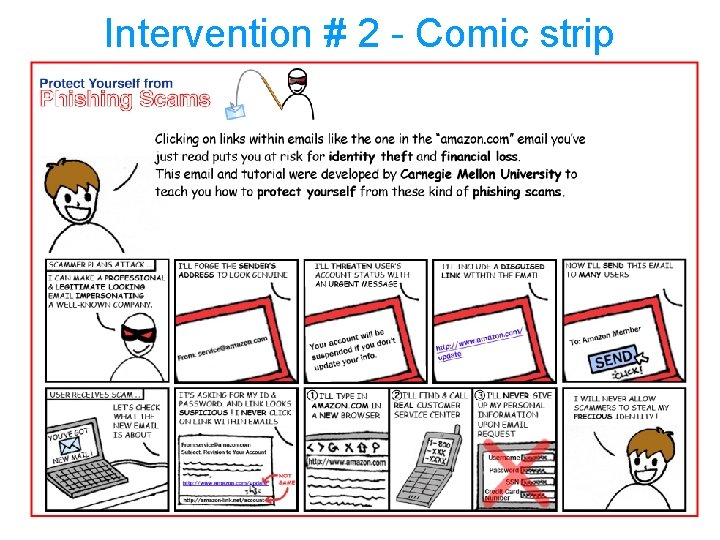

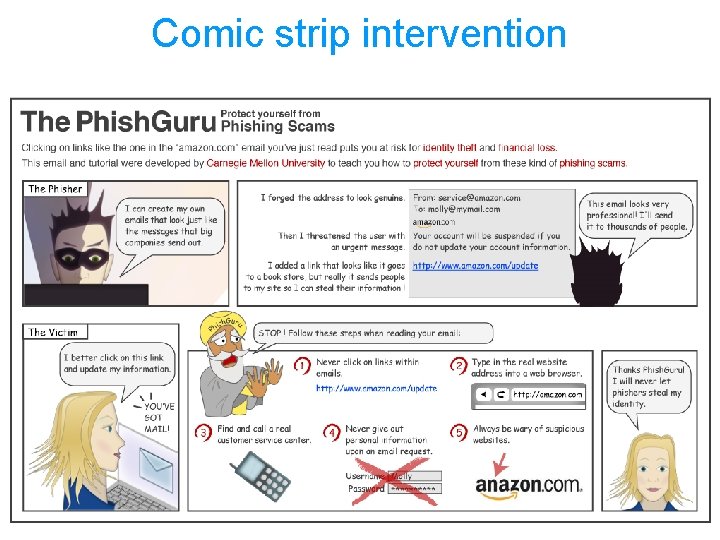

Comic strip intervention

Design rationale
• What to show in the intervention?
• When to show the intervention?
• Analyzed instructions from the most popular websites
• Paper and HTML prototypes, 7 users each
• Lessons learned
  - Two designs
  - Present the training materials when users click on the link

Study 1: Evaluation of interventions
• H1: Security notices are an ineffective medium for training users
• H2: Users make better decisions when trained by the embedded methodology compared to security notices

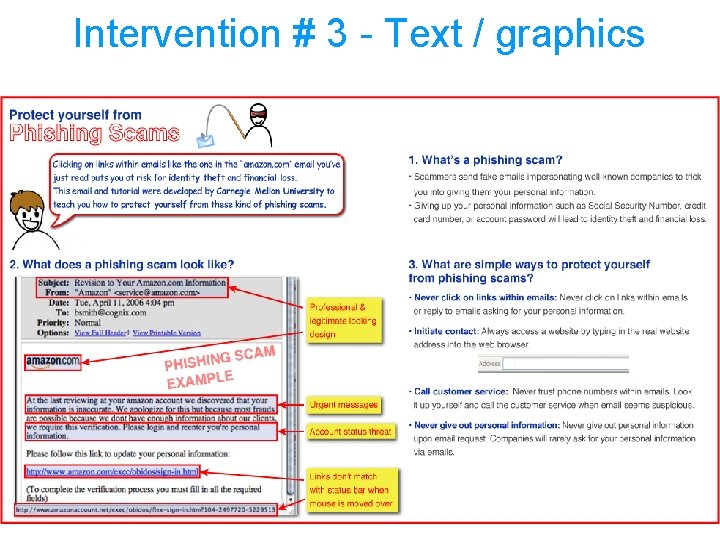

Study design
• Think-aloud study
• Role play as Bobby Smith, 19 emails including 2 interventions and 4 phishing emails
• Three conditions: security notices, text / graphics intervention, comic strip intervention
• 10 non-expert participants in each condition, 30 total
P. Kumaraguru, Y. Rhee, A. Acquisti, L. Cranor, J. Hong, and E. Nunge. Protecting People from Phishing: The Design and Evaluation of an Embedded Training Email System. CyLab Technical Report CMU-CyLab-06-017, 2006. http://www.cylab.cmu.edu/default.aspx?id=2253 [to be presented at CHI 2007]

Intervention #1 - Security notices
• How to spot an email
• How to report spoof email
• Five ways to protect yourself from identity theft

Intervention #2 - Comic strip

Intervention #2 - Comic strip
• Applies the personalization and story-based principles
• Presents declarative knowledge

Intervention #2 - Comic strip
• Applies the personalization principle

Intervention #2 - Comic strip
• Applies the contiguity principle

Intervention #2 - Comic strip
• Applies the contiguity and conceptual-procedural principles
• Presents procedural knowledge

Intervention #3 - Text / graphics

User involvement

(Chart legend: Legitimate, Phish, Training, Spam)

User study - results
• We treated clicking on a link as falling for phishing
• 93% of the users who clicked went ahead and gave personal information

User study - results

User study - results
• Significant difference between the security notices group and the comic strip group (p-value < 0.05)
• Significant difference between the comic strip group and the text / graphics group (p-value < 0.05)

Lessons learned
• H1: Security notices are an ineffective medium for training users (Supported)
• H2: Users make better decisions when trained by the embedded methodology compared to security notices (Supported)

Open questions
• Previous studies measured only knowledge gain
• Users have specific knowledge rather than generalized knowledge (Downs et al.)
• What about knowledge retention and transfer?

Knowledge retention and transfer
• Knowledge retention (KR)
  - The ability to apply the knowledge gained after a time period
• Knowledge transfer (KT)
  - The ability to transfer the knowledge gained from one situation to another situation

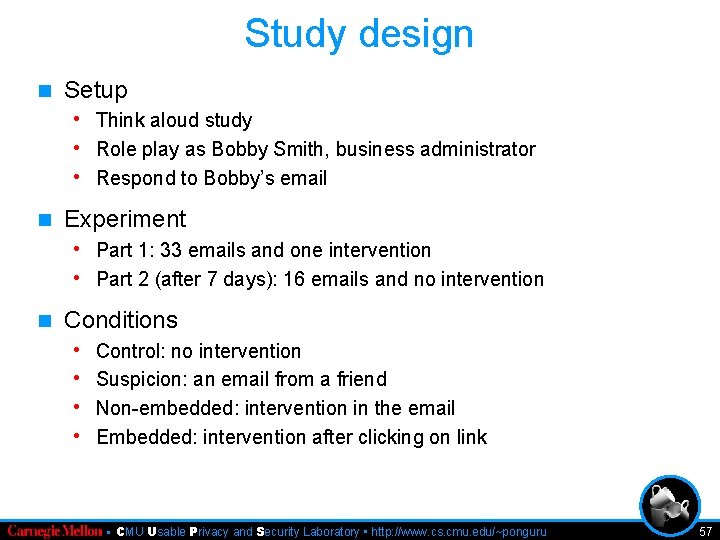

Study design
• Setup
  - Think-aloud study
  - Role play as Bobby Smith, business administrator
  - Respond to Bobby’s email
• Experiment
  - Part 1: 33 emails and one intervention
  - Part 2 (after 7 days): 16 emails and no intervention
• Conditions
  - Control: no intervention
  - Suspicion: an email from a friend
  - Non-embedded: intervention in the email
  - Embedded: intervention after clicking on link

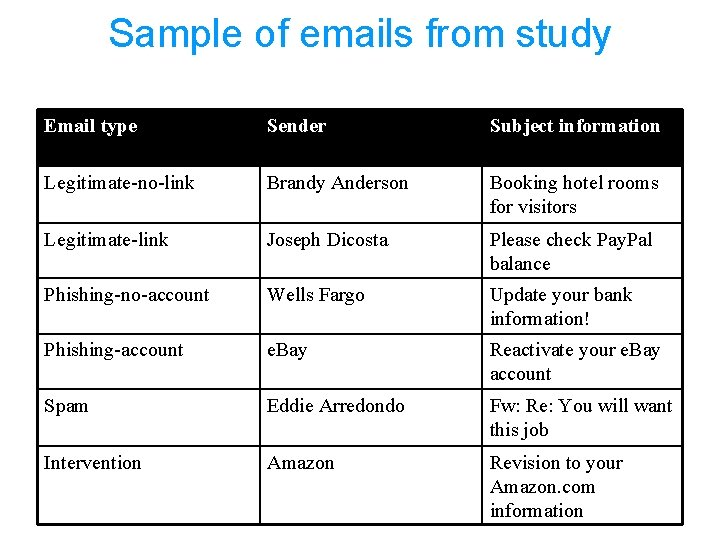

Sample of emails from study

| Email type | Sender | Subject information |
| --- | --- | --- |
| Legitimate-no-link | Brandy Anderson | Booking hotel rooms for visitors |
| Legitimate-link | Joseph Dicosta | Please check PayPal balance |
| Phishing-no-account | Wells Fargo | Update your bank information! |
| Phishing-account | eBay | Reactivate your eBay account |
| Spam | Eddie Arredondo | Fw: Re: You will want this job |
| Intervention | Amazon | Revision to your Amazon.com information |

Comic strip intervention

Hypotheses
• H1: Participants in the embedded condition learn more effectively than participants in the non-embedded condition, suspicion condition, and the control condition
• H2: Participants in the embedded condition retain more knowledge about how to avoid phishing attacks than participants in the non-embedded condition, suspicion condition, and the control condition

Hypotheses
• H3: Participants in the embedded condition transfer more knowledge about how to avoid phishing attacks than participants in the non-embedded condition, suspicion condition, and the control condition

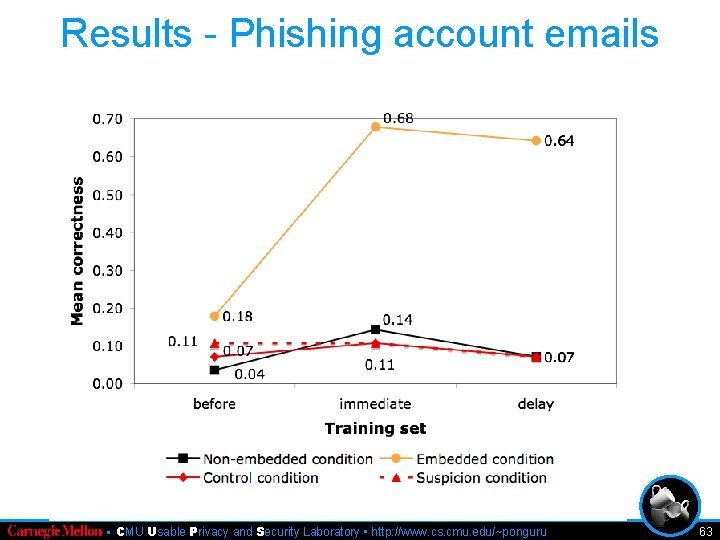

Study results
• We treated clicking on a link as falling for phishing
• 89% of the users who clicked went ahead and gave personal information

Results - Phishing account emails

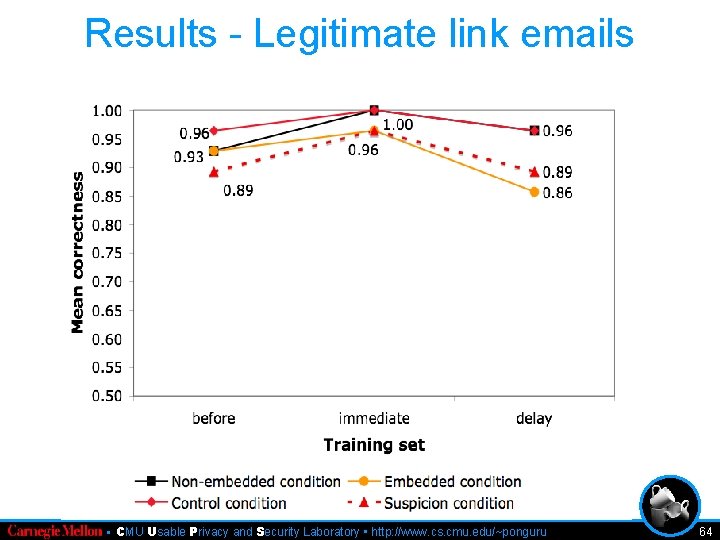

Results - Legitimate link emails

Measuring retention
• Training phish on Amazon.com account revision
• Testing a week later on Citibank account revision phish
• Significant difference between embedded and other groups (p < 0.01)
• “I remember reading last time that thing [training material] said not click and give personal information.”

Measuring transfer
• Training phish on Amazon.com account revision
• Testing a week later on eBay account reactivation phish
• Significant difference between embedded and other groups (p < 0.01)
• “PhishGuru said not to click on links and give personal information, so will not do it, I will delete this email.”

A few observations
• “I was more motivated to read the training materials since it was presented after me falling for the attack.”
• “Thank you PhishGuru, I will remember that [the 5 instructions given in the training material].”
• “This [image in the email] looks like some spam.”


Ongoing work
• Test the system in the real world

Conclusion
Educating users about security can be a reality rather than just a myth

Collect homework

Acknowledgements
• Members of the Supporting Trust Decision research group
• Members of the CUPS lab


CMU Usable Privacy and Security Laboratory
http://cups.cmu.edu/

Learning-by-doing principle
• Production rules are acquired and strengthened through practice
• More practice leads to better performance
• Story-centered curriculum
• Cognitive tutors

Immediate feedback principle
• Feedback during the knowledge acquisition phase results in efficient learning
• Corrects behavior
• Avoids floundering
• LISP tutors
• Feedback can be “yes” or “no” or detailed

Conceptual-Procedural principle
• A concept is a mental representation or prototype of objects or ideas
• A procedure is a series of clearly defined steps
• Presenting procedural materials in between conceptual materials helps learning
• Studies
  - Mathematics

Contiguity principle
• Learning increases when words and pictures are presented contiguously rather than isolated from one another
• Human learning process: creating meaningful relations between pictures and words
• Studies
  - Vehicle braking system
  - Geometry cognitive tutor

Personalization principle
• Using a conversational style rather than a formal style enhances learning
• Use “I,” “we,” “my,” “you,” and “your” in the instructional materials
• Studies
  - Process of lightning formation
  - Mathematics

Story-based agent principle
• Characters who help guide users through the learning process
• Using agents in story-based content enhances user learning
• Stories simulate cognitive processes
• Experiments - Herman
