Transport Triggered Architectures used for Embedded Systems

International Symposium on New Trends in Computer Architecture
Gent, Belgium, December 16, 1999

Henk Corporaal
EE department, Delft Univ. of Technology
h.corporaal@et.tudelft.nl
http://cs.et.tudelft.nl

Topics
- MOVE project goals
- Architecture spectrum of solutions
- From VLIW to TTA
- Code generation for TTAs
- Mapping applications to processors
- Achievements
- TTA related research

MOVE project goals
- Remove bottlenecks of current ILP processors
- Tools for quick processor and system design; offer expertise in a package
- Application driven design process
- Exploit ILP to its limits (but not further!)
- Replace hardware complexity with software complexity as far as possible
- Extreme functional flexibility
- Scalable solutions
- Orthogonal concept (combine with SIMD, MIMD, FPGA function units, ...)

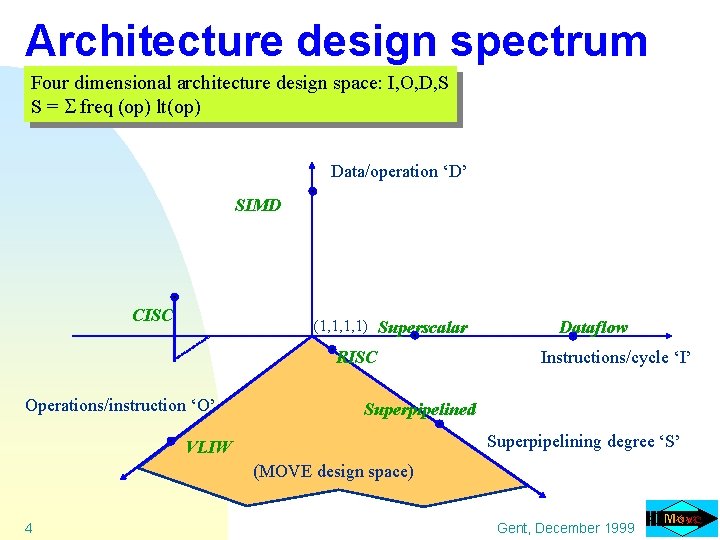

Architecture design spectrum
Four dimensional architecture design space: I, O, D, S
- Instructions/cycle ‘I’
- Operations/instruction ‘O’
- Data/operation ‘D’
- Superpipelining degree ‘S’, with $S = \sum_{op} \mathrm{freq}(op) \cdot \mathrm{lt}(op)$
Figure labels: CISC, RISC, superscalar, superpipelined, dataflow, SIMD, VLIW, the point (1, 1, 1, 1), and the MOVE design space.
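The superpipelining degree can be read as a frequency-weighted operation latency. The sketch below assumes the formula is a sum over all operation types of freq(op) times lt(op); the summation sign does not survive in the slide text, and the operation mix and latencies are made-up illustration values.

```python
def superpipelining_degree(freq, latency):
    """Return S = sum over op of freq[op] * latency[op].

    freq:    fraction of executed operations of each type (must sum to 1)
    latency: latency of each operation type in cycles
    Both tables are hypothetical illustration data, not measurements.
    """
    if abs(sum(freq.values()) - 1.0) > 1e-9:
        raise ValueError("operation frequencies must sum to 1")
    return sum(freq[op] * latency[op] for op in freq)


# Hypothetical operation mix: 50% ALU, 30% load, 20% multiply.
freq = {"alu": 0.5, "load": 0.3, "mul": 0.2}
latency = {"alu": 1, "load": 2, "mul": 3}
S = superpipelining_degree(freq, latency)  # 0.5*1 + 0.3*2 + 0.2*3
print(f"S = {S:.2f}")
```

With this mix, longer-latency operations pull S above 1 even though most operations take a single cycle.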

Architecture design spectrum
Mpar is the amount of parallelism to be exploited by the compiler / application!

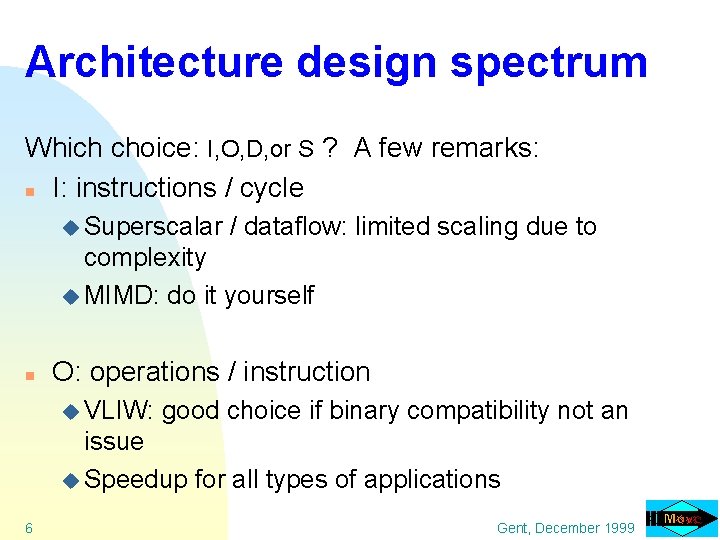

Architecture design spectrum
Which choice: I, O, D, or S? A few remarks:
- I: instructions / cycle
  - Superscalar / dataflow: limited scaling due to complexity
  - MIMD: do it yourself
- O: operations / instruction
  - VLIW: good choice if binary compatibility is not an issue
  - Speedup for all types of applications

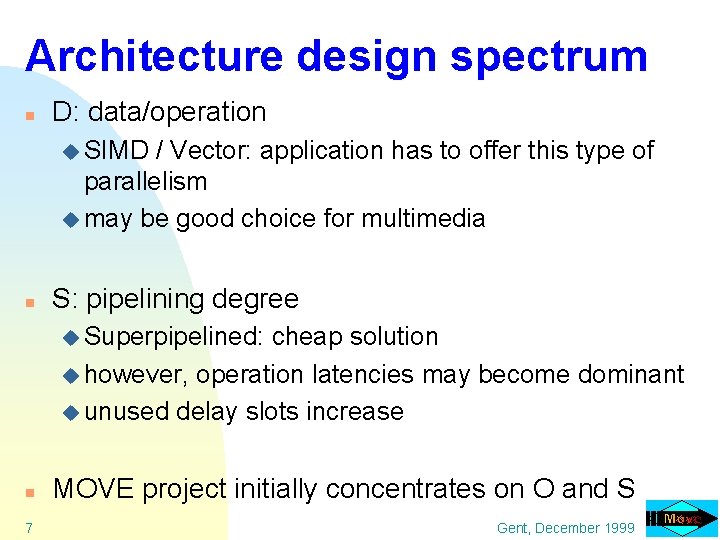

Architecture design spectrum
- D: data/operation
  - SIMD / Vector: application has to offer this type of parallelism
  - may be a good choice for multimedia
- S: pipelining degree
  - Superpipelined: cheap solution
  - however, operation latencies may become dominant
  - unused delay slots increase
- MOVE project initially concentrates on O and S

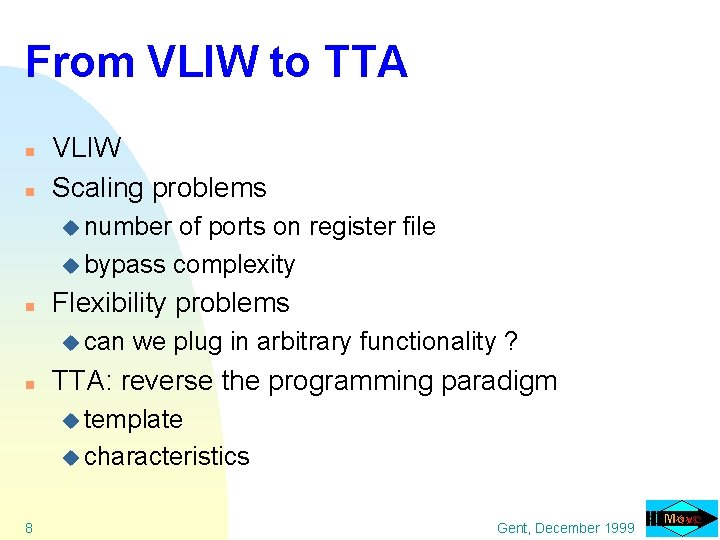

From VLIW to TTA
- VLIW
- Scaling problems
  - number of ports on register file
  - bypass complexity
- Flexibility problems
  - can we plug in arbitrary functionality?
- TTA: reverse the programming paradigm
  - template
  - characteristics

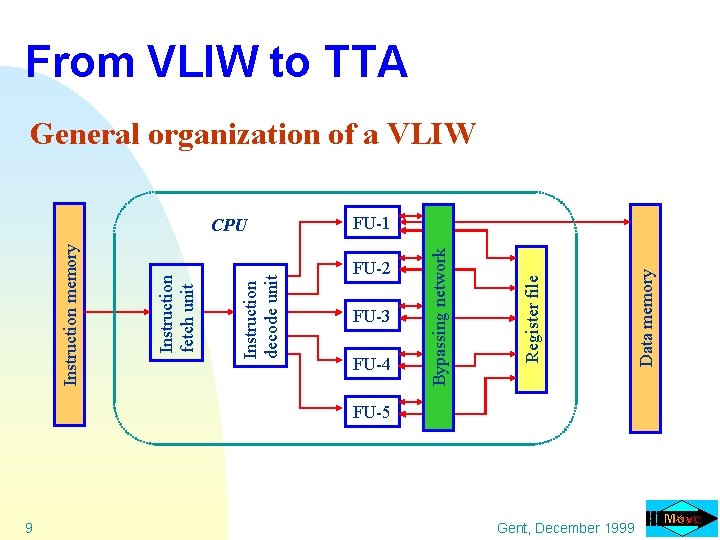

From VLIW to TTA
General organization of a VLIW
Figure labels: CPU, instruction memory, instruction fetch unit, instruction decode unit, FU-1 to FU-5, bypassing network, register file, data memory.

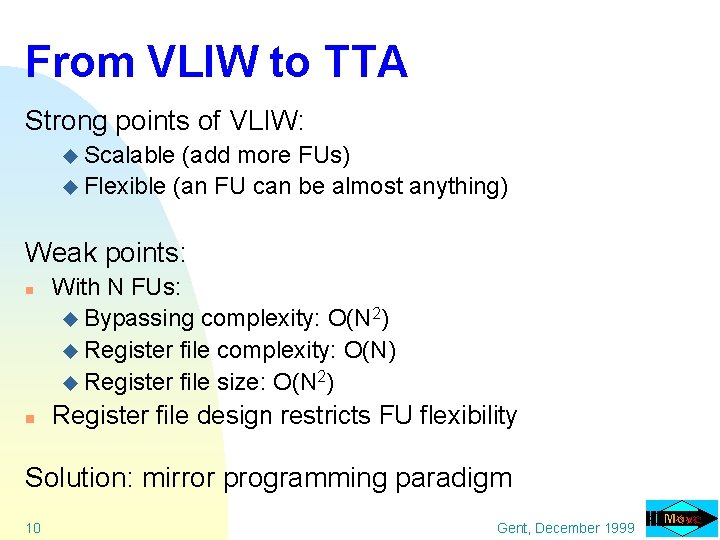

From VLIW to TTA
Strong points of VLIW:
- Scalable (add more FUs)
- Flexible (an FU can be almost anything)
Weak points:
- With N FUs:
  - Bypassing complexity: O(N^2)
  - Register file complexity: O(N)
  - Register file size: O(N^2)
- Register file design restricts FU flexibility
Solution: mirror programming paradigm
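To make the scaling argument concrete, the following sketch counts register-file ports and bypass paths as the number of FUs grows. It assumes two operand reads and one result write per FU and a full bypass network from every FU result to every FU operand input; these per-FU numbers and the function name are illustrative assumptions, not figures from the slides.

```python
def vliw_costs(n_fus, reads_per_fu=2, writes_per_fu=1):
    """Count register-file ports and bypass paths for a VLIW with n_fus FUs.

    Assumes every FU reads `reads_per_fu` operands and writes
    `writes_per_fu` results per cycle, and that a full bypass network
    connects every FU result to every FU operand input.
    """
    rf_ports = n_fus * (reads_per_fu + writes_per_fu)            # linear in N
    bypass_paths = (n_fus * writes_per_fu) * (n_fus * reads_per_fu)  # quadratic in N
    return rf_ports, bypass_paths


for n in (1, 2, 4, 8):
    ports, paths = vliw_costs(n)
    print(f"N={n}: {ports} RF ports, {paths} bypass paths")
```

Doubling N doubles the port count but quadruples the number of bypass paths, which is the growth the weak-points list refers to.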

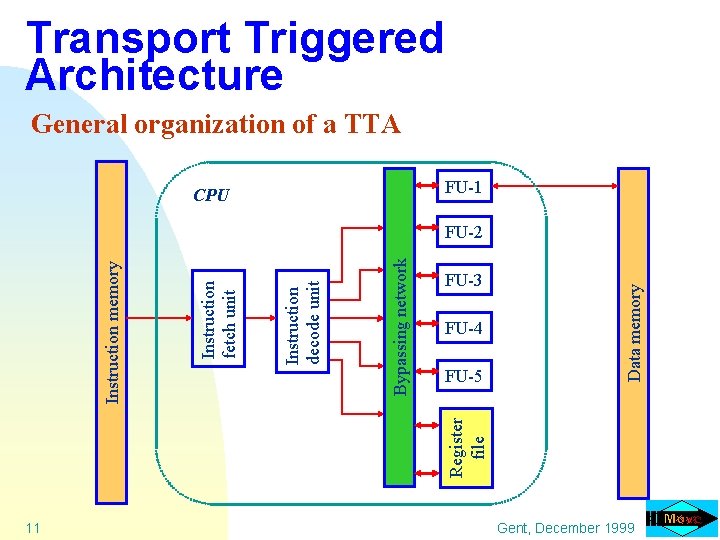

Transport Triggered Architecture
General organization of a TTA
Figure labels: CPU, instruction memory, instruction fetch unit, instruction decode unit, FU-1 to FU-5, bypassing network, register file, data memory.

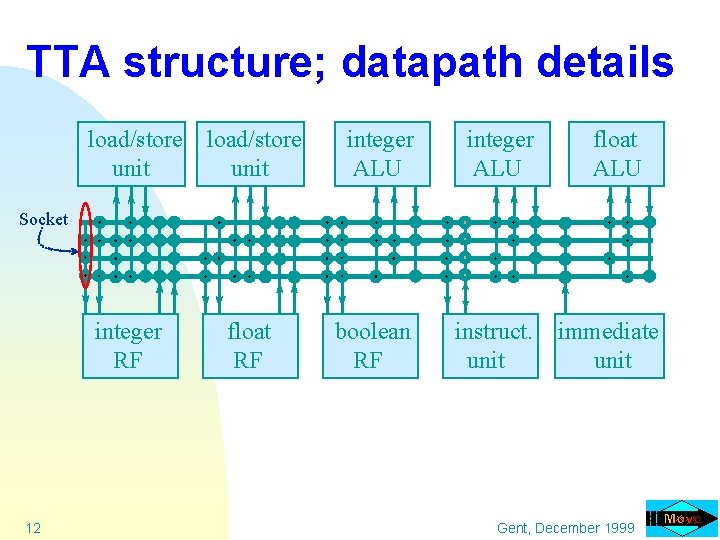

TTA structure; datapath details
Figure labels: load/store unit, integer ALU, float ALU, integer RF, float RF, boolean RF, instruction unit, immediate unit, socket.

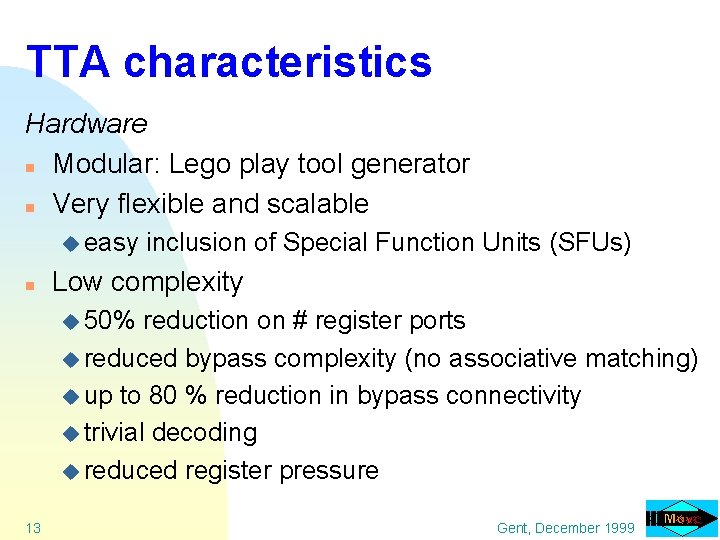

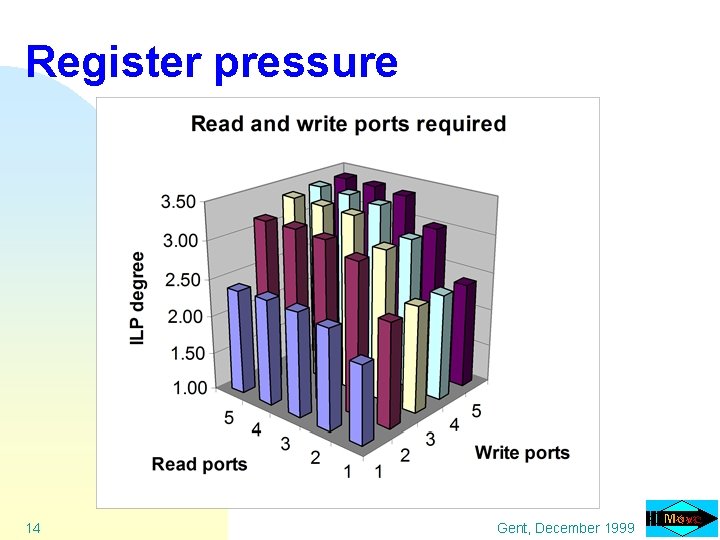

TTA characteristics: hardware
- Modular: Lego play tool generator
- Very flexible and scalable
  - easy inclusion of Special Function Units (SFUs)
- Low complexity
  - 50% reduction on # register ports
  - reduced bypass complexity (no associative matching)
  - up to 80% reduction in bypass connectivity
  - trivial decoding
  - reduced register pressure

Register pressure

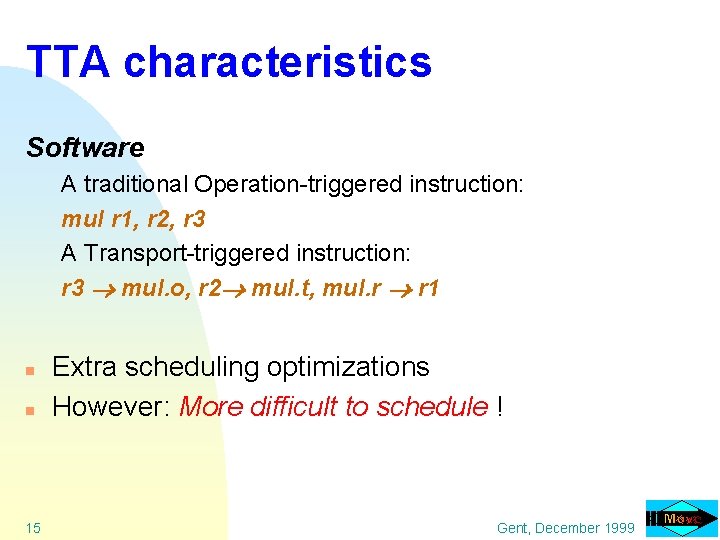

TTA characteristics: software
A traditional operation-triggered instruction:
    mul r1, r2, r3
A transport-triggered instruction:
    r3 -> mul.o, r2 -> mul.t, mul.r -> r1
- Extra scheduling optimizations
- However: more difficult to schedule!
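The rewrite from one operation into data transports can be sketched in a few lines. The function below is a hypothetical helper, not part of the MOVE tools; it only reproduces the mapping of the `mul` example, with `.o` as operand port, `.t` as trigger port and `.r` as result port.

```python
def to_transport_triggered(op, dest, src_trigger, src_operand):
    """Rewrite `op dest, src_trigger, src_operand` as three data moves.

    Port naming follows the slide: `.o` is the operand register, `.t` the
    trigger register (the move into it starts the operation) and `.r`
    the result register of the function unit.
    """
    return [
        f"{src_operand} -> {op}.o",
        f"{src_trigger} -> {op}.t",
        f"{op}.r -> {dest}",
    ]


moves = to_transport_triggered("mul", "r1", "r2", "r3")
print(", ".join(moves))
```

Because each move is a separate schedulable item, the compiler can place the operand, trigger and result transports in different cycles, which is where the extra scheduling freedom (and difficulty) comes from.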

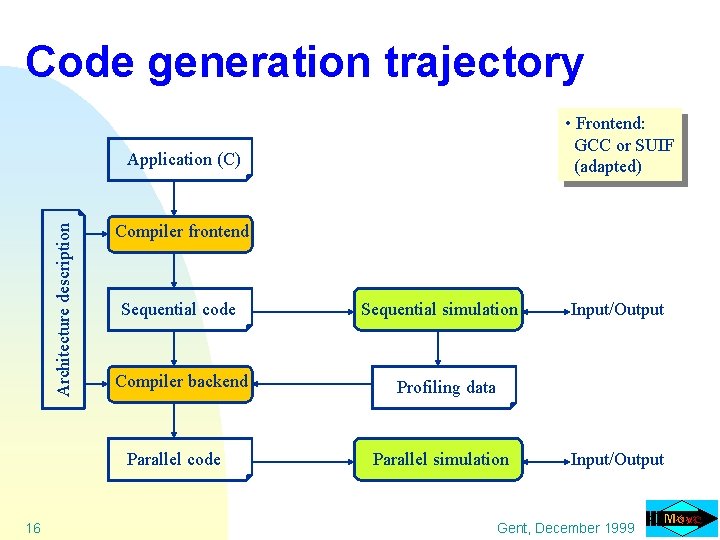

Code generation trajectory
- Frontend: GCC or SUIF (adapted)
Figure labels: application (C), architecture description, compiler frontend, sequential code, compiler backend, parallel code, sequential simulation (input/output, profiling data), parallel simulation (input/output).

TTA compiler characteristics
- Handles all ANSI C programs
- Region scheduling scope with speculative execution
- Using profiling
- Software pipelining
- Predicated execution (e.g. for stores)
- Multiple register files
- Integrated register allocation and scheduling
- Fully parametric

Code generation for TTAs
- TTA specific optimizations
  - common operand elimination
  - software bypassing
  - dead result move elimination
  - scheduling freedom of T, O and R
- Our scheduler (compiler backend) exploits these advantages

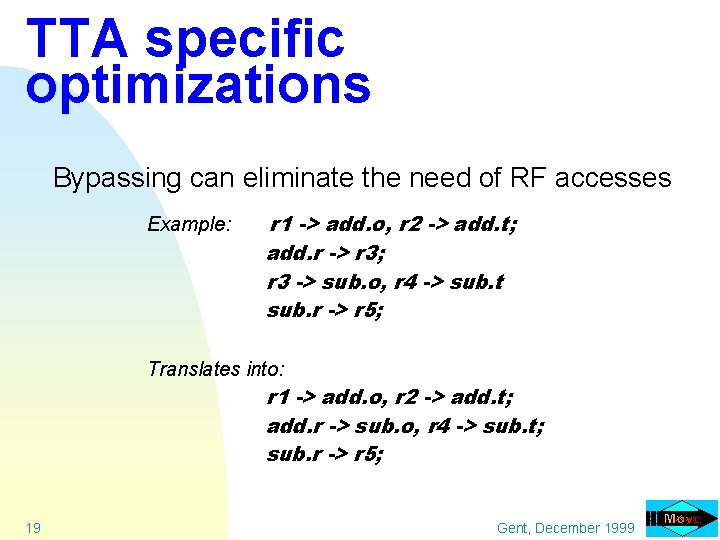

TTA specific optimizations
Bypassing can eliminate the need for RF accesses
Example:
    r1 -> add.o, r2 -> add.t;
    add.r -> r3;
    r3 -> sub.o, r4 -> sub.t;
    sub.r -> r5;
Translates into:
    r1 -> add.o, r2 -> add.t;
    add.r -> sub.o, r4 -> sub.t;
    sub.r -> r5;
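The bypassing example can be reproduced with a small rewriting pass. This is a simplified sketch under stated assumptions, not the MOVE scheduler: moves are plain `(source, destination)` pairs in program order, timing and bus constraints are ignored, and the function name and the `live_out` parameter are hypothetical.

```python
def bypass(moves, live_out=()):
    """Apply software bypassing and dead result move elimination.

    moves:    list of (source, destination) strings in program order
    live_out: registers whose value is still needed after this code
    A use of register R is rewritten to read the FU result port directly
    when R was last written from that port and the FU has not been
    retriggered in between. A result move whose register is no longer
    read afterwards, and is not live-out, is dropped.
    """
    moves = list(moves)
    result = []
    for i, (src, dst) in enumerate(moves):
        is_result_move = src.endswith(".r") and "." not in dst
        if not is_result_move:
            result.append((src, dst))
            continue
        fu = src[:-2]
        fu_valid = True          # FU result port still holds this value
        needs_register = False
        for j in range(i + 1, len(moves)):
            s, d = moves[j]
            if s == dst:
                if fu_valid:
                    moves[j] = (src, d)      # read the result port directly
                else:
                    needs_register = True    # value only survives in the RF
            if d == dst:                     # register redefined: stop
                break
            if d == fu + ".t":               # FU retriggered: result overwritten
                fu_valid = False
        else:
            needs_register = needs_register or dst in live_out
        if needs_register:
            result.append((src, dst))
    return result


code = [("r1", "add.o"), ("r2", "add.t"), ("add.r", "r3"),
        ("r3", "sub.o"), ("r4", "sub.t"), ("sub.r", "r5")]
for src, dst in bypass(code, live_out=("r5",)):
    print(f"{src} -> {dst}")
```

Passing `live_out=("r3", "r5")` instead keeps the `add.r -> r3` move while still bypassing the use, which shows that bypassing and dead result move elimination are separate decisions.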

Mapping applications to processors
We have described a
- Templated architecture
- Parametric compiler exploiting specifics of the template
Problem: How to tune a processor architecture for a certain application domain?

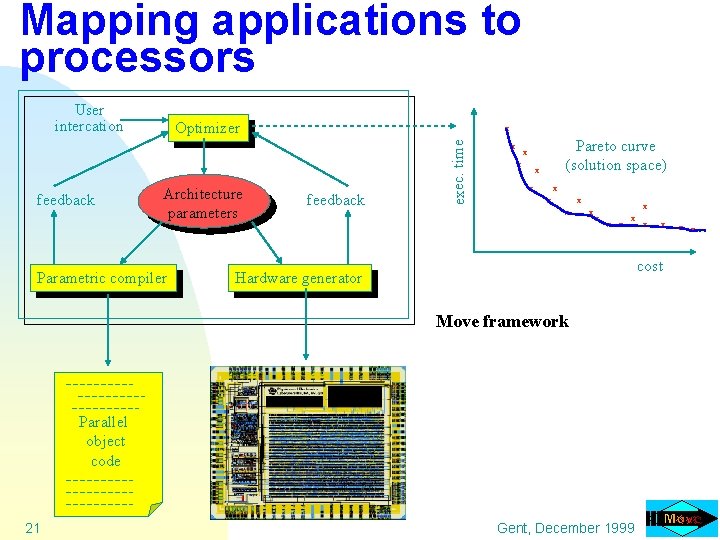

Mapping applications to processors
Figure labels (Move framework): user interaction, architecture parameters, optimizer, parametric compiler, hardware generator, feedback, parallel object code, chip, and a Pareto curve (solution space) plotting exec. time against cost.
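The Pareto curve in the exploration loop consists of the design points that no other point beats on both cost and execution time. A minimal sketch of that filter follows; the candidate numbers are invented for illustration and do not come from the MOVE framework.

```python
def pareto_front(points):
    """Return the (cost, exec_time) points not dominated by any other point.

    A point dominates another if it is no worse in both cost and execution
    time and strictly better in at least one of them.
    """
    front = []
    best_time = float("inf")
    for cost, time in sorted(set(points)):   # ascending cost, then time
        if time < best_time:                 # faster than every cheaper point
            front.append((cost, time))
            best_time = time
    return front


# Hypothetical (cost, execution time) results for six processor configurations.
candidates = [(10, 90), (12, 60), (15, 65), (20, 40), (25, 40), (30, 35)]
front = pareto_front(candidates)
print(front)
```

Configurations such as `(15, 65)` drop out because a cheaper and faster alternative exists; the designer then picks a point on the remaining curve.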

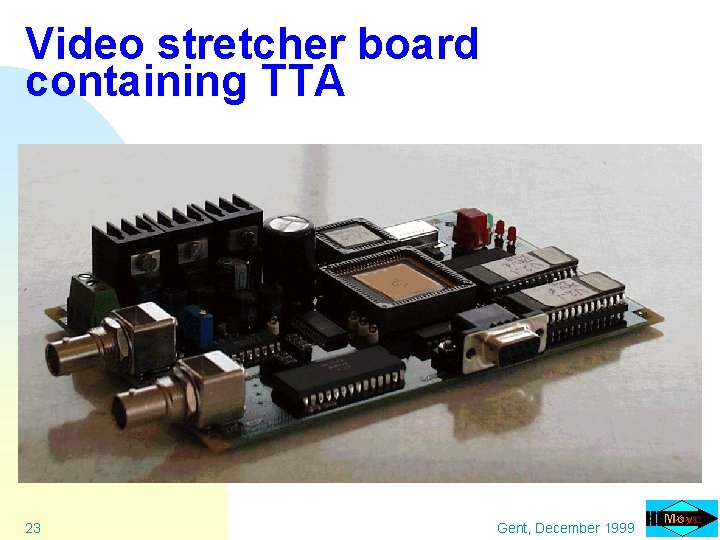

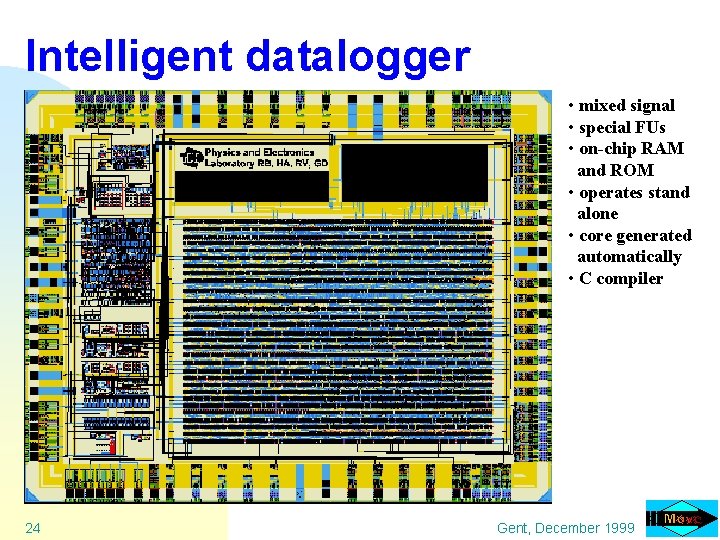

Achievements within the MOVE project
- Transport Triggered Architecture (TTA) template
  - lego playbox toolkit
- Design framework almost operational
  - you may add your own ‘strange’ function units (no restrictions)
- Several chips have been designed by TUD and industry; their applications include
  - Intelligent datalogger
  - Video image enhancement (video stretcher)
  - MPEG-2 decoder
  - Wireless communication

Video stretcher board containing TTA

Intelligent datalogger
- mixed signal
- special FUs
- on-chip RAM and ROM
- operates stand alone
- core generated automatically
- C compiler

TTA related research
- RoD: registers on demand scheduling
- SFUs: pattern detection
- CTT: code transformation tool
- Multiprocessor single chip embedded systems
- Global program optimizations
- Automatic fixed point code generation
- ReMove

RoD: Register on Demand scheduling

Phase ordering problem: scheduling vs. allocation
- Early register assignment
  - Introduces false dependencies
  - Bypassing information not available
- Late register assignment
  - Span of live ranges is likely to increase, which leads to more spill code
  - Spill/reload code is inserted after scheduling, which requires an extra scheduling step
- Integrated with the instruction scheduler: RoD
  - More complex

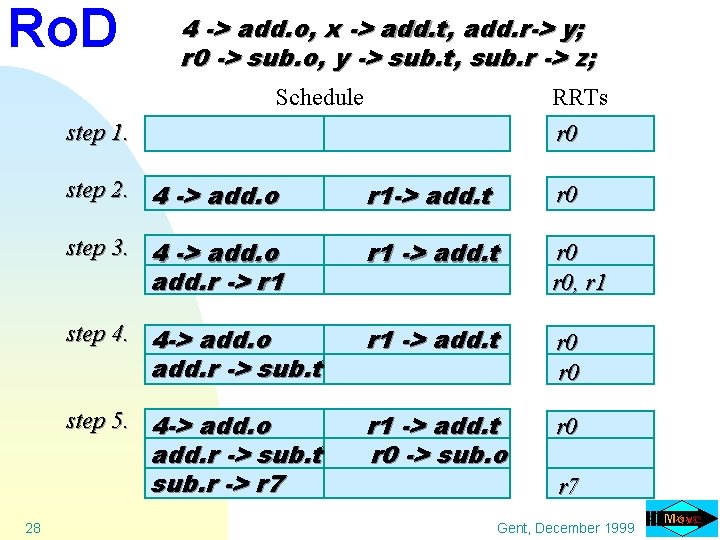

RoD
Code to schedule:
    4 -> add.o, x -> add.t, add.r -> y;
    r0 -> sub.o, y -> sub.t, sub.r -> z;
Figure: schedule and RRTs after each of steps 1 to 5. Moves shown in the schedule: 4 -> add.o, r1 -> add.t, add.r -> r1, r0 -> sub.o, add.r -> sub.t, sub.r -> r7. Registers shown in the RRTs: r0, r1, r7.

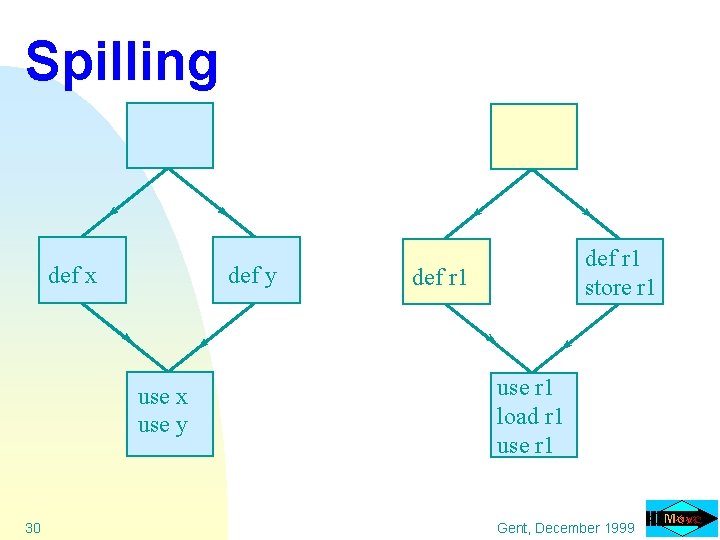

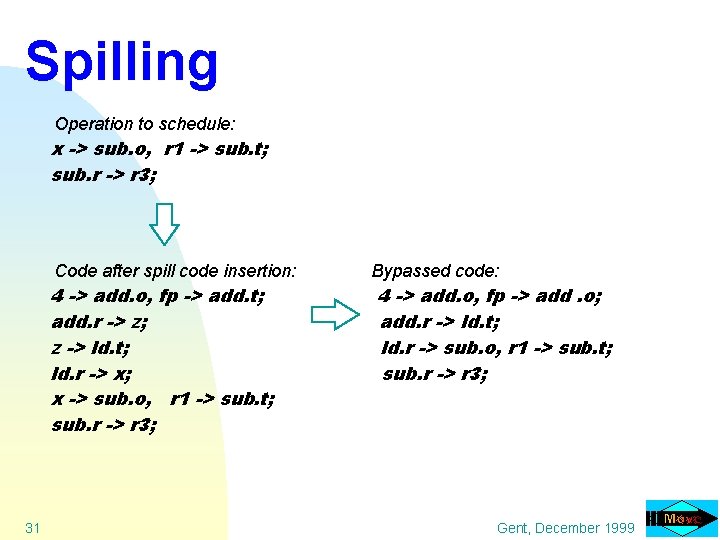

Spilling
- Occurs when the number of simultaneously live variables exceeds the number of registers
- Contents of variables are stored in memory
- The impact on performance of the inserted extra code must be as small as possible
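The spilling condition can be checked by computing the peak number of overlapping live ranges. The sketch below assumes live ranges are given as inclusive (definition cycle, last use cycle) pairs; the function names and the two-variable example are illustrative only.

```python
def max_live(live_ranges):
    """Return the peak number of simultaneously live variables.

    live_ranges maps a variable name to (def_cycle, last_use_cycle),
    both inclusive.
    """
    events = []
    for start, end in live_ranges.values():
        events.append((start, 1))       # variable becomes live
        events.append((end + 1, -1))    # variable is dead after its last use
    live = peak = 0
    for _, delta in sorted(events):     # at equal cycles, -1 sorts before +1
        live += delta
        peak = max(peak, live)
    return peak


def needs_spilling(live_ranges, num_registers):
    """True when more variables are live at once than there are registers."""
    return max_live(live_ranges) > num_registers


# Two overlapping variables: x defined at 0 and last used at 2, y at 1 and 3.
ranges = {"x": (0, 2), "y": (1, 3)}
print(max_live(ranges), needs_spilling(ranges, 1), needs_spilling(ranges, 2))
```

With a single register the two overlapping ranges force a spill; with two registers they fit.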

Spilling
Code using variables: def x; def y; use x; use y
Code using register r1 with spill code: def r1; store r1; def r1; use r1; load r1; use r1

Spilling
Operation to schedule:
    x -> sub.o, r1 -> sub.t; sub.r -> r3;
Code after spill code insertion:
    4 -> add.o, fp -> add.t; add.r -> z;
    z -> ld.t; ld.r -> x;
    x -> sub.o, r1 -> sub.t; sub.r -> r3;
Bypassed code:
    4 -> add.o, fp -> add.t; add.r -> ld.t;
    ld.r -> sub.o, r1 -> sub.t; sub.r -> r3;

![RoD compared with early assignment: speedup of RoD in % versus number of registers](http://slidetodoc.com/presentation_image/4b1997e6bdba68050766fa95005a7b88/image-32.jpg)

RoD compared with early assignment
Figure axes: speedup of RoD [%] versus number of registers.

![RoD compared with early assignment: cycle count increase in % when decreasing the number of registers](http://slidetodoc.com/presentation_image/4b1997e6bdba68050766fa95005a7b88/image-33.jpg)

RoD compared with early assignment
Impact of decreasing number of registers
Figure: cycle count increase [%] (axis 0 to 24) versus number of registers (axis 12 to 32), with one curve for early assignment and one for RoD.

Special Functionality: SFUs

Mapping applications to processors
SFUs may help!
- Which one do I need?
- Tradeoff between costs and performance
SFU granularity?
- Coarse grain: do it yourself (profiling helps)
  - Move framework supports this
- Fine grain: tooling needed

SFUs: fine grain patterns
- Why use fine grain SFUs:
  - code size reduction
  - register file #ports reduction
  - could be cheaper and/or faster
  - transport reduction
  - power reduction (avoid charging non-local wires)
- Which patterns need support?
  - Detection of recurring operation patterns needed

SFUs: Pattern identification
Method:
- Trace analysis
- Build DDG
- Create pattern library on demand
- Fuse partial matches into complete matches
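A much reduced version of this method can be sketched for two-node patterns only. The code below walks a trace, follows data dependence edges, and counts producer-consumer opcode pairs in a library that grows on demand. It does not implement the fusing of partial matches, and the trace format and function name are assumptions made for illustration.

```python
from collections import Counter


def count_two_node_patterns(trace):
    """Count producer->consumer operation pairs in a data dependence graph.

    trace: list of (result, opcode, operands) tuples in execution order.
    A DDG edge exists when an operand was produced by an earlier operation.
    The Counter acts as a pattern library that is filled on demand.
    """
    producer = {}          # value name -> opcode that last defined it
    patterns = Counter()
    for result, opcode, operands in trace:
        for operand in operands:
            if operand in producer:
                patterns[(producer[operand], opcode)] += 1
        producer[result] = opcode
    return patterns


# Hypothetical trace: two multiply-add chains followed by a shift.
trace = [
    ("t1", "mul", ("a", "b")),
    ("t2", "add", ("t1", "c")),
    ("t3", "mul", ("d", "e")),
    ("t4", "add", ("t3", "t2")),
    ("t5", "shl", ("t4", "k")),
]
patterns = count_two_node_patterns(trace)
print(patterns.most_common())
```

Here the `mul` followed by `add` pair occurs most often, which would make a multiply-add unit the first SFU candidate for this trace.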

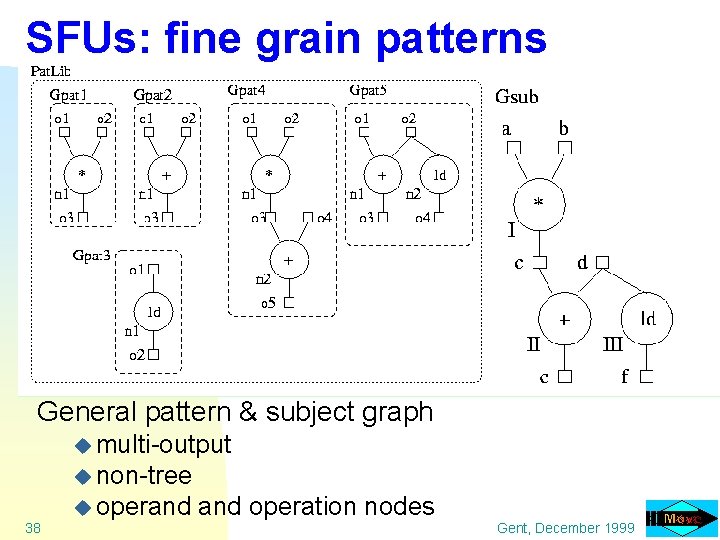

SFUs: fine grain patterns
General pattern & subject graph
- multi-output
- non-tree
- operand and operation nodes

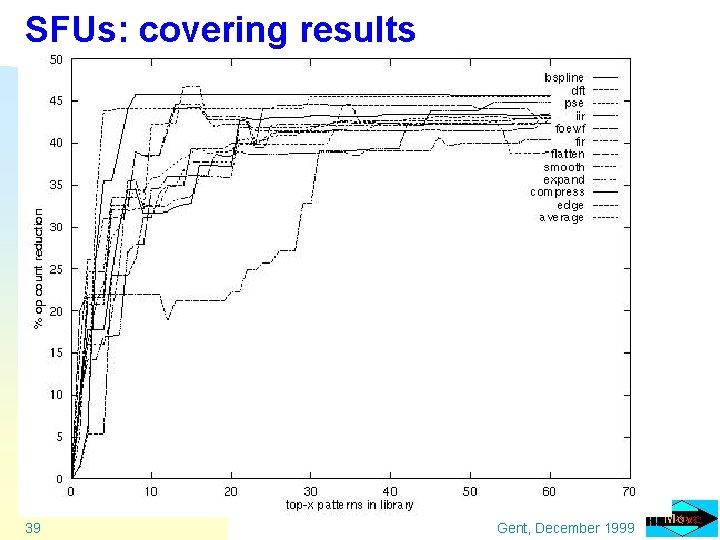

SFUs: covering results

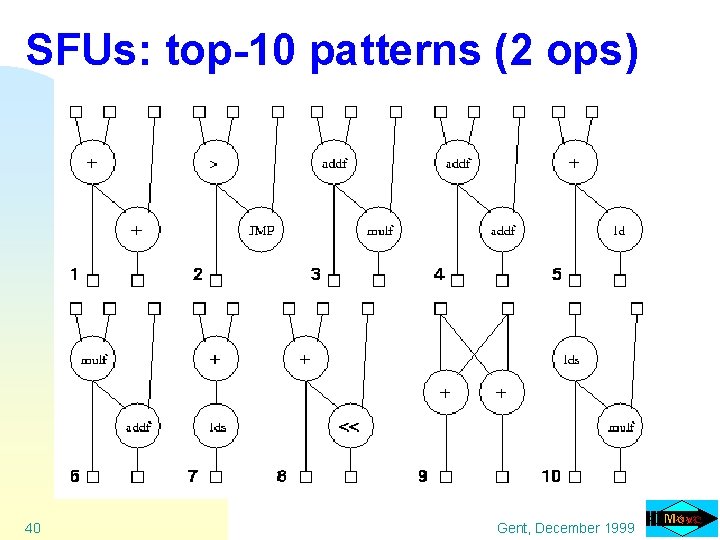

SFUs: top-10 patterns (2 ops)

SFUs: conclusions
- Most patterns are multi-output and not tree-like
- Patterns 1, 4, 6 and 8 have implementation advantages
- 20 additional 2-node patterns give 40% reduction (in operation count)
- Group operations into classes for even better results
Now: scheduling for these patterns? How?

Source-to-source transformations

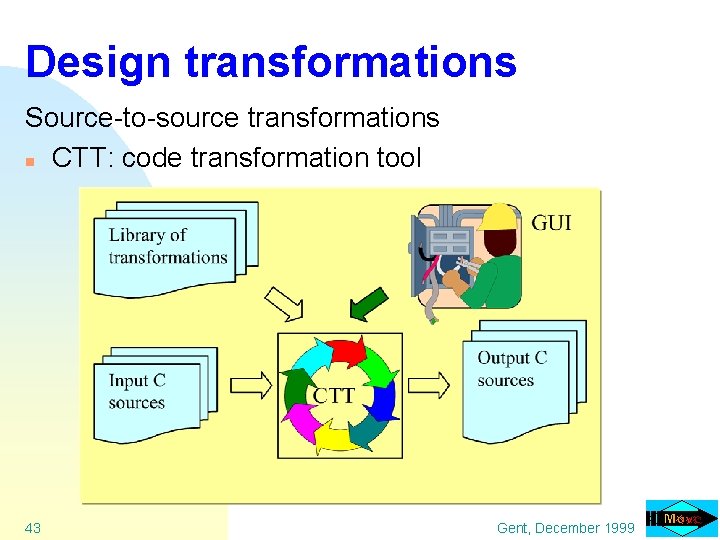

Design transformations
Source-to-source transformations
- CTT: code transformation tool

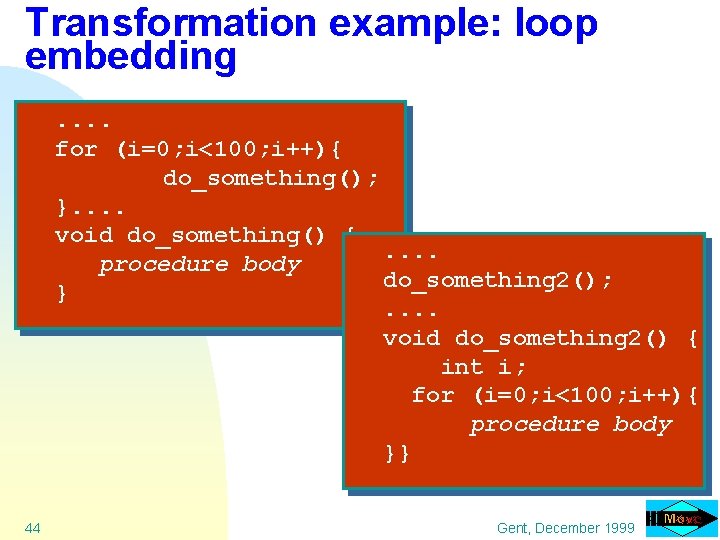

Transformation example: loop embedding
Before:
    ...
    for (i=0; i<100; i++){
        do_something();
    }
    ...
    void do_something() {
        procedure body
    }
After:
    ...
    do_something2();
    ...
    void do_something2() {
        int i;
        for (i=0; i<100; i++){
            procedure body
        }
    }
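The effect of loop embedding can be checked with a small experiment: the result stays the same while the number of procedure calls drops from 100 to 1. The sketch is written in Python rather than C, and the accumulator argument, the `body` function and the call counters are additions for the sake of a measurable example; the slide's procedures take no arguments.

```python
calls = {"do_something": 0, "do_something2": 0}


def body(acc, i):
    """Stand-in for the procedure body (hypothetical work)."""
    return acc + i


def do_something(acc, i):
    calls["do_something"] += 1
    return body(acc, i)


def original():
    """Loop in the caller: one call per iteration."""
    acc = 0
    for i in range(100):
        acc = do_something(acc, i)
    return acc


def do_something2(acc):
    """Loop embedded in the callee: one call in total."""
    calls["do_something2"] += 1
    for i in range(100):
        acc = body(acc, i)
    return acc


def embedded():
    return do_something2(0)


before, after = original(), embedded()
print(before, after, calls)
```

Moving the loop into the callee also exposes the whole loop body to the scheduler in one procedure, which is useful for an ILP compiler.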

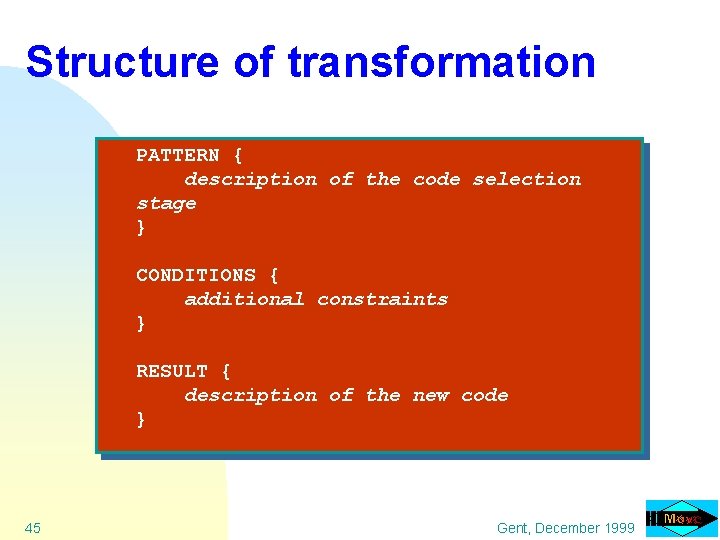

Structure of transformation
    PATTERN { description of the code selection stage }
    CONDITIONS { additional constraints }
    RESULT { description of the new code }

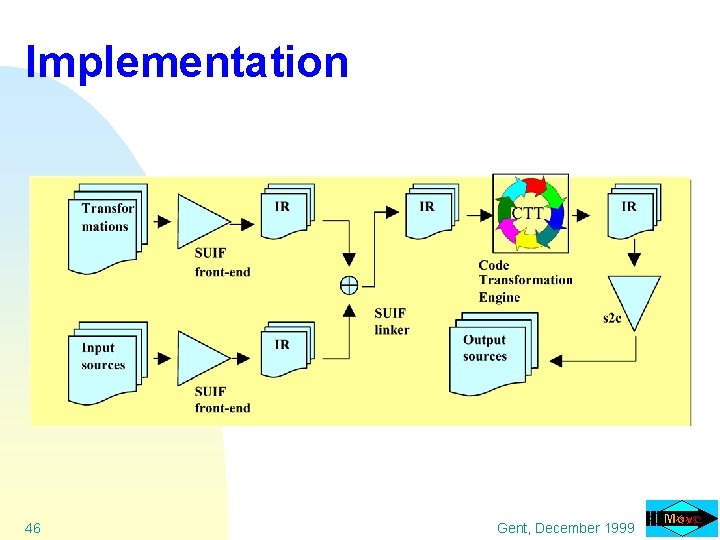

Implementation

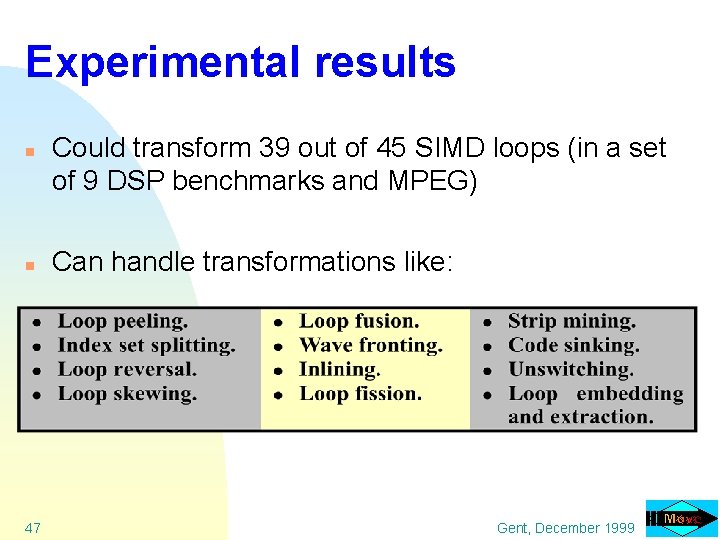

Experimental results
- Could transform 39 out of 45 SIMD loops (in a set of 9 DSP benchmarks and MPEG)
- Can handle transformations like:

Partitioning your program for multiprocessor single chip solutions

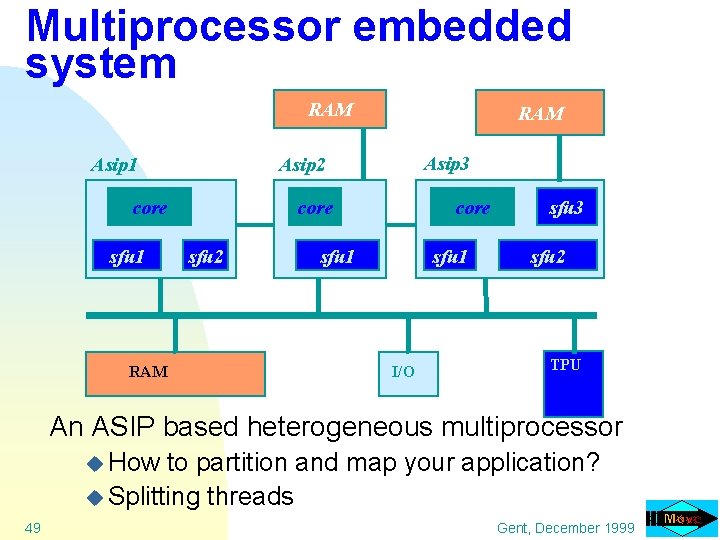

Multiprocessor embedded system
Figure labels: Asip 1, Asip 2, Asip 3, RAM, core, sfu1, sfu2, sfu3, I/O, TPU.
An ASIP based heterogeneous multiprocessor
- How to partition and map your application?
- Splitting threads

Design transformations
Why split threads?
- Combine fine (ILP) and coarse grain parallelism
- Avoid ILP bottleneck
- Multiprocessor solution may be cheaper
  - More efficient resource use
- Wire delay problem: clustering needed!

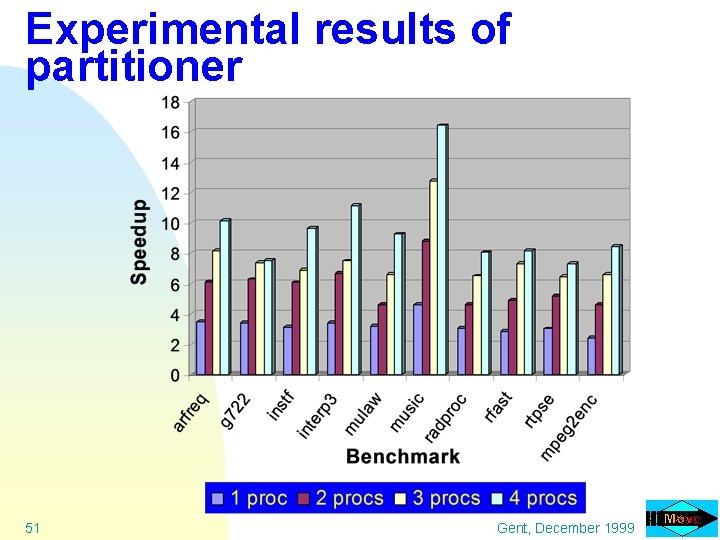

Experimental results of partitioner

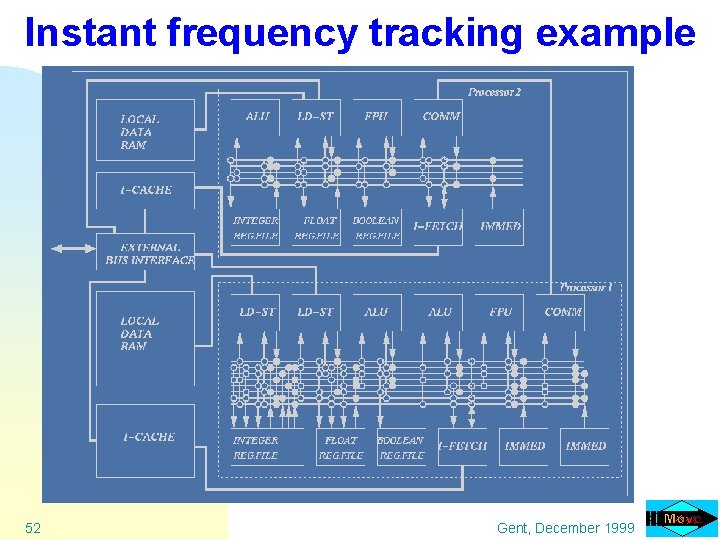

Instant frequency tracking example

Global program optimizations

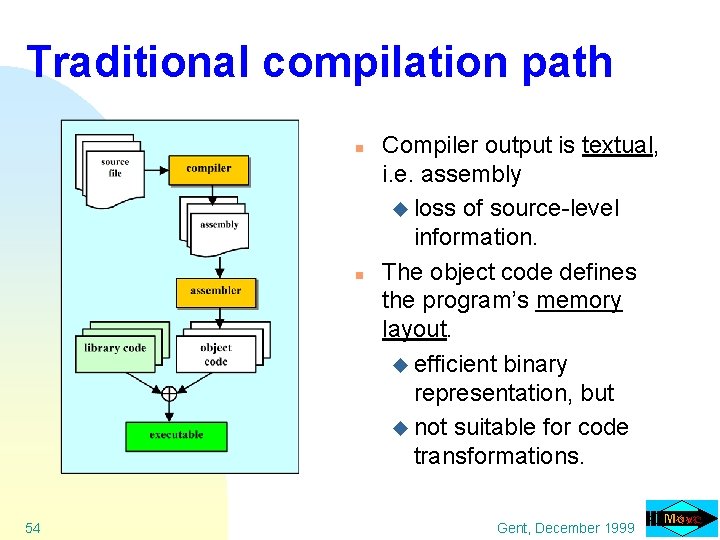

Traditional compilation path
- Compiler output is textual, i.e. assembly
  - loss of source-level information.
- The object code defines the program’s memory layout.
  - efficient binary representation, but
  - not suitable for code transformations.

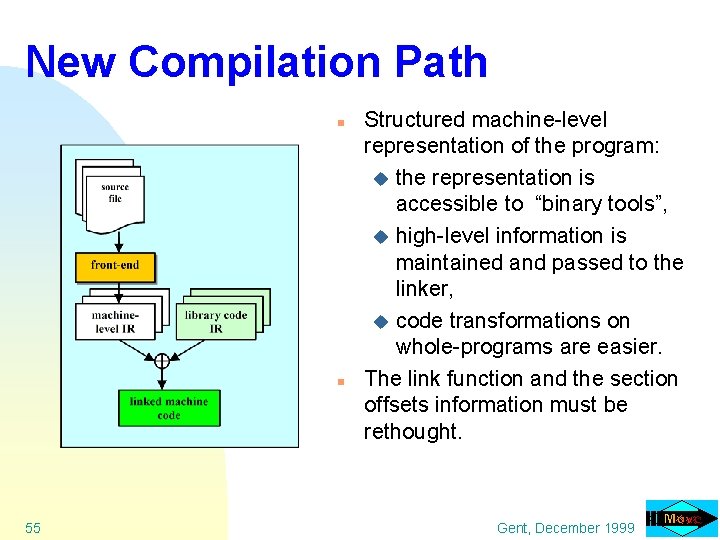

New Compilation Path
- Structured machine-level representation of the program:
  - the representation is accessible to “binary tools”,
  - high-level information is maintained and passed to the linker,
  - code transformations on whole programs are easier.
- The link function and the section offsets information must be rethought.

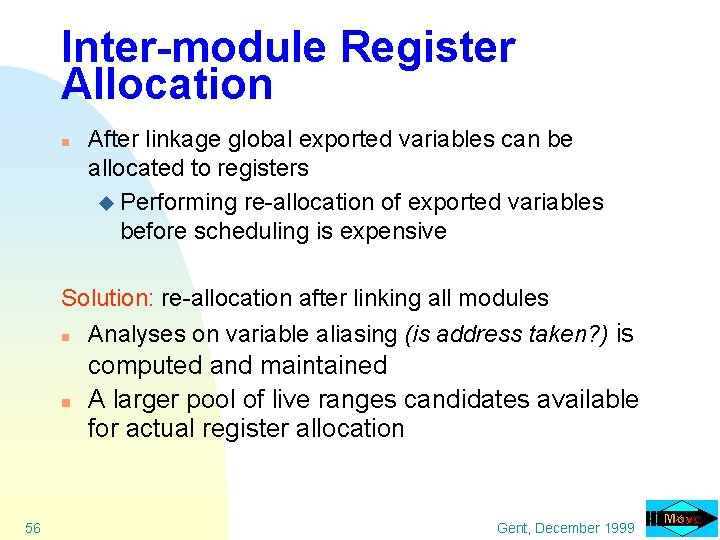

Inter-module Register Allocation
- After linkage, global exported variables can be allocated to registers
  - Performing re-allocation of exported variables before scheduling is expensive
Solution: re-allocation after linking all modules
- Variable aliasing information (is the address taken?) is computed and maintained
- A larger pool of live range candidates is available for actual register allocation

Fixed-point conversion: motivation
- Cost of floating-point hardware.
- Most “embedded” programs written in ANSI C.
- C does not support fixed-point arithmetic.
- Manual writing of fixed-point programs is tedious and error-prone (insertion of scaling operations).
- Fixed-point extensions to C are only a partial solution.

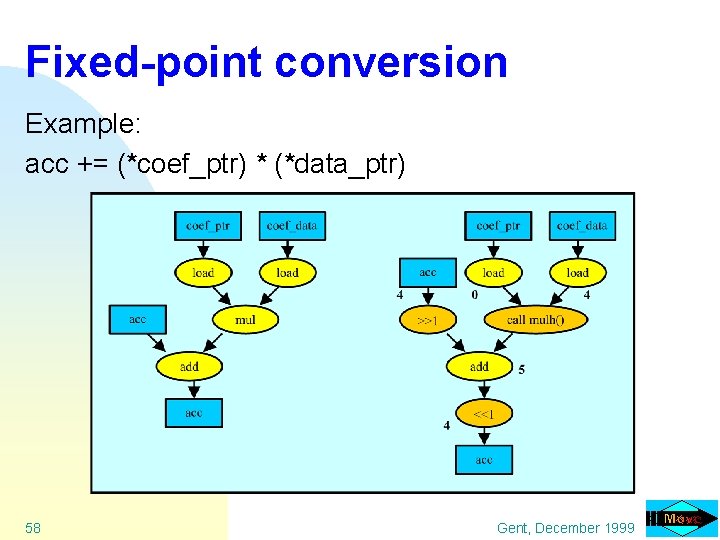

Fixed-point conversion
Example:
    acc += (*coef_ptr) * (*data_ptr)

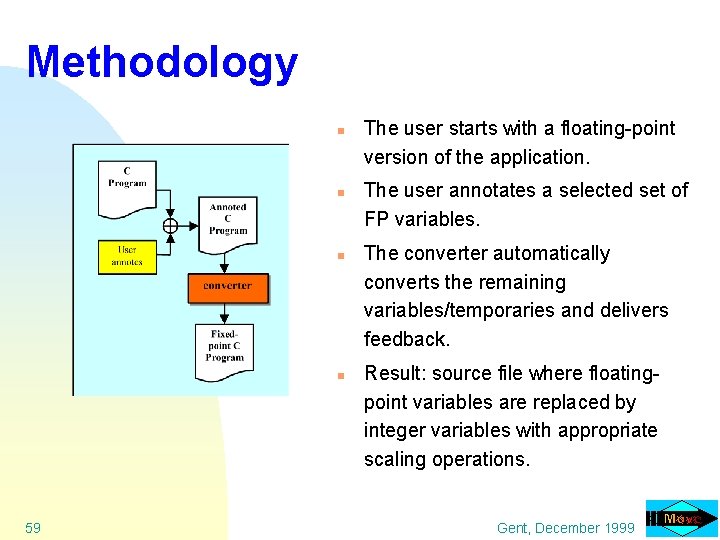

Methodology
- The user starts with a floating-point version of the application.
- The user annotates a selected set of FP variables.
- The converter automatically converts the remaining variables/temporaries and delivers feedback.
- Result: source file where floating-point variables are replaced by integer variables with appropriate scaling operations.
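What "integer variables with appropriate scaling operations" means for a multiply-accumulate can be sketched as follows. The format with 15 fractional bits, the helper names and the sample coefficients are assumptions chosen for illustration; they are not the converter's output, and word-length overflow is not modelled because Python integers are unbounded.

```python
FRAC_BITS = 15  # hypothetical fixed-point format with 15 fractional bits


def to_fixed(x, frac_bits=FRAC_BITS):
    """Convert a float to its integer fixed-point representation."""
    return int(round(x * (1 << frac_bits)))


def to_float(q, frac_bits=FRAC_BITS):
    """Convert a fixed-point integer back to a float."""
    return q / (1 << frac_bits)


def mac_fixed(acc, coef, data, frac_bits=FRAC_BITS):
    """acc += coef * data, with all values in the same fixed-point format."""
    product = coef * data                 # carries 2 * frac_bits fractional bits
    return acc + (product >> frac_bits)   # scaling operation: back to frac_bits


coefs = [0.25, -0.5, 0.125]
data = [0.5, 0.25, -0.75]

acc_float = sum(c * d for c, d in zip(coefs, data))
acc = 0
for c, d in zip(coefs, data):
    acc = mac_fixed(acc, to_fixed(c), to_fixed(d))

print(acc_float, to_float(acc))
```

The right shift after each product is the kind of scaling operation that is tedious and error-prone to insert by hand.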

Link-time code conversion
- Problem: linking fixed-point code with library code
  - transformations on binary code impractical
  - source-level linkage is awkward
- Solution: floating- to fixed-point conversion of library code “on the fly” during linkage.
- Advantages:
  - No need to compile in advance a specific version of the library for a particular fixed-point format.
  - Information about the fixed-point format can flow between user and library code in both directions.

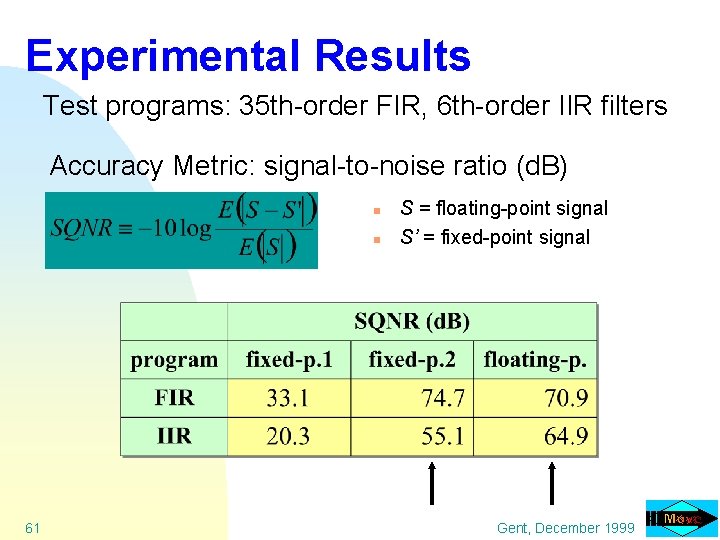

Experimental Results
Test programs: 35th-order FIR, 6th-order IIR filters
Accuracy metric: signal-to-noise ratio (dB)
- S = floating-point signal
- S’ = fixed-point signal
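The accuracy metric can be computed as sketched below. The formula on the slide is contained in an image and is not recoverable from the text, so the code assumes the common definition SNR = 10 log10( sum S^2 / sum (S - S')^2 ); the sample signals are invented.

```python
import math


def snr_db(reference, approx):
    """Signal-to-noise ratio in dB of `approx` relative to `reference`.

    Assumes the usual definition 10 * log10(sum(S^2) / sum((S - S')^2)),
    where S is the floating-point signal and S' the fixed-point signal.
    """
    signal = sum(s * s for s in reference)
    noise = sum((s - a) ** 2 for s, a in zip(reference, approx))
    if noise == 0:
        return math.inf            # identical signals: no quantisation noise
    return 10.0 * math.log10(signal / noise)


S = [1.0, -1.0, 1.0, -1.0]            # hypothetical floating-point output
S_fixed = [0.9, -0.9, 0.9, -0.9]      # hypothetical fixed-point output
print(f"{snr_db(S, S_fixed):.1f} dB")
```

A 10% amplitude error on every sample corresponds to a noise power of 1% of the signal power, i.e. about 20 dB.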

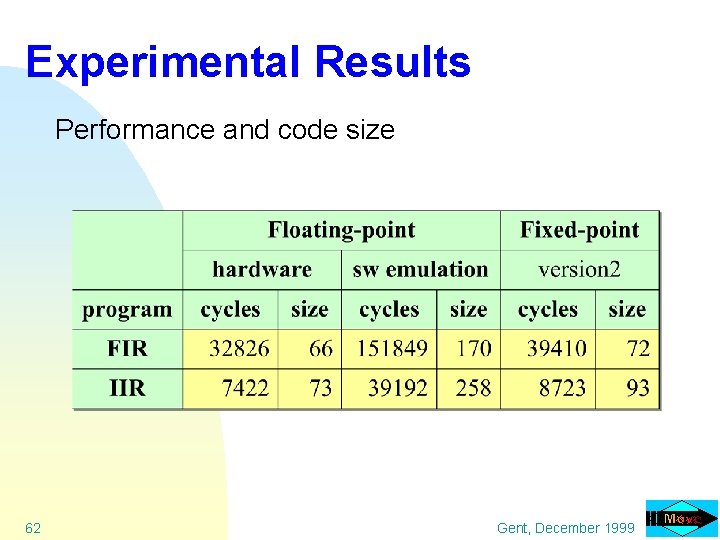

Experimental Results
Performance and code size

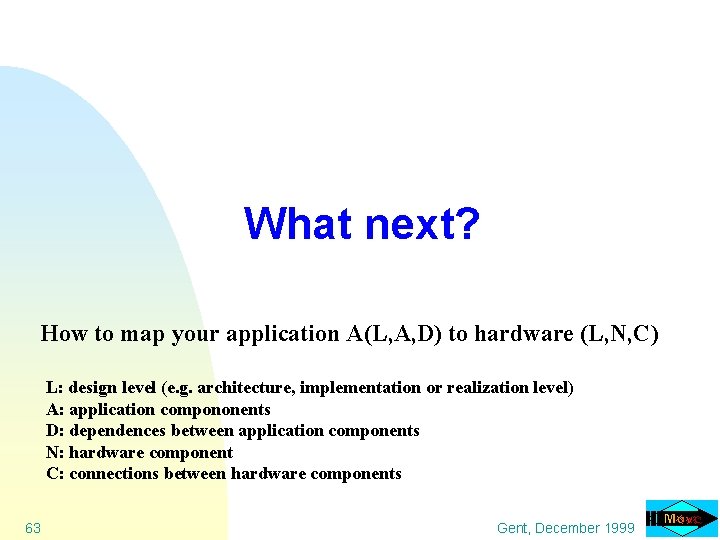

What next?
How to map your application A(L, A, D) to hardware (L, N, C)
- L: design level (e.g. architecture, implementation or realization level)
- A: application components
- D: dependences between application components
- N: hardware components
- C: connections between hardware components

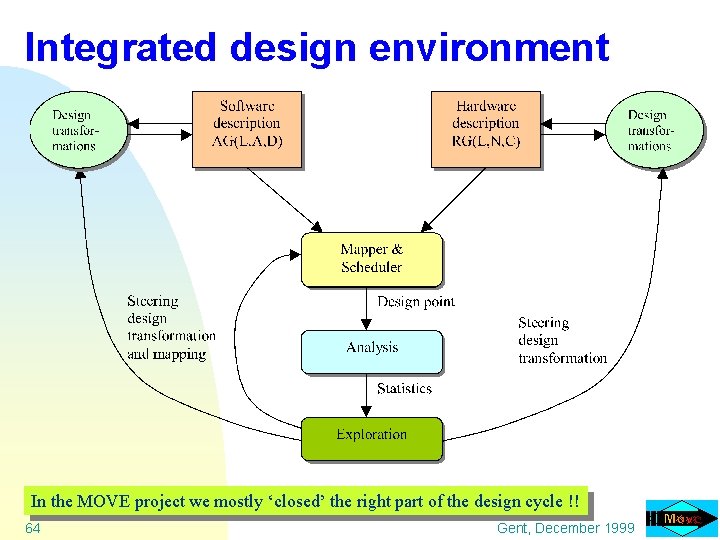

Integrated design environment
In the MOVE project we mostly ‘closed’ the right part of the design cycle!

Conclusions / Discussion
Billions of embedded systems with embedded processors are sold annually; how to design these systems quickly, cheaply, correctly, with low power, ...?
- We have experience with tuning architectures for applications
  - extremely flexible templated TTA; used by several companies
  - parametric code generation
  - automatic TTA design space exploration
- The challenge: automated tuning of applications for architectures: closing the Y-chart
  - design transformation framework needed
