Transparent CPUGPU Collaboration for DataParallel Kernels on Heterogeneous

Transparent CPU-GPU Collaboration for Data-Parallel Kernels on Heterogeneous Systems Janghaeng Lee Mehrzad Samadi Yongjun Park and Scott Mahlke University of Michigan - Ann Arbor 1 University of Michigan Electrical Engineering and Computer Science

Heterogeneity • Computer systems have become heterogeneous – Laptops, Servers – Mobile devices • GPUs integrated with CPUs • Discrete GPUs added for – Gaming – Supercomputing • Co-processors for massive data-parallel workload – Intel Xeon PHI 2 University of Michigan Electrical Engineering and Computer Science

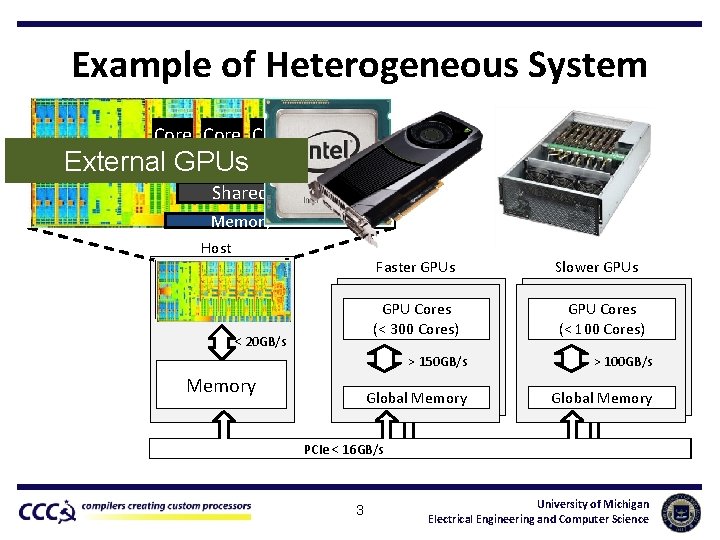

Example of Heterogeneous System Core External GPUs GPU Shared L 3 Memory Controller Host GPU Cores (> 16 cores) Multi Core CPU (4 -16 cores) < 20 GB/s Memory Faster GPUs Slower GPUs GPU Cores (< 300 Cores) GPU Cores (< 100 Cores) > 150 GB/s > 100 GB/s Global Memory PCIe < 16 GB/s 3 University of Michigan Electrical Engineering and Computer Science

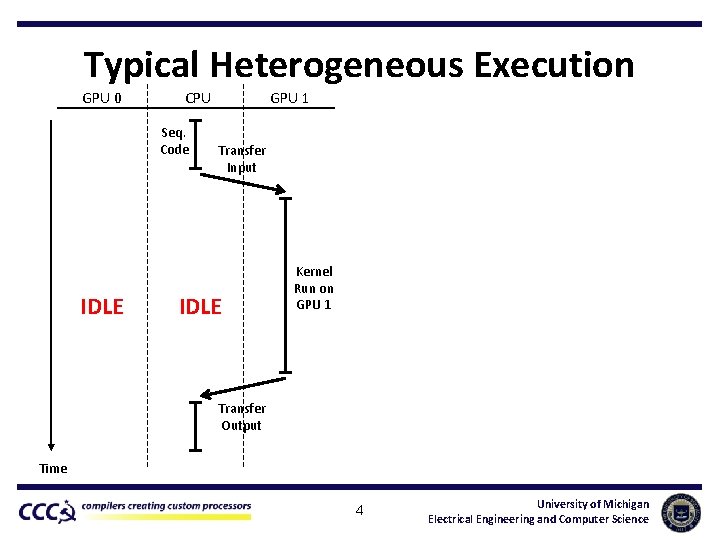

Typical Heterogeneous Execution GPU 0 CPU Seq. Code IDLE GPU 1 Transfer Input IDLE Kernel Run on GPU 1 Transfer Output Time 4 University of Michigan Electrical Engineering and Computer Science

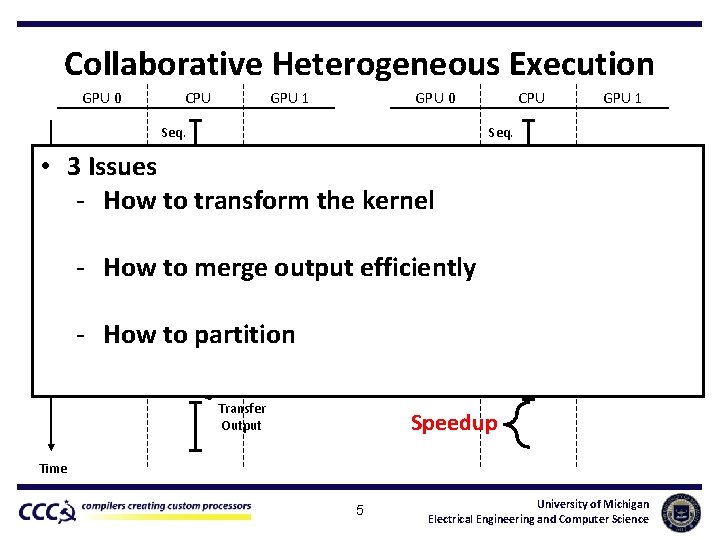

Collaborative Heterogeneous Execution GPU 0 CPU Seq. Code GPU 1 GPU 0 CPU Seq. Code Transfer Input • 3 Issues - How to transform the kernel - How to merge output efficiently Kernel IDLE Transfer Input Run on CPU Run on GPU 0 IDLE GPU 1 Run on GPU 1 - How to partition Merge Transfer Output Seq. Code Transfer Output Speedup Time 5 University of Michigan Electrical Engineering and Computer Science

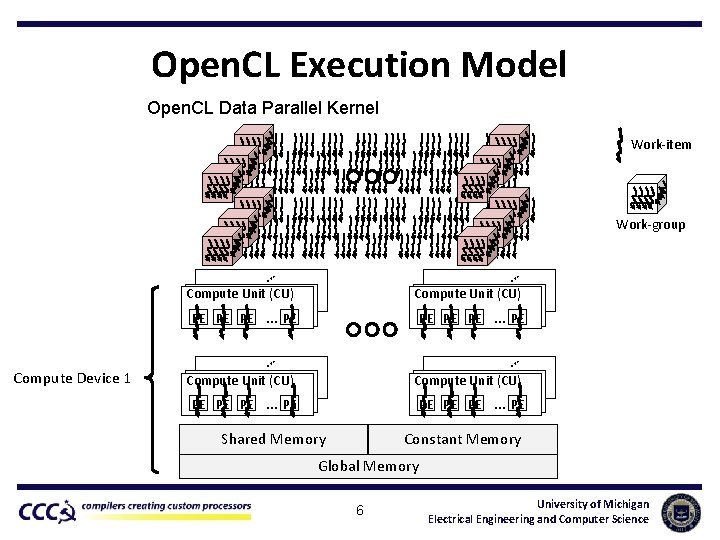

Open. CL Execution Model Open. CL Data Parallel Kernel Work-item Work-group … … Compute Unit (CU) PE PE PE Compute Device 1 Compute Unit (CU) … PE PE … … Compute Unit (CU) PE PE PE … PE Compute Unit (CU) … PE PE Shared Memory … PE Constant Memory Global Memory 6 University of Michigan Electrical Engineering and Computer Science

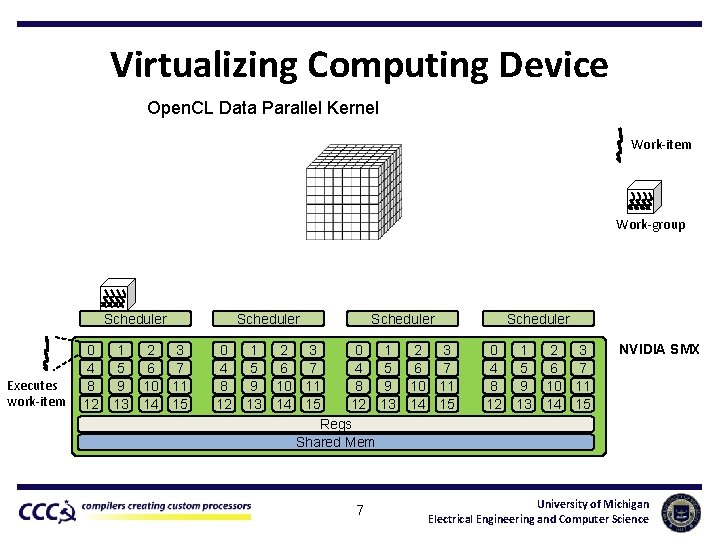

Virtualizing Computing Device Open. CL Data Parallel Kernel Work-item Work-group Scheduler Executes work-item 0 4 8 12 1 5 9 13 2 3 6 7 10 11 14 15 Scheduler 0 4 8 12 1 5 9 13 Scheduler 2 3 0 6 7 4 10 11 8 14 15 12 Regs Shared Mem 7 1 5 9 13 2 3 6 7 10 11 14 15 Scheduler 0 4 8 12 1 5 9 13 2 3 6 7 10 11 14 15 NVIDIA SMX University of Michigan Electrical Engineering and Computer Science

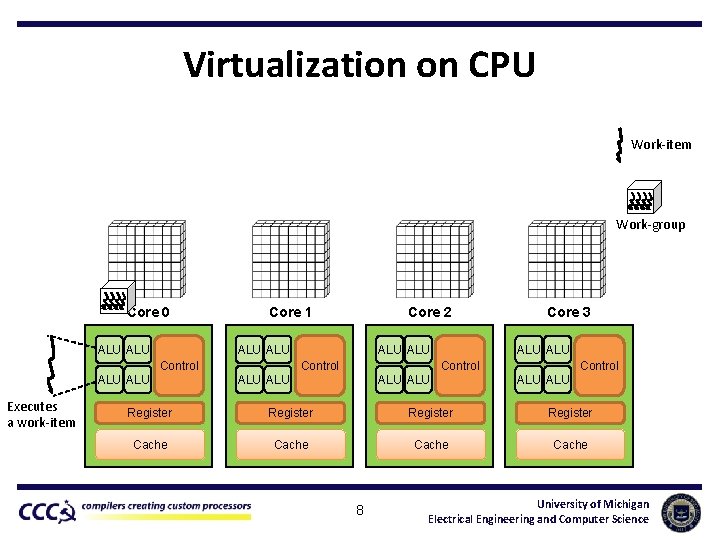

Virtualization on CPU Work-item Work-group Core 0 ALU Core 1 ALU Control ALU Executes a work-item Core 2 ALU Control Core 3 ALU ALU Control ALU ALU Register Cache 8 University of Michigan Electrical Engineering and Computer Science

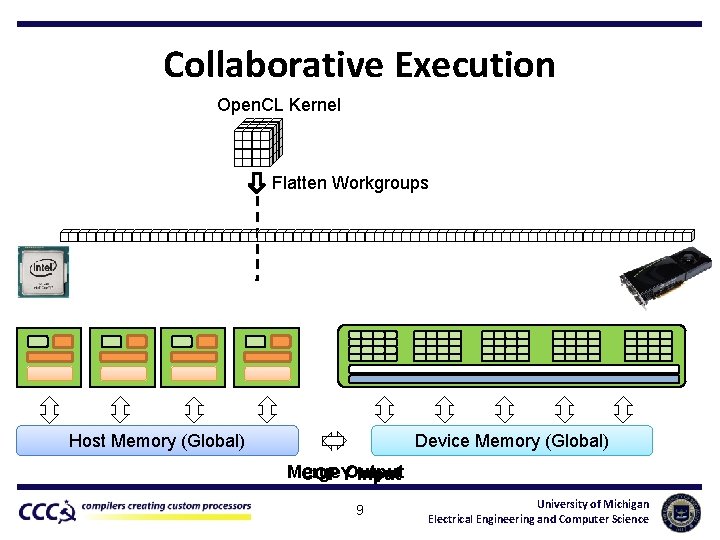

Collaborative Execution Open. CL Kernel Flatten Workgroups Host Memory (Global) Device Memory (Global) Merge COPYOutput Input 9 University of Michigan Electrical Engineering and Computer Science

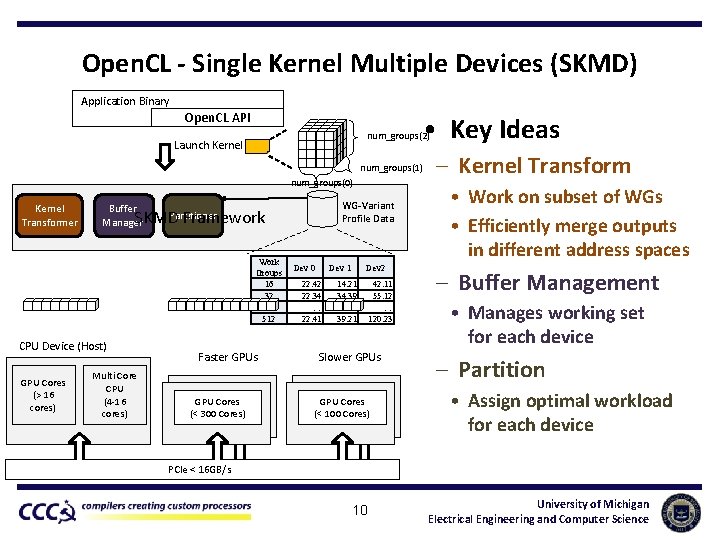

Open. CL - Single Kernel Multiple Devices (SKMD) Application Binary Open. CL API • Key Ideas num_groups(2) Launch Kernel num_groups(1) num_groups(0) Kernel Transformer Partitioner SKMD Framework Work Groups 16 32 … 512 CPU Device (Host) GPU Cores (> 16 cores) WG-Variant Profile Data Buffer Manager Multi Core CPU (4 -16 cores) Faster GPUs GPU Cores (< 300 Cores) Dev 0 Dev 1 22. 42 22. 34 … 22. 41 Dev 2 14. 21 34. 39 … 39. 21 42. 11 55. 12 … 120. 23 Slower GPUs GPU Cores (< 100 Cores) – Kernel Transform • Work on subset of WGs • Efficiently merge outputs in different address spaces – Buffer Management • Manages working set for each device – Partition • Assign optimal workload for each device PCIe < 16 GB/s 10 University of Michigan Electrical Engineering and Computer Science

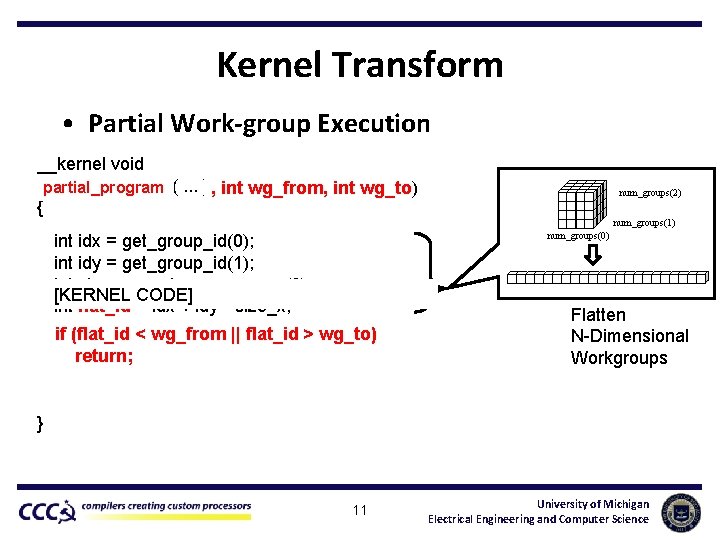

Kernel Transform • Partial Work-group Execution __kernel void original_program( partial_program. . . ) , int wg_from, int wg_to) { num_groups(2) num_groups(1) num_groups(0) int idx = get_group_id(0); int idy = get_group_id(1); int size_x = get_num_groups(0); [KERNEL CODE] int flat_id = idx + idy * size_x; if (flat_id < wg_from || flat_id > wg_to) return; Flatten N-Dimensional Workgroups } 11 University of Michigan Electrical Engineering and Computer Science

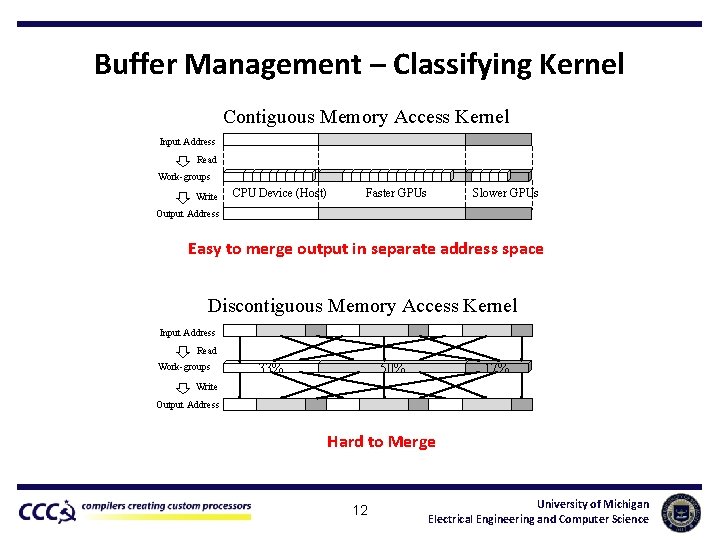

Buffer Management – Classifying Kernel Contiguous Memory Access Kernel Input Address Read Work-groups Write 33% CPU Device (Host) 50% 17% Faster GPUs Slower GPUs Output Address Easy to merge output in separate address space Discontiguous Memory Access Kernel Input Address Read Work-groups 33% 50% 17% Write Output Address Hard to Merge 12 University of Michigan Electrical Engineering and Computer Science

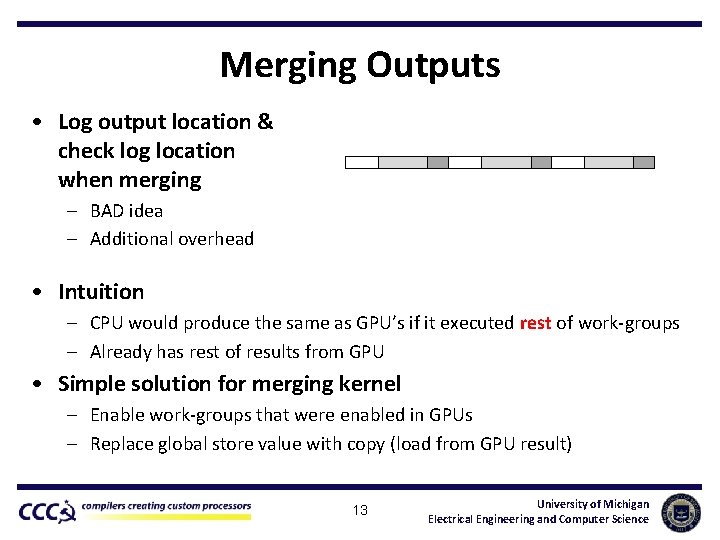

Merging Outputs • Log output location & check log location when merging – BAD idea – Additional overhead • Intuition – CPU would produce the same as GPU’s if it executed rest of work-groups – Already has rest of results from GPU • Simple solution for merging kernel – Enable work-groups that were enabled in GPUs – Replace global store value with copy (load from GPU result) 13 University of Michigan Electrical Engineering and Computer Science

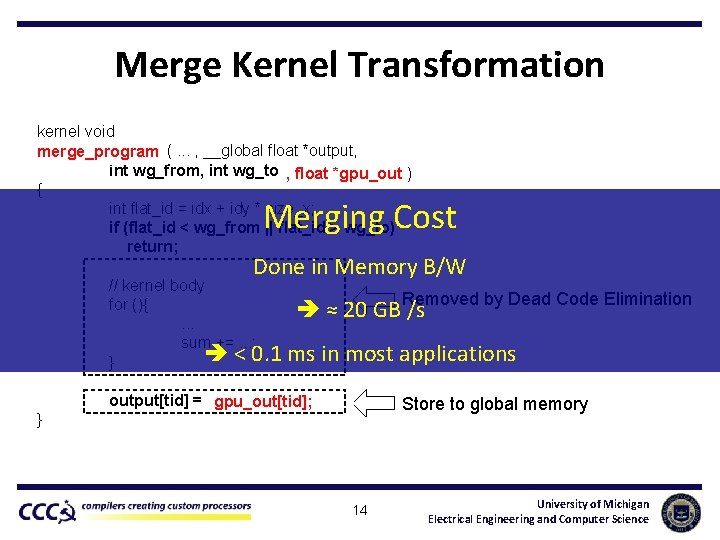

Merge Kernel Transformation kernel void partial_program (. . . , __global float *output, merge_program int wg_from, int wg_to ), float *gpu_out ) { int flat_id = idx + idy * size_x; if (flat_id < wg_from || flat_id > wg_to) return; Merging Cost Done in Memory B/W // kernel body for (){. . . sum +=. . . ; < 0. 1 } Removed by Dead Code Elimination ≈ 20 GB /s ms in most applications output[tid] = sum; gpu_out[tid]; Store to global memory } 14 University of Michigan Electrical Engineering and Computer Science

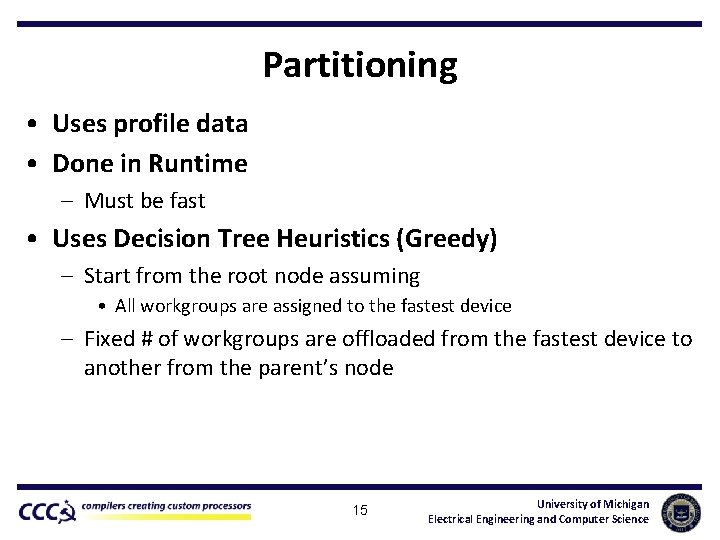

Partitioning • Uses profile data • Done in Runtime – Must be fast • Uses Decision Tree Heuristics (Greedy) – Start from the root node assuming • All workgroups are assigned to the fastest device – Fixed # of workgroups are offloaded from the fastest device to another from the parent’s node 15 University of Michigan Electrical Engineering and Computer Science

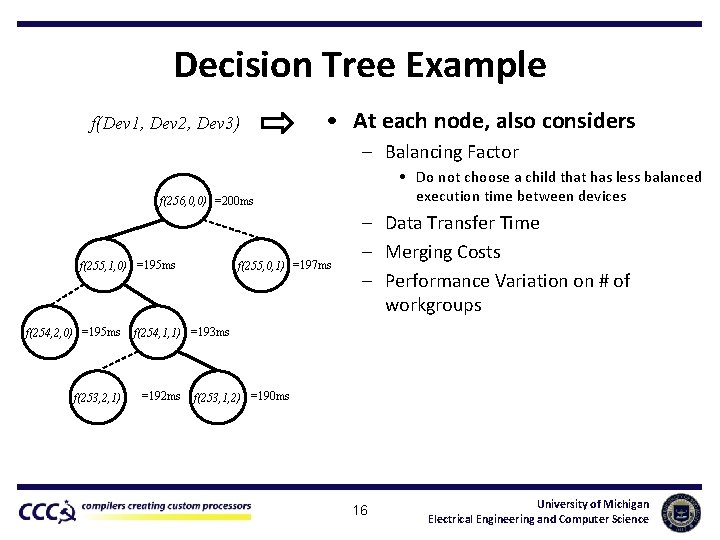

Decision Tree Example f(Dev 1, Dev 2, Dev 3) • At each node, also considers – Balancing Factor • Do not choose a child that has less balanced execution time between devices f(256, 0, 0) =200 ms f(255, 1, 0) =195 ms f(254, 2, 0) =195 ms f(253, 2, 1) f(255, 0, 1) =197 ms – Data Transfer Time – Merging Costs – Performance Variation on # of workgroups f(254, 1, 1) =193 ms =192 ms f(253, 1, 2) =190 ms 16 University of Michigan Electrical Engineering and Computer Science

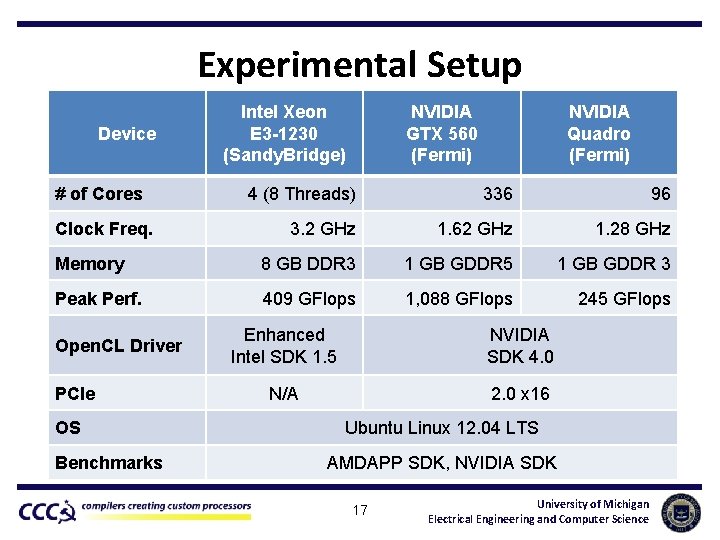

Experimental Setup Device # of Cores Intel Xeon E 3 -1230 (Sandy. Bridge) NVIDIA GTX 560 (Fermi) NVIDIA Quadro (Fermi) 4 (8 Threads) 336 96 3. 2 GHz 1. 62 GHz 1. 28 GHz Memory 8 GB DDR 3 1 GB GDDR 5 1 GB GDDR 3 Peak Perf. 409 GFlops 1, 088 GFlops 245 GFlops Clock Freq. Open. CL Driver PCIe OS Benchmarks Enhanced Intel SDK 1. 5 NVIDIA SDK 4. 0 N/A 2. 0 x 16 Ubuntu Linux 12. 04 LTS AMDAPP SDK, NVIDIA SDK 17 University of Michigan Electrical Engineering and Computer Science

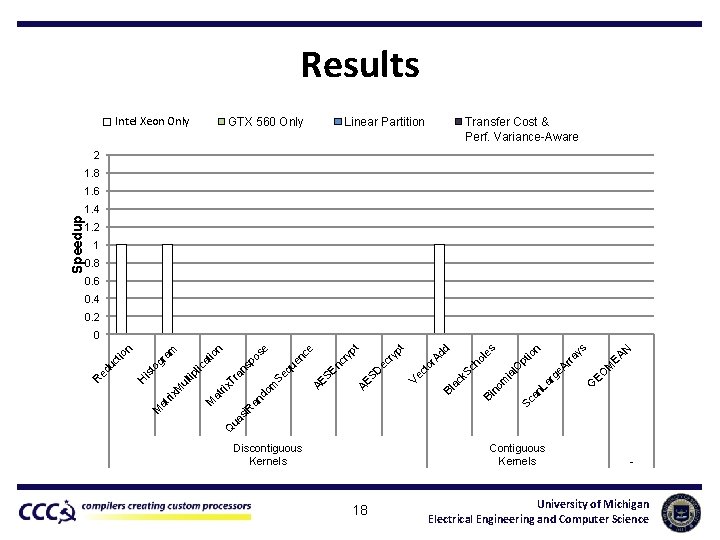

Results Intel Xeon Only GTX 560 Only Transfer Cost & Perf. Variance-Aware Linear Partition 2 1. 8 1. 6 1. 2 1 0. 8 0. 6 0. 4 0. 2 M EA N s G EO ge Ar ra y n Sc an La r m no Bi Bl ac k Sc ia l. O pt io ho le s d or Ad Ve ct SD AE AE SE nc ry en c ec ry pt pt e e Q ua si R M an d om rix at Se qu sp Tr an tip ul M rix at M os n io at lic to gr is H ed u ct io n am 0 R Speedup 1. 4 Discontiguous Kernels Contiguous Kernels 18 - University of Michigan Electrical Engineering and Computer Science

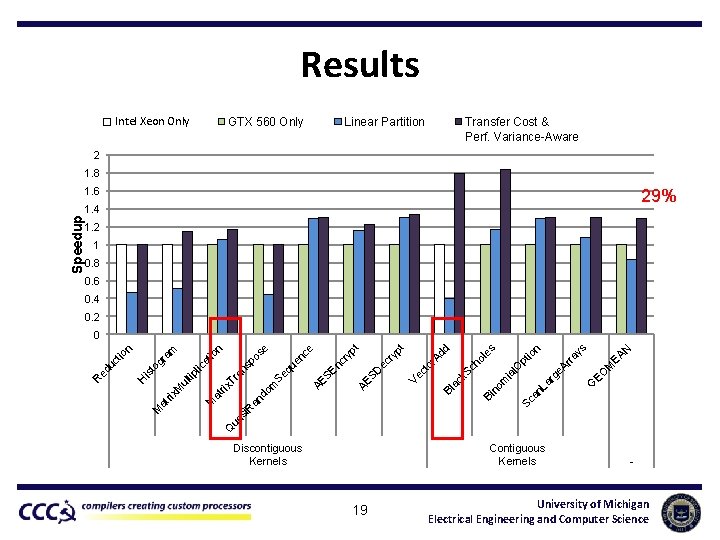

Results Intel Xeon Only GTX 560 Only Transfer Cost & Perf. Variance-Aware Linear Partition 2 1. 8 1. 6 29% 1. 2 1 0. 8 0. 6 0. 4 0. 2 M EA N s G EO ge Ar ra y n Sc an La r m no Bi Bl ac k Sc ia l. O pt io ho le s d or Ad Ve ct SD AE AE SE nc ry en c ec ry pt pt e e Q ua si R M an d om rix at Se qu sp Tr an tip ul M rix at M os n io at lic to gr is H ed u ct io n am 0 R Speedup 1. 4 Discontiguous Kernels Contiguous Kernels 19 - University of Michigan Electrical Engineering and Computer Science

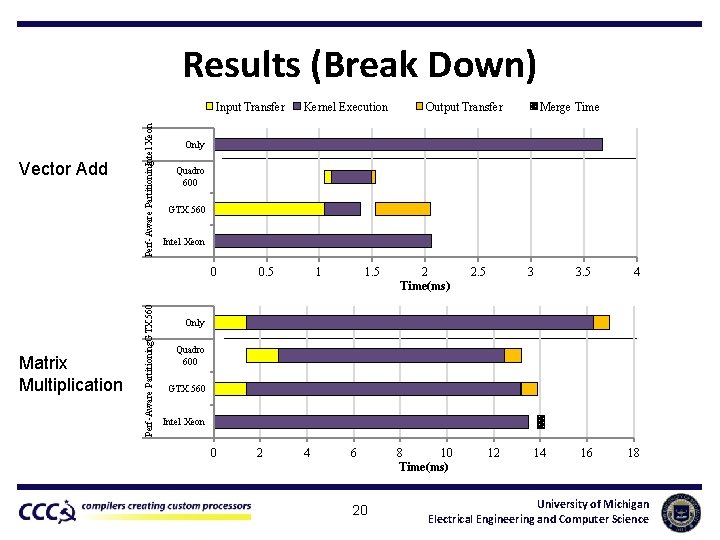

Results (Break Down) Matrix Multiplication Perf-Aware Partitioning. GTX 560 Vector Add Perf-Aware Partitioning. Intel Xeon Input Transfer Kernel Execution Output Transfer Merge Time Only Quadro 600 GTX 560 Intel Xeon 0 0. 5 0 2 1 1. 5 2 Time(ms) 2. 5 3 3. 5 4 16 18 Only Quadro 600 GTX 560 Intel Xeon 4 6 20 8 10 Time(ms) 12 14 University of Michigan Electrical Engineering and Computer Science

Summary • Systems have been become more heterogeneous – Configured with several types of devices • Existing CPU + GPU heterogeneous – Single device executes a single kernel • Single Kernel Multiple Devices (SKMD) – – – CPUs and GPUs working on a single kernel Transparent Framework Partial kernel execution Merging partial output Optimal partitioning Performance improvement of 29% over single device execution 21 University of Michigan Electrical Engineering and Computer Science

Q&A 22 University of Michigan Electrical Engineering and Computer Science

- Slides: 22