Transcription System using Automatic Speech Recognition (ASR) for the Japanese Parliament (Diet)

Transcription System using Automatic Speech Recognition (ASR) for the Japanese Parliament (Diet)

Tatsuya Kawahara (Kyoto University, Japan)

Brief Biography

- Ph.D. (Information Science), Kyoto Univ.
- Associate Professor, Kyoto Univ.
- 1995–96: Visiting Researcher, Bell Labs, USA
- 2003–: Professor, Kyoto Univ.
- 2003–06: IEEE SPS Speech TC member
- 2006–: Technical Consultant, The House of Representatives, Japan
- Published more than 150 papers on automatic speech recognition (ASR) and its applications
- Web: http://www.ar.media.kyoto-u.ac.jp/~kawahara/

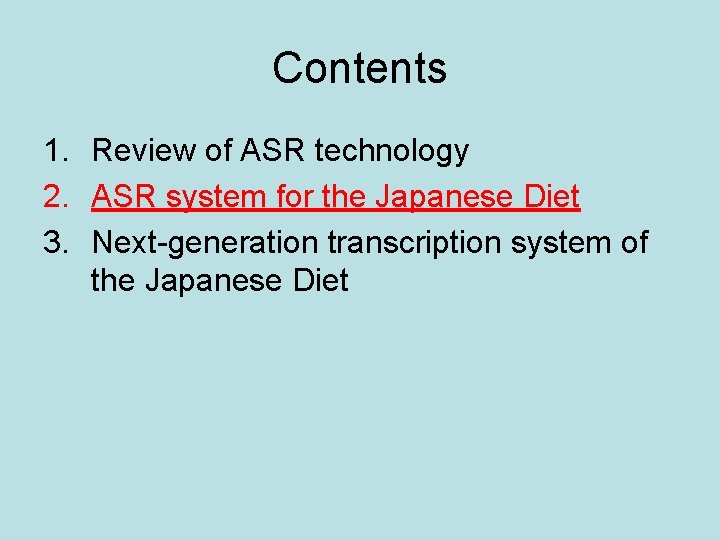

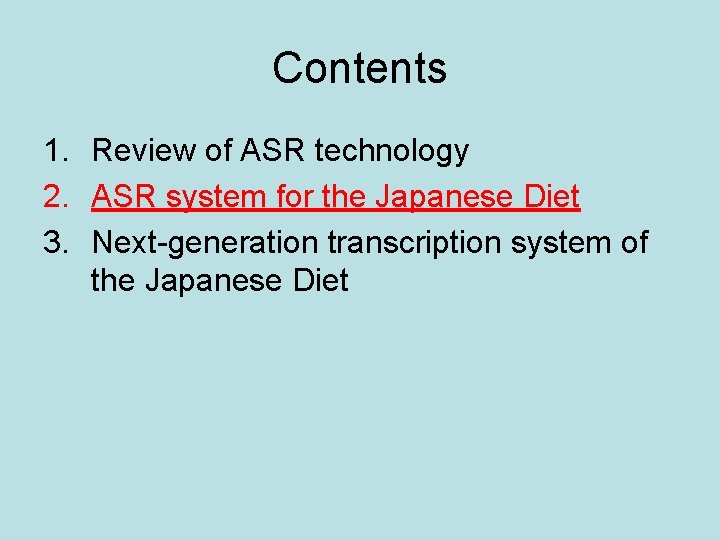

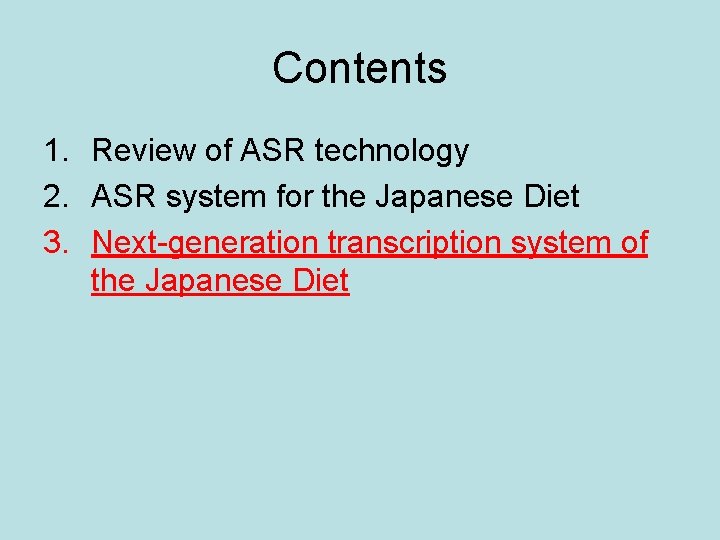

Contents

1. Review of ASR technology
2. ASR system for the Japanese Diet
3. Next-generation transcription system of the Japanese Diet

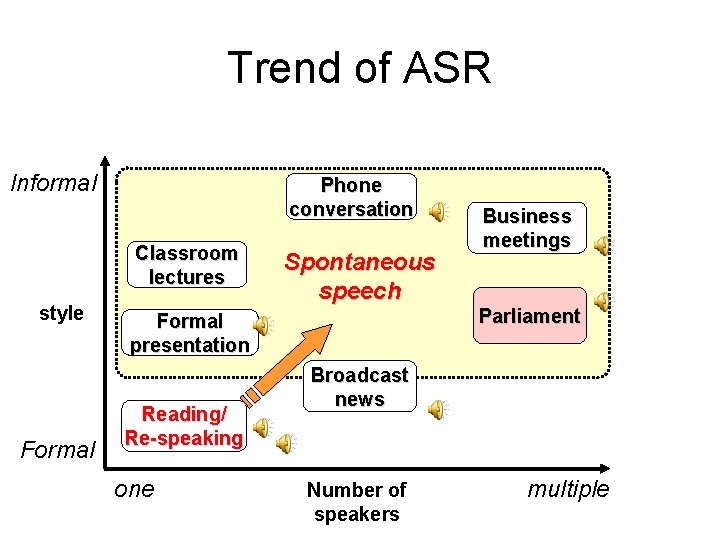

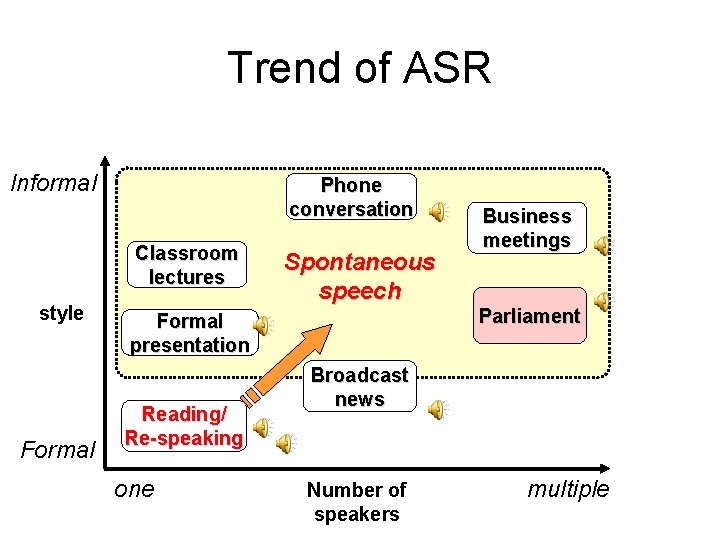

Trend of ASR (figure: tasks arranged by speaking style, from informal/spontaneous speech to formal presentation and reading/re-speaking, and by number of speakers, from one to multiple; tasks shown: phone conversation, classroom lectures, formal presentation, business meetings, parliament, broadcast news)

![Review of ASR technology (1/2)](https://slidetodoc.com/presentation_image/c7e330dbd2fdd95a43f66d799515617c/image-5.jpg)

Review of ASR technology (1/2)

- Broadcast news [worldwide]
  - Professional anchors, mostly reading manuscripts
  - Accuracy over 90%
- Public speaking, oral presentations [Japan]
  - Ordinary people speaking fluently
  - Accuracy ~80% (close-talking mic.)
- Classroom lectures [worldwide]
  - More informal speaking
  - Accuracy ~60% (pin mic.)

![Review of ASR technology (2/2)](https://slidetodoc.com/presentation_image/c7e330dbd2fdd95a43f66d799515617c/image-6.jpg)

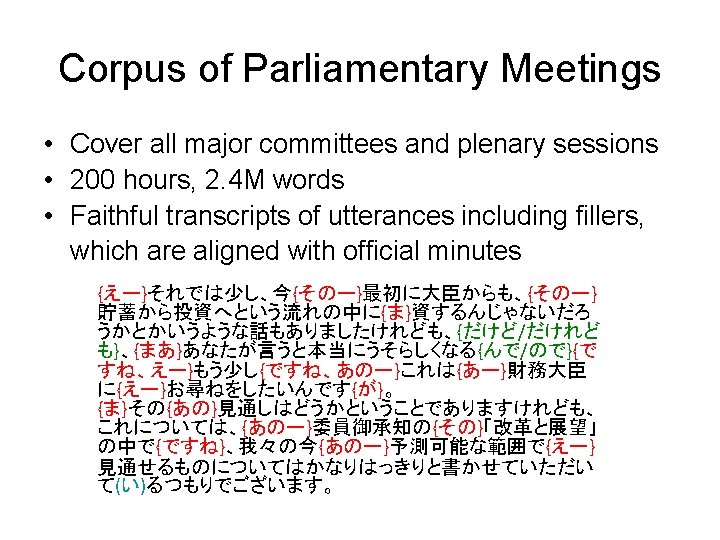

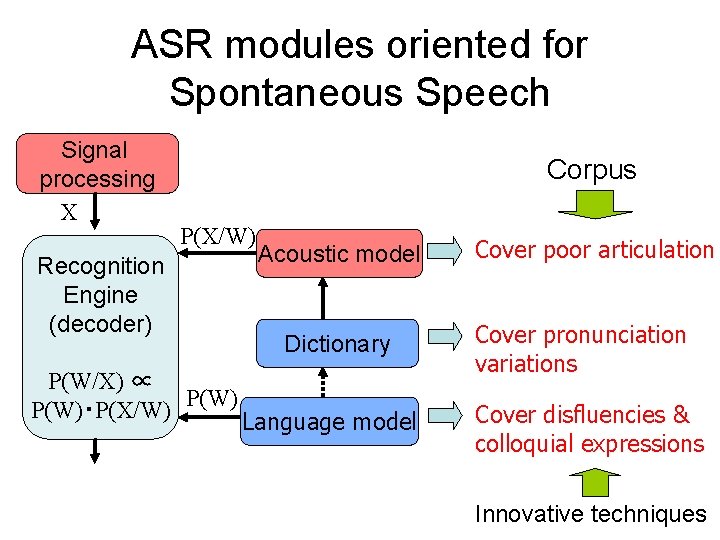

Review of ASR technology (2/2)

- Telephone conversations [US]
  - Ordinary people speaking casually
  - Accuracy 60% → 85%
- Business meetings [Europe/US]
  - Ordinary people speaking less formally
  - Accuracy 70% (close-talking mic.), 60% (distant mic.)
- Parliamentary meetings [Europe/Japan]
  - Politicians speaking formally
  - EU plenary sessions: 90%
  - Japan committee meetings: 85%

Deployment of ASR in Parliaments & Courts

- Some countries
  - Steno-mask & voice writing
  - Re-speaking into commercial dictation software
- Some local governments in Japan
  - Direct recognition of politicians' speech
- Japanese courts
  - ASR for efficient retrieval from recorded sessions
- Japanese Parliament (Diet)
  - Introducing ASR for direct recognition of politicians' speech
  - Mostly in committee meetings, which are interactive, spontaneous, and sometimes heated

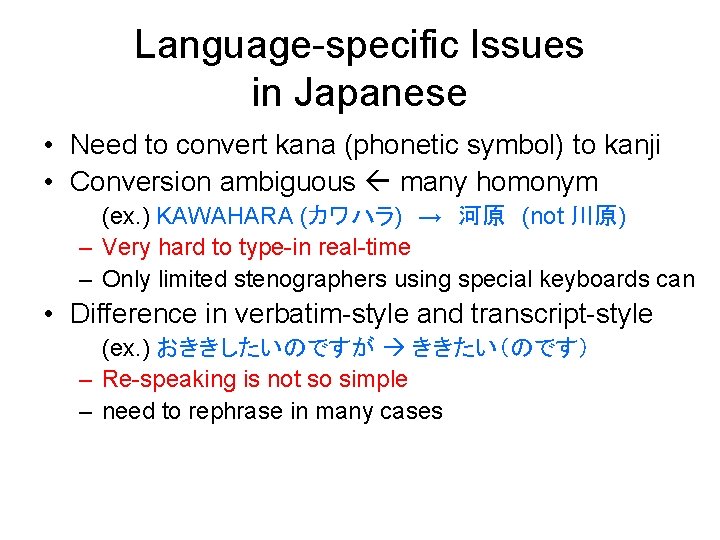

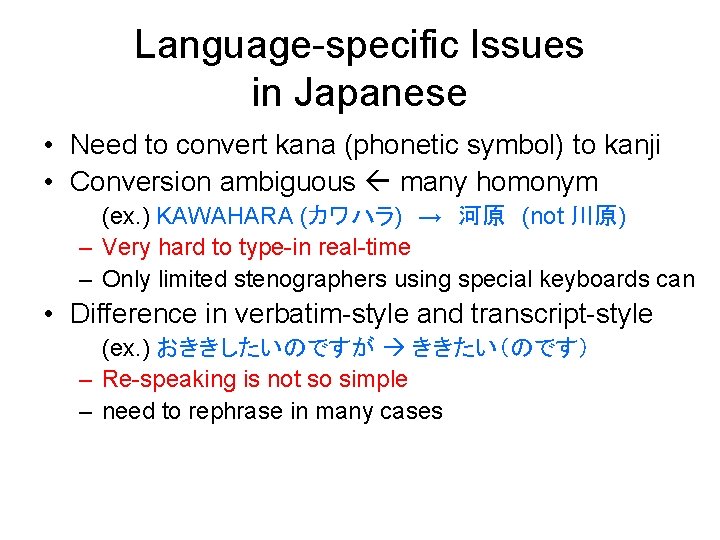

Language-specific Issues in Japanese

- Kana (phonetic symbols) must be converted to kanji
- The conversion is ambiguous because of many homonyms, e.g., KAWAHARA (カワハラ) → 河原 (not 川原)
  - Very hard to type in real time
  - Only a limited number of stenographers using special keyboards can do it
- Verbatim style differs from transcript style, e.g., おききしたいのですが (humble-polite, verbatim) → ききたい(のです) (plain, transcript style)
  - Re-speaking is therefore not so simple: the re-speaker must rephrase in many cases

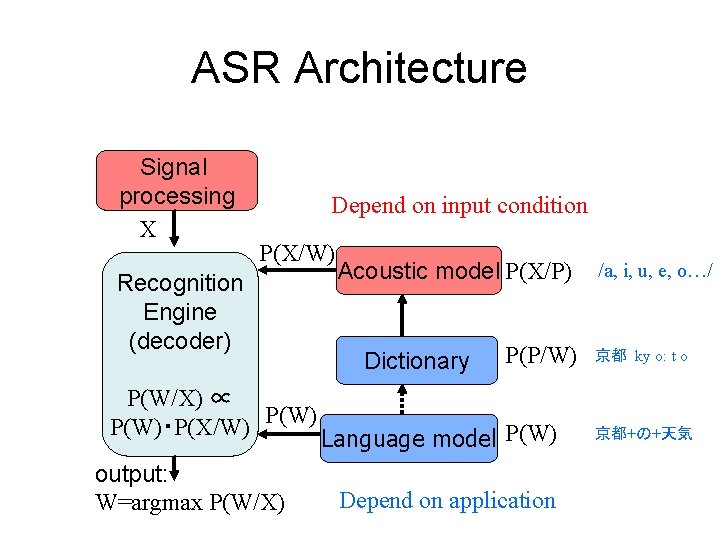

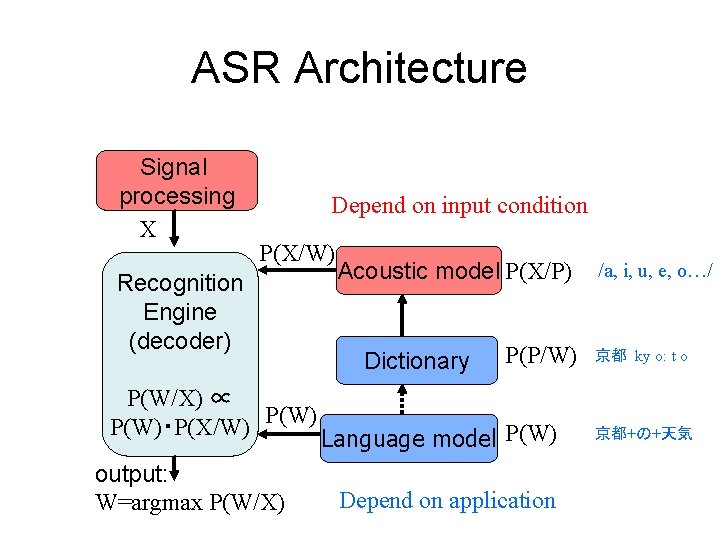

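ASR Architecture

$$P(W \mid X) \propto P(W)\,P(X \mid W), \qquad \hat{W} = \arg\max_W P(W \mid X)$$

- Signal processing: converts the speech signal into features $X$
- Recognition engine (decoder): outputs the word sequence $\hat{W}$
- Acoustic model $P(X \mid P)$: phone units such as /a, i, u, e, o…/
- Dictionary $P(P \mid W)$: e.g., 京都 → ky o: t o
- Language model $P(W)$: e.g., 京都+の+天気 ("weather in Kyoto")
- The acoustic side depends on the input conditions; the language model depends on the application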
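To make the decision rule concrete, here is a minimal Python sketch that picks $\hat{W}$ from a handful of candidates. The candidate word sequences, all scores, and the `lm_weight` parameter are hypothetical; a real decoder searches a huge hypothesis space rather than a fixed list. The two top candidates, 天気 ("weather") and 転機 ("turning point"), are both read "tenki", so the acoustic score alone cannot reliably separate them and the language model settles the choice.

```python
import math

# Hypothetical scores for one utterance X. Keys are word sequences joined by "+".
# log P(W): language-model log-probabilities (illustrative values).
LM_LOGPROB = {
    "京都+の+天気": math.log(0.020),   # "Kyoto's weather"
    "京都+の+転機": math.log(0.001),   # "Kyoto's turning point" (same reading)
    "今日+と+天気": math.log(0.002),
}
# log P(X|W): acoustic log-likelihoods (illustrative values).
AM_LOGLIK = {
    "京都+の+天気": -120.0,
    "京都+の+転機": -119.5,   # acoustically a near-tie with 天気
    "今日+と+天気": -126.0,
}


def decode(candidates, lm_weight=1.0):
    """Return argmax_W of lm_weight * log P(W) + log P(X|W).

    With lm_weight=1.0 this is argmax_W P(W) P(X|W), i.e. argmax_W P(W|X).
    """
    def score(w):
        return lm_weight * LM_LOGPROB[w] + AM_LOGLIK[w]
    return max(candidates, key=score)


if __name__ == "__main__":
    candidates = list(LM_LOGPROB)
    print(decode(candidates))                  # 京都+の+天気
    print(decode(candidates, lm_weight=0.0))   # 京都+の+転機 (acoustics only)
```

Practical systems usually tune a language-model weight like `lm_weight`; the formula on the slide corresponds to a weight of 1.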

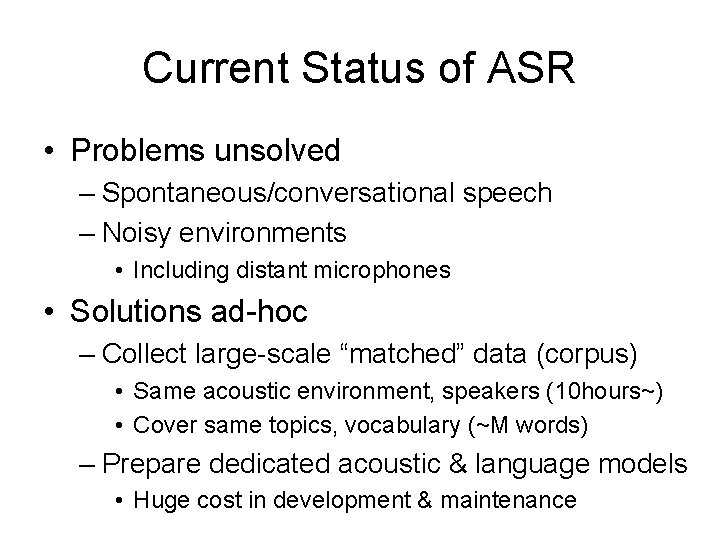

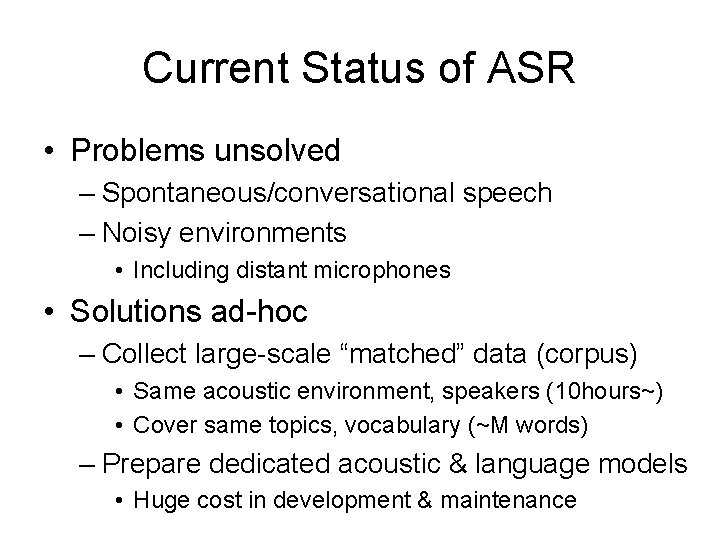

Current Status of ASR

- Unsolved problems
  - Spontaneous/conversational speech
  - Noisy environments, including distant microphones
- Current solutions are ad hoc
  - Collect large-scale "matched" data (corpus)
    - Same acoustic environment and speakers (10 hours or more)
    - Same topics and vocabulary (on the order of millions of words)
  - Prepare dedicated acoustic & language models
    - Huge cost in development & maintenance

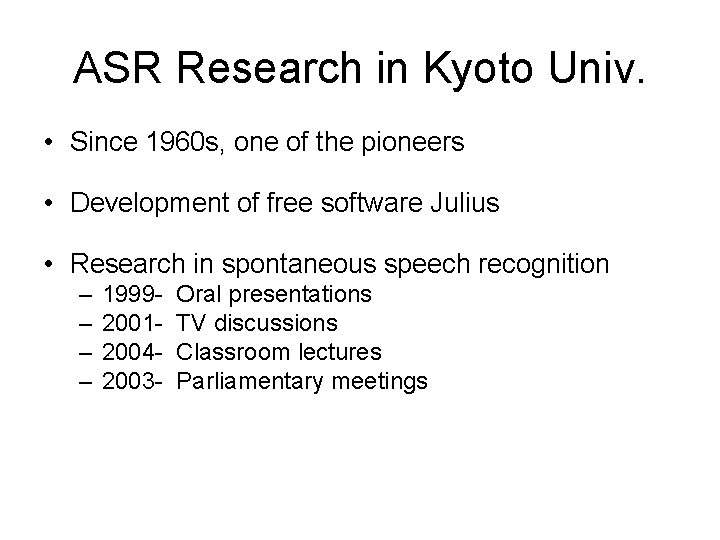

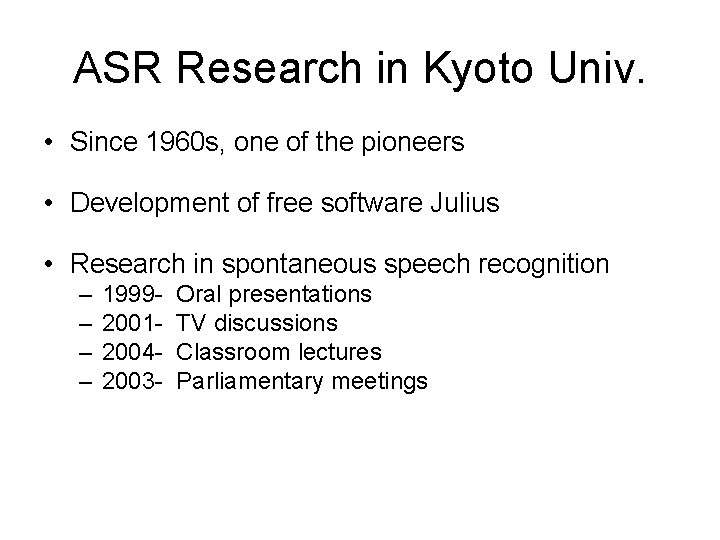

ASR Research in Kyoto Univ.

- One of the pioneers in ASR research, since the 1960s
- Development of the free ASR software Julius
- Research in spontaneous speech recognition, starting in 1999
  - Oral presentations
  - TV discussions
  - Classroom lectures
  - Parliamentary meetings

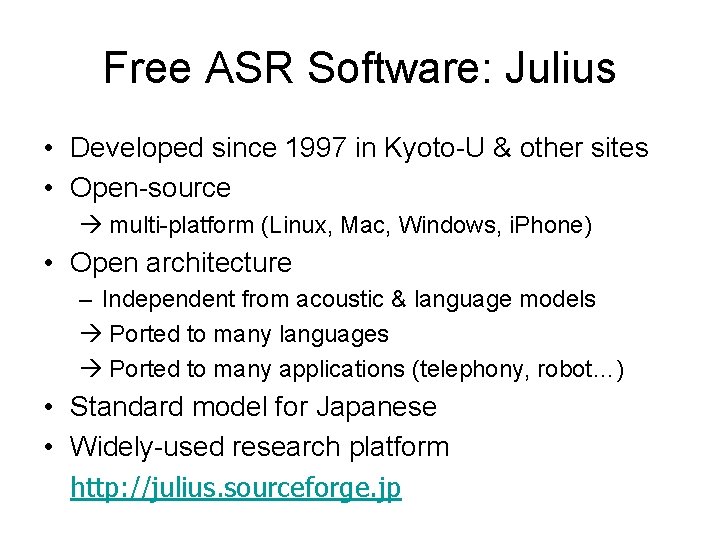

Free ASR Software: Julius

- Developed since 1997 at Kyoto Univ. & other sites
- Open-source, multi-platform (Linux, Mac, Windows, iPhone)
- Open architecture
  - Independent of the acoustic & language models, so it has been ported to many languages
  - Ported to many applications (telephony, robots…)
- Standard model for Japanese
- Widely used research platform
- http://julius.sourceforge.jp
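The open-architecture point, a search engine that does not depend on the models it loads, can be illustrated with a small Python sketch. This is not Julius's API (Julius is written in C and configured through its own option files); the class and method names below are hypothetical, and the toy models exist only to show that swapping the language model changes the output without touching the decoder.

```python
from typing import Dict, List, Protocol, Sequence, Tuple


class AcousticModel(Protocol):
    def log_likelihood(self, features: Sequence[float], phones: Sequence[str]) -> float: ...


class LanguageModel(Protocol):
    def log_prob(self, words: Sequence[str]) -> float: ...


class Decoder:
    """Search code that sees only the model interfaces, never their internals."""

    def __init__(self, am: AcousticModel, lm: LanguageModel,
                 lexicon: Dict[str, List[str]]) -> None:
        self.am, self.lm, self.lexicon = am, lm, lexicon

    def score(self, features: Sequence[float], words: Sequence[str]) -> float:
        phones = [p for w in words for p in self.lexicon[w]]
        return self.am.log_likelihood(features, phones) + self.lm.log_prob(words)

    def decode(self, features: Sequence[float],
               hypotheses: Sequence[Tuple[str, ...]]) -> Tuple[str, ...]:
        # A real engine searches a lattice; this toy version rescores a fixed list.
        return max(hypotheses, key=lambda ws: self.score(features, ws))


class FlatAM:
    """Toy acoustic model: each phone costs -1.0, whatever the input."""

    def log_likelihood(self, features: Sequence[float], phones: Sequence[str]) -> float:
        return -1.0 * len(phones)


class TableLM:
    """Toy language model: a lookup table of sentence log-probabilities."""

    def __init__(self, table: Dict[Tuple[str, ...], float]) -> None:
        self.table = table

    def log_prob(self, words: Sequence[str]) -> float:
        return self.table.get(tuple(words), -100.0)


LEXICON = {
    "京都": ["ky", "o:", "t", "o"],
    "の": ["n", "o"],
    "天気": ["t", "e", "N", "k", "i"],   # "weather"
    "転機": ["t", "e", "N", "k", "i"],   # "turning point", same pronunciation
}
HYPS = [("京都", "の", "天気"), ("京都", "の", "転機")]

weather_lm = TableLM({HYPS[0]: -2.0, HYPS[1]: -6.0})
politics_lm = TableLM({HYPS[0]: -6.0, HYPS[1]: -2.0})

if __name__ == "__main__":
    for lm in (weather_lm, politics_lm):
        print(Decoder(FlatAM(), lm, LEXICON).decode([], HYPS))
```

Because `Decoder` depends only on the two `Protocol` interfaces, porting to a new language or domain means supplying new model objects and a lexicon, not changing the search code.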

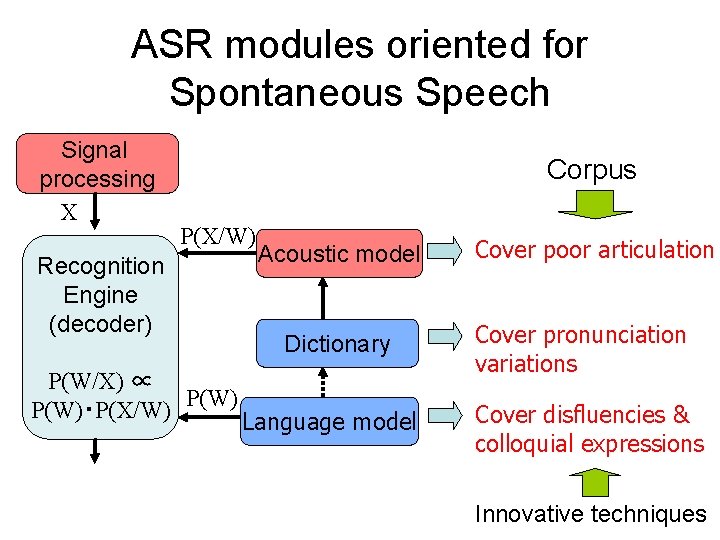

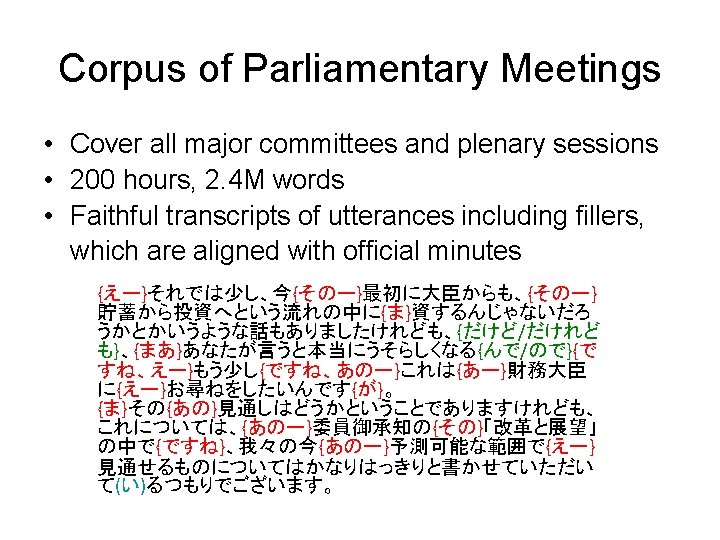

ASR modules oriented for spontaneous speech

- Same architecture: signal processing produces $X$, and the recognition engine (decoder) maximizes $P(W \mid X) \propto P(W)\,P(X \mid W)$
- Each model is trained on a spontaneous-speech corpus with innovative techniques:
  - Acoustic model: covers poor articulation
  - Dictionary: covers pronunciation variations
  - Language model: covers disfluencies & colloquial expressions
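One common way for the dictionary to cover pronunciation variation is to list several pronunciations per word, each with a probability $P(P \mid W)$, and to sum over them: $P(X \mid W) = \sum_P P(X \mid P)\,P(P \mid W)$. The Python sketch below assumes that formulation; the variant list for 京都 (a shortened long vowel, as can happen in fast speech) and all scores are hypothetical, and many decoders use only the best variant (a max) instead of the sum.

```python
import math
from typing import Dict, Iterable

# Hypothetical pronunciation dictionary: word -> {phone string: P(P|W)}.
LEXICON: Dict[str, Dict[str, float]] = {
    "京都": {"ky o: t o": 0.8,   # canonical, long vowel
             "ky o t o": 0.2},   # shortened vowel in fast, casual speech
}


def log_sum_exp(values: Iterable[float]) -> float:
    """Numerically stable log(sum(exp(v)))."""
    values = list(values)
    m = max(values)
    return m + math.log(sum(math.exp(v - m) for v in values))


def word_log_likelihood(word: str, am_loglik: Dict[str, float]) -> float:
    """log P(X|W) = log sum_P P(X|P) P(P|W), over the word's listed variants.

    am_loglik maps each phone string to log P(X|P) from the acoustic model.
    """
    return log_sum_exp(am_loglik[p] + math.log(prob)
                       for p, prob in LEXICON[word].items())


if __name__ == "__main__":
    # The speaker shortened the vowel, so the variant matches the audio better.
    am = {"ky o: t o": -50.0, "ky o t o": -45.0}
    print(word_log_likelihood("京都", am))   # about -46.58
    print(am["ky o: t o"])                   # canonical pronunciation alone: -50.0
```

Listing the variant raises the word's score when the speaker does not articulate the canonical form, which is the "poor articulation / pronunciation variation" problem the slide names.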

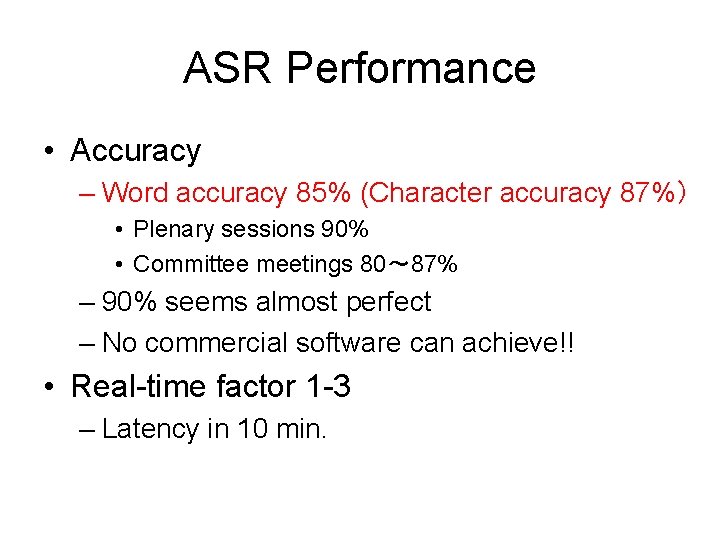

ASR Performance

- Accuracy
  - Word accuracy 85% (character accuracy 87%)
    - Plenary sessions: 90%
    - Committee meetings: 80–87%
  - 90% looks almost perfect
  - No commercial software achieves this level
- Real-time factor 1–3
  - Latency within 10 min.
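Word accuracy is conventionally computed from a minimum edit-distance alignment between the reference transcript and the ASR output, as (N − S − D − I)/N, where N is the number of reference tokens and S, D, I are substitutions, deletions, and insertions. Because Japanese is written without spaces, word accuracy depends on a morphological segmentation, which is one reason character accuracy is also reported. A minimal Python sketch with hypothetical example strings; the real-time factor is simply processing time divided by audio duration.

```python
from typing import Sequence


def edit_distance(ref: Sequence[str], hyp: Sequence[str]) -> int:
    """Minimum number of substitutions + deletions + insertions (Levenshtein)."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        cur = [i] + [0] * len(hyp)
        for j, h in enumerate(hyp, start=1):
            cur[j] = min(prev[j] + 1,               # deletion of r
                         cur[j - 1] + 1,            # insertion of h
                         prev[j - 1] + (r != h))    # substitution or match
        prev = cur
    return prev[-1]


def accuracy(ref: Sequence[str], hyp: Sequence[str]) -> float:
    """(N - S - D - I) / N; can go negative when there are many insertions."""
    return 1.0 - edit_distance(ref, hyp) / len(ref)


def real_time_factor(processing_sec: float, audio_sec: float) -> float:
    return processing_sec / audio_sec


if __name__ == "__main__":
    ref_words = ["京都", "の", "天気"]
    hyp_words = ["京都", "の", "転機"]
    print(accuracy(ref_words, hyp_words))                  # word accuracy: 1 - 1/3
    print(accuracy(list("京都の天気"), list("京都の転機")))  # character accuracy: 1 - 2/5
    print(real_time_factor(1200.0, 600.0))                 # 2.0
```

The same `accuracy` function serves both metrics; only the tokenization (words vs. `list(text)` characters) changes.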

Related Techniques

- Noise suppression & dereverberation
  - Not critical once matched training data are available
- Speaker change detection
  - Desirable, but current technology does not seem good enough
- Auto-editing
  - Filler removal: easy
  - Replacing colloquial expressions: non-trivial
  - Period insertion: still at the research stage
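Filler removal counts as easy because, once the recognizer outputs fillers as separate tokens, it reduces to filtering against a filler list. The Python sketch below assumes tokenized ASR output; the filler list is a small hypothetical sample. Plain あの is deliberately left out: it is also the demonstrative "that", the kind of ambiguity that makes the other auto-editing steps harder.

```python
from typing import Iterable, List

# Small hypothetical filler lexicon. Elongated forms like あのー are safe to drop;
# plain あの is omitted because it is also the demonstrative "that".
FILLERS = frozenset({"えー", "えーと", "えっと", "あのー"})


def remove_fillers(tokens: Iterable[str]) -> List[str]:
    """Drop tokens that exactly match an entry in the filler lexicon."""
    return [t for t in tokens if t not in FILLERS]


if __name__ == "__main__":
    asr_tokens = ["えー", "本日", "は", "あのー", "予算", "に", "ついて"]
    print("".join(remove_fillers(asr_tokens)))   # 本日は予算について
```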

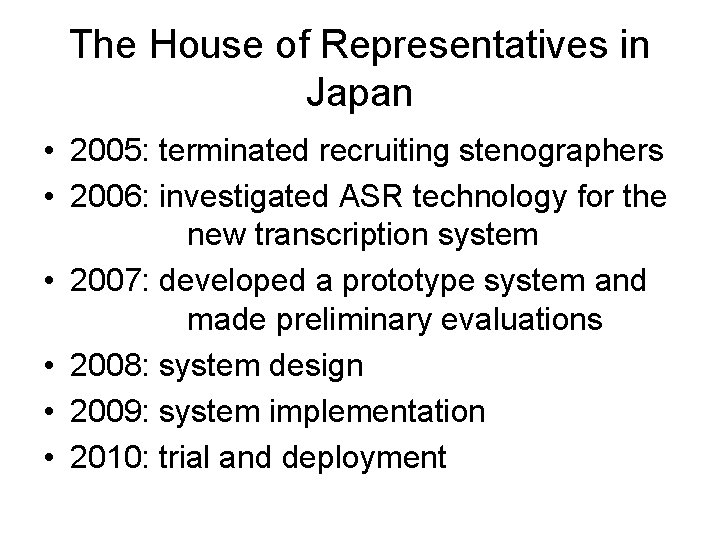

The House of Representatives in Japan

- 2005: stopped recruiting stenographers
- 2006: investigated ASR technology for the new transcription system
- 2007: developed a prototype system and carried out preliminary evaluations
- 2008: system design
- 2009: system implementation
- 2010: trial and deployment

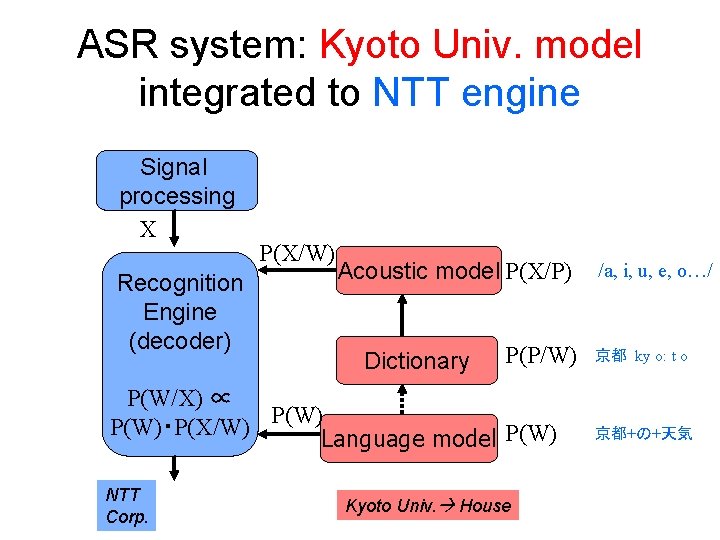

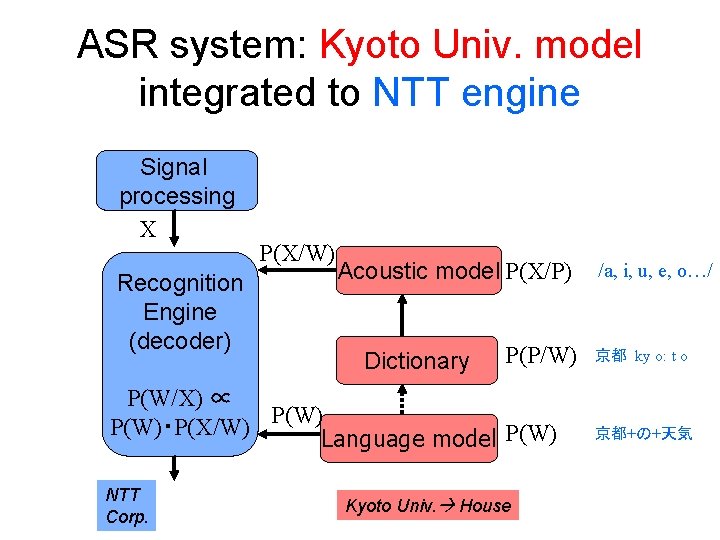

ASR system: Kyoto Univ. models integrated into the NTT engine

(Figure: the ASR architecture shown earlier, with the recognition engine (decoder) from NTT Corp. and the acoustic model $P(X \mid P)$, dictionary $P(P \mid W)$, and language model $P(W)$ from Kyoto Univ.; the House of Representatives also appears as a party to the system.)

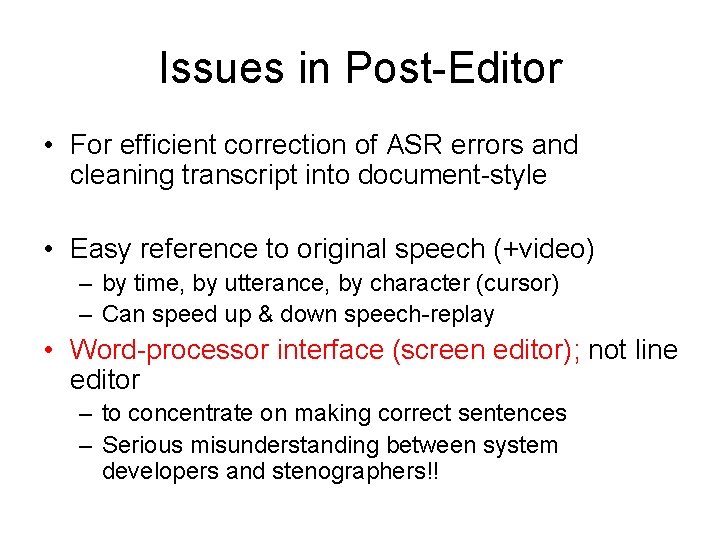

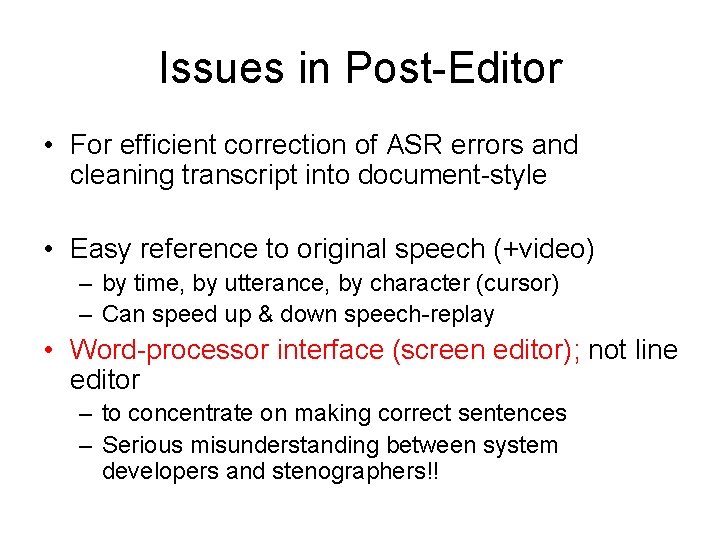

Issues in the Post-Editor

- Purpose: efficiently correct ASR errors and clean the transcript into document style
- Easy reference to the original speech (+ video)
  - By time, by utterance, or by character (cursor position)
  - Speech replay can be sped up or slowed down
- Word-processor interface (screen editor), not a line editor
  - Lets editors concentrate on producing correct sentences
  - There was serious misunderstanding between system developers and stenographers on this point
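Referencing the speech "by character (cursor)" needs a mapping from a character offset in the transcript to a time in the recording. A minimal Python sketch, assuming the ASR output comes with per-word start and end times; the `Word` type and the function names are hypothetical. Once editors start changing the text, the offsets would have to be updated alongside each edit, which this sketch does not attempt.

```python
import bisect
from dataclasses import dataclass
from typing import List


@dataclass
class Word:
    text: str
    start: float  # seconds from the start of the recording
    end: float


def char_offsets(words: List[Word]) -> List[int]:
    """Character offset at which each word begins in the concatenated text."""
    offsets, pos = [], 0
    for w in words:
        offsets.append(pos)
        pos += len(w.text)
    return offsets


def time_at_cursor(words: List[Word], offsets: List[int], cursor: int) -> float:
    """Start time of the word containing the cursor (clamped to the text)."""
    i = bisect.bisect_right(offsets, cursor) - 1
    i = max(0, min(i, len(words) - 1))
    return words[i].start


if __name__ == "__main__":
    words = [Word("本日", 0.00, 0.40), Word("は", 0.40, 0.55), Word("予算", 0.55, 1.10)]
    offsets = char_offsets(words)                 # [0, 2, 3]
    print(time_at_cursor(words, offsets, 1))      # inside 本日 -> 0.0
    print(time_at_cursor(words, offsets, 3))      # on 予算 -> 0.55
```

Binary search keeps each lookup logarithmic in the number of words, which matters for long sessions where the editor jumps around frequently.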

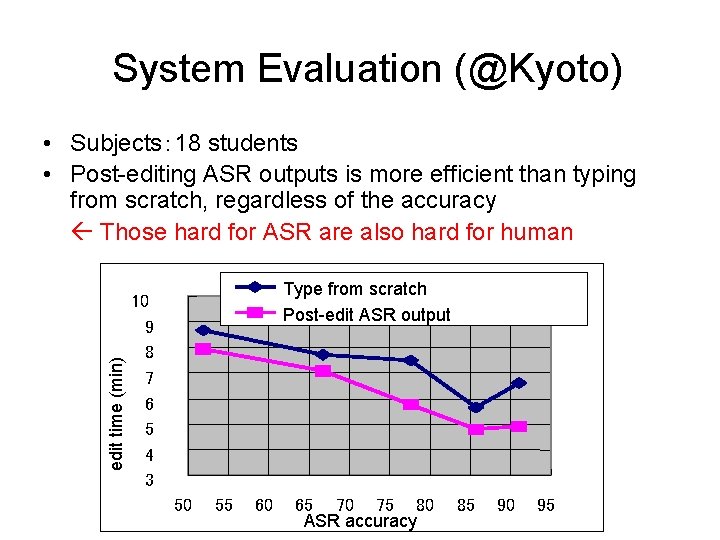

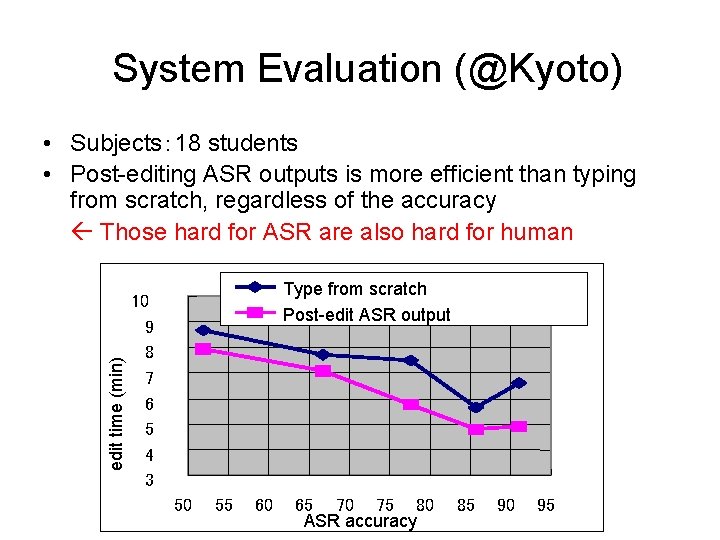

System Evaluation (@Kyoto)

- Subjects: 18 students
- Post-editing ASR output is more efficient than typing from scratch, regardless of ASR accuracy
- Speech that is hard for ASR is also hard for humans

(Figure: edit time (min, 3–10) vs. ASR accuracy (%, 50–95), comparing "type from scratch" and "post-edit ASR output".)

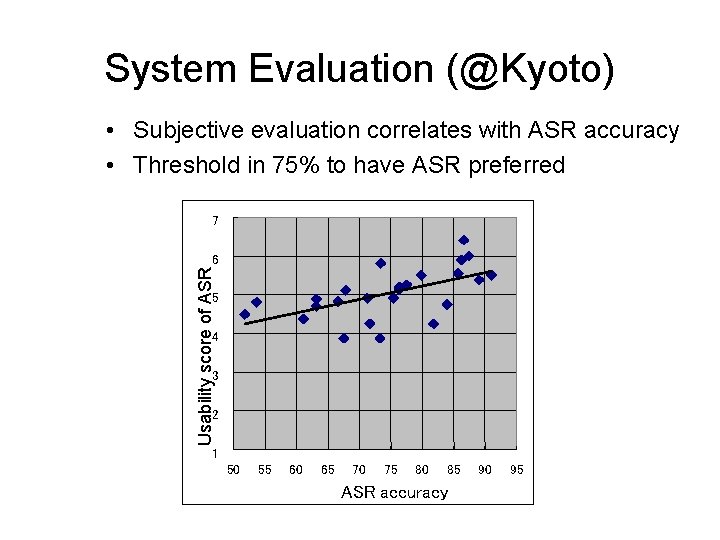

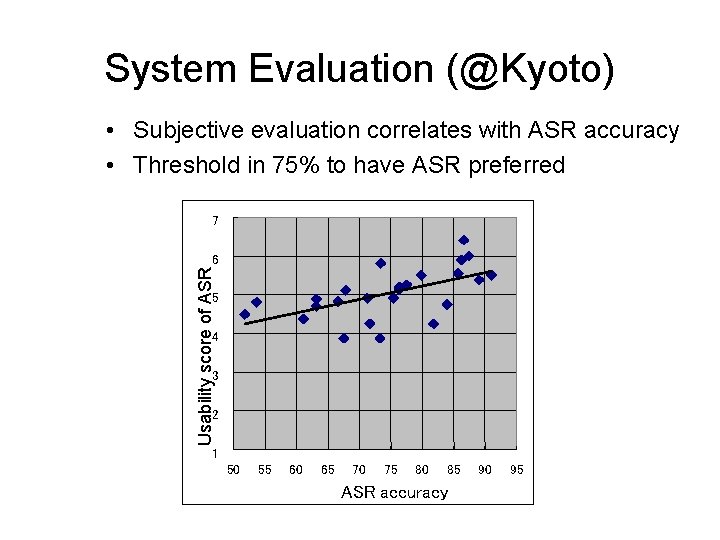

System Evaluation (@Kyoto)

- Subjective evaluation correlates with ASR accuracy
- ASR is preferred once accuracy exceeds a threshold of about 75%

(Figure: usability score of ASR (1–7) vs. ASR accuracy (%, 50–95).)

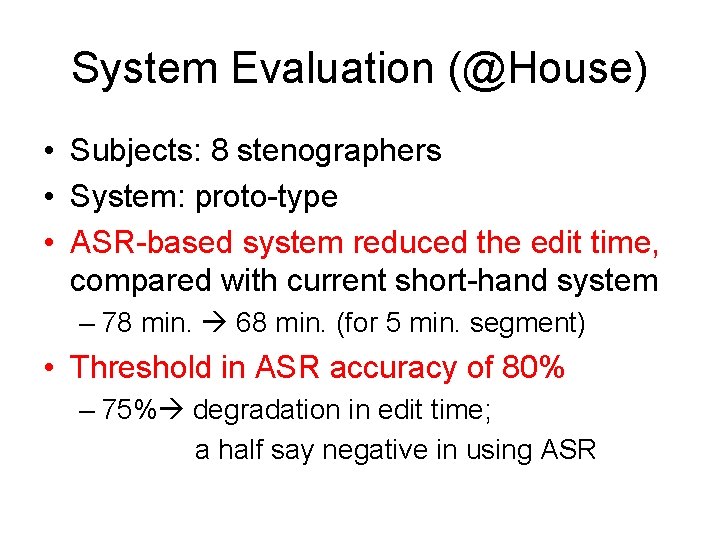

System Evaluation (@House)

- Subjects: 8 stenographers
- System: prototype
- The ASR-based system reduced edit time compared with the current shorthand system
  - 78 min. → 68 min. (for a 5-min. segment)
- Threshold at an ASR accuracy of about 80%
  - At 75%, edit time degraded, and half of the subjects were negative about using ASR
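For scale, the reported edit-time figures (78 min. with the shorthand system, 68 min. with the ASR-based system, per 5-min. segment) correspond to the following relative reduction:

$$\frac{78 - 68}{78} = \frac{10}{78} \approx 0.128$$

that is, roughly a 13% reduction in edit time.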

Side effects of the ASR-based system

- Everything (text/speech/video) is digitized and hyperlinked, enabling efficient search & retrieval
- Possibly less burden, so stenographers may be able to work on longer segments (still an open question)
- Significantly less special training needed than for the current shorthand system

Conclusions

- ASR of parliamentary meetings is feasible, given a large collection of data
  - ~100 hours of speech
  - ~1 billion words of text (meeting minutes)
  - Accuracy 85–90%
- Effective post-processing is still under investigation
- Research on automatic translation is also ongoing