Transactions and Reliability Sarah Diesburg Operating Systems CS 3430

Motivation
- File systems have lots of metadata:
  - Free blocks, directories, file headers, indirect blocks
- Metadata is heavily cached for performance

Problem
- System crashes
- OS needs to ensure that the file system does not reach an inconsistent state
- Example: move a file between directories
  - Remove a file from the old directory
  - Add a file to the new directory
- What happens when a crash occurs in the middle?

UNIX File System (Ad Hoc Failure Recovery)
- Metadata handling:
  - Uses a synchronous write-through caching policy
    - A call to update metadata does not return until the changes are propagated to disk
  - Updates are ordered
  - When crashes occur, run fsck to repair in-progress operations

Some Examples of Metadata Handling
- Undo effects not yet visible to users
  - If a new file is created, but not yet added to the directory
    - Delete the file
- Continue effects that are visible to users
  - If file blocks are already allocated, but not recorded in the bitmap
    - Update the bitmap
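The two repair rules above can be sketched as a toy consistency check. This is only an illustration, not how fsck is implemented: `files`, `directory`, and `bitmap` are hypothetical in-memory stand-ins for file headers, directory entries, and the free-block bitmap.

```python
def repair(files, directory, bitmap):
    """Toy repair pass over hypothetical in-memory metadata.

    files:     dict mapping file id -> list of block numbers (file headers)
    directory: set of file ids that some directory refers to
    bitmap:    set of block numbers marked as allocated
    """
    # Undo: a file that was created but never added to a directory
    # is not visible to users, so delete it and free its blocks.
    for file_id in list(files):
        if file_id not in directory:
            bitmap.difference_update(files.pop(file_id))

    # Continue: blocks that a visible file already uses must be
    # recorded as allocated, so finish updating the bitmap.
    for blocks in files.values():
        bitmap.update(blocks)


# File 1 is visible but block 11 is missing from the bitmap;
# file 2 was created but never linked into a directory.
files = {1: [10, 11], 2: [12]}
directory = {1}
bitmap = {10, 12}
repair(files, directory, bitmap)
```

After the call, only file 1 remains and the bitmap records exactly its two blocks.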

UFS User Data Handling
- Uses a write-back policy
  - Modified blocks are written to disk at 30-second intervals
    - Unless a user issues the sync system call
  - Data updates are not ordered
  - In many cases, consistent metadata is good enough
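As a rough user-space analogy (not the UFS kernel code), the difference between write-back and write-through can be seen in whether a writer waits for the disk. The sketch below uses Python's `os.fsync`; the helper names and file names are made up for illustration.

```python
import os
import tempfile


def write_back(path, data):
    """Return once the data is in the OS cache; the OS writes it to disk later."""
    with open(path, "wb") as f:
        f.write(data)


def write_through(path, data):
    """Return only after the OS reports the data was pushed to the device."""
    with open(path, "wb") as f:
        f.write(data)
        f.flush()             # drain Python's user-space buffer
        os.fsync(f.fileno())  # block until the kernel flushes this file


demo_dir = tempfile.mkdtemp()
cached_path = os.path.join(demo_dir, "cached.dat")
durable_path = os.path.join(demo_dir, "durable.dat")
write_back(cached_path, b"user data")
write_through(durable_path, b"metadata-like update")
```

Both files read back the same way while the system is up; the difference only shows after a crash, when the write-back copy may be missing or stale.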

Example: Vi
- Vi saves changes by doing the following
  1. Writes the new version in a temp file
     - Now we have old_file and new_temp
  2. Moves the old version to a different temp file
     - Now we have new_temp and old_temp
  3. Moves the new version into the real file
     - Now we have new_file and old_temp
  4. Removes the old version
     - Now we have new_file

Example: Vi
- When crashes occur
  - Looks for the leftover files
  - Moves forward or backward depending on the integrity of files
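The save-and-recover protocol described for Vi can be sketched as follows. This is a minimal illustration of the four steps, not Vi's actual code: the `.new_temp` and `.old_temp` suffixes and the recovery policy are assumptions chosen to match the slide's naming.

```python
import os
import tempfile


def temp_names(path):
    # Hypothetical naming scheme for the two temp files.
    return path + ".new_temp", path + ".old_temp"


def safe_save(path, contents):
    new_temp, old_temp = temp_names(path)
    # 1. Write the new version into a temp file and force it to disk.
    with open(new_temp, "w") as f:
        f.write(contents)
        f.flush()
        os.fsync(f.fileno())
    # 2. Move the old version to a different temp file.
    if os.path.exists(path):
        os.replace(path, old_temp)
    # 3. Move the new version into the real file.
    os.replace(new_temp, path)
    # 4. Remove the old version.
    if os.path.exists(old_temp):
        os.remove(old_temp)


def recover(path):
    new_temp, old_temp = temp_names(path)
    if not os.path.exists(path):
        if os.path.exists(new_temp):
            # Crash between steps 2 and 3: new_temp was fully written
            # before step 2 began, so move forward.
            os.replace(new_temp, path)
        elif os.path.exists(old_temp):
            # No usable new version: move backward to the old one.
            os.replace(old_temp, path)
    # Whatever is left over is no longer needed.
    for leftover in (new_temp, old_temp):
        if os.path.exists(leftover):
            os.remove(leftover)


demo_dir = tempfile.mkdtemp()
demo_path = os.path.join(demo_dir, "notes.txt")
safe_save(demo_path, "version 1")
safe_save(demo_path, "version 2")

# Simulate a crash between steps 2 and 3, then recover.
crash_path = os.path.join(demo_dir, "crashed.txt")
crash_new, crash_old = temp_names(crash_path)
with open(crash_new, "w") as f:
    f.write("new")
with open(crash_old, "w") as f:
    f.write("old")
recover(crash_path)
```

If a crash happens after step 1 but before step 2, the real file still exists, so this `recover` simply discards `new_temp` and keeps the old version.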

Transaction Approach
- A transaction groups operations as a unit, with the following characteristics:
  - Atomic: all operations either happen or they do not (no partial operations)
  - Serializable: transactions appear to happen one after the other
  - Durable: once a transaction happens, it is recoverable and can survive crashes

More on Transactions
- A transaction is not done until it is committed
- Once committed, a transaction is durable
- If a transaction fails to complete, it must roll back as if it did not happen at all
- Critical sections are atomic and serializable, but not durable

Transaction Implementation (One Thread)
- Example: money transfer

      Begin transaction
        x = x - 1;
        y = y + 1;
      Commit

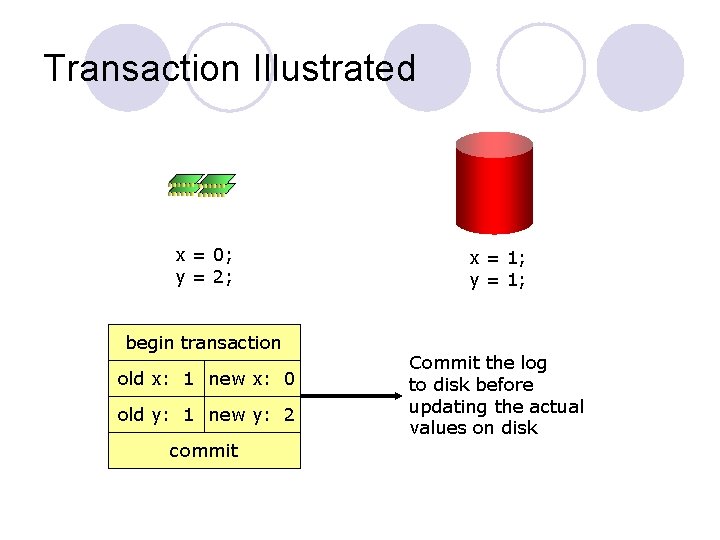

Transaction Implementation (One Thread)
- Common implementations involve the use of a log, a journal that is never erased
- A file system uses a write-ahead log to track all transactions

Transaction Implementation (One Thread)
- Once the changes to accounts x and y are recorded in the log, the log is committed to disk in a single write
- Actual changes to those accounts are done later

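Transaction Illustrated
- Values on disk: x = 1; y = 1;

Transaction Illustrated
- Updated values, not yet on disk: x = 0; y = 2;
- Values on disk: x = 1; y = 1;

Transaction Illustrated
- Updated values, not yet on disk: x = 0; y = 2;
- Log: begin transaction; old x: 1, new x: 0; old y: 1, new y: 2; commit
- Values on disk: x = 1; y = 1;
- Commit the log to disk before updating the actual values on disk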

Transaction Steps
1. Mark the beginning of the transaction
2. Log the changes in account x
3. Log the changes in account y
4. Commit
5. Modify account x on disk
6. Modify account y on disk

Scenarios of Crashes
- If a crash occurs after the commit
  - Replays the log to update accounts
- If a crash occurs before or during the commit
  - Rolls back and discards the transaction
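The transaction steps and both crash scenarios can be simulated with a small in-memory model. This is a sketch under stated assumptions: `disk` is a plain dictionary and `log` a plain list standing in for on-disk structures, and the `crash_point` argument is a made-up hook that stops the transfer early.

```python
def transfer(disk, log, crash_point=None):
    """Move 1 from x to y, logging the changes before applying them."""
    new_x, new_y = disk["x"] - 1, disk["y"] + 1
    log.append(("begin",))
    log.append(("update", "x", disk["x"], new_x))  # old and new value of x
    log.append(("update", "y", disk["y"], new_y))  # old and new value of y
    if crash_point == "before_commit":
        return
    log.append(("commit",))
    if crash_point == "after_commit":
        return
    disk["x"] = new_x  # the actual values are modified last
    disk["y"] = new_y


def recover(disk, log):
    """Replay committed transactions; discard uncommitted ones."""
    pending = []
    for record in log:
        if record[0] == "begin":
            pending = []
        elif record[0] == "update":
            pending.append(record)
        elif record[0] == "commit":
            for _, name, _old, new in pending:
                disk[name] = new  # writing the new value twice is harmless
            pending = []
    # Updates still pending here have no commit record and are ignored.


# Crash after the commit: recovery replays the log.
committed_disk, committed_log = {"x": 1, "y": 1}, []
transfer(committed_disk, committed_log, crash_point="after_commit")
recover(committed_disk, committed_log)

# Crash before the commit: recovery discards the transaction.
aborted_disk, aborted_log = {"x": 1, "y": 1}, []
transfer(aborted_disk, aborted_log, crash_point="before_commit")
recover(aborted_disk, aborted_log)
```

Because the log stores the new values rather than the operations, replaying it more than once leaves the same result.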

Two-Phase Locking (Multiple Threads)
- Logging alone is not enough to prevent multiple transactions from trashing one another (not serializable)
- Solution: two-phase locking
  1. Acquire all locks
  2. Perform updates and release all locks
- Thread A cannot see thread B’s changes until thread B commits and releases its locks
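A minimal sketch of the two phases, using Python threads and one lock per account. The `Account` class is hypothetical, and acquiring locks in a fixed order (by name) is an added assumption to avoid deadlock; the slides do not specify an ordering. The sketch also assumes the two accounts are distinct.

```python
import threading


class Account:
    def __init__(self, name, balance):
        self.name = name
        self.balance = balance
        self.lock = threading.Lock()


def transfer(src, dst, amount):
    # Phase 1: acquire all locks before touching any data.
    # Sorting by name gives every thread the same acquisition order.
    first, second = sorted((src, dst), key=lambda account: account.name)
    first.lock.acquire()
    second.lock.acquire()
    try:
        src.balance -= amount
        dst.balance += amount
    finally:
        # Phase 2: release all locks only after the updates are done.
        second.lock.release()
        first.lock.release()


x = Account("x", 100)
y = Account("y", 100)


def worker(src, dst):
    for _ in range(1000):
        transfer(src, dst, 1)


threads = [threading.Thread(target=worker, args=pair)
           for pair in [(x, y), (y, x), (x, y), (y, x)]]
for t in threads:
    t.start()
for t in threads:
    t.join()
```

Two workers move money each way, so the balances end where they started; without the locks, concurrent updates could be lost.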

Transactions in File Systems
- Almost all file systems built since 1985 use write-ahead logging
  - NTFS, HFS+, ext3, ext4, …
- (+) Eliminates running fsck after a crash
- (+) Write-ahead logging provides reliability
- (-) All modifications need to be written twice

Log-Structured File System (LFS)
- If logging is so great, why don’t we treat everything as log entries?
- Log-structured file system
  - Everything is a log entry (file headers, directories, data blocks)
  - Write the log only once
    - Use version stamps to distinguish between old and new entries

More on LFS
- New log entries are always appended to the end of the existing log
  - All writes are sequential
  - Seeks only occur during reads
    - Not so bad due to temporal locality and caching
- Problem:
  - Need to create more contiguous space all the time
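The append-only idea with version stamps can be illustrated with a toy key-value log. This is not an LFS implementation: `TinyLog` is a hypothetical class, and its `clean` method is just one simple way to picture reclaiming space by dropping superseded entries.

```python
class TinyLog:
    """Toy append-only log; each entry is (version, key, value)."""

    def __init__(self):
        self.entries = []
        self.version = 0

    def write(self, key, value):
        # Never overwrite in place: always append a newer version.
        self.version += 1
        self.entries.append((self.version, key, value))

    def read(self, key):
        # The entry with the highest version stamp wins, so scan
        # from the end of the log backward.
        for _version, entry_key, value in reversed(self.entries):
            if entry_key == key:
                return value
        raise KeyError(key)

    def clean(self):
        # Keep only the newest entry for each key to free up space.
        latest = {}
        for entry in self.entries:
            latest[entry[1]] = entry
        self.entries = sorted(latest.values())


log = TinyLog()
log.write("a", "first")
log.write("b", "other")
log.write("a", "second")
entries_before_clean = len(log.entries)
log.clean()
```

The log holds three entries before cleaning and two afterward, and reading `"a"` returns the newer value in both cases.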

RAID and Reliability
- So far, we assume that we have a single disk
- What if we have multiple disks?
  - The chance of a single-disk failure increases
- RAID: redundant array of independent disks
  - Standard way of organizing disks and classifying the reliability of multi-disk systems
  - General methods: data duplication, parity, and error-correcting codes (ECC)

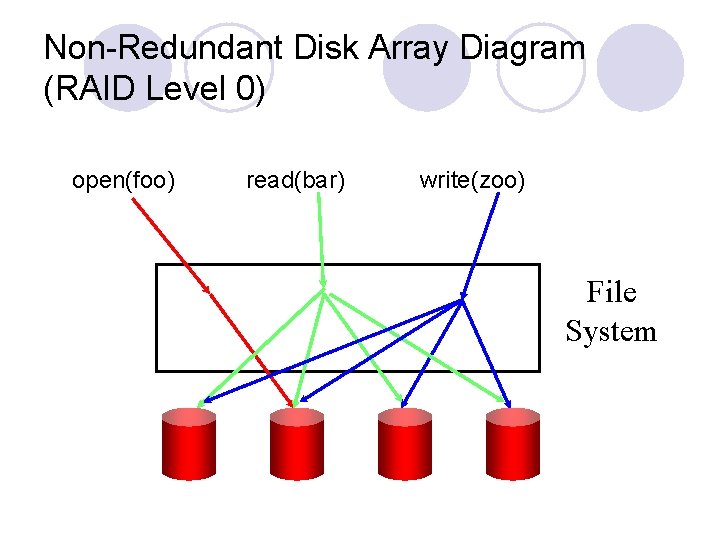

RAID 0
- No redundancy
- Uses block-level striping across disks
  - i.e., 1st block stored on disk 1, 2nd block stored on disk 2
- Failure of any one disk causes data loss
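Block-level striping amounts to a simple mapping from a logical block number to a disk and a position on that disk. The sketch below assumes round-robin placement and counts blocks and disks from 0, whereas the slide counts from 1; `locate_block` is a made-up helper name.

```python
def locate_block(block_number, num_disks):
    """Map a logical block to (disk index, block offset on that disk)."""
    disk = block_number % num_disks
    offset = block_number // num_disks
    return disk, offset


# With 4 disks, logical blocks 0..3 land on disks 0..3,
# and block 4 wraps around to disk 0 at the next offset.
placement = [locate_block(b, 4) for b in range(6)]
```

Consecutive blocks land on different disks, which is why large sequential transfers can use all disks at once.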

Non-Redundant Disk Array Diagram (RAID Level 0)
- Diagram labels: open(foo), read(bar), write(zoo), File System

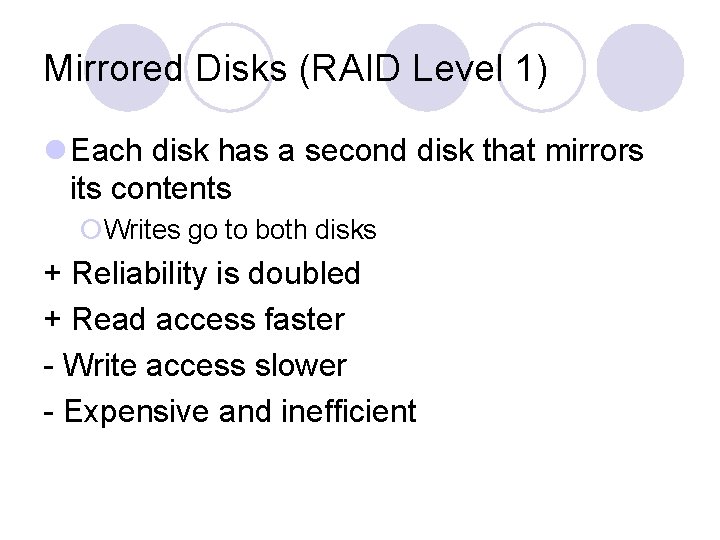

Mirrored Disks (RAID Level 1)
- Each disk has a second disk that mirrors its contents
  - Writes go to both disks
- (+) Reliability is doubled
- (+) Read access faster
- (-) Write access slower
- (-) Expensive and inefficient

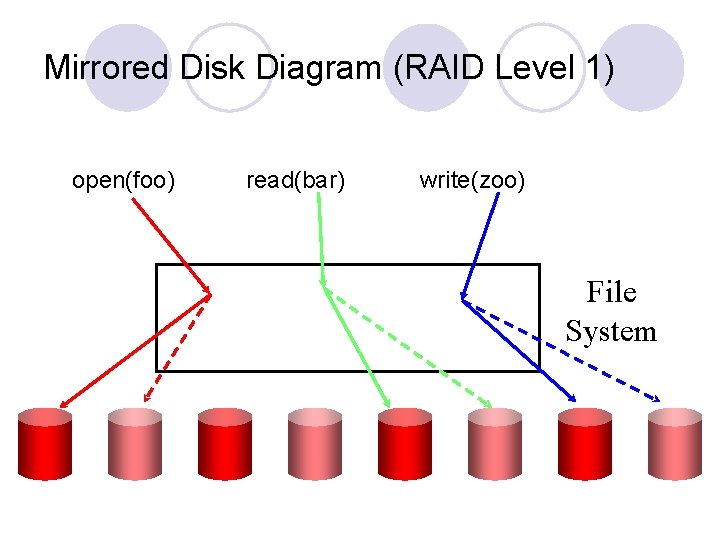

Mirrored Disk Diagram (RAID Level 1)
- Diagram labels: open(foo), read(bar), write(zoo), File System

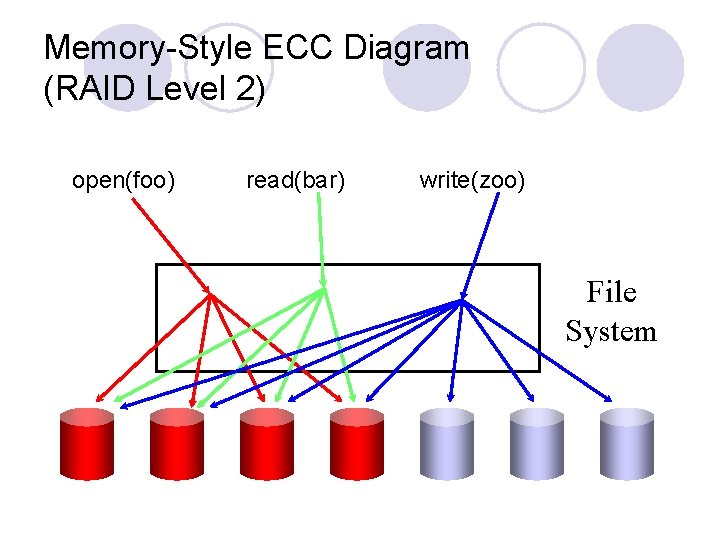

Memory-Style ECC Diagram (RAID Level 2)
- Diagram labels: open(foo), read(bar), write(zoo), File System

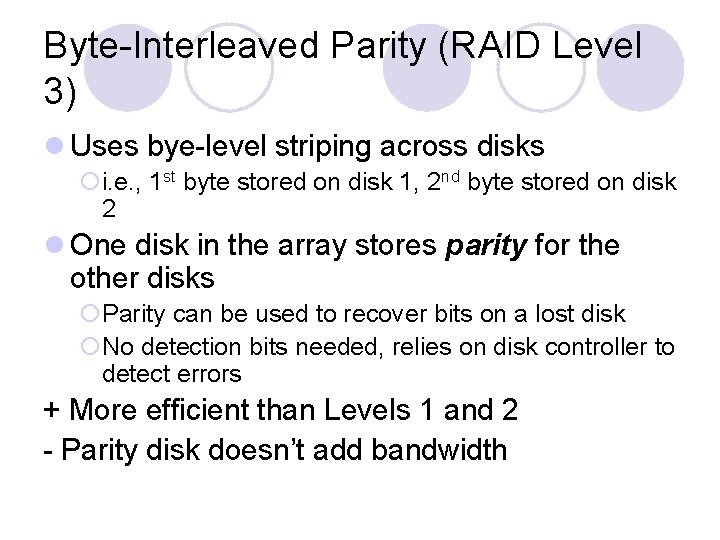

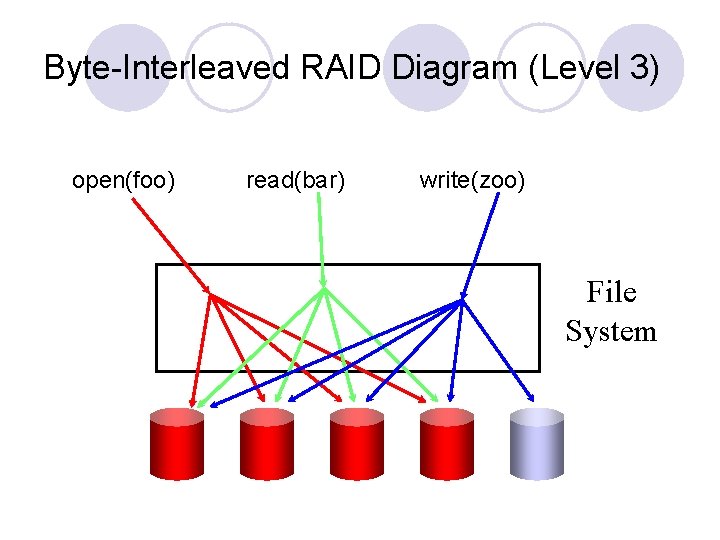

Byte-Interleaved Parity (RAID Level 3)
- Uses byte-level striping across disks
  - i.e., 1st byte stored on disk 1, 2nd byte stored on disk 2
- One disk in the array stores parity for the other disks
  - Parity can be used to recover bits on a lost disk
  - No detection bits needed, relies on disk controller to detect errors
- (+) More efficient than Levels 1 and 2
- (-) Parity disk doesn’t add bandwidth

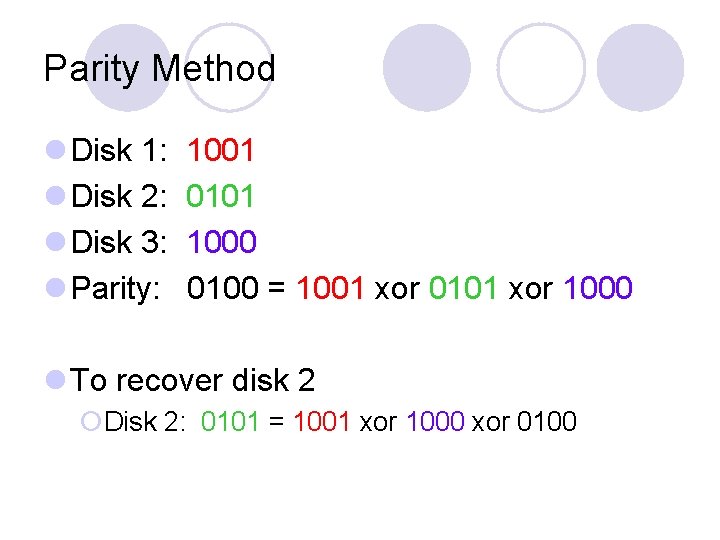

Parity Method
- Disk 1: 1001
- Disk 2: 0101
- Disk 3: 1000
- Parity: 0100 = 1001 xor 0101 xor 1000
- To recover disk 2
  - Disk 2: 0101 = 1001 xor 1000 xor 0100
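The same arithmetic can be checked in a few lines of Python, using the bit patterns from the slide. The `parity` helper is a made-up name; it simply XORs all the values it is given.

```python
from functools import reduce
from operator import xor


def parity(blocks):
    """XOR all blocks together."""
    return reduce(xor, blocks)


disk1, disk2, disk3 = 0b1001, 0b0101, 0b1000
parity_block = parity([disk1, disk2, disk3])

# If disk 2 is lost, XOR the surviving disks with the parity block.
recovered_disk2 = parity([disk1, disk3, parity_block])
```

Recovery works because XOR-ing a value with itself cancels out, so everything except the missing disk's contents drops away.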

Byte-Interleaved RAID Diagram (Level 3)
- Diagram labels: open(foo), read(bar), write(zoo), File System

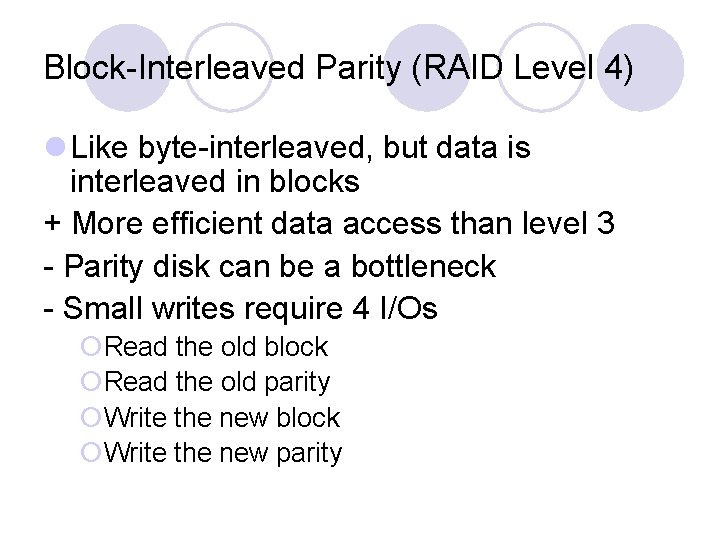

Block-Interleaved Parity (RAID Level 4)
- Like byte-interleaved, but data is interleaved in blocks
- (+) More efficient data access than level 3
- (-) Parity disk can be a bottleneck
- (-) Small writes require 4 I/Os
  - Read the old block
  - Read the old parity
  - Write the new block
  - Write the new parity
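The four I/Os of a small write follow from the XOR property of parity: the new parity is the old parity with the old block removed and the new block added. The sketch below models each disk as a Python list of integer blocks, which is an assumption for illustration only.

```python
def small_write(data_disks, parity_disk, disk_index, stripe, new_block):
    """Update one block and its parity without reading the other data disks."""
    old_block = data_disks[disk_index][stripe]   # I/O 1: read the old block
    old_parity = parity_disk[stripe]             # I/O 2: read the old parity
    data_disks[disk_index][stripe] = new_block   # I/O 3: write the new block
    # I/O 4: write the new parity. XOR-ing out the old block and XOR-ing
    # in the new one gives the parity of the updated stripe.
    parity_disk[stripe] = old_parity ^ old_block ^ new_block


# One stripe across three data disks plus a parity disk.
data_disks = [[0b1001], [0b0101], [0b1000]]
parity_disk = [0b0100]
small_write(data_disks, parity_disk, disk_index=1, stripe=0, new_block=0b1111)
```

Every small write touches the single parity disk, which is why that disk becomes a bottleneck at this level.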

Block-Interleaved Parity Diagram (RAID Level 4)
- Diagram labels: open(foo), read(bar), write(zoo), File System

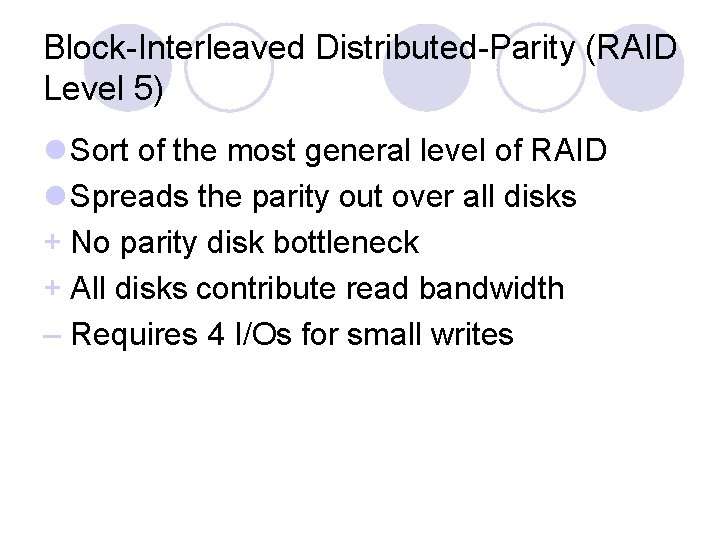

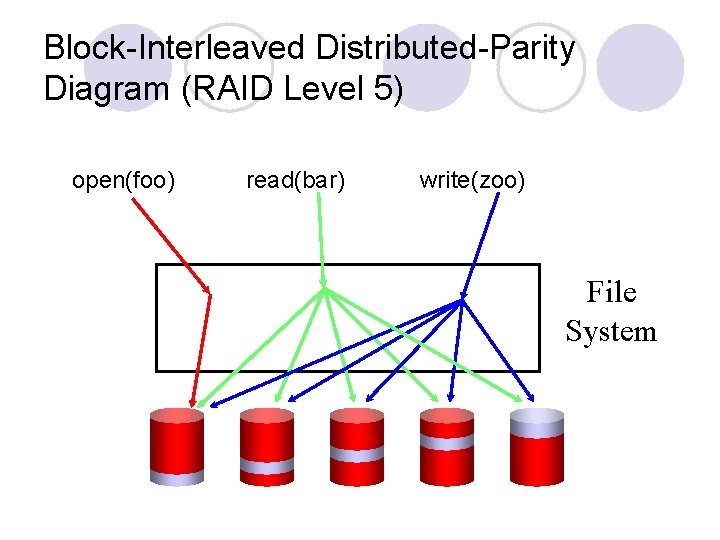

Block-Interleaved Distributed-Parity (RAID Level 5)
- Arguably the most general-purpose level of RAID
- Spreads the parity out over all disks
- (+) No parity disk bottleneck
- (+) All disks contribute read bandwidth
- (-) Requires 4 I/Os for small writes
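Spreading parity over all disks means the parity block moves to a different disk on each stripe. The sketch below shows one possible rotation; real arrays use several different layouts, so the formula in `parity_disk` is an assumption chosen for simplicity.

```python
def parity_disk(stripe, num_disks):
    """Pick which disk holds parity for a stripe (one possible rotation)."""
    return (num_disks - 1 - stripe) % num_disks


def layout(num_stripes, num_disks):
    """Return one row per stripe, with 'P' for parity and 'D' for data."""
    rows = []
    for stripe in range(num_stripes):
        p = parity_disk(stripe, num_disks)
        rows.append(["P" if disk == p else "D" for disk in range(num_disks)])
    return rows


rows = layout(4, 4)
for row in rows:
    print(" ".join(row))
```

Over four stripes on four disks, each disk holds parity exactly once, so parity updates are shared across the array instead of landing on a single disk.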

Block-Interleaved Distributed-Parity Diagram (RAID Level 5)
- Diagram labels: open(foo), read(bar), write(zoo), File System

Other RAIDs
- RAID 6
  - Extends RAID 5 by adding another parity block
  - Uses block-level striping with two parity blocks across all disks
  - 4-drive minimum, can survive two drive failures
- RAID 10 (RAID 1 + RAID 0)
  - Combines disk mirroring and striping
- RAID 50 (RAID 5 + RAID 0)
  - Combines distributed parity with striping
