Tradeoffs in contextual bandit learning
Alekh Agarwal, Microsoft Research
Joint work with Daniel Hsu, John Langford, Lihong Li, Satyen Kale and Rob Schapire

Learning to interact: example #1
• Loop:
  1. Patient arrives with symptoms, medical history, genome, …
  2. Physician prescribes treatment.
  3. Patient’s health responds (e.g., improves, worsens).
• Goal: prescribe treatments that yield good health outcomes.

Learning to interact: example #2
• Loop:
  1. User visits website with profile, browsing history, …
  2. Website operator chooses content/ads to display.
  3. User reacts to content/ads (e.g., click, “like”).
• Goal: choose content/ads that yield desired user behavior.

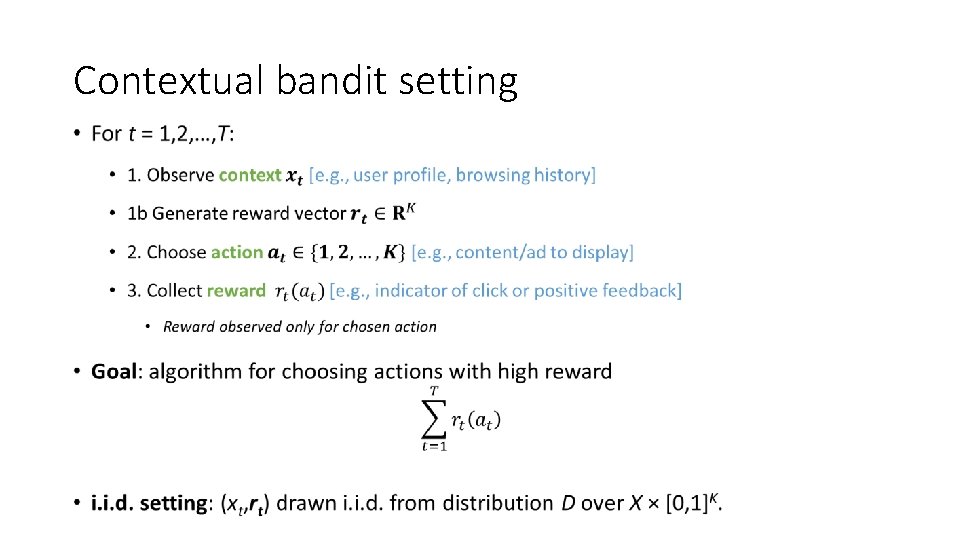

Contextual bandit setting •
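The loop shared by the two examples above can be sketched in a few lines of Python. This is a minimal sketch rather than the talk's formal model: `run_contextual_bandit`, `uniform_random`, and `toy_reward` are hypothetical names, and the two-context environment is invented purely for illustration.

```python
import random

def run_contextual_bandit(contexts, reward_fn, choose_action, num_actions, seed=0):
    """Run the observe-context / choose-action / see-one-reward loop."""
    rng = random.Random(seed)
    history = []  # (context, action, reward) triples: all the feedback the learner gets
    for x in contexts:
        a = choose_action(x, history, num_actions, rng)
        r = reward_fn(x, a)  # the reward is revealed for the chosen action only
        history.append((x, a, r))
    return history

def uniform_random(x, history, num_actions, rng):
    """Placeholder learner: pure exploration, ignores context and history."""
    return rng.randrange(num_actions)

def toy_reward(x, a):
    """Hypothetical environment: action 0 suits "young", action 1 suits "old"."""
    return 1.0 if (x == "young") == (a == 0) else 0.0

history = run_contextual_bandit(
    ["young", "old", "young", "old"], toy_reward, uniform_random, num_actions=2
)
total_reward = sum(r for _, _, r in history)
```

Any real learner would replace `uniform_random` with a rule that uses both the context and the history; the loop itself stays the same.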

Challenges
• Fundamental dilemma
  • Exploit what has been learned
  • Explore to find which behaviors lead to high rewards
• Need to use context effectively
  • Different actions are preferred under different contexts
  • Might not see the same context twice
• Computational efficiency

Special case: Multi-armed bandits
• Reward information only on selected action
• No context
• Goal: Do as well as best single action
• Tacit assumption: there is one action which always gives high rewards
  • E.g.: Single treatment/content/ad that is right for the entire population

From actions to policies •

Learning with contexts and policies •

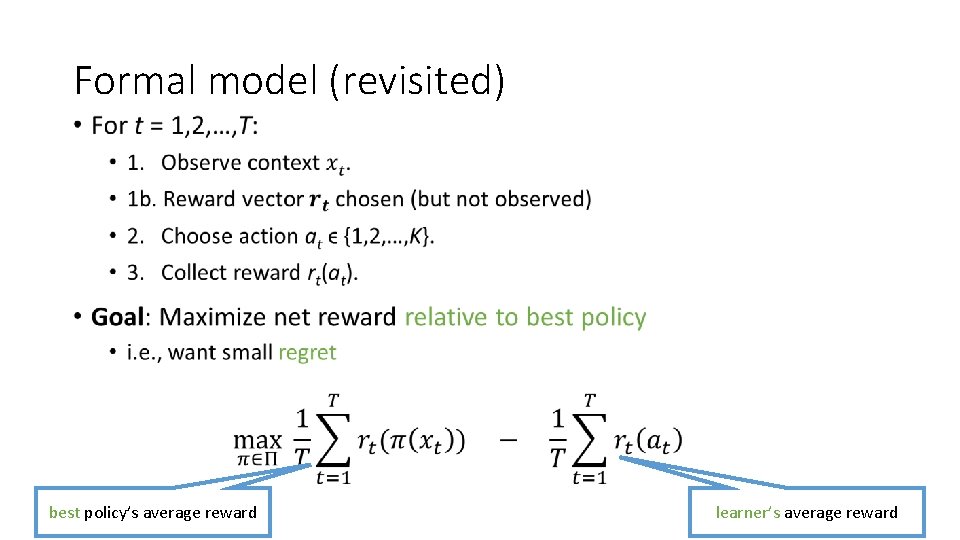

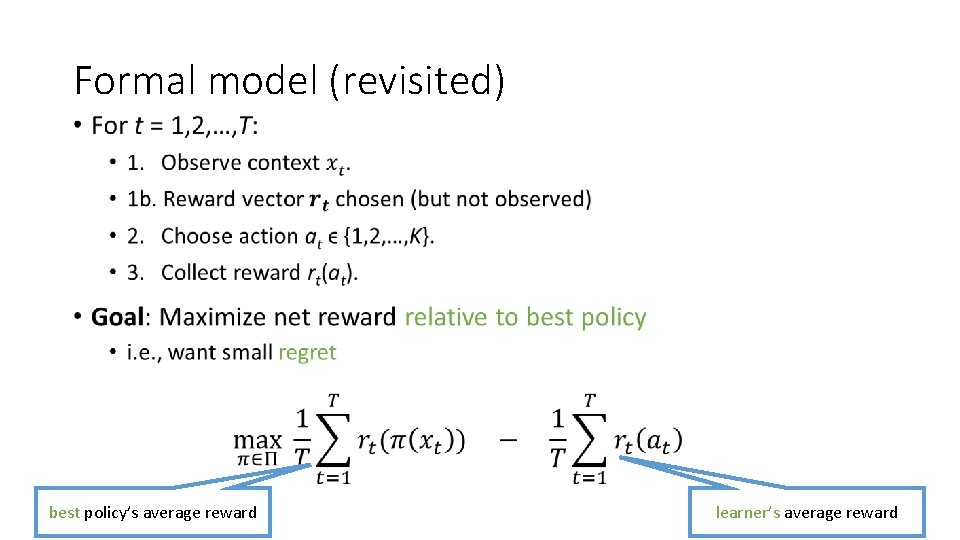

Formal model (revisited) • Regret = (best policy’s average reward) − (learner’s average reward)

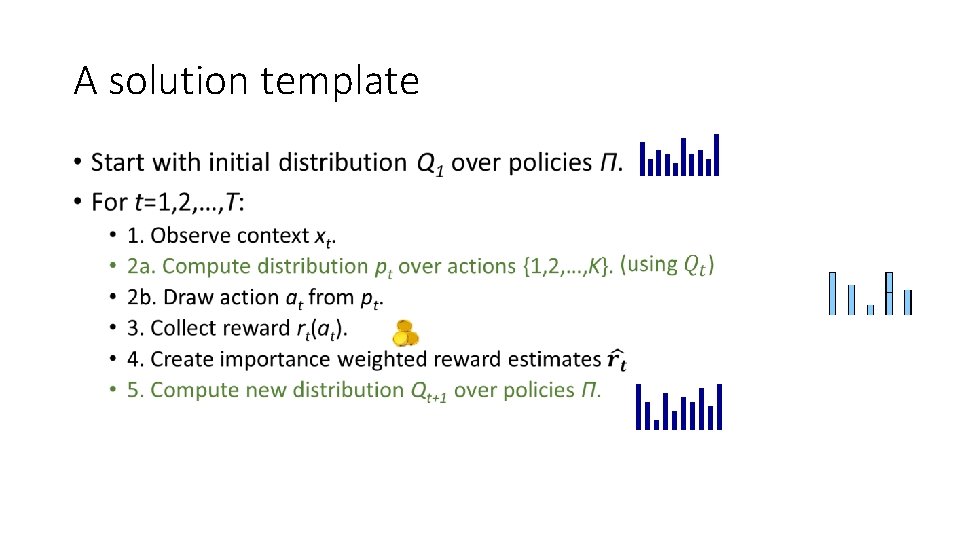

A solution template •

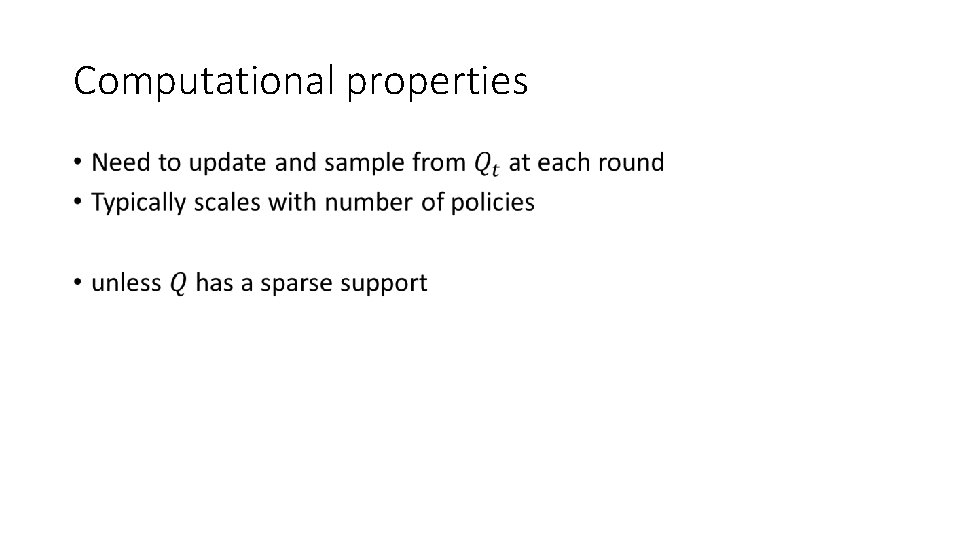

Computational properties •

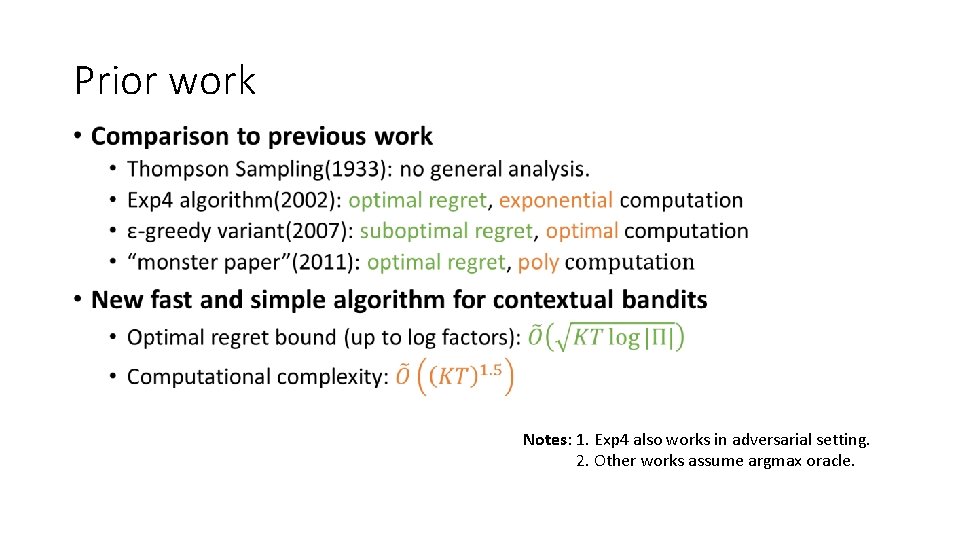

Prior work
• Notes:
  1. Exp4 also works in adversarial setting.
  2. Other works assume argmax oracle.

This talk
• New and general algorithm for contextual bandit problem
• Optimal statistical performance
• Much faster and simpler than predecessors
• Computational barrier for a class of algorithms

Formal model (revisited) • Regret = (best policy’s average reward) − (learner’s average reward)

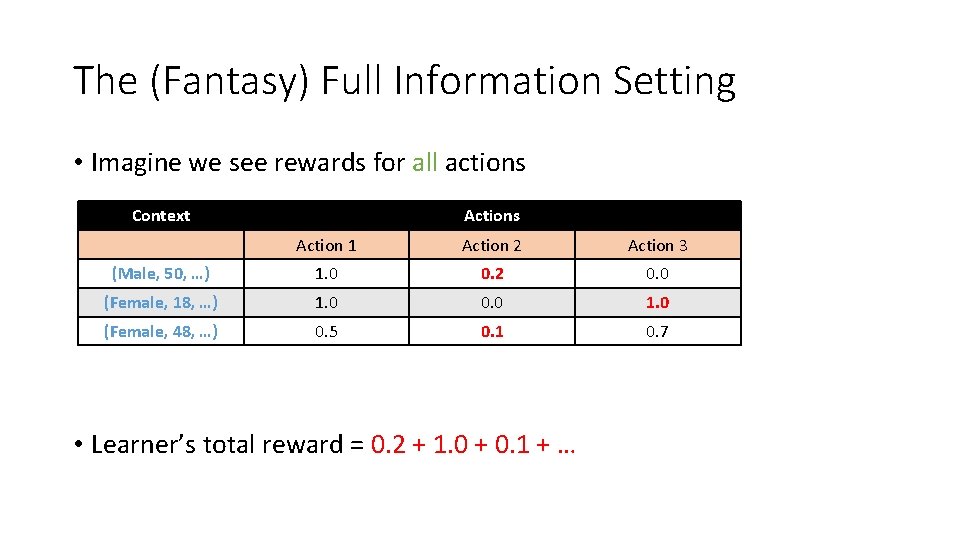

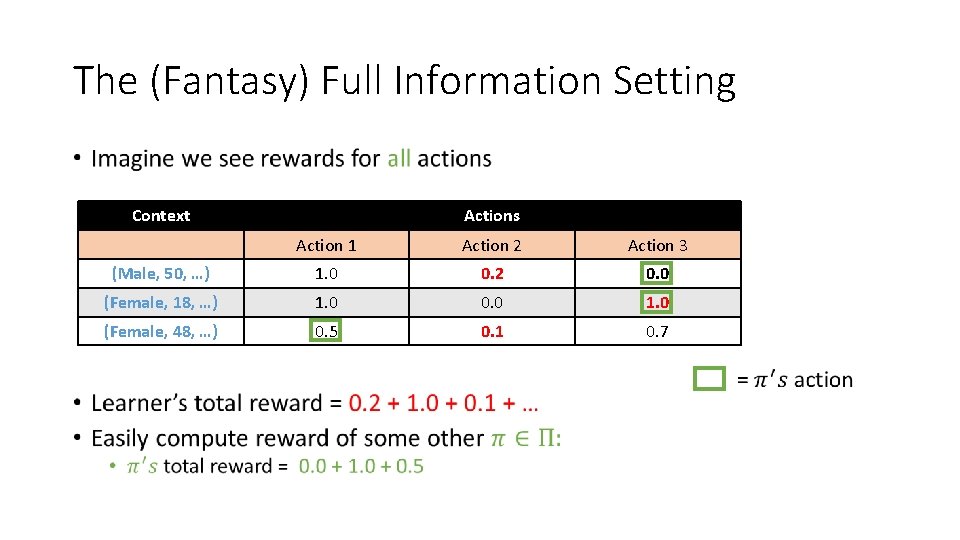

The (Fantasy) Full Information Setting
• Imagine we see rewards for all actions

| Context | Action 1 | Action 2 | Action 3 |
| --- | --- | --- | --- |
| (Male, 50, …) | 1.0 | 0.2 | 0.0 |
| (Female, 18, …) | 1.0 | 0.0 | 1.0 |
| (Female, 48, …) | 0.5 | 0.1 | 0.7 |

• Learner’s total reward = 0.2 + 1.0 + 0.1 + …

The (Fantasy) Full Information Setting •

| Context | Action 1 | Action 2 | Action 3 |
| --- | --- | --- | --- |
| (Male, 50, …) | 1.0 | 0.2 | 0.0 |
| (Female, 18, …) | 1.0 | 0.0 | 1.0 |
| (Female, 48, …) | 0.5 | 0.1 | 0.7 |

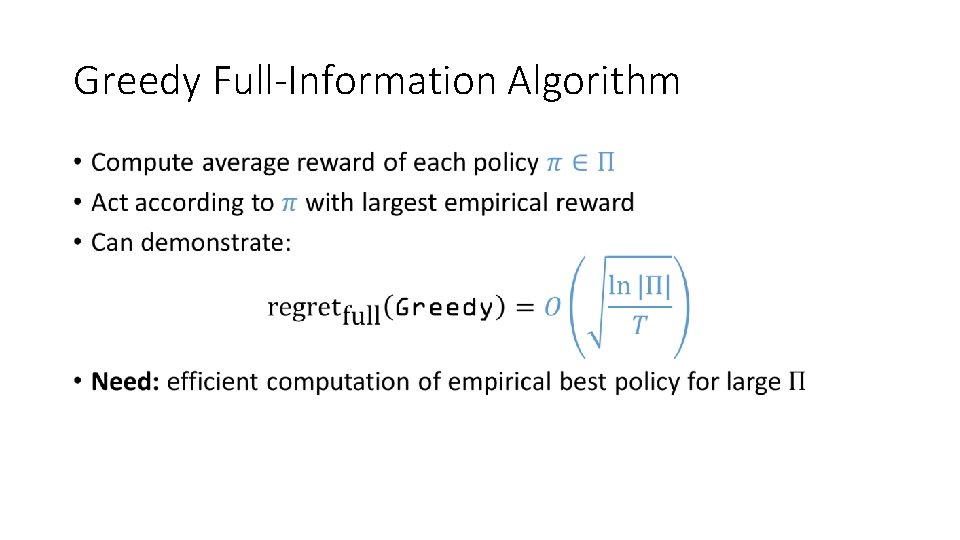
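With full information, any policy can be scored directly on the table, and regret is just a difference of averages. The sketch below does this for the three rounds shown on the slide. The policy class is a hypothetical one chosen for illustration (three constant policies and one context-dependent rule), not the class used in the talk, and actions are 0-indexed.

```python
# Full-information data from the slide's table: (context, rewards for Actions 1-3).
rounds = [
    (("Male", 50), [1.0, 0.2, 0.0]),
    (("Female", 18), [1.0, 0.0, 1.0]),
    (("Female", 48), [0.5, 0.1, 0.7]),
]
learner_rewards = [0.2, 1.0, 0.1]  # the learner's rewards listed on the slide

# Hypothetical policy class (an assumption, not from the talk).
policies = {
    "always Action 1": lambda x: 0,
    "always Action 2": lambda x: 1,
    "always Action 3": lambda x: 2,
    "Action 3 for women, Action 1 for men": lambda x: 2 if x[0] == "Female" else 0,
}

def average_reward(policy):
    """Average reward the policy would have earned on every round."""
    return sum(r[policy(x)] for x, r in rounds) / len(rounds)

policy_avg = {name: average_reward(pi) for name, pi in policies.items()}
best_policy = max(policy_avg, key=policy_avg.get)
learner_avg = sum(learner_rewards) / len(learner_rewards)
regret = policy_avg[best_policy] - learner_avg
print(best_policy, round(policy_avg[best_policy], 3), round(regret, 3))
```

On these three rounds the context-dependent rule averages 0.9, against roughly 0.83 for the best single action, which is the gap that competing with policies rather than actions is meant to capture.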

Greedy Full-Information Algorithm •

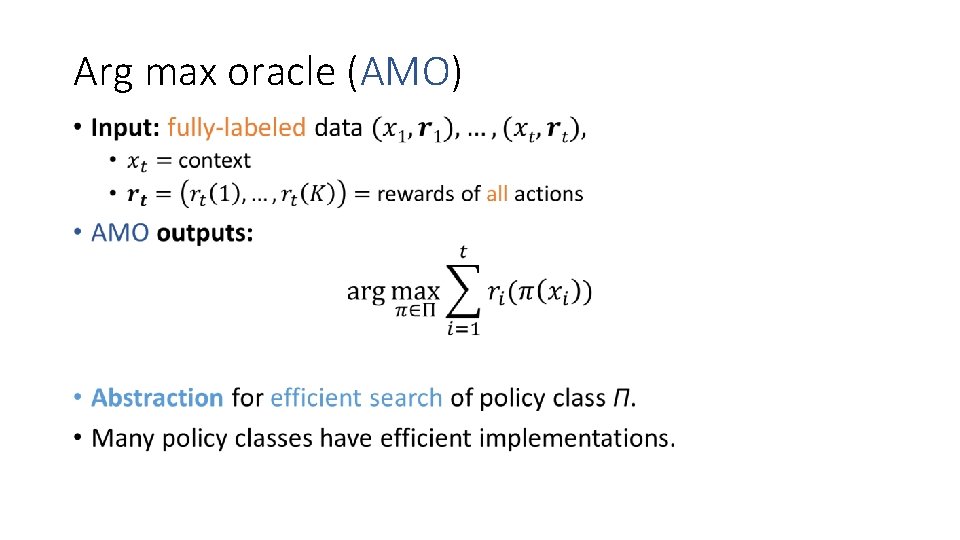

Arg max oracle (AMO) •

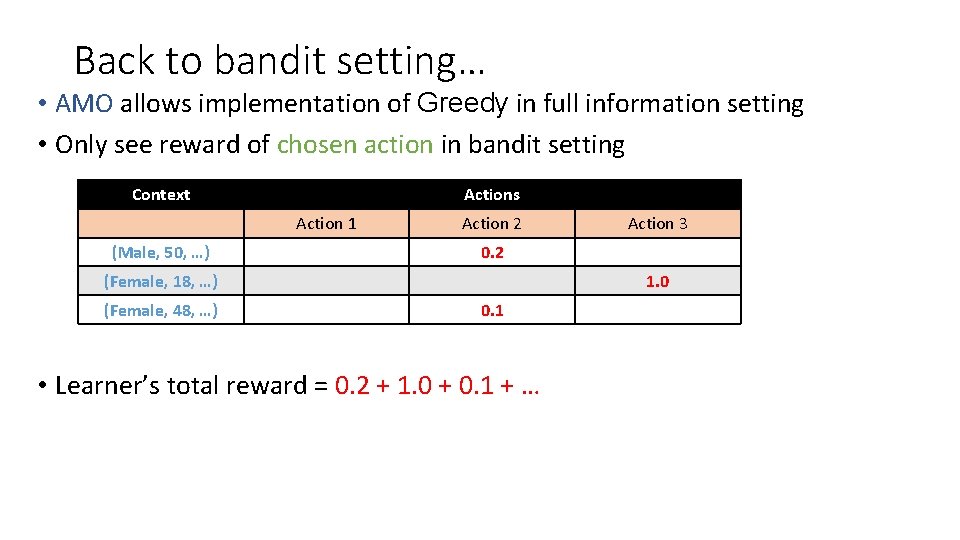

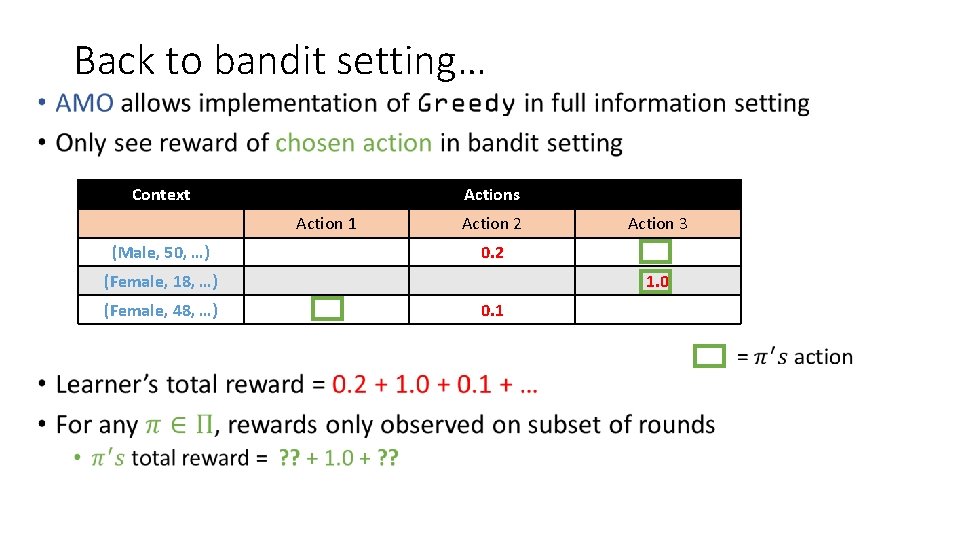
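As a rough illustration, an argmax oracle over a small finite policy class can be written by brute force, and Greedy then calls it once per round on the data seen so far. This is only a sketch under stated assumptions: `argmax_oracle` and `greedy_full_information` are hypothetical names, the policy class is invented, and enumeration is feasible only for tiny classes. In practice the oracle would presumably be a supervised learning routine rather than a loop over policies.

```python
def argmax_oracle(policy_class, data):
    """Return the policy with the largest total reward on `data`.

    data: list of (context, reward_vector) pairs. Ties go to the earliest policy.
    """
    return max(policy_class, key=lambda pi: sum(r[pi(x)] for x, r in data))

def greedy_full_information(policy_class, rounds):
    """Each round, play the policy the oracle prefers on all past rounds."""
    seen, total = [], 0.0
    for x, r in rounds:
        pi = argmax_oracle(policy_class, seen)  # empty data: first policy wins the tie
        total += r[pi(x)]
        seen.append((x, r))  # full information: the whole reward vector is revealed
    return total

# Hypothetical policies (0-indexed actions).
def always_action_1(x): return 0
def always_action_2(x): return 1
def always_action_3(x): return 2
def by_gender(x): return 2 if x[0] == "Female" else 0

policy_class = [always_action_1, always_action_2, always_action_3, by_gender]
rounds = [
    (("Male", 50), [1.0, 0.2, 0.0]),
    (("Female", 18), [1.0, 0.0, 1.0]),
    (("Female", 48), [0.5, 0.1, 0.7]),
]
best = argmax_oracle(policy_class, rounds)
greedy_total = greedy_full_information(policy_class, rounds)
```

The learner never inspects the policies directly; everything goes through `argmax_oracle`, which mirrors the idea of accessing the policy class only via the AMO.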

Back to bandit setting…
• AMO allows implementation of Greedy in full information setting
• Only see reward of chosen action in bandit setting

| Context | Action 1 | Action 2 | Action 3 |
| --- | --- | --- | --- |
| (Male, 50, …) | | 0.2 | |
| (Female, 18, …) | | | 1.0 |
| (Female, 48, …) | | 0.1 | |

• Learner’s total reward = 0.2 + 1.0 + 0.1 + …

Back to bandit setting… •

| Context | Action 1 | Action 2 | Action 3 |
| --- | --- | --- | --- |
| (Male, 50, …) | | 0.2 | |
| (Female, 18, …) | | | 1.0 |
| (Female, 48, …) | | 0.1 | |

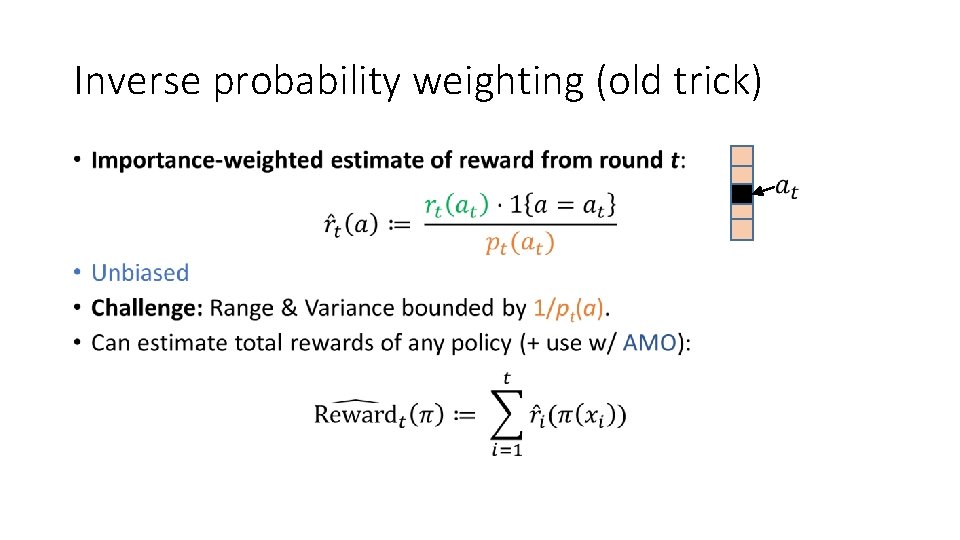

Inverse probability weighting (old trick) •

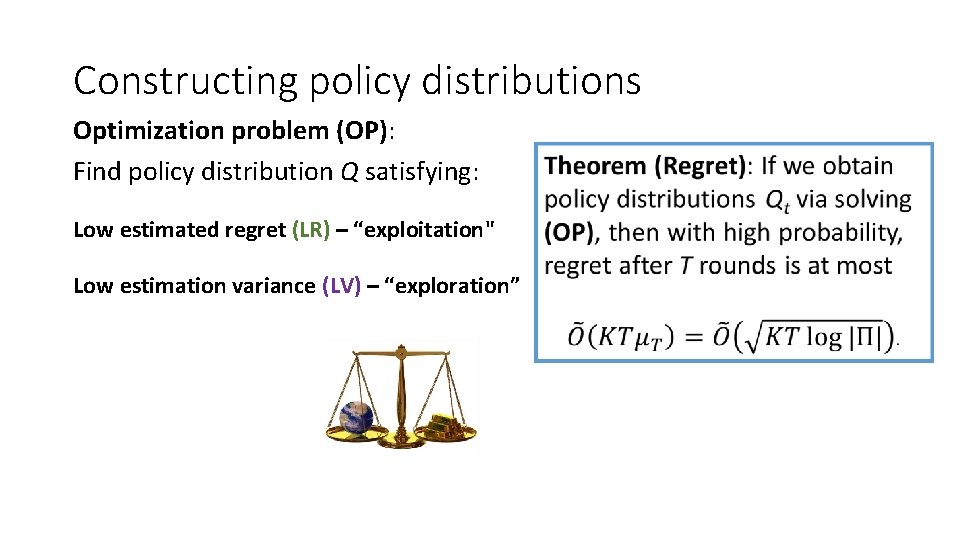
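The standard form of this trick divides the observed reward by the probability with which the chosen action was played and assigns zero to every other action. A minimal sketch follows; `ipw_rewards` is a hypothetical helper name and the probabilities are made up for illustration. The loop enumerates every possible choice to check that the estimate is unbiased, meaning its expectation equals the true reward vector.

```python
def ipw_rewards(chosen_action, observed_reward, probs):
    """Inverse-probability-weighted reward estimate for one round.

    probs[a] is the probability with which action a was chosen.
    """
    estimate = [0.0] * len(probs)
    estimate[chosen_action] = observed_reward / probs[chosen_action]
    return estimate

true_rewards = [0.5, 0.1, 0.7]  # never fully visible to the learner
probs = [0.2, 0.5, 0.3]         # hypothetical action probabilities

# Exact expectation over the random choice of action.
expected = [0.0] * len(probs)
for a, p in enumerate(probs):
    estimate = ipw_rewards(a, true_rewards[a], probs)
    for i, value in enumerate(estimate):
        expected[i] += p * value

# Largest value any single estimate can take: grows as probabilities shrink.
max_estimate = max(true_rewards[a] / probs[a] for a in range(len(probs)))
```

The estimate is unbiased for any strictly positive `probs`, but a single estimate can be as large as the reward divided by the smallest probability, which is why the variance of these estimates needs to be controlled.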

Constructing policy distributions
• Optimization problem (OP): Find policy distribution Q satisfying:
  • Low estimated regret (LR) – “exploitation”
  • Low estimation variance (LV) – “exploration”

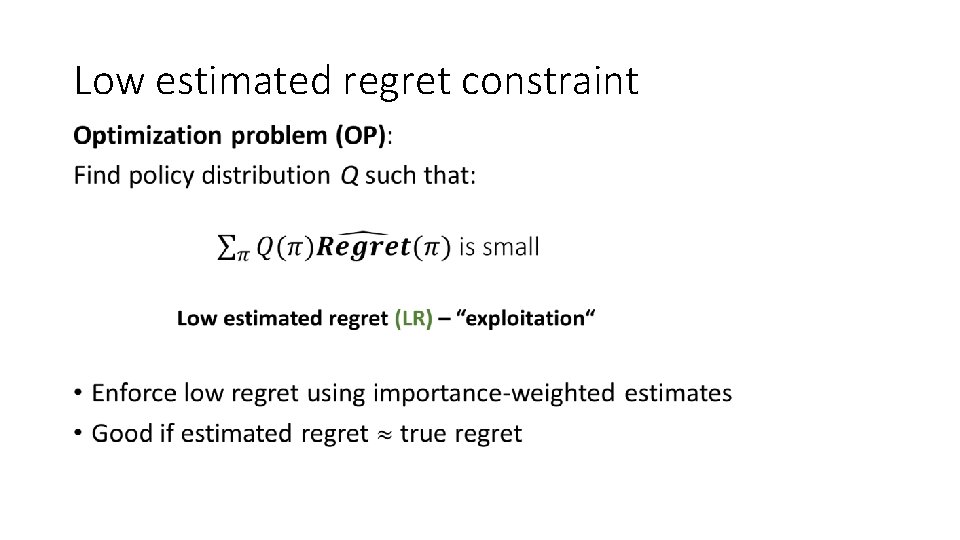
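The precise LR and LV constraints are not reproduced in this text, so the sketch below only shows the mechanics around them: how a distribution over policies induces a distribution over actions for a given context, and how a per-action probability floor caps the importance weights. The smoothing rule with floor `mu`, the helper name `action_distribution`, the policies, and the weights are all assumptions made for illustration.

```python
import random

def action_distribution(weights, policies, context, num_actions, mu):
    """Project a distribution over policies onto actions, with floor mu per action.

    Assumes weights sum to 1 and 0 <= mu <= 1 / num_actions.
    """
    assert 0 <= mu <= 1.0 / num_actions
    probs = [mu] * num_actions
    for w, pi in zip(weights, policies):
        probs[pi(context)] += (1 - num_actions * mu) * w
    return probs

# Hypothetical policies (0-indexed actions) and a hypothetical distribution Q.
def always_action_1(x): return 0
def by_gender(x): return 2 if x[0] == "Female" else 0

policies = [always_action_1, by_gender]
weights = [0.7, 0.3]
context = ("Female", 18)

probs = action_distribution(weights, policies, context, num_actions=3, mu=0.05)
action = random.Random(0).choices(range(3), weights=probs)[0]
inverse_prob = 1.0 / probs[action]  # importance weight for the sampled action
```

Because every action keeps probability at least `mu`, the importance weight never exceeds `1 / mu`. Concentrating `weights` on policies with low estimated regret is the exploitation side, while spreading probability so that these weights stay small is the exploration side.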

Low estimated regret constraint •

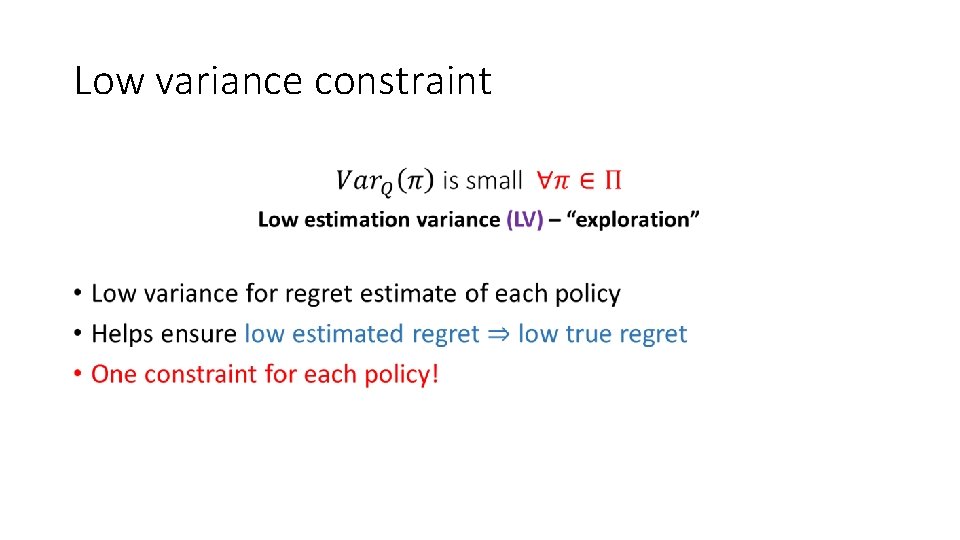

Low variance constraint •

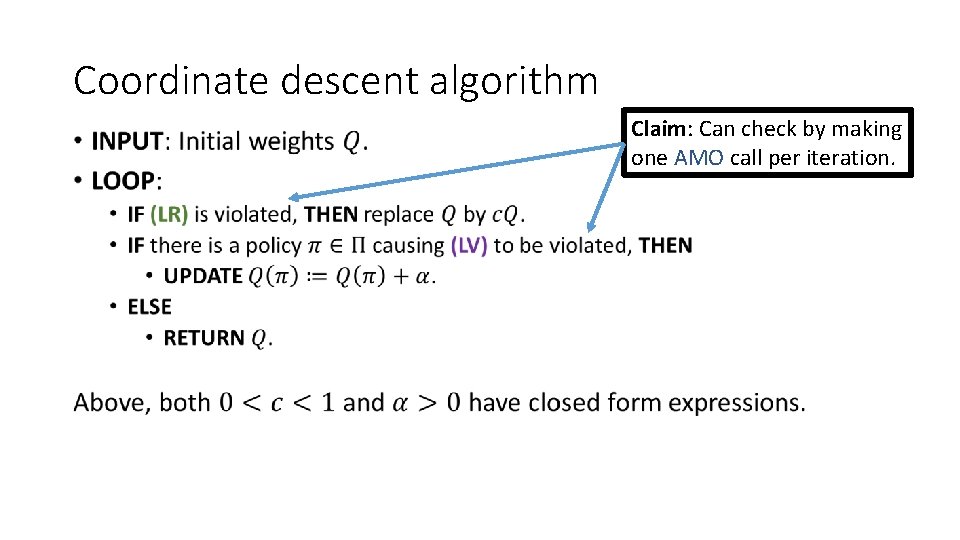

Coordinate descent algorithm • Claim: Can check by making one AMO call per iteration.

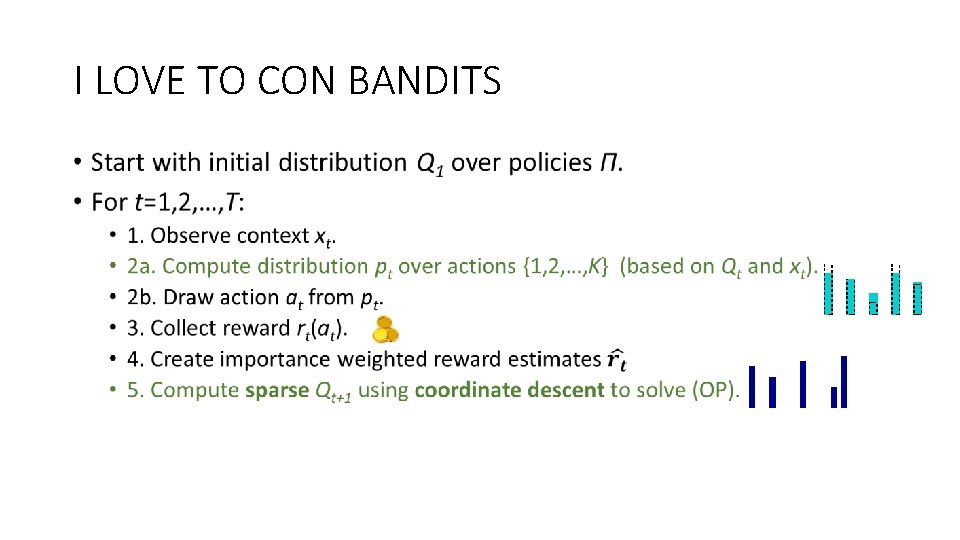

I LOVE TO CON BANDITS •

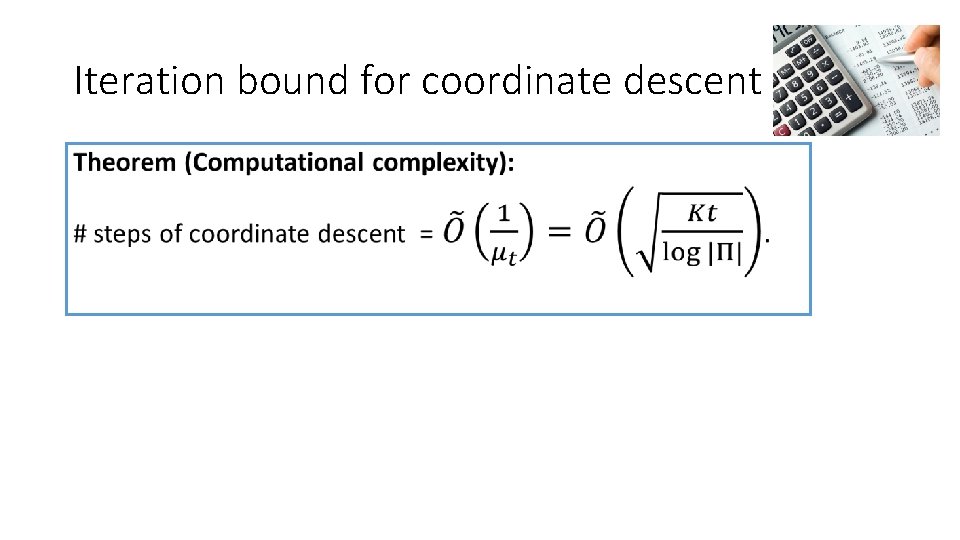

Iteration bound for coordinate descent •

Warm-start •

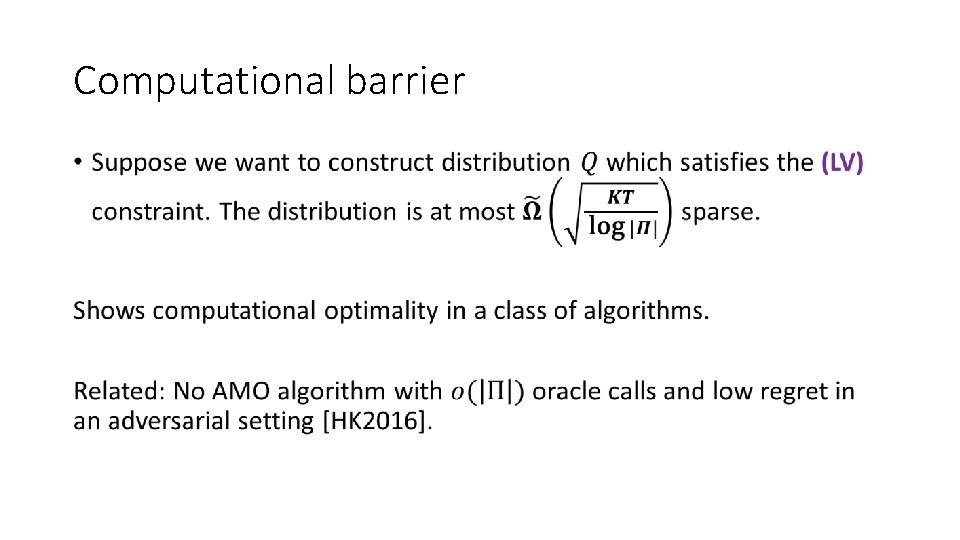

Computational barrier •

Conclusion
• Contextual bandit reduction to supervised learning
• Access policy class only via AMO.
• New coordinate descent algorithm.
• Statistically optimal and computationally practical.
• Learn more: http://arxiv.org/pdf/1402.0555.pdf

Open problems and future steps
• More adaptive algorithm + analysis (similar to Epoch-greedy)?
• Algorithm for an online oracle?
• Can we use a computationally efficient AMO?
• Extensions to structured actions (such as rankings, lists …)
• Extensions to Reinforcement Learning
