Towards Robot Theatre • Marek Perkowski • Department of Electrical and Computer Engineering, Portland State University, Portland, Oregon, 97207-0751

Week 2 • Lectures 3 and 4

Humanoid Robots and Robot Toys

Talking Robots • Many talking robots exist, but they are still very primitive. • Work with elderly and disabled. • Actors for robot theatre, agents for advertisement, education and entertainment. • Designing inexpensive natural size humanoid caricature and realistic robot heads. • We concentrate on Machine Learning techniques used to teach robots behaviors, natural language dialogs and facial gestures. • Dog.com from Japan. • Work in progress.

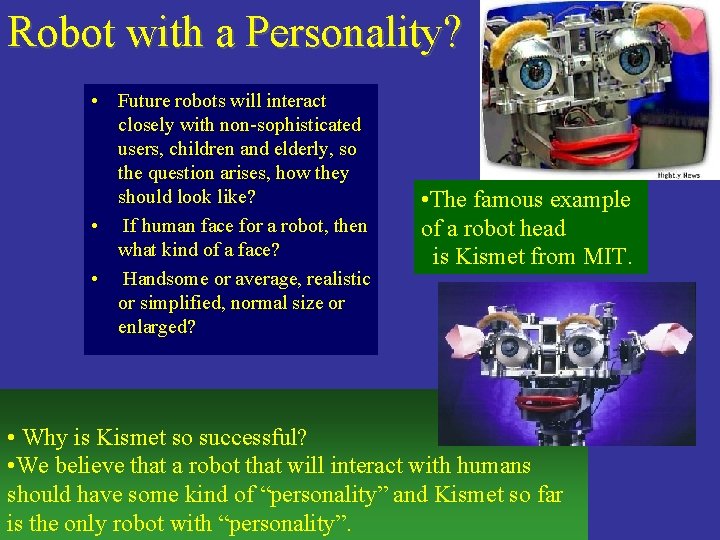

Robot with a Personality? • Future robots will interact closely with non-sophisticated users, children and the elderly, so the question arises: what should they look like? • If a robot has a human face, then what kind of face? • Handsome or average, realistic or simplified, normal size or enlarged? • The famous example of a robot head is Kismet from MIT. • Why is Kismet so successful? • We believe that a robot that will interact with humans should have some kind of “personality”, and Kismet so far is the only robot with “personality”.

Robot face should be friendly and funny • The Muppets of Jim Henson are hard-to-match examples of puppet artistry and animation perfection. • We are interested in the robot’s personality as expressed by its: – behavior, – facial gestures, – emotions, – learned speech patterns.

Behavior, Dialog and Learning • Words communicate only about 35% of the information transmitted from a sender to a receiver in human-to-human communication. The remaining information is included in para-language. • Emotions, thoughts, decisions and intentions of a speaker can be recognized earlier than they are verbalized. • NASA • Robot activity as a mapping of the sensed environment and internal states to behaviors and new internal states (emotions, energy levels, etc.). • Our goal is to uniformly integrate verbal and non-verbal robot behaviors.

Morita’s Theory

Robot Metaphors and Models

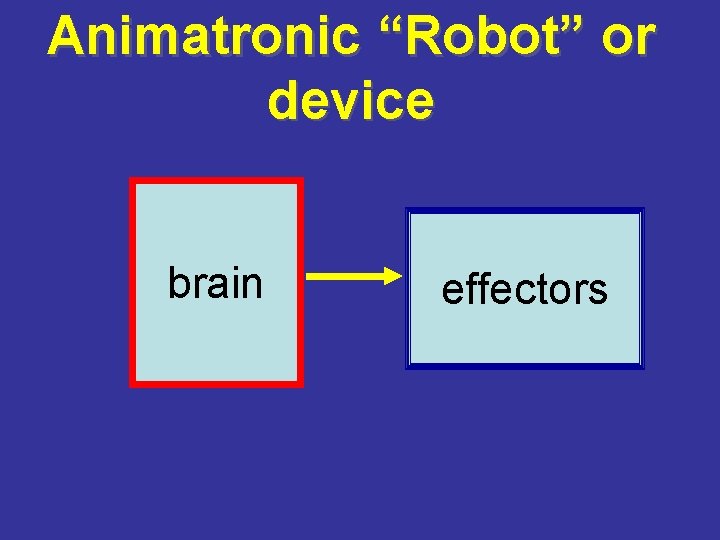

Animatronic “Robot” or device: brain → effectors

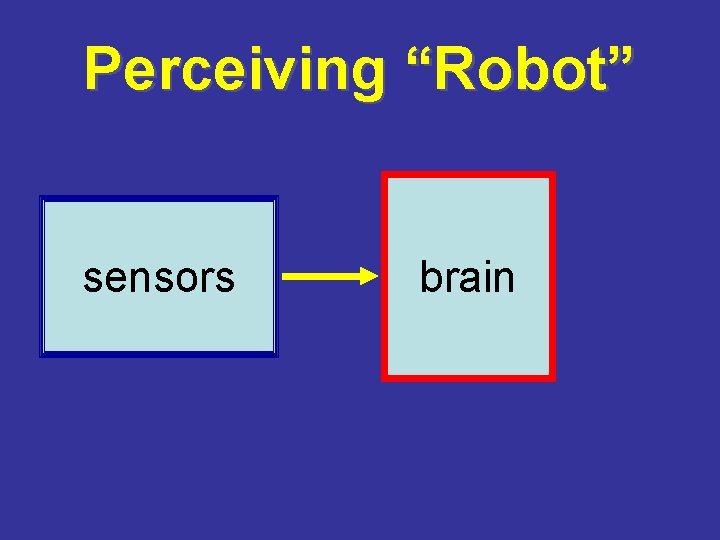

Perceiving “Robot”: sensors → brain

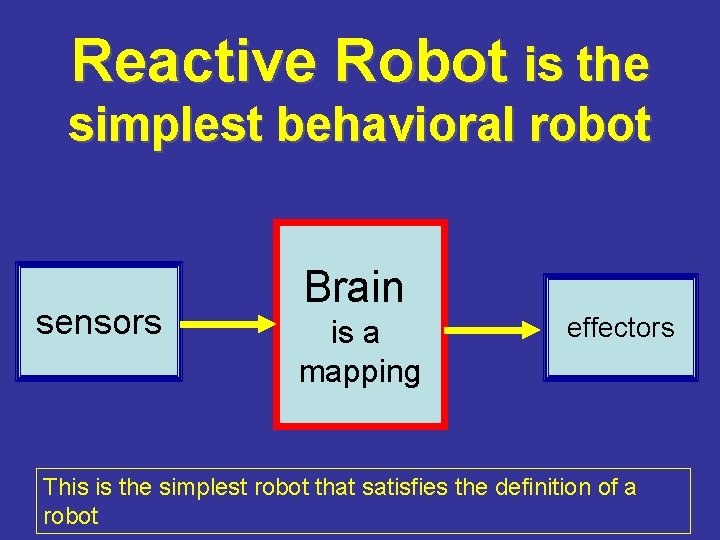

Reactive Robot is the simplest behavioral robot • sensors → brain → effectors • Brain is a mapping • This is the simplest robot that satisfies the definition of a robot

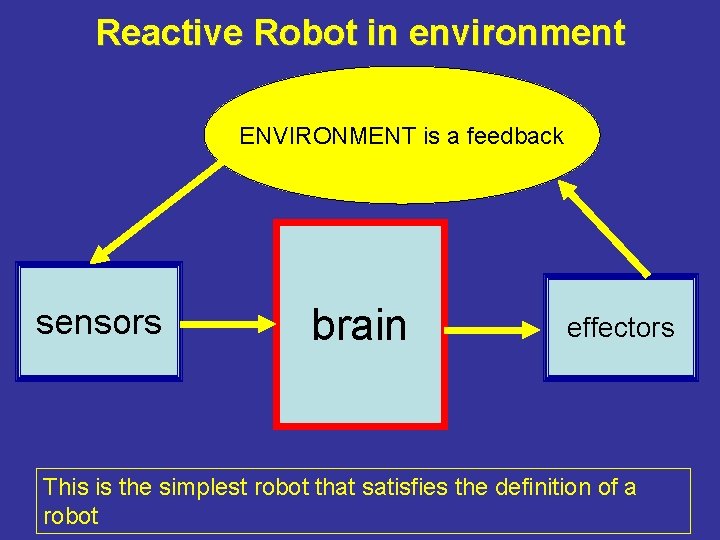

Reactive Robot in environment • sensors → brain → effectors • ENVIRONMENT is a feedback • This is the simplest robot that satisfies the definition of a robot
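The reactive robot can be sketched as a stateless function from sensor readings to effector commands, with the environment closing the feedback loop. This is only an illustration: the one-dimensional world, the distance sensor, the threshold and all names below are assumptions, not part of the lecture.

```python
def reactive_brain(distance_to_wall):
    """Stateless brain: the same sensor reading always gives the same command."""
    if distance_to_wall > 1.0:   # threshold chosen arbitrarily for the sketch
        return "forward"
    return "stop"


def step_environment(position, command, speed=0.5):
    """Toy environment: the robot's action changes what it will sense next."""
    if command == "forward":
        return position + speed
    return position


def run(wall_at=3.0, start=0.0, max_steps=20):
    """Run the sensors -> brain -> effectors -> environment loop."""
    position = start
    trace = []
    for _ in range(max_steps):
        distance = wall_at - position            # sensors
        command = reactive_brain(distance)       # brain (a pure mapping)
        trace.append((position, command))
        if command == "stop":
            break
        position = step_environment(position, command)  # effectors + environment
    return trace
```

Because the brain keeps no state, any change in behavior over time comes only from the environment changing the sensor readings.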

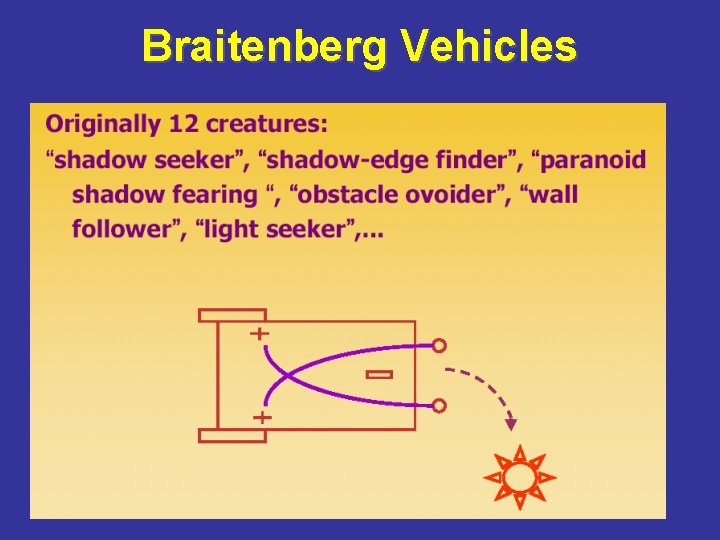

Braitenberg Vehicles and Quantum Automata Robots

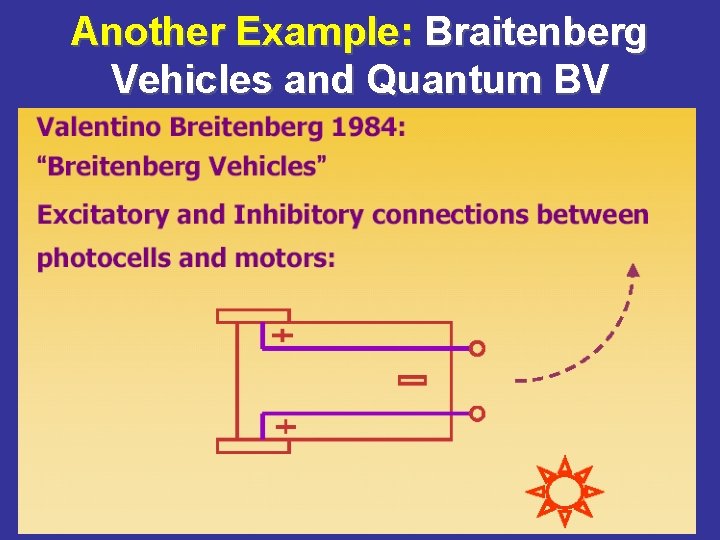

Another Example: Braitenberg Vehicles and Quantum BV

Braitenberg Vehicles

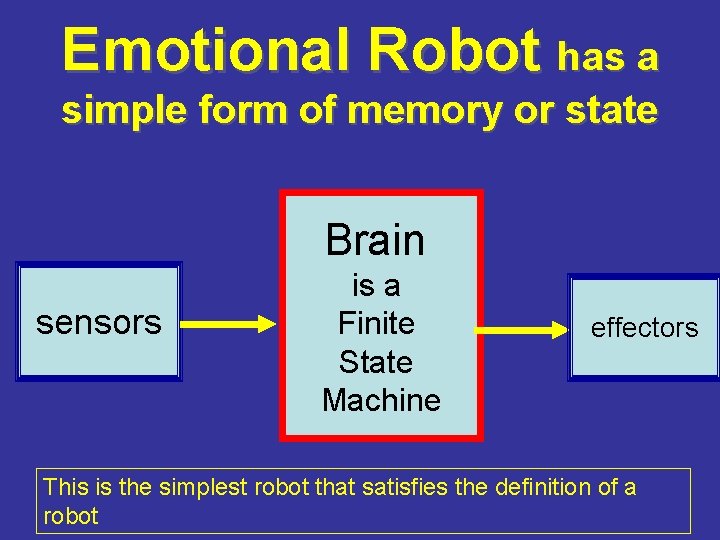

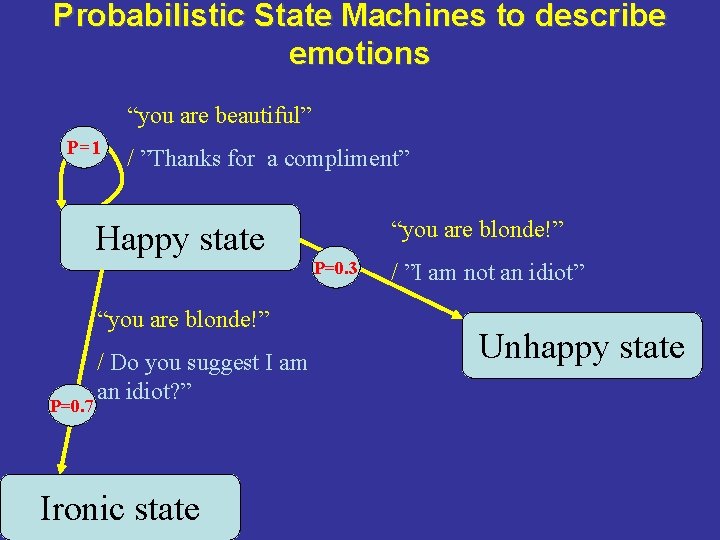

Emotional Robot has a simple form of memory or state • sensors → brain → effectors • Brain is a Finite State Machine • This is the simplest robot that satisfies the definition of a robot
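A brain with state can be sketched as a finite state machine whose transition table maps (state, input) to (next state, action). The state names, inputs and actions below are hypothetical and serve only to show that the same input can produce different behavior depending on the stored state.

```python
# Transition table: (state, sensed input) -> (next state, action).
# All names are illustrative assumptions.
TRANSITIONS = {
    ("calm", "praise"): ("happy", "smile"),
    ("calm", "insult"): ("angry", "frown"),
    ("happy", "praise"): ("happy", "smile"),
    ("happy", "insult"): ("calm", "blink"),
    ("angry", "praise"): ("calm", "blink"),
    ("angry", "insult"): ("angry", "frown"),
}


def run_fsm(inputs, state="calm"):
    """Feed a sequence of inputs to the machine; return final state and actions."""
    actions = []
    for symbol in inputs:
        state, action = TRANSITIONS[(state, symbol)]
        actions.append(action)
    return state, actions
```

In this sketch "praise" makes an angry robot blink but a calm robot smile, which a purely reactive mapping cannot express.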

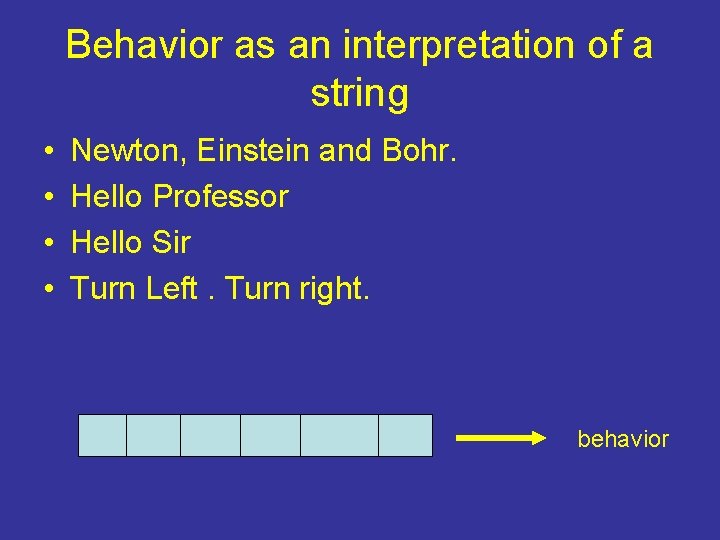

Behavior as an interpretation of a string • Newton, Einstein and Bohr. • Hello Professor • Hello Sir • Turn Left. Turn right. • behavior

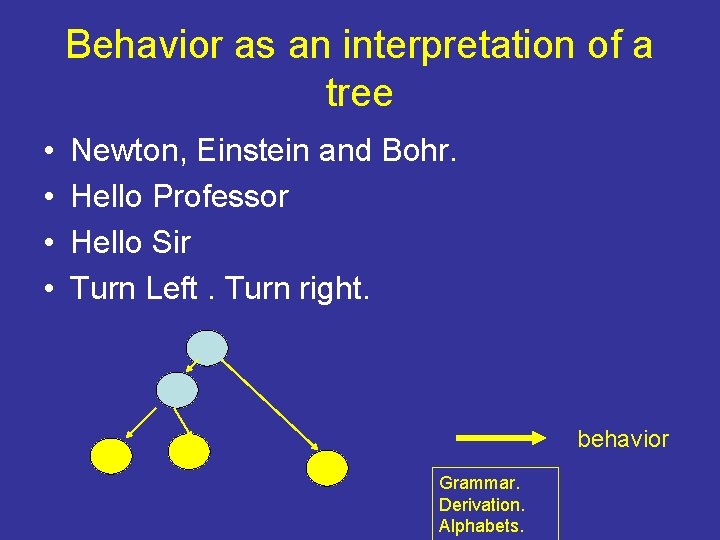

Behavior as an interpretation of a tree • Newton, Einstein and Bohr. • Hello Professor • Hello Sir • Turn Left. Turn right. • behavior • Grammar. Derivation. Alphabets.

Our Base Model and Designs

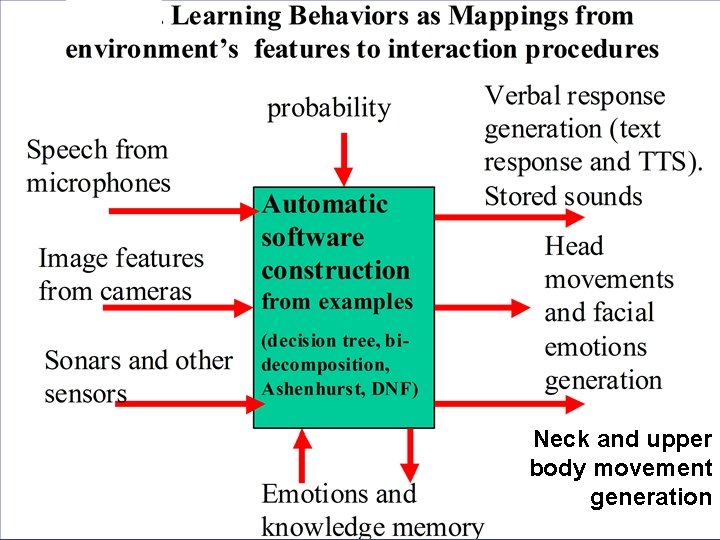

Neck and upper body movement generation

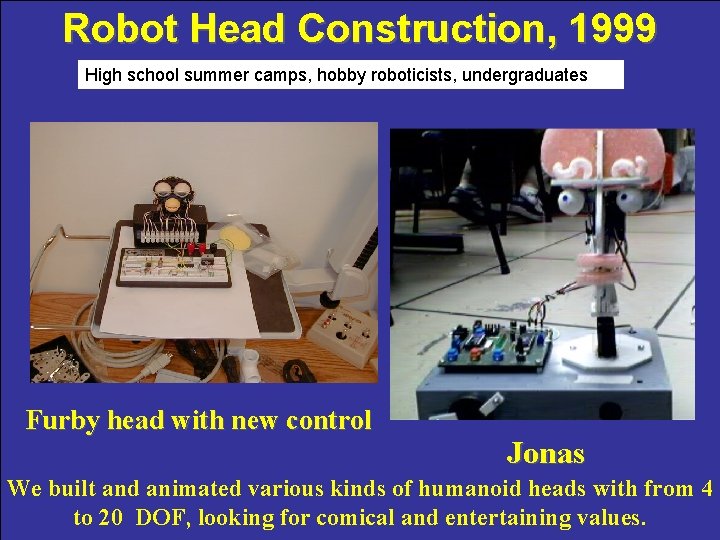

Robot Head Construction, 1999 • High school summer camps, hobby roboticists, undergraduates • Furby head with new control • Jonas • We built and animated various kinds of humanoid heads with 4 to 20 DOF, looking for comical and entertaining value.

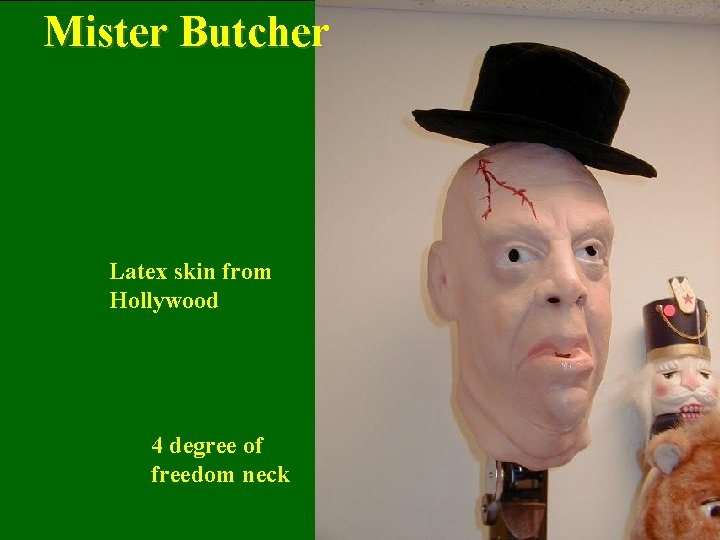

Mister Butcher • Latex skin from Hollywood • 4-degree-of-freedom neck

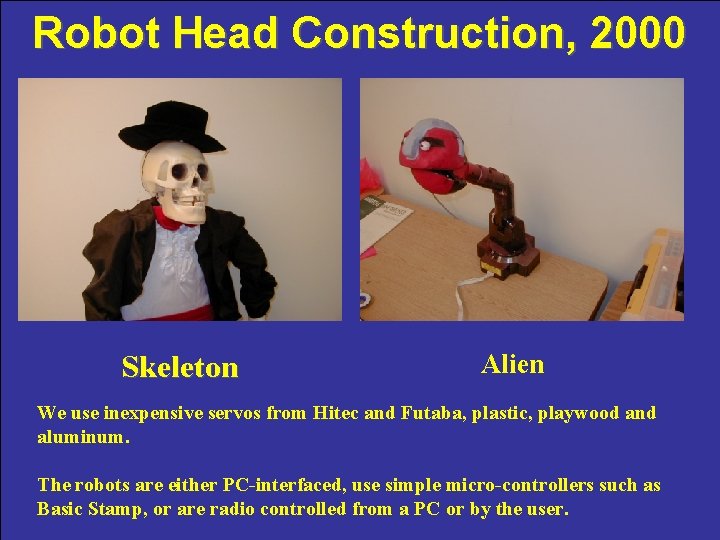

Robot Head Construction, 2000 • Skeleton • Alien • We use inexpensive servos from Hitec and Futaba, plastic, plywood and aluminum. • The robots are either PC-interfaced, use simple micro-controllers such as Basic Stamp, or are radio controlled from a PC or by the user.

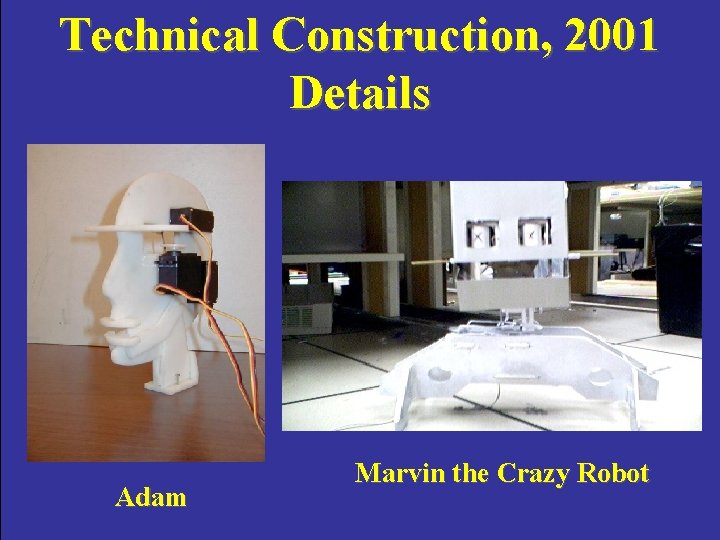

Technical Construction Details, 2001 • Adam • Marvin the Crazy Robot

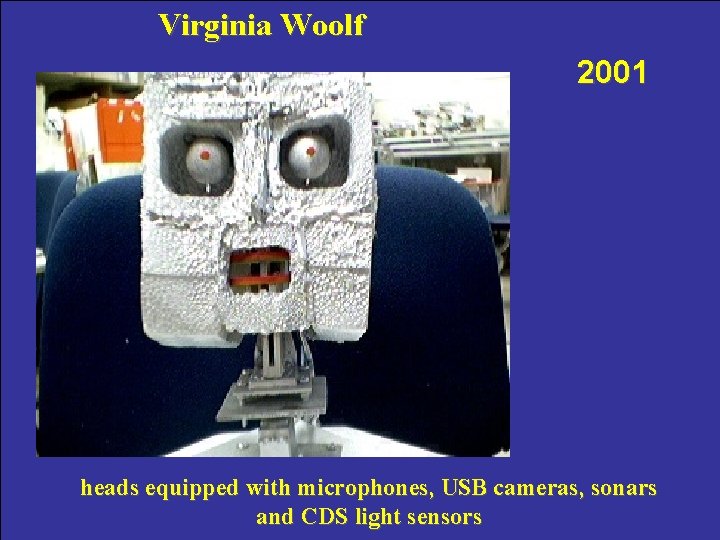

Virginia Woolf 2001 heads equipped with microphones, USB cameras, sonars and CDS light sensors

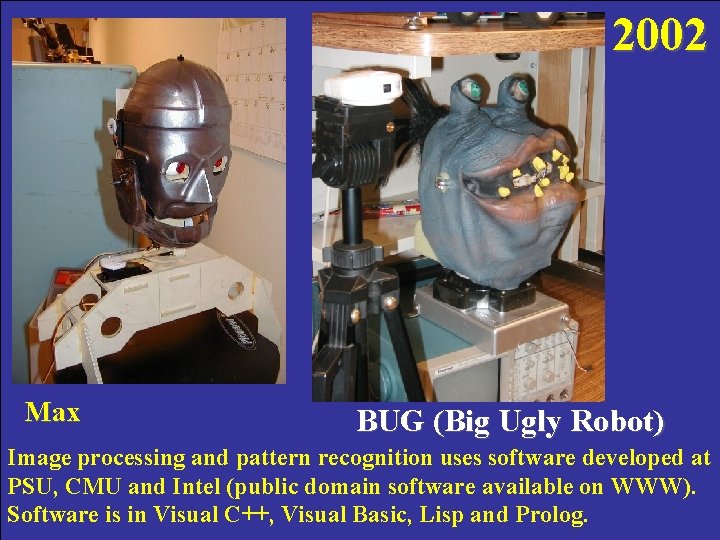

2002 Max BUG (Big Ugly Robot) Image processing and pattern recognition uses software developed at PSU, CMU and Intel (public domain software available on WWW). Software is in Visual C++, Visual Basic, Lisp and Prolog.

Visual Feedback and Learning based on Constructive Induction Uland Wong, 17 years old 2002

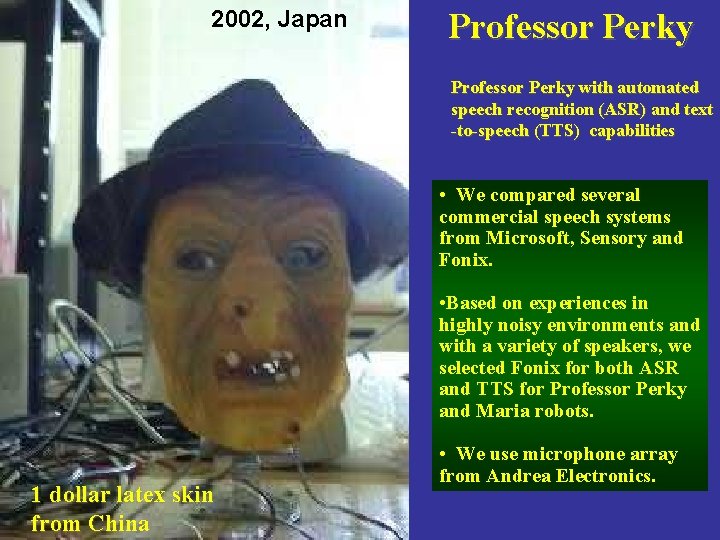

2002, Japan • Professor Perky with automated speech recognition (ASR) and text-to-speech (TTS) capabilities • We compared several commercial speech systems from Microsoft, Sensory and Fonix. • Based on experiences in highly noisy environments and with a variety of speakers, we selected Fonix for both ASR and TTS for the Professor Perky and Maria robots. • We use a microphone array from Andrea Electronics. • 1 dollar latex skin from China

Maria, 2002/2003 20 DOF

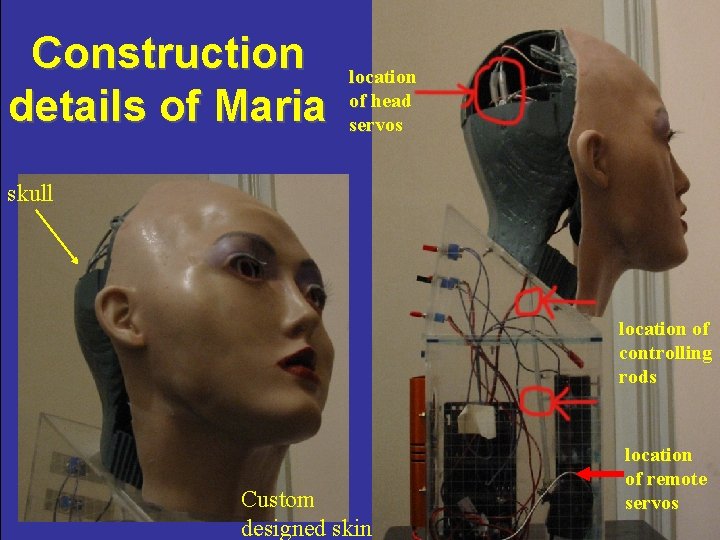

Construction details of Maria • location of head servos • skull • location of controlling rods • custom designed skin • location of remote servos

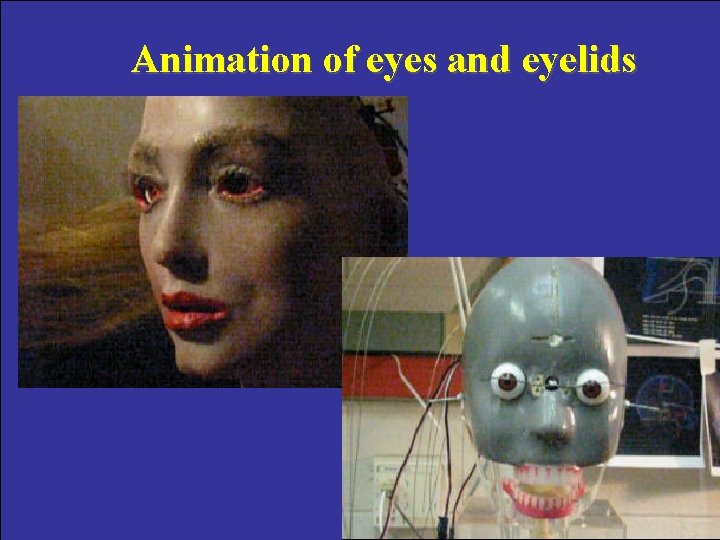

Animation of eyes and eyelids

Cynthia, 2004, June

Currently the hands are not moveable. We have a separate hand design project.

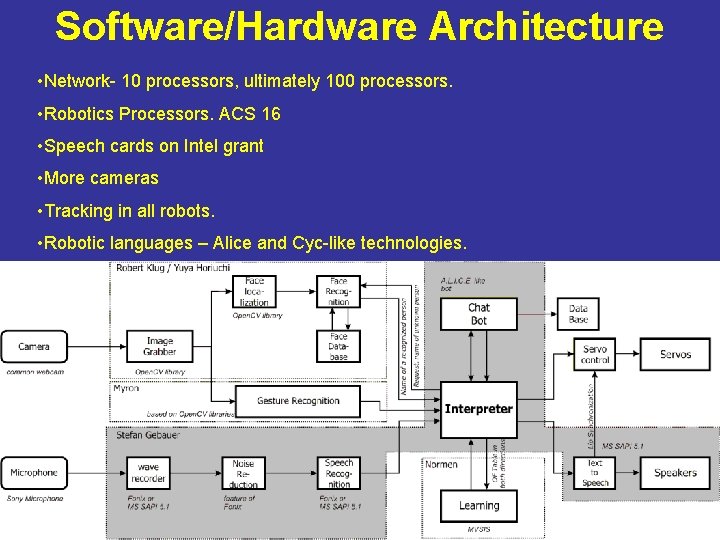

Software/Hardware Architecture • Network: 10 processors, ultimately 100 processors. • Robotics processors: ACS 16. • Speech cards on Intel grant. • More cameras. • Tracking in all robots. • Robotic languages: Alice and Cyc-like technologies.

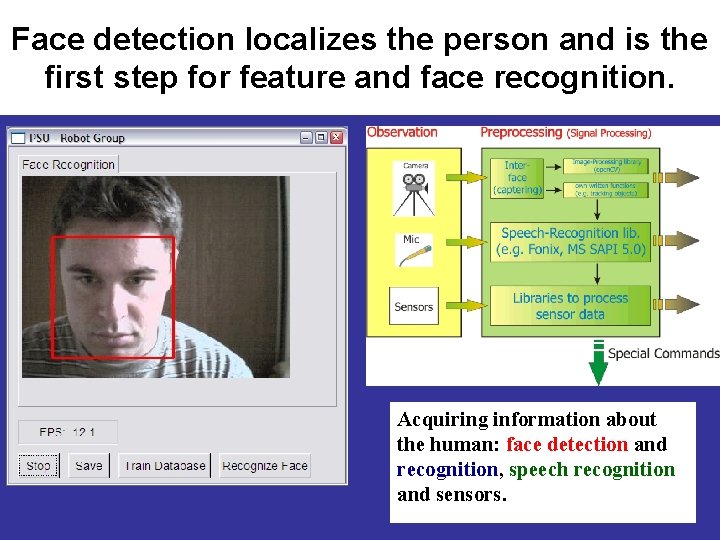

Face detection localizes the person and is the first step for feature and face recognition. Acquiring information about the human: face detection and recognition, speech recognition and sensors.

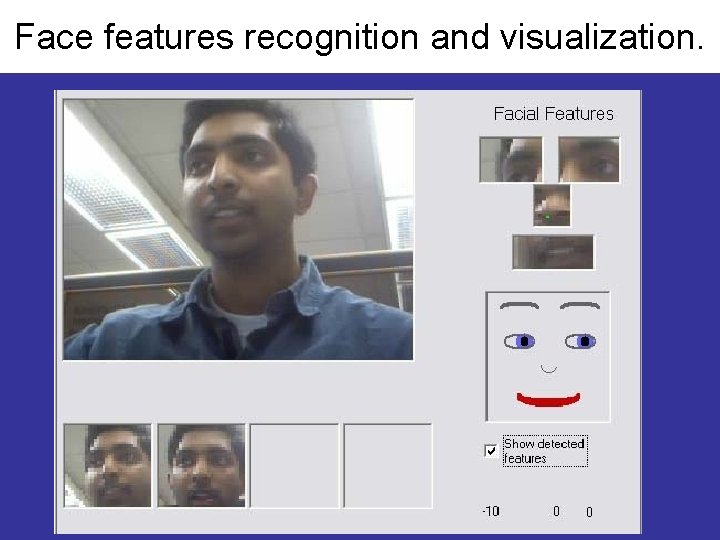

Face features recognition and visualization.

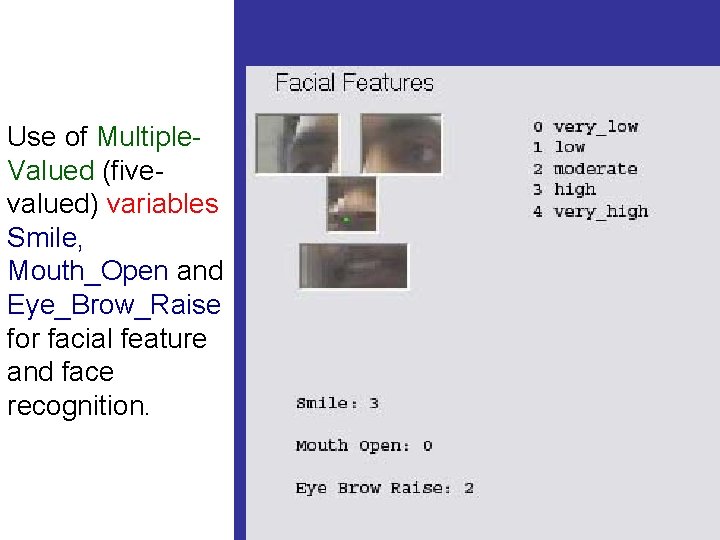

Use of multiple-valued (five-valued) variables Smile, Mouth_Open and Eye_Brow_Raise for facial feature and face recognition.

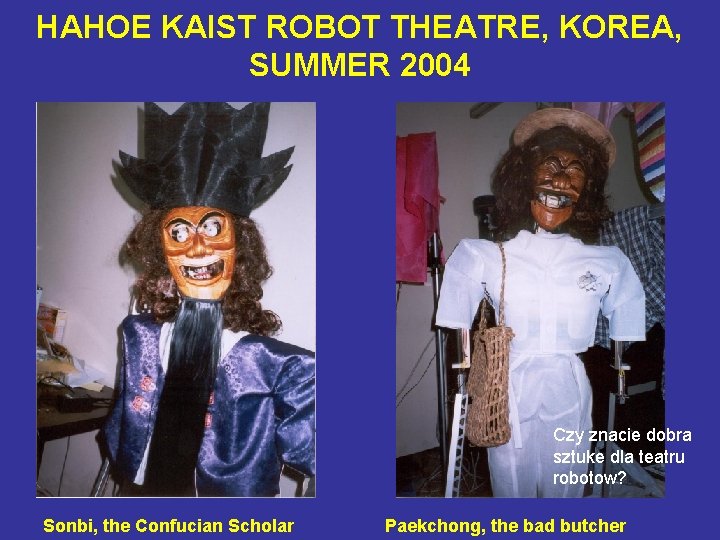

HAHOE KAIST ROBOT THEATRE, KOREA, SUMMER 2004 • Do you know a good play for a robot theatre? • Sonbi, the Confucian Scholar • Paekchong, the bad butcher

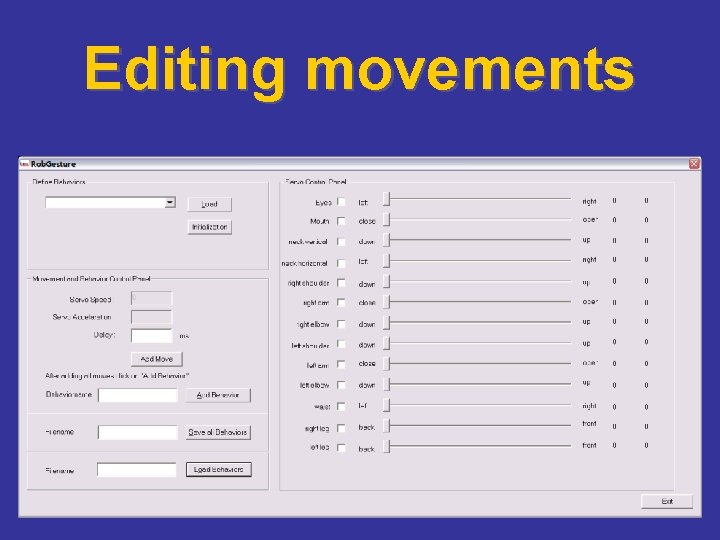

Editing movements

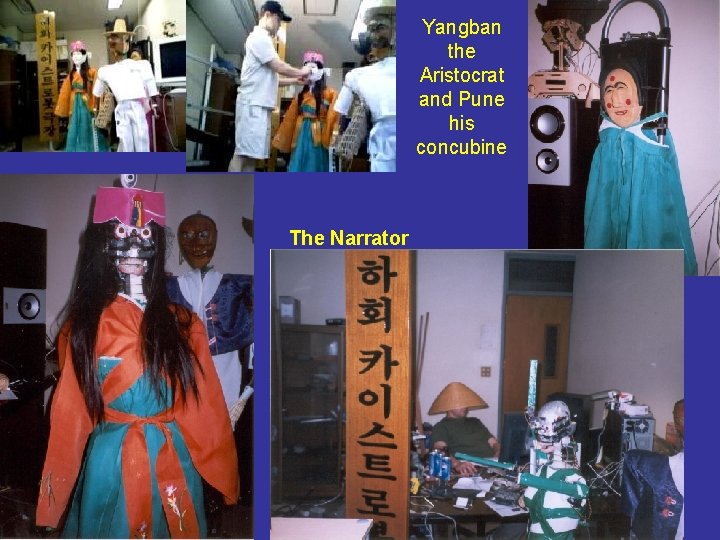

Yangban the Aristocrat and Pune his concubine • The Narrator

We base all our robots on inexpensive radio-controlled servo technology.

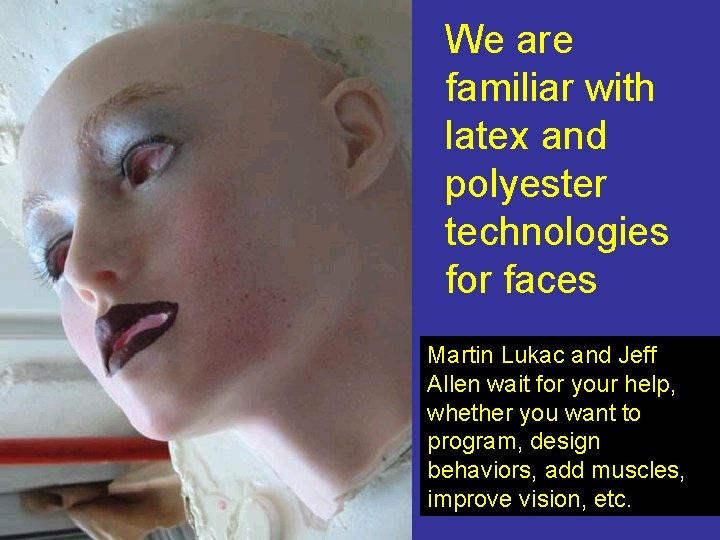

We are familiar with latex and polyester technologies for faces. Martin Lukac and Jeff Allen are waiting for your help, whether you want to program, design behaviors, add muscles, improve vision, etc.

New Silicone Skins

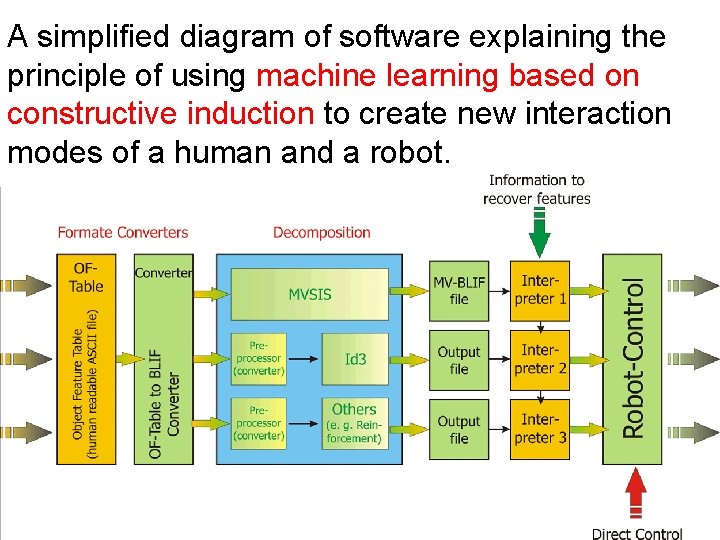

A simplified diagram of software explaining the principle of using machine learning based on constructive induction to create new interaction modes of a human and a robot.

Probabilistic and Finite State Machines

Probabilistic State Machines to describe emotions • “You are beautiful”, P=1 / “Thanks for a compliment”: Happy state • “You are blonde!”, P=0.7 / “Do you suggest I am an idiot?”: Ironic state • “You are blonde!”, P=0.3 / “I am not an idiot”: Unhappy state
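The probabilistic transitions on this slide can be sketched as a table from (state, heard sentence) to weighted branches, each carrying a response and a next state. The probabilities, responses and target states are taken from the slide; the name of the starting state (`neutral`) and the function names are assumptions made for the sketch.

```python
import random

# (state, heard sentence) -> list of (probability, response, next state).
# "neutral" is an assumed name for the starting state.
PSM = {
    ("neutral", "you are beautiful"): [
        (1.0, "Thanks for a compliment", "happy"),
    ],
    ("neutral", "you are blonde!"): [
        (0.7, "Do you suggest I am an idiot?", "ironic"),
        (0.3, "I am not an idiot", "unhappy"),
    ],
}


def psm_step(state, heard, rng):
    """Pick one branch at random, weighted by its probability."""
    branches = PSM[(state, heard)]
    weights = [probability for probability, _, _ in branches]
    _, response, next_state = rng.choices(branches, weights=weights, k=1)[0]
    return response, next_state
```

Passing an explicit `random.Random` instance keeps a performance reproducible when a fixed seed is used, which may be convenient when rehearsing a scene.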

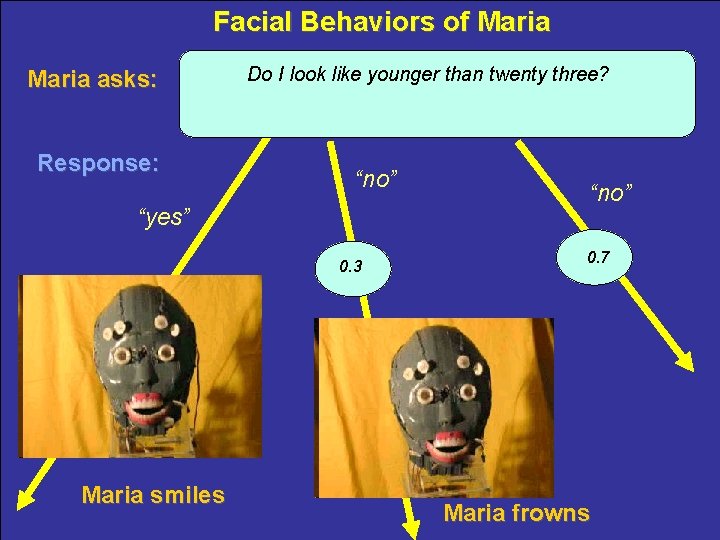

Facial Behaviors of Maria • Maria asks: “Do I look younger than twenty-three?” • Response: • “no” • “yes” 0.3 Maria smiles • “no” 0.7 Maria frowns

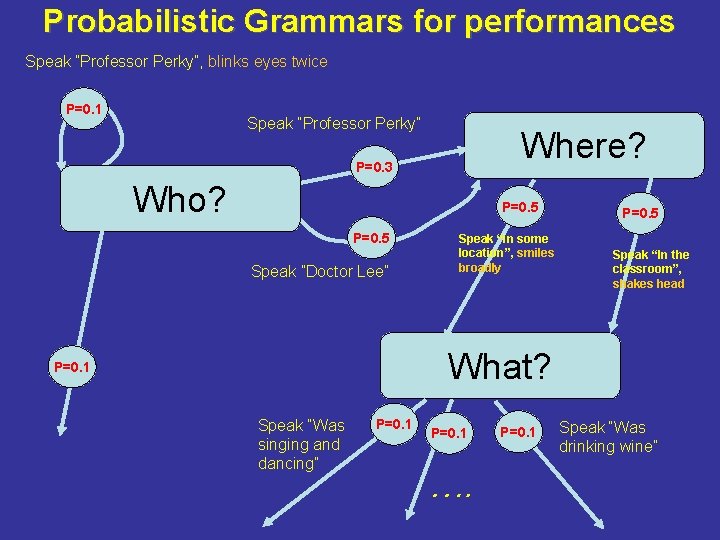

Probabilistic Grammars for performances Speak “Professor Perky”, blinks eyes twice P=0.1 Speak “Professor Perky” Where? P=0.3 Who? P=0.5 Speak “Doctor Lee” Speak “in some location”, smiles broadly P=0.5 Speak “In the classroom”, shakes head What? P=0.1 Speak “Was singing and dancing” P=0.1 … P=0.1 Speak “Was drinking wine”
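A probabilistic grammar for a performance can be sketched as weighted alternatives for each slot (Who?, Where?, What?), where each alternative pairs a spoken phrase with an optional gesture. The phrases and gestures come from the slide; the flattened slide does not show which probability belongs to which branch, so the weights below are illustrative assumptions.

```python
import random

# slot -> list of (weight, (speech, gesture)); weights are assumed, not from the slide.
GRAMMAR = {
    "Who": [
        (0.4, ("Professor Perky", "blinks eyes twice")),
        (0.1, ("Professor Perky", None)),
        (0.5, ("Doctor Lee", None)),
    ],
    "Where": [
        (0.5, ("in some location", "smiles broadly")),
        (0.5, ("In the classroom", "shakes head")),
    ],
    "What": [
        (0.5, ("Was singing and dancing", None)),
        (0.5, ("Was drinking wine", None)),
    ],
}


def perform(rng):
    """Derive one performance: a list of (speech, gesture) steps."""
    script = []
    for slot in ("Who", "Where", "What"):
        options = GRAMMAR[slot]
        weights = [weight for weight, _ in options]
        _, step = rng.choices(options, weights=weights, k=1)[0]
        script.append(step)
    return script
```

Each call produces a different combination of speech and gesture, so the same grammar yields varied performances.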

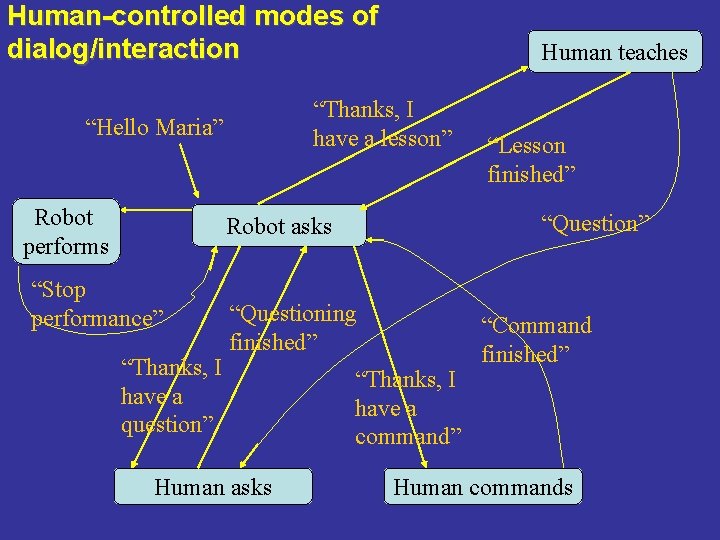

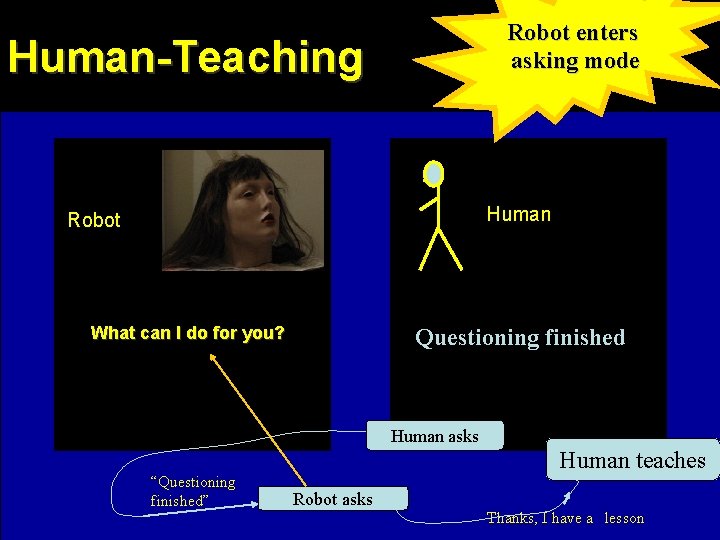

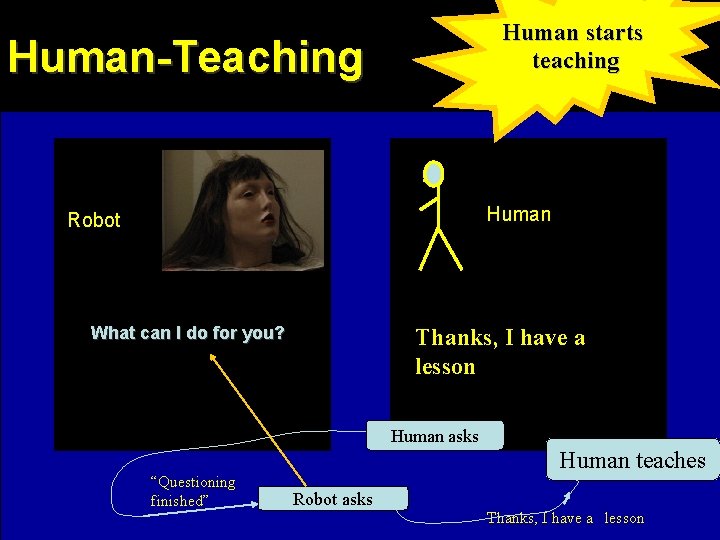

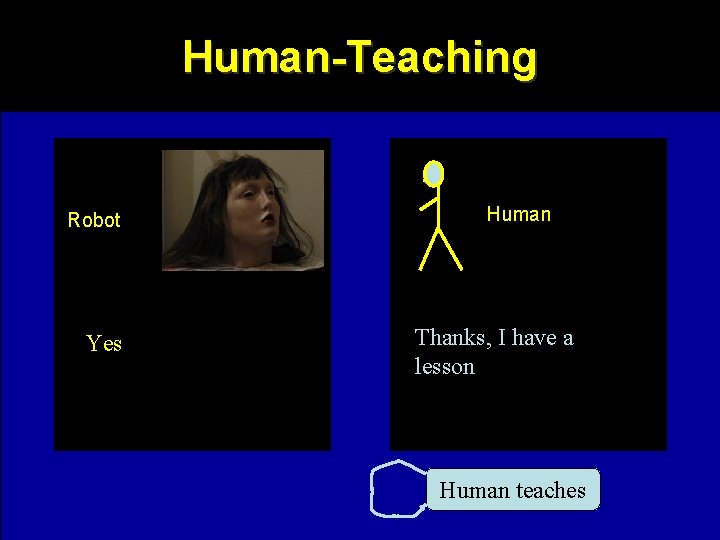

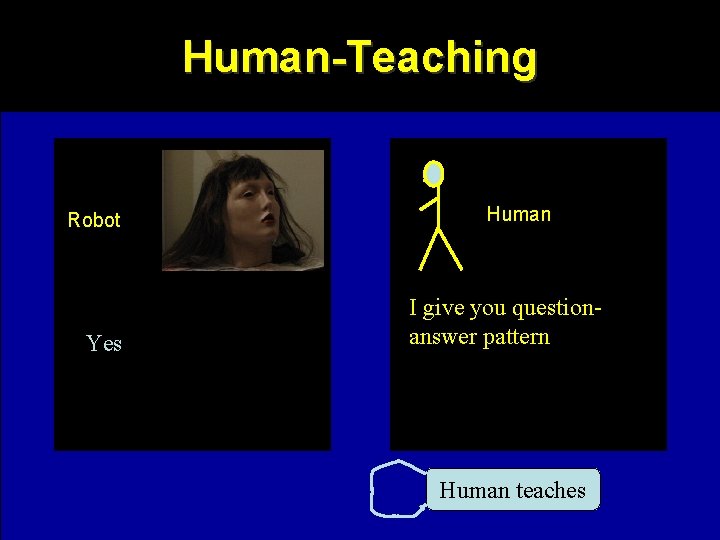

Human-controlled modes of dialog/interaction “Thanks, I have a lesson” “Hello Maria” Robot performs Human teaches “Question” Robot asks “Stop performance” “Thanks, I have a question” “Questioning finished” Human asks “Lesson finished” “Thanks, I have a command” “Command finished” Human commands
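The mode switching can be sketched as a small state machine keyed by the current mode and the spoken phrase. Only the transitions that the conversation slides in this lecture spell out are included; the `idle` start mode is an assumed name, and the "Robot performs" mode is left out because the flattened diagram does not make its transitions clear.

```python
# (current mode, spoken phrase) -> next mode. "idle" is an assumed start mode.
MODE_TRANSITIONS = {
    ("idle", "Hello Maria"): "robot asks",
    ("robot asks", "Question"): "human asks",
    ("human asks", "Questioning finished"): "robot asks",
    ("robot asks", "Thanks, I have a lesson"): "human teaches",
    ("human teaches", "Lesson finished"): "robot asks",
    ("robot asks", "Thanks, I have a command"): "human commands",
    ("human commands", "Command finished"): "robot asks",
}


def next_mode(mode, phrase):
    """Return the new mode; phrases that are not mode commands keep the mode."""
    return MODE_TRANSITIONS.get((mode, phrase), mode)


def follow(phrases, mode="idle"):
    """Return the list of modes visited while hearing the given phrases."""
    visited = [mode]
    for phrase in phrases:
        mode = next_mode(mode, phrase)
        visited.append(mode)
    return visited
```

Any phrase that is not a mode command leaves the mode unchanged, so ordinary questions or lesson content can be handled inside the current mode.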

Dialog and Robot’s Knowledge

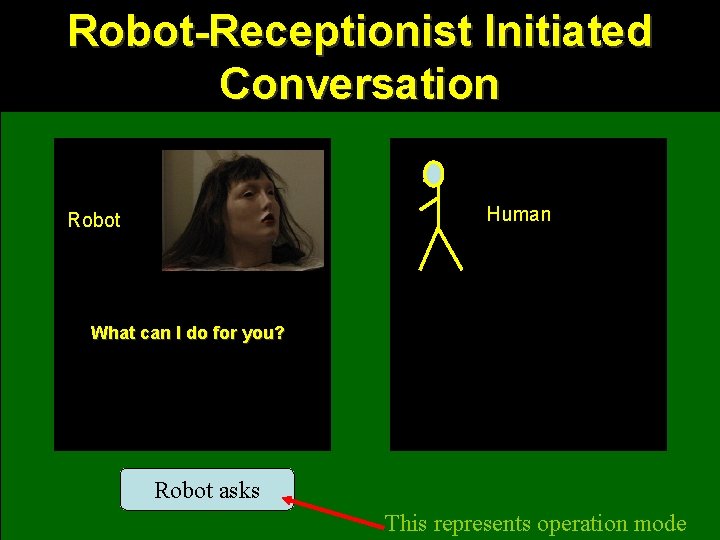

Robot-Receptionist Initiated Conversation • Robot: “What can I do for you?” • Mode: Robot asks (this represents the operation mode)

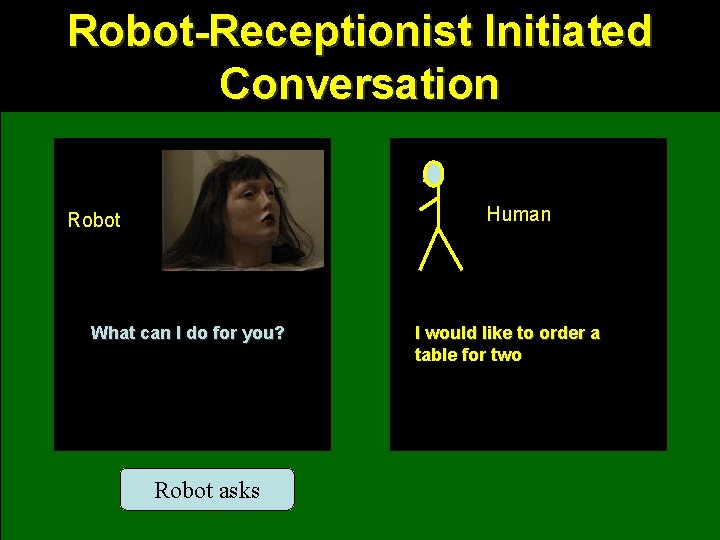

Robot-Receptionist Initiated Conversation • Robot: “What can I do for you?” • Human: “I would like to order a table for two” • Mode: Robot asks

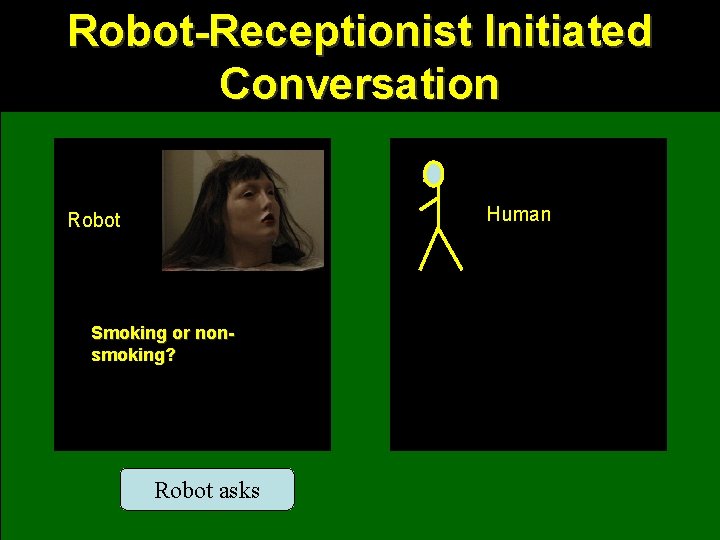

Robot-Receptionist Initiated Conversation • Robot: “Smoking or nonsmoking?” • Mode: Robot asks

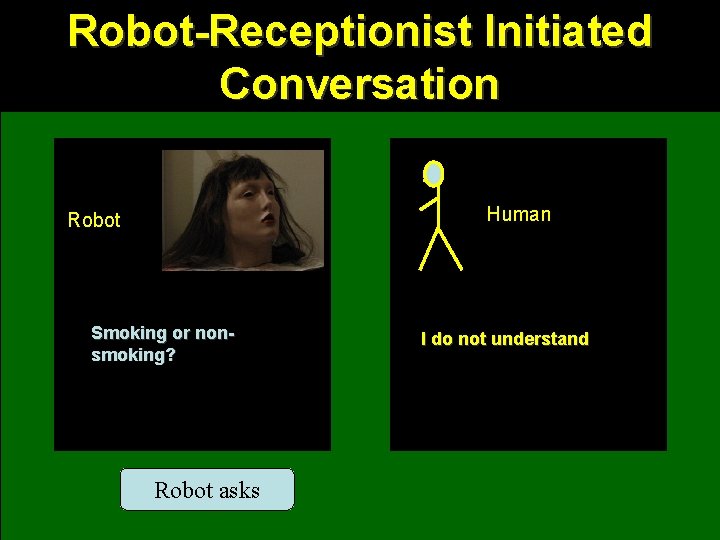

Robot-Receptionist Initiated Conversation • Robot: “Smoking or nonsmoking?” • Human: “I do not understand” • Mode: Robot asks

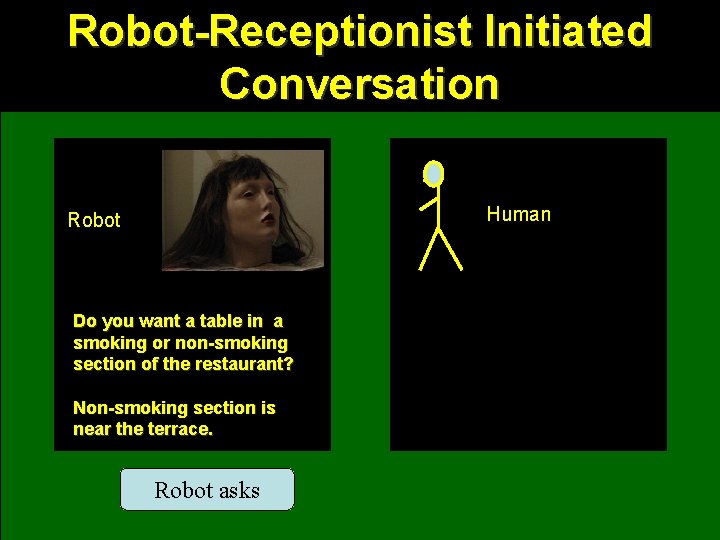

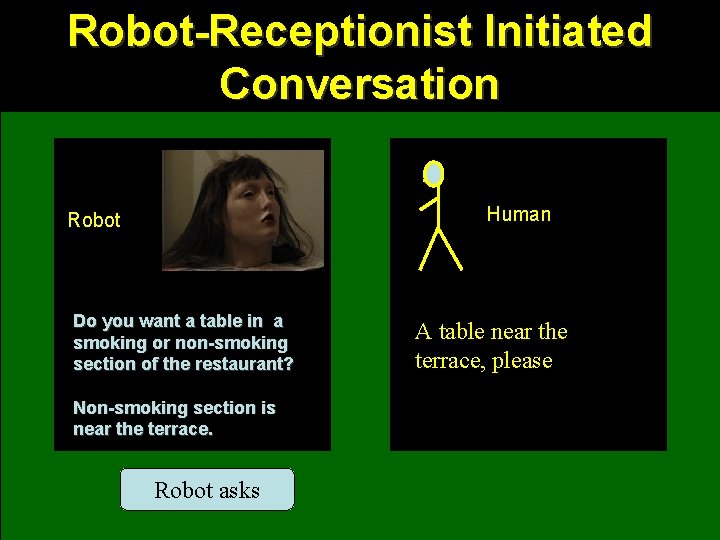

Robot-Receptionist Initiated Conversation • Robot: “Do you want a table in a smoking or non-smoking section of the restaurant? Non-smoking section is near the terrace.” • Mode: Robot asks

Robot-Receptionist Initiated Conversation • Robot: “Do you want a table in a smoking or non-smoking section of the restaurant? Non-smoking section is near the terrace.” • Human: “A table near the terrace, please” • Mode: Robot asks

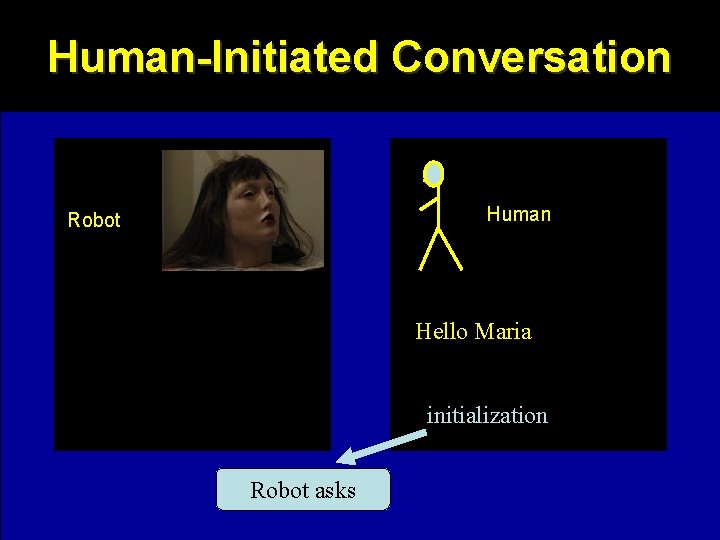

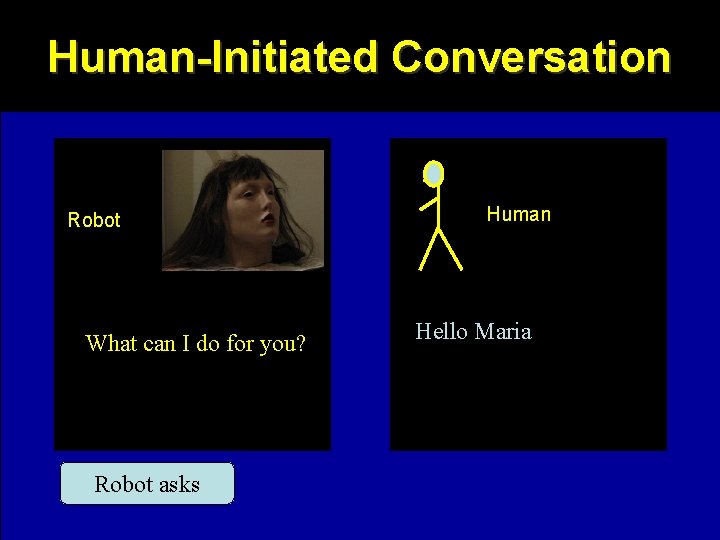

Human-Initiated Conversation • Human: “Hello Maria” • Modes: initialization → Robot asks

Human-Initiated Conversation • Human: “Hello Maria” • Robot: “What can I do for you?” • Mode: Robot asks

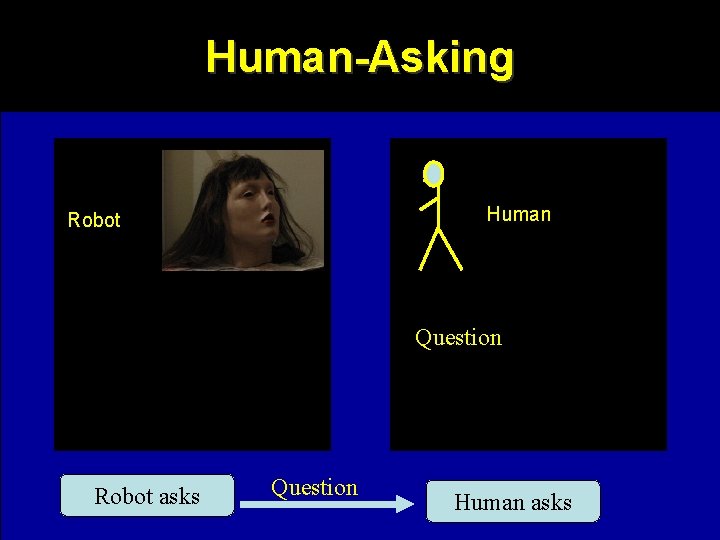

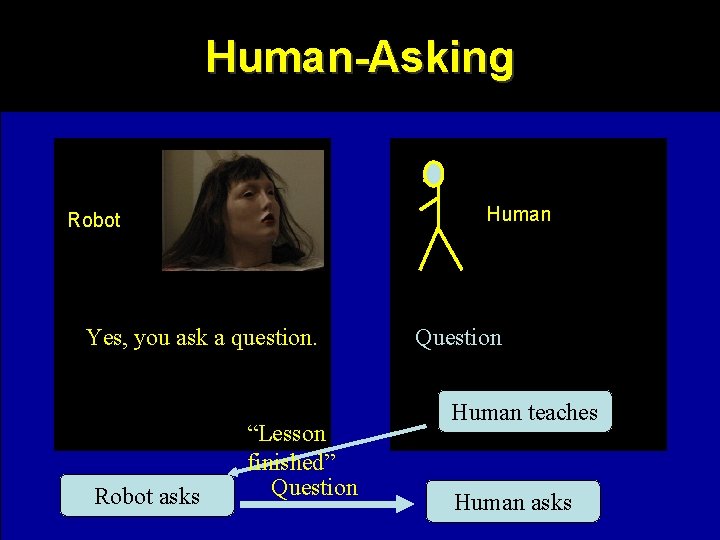

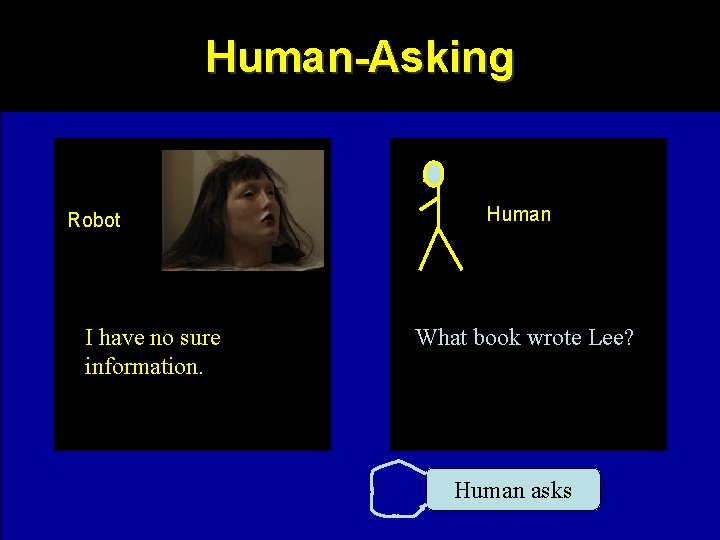

Human-Asking • Human: “Question” • Modes: Robot asks → (“Question”) → Human asks

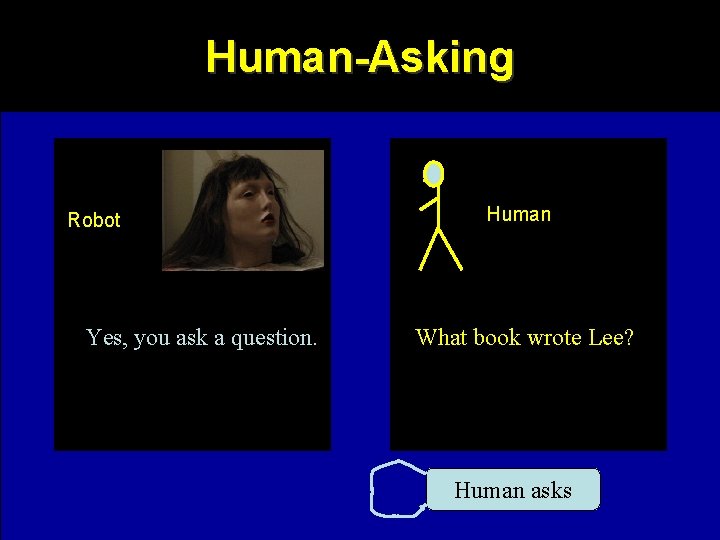

Human-Asking • Human: “Question” • Robot: “Yes, you ask a question.” • Mode: Human asks

Human-Asking • Robot: “Yes, you ask a question.” • Human: “What book wrote Lee?” • Mode: Human asks

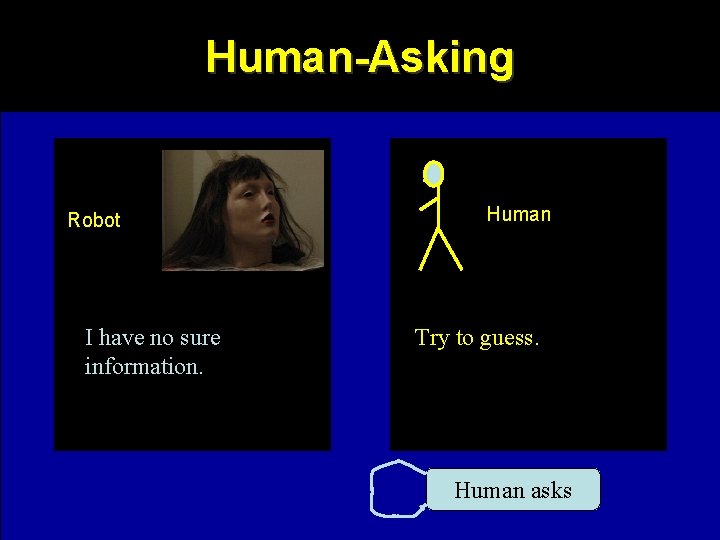

Human-Asking • Human: “What book wrote Lee?” • Robot: “I have no sure information.” • Mode: Human asks

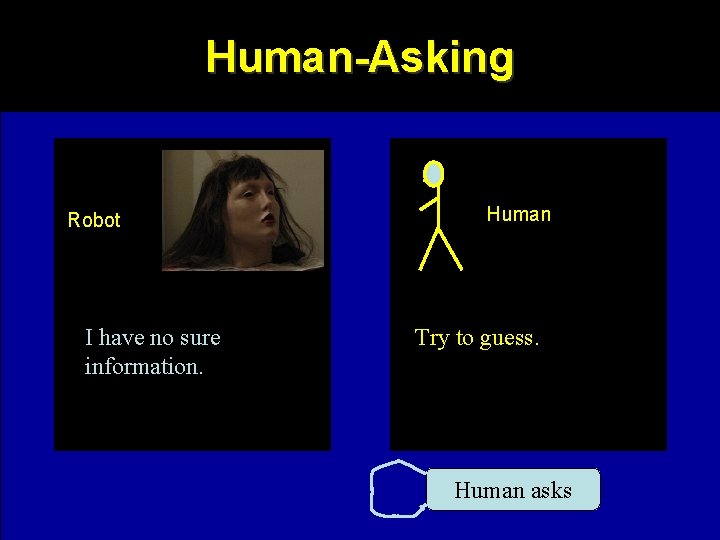

Human-Asking • Robot: “I have no sure information.” • Human: “Try to guess.” • Mode: Human asks

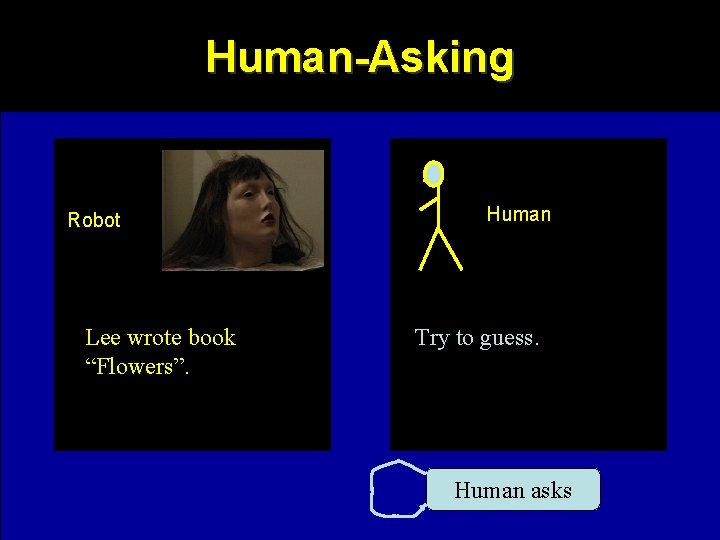

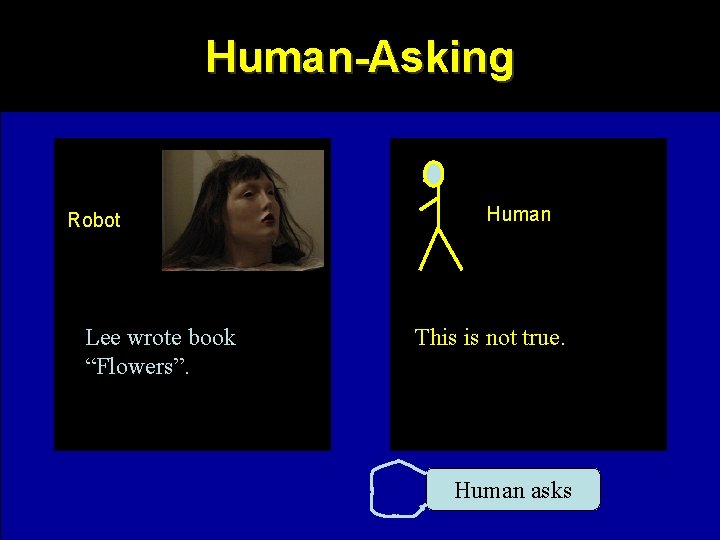

Human-Asking • Human: “Try to guess.” • Robot: “Lee wrote book ‘Flowers’.” • Mode: Human asks

Human-Asking • Robot: “Lee wrote book ‘Flowers’.” • Human: “This is not true.” • Mode: Human asks

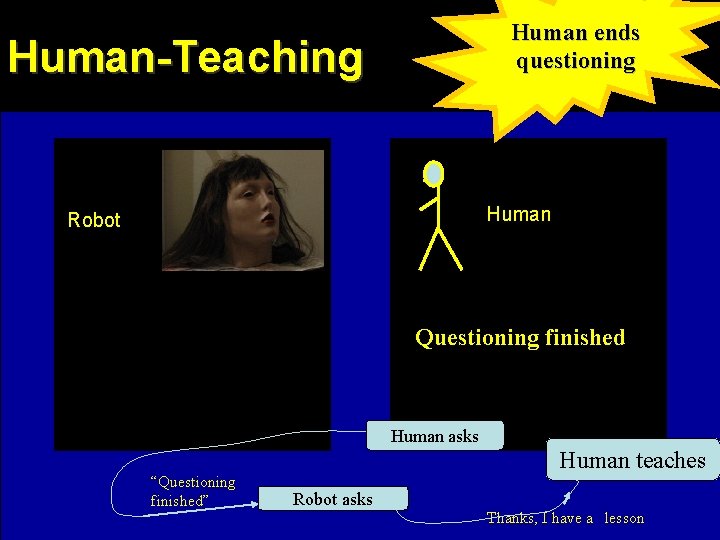

Human-Teaching (human ends questioning) • Human: “Questioning finished” • Modes: Human asks → (“Questioning finished”) → Robot asks → (“Thanks, I have a lesson”) → Human teaches

Human-Teaching (robot enters asking mode) • Human: “Questioning finished” • Robot: “What can I do for you?” • Modes: Human asks → (“Questioning finished”) → Robot asks → (“Thanks, I have a lesson”) → Human teaches

Human-Teaching (human starts teaching) • Robot: “What can I do for you?” • Human: “Thanks, I have a lesson” • Modes: Human asks → (“Questioning finished”) → Robot asks → (“Thanks, I have a lesson”) → Human teaches

Human-Teaching • Human: “Thanks, I have a lesson” • Robot: “Yes” • Mode: Human teaches

Human-Teaching • Human: “I give you question-answer pattern” • Robot: “Yes” • Mode: Human teaches

Human-Teaching • Human: “Question pattern: What book Smith wrote?” • Robot: “Yes” • Mode: Human teaches

Human-Teaching • Human: “Answer pattern: Smith wrote book ‘Automata Theory’” • Robot: “Yes” • Mode: Human teaches

Human-Teaching • Human: “Checking question: What book wrote Smith?” • Robot: “Yes” • Mode: Human teaches

Human-Teaching • Human: “Checking question: What book wrote Smith?” • Robot: “Smith wrote book ‘Automata Theory’” • Mode: Human teaches

Human-Teaching • Human: “I give you question-answer pattern” • Robot: “Yes” • Mode: Human teaches

Human-Teaching • Human: “Question pattern: Where is room of Lee?” • Robot: “Yes” • Mode: Human teaches

Human-Teaching • Human: “Answer pattern: Lee is in room 332” • Robot: “Yes” • Mode: Human teaches

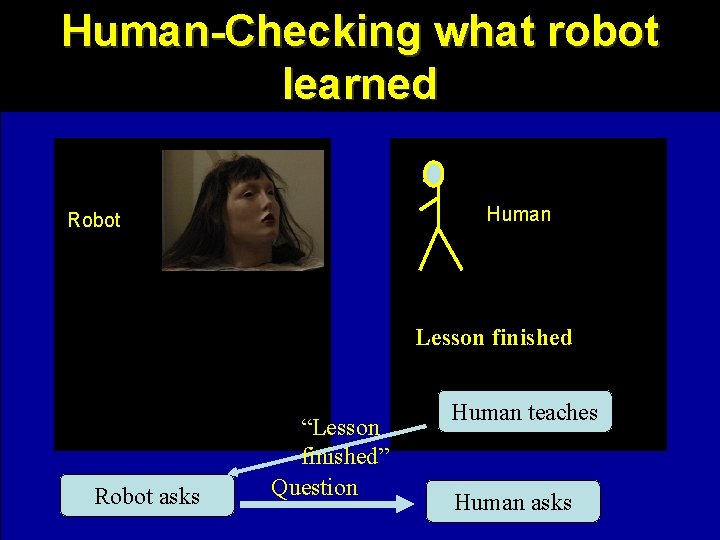

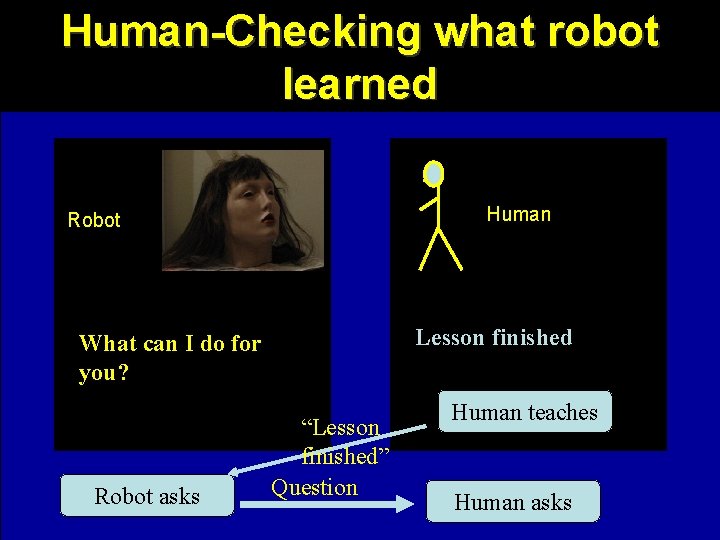

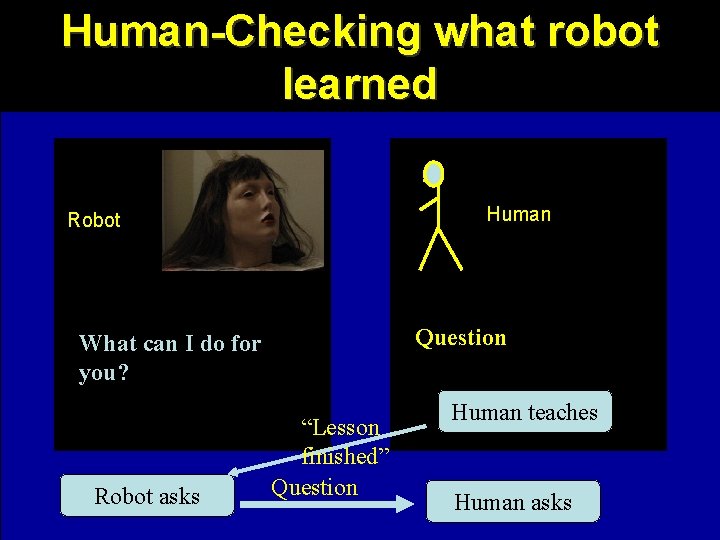

Human-Checking what robot learned • Human: “Lesson finished” • Modes: Human teaches → (“Lesson finished”) → Robot asks → (“Question”) → Human asks

Human-Checking what robot learned • Human: “Lesson finished” • Robot: “What can I do for you?” • Modes: Human teaches → (“Lesson finished”) → Robot asks → (“Question”) → Human asks

Human-Checking what robot learned • Robot: “What can I do for you?” • Human: “Question” • Modes: Human teaches → (“Lesson finished”) → Robot asks → (“Question”) → Human asks

Human-Asking • Robot: “Yes, you ask a question.” • Modes: Human teaches → (“Lesson finished”) → Robot asks → (“Question”) → Human asks

Human-Asking • Robot: “Yes, you ask a question.” • Human: “What book wrote Lee?” • Mode: Human asks

Human-Asking • Human: “What book wrote Lee?” • Robot: “I have no sure information.” • Mode: Human asks

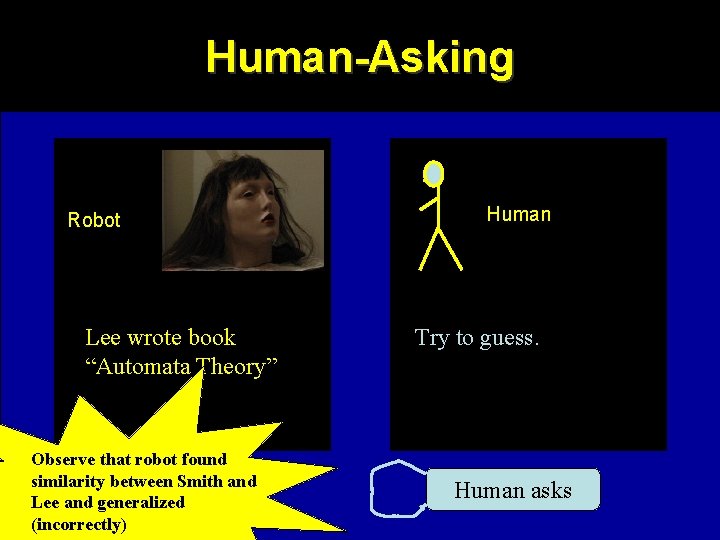

Human-Asking • Robot: “I have no sure information.” • Human: “Try to guess.” • Mode: Human asks

Human-Asking • Human: “Try to guess.” • Robot: “Lee wrote book ‘Automata Theory’” • Observe that the robot found a similarity between Smith and Lee and generalized (incorrectly). • Mode: Human asks
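The teach, ask and guess steps of this dialog can be sketched with a small memory of question-answer patterns. This is a deliberately crude stand-in, not the constructive induction used in the actual system: the class, its method names and the rule "reuse the answer learned for another subject of the same relation" are all assumptions made to show how an over-generalized guess arises.

```python
class PatternMemory:
    """Toy store of taught facts, indexed by relation and subject."""

    def __init__(self):
        self.facts = {}  # relation -> {subject: object}

    def teach(self, subject, relation, obj):
        """Store one answer pattern, e.g. Smith / wrote book / "Automata Theory"."""
        self.facts.setdefault(relation, {})[subject] = obj

    def ask(self, subject, relation):
        """Answer only from what was explicitly taught."""
        known = self.facts.get(relation, {})
        if subject in known:
            return f"{subject} {relation} {known[subject]}"
        return "I have no sure information."

    def guess(self, subject, relation):
        """Over-generalize: treat the subject like another one that filled the same slot."""
        known = self.facts.get(relation, {})
        if not known:
            return "I have no sure information."
        other = next(iter(known))
        return f"{subject} {relation} {known[other]}"


memory = PatternMemory()
memory.teach("Smith", "wrote book", '"Automata Theory"')
```

With this memory, asking about Lee gives "I have no sure information.", while a forced guess repeats the pattern learned for Smith, which mirrors the incorrect generalization shown on the slide.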

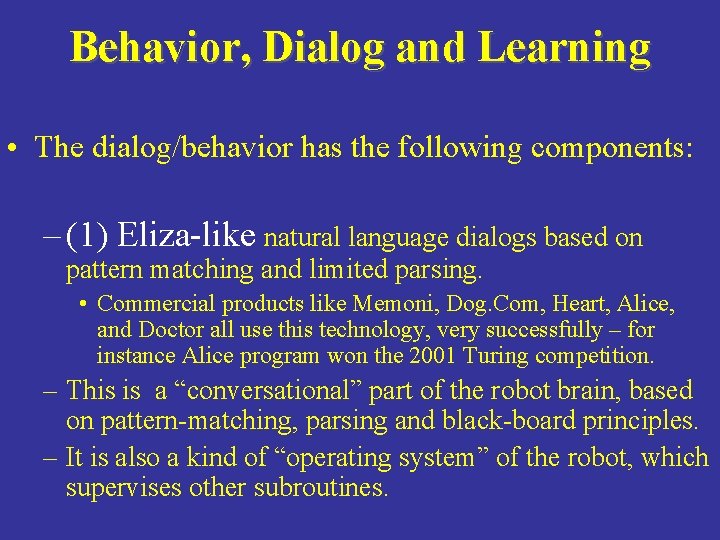

Behavior, Dialog and Learning • The dialog/behavior has the following components: – (1) Eliza-like natural language dialogs based on pattern matching and limited parsing. • Commercial products like Memoni, Dog.com, Heart, Alice, and Doctor all use this technology very successfully; for instance, the Alice program won the 2001 Loebner Prize, a Turing-test competition. – This is the “conversational” part of the robot brain, based on pattern matching, parsing and blackboard principles. – It is also a kind of “operating system” of the robot, which supervises other subroutines.

Behavior, Dialog and Learning • (2) Subroutines with a logical database and natural language parsing (CHAT). – This is the logical part of the brain, used to find connections between places and timings and for all kinds of logical and relational reasoning, such as answering questions about Japanese geography.

Behavior, Dialog and Learning • (3) Use of generalization and analogy in dialog on many levels. – Random and intentional linking of spoken language, sound effects and facial gestures. – Use of the Constructive Induction approach to help generalization, analogical reasoning and probabilistic generation in verbal and non-verbal dialog, such as learning when to smile or turn the head away from the partner.

Behavior, Dialog and Learning • (4) Model of the robot, model of the user, scenario of the situation and history of the dialog are all used in the conversation. • (5) Use of word spotting in speech recognition rather than single-word or continuous speech recognition. • (6) Continuous speech recognition (Microsoft). • (7) Avoidance of “I do not know” and “I do not understand” answers from the robot. – Our robot will always have something to say; in the worst case it will be over-generalized, based on invalid analogies, or even nonsensical and random.
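Component (1), the Eliza-like conversational part, can be sketched as an ordered list of regular-expression rules with reply templates, plus a fallback reply so the robot always has something to say. The rules, templates and fallback text are illustrative assumptions and are not taken from any of the named products.

```python
import re

# Ordered (pattern, reply template) rules; the first matching rule wins.
RULES = [
    (re.compile(r"\bI need (.+)", re.IGNORECASE), "Why do you need {0}?"),
    (re.compile(r"\bwhere is (.+?)\??$", re.IGNORECASE), "I will look for {0}."),
    (re.compile(r"\bhello\b", re.IGNORECASE), "Hello, what can I do for you?"),
]

# Fallback used instead of "I do not understand".
DEFAULT_REPLY = "Tell me more."


def reply(utterance):
    """Return the reply of the first matching rule, or the fallback."""
    for pattern, template in RULES:
        match = pattern.search(utterance)
        if match:
            return template.format(*match.groups())
    return DEFAULT_REPLY
```

Word spotting fits this style well, since each rule only needs a few key words to fire rather than a full parse of the sentence.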

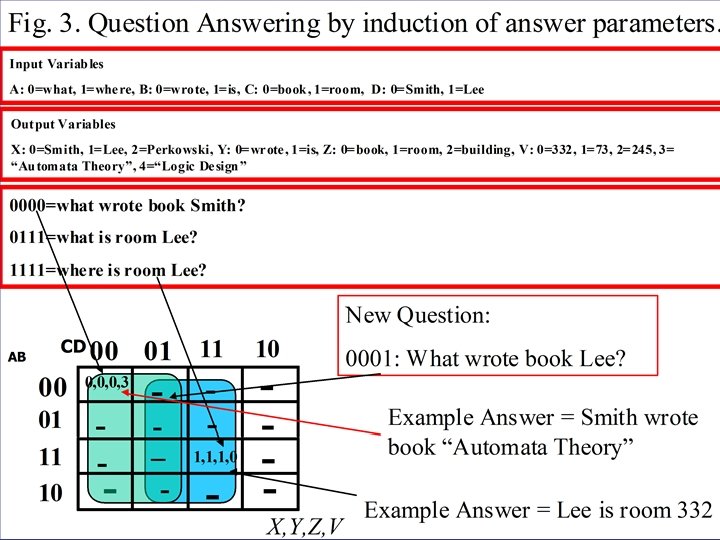

Constructive Induction

What is constructive induction? • Constructive induction is a logic-based method of teaching a robot new knowledge. • It can be compared to neural networks. • Teaching is constructing some structure of a logic function: – Decision tree – Sum of Products – Decomposed structure

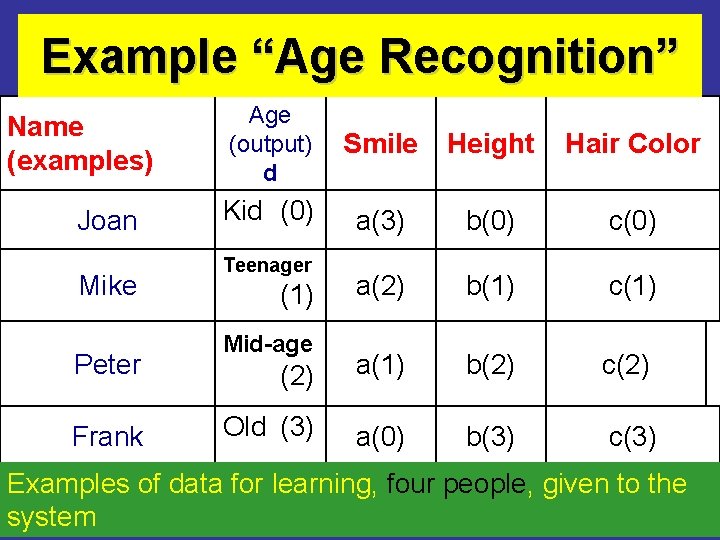

Example “Age Recognition”

| Name (examples) | Age (output) d | Smile | Height | Hair Color |
|---|---|---|---|---|
| Joan | Kid (0) | a(3) | b(0) | c(0) |
| Mike | Teenager (1) | a(2) | b(1) | c(1) |
| Peter | Mid-age (2) | a(1) | b(2) | c(2) |
| Frank | Old (3) | a(0) | b(3) | c(3) |

Examples of data for learning, four people, given to the system

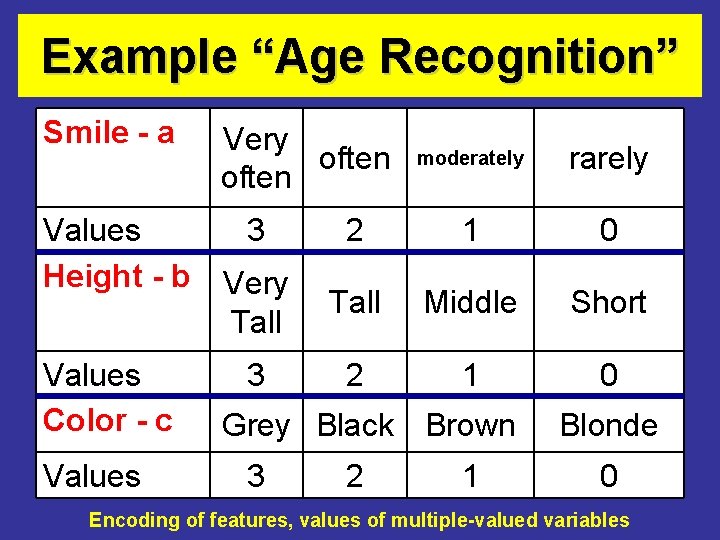

Example “Age Recognition”

| Value | Smile - a | Height - b | Color - c |
|---|---|---|---|
| 3 | Very often | Very tall | Grey |
| 2 | Often | Tall | Black |
| 1 | Moderately | Middle | Brown |
| 0 | Rarely | Short | Blonde |

Encoding of features, values of multiple-valued variables

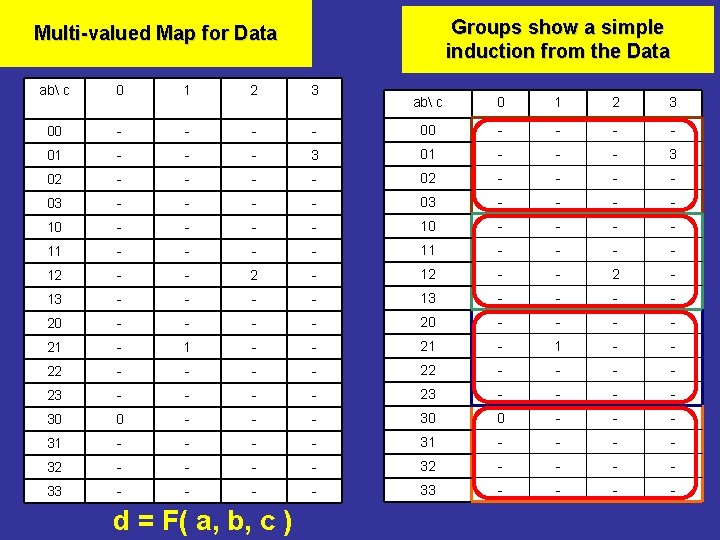

Groups show a simple induction from the Data • Multi-valued Map for Data, d = F(a, b, c): rows are indexed by ab (00 to 33), columns by c (0 to 3). • All cells are don't cares (-) except the four examples: ab=03, c=3: d=3 • ab=12, c=2: d=2 • ab=21, c=1: d=1 • ab=30, c=0: d=0

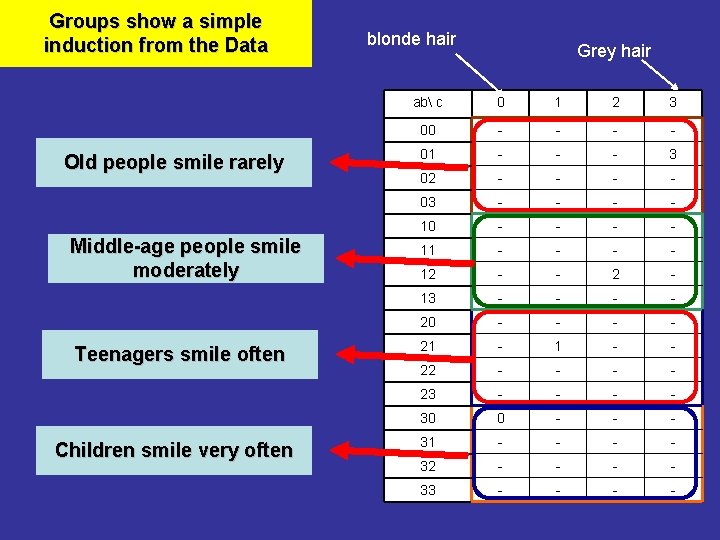

Groups show a simple induction from the Data • Old people smile rarely • Middle-age people smile moderately • Teenagers smile often • Children smile very often • Column labels: blonde hair (c=0), Grey hair (c=3) • Map of d over rows ab and columns c: the cells given by the examples are ab=03, c=3: d=3; ab=12, c=2: d=2; ab=21, c=1: d=1; ab=30, c=0: d=0; the remaining cells are don't cares (-).
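The grouping by smile frequency can be sketched as a search for a single input variable that alone determines the output d on the four examples. The example values follow the "Age Recognition" data; the function names and the choice to test one variable at a time are assumptions, and the real system uses decomposition rather than this simple check.

```python
# The four training examples: a = smile, b = height, c = hair color, d = age.
EXAMPLES = {
    "Joan":  {"a": 3, "b": 0, "c": 0, "d": 0},
    "Mike":  {"a": 2, "b": 1, "c": 1, "d": 1},
    "Peter": {"a": 1, "b": 2, "c": 2, "d": 2},
    "Frank": {"a": 0, "b": 3, "c": 3, "d": 3},
}
AGE_NAMES = ["Kid", "Teenager", "Mid-age", "Old"]


def induce_rule(examples, variable):
    """Return {value: d} if `variable` alone determines d on the examples, else None."""
    rule = {}
    for row in examples.values():
        value, d = row[variable], row["d"]
        if rule.setdefault(value, d) != d:
            return None  # two examples with the same value disagree on d
    return rule


smile_rule = induce_rule(EXAMPLES, "a")


def predict_age(a):
    """Generalize to any person using only the smile value."""
    return AGE_NAMES[smile_rule[a]]
```

Once the rule is induced, every don't-care cell of the map receives a value from its smile group, which is the generalization step: a person who smiles rarely is predicted to be old regardless of height and hair color.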

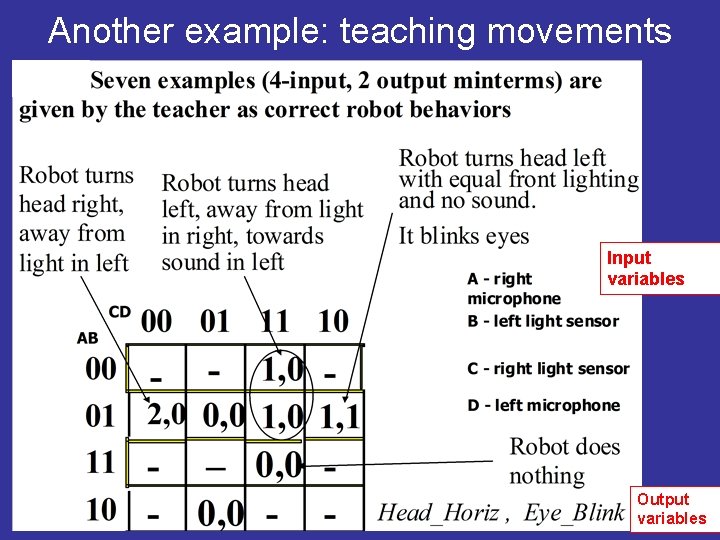

Another example: teaching movements Input variables Output variables

Generalization of the Ashenhurst-Curtis decomposition model

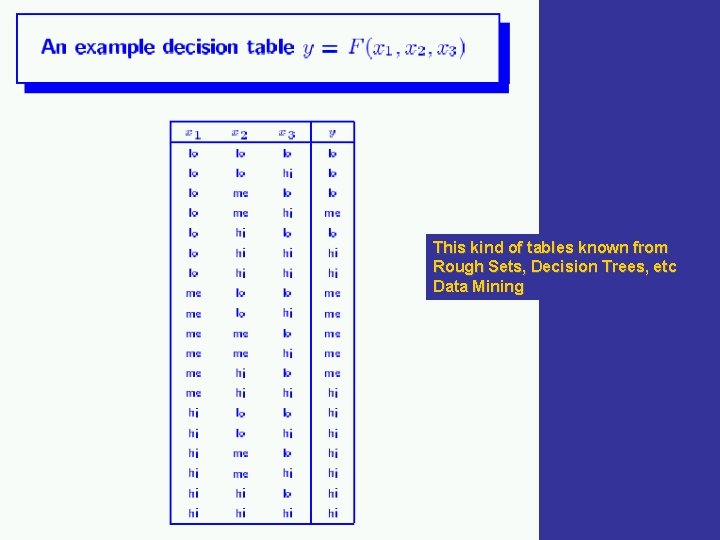

This kind of table is known from Rough Sets, Decision Trees and other Data Mining methods.

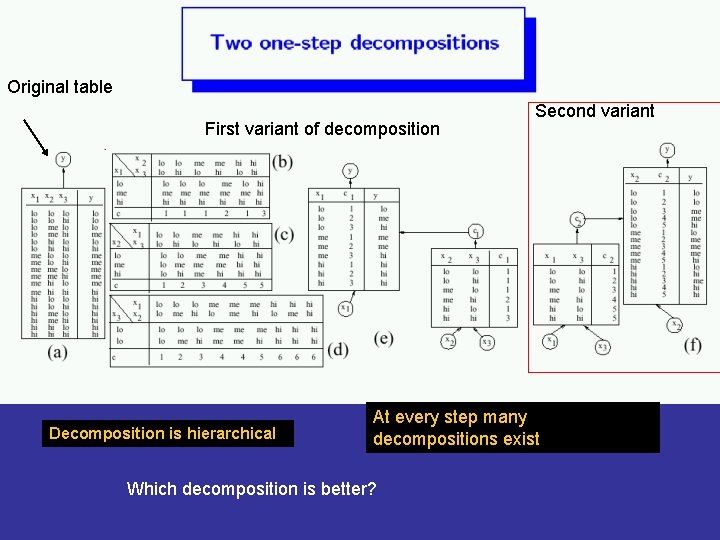

Original table • First variant of decomposition • Second variant • Decomposition is hierarchical • At every step many decompositions exist • Which decomposition is better?

Constructive Induction: Technical Details • U. Wong and M. Perkowski, A New Approach to Robot’s Imitation of Behaviors by Decomposition of Multiple-Valued Relations, Proc. 5th Intern. Workshop on Boolean Problems, Freiberg, Germany, Sept. 19-20, 2002, pp. 265-270. • A. Mishchenko, B. Steinbach and M. Perkowski, An Algorithm for Bi-Decomposition of Logic Functions, Proc. DAC 2001, June 18-22, Las Vegas, pp. 103-108. • A. Mishchenko, B. Steinbach and M. Perkowski, Bi-Decomposition of Multi-Valued Relations, Proc. 10th IWLS, pp. 35-40, Granlibakken, CA, June 12-15, 2001. IEEE Computer Society and ACM SIGDA.

Constructive Induction • Decision Trees, Ashenhurst/Curtis hierarchical decomposition and Bi-Decomposition algorithms are used in our software. • These methods constitute our subset of the MVSIS system developed under Prof. Robert Brayton at the University of California at Berkeley [2]. – The entire MVSIS system can also be used. • The system generates the robot’s behaviors (C program code) from examples given by the users. • This method is used for embedded system design, but we use it specifically for robot interaction.

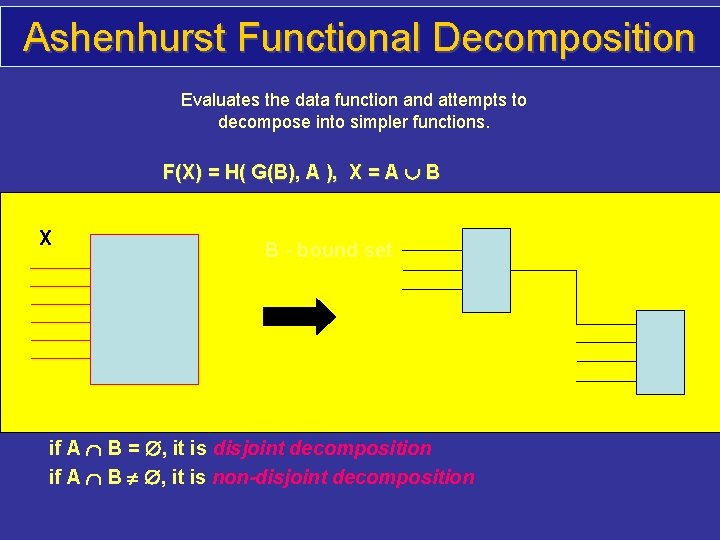

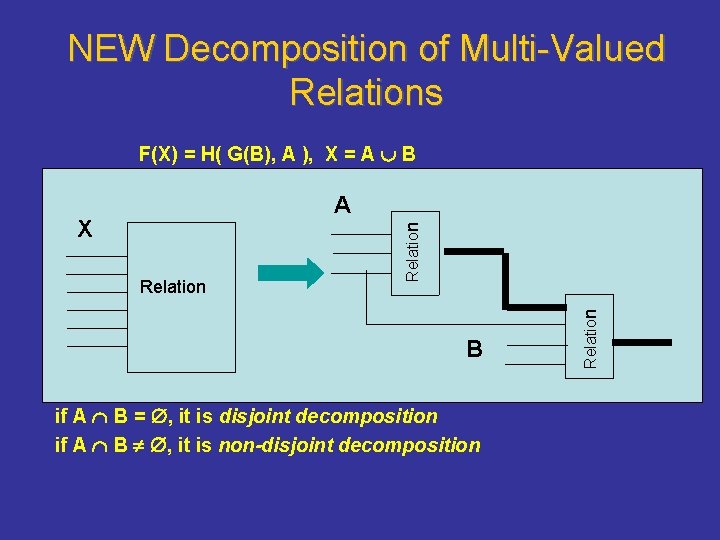

Ashenhurst Functional Decomposition • Evaluates the data function and attempts to decompose it into simpler functions. • $F(X) = H(G(B), A)$, $X = A \cup B$ • B: bound set, A: free set • If $A \cap B = \emptyset$, it is a disjoint decomposition • If $A \cap B \neq \emptyset$, it is a non-disjoint decomposition
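For a completely specified Boolean function, a disjoint decomposition $F(X) = H(G(B), A)$ with a single-output $G$ exists exactly when the decomposition chart (columns indexed by the bound set, rows by the free set) has at most two distinct columns. The sketch below counts distinct columns; the function names and the example function are assumptions, and don't cares and multi-valued variables are not handled here.

```python
from itertools import product


def column_multiplicity(f, n_vars, bound):
    """Count distinct columns of the decomposition chart of f.

    f      -- function taking a tuple of n_vars bits and returning 0 or 1
    bound  -- indices of the bound-set variables (they index the columns);
              the remaining variables form the free set (they index the rows)
    """
    free = [i for i in range(n_vars) if i not in bound]
    columns = set()
    for b_bits in product((0, 1), repeat=len(bound)):
        column = []
        for a_bits in product((0, 1), repeat=len(free)):
            x = [0] * n_vars
            for i, bit in zip(bound, b_bits):
                x[i] = bit
            for i, bit in zip(free, a_bits):
                x[i] = bit
            column.append(f(tuple(x)))
        columns.add(tuple(column))
    return len(columns)


def f(x):
    """Example function: (x0 XOR x1) AND x2, so G(B) = x0 XOR x1 for B = {x0, x1}."""
    return (x[0] ^ x[1]) & x[2]
```

For this example the bound set {x0, x1} gives two distinct columns, so a one-bit G suffices, while the bound set {x0, x2} gives three, so G would need two output bits. Comparing candidate bound sets this way is one simple answer to the question of which decomposition is better.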

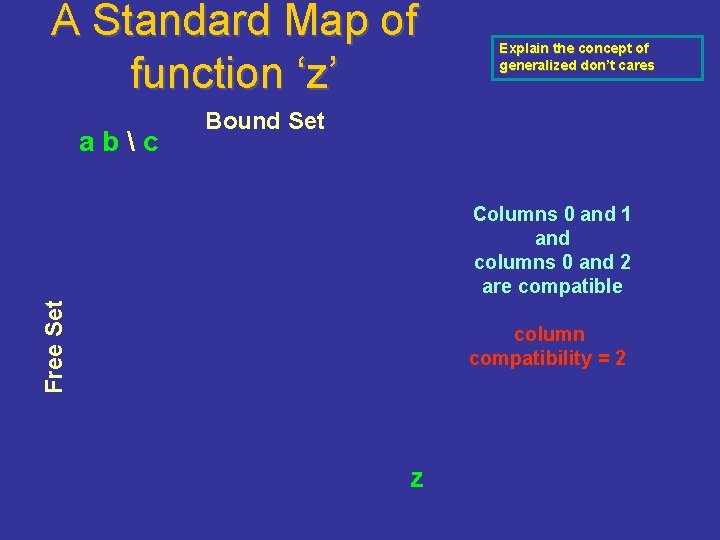

A Standard Map of function ‘z’ • Map variables: abc; output: z • Bound Set, Free Set • Columns 0 and 1 and columns 0 and 2 are compatible • column compatibility = 2 • Explain the concept of generalized don’t cares

NEW Decomposition of Multi-Valued Relations • $F(X) = H(G(B), A)$, $X = A \cup B$ • Relation X, Relation A, Relation B • If $A \cap B = \emptyset$, it is a disjoint decomposition • If $A \cap B \neq \emptyset$, it is a non-disjoint decomposition

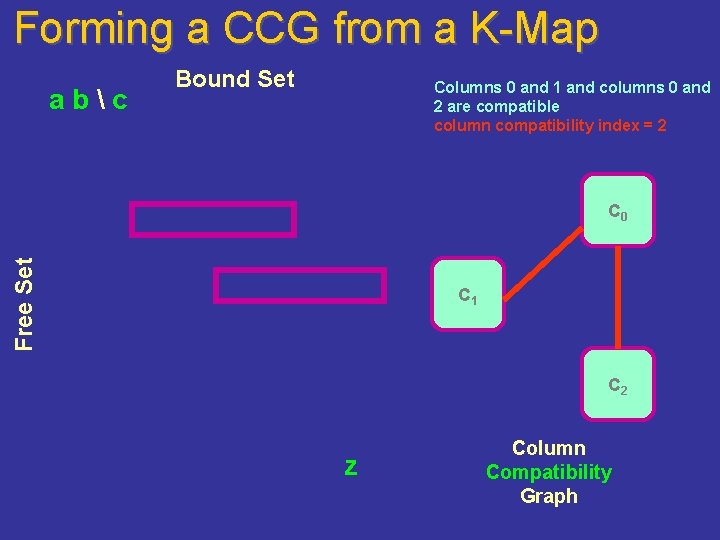

Forming a CCG from a K-Map • Map variables: abc; output: z • Bound Set, Free Set • Columns C0, C1, C2 • Columns 0 and 1 and columns 0 and 2 are compatible • column compatibility index = 2 • Column Compatibility Graph

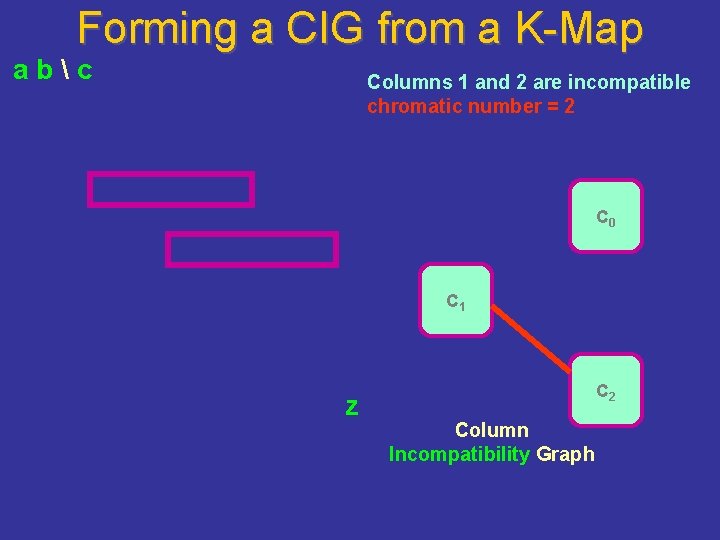

Forming a CIG from a K-Map • Map variables: abc; output: z • Columns C0, C1, C2 • Columns 1 and 2 are incompatible • chromatic number = 2 • Column Incompatibility Graph
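With don't cares, two columns are compatible when they agree in every row where both are specified. The sketch below builds the column incompatibility graph and colors it greedily; the number of colors bounds how many merged columns are needed. The three example columns are hypothetical, chosen only to reproduce the relations stated on the slides (C0 compatible with C1 and C2, C1 and C2 incompatible), and greedy coloring is a stand-in that is not guaranteed to find the chromatic number in general.

```python
from itertools import combinations

DONT_CARE = None


def compatible(col_x, col_y):
    """Columns are compatible if they never hold two different specified values."""
    return all(x is DONT_CARE or y is DONT_CARE or x == y
               for x, y in zip(col_x, col_y))


def incompatibility_graph(columns):
    """Return adjacency sets: an edge joins every pair of incompatible columns."""
    edges = {i: set() for i in range(len(columns))}
    for i, j in combinations(range(len(columns)), 2):
        if not compatible(columns[i], columns[j]):
            edges[i].add(j)
            edges[j].add(i)
    return edges


def greedy_coloring(edges):
    """Give each column the smallest color not used by its incompatible neighbors."""
    colors = {}
    for node in sorted(edges):
        used = {colors[n] for n in edges[node] if n in colors}
        colors[node] = next(c for c in range(len(edges)) if c not in used)
    return colors


# Hypothetical columns C0, C1, C2 (two rows each).
COLUMNS = [(0, DONT_CARE), (0, 1), (0, 0)]
GRAPH = incompatibility_graph(COLUMNS)
COLORS = greedy_coloring(GRAPH)
```

Columns that receive the same color can be merged into one column of the simplified map, so two colors here correspond to a single binary intermediate signal.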

Constructive Induction • A unified internal language is used to describe behaviors in which text generation and facial gestures are unified. • This language is for learned behaviors. • Expressions (programs) in this language are either created by humans or induced automatically from examples given by trainers.

Conclusion. What did we learn • (1) The more degrees of freedom, the better the animation realism. Art and interesting behavior emerge above a certain threshold of complexity. • (2) Synchronization of spoken text and head (especially jaw) movements is important but difficult. Each robot is very different. • (3) Gestures and speech intonation of the head should be slightly exaggerated: superrealism, not realism.

Conclusion. What did we learn (cont.) • (4) Noise of servos: – the sound should be loud to cover noises coming from motors and gears and for a better theatrical effect. – noise of servos can also be reduced by appropriate animation and synchronization. • (5) TTS should be enhanced with some new sound-generating system. What? • (6) The best available ASR and TTS packages should be applied. • (7) OpenCV from Intel is excellent. • (8) Use puppet theatre experiences. We need artists. The weakness of technology can become the strength of the art in the hands of an artist.

Conclusion. What did we learn (cont.) • (9) Because learning is too slow, improved parameterized learning methods should be developed, still based on constructive induction. • (10) Open question: funny versus beautiful. • (11) Either high-quality voice recognition from a headset or low-quality recognition in a noisy room. YOU CANNOT HAVE BOTH WITH CURRENT ASR TOOLS. • (12) Low reliability of the latex skins and of this entire technology is an issue.

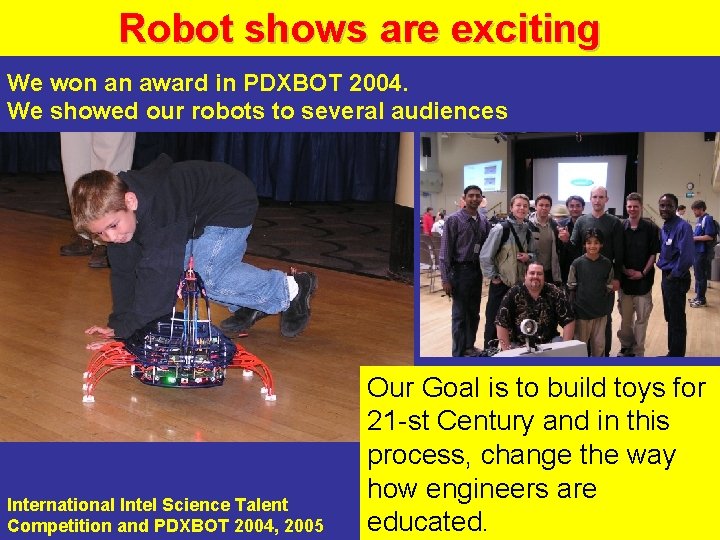

Robot shows are exciting • We won an award in PDXBOT 2004. • We showed our robots to several audiences: International Intel Science Talent Competition and PDXBOT 2004, 2005. • Our goal is to build toys for the 21st century and, in this process, change the way engineers are educated.

What to remember? • Robot as a mapping from inputs to outputs • Braitenberg Vehicles • State machines, grammars and probabilistic state machines • Natural language conversation with a robot • Image processing for an interactive robot • Constructive induction for behavior and language acquisition

Projects: • Project 1 – Lego NXT. 2 people. Editor for state-machine and probabilistic-state-machine based behavior of mobile robots with sensors. • Project 2 – Vision for KHR-1 robot; Imitation. 2 people. Matthias Sunardi – group leader. • Project 3 – Head design for a humanoid robot

Projects: • Project 4 – Leg design for a humanoid robot • Project 5 – Hand design for a humanoid robot • Project 6 – EyeSim simulator – no robot needed. • Project 7 – Conversation with a humanoid robot (dialog and speech).

Projects: • Project 8 – Editor for an animatronic robot theatre • Project 9 – Quantum-Computer Controlled Robot • Project 10 – • Project 11 –
