Towards Privacy in Public Databases
Shuchi Chawla, Cynthia Dwork, Frank McSherry, Adam Smith, Hoeteck Wee

Database Privacy
- A “Census” problem
  - Individuals provide information
  - Census Bureau publishes sanitized records
- Privacy is legally mandated; what utility can we achieve?
- Inherent Privacy vs. Utility trade-off
- Our goal:
  - Find a middle path:
    - preserve macroscopic properties
    - “disguise” individual records (containing private info)
  - Establish a framework for meaningful comparison of techniques

![What about Secure Function Evaluation?](http://slidetodoc.com/presentation_image/fb7a082c890418c0162fdf26252a0eda/image-3.jpg)

What about Secure Function Evaluation?
- Secure Function Evaluation [Yao, GMW]
  - Allows parties to collaboratively compute a function f of their private inputs: output = f(a, b, c, …) (e.g., output = sum(a, b, c, …))
  - Each player learns only what can be deduced from the output and her own input to f
- SFE and privacy are complementary problems: one does not imply the other
  - SFE: Given what must be preserved, protect everything else
  - Privacy: Given what must be protected, preserve as much as you can

This talk…
- A formalism for privacy
  - What we mean by privacy
  - A good sanitization procedure
- Results
  - Histograms and Perturbations
- Subsequent work; Open problems

![What do we mean by Privacy?](http://slidetodoc.com/presentation_image/fb7a082c890418c0162fdf26252a0eda/image-5.jpg)

What do we mean by Privacy?
- [Ruth Gavison] Protection from being brought to the attention of others
  - inherently valuable
  - attention invites further privacy loss
- Privacy is assured to the extent that one blends in with the crowd
- Appealing definition; can be converted into a precise mathematical statement…

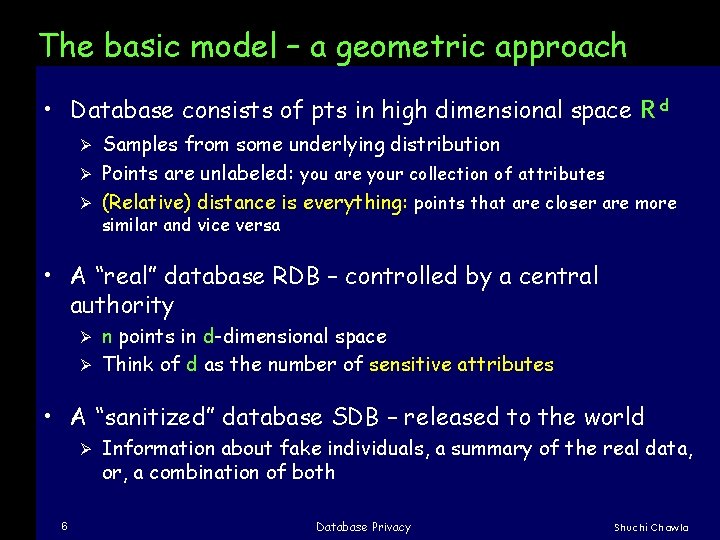

The basic model: a geometric approach
- Database consists of points in high-dimensional space $\mathbb{R}^d$
  - Samples from some underlying distribution
  - Points are unlabeled: you are your collection of attributes
  - (Relative) distance is everything: points that are closer are more similar and vice versa
- A “real” database RDB, controlled by a central authority
  - n points in d-dimensional space
  - Think of d as the number of sensitive attributes
- A “sanitized” database SDB, released to the world
  - Information about fake individuals, a summary of the real data, or a combination of both

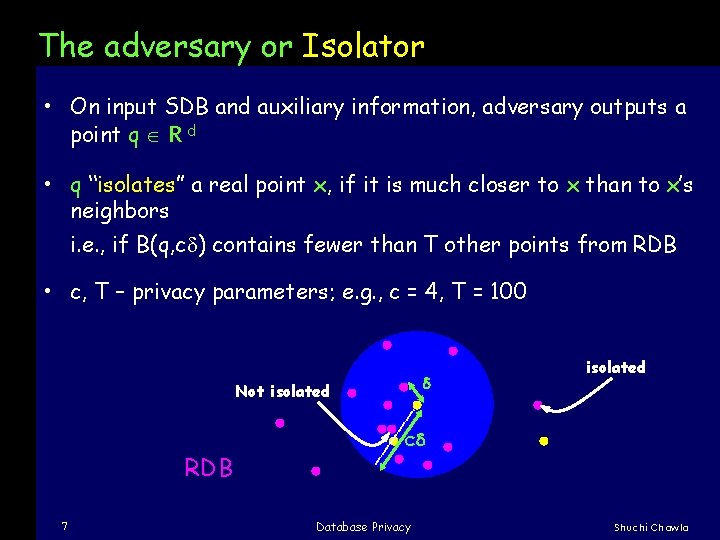

The adversary or Isolator
- On input SDB and auxiliary information, the adversary outputs a point $q \in \mathbb{R}^d$
- q “isolates” a real point x if it is much closer to x than to x’s neighbors, i.e., if $B(q, c\delta)$ contains fewer than T other points from RDB, where $\delta = \|q - x\|$
- c, T are privacy parameters; e.g., c = 4, T = 100

[Figure: points of RDB with the radii $\delta$ and $c\delta$ marked around q; one example labeled “not isolated” and one labeled “isolated”.]
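
The isolation condition can be checked directly on small examples. The following is a minimal sketch, not code from the talk: the function name `isolates`, the use of a closed ball, and the convention that x is identified by its index in RDB are all assumptions made for illustration.

```python
import math

def isolates(q, i, rdb, c, T):
    """Return True if q (c, T)-isolates the point rdb[i].

    Assumption: B(q, c*delta) is taken as a closed ball, and "other
    points" means every point of rdb except rdb[i] itself.
    """
    delta = math.dist(q, rdb[i])          # delta = ||q - x||
    radius = c * delta
    others = sum(
        1
        for j, p in enumerate(rdb)
        if j != i and math.dist(q, p) <= radius
    )
    return others < T

# Hypothetical toy database in d = 2.
toy_rdb = [(0.0, 0.0), (1.0, 0.0), (10.0, 10.0)]

# A guess very close to x = (0, 0): the ball of radius 4 * 0.1 holds no other point.
close_guess = isolates((0.1, 0.0), 0, toy_rdb, c=4, T=1)

# A sloppier guess: the ball of radius 4 * 0.5 also captures (1, 0).
sloppy_guess = isolates((0.5, 0.0), 0, toy_rdb, c=4, T=1)
```

With T = 1 the adversary succeeds only when no other real point falls in the enlarged ball, which is why the closer guess isolates x and the sloppier one does not.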

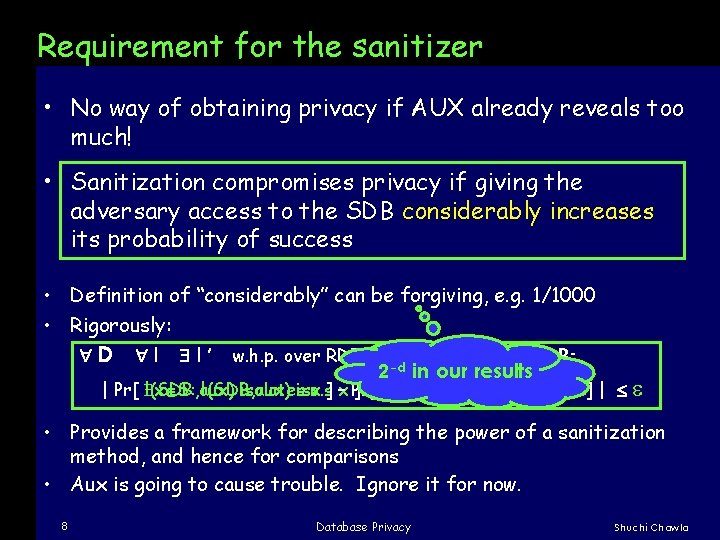

Requirement for the sanitizer
- No way of obtaining privacy if AUX already reveals too much!
- Sanitization compromises privacy if giving the adversary access to the SDB considerably increases its probability of success
- Definition of “considerably” can be forgiving, e.g. 1/1000
- Rigorously: $\forall D\ \forall I\ \exists I'$ such that, w.h.p. over $\mathrm{RDB} \sim D$, $\forall\, \mathrm{aux}\ \forall x \in \mathrm{RDB}$:
  $\left|\, \Pr[\, I(\mathrm{SDB}, \mathrm{aux}) \text{ isolates } x \,] - \Pr[\, I'(\mathrm{aux}) \text{ isolates } x \,] \,\right| \le \varepsilon$, with $\varepsilon = 2^{-d}$ in our results
  - Set version: $\forall S \subseteq \mathrm{RDB}$, the same bound with the event “$\exists x \in S$: the adversary isolates x”
- Provides a framework for describing the power of a sanitization method, and hence for comparisons
- Aux is going to cause trouble. Ignore it for now.

![A “bad” sanitizer that passes](http://slidetodoc.com/presentation_image/fb7a082c890418c0162fdf26252a0eda/image-9.jpg)

A “bad” sanitizer that passes [Abhinandan Das, Cornell]
- Disguise one attribute extremely well; leave the others in the clear
- Without info about the special attribute, the adversary cannot “isolate” any point
- However, he knows all other attributes exactly!
- What goes wrong?
  - The assumption that “distance is everything”
  - No isolation ⇒ no privacy breach, even if the adversary knows a lot of information

Utility goals
- Desirable results
  - Macroscopic properties (e.g. means) should be preserved
  - Running statistical tests / data-analysis algorithms should return results similar to those obtained from real data
- We show:
  - Concrete point-wise results on histograms and clustering algorithms

This talk…
- A formalism for privacy
  - What we mean by privacy
  - A good sanitization procedure
- Results
  - Histograms and Perturbations
- Subsequent work; Open problems

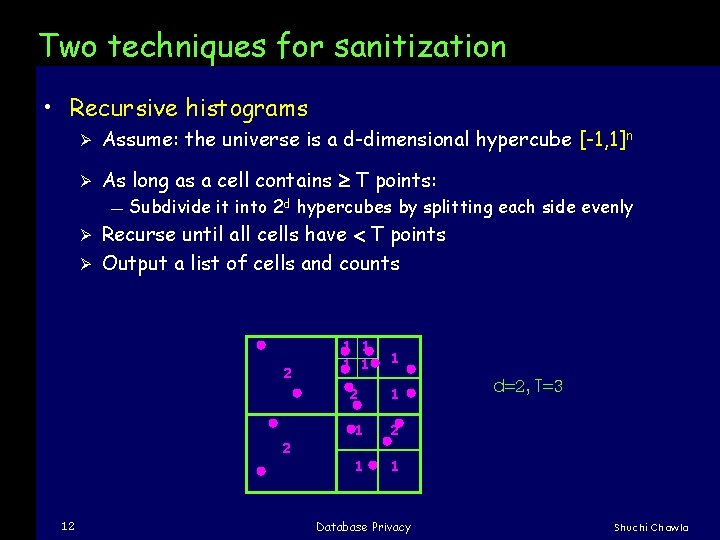

Two techniques for sanitization
- Recursive histograms
  - Assume: the universe is a d-dimensional hypercube $[-1, 1]^d$
  - As long as a cell contains at least T points:
    - Subdivide it into $2^d$ hypercubes by splitting each side evenly
  - Recurse until all cells have fewer than T points
  - Output a list of cells and counts

[Figure: recursive histogram for d = 2, T = 3, with a count shown in each cell.]
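
The subdivision procedure above can be sketched as follows. This is an illustrative reading of the slide rather than the authors' implementation: the function name `recursive_histogram`, the tie-breaking rule for points on a splitting plane, and the `max_depth` guard against duplicate points are assumptions, and enumerating all $2^d$ children is only practical for small d.

```python
import itertools

def recursive_histogram(points, T, d, max_depth=32):
    """Split [-1, 1]^d until every cell holds fewer than T points (T >= 1).

    Returns a list of ((lo, hi), count) pairs, one per leaf cell.
    Assumption: a point lying exactly on a splitting plane goes to the
    upper half; max_depth stops runaway splitting on duplicate points.
    """
    def split(cell_points, lo, hi, depth):
        if len(cell_points) < T or depth >= max_depth:
            return [((lo, hi), len(cell_points))]
        mid = tuple((a + b) / 2 for a, b in zip(lo, hi))
        # Group points by which half of the cell they fall in on each axis.
        buckets = {}
        for p in cell_points:
            key = tuple(p[k] >= mid[k] for k in range(d))
            buckets.setdefault(key, []).append(p)
        cells = []
        for key in itertools.product((False, True), repeat=d):
            sub_lo = tuple(mid[k] if key[k] else lo[k] for k in range(d))
            sub_hi = tuple(hi[k] if key[k] else mid[k] for k in range(d))
            cells.extend(split(buckets.get(key, []), sub_lo, sub_hi, depth + 1))
        return cells

    return split(list(points), (-1.0,) * d, (1.0,) * d, 0)

# Hypothetical data for d = 2, T = 3 (the parameters used on the slide).
example_points = [(-0.5, -0.5), (-0.6, -0.4), (0.5, 0.5),
                  (0.6, 0.4), (0.7, 0.7), (-0.5, 0.5)]
cells = recursive_histogram(example_points, T=3, d=2)
counts = [n for _, n in cells]
```

In this example only the upper-right quadrant is dense enough to be split again, so the output is finer exactly where the data is concentrated.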

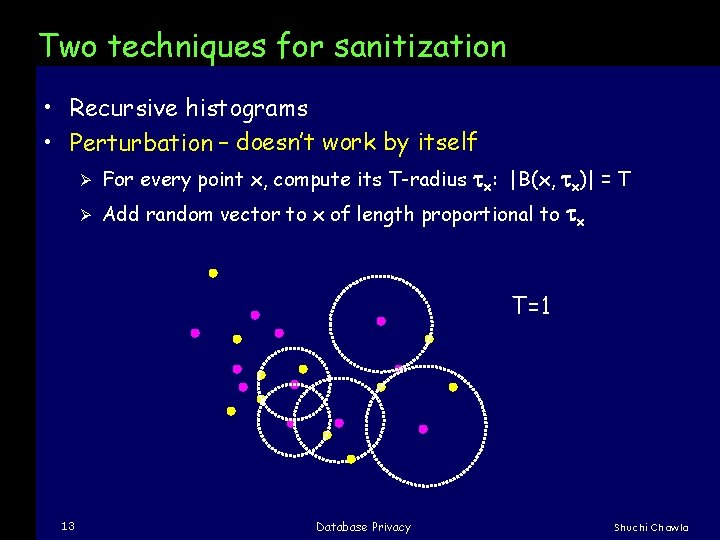

Two techniques for sanitization
- Recursive histograms
- Perturbation (doesn’t work by itself)
  - For every point x, compute its T-radius $t_x$: $|B(x, t_x)| = T$
  - Add a random vector to x of length proportional to $t_x$

[Figure: perturbation example with T = 1.]
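
A minimal sketch of this perturbation step, under stated assumptions: the T-radius is taken as the distance from x to its T-th nearest other point (so x itself is not counted), the noise direction is uniform on the sphere, and the noise length is exactly `scale * t_x`. The names `t_radius`, `perturb`, and `scale` are hypothetical; the slide only says the length is proportional to $t_x$.

```python
import math
import random

def t_radius(i, points, T):
    """Distance from points[i] to its T-th nearest other point.

    Assumption: the point itself is not counted in |B(x, t_x)| = T.
    Requires 1 <= T <= len(points) - 1.
    """
    dists = sorted(
        math.dist(points[i], p) for j, p in enumerate(points) if j != i
    )
    return dists[T - 1]

def perturb(points, T, scale=1.0, rng=None):
    """Move each point by a random vector of length scale * t_x."""
    rng = rng or random.Random()
    out = []
    for i, x in enumerate(points):
        t = t_radius(i, points, T)
        # A normalized Gaussian vector gives a uniformly random direction.
        g = [rng.gauss(0.0, 1.0) for _ in x]
        norm = math.sqrt(sum(v * v for v in g))
        out.append(tuple(xi + scale * t * gi / norm for xi, gi in zip(x, g)))
    return out

# Hypothetical toy data; T = 1 as in the slide's figure.
toy_points = [(0.0, 0.0), (1.0, 0.0), (0.0, 3.0)]
noisy = perturb(toy_points, T=1, rng=random.Random(0))
```

Points in dense regions have a small T-radius and move little, while isolated points are moved further, which is the intent of scaling the noise by $t_x$.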

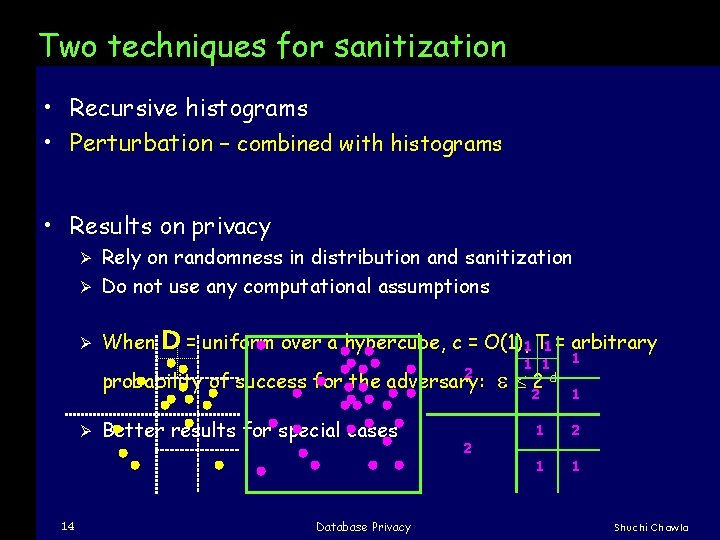

Two techniques for sanitization
- Recursive histograms
- Perturbation (combined with histograms)
- Results on privacy
  - Rely on randomness in distribution and sanitization
  - Do not use any computational assumptions
  - When D = uniform over a hypercube, c = O(1), T = arbitrary: probability of success for the adversary is $2^{-d}$
  - Better results for special cases

Key results on utility
- Perturbation-based sanitization: allows various clustering algorithms to perform nearly as well as on real data
  - Spectral techniques
  - Diameter-based clusterings
- Histograms: a popular summarization technique in statistics
  - Recursive histograms have the benefit of providing more detail where required
  - Provide density information even without the counts
  - No randomness involved!

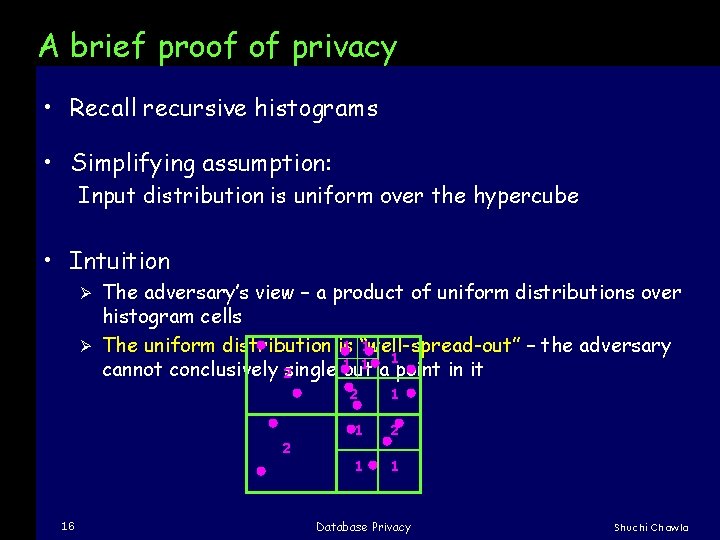

A brief proof of privacy
- Recall recursive histograms
- Simplifying assumption: input distribution is uniform over the hypercube
- Intuition
  - The adversary’s view: a product of uniform distributions over histogram cells
  - The uniform distribution is “well-spread-out”: the adversary cannot conclusively single out a point in it

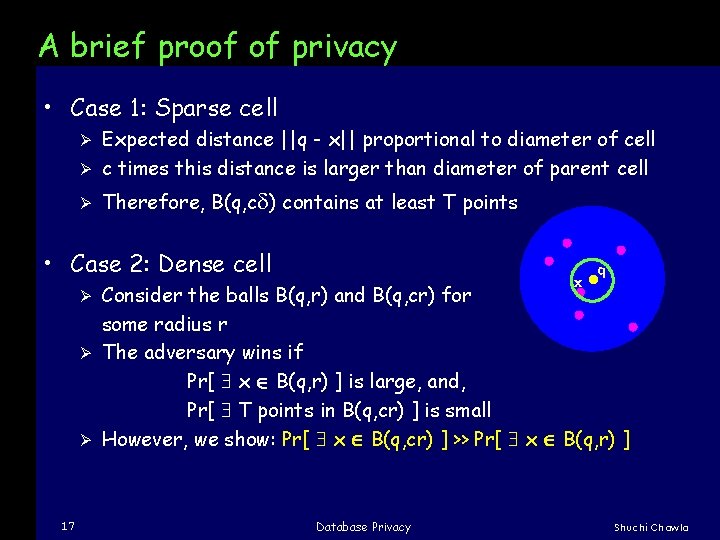

A brief proof of privacy
- Case 1: Sparse cell
  - Expected distance $\|q - x\|$ is proportional to the diameter of the cell
  - c times this distance is larger than the diameter of the parent cell
  - Therefore, $B(q, c\delta)$, with $\delta = \|q - x\|$, contains at least T points
- Case 2: Dense cell
  - Consider the balls $B(q, r)$ and $B(q, cr)$ for some radius r
  - The adversary wins if $\Pr[\, x \in B(q, r) \,]$ is large and $\Pr[\, \ge T \text{ points in } B(q, cr) \,]$ is small
  - However, we show: $\Pr[\, x \in B(q, cr) \,] \gg \Pr[\, x \in B(q, r) \,]$

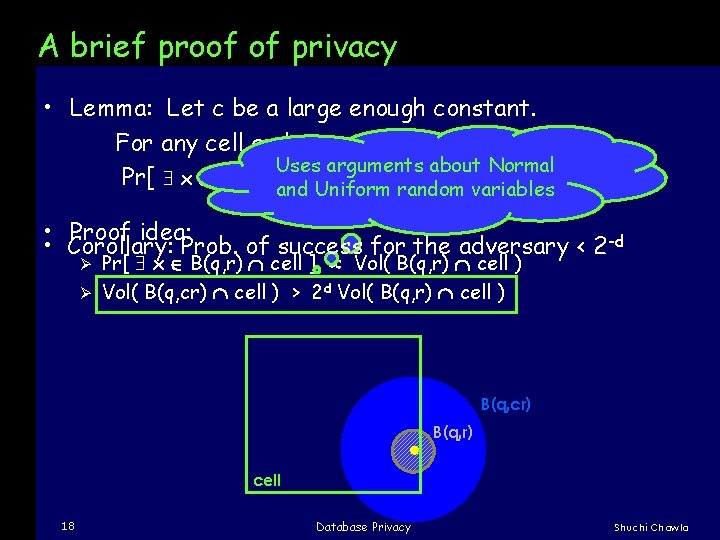

A brief proof of privacy
- Lemma: Let c be a large enough constant. For any cell and any $r < \mathrm{diam}(\text{cell})/c$,
  $\Pr[\, x \in B(q, cr) \cap \text{cell} \,] > 2^d \, \Pr[\, x \in B(q, r) \cap \text{cell} \,]$
- Proof idea:
  - $\Pr[\, x \in B(q, r) \cap \text{cell} \,] \propto \mathrm{Vol}(B(q, r) \cap \text{cell})$
  - $\mathrm{Vol}(B(q, cr) \cap \text{cell}) > 2^d \, \mathrm{Vol}(B(q, r) \cap \text{cell})$
  - Uses arguments about Normal and Uniform random variables
- Corollary: Prob. of success for the adversary $< 2^{-d}$
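
As a sanity check on where the $2^d$ factor comes from, consider the easiest special case, in which both balls lie entirely inside the cell. This is an assumption made here for illustration; the lemma itself also has to handle balls that are clipped by the cell boundary. Writing $V_d$ for the volume of the unit ball in $\mathbb{R}^d$ (a symbol not used on the slides), the volumes scale with the d-th power of the radius:

```latex
\[
  \frac{\mathrm{Vol}\bigl(B(q, cr)\bigr)}{\mathrm{Vol}\bigl(B(q, r)\bigr)}
  = \frac{V_d \,(cr)^d}{V_d \, r^d}
  = c^d \;\ge\; 2^d
  \qquad \text{whenever } c \ge 2 .
\]
```

Under the uniform distribution, probability is proportional to volume, so in this unclipped case x is at least $2^d$ times more likely to land in the larger ball than in the smaller one.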

This talk…
- A formalism for privacy
  - What we mean by privacy
  - A good sanitization procedure
- Results
  - Histograms and Perturbations
- Subsequent work; Open problems

Follow-up work
- Isolation in few dimensions
  - Adversary must be more and more accurate in fewer dimensions
- Randomized recursive histograms [Chawla, Dwork, McSherry, Talwar]
  - Similar privacy guarantees for “nearly-uniform” distributions over “well-rounded” universes
  - Preserve distances between pairs of points to a reasonable accuracy (additive error depending on T)
- General-case impossibility
  - Cannot allow arbitrary AUX: for any utility and definition of privacy, there exists an AUX that prevents privacy-preserving sanitization

What about the real world?
- Lessons from the abstract model
  - High dimensionality is our friend
  - Histograms are powerful; spherical perturbations promising
  - Need to scale different attributes appropriately, so that data is well-rounded
- Moving towards real data
  - Outliers
    - Our notion of c-isolation deals with them; existence may be disclosed
  - Discrete attributes
    - Possible solution: convert them into real-valued attributes by adding noise?
  - The low-dimensional case
    - Is it inherently impossible?
    - Dinur and Nissim show impossibility for 1-dimensional data

Questions?
