Towards Automatic Scoring of a Test of Spoken

- Slides: 13

Towards Automatic Scoring of a Test of Spoken Language with Heterogeneous Task Types
Klaus Zechner, Xiaoming Xi
Educational Testing Service
Listening. Learning. Leading.
Copyright © 2004 Educational Testing Service

Motivation

• Growing demand for English as a Second/Foreign Language (ESL/EFL) worldwide (business, academia, travel…)
• Growing demand for testing the productive skills of ESL/EFL learners (speaking, writing)
• Speaking and writing are usually scored by trained human raters, which is slow, expensive, and somewhat subjective
• Automatic speech scoring so far: either only high-entropy or only low-entropy speech
• THT (Test with Heterogeneous Tasks): all levels of linguistic entropy

Outline

• Related work
• THT tasks
• ASR adaptation and optimization
• Speech features
• Scoring models
• Summary

Related Work

• Pronunciation testing (Franco et al.)
• Feature-based speech proficiency scoring (Cucchiarini et al.)
• Speaking tests using simple tasks (reading, repeating, opposites…) (Bernstein et al.)
• Speaking tests with high entropy tasks (TOEFL® Practice Online; Zechner et al.)

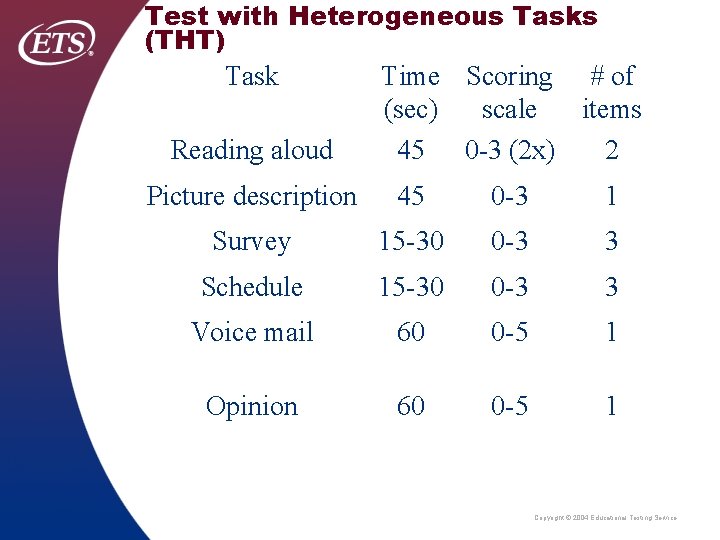

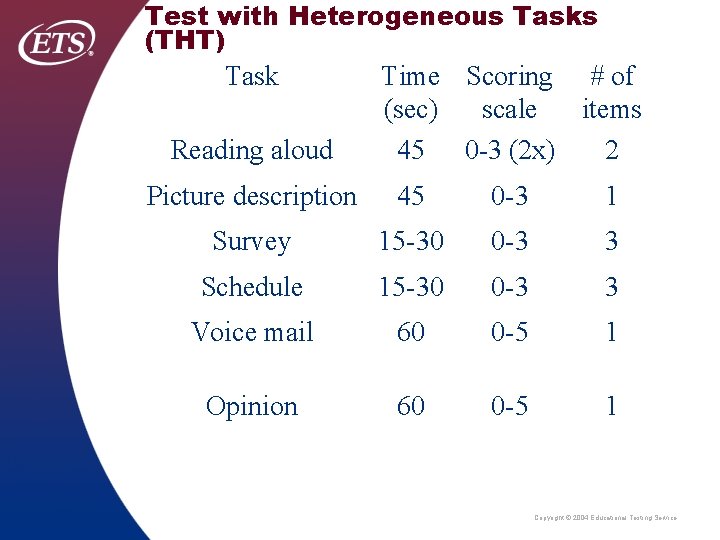

Test with Heterogeneous Tasks (THT)

| Task | Time (sec) | Scoring scale | # of items |
|---|---|---|---|
| Reading aloud | 45 | 0-3 (2x) | 2 |
| Picture description | 45 | 0-3 | 1 |
| Survey | 15-30 | 0-3 | 3 |
| Schedule | 15-30 | 0-3 | 3 |
| Voice mail | 60 | 0-5 | 1 |
| Opinion | 60 | 0-5 | 1 |

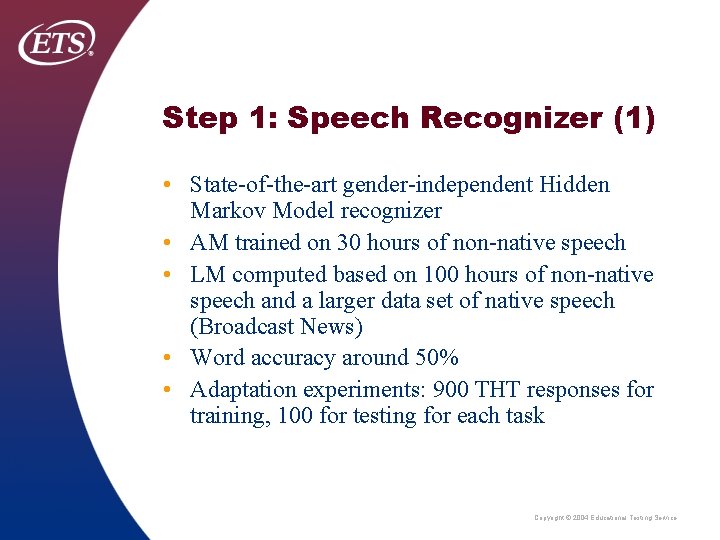

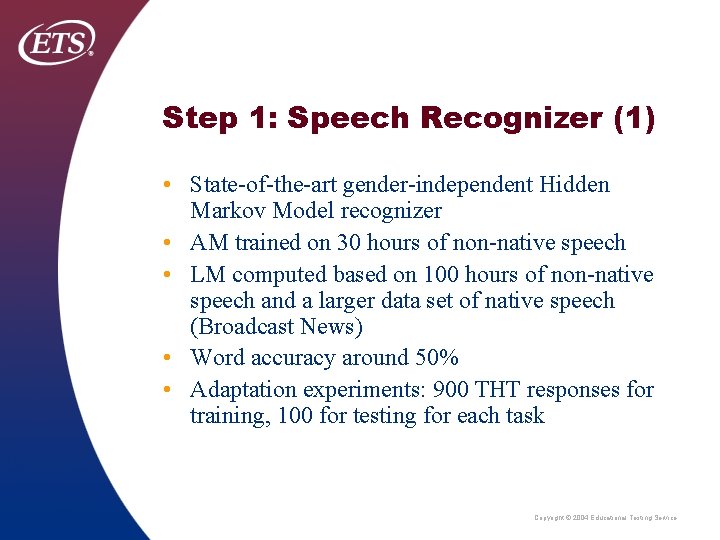

Step 1: Speech Recognizer (1)

• State-of-the-art gender-independent Hidden Markov Model recognizer
• Acoustic model (AM) trained on 30 hours of non-native speech
• Language model (LM) computed based on 100 hours of non-native speech and a larger data set of native speech (Broadcast News)
• Word accuracy around 50%
• Adaptation experiments: for each task, 900 THT responses for training and 100 for testing

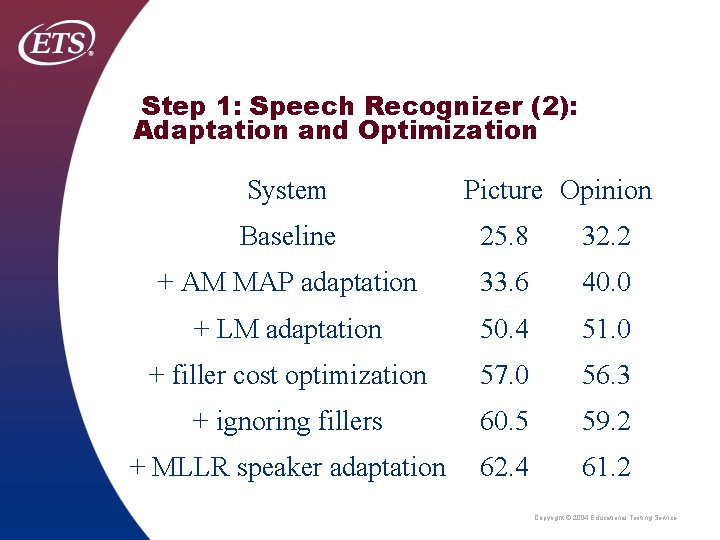

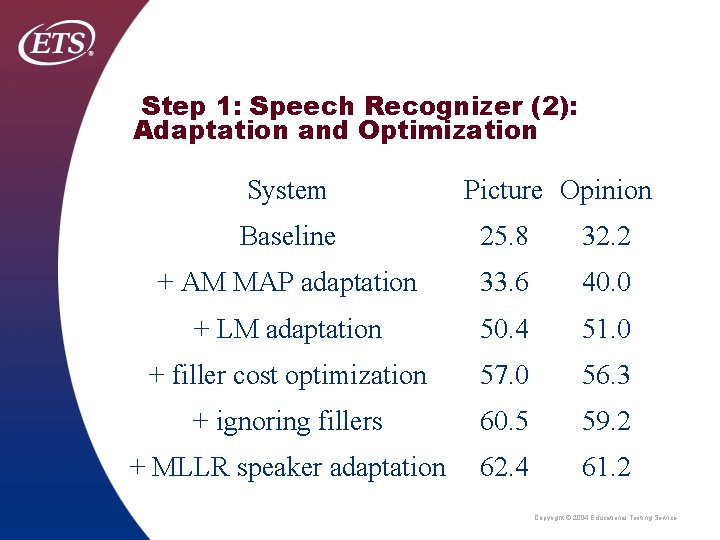

Step 1: Speech Recognizer (2): Adaptation and Optimization

| System | Picture | Opinion |
|---|---|---|
| Baseline | 25.8 | 32.2 |
| + AM MAP adaptation | 33.6 | 40.0 |
| + LM adaptation | 50.4 | 51.0 |
| + filler cost optimization | 57.0 | 56.3 |
| + ignoring fillers | 60.5 | 59.2 |
| + MLLR speaker adaptation | 62.4 | 61.2 |
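The slides report recognizer quality as word accuracy but do not define it. A minimal sketch, assuming the common definition (reference words minus substitutions, deletions, and insertions, divided by reference words) and assuming that "ignoring fillers" means dropping filler tokens from both transcripts before alignment. The function name and the filler set are hypothetical, not taken from the original system.

```python
def word_accuracy(reference, hypothesis, fillers=frozenset()):
    """Return word accuracy in percent: 100 * (N - S - D - I) / N.

    `fillers` is a hypothetical set of tokens (e.g. "uh", "um") that are
    removed from both transcripts before alignment.
    """
    ref = [w for w in reference.lower().split() if w not in fillers]
    hyp = [w for w in hypothesis.lower().split() if w not in fillers]
    if not ref:
        raise ValueError("reference has no words to score")

    # Word-level Levenshtein distance, computed one row at a time.
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i]
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr.append(min(prev[j] + 1,           # deletion
                            curr[j - 1] + 1,       # insertion
                            prev[j - 1] + cost))   # substitution or match
        prev = curr

    errors = prev[-1]
    return 100.0 * (len(ref) - errors) / len(ref)


if __name__ == "__main__":
    ref = "the man is uh reading a book"
    hyp = "the man um is reading book"
    print(word_accuracy(ref, hyp))
    print(word_accuracy(ref, hyp, fillers=frozenset({"uh", "um"})))
```

Under this definition, fillers that the recognizer misplaces or misses count as errors unless they are filtered out, which is one plausible reason for the gain in the "ignoring fillers" row.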

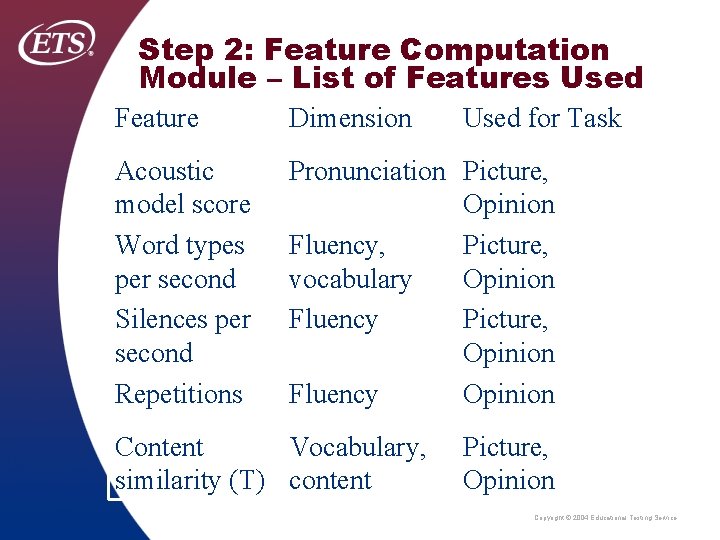

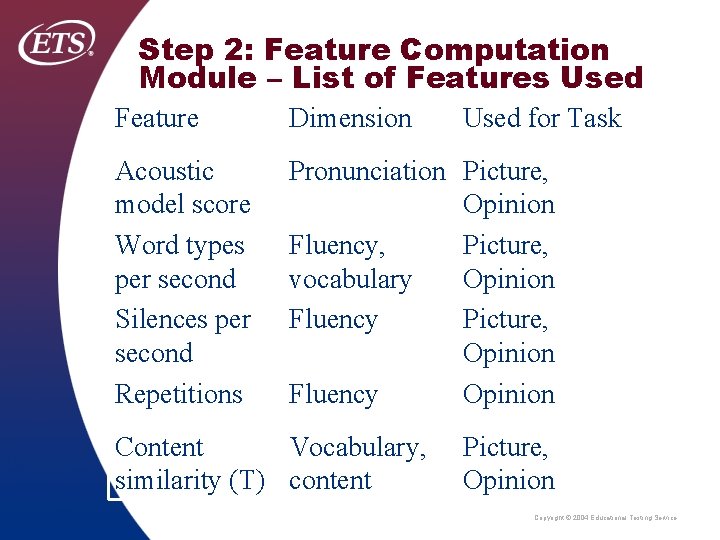

Step 2: Feature Computation Module – List of Features Used

| Feature | Dimension | Used for Task |
|---|---|---|
| Acoustic model score | Pronunciation | Picture, Opinion |
| Word types per second | Fluency, vocabulary | Picture, Opinion |
| Silences per second | Fluency | Picture, Opinion |
| Repetitions | Fluency | Opinion |
| Content similarity (T) | Vocabulary, content | Picture, Opinion |
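Three of the listed features can be computed directly from time-aligned recognizer output. The sketch below assumes a hypothetical format (a list of tokens with start and end times, silences marked as `<sil>`) and counts a repetition as a word immediately followed by itself. The slides do not specify either detail, so this illustrates the idea and is not the original feature module.

```python
from dataclasses import dataclass

SILENCE = "<sil>"  # hypothetical silence marker in the recognizer output


@dataclass
class Token:
    text: str
    start: float  # seconds
    end: float    # seconds


def fluency_features(tokens):
    """Compute word types per second, silences per second, and repetitions."""
    if not tokens:
        raise ValueError("empty recognizer output")
    duration = tokens[-1].end - tokens[0].start
    if duration <= 0:
        raise ValueError("response duration must be positive")

    words = [t.text.lower() for t in tokens if t.text != SILENCE]
    silences = sum(1 for t in tokens if t.text == SILENCE)
    # Assumption: a repetition is a word immediately followed by itself.
    repetitions = sum(1 for a, b in zip(words, words[1:]) if a == b)

    return {
        "word_types_per_second": len(set(words)) / duration,
        "silences_per_second": silences / duration,
        "repetitions": repetitions,
    }


if __name__ == "__main__":
    response = [
        Token("the", 0.0, 0.3),
        Token("the", 0.3, 0.6),
        Token(SILENCE, 0.6, 1.2),
        Token("man", 1.2, 1.6),
        Token("reads", 1.6, 2.0),
    ]
    print(fluency_features(response))
```

Because the features are computed from recognizer hypotheses and not from human transcripts, their reliability depends on the word accuracy reached in Step 1.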

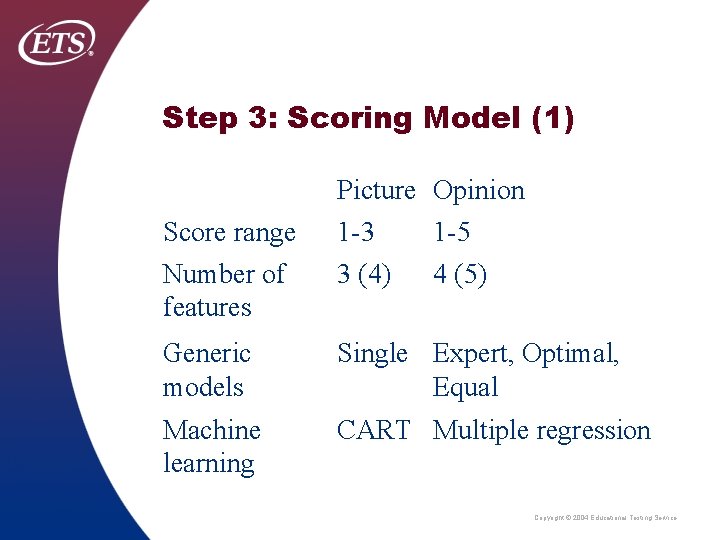

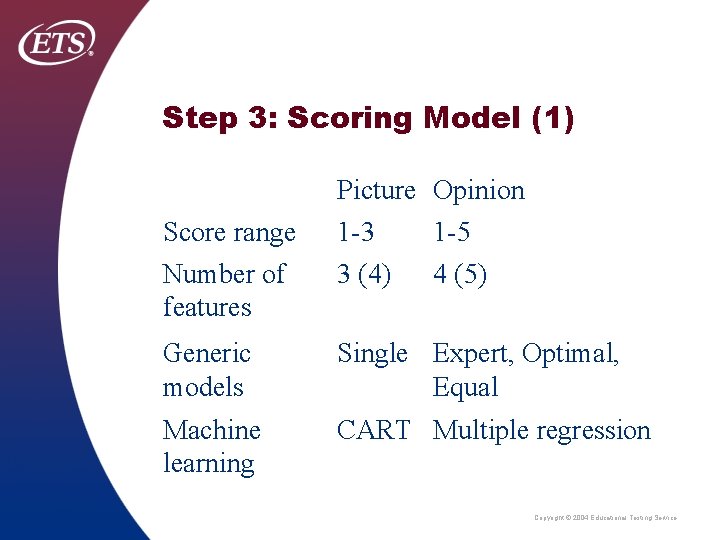

Step 3: Scoring Model (1)

| | Picture | Opinion |
|---|---|---|
| Score range | 1-3 | 1-5 |
| Number of features | 3 (4) | 4 (5) |
| Generic models | Single | Expert, Optimal, Equal |
| Machine learning | CART | Multiple regression |
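One way to read the Equal, Expert, and Optimal models is as different weightings of a linear combination of features. That reading is an assumption; the slide only names the models. The sketch below shows such a combination with equal weights on already standardized features, followed by rounding and clipping to the score range. The function name, the intercept, and the feature values are hypothetical.

```python
import math


def predict_score(features, weights, intercept, low, high):
    """Return (unrounded, rounded) score from a weighted sum of features.

    The rounded score is rounded half up and clipped to [low, high].
    """
    if len(features) != len(weights):
        raise ValueError("features and weights must have the same length")
    raw = intercept + sum(w * x for w, x in zip(weights, features))
    rounded = min(high, max(low, math.floor(raw + 0.5)))
    return raw, rounded


if __name__ == "__main__":
    # Four standardized feature values for one response (illustrative only).
    features = [0.5, -0.2, 1.0, 0.3]
    equal_weights = [1.0 / len(features)] * len(features)
    print(predict_score(features, equal_weights, intercept=3.0, low=1, high=5))
```

In this reading, expert weights would be set by hand and optimal weights would be fitted by multiple regression on human scores. Keeping both the unrounded and the rounded prediction makes it possible to report both kinds of correlation.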

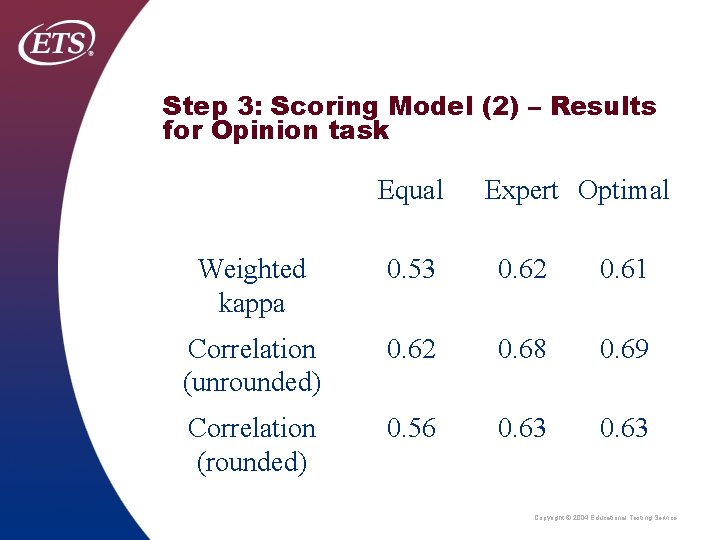

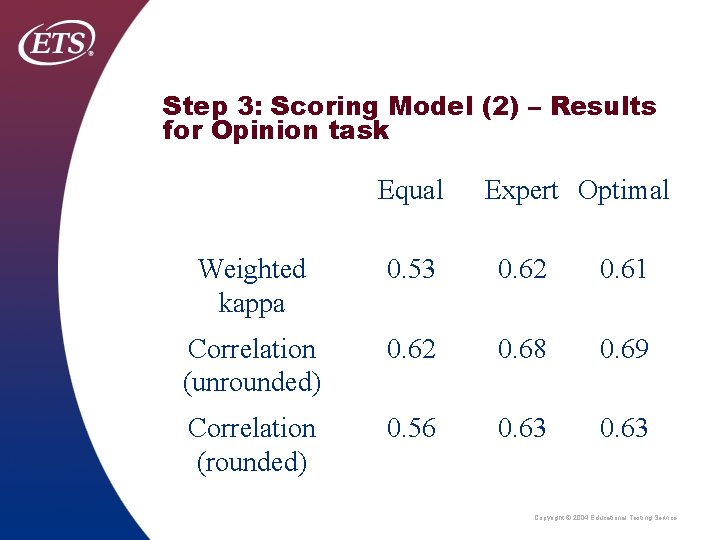

Step 3: Scoring Model (2) – Results for Opinion task

| | Equal | Expert | Optimal |
|---|---|---|---|
| Weighted kappa | 0.53 | 0.62 | 0.61 |
| Correlation (unrounded) | 0.62 | 0.68 | 0.69 |

Correlation (rounded): 0.56, 0.63
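The agreement measures reported here can be computed from paired human and machine scores. The sketch below implements weighted kappa and Pearson correlation in plain Python. The slides do not say whether the kappa weights are linear or quadratic, so quadratic weighting is an assumed default (`power=2`; pass `power=1` for linear weights). Function names are illustrative.

```python
import math


def weighted_kappa(a, b, low, high, power=2):
    """Weighted kappa for integer scores in [low, high]."""
    if len(a) != len(b) or not a:
        raise ValueError("score lists must be non-empty and of equal length")
    n_cat = high - low + 1
    n = len(a)

    # Confusion matrix of observed score pairs.
    observed = [[0] * n_cat for _ in range(n_cat)]
    for x, y in zip(a, b):
        observed[x - low][y - low] += 1
    row = [sum(r) for r in observed]
    col = [sum(observed[i][j] for i in range(n_cat)) for j in range(n_cat)]

    # Compare observed disagreement with chance-expected disagreement.
    num = den = 0.0
    for i in range(n_cat):
        for j in range(n_cat):
            w = abs(i - j) ** power
            num += w * observed[i][j]
            den += w * row[i] * col[j] / n
    if den == 0:
        raise ValueError("kappa is undefined when expected disagreement is zero")
    return 1.0 - num / den


def pearson(x, y):
    """Pearson correlation between two equally long sequences."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((p - mx) * (q - my) for p, q in zip(x, y))
    sxx = sum((p - mx) ** 2 for p in x)
    syy = sum((q - my) ** 2 for q in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for constant input")
    return sxy / math.sqrt(sxx * syy)


if __name__ == "__main__":
    human = [1, 2, 3, 4, 5]
    machine = [1, 2, 3, 4, 4]
    print(weighted_kappa(human, machine, low=1, high=5))
    print(pearson(human, machine))
```

Weighted kappa needs integer scores, so it applies to rounded machine scores, while the correlation can be computed on either the rounded or the unrounded predictions.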

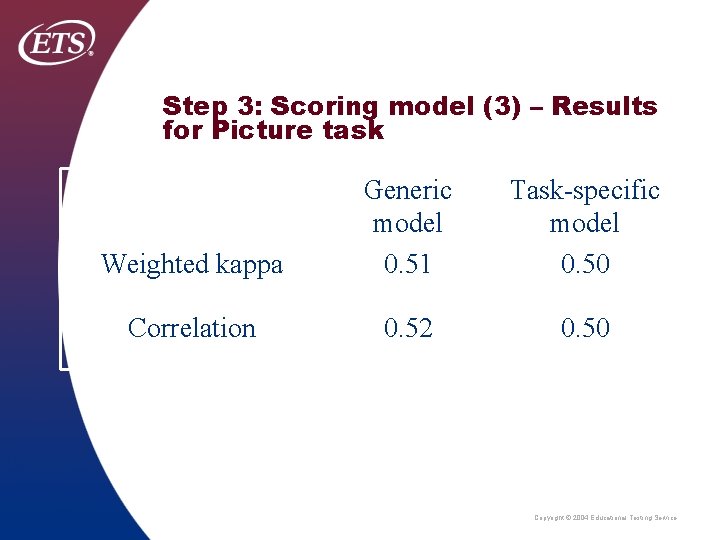

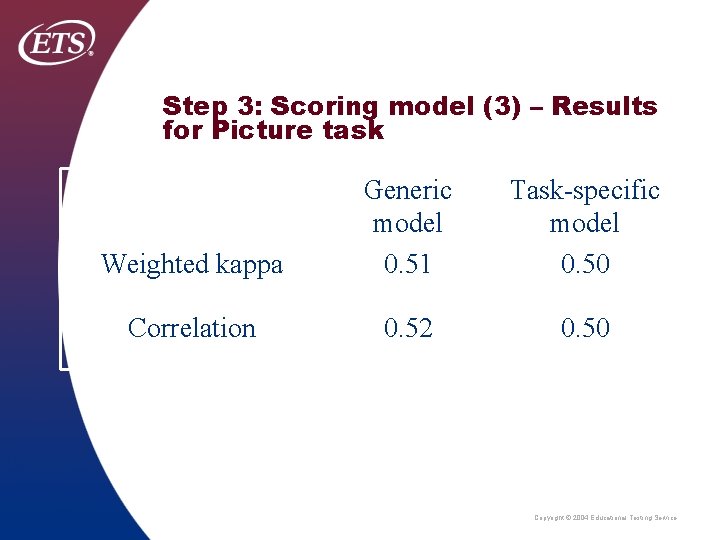

Step 3: Scoring Model (3) – Results for Picture task

| | Weighted kappa | Correlation |
|---|---|---|
| Generic model | 0.51 | 0.52 |
| Task-specific model | 0.50 | 0.50 |

Summary

• THT contains tasks with a wide range of linguistic entropy
• Built a 3-stage system for a medium-high entropy task (Picture) and a high entropy task (Opinion)
• Used multiple regression and CART to predict human scores, using 3-5 features
• Can reach inter-rater agreement for the Picture task, slightly below for the Opinion task
• Correlations likely to improve significantly when aggregating scores across multiple tasks

Future Work

• Building models for the remaining four tasks of THT
• Expanding the feature set, in particular with respect to vocabulary, grammar, and content
• Improving the speech recognizer's word accuracy