Towards a Standardized Performance Testing Methodology Michael Mild

- Slides: 27

Towards a Standardized Performance Testing Methodology
Michael Mild, SoftWell Performance AB
George Din, Fraunhofer FOKUS

Short History
§ MTS 46
• Michael Mild presented the idea and motivation for a standardized performance measuring methodology for IMS.
• !AP: prepare a pre-normative study on requirements for a performance testing methodology
§ TTCN-3 User Conference 2008
• George Din presented ideas for a methodology for performance testing of multi-service systems (exemplified for IMS). A concrete TTCN-3 based framework was illustrated.
• Stephan Schulz and Giulio Maggiore suggested joint work on a study of a standardized performance testing methodology.
§ MTS 47
• Presentation of a "pre-normative" study on performance testing methodology
31.10.2020 Performance Test Methodology

Common Problems
• Various tools and various methods for performance testing
• no general understanding of what should be regarded as performance
• standard performance indicators
• no standard vocabulary for performance related issues
• no common interpretation of metrics
• no common test procedures
• no general notation for performance test cases

Scope of this Proposal (! Not limited to IMS)
§ General Part
• common vocabulary for performance and performance related issues
• standard for interpretation and evaluation of performance metrics
• common notation for performance test cases
• simplify maintenance of performance test cases
• enable performance requirements to be specified, implemented, and verified
• enable performance test tools to be certified
• enable performance test suites to be defined and certified
• provide the basis for TTCN-3 Performance Testing package
§ Application Part
• improve understanding of performance of complex IMS systems

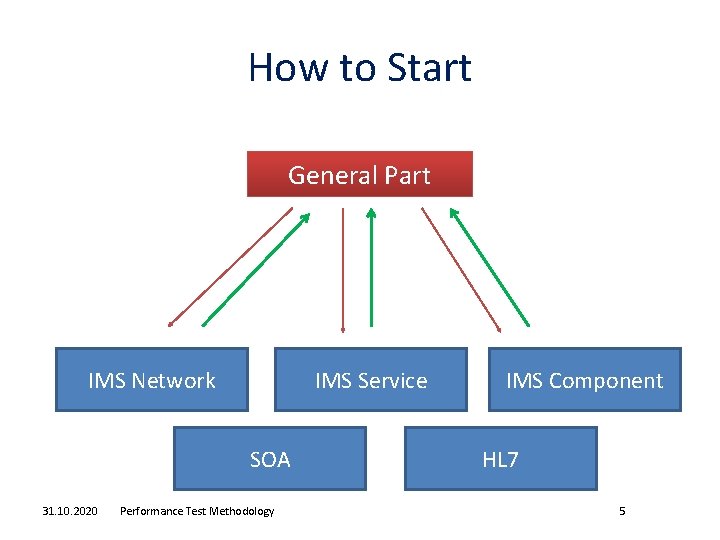

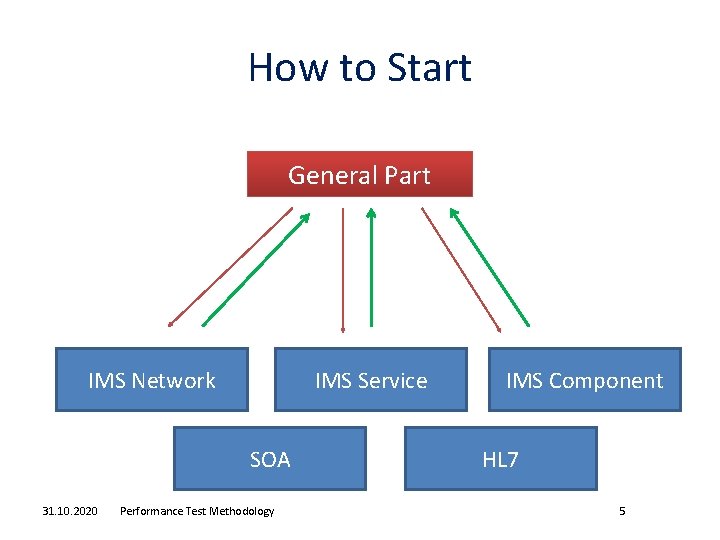

How to Start
(Diagram labels: General Part; IMS Network; IMS Service; IMS Component; SOA; HL7)

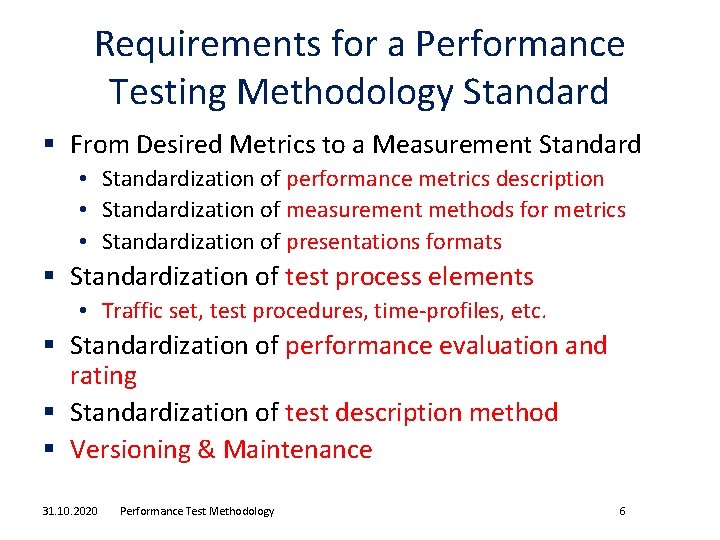

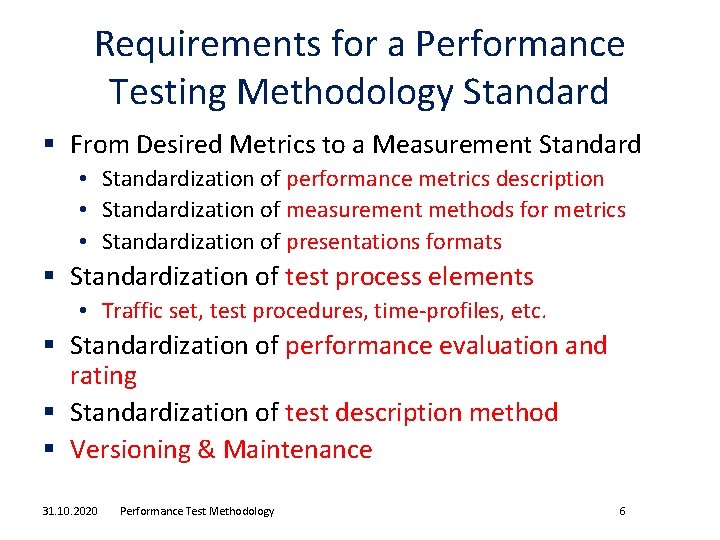

Requirements for a Performance Testing Methodology Standard
§ From Desired Metrics to a Measurement Standard
• Standardization of performance metrics description
• Standardization of measurement methods for metrics
• Standardization of presentation formats
§ Standardization of test process elements
• Traffic set, test procedures, time-profiles, etc.
§ Standardization of performance evaluation and rating
§ Standardization of test description method
§ Versioning & Maintenance

Performance Testing Methodology Elements

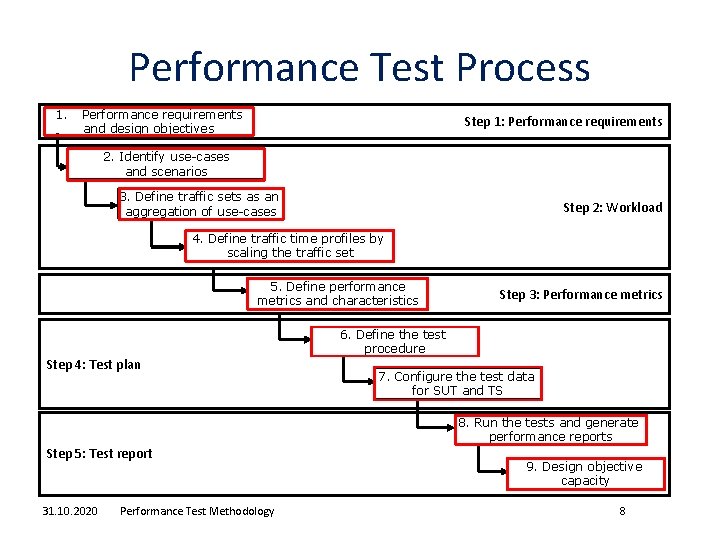

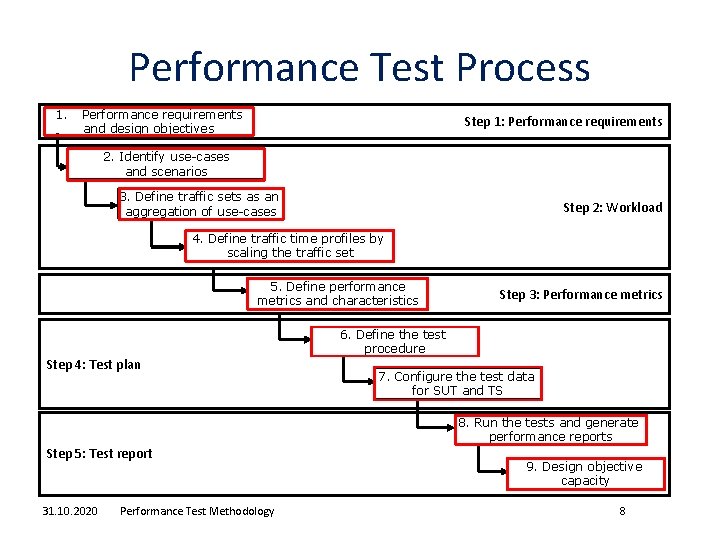

Performance Test Process
1. Performance requirements and design objectives
2. Identify use-cases and scenarios
3. Define traffic sets as an aggregation of use-cases
4. Define traffic time profiles by scaling the traffic set
5. Define performance metrics and characteristics
6. Define the test procedure
7. Configure the test data for SUT and TS
8. Run the tests and generate performance reports
9. Design objective capacity
(Grouping labels in the diagram: Step 1: Performance requirements; Step 2: Workload; Step 3: Performance metrics; Step 4: Test plan; Step 5: Test report)

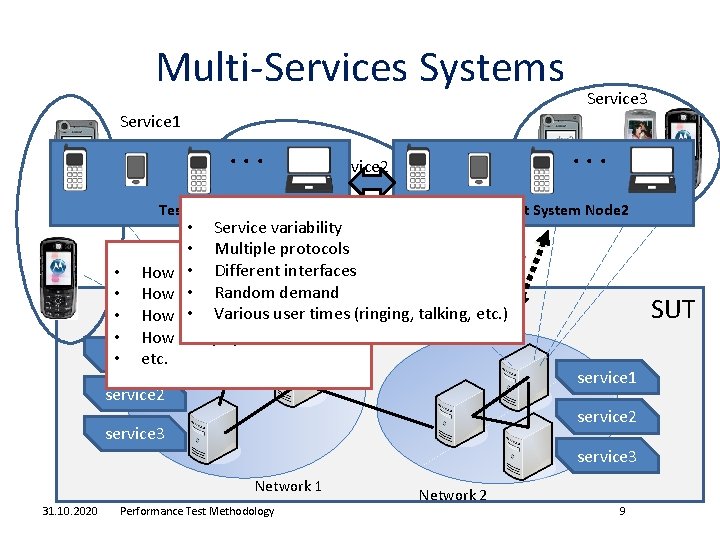

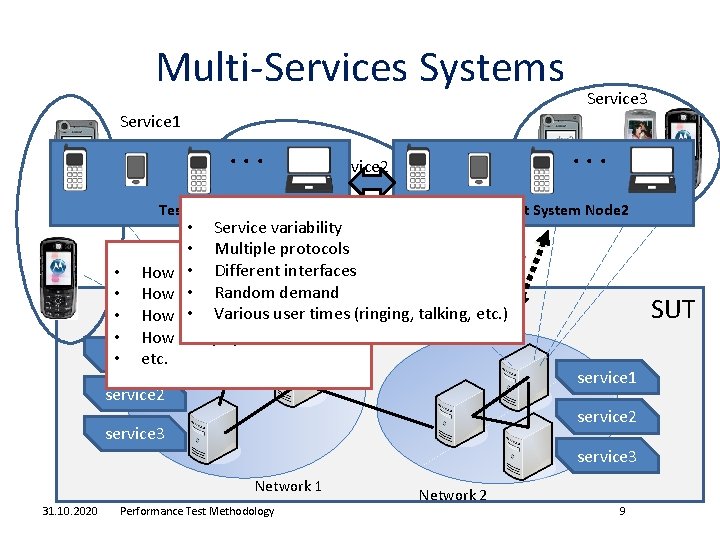

Multi-Services Systems
• Service variability
• Multiple protocols
• Different interfaces
• Random demand
• Various user times (ringing, talking, etc.)
• How many users?
• How many calls?
• How many transactions?
• How many open calls?
• etc.
(Diagram labels: Test System Node 1; Test System Node 2; Service 1; Service 2; Service 3; SUT; Network 1; Network 2)

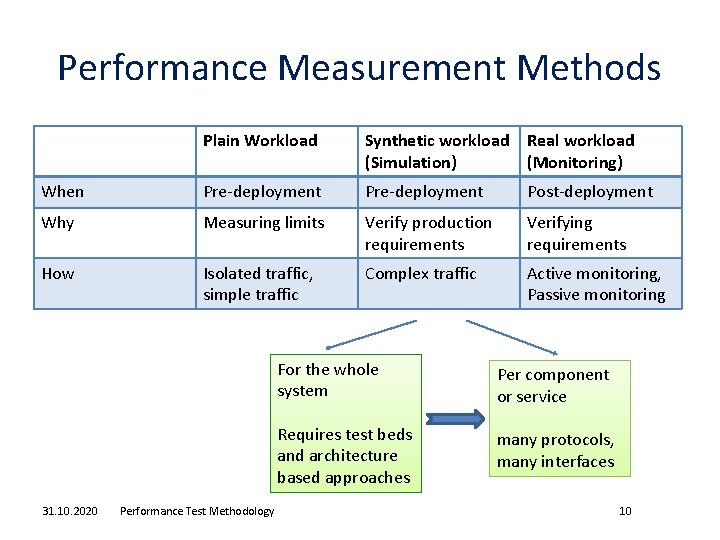

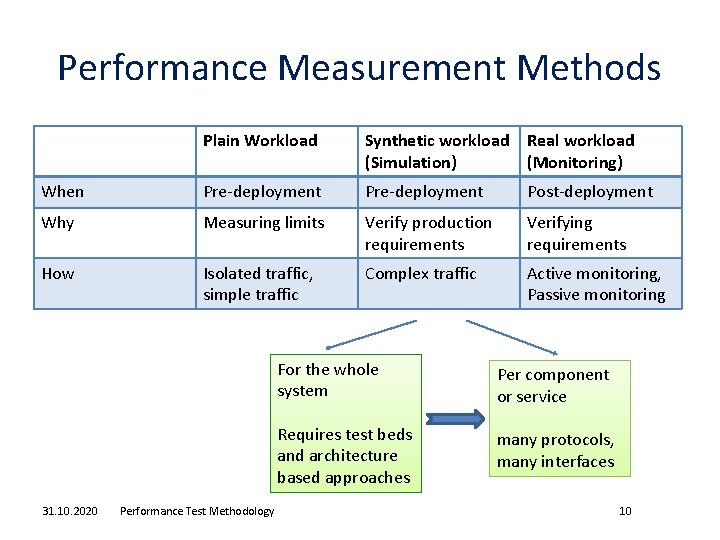

Performance Measurement Methods
§ Workload types: Plain workload; Synthetic workload (Simulation); Real workload (Monitoring)
§ When: Pre-deployment; Post-deployment
§ Why: Measuring limits; Verifying requirements; Verify production requirements
§ How: Isolated traffic, simple traffic; Complex traffic; Active monitoring, Passive monitoring
§ Other labels: For the whole system; Per component or service; Requires test beds and architecture based approaches; many protocols, many interfaces

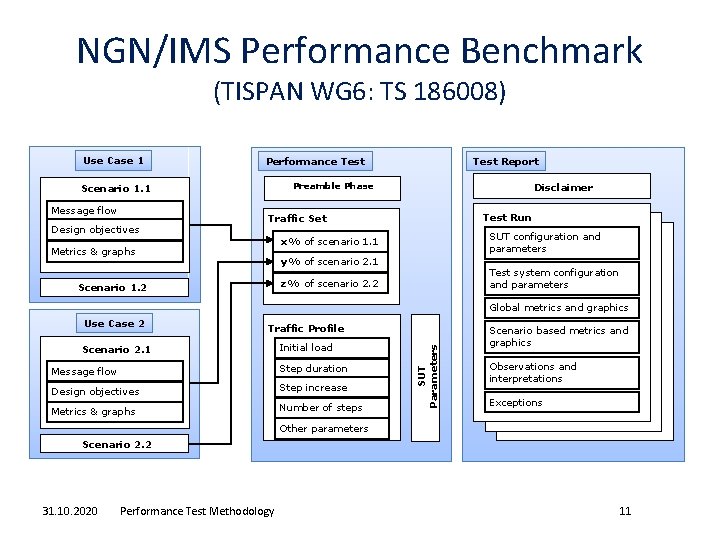

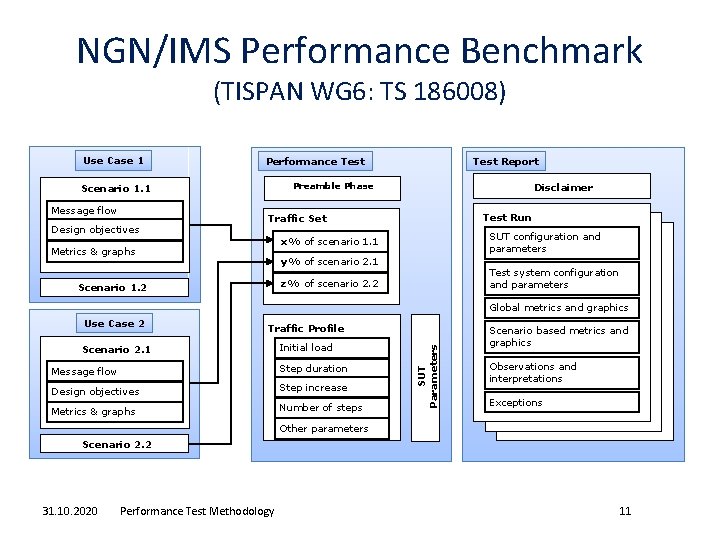

NGN/IMS Performance Benchmark (TISPAN WG 6: TS 186008)
§ Use Case 1
• Scenario 1.1: Message flow, Design objectives, Metrics & graphs
• Scenario 1.2
§ Use Case 2
• Scenario 2.1: Message flow, Design objectives, Metrics & graphs
• Scenario 2.2
§ Performance Test
• Preamble Phase
• Test Run
§ Traffic Set
• x% of scenario 1.1
• y% of scenario 2.1
• z% of scenario 2.2
§ Traffic Profile
• Initial load
• Step duration
• Step increase
• Number of steps
§ SUT Parameters
§ Other parameters
§ Test Report
• Disclaimer
• SUT configuration and parameters
• Test system configuration and parameters
• Global metrics and graphics
• Scenario based metrics and graphics
• Observations and interpretations
• Exceptions

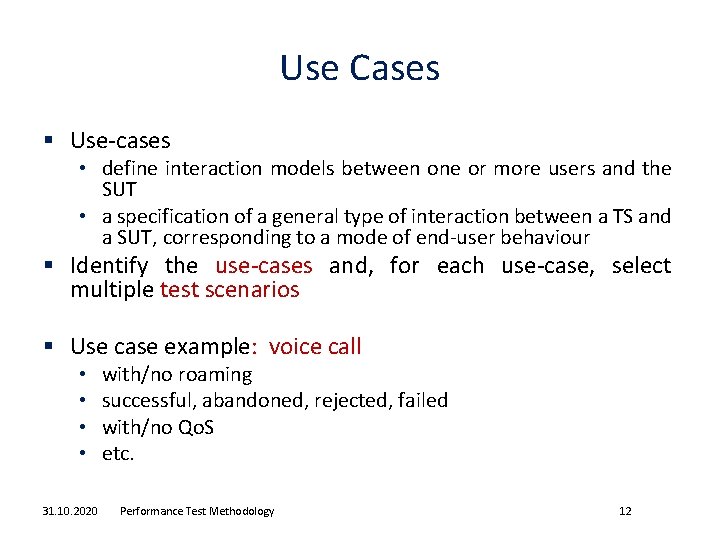

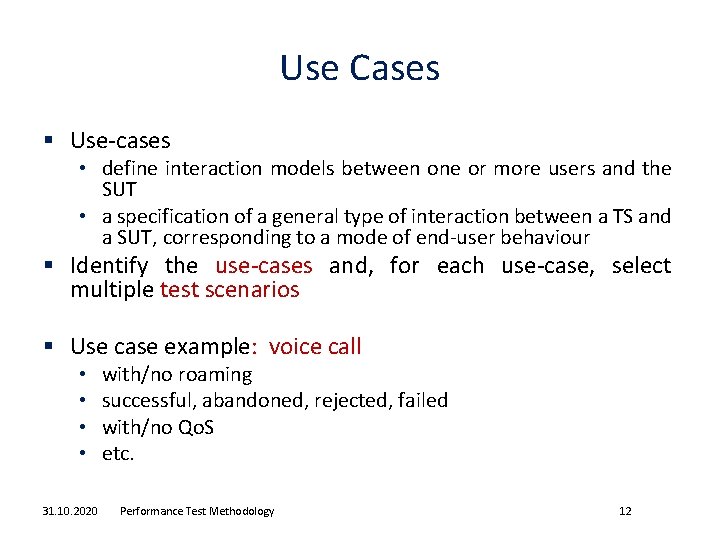

Use Cases
§ Use-cases
• define interaction models between one or more users and the SUT
• a specification of a general type of interaction between a TS and a SUT, corresponding to a mode of end-user behaviour
§ Identify the use-cases and, for each use-case, select multiple test scenarios
§ Use case example: voice call
• with/no roaming
• successful, abandoned, rejected, failed
• with/no QoS
• etc.

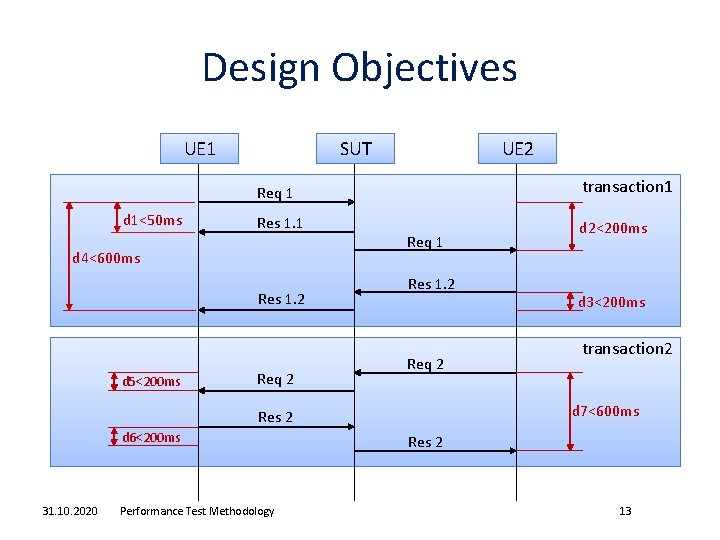

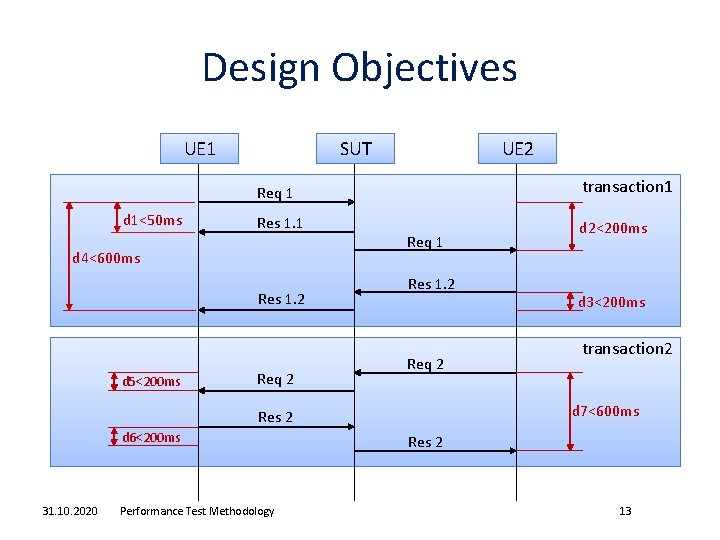

Design Objectives
(Message sequence diagram between UE 1, SUT and UE 2, covering transaction 1 and transaction 2 with the messages Req 1, Res 1.1, Res 1.2, Req 2 and Res 2)
Delay limits shown: d1 < 50 ms, d2 < 200 ms, d3 < 200 ms, d4 < 600 ms, d5 < 200 ms, d6 < 200 ms, d7 < 600 ms
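
A minimal Python sketch of how delay limits like these could be checked against one measured scenario. The limits are the values printed on the slide; the function names, the sample measurements, and the choice to count a missing measurement as a violation are assumptions made for illustration only.

```python
# Delay limits in milliseconds, as printed on the slide (d1..d7).
DESIGN_OBJECTIVES_MS = {
    "d1": 50, "d2": 200, "d3": 200, "d4": 600,
    "d5": 200, "d6": 200, "d7": 600,
}


def violated_objectives(measured_ms, limits_ms=DESIGN_OBJECTIVES_MS):
    """Return the sorted names of delays that miss their design objective.

    Assumption: a delay that was not measured at all counts as a violation,
    and a delay must be strictly below its limit to pass.
    """
    return sorted(
        name
        for name, limit in limits_ms.items()
        if name not in measured_ms or measured_ms[name] >= limit
    )


def is_adequately_handled(measured_ms, limits_ms=DESIGN_OBJECTIVES_MS):
    """A scenario is adequately handled when no design objective is violated."""
    return not violated_objectives(measured_ms, limits_ms)


# Hypothetical measurements for one scenario run.
sample = {"d1": 12, "d2": 150, "d3": 90, "d4": 650, "d5": 40, "d6": 80, "d7": 300}
print(violated_objectives(sample))    # d4 is above its 600 ms limit
print(is_adequately_handled(sample))
```

Scenarios that fail such a check are the ones a metric like IHS% would count as inadequately handled.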

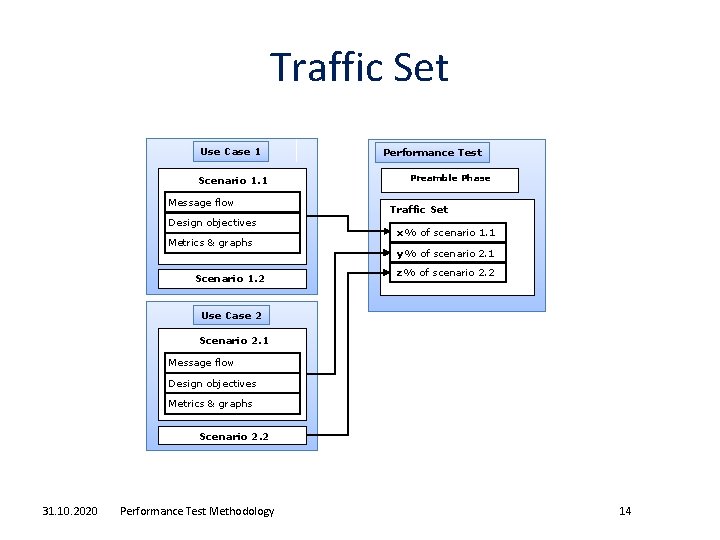

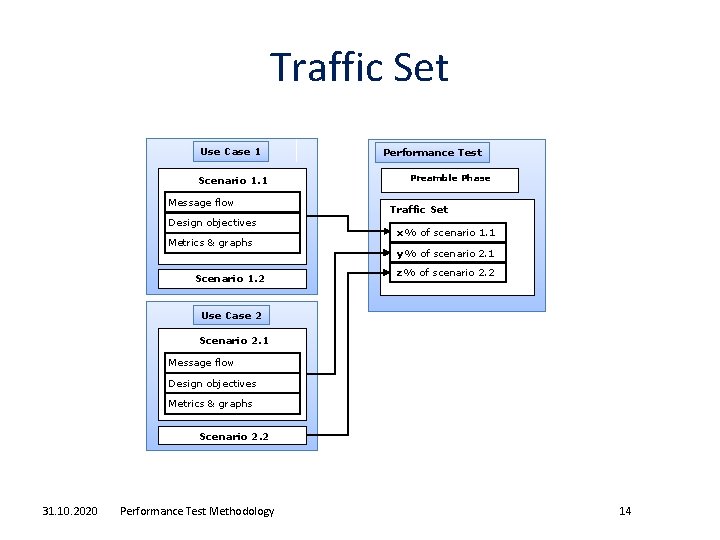

Traffic Set
§ Use Case 1
• Scenario 1.1: Message flow, Design objectives, Metrics & graphs
• Scenario 1.2
§ Use Case 2
• Scenario 2.1: Message flow, Design objectives, Metrics & graphs
• Scenario 2.2
§ Performance Test
• Preamble Phase
• Traffic Set: x% of scenario 1.1, y% of scenario 2.1, z% of scenario 2.2
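
A traffic set of this kind can be read as a weighted mix of scenarios. The Python sketch below draws scenario attempts according to percentage shares; the shares 50/30/20 stand in for the slide's x, y and z and are purely hypothetical, as is the function name `pick_scenarios`.

```python
import random


def pick_scenarios(traffic_set, count, seed=None):
    """Draw `count` scenario names according to the percentage shares.

    `traffic_set` maps a scenario name to its share in percent; the shares
    are required to sum to 100 (an assumption of this sketch).
    """
    total = sum(traffic_set.values())
    if abs(total - 100.0) > 1e-9:
        raise ValueError(f"traffic set shares must sum to 100, got {total}")
    rng = random.Random(seed)  # seeded for reproducible test runs
    names = list(traffic_set)
    weights = [traffic_set[name] for name in names]
    return rng.choices(names, weights=weights, k=count)


# Hypothetical values for x, y and z.
mix = {"scenario 1.1": 50, "scenario 2.1": 30, "scenario 2.2": 20}
attempts = pick_scenarios(mix, 10, seed=1)
print(attempts)
```

Fixing the seed makes a run repeatable, which helps when two test systems are expected to generate comparable load.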

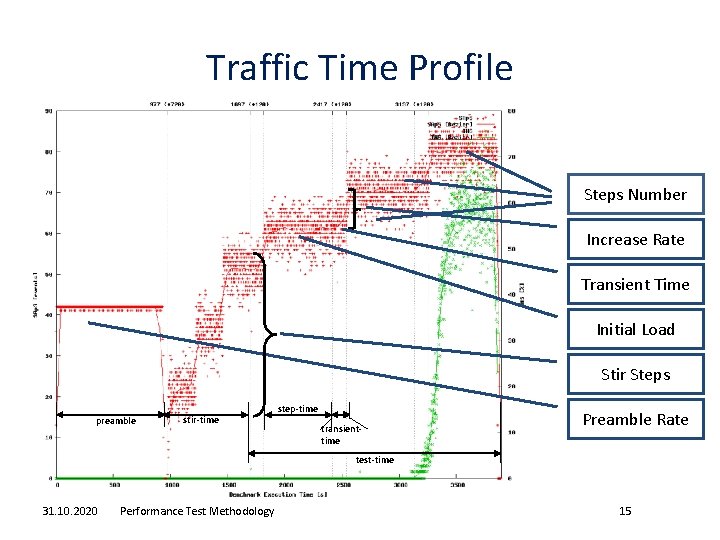

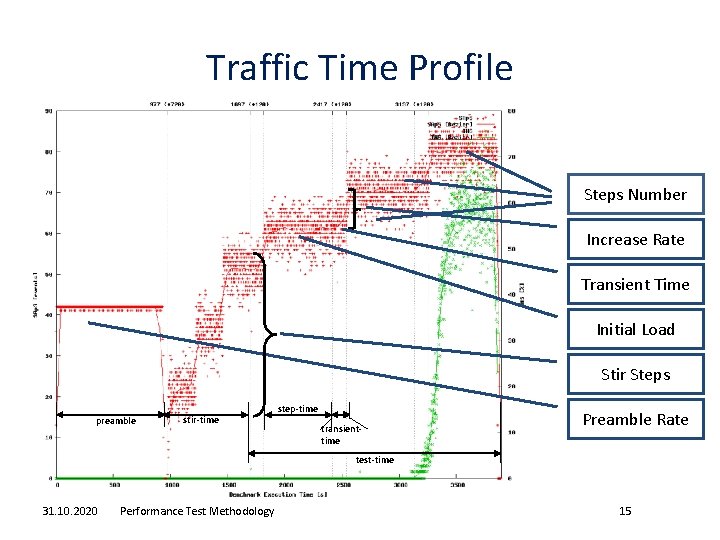

Traffic Time Profile
(Graph of load rate over test-time. Labels: Preamble Rate; Initial Load; Increase Rate; Steps Number; Transient Time; Stir; Steps. Time-axis segments: preamble; stir-time; step-time; transient-time)
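
A stair-step profile built from these parameters could be generated as in the following Python sketch. It assumes that each step starts with a transient period that is excluded from measurement, and it leaves out the preamble and stir phases; the function name and the sample numbers are hypothetical.

```python
def stair_step_profile(initial_load, step_increase, number_of_steps,
                       step_time_s, transient_time_s=0.0):
    """Return one (start_s, measure_from_s, end_s, load) tuple per step.

    Assumption: the transient time sits at the beginning of every step and
    measurements are only taken from `measure_from_s` onwards.
    """
    if number_of_steps < 1:
        raise ValueError("number_of_steps must be at least 1")
    if not 0 <= transient_time_s < step_time_s:
        raise ValueError("transient time must be shorter than the step time")
    steps = []
    for i in range(number_of_steps):
        start = i * step_time_s
        load = initial_load + i * step_increase
        steps.append((start, start + transient_time_s, start + step_time_s, load))
    return steps


# Hypothetical profile: start at 100 attempts/s, add 50 per step, 3 steps of 600 s.
for step in stair_step_profile(100, 50, 3, 600, transient_time_s=60):
    print(step)
```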

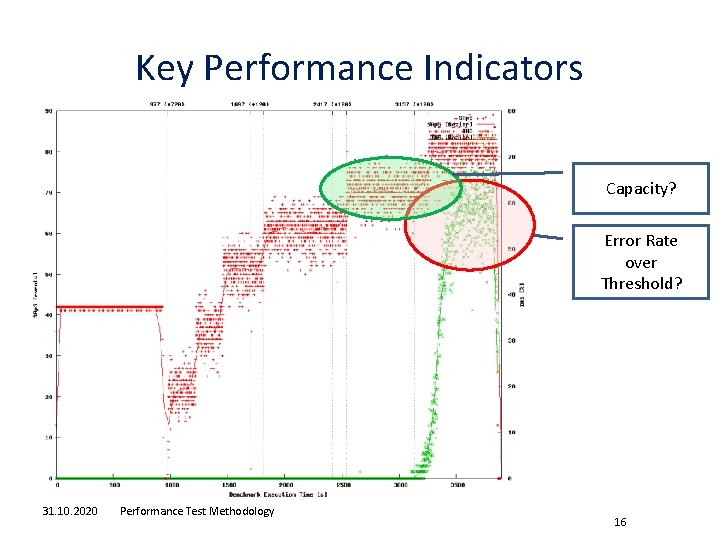

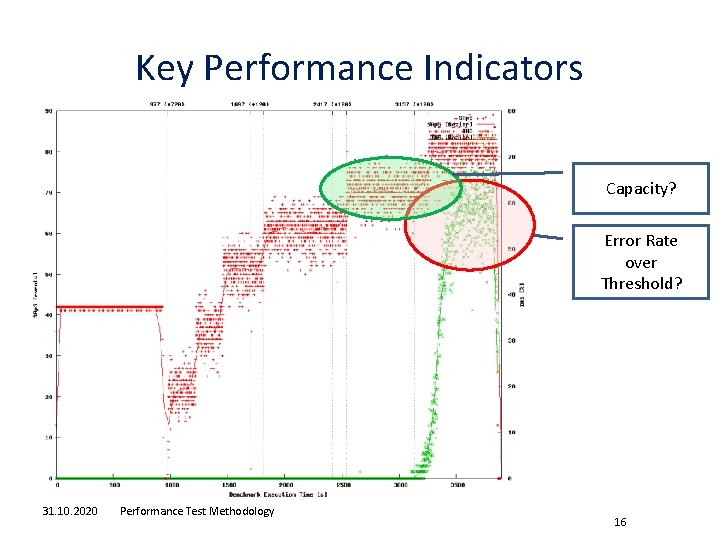

Key Performance Indicators
• Capacity?
• Error Rate over Threshold?
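
One possible reading of these two questions, sketched in Python: take capacity to be the highest load reached before the error rate first exceeds a threshold. This interpretation, the function name and the sample figures are assumptions, not something the slide states.

```python
def capacity(step_results, max_error_percent):
    """Return the highest load sustained before the error rate first
    exceeds `max_error_percent`, or None if even the lowest load fails.

    `step_results` is an iterable of (load, error_percent) pairs,
    one per load step of a test run.
    """
    best = None
    for load, error_percent in sorted(step_results):
        if error_percent > max_error_percent:
            break  # later steps are ignored once the threshold is crossed
        best = load
    return best


# Hypothetical results of a four-step run with a 1 % error threshold.
results = [(100, 0.1), (150, 0.4), (200, 2.5), (250, 0.2)]
print(capacity(results, max_error_percent=1.0))
```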

Measurement and Workload Translation

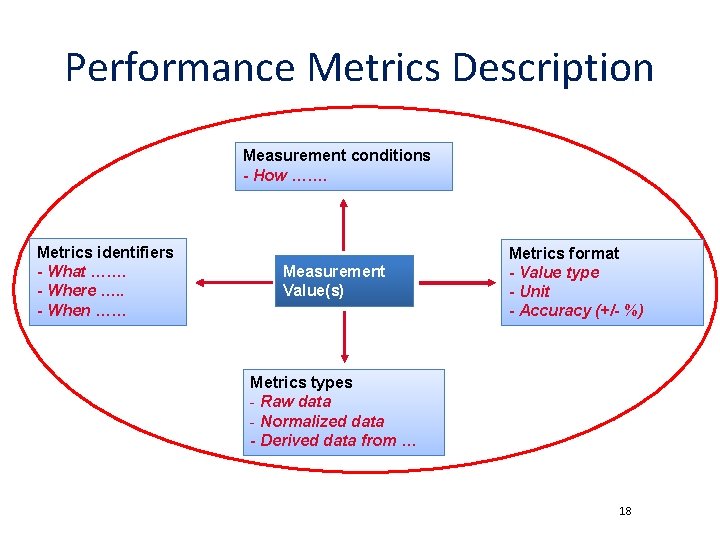

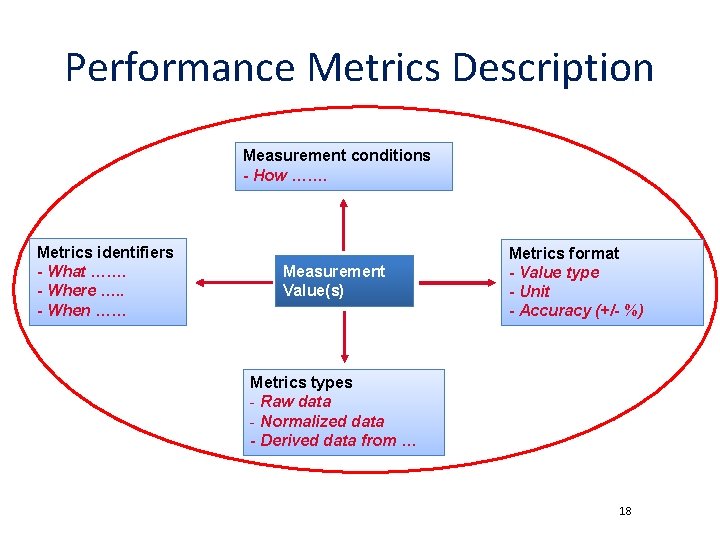

Performance Metrics Description
§ Metrics identifiers
- What …
- Where …
- When …
§ Measurement conditions
- How …
§ Measurement Value(s)
§ Metrics format
- Value type
- Unit
- Accuracy (+/- %)
§ Metrics types
- Raw data
- Normalized data
- Derived data from …
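
The elements of such a metric description could be captured in a small record type. The Python sketch below is only an illustration: the class and field names are hypothetical, and the rule that a derived metric must name its sources is an assumption.

```python
from dataclasses import dataclass
from enum import Enum


class MetricKind(Enum):
    RAW = "raw"
    NORMALIZED = "normalized"
    DERIVED = "derived"


@dataclass(frozen=True)
class MetricDescription:
    what: str                 # identifier: what is measured
    where: str                # identifier: measurement point
    when: str                 # identifier: measurement interval or trigger
    how: str                  # measurement conditions
    value_type: str           # format: e.g. "float"
    unit: str                 # format: e.g. "ms"
    accuracy_percent: float   # format: accuracy as +/- percent
    kind: MetricKind
    derived_from: tuple = ()  # source metrics, only for derived data

    def __post_init__(self):
        if self.accuracy_percent < 0:
            raise ValueError("accuracy must not be negative")
        if self.kind is MetricKind.DERIVED and not self.derived_from:
            raise ValueError("a derived metric must name its source metrics")


# Hypothetical description of a response-time metric.
trt = MetricDescription(
    what="transaction response time", where="test system interface",
    when="per transaction", how="timestamp difference request/response",
    value_type="float", unit="ms", accuracy_percent=1.0, kind=MetricKind.RAW,
)
print(trt.unit, trt.kind.value)
```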

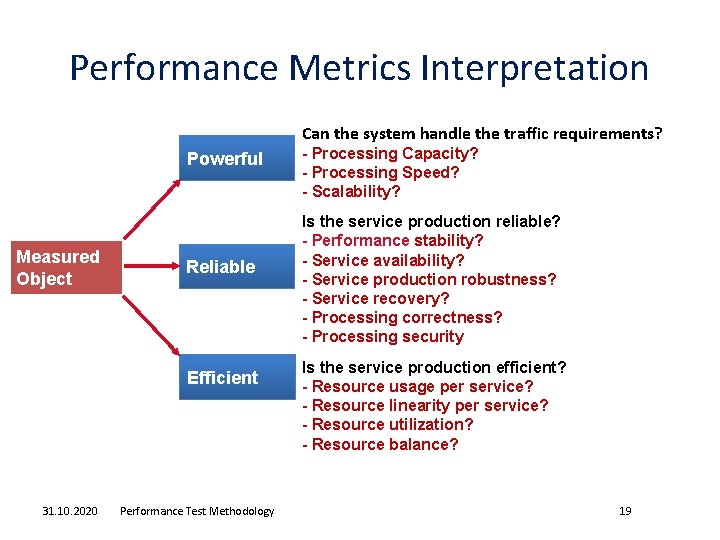

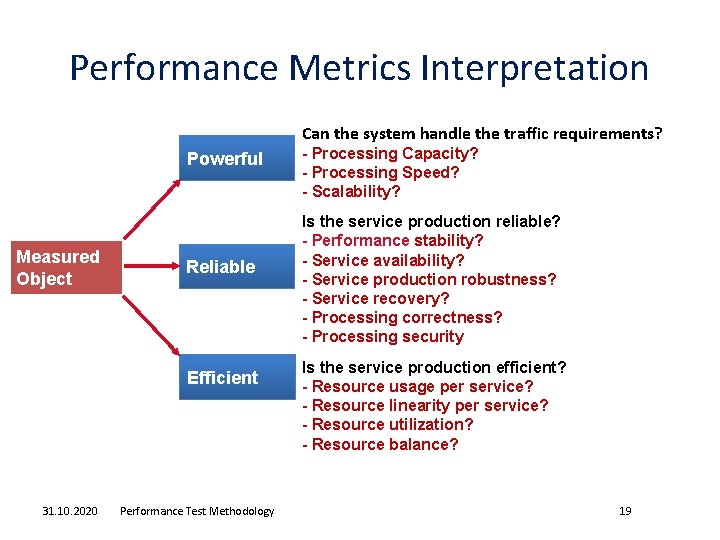

Performance Metrics Interpretation
Measured Object: Powerful, Reliable, Efficient
§ Powerful: Can the system handle the traffic requirements?
- Processing Capacity?
- Processing Speed?
- Scalability?
§ Reliable: Is the service production reliable?
- Performance stability?
- Service availability?
- Service production robustness?
- Service recovery?
- Processing correctness?
- Processing security?
§ Efficient: Is the service production efficient?
- Resource usage per service?
- Resource linearity per service?
- Resource utilization?
- Resource balance?

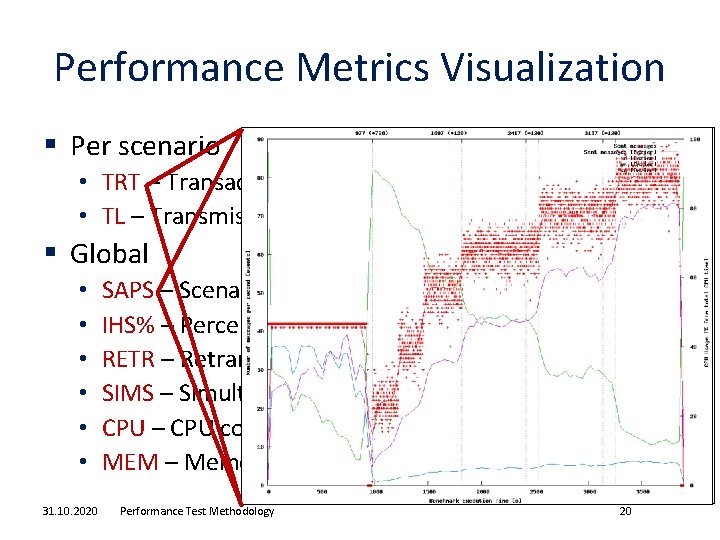

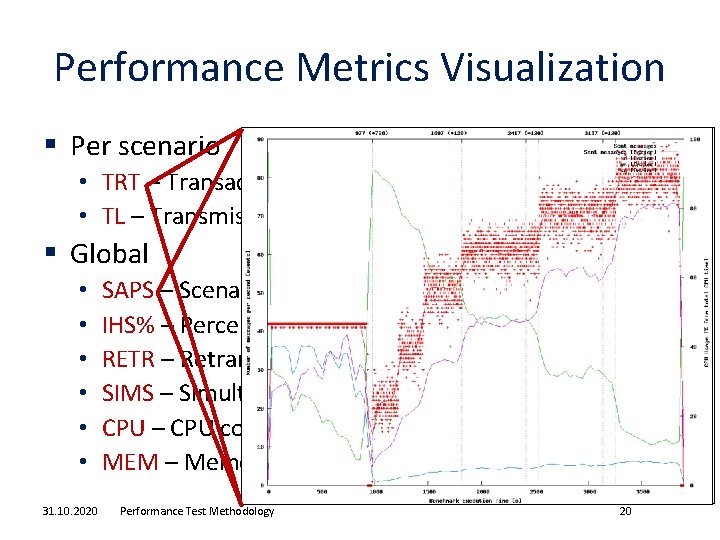

Performance Metrics Visualization
§ Per scenario
• TRT – Transaction Response Time
• TL – Transmission Latency
§ Global
• SAPS – Scenario Attempts per Second
• IHS% – Percent of Inadequately Handled Scenarios
• RETR – Retransmissions
• SIMS – Simultaneous Scenarios
• CPU – CPU consumption on SUT
• MEM – Memory consumption on SUT
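
Two of the global metrics follow directly from counters collected during a measurement interval. A minimal Python sketch, assuming plain per-interval counts as input; the function names and the sample numbers are hypothetical.

```python
def saps(scenario_attempts, interval_s):
    """Scenario Attempts per Second over one measurement interval."""
    if interval_s <= 0:
        raise ValueError("interval must be positive")
    return scenario_attempts / interval_s


def ihs_percent(scenario_attempts, inadequately_handled):
    """Percent of Inadequately Handled Scenarios among all attempts."""
    if scenario_attempts <= 0:
        raise ValueError("at least one scenario attempt is required")
    if not 0 <= inadequately_handled <= scenario_attempts:
        raise ValueError("inadequately handled count out of range")
    return 100.0 * inadequately_handled / scenario_attempts


# Hypothetical counters: 1200 attempts in 60 s, 6 of them inadequately handled.
print(saps(1200, 60))         # 20.0
print(ihs_percent(1200, 6))   # 0.5
```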

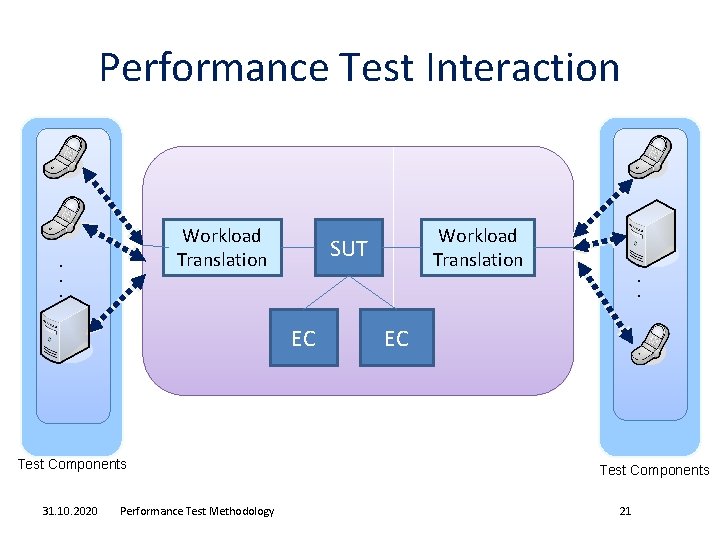

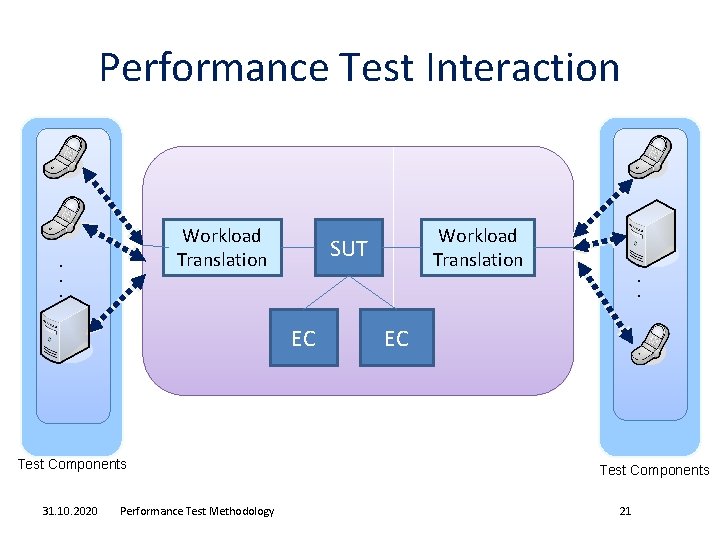

Performance Test Interaction
(Diagram labels: EC; Test Components; Workload; Translation; SUT)

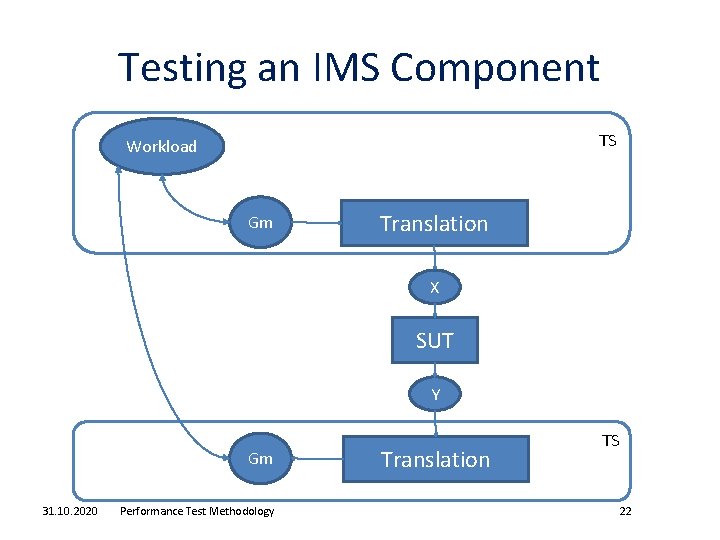

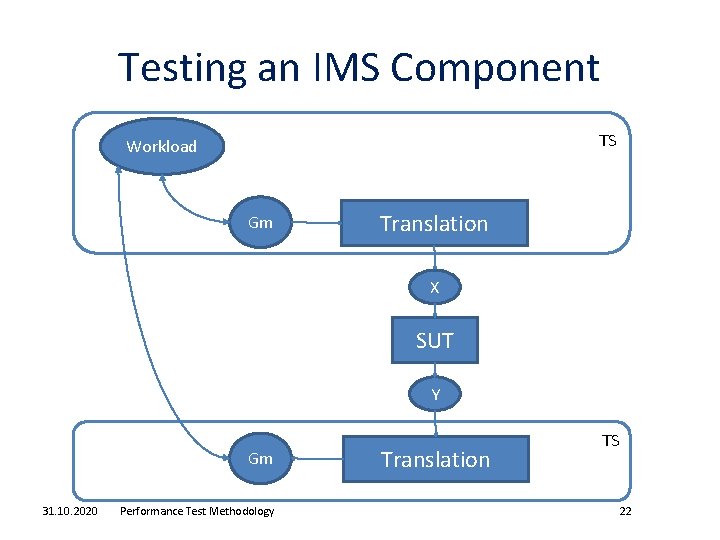

Testing an IMS Component
(Diagram labels: TS; Workload; Translation; Gm; X; SUT; Y)

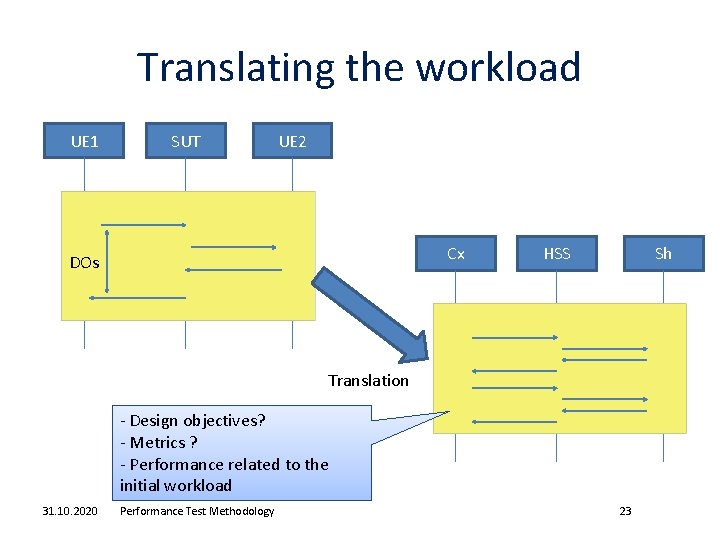

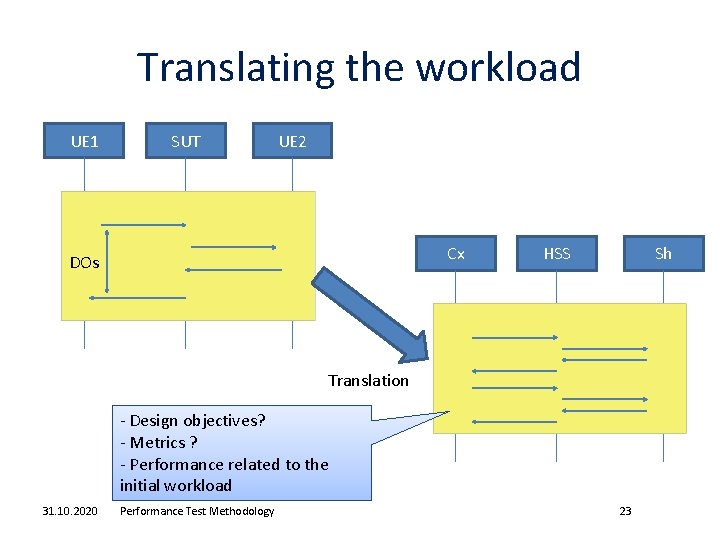

Translating the workload
(Diagram labels: UE 1; SUT; UE 2; HSS; Cx; Sh; DOs; Translation)
- Design objectives?
- Metrics?
- Performance related to the initial workload

Roadmap

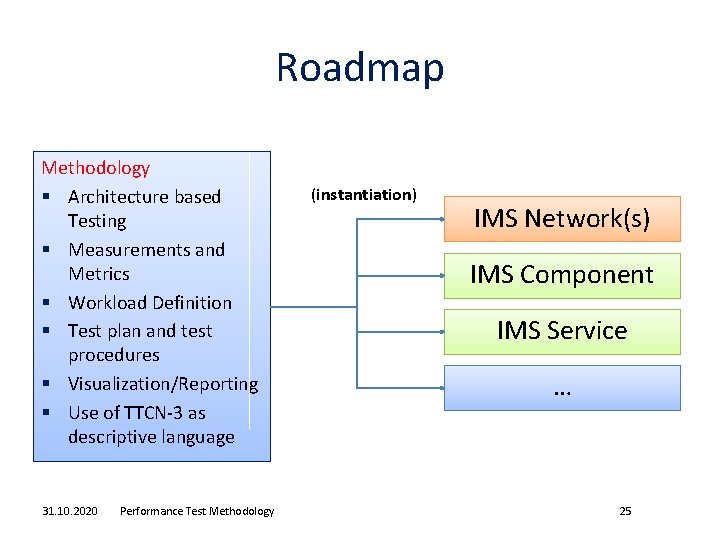

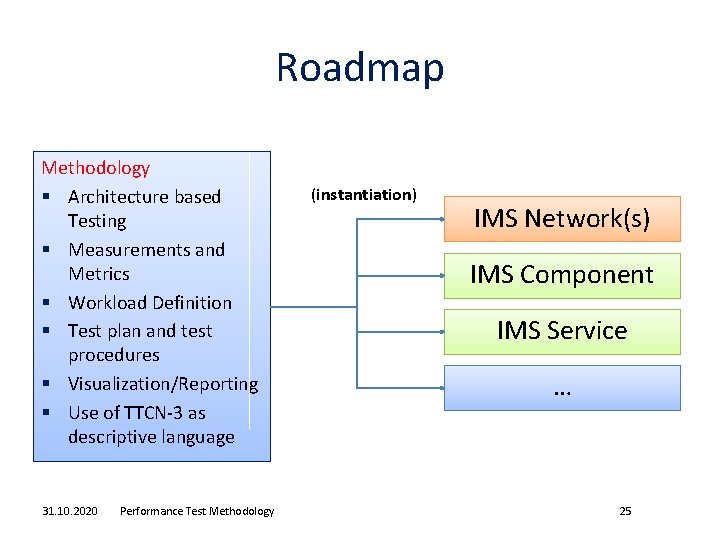

Roadmap
Methodology
§ Architecture based Testing
§ Measurements and Metrics
§ Workload Definition
§ Test plan and test procedures
§ Visualization/Reporting
§ Use of TTCN-3 as descriptive language
(instantiation): IMS Network(s), IMS Component, IMS Service, …

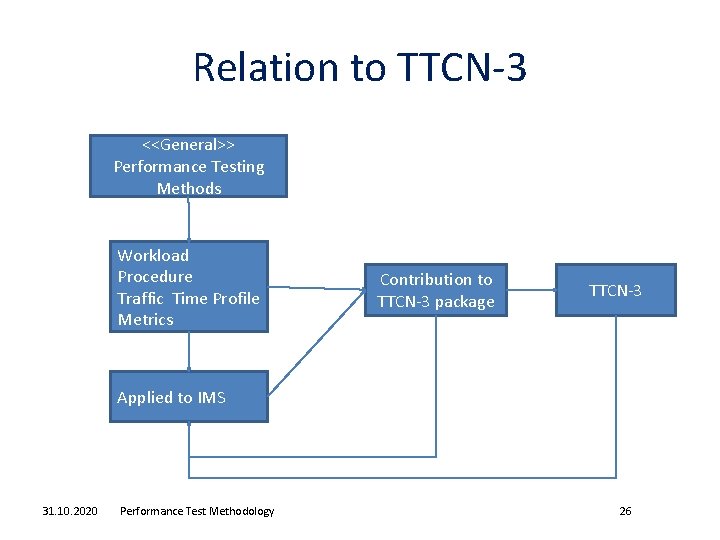

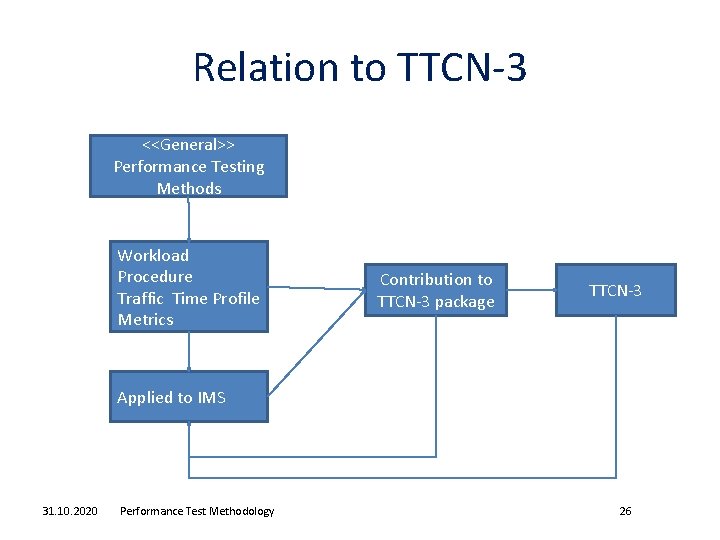

Relation to TTCN-3
(Diagram labels: <<General>> Performance Testing Methods, with Workload, Procedure, Traffic Time Profile and Metrics; Contribution to TTCN-3 package; TTCN-3; Applied to IMS)

Outlook
§ Benefits for community
• Common terminology for performance testing
• Create performance tests on a common basis
§ Investigate the interest for this proposal
• Set up a WI
• Get more participants
• (later on) set up a task force
§ Agenda
• March 2009: common methodology proposal
• Oct 2009: IMS performance testing proposal based on the methodology