Towards a Scalable, Adaptive and Network-aware Content Distribution Network
Yan Chen
EECS Department, UC Berkeley

Outline
• Motivation and Challenges
• Our Contributions: SCAN system
• Case Study: Tomography-based overlay network monitoring system
• Conclusions

Motivation
• The Internet has evolved to become a commercial infrastructure for service delivery
  – Web delivery, VoIP, streaming media …
• Challenges for Internet-scale services
  – Scalability: 600 M users, 35 M Web sites, 2.1 Tb/s
  – Efficiency: bandwidth, storage, management
  – Agility: dynamic clients/network/servers
  – Security, etc.
• Focus on content delivery: Content Distribution Network (CDN)
  – 4 billion Web pages in total, daily growth of 7 M pages
  – Annual traffic growth of 200% for the next 4 years

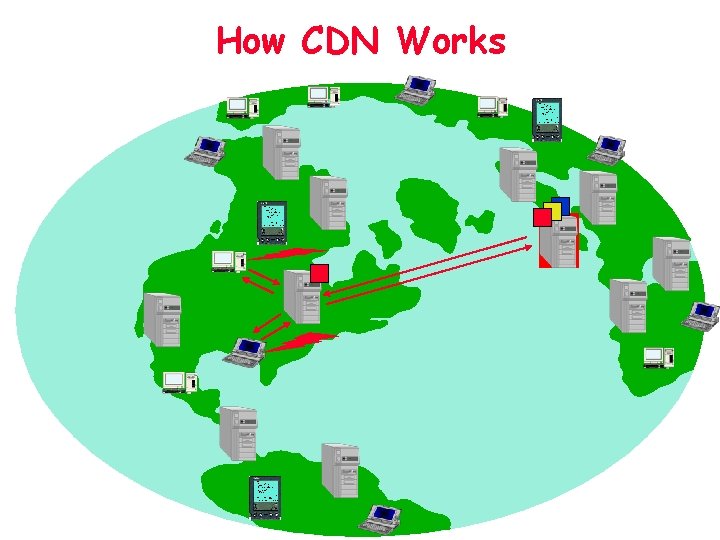

How CDN Works

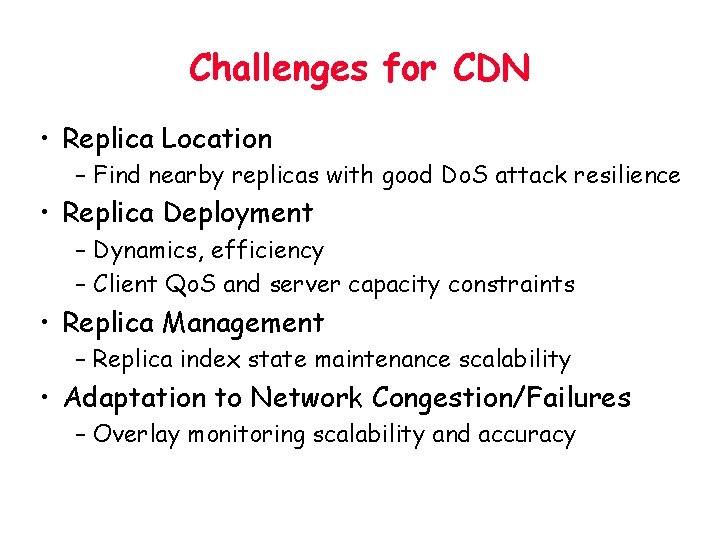

Challenges for CDN
• Replica Location
  – Find nearby replicas with good DoS attack resilience
• Replica Deployment
  – Dynamics, efficiency
  – Client QoS and server capacity constraints
• Replica Management
  – Replica index state maintenance scalability
• Adaptation to Network Congestion/Failures
  – Overlay monitoring scalability and accuracy

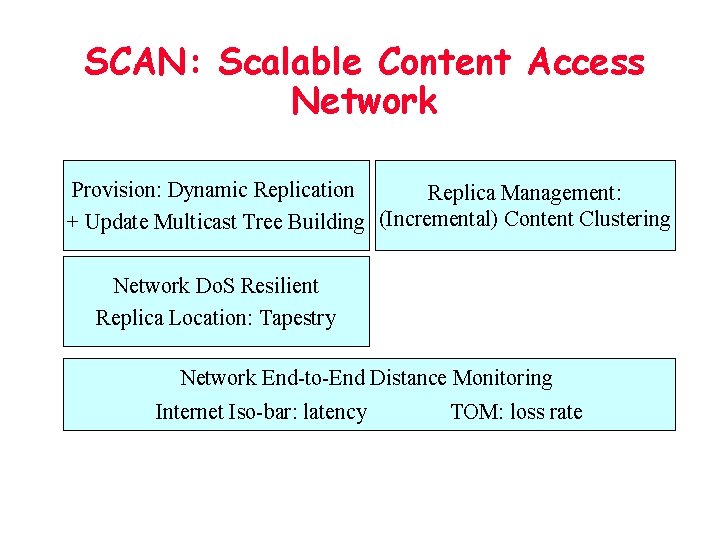

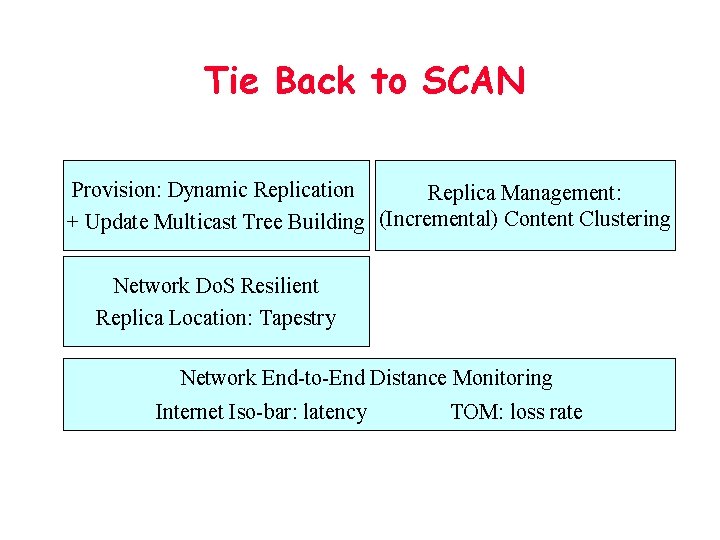

SCAN: Scalable Content Access Network
• Provision: Dynamic Replication + Update Multicast Tree Building
• Replica Management: (Incremental) Content Clustering
• Network DoS Resilient Replica Location: Tapestry
• Network End-to-End Distance Monitoring
  – Internet Iso-bar: latency
  – TOM: loss rate

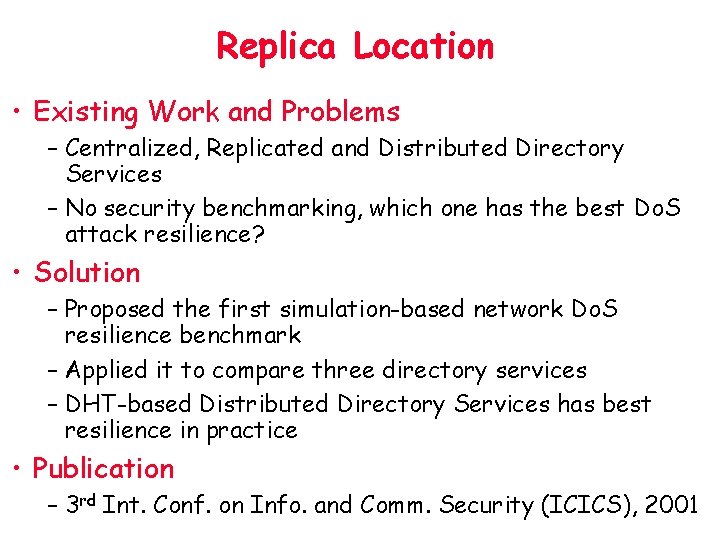

Replica Location
• Existing Work and Problems
  – Centralized, Replicated and Distributed Directory Services
  – No security benchmarking: which one has the best DoS attack resilience?
• Solution
  – Proposed the first simulation-based network DoS resilience benchmark
  – Applied it to compare three directory services
  – DHT-based Distributed Directory Services have the best resilience in practice
• Publication
  – 3rd Int. Conf. on Info. and Comm. Security (ICICS), 2001

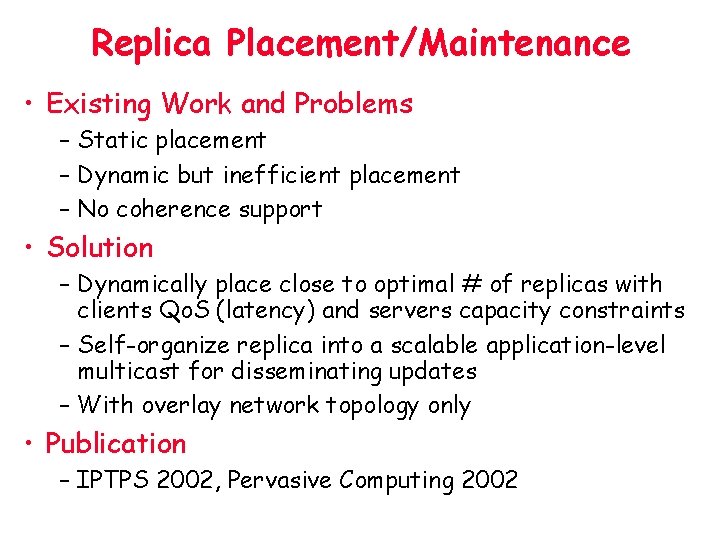

Replica Placement/Maintenance
• Existing Work and Problems
  – Static placement
  – Dynamic but inefficient placement
  – No coherence support
• Solution
  – Dynamically place a close-to-optimal # of replicas subject to clients' QoS (latency) and servers' capacity constraints
  – Self-organize replicas into a scalable application-level multicast tree for disseminating updates
  – With overlay network topology only
• Publication
  – IPTPS 2002, Pervasive Computing 2002

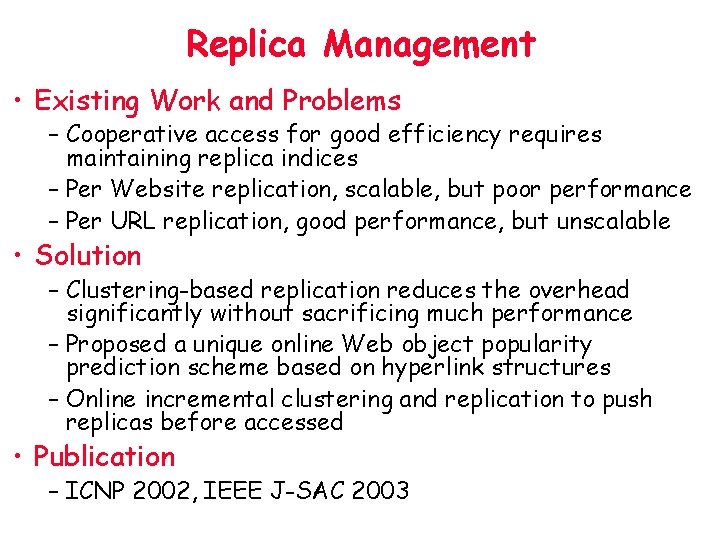

Replica Management
• Existing Work and Problems
  – Cooperative access for good efficiency requires maintaining replica indices
  – Per Website replication: scalable, but poor performance
  – Per URL replication: good performance, but unscalable
• Solution
  – Clustering-based replication reduces the overhead significantly without sacrificing much performance
  – Proposed a unique online Web object popularity prediction scheme based on hyperlink structures
  – Online incremental clustering and replication to push replicas before they are accessed
• Publication
  – ICNP 2002, IEEE J-SAC 2003

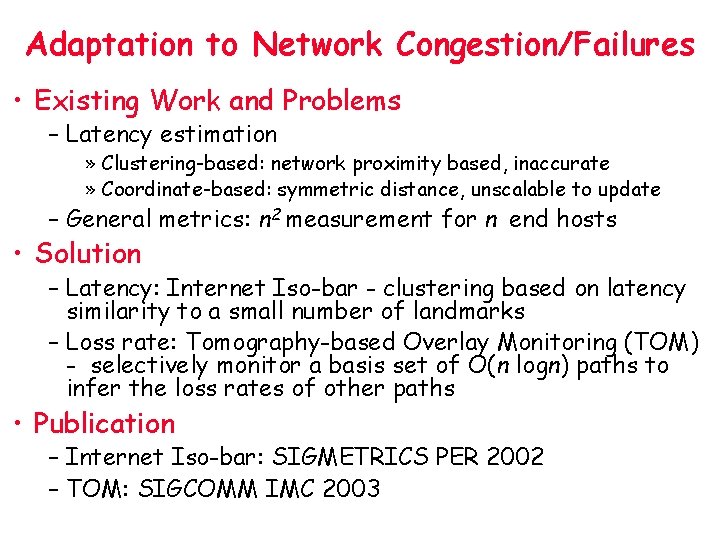

Adaptation to Network Congestion/Failures
• Existing Work and Problems
  – Latency estimation
    » Clustering-based: network proximity based, inaccurate
    » Coordinate-based: symmetric distance, unscalable to update
  – General metrics: n^2 measurements for n end hosts
• Solution
  – Latency: Internet Iso-bar, clustering based on latency similarity to a small number of landmarks
  – Loss rate: Tomography-based Overlay Monitoring (TOM), selectively monitor a basis set of O(n log n) paths to infer the loss rates of other paths
• Publication
  – Internet Iso-bar: SIGMETRICS PER 2002
  – TOM: SIGCOMM IMC 2003

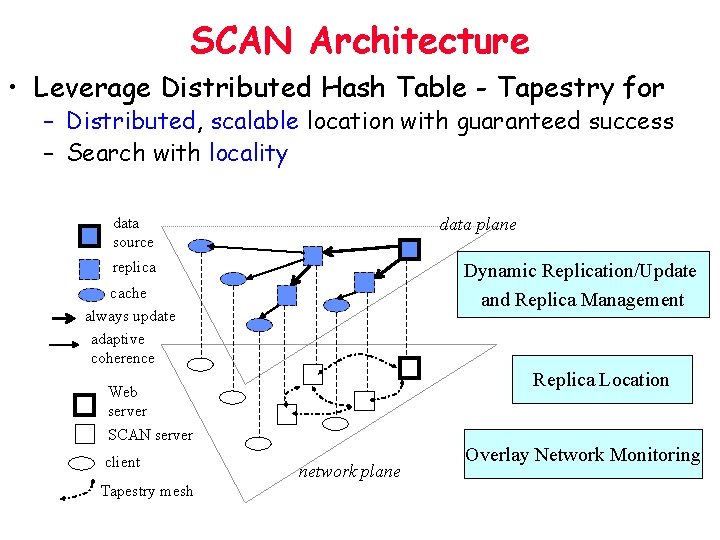

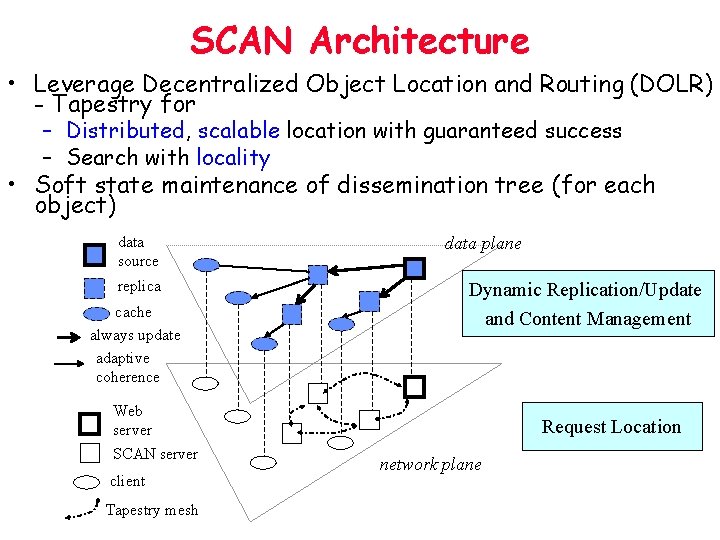

SCAN Architecture
• Leverage Distributed Hash Table (Tapestry) for
  – Distributed, scalable location with guaranteed success
  – Search with locality
[Figure: SCAN architecture. Data plane: data source, replicas (always update) and caches (adaptive coherence) on SCAN servers, with Dynamic Replication/Update and Replica Management. Network plane: Web server, SCAN servers and clients on the Tapestry mesh, with Replica Location and Overlay Network Monitoring]

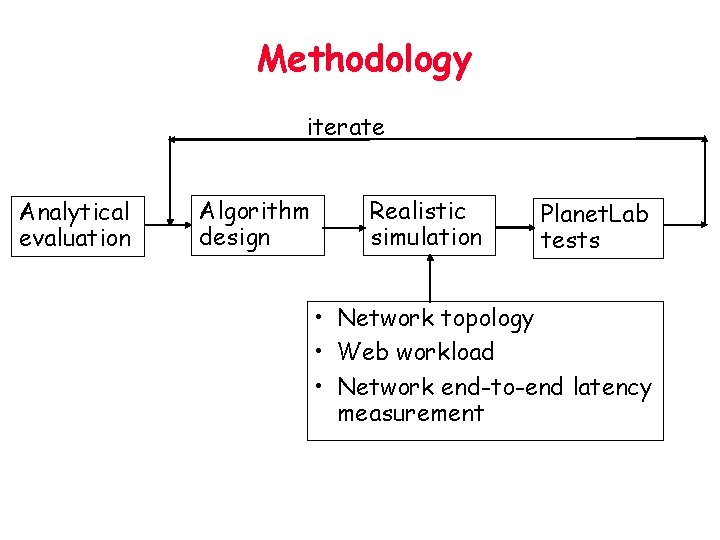

Methodology
Iterate over: analytical evaluation, algorithm design, realistic simulation, PlanetLab tests
Realistic simulation uses:
• Network topology
• Web workload
• Network end-to-end latency measurement

Case Study: Tomography-based Overlay Network Monitoring

TOM Outline
• Goal and Problem Formulation
• Algebraic Modeling and Basic Algorithms
• Scalability Analysis
• Practical Issues
• Evaluation
• Application: Adaptive Overlay Streaming Media
• Conclusions

Goal: a scalable, adaptive and accurate overlay monitoring system to detect E2E congestion/failures
Existing Work
• General metrics: RON (n^2 measurements)
• Latency estimation
  – Clustering-based: IDMaps, Internet Iso-bar, etc.
  – Coordinate-based: GNP, ICS, Virtual Landmarks
• Network tomography
  – Focuses on inferring the characteristics of physical links rather than E2E paths
  – Limited measurements -> under-constrained system, unidentifiable links

Problem Formulation
Given an overlay of n end hosts and O(n^2) paths, how can we select a minimal subset of paths to monitor so that the loss rates/latency of all other paths can be inferred?
Assumptions:
• Topology is measurable
• Only E2E paths can be measured, not individual links

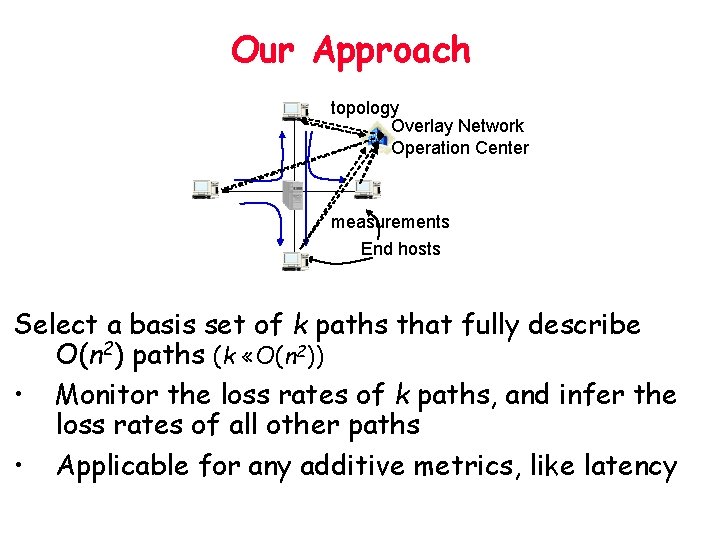

Our Approach
• Select a basis set of k paths that fully describe the O(n^2) paths (k « O(n^2))
• Monitor the loss rates of the k paths, and infer the loss rates of all other paths
• Applicable to any additive metric, like latency
[Figure: end hosts send topology and measurements to the Overlay Network Operation Center]

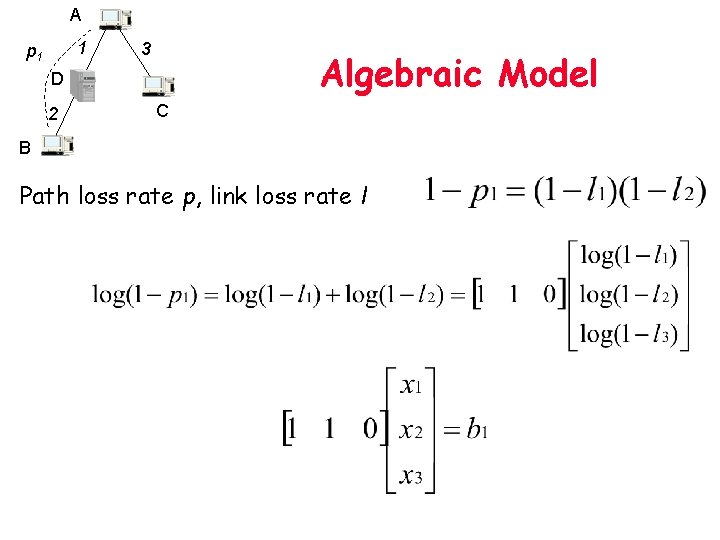

Algebraic Model
Path loss rate p, link loss rate l
[Figure: example overlay with end hosts A, B, C, D, links 1–3 and path p1]
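The relation between a path loss rate p and the link loss rates l can be written out as below. This is a sketch under the usual assumption that losses on different links are independent; the indicator vector v and the symbols x and b are notation introduced here so that it lines up with the path matrix G and the vectors x and b used on the following slides.

```latex
% Path P traverses a subset of the s links; v_j = 1 if link j is on P, else 0.
1 - p = \prod_{j=1}^{s} (1 - l_j)^{v_j}

% Taking logarithms turns the product into a linear equation:
\log(1 - p) = \sum_{j=1}^{s} v_j \log(1 - l_j) = v^{T} x,
\qquad x_j = \log(1 - l_j), \quad b = \log(1 - p)

% Stacking one row v^T per path gives the linear system over all r paths:
G x = b, \qquad G \in \{0, 1\}^{r \times s}
```

Because the system is linear in x, the same formulation applies to any additive metric, such as latency, without the logarithm step.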

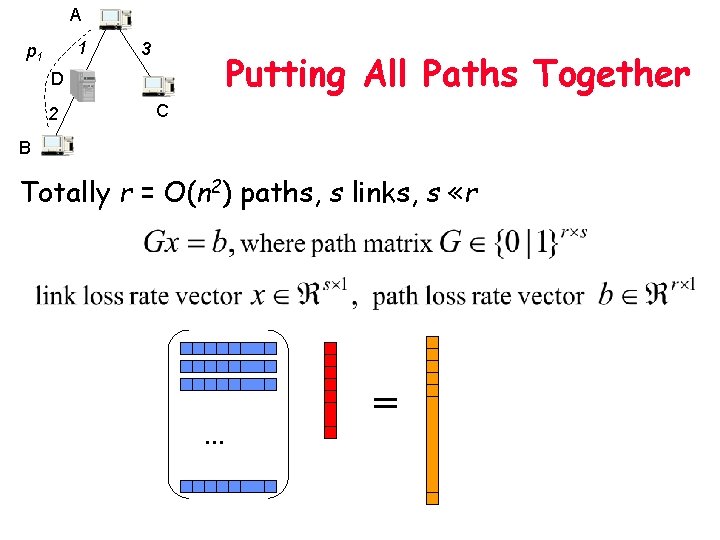

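Putting All Paths Together
In total r = O(n^2) paths and s links, s « r
[Figure: the example overlay (end hosts A, B, C, D; links 1–3; path p1) with the per-path equations stacked into one linear system]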

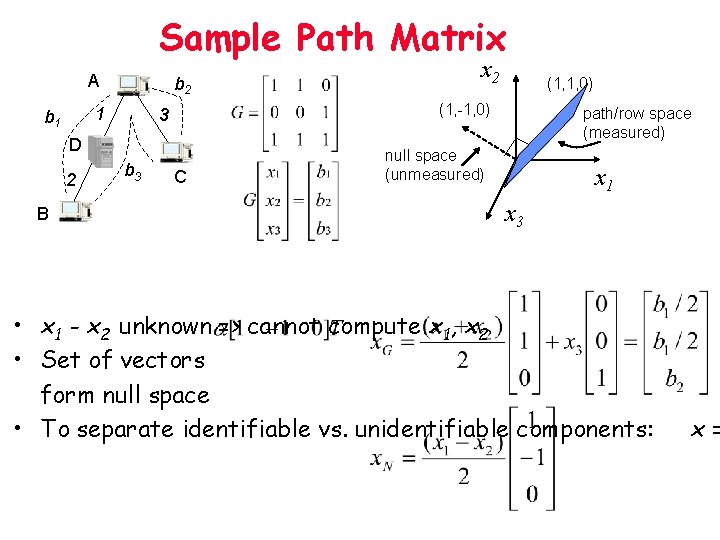

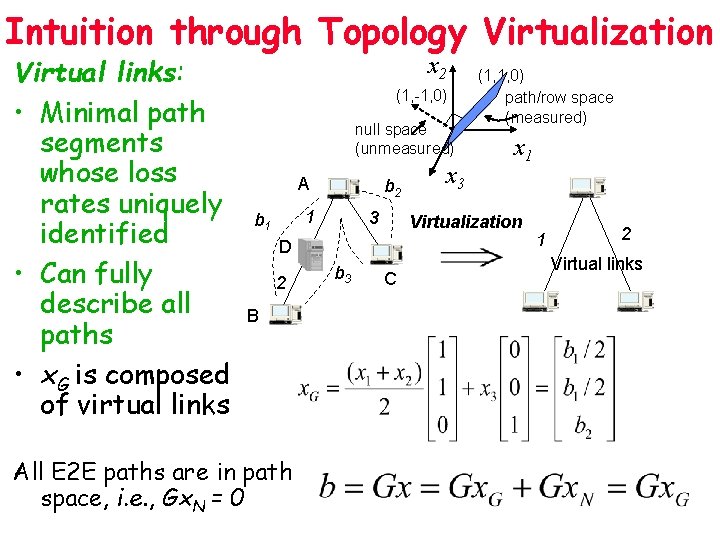

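Sample Path Matrix
[Figure: example topology with end hosts A, B, C, D, links 1–3 and paths b1, b2, b3; the link vector space (x1, x2, x3) is split into the path/row space (measured), which contains (1, 1, 0), and the null space (unmeasured), which contains (1, -1, 0)]
• x1 - x2 is unknown => cannot compute x1, x2 individually
• The set of such vectors forms the null space
• To separate identifiable vs. unidentifiable components: x = x_G + x_N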
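The null-space argument can be checked numerically. The sketch below assumes a concrete 3-path, 3-link matrix G (b1 over links 1 and 2, b2 over link 3, b3 over all three); that layout is a guess at the figure, not taken from it. The helper names `rank` and `matvec` are illustrative.

```python
from fractions import Fraction


def rank(rows):
    """Rank of a matrix via Gaussian elimination over exact fractions."""
    m = [[Fraction(v) for v in row] for row in rows]
    r = 0
    ncols = len(m[0]) if m else 0
    for c in range(ncols):
        # Find a row at or below position r with a nonzero entry in column c.
        pivot = next((i for i in range(r, len(m)) if m[i][c] != 0), None)
        if pivot is None:
            continue
        m[r], m[pivot] = m[pivot], m[r]
        for i in range(len(m)):
            if i != r and m[i][c] != 0:
                f = m[i][c] / m[r][c]
                m[i] = [a - f * b for a, b in zip(m[i], m[r])]
        r += 1
    return r


def matvec(G, x):
    """Multiply matrix G by vector x."""
    return [sum(g * v for g, v in zip(row, x)) for row in G]


# Assumed path matrix: rows are paths b1, b2, b3; columns are links 1, 2, 3.
G = [[1, 1, 0],
     [0, 0, 1],
     [1, 1, 1]]

print(rank(G))                 # 2: only two paths are linearly independent
print(matvec(G, [1, -1, 0]))   # [0, 0, 0]: (1, -1, 0) is invisible to every path
```

Since G maps (1, -1, 0) to zero, adding any multiple of it to x leaves every path measurement unchanged, which is why x1 and x2 cannot be separated while x1 + x2 can.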

Intuition through Topology Virtualization
Virtual links:
• Minimal path segments whose loss rates are uniquely identified
• Can fully describe all paths
• x_G is composed of virtual links
All E2E paths are in the path space, i.e., G x_N = 0
[Figure: the example topology (end hosts A, B, C, D; links 1–3; paths b1, b2, b3) before and after virtualization into virtual links 1 and 2, alongside the path/row space (measured) containing (1, 1, 0) and the null space (unmeasured) containing (1, -1, 0)]

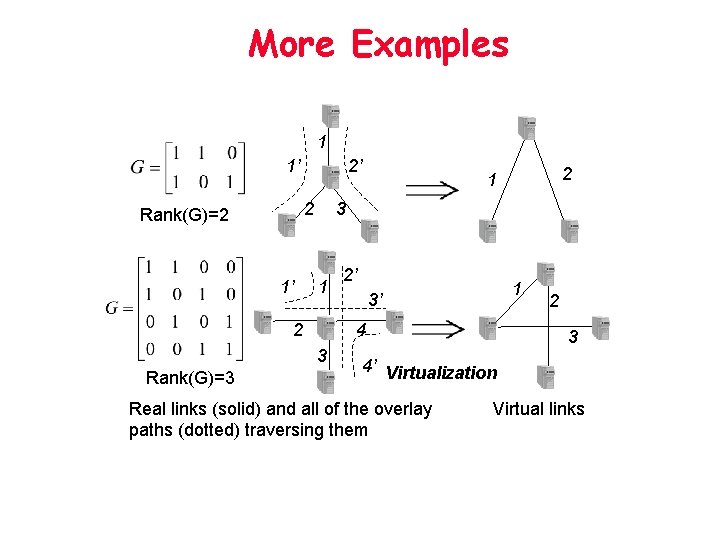

More Examples
[Figure: real links (solid) and all of the overlay paths (dotted) traversing them, shown next to the virtual links obtained after virtualization; the examples include Rank(G) = 2 and Rank(G) = 3]

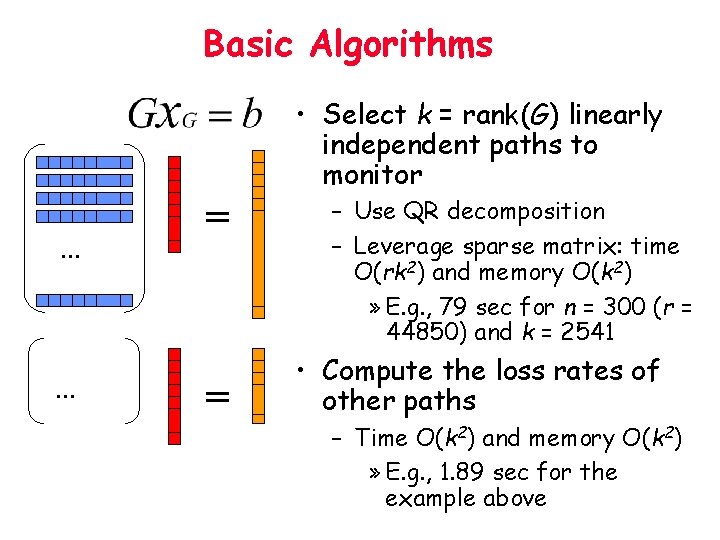

Basic Algorithms
• Select k = rank(G) linearly independent paths to monitor
  – Use QR decomposition
  – Leverage sparse matrix: time O(rk^2) and memory O(k^2)
    » E.g., 79 sec for n = 300 (r = 44850) and k = 2541
• Compute the loss rates of other paths
  – Time O(k^2) and memory O(k^2)
    » E.g., 1.89 sec for the example above
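The two steps above can be illustrated on a toy path matrix. The sketch below deliberately swaps in exact Gaussian elimination over fractions for the sparse QR decomposition named on the slide, so it shows the idea rather than the O(rk^2) algorithm; `select_basis` and `infer_loss_rates` are hypothetical names, and link losses are assumed independent so that log(1 - p) is additive.

```python
import math
from fractions import Fraction


def select_basis(G):
    """Scan rows of G in order and keep the linearly independent ones.

    Returns (selected, coeffs): selected lists the indices of paths to monitor;
    coeffs[i] maps a selected index j to a weight so that
    G[i] == sum(coeffs[i][j] * G[j]).
    """
    basis = []      # (pivot column, reduced row, reduced row as combo of selected rows)
    selected = []
    coeffs = []
    for i, row in enumerate(G):
        v = [Fraction(a) for a in row]
        expr = {}   # invariant: G[i] == v + sum(expr[j] * G[j])
        for pivot, bvec, bcombo in basis:
            if v[pivot] != 0:
                f = v[pivot] / bvec[pivot]
                v = [a - f * b for a, b in zip(v, bvec)]
                for j, c in bcombo.items():
                    expr[j] = expr.get(j, Fraction(0)) + f * c
        pivot = next((c for c, a in enumerate(v) if a != 0), None)
        if pivot is None:
            coeffs.append(expr)                  # dependent path: can be inferred
        else:
            combo = {j: -c for j, c in expr.items()}
            combo[i] = Fraction(1)
            basis.append((pivot, v, combo))
            selected.append(i)                   # independent path: must be monitored
            coeffs.append({i: Fraction(1)})
    return selected, coeffs


def infer_loss_rates(coeffs, measured):
    """Infer every path's loss rate from the loss rates of the monitored paths."""
    logs = {j: math.log(1.0 - p) for j, p in measured.items()}
    return [1.0 - math.exp(sum(float(c) * logs[j] for j, c in expr.items()))
            for expr in coeffs]


# Toy matrix (an assumption): path 2 traverses exactly the links of paths 0 and 1.
G = [[1, 1, 0],
     [0, 0, 1],
     [1, 1, 1]]
selected, coeffs = select_basis(G)
rates = infer_loss_rates(coeffs, {0: 0.02, 1: 0.05})
print(selected)            # [0, 1]
print(round(rates[2], 4))  # 0.069
```

Only the two selected paths are probed; the third path's loss rate follows from 1 - 0.98 × 0.95.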

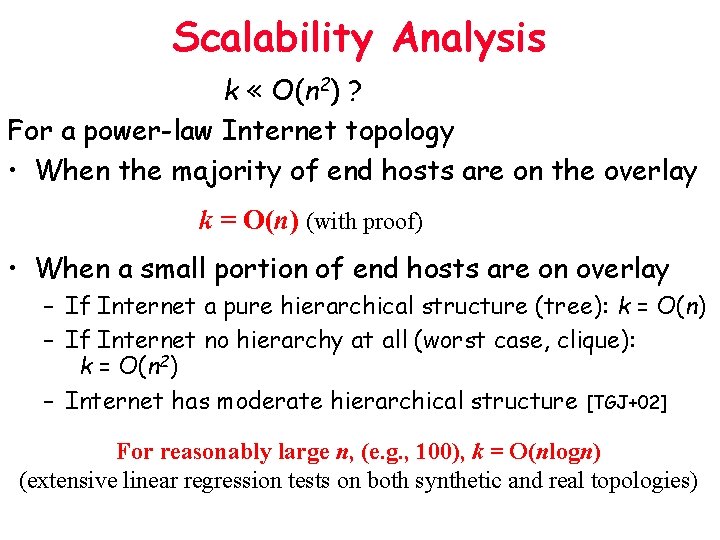

Scalability Analysis
Is k « O(n^2)? For a power-law Internet topology:
• When the majority of end hosts are on the overlay: k = O(n) (with proof)
• When a small portion of end hosts are on the overlay
  – If the Internet is a pure hierarchical structure (tree): k = O(n)
  – If the Internet has no hierarchy at all (worst case, clique): k = O(n^2)
  – The Internet has a moderate hierarchical structure [TGJ+02]
For reasonably large n (e.g., 100), k = O(n log n) (extensive linear regression tests on both synthetic and real topologies)
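To put k « O(n^2) into numbers, the snippet below recomputes the path counts quoted in this talk (n = 300 with r = 44850 and k = 2541 in simulation; 51 PlanetLab hosts with 2,550 paths and k = 872 on average). The function names are illustrative. Note that the two examples count paths differently: the simulation figure matches unordered host pairs, the PlanetLab figure matches ordered pairs.

```python
def undirected_paths(n):
    """Number of unordered host pairs among n end hosts."""
    return n * (n - 1) // 2


def directed_paths(n):
    """Number of ordered host pairs among n end hosts."""
    return n * (n - 1)


print(undirected_paths(300))                   # 44850
print(directed_paths(51))                      # 2550
print(round(2541 / undirected_paths(300), 3))  # 0.057
print(round(872 / directed_paths(51), 3))      # 0.342
```

So roughly 6% of the paths are monitored in the 300-host simulation and about a third in the 51-host deployment, consistent with the fraction shrinking as n grows.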

TOM Outline
• Goal and Problem Formulation
• Algebraic Modeling and Basic Algorithms
• Scalability Analysis
• Practical Issues
• Evaluation
• Application: Adaptive Overlay Streaming Media
• Summary

Practical Issues
• Tolerance of topology measurement errors
  – Router aliases
  – Incomplete routing info
• Measurement load balancing
  – Randomly order the paths for scan and selection of
• Adaptive to topology changes
  – Designed efficient algorithms for incremental updates
  – Add/remove a path: O(k^2) time (O(n^2 k^2) for reinitialization)
  – Add/remove end hosts and routing changes

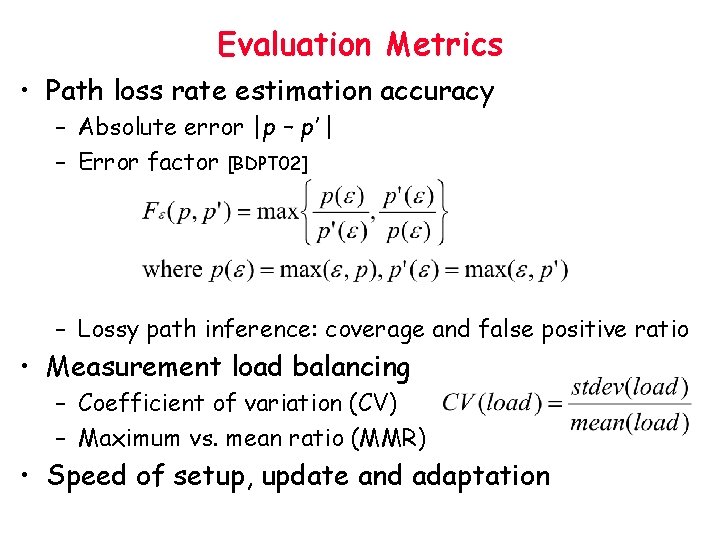

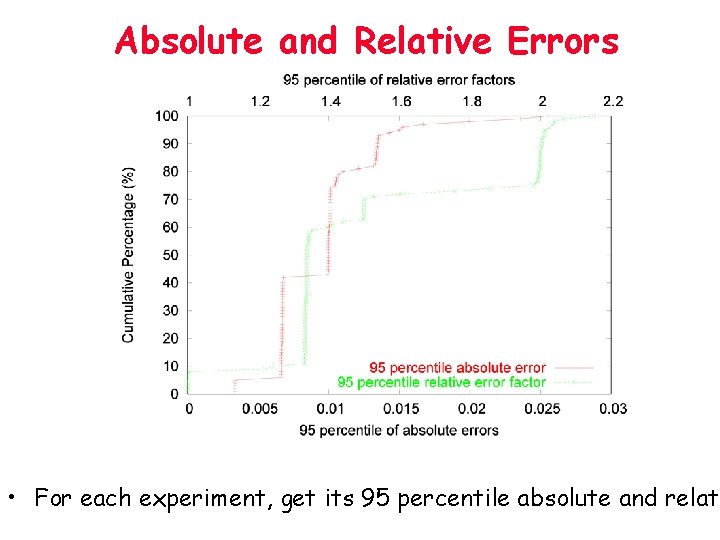

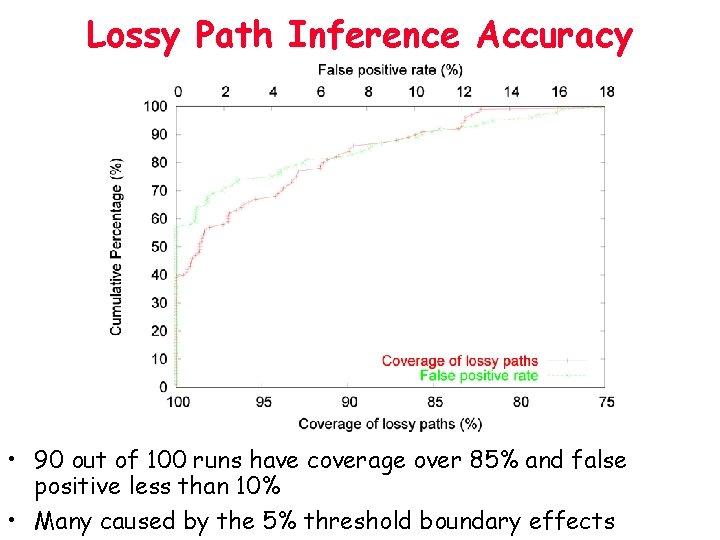

Evaluation Metrics
• Path loss rate estimation accuracy
  – Absolute error |p – p'|
  – Error factor [BDPT02]
  – Lossy path inference: coverage and false positive ratio
• Measurement load balancing
  – Coefficient of variation (CV)
  – Maximum vs. mean ratio (MMR)
• Speed of setup, update and adaptation
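A minimal sketch of these metrics follows. The error factor is written in the form commonly attributed to [BDPT02], max(p_ε/p'_ε, p'_ε/p_ε) with p_ε = max(ε, p); the default ε = 0.001 and the use of the population standard deviation for CV are assumptions, not values stated on the slide.

```python
import statistics


def absolute_error(p, p_est):
    """|p - p'| between the real and inferred loss rate."""
    return abs(p - p_est)


def error_factor(p, p_est, eps=0.001):
    """Ratio-style error; eps (assumed value) guards against near-zero loss rates."""
    a, b = max(eps, p), max(eps, p_est)
    return max(a / b, b / a)


def coefficient_of_variation(loads):
    """Standard deviation of per-link measurement load divided by its mean."""
    return statistics.pstdev(loads) / statistics.mean(loads)


def max_mean_ratio(loads):
    """Maximum per-link measurement load divided by the mean load."""
    return max(loads) / statistics.mean(loads)


print(error_factor(0.0, 0.0))         # 1.0: both below eps, treated as equal
print(max_mean_ratio([1, 1, 1, 5]))   # 2.5
```

An error factor of 1.0 means a perfect estimate; lower CV and MMR mean the probing load is spread more evenly.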

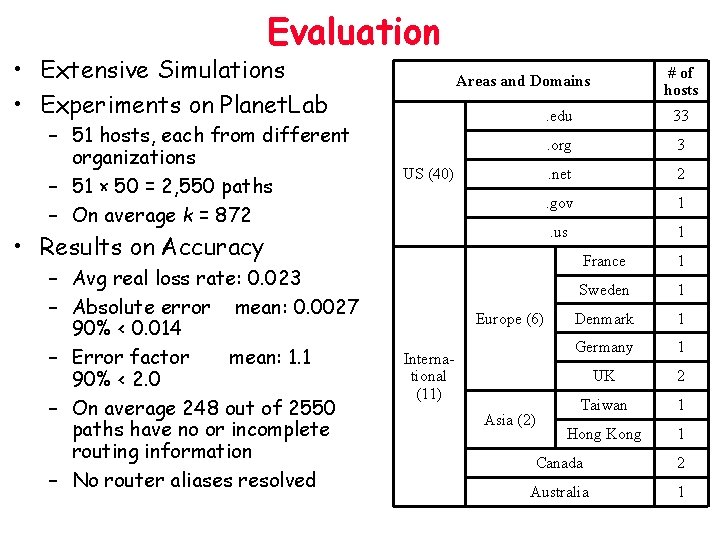

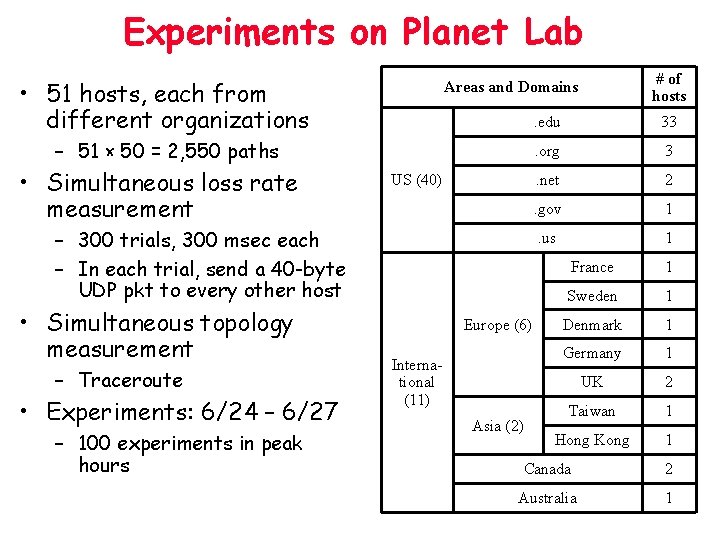

Evaluation
• Extensive simulations
• Experiments on PlanetLab
  – 51 hosts, each from a different organization
  – 51 × 50 = 2,550 paths
  – On average k = 872
• Results on accuracy
  – Avg real loss rate: 0.023
  – Absolute error: mean 0.0027, 90% < 0.014
  – Error factor: mean 1.1, 90% < 2.0
  – On average 248 out of 2550 paths have no or incomplete routing information
  – No router aliases resolved

| Areas and domains | # of hosts |
|---|---|
| US (40) | .edu 33, .org 3, .net 2, .gov 1, .us 1 |
| International (11), Europe (6) | France 1, Sweden 1, Denmark 1, Germany 1, UK 2 |
| International (11), Asia (2) | Taiwan 1, Hong Kong 1 |
| International (11) | Canada 2, Australia 1 |

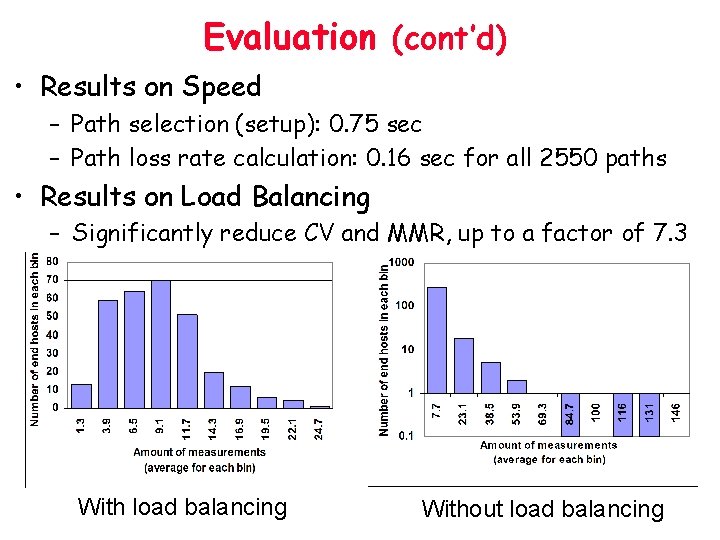

Evaluation (cont'd)
• Results on speed
  – Path selection (setup): 0.75 sec
  – Path loss rate calculation: 0.16 sec for all 2550 paths
• Results on load balancing
  – Significantly reduces CV and MMR, by up to a factor of 7.3
[Figure: measurement load with load balancing vs. without load balancing]

TOM Outline
• Goal and Problem Formulation
• Algebraic Modeling and Basic Algorithms
• Scalability Analysis
• Practical Issues
• Evaluation
• Application: Adaptive Overlay Streaming Media
• Conclusions

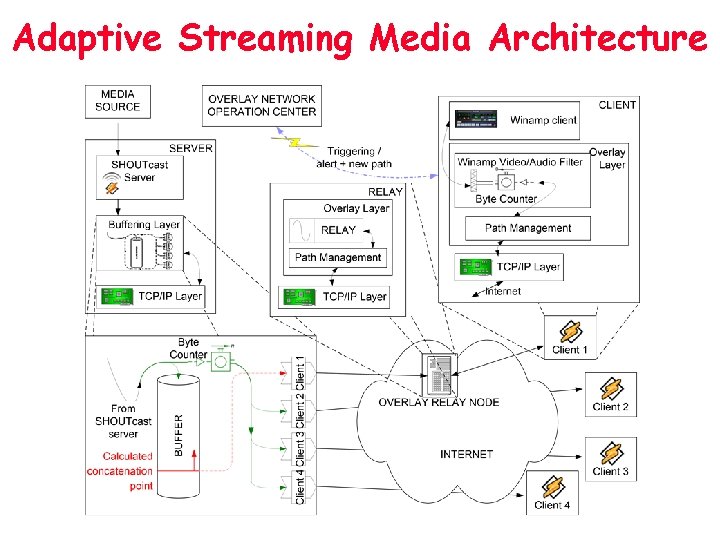

Motivation
• Traditional streaming media systems treat the network as a black box
• Adaptation is only performed at the transmission end points
• Overlay relay can effectively bypass congestion/failures
• Built an adaptive streaming media system that leverages
  – TOM for real-time path info
  – An overlay network for adaptive packet buffering and relay

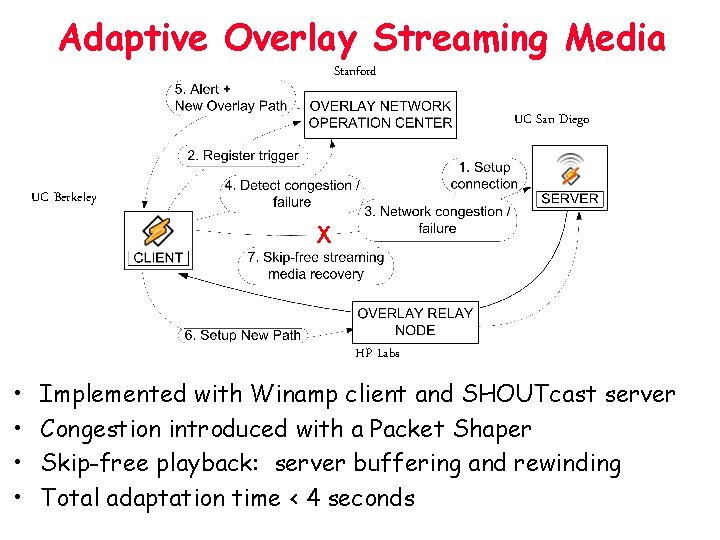

Adaptive Overlay Streaming Media
[Figure: overlay among Stanford, UC San Diego, UC Berkeley and HP Labs, with one path marked X]
• Implemented with Winamp client and SHOUTcast server
• Congestion introduced with a Packet Shaper
• Skip-free playback: server buffering and rewinding
• Total adaptation time < 4 seconds

Adaptive Streaming Media Architecture

Summary
• A tomography-based overlay network monitoring system
  – Selectively monitors a basis set of O(n log n) paths to infer the loss rates of O(n^2) paths
  – Works in real time, adapts to topology changes, has good load balancing and tolerates topology errors
• Both simulation and real Internet experiments are promising
• Built an adaptive overlay streaming media system on top of TOM
  – Bypasses congestion/failures for smooth playback within seconds

Tie Back to SCAN
• Provision: Dynamic Replication + Update Multicast Tree Building
• Replica Management: (Incremental) Content Clustering
• Network DoS Resilient Replica Location: Tapestry
• Network End-to-End Distance Monitoring
  – Internet Iso-bar: latency
  – TOM: loss rate

Contribution of My Thesis
• Replica location
  – Proposed the first simulation-based network DoS resilience benchmark and quantified three types of directory services
• Dynamically place a close-to-optimal # of replicas
  – Self-organize replicas into a scalable app-level multicast tree for disseminating updates
• Cluster objects to significantly reduce the management overhead with little performance sacrifice
  – Online incremental clustering and replication to adapt to users' access pattern changes
• Scalable overlay network monitoring

Thank you!

Backup Materials

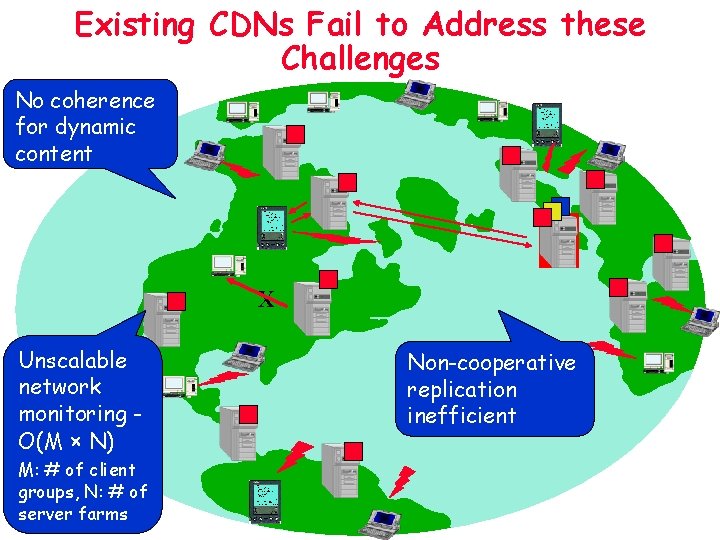

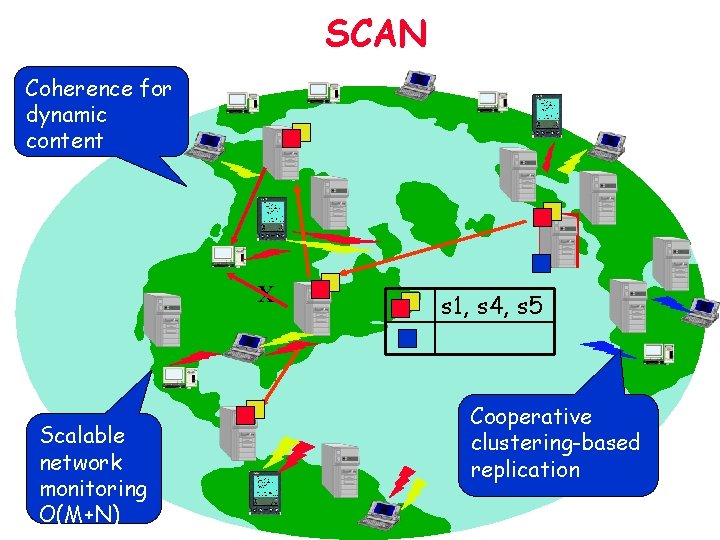

Existing CDNs Fail to Address these Challenges
• No coherence for dynamic content
• Unscalable network monitoring: O(M × N), where M is the # of client groups and N is the # of server farms
• Non-cooperative replication is inefficient

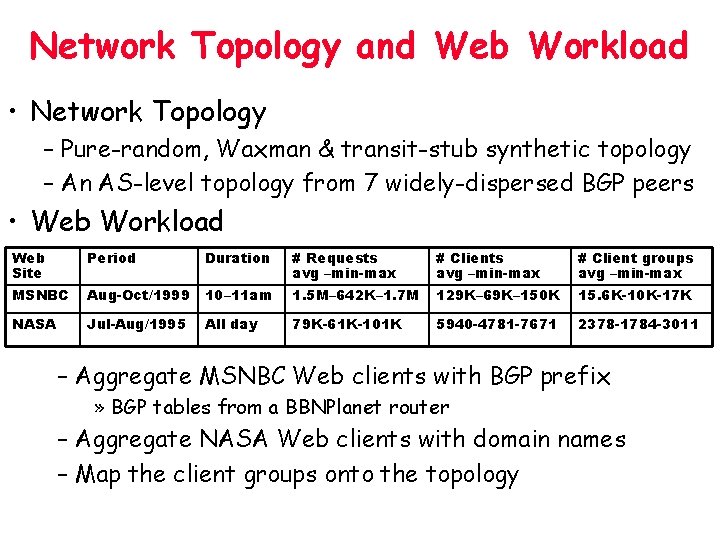

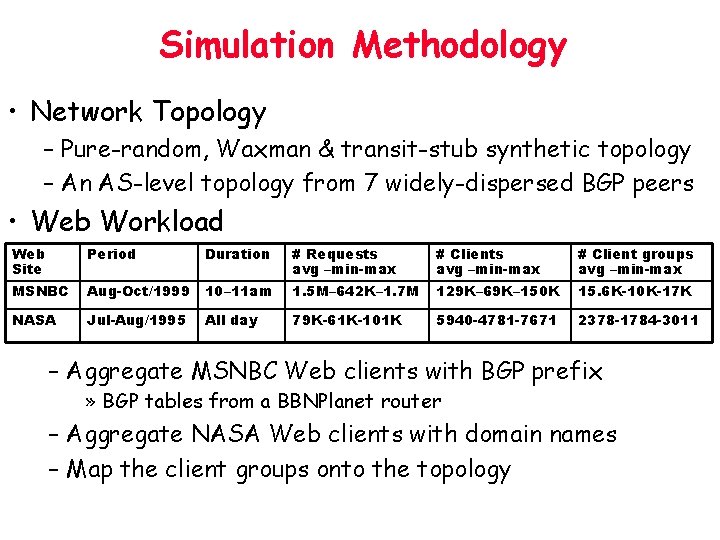

Network Topology and Web Workload
• Network Topology
  – Pure-random, Waxman & transit-stub synthetic topology
  – An AS-level topology from 7 widely-dispersed BGP peers
• Web Workload

| Web Site | Period | Duration | # Requests avg–min–max | # Clients avg–min–max | # Client groups avg–min–max |
|---|---|---|---|---|---|
| MSNBC | Aug-Oct/1999 | 10–11 am | 1.5M–642K–1.7M | 129K–69K–150K | 15.6K–10K–17K |
| NASA | Jul-Aug/1995 | All day | 79K–61K–101K | 5940–4781–7671 | 2378–1784–3011 |

  – Aggregate MSNBC Web clients with BGP prefix
    » BGP tables from a BBNPlanet router
  – Aggregate NASA Web clients with domain names
  – Map the client groups onto the topology

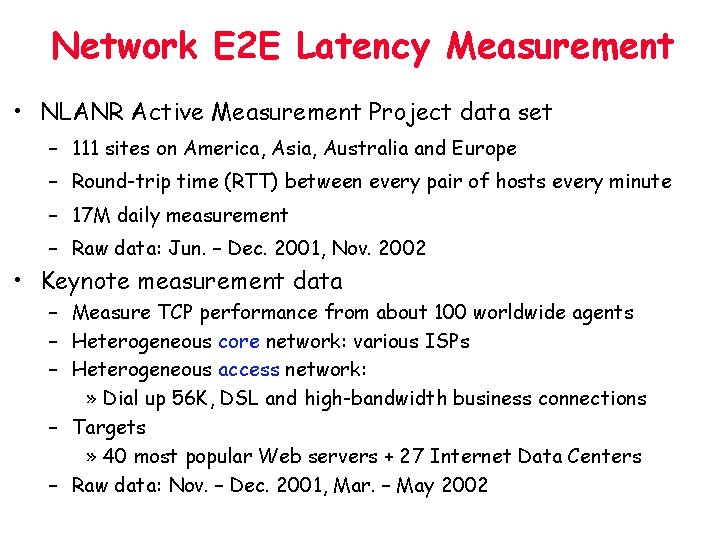

Network E2E Latency Measurement
• NLANR Active Measurement Project data set
  – 111 sites in America, Asia, Australia and Europe
  – Round-trip time (RTT) between every pair of hosts every minute
  – 17 M measurements daily
  – Raw data: Jun. – Dec. 2001, Nov. 2002
• Keynote measurement data
  – Measure TCP performance from about 100 worldwide agents
  – Heterogeneous core network: various ISPs
  – Heterogeneous access network:
    » Dial-up 56K, DSL and high-bandwidth business connections
  – Targets
    » 40 most popular Web servers + 27 Internet Data Centers
  – Raw data: Nov. – Dec. 2001, Mar. – May 2002

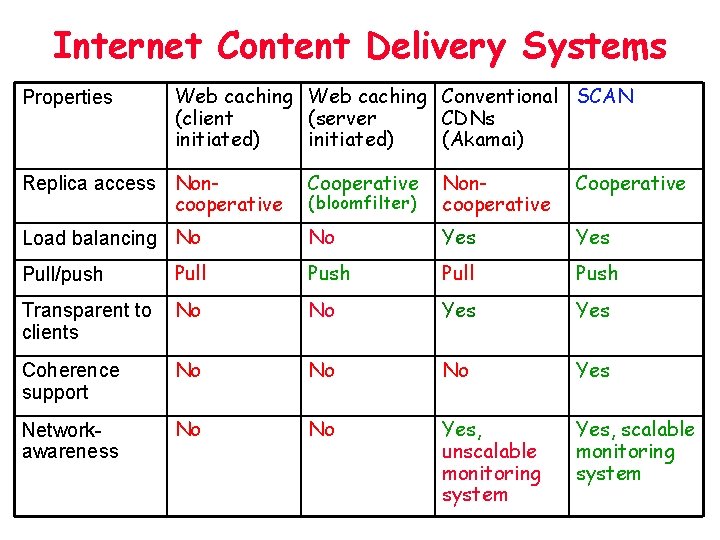

Internet Content Delivery Systems
Systems compared: Web caching (client initiated), Web caching (server initiated), Conventional CDNs (Akamai), SCAN
• Replica access: Non-cooperative; Cooperative (Bloom filter); Non-cooperative; Cooperative
• Load balancing: No; No; Yes
• Pull/push: Pull; Push
• Transparent to clients: No; No; Yes
• Coherence support: No; No; No; Yes
• Network-awareness: No; No; Yes, unscalable monitoring system; Yes, scalable monitoring system

Absolute and Relative Errors • For each experiment, get its 95 percentile absolute and relati

Lossy Path Inference Accuracy
• 90 out of 100 runs have coverage over 85% and a false positive ratio of less than 10%
• Many errors are caused by the 5% threshold boundary effects

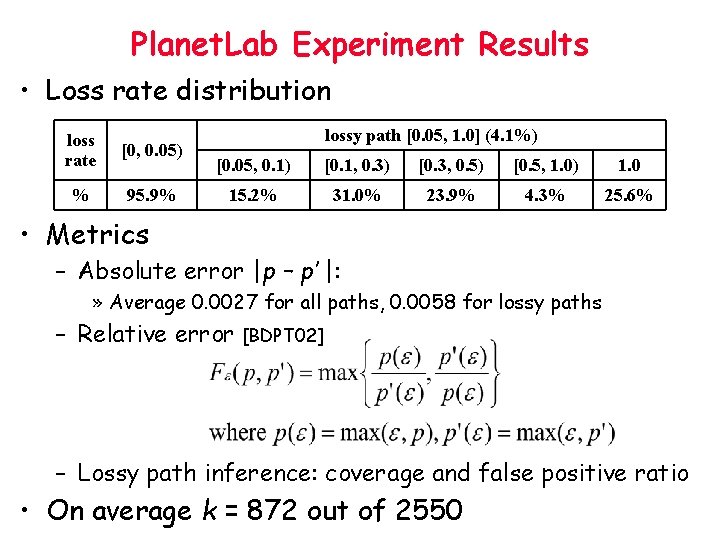

PlanetLab Experiment Results
• Loss rate distribution

| Loss rate | % of paths |
|---|---|
| [0, 0.05) | 95.9% of all paths |
| [0.05, 1.0] (lossy paths) | 4.1% of all paths |
| [0.05, 0.1) | 15.2% of lossy paths |
| [0.1, 0.3) | 31.0% of lossy paths |
| [0.3, 0.5) | 23.9% of lossy paths |
| [0.5, 1.0) | 4.3% of lossy paths |
| 1.0 | 25.6% of lossy paths |

• Metrics
  – Absolute error |p – p'|:
    » Average 0.0027 for all paths, 0.0058 for lossy paths
  – Relative error [BDPT02]
  – Lossy path inference: coverage and false positive ratio
• On average k = 872 out of 2550

Experiments on PlanetLab
• 51 hosts, each from a different organization
  – 51 × 50 = 2,550 paths
• Simultaneous loss rate measurement
  – 300 trials, 300 msec each
  – In each trial, send a 40-byte UDP packet to every other host
• Simultaneous topology measurement
  – Traceroute
• Experiments: 6/24 – 6/27
  – 100 experiments in peak hours

| Areas and domains | # of hosts |
|---|---|
| US (40) | .edu 33, .org 3, .net 2, .gov 1, .us 1 |
| International (11), Europe (6) | France 1, Sweden 1, Denmark 1, Germany 1, UK 2 |
| International (11), Asia (2) | Taiwan 1, Hong Kong 1 |
| International (11) | Canada 2, Australia 1 |

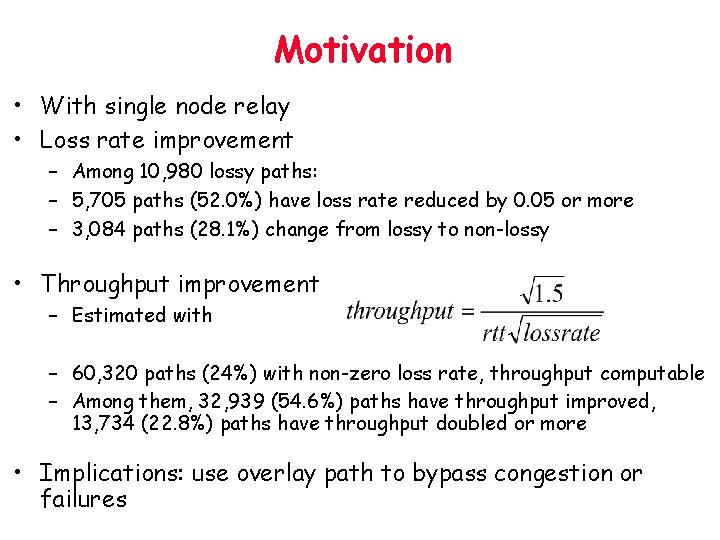

Motivation
• With single node relay
• Loss rate improvement
  – Among 10,980 lossy paths:
  – 5,705 paths (52.0%) have loss rate reduced by 0.05 or more
  – 3,084 paths (28.1%) change from lossy to non-lossy
• Throughput improvement
  – Estimated with [formula shown on slide]
  – 60,320 paths (24%) with non-zero loss rate, throughput computable
  – Among them, 32,939 (54.6%) paths have throughput improved, 13,734 (22.8%) paths have throughput doubled or more
• Implications: use overlay paths to bypass congestion or failures
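How a single relay node can lower the loss rate is sketched below. The composition rule assumes losses on the two overlay hops are independent, and the dictionary layout and the names `relay_loss` and `best_relay` are illustrative; this is not the procedure used to produce the numbers above.

```python
def relay_loss(p_src_relay, p_relay_dst):
    """Loss rate of a two-hop overlay path, assuming independent hop losses."""
    return 1.0 - (1.0 - p_src_relay) * (1.0 - p_relay_dst)


def best_relay(loss, src, dst):
    """Pick the single relay that minimizes loss from src to dst.

    loss maps (a, b) host pairs to loss rates. Returns (relay, loss_rate),
    with relay None when the direct path is at least as good.
    """
    best = (None, loss[(src, dst)])
    hosts = sorted({a for a, _ in loss} | {b for _, b in loss})
    for r in hosts:
        if r in (src, dst):
            continue
        if (src, r) in loss and (r, dst) in loss:
            p = relay_loss(loss[(src, r)], loss[(r, dst)])
            if p < best[1]:
                best = (r, p)
    return best


# Hypothetical measurements: the direct path A -> B is lossy, the detour via C is not.
loss = {("A", "B"): 0.20, ("A", "C"): 0.01, ("C", "B"): 0.02}
relay, p = best_relay(loss, "A", "B")
print(relay, round(p, 4))   # C 0.0298
```

With a 0.05 lossy-path threshold, this hypothetical path would move from lossy to non-lossy once it is relayed through C.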

SCAN
• Coherence for dynamic content
• Scalable network monitoring: O(M+N)
• Cooperative clustering-based replication

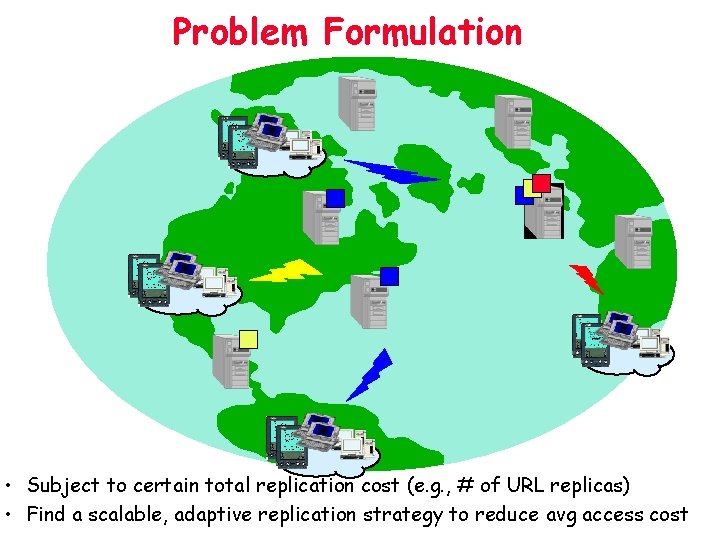

Problem Formulation
• Subject to a certain total replication cost (e.g., # of URL replicas)
• Find a scalable, adaptive replication strategy to reduce the avg access cost

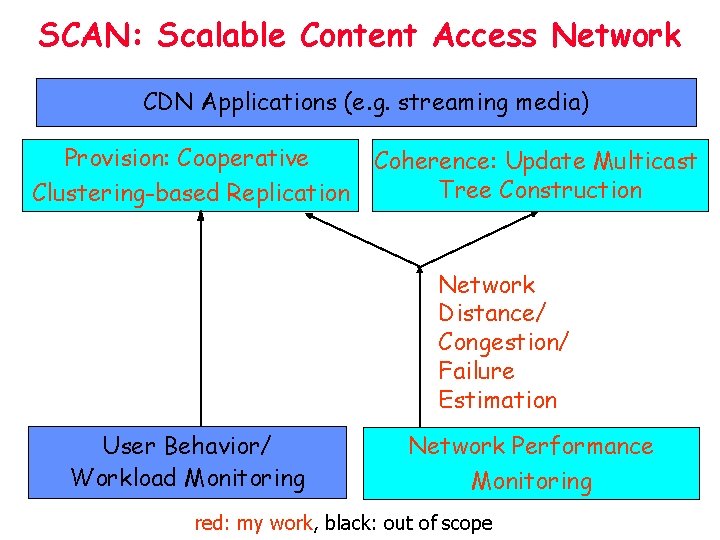

SCAN: Scalable Content Access Network
[Figure: layered architecture]
• CDN Applications (e.g. streaming media)
• Provision: Cooperative Clustering-based Replication
• Coherence: Update Multicast Tree Construction
• Network Distance/Congestion/Failure Estimation
• User Behavior/Workload Monitoring
• Network Performance Monitoring
Legend: red: my work, black: out of scope

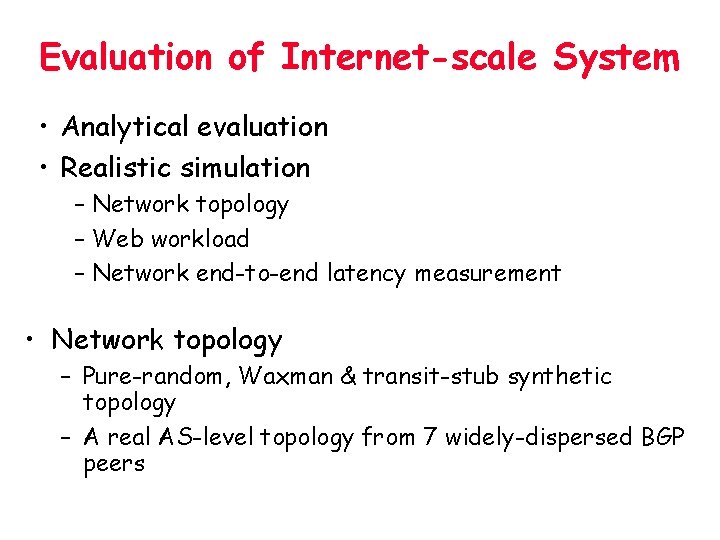

Evaluation of Internet-scale System
• Analytical evaluation
• Realistic simulation
  – Network topology
  – Web workload
  – Network end-to-end latency measurement
• Network topology
  – Pure-random, Waxman & transit-stub synthetic topology
  – A real AS-level topology from 7 widely-dispersed BGP peers

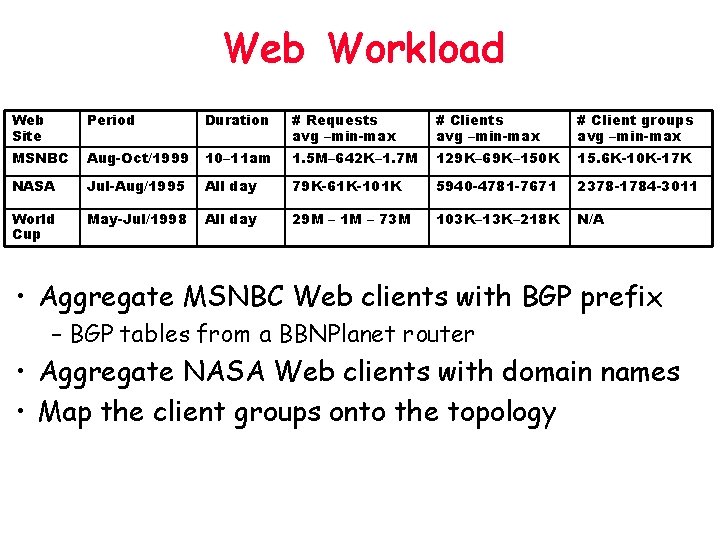

Web Workload

| Web Site | Period | Duration | # Requests avg–min–max | # Clients avg–min–max | # Client groups avg–min–max |
|---|---|---|---|---|---|
| MSNBC | Aug-Oct/1999 | 10–11 am | 1.5M–642K–1.7M | 129K–69K–150K | 15.6K–10K–17K |
| NASA | Jul-Aug/1995 | All day | 79K–61K–101K | 5940–4781–7671 | 2378–1784–3011 |
| World Cup | May-Jul/1998 | All day | 29M–1M–73M | 103K–13K–218K | N/A |

• Aggregate MSNBC Web clients with BGP prefix
  – BGP tables from a BBNPlanet router
• Aggregate NASA Web clients with domain names
• Map the client groups onto the topology

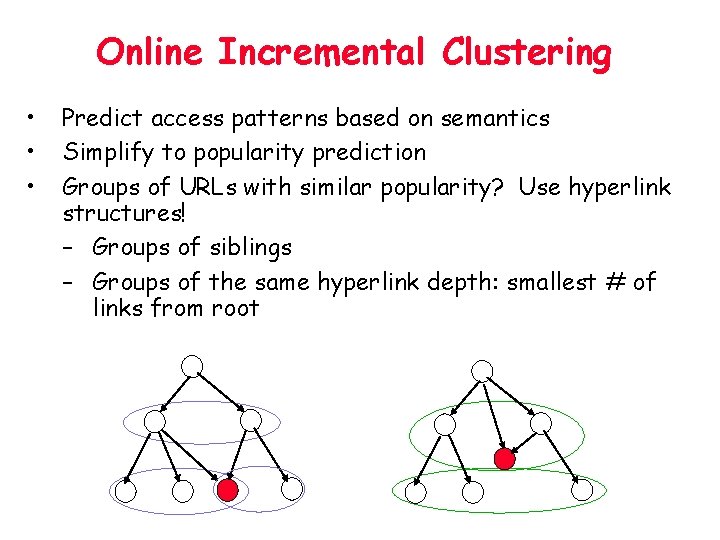

Online Incremental Clustering
• Predict access patterns based on semantics
• Simplify to popularity prediction
• Groups of URLs with similar popularity? Use hyperlink structures!
  – Groups of siblings
  – Groups of the same hyperlink depth: smallest # of links from root
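Grouping URLs by hyperlink depth can be sketched with a breadth-first search from the site root, since BFS visits each page at its smallest number of links from the root. The site graph, URL strings and function names below are hypothetical; the slide does not specify how the depth is computed.

```python
from collections import defaultdict, deque


def hyperlink_depths(links, root):
    """Smallest number of links from root to each reachable page (BFS)."""
    depth = {root: 0}
    queue = deque([root])
    while queue:
        page = queue.popleft()
        for child in links.get(page, []):
            if child not in depth:
                depth[child] = depth[page] + 1
                queue.append(child)
    return depth


def group_by_depth(depth):
    """Cluster URLs that share the same hyperlink depth."""
    groups = defaultdict(list)
    for url, d in depth.items():
        groups[d].append(url)
    return {d: sorted(urls) for d, urls in groups.items()}


# Hypothetical site: page -> pages it links to.
links = {
    "/": ["/news", "/sports"],
    "/news": ["/news/a", "/sports"],
    "/sports": ["/sports/b"],
}
print(group_by_depth(hyperlink_depths(links, "/")))
# {0: ['/'], 1: ['/news', '/sports'], 2: ['/news/a', '/sports/b']}
```

A newly published page can be assigned to a cluster as soon as its parent is known, before any access statistics exist, which is what makes the scheme usable online.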

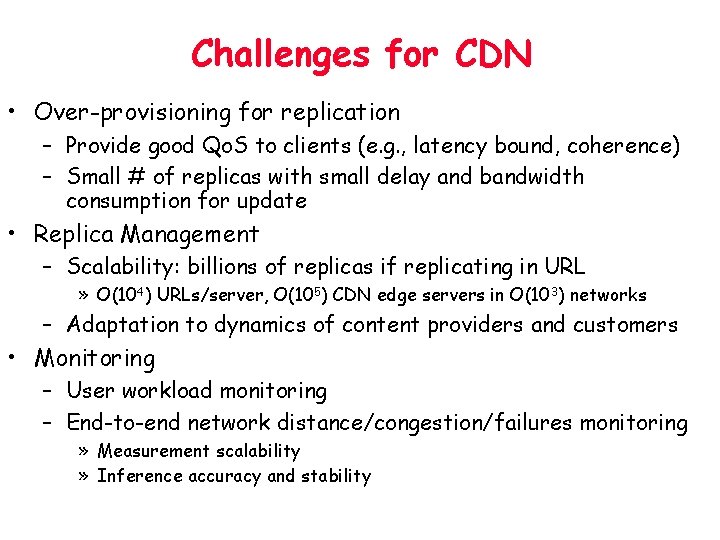

Challenges for CDN
• Over-provisioning for replication
  – Provide good QoS to clients (e.g., latency bound, coherence)
  – Small # of replicas with small delay and bandwidth consumption for update
• Replica Management
  – Scalability: billions of replicas if replicating per URL
    » O(10^4) URLs/server, O(10^5) CDN edge servers in O(10^3) networks
  – Adaptation to dynamics of content providers and customers
• Monitoring
  – User workload monitoring
  – End-to-end network distance/congestion/failures monitoring
    » Measurement scalability
    » Inference accuracy and stability

SCAN Architecture
• Leverage Decentralized Object Location and Routing (DOLR), Tapestry, for
  – Distributed, scalable location with guaranteed success
  – Search with locality
• Soft state maintenance of dissemination tree (for each object)
[Figure: SCAN architecture. Data plane: data source, replicas (always update) and caches (adaptive coherence) on SCAN servers, with Dynamic Replication/Update and Content Management. Network plane: Web server, SCAN servers and clients on the Tapestry mesh, with Request Location]

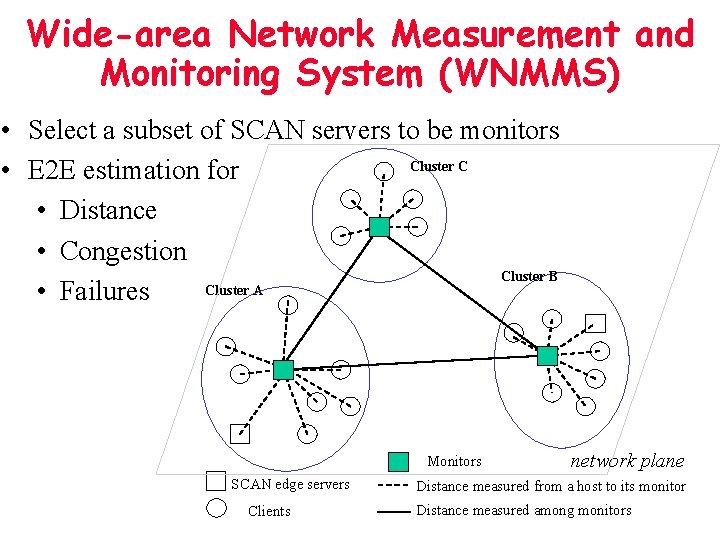

Wide-area Network Measurement and Monitoring System (WNMMS)
• Select a subset of SCAN servers to be monitors
• E2E estimation for
  – Distance
  – Congestion
  – Failures
[Figure: network plane with clusters A, B and C of clients and SCAN edge servers, each with monitors; distance is measured from a host to its monitor and among monitors]

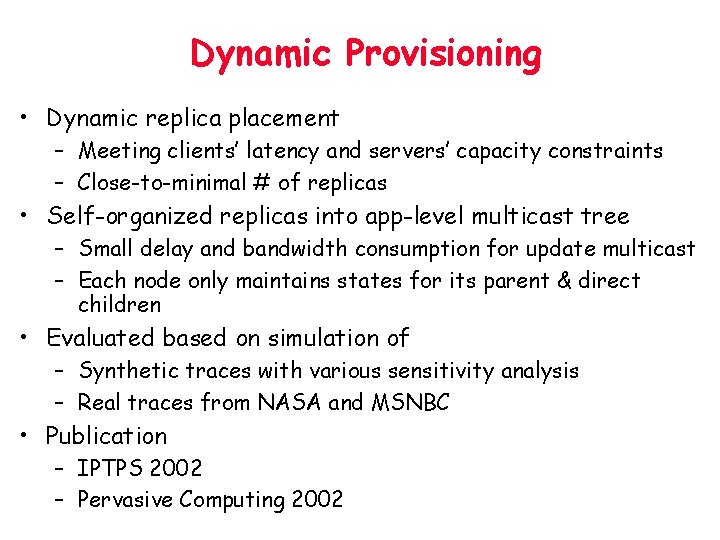

Dynamic Provisioning
• Dynamic replica placement
  – Meeting clients' latency and servers' capacity constraints
  – Close-to-minimal # of replicas
• Self-organized replicas into app-level multicast tree
  – Small delay and bandwidth consumption for update multicast
  – Each node only maintains states for its parent & direct children
• Evaluated based on simulation of
  – Synthetic traces with various sensitivity analyses
  – Real traces from NASA and MSNBC
• Publication
  – IPTPS 2002
  – Pervasive Computing 2002

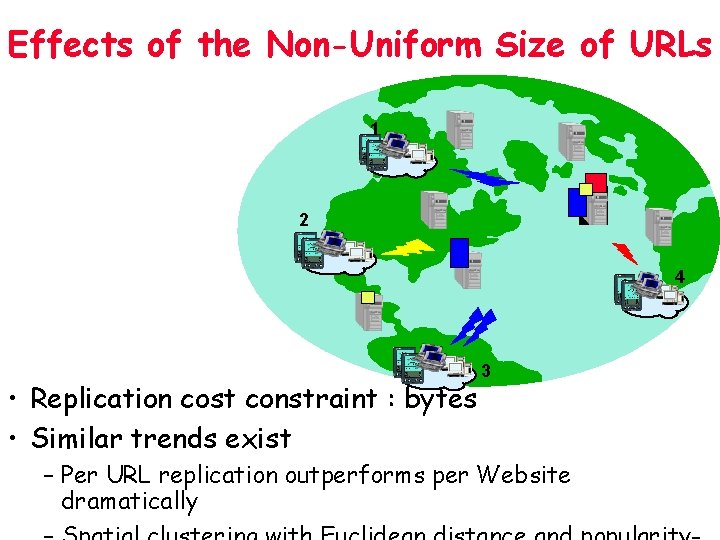

Effects of the Non-Uniform Size of URLs
• Replication cost constraint: bytes
• Similar trends exist
  – Per URL replication outperforms per Website dramatically

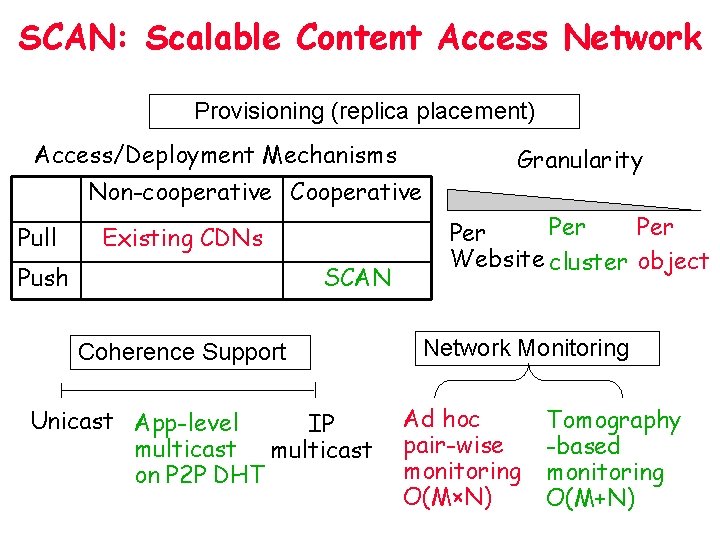

SCAN: Scalable Content Access Network
• Provisioning (replica placement), access/deployment mechanisms: non-cooperative pull (existing CDNs) vs. cooperative push (SCAN)
• Coherence support: unicast; IP multicast; app-level multicast on P2P DHT
• Granularity: per Website; per cluster; per object
• Network monitoring: ad hoc pair-wise monitoring O(M×N); tomography-based monitoring O(M+N)

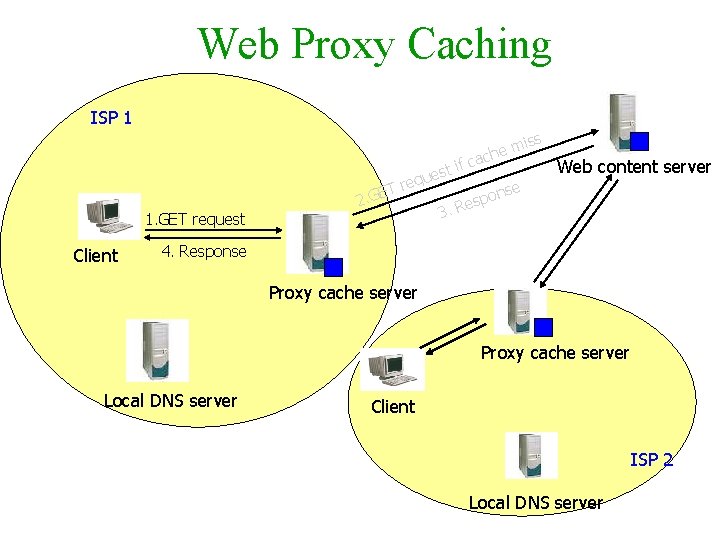

Web Proxy Caching
[Figure: clients, proxy cache servers and local DNS servers in ISP 1 and ISP 2, and a Web content server, with the message sequence:]
1. GET request
2. GET request if cache miss
3. Response
4. Response

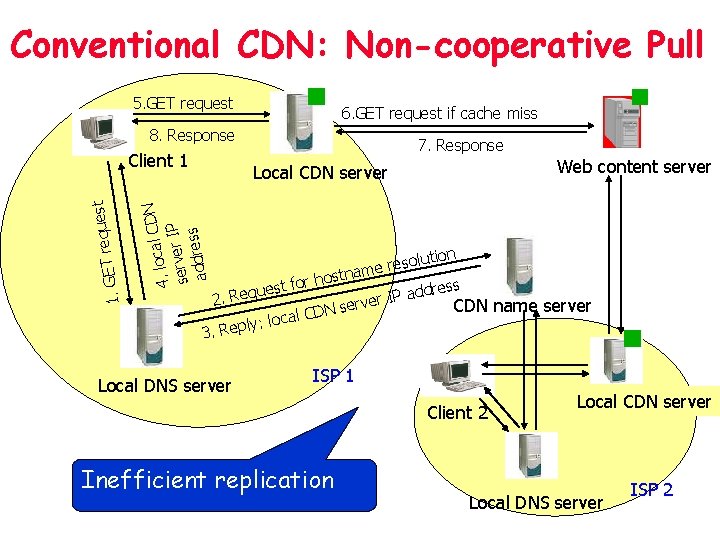

Conventional CDN: Non-cooperative Pull
[Figure: clients 1 and 2, local CDN servers and local DNS servers in ISP 1 and ISP 2, a CDN name server and a Web content server, with the message sequence:]
1. GET request
2. Request for hostname resolution
3. Reply: local CDN server IP address
4. Local CDN server IP address
5. GET request
6. GET request if cache miss
7. Response
8. Response
Inefficient replication

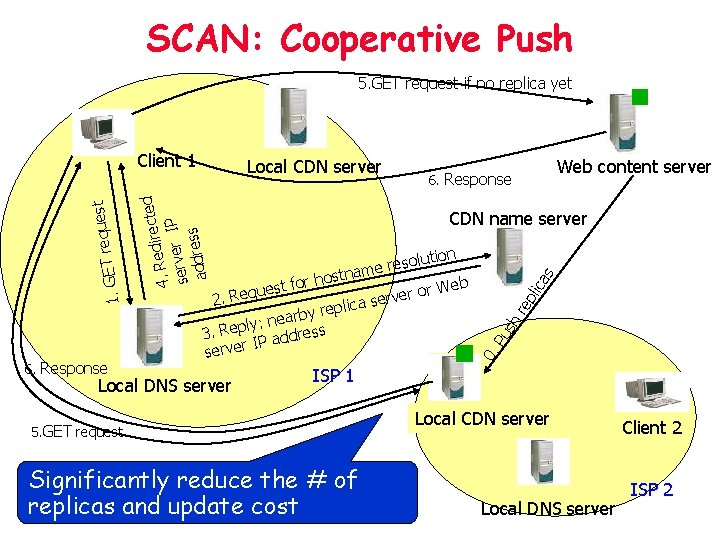

SCAN: Cooperative Push
[Figure: clients 1 and 2, local CDN servers and local DNS servers in ISP 1 and ISP 2, a CDN name server and a Web content server, with the message sequence:]
0. Push replicas
1. GET request
2. Request for hostname resolution
3. Reply: nearby replica server or Web server IP address
4. Redirected server IP address
5. GET request (to the Web content server if no replica yet)
6. Response
Significantly reduces the # of replicas and update cost

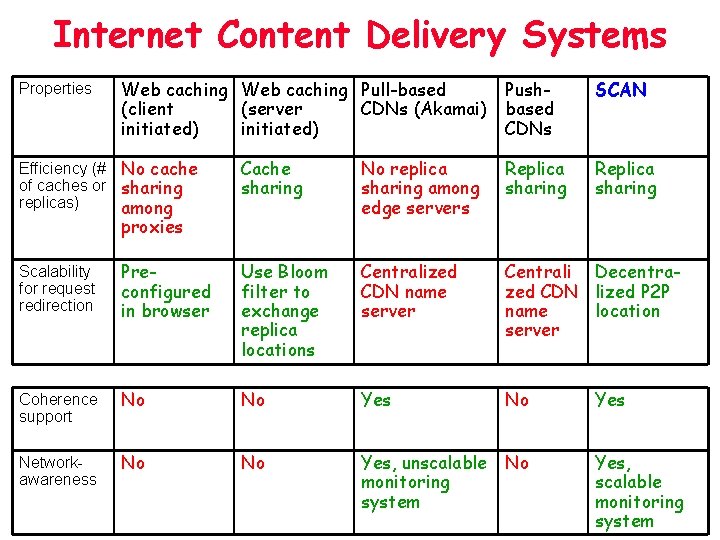

Internet Content Delivery Systems
Systems compared: Web caching (client initiated), Web caching (server initiated), Pull-based CDNs (Akamai), Push-based CDNs, SCAN
• Efficiency (# of caches or replicas): No cache sharing among proxies; Cache sharing; No replica sharing among edge servers; Replica sharing; Replica sharing
• Scalability for request redirection: Preconfigured in browser; Use Bloom filter to exchange replica locations; Centralized CDN name server; Centralized CDN name server; Decentralized P2P location
• Coherence support: No; No; Yes
• Network-awareness: No; No; Yes, unscalable monitoring system; No; Yes, scalable monitoring system

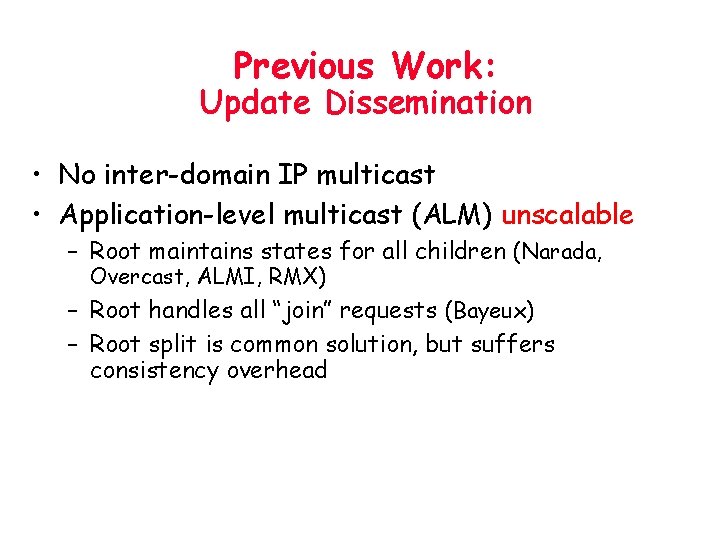

Previous Work: Update Dissemination
• No inter-domain IP multicast
• Application-level multicast (ALM) is unscalable
  – Root maintains states for all children (Narada, Overcast, ALMI, RMX)
  – Root handles all "join" requests (Bayeux)
  – Root split is a common solution, but suffers consistency overhead

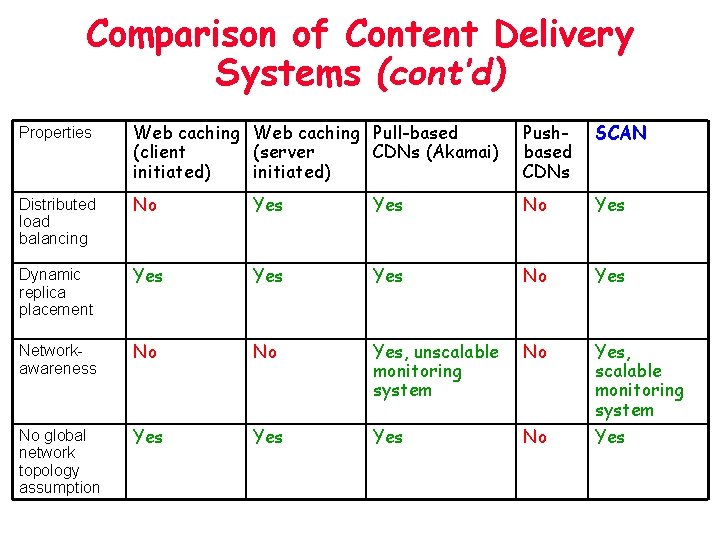

Comparison of Content Delivery Systems (cont'd)
Systems compared: Web caching (client initiated), Web caching (server initiated), Pull-based CDNs (Akamai), Push-based CDNs, SCAN
• Distributed load balancing: No; Yes
• Dynamic replica placement: Yes; Yes; No; Yes
• Network-awareness: No; No; Yes, unscalable monitoring system; No; Yes, scalable monitoring system
• No global network topology assumption: Yes; Yes; No; Yes
