Toward Privacy in Public Databases

Shuchi Chawla, Cynthia Dwork, Frank McSherry, Adam Smith, Larry Stockmeyer, Hoeteck Wee

Work done at Microsoft Research

Shuchi Chawla, Carnegie Mellon University

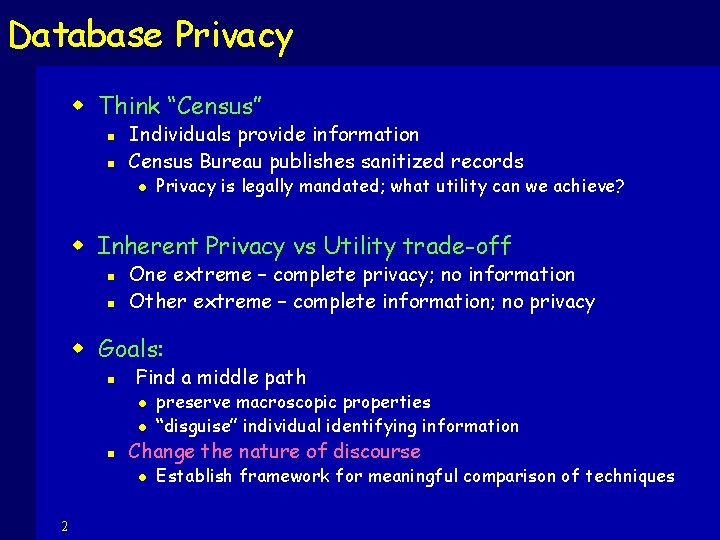

Database Privacy

- Think "Census"
  - Individuals provide information
  - Census Bureau publishes sanitized records
    - Privacy is legally mandated; what utility can we achieve?
- Inherent privacy vs. utility trade-off
  - One extreme: complete privacy; no information
  - Other extreme: complete information; no privacy
- Goals:
  - Find a middle path
    - preserve macroscopic properties
    - "disguise" individual identifying information
  - Change the nature of discourse
    - Establish a framework for meaningful comparison of techniques

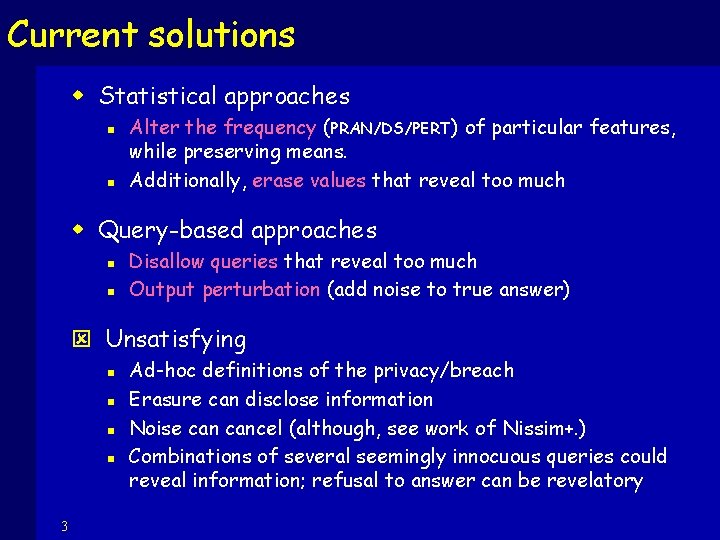

Current solutions

- Statistical approaches
  - Alter the frequency (PRAN/DS/PERT) of particular features, while preserving means.
  - Additionally, erase values that reveal too much
- Query-based approaches
  - Disallow queries that reveal too much
  - Output perturbation (add noise to true answer)
- Unsatisfying
  - Ad-hoc definitions of privacy/breach
  - Erasure can disclose information
  - Noise can cancel (although, see work of Nissim+.)
  - Combinations of several seemingly innocuous queries could reveal information; refusal to answer can be revelatory

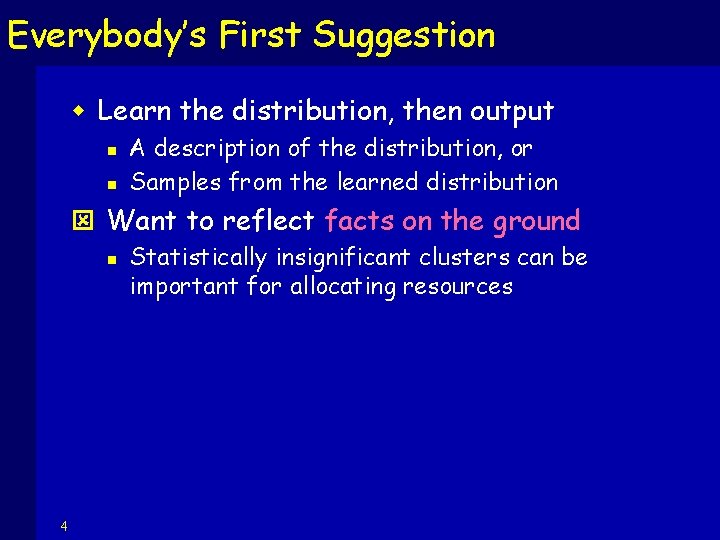

Everybody's First Suggestion

- Learn the distribution, then output
  - A description of the distribution, or
  - Samples from the learned distribution
- Drawback: we want to reflect facts on the ground
  - Statistically insignificant clusters can be important for allocating resources

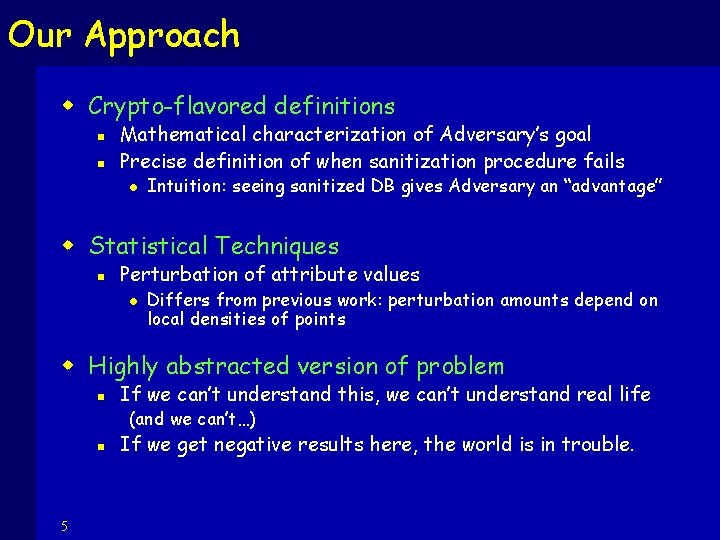

Our Approach

- Crypto-flavored definitions
  - Mathematical characterization of the Adversary's goal
  - Precise definition of when a sanitization procedure fails
    - Intuition: seeing the sanitized DB gives the Adversary an "advantage"
- Statistical techniques
  - Perturbation of attribute values
    - Differs from previous work: perturbation amounts depend on local densities of points
- Highly abstracted version of the problem
  - If we can't understand this, we can't understand real life (and we can't…)
  - If we get negative results here, the world is in trouble.

![What do WE mean by privacy?](http://slidetodoc.com/presentation_image/8e7ffbb0f7feb7057069b2a7b5d0a8b4/image-6.jpg)

What do WE mean by privacy?

- [Ruth Gavison] Protection from being brought to the attention of others
  - inherently valuable
  - attention invites further privacy loss
- Privacy is assured to the extent that one blends in with the crowd
- Appealing definition; can be converted into a precise mathematical statement…

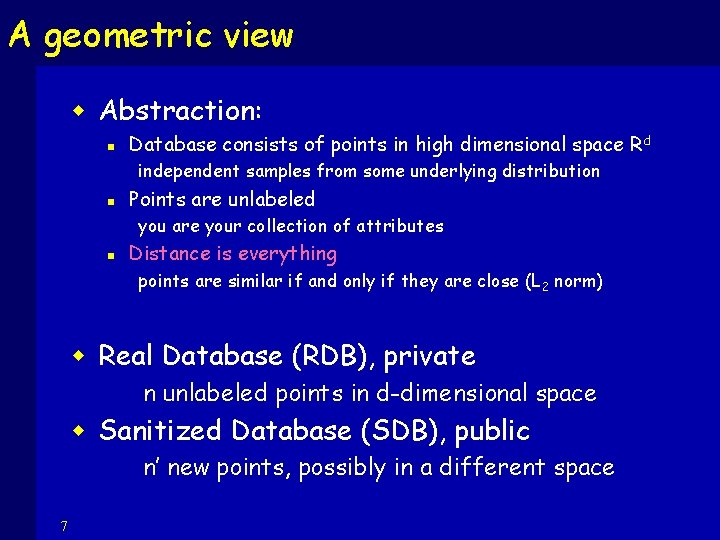

A geometric view

- Abstraction:
  - Database consists of points in high-dimensional space $\mathbb{R}^d$: independent samples from some underlying distribution
  - Points are unlabeled: you are your collection of attributes
  - Distance is everything: points are similar if and only if they are close ($L_2$ norm)
- Real Database (RDB), private: $n$ unlabeled points in $d$-dimensional space
- Sanitized Database (SDB), public: $n'$ new points, possibly in a different space

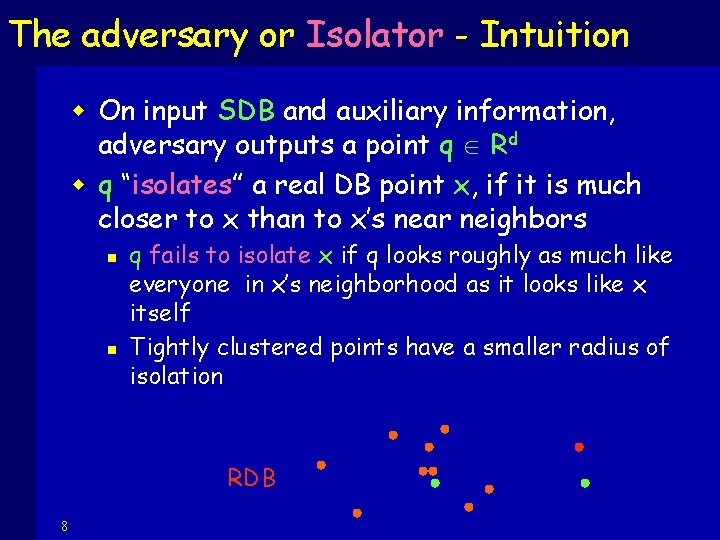

The adversary or Isolator - Intuition

- On input SDB and auxiliary information, the adversary outputs a point $q \in \mathbb{R}^d$
- $q$ "isolates" a real DB point $x$ if it is much closer to $x$ than to $x$'s near neighbors
  - $q$ fails to isolate $x$ if $q$ looks roughly as much like everyone in $x$'s neighborhood as it looks like $x$ itself
  - Tightly clustered points have a smaller radius of isolation

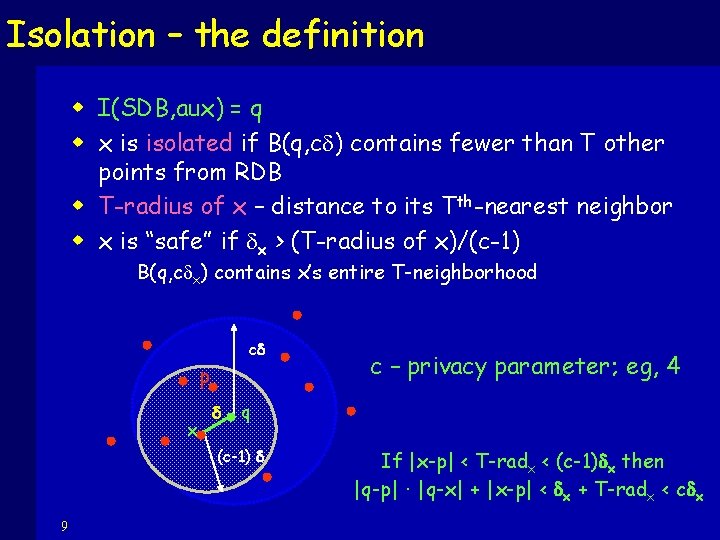

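Isolation: the definition

- $I(\mathrm{SDB}, \mathrm{aux}) = q$
- Let $\delta_x = |q - x|$. $x$ is isolated if $B(q, c\,\delta_x)$ contains fewer than $T$ other points from RDB
- $c$: privacy parameter; e.g., 4
- $T$-radius of $x$: distance to its $T$th-nearest neighbor
- $x$ is "safe" if $\delta_x > (T\text{-radius of } x)/(c-1)$, since then $B(q, c\,\delta_x)$ contains $x$'s entire $T$-neighborhood
- If $|x - p| < T\text{-rad}_x < (c-1)\,\delta_x$, then $|q - p| \le |q - x| + |x - p| < \delta_x + T\text{-rad}_x < c\,\delta_x$

[Figure: the ball of radius $c\,\delta_x$ around $q$, containing $x$ and a neighbor $p$; the radius $(c-1)\,\delta_x$ around $x$ is marked.]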
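The isolation test above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' code: the function names `dist`, `t_radius`, and `isolates` are hypothetical, points are plain tuples, the ball is taken to be open, and $\delta_x$ is taken to be $|q - x|$.

```python
import math

def dist(a, b):
    """Euclidean (L2) distance between two points given as sequences."""
    return math.sqrt(sum((ai - bi) ** 2 for ai, bi in zip(a, b)))

def t_radius(i, rdb, T):
    """Distance from rdb[i] to its T-th nearest neighbor among the other points."""
    others = sorted(dist(rdb[i], p) for j, p in enumerate(rdb) if j != i)
    return others[T - 1]

def isolates(q, i, rdb, c, T):
    """True if q isolates rdb[i]: B(q, c*delta) holds fewer than T other RDB points."""
    delta = dist(q, rdb[i])  # assumed meaning of delta_x: distance from q to x
    inside = sum(1 for j, p in enumerate(rdb)
                 if j != i and dist(q, p) < c * delta)
    return inside < T

# Two clustered points and one outlier, with c = 4 and T = 1.
rdb = [(0.0, 0.0), (1.0, 0.0), (10.0, 10.0)]
near_outlier = isolates((10.1, 10.0), 2, rdb, c=4, T=1)
near_cluster = isolates((0.5, 0.0), 0, rdb, c=4, T=1)
```

In this toy data the guess next to the outlier isolates it, while the guess between the two clustered points does not, matching the "safe" condition: there $\delta_x = 0.5$ exceeds $T\text{-rad}_x/(c-1) = 1/3$.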

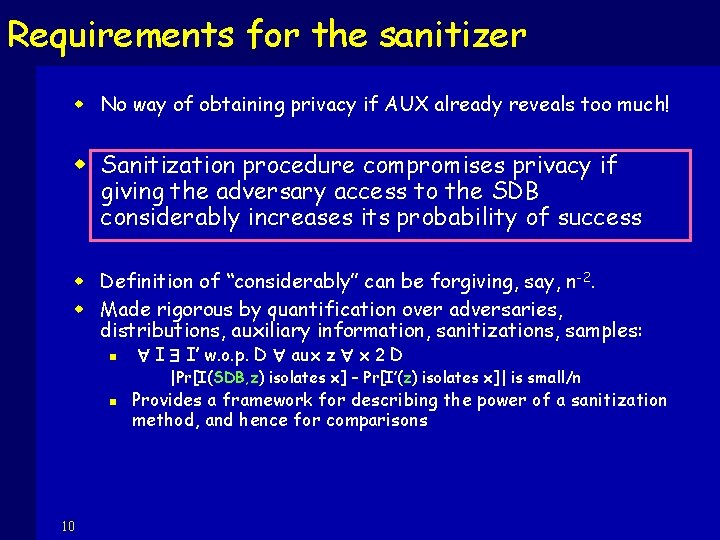

Requirements for the sanitizer

- No way of obtaining privacy if AUX already reveals too much!
- Sanitization procedure compromises privacy if giving the adversary access to the SDB considerably increases its probability of success
- Definition of "considerably" can be forgiving, say, $n^{-2}$.
- Made rigorous by quantification over adversaries, distributions, auxiliary information, sanitizations, samples:
  - $\forall I\ \exists I'$ w.o.p. over $D$, $\forall$ aux $z$, $\forall x \in D$: $\left|\Pr[I(\mathrm{SDB}, z) \text{ isolates } x] - \Pr[I'(z) \text{ isolates } x]\right|$ is small/$n$
  - Provides a framework for describing the power of a sanitization method, and hence for comparisons

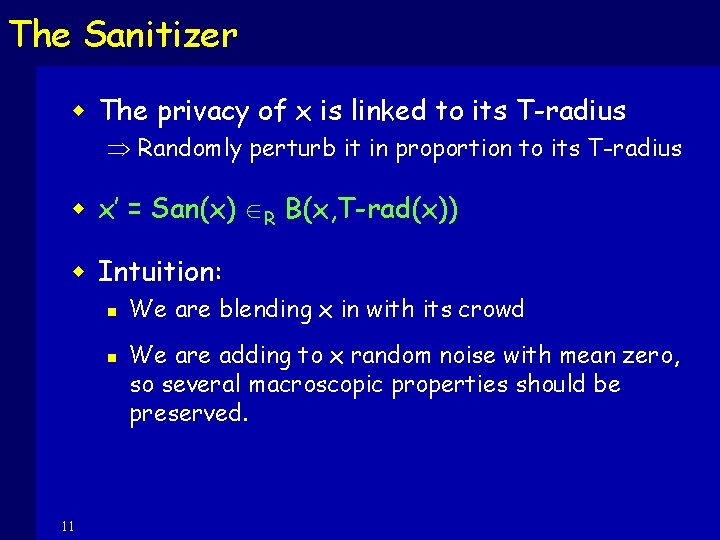

The Sanitizer

- The privacy of $x$ is linked to its $T$-radius $\Rightarrow$ randomly perturb it in proportion to its $T$-radius
- $x' = \mathrm{San}(x) \in_R B(x, T\text{-rad}(x))$
- Intuition:
  - We are blending $x$ in with its crowd
  - We are adding to $x$ random noise with mean zero, so several macroscopic properties should be preserved.
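A minimal sketch of this perturbation step, assuming that "a random element of the ball" means a point drawn uniformly from $B(x, T\text{-rad}(x))$. The helper names (`sample_in_ball`, `sanitize`) and the `seed` parameter are hypothetical and not taken from the slides.

```python
import math
import random

def dist(a, b):
    """Euclidean (L2) distance between two points."""
    return math.sqrt(sum((ai - bi) ** 2 for ai, bi in zip(a, b)))

def t_radius(i, rdb, T):
    """Distance from rdb[i] to its T-th nearest neighbor among the other points."""
    others = sorted(dist(rdb[i], p) for j, p in enumerate(rdb) if j != i)
    return others[T - 1]

def sample_in_ball(center, radius, rng):
    """Draw a point uniformly from the d-dimensional ball B(center, radius)."""
    d = len(center)
    # A normalized Gaussian vector gives a uniformly random direction.
    g = [rng.gauss(0.0, 1.0) for _ in range(d)]
    norm = math.sqrt(sum(v * v for v in g))
    # Scaling the radius by U^(1/d) makes the point uniform in volume.
    scale = radius * rng.random() ** (1.0 / d) / norm
    return [ci + scale * gi for ci, gi in zip(center, g)]

def sanitize(rdb, T, seed=0):
    """Perturb every point within its own T-radius (assumed uniform in the ball)."""
    rng = random.Random(seed)
    return [sample_in_ball(x, t_radius(i, rdb, T), rng)
            for i, x in enumerate(rdb)]

rdb = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 2.0, 0.0), (9.0, 9.0, 9.0)]
sdb = sanitize(rdb, T=1)
```

Points in dense regions move only a little, while the isolated point at (9, 9, 9) may move much further, which is the density-dependent perturbation the slide describes.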

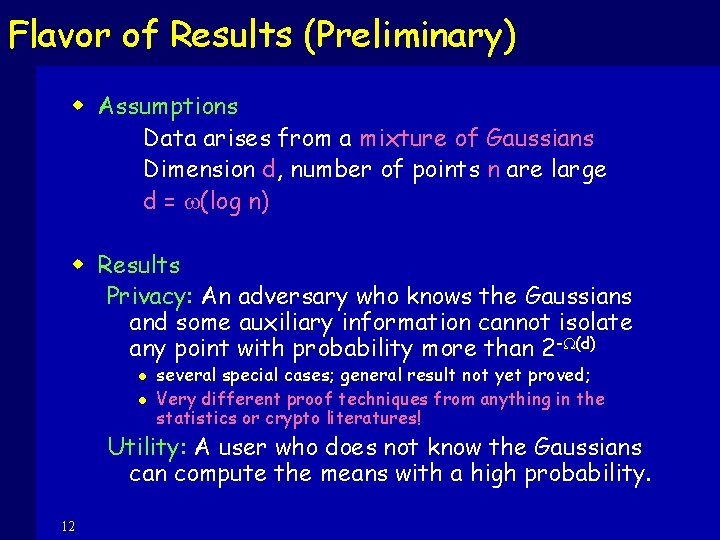

Flavor of Results (Preliminary)

- Assumptions
  - Data arises from a mixture of Gaussians
  - Dimension $d$ and number of points $n$ are large; $d = \omega(\log n)$
- Results
  - Privacy: An adversary who knows the Gaussians and some auxiliary information cannot isolate any point with probability more than $2^{-\Omega(d)}$
    - several special cases; general result not yet proved
    - Very different proof techniques from anything in the statistics or crypto literatures!
  - Utility: A user who does not know the Gaussians can compute the means with high probability.

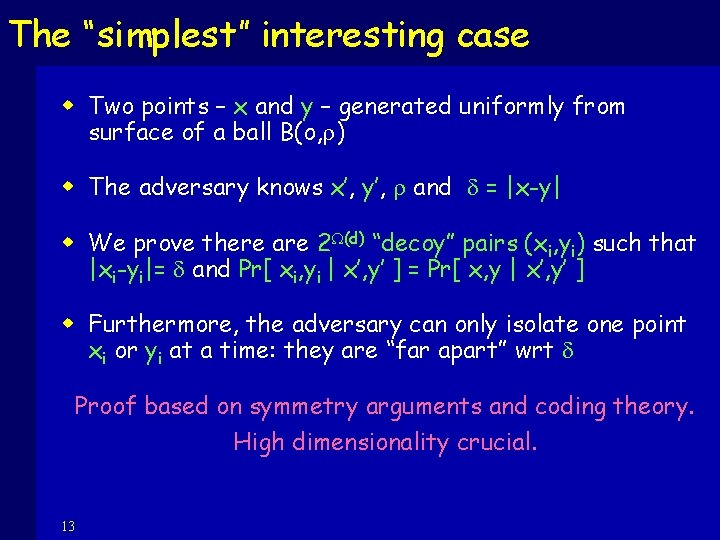

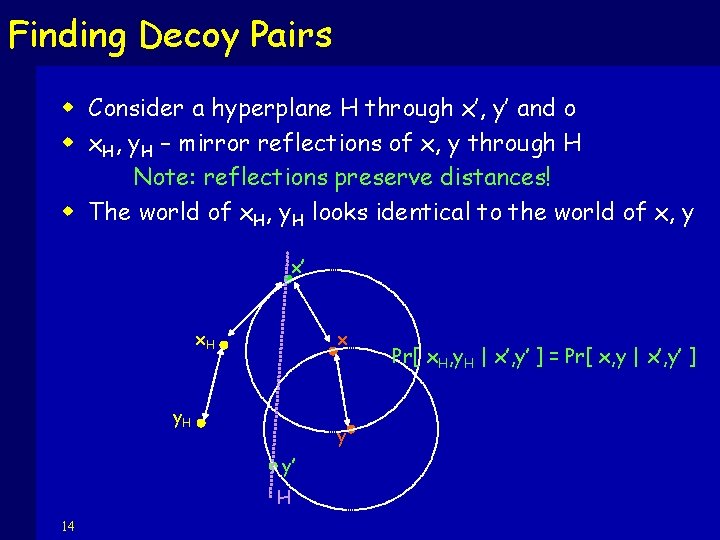

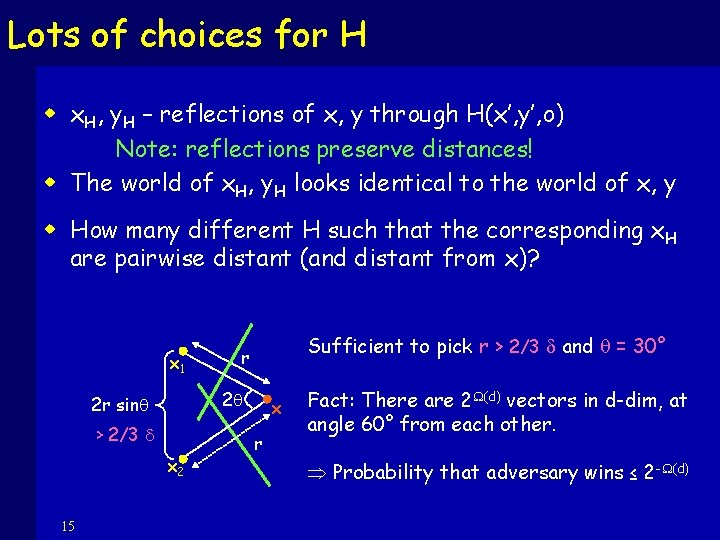

The "simplest" interesting case

- Two points, $x$ and $y$, generated uniformly from the surface of a ball $B$ centered at $o$
- The adversary knows $x'$, $y'$, and $\delta = |x - y|$
- We prove there are $2^{\Omega(d)}$ "decoy" pairs $(x_i, y_i)$ such that $|x_i - y_i| = \delta$ and $\Pr[x_i, y_i \mid x', y'] = \Pr[x, y \mid x', y']$
- Furthermore, the adversary can only isolate one point $x_i$ or $y_i$ at a time: they are "far apart" with respect to $\delta$
- Proof based on symmetry arguments and coding theory. High dimensionality is crucial.

Finding Decoy Pairs

- Consider a hyperplane $H$ through $x'$, $y'$ and $o$
- $x_H$, $y_H$: mirror reflections of $x$, $y$ through $H$. Note: reflections preserve distances!
- The world of $x_H$, $y_H$ looks identical to the world of $x$, $y$
- $\Pr[x_H, y_H \mid x', y'] = \Pr[x, y \mid x', y']$

[Figure: $x$, $y$ and their reflections $x_H$, $y_H$ on either side of the hyperplane $H$ through $x'$ and $y'$.]

Lots of choices for H

- $x_H$, $y_H$: reflections of $x$, $y$ through $H(x', y', o)$. Note: reflections preserve distances!
- The world of $x_H$, $y_H$ looks identical to the world of $x$, $y$
- How many different $H$ are there such that the corresponding $x_H$ are pairwise distant (and distant from $x$)?
- Need $2r \sin\theta > \tfrac{2}{3}\delta$; sufficient to pick $r > \tfrac{2}{3}\delta$ and $\theta = 30°$
- Fact: There are $2^{\Omega(d)}$ vectors in $d$ dimensions at angle 60° from each other.
- Probability that the adversary wins $\le 2^{-\Omega(d)}$

[Figure: two decoys $x_1$, $x_2$, each at distance $r$ from a common center, separated by angle $2\theta$.]
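The sufficiency claim on this slide is a one-line chord computation. As a sketch, assume (the figure is lost) that two decoys $x_1$ and $x_2$ each lie at distance $r$ from a common center and are separated by angle $2\theta$, and that $\delta = |x - y|$ is the distance the adversary knows. Then:

$$
|x_1 - x_2| = 2r\sin\theta, \qquad \theta = 30^\circ \;\Rightarrow\; |x_1 - x_2| = 2r \cdot \tfrac{1}{2} = r > \tfrac{2}{3}\,\delta .
$$

So with $2\theta = 60^\circ$ between decoys, which is exactly the angle in the stated Fact, any $r > \tfrac{2}{3}\delta$ keeps the decoys pairwise more than $\tfrac{2}{3}\delta$ apart.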

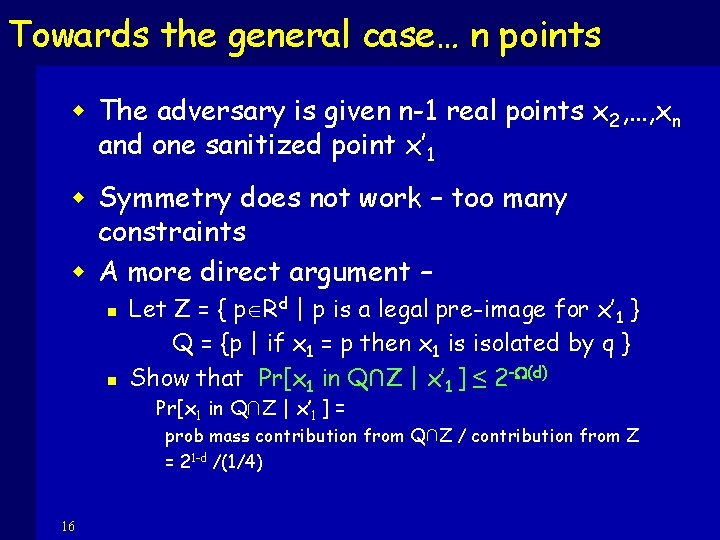

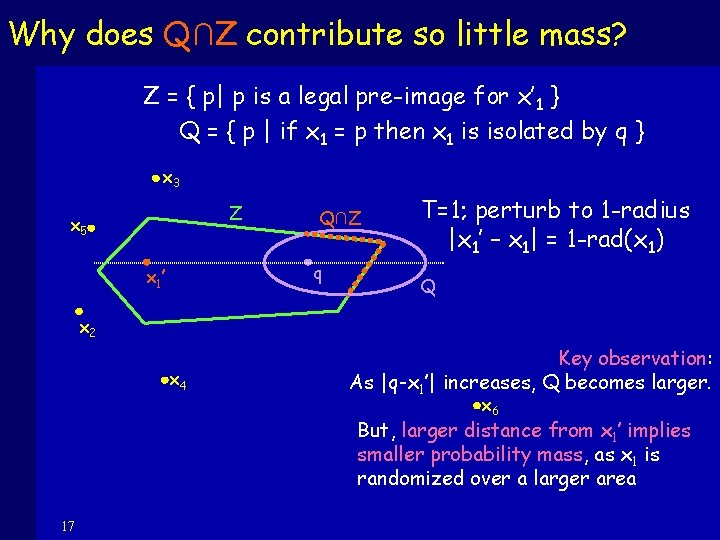

Towards the general case… n points

- The adversary is given $n-1$ real points $x_2, \dots, x_n$ and one sanitized point $x'_1$
- Symmetry does not work: too many constraints
- A more direct argument:
  - Let $Z = \{\, p \in \mathbb{R}^d \mid p \text{ is a legal pre-image for } x'_1 \,\}$ and $Q = \{\, p \mid \text{if } x_1 = p \text{ then } x_1 \text{ is isolated by } q \,\}$
  - Show that $\Pr[x_1 \in Q \cap Z \mid x'_1] \le 2^{-\Omega(d)}$
  - $\Pr[x_1 \in Q \cap Z \mid x'_1]$ = (probability mass contribution from $Q \cap Z$) / (contribution from $Z$) $= 2^{1-d} / (1/4)$
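Spelling out the arithmetic in the last bullet, using only the two quantities stated on the slide, shows why the ratio is exponentially small in $d$:

$$
\frac{2^{1-d}}{1/4} = 4 \cdot 2^{1-d} = 2^{3-d} = 8 \cdot 2^{-d} = 2^{-\Omega(d)} .
$$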

Why does Q∩Z contribute so little mass?

- $Z = \{\, p \mid p \text{ is a legal pre-image for } x'_1 \,\}$
- $Q = \{\, p \mid \text{if } x_1 = p \text{ then } x_1 \text{ is isolated by } q \,\}$
- $T = 1$; perturb to 1-radius: $|x'_1 - x_1| = 1\text{-rad}(x_1)$
- Key observation: As $|q - x'_1|$ increases, $Q$ becomes larger. But larger distance from $x'_1$ implies smaller probability mass, as $x_1$ is randomized over a larger area

[Figure: the regions $Z$, $Q$ and $Q \cap Z$ around $x'_1$ and $q$, among the real points $x_2, \dots, x_6$.]

The general case… n sanitized points

- Initial intuition is wrong:
  - Privacy of $x_1$ given $x'_1$ and all the other points in the clear does not imply privacy of $x_1$ given $x'_1$ and sanitizations of others!
  - Sanitization of other points reveals information about $x_1$

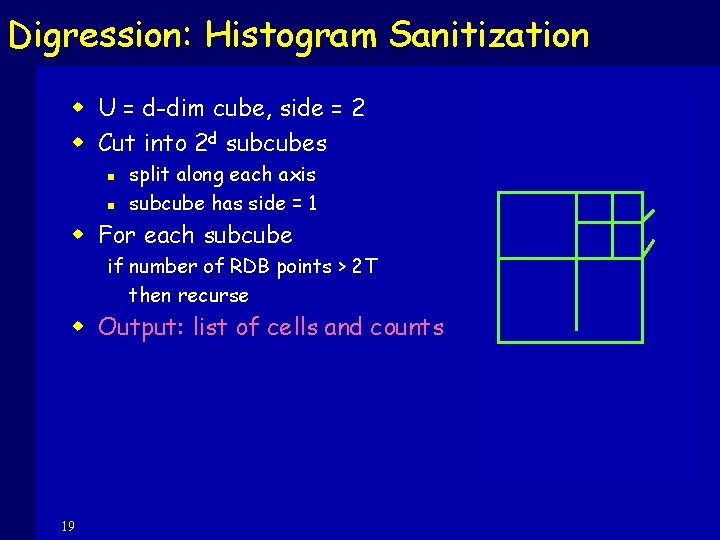

Digression: Histogram Sanitization

- $U$ = $d$-dimensional cube, side = 2
- Cut into $2^d$ subcubes
  - split along each axis
  - each subcube has side = 1
- For each subcube: if number of RDB points > $2T$, then recurse
- Output: list of cells and counts
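The recursive procedure above can be sketched as follows. This is an illustrative reading of the slide, not the authors' implementation: it assumes $U = [0, 2)^d$, stores only occupied subcubes (listing all $2^d$ is infeasible in high dimension, so empty cells are implicit with count 0), and adds a hypothetical `max_depth` guard so that duplicate points cannot recurse forever.

```python
def histogram_sanitize(points, T, lower=None, side=2.0, max_depth=32):
    """Return (lower_corner, side_length, count) for each occupied cell."""
    if not points:
        return []
    if lower is None:
        lower = tuple(0.0 for _ in points[0])  # assumed: U = [0, 2)^d
    half = side / 2.0
    # Split along each axis: a point's subcube is identified by one bit per axis.
    buckets = {}
    for p in points:
        key = tuple(1 if pi - lo >= half else 0 for pi, lo in zip(p, lower))
        buckets.setdefault(key, []).append(p)
    cells = []
    for key, members in sorted(buckets.items()):
        sub_lower = tuple(lo + k * half for lo, k in zip(lower, key))
        if len(members) > 2 * T and max_depth > 1:
            # Too many points in this subcube: refine it further.
            cells.extend(histogram_sanitize(members, T, sub_lower, half,
                                            max_depth - 1))
        else:
            cells.append((sub_lower, half, len(members)))
    return cells

points = [(0.1, 0.1), (0.2, 0.2), (0.6, 0.6), (0.7, 0.7), (0.8, 0.8), (1.5, 1.5)]
cells = histogram_sanitize(points, T=1)
```

Dense regions end up covered by small cells and sparse regions by large ones, and every reported cell holds at most $2T$ points unless the depth guard stops the recursion early.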

Digression: Histogram Sanitization

- Theorem: If $n = 2^{o(d)}$ and points are drawn uniformly from $U$, then histogram sanitizations are safe with respect to 8-isolation: $\Pr[I(\mathrm{SDB}) \text{ succeeds}] \le 2^{-\Omega(d)}$.
- Rough intuition: For $q \in C$, the expected distance to any $x \in C$ is relatively large (and even larger for $x \in C'$); distances are tightly concentrated. Increasing the radius by a factor of 8 captures almost all of the parent cell, which contains at least $2T$ points.

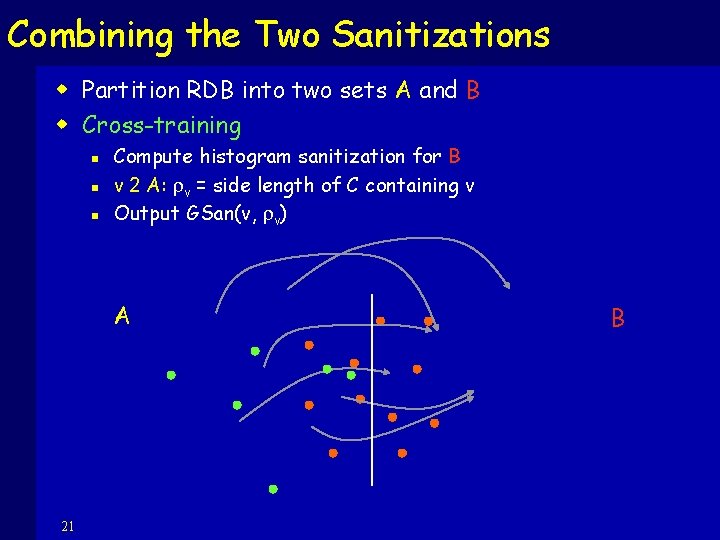

Combining the Two Sanitizations

- Partition RDB into two sets $A$ and $B$
- Cross-training
  - Compute histogram sanitization for $B$
  - $\forall v \in A$: $\rho_v$ = side length of the cell $C$ containing $v$
  - Output $\mathrm{GSan}(v, \rho_v)$

Cross-Training Privacy

- Privacy for $B$: only histogram information about $B$ is used
- Privacy for $A$: enough variance for enough coordinates of $v$, even given the cell $C$ containing $v$ and the sanitization $v'$ of $v$.

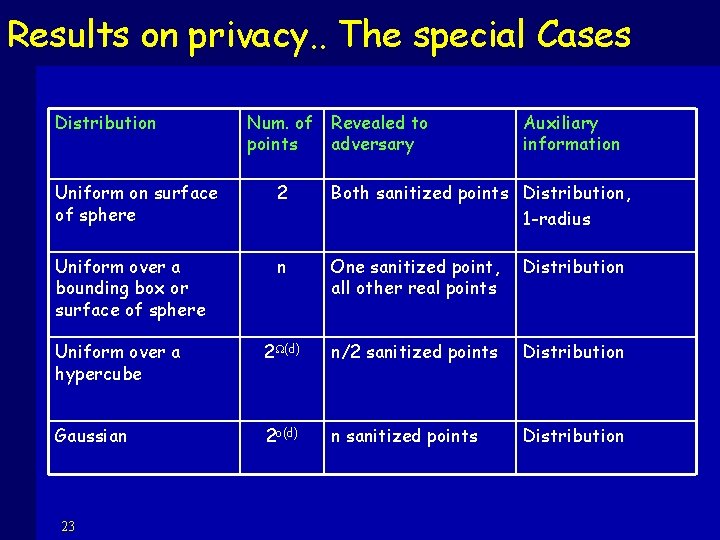

Results on privacy: the special cases

| Distribution | Num. of points | Revealed to adversary | Auxiliary information |
| --- | --- | --- | --- |
| Uniform on surface of sphere | 2 | Both sanitized points | Distribution, 1-radius |
| Uniform over a bounding box or surface of sphere | $n$ | One sanitized point, all other real points | Distribution |
| Uniform over a hypercube | $2^{\Omega(d)}$ | $n/2$ sanitized points | Distribution |
| Gaussian | $2^{o(d)}$ | $n$ sanitized points | Distribution |

Learning mixtures of Gaussians - Spectral techniques

- Observation: The optimal low-rank approximation to a matrix of complex data yields the underlying structure, e.g., means [M01, VW02].
- We show that McSherry's algorithm works for clustering sanitized Gaussian data $\Rightarrow$ the original distribution (mixture of Gaussians) is recovered

Spectral techniques for perturbed data

- A sanitized point is the sum of two Gaussian variables: sample + noise
- W.h.p. the $T$-radius of a point is less than the "radius" of its Gaussian
- $\Rightarrow$ Variance of the noise is small
- $\Rightarrow$ Previous techniques work
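A short worked equation may make the "sample + noise" step concrete. It is an idealized sketch: the symbols $\mu$, $\sigma$, $\tau$ are introduced here for illustration, and the noise is assumed independent of the sample, which is only an approximation because the actual noise scale depends on the point's $T$-radius. Under those assumptions,

$$
x \sim \mathcal{N}(\mu, \sigma^2 I_d), \quad \eta \sim \mathcal{N}(0, \tau^2 I_d) \;\Rightarrow\; x' = x + \eta \sim \mathcal{N}\!\left(\mu, (\sigma^2 + \tau^2)\, I_d\right).
$$

The mean is unchanged, and if $\tau \le \sigma$ the per-coordinate variance at most doubles, so a clustering method that tolerates a constant-factor change in variance would still separate the same means.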

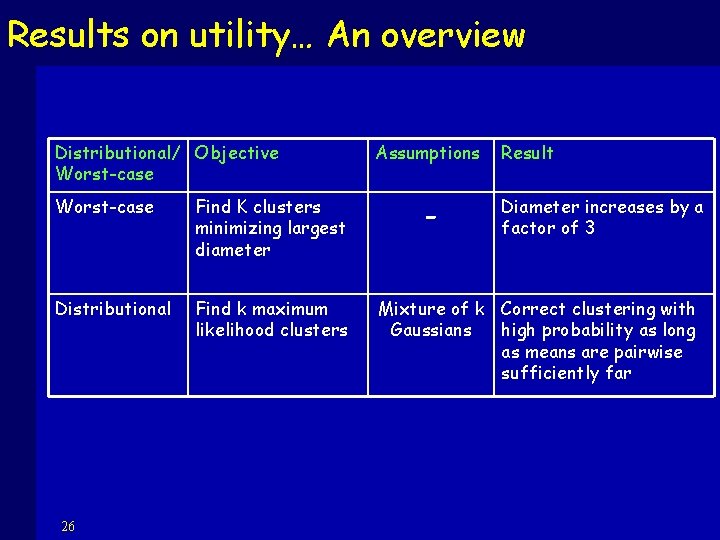

Results on utility… An overview

| Distributional / worst-case | Objective | Assumptions | Result |
| --- | --- | --- | --- |
| Worst-case | Find $k$ clusters minimizing largest diameter | - | Diameter increases by a factor of 3 |
| Distributional | Find $k$ maximum likelihood clusters | Mixture of $k$ Gaussians | Correct clustering with high probability as long as means are pairwise sufficiently far |

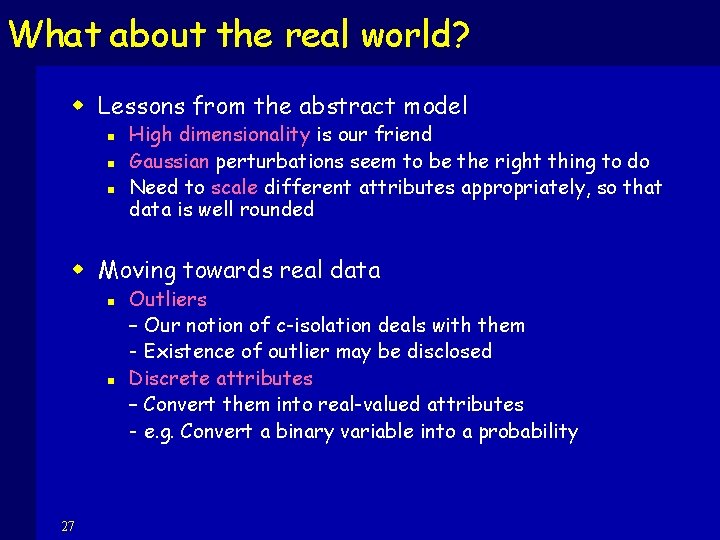

What about the real world?

- Lessons from the abstract model
  - High dimensionality is our friend
  - Gaussian perturbations seem to be the right thing to do
  - Need to scale different attributes appropriately, so that data is well rounded
- Moving towards real data
  - Outliers
    - Our notion of $c$-isolation deals with them
    - Existence of an outlier may be disclosed
  - Discrete attributes
    - Convert them into real-valued attributes
    - e.g., convert a binary variable into a probability

- Slides: 27