
Topics Left • Superscalar machines • IA-64 / EPIC architecture • Multithreading (explicit and implicit) • Multicore Machines • Clusters • Parallel Processors • Hardware implementation vs microprogramming

Chapter 14 Superscalar Processors • Definition of Superscalar • Design Issues: - Instruction Issue Policy - Register renaming - Machine parallelism - Branch Prediction - Execution • Pentium 4 example

What is Superscalar? A Superscalar machine executes multiple independent instructions in parallel. They are pipelined as well. • “Common” instructions (arithmetic, load/store, conditional branch) can be executed independently. • Equally applicable to RISC & CISC, but more straightforward in RISC machines. • The order of execution is usually assisted by the compiler.

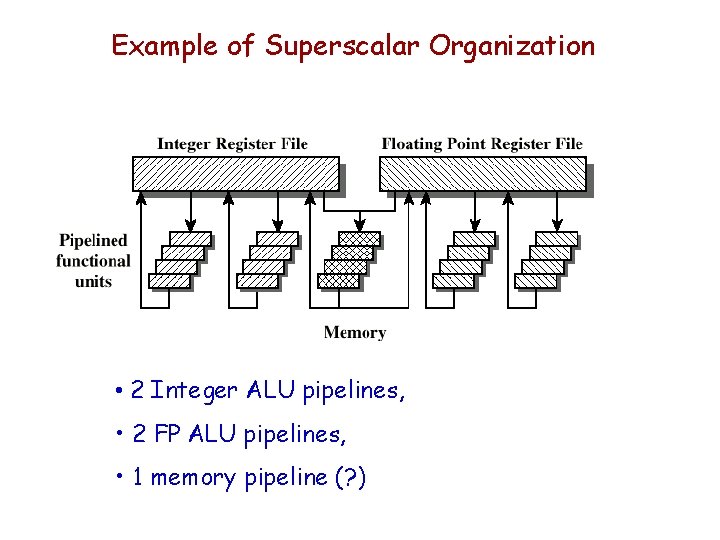

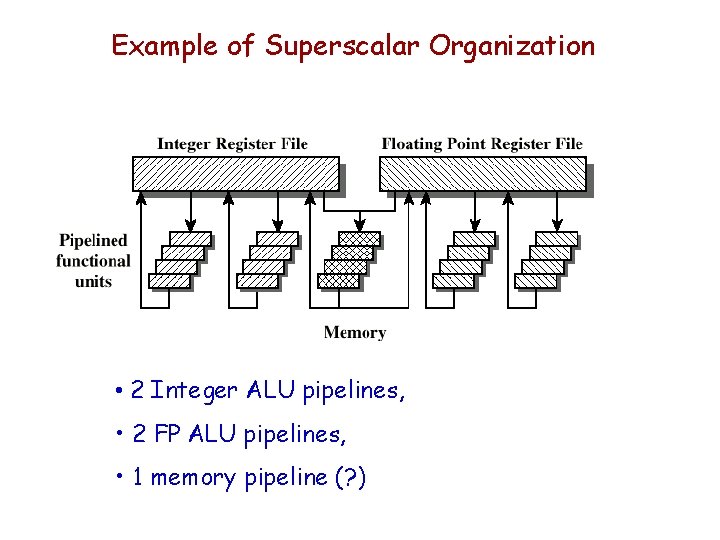

Example of Superscalar Organization • 2 Integer ALU pipelines, • 2 FP ALU pipelines, • 1 memory pipeline (? )

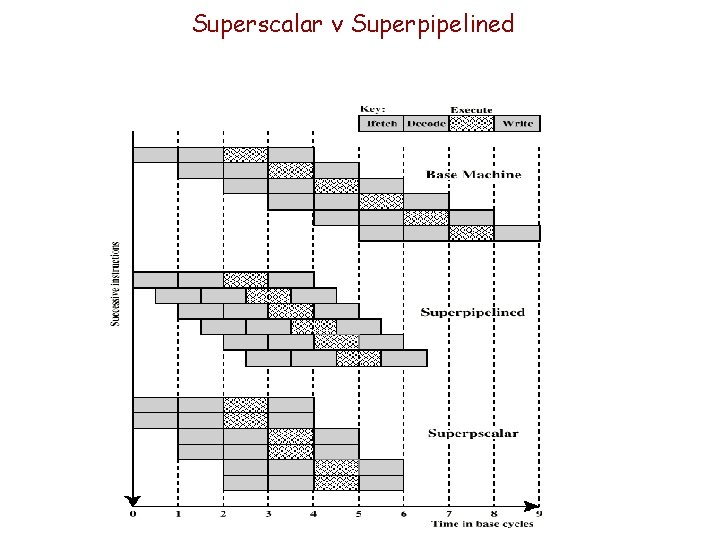

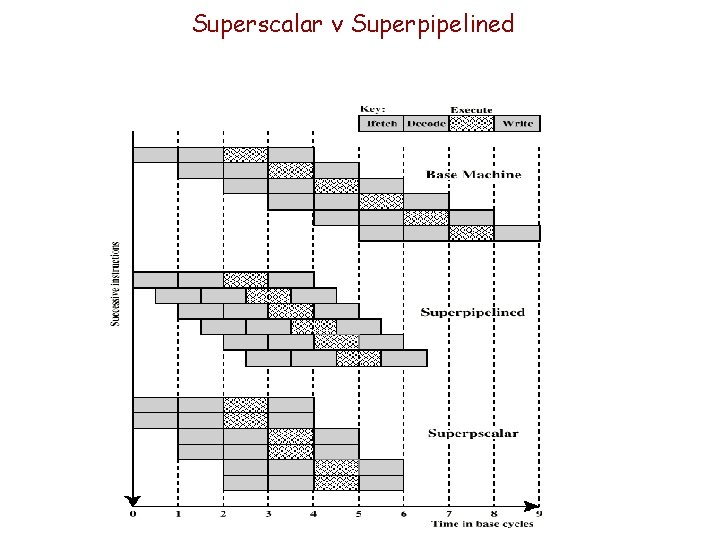

Superscalar vs Superpipelined

Limitations of Superscalar • Dependent upon: - Instruction-level parallelism possible - Compiler-based optimization - Hardware support • Limited by: - Data dependency - Procedural dependency - Resource conflicts

(Recall) True Data Dependency (Must W before R)
ADD r1, r2 : r1 + r2 → r1
MOVE r3, r1 : r1 → r3
• Can fetch and decode second instruction in parallel with first
LOAD r1, X : X (memory) → r1
MOVE r3, r1 : r1 → r3
• Can NOT execute second instruction until first is finished
Second instruction is dependent on first (R after W)

(Recall) Antidependency (Must R before W)
ADD R4, R3, 1 : R3 + 1 → R4
ADD R3, R5, 1 : R5 + 1 → R3
• Cannot complete the second instruction before the first has read R3
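The dependency types recalled above can be checked mechanically from the registers each instruction reads and writes. The following is a minimal sketch; the dictionary encoding with "reads" and "writes" sets and the function name `classify_hazards` are assumptions made for illustration, not part of the slides.

```python
def classify_hazards(first, second):
    """Return the dependency types `second` has on the earlier `first`.

    Each instruction is a dict with a "writes" set and a "reads" set of
    register names (a hypothetical encoding chosen for this sketch).
    """
    hazards = []
    if first["writes"] & second["reads"]:
        hazards.append("true (RAW)")      # second reads what first writes
    if first["reads"] & second["writes"]:
        hazards.append("anti (WAR)")      # second overwrites what first reads
    if first["writes"] & second["writes"]:
        hazards.append("output (WAW)")    # both write the same register
    return hazards


# ADD r1, r2 (r1 + r2 -> r1) followed by MOVE r3, r1 (r1 -> r3)
add = {"writes": {"r1"}, "reads": {"r1", "r2"}}
move = {"writes": {"r3"}, "reads": {"r1"}}
print(classify_hazards(add, move))

# ADD R4, R3, 1 followed by ADD R3, R5, 1
i1 = {"writes": {"R4"}, "reads": {"R3"}}
i2 = {"writes": {"R3"}, "reads": {"R5"}}
print(classify_hazards(i1, i2))
```

The first pair reports a true dependency and the second an antidependency, matching the two slides.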

(Recall) Procedural Dependency • Can’t execute instructions after a branch in parallel with instructions before a branch, because? Note: Also, if instruction length is not fixed, instructions have to be decoded to find out how many fetches are needed

(Recall) Resource Conflict • Two or more instructions requiring access to the same resource at the same time - e.g. two arithmetic instructions need the ALU • Solution: can possibly duplicate resources - e.g. have two arithmetic units

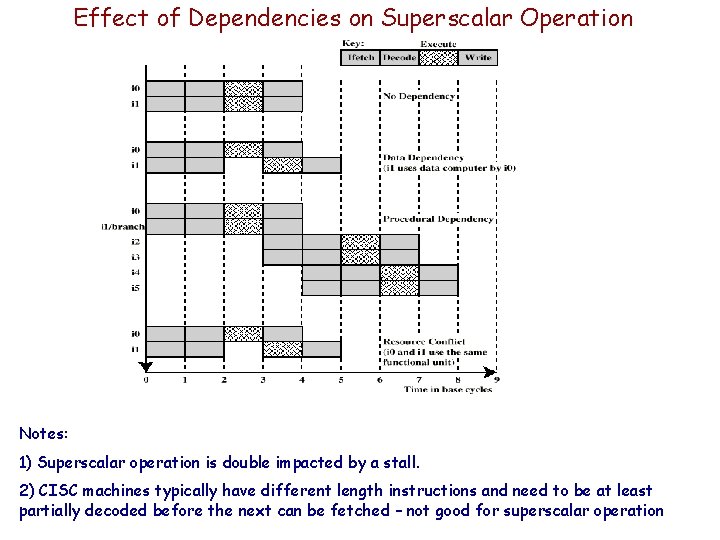

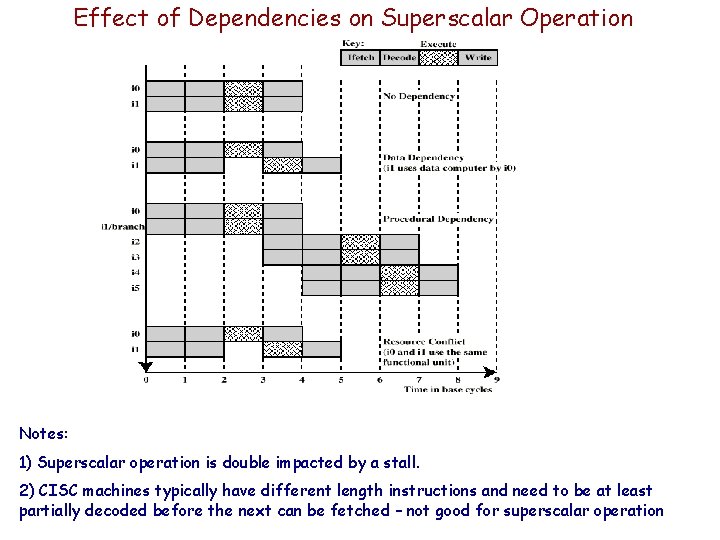

Effect of Dependencies on Superscalar Operation Notes: 1) Superscalar operation is doubly impacted by a stall. 2) CISC machines typically have variable-length instructions, which need to be at least partially decoded before the next can be fetched – not good for superscalar operation

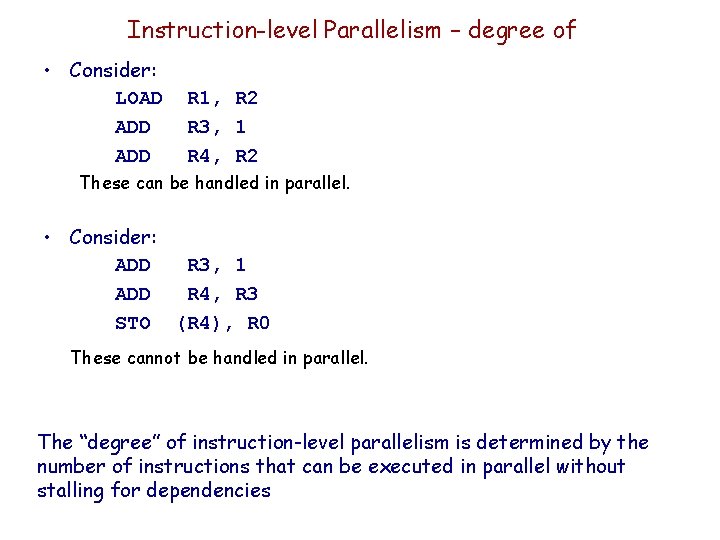

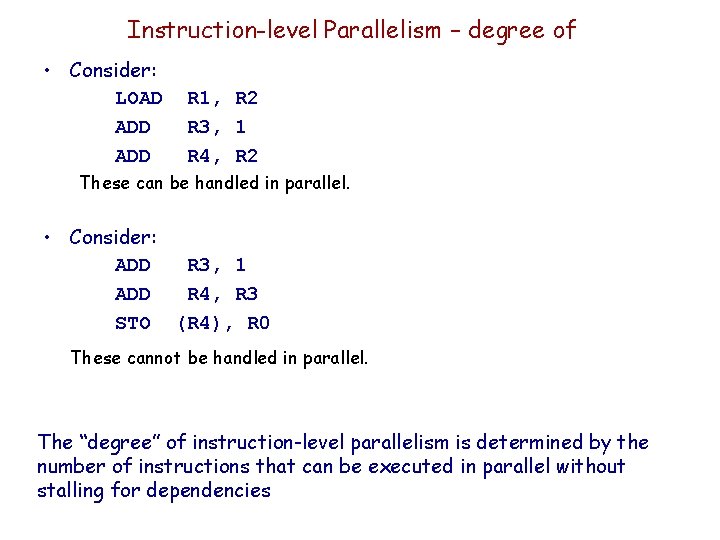

Instruction-level Parallelism – degree of
• Consider:
LOAD R1, R2
ADD R3, 1
ADD R4, R2
These can be handled in parallel: no instruction needs a result produced by another.
• Consider:
ADD R3, 1
ADD R4, R3
STO (R4), R0
These cannot be handled in parallel: each instruction needs the result of the one before it.
The “degree” of instruction-level parallelism is determined by the number of instructions that can be executed in parallel without stalling for dependencies
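One rough way to put a number on the degree of parallelism is to place each instruction one level after the latest producer of its operands, then divide the instruction count by the number of levels. This sketch considers true dependencies only and assumes unlimited functional units. The `(dest, sources)` encoding and the function names are hypothetical.

```python
def dependency_levels(program):
    """program: list of (dest, sources); returns the level of each instruction.

    An instruction's level is 1 + the highest level among the earlier
    instructions that produce its source registers (true dependencies only).
    """
    writer_level = {}   # register -> level of the instruction that last wrote it
    levels = []
    for dest, sources in program:
        level = 1 + max((writer_level.get(s, 0) for s in sources), default=0)
        levels.append(level)
        if dest is not None:
            writer_level[dest] = level
    return levels


def ilp_degree(program):
    """Instructions per level, i.e. average parallelism with unlimited units."""
    return len(program) / max(dependency_levels(program))


# LOAD R1, R2 ; ADD R3, 1 ; ADD R4, R2
independent = [("R1", ["R2"]), ("R3", ["R3"]), ("R4", ["R4", "R2"])]
# ADD R3, 1 ; ADD R4, R3 ; STO (R4), R0  (the store writes no register)
chained = [("R3", ["R3"]), ("R4", ["R4", "R3"]), (None, ["R4", "R0"])]

print(dependency_levels(independent), ilp_degree(independent))
print(dependency_levels(chained), ilp_degree(chained))
```

Under these assumptions the first sequence fits in a single level (degree 3), while the second forms a chain of three levels (degree 1).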

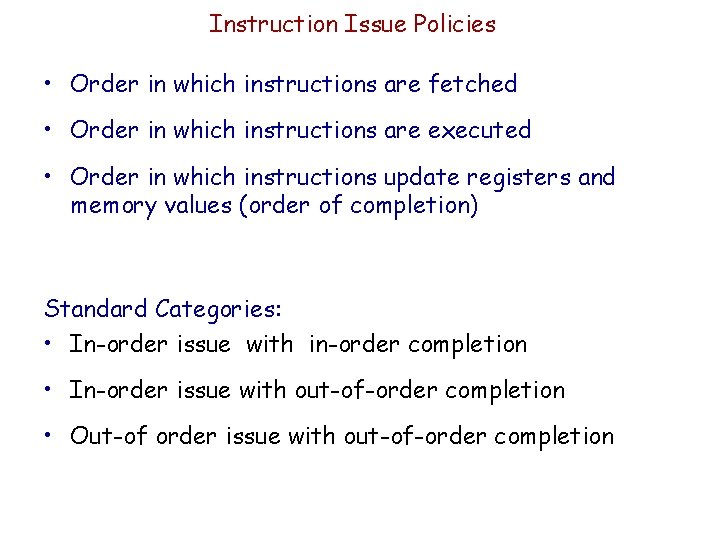

Instruction Issue Policies • Order in which instructions are fetched • Order in which instructions are executed • Order in which instructions update registers and memory values (order of completion) Standard Categories: • In-order issue with in-order completion • In-order issue with out-of-order completion • Out-of-order issue with out-of-order completion

In-Order Issue -- In-Order Completion Issue instructions in the order they occur: • Not very efficient • Instructions must stall if necessary (and stalling in superpipelining is expensive)

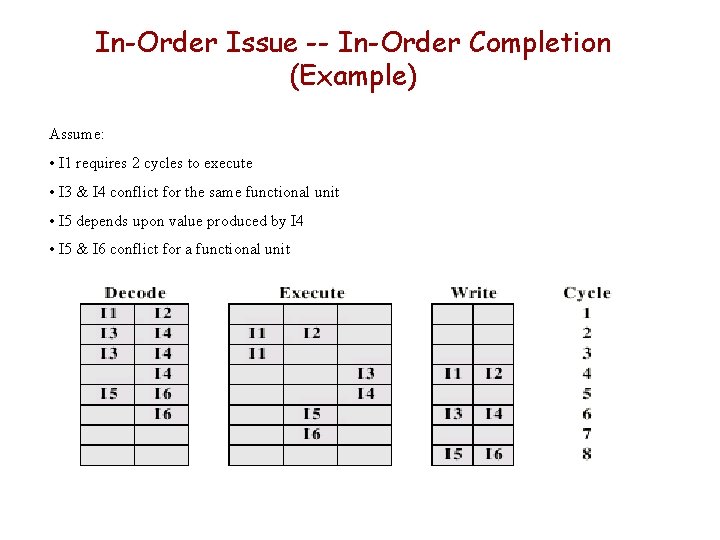

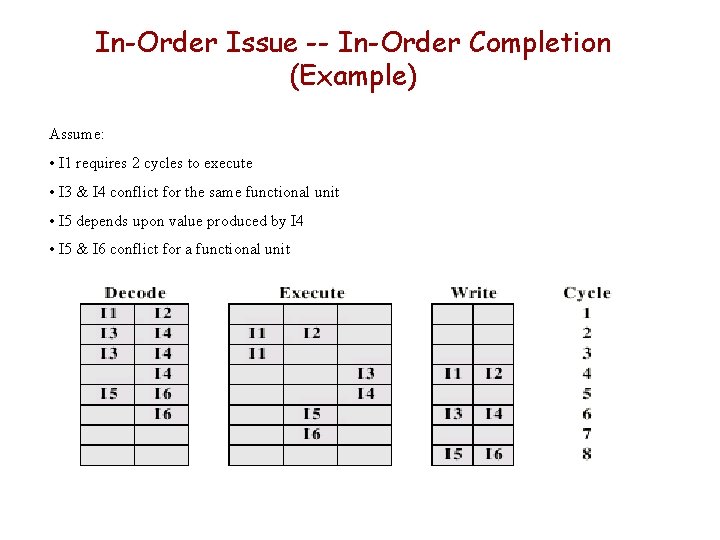

In-Order Issue -- In-Order Completion (Example) Assume: • I 1 requires 2 cycles to execute • I 3 & I 4 conflict for the same functional unit • I 5 depends upon value produced by I 4 • I 5 & I 6 conflict for a functional unit

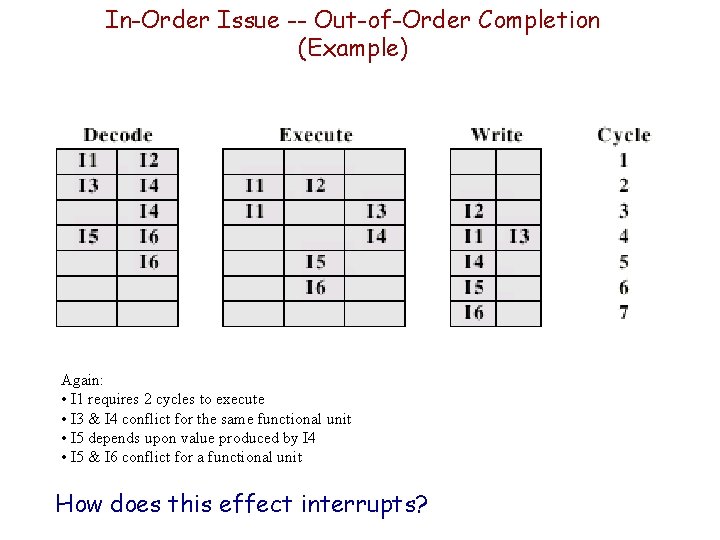

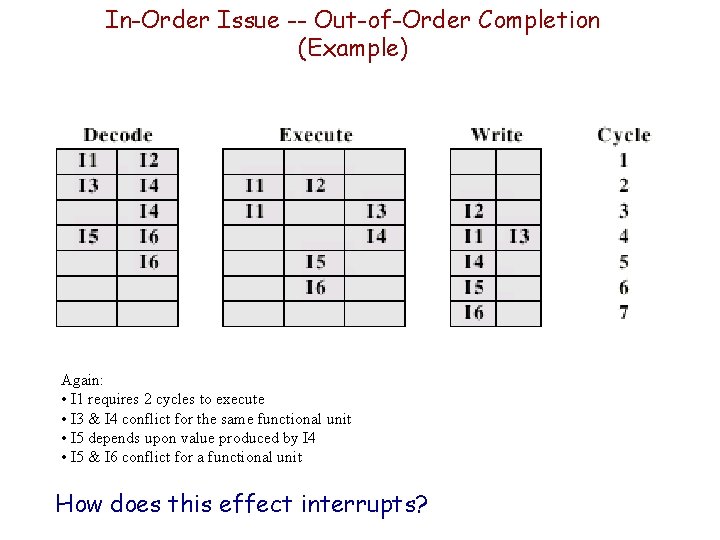

In-Order Issue -- Out-of-Order Completion (Example) Again: • I 1 requires 2 cycles to execute • I 3 & I 4 conflict for the same functional unit • I 5 depends upon value produced by I 4 • I 5 & I 6 conflict for a functional unit How does this affect interrupts?

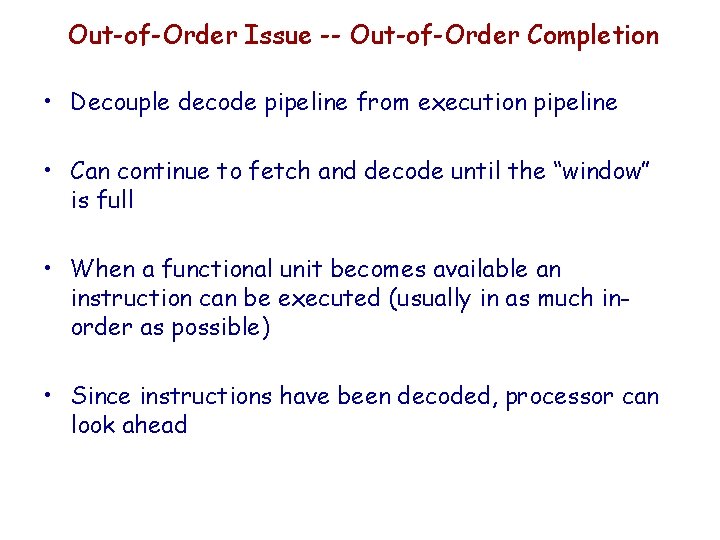

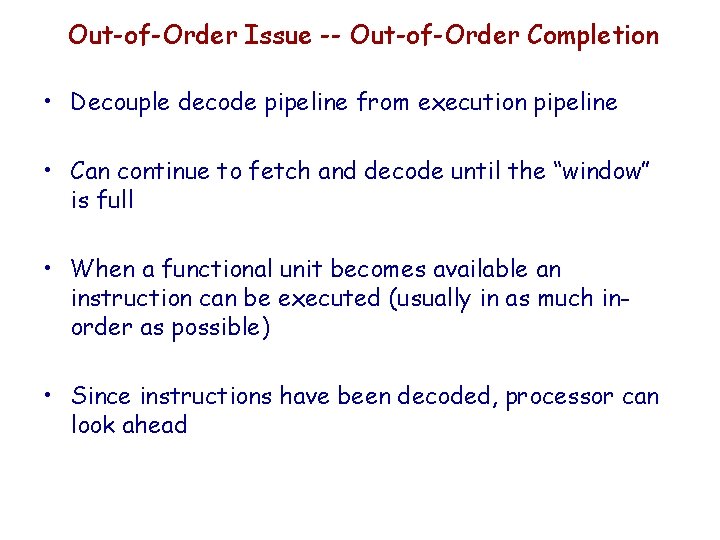

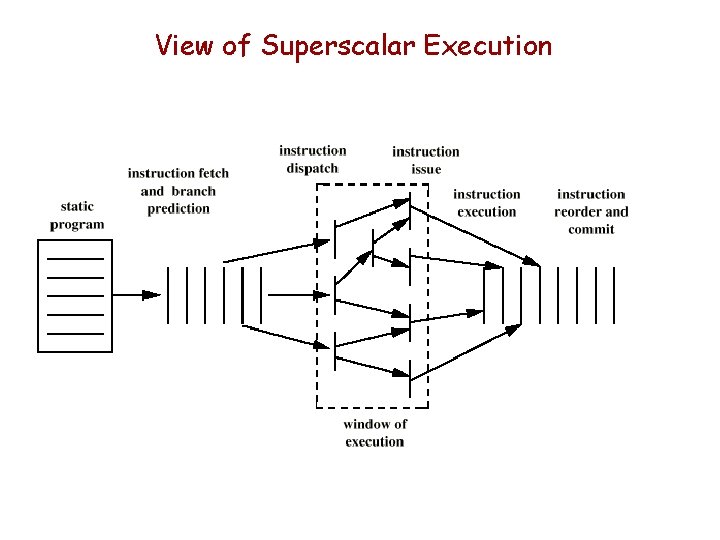

Out-of-Order Issue -- Out-of-Order Completion • Decouple decode pipeline from execution pipeline • Can continue to fetch and decode until the “window” is full • When a functional unit becomes available, an instruction from the window can be executed (usually as close to program order as possible) • Since instructions have already been decoded, the processor can look ahead

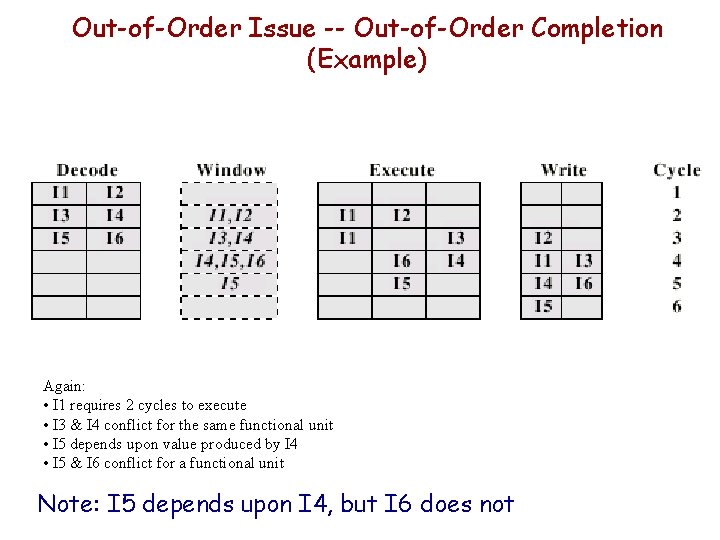

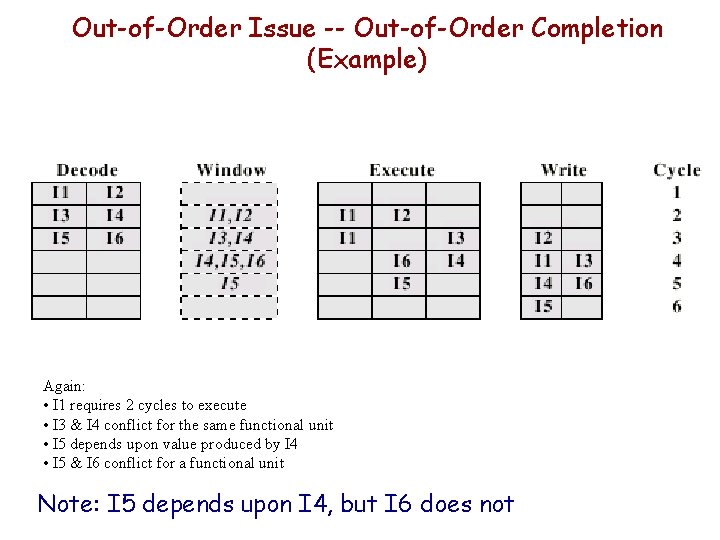

Out-of-Order Issue -- Out-of-Order Completion (Example) Again: • I 1 requires 2 cycles to execute • I 3 & I 4 conflict for the same functional unit • I 5 depends upon value produced by I 4 • I 5 & I 6 conflict for a functional unit Note: I 5 depends upon I 4, but I 6 does not
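The effect of the issue policy on this six-instruction example can be explored with a toy model of the execute stage alone. This is a sketch under stated assumptions, not a reproduction of the timing figures: fetch, decode and write-back are ignored, at most two instructions start per cycle, and the unit names A to D are invented so that I3/I4 share one unit and I5/I6 share another.

```python
def simulate(instrs, in_order, width=2):
    """Return ({name: start cycle}, total execute cycles) for a toy issue model.

    instrs: list of dicts with "name", "unit", "latency", "deps"
    (a hypothetical encoding). Only the execute stage is modelled.
    """
    finish = {}      # name -> last cycle in which the instruction executes
    unit_free = {}   # functional unit -> first cycle it is free again
    start = {}
    pending = list(instrs)
    cycle = 0
    while pending:
        cycle += 1
        issued = []
        for ins in pending:
            if len(issued) == width:
                break
            # A producer must have finished in an earlier cycle.
            deps_done = all(finish.get(d, cycle) < cycle for d in ins["deps"])
            unit_ok = unit_free.get(ins["unit"], 0) <= cycle
            if deps_done and unit_ok:
                issued.append(ins["name"])
                start[ins["name"]] = cycle
                finish[ins["name"]] = cycle + ins["latency"] - 1
                unit_free[ins["unit"]] = cycle + ins["latency"]
            elif in_order:
                break  # in-order issue: a stalled instruction blocks later ones
        pending = [i for i in pending if i["name"] not in issued]
    return start, max(finish.values())


program = [
    {"name": "I1", "unit": "A", "latency": 2, "deps": []},
    {"name": "I2", "unit": "B", "latency": 1, "deps": []},
    {"name": "I3", "unit": "C", "latency": 1, "deps": []},
    {"name": "I4", "unit": "C", "latency": 1, "deps": []},
    {"name": "I5", "unit": "D", "latency": 1, "deps": ["I4"]},
    {"name": "I6", "unit": "D", "latency": 1, "deps": []},
]

print(simulate(program, in_order=True))
print(simulate(program, in_order=False))
```

In this model out-of-order issue saves one execute cycle, because I6 can start while I4 and I5 are still waiting.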

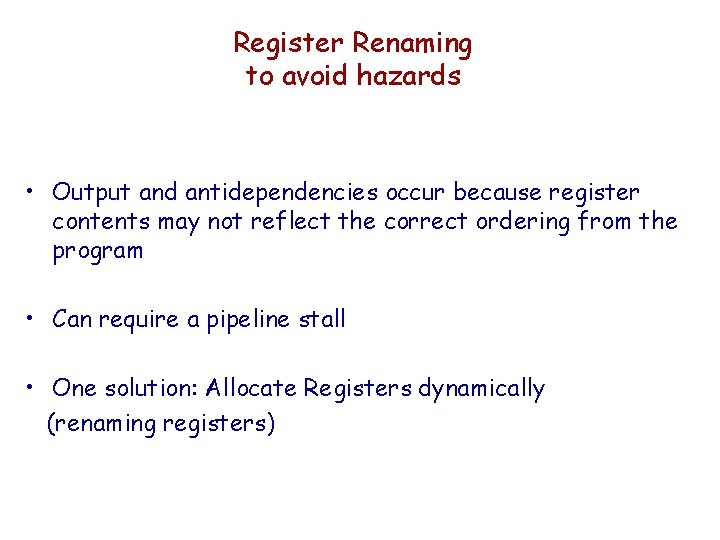

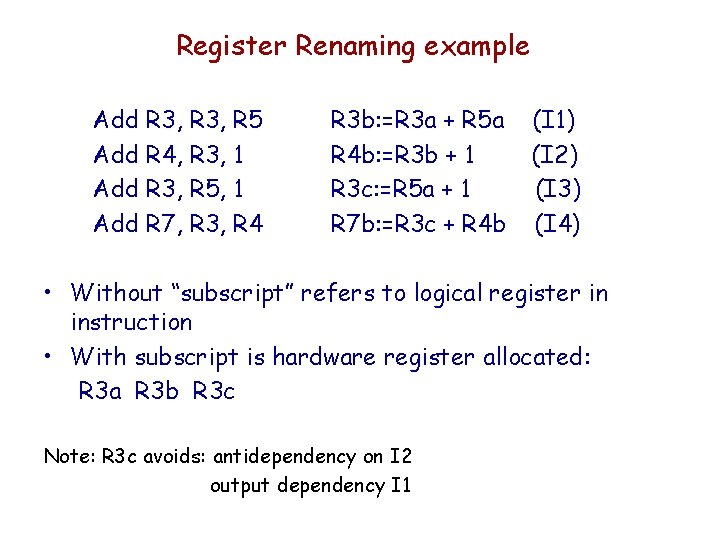

Register Renaming to avoid hazards • Output and antidependencies occur because register contents may not reflect the correct ordering from the program • Can require a pipeline stall • One solution: Allocate Registers dynamically (renaming registers)

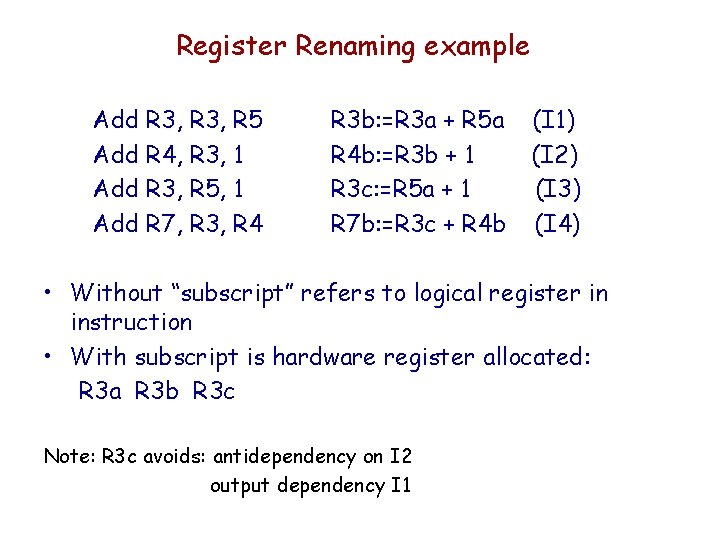

Register Renaming example

| Instruction | Original | After renaming |
| --- | --- | --- |
| I1 | Add R3, R5 | R3b := R3a + R5a |
| I2 | Add R4, R3, 1 | R4b := R3b + 1 |
| I3 | Add R3, R5, 1 | R3c := R5a + 1 |
| I4 | Add R7, R3, R4 | R7b := R3c + R4b |

• A register name without a “subscript” refers to the logical register in the instruction
• A register name with a subscript is the hardware register allocated: R3a, R3b, R3c
Note: R3c avoids the antidependency on I2 and the output dependency on I1
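The renaming in this example follows a simple rule: every source reads the current version of its logical register, and every write allocates a fresh version. A minimal sketch of that rule follows. The `(dest, sources)` encoding and the letter-suffix scheme starting at "a" mirror the slide's notation, and the function name `rename` is made up for illustration.

```python
def rename(program):
    """Rename logical registers to versioned names such as R3a, R3b, R3c.

    program: list of (dest, sources); a source is a register name (str)
    or an immediate (int). Every register starts at version "a".
    """
    version = {}   # logical register -> number of writes seen so far
    renamed = []

    def current(reg):
        return reg + chr(ord("a") + version.get(reg, 0))

    for dest, sources in program:
        # Sources are looked up BEFORE the destination gets its new version.
        srcs = [current(s) if isinstance(s, str) else str(s) for s in sources]
        version[dest] = version.get(dest, 0) + 1
        renamed.append(f"{current(dest)} := " + " + ".join(srcs))
    return renamed


program = [
    ("R3", ["R3", "R5"]),   # I1: Add R3, R5
    ("R4", ["R3", 1]),      # I2: Add R4, R3, 1
    ("R3", ["R5", 1]),      # I3: Add R3, R5, 1
    ("R7", ["R3", "R4"]),   # I4: Add R7, R3, R4
]
for line in rename(program):
    print(line)
```

Because I3 writes R3c rather than reusing R3b, it no longer has to wait for I2 to read R3b or for I1 to finish writing it.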

Recapping: Machine Parallelism Support • Duplication of Resources • Out-of-order issue hardware • Windowing to decouple execution from decode • Register Renaming capability

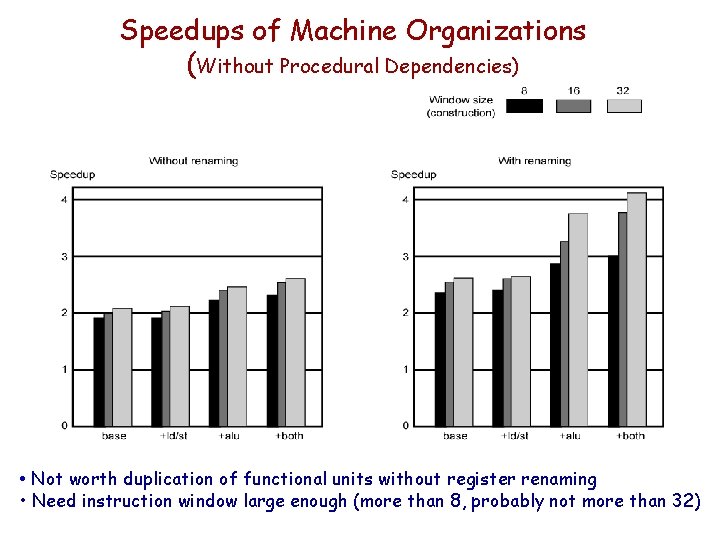

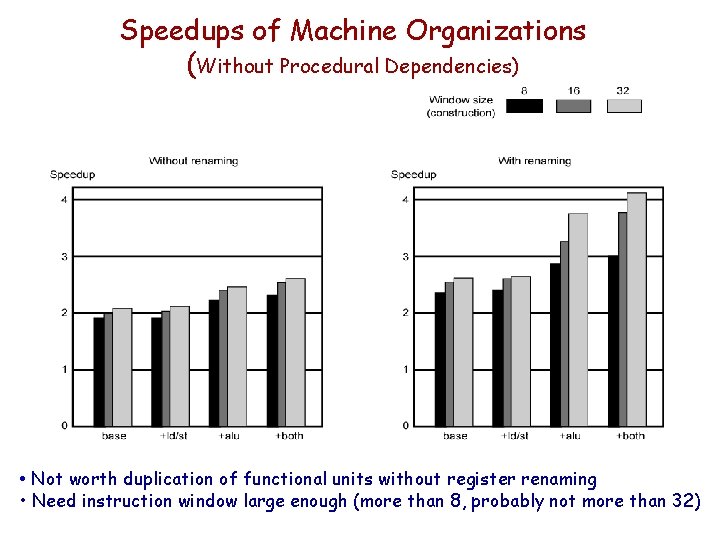

Speedups of Machine Organizations (Without Procedural Dependencies) • Not worth duplication of functional units without register renaming • Need instruction window large enough (more than 8, probably not more than 32)

Branch Prediction in Superscalar Machines • Delayed branch not used much. Why? Multiple instructions need to execute in the delay slot. This leads to much complexity in recovery. • Branch prediction should be used - Branch history is very useful
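As an illustration of how branch history can drive prediction, here is a sketch of a 2-bit saturating counter. This is one common history-based scheme, chosen as an assumption; the slide does not say which scheme is meant. The class name, the initial counter value and the helper `count_correct` are all hypothetical.

```python
class TwoBitPredictor:
    """2-bit saturating counter: 0-1 predict not taken, 2-3 predict taken."""

    def __init__(self, counter=2):
        self.counter = counter   # assumed start state: weakly taken

    def predict(self):
        return self.counter >= 2

    def update(self, taken):
        # Move one step toward the observed outcome, saturating at 0 and 3.
        if taken:
            self.counter = min(3, self.counter + 1)
        else:
            self.counter = max(0, self.counter - 1)


def count_correct(outcomes):
    """Number of correct predictions over a sequence of branch outcomes."""
    predictor = TwoBitPredictor()
    correct = 0
    for taken in outcomes:
        if predictor.predict() == taken:
            correct += 1
        predictor.update(taken)
    return correct


# A loop branch: taken four times, falls through once, then taken again.
history = [True] * 4 + [False] + [True] * 4
print(count_correct(history), "of", len(history))
```

The single not-taken outcome costs one misprediction but does not flip the prediction for the following iterations, which is the point of keeping two bits of history instead of one.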

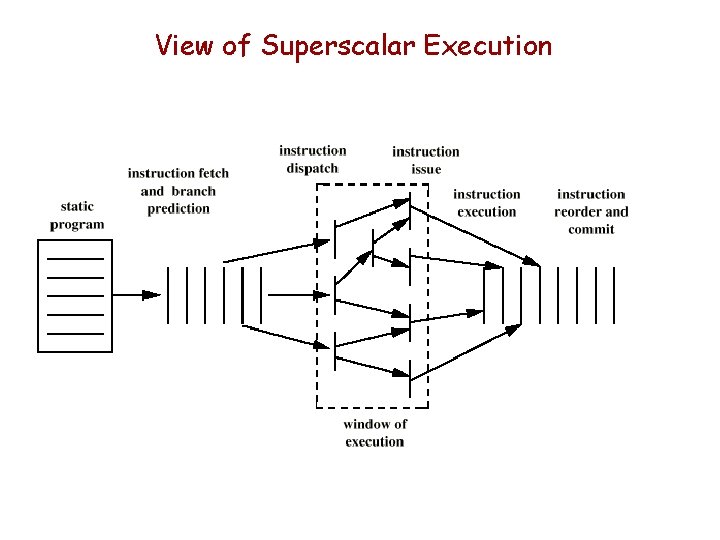

View of Superscalar Execution

Committing or Retiring Instructions Results need to be put into order (commit or retire) • Results sometimes must be held in temporary storage until it is certain they can be placed in “permanent” storage. (either committed or retired/flushed) • Temporary storage requires regular clean up – overhead – done in hardware.
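The idea of holding results in temporary storage until they can be committed in order can be sketched with a small reorder buffer. This is a simplified model with invented names (`ReorderBuffer`, `allocate`, `complete`, `retire`); real hardware also tracks values, exceptions and flushes.

```python
from collections import deque


class ReorderBuffer:
    """Instructions enter in program order and may complete in any order,
    but they retire (commit) only from the head, i.e. in program order."""

    def __init__(self):
        self.entries = deque()

    def allocate(self, name):
        self.entries.append({"name": name, "done": False})

    def complete(self, name):
        for entry in self.entries:
            if entry["name"] == name:
                entry["done"] = True

    def retire(self):
        """Commit every completed instruction at the head of the buffer."""
        retired = []
        while self.entries and self.entries[0]["done"]:
            retired.append(self.entries.popleft()["name"])
        return retired


rob = ReorderBuffer()
for name in ["I1", "I2", "I3"]:
    rob.allocate(name)

rob.complete("I2")
first = rob.retire()    # I2 is done, but I1 at the head is not
rob.complete("I1")
second = rob.retire()   # now I1 and I2 can both commit, in order
print(first, second)
```

I2's result stays in temporary storage until I1 has completed, so the permanent state is always updated in program order.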

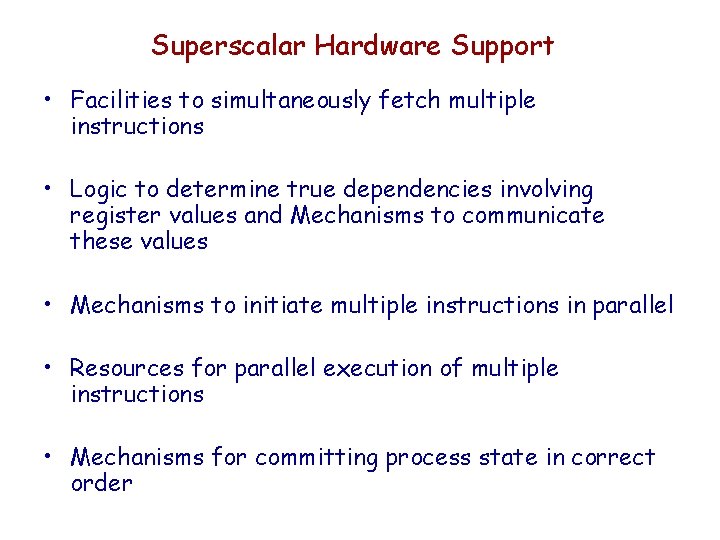

Superscalar Hardware Support • Facilities to simultaneously fetch multiple instructions • Logic to determine true dependencies involving register values and Mechanisms to communicate these values • Mechanisms to initiate multiple instructions in parallel • Resources for parallel execution of multiple instructions • Mechanisms for committing process state in correct order

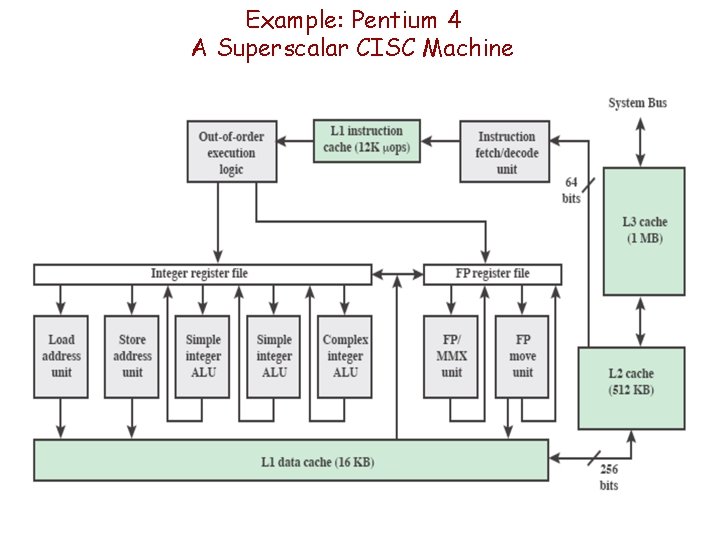

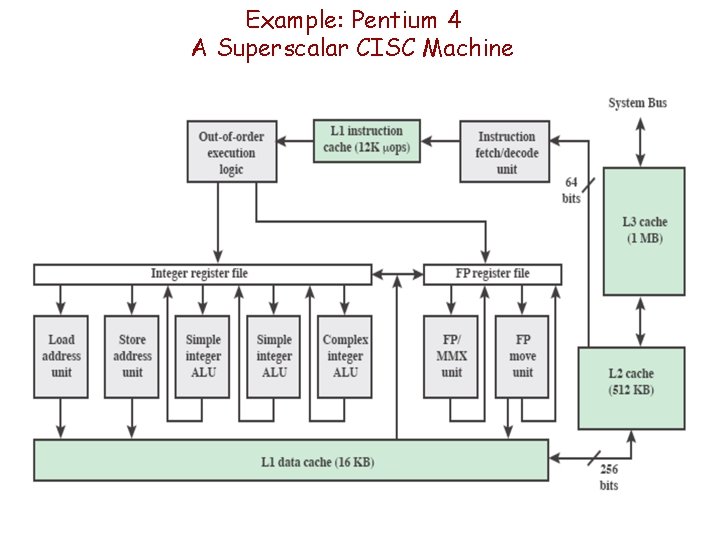

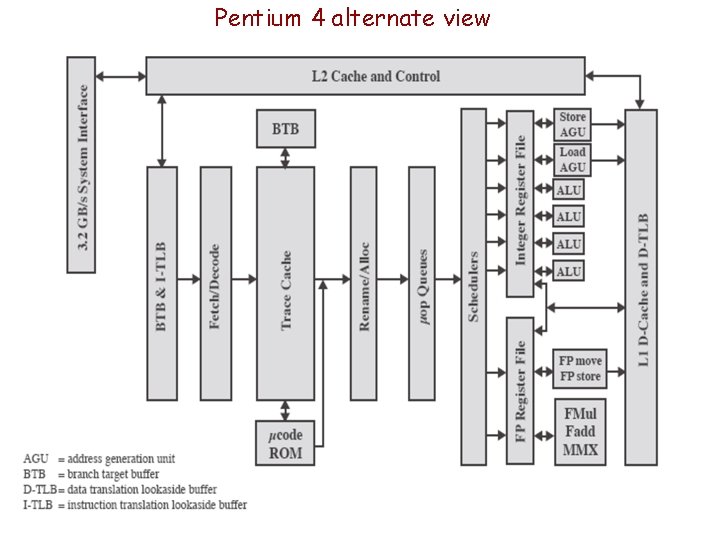

Example: Pentium 4 A Superscalar CISC Machine

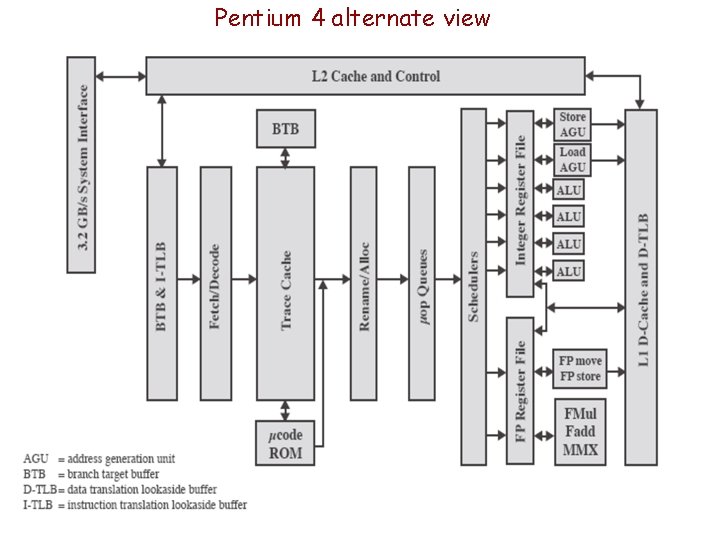

Pentium 4 alternate view

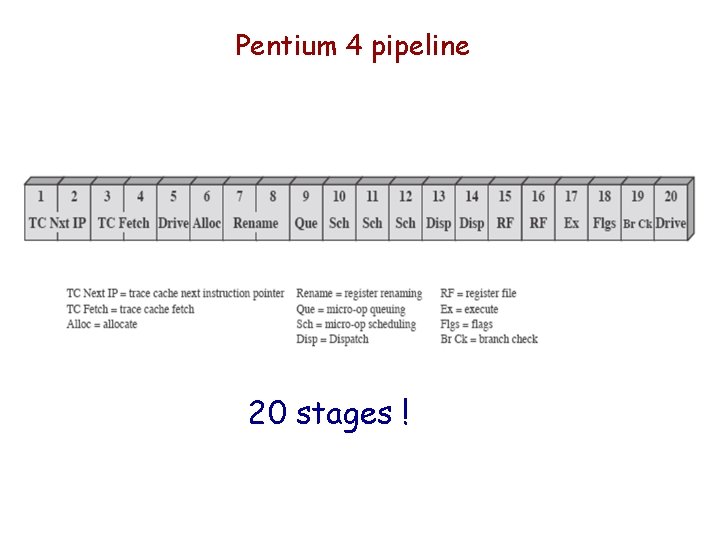

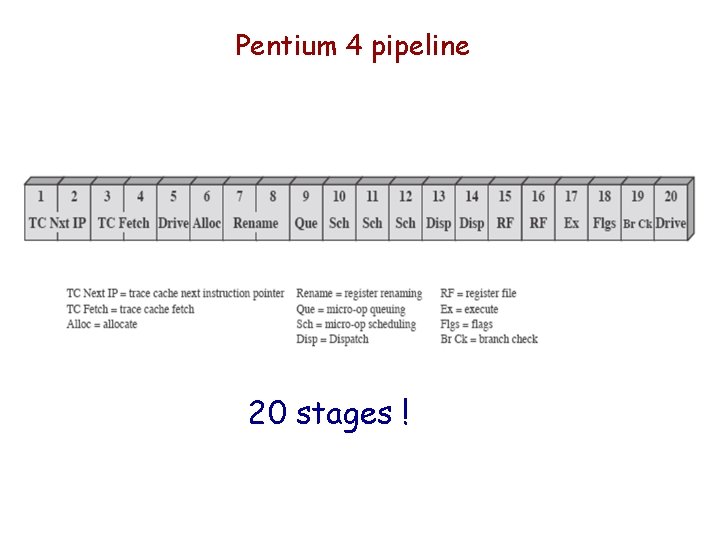

Pentium 4 pipeline: 20 stages!

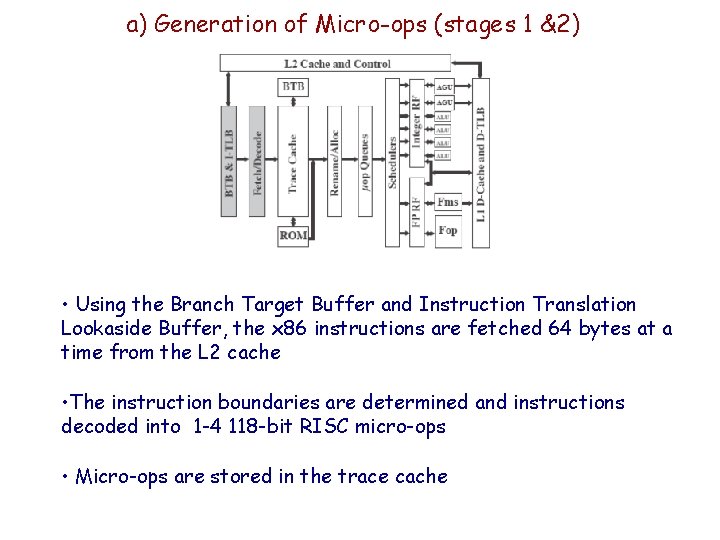

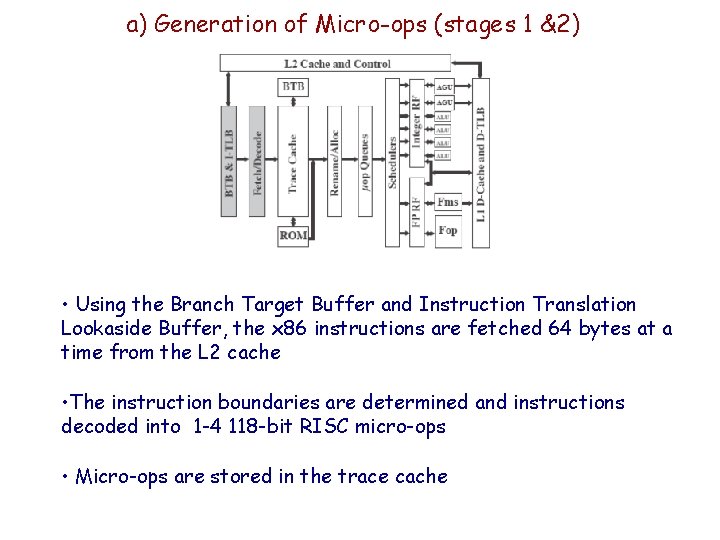

a) Generation of Micro-ops (stages 1 & 2) • Using the Branch Target Buffer and Instruction Translation Lookaside Buffer, the x86 instructions are fetched 64 bytes at a time from the L2 cache • The instruction boundaries are determined and instructions decoded into 1-4 118-bit RISC micro-ops • Micro-ops are stored in the trace cache

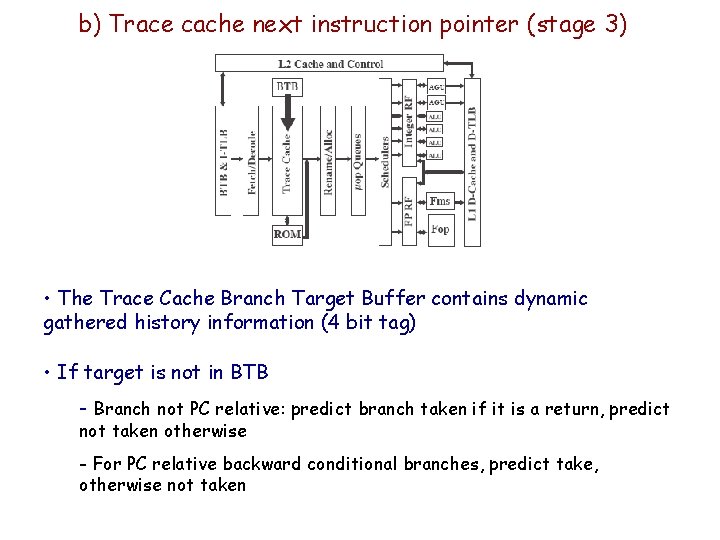

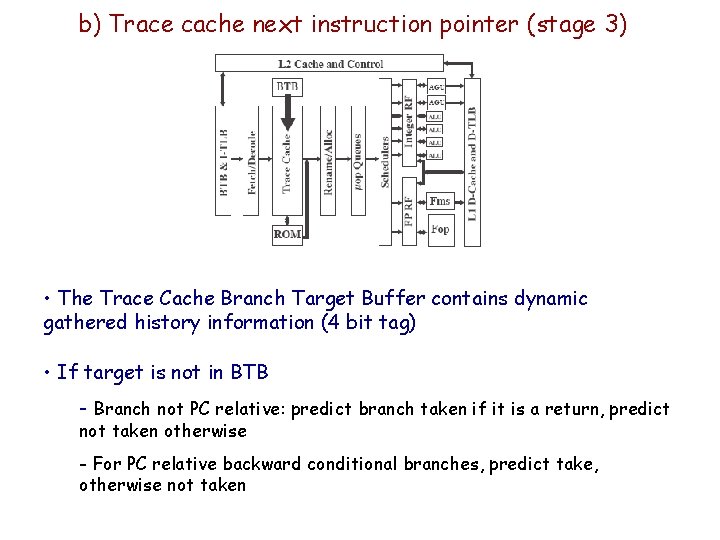

b) Trace cache next instruction pointer (stage 3) • The Trace Cache Branch Target Buffer contains dynamically gathered history information (4-bit tag) • If target is not in BTB: - Branch not PC relative: predict taken if it is a return, predict not taken otherwise - PC-relative branch: predict taken if it is a backward conditional branch, predict not taken otherwise
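The fallback rules used when the branch is not in the BTB amount to a small decision function. The sketch below encodes only the rules as stated on the slide; the function name and the boolean parameters are assumptions introduced for illustration.

```python
def static_predict(pc_relative, is_return=False,
                   is_conditional=False, is_backward=False):
    """Return True for 'predict taken' when the BTB has no entry.

    - Not PC relative: taken only if the branch is a return.
    - PC relative: taken only for backward conditional branches.
    """
    if not pc_relative:
        return is_return
    return is_conditional and is_backward


# A return instruction (not PC relative): predicted taken.
print(static_predict(pc_relative=False, is_return=True))
# A backward conditional branch, e.g. the bottom of a loop: predicted taken.
print(static_predict(pc_relative=True, is_conditional=True, is_backward=True))
# A forward conditional branch: predicted not taken.
print(static_predict(pc_relative=True, is_conditional=True, is_backward=False))
```

Predicting backward conditional branches as taken fits loops, where the branch at the bottom of the loop is taken on every iteration except the last.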

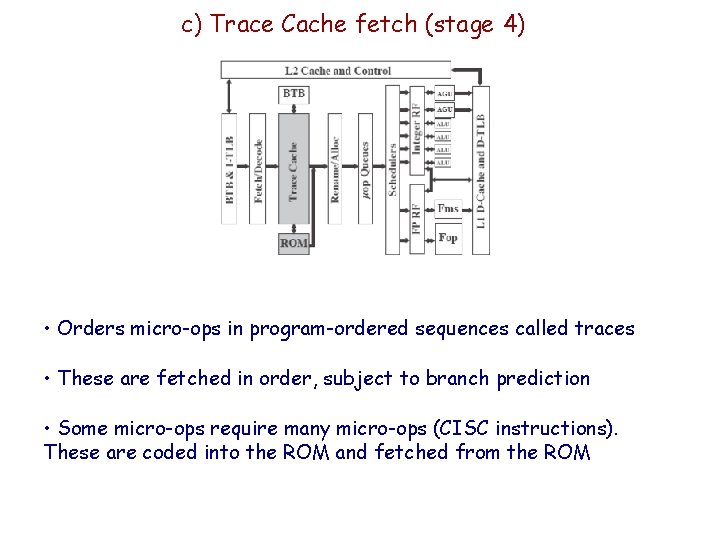

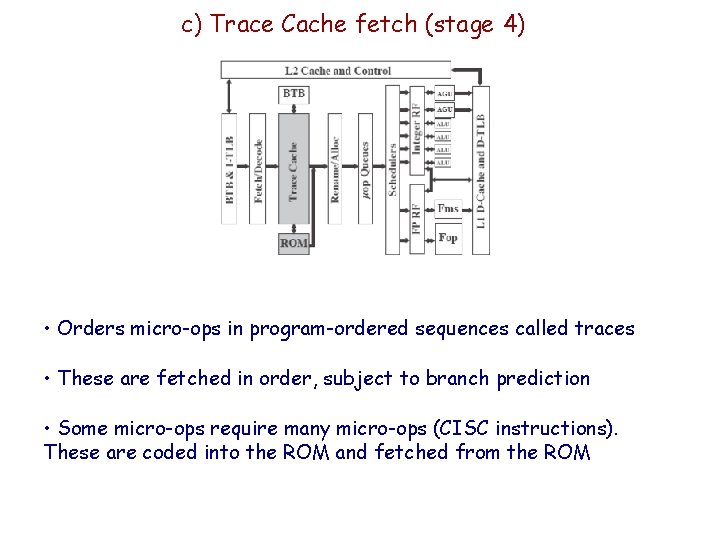

c) Trace Cache fetch (stage 4) • Orders micro-ops in program-ordered sequences called traces • These are fetched in order, subject to branch prediction • Some instructions require many micro-ops (complex CISC instructions). These are coded into the microcode ROM and fetched from the ROM

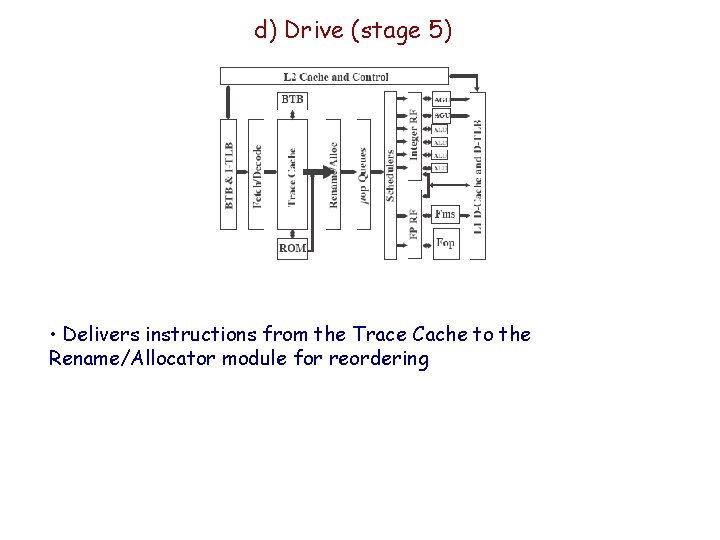

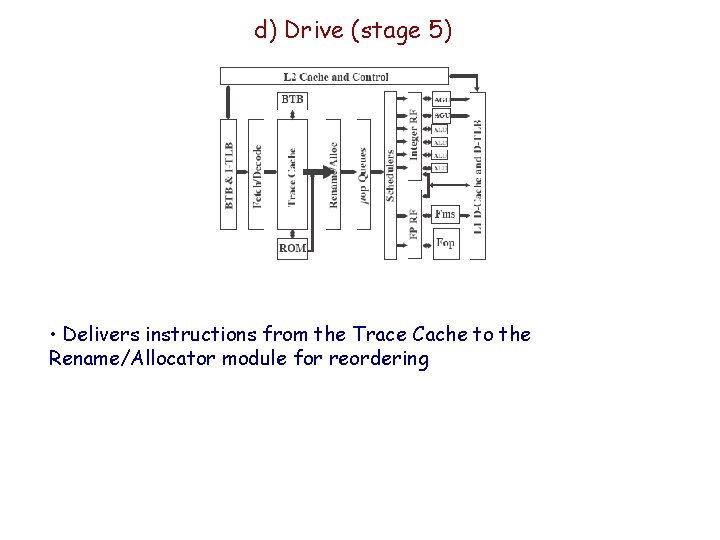

d) Drive (stage 5) • Delivers instructions from the Trace Cache to the Rename/Allocator module for reordering

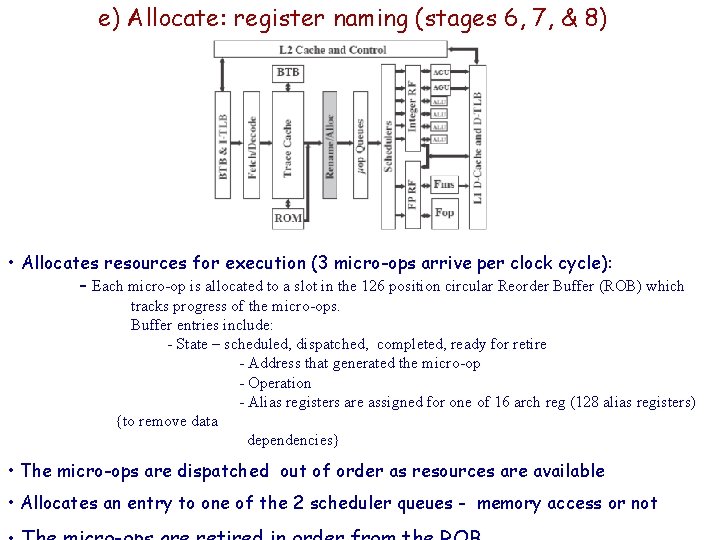

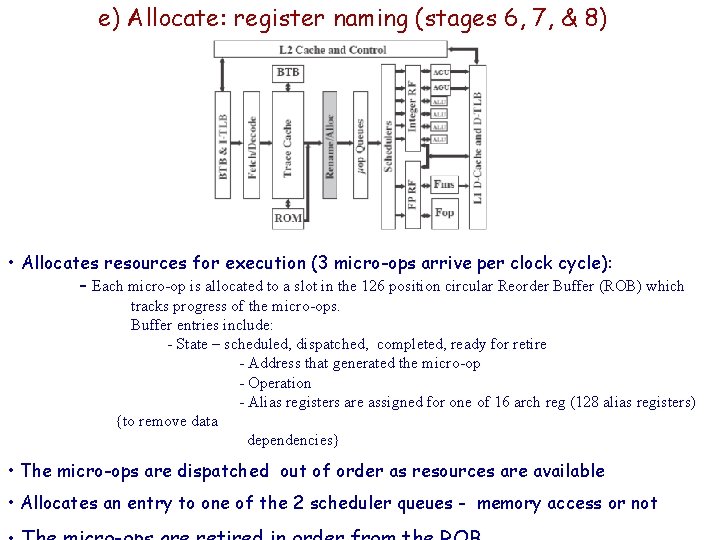

e) Allocate: register renaming (stages 6, 7, & 8)
• Allocates resources for execution (3 micro-ops arrive per clock cycle):
- Each micro-op is allocated to a slot in the 126-entry circular Reorder Buffer (ROB), which tracks progress of the micro-ops. Buffer entries include:
  - State: scheduled, dispatched, completed, ready for retire
  - Address that generated the micro-op
  - Operation
- Alias registers are assigned: each reference to one of the 16 architectural registers is mapped to one of 128 alias registers (to remove false data dependencies, i.e. antidependencies and output dependencies)
• The micro-ops are dispatched out of order as resources are available
• Allocates an entry to one of the 2 scheduler queues: memory access or not

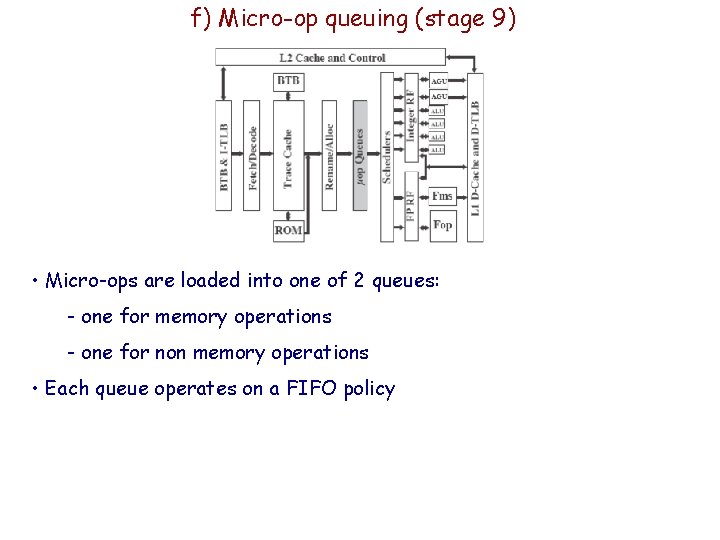

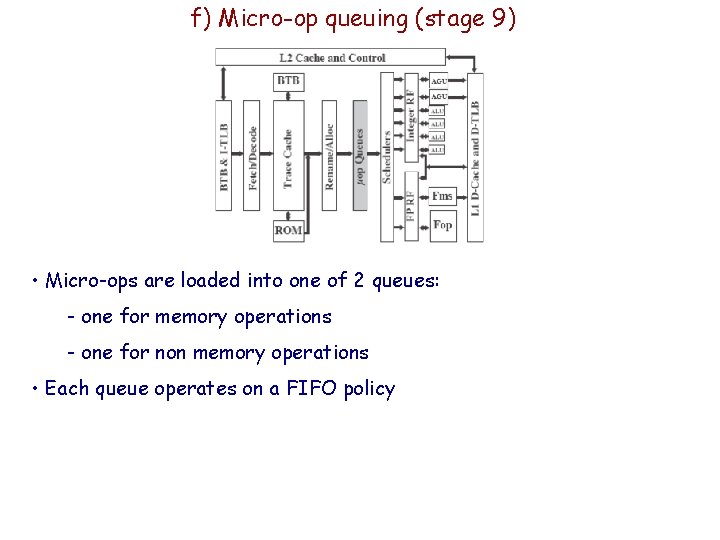

f) Micro-op queuing (stage 9) • Micro-ops are loaded into one of 2 queues: - one for memory operations - one for non memory operations • Each queue operates on a FIFO policy

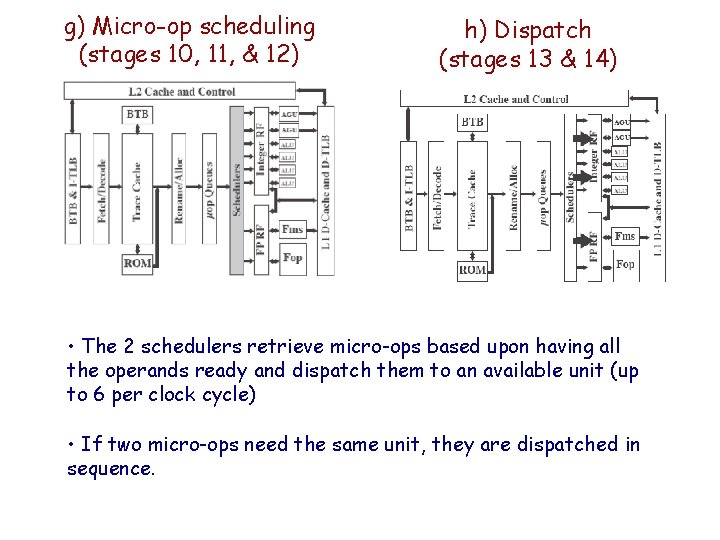

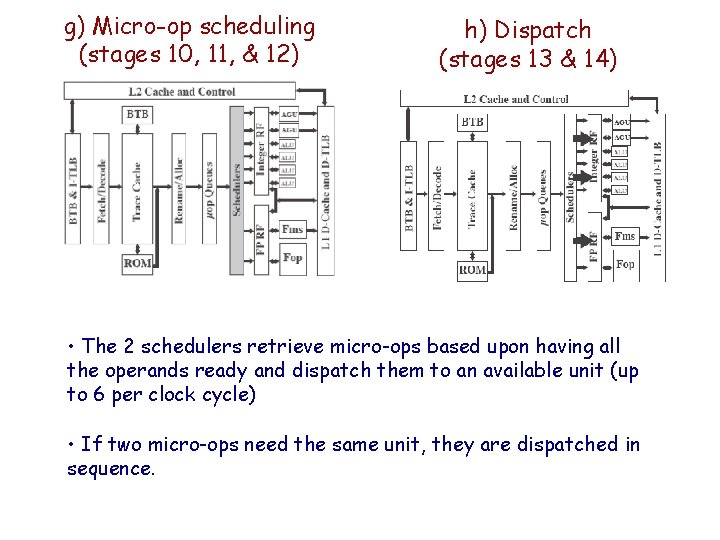

g) Micro-op scheduling (stages 10, 11, & 12)
h) Dispatch (stages 13 & 14)
• The 2 schedulers retrieve micro-ops based upon having all the operands ready and dispatch them to an available unit (up to 6 per clock cycle)
• If two micro-ops need the same unit, they are dispatched in sequence.
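The scheduling and dispatch rules can be sketched as one function that models a single clock cycle. This is a deliberately simplified model, not the actual Pentium 4 scheduler: the micro-op encoding, the unit names and the choice to scan past a blocked micro-op within a queue are all assumptions.

```python
from collections import deque


def dispatch_cycle(queues, ready_operands, max_dispatch=6):
    """Dispatch micro-ops for one clock cycle and return their names.

    queues: list of deques of micro-ops, each a dict with "name", "unit"
    and "srcs". A micro-op is dispatched only if all of its operands are
    ready and its unit has not already been claimed in this cycle.
    """
    busy_units = set()
    dispatched = []
    for queue in queues:
        waiting = deque()
        while queue:
            uop = queue.popleft()
            operands_ready = all(s in ready_operands for s in uop["srcs"])
            if (len(dispatched) < max_dispatch and operands_ready
                    and uop["unit"] not in busy_units):
                busy_units.add(uop["unit"])
                dispatched.append(uop["name"])
            else:
                waiting.append(uop)   # stays queued, original order preserved
        queue.extend(waiting)
    return dispatched


memory_q = deque([{"name": "load1", "unit": "load", "srcs": ["r1"]}])
other_q = deque([
    {"name": "add1", "unit": "alu0", "srcs": ["r2"]},
    {"name": "add2", "unit": "alu0", "srcs": ["r3"]},   # same unit as add1
    {"name": "mul1", "unit": "fp", "srcs": ["r9"]},     # operand not ready
])
ready = {"r1", "r2", "r3"}

cycle1 = dispatch_cycle([memory_q, other_q], ready)
cycle2 = dispatch_cycle([memory_q, other_q], ready)
print(cycle1, cycle2)
```

In the first cycle add2 waits because add1 has claimed the same unit, so the two are dispatched in sequence; mul1 stays queued until its operand becomes ready.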

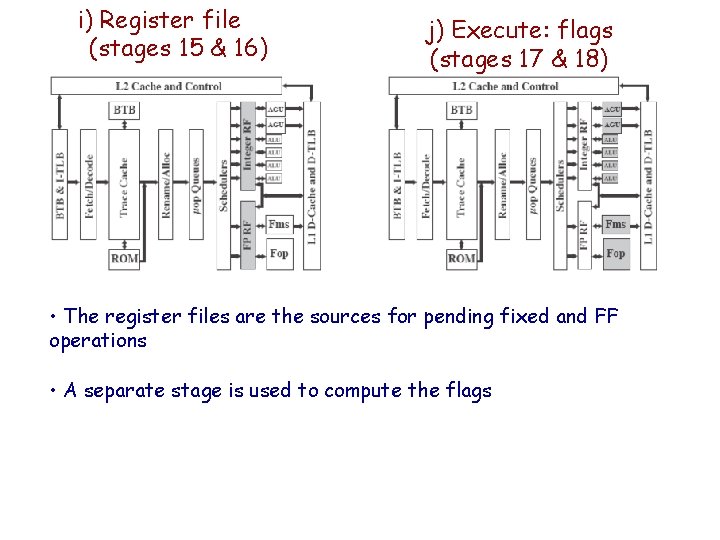

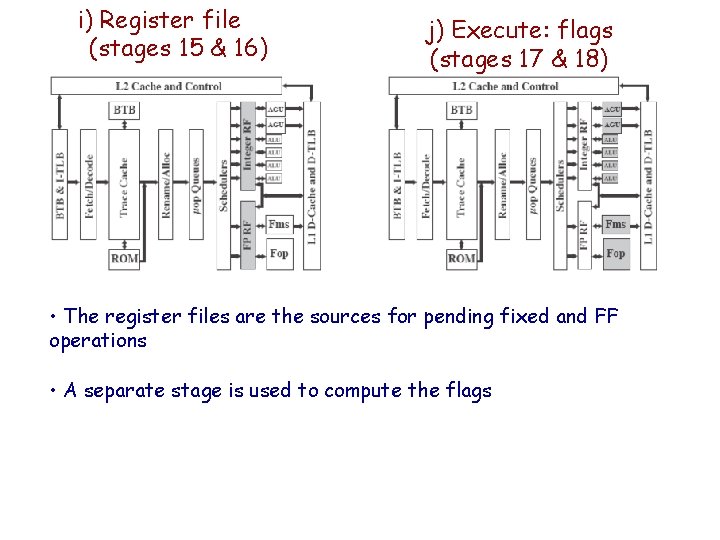

i) Register file (stages 15 & 16)
j) Execute: flags (stages 17 & 18)
• The register files are the sources for pending fixed-point and floating-point operations
• A separate stage is used to compute the flags

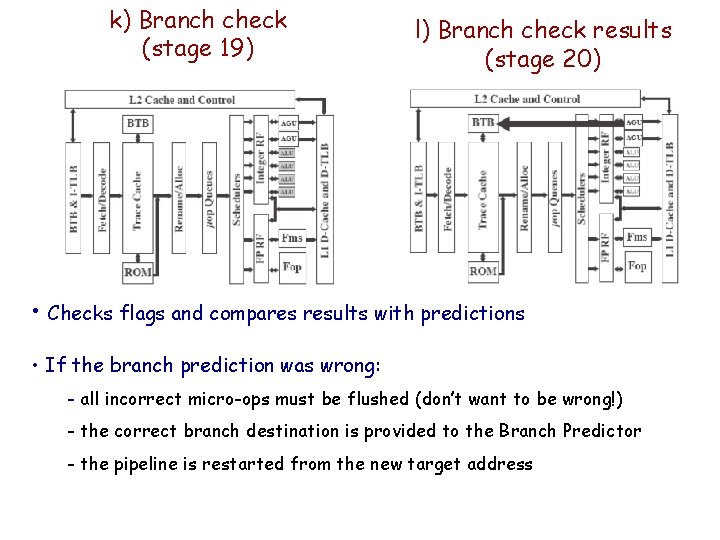

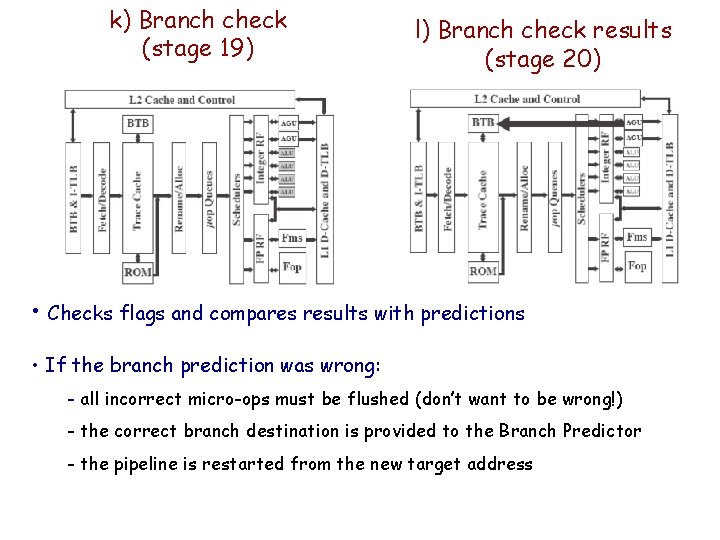

k) Branch check (stage 19)
l) Branch check results (stage 20)
• Checks flags and compares results with predictions
• If the branch prediction was wrong:
- all incorrect micro-ops must be flushed (don’t want to be wrong!)
- the correct branch destination is provided to the Branch Predictor
- the pipeline is restarted from the new target address
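The recovery steps after a misprediction can be sketched as follows. The list of in-flight micro-op names, the function name `resolve_branch` and the example addresses are invented for illustration. The sketch shows only the flush and the restart address, not the predictor update.

```python
def resolve_branch(in_flight, branch, predicted_taken, actual_taken,
                   taken_target, fall_through):
    """Compare a branch outcome with its prediction.

    in_flight: micro-op names in program order (oldest first).
    Returns (surviving micro-ops, restart address or None).
    """
    if predicted_taken == actual_taken:
        return in_flight, None            # prediction was right: nothing to do
    # Everything younger than the branch was fetched down the wrong path.
    keep = in_flight[: in_flight.index(branch) + 1]
    restart = taken_target if actual_taken else fall_through
    return keep, restart


in_flight = ["u1", "br", "u2", "u3"]

# Predicted taken, but the branch actually falls through.
survivors, restart = resolve_branch(in_flight, "br",
                                    predicted_taken=True, actual_taken=False,
                                    taken_target=0x200, fall_through=0x104)
print(survivors, hex(restart))
```

With a 20-stage pipeline, every micro-op behind the branch is discarded and the pipeline refills from the restart address, which is why a wrong prediction is so costly.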