
Tokenization & POS-Tagging
presented by: Yajing Zhang, Saarland University (yazhang@coli.uni-sb.de)
winter semester 05/06

Outline
- Tokenization
  - Importance
  - Problems & solutions
- POS tagging
  - HMM tagger
  - TnT statistical tagger

Why Tokenization?
- Tokenization: the isolation of word-like units from a text.
- Tokens are the building blocks of other text processing.
- The accuracy of tokenization affects the results of higher-level processing, e.g., parsing.

Problems of tokenization
- Definition of a token
  - United States, AT&T, 3-year-old
- Ambiguity of punctuation as sentence boundary
  - Prof. Dr. J. M.
- Ambiguity in numbers
  - 123,456.78

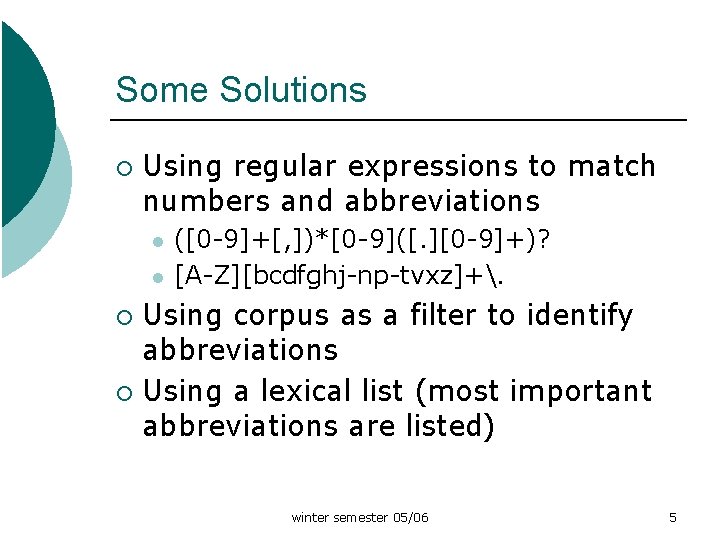
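The following minimal Python sketch illustrates these problems with a deliberately naive tokenizer that turns every punctuation mark into a token of its own. The function `naive_tokenize` and the sample sentence are hypothetical, not taken from the slides.

```python
import re

def naive_tokenize(text: str) -> list[str]:
    """Hypothetical naive tokenizer: runs of word characters (plus '&' and '-')
    form tokens, and every other non-space character is a separate token."""
    return re.findall(r"[\w&-]+|[^\w\s]", text)

tokens = naive_tokenize("Prof. Dr. J. M. Smith paid 123,456.78 to AT&T.")
print(tokens)
```

The abbreviation periods are split off exactly like the sentence-final period, and the number 123,456.78 falls apart into five tokens.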

Some Solutions
- Using regular expressions to match numbers and abbreviations
  - Numbers: `([0-9]+[,])*[0-9]+([.][0-9]+)?`
  - Abbreviations: `[A-Z][bcdfghj-np-tvxz]+\.`
- Using a corpus as a filter to identify abbreviations
- Using a lexical list (the most important abbreviations are listed)

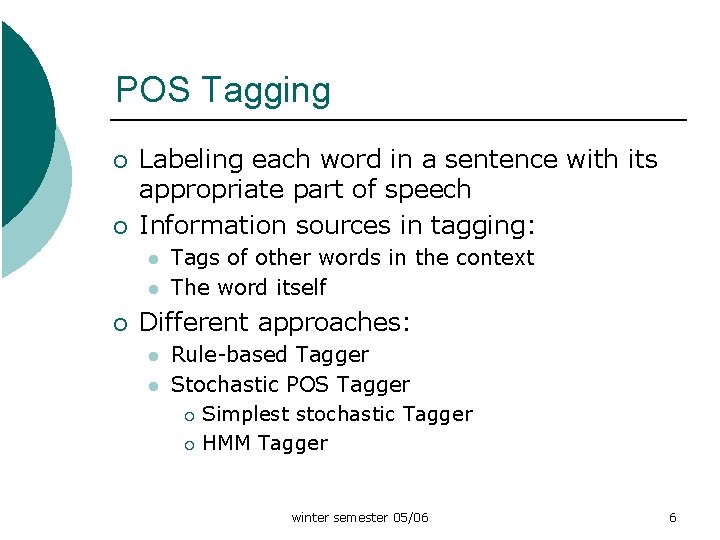
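A minimal Python sketch that combines the slide's number and abbreviation patterns with a small lexical list. The abbreviation list, the extra rule for single-letter initials and the function name `tokenize` are assumptions for illustration; the corpus-filter idea is not implemented. The number pattern uses `[0-9]+` before the optional decimal part so that all digits of the last group are matched.

```python
import re

# Hypothetical lexical list of known abbreviations.
ABBREVIATIONS = ["Prof.", "e.g.", "i.e."]

TOKEN_RE = re.compile("|".join([
    # 1. Abbreviations from the lexical list, matched verbatim.
    "|".join(re.escape(a) for a in ABBREVIATIONS),
    # 2. Numbers: ([0-9]+[,])*[0-9]+([.][0-9]+)? with non-capturing groups.
    r"(?:[0-9]+,)*[0-9]+(?:\.[0-9]+)?",
    # 3. Capital letter + lowercase consonants + period, e.g. "Dr.", "Mr.".
    r"\b[A-Z][bcdfghj-np-tvxz]+\.",
    # 4. Single-letter initials such as "J." (an extra assumption, not on the slide).
    r"\b[A-Z]\.",
    # 5. Ordinary words, allowing internal "&" and "-" (e.g. "AT&T").
    r"[\w&-]+",
    # 6. Any other non-space character is a token on its own.
    r"[^\w\s]",
]))

def tokenize(text: str) -> list[str]:
    return TOKEN_RE.findall(text)

print(tokenize("Prof. Dr. J. M. Smith paid 123,456.78 to AT&T."))
```

Python's `re` alternation takes the first alternative that matches at each position, so the order of the list encodes priority: known abbreviations before numbers, numbers before ordinary words.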

POS Tagging
- Labeling each word in a sentence with its appropriate part of speech
- Information sources in tagging:
  - Tags of other words in the context
  - The word itself
- Different approaches:
  - Rule-based tagger
  - Stochastic POS tagger
    - Simplest stochastic tagger
    - HMM tagger

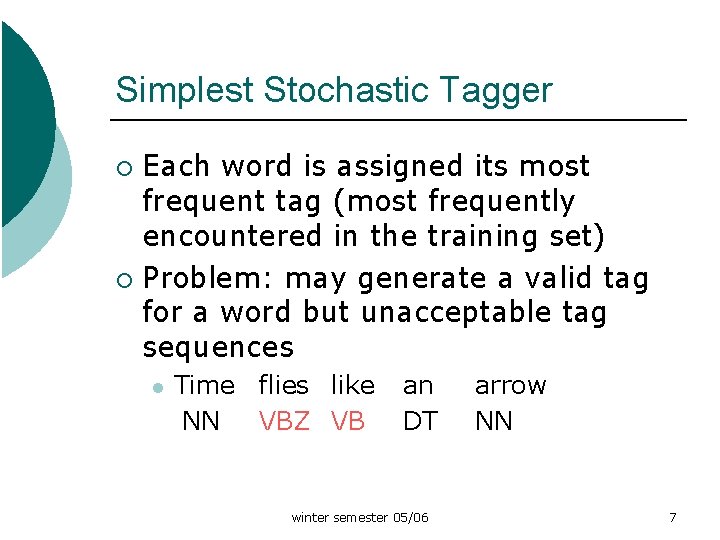

Simplest Stochastic Tagger
- Each word is assigned its most frequent tag (the tag most frequently encountered for it in the training set)
- Problem: each word may receive a valid tag, yet the resulting tag sequence can be unacceptable
  - Time/NN flies/VBZ like/VB an/DT arrow/NN (like/VB after flies/VBZ is not acceptable; in this reading like is a preposition, IN)

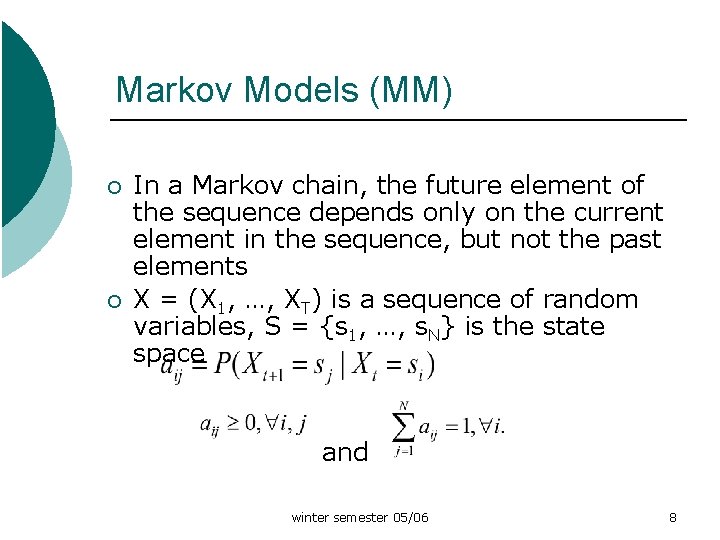

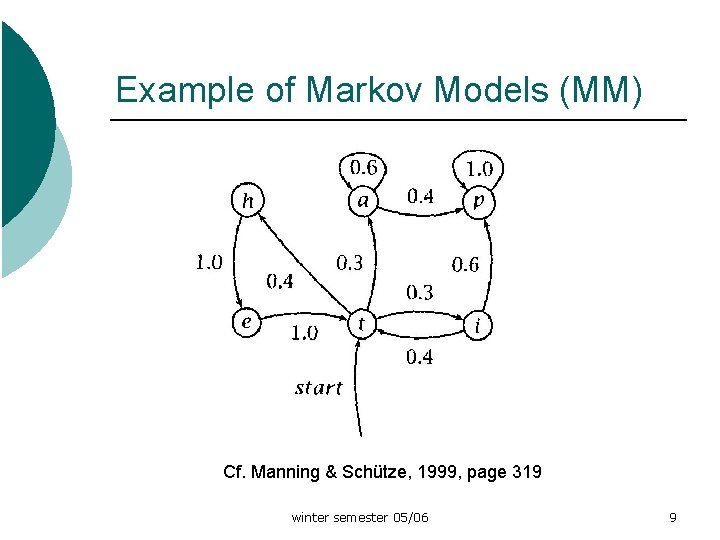
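A minimal sketch of this baseline in Python. The toy training data and the fallback tag `NN` for unseen words are assumptions for illustration; the data is chosen so that like is most often a verb, which reproduces the bad tag sequence above.

```python
from collections import Counter, defaultdict

def train_most_frequent_tag(tagged_corpus):
    """Map each word to the tag it carries most often in the training data."""
    counts = defaultdict(Counter)
    for word, tag in tagged_corpus:
        counts[word][tag] += 1
    return {word: tag_counts.most_common(1)[0][0] for word, tag_counts in counts.items()}

def tag_sentence(words, model, default="NN"):
    # Each word is tagged independently; unseen words get a default tag (an assumption).
    return [(w, model.get(w, default)) for w in words]

# Hypothetical toy training data in which "like" is most often a verb (VB).
training = [
    ("Time", "NN"), ("flies", "VBZ"), ("I", "PRP"), ("like", "VB"), ("tea", "NN"),
    ("we", "PRP"), ("like", "VB"), ("it", "PRP"), ("like", "IN"), ("an", "DT"), ("arrow", "NN"),
]
model = train_most_frequent_tag(training)
print(tag_sentence("Time flies like an arrow".split(), model))
```

Because every word is tagged in isolation, nothing prevents the unacceptable VBZ followed by VB.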

Markov Models (MM)
- In a Markov chain, the next element of the sequence depends only on the current element, not on the earlier elements
- $X = (X_1, \dots, X_T)$ is a sequence of random variables, $S = \{s_1, \dots, s_N\}$ is the state space, and the Markov (limited horizon) property is $P(X_{t+1} = s_k \mid X_1, \dots, X_t) = P(X_{t+1} = s_k \mid X_t)$
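With the Markov property, the probability of a whole state sequence is the initial probability times a product of transition probabilities. A minimal sketch with a hypothetical two-state chain (states and numbers invented for illustration):

```python
def sequence_probability(states, initial, transition):
    """P(X_1, ..., X_T) = P(X_1) * prod_t P(X_{t+1} | X_t) for a first-order Markov chain."""
    prob = initial[states[0]]
    for current, nxt in zip(states, states[1:]):
        # Markov property: the next state depends only on the current one.
        prob *= transition[current][nxt]
    return prob

# Hypothetical two-state chain; every row of `transition` sums to 1.
initial = {"sun": 0.6, "rain": 0.4}
transition = {"sun": {"sun": 0.8, "rain": 0.2}, "rain": {"sun": 0.4, "rain": 0.6}}
print(sequence_probability(["sun", "sun", "rain"], initial, transition))  # computes 0.6 * 0.8 * 0.2
```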

Example of Markov Models (MM)
Cf. Manning & Schütze, 1999, page 319

Hidden Markov Model
- In a (visible) MM, we know the state sequence the model passes through, so the state sequence is regarded as the output
- In an HMM, we don't know the state sequence, only some probabilistic function of it
- Markov models can be used wherever one wants to model the probability of a linear sequence of events
- HMMs can be trained from unannotated text
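Because the state sequence of an HMM is hidden, the probability of an observation sequence sums over all possible state sequences. The forward algorithm (a standard HMM technique, not named on the slide) does this efficiently; a minimal sketch with a hypothetical model in which the weather is hidden and only an activity is observed:

```python
def forward_probability(observations, states, initial, transition, emission):
    """P(o_1, ..., o_T): sum over all hidden state sequences (forward algorithm)."""
    # alpha[s] = P(o_1, ..., o_t, X_t = s)
    alpha = {s: initial[s] * emission[s].get(observations[0], 0.0) for s in states}
    for obs in observations[1:]:
        alpha = {
            s: sum(alpha[r] * transition[r][s] for r in states) * emission[s].get(obs, 0.0)
            for s in states
        }
    return sum(alpha.values())

# Hypothetical model: numbers invented for illustration only.
states = ["sun", "rain"]
initial = {"sun": 0.6, "rain": 0.4}
transition = {"sun": {"sun": 0.8, "rain": 0.2}, "rain": {"sun": 0.4, "rain": 0.6}}
emission = {"sun": {"walk": 0.7, "umbrella": 0.3}, "rain": {"walk": 0.2, "umbrella": 0.8}}
print(forward_probability(["walk", "umbrella"], states, initial, transition, emission))
```

With these invented numbers the probability of observing walk, umbrella is 0.216, the sum over the four possible weather sequences.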

HMM Tagger
- Assumption: a word's tag depends only on the previous tag, and this dependency does not change over time
- An HMM tagger uses states to represent POS tags and outputs (symbol emissions) to represent the words
- The tagging task is to find the most probable tag sequence for a sequence of words

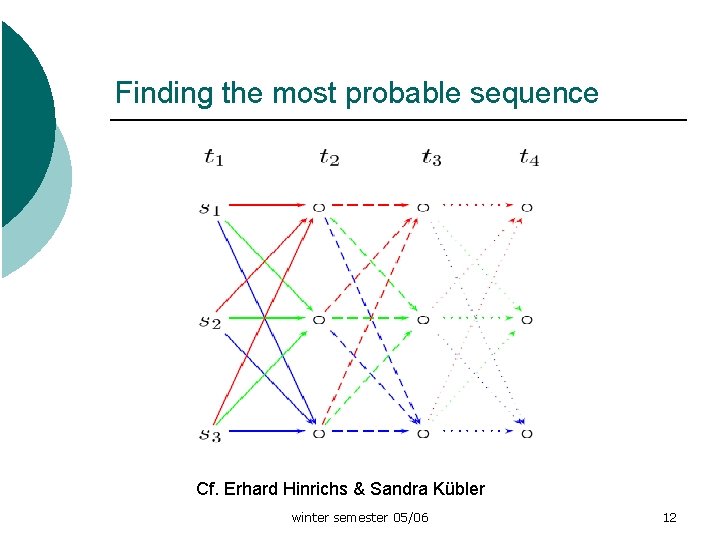
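Stated as a formula, in a standard derivation following Manning & Schütze (the notation $t_{1,n}$, $w_{1,n}$ and a sentence-start tag $t_0$ are assumptions of this sketch, not taken from the slides): Bayes' rule turns the tagging task into a maximization over tag sequences, $P(w_{1,n})$ is constant for a given sentence, and the last step applies the bigram assumption above plus the assumption that each word depends only on its own tag.

```latex
\begin{aligned}
\hat{t}_{1,n} &= \arg\max_{t_{1,n}} P(t_{1,n} \mid w_{1,n})
  = \arg\max_{t_{1,n}} \frac{P(w_{1,n} \mid t_{1,n}) \, P(t_{1,n})}{P(w_{1,n})} \\
&= \arg\max_{t_{1,n}} P(w_{1,n} \mid t_{1,n}) \, P(t_{1,n}) \\
&\approx \arg\max_{t_{1,n}} \prod_{i=1}^{n} P(w_i \mid t_i) \, P(t_i \mid t_{i-1})
\end{aligned}
```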

Finding the most probable sequence
Cf. Erhard Hinrichs & Sandra Kübler

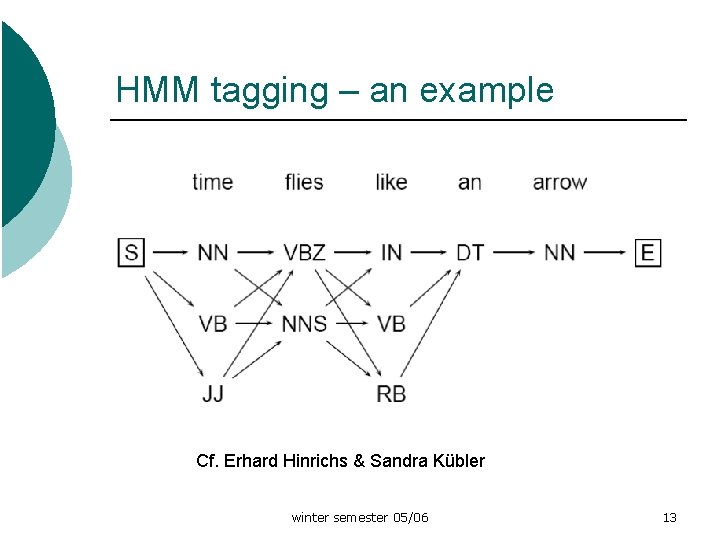
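The most probable tag sequence is usually found with the Viterbi algorithm, which keeps only the best path into each tag at each word instead of enumerating all sequences. A minimal sketch for a bigram HMM; the function name and the toy probabilities are hypothetical and invented for illustration only.

```python
def viterbi(words, tags, start, transition, emission):
    """Most probable tag sequence for `words` under a bigram HMM.

    start[t]         = P(t | sentence start)
    transition[a][b] = P(b | a)
    emission[t][w]   = P(w | t); words missing from emission[t] get probability 0
    """
    # delta[t]: probability of the best tag path for the words so far that ends in tag t
    delta = {t: start[t] * emission[t].get(words[0], 0.0) for t in tags}
    backpointers = []  # one dict per later word: tag -> best previous tag
    for word in words[1:]:
        new_delta, pointers = {}, {}
        for t in tags:
            prev = max(tags, key=lambda p: delta[p] * transition[p][t])
            new_delta[t] = delta[prev] * transition[prev][t] * emission[t].get(word, 0.0)
            pointers[t] = prev
        delta = new_delta
        backpointers.append(pointers)
    # Trace back from the best final tag.
    best = max(tags, key=lambda t: delta[t])
    path = [best]
    for pointers in reversed(backpointers):
        path.append(pointers[path[-1]])
    return path[::-1], delta[best]

# Hypothetical toy model (numbers invented for illustration only).
tags = ["NN", "VBZ", "VB", "IN", "DT"]
start = {"NN": 0.5, "VBZ": 0.1, "VB": 0.1, "IN": 0.1, "DT": 0.2}
transition = {
    "NN":  {"NN": 0.2, "VBZ": 0.5,   "VB": 0.1,   "IN": 0.1,   "DT": 0.1},
    "VBZ": {"NN": 0.1, "VBZ": 0.05,  "VB": 0.05,  "IN": 0.4,   "DT": 0.4},
    "VB":  {"NN": 0.2, "VBZ": 0.1,   "VB": 0.1,   "IN": 0.2,   "DT": 0.4},
    "IN":  {"NN": 0.3, "VBZ": 0.05,  "VB": 0.05,  "IN": 0.1,   "DT": 0.5},
    "DT":  {"NN": 0.9, "VBZ": 0.025, "VB": 0.025, "IN": 0.025, "DT": 0.025},
}
emission = {
    "NN":  {"time": 0.3, "flies": 0.1, "arrow": 0.3},
    "VBZ": {"flies": 0.5},
    "VB":  {"like": 0.5},
    "IN":  {"like": 0.3},
    "DT":  {"an": 0.6},
}
path, prob = viterbi("time flies like an arrow".split(), tags, start, transition, emission)
print(path, prob)
```

With these invented numbers, the transition VBZ to IN outweighs the higher emission probability of like as VB, so the best path is time/NN flies/VBZ like/IN an/DT arrow/NN.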

HMM tagging – an example
Cf. Erhard Hinrichs & Sandra Kübler

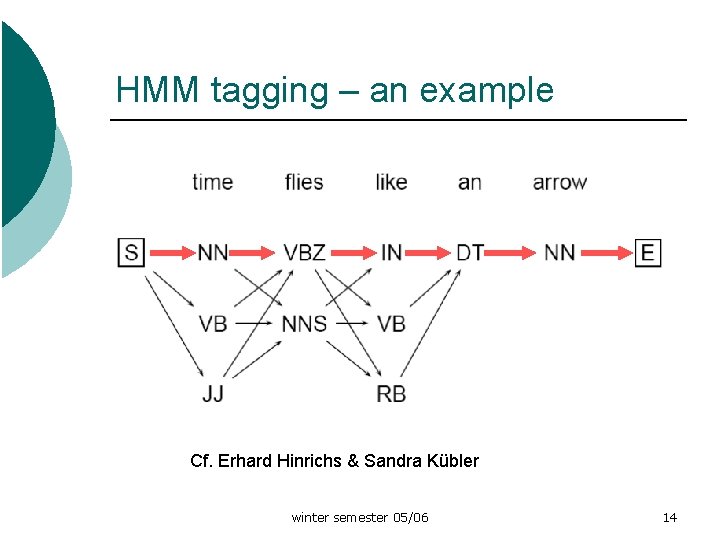

HMM tagging – an example
Cf. Erhard Hinrichs & Sandra Kübler

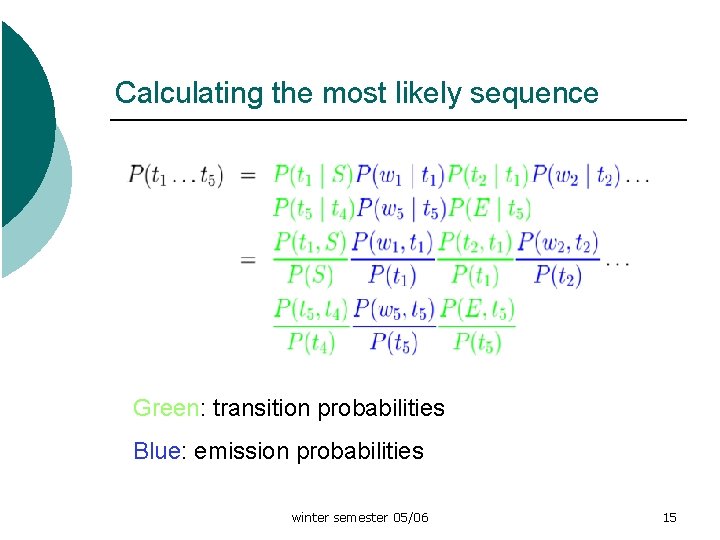

Calculating the most likely sequence
- Green: transition probabilities
- Blue: emission probabilities

Dealing with unknown words
- The simplest model: assume that an unknown word can have any POS tag, or assign it the most frequent tag in the tagset
- In practice, morphological information such as the suffix is used as a hint

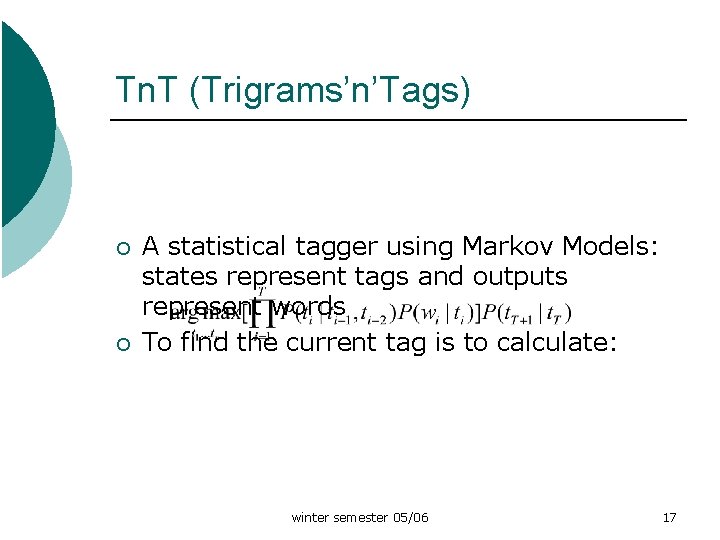
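A minimal sketch of the three options for an unseen word. The function name, the tag frequencies and the hand-written suffix hints are hypothetical; a real tagger would learn suffix statistics from data rather than use a fixed list.

```python
def unknown_word_tags(word, tag_counts, strategy="uniform"):
    """Toy tag distribution for a word that never occurred in training.

    tag_counts: tag -> frequency in the training corpus.
    """
    tags = sorted(tag_counts)
    if strategy == "uniform":
        # The word may have any tag, all equally likely.
        return {t: 1 / len(tags) for t in tags}
    if strategy == "most_frequent":
        # Assign the tag that is most frequent in the training corpus.
        best = max(tags, key=lambda t: tag_counts[t])
        return {best: 1.0}
    if strategy == "suffix":
        # Hypothetical hand-written suffix hints; real taggers learn them from data.
        for suffix, t in (("ing", "VBG"), ("ed", "VBD"), ("ly", "RB"), ("s", "NNS")):
            if word.endswith(suffix) and t in tag_counts:
                return {t: 1.0}
        return unknown_word_tags(word, tag_counts, "uniform")  # no hint applies
    raise ValueError(f"unknown strategy: {strategy}")

# Hypothetical training-corpus tag frequencies.
counts = {"NN": 50, "DT": 30, "NNS": 20, "VBD": 10, "RB": 8, "VBG": 5}
print(unknown_word_tags("blorfing", counts, "suffix"))
```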

TnT (Trigrams’n’Tags)
- A statistical tagger using Markov models: states represent tags and outputs represent words
- To find the most probable tag sequence, TnT calculates:

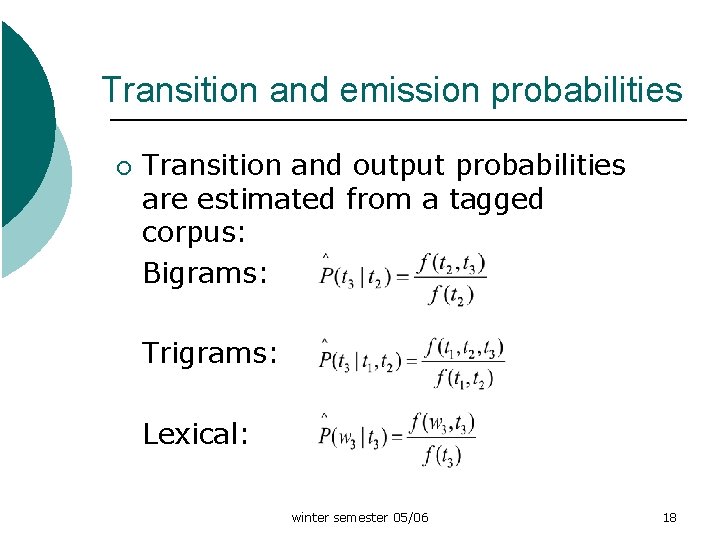
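For readers without the figure: as we understand Brants (2000), the quantity computed for a sentence $w_1 \ldots w_T$ is the following, where $t_{-1}$, $t_0$ and $t_{T+1}$ are beginning- and end-of-sequence markers and each tag is conditioned on the two preceding tags (a second-order model):

```latex
\hat{t}_1 \ldots \hat{t}_T
  = \arg\max_{t_1 \ldots t_T}
    \left[ \prod_{i=1}^{T} P(t_i \mid t_{i-1}, t_{i-2}) \, P(w_i \mid t_i) \right]
    P(t_{T+1} \mid t_T)
```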

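Transition and emission probabilities
- Transition and output probabilities are estimated from a tagged corpus by maximum likelihood, where $f(\cdot)$ is a frequency in the training corpus:
  - Bigrams: $\hat{P}(t_3 \mid t_2) = f(t_2, t_3) / f(t_2)$
  - Trigrams: $\hat{P}(t_3 \mid t_1, t_2) = f(t_1, t_2, t_3) / f(t_1, t_2)$
  - Lexical: $\hat{P}(w_3 \mid t_3) = f(w_3, t_3) / f(t_3)$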

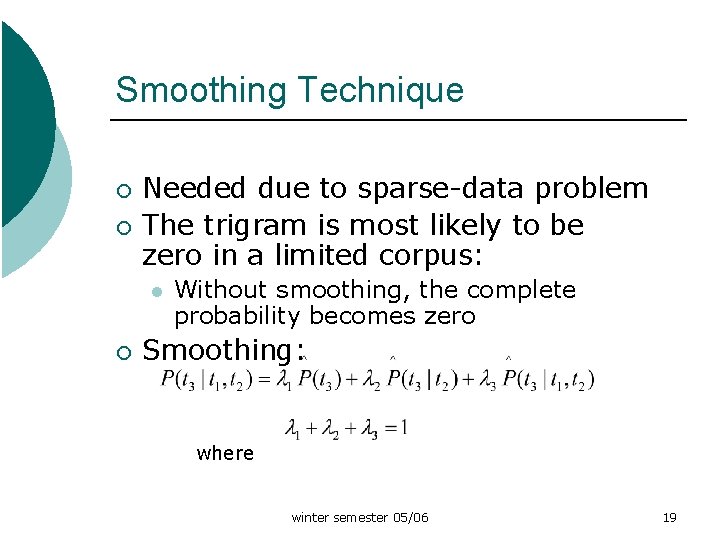
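These estimates are relative frequencies. A minimal sketch of computing them from a tagged corpus; the function names and the toy corpus are hypothetical, and unlike TnT the sketch adds no beginning- or end-of-sequence tags, so counts at sentence edges differ slightly.

```python
from collections import Counter

def count_ngrams(tagged_sentences):
    """Count tag unigrams, bigrams, trigrams and (word, tag) pairs."""
    uni, bi, tri, lex = Counter(), Counter(), Counter(), Counter()
    for sentence in tagged_sentences:
        tags = [tag for _, tag in sentence]
        uni.update(tags)
        lex.update(sentence)  # sentence is a list of (word, tag) tuples
        bi.update(zip(tags, tags[1:]))
        tri.update(zip(tags, tags[1:], tags[2:]))
    return uni, bi, tri, lex

def ratio(num, den):
    # Define x / 0 = 0, so unseen contexts simply get probability 0.
    return num / den if den else 0.0

def p_bigram(c, t2, t3):        # P^(t3 | t2) = f(t2, t3) / f(t2)
    uni, bi, _, _ = c
    return ratio(bi[(t2, t3)], uni[t2])

def p_trigram(c, t1, t2, t3):   # P^(t3 | t1, t2) = f(t1, t2, t3) / f(t1, t2)
    _, bi, tri, _ = c
    return ratio(tri[(t1, t2, t3)], bi[(t1, t2)])

def p_lexical(c, w, t):         # P^(w | t) = f(w, t) / f(t)
    uni, _, _, lex = c
    return ratio(lex[(w, t)], uni[t])

# Hypothetical toy corpus.
corpus = [
    [("the", "DT"), ("dog", "NN"), ("barks", "VBZ")],
    [("the", "DT"), ("cat", "NN"), ("sleeps", "VBZ")],
    [("a", "DT"), ("dog", "NN")],
]
c = count_ngrams(corpus)
print(p_bigram(c, "NN", "VBZ"), p_trigram(c, "DT", "NN", "VBZ"), p_lexical(c, "dog", "NN"))
```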

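Smoothing Technique
- Needed due to the sparse-data problem
- In a limited corpus, many trigrams never occur, so their estimated probability is zero:
  - Without smoothing, the probability of the complete sequence becomes zero
- Smoothing by linear interpolation: $P(t_3 \mid t_1, t_2) = \lambda_1 \hat{P}(t_3) + \lambda_2 \hat{P}(t_3 \mid t_2) + \lambda_3 \hat{P}(t_3 \mid t_1, t_2)$, where $\lambda_1 + \lambda_2 + \lambda_3 = 1$ and $\hat{P}$ are the maximum likelihood estimates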

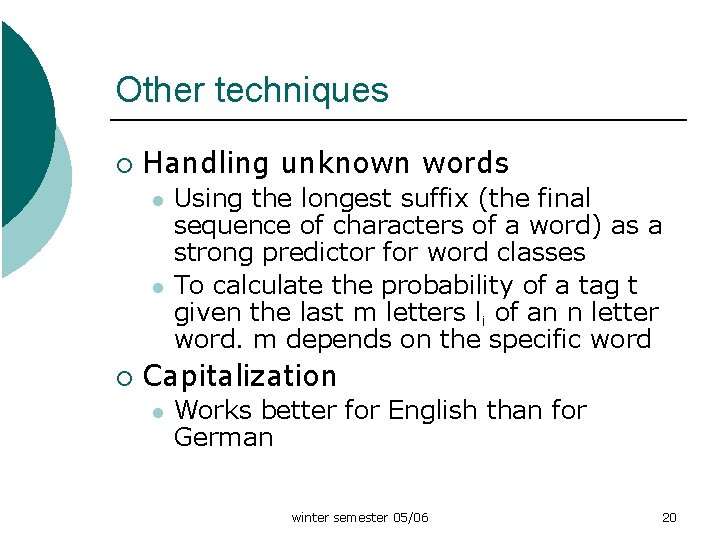
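The weights $\lambda_1, \lambda_2, \lambda_3$ have to be estimated; Brants (2000) uses deleted interpolation, where each trigram's count is credited to whichever estimate predicts it best when that occurrence is left out. A minimal sketch of that idea; the function name, the toy data and the tie-breaking rule are assumptions of this sketch.

```python
from collections import Counter

def deleted_interpolation(tag_sequences):
    """Estimate (lambda1, lambda2, lambda3) for
    P(t3 | t1, t2) = lambda1 * P^(t3) + lambda2 * P^(t3 | t2) + lambda3 * P^(t3 | t1, t2)."""
    uni, bi, tri = Counter(), Counter(), Counter()
    for tags in tag_sequences:
        uni.update(tags)
        bi.update(zip(tags, tags[1:]))
        tri.update(zip(tags, tags[1:], tags[2:]))
    n = sum(uni.values())

    def ratio(num, den):
        return num / den if den > 0 else 0.0  # define x / 0 = 0

    lambdas = [0.0, 0.0, 0.0]
    for (t1, t2, t3), f in tri.items():
        # Each estimate is computed as if this trigram occurrence were removed ("- 1").
        estimates = [
            ratio(uni[t3] - 1, n - 1),             # unigram
            ratio(bi[(t2, t3)] - 1, uni[t2] - 1),  # bigram
            ratio(f - 1, bi[(t1, t2)] - 1),        # trigram
        ]
        # Credit the trigram's frequency to the best estimate (ties go to the lower order here).
        lambdas[estimates.index(max(estimates))] += f
    total = sum(lambdas)
    return tuple(x / total for x in lambdas) if total else (1 / 3, 1 / 3, 1 / 3)

# Hypothetical training data: tag sequences only.
l1, l2, l3 = deleted_interpolation([["DT", "NN", "VBZ"], ["DT", "NN", "VBZ"], ["DT", "JJ", "NN"]])
print(l1, l2, l3)
```

Normalizing the credited counts guarantees $\lambda_1 + \lambda_2 + \lambda_3 = 1$, as the interpolation formula requires.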

Other techniques
- Handling unknown words
  - The longest suffix (the final sequence of characters of a word) is used as a strong predictor of the word class
  - TnT calculates the probability of a tag $t$ given the last $m$ letters $l_i$ of an $n$-letter word, $P(t \mid l_{n-m+1}, \dots, l_n)$; $m$ depends on the specific word
- Capitalization
  - Works better for English than for German

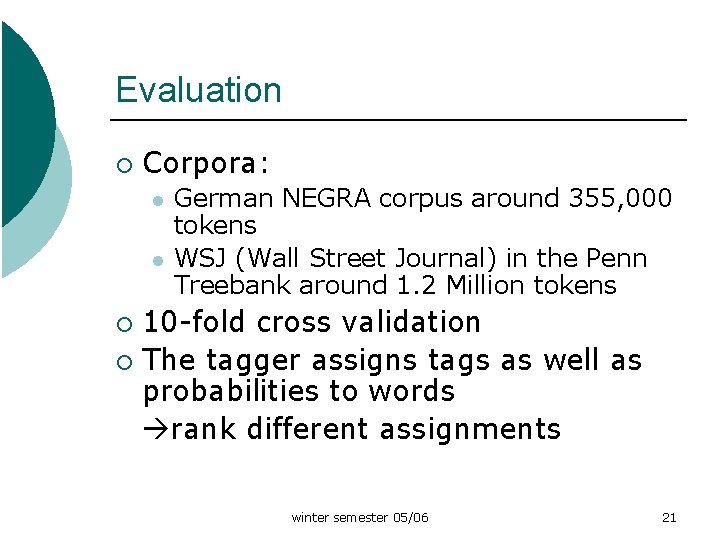

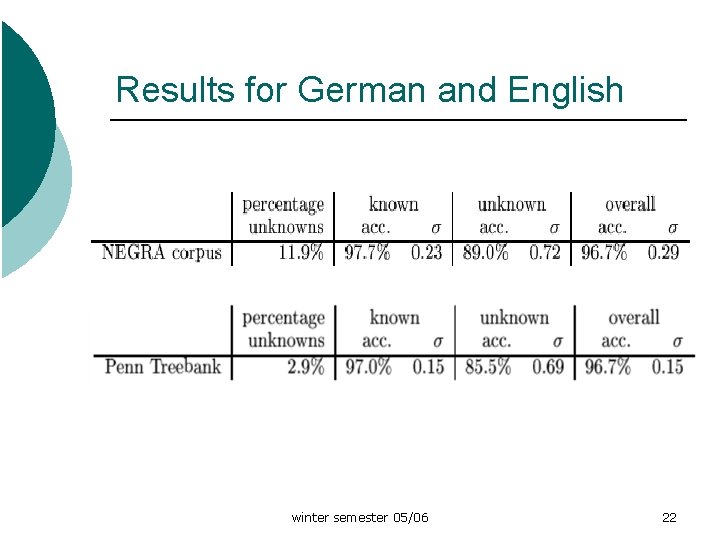

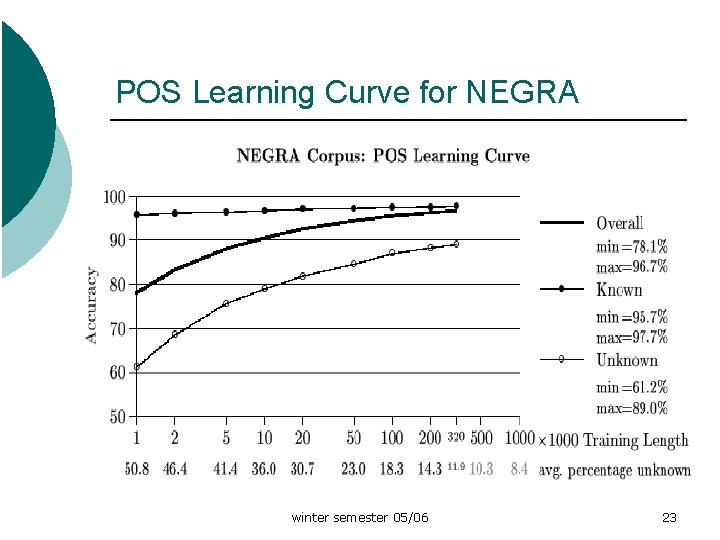

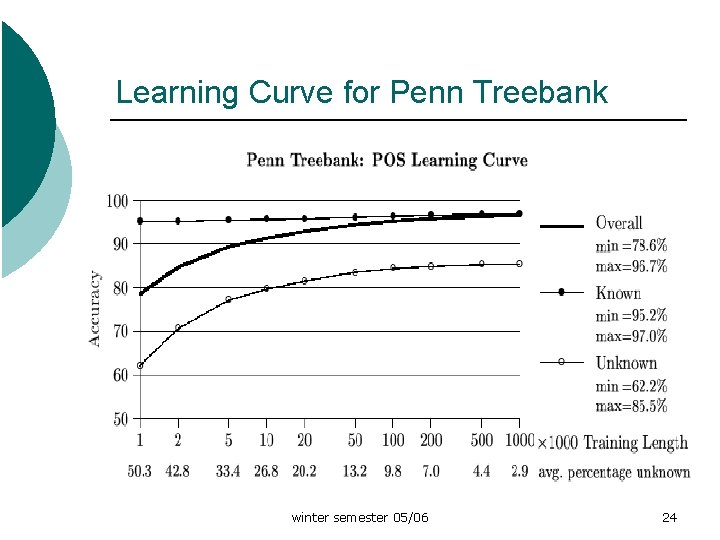
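A minimal sketch of suffix-based guessing in the spirit of TnT's successive abstraction: the tag distribution is conditioned on ever longer suffixes of the unknown word, each step interpolated with the shorter-suffix estimate, stopping at the longest suffix seen in training. Assumptions: the toy lexicon, a maximum suffix length of 3 and a fixed weight `theta` (Brants (2000) derives this weight from the training data and allows longer suffixes). To use the result as an emission probability, a tagger would still need to invert it with Bayes' rule, which is omitted here.

```python
from collections import Counter, defaultdict

def suffix_tag_probs(word, lexicon, max_len=3, theta=0.1):
    """P(t | last letters of `word`) by successive abstraction over suffixes:
    P_k(t) = (P^(t | suffix of length k) + theta * P_{k-1}(t)) / (1 + theta).

    lexicon: list of (word, tag) pairs used to collect suffix statistics.
    theta:   hypothetical fixed interpolation weight.
    """
    tag_freq = Counter(tag for _, tag in lexicon)
    total = sum(tag_freq.values())
    suffix_freq = defaultdict(Counter)  # suffix -> Counter of tags
    for w, tag in lexicon:
        for k in range(1, min(max_len, len(w)) + 1):
            suffix_freq[w[-k:]][tag] += 1

    # Start from the unconditioned tag distribution P(t) (empty suffix).
    probs = {t: f / total for t, f in tag_freq.items()}
    # Extend the suffix one letter at a time while it was seen in training.
    for k in range(1, min(max_len, len(word)) + 1):
        counts = suffix_freq.get(word[-k:])
        if not counts:
            break  # the longest known suffix has been reached
        n = sum(counts.values())
        probs = {t: (counts[t] / n + theta * p) / (1 + theta) for t, p in probs.items()}
    return probs

# Hypothetical toy lexicon.
lexicon = [("walking", "VBG"), ("talking", "VBG"), ("running", "VBG"),
           ("king", "NN"), ("song", "NN"), ("quickly", "RB")]
print(suffix_tag_probs("jumping", lexicon))
```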

Evaluation
- Corpora:
  - German NEGRA corpus: around 355,000 tokens
  - WSJ (Wall Street Journal) part of the Penn Treebank: around 1.2 million tokens
- 10-fold cross-validation
- The tagger assigns tags as well as probabilities to words; the probabilities can be used to rank different assignments
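A minimal sketch of 10-fold cross-validation for a tagger: the corpus is split into ten parts, each part is tagged once by a model trained on the other nine, and the fold accuracies are averaged. The round-robin assignment of sentences to folds and the placeholder functions `train_fn` and `tag_fn` are assumptions; the slides do not say how the folds were formed.

```python
def k_fold_splits(sentences, k=10):
    """Yield (train, test) pairs; every sentence lands in exactly one test fold.
    Sentences are assigned to folds round-robin (an assumption of this sketch)."""
    if len(sentences) < k:
        raise ValueError("need at least k sentences")
    for i in range(k):
        test = sentences[i::k]
        train = [s for j, s in enumerate(sentences) if j % k != i]
        yield train, test

def cross_validated_accuracy(sentences, train_fn, tag_fn, k=10):
    """Mean per-fold token accuracy (assumes non-empty sentences).
    `train_fn(train) -> model` and `tag_fn(model, words) -> tags` are placeholders."""
    accuracies = []
    for train, test in k_fold_splits(sentences, k):
        model = train_fn(train)
        correct = total = 0
        for sentence in test:
            words = [w for w, _ in sentence]
            gold = [t for _, t in sentence]
            predicted = tag_fn(model, words)
            correct += sum(p == g for p, g in zip(predicted, gold))
            total += len(gold)
        accuracies.append(correct / total)
    return sum(accuracies) / k

# Usage with a hypothetical "always NN" baseline on a toy corpus.
toy = [[("a", "DT"), ("dog", "NN")]] * 20
print(cross_validated_accuracy(toy, lambda train: "NN", lambda model, words: [model] * len(words)))  # 0.5
```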

Results for German and English

POS Learning Curve for NEGRA

Learning Curve for Penn Treebank

Conclusion
- Good results for both the German and the English corpus
- The average accuracy TnT achieves is between 96% and 97%
- The accuracy for known tokens is significantly higher than for unknown tokens

References:
- Grefenstette (1994): What is a word, what's a sentence?
- Abney (1996): Part-of-Speech Tagging and Partial Parsing
- Brants (2000): TnT - A Statistical Part-of-Speech Tagger
- Manning & Schütze (1999): Foundations of Statistical Natural Language Processing
