Today’s Topics
• Boolean IR
• Signature files
• Inverted files
• PAT trees
• Suffix arrays

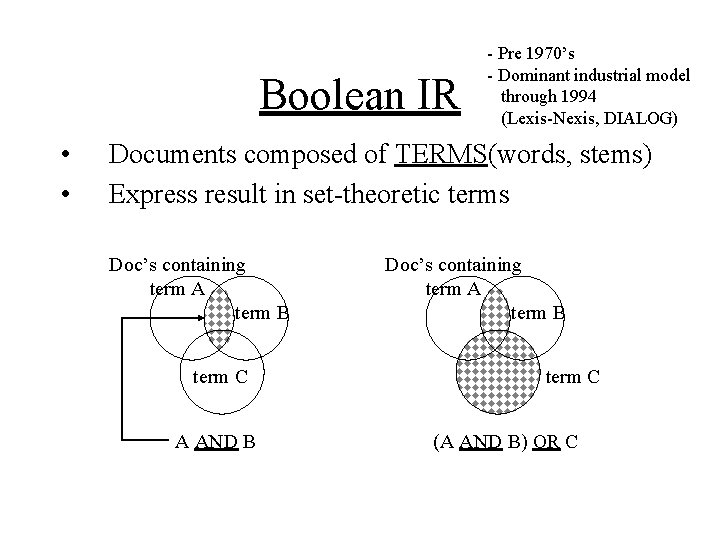

Boolean IR
- Pre-1970s
- Dominant industrial model through 1994 (Lexis-Nexis, DIALOG)
• Documents composed of TERMS (words, stems)
• Express result in set-theoretic terms
[Venn diagrams: sets of docs containing term A, term B, term C, with the regions for A AND B and for (A AND B) OR C marked]

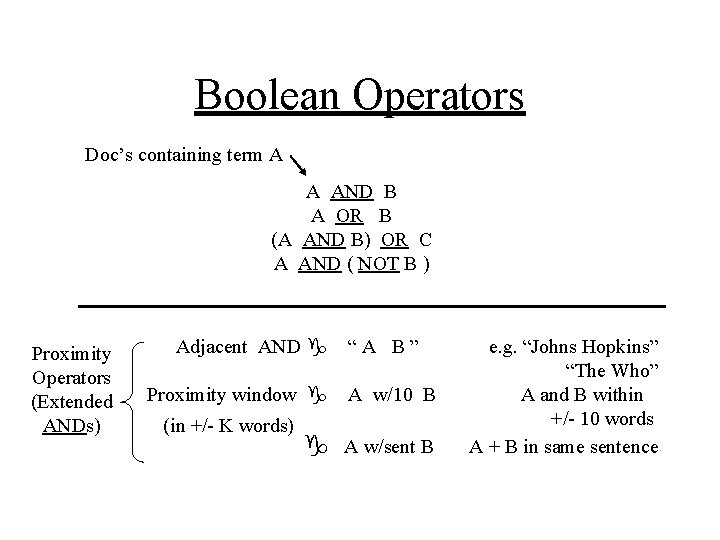

Boolean Operators
• A: docs containing term A
• A AND B
• A OR B
• (A AND B) OR C
• A AND (NOT B)

Proximity Operators (Extended ANDs)
• Adjacent AND → “A B”, e.g. “Johns Hopkins”, “The Who”
• Proximity window (in +/- K words) → A w/10 B: A and B within +/- 10 words
• A w/sent B → A and B in the same sentence
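
A minimal Python sketch of how these operators reduce to set operations, assuming each term maps to the set of ids of the documents that contain it. The collection `docs_with` and its ids are made up for illustration.

```python
# Hypothetical toy collection: term -> ids of the documents that contain it.
docs_with = {
    "A": {1, 2, 3, 5},
    "B": {2, 3, 4},
    "C": {6},
}
all_docs = {1, 2, 3, 4, 5, 6}  # NOT needs the full document set

a_and_b = docs_with["A"] & docs_with["B"]                   # A AND B
a_or_b = docs_with["A"] | docs_with["B"]                    # A OR B
a_and_b_or_c = a_and_b | docs_with["C"]                     # (A AND B) OR C
a_and_not_b = docs_with["A"] & (all_docs - docs_with["B"])  # A AND (NOT B)
```

Note that NOT is the only operator that needs the whole collection, which is why it usually appears combined with AND.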

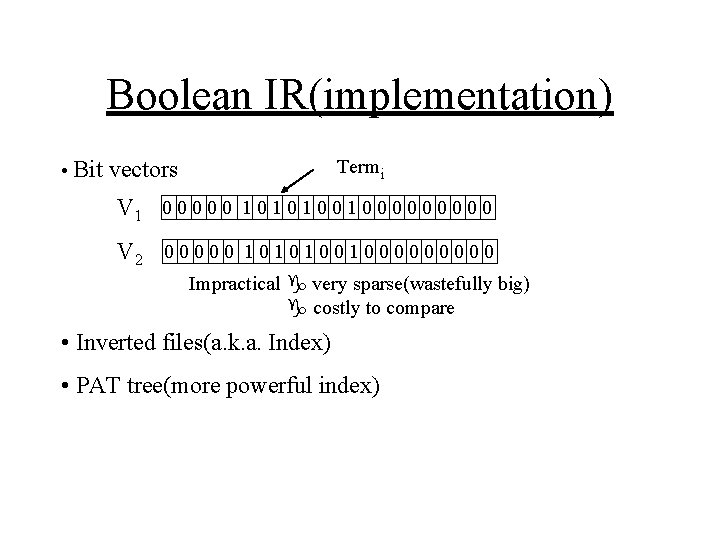

Boolean IR (implementation)
• Bit vectors (one bit per term i)
  V1: 0 0 0 1 0 1 0 0 0 0 0
  V2: 0 0 0 1 0 1 0 0 0 0 0
  Impractical
  → very sparse (wastefully big)
  → costly to compare
• Inverted files (a.k.a. index)
• PAT tree (more powerful index)
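
A small sketch of the bit-vector representation, assuming Python integers as bit vectors with one bit per vocabulary term. The vocabulary and the helper names `doc_vector` and `matches_all` are hypothetical.

```python
# Hypothetical vocabulary; bit i of a document vector is set if term i occurs.
vocab = ["baum", "bayes", "hopkins", "johns", "markov", "viterbi"]
term_bit = {term: 1 << i for i, term in enumerate(vocab)}

def doc_vector(words):
    """Build a bit vector with one bit set per distinct word."""
    vec = 0
    for word in words:
        vec |= term_bit[word]
    return vec

def matches_all(doc_vec, query_terms):
    """True if every query term's bit is set in the document vector (AND query)."""
    mask = doc_vector(query_terms)
    return (doc_vec & mask) == mask

v1 = doc_vector(["johns", "hopkins"])
```

With a realistic vocabulary almost every bit is 0, which is the sparseness problem the slide points out.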

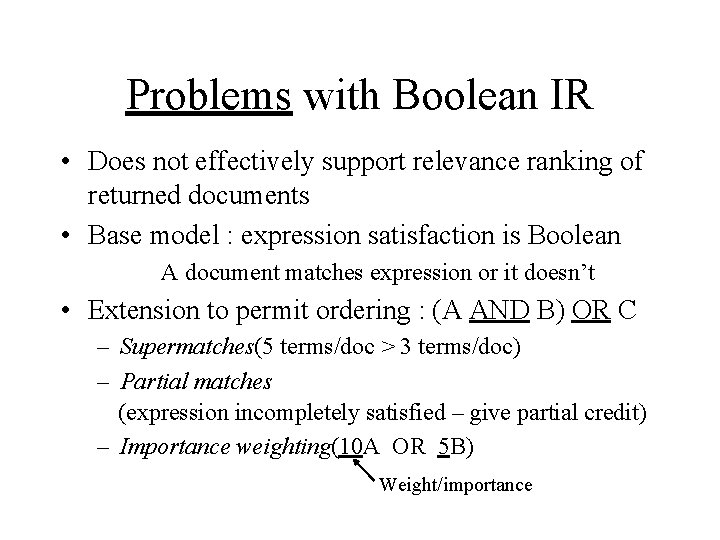

Problems with Boolean IR
• Does not effectively support relevance ranking of returned documents
• Base model: expression satisfaction is Boolean
  A document matches the expression or it doesn’t
• Extensions to permit ordering, e.g. for (A AND B) OR C:
  – Supermatches (5 terms/doc > 3 terms/doc)
  – Partial matches (expression incompletely satisfied, so give partial credit)
  – Importance weighting (10 A OR 5 B), where the numbers are weights/importance
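
One possible reading of importance weighting and partial credit, sketched in Python. The additive scoring rule is an assumption (the slide does not say how weights combine), and `weighted_score` and `rank` are hypothetical names.

```python
def weighted_score(doc_terms, weights):
    """Sum the weights of the query terms that occur in the document."""
    return sum(w for term, w in weights.items() if term in doc_terms)

def rank(docs, weights):
    """Return (doc_id, score) pairs with a non-zero score, best first."""
    scored = [(doc_id, weighted_score(terms, weights)) for doc_id, terms in docs.items()]
    return sorted([pair for pair in scored if pair[1] > 0], key=lambda pair: -pair[1])

# Query "10 A OR 5 B" over a made-up collection.
docs = {1: {"A"}, 2: {"B"}, 3: {"A", "B"}, 4: {"C"}}
ranking = rank(docs, {"A": 10, "B": 5})
```

A document matching both terms outranks one matching only A, which in turn outranks one matching only B, giving an ordering that the plain Boolean model cannot express.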

Boolean IR
• Advantages: Can directly control search
  Good for precise queries in structured data (e.g. database search or legal index)
• Disadvantages: Must directly control search
  – Users should be familiar with the domain and term space (know what to ask for and exclude)
  – Poor at relevance ranking
  – Poor at weighted query expansion, user modelling, etc.

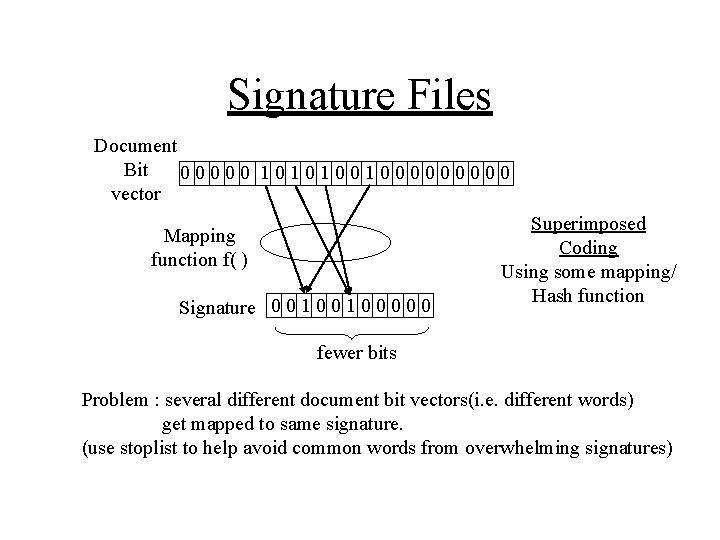

Signature Files
Document bit vector: 00000 1010100100000
  → mapping function f( ) →
Signature (fewer bits): 0 0 1 0 0 0
Superimposed coding: using some mapping/hash function
Problem: several different document bit vectors (i.e. different words) get mapped to the same signature.
(Use a stoplist to help keep common words from overwhelming signatures.)

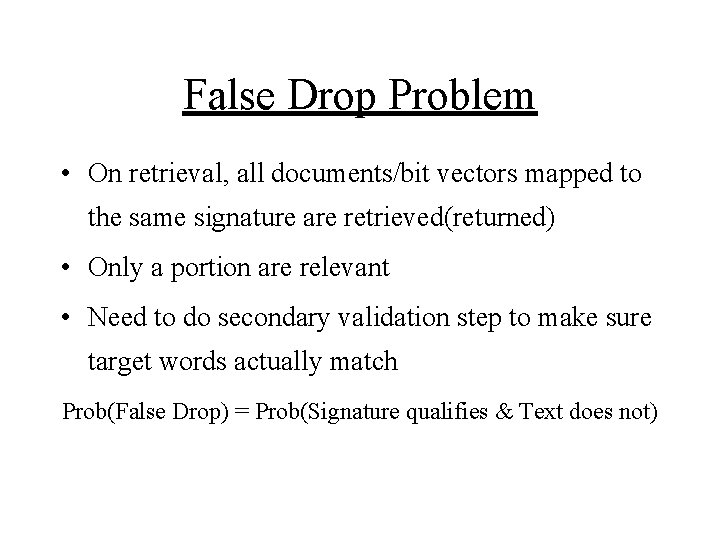

False Drop Problem
• On retrieval, all documents/bit vectors mapped to the same signature are retrieved (returned)
• Only a portion are relevant
• Need a secondary validation step to make sure the target words actually match
Prob(False Drop) = Prob(Signature qualifies & Text does not)
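
A hedged sketch of superimposed coding with the secondary validation step. The signature width `SIG_BITS`, the choice of two bits per word, and the SHA-256-based mapping are all assumptions made for illustration; the slides do not specify a mapping function.

```python
import hashlib

SIG_BITS = 16  # assumed signature width

def word_bits(word, k=2):
    """Pick up to k bit positions for a word (assumed hashing scheme)."""
    digest = hashlib.sha256(word.encode("utf-8")).digest()
    return {digest[i] % SIG_BITS for i in range(k)}

def signature(words):
    """Superimpose (OR together) the bit patterns of all words."""
    sig = 0
    for word in words:
        for bit in word_bits(word):
            sig |= 1 << bit
    return sig

def candidates(query_words, doc_sigs):
    """Linear scan: keep docs whose signature covers every query bit."""
    q = signature(query_words)
    return [doc_id for doc_id, sig in doc_sigs.items() if (sig & q) == q]

def search(query_words, docs):
    """Signature filter, then validate against the text to remove false drops."""
    doc_sigs = {doc_id: signature(words) for doc_id, words in docs.items()}
    return [d for d in candidates(query_words, doc_sigs)
            if set(query_words) <= set(docs[d])]

docs = {
    1: ["johns", "hopkins", "university"],
    2: ["anthony", "hopkins"],
    3: ["the", "who"],
}
hits = search(["johns", "hopkins"], docs)
```

The signature test can return documents that do not contain the words (false drops) but never misses one that does, so the validation pass only has to discard candidates.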

Efficiency Problem
Testing for a signature match may require a linear scan through all document signatures

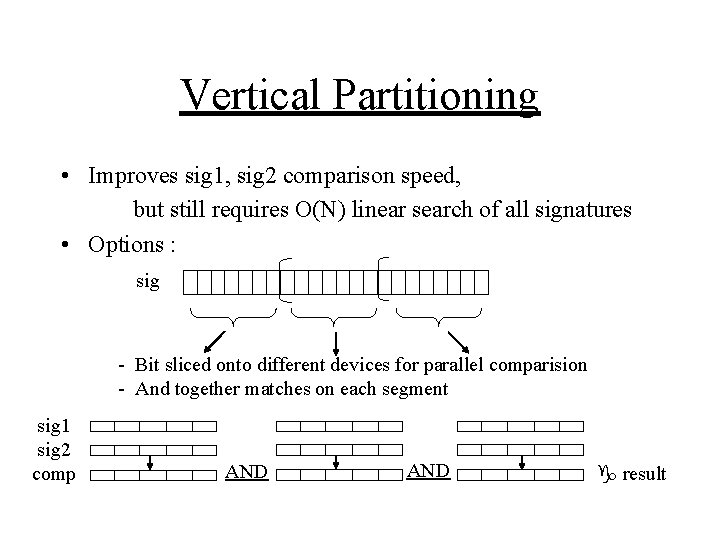

Vertical Partitioning
• Improves sig1, sig2 comparison speed, but still requires O(N) linear search of all signatures
• Options:
  - Bit-slice signatures onto different devices for parallel comparison
  - AND together the matches on each segment
[Diagram: segments of sig1 and sig2 compared in parallel (comp), results ANDed → result]

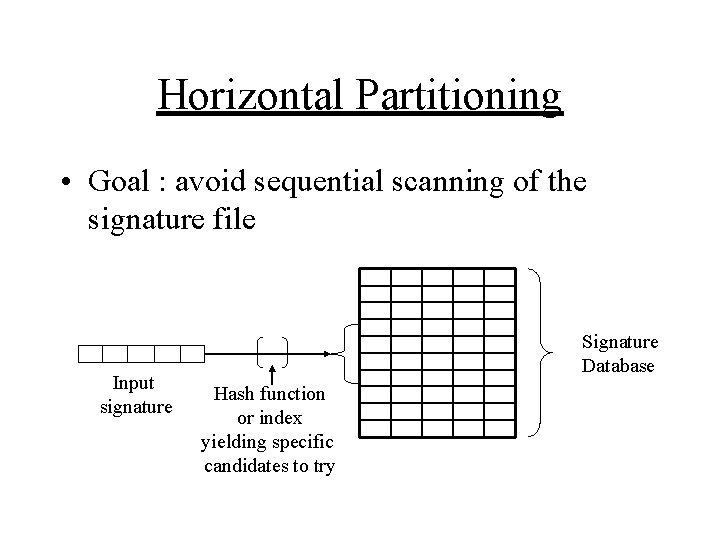

Horizontal Partitioning
• Goal: avoid sequential scanning of the signature file
[Diagram: input signature → hash function or index yielding specific candidates to try → signature database]

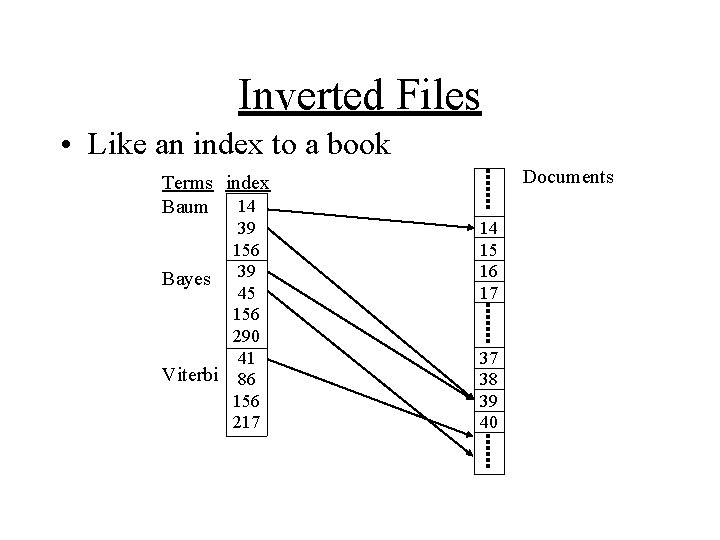

Inverted Files
• Like an index to a book
Terms index:
  Baum:    14 39 156
  Bayes:   39 45 156 290
  Viterbi: 86 156 217
[Diagram: index entries point into the documents, drawn as numbered pages 14 15 16 17 ... 37 38 39 40 41]
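
A minimal sketch of building a document-level inverted file in Python. The document contents below are invented so that the postings for Baum match the slide; `build_inverted_index` is a hypothetical helper name.

```python
from collections import defaultdict

def build_inverted_index(docs):
    """Map each term to the sorted list of ids of the documents containing it."""
    index = defaultdict(list)
    for doc_id in sorted(docs):          # visiting ids in order keeps lists sorted
        for term in set(docs[doc_id]):   # set(): record each doc once per term
            index[term].append(doc_id)
    return dict(index)

# Made-up documents: doc id -> list of terms.
docs = {
    14: ["baum"],
    39: ["baum", "bayes"],
    45: ["bayes"],
    86: ["viterbi"],
    156: ["baum", "bayes", "viterbi"],
}
index = build_inverted_index(docs)
```

Keeping each postings list sorted by document id is what makes the linear-time OR and AND merges possible.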

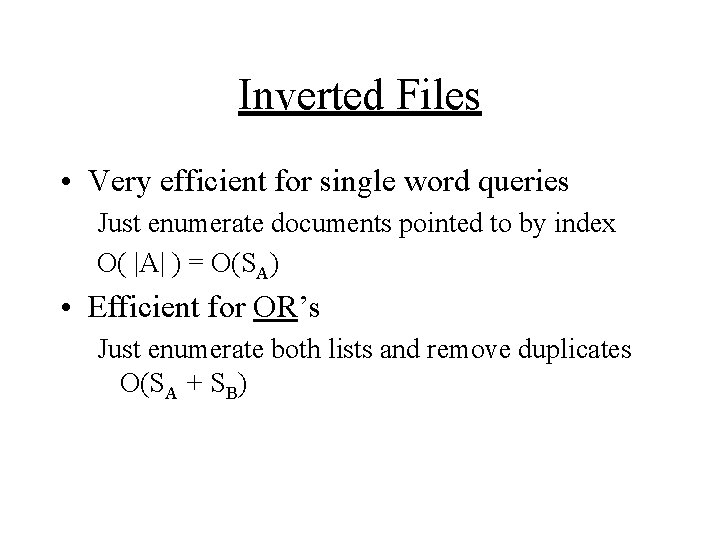

Inverted Files
• Very efficient for single-word queries
  Just enumerate the documents pointed to by the index
  O(|A|) = O(SA), where SA is the size of A’s index list
• Efficient for ORs
  Just enumerate both lists and remove duplicates
  O(SA + SB)
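
A sketch of the OR case in Python, assuming both postings lists are sorted by document id. `union_postings` is a hypothetical name, and the input lists follow the Baum and Bayes entries of the earlier index example.

```python
def union_postings(a, b):
    """Merge two sorted postings lists, dropping duplicates, in O(SA + SB)."""
    out = []
    i = j = 0
    while i < len(a) and j < len(b):
        if a[i] == b[j]:          # same doc in both lists: emit once
            out.append(a[i])
            i += 1
            j += 1
        elif a[i] < b[j]:
            out.append(a[i])
            i += 1
        else:
            out.append(b[j])
            j += 1
    out.extend(a[i:])             # at most one of these tails is non-empty
    out.extend(b[j:])
    return out

baum_or_bayes = union_postings([14, 39, 156], [39, 45, 156, 290])
```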

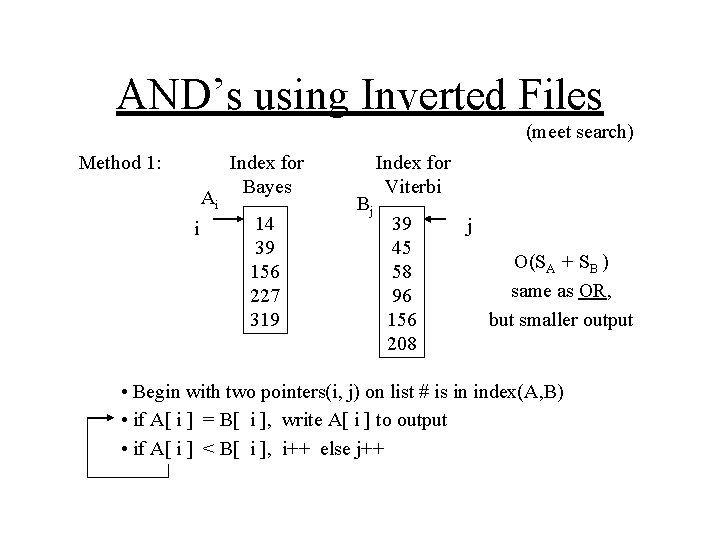

ANDs using Inverted Files (meet search)
Method 1:
  A (index for Bayes), pointer i:   14 39 156 227 319
  B (index for Viterbi), pointer j: 39 45 58 96 156 208
• Begin with two pointers (i, j), one on each sorted list of document numbers in the index (A, B)
• If A[i] = B[j], write A[i] to output and advance both i and j
• If A[i] < B[j], i++; else j++
Cost: O(SA + SB), same as OR, but smaller output
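
The two-pointer merge can be sketched in Python as follows, using the Bayes and Viterbi lists from the slide. `intersect_merge` is a hypothetical name.

```python
def intersect_merge(a, b):
    """Intersect two sorted postings lists with two pointers, O(SA + SB)."""
    out = []
    i = j = 0
    while i < len(a) and j < len(b):
        if a[i] == b[j]:
            out.append(a[i])   # doc contains both terms
            i += 1
            j += 1
        elif a[i] < b[j]:
            i += 1             # a[i] cannot appear later in b
        else:
            j += 1             # b[j] cannot appear later in a
    return out

bayes = [14, 39, 156, 227, 319]
viterbi = [39, 45, 58, 96, 156, 208]
both = intersect_merge(bayes, viterbi)
```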

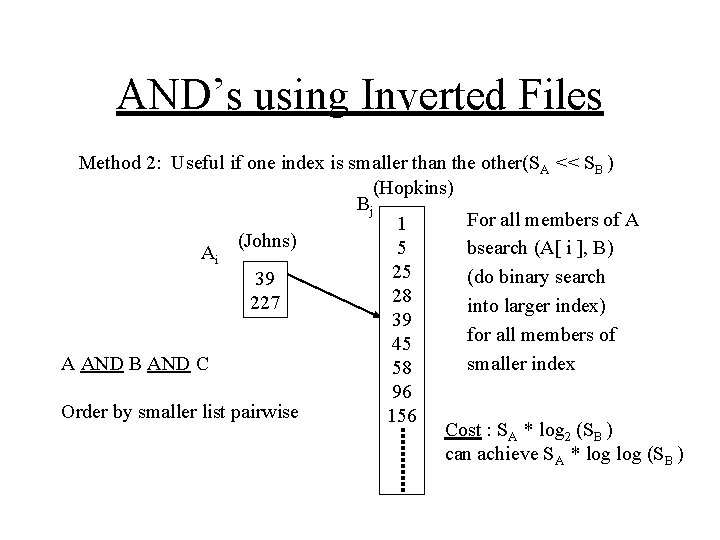

ANDs using Inverted Files
Method 2: Useful if one index is much smaller than the other (SA << SB)
  A (Johns), pointer i:   39 227
  B (Hopkins), pointer j: 1 5 25 28 39 45 58 96 156
For all members of A: bsearch(A[i], B)
  (do a binary search into the larger index for all members of the smaller index)
A AND B AND C: order by smaller list, pairwise
Cost: SA * log2(SB); can achieve SA * log(SB)
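
A sketch of Method 2 in Python using the standard-library `bisect` module for the binary search. The lists are the Johns and Hopkins postings as read from the slide; `intersect_bsearch` is a hypothetical name.

```python
from bisect import bisect_left

def intersect_bsearch(small, large):
    """For each id in the smaller list, binary-search the larger sorted list."""
    out = []
    for doc_id in small:
        pos = bisect_left(large, doc_id)          # O(log SB) per lookup
        if pos < len(large) and large[pos] == doc_id:
            out.append(doc_id)
    return out

johns = [39, 227]
hopkins = [1, 5, 25, 28, 39, 45, 58, 96, 156]
both = intersect_bsearch(johns, hopkins)
```

This does about SA * log2(SB) comparisons, so it only beats the linear merge when the smaller list is much shorter than the larger one.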

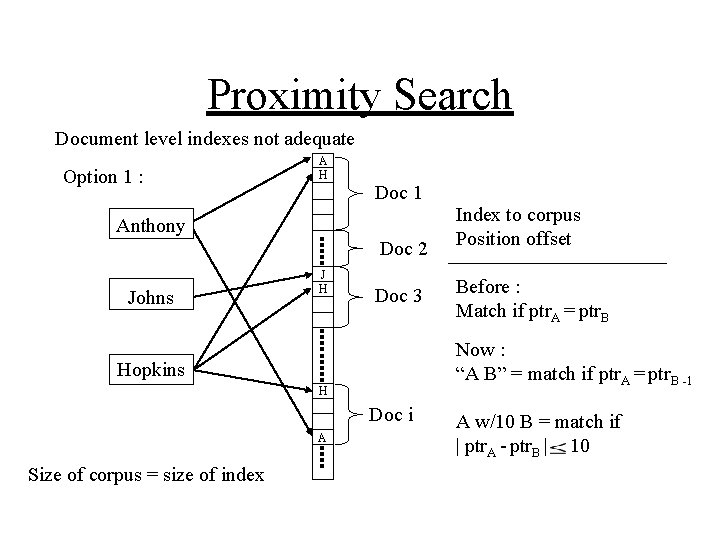

Proximity Search
Document-level indexes are not adequate
Option 1: index to corpus position (offset)
[Diagram: index entries for Anthony, Johns, Hopkins pointing to word positions (A, J, H) inside Doc 1, Doc 2, Doc 3, ..., Doc i]
Size of corpus = size of index
Before: match if ptr.A = ptr.B
Now: “A B” = match if ptr.A = ptr.B - 1
     A w/10 B = match if | ptr.A - ptr.B | ≤ 10
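
A small Python sketch of both proximity tests over a position-level index. The token stream is made up, and `positions`, `adjacent` and `within` are hypothetical helper names; `within` compares all pairs for clarity rather than speed.

```python
def positions(tokens):
    """Position-level index: word -> sorted list of corpus offsets."""
    index = {}
    for pos, tok in enumerate(tokens):
        index.setdefault(tok, []).append(pos)
    return index

def adjacent(pos_a, pos_b):
    """Offsets of A where "A B" occurs, i.e. ptr.A = ptr.B - 1."""
    b = set(pos_b)
    return [p for p in pos_a if p + 1 in b]

def within(pos_a, pos_b, k):
    """Pairs (ptr.A, ptr.B) with |ptr.A - ptr.B| <= k (quadratic, for clarity)."""
    return [(a, b) for a in pos_a for b in pos_b if abs(a - b) <= k]

tokens = "anthony hopkins visited johns hopkins".split()
idx = positions(tokens)
phrase_hits = adjacent(idx["johns"], idx["hopkins"])
```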

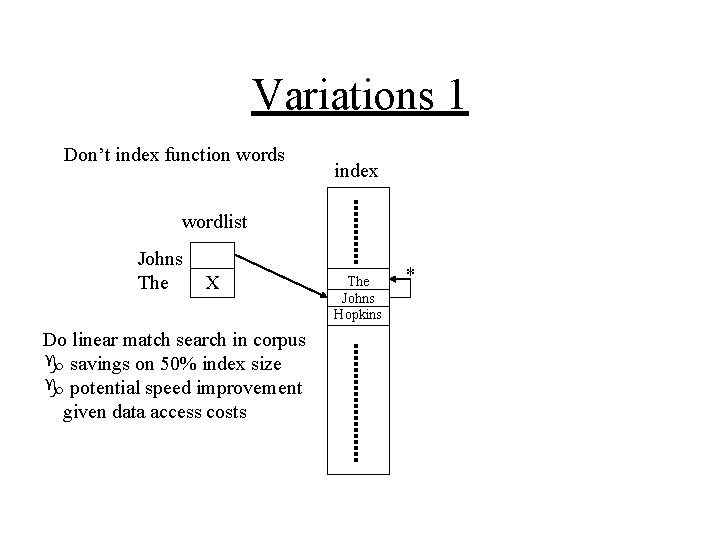

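Variations 1
Don’t index function words
[Diagram: the index wordlist contains “Johns” but not “The”; for the query “The Johns Hopkins”, the index supplies the positions of “Johns”]
Do a linear match search in the corpus for the rest
→ savings of 50% on index size
→ potential speed improvement given data access costs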

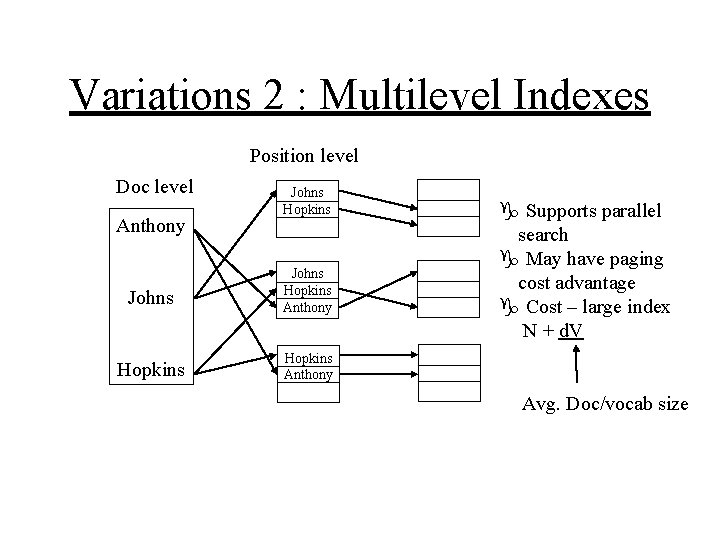

Variations 2: Multilevel Indexes
[Diagram: a doc-level index and a position-level index, each with entries for Anthony, Johns, Hopkins]
→ Supports parallel search
→ May have paging cost advantage
→ Cost: large index, N + dV (annotation on the slide: avg. doc/vocab size)

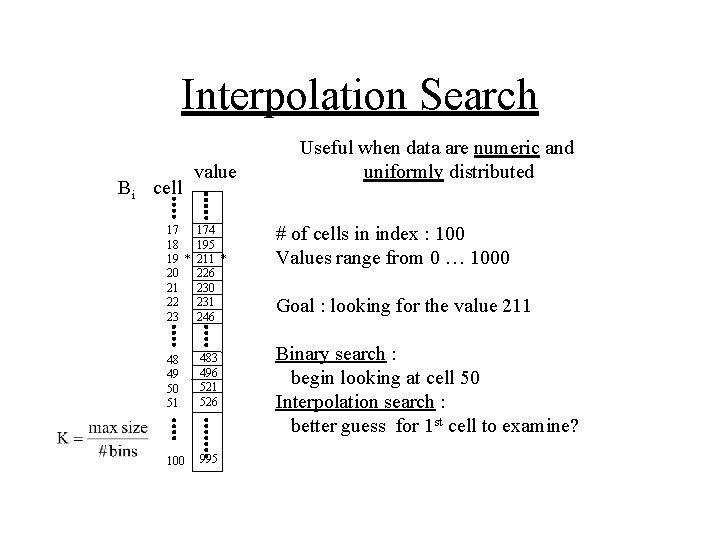

Interpolation Search
Useful when data are numeric and uniformly distributed

Index B:
  cell   value
  17     174
  18     195
  19     211   * (target)
  20     226
  21     230
  22     231
  23     246
  ...
  48     483
  49     496
  50     521
  51     526
  ...
  100    995

# of cells in index: 100
Values range from 0 … 1000
Goal: looking for the value 211
Binary search: begin looking at cell 50
Interpolation search: better guess for the 1st cell to examine?

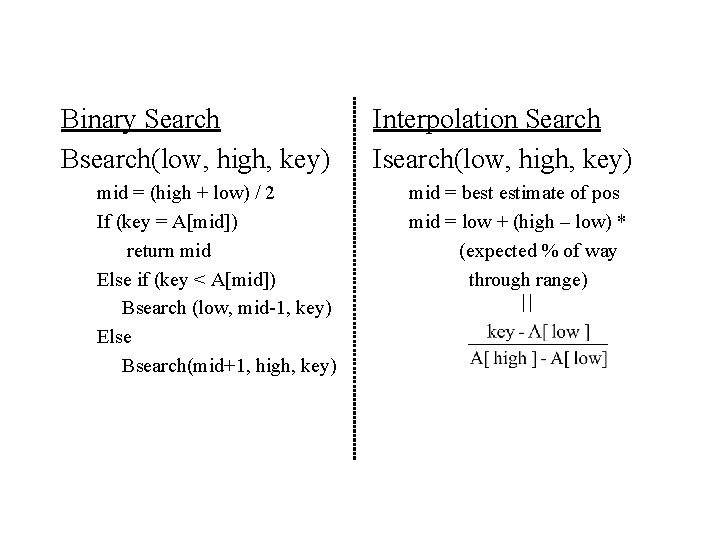

Binary Search
Bsearch(low, high, key)
    if (low > high) return NOT_FOUND
    mid = (high + low) / 2
    if (key = A[mid]) return mid
    else if (key < A[mid]) return Bsearch(low, mid-1, key)
    else return Bsearch(mid+1, high, key)

Interpolation Search
Isearch(low, high, key)
    mid = best estimate of position
    mid = low + (high - low) * (expected % of way through range)
    For uniformly distributed values, the expected fraction is (key - A[low]) / (A[high] - A[low])
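
A runnable Python sketch of both searches, written iteratively and returning the probed cells so the two can be compared. The array `cells` is an assumption: 100 evenly spaced values (0, 10, ..., 990), not the table from the slides, and cell numbering here starts at 0.

```python
def binary_search(a, key):
    """Return (index or -1, list of probed cells)."""
    low, high, probes = 0, len(a) - 1, []
    while low <= high:
        mid = (low + high) // 2
        probes.append(mid)
        if a[mid] == key:
            return mid, probes
        if key < a[mid]:
            high = mid - 1
        else:
            low = mid + 1
    return -1, probes

def interpolation_search(a, key):
    """Like binary search, but probe where the key is expected to lie."""
    low, high, probes = 0, len(a) - 1, []
    while low <= high and a[low] <= key <= a[high]:
        if a[high] == a[low]:
            mid = low  # all remaining values equal; avoid dividing by zero
        else:
            # low + (high - low) * expected fraction of the way through the range
            mid = low + (high - low) * (key - a[low]) // (a[high] - a[low])
        probes.append(mid)
        if a[mid] == key:
            return mid, probes
        if a[mid] < key:
            low = mid + 1
        else:
            high = mid - 1
    return -1, probes

cells = list(range(0, 1000, 10))   # assumed data: 100 uniformly spaced values
b_idx, b_probes = binary_search(cells, 210)
i_idx, i_probes = interpolation_search(cells, 210)
```

On perfectly uniform data the first interpolation probe lands on the target, while binary search needs several halvings; on skewed data the advantage can disappear.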

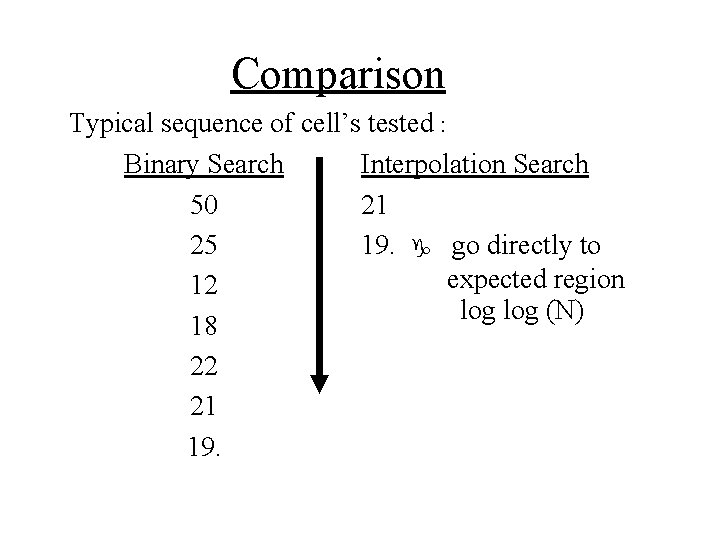

Comparison
Typical sequence of cells tested:
  Binary Search:        50, 25, 12, 18, 22, 21, 19   → log(N)
  Interpolation Search: 21, 19                       → go directly to expected region

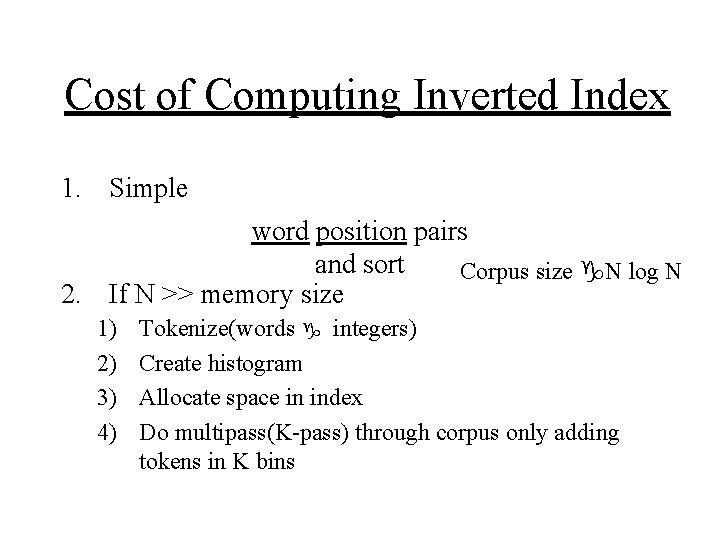

Cost of Computing Inverted Index
1. Simple: build (word, position) pairs and sort
   → N log N, where N = corpus size
2. If N >> memory size:
   1) Tokenize (words → integers)
   2) Create histogram
   3) Allocate space in index
   4) Do multipass (K-pass) through the corpus, on each pass only adding the tokens in one of the K bins

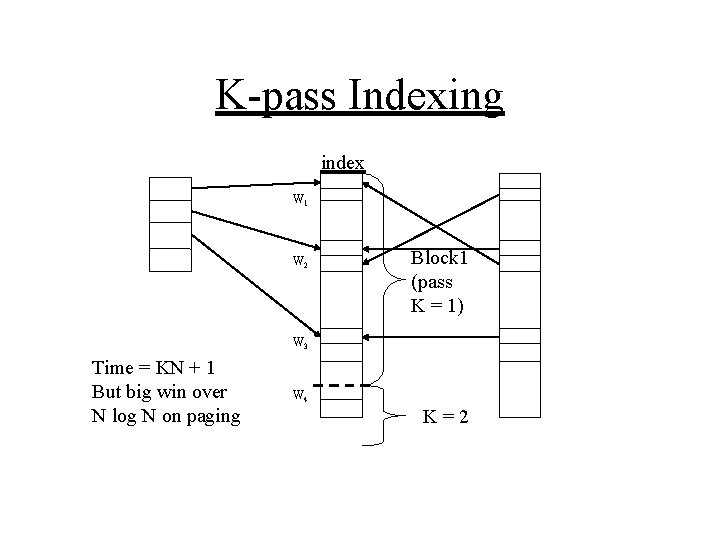

K-pass Indexing
[Diagram: index split into blocks of words; W1, W2 in block 1 (pass K = 1); W3, W4 in the next block (K = 2)]
Time = KN + 1
But a big win over N log N on paging
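
A hedged in-memory sketch of the four steps (tokenize, histogram, allocate, K passes) in Python. In the setting the slides describe, the corpus would be re-read from disk on every pass and only the current block of the index would need to be resident; here everything is held in lists purely to show the bookkeeping. `k_pass_index` and `postings` are hypothetical names.

```python
from collections import Counter

def k_pass_index(corpus_tokens, k):
    """Build a flat position index in k passes over the token stream."""
    # 1) Tokenize: words -> integers
    vocab = {}
    ids = [vocab.setdefault(w, len(vocab)) for w in corpus_tokens]
    # 2) Histogram: how many slots each token needs
    counts = Counter(ids)
    # 3) Allocate: starting offset of each token's slots in the flat index
    offsets, total = {}, 0
    for t in range(len(vocab)):
        offsets[t] = total
        total += counts[t]
    index = [None] * total
    fill = dict(offsets)                  # next free slot per token
    # 4) K passes: each pass only adds tokens whose id falls in the current bin
    block = -(-len(vocab) // k)           # ceil(len(vocab) / k)
    for p in range(k):
        lo, hi = p * block, (p + 1) * block
        for pos, t in enumerate(ids):
            if lo <= t < hi:
                index[fill[t]] = pos
                fill[t] += 1
    return vocab, offsets, counts, index

def postings(word, vocab, offsets, counts, index):
    """Corpus positions of a word, read from its slice of the flat index."""
    t = vocab[word]
    return index[offsets[t]:offsets[t] + counts[t]]

tokens = "the johns hopkins the who".split()
vocab, offsets, counts, index = k_pass_index(tokens, 2)
```

Each pass scans all N tokens, so the work grows with K * N, but every write in a pass falls inside one contiguous block of the index.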

Vector Models for IR
• Gerard Salton, Cornell
  (Salton + Lesk, 68) (Salton, 71) (Salton + McGill, 83)
• SMART System
  Chris Buckley, Cornell → current keeper of the flame
  SMART = “Salton’s magical automatic retrieval tool” (?)

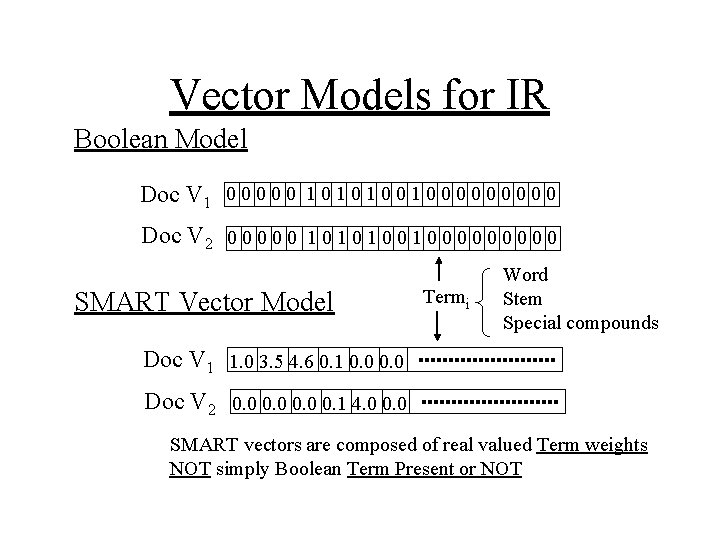

Vector Models for IR
Boolean Model
  Doc V1: 0 0 0 1 0 1 0 0 0 0 0
  Doc V2: 0 0 0 1 0 1 0 0 0 0 0
SMART Vector Model (term i can be a word, a stem, or a special compound)
  Doc V1: 1.0 3.5 4.6 0.1 0.0
  Doc V2: 0.0 0.1 4.0 0.0
SMART vectors are composed of real-valued term weights, NOT simply Boolean term present or not

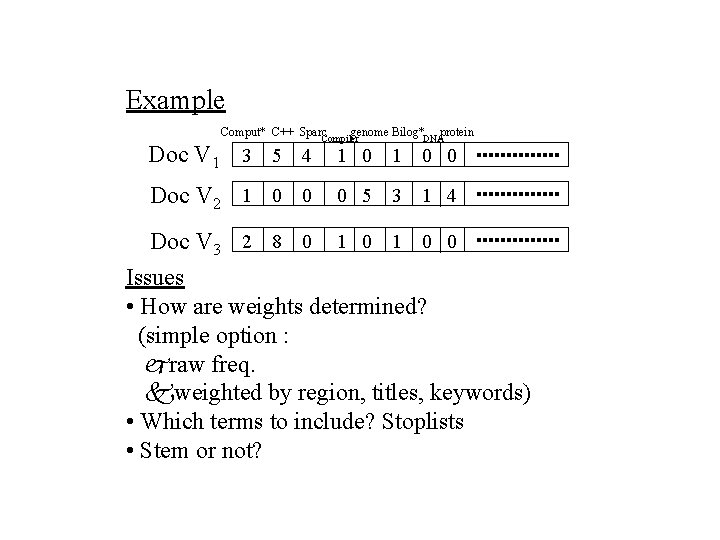

Example
Terms: Comput*, C++, Sparc, genome, Biolog*, Compiler, protein, DNA
  Doc V1: 3 5 4 1 0 0 0
  Doc V2: 1 0 0 5 3 1 4
  Doc V3: 2 8 0 1 0 0
Issues
• How are weights determined?
  (simple options: (1) raw freq., (2) weighted by region: titles, keywords)
• Which terms to include? Stoplists
• Stem or not?

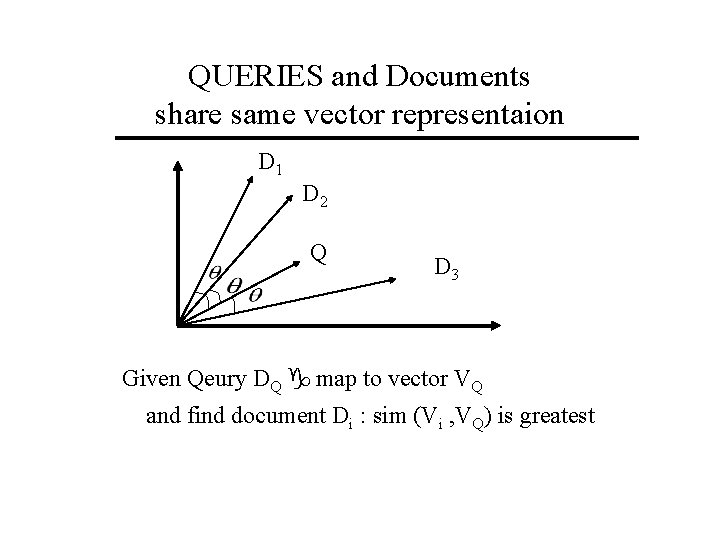

QUERIES and Documents share the same vector representation
[Diagram: document vectors D1, D2, D3 and query vector Q in the same space]
Given query DQ → map to vector VQ and find the document Di for which sim(Vi, VQ) is greatest

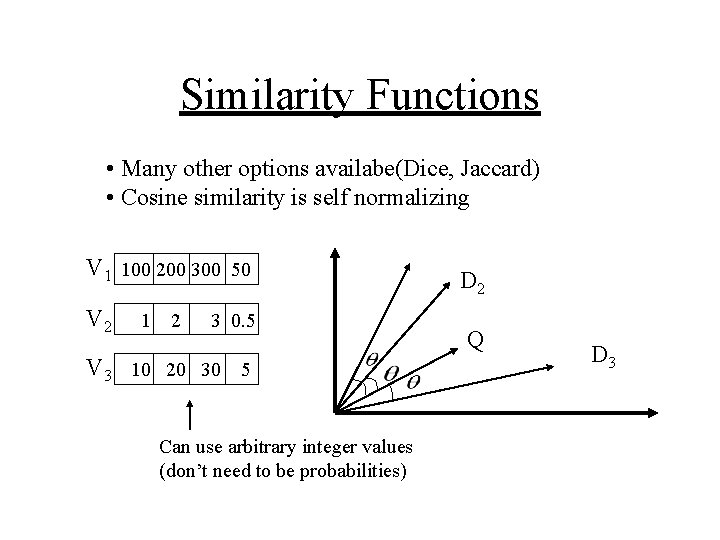

Similarity Functions
• Many other options available (Dice, Jaccard)
• Cosine similarity is self-normalizing
  V1: 100 200 300 50
  V2: 1 2 3 0.5
  V3: 10 20 30 5
  Can use arbitrary integer values (don’t need to be probabilities)
[Diagram: D2, Q, D3 drawn as vectors]
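
A short Python sketch of cosine similarity applied to the three vectors on this slide, plus picking the document most similar to a query. The query vector and the helper name `best_match` are made up for illustration.

```python
import math

def cosine(u, v):
    """Cosine of the angle between u and v; 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(u, v))
    norm_u = math.sqrt(sum(x * x for x in u))
    norm_v = math.sqrt(sum(y * y for y in v))
    if norm_u == 0 or norm_v == 0:
        return 0.0
    return dot / (norm_u * norm_v)

def best_match(query_vec, doc_vecs):
    """Id of the document whose vector is most similar to the query."""
    return max(doc_vecs, key=lambda d: cosine(doc_vecs[d], query_vec))

v1 = [100, 200, 300, 50]
v2 = [1, 2, 3, 0.5]
v3 = [10, 20, 30, 5]

# Hypothetical query and documents for the ranking step.
doc_vecs = {"D1": [3, 5, 4, 0], "D2": [0, 0, 1, 5], "D3": [2, 8, 0, 0]}
winner = best_match([0, 1, 0, 0], doc_vecs)
```

V1, V2 and V3 point in the same direction and differ only in length, so their pairwise cosine is 1; this is the sense in which the measure is self-normalizing.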
