Time-space tradeoff lower bounds for non-uniform computation

Paul Beame, University of Washington, 4 July 2000

Why study time-space tradeoffs?
- To understand relationships between the two most critical measures of computation
  - unified comparison of algorithms with varying time and space requirements
- Non-trivial tradeoffs arise frequently in practice
  - avoid storing intermediate results by recomputing them

e.g. Sorting $n$ integers from $[1, n^2]$
- Merge sort
  - $S = O(n \log n)$, $T = O(n \log n)$
- Radix sort
  - $S = O(n \log n)$, $T = O(n)$
- Selection sort
  - only need
    - smallest value output so far
    - index of current element
  - $S = O(\log n)$, $T = O(n^2)$
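
A minimal Python sketch of the selection-sort idea follows. It treats the input list as read-only and the returned list as a write-only output tape that is not charged to working storage.
- Apart from the output, it keeps only the last value output, the current index, the best candidate, and a duplicate count. Each of these fits in $O(\log n)$ bits for values in $[1, n^2]$.
- Each pass costs $O(n)$, and there are at most $n + 1$ passes.
- The name `low_space_sort` is hypothetical. This variant emits values in increasing order and counts repeats, details the slide does not specify.

```python
from typing import List


def low_space_sort(xs: List[int]) -> List[int]:
    """Output xs in nondecreasing order with O(1) words of working storage.

    xs is only read, never written; `out` models a write-only output tape.
    """
    out: List[int] = []
    last = None  # value output most recently (None before the first output)
    while True:
        best = None  # smallest value strictly larger than `last` seen this pass
        count = 0  # number of occurrences of `best`
        for i in range(len(xs)):  # i is the index of the current element
            v = xs[i]
            if last is not None and v <= last:
                continue  # already output
            if best is None or v < best:
                best, count = v, 1
            elif v == best:
                count += 1
        if best is None:  # nothing left to output
            return out
        out.extend([best] * count)
        last = best
```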

Complexity theory
- Hard problems
  - prove L ≠ P
  - prove non-trivial time lower bounds for natural decision problems in P
- First step
  - prove a space lower bound, e.g. $S = \omega(\log n)$, given an upper bound on time $T$, e.g. $T = O(n)$, for a natural problem in P

An annoyance
- Time hierarchy theorems imply
  - unnatural problems in P not solvable in time $O(n)$
  - makes the ‘first step’ vacuous for unnatural problems

Non-uniform computation
- Non-trivial time lower bounds still open for problems in P
  - first step still very interesting even without the restriction to natural problems
- Can yield bounds with precise constants
- But proving lower bounds may be harder

Talk outline
- The right non-uniform model (for now)
  - branching programs
- Early success
  - multi-output functions, e.g. sorting
- Progress on problems in P
  - Crawling
    - restricted branching programs
  - That breakthrough first step (and more)
    - true time-space tradeoffs
  - The path ahead

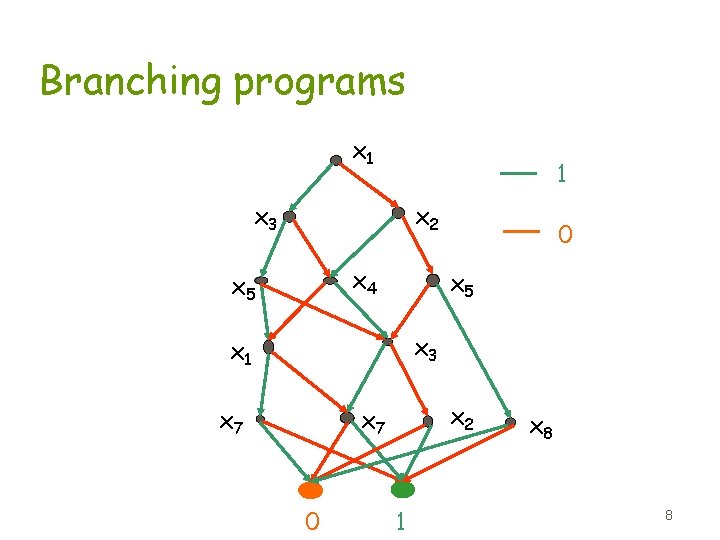

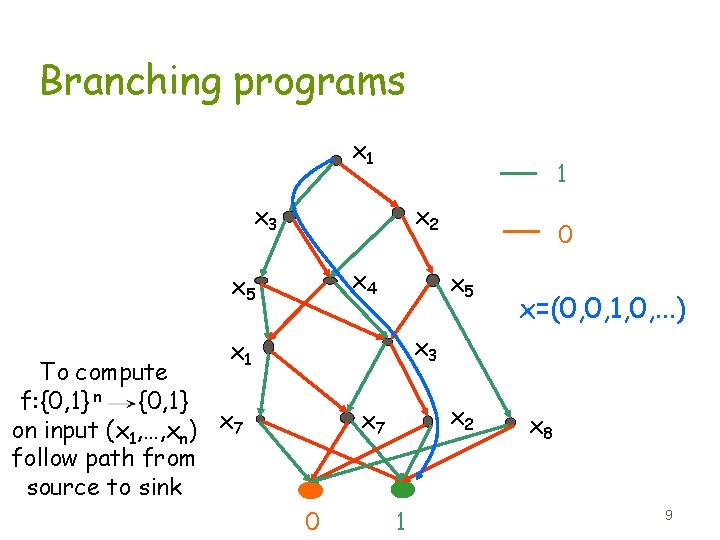

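Branching programs
[Figure: a branching program whose internal nodes are labeled by input variables $x_1, \dots, x_8$ (some variables label several nodes) and whose two sinks are labeled 0 and 1]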

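Branching programs
[Figure: the same branching program, evaluated on the input $x = (0, 0, 1, 0, \dots)$]
- To compute $f : \{0,1\}^n \to \{0,1\}$ on input $(x_1, \dots, x_n)$, follow the path from the source to a sink: at each node, take the edge labeled with the value of the queried variable; the label of the sink reached is $f(x)$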

Branching program properties
- Length = length of the longest path
- Size = number of nodes
- Simulate TMs
  - node = configuration with input bits erased
  - time $T$ = Length
  - space $S = \log_2 \text{Size}$ = TM space + $\log_2 n$ (input head position) = space on an index TM
  - polynomial size = non-uniform L
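
A small Python sketch of the model uses a hypothetical encoding. Each internal node maps to (queried variable, successor on 0, successor on 1), and the sinks are the strings "0" and "1".
- It evaluates by following the path from the source.
- It computes Length as the longest path.
- It computes space as $\lceil \log_2 \text{Size} \rceil$. Rounding up is our choice, not the slide's.

The 3-bit parity program `PARITY3` is an illustrative example.

```python
import math
from functools import lru_cache
from typing import Dict, Sequence, Tuple, Union

Node = Union[int, str]  # internal node id, or sink "0" / "1"
BP = Dict[int, Tuple[int, Node, Node]]  # node -> (queried variable, next on 0, next on 1)

# Hypothetical example: parity of x0, x1, x2; node 0 is the source.
PARITY3: BP = {
    0: (0, 1, 2),
    1: (1, 3, 4), 2: (1, 4, 3),  # 1: even so far, 2: odd so far
    3: (2, "0", "1"), 4: (2, "1", "0"),
}


def evaluate(bp: BP, x: Sequence[int], source: int = 0) -> int:
    """Follow the path from the source, branching on each queried bit, to a sink."""
    node: Node = source
    while not isinstance(node, str):
        var, on0, on1 = bp[node]
        node = on1 if x[var] else on0
    return int(node)


def length(bp: BP, source: int = 0) -> int:
    """Length of the longest source-to-sink path (the program must be acyclic)."""
    @lru_cache(maxsize=None)
    def longest(node: Node) -> int:
        if isinstance(node, str):
            return 0
        _, on0, on1 = bp[node]
        return 1 + max(longest(on0), longest(on1))

    return longest(source)


def space(bp: BP) -> int:
    """S = log2(Size), rounded up; Size counts internal nodes plus both sinks."""
    return math.ceil(math.log2(len(bp) + 2))
```

For `PARITY3`, every path has length 3, and its 7 nodes give space 3.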

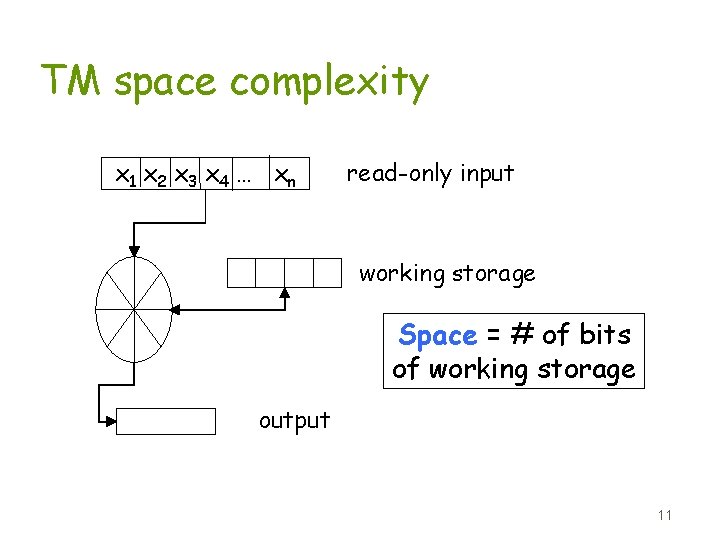

TM space complexity
[Figure: a Turing machine with a read-only input tape $x_1\, x_2\, x_3\, x_4 \dots x_n$, working storage, and an output]
- Space = number of bits of working storage

Branching program properties
- Simulate random-access machines (RAMs)
  - not just sequential access
- Generalizations
  - multi-way version for $x_i$ in an arbitrary domain $D$
    - good for modeling RAM input registers
  - outputs on the edges
    - good for modeling the output tape for multi-output functions such as sorting
- BPs can be leveled w.l.o.g.
  - like adding a clock to a TM

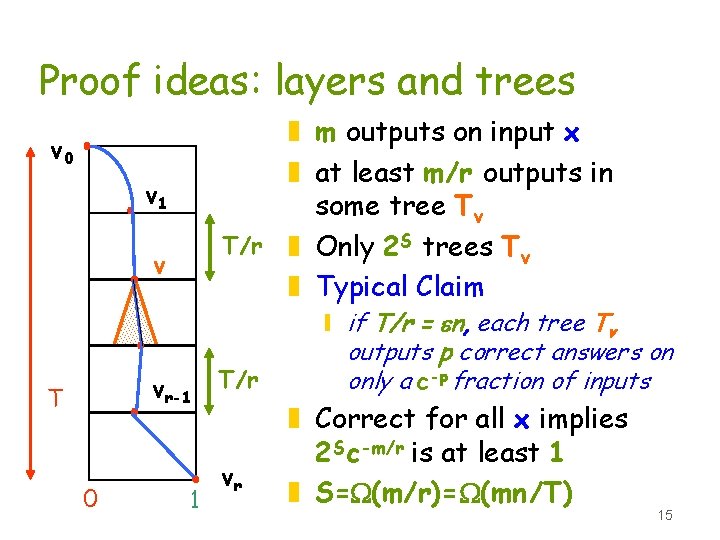

Success for multi-output problems
- Sorting
  - $TS = \Omega(n^2/\log n)$ [Borodin-Cook 82]
  - $TS = \Omega(n^2)$ [Beame 89]
- Matrix-vector product
  - $TS = \Omega(n^3)$ [Abrahamson 89]
- Many others, including
  - matrix multiplication
  - pattern matching

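Proof ideas: layers and trees
[Figure: layers $v_0, v_1, \dots, v_{r-1}, v_r$ dividing the program into $r$ segments, each of height $T/r$, ending at sinks 0 and 1]
- $m$ outputs on input $x$
- at least $m/r$ outputs in some tree $T_v$ of height $T/r$
- only $2^S$ trees $T_v$
- Typical claim
  - if $T/r = \epsilon n$, each tree $T_v$ outputs $p$ correct answers on only a $c^{-p}$ fraction of inputs
- Correct for all $x$ implies $2^S c^{-m/r} \ge 1$
- $S = \Omega(m/r) = \Omega(mn/T)$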
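
The last two bullets can be spelled out as a short derivation. It takes logarithms base 2 and substitutes $r = T/(\epsilon n)$ from the typical claim, for constants $\epsilon > 0$ and $c > 1$:

$$2^S c^{-m/r} \ge 1 \;\Longleftrightarrow\; S \ge \frac{m}{r}\log_2 c = \frac{\epsilon\, m n \log_2 c}{T} \;\Longrightarrow\; T \cdot S = \Omega(mn).$$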

Limitation of the technique
- Never more than $TS = \Omega(nm)$, where $m$ is the number of outputs
- “It is unfortunately crucial to our proof that sorting requires many output bits, and it remains an interesting open question whether a similar lower bound can be made to apply to a set recognition problem, such as recognizing whether all n input numbers are distinct.” [Cook: Turing Award Lecture, 1983]

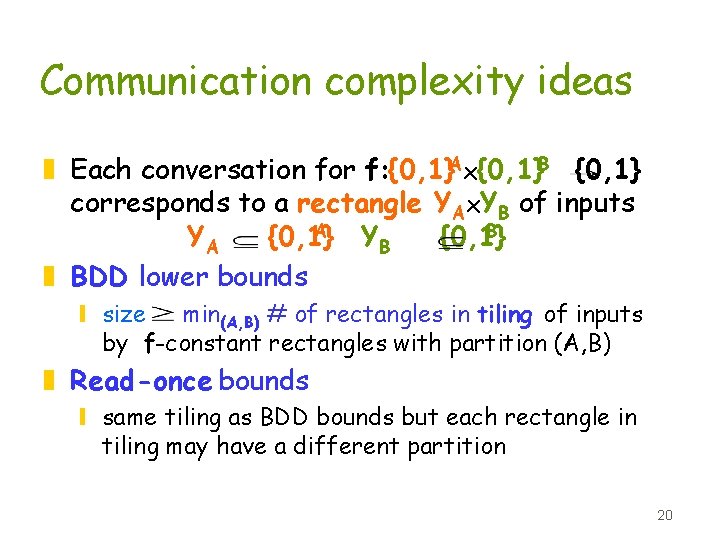

Restricted branching programs
- Constant-width: only a constant number of nodes per level
  - [Chandra-Furst-Lipton 83]
- Read-once: every variable read at most once per path
  - [Wegener 84], [Simon-Szegedy 89], etc.
- Oblivious: the same variable queried at every node of a level
  - [Babai-Pudlak-Rodl-Szemeredi 87], [Alon-Maass 87], [Babai-Nisan-Szegedy 89]
- BDD = oblivious read-once

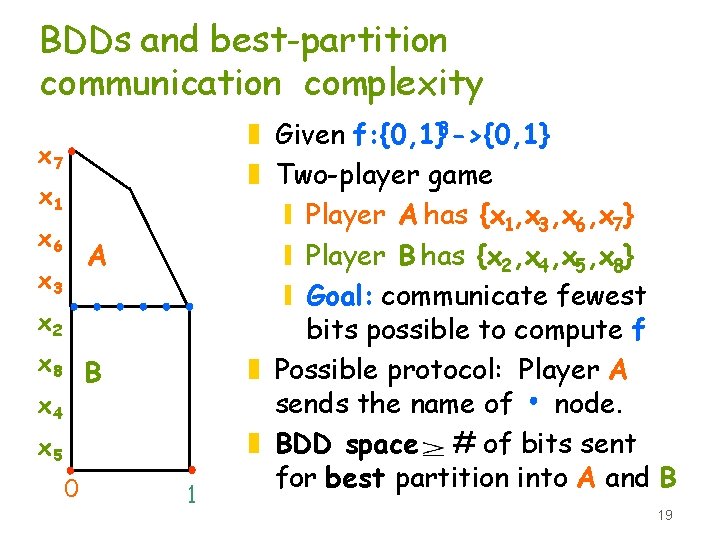

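BDDs and best-partition communication complexity
[Figure: a BDD on $x_1, \dots, x_8$, with the levels querying Player A's variables and those querying Player B's variables marked A and B, and sinks 0 and 1]
- Given $f : \{0,1\}^8 \to \{0,1\}$
- Two-player game
  - Player A has $\{x_1, x_3, x_6, x_7\}$
  - Player B has $\{x_2, x_4, x_5, x_8\}$
  - Goal: communicate the fewest bits possible to compute $f$
- Possible protocol: Player A sends the name of the node reached after A's variables are read
- BDD space ≥ number of bits sent for the best partition into A and B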
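
The protocol on this slide can be sketched in Python for the case where the BDD reads all of Player A's variables before any of Player B's. The BDD encoding, the example `PARITY4`, and the function name are hypothetical. A names the node it reaches with $\lceil \log_2 \text{Size} \rceil$ bits, so a small BDD yields a cheap one-way protocol for that partition.

```python
import math
from typing import Dict, Sequence, Set, Tuple, Union

Node = Union[int, str]  # internal node id, or sink "0" / "1"
SINKS = ("0", "1")

# Hypothetical BDD for the parity of x0..x3, read in the order x0, x1, x2, x3.
# node -> (queried variable, next on 0, next on 1); node 0 is the source.
PARITY4: Dict[int, Tuple[int, Node, Node]] = {
    0: (0, 1, 2),
    1: (1, 3, 4), 2: (1, 4, 3),
    3: (2, 5, 6), 4: (2, 6, 5),
    5: (3, "0", "1"), 6: (3, "1", "0"),
}


def one_way_protocol(bdd, a_vars: Set[int], x: Sequence[int]) -> Tuple[int, int]:
    """Return (f(x), number of bits A sends).

    A follows the path while the queried variable is one of A's, then sends
    the name of the node reached; B finishes the path. Each phase reads only
    its own player's bits of x.
    """
    bits_sent = math.ceil(math.log2(len(bdd) + len(SINKS)))  # enough to name any node
    node: Node = 0
    while node not in SINKS:  # Player A's phase
        var, on0, on1 = bdd[node]
        if var not in a_vars:
            break  # A's message is `node`
        node = on1 if x[var] else on0
    while node not in SINKS:  # Player B's phase
        var, on0, on1 = bdd[node]
        assert var not in a_vars, "A's variables must all be read before B's"
        node = on1 if x[var] else on0
    return int(node), bits_sent
```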

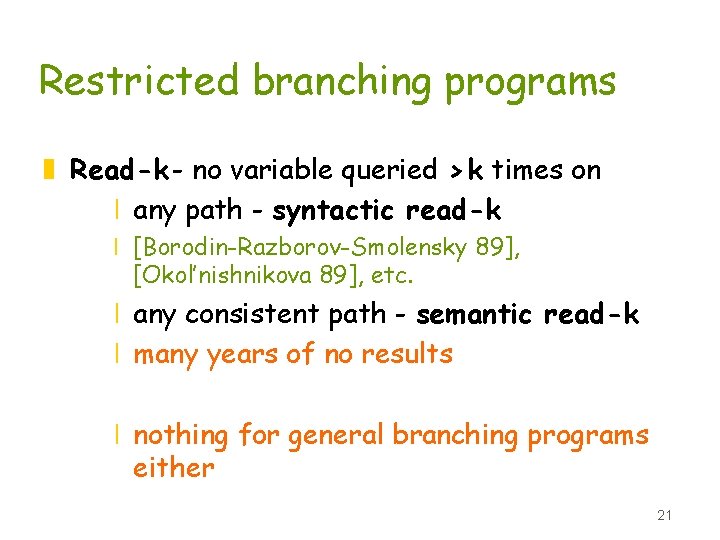

Communication complexity ideas
- Each conversation for $f : \{0,1\}^A \times \{0,1\}^B \to \{0,1\}$ corresponds to a rectangle $Y_A \times Y_B$ of inputs, with $Y_A \subseteq \{0,1\}^A$ and $Y_B \subseteq \{0,1\}^B$
- BDD lower bounds
  - size $\ge \min_{(A,B)}$ (number of rectangles in a tiling of the inputs by $f$-constant rectangles with partition $(A, B)$)
- Read-once bounds
  - same tiling as BDD bounds, but each rectangle in the tiling may have a different partition

Restricted branching programs
- Read-k: no variable queried more than $k$ times on
  - any path: syntactic read-k
    - [Borodin-Razborov-Smolensky 89], [Okol’nishnikova 89], etc.
  - any consistent path: semantic read-k
    - many years of no results
    - nothing for general branching programs either

Uniform tradeoffs
- SAT is not solvable using $O(n^{1-\epsilon})$ space if time is $n^{1+o(1)}$ [Fortnow 97]
  - uses diagonalization
  - works for co-nondeterministic TMs
- Extensions for SAT (deterministic)
  - $S = \log^{O(1)} n$ implies $T = \Omega(n^{1.4142\ldots - \epsilon})$ [Lipton-Viglas 99]
    - with up to $n^{o(1)}$ advice [Tourlakis 00]
  - $S = O(n^{1-\epsilon})$ implies $T = \Omega(n^{1.618\ldots - \epsilon})$ [Fortnow-van Melkebeek 00]

Non-uniform computation
- [Beame-Saks-Thathachar FOCS 98]
  - Syntactic read-k branching programs are exponentially weaker than semantic read-twice ones
  - $f(x)$ = “$x^T M x = 0 \pmod q$” for $x \in GF(q)^n$
    - $\epsilon n \log\log n$ time implies $\Omega(n \log^{1-\epsilon} n)$ space for $q \sim n$
  - $f(x)$ = “$x^T M x = 0 \pmod 3$” for $x \in \{0,1\}^n$
    - $1.017n$ time implies $\Omega(n)$ space
    - first Boolean result above time $n$ for general branching programs

Non-uniform computation
- [Ajtai STOC 99]
  - $0.5 \log n$ Hamming distance for $x \in [1, n^2]^n$
    - $kn$ time implies $\Omega(n \log n)$ space
    - follows from [Beame-Saks-Thathachar 98]
    - improved to $\Omega(n \log n)$ time by [Pagter 00]
  - element distinctness for $x \in [1, n^2]^n$
    - $kn$ time implies $\Omega(n)$ space
    - requires significant extension of techniques

That breakthrough first step!
- [Ajtai FOCS 99]
  - $f(x, y)$ = “$x^T M_y x \pmod 2$” for $x \in \{0,1\}^n$, $y \in \{0,1\}^{2n-1}$
    - $kn$ time implies $\Omega(n)$ space
- First result for non-uniform Boolean computation showing
  - time $O(n)$ implies space $\omega(\log n)$

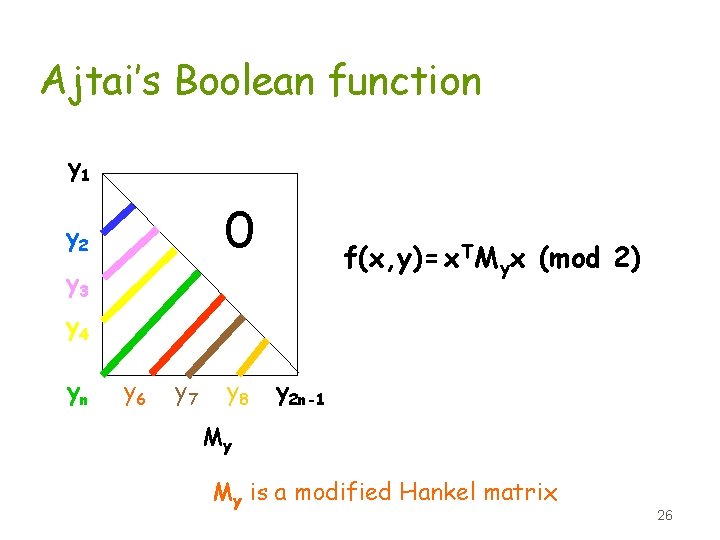

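Ajtai’s Boolean function
[Figure: the $n \times n$ matrix $M_y$, with entries $y_1, y_2, \dots, y_n, \dots, y_{2n-1}$ constant along anti-diagonals and some entries 0]
- $f(x, y) = x^T M_y x \pmod 2$
- $M_y$ is a modified Hankel matrix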
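
A plain Hankel matrix would not do. Over GF(2) a symmetric matrix gives a degenerate form: each off-diagonal pair contributes $2M_{ij}x_ix_j \equiv 0$ and $x_i^2 = x_i$, so $x^T M x \equiv \sum_i M_{ii} x_i$, a linear function. A plain Hankel matrix (entry $(i, j)$ equal to $y_{i+j-1}$) is symmetric.

The Python sketch below checks this collapse. It builds only the plain Hankel matrix, since the slide does not spell out Ajtai's modification. The function names are hypothetical.

```python
from typing import List

Matrix = List[List[int]]


def hankel(y: List[int]) -> Matrix:
    """Plain n x n Hankel matrix with entry (i, j) = y[i + j] (0-indexed); len(y) = 2n - 1."""
    assert len(y) % 2 == 1
    n = (len(y) + 1) // 2
    return [[y[i + j] for j in range(n)] for i in range(n)]


def quad_form_mod2(x: List[int], M: Matrix) -> int:
    """x^T M x over GF(2)."""
    n = len(x)
    return sum(x[i] * M[i][j] * x[j] for i in range(n) for j in range(n)) % 2


def diagonal_part_mod2(x: List[int], M: Matrix) -> int:
    """sum_i M[i][i] * x[i] over GF(2): all that survives of x^T M x when M is symmetric."""
    return sum(M[i][i] * x[i] for i in range(len(x))) % 2
```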

Superlinear lower bounds
- [Beame-Saks-Sun-Vee FOCS 00]
  - Extension to $\epsilon$-error randomized non-uniform algorithms
  - Better time-space tradeoffs
  - Apply to both element distinctness and $f(x, y) = x^T M_y x \pmod 2$

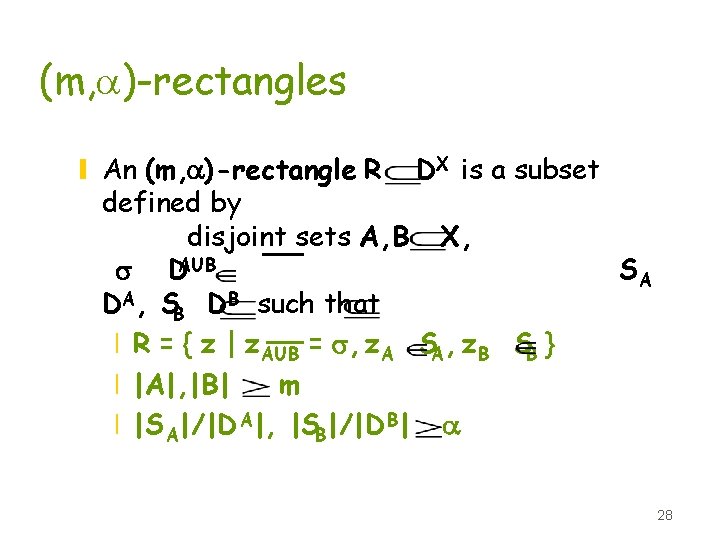

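$(m, \alpha)$-rectangles
- An $(m, \alpha)$-rectangle $R \subseteq D^X$ is a subset defined by disjoint sets $A, B \subseteq X$, an assignment $s \in D^{X \setminus (A \cup B)}$, and sets $S_A \subseteq D^A$, $S_B \subseteq D^B$ such that
  - $R = \{ z \mid z_{X \setminus (A \cup B)} = s,\ z_A \in S_A,\ z_B \in S_B \}$
  - $|A|, |B| \ge m$
  - $|S_A|/|D^A|,\ |S_B|/|D^B| \ge \alpha$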

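[Figure: an $(m, \alpha)$-rectangle over $x_1, \dots, x_n$: at least $m$ coordinates in $A$ range over $S_A \subseteq D^A$, at least $m$ coordinates in $B$ range over $S_B \subseteq D^B$, and the remaining coordinates are fixed to $s$]
- $S_A$ and $S_B$ each have density at least $\alpha$
- In general $A$ and $B$ may be interleaved in $[1, n]$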
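
The definition can be made concrete with a small Python sketch. The names are hypothetical, and the representation is an assumption:
- assignments are tuples over the domain;
- $A$ and $B$ are ordered tuples of coordinates;
- $s$ is a dict from the remaining coordinates to values.

The example in the check uses interleaved $A$ and $B$, as the slide allows.

```python
from fractions import Fraction
from typing import Dict, Sequence, Set, Tuple

Tup = Tuple[int, ...]


def in_rectangle(z: Sequence[int], A: Tup, B: Tup, s: Dict[int, int],
                 S_A: Set[Tup], S_B: Set[Tup]) -> bool:
    """z is in R iff z agrees with s off A ∪ B, z_A ∈ S_A, and z_B ∈ S_B."""
    assert not set(A) & set(B), "A and B must be disjoint"
    if any(z[i] != v for i, v in s.items()):
        return False
    return tuple(z[i] for i in A) in S_A and tuple(z[i] for i in B) in S_B


def rectangle_params(domain_size: int, A: Tup, B: Tup,
                     S_A: Set[Tup], S_B: Set[Tup]) -> Tuple[int, Fraction]:
    """Largest (m, alpha) for which R is an (m, alpha)-rectangle:
    m = min(|A|, |B|); alpha = the smaller density of S_A in D^A and S_B in D^B."""
    m = min(len(A), len(B))
    alpha = min(Fraction(len(S_A), domain_size ** len(A)),
                Fraction(len(S_B), domain_size ** len(B)))
    return m, alpha
```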

Key lemma [BST 98]
- Let program $P$
  - use time $T = kn$
  - use space $S$
  - accept a fraction $\delta$ of its inputs in $D^n$
- Then $P$ accepts all inputs in some $(m, \alpha)$-rectangle, where
  - $m = \beta n$
  - $\alpha \ge \delta \cdot 2^{-4(k+1)m - (S+1)r}$
  - $\beta^{-1} \sim 2^k$ and $r \sim k^2 2^k$

Improved key lemma [Ajtai 99s]
- Let program $P$
  - use time $T = kn$
  - use space $S$
  - accept a fraction $\delta$ of its inputs in $D^n$
- Then $P$ accepts all inputs in some $(m, \alpha)$-rectangle, where
  - $m = \beta n$
  - $\alpha$ is at least …
  - $\beta^{-1}$ and $r$ are constants depending on $k$

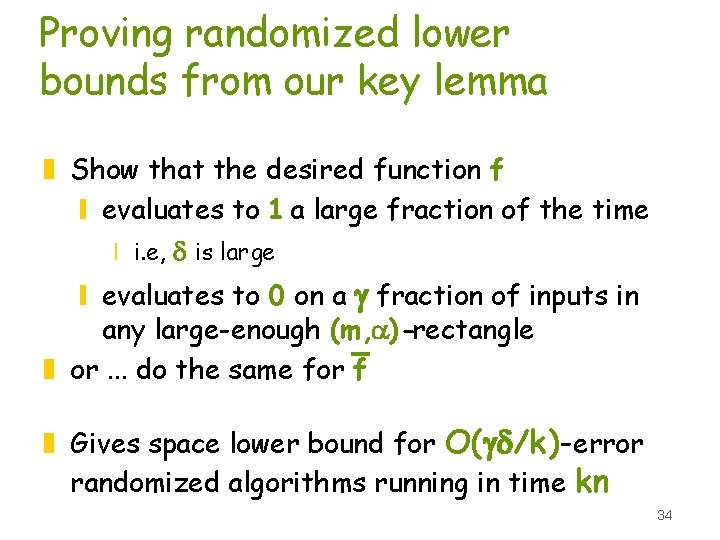

Proving lower bounds using the key lemmas
- Show that the desired function $f$
  - evaluates to 1 a large fraction of the time, i.e., $\delta$ is large
  - evaluates to 0 on some input in any large $(m, \alpha)$-rectangle, where “large” is given by the lemma bounds
- or … do the same for $\neg f$

Our new key lemma
- Let program $P$ use time $T = kn$ and space $S$, and accept a fraction $\delta$ of its inputs in $D^n$
- Almost all inputs $P$ accepts are in $(m, \alpha)$-rectangles accepted by $P$, where
  - $m = \beta n$
  - $\alpha$ is at least …
  - $\beta^{-1}$ and $r$ are …
- No input is in more than $O(k)$ rectangles

Proving randomized lower bounds from our key lemma
- Show that the desired function $f$
  - evaluates to 1 a large fraction of the time, i.e., $\delta$ is large
  - evaluates to 0 on a $\gamma$ fraction of inputs in any large-enough $(m, \alpha)$-rectangle
- or … do the same for $\neg f$
- Gives a space lower bound for $O(\gamma\delta/k)$-error randomized algorithms running in time $kn$

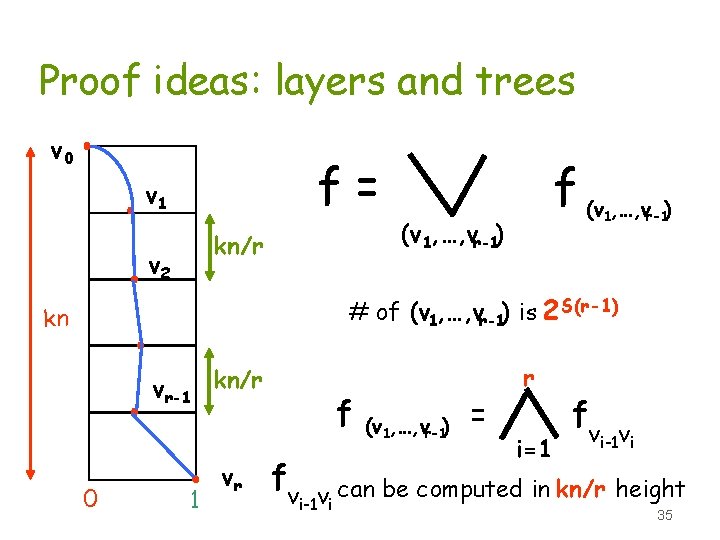

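Proof ideas: layers and trees
[Figure: layers $v_0, v_1, \dots, v_{r-1}, v_r$ dividing the program into $r$ segments, each of height $kn/r$, ending at sinks 0 and 1]
- $f = \bigvee_{(v_1, \dots, v_{r-1})} f_{(v_1, \dots, v_{r-1})}$
- the number of tuples $(v_1, \dots, v_{r-1})$ is at most $2^{S(r-1)}$
- $f_{(v_1, \dots, v_{r-1})} = \bigwedge_{i=1}^{r} f_{v_{i-1} v_i}$
- each $f_{v_{i-1} v_i}$ can be computed in height $kn/r$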
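
The decomposition can be checked by brute force on a tiny leveled program. This Python sketch uses a hypothetical parity BDD as the program and cuts it into $r$ segments of height $h$, where $r \cdot h$ equals the program's length. It lists the tuples $(v_1, \dots, v_{r-1})$ for which every segment function $f_{v_{i-1} v_i}$ is 1.

Because the computation path is unique, at most one tuple succeeds on any input. This is why the various $f_{(v_1, \dots, v_{r-1})}$ accept disjoint sets of inputs.

```python
from itertools import product
from typing import Dict, List, Sequence, Tuple, Union

Node = Union[int, str]  # internal node id, or sink "0" / "1"

# Hypothetical leveled BP for the parity of x0..x3; node 0 is the source.
PARITY4: Dict[int, Tuple[int, Node, Node]] = {
    0: (0, 1, 2),
    1: (1, 3, 4), 2: (1, 4, 3),
    3: (2, 5, 6), 4: (2, 6, 5),
    5: (3, "0", "1"), 6: (3, "1", "0"),
}
LEVELS: Dict[int, List[Node]] = {0: [0], 1: [1, 2], 2: [3, 4], 3: [5, 6], 4: ["0", "1"]}


def walk(bp, node: Node, x: Sequence[int], steps: int) -> Node:
    """Follow `steps` edges from `node` on input x."""
    for _ in range(steps):
        var, on0, on1 = bp[node]
        node = on1 if x[var] else on0
    return node


def accepting_tuples(bp, levels, x: Sequence[int], r: int, h: int,
                     source: Node = 0, accept: Node = "1"):
    """Tuples (v_1, ..., v_{r-1}), v_i on level i*h, with f_{v_{i-1} v_i}(x) = 1 for all i.

    Here v_0 = source, v_r = accept, and f_{u v}(x) = 1 iff h steps from u reach v.
    Requires r * h to equal the length of the leveled program.
    """
    hits = []
    for middle in product(*(levels[i * h] for i in range(1, r))):
        chain = (source,) + middle + (accept,)
        if all(walk(bp, chain[i], x, h) == chain[i + 1] for i in range(r)):
            hits.append(middle)
    return hits
```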

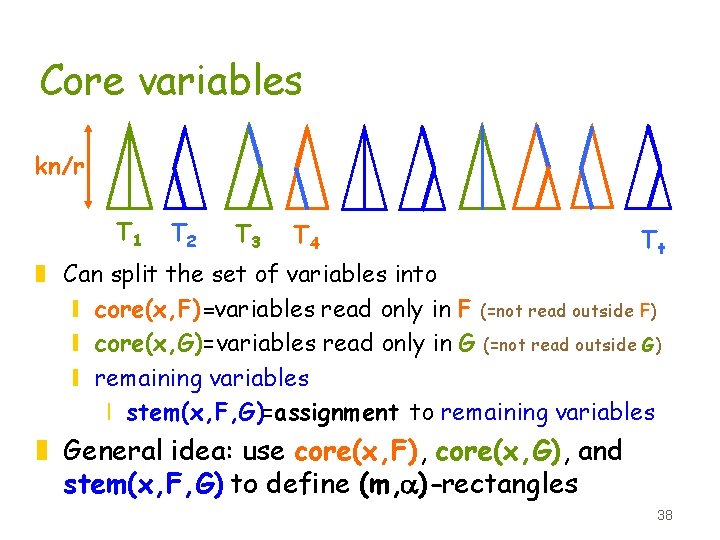

$(r, \epsilon)$-decision forest
- The conjunction of $r$ decision trees (BPs that are trees) of height $\epsilon n$
  - Each $f_{(v_1, \dots, v_{r-1})}$ is computed by an $(r, k/r)$-decision forest
  - Only $2^{S(r-1)}$ of them
  - The various $f_{(v_1, \dots, v_{r-1})}$ accept disjoint sets of inputs

Decision forest
[Figure: decision trees $T_1, T_2, T_3, T_4, \dots, T_r$, each of height $kn/r$]
- Assume w.l.o.g. that all variables are read on every input
- Fix an input $x$ accepted by the forest
- Each tree reads only a small fraction of the variables on input $x$
- Fix two disjoint subsets of trees, $F$ and $G$

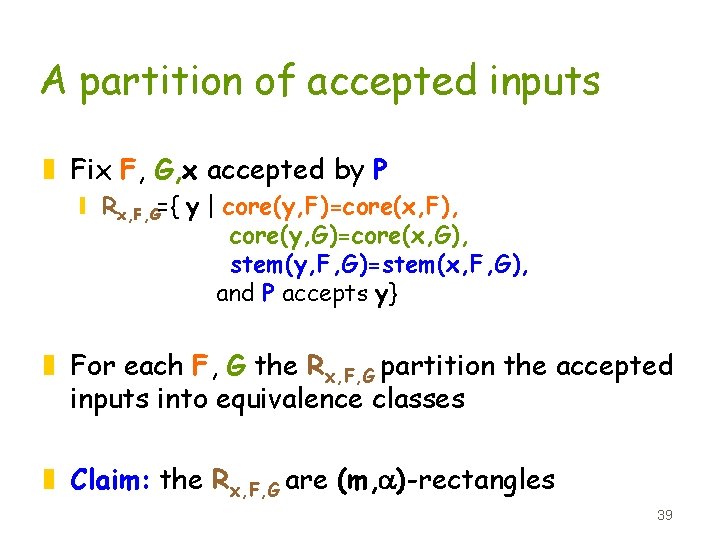

Core variables
[Figure: decision trees $T_1, T_2, T_3, T_4, \dots$, each of height $kn/r$]
- Can split the set of variables into
  - core(x, F) = variables read only in F (= not read outside F)
  - core(x, G) = variables read only in G (= not read outside G)
  - remaining variables
    - stem(x, F, G) = assignment to the remaining variables
- General idea: use core(x, F), core(x, G), and stem(x, F, G) to define $(m, \alpha)$-rectangles
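
A Python sketch of the split follows. It assumes the trees' behavior on input $x$ has already been summarized as `reads[t]`, the set of variables tree $t$ reads on $x$. That representation and the function names are hypothetical. In the oblivious case these sets would not depend on $x$.

```python
from typing import Dict, Sequence, Set


def core(reads: Sequence[Set[int]], trees: Set[int]) -> Set[int]:
    """Variables read by some tree in `trees` and by no tree outside `trees`."""
    inside = set().union(*(reads[t] for t in trees))
    outside = set().union(*(reads[t] for t in range(len(reads)) if t not in trees))
    return inside - outside


def stem(x: Sequence[int], reads: Sequence[Set[int]],
         F: Set[int], G: Set[int]) -> Dict[int, int]:
    """The assignment x restricted to variables outside core(x, F) ∪ core(x, G)."""
    skip = core(reads, F) | core(reads, G)
    return {i: x[i] for i in range(len(x)) if i not in skip}
```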

A partition of accepted inputs
- Fix $F$, $G$, and an input $x$ accepted by $P$
  - $R_{x,F,G} = \{ y \mid \mathrm{core}(y, F) = \mathrm{core}(x, F),\ \mathrm{core}(y, G) = \mathrm{core}(x, G),\ \mathrm{stem}(y, F, G) = \mathrm{stem}(x, F, G), \text{ and } P \text{ accepts } y \}$
- For each $F$, $G$ the sets $R_{x,F,G}$ partition the accepted inputs into equivalence classes
- Claim: the $R_{x,F,G}$ are $(m, \alpha)$-rectangles

Classes are rectangles
- Let $A$ = core(x, F), $B$ = core(x, G), $s$ = stem(x, F, G)
- $S_A = \{ y_A \mid y \in R_{x,F,G} \}$, $S_B = \{ z_B \mid z \in R_{x,F,G} \}$
- Let $w = (s, y_A, z_B)$
  - $w$ agrees with $y$ in all trees outside $G$
    - core(w, G) = core(y, G) = core(x, G)
  - $w$ agrees with $z$ in all trees outside $F$
    - core(w, F) = core(z, F) = core(x, F)
  - stem(w, F, G) = s = stem(x, F, G)
  - $P$ accepts $w$ since it accepts $y$ and $z$
- So … $w$ is in $R_{x,F,G}$

Few partitions suffice
- Only $4k$ pairs $F$, $G$ suffice to cover almost all inputs accepted by $P$ by large $(m, \alpha)$-rectangles $R_{x,F,G}$
  - Choose $F$, $G$ uniformly at random of suitable size, depending on the access pattern of input $x$
    - the probability that $F$, $G$ isn't good is tiny
    - one such pair will work for almost all inputs with the given access pattern
  - Only $4k$ sizes needed

Special case: oblivious BPs
- core(x, F) and core(x, G) don't depend on $x$
- Choose each tree $T_i$ to be in $F$ with probability $q$, in $G$ with probability $q$, and in neither with probability $1 - 2q$
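
For the oblivious case, a Python sketch of the random choice follows, together with the exact expected core size. A variable read by $c$ trees is in core(F) exactly when all $c$ of those trees land in $F$, which happens with probability $q^c$.

The fixed read sets and the function names are hypothetical. This illustrates only the sampling step, not the choice of sizes in the proof.

```python
import random
from typing import Dict, List, Set, Tuple


def sample_F_G(r: int, q: float, rng: random.Random) -> Tuple[Set[int], Set[int]]:
    """Independently put each tree T_i in F w.p. q, in G w.p. q, in neither w.p. 1 - 2q."""
    assert 0 <= q <= 0.5
    F: Set[int] = set()
    G: Set[int] = set()
    for i in range(r):
        u = rng.random()
        if u < q:
            F.add(i)
        elif u < 2 * q:
            G.add(i)
    return F, G


def core(reads: List[Set[int]], trees: Set[int]) -> Set[int]:
    """Variables read by some tree in `trees` and by no tree outside `trees`."""
    inside = set().union(*(reads[t] for t in trees))
    outside = set().union(*(reads[t] for t in range(len(reads)) if t not in trees))
    return inside - outside


def expected_core_size(reads: List[Set[int]], q: float) -> float:
    """E|core(F)| = sum over read variables v of q ** (number of trees reading v)."""
    counts: Dict[int, int] = {}
    for tree_reads in reads:
        for v in tree_reads:
            counts[v] = counts.get(v, 0) + 1
    return sum(q ** c for c in counts.values())
```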

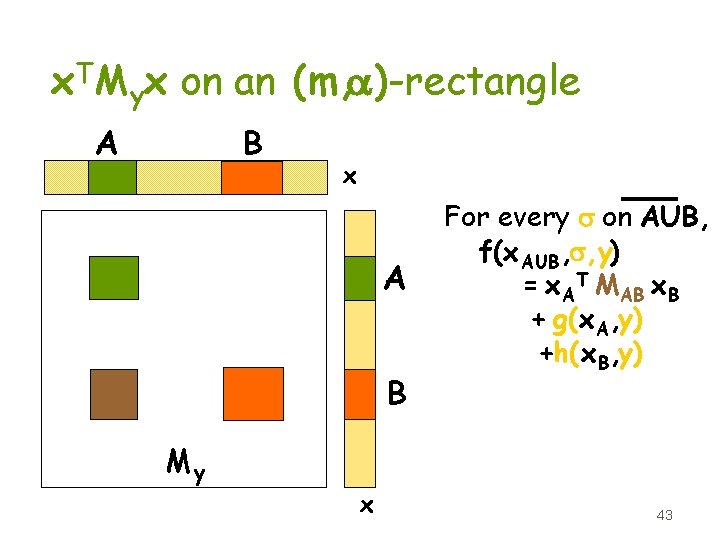

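$x^T M_y x$ on an $(m, \alpha)$-rectangle
[Figure: the matrix $M_y$ with the rows and columns indexed by $A$ and $B$ marked, and the vector $x$ split into $x_A$ and $x_B$]
- For every assignment $s$ to the variables outside $A \cup B$, $f(x_A, x_B, s, y) = x_A^T M_{AB}\, x_B + g(x_A, y) + h(x_B, y)$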
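
A Python sketch can check this split over GF(2) by brute force, for a general matrix $M$ and a fixed assignment $s$.
- The cross term's matrix is taken to be $M[A,B] + M[B,A]^T$, which is what expanding $x^T M x$ gives when $M$ need not be symmetric. Reading the slide's $M_{AB}$ this way is our assumption.
- The functions `g` and `h` collect the remaining terms. They play the roles of the slide's $g(x_A, y)$ and $h(x_B, y)$, with $y$ (that is, $M$) fixed.
- The names are hypothetical.

```python
from typing import Dict, List, Sequence

Matrix = List[List[int]]


def quad_mod2(x: Sequence[int], M: Matrix) -> int:
    """x^T M x over GF(2)."""
    n = len(x)
    return sum(x[i] * M[i][j] * x[j] for i in range(n) for j in range(n)) % 2


def bilinear_mod2(xA: Sequence[int], C: Matrix, xB: Sequence[int]) -> int:
    """x_A^T C x_B over GF(2)."""
    return sum(xA[i] * C[i][j] * xB[j] for i in range(len(xA)) for j in range(len(xB))) % 2


def split_form(M: Matrix, A: Sequence[int], B: Sequence[int], s: Dict[int, int]):
    """With x fixed to s off A ∪ B, return (C, g, h) such that
    x^T M x = x_A^T C x_B + g(x_A) + h(x_B) over GF(2)."""
    n = len(M)
    # Each cross product x_a x_b appears twice, as M[a][b] and M[b][a].
    C = [[(M[a][b] + M[b][a]) % 2 for b in B] for a in A]

    def assemble(xA, xB):
        x = [0] * n
        for i, v in s.items():
            x[i] = v
        for i, v in zip(A, xA):
            x[i] = v
        for i, v in zip(B, xB):
            x[i] = v
        return x

    zeros_A, zeros_B = [0] * len(A), [0] * len(B)
    const = quad_mod2(assemble(zeros_A, zeros_B), M)  # terms among fixed variables only

    def g(xA):  # every term with no B-variable (includes the constant)
        return quad_mod2(assemble(xA, zeros_B), M)

    def h(xB):  # every term with a B-variable but no A-variable
        return (quad_mod2(assemble(zeros_A, xB), M) - const) % 2

    return C, g, h
```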

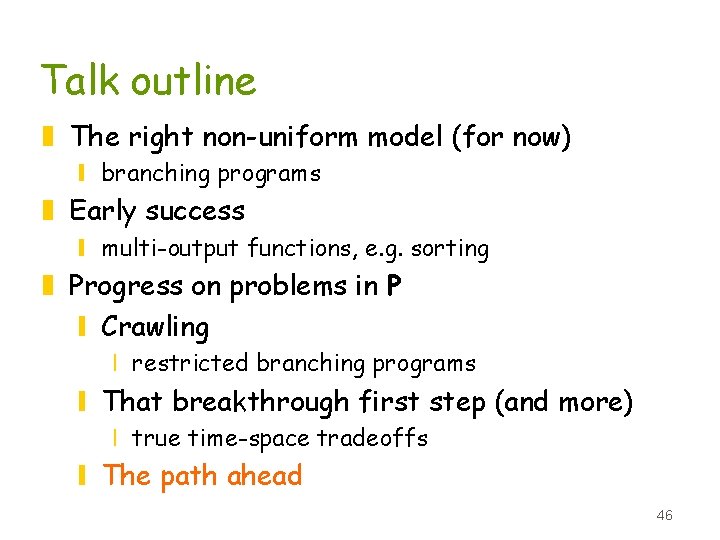

Rectangles, rank, & rigidity
- The largest rectangle on which $x_A^T M x_B$ is constant has $\alpha \le 2^{-\mathrm{rank}(M)}$
  - [Borodin-Razborov-Smolensky 89]
- Lemma [Ajtai 99]: one can fix $y$ such that every $\beta n \times \beta n$ minor $M_{AB}$ of $M_y$ has $\mathrm{rank}(M_{AB}) \ge c\beta n / \log_2(1/\beta)$
  - improvement of bounds of [Beame-Saks-Thathachar 98] & [Borodin-Razborov-Smolensky 89] for Sylvester matrices

High rank implies balance
- For any rectangle $S_A \times S_B \subseteq \{0,1\}^A \times \{0,1\}^B$ with $\mu(S_A \times S_B) \ge |A|\,|B|\,2^{3-\mathrm{rank}(M)}$:
  - $\Pr[x_A^T M x_B = 1 \mid x_A \in S_A, x_B \in S_B] \ge 1/32$
  - $\Pr[x_A^T M x_B = 0 \mid x_A \in S_A, x_B \in S_B] \ge 1/32$
  - derived from a result for inner product in $r$ dimensions
- So rigidity also implies balance for all large rectangles, and so …
- Also follows for element distinctness
  - [Babai-Frankl-Simon 86]
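
A Python sketch of the two quantities on these slides follows. It computes rank over GF(2), using Gaussian elimination on rows packed into integers, and the bias of a bilinear form.

It checks only the easiest case, the full space $\{0,1\}^A \times \{0,1\}^B$. There a form of rank $r$ equals 1 with probability exactly $(1 - 2^{-r})/2$. For fixed $x_A$ the form is a linear function of $x_B$, which is nonzero unless $M^T x_A = 0$, and that happens for a $2^{-r}$ fraction of $x_A$.

The balance claim for all large subrectangles is stronger and is not tested here. The function names are hypothetical.

```python
from fractions import Fraction
from itertools import product
from typing import Dict, List, Sequence

Matrix = List[List[int]]


def gf2_rank(M: Matrix) -> int:
    """Rank over GF(2): keep one basis vector per leading-bit position."""
    pivots: Dict[int, int] = {}
    for row in M:
        v = 0
        for bit in row:
            v = (v << 1) | (bit & 1)  # pack the row into an int
        while v:
            lead = v.bit_length() - 1
            if lead not in pivots:
                pivots[lead] = v
                break
            v ^= pivots[lead]  # clears the leading bit, so v strictly decreases
    return len(pivots)


def minor(M: Matrix, rows: Sequence[int], cols: Sequence[int]) -> Matrix:
    """Submatrix M_{AB} with the given row and column indices."""
    return [[M[i][j] for j in cols] for i in rows]


def prob_one(M: Matrix) -> Fraction:
    """Exact Pr[a^T M b = 1 mod 2] for uniform a and b, by brute force."""
    p, q = len(M), len(M[0])
    ones = 0
    for a in product((0, 1), repeat=p):
        for b in product((0, 1), repeat=q):
            ones += sum(a[i] * M[i][j] * b[j] for i in range(p) for j in range(q)) % 2
    return Fraction(ones, 2 ** (p + q))
```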

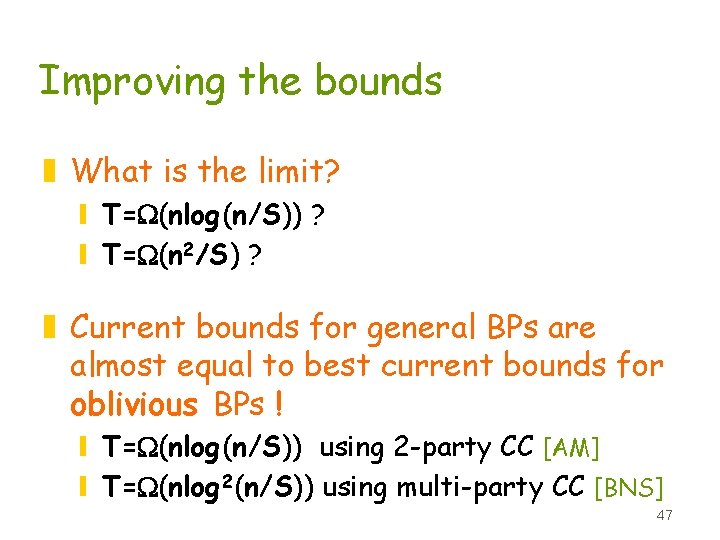

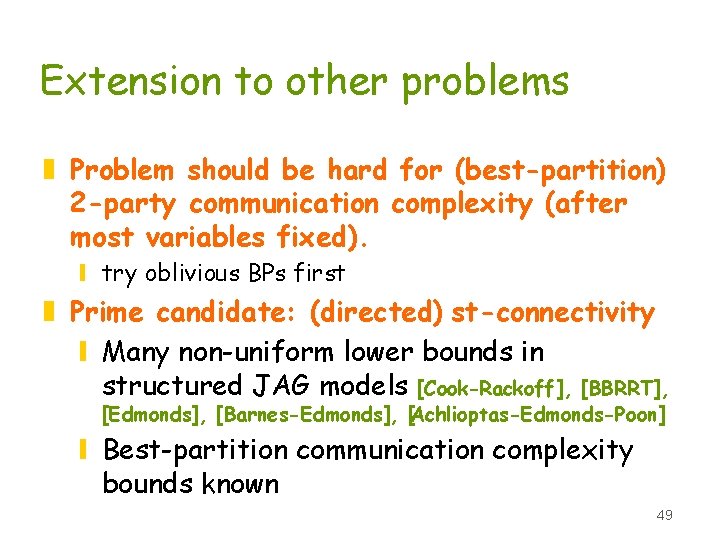

Improving the bounds
- What is the limit?
  - $T = \Omega(n \log(n/S))$?
  - $T = \Omega(n^2/S)$?
- Current bounds for general BPs are almost equal to the best current bounds for oblivious BPs!
  - $T = \Omega(n \log(n/S))$ using 2-party CC [AM]
  - $T = \Omega(n \log^2(n/S))$ using multi-party CC [BNS]

Improving the bounds
- $(m, \alpha)$-rectangles are a 2-party CC idea
  - insight: generalizing to non-oblivious BPs
  - yields the same bound as [AM] for oblivious BPs
- Generalize multi-party CC ideas to get better bounds for general BPs?
  - a similar framework yields the same bound as [BNS] for oblivious BPs
- Improve oblivious BP lower bounds?
  - ideas other than communication complexity?

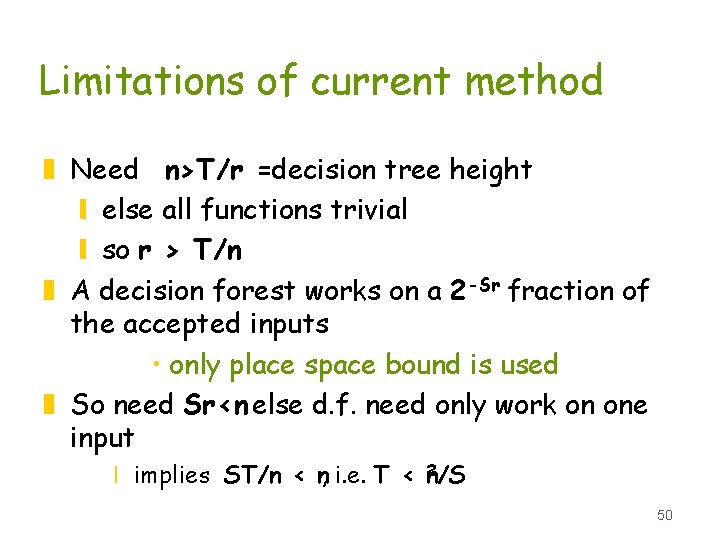

Extension to other problems
- Problem should be hard for (best-partition) 2-party communication complexity (after most variables are fixed)
  - try oblivious BPs first
- Prime candidate: (directed) st-connectivity
  - many non-uniform lower bounds in structured JAG models [Cook-Rackoff], [BBRRT], [Edmonds], [Barnes-Edmonds], [Achlioptas-Edmonds-Poon]
  - best-partition communication complexity bounds known

Limitations of current method
- Need $n > T/r$ = decision tree height
  - else all functions are trivial
  - so $r > T/n$
- A decision forest works on a $2^{-Sr}$ fraction of the accepted inputs
  - the only place the space bound is used
- So need $Sr < n$, else the decision forest need only work on one input
  - implies $ST/n < n$, i.e. $T < n^2/S$
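
The two constraints on $r$ combine into the stated barrier. With Boolean inputs there are at most $2^n$ accepted inputs, which gives the “one input” remark. The rest uses $r > T/n$:

$$Sr \ge n \;\Longrightarrow\; 2^{-Sr} \cdot 2^n \le 1, \qquad \frac{ST}{n} < Sr < n \;\Longrightarrow\; T < \frac{n^2}{S}.$$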
