TIGGE CISL EXEC Briefing 11/07/2006 Doug Schuster 12/25/2021


Outline
- TIGGE - Who, What, Why?
- Archive Center Data Collection
- Data Discovery, Access, and Distribution
- Current and Future Challenges
- Summary

TIGGE - Who, What, Why?

WMO THORPEX Interactive Grand Global Ensemble (TIGGE)
- Foster multi-model ensemble studies.
- Improve accuracy of 1 to 14 day high-impact weather forecasts.
- Up to 10 operational centers contributing ensemble data.

Two Phases
- 1: Three central Archive Centers
  - China Meteorological Administration (CMA)
  - European Centre for Medium-Range Weather Forecasts (ECMWF)
  - National Center for Atmospheric Research (NCAR)
- 2: Widely Distributed Access (not discussed today)

Archive Center Data Collection
Mechanism
- 10 GB/hour transfer volume desired (between ECMWF and NCAR).
  - Allows time for daily real-time data transfer and time for fixes and data recovery.
- Unidata’s Internet Data Distribution/Local Data Manager system (IDD/LDM)
  - History of providing similar functionality in delivering NCEP model data to the university community.
- FTP was tested and found insufficient.
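The reasoning behind the 10 GB/hour target can be checked with simple arithmetic. The sketch below is illustrative only: it assumes the 150 GB/day volume quoted in the Summary slide all travels over one link at the target rate, and it uses decimal units (1 GB = 10^9 bytes). The function names are hypothetical.

```python
def transfer_hours(daily_volume_gb: float, rate_gb_per_hour: float) -> float:
    """Hours needed to move one day's data at a sustained rate."""
    if rate_gb_per_hour <= 0:
        raise ValueError("rate must be positive")
    return daily_volume_gb / rate_gb_per_hour


def rate_mbit_per_s(rate_gb_per_hour: float) -> float:
    """Convert GB/hour to Mbit/s, assuming 1 GB = 10**9 bytes."""
    return rate_gb_per_hour * 8 * 1000 / 3600


TARGET_RATE = 10.0    # GB/hour, target from this slide
DAILY_VOLUME = 150.0  # GB/day, figure from the Summary slide (assumed to use one link)

hours = transfer_hours(DAILY_VOLUME, TARGET_RATE)
print(f"{hours:.1f} h to transfer, {24 - hours:.1f} h left for fixes and recovery")
print(f"sustained rate: {rate_mbit_per_s(TARGET_RATE):.1f} Mbit/s")
```

Under these assumptions the daily transfer takes about 15 hours, leaving roughly 9 hours per day for re-sends and recovery.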

Archive Center Data Collection
Cooperative Support Team for Data Ingest and Highlights
- ECMWF – Raoult, Fuentes
  - Optimally tune ECMWF and NCAR systems to work together
  - Develop protocols to ensure complete transfer (e.g. grid manifests)
- Unidata – Yoksas
  - Expert advice on IDD/LDM
- VETS – Brown
  - Installed, configured/re-configured, monitored IDD/LDM
- DSG – Arnold
  - System administration
- NETS – Mitchell
  - TCP packet analysis and advice
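A manifest-based completeness check could look roughly like the sketch below. The slide does not describe the manifest format, so everything here is an assumption for illustration: one field identifier per line, and the identifier strings themselves are made up.

```python
def missing_from_manifest(manifest_lines, received_ids):
    """Return manifest entries that have not been received, in manifest order.

    manifest_lines: iterable of strings, one expected field ID per line
                    (hypothetical format, blank lines ignored).
    received_ids:   iterable of field IDs that actually arrived.
    """
    expected = [line.strip() for line in manifest_lines if line.strip()]
    received = set(received_ids)
    return [field_id for field_id in expected if field_id not in received]


# Hypothetical identifiers: parameter_leveltype_forecasthour_member
manifest = ["t2m_sfc_000_m01", "t2m_sfc_000_m02", "", "u_pl_006_m01"]
arrived = ["t2m_sfc_000_m01", "u_pl_006_m01"]

print(missing_from_manifest(manifest, arrived))
```

Any identifiers returned would be candidates for a re-send request to the data provider.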

Archive Center Data Collection
Highlights
- Reached target data rate (10 GB/hr) – Oct. 2005
- Build up supporting systems and software around IDD/LDM
- Develop archiving procedures and implement file management for the TIGGE portal – another portal within the CDP.
- Begin realistic daily test data flow – April 2006
- Initiate operational data collection October 1, 2006

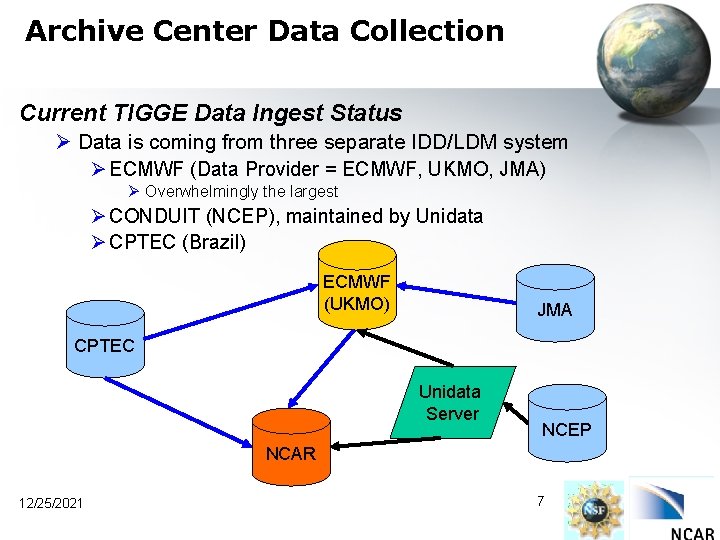

Archive Center Data Collection
Current TIGGE Data Ingest Status
- Data is coming from three separate IDD/LDM systems
  - ECMWF (Data Provider = ECMWF, UKMO, JMA)
    - Overwhelmingly the largest
  - CONDUIT (NCEP), maintained by Unidata
  - CPTEC (Brazil)

[Diagram labels: ECMWF (UKMO), JMA, CPTEC, Unidata Server, NCEP, NCAR]

Archive Center Data Collection
Hourly Volume Receipt from IDD EXP Stream

Archive Center Data Collection
Hourly Volume Receipt from IDD CONDUIT Stream

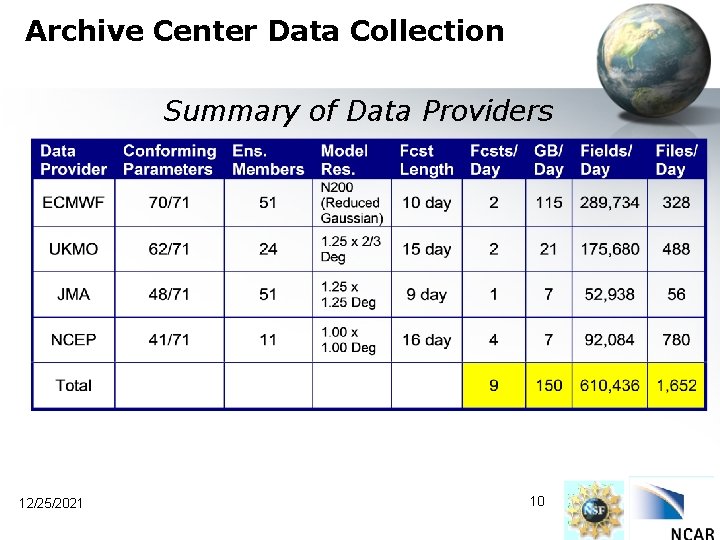

Archive Center Data Collection
Summary of Data Providers

Archive Center Data Collection
TIGGE Archive File Stages
- 2–3 week period of most recent forecast files online (SAN Disk) for direct user download.
- Long term archive maintained on MSS as part of the RDA.
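The two stages amount to an age-based rule for where a user retrieves a file. A minimal sketch, assuming a 21-day online window (the upper end of "2–3 weeks") measured from forecast initialization time; both the window length and the function name are illustrative choices, not the archive's actual configuration.

```python
from datetime import datetime, timedelta

ONLINE_WINDOW = timedelta(days=21)  # assumed: upper end of the 2-3 week range


def storage_stage(init_time, now, online_window=ONLINE_WINDOW):
    """Return "SAN" if the file is still in the online window, else "MSS"."""
    if init_time > now:
        raise ValueError("initialization time is in the future")
    return "SAN" if now - init_time <= online_window else "MSS"


now = datetime(2006, 11, 7, 0, 0)
print(storage_stage(datetime(2006, 11, 1, 0, 0), now))  # recent forecast
print(storage_stage(datetime(2006, 10, 1, 0, 0), now))  # older forecast
```

Files classified as "MSS" would be the ones handled through the long-term archive rather than direct download.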

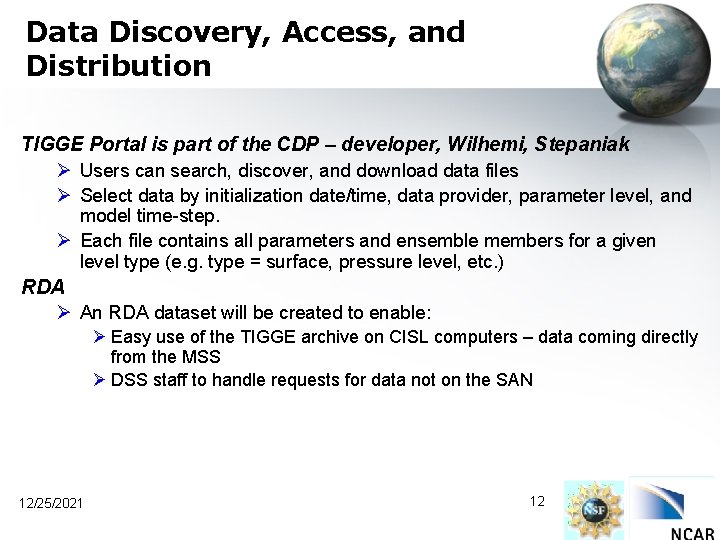

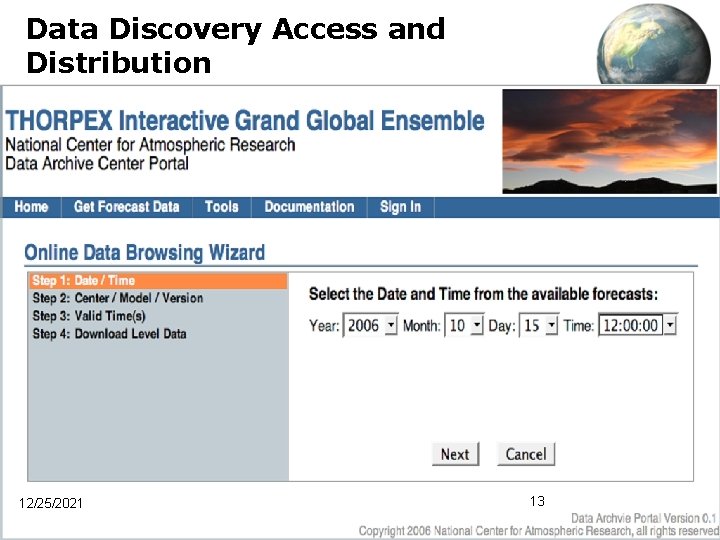

Data Discovery, Access, and Distribution

TIGGE Portal is part of the CDP – developers: Wilhemi, Stepaniak
- Users can search, discover, and download data files
- Select data by initialization date/time, data provider, parameter level, and model time-step.
- Each file contains all parameters and ensemble members for a given level type (e.g. type = surface, pressure level, etc.)

RDA
- An RDA dataset will be created to enable:
  - Easy use of the TIGGE archive on CISL computers – data coming directly from the MSS
  - DSS staff to handle requests for data not on the SAN
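The portal's selection criteria can be pictured as a filter over a file catalog. This is a sketch only: the `ArchiveFile` record and `select_files` function are hypothetical and do not reflect the portal's actual data model.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class ArchiveFile:
    """Hypothetical catalog record: one file per provider, run, level type, step."""
    provider: str
    init_time: datetime
    level_type: str
    forecast_hour: int


def select_files(catalog, provider=None, init_time=None,
                 level_type=None, forecast_hour=None):
    """Return files matching every criterion that is not None."""
    wanted = {"provider": provider, "init_time": init_time,
              "level_type": level_type, "forecast_hour": forecast_hour}
    criteria = {k: v for k, v in wanted.items() if v is not None}
    return [f for f in catalog
            if all(getattr(f, k) == v for k, v in criteria.items())]


run = datetime(2006, 10, 1, 0, 0)
catalog = [
    ArchiveFile("ECMWF", run, "surface", 6),
    ArchiveFile("ECMWF", run, "pressure level", 6),
    ArchiveFile("NCEP", run, "surface", 6),
]
print(select_files(catalog, provider="ECMWF", level_type="surface"))
```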

Data Discovery, Access, and Distribution

Current and Future Challenges
TIGGE Portal
- User registration package to meet TIGGE access requirements
  - Two user groups – default is 48 hour delayed access, real time by special permission from IPO.
- Upgrade to handle subset data requests through a simple interface
- Distribute data requests in a timely manner
- Track user metrics
- Subscription Services
- Provide web services (SOAP/REST)
- Design procedure for 24 x 7 monitoring
- Plan to open public access end of November
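The two-group access rule can be expressed as a small check. The sketch below assumes the 48-hour delay is measured from forecast initialization time, which the slide does not state; the function and parameter names are hypothetical.

```python
from datetime import datetime, timedelta

DEFAULT_DELAY = timedelta(hours=48)  # default user group, from the slide


def can_access(init_time, now, real_time_user=False):
    """True if a user may download a forecast initialized at init_time.

    real_time_user marks the group granted real-time access by special
    permission; everyone else waits DEFAULT_DELAY (assumed to count from
    initialization time).
    """
    if real_time_user:
        return True
    return now - init_time >= DEFAULT_DELAY


now = datetime(2006, 11, 7, 12, 0)
fresh = datetime(2006, 11, 7, 0, 0)
print(can_access(fresh, now))                       # default group
print(can_access(fresh, now, real_time_user=True))  # special permission
```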

Current and Future Challenges
User Analysis and Basic Data Manipulation Tools
- WMO GRIB2 is very new, so all tools are immature
- Forecasts with ensemble members add another dimension
- Improvements underway in:
  - Spherepack
  - NCL/Python (End of November)
  - Gempak (Unidata)
  - NCEP tools (wgrib2, etc.)
  - ECMWF GRIB API
- Need time to work with these and others to gain experience

Current and Future Challenges
Sub-setting and Grid Interpolation
- Users will need more than file downloads at model center native resolution
  - Specify parameter, temporal, and spatial sub-setting across all models
- Requires verified software from each data provider
  - Must build and run effectively on local hardware
- Too soon to estimate computational requirements
  - Don’t know
    - Number of users.
    - All native grid configurations
    - Efficiency of provider-supplied software
  - As the effort becomes defined, computational requirements will be estimated
- The degree to which we address this challenge depends on how the NSF responds to our request for additional TIGGE support.
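To make the spatial sub-setting request concrete, here is a minimal sketch for the simplest case only: a regular latitude/longitude grid held as nested Python lists. It is not the provider software the slide refers to. Native model grids may not be regular, and this sketch does not handle boxes that wrap across the longitude seam or any interpolation.

```python
def subset_grid(lats, lons, values, lat_min, lat_max, lon_min, lon_max):
    """Cut a bounding box out of a regular lat/lon grid.

    values[i][j] is the field value at (lats[i], lons[j]).
    Returns (sub_lats, sub_lons, sub_values) with bounds inclusive.
    """
    rows = [i for i, lat in enumerate(lats) if lat_min <= lat <= lat_max]
    cols = [j for j, lon in enumerate(lons) if lon_min <= lon <= lon_max]
    sub_values = [[values[i][j] for j in cols] for i in rows]
    return [lats[i] for i in rows], [lons[j] for j in cols], sub_values


lats = [40, 30, 20]
lons = [0, 10, 20, 30]
values = [[1, 2, 3, 4],
          [5, 6, 7, 8],
          [9, 10, 11, 12]]

print(subset_grid(lats, lons, values, 25, 45, 10, 20))
```

Applying the same box to every provider's data is only meaningful once the fields share a common grid, which is where the interpolation challenge on this slide comes in.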

Summary
- There has been and will be much collaboration, throughout UCAR and internationally.
- Initiated operational data collection October 1, 2006.
  - Currently, 150 GB/day.
- The TIGGE archive and access system is designed to accommodate irregularity between providers.
- Plan to open public access, via the TIGGE portal, end of November.
- User-specified sub-setting and grid interpolations are a major challenge - looking for help from NSF.

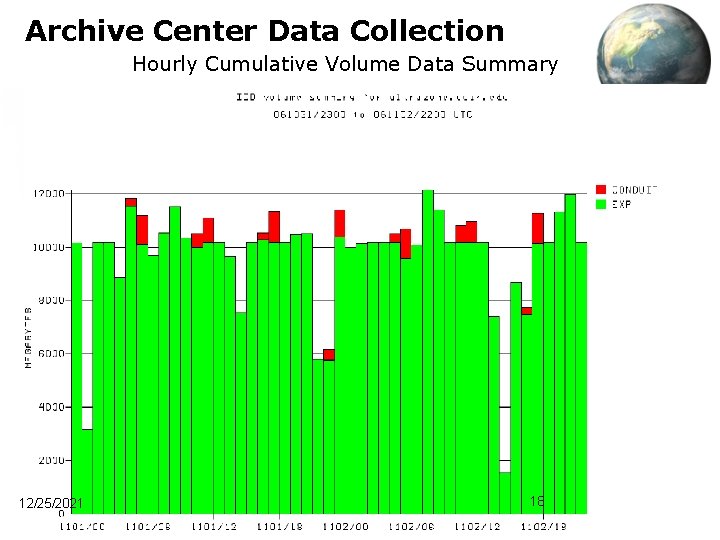

Archive Center Data Collection
Hourly Cumulative Volume Data Summary

Current and Future Challenges
Transition from Dataportal to Ultra-zone was not seamless
- Machine differences required a number of adjustments
  - Clock synchronization with ECMWF
  - TCP cache and file I/O settings
  - LDM configuration
- Things are running well now.

- Slides: 19