Thread Block Compaction for Efficient SIMT Control Flow

Wilson W. L. Fung and Tor M. Aamodt, University of British Columbia. HPCA-17, Feb 14, 2011.

Graphics Processor Unit (GPU)

- Commodity many-core accelerator
  - SIMD HW: compute BW + efficiency
  - Non-graphics API: CUDA, OpenCL, DirectCompute
- Programming model: hierarchy of scalar threads
  - SIMD-ness not exposed at ISA
  - Scalar threads grouped into warps, run in lockstep
  - Single-Instruction, Multiple-Thread (SIMT)

Figure: a grid contains thread blocks, a thread block contains warps, and a warp contains scalar threads.

![SIMT Execution Model: Reconvergence Stack](http://slidetodoc.com/presentation_image_h/21e3ce8519ce24a0cba27afea4425fe0/image-3.jpg)

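SIMT Execution Model: Reconvergence Stack

Example code, with A[] = {4, 8, 12, 16}:

    A: K = A[tid.x];
    B: if (K > 10)
    C:     K = 10;
       else
    D:     K = 0;
    E: B = C[tid.x] + K;

Execution over time for one warp of four threads (-- marks an idle lane):

| Block | Active threads |
|---|---|
| A | 1 2 3 4 |
| B | 1 2 3 4 |
| C | 1 2 -- -- |
| D | -- -- 3 4 |
| E | 1 2 3 4 |

Reconvergence stack after the branch at B:

| PC | RPC | Active Mask |
|---|---|---|
| E | -- | 1111 |
| D | E | 0011 |
| C | E | 1100 |

- Branch divergence: 50% SIMD efficiency!
- In some cases: SIMD efficiency 20%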
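
The stack behaviour on this slide can be sketched in Python. This is a minimal, hypothetical model rather than the hardware from the talk: the names `run_warp` and `simd_efficiency`, the `(pc, rpc, mask)` tuple layout, and the sample input (chosen so that threads 1 and 2 take C, as in the trace) are all assumptions made for illustration.

```python
def run_warp(a_values):
    """Run blocks A..E for one warp using a reconvergence stack.

    Returns the issue trace as (block, active-mask string) pairs.
    """
    n = len(a_values)
    k = [0] * n
    trace = []
    # Each entry is (pc, rpc, active mask); the last list item is the top of stack.
    stack = [("A", None, [True] * n)]
    while stack:
        pc, rpc, mask = stack.pop()
        trace.append((pc, "".join("1" if m else "0" for m in mask)))
        if pc == "A":                      # A: K = A[tid.x];
            k = list(a_values)
            stack.append(("B", rpc, mask))
        elif pc == "B":                    # B: if (K > 10)
            taken = [m and k[i] > 10 for i, m in enumerate(mask)]
            not_taken = [m and not t for m, t in zip(mask, taken)]
            stack.append(("E", rpc, mask))          # reconvergence entry
            if any(not_taken):
                stack.append(("D", "E", not_taken))
            if any(taken):
                stack.append(("C", "E", taken))
        elif pc == "C":                    # C: K = 10;
            k = [10 if m else v for v, m in zip(k, mask)]
        elif pc == "D":                    # D: K = 0;
            k = [0 if m else v for v, m in zip(k, mask)]
        # C and D fall through to their RPC (E), so their entries are simply
        # popped; E is the last block and pushes nothing.
    return trace


def simd_efficiency(trace, blocks):
    """Fraction of lanes doing useful work while issuing the listed blocks."""
    masks = [mask for pc, mask in trace if pc in blocks]
    return sum(m.count("1") for m in masks) / sum(len(m) for m in masks)


# Hypothetical input: threads 1 and 2 have K > 10 and take C.
trace = run_warp([12, 16, 4, 8])
print(trace)
print(simd_efficiency(trace, {"C", "D"}))
```

In this sketch the two divergent blocks each issue with half of the lanes idle, which is where the 50% figure comes from; counting all five blocks instead gives a higher overall ratio.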

Dynamic Warp Formation (W. Fung et al., MICRO 2007)

Static warps over time (-- marks an idle lane):

| Block | Warp 0 | Warp 1 | Warp 2 |
|---|---|---|---|
| A | 1 2 3 4 | 5 6 7 8 | 9 10 11 12 |
| B | 1 2 3 4 | 5 6 7 8 | 9 10 11 12 |
| C | 1 2 -- -- | 5 -- 7 8 | -- -- 11 12 |
| D | -- -- 3 4 | -- 6 -- -- | 9 10 -- -- |
| E | 1 2 3 4 | 5 6 7 8 | 9 10 11 12 |

- Pack: the threads at C are regrouped into the dynamic warps 1 2 7 8 and 5 -- 11 12
- SIMD efficiency: 88%
- Figure label: Reissue/Memory Latency

This Paper

- Identified DWF pathologies
  - Greedy warp scheduling leads to starvation: lower SIMD efficiency
  - Breaks up coalesced memory access: lower memory efficiency
  - Some CUDA apps require lockstep execution of static warps: DWF breaks them!
  - Extreme case: 5X slowdown
- Additional HW to fix these issues?
- Simpler solution: Thread Block Compaction

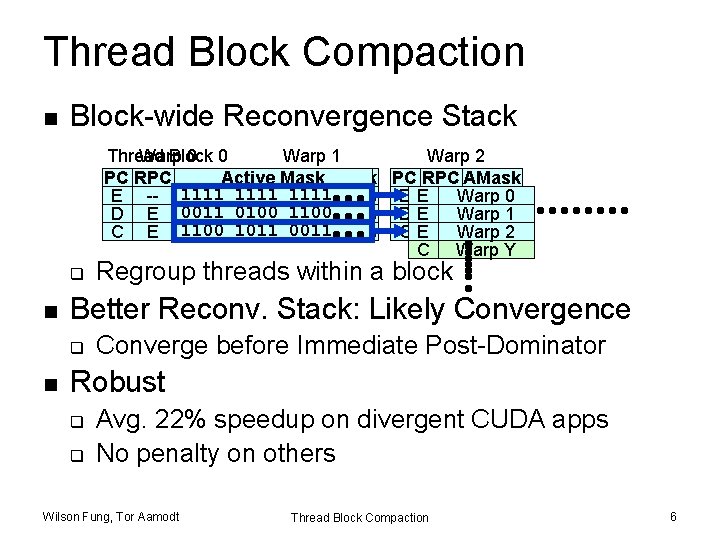

Thread Block Compaction

- Block-wide reconvergence stack
  - Regroup threads within a block
- Better reconv. stack: likely convergence
  - Converge before immediate post-dominator
- Robust
  - Avg. 22% speedup on divergent CUDA apps
  - No penalty on others

Figure: the per-warp stacks of warps 0, 1, and 2 are merged into one block-wide stack for the thread block, and its entries are compacted into dynamic warps (labeled T, U, X, Y).

| PC | RPC | Active Mask (Warp 0, Warp 1, Warp 2) |
|---|---|---|
| E | -- | 1111 1111 1111 |
| D | E | 0011 0100 1100 |
| C | E | 1100 1011 0011 |

Outline

- Introduction
- GPU Microarchitecture
- DWF Pathologies
- Thread Block Compaction
- Likely-Convergence
- Experimental Results
- Conclusion

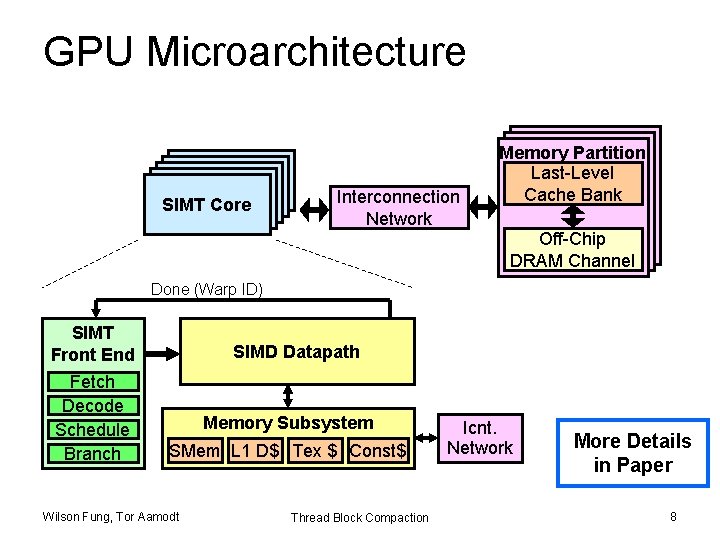

GPU Microarchitecture

Figure: block diagram of the GPU.

- SIMT cores connect through an interconnection network to the memory partitions
- Each memory partition has a last-level cache bank and an off-chip DRAM channel
- Inside a SIMT core: SIMT front end (fetch, decode, schedule, branch), SIMD datapath, and memory subsystem (SMem, L1 D$, Tex $, Const$)
- The datapath reports Done (Warp ID) back to the front end
- More details in paper

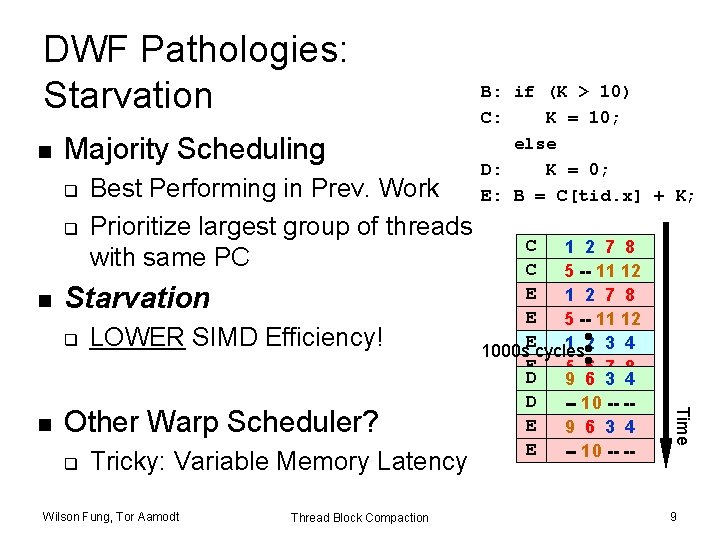

DWF Pathologies: Starvation

- Majority scheduling
  - Best performing in prev. work
  - Prioritize largest group of threads with same PC
- Starvation
  - LOWER SIMD efficiency!
- Other warp scheduler?
  - Tricky: variable memory latency

Example code:

    B: if (K > 10)
    C:     K = 10;
       else
    D:     K = 0;
    E: B = C[tid.x] + K;

Figure: over time, the dynamic warps at C (1 2 7 8 and 5 -- 11 12) move on to E, while the threads at D (9 6 3 4 and -- 10 -- --) wait for 1000s of cycles. When D finally runs, E is issued again for those threads as the partially filled warps 9 6 3 4 and -- 10 -- --.
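
The starvation pattern on this slide can be reproduced with a toy model of majority scheduling. This is a simplified sketch under stated assumptions: every group of threads at the same PC is issued in one step regardless of warp size, memory latency is ignored, and the names `NEXT_PC` and `majority_schedule` are hypothetical.

```python
from collections import Counter

# Successor of each block in the if/else example; None means the thread is done.
NEXT_PC = {"C": "E", "D": "E", "E": None}


def majority_schedule(thread_pcs):
    """Repeatedly issue the largest group of threads that share a PC.

    thread_pcs maps thread id -> current PC. Returns the issue order as
    (pc, sorted thread ids) pairs.
    """
    pcs = dict(thread_pcs)
    order = []
    while any(pc is not None for pc in pcs.values()):
        counts = Counter(pc for pc in pcs.values() if pc is not None)
        pc, _ = counts.most_common(1)[0]          # majority PC
        group = sorted(t for t, p in pcs.items() if p == pc)
        order.append((pc, group))
        for t in group:
            pcs[t] = NEXT_PC[pc]
    return order


# Threads 1, 2, 5, 7, 8, 11, 12 took C; threads 3, 4, 6, 9, 10 took D.
start = {t: "C" for t in (1, 2, 5, 7, 8, 11, 12)}
start.update({t: "D" for t in (3, 4, 6, 9, 10)})
order = majority_schedule(start)
for pc, group in order:
    print(pc, group)
```

Because the seven threads leaving C outnumber the five still waiting at D, the model issues E before D, so E ends up running twice with partial groups instead of once with the full block.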

DWF Pathologies: Extra Uncoalesced Accesses

- Coalesced memory access = memory SIMD
  - 1st-order CUDA programmer optimization
- Not preserved by DWF

Example: E: B = C[tid.x] + K; where threads 1 to 4, 5 to 8, and 9 to 12 read the memory blocks at 0x100, 0x140, and 0x180.

| | Warps executing E | #Acc |
|---|---|---|
| No DWF | 1 2 3 4; 5 6 7 8; 9 10 11 12 | 3 |
| With DWF | 1 2 7 12; 9 6 3 8; 5 10 11 4 | 9 |

- L1 cache absorbs redundant memory traffic
- L1$ port conflict
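
The access counts on this slide follow from counting, for each warp, how many distinct memory blocks its threads touch. The sketch below assumes the mapping implied by the figure (four consecutive threads per 0x40-byte block starting at 0x100); the helper names `segment_of` and `count_accesses` are hypothetical.

```python
def segment_of(tid, base=0x100, threads_per_segment=4, segment_bytes=0x40):
    """Memory block touched by thread `tid` (threads are numbered from 1)."""
    return base + ((tid - 1) // threads_per_segment) * segment_bytes


def count_accesses(warps):
    """One access per distinct memory block touched by each warp."""
    return sum(len({segment_of(t) for t in warp if t is not None})
               for warp in warps)


static_warps = [[1, 2, 3, 4], [5, 6, 7, 8], [9, 10, 11, 12]]
dwf_warps = [[1, 2, 7, 12], [9, 6, 3, 8], [5, 10, 11, 4]]

print(count_accesses(static_warps))  # static warps: one block per warp
print(count_accesses(dwf_warps))     # DWF warps: every warp spans all three blocks
```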

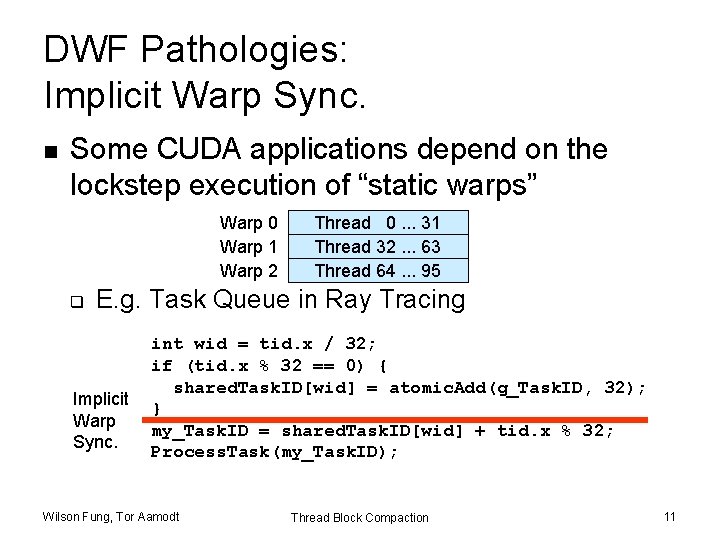

DWF Pathologies: Implicit Warp Sync.

- Some CUDA applications depend on the lockstep execution of “static warps”
  - Warp 0: threads 0...31; Warp 1: threads 32...63; Warp 2: threads 64...95
  - E.g. task queue in ray tracing

Implicit warp sync. example:

    int wid = tid.x / 32;
    if (tid.x % 32 == 0) {
        sharedTaskID[wid] = atomicAdd(g_TaskID, 32);
    }
    my_TaskID = sharedTaskID[wid] + tid.x % 32;
    ProcessTask(my_TaskID);

Observation

- Compute kernels usually contain divergent and non-divergent (coherent) code segments
- Coalesced memory access usually in coherent code segments
  - DWF no benefit there

Figure: execution alternates between coherent and divergent segments. Static warps run the coherent code (with coalesced LD/ST), dynamic warps form at the divergence, and the warps are reset to the static arrangement at the reconvergence point.

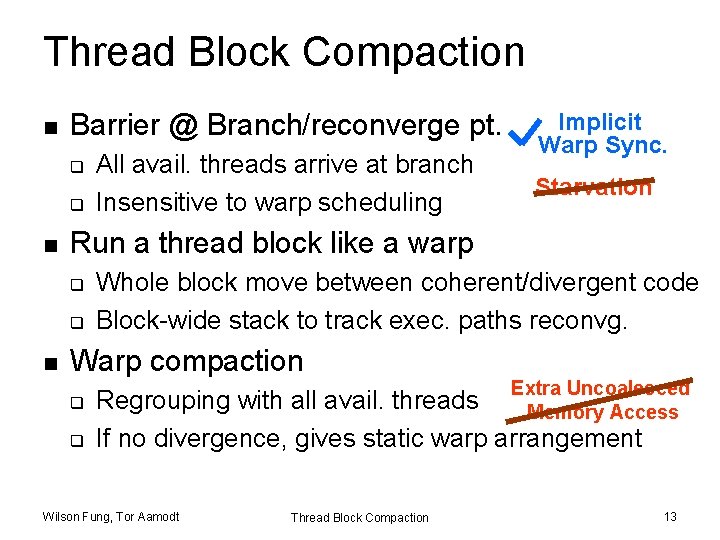

Thread Block Compaction

- Barrier @ branch/reconverge pt. (addresses starvation)
  - All avail. threads arrive at branch
  - Insensitive to warp scheduling
- Run a thread block like a warp (addresses implicit warp sync.)
  - Whole block moves between coherent/divergent code
  - Block-wide stack to track exec. paths reconvg.
- Warp compaction (addresses extra uncoalesced memory access)
  - Regrouping with all avail. threads
  - If no divergence, gives static warp arrangement

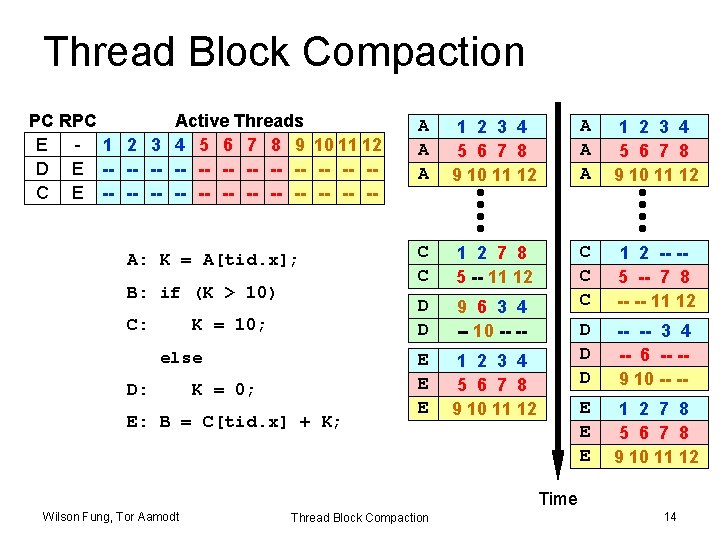

Thread Block Compaction

Example code:

    A: K = A[tid.x];
    B: if (K > 10)
    C:     K = 10;
       else
    D:     K = 0;
    E: B = C[tid.x] + K;

Block-wide stack after the branch at B:

| PC | RPC | Active Threads |
|---|---|---|
| E | -- | 1 2 3 4 5 6 7 8 9 10 11 12 |
| D | E | -- -- 3 4 -- 6 -- -- 9 10 -- -- |
| C | E | 1 2 -- -- 5 -- 7 8 -- -- 11 12 |

Warps issued over time (-- marks an idle lane):

| Block | Per-warp stack | Thread block compaction |
|---|---|---|
| A | 1 2 3 4; 5 6 7 8; 9 10 11 12 | 1 2 3 4; 5 6 7 8; 9 10 11 12 |
| C | 1 2 -- --; 5 -- 7 8; -- -- 11 12 | 1 2 7 8; 5 -- 11 12 |
| D | -- -- 3 4; -- 6 -- --; 9 10 -- -- | 9 6 3 4; -- 10 -- -- |
| E | 1 2 3 4; 5 6 7 8; 9 10 11 12 | 1 2 3 4; 5 6 7 8; 9 10 11 12 |
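
The saving in this example can be checked with a small model of the block-wide stack. This is a sketch under assumptions, not the paper's implementation: each thread is taken to stay in its home lane (`(tid - 1) % WARP_SIZE`), so an entry needs as many compacted warps as its most crowded lane, and the function names are hypothetical.

```python
WARP_SIZE = 4


def block_wide_stack(n_threads, taken):
    """Stack entries (pc, rpc, active threads) after the branch at B."""
    everyone = set(range(1, n_threads + 1))
    return [
        ("E", None, everyone),                 # reconvergence entry
        ("D", "E", everyone - set(taken)),     # else path
        ("C", "E", set(taken)),                # taken path
    ]


def static_warps_needed(threads):
    """Warps issued by per-warp stacks: every static warp with an active thread."""
    return len({(t - 1) // WARP_SIZE for t in threads})


def compacted_warps_needed(threads):
    """Warps issued after compaction when threads keep their home lane."""
    per_lane = [0] * WARP_SIZE
    for t in threads:
        per_lane[(t - 1) % WARP_SIZE] += 1
    return max(per_lane)


stack = block_wide_stack(12, taken={1, 2, 5, 7, 8, 11, 12})
for pc, rpc, threads in stack[1:]:
    print(pc, sorted(threads),
          static_warps_needed(threads), compacted_warps_needed(threads))
```

For both C and D the model needs two compacted warps where the per-warp stacks issue three, matching the warps listed on the slide.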

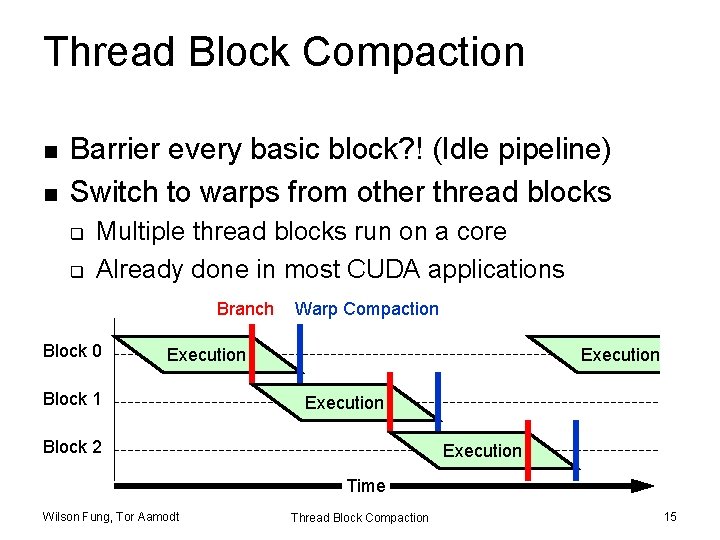

Thread Block Compaction

- Barrier every basic block?! (Idle pipeline)
- Switch to warps from other thread blocks
  - Multiple thread blocks run on a core
  - Already done in most CUDA applications

Figure: timeline of blocks 0, 1, and 2 on one core. While one block waits at a branch for warp compaction, the other blocks execute.

Microarchitecture Modification

- Per-warp stack becomes block-wide stack
- I-buffer + TIDs becomes warp buffer
  - Stores the dynamic warps
- New unit: thread compactor
  - Translates active mask to compact dynamic warps
- More detail in paper

Figure: pipeline diagram with Fetch, I-Cache, Decode, Warp Buffer, Score-Board, Issue, Reg. File, ALUs, and MEM, plus the Block-Wide Stack and Thread Compactor. Labeled signals: Branch Target PC, Valid[1:N], Active Mask, Pred., Done (WID).

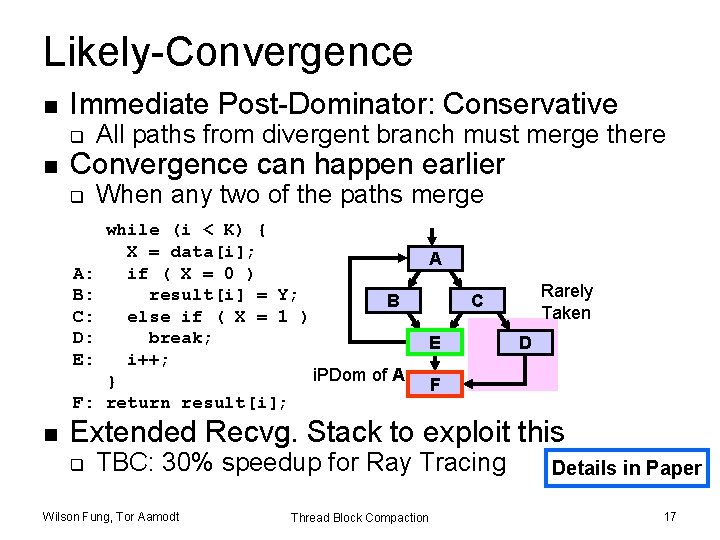

Likely-Convergence

- Immediate post-dominator: conservative
  - All paths from divergent branch must merge there
- Convergence can happen earlier
  - When any two of the paths merge
- Extended recvg. stack to exploit this
  - TBC: 30% speedup for ray tracing
- Details in paper

Example (the slide labels the basic blocks A to F; the break is rarely taken, and the iPDom of A is the return after the loop):

    while (i < K) {
        X = data[i];
        if (X == 0)
            result[i] = Y;
        else if (X == 1)
            break;
        i++;
    }
    return result[i];

Evaluation

- Simulation: GPGPU-Sim (2.2.1b)
  - ~Quadro FX5800 + L1 & L2 caches
- 21 benchmarks
  - All of GPGPU-Sim original benchmarks
  - Rodinia benchmarks
  - Other important applications:
    - Face Detection from Visbench (UIUC)
    - DNA Sequencing (MUMMER-GPU++)
    - Molecular Dynamics Simulation (NAMD)
    - Ray Tracing from NVIDIA Research

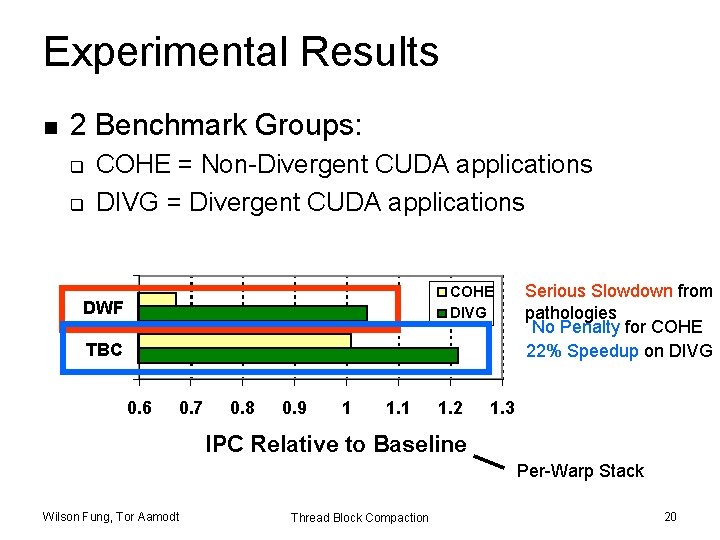

Experimental Results

- 2 benchmark groups:
  - COHE = non-divergent CUDA applications
  - DIVG = divergent CUDA applications
- DWF: serious slowdown from pathologies
- TBC: no penalty for COHE
- TBC: 22% speedup on DIVG

Figure: bar chart of IPC relative to the baseline per-warp stack (axis from 0.6 to 1.3) for DWF and TBC on the COHE and DIVG groups.

Conclusion

- Thread Block Compaction
  - Addressed some key challenges of DWF
  - One significant step closer to reality
- Benefit from advancements on reconvergence stack
  - Likely-convergence point
  - Extensible: integrate other stack-based proposals

Thank You!

Backups

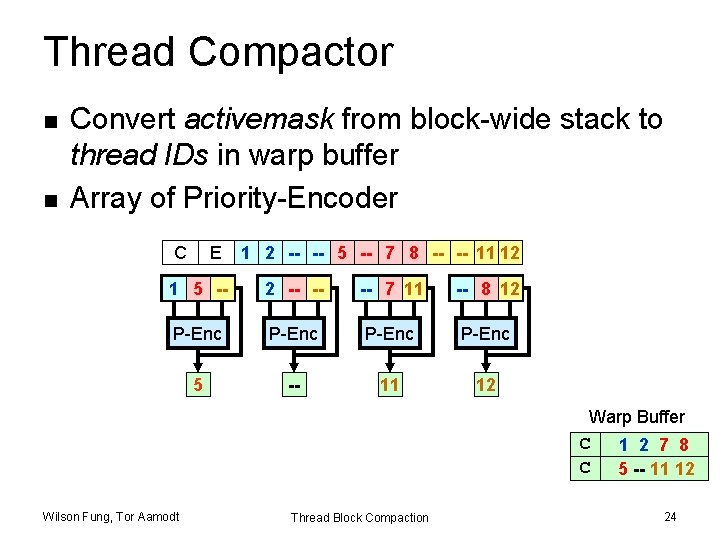

Thread Compactor

- Convert active mask from block-wide stack to thread IDs in warp buffer
- Array of priority encoders

Figure: the stack entry with PC C and RPC E has active threads 1 2 -- -- 5 -- 7 8 -- -- 11 12. The threads are split by lane into the columns (1 5 --), (2 -- --), (-- 7 11), and (-- 8 12), with one priority encoder (P-Enc) per lane. The warp buffer receives the dynamic warps C 1 2 7 8 and C 5 -- 11 12.
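
The per-lane priority encoding can be mimicked in a few lines of Python. This is an illustrative sketch only: the function name `compact` and the list-of-lists representation (with `None` for an inactive lane) are assumptions, and a real priority encoder works on mask bits rather than Python lists.

```python
def compact(active, warp_size=4):
    """Compact the active threads of a stack entry into dynamic warps.

    `active` holds one row per static warp; each row lists the thread id in
    every lane, or None where the thread is inactive. Threads never change
    lanes: lane l of each dynamic warp is filled from lane l of the static
    warps, in order, which is what one priority encoder per lane would do.
    """
    lanes = [[row[l] for row in active if row[l] is not None]
             for l in range(warp_size)]
    n_warps = max(len(queue) for queue in lanes)
    return [[queue[i] if i < len(queue) else None for queue in lanes]
            for i in range(n_warps)]


entry_c = [[1, 2, None, None], [5, None, 7, 8], [None, None, 11, 12]]
entry_d = [[None, None, 3, 4], [None, 6, None, None], [9, 10, None, None]]

print(compact(entry_c))
print(compact(entry_d))
```

Keeping each thread in its home lane is what limits the compaction: thread 5 cannot fill the empty lane 1 slot of the second warp, so that slot stays idle.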

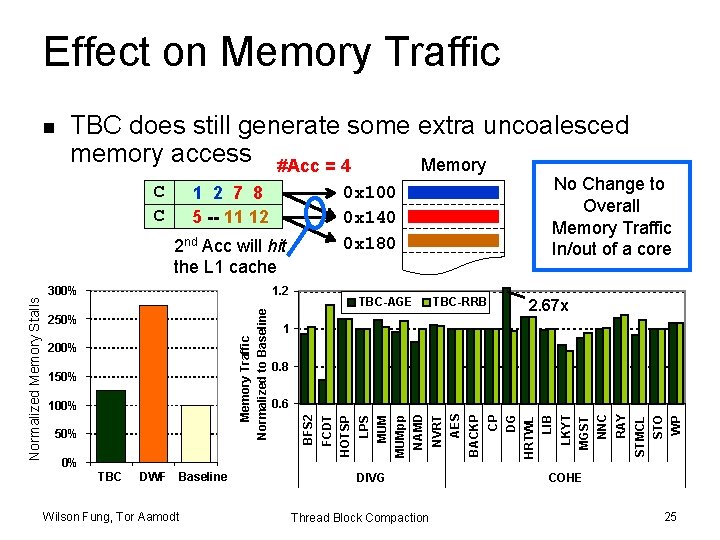

Effect on Memory Traffic

- TBC does still generate some extra uncoalesced memory access
  - Example: the dynamic warps C 1 2 7 8 and C 5 -- 11 12 over the memory blocks 0x100, 0x140, 0x180 give #Acc = 4
  - 2nd acc. will hit the L1 cache
- No change to overall memory traffic in/out of a core

Figure: two charts over the DIVG and COHE benchmarks (AES, BACKP, BFS2, CP, DG, FCDT, HOTSP, HRTWL, LIB, LKYT, LPS, MGST, MUM, MUMpp, NAMD, NNC, NVRT, RAY, STMCL, STO, WP). One plots normalized memory stalls (0% to 300%), with series including TBC-AGE and TBC-RRB. The other plots memory traffic normalized to baseline (0.6 to 1.2, with one bar labeled 2.67x) for TBC, DWF, and baseline.
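
The difference between extra accesses and extra off-core traffic can be illustrated with a toy L1 model. The sketch assumes an idealized cache that never evicts, the same thread-to-block mapping as the figure (four consecutive threads per 0x40-byte block from 0x100), and a hypothetical helper named `l1_stats`.

```python
def l1_stats(warps, base=0x100, threads_per_block=4, block_bytes=0x40):
    """Return (accesses issued to L1, misses that leave the core)."""
    cached = set()          # idealized L1: blocks are never evicted
    accesses = misses = 0
    for warp in warps:
        blocks = {base + ((t - 1) // threads_per_block) * block_bytes
                  for t in warp if t is not None}
        for block in sorted(blocks):
            accesses += 1
            if block not in cached:
                misses += 1
                cached.add(block)
    return accesses, misses


static_warps = [[1, 2, 3, 4], [5, 6, 7, 8], [9, 10, 11, 12]]
tbc_warps = [[1, 2, 7, 8], [5, None, 11, 12]]

print(l1_stats(static_warps))
print(l1_stats(tbc_warps))
```

In this model the compacted warps issue four accesses instead of three, but the second access to 0x140 hits in the cache, so the number of blocks fetched from outside the core is unchanged.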

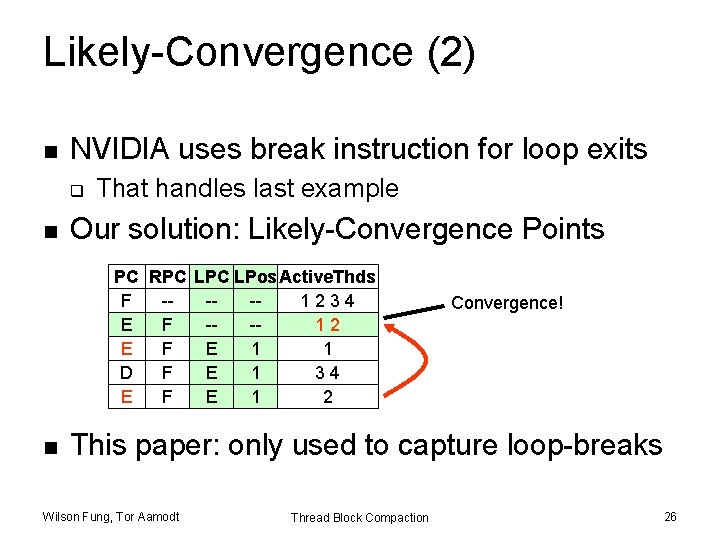

Likely-Convergence (2)

- NVIDIA uses break instruction for loop exits
  - That handles last example
- Our solution: likely-convergence points
- This paper: only used to capture loop-breaks

Figure: reconvergence stack with the columns PC, RPC, LPos, and ActiveThds, stepped through blocks B to F of the previous example for threads 1 to 4 and ending at the label "Convergence!".

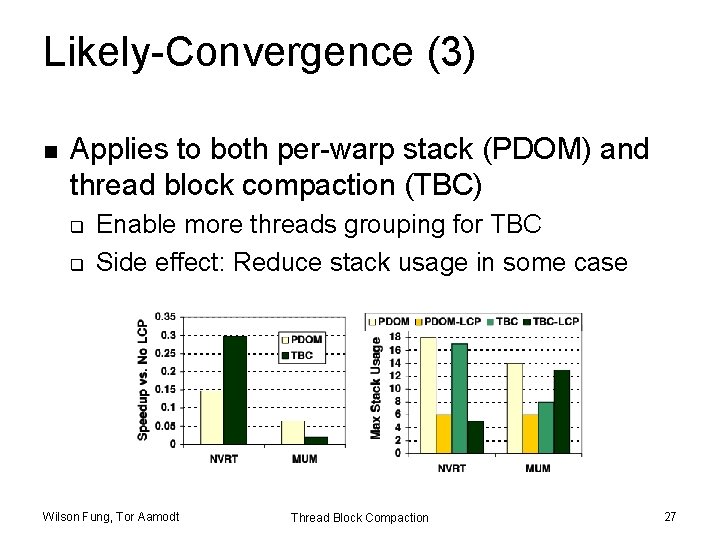

Likely-Convergence (3)

- Applies to both per-warp stack (PDOM) and thread block compaction (TBC)
  - Enables more thread grouping for TBC
  - Side effect: reduces stack usage in some cases
