
Theory Building Through Praxis Discourse: A Theory- and Practice-Informed Model of a Transformative Participatory Approach to Evaluation

Michael A. Harnar, Doctoral Candidate, Claremont Graduate University

American Evaluation Association Annual Conference, Nov 5, 2011; Session 842, Anaheim, CA

Today’s Agenda

- Discipline Building/Background
- The Method
  - Survey
  - Modeling Practice
  - Webinars
- Preliminary Findings
  - Key T-PE Variables
  - T-PE Questions
- Next Steps

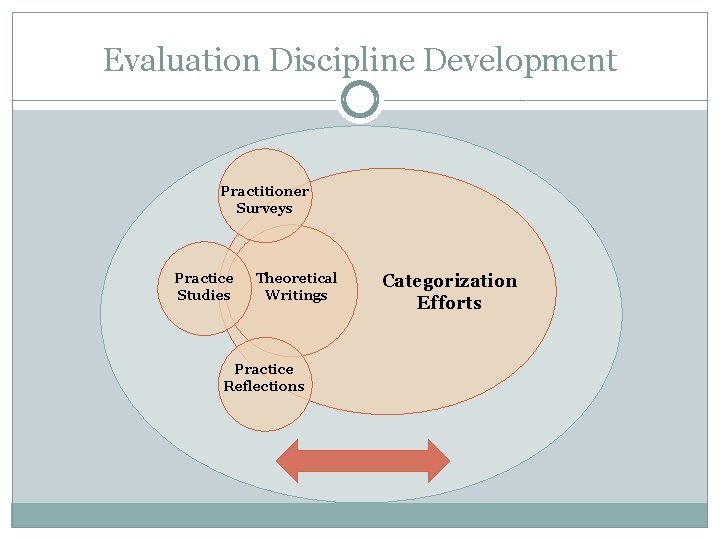

Evaluation Discipline Development

- Practitioner Surveys
- Practice Studies
- Theoretical Writings
- Practice Reflections
- Categorization Efforts

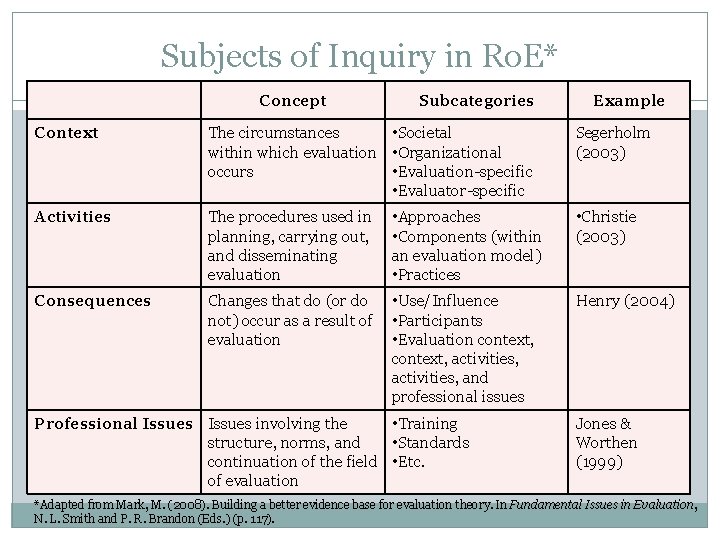

Subjects of Inquiry in RoE*

| Concept | Description | Subcategories | Example |
| --- | --- | --- | --- |
| Context | The circumstances within which evaluation occurs | Societal; Organizational; Evaluation-specific; Evaluator-specific | Segerholm (2003) |
| Activities | The procedures used in planning, carrying out, and disseminating evaluation | Approaches; Components (within an evaluation model); Practices | Christie (2003) |
| Consequences | Changes that do (or do not) occur as a result of evaluation | Use/Influence; Participants; Evaluation context, activities, and professional issues | Henry (2004) |
| Professional Issues | Issues involving the structure, norms, and continuation of the field of evaluation | Training; Standards; Etc. | Jones & Worthen (1999) |

*Adapted from Mark, M. (2008). Building a better evidence base for evaluation theory. In N. L. Smith and P. R. Brandon (Eds.), Fundamental Issues in Evaluation (p. 117).

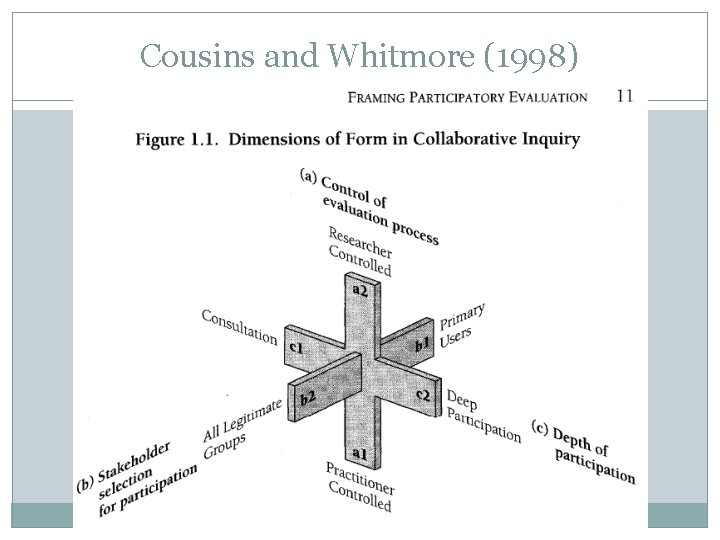

Cousins and Whitmore (1998)

P-PE vs. T-PE

- Practical Participatory Evaluation (P-PE) involves key stakeholders to:
  - Increase evaluation’s usefulness
  - Increase its use
  - Grounded data & findings
  - Organizational decision-making
- Transformative Participatory Evaluation (T-PE) involves diverse stakeholders to:
  - Grounded data & findings
  - Empower participants
  - Increase social action

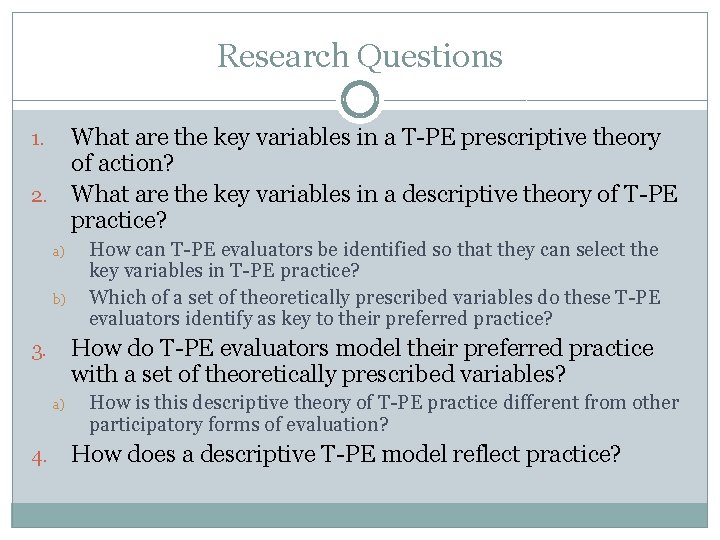

Research Questions

1. What are the key variables in a T-PE prescriptive theory of action?
2. What are the key variables in a descriptive theory of T-PE practice?
   a) How can T-PE evaluators be identified so that they can select the key variables in T-PE practice?
   b) Which of a set of theoretically prescribed variables do these T-PE evaluators identify as key to their preferred practice?
3. How do T-PE evaluators model their preferred practice with a set of theoretically prescribed variables?
   a) How is this descriptive theory of T-PE practice different from other participatory forms of evaluation?
4. How does a descriptive T-PE model reflect practice?

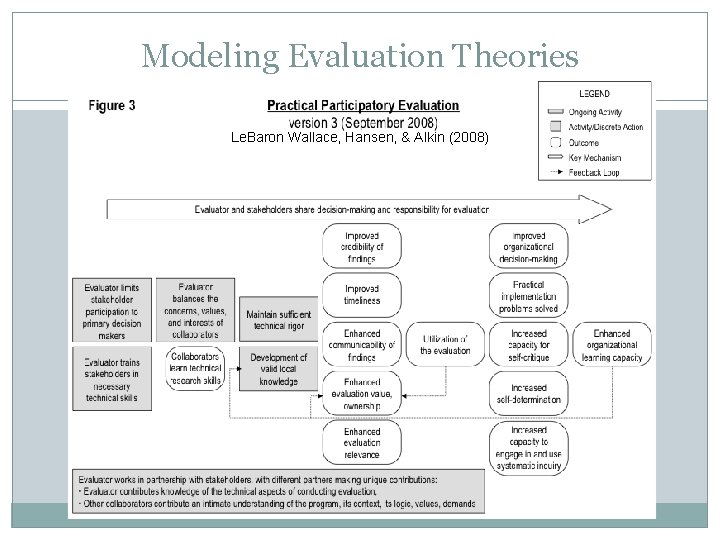

Modeling Evaluation Theories

LeBaron Wallace, Hansen, & Alkin (2008)

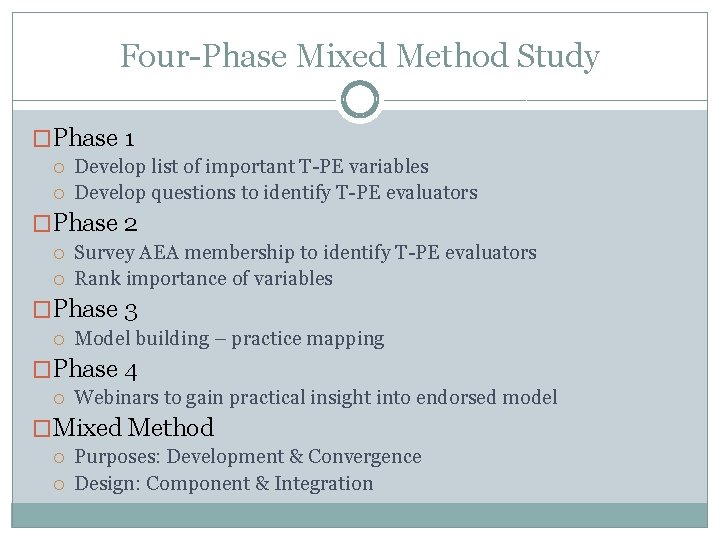

Four-Phase Mixed Method Study

- Phase 1
  - Develop list of important T-PE variables
  - Develop questions to identify T-PE evaluators
- Phase 2
  - Survey AEA membership to identify T-PE evaluators
  - Rank importance of variables
- Phase 3
  - Model building: practice mapping
- Phase 4
  - Webinars to gain practical insight into endorsed model
- Mixed Method
  - Purposes: Development & Convergence
  - Design: Component & Integration

Variables and Question Development

- Cousins, Whitmore, Mertens
  - Wiki-based dialogue
  - Produced 30 variables
  - Produced 8 questions

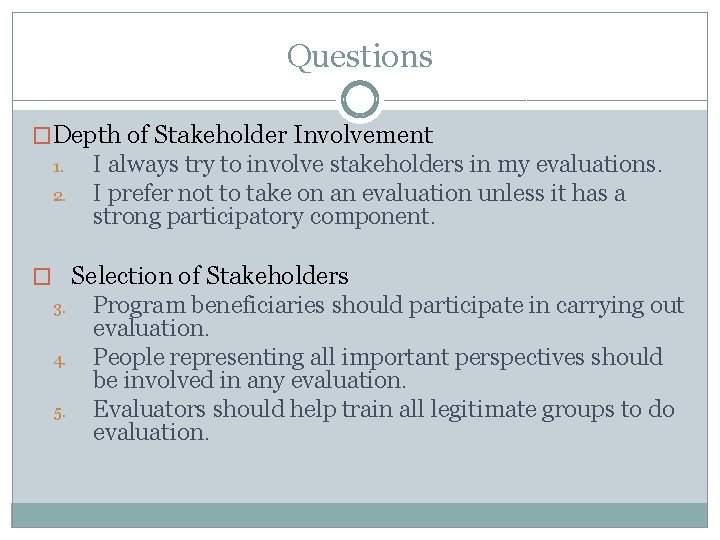

Questions

- Depth of Stakeholder Involvement
  1. I always try to involve stakeholders in my evaluations.
  2. I prefer not to take on an evaluation unless it has a strong participatory component.
- Selection of Stakeholders
  3. Program beneficiaries should participate in carrying out evaluation.
  4. People representing all important perspectives should be involved in any evaluation.
  5. Evaluators should help train all legitimate groups to do evaluation.

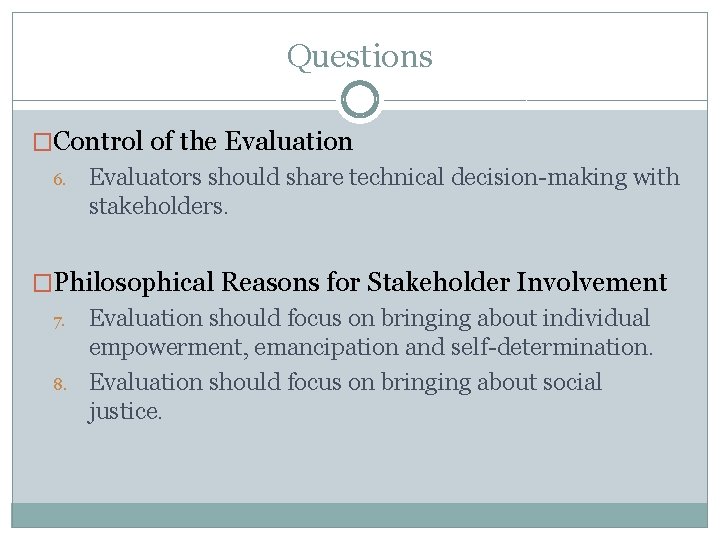

Questions

- Control of the Evaluation
  6. Evaluators should share technical decision-making with stakeholders.
- Philosophical Reasons for Stakeholder Involvement
  7. Evaluation should focus on bringing about individual empowerment, emancipation and self-determination.
  8. Evaluation should focus on bringing about social justice.

Instructions

- In answering these questions, please think about how you prefer to practice evaluation. I know that answers to these questions are almost always context dependent, and "it depends" might be your answer choice. But, I would like you to think of your ideal evaluation situation.
- The term "stakeholder" is used here to mean anyone, other than the evaluator, with a vested interest in the entity (evaluand) being evaluated.
- "Participants" are those stakeholders who take an active role in the evaluation.
- "Participation" is any active role and may vary widely in breadth and depth.

Questions

Response scale labels: Strongly Disagree, Slightly Disagree, Somewhat Agree, Strongly Agree

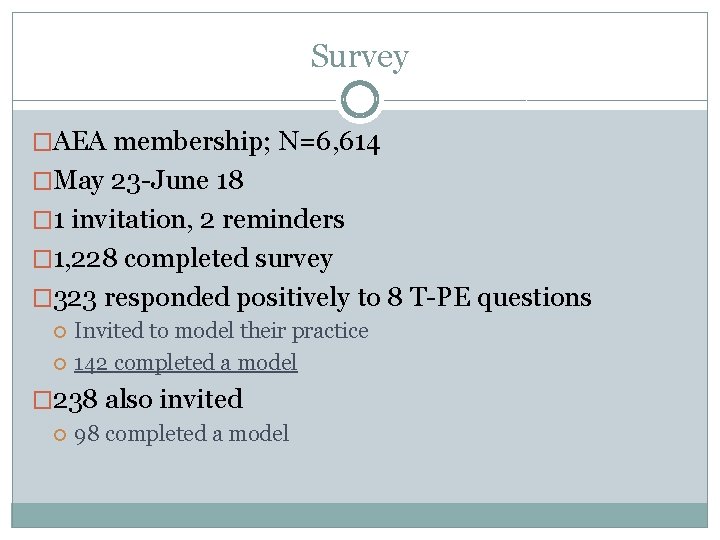

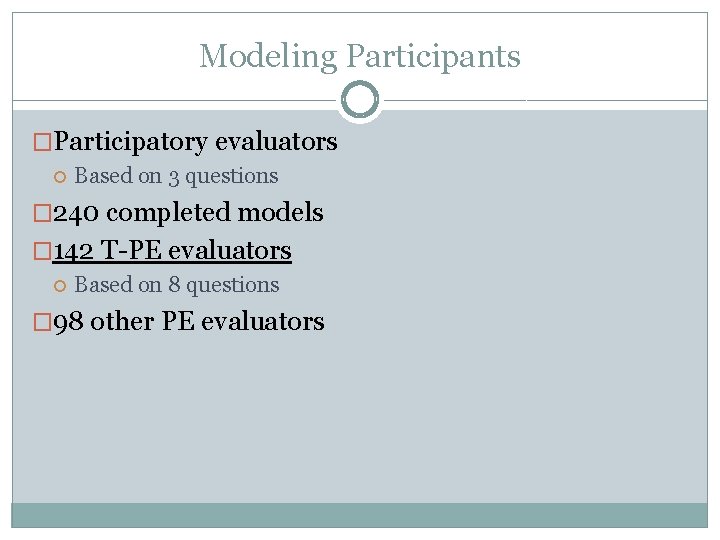

Survey

- AEA membership; N = 6,614
- May 23 to June 18
- 1 invitation, 2 reminders
- 1,228 completed the survey
- 323 responded positively to the 8 T-PE questions
  - Invited to model their practice
  - 142 completed a model
- 238 also invited
  - 98 completed a model
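The counts on this slide imply a few rates that the slide does not state. The following is a minimal sketch of that arithmetic, assuming that N = 6,614 is the number of members invited; the variable names and the `rate` helper are illustrative and not part of the study.

```python
# Counts as reported on the Survey slide.
invited = 6614          # AEA membership (assumed here to be the number invited)
completed = 1228        # completed the survey
tpe_invited = 323       # responded positively to the 8 T-PE questions
tpe_models = 142        # of those, completed a model
other_invited = 238     # additional respondents invited to model
other_models = 98       # of those, completed a model


def rate(part, whole):
    """Return part/whole as a percentage rounded to one decimal place."""
    return round(100 * part / whole, 1)


survey_response_rate = rate(completed, invited)       # about 18.6
tpe_model_rate = rate(tpe_models, tpe_invited)        # about 44.0
other_model_rate = rate(other_models, other_invited)  # about 41.2
total_models = tpe_models + other_models              # 240

print(survey_response_rate, tpe_model_rate, other_model_rate, total_models)
```

The total of 240 completed models agrees with the figure given on the Modeling Participants slide.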

Modeling Filter Questions

- I always try to involve stakeholders in my evaluations.
- I prefer not to take on an evaluation unless it has a strong participatory component.
- I prefer to involve stakeholders in every possible stage of the evaluation.

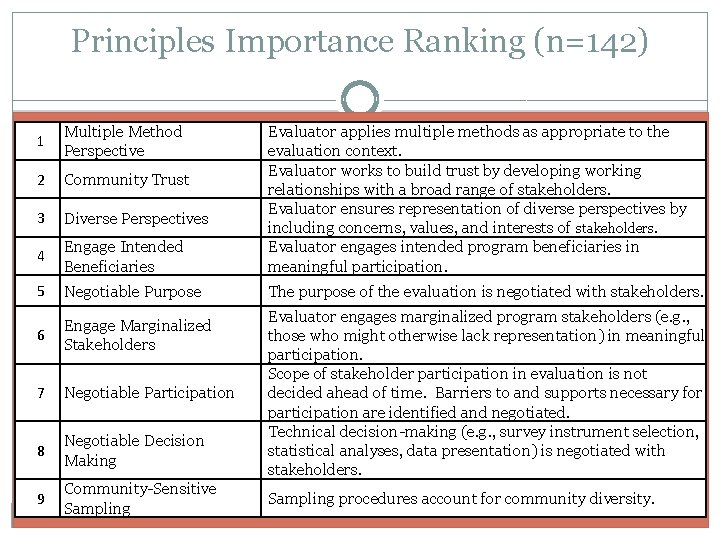

Principles Importance Ranking (n=142)

| Rank | Principle | Description |
| --- | --- | --- |
| 1 | Multiple Method Perspective | Evaluator applies multiple methods as appropriate to the evaluation context. |
| 2 | Community Trust | Evaluator works to build trust by developing working relationships with a broad range of stakeholders. |
| 3 | Diverse Perspectives | Evaluator ensures representation of diverse perspectives by including concerns, values, and interests of stakeholders. |
| 4 | Engage Intended Beneficiaries | Evaluator engages intended program beneficiaries in meaningful participation. |
| 5 | Negotiable Purpose | The purpose of the evaluation is negotiated with stakeholders. |
| 6 | Engage Marginalized Stakeholders | Evaluator engages marginalized program stakeholders (e.g., those who might otherwise lack representation) in meaningful participation. |
| 7 | Negotiable Participation | Scope of stakeholder participation in evaluation is not decided ahead of time. Barriers to and supports necessary for participation are identified and negotiated. |
| 8 | Negotiable Decision Making | Technical decision-making (e.g., survey instrument selection, statistical analyses, data presentation) is negotiated with stakeholders. |
| 9 | Community-Sensitive Sampling | Sampling procedures account for community diversity. |

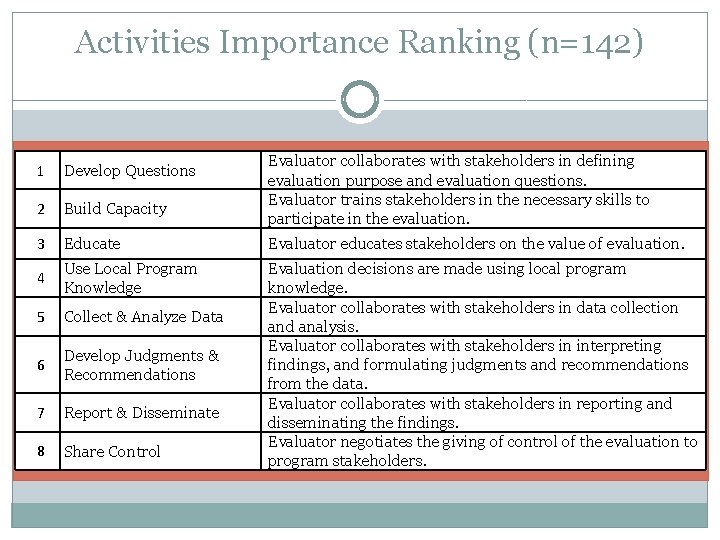

Activities Importance Ranking (n=142)

| Rank | Activity | Description |
| --- | --- | --- |
| 1 | Develop Questions | Evaluator collaborates with stakeholders in defining evaluation purpose and evaluation questions. |
| 2 | Build Capacity | Evaluator trains stakeholders in the necessary skills to participate in the evaluation. |
| 3 | Educate | Evaluator educates stakeholders on the value of evaluation. |
| 4 | Use Local Program Knowledge | Evaluation decisions are made using local program knowledge. |
| 5 | Collect & Analyze Data | Evaluator collaborates with stakeholders in data collection and analysis. |
| 6 | Develop Judgments & Recommendations | Evaluator collaborates with stakeholders in interpreting findings, and formulating judgments and recommendations from the data. |
| 7 | Report & Disseminate | Evaluator collaborates with stakeholders in reporting and disseminating the findings. |
| 8 | Share Control | Evaluator negotiates the giving of control of the evaluation to program stakeholders. |

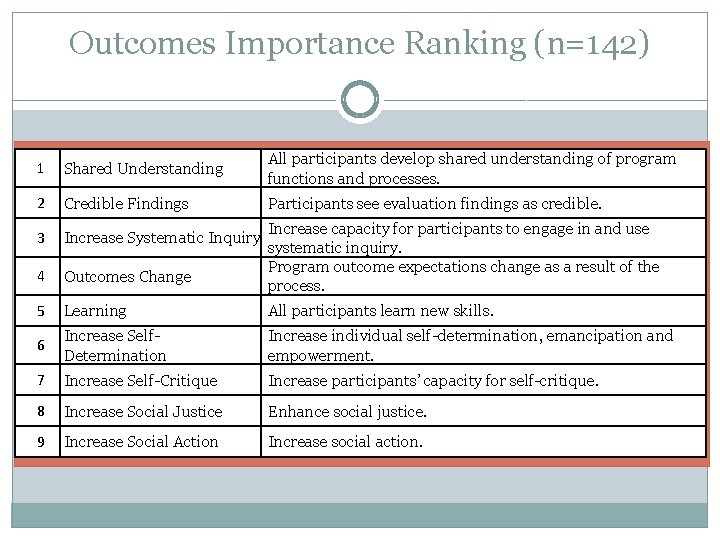

Outcomes Importance Ranking (n=142)

| Rank | Outcome | Description |
| --- | --- | --- |
| 1 | Shared Understanding | All participants develop shared understanding of program functions and processes. |
| 2 | Credible Findings | Participants see evaluation findings as credible. |
| 3 | Increase Systematic Inquiry | Increase capacity for participants to engage in and use systematic inquiry. |
| 4 | Outcomes Change | Program outcome expectations change as a result of the process. |
| 5 | Learning | All participants learn new skills. |
| 6 | Increase Self-Determination | Increase individual self-determination, emancipation and empowerment. |
| 7 | Increase Self-Critique | Increase participants’ capacity for self-critique. |
| 8 | Increase Social Justice | Enhance social justice. |
| 9 | Increase Social Action | Increase social action. |

Modeling Participants

- Participatory evaluators (based on 3 questions)
  - 240 completed models
- 142 T-PE evaluators (based on 8 questions)
- 98 other PE evaluators

Model Building Process

Model Building

- Variable around which model is built: Stakeholder Involvement
- Question: “How do you ensure stakeholder involvement and what outcomes do you intend to create?”

Model Building Process

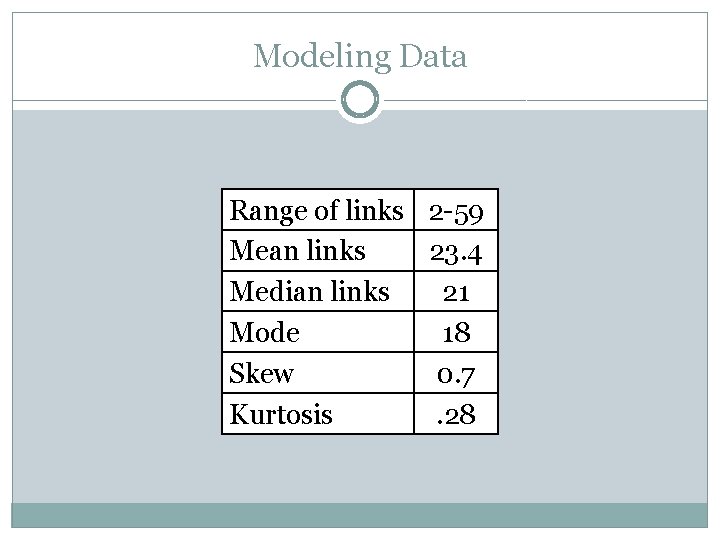

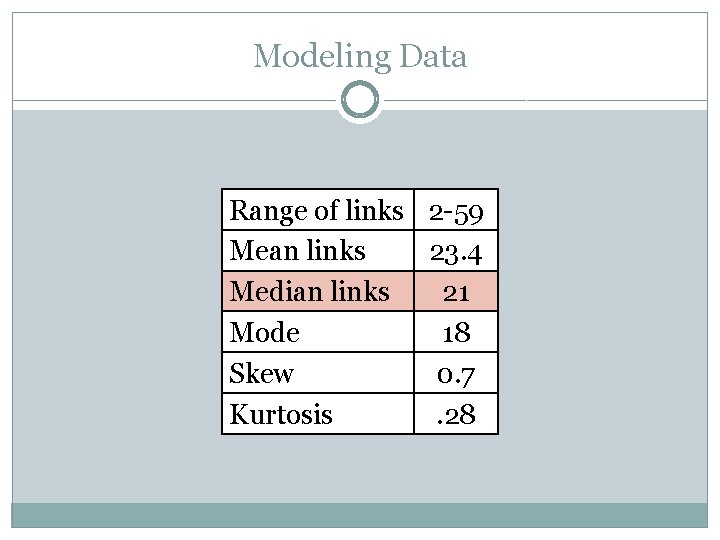

Modeling Data

- Range of links: 2-59
- Mean links: 23.4
- Median links: 21
- Mode: 18
- Skew: 0.7
- Kurtosis: 0.28
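For readers who want to see how summary figures of this kind are obtained, here is a minimal sketch. The slide does not give the raw per-participant link counts or say which skewness and kurtosis formulas were used, so the `link_counts` list below is hypothetical and the moment-based (population) formulas are an assumption.

```python
import statistics


def skewness(xs):
    """Moment-based (population) skewness: m3 / m2**1.5."""
    n = len(xs)
    mean = sum(xs) / n
    m2 = sum((x - mean) ** 2 for x in xs) / n
    m3 = sum((x - mean) ** 3 for x in xs) / n
    return m3 / m2 ** 1.5


def excess_kurtosis(xs):
    """Moment-based (population) excess kurtosis: m4 / m2**2 - 3."""
    n = len(xs)
    mean = sum(xs) / n
    m2 = sum((x - mean) ** 2 for x in xs) / n
    m4 = sum((x - mean) ** 4 for x in xs) / n
    return m4 / m2 ** 2 - 3


# Hypothetical number of links drawn by each participant (not the study data).
link_counts = [2, 18, 18, 21, 25, 30, 59]

summary = {
    "range": (min(link_counts), max(link_counts)),
    "mean": statistics.mean(link_counts),
    "median": statistics.median(link_counts),
    "mode": statistics.mode(link_counts),
    "skew": skewness(link_counts),
    "kurtosis": excess_kurtosis(link_counts),
}
print(summary)
```

A positive skew, as reported on the slide, indicates that a few participants drew many more links than the typical participant.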

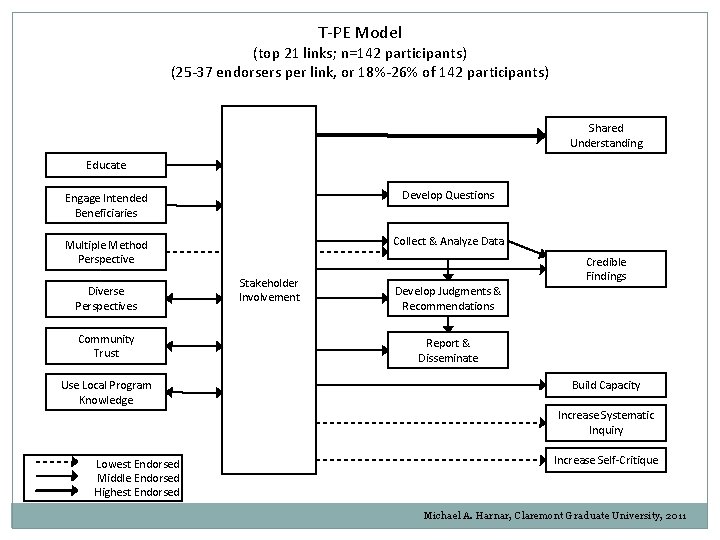

T-PE Model (top 21 links; n=142 participants; 25-37 endorsers per link, or 18%-26% of 142 participants)

Variables shown in the model: Stakeholder Involvement, Shared Understanding, Educate, Engage Intended Beneficiaries, Develop Questions, Multiple Method Perspective, Collect & Analyze Data, Diverse Perspectives, Community Trust, Use Local Program Knowledge, Credible Findings, Develop Judgments & Recommendations, Report & Disseminate, Build Capacity, Increase Systematic Inquiry, Increase Self-Critique

Legend: Lowest Endorsed, Middle Endorsed, Highest Endorsed

Michael A. Harnar, Claremont Graduate University, 2011
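One way to arrive at a "top links" model like this is to count, for each link, how many participants drew it, and then keep the most endorsed links. The sketch below illustrates that idea only; the slides do not describe the actual aggregation procedure, and the `models` data, link directions, and function names are hypothetical.

```python
from collections import Counter


def endorsement_share(endorsers, participants):
    """Percentage of participants who drew a given link, rounded to a whole number."""
    return round(100 * endorsers / participants)


def top_links(models, k):
    """Count how many participant models contain each link; return the k most endorsed."""
    counts = Counter(link for model in models for link in set(model))
    return counts.most_common(k)


# Hypothetical participant models: each is a set of (from_variable, to_variable) links.
models = [
    {("Community Trust", "Stakeholder Involvement"),
     ("Stakeholder Involvement", "Shared Understanding")},
    {("Community Trust", "Stakeholder Involvement")},
    {("Build Capacity", "Stakeholder Involvement"),
     ("Community Trust", "Stakeholder Involvement")},
]

ranked = top_links(models, k=2)
print(ranked[0])

# The endorsement range reported on the slide, as shares of 142 participants.
low_share = endorsement_share(25, 142)   # 18
high_share = endorsement_share(37, 142)  # 26
print(low_share, high_share)
```

The last two values reproduce the 18%-26% range stated in the slide caption.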

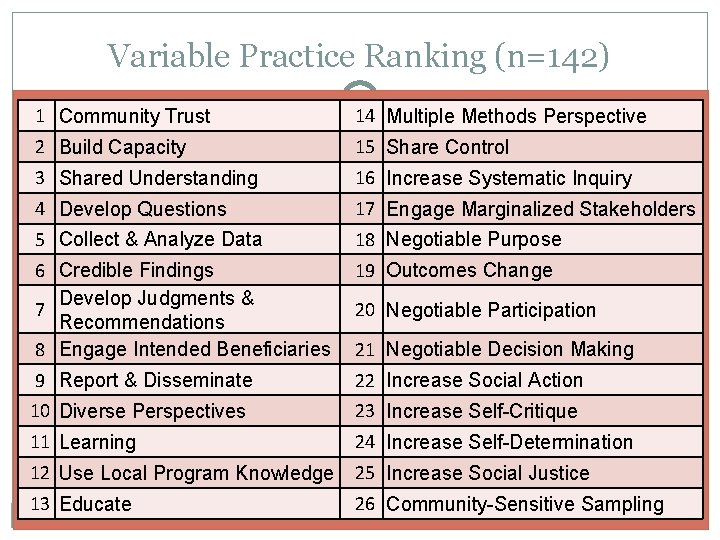

Variable Practice Ranking (n=142)

1. Community Trust
2. Build Capacity
3. Shared Understanding
4. Develop Questions
5. Collect & Analyze Data
6. Credible Findings
7. Develop Judgments & Recommendations
8. Engage Intended Beneficiaries
9. Report & Disseminate
10. Diverse Perspectives
11. Learning
12. Use Local Program Knowledge
13. Educate
14. Multiple Method Perspective
15. Share Control
16. Increase Systematic Inquiry
17. Engage Marginalized Stakeholders
18. Negotiable Purpose
19. Outcomes Change
20. Negotiable Participation
21. Negotiable Decision Making
22. Increase Social Action
23. Increase Self-Critique
24. Increase Self-Determination
25. Increase Social Justice
26. Community-Sensitive Sampling

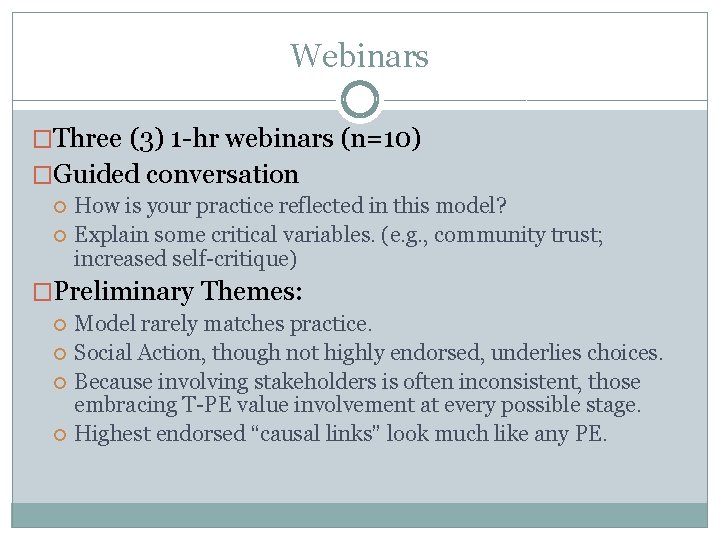

Webinars

- Three (3) 1-hr webinars (n=10)
- Guided conversation
  - How is your practice reflected in this model?
  - Explain some critical variables (e.g., community trust; increased self-critique).
- Preliminary themes:
  - Model rarely matches practice.
  - Social Action, though not highly endorsed, underlies choices.
  - Because involving stakeholders is often inconsistent, those embracing T-PE value involvement at every possible stage.
  - Highest endorsed “causal links” look much like any PE.

Preliminary Conclusions

- Mixed method converges understanding
  - Different rankings provide greater understanding
- Participant reflections
  - Modeling
  - Webinar
- Publish findings as a visual framework to discuss practice
- Provide more accessible research
- Provide a preliminary list of key variables for PE & T-PE

Questions?

MICHAEL.HARNAR@CGU.EDU
