
Theories, impacts, & evaluation Simon Pringle, SQW Ltd

Objectives for this Session: 4+1!
1. Deepening our general understanding of the role of ‘Theory’ in evaluation
2. What Theory Based Impact Evaluation (TBIE) involves
   - When & how to do it, generally
   - Specific approaches to TBIE
   - Practical fieldwork methods
3. Where, & when, Counterfactual Impact Evaluation (CIE) makes sense
   - The ‘necessary’ conditions to undertake CIE
   - Pros & cons vs TBIE approaches

Objectives for this Session
4. Suggestions for evaluating the ‘added value’ of cooperation – Strategic Added Value (SAV)
   - Key aspects of SAV
   - Challenges in characterising

How we will work
- Mix of chalk/talk, exercises, & discussion
- Regular Q&A
- For you to have fun & enjoyment in the process
- My promise – KISS!
- ... & copies of slides to follow

About SQW
- 33 years old
- ~40 staff, €4.2m T/O, 3 offices (cf ~90, €12m T/O, 6 offices in 2011)
- Working primarily for Public Sector
  - EC, ADB, World Bank, nation states
  - UK Government - BIS, DECC, DEFRA, HMT - & the Devolved Administrations
  - City States, wider place & sector partnerships, etc.
- Innovation, Place, Children & Young People

About SQW
Recent assignments
- WEFO - evaluation of ERDF Business Support in Wales (CIE)
- DETI - evaluation of the Regional Selective Assistance Programme in Northern Ireland (CIE)
- Interreg 4B - review of management structures & programme procedures for NWE INTERREG IVB
- Interreg 4B - evaluation of results & achievements of the 2007/14 INTERREG IVB Programme ('Capitalisation' Study, TBIE)
- DG Res & Inno - Supply & Demand Side Policies for Innovation


Let’s start at the very beginning...


Talking Terms
- Activities: things that are done [within programme/project's timeframe]
- Outputs: direct measures of an Activity that can be counted [within programme/project's timeframe], e.g. no. of attendees at workshop, no. of businesses advised
- Results: subsequent effects caused by the Outputs (which can again be measured, ideally) [within/after programme/project], e.g. ↑ understanding of new policy, ↑ knowledge/confidence among SMEs
- Impacts (aka ‘long term effects’): wider economic, social or other effects that can be credibly attributed to an intervention [generally after programme/project]

Talking Terms
- Monitoring... is the systematic, regular collection & occasional analysis of information to identify (& possibly measure) change over time
- Evaluation... is the analysis of the effectiveness & direction of an activity, & involves making judgments about progress & impact
- Main differences between monitoring & evaluation
  - Timing & frequency of observations
  - Types of questions asked
  - ... when monitoring & evaluation are integrated as project management tools, the line between the two can become blurred

(After Vernooy et al 2003)

So, why bother with evaluation?
Because of... Compliance
- Emphasis in new 2014-2020 period on ‘results orientation’
  - Attempt to shift Programmes from being ‘busy’ to being ‘productive’
- Explicit requirement in the Regulations
  - Common Provision Regulation (CPR), ETC Regulation etc.

So, why bother with evaluation?
Because of... Conscience
- Are programmes/projects doing the right things & doing these things right?
- Identifying learning to enable real-time change & improvement
- Establishing evaluation (& underpinning monitoring) as a core behaviour & part of our legacy

Stakeholders
Typically, 3 groups
1. Those being evaluated
   - Justification/Legitimacy
   - Learning at operational level
2. Sponsor(s) & audience(s) for the evaluation
   - Accountability
   - Resource allocation
   - Learning at policy level
3. Those performing the evaluation
   - Professional & academic interest/advancement

Forget about stakeholders, their interactions & their various interests at your peril!

Differing levels & scopes
- System(s) &/or markets
- Policy(s)
- Programme portfolio
- Programme, ranging from
  - Multi-measure, multi-partner
  - Single-measure, single-partner
- Individual Project
  - As part of programme implementation (ex ante, selection of funded projects)
  - As part of Programme evaluation to understand effects, E3 etc.

Same core thinking applies... but approaches/methods vary

Evaluation is not new
Formally recognised since the 1920s
Jacob Adler, Manchester Institute of Innovation Research:
1. “Part of the general practice of science
   - Career progression, editorial judgment, award of financial grants
   - Allocation of funds beyond projects (basic funding), attractiveness of institutions
2. Broader requirement for evaluation of publicly funded activities at various levels
   - Legal requirements: monitor, audit
   - Management: Economy, efficiency & effectiveness
   - Policy: the intended effects, cost-benefit, Value for Money
   - Society: the overall benefits, along many dimensions”

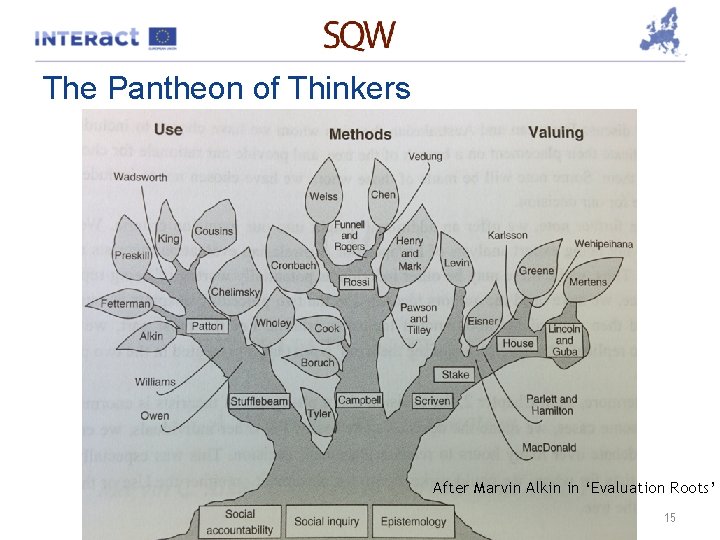

The Pantheon of Thinkers
After Marvin Alkin in ‘Evaluation Roots’

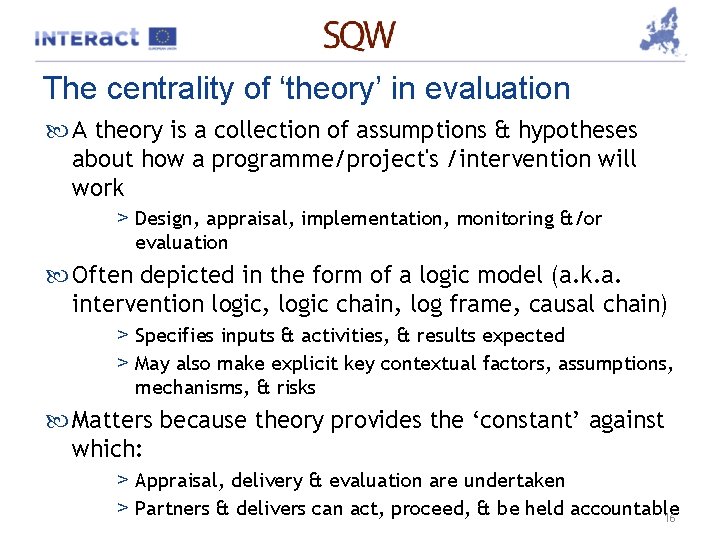

The centrality of ‘theory’ in evaluation
- A theory is a collection of assumptions & hypotheses about how a programme/project/intervention will work
  - Design, appraisal, implementation, monitoring &/or evaluation
- Often depicted in the form of a logic model (a.k.a. intervention logic, logic chain, log frame, causal chain)
  - Specifies inputs & activities, & results expected
  - May also make explicit key contextual factors, assumptions, mechanisms, & risks
- Matters because theory provides the ‘constant’ against which:
  - Appraisal, delivery & evaluation are undertaken
  - Partners & deliverers can act, proceed, & be held accountable

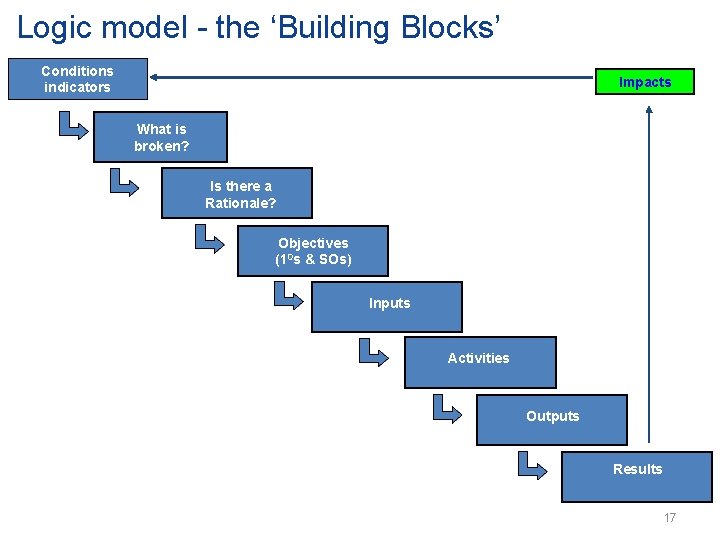

Logic model - the ‘Building Blocks’ Conditions indicators Impacts What is broken? Is there a Rationale? Objectives (10 s & SOs) Inputs Activities Outputs Results 17

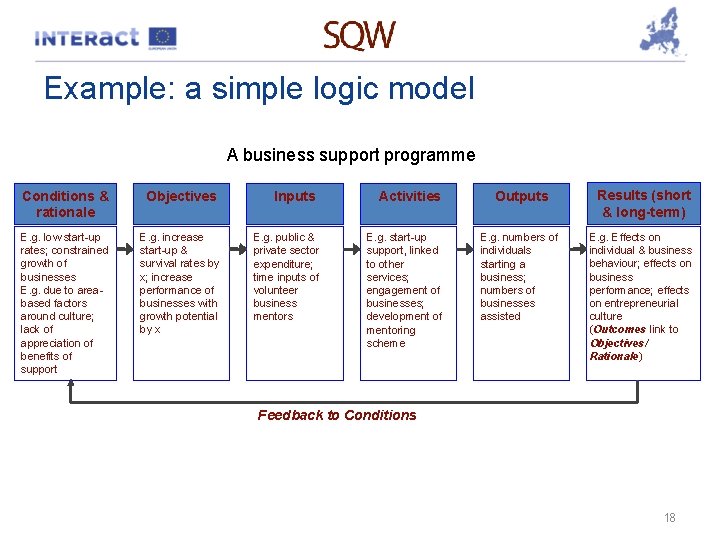

Example: a simple logic model
A business support programme
- Conditions & rationale: e.g. low start-up rates; constrained growth of businesses; e.g. due to area-based factors around culture; lack of appreciation of benefits of support
- Objectives: e.g. increase start-up & survival rates by x; increase performance of businesses with growth potential by x
- Inputs: e.g. public & private sector expenditure; time inputs of volunteer business mentors
- Activities: e.g. start-up support, linked to other services; engagement of businesses; development of mentoring scheme
- Outputs: e.g. numbers of individuals starting a business; numbers of businesses assisted
- Results (short & long-term): e.g. effects on individual & business behaviour; effects on business performance; effects on entrepreneurial culture (Outcomes link to Objectives/Rationale)
- Feedback to Conditions
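For anyone who prefers to hold a logic model as structured data rather than a diagram, the business support example could be sketched roughly as follows. This is only an illustration: the `LogicModel` class, the `STAGES` tuple and the `missing_stages` helper are hypothetical names introduced here, and the entries are copied from the example above.

```python
from dataclasses import dataclass

# Building blocks in reading order; the names mirror the slide headings.
STAGES = ("conditions", "rationale", "objectives",
          "inputs", "activities", "outputs", "results")


@dataclass
class LogicModel:
    """A descriptive logic chain for one intervention (hypothetical structure)."""
    conditions: list[str]
    rationale: list[str]
    objectives: list[str]
    inputs: list[str]
    activities: list[str]
    outputs: list[str]
    results: list[str]

    def missing_stages(self) -> list[str]:
        """Names of building blocks that have no entries yet."""
        return [stage for stage in STAGES if not getattr(self, stage)]


business_support = LogicModel(
    conditions=["low start-up rates", "constrained growth of businesses"],
    rationale=["area-based factors around culture",
               "lack of appreciation of benefits of support"],
    objectives=["increase start-up & survival rates by x",
                "increase performance of businesses with growth potential by x"],
    inputs=["public & private sector expenditure",
            "time inputs of volunteer business mentors"],
    activities=["start-up support, linked to other services",
                "engagement of businesses",
                "development of mentoring scheme"],
    outputs=["numbers of individuals starting a business",
             "numbers of businesses assisted"],
    results=[],  # deliberately left empty to show the gap check
)

print(business_support.missing_stages())
```

A check like this only confirms that every building block has been filled in; whether the links between the blocks are plausible remains a matter of judgement.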

Example: a more complicated logic model

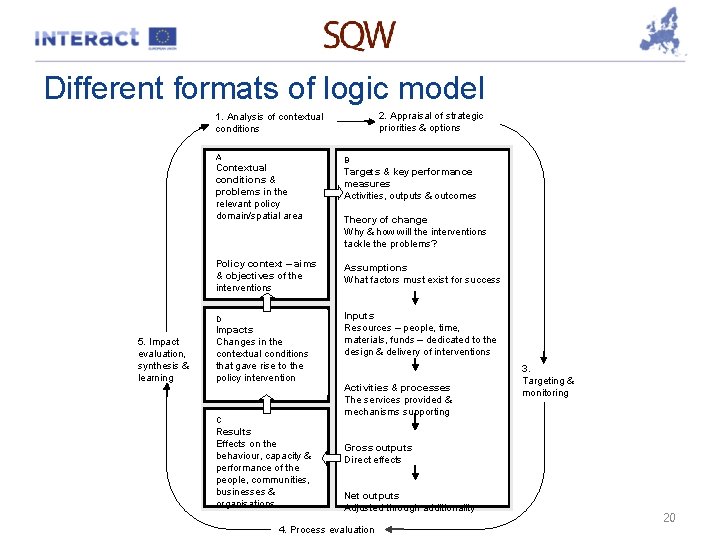

Different formats of logic model
- A. Contextual conditions & problems in the relevant policy domain/spatial area
- Policy context: aims & objectives of the interventions
- Theory of change: Why & how will the interventions tackle the problems?
- Assumptions: What factors must exist for success
- Inputs: Resources (people, time, materials, funds) dedicated to the design & delivery of interventions
- Activities & processes: The services provided & mechanisms supporting
- Gross outputs: Direct effects
- Net outputs: Adjusted through additionality
- B. Targets & key performance measures: Activities, outputs & outcomes
- C. Results: Effects on the behaviour, capacity & performance of the people, communities, businesses & organisations
- D. Impacts: Changes in the contextual conditions that gave rise to the policy intervention

Stages around the model
1. Analysis of contextual conditions
2. Appraisal of strategic priorities & options
3. Targeting & monitoring
4. Process evaluation
5. Impact evaluation, synthesis & learning

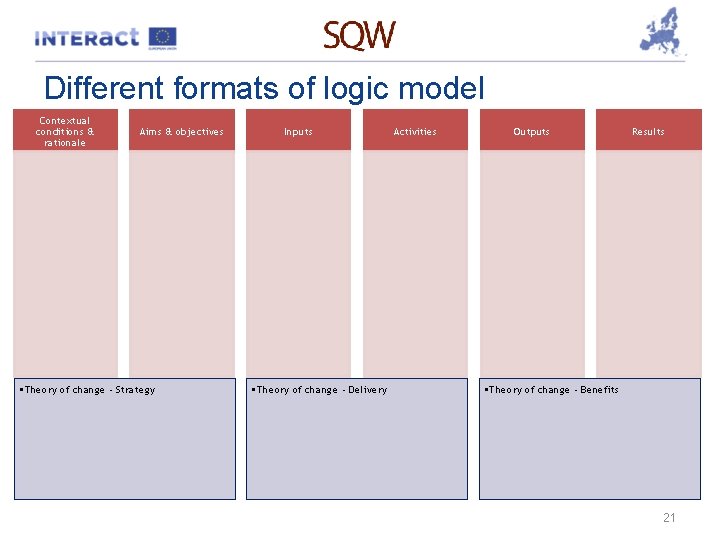

Different formats of logic model Contextual conditions & rationale Aims & objectives • Theory of change - Strategy Inputs • Theory of change - Delivery Activities Outputs Results • Theory of change - Benefits 21

To summarise
Evaluation
- Matters!
- Is not new... & continues to develop as a science/art
- ‘Theory’ thinking is increasingly centre-stage
- ... And very, very satisfying to undertake!

Q&A 1

2. The importance of ‘n’ in determining evaluation methods

Large & small ‘n’ considerations in evaluation
In the ideal world of evaluation...
- All intervention populations (i.e. ‘n’) are large: QED, statistical tests of significance hold
- The beneficiary (or ‘treatment’) group, the intervention (or treatment), & the wider context are highly homogeneous
- Budgets, & political/other constraints are not an issue for sample sizes, and/or the use of comparison groups
- In these circumstances, CIE is the way to go...

Large & small ‘n’ considerations in evaluation
But the world is very rarely ideal: ‘n’ is often small, & CIE very frequently unfeasible
Hence, role of Theory-Based Impact Evaluation (TBIE) approaches in evaluation
- Yes, TBIE does not provide a quantifiable counterfactual
- Yes, TBIE does not provide statistically hard & fast numbers
- Yes, TBIE has limitations in deriving robust cost/benefit numbers
- ... But done well, can make very meaningful causal inferences to evaluate impact

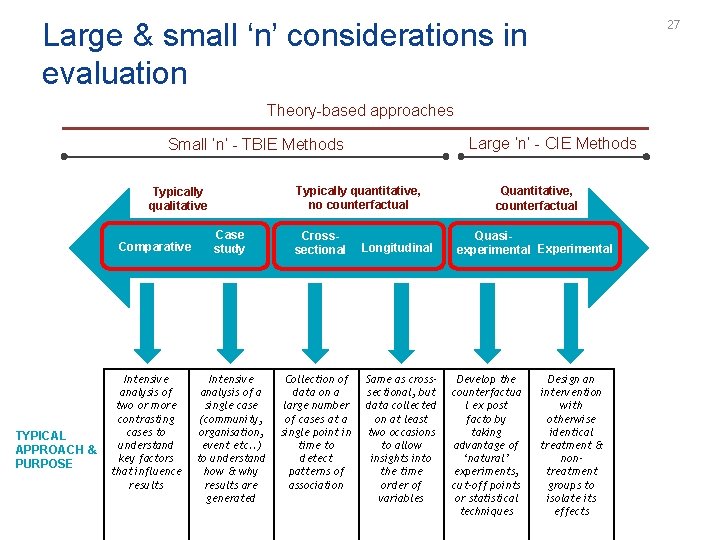

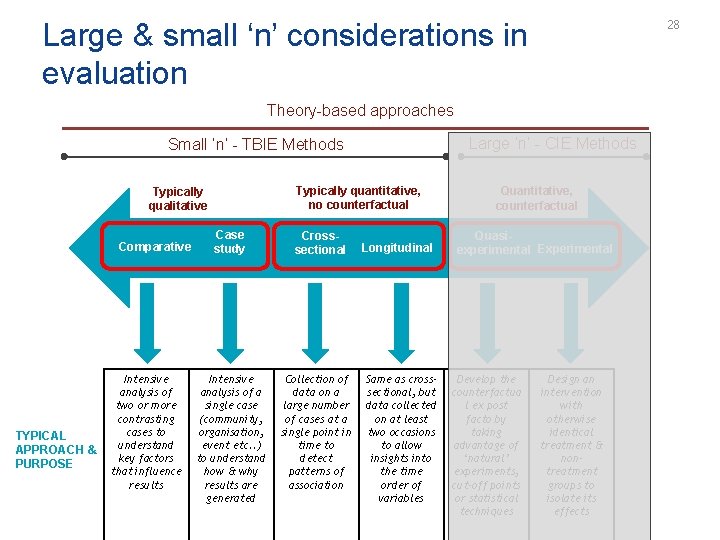

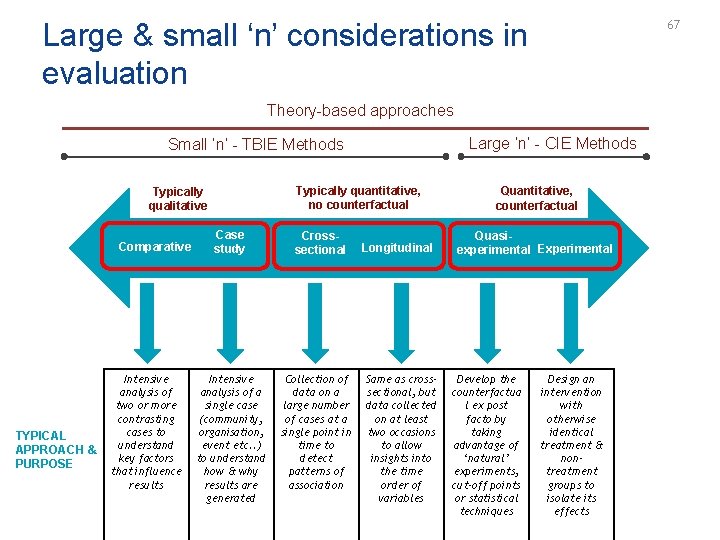

Large & small ‘n’ considerations in evaluation
Theory-based approaches: Small ‘n’ - TBIE Methods through to Large ‘n’ - CIE Methods

| Design | Nature | Typical approach & purpose |
| --- | --- | --- |
| Comparative | Typically qualitative | Intensive analysis of two or more contrasting cases to understand key factors that influence results |
| Case study | Typically qualitative | Intensive analysis of a single case (community, organisation, event etc.) to understand how & why results are generated |
| Cross-sectional | Typically quantitative, no counterfactual | Collection of data on a large number of cases at a single point in time to detect patterns of association |
| Longitudinal | Typically quantitative, no counterfactual | Same as cross-sectional, but data collected on at least two occasions to allow insights into the time order of variables |
| Quasi-experimental | Quantitative, counterfactual | Develop the counterfactual ex post facto by taking advantage of ‘natural’ experiments, cut-off points or statistical techniques |
| Experimental | Quantitative, counterfactual | Design an intervention with otherwise identical treatment & non-treatment groups to isolate its effects |


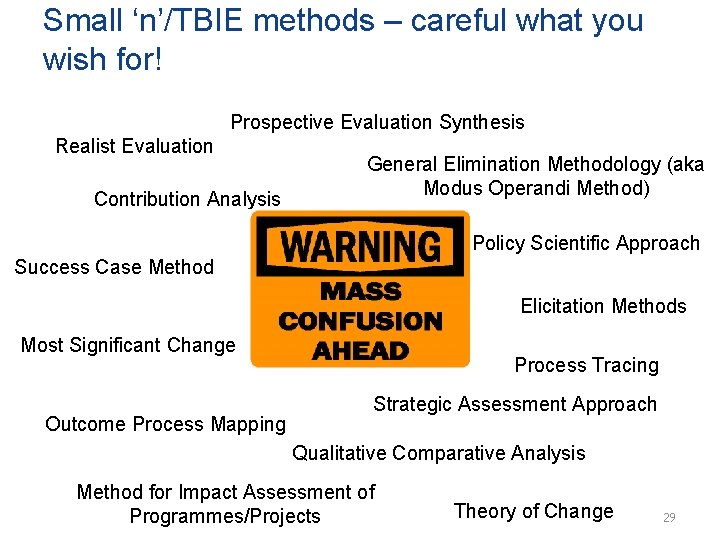

Small ‘n’/TBIE methods – careful what you wish for! Prospective Evaluation Synthesis Realist Evaluation Contribution Analysis General Elimination Methodology (aka Modus Operandi Method) Policy Scientific Approach Success Case Method Elicitation Methods Most Significant Change Outcome Process Mapping Process Tracing Strategic Assessment Approach Qualitative Comparative Analysis Method for Impact Assessment of Programmes/Projects Theory of Change 29

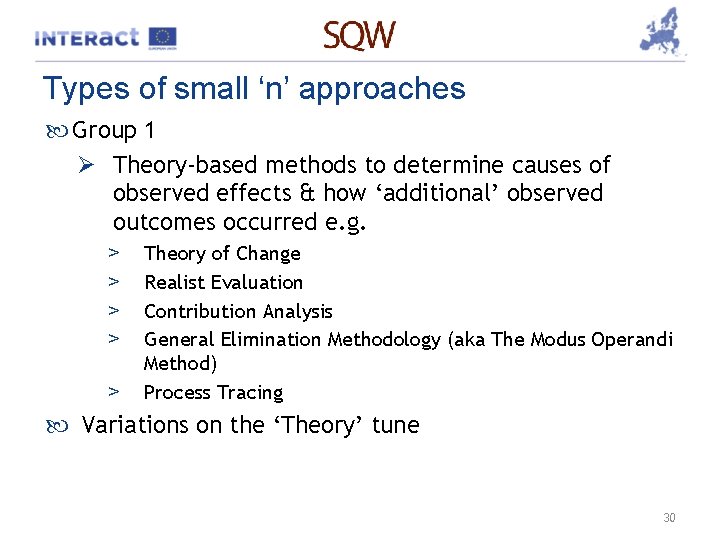

Types of small ‘n’ approaches
Group 1
- Theory-based methods to determine causes of observed effects & how ‘additional’ observed outcomes occurred, e.g.
  - Theory of Change
  - Realist Evaluation
  - Contribution Analysis
  - General Elimination Methodology (aka The Modus Operandi Method)
  - Process Tracing

Variations on the ‘Theory’ tune

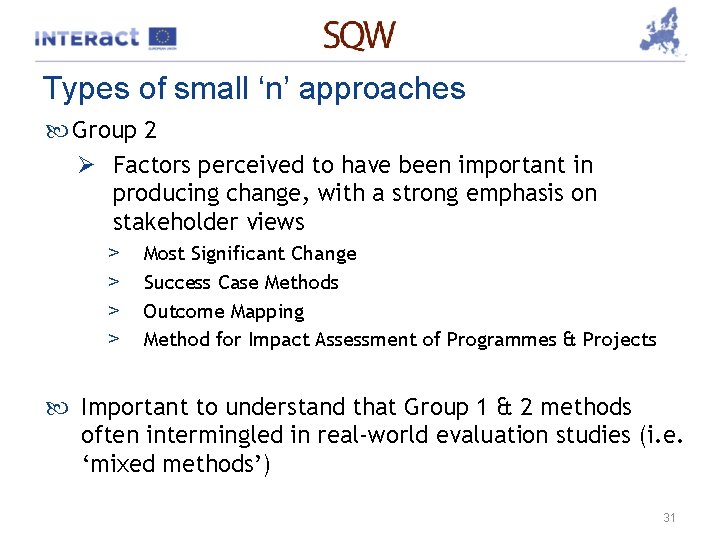

Types of small ‘n’ approaches
Group 2
- Factors perceived to have been important in producing change, with a strong emphasis on stakeholder views
  - Most Significant Change
  - Success Case Methods
  - Outcome Mapping
  - Method for Impact Assessment of Programmes & Projects

Important to understand that Group 1 & 2 methods are often intermingled in real-world evaluation studies (i.e. ‘mixed methods’)

3 x Group 1 approaches

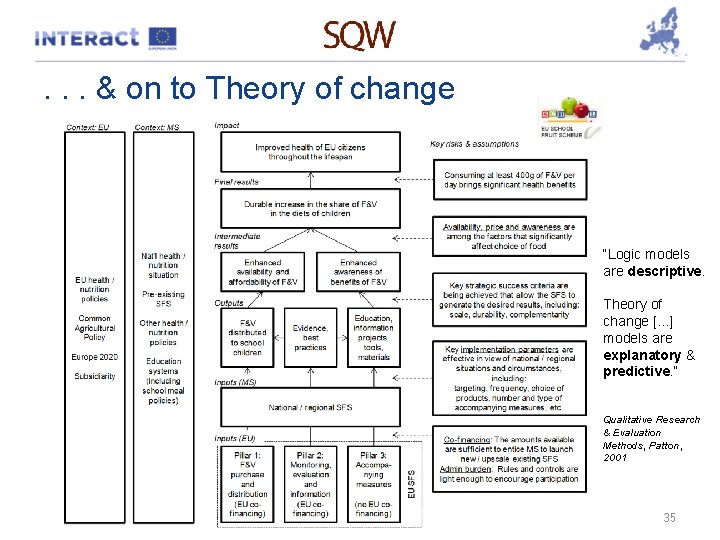

1A. ‘Vanilla’ Theory of Change
- Takes the logic chain for the intervention...
- ... & develops this into a predictive & explanatory depiction of what should happen through the intervention
- Evaluation explores each step of the ToC to understand whether theoretically predicted changes occurred as expected, &/or as a result of other external factors

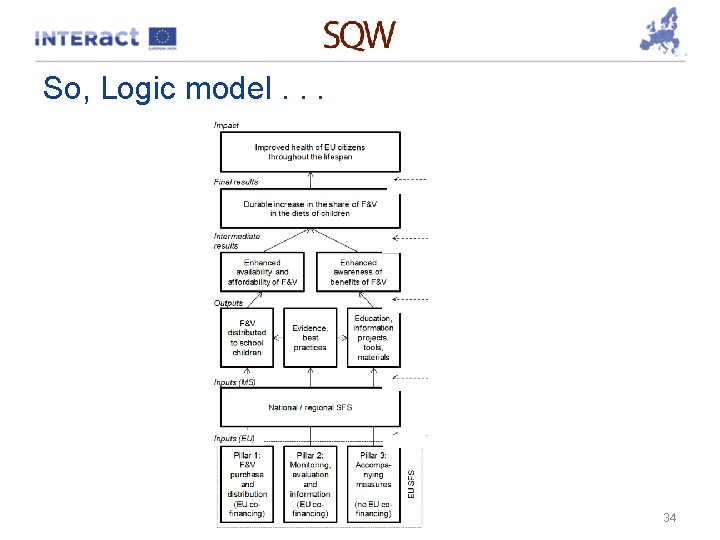

So, Logic model...

... & on to Theory of change
“Logic models are descriptive. Theory of change [...] models are explanatory & predictive.”
Qualitative Research & Evaluation Methods, Patton, 2001

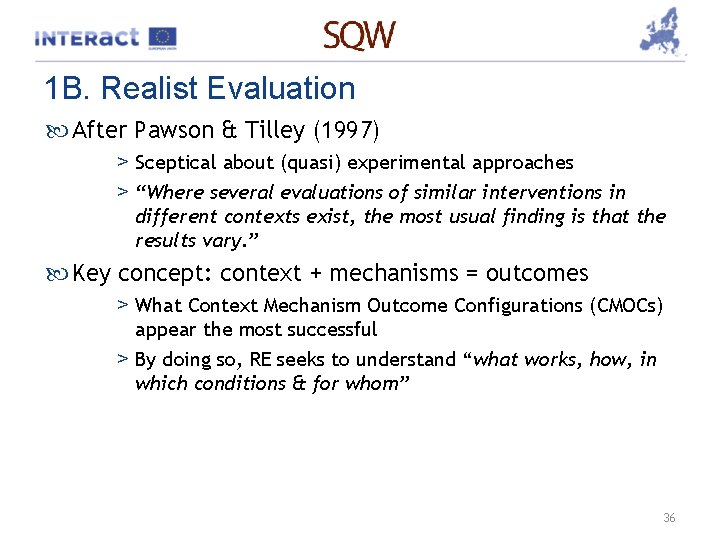

1B. Realist Evaluation
- After Pawson & Tilley (1997)
  - Sceptical about (quasi) experimental approaches
  - “Where several evaluations of similar interventions in different contexts exist, the most usual finding is that the results vary.”
- Key concept: context + mechanisms = outcomes
  - What Context Mechanism Outcome Configurations (CMOCs) appear the most successful
  - By doing so, RE seeks to understand “what works, how, in which conditions & for whom”

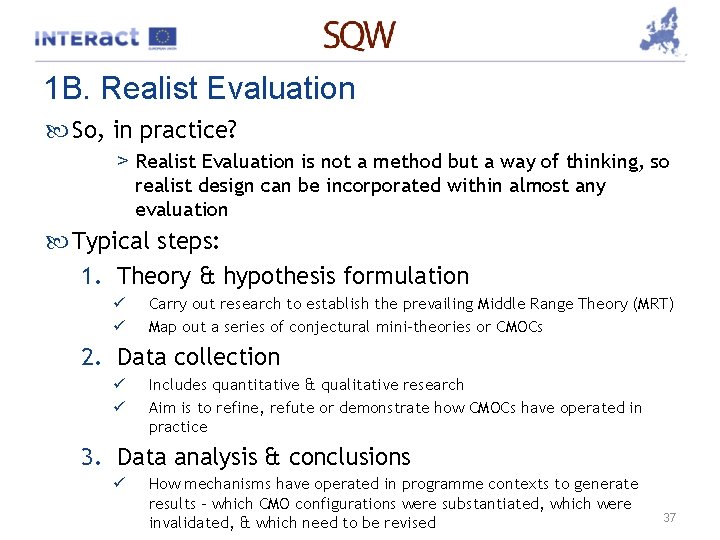

1B. Realist Evaluation
So, in practice?
- Realist Evaluation is not a method but a way of thinking, so realist design can be incorporated within almost any evaluation

Typical steps:
1. Theory & hypothesis formulation
   - Carry out research to establish the prevailing Middle Range Theory (MRT)
   - Map out a series of conjectural mini-theories or CMOCs
2. Data collection
   - Includes quantitative & qualitative research
   - Aim is to refine, refute or demonstrate how CMOCs have operated in practice
3. Data analysis & conclusions
   - How mechanisms have operated in programme contexts to generate results: which CMO configurations were substantiated, which were invalidated, & which need to be revised
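The bookkeeping behind step 3 can be sketched in a few lines. This is a hypothetical illustration only: the `CMOC` class, the verdict labels and the example configurations are all invented here to show the idea, not taken from Pawson & Tilley or from any real evaluation.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class CMOC:
    """One conjectural Context-Mechanism-Outcome Configuration."""
    context: str
    mechanism: str
    outcome: str


# Verdict labels follow the wording of step 3 (the exact labels are an assumption).
VERDICTS = ("substantiated", "invalidated", "needs revision")


def group_by_verdict(findings):
    """Group CMOCs by the verdict reached after data collection & analysis."""
    grouped = defaultdict(list)
    for cmoc, verdict in findings.items():
        if verdict not in VERDICTS:
            raise ValueError(f"unknown verdict: {verdict!r}")
        grouped[verdict].append(cmoc)
    return dict(grouped)


# Invented example CMOCs for a business-mentoring intervention.
findings = {
    CMOC("rural SMEs", "peer mentoring builds confidence", "more start-ups"):
        "substantiated",
    CMOC("urban SMEs", "peer mentoring builds confidence", "more start-ups"):
        "needs revision",
    CMOC("any SME", "grant alone changes behaviour", "higher survival"):
        "invalidated",
}

summary = group_by_verdict(findings)
for verdict, cmocs in summary.items():
    print(verdict, [c.context for c in cmocs])
```

The verdicts themselves still come from the evaluator's reading of the evidence; the code only keeps the configurations and their status tidy.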

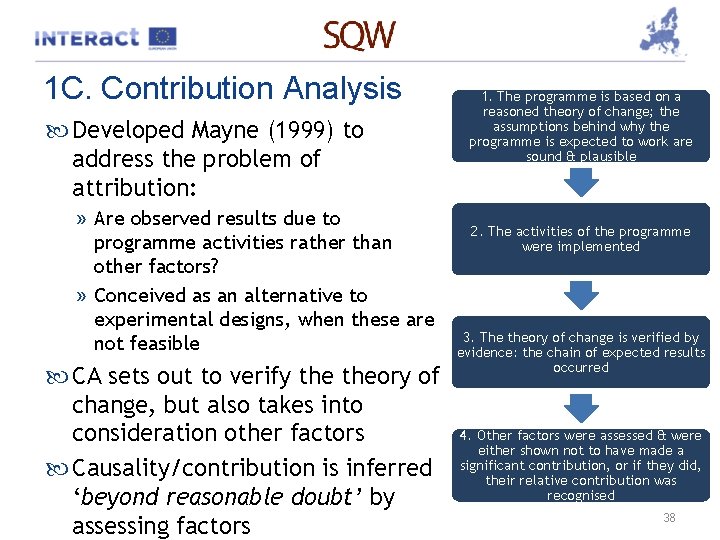

1C. Contribution Analysis
- Developed by Mayne (1999) to address the problem of attribution:
  - Are observed results due to programme activities rather than other factors?
  - Conceived as an alternative to experimental designs, when these are not feasible
- CA sets out to verify the theory of change, but also takes into consideration other factors
- Causality/contribution is inferred ‘beyond reasonable doubt’ by assessing four factors:
  1. The programme is based on a reasoned theory of change; the assumptions behind why the programme is expected to work are sound & plausible
  2. The activities of the programme were implemented
  3. The theory of change is verified by evidence: the chain of expected results occurred
  4. Other factors were assessed & were either shown not to have made a significant contribution, or if they did, their relative contribution was recognised

1C. Contribution Analysis
So, in practice
- Develop a theory of change
  - Including underlying assumptions, risks to it, & other factors that may influence outcomes
- Gather the existing evidence on theory of change
  - Need evidence on programme activities & results…
  - …but also on assumptions & other influencing factors
  - Methods can include surveys, case studies, process tracking, etc.
- Assemble & assess the contribution story, & challenges to it
  - Any weaknesses point to where additional data or information would be useful
- Seek out additional evidence
- Revise & strengthen the contribution story
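One way to keep track of where a contribution story is weak is a simple checklist over the four factors listed for Contribution Analysis. The sketch below is an assumption-laden illustration: `ContributionStory` and its field names are hypothetical, and a real assessment rests on weighing evidence rather than ticking boxes.

```python
from dataclasses import dataclass, fields


@dataclass
class ContributionStory:
    """Evaluator's judgement on each of the four factors (hypothetical names)."""
    theory_of_change_plausible: bool
    activities_implemented: bool
    results_chain_evidenced: bool
    other_factors_assessed: bool

    def weaknesses(self) -> list[str]:
        """Factors not yet satisfied, i.e. where more evidence is needed."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]

    def contribution_inferred(self) -> bool:
        """True only when all four factors are judged to hold."""
        return not self.weaknesses()


# Invented example: everything evidenced except rival explanations.
story = ContributionStory(
    theory_of_change_plausible=True,
    activities_implemented=True,
    results_chain_evidenced=True,
    other_factors_assessed=False,
)

print(story.contribution_inferred())
print(story.weaknesses())
```

The list of weaknesses maps directly onto the ‘seek out additional evidence’ step: each unsatisfied factor is a prompt for further data collection before the story is revised.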

Break for Coffee

And 3 x Group 2 approaches

2A. Most Significant Change (MSC)
- After Davies & Dart (2005)
- Participatory process involving sequential collection of stories of significant change which have occurred as a result of intervention
- Linked process of sifting by stakeholders to select, discuss, & crystallise most significant changes
- Typically, “looking back over the last XX, what do you think the MSC in XX or YY has been”
- Done well, can generate useful information for the specification & subsequent assessment of a Theory of Change

2B. Success Case Method (SCM)
- After Brinkerhoff (2003)
- Narrative technique using naturalistic enquiry & case study analysis
- Intended to be quick/simple
- Focus deliberately on very best & very worst results of intervention, & role of contextual factors in driving this
- “Searches out & surfaces successes, bringing them to light in persuasive & compelling stories so that they can be weighed... provided as motivating & concrete examples to others, & learned from so that we have a better understanding of why things worked & why they did not”

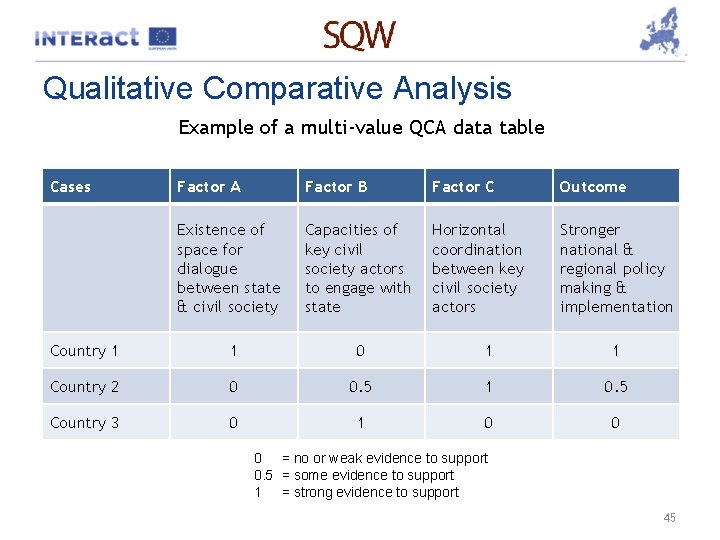

2C. Qualitative Comparative Analysis
- Case-based method which identifies different combinations of factors that are critical to a given result, in a given context
- Not yet widely used in evaluation, but provides an innovative way of testing programme theories of change
- Qualitative data/evidence on potentially relevant causal factors is turned into a quantitative score that can be compared across cases
  - Crisp set QCA: cases coded “0” or “1”
  - Multi-value QCA: allows for some intermediate values (e.g. 0.33 or 0.5)
  - Fuzzy set QCA: allows for coding on a continuous scale anywhere between 0 & 1

Qualitative Comparative Analysis
Example of a multi-value QCA data table

| Cases | Factor A: Existence of space for dialogue between state & civil society | Factor B: Capacities of key civil society actors to engage with state | Factor C: Horizontal coordination between key civil society actors | Outcome: Stronger national & regional policy making & implementation |
| --- | --- | --- | --- | --- |
| Country 1 | 1 | 0 | 1 | 1 |
| Country 2 | 0 | 0.5 | 1 | 0.5 |
| Country 3 | 0 | 1 | 0 | 0 |

0 = no or weak evidence to support; 0.5 = some evidence to support; 1 = strong evidence to support
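To show how such scores can be compared across cases, the sketch below applies the consistency & coverage measures commonly used in fuzzy-set QCA to the three-country table. Note the assumptions: the slides do not specify any calculation, treating the 0/0.5/1 scores as set-membership values is a choice made here for illustration, and the function names are hypothetical. Three cases are far too few for real conclusions.

```python
# Scores copied from the example data table (Factors A-C & the Outcome).
cases = {
    "Country 1": {"A": 1.0, "B": 0.0, "C": 1.0, "outcome": 1.0},
    "Country 2": {"A": 0.0, "B": 0.5, "C": 1.0, "outcome": 0.5},
    "Country 3": {"A": 0.0, "B": 1.0, "C": 0.0, "outcome": 0.0},
}


def overlap(cases, factor):
    """Sum over cases of min(factor score, outcome score)."""
    return sum(min(c[factor], c["outcome"]) for c in cases.values())


def consistency(cases, factor):
    """Share of the factor's presence that coincides with the outcome."""
    total = sum(c[factor] for c in cases.values())
    return overlap(cases, factor) / total if total else float("nan")


def coverage(cases, factor):
    """Share of the outcome that is accounted for by the factor."""
    total = sum(c["outcome"] for c in cases.values())
    return overlap(cases, factor) / total if total else float("nan")


for factor in ("A", "B", "C"):
    print(factor,
          round(consistency(cases, factor), 2),
          round(coverage(cases, factor), 2))
```

Read loosely, a factor with high consistency is one that rarely appears without the outcome, while high coverage means the factor is present in most cases where the outcome occurs. Full QCA goes further by examining combinations of factors, which this sketch does not attempt.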

OK, Simon says...
Let’s be honest
- Evaluation in an EU-funding context still maturing
- Many of you personally coming new to this area...
- ... & many of you want to ‘do’ rather than ‘review’!
- There’s a ferocious market of external ‘evaluators’
- Very significant risk of ‘running’ before ‘walking’

So, 4 guiding principles to go forward with
1. Be pragmatic – 85% of something is better than 100% of nothing... & avoid being overly academic!
2. Be developmental – Rome wasn’t built in a day, & evaluative capability needs to build & evolve
3. Become intelligent consumers – think what you are doing, or buying, evaluation-wise
4. Do commit to making time for evaluation things, so building your knowledge

Q&A 2

3. So, to EuroGungHo

Exercise 1: logic chain & theory of change
Purpose
- Develop Logic Chain & Theory of Change for EuroGungHo

Context
- EuroGungHo – a fabricated project!
- ‘Improving existing & developing new innovation support services, with a focus on the sectors of special interest to the Programme Area’
- 8 countries
- 5 sectors of special interest
- Identifying/developing R&D projects, pilots/prototypes, demonstrators

You’ve already seen the script...

Exercise 1: logic chain & theory of change
Task
- Using template, develop
  1. Logic chain (descriptive)
  2. Theory of Change (explanatory & predictive)

Logistics
- Work as tables
- Self-selected:
  - Chair
  - Rapporteur for feedback
- I will float around...
- You have until 12.05
- ... Plenary to 12.30

Off you go!

Exercise 1: logic chain & theory of change
Plenary Feedback
- Our good friend EuroGungHo
- 2 tasks
  - Logic Chain
  - Theory of Change

Break for lunch – back at 1320 prompt


Reprising this morning
- Why evaluation matters
- The development of ‘Theory’ thinking
- The ‘n’ thing
- 2 groups of theory-based approaches
- Putting the thinking into practice

4. So far so good, what about some evaluation techniques?

Technique 1: Contextual & Documentary Review
‘What did we think we were doing?’
Desk-based review of
- The problem/challenge faced (context – data)
- The case for intervention (rationale – arguments)
- Our practical commitment (objectives, inputs)
- Progress so far (activities, outputs, & processes)
- Knowing what we know now:
  - How logically consistent is all of this?
  - Do we need to change track?

Sources: secondary data (local, national, European), original programme documents, application forms, appraisals, approvals, monitoring data & reports etc.


Technique 2 - One-to-one Consultation
[As an informed viewer] ‘What are you observing about the intervention?’
Detailed consultations with key stakeholders
- Policymakers/funders
- Adjacent programmes
- Delivery bodies

Modes: face-to-face – telecom – postal – online
Useful for scoping the issues & for cross-checking messages from elsewhere in study


Technique 3 – Surveys
[As someone who is impacted] ‘What has your experience of this intervention been?’
2 groups
- Beneficiaries – intended or otherwise
- Non-Beneficiaries – typically those who were ruled out

Modes: face-to-face – telecom – postal – online
Typically, self-reported view & observation
Prone to
- Last event bias
- Memory decay

Questionnaire design & analyse-ability a key challenge


Technique 4 – One-to-Many Consultation
[As informed viewers] ‘What are you observing about the intervention?’
Similar to one-to-one consultations, but multi- rather than bi-lateral
Efficient to set up/deliver... but prone to
- Superficiality
- Herd effects
- Loudest voices
- & tend to be primarily qualitative in observation

Often useful to calibrate headlines from 1-to-1 consultations & surveys

Technique 5 – Case Studies
What has worked well & less well
Provide deep-dives into specific aspects of the intervention
- Process
- Impact
- Learning

Typically done face-to-face, so resource intensive
Judgement required to establish rounded view
Can be hard to secure consensus amongst consultees
Can be difficult to synthesise findings across case study authors

Technique 6 – Learning Diaries
- Real-time recording of intervention experiences
- Avoids memory decay & last-event bias
- Does require discipline on part of participants to maintain diary
- Need recording interval that makes sense – related to speed of changes happening/progress being achieved
- Helpful to frame wider consultation/survey work

5. EuroGungHo Returns...

Exercise 2 – Impact Evaluation Plan
Back to our good friend EuroGungHo... we now have a ToC we understand... So, what might an Impact Evaluation Plan for EuroGungHo look like?
Using presented methods & techniques etc.:
- ‘What, where & how’ of an outline impact evaluation plan
  - Which theory based approach?
  - What mix of techniques to progress, & sequencing?
  - Do as a simple block diagram – template provided
- What pre-requisites
- Timing of impact evaluation activity
  - When, & why?
- Resourcing
  - What cost to undertake - €s, internal vs external?

Exercise 2 – Impact Evaluation Plan
Logistics
- Again, work as tables
- Self-select
  - Chair
  - Rapporteur for feedback
- I’ll be on hand...
- You have until 14.30!
- Plenary 14.30 - 15.00

Off you go!

Exercise 2 – Impact Evaluation Plan
Plenary Feedback
- Our good friend EuroGungHo
- Evaluation Plan
  1. ‘What, where & how’ of an outline evaluation plan
  2. What pre-requisites
  3. Timing of impact evaluation activity
  4. Resourcing

6. Where, & when, Counterfactual Impact Evaluation makes sense


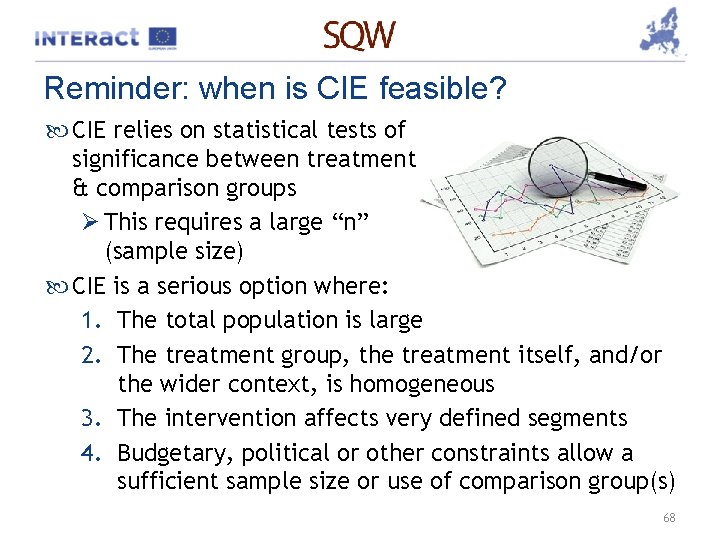

Reminder: when is CIE feasible?
CIE relies on statistical tests of significance between treatment & comparison groups
- This requires a large ‘n’ (sample size)

CIE is a serious option where:
1. The total population is large
2. The treatment group, the treatment itself, and/or the wider context, is homogeneous
3. The intervention affects very defined segments
4. Budgetary, political or other constraints allow a sufficient sample size or use of comparison group(s)
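To give a feel for what ‘large’ means here, the sketch below uses the standard normal-approximation formula for the sample size needed to compare two group means. It is a rough guide under stated assumptions, not something taken from the slides: the function name `n_per_group`, the 5% significance level, the 80% power target and the illustrative effect sizes are all choices made for this example, and real CIE designs (matching, discontinuity, difference-in-differences) need specialist calculations.

```python
from math import ceil
from statistics import NormalDist


def n_per_group(effect_size, alpha=0.05, power=0.80):
    """Approximate n per group for a two-sided, two-sample comparison of means.

    Normal approximation: n = 2 * (z_{1-alpha/2} + z_{power})^2 / d^2,
    where d is the standardised difference between treatment & comparison.
    """
    if effect_size <= 0:
        raise ValueError("effect_size must be positive")
    z = NormalDist()  # standard normal distribution
    z_alpha = z.inv_cdf(1 - alpha / 2)
    z_power = z.inv_cdf(power)
    return ceil(2 * (z_alpha + z_power) ** 2 / effect_size ** 2)


# Illustrative standardised effect sizes: small, medium, large.
for d in (0.2, 0.5, 0.8):
    print(d, n_per_group(d))
```

The point to take away is the shape of the relationship: halving the expected effect roughly quadruples the sample needed in each of the treatment & comparison groups, which is why small, heterogeneous programmes struggle to support CIE.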

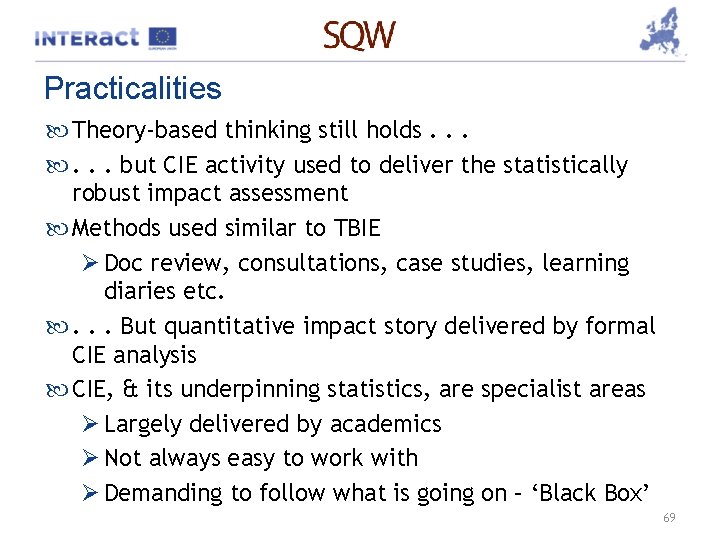

Practicalities
- Theory-based thinking still holds... but CIE activity used to deliver the statistically robust impact assessment
- Methods used similar to TBIE
  - Doc review, consultations, case studies, learning diaries etc.
  - ... But quantitative impact story delivered by formal CIE analysis
- CIE, & its underpinning statistics, are specialist areas
  - Largely delivered by academics
  - Not always easy to work with
  - Demanding to follow what is going on – ‘Black Box’

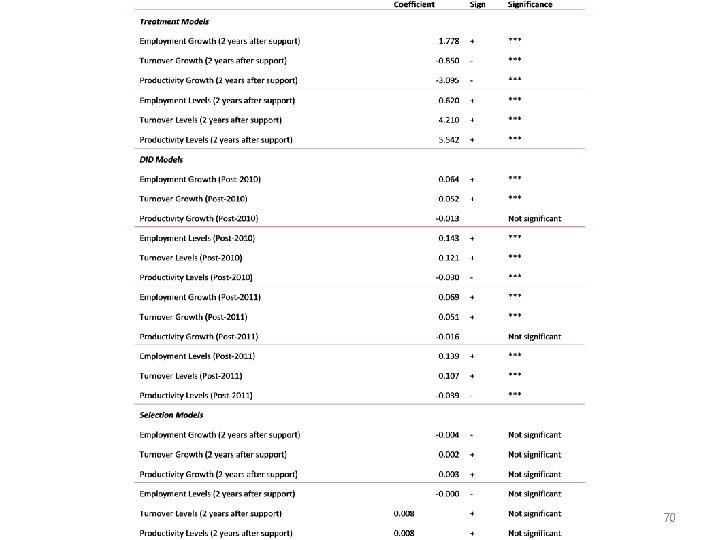


So, TBIE vs CIE?

|  | TBIE | CIE |
| --- | --- | --- |
| Pros |  |  |
| Cons |  |  |

Suitability of CIE for Interreg programmes
SDP’s personal view
- Limited because
  - Width & range of projects, plus cross-border issues, make homogeneity hard to achieve
  - Sophistication of method out of step with programme evaluation capacities, which are still building
  - Cost to undertake effectively may be prohibitive
- So, time is not yet right... but something to think about for the future, & to be alert to as you evaluate consultancy submissions

Conclusions
- Theory-based impact evaluation cannot rival the rigour with which well-designed counterfactual impact evaluation addresses issues of attribution
- However, done ‘right’, TBIE can tackle attribution & provide evidence to back up causal claims
- White & Phillips have identified the following “common steps for causal inference in small ‘n’ cases”:
  1. Set out the attribution question(s)
  2. Set out the programme’s theory of change
  3. Develop an evaluation plan for data collection & analysis
  4. Identify alternative causal hypotheses
  5. Use evidence to verify the causal chain

Further reading
- European Commission: Evalsed Sourcebook – Methods & Techniques
- Rogers, P (2008): Using Programme Theory to Evaluate Complicated & Complex Aspects of Interventions. Evaluation, Vol 14(1): 29–48
- White, H & Phillips, D (2012): Addressing attribution of cause & effect in small n impact evaluations: towards an integrated framework. 3ie Working Paper 15 (You already have!)
- Westhorp, G (2014): Realist Impact Evaluation – an Introduction. ODI.org/methodslab
- Mayne, J (2008): Contribution analysis: An approach to exploring cause & effect. ILAC Brief 16
- Baptist, C & Befani, B (2015): Qualitative Comparative Analysis – A Rigorous Qualitative Method for Assessing Impact

7. Suggestions for evaluating the added value of cooperation – Strategic Added Value

Suggestions for evaluating the added value of cooperation – SAV
- Cooperation a key element of our programmes – part of the Cohesion agenda
- Our interventions primarily about delivery... but changing attitudes & behaviours also important strategically
- Important to think carefully about these strategic intents
- Beware – outcomes & results are harder to assess & prove than for ‘straight’ project delivery

Analytical Framework for assessing SAV – the English Experience
5 aspects of SAV
- Strategic leadership & catalyst: Articulating & communicating development needs in the programme area, opportunities & solutions to partners & stakeholders in the programme area & elsewhere
- Strategic influence: Carrying out or stimulating activity that defines the distinctive roles of partners, gets them to commit to shared strategic objectives & to behave & allocate their resources accordingly

Analytical Framework for assessing SAV – the English Experience
- Leverage: Providing/securing financial & other incentives to mobilise partner & stakeholder resources – equipment & people, as well as funding
- Synergy: Using organisational capacity, knowledge & expertise to improve information exchange & knowledge transfer & coordination &/or integration of the design & delivery of interventions between partners

Analytical Framework for assessing SAV – the English Experience
- Finally, Engagement: Setting up the mechanisms & incentives for the more effective & deliberative engagement of stakeholders in the design & delivery of programme emphases

Contact
Simon Pringle, SQW Ltd
springle@sqw.co.uk
+44 7915 650 732
