
The UBC Models-3/CMAQ AQ Forecast System
Luca Delle Monache & Roland Stull
Weather Forecast Research Team
NW-AIRQUEST Annual Meeting, Monday, October 6th, 2003

OUTLINE
• UBC AQ Forecast System
  - MC2 mesoscale model
  - MCIP
  - SMOKE
  - CMAQ
  - PAVE or GrADS?
• Monster Cluster
• Computational Domain and Forecast Period
• Parallel Run Comparison
• Future Work
• Acknowledgements

Mesoscale Compressible Community Model (MC2)
• Version 4.9.1
• Non-hydrostatic
• Terrain-following Gal-Chen coordinate system
• Polar stereographic projection
• Semi-implicit semi-Lagrangian scheme
• PBL: turbulent kinetic energy (implicit vertical diffusion)
• Surface layer scheme: similarity theory
Note: MC2 output is converted into MM5 format (RWDI)

Meteorology Chemistry Interface Processor (MCIP)
Links MM5 with CMAQ to provide a complete set of meteorological data:
• Version 2.1
• Physical and dynamical algorithms
• Data format translation
• Conversion of units of parameters
• Diagnostic estimations of parameters not provided (PBL height, deposition parameters, cloud parameters)
• Extraction of data for appropriate window domains
• Reconstruction of meteorological data on different grid and layer structures
• Mass consistency check

Sparse Matrix Operator Kernel Emissions Modeling System (SMOKE)
Emissions data processing methods integrated by high-performance computing sparse-matrix algorithms:
• Version 1.5.1 (modified by RWDI: mobile as area source)
• Emissions types supported:
  - Area
  - Mobile
  - Point source
  - Biogenic
• Emissions inventory data conversion into CMAQ-formatted emission files

Community Multiscale Air Quality Modeling System (CMAQ)
• Version 4.2.1 (parallel version modified by RWDI and UC Riverside)
• Horizontal and vertical transport: Piece-wise Parabolic Method and Bott scheme
• Horizontal and vertical diffusion: spatially varying and K-theory
• Cloud transport
• Chemical mechanism: cb4_ae2_aq (43 species and 96 reactions)
• Chemistry solver: Modified Euler Backward Iterative method
• Aqueous phase chemistry: explicit 1-section
• Particle size: modal
• 1-way nesting
• Wet and dry deposition
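The chemistry-solver bullet names an Euler Backward Iterative (EBI) method. As a rough illustration of the underlying idea only, the sketch below applies a plain backward-Euler fixed-point iteration to a hypothetical two-species toy system (A -> B at rate `k1`, B -> A at rate `k2`). The species, rate constants, and function names are assumptions made for illustration; this is not the CMAQ solver or its "modified" variant, which treats the real cb4_ae2_aq mechanism.

```python
def ebi_step(a0, b0, k1, k2, dt, tol=1e-12, max_iter=100):
    """Advance the toy system one time step with backward Euler.

    Each species obeys dc/dt = P - L*c, so the implicit update is
    c_new = (c_old + dt*P) / (1 + dt*L), with P evaluated at the
    latest iterate of the other species. Iterate until the values settle.
    """
    a, b = a0, b0
    for _ in range(max_iter):
        # Species A: produced from B (P = k2*b), lost at rate L = k1.
        a_new = (a0 + dt * k2 * b) / (1.0 + dt * k1)
        # Species B: produced from A (P = k1*a_new), lost at rate L = k2.
        b_new = (b0 + dt * k1 * a_new) / (1.0 + dt * k2)
        converged = abs(a_new - a) + abs(b_new - b) < tol
        a, b = a_new, b_new
        if converged:
            break
    return a, b


def integrate(a0, b0, k1, k2, dt, n_steps):
    """Apply ebi_step repeatedly and return the final concentrations."""
    a, b = a0, b0
    for _ in range(n_steps):
        a, b = ebi_step(a, b, k1, k2, dt)
    return a, b


if __name__ == "__main__":
    # Hypothetical rate constants and initial state, arbitrary units.
    print(integrate(1.0, 0.0, k1=2.0, k2=1.0, dt=0.1, n_steps=200))
```

For this toy system the converged update conserves A + B and relaxes toward the equilibrium ratio A/B = k2/k1, which is a convenient sanity check for any implementation of the scheme.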

Why CMAQ Version 4.2.1?
• Extensively tested (by RWDI and UC Riverside) on Linux clusters
• Enhancements and bug fixes in latest version:
  √ Modifications of the vertical diffusion module to improve data locality to speed up computation
  √ Bug fixed for the case where user wants to build a model for no aerosols
  √ Changes to improve robustness for inexact I/O API (netCDF) file header data
  √ Bug fixed for the heterogeneous N2O5 reaction in the aerosol module
  √ Rate constant calculation for this reaction has been changed to use effective radius instead of diameter
  √ Bug fixed in the contribution of N2O5 to total initial HNO3

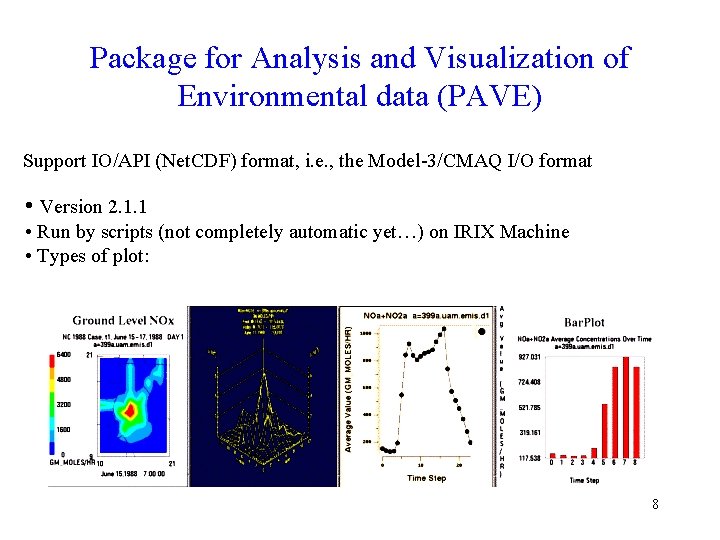

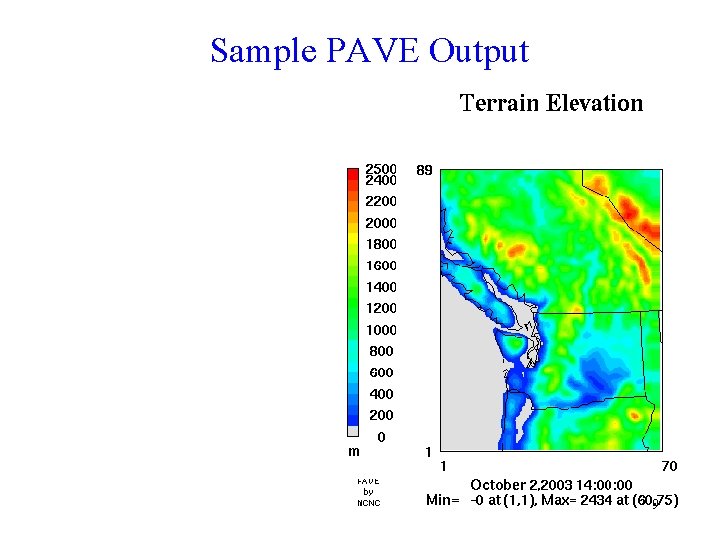

Package for Analysis and Visualization of Environmental data (PAVE)
Supports the I/O API (NetCDF) format, i.e., the Models-3/CMAQ I/O format
• Version 2.1.1
• Run by scripts (not completely automatic yet…) on IRIX machine
• Types of plot:

Sample PAVE Output

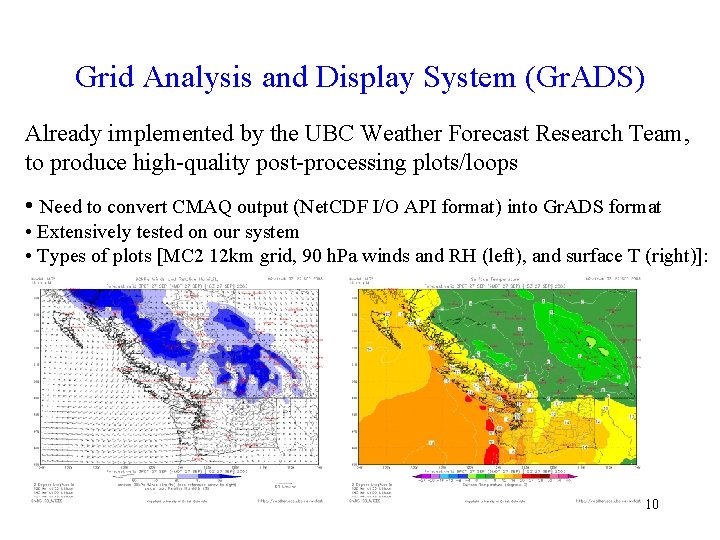

Grid Analysis and Display System (GrADS)
Already implemented by the UBC Weather Forecast Research Team to produce high-quality post-processing plots/loops
• Need to convert CMAQ output (NetCDF I/O API format) into GrADS format
• Extensively tested on our system
• Types of plots [MC2 12 km grid, 90 hPa winds and RH (left), and surface T (right)]:

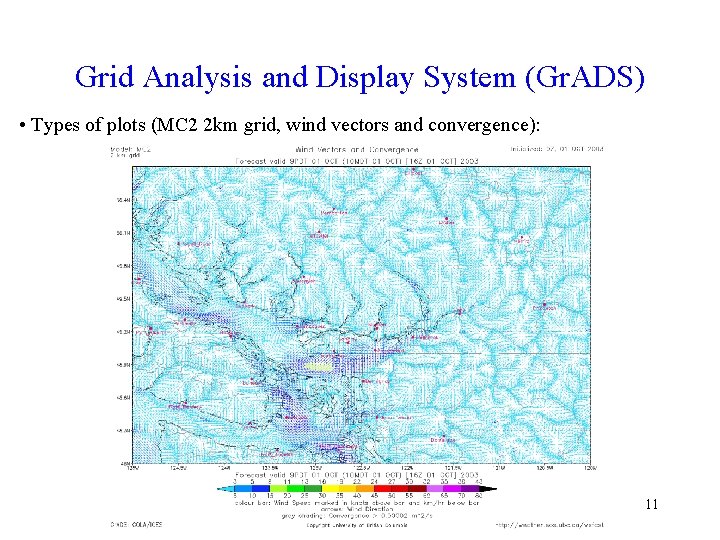

Grid Analysis and Display System (GrADS)
• Types of plots (MC2 2 km grid, wind vectors and convergence):

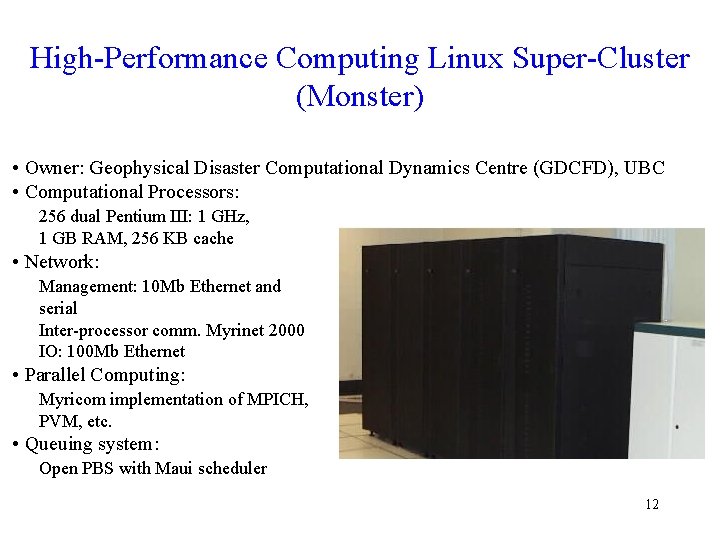

High-Performance Computing Linux Super-Cluster (Monster)
• Owner: Geophysical Disaster Computational Fluid Dynamics Centre (GDCFD), UBC
• Computational processors: 256 dual Pentium III: 1 GHz, 1 GB RAM, 256 KB cache
• Network:
  - Management: 10 Mb Ethernet and serial
  - Inter-processor comm.: Myrinet 2000
  - I/O: 100 Mb Ethernet
• Parallel computing: Myricom implementation of MPICH, PVM, etc.
• Queuing system: OpenPBS with Maui scheduler

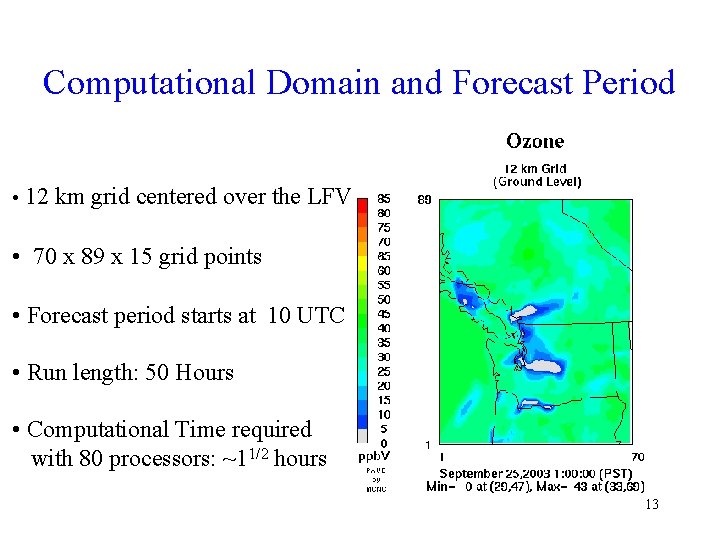

Computational Domain and Forecast Period
• 12 km grid centered over the LFV
• 70 x 89 x 15 grid points
• Forecast period starts at 10 UTC
• Run length: 50 hours
• Computational time required with 80 processors: ~1 1/2 hours
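The domain figures above imply some simple arithmetic, sketched here for orientation. The grid dimensions, spacing, and run length come from the slide; the helper names are hypothetical, and the wall-clock figure of 1.5 hours is an assumption that reads the slide's run-time entry as roughly one and a half hours. The slide does not say which horizontal dimension runs east-west, so the spans are reported in the order given.

```python
# Values stated on the slide.
GRID_DIMS = (70, 89, 15)   # two horizontal dimensions, then vertical levels
DX_KM = 12.0               # horizontal grid spacing
FORECAST_HOURS = 50.0      # run length


def grid_point_count(dims):
    """Total number of grid points in the 3-D domain."""
    total = 1
    for n in dims:
        total *= n
    return total


def horizontal_span_km(n_points, dx_km):
    """Distance between the first and last grid point along one axis."""
    return (n_points - 1) * dx_km


def speed_vs_real_time(forecast_hours, wall_clock_hours):
    """How many forecast hours are produced per wall-clock hour."""
    return forecast_hours / wall_clock_hours


if __name__ == "__main__":
    print(grid_point_count(GRID_DIMS))                 # 93450
    print(horizontal_span_km(GRID_DIMS[0], DX_KM))     # 828.0
    print(horizontal_span_km(GRID_DIMS[1], DX_KM))     # 1056.0
    # ASSUMPTION: 1.5 h wall-clock time on 80 processors.
    print(speed_vs_real_time(FORECAST_HOURS, 1.5))
```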

CMAQ Parallel Run Comparison
Note:
• Run length: 50 hours
• The runs with 40, 60 and 80 procs used slower I/O settings than the 20-proc run => better scaling expected for future tests with optimal I/O settings
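A comparison of this kind is usually summarized as speedup and parallel efficiency relative to the smallest run. The sketch below shows one way to compute both, taking the 20-processor run as the baseline. The function name is hypothetical, and the timings in the usage example are placeholder values chosen for easy arithmetic; they are not measurements from this talk.

```python
def scaling_table(timings, baseline_procs):
    """Return (procs, speedup, efficiency) rows relative to a baseline run.

    timings: dict mapping processor count -> wall-clock time (any unit).
    speedup = T_baseline / T_p
    efficiency = speedup / (p / p_baseline), i.e. 1.0 means ideal scaling.
    """
    t_base = timings[baseline_procs]
    rows = []
    for procs in sorted(timings):
        speedup = t_base / timings[procs]
        ideal = procs / baseline_procs
        rows.append((procs, speedup, speedup / ideal))
    return rows


if __name__ == "__main__":
    # PLACEHOLDER timings in hours, invented for illustration only.
    placeholder_timings = {20: 8.0, 40: 5.0, 80: 4.0}
    for procs, speedup, eff in scaling_table(placeholder_timings, 20):
        print(f"{procs:3d} procs  speedup {speedup:.2f}  efficiency {eff:.2f}")
```

An efficiency well below 1.0 at higher processor counts would be consistent with the I/O caveat noted on the slide, although separating I/O cost from communication cost needs timings taken with identical I/O settings.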

Future Work
• Operational mode:
  √ More performance tests with different numbers of processors
  √ Run at 4 km
  √ Run at 2 km
  √ CMAQ driven by MM5 (@ 12, 4 and 2 km)
  √ CMAQ driven by WRF (@ 12, 4 and 2 km)
  √ …Ensemble forecast!
  √ Results on the web
  √ Implementation of a more recent CMAQ version
• Research mode:
  √ Ensemble forecast with the “Multi-Emission approach”

Acknowledgements
Grants support
• Environment Canada (Colin di Cenzo)
• Canadian Natural Science and Engineering Research Council
• British Columbia Ministry of Water and Air Protection
• Canadian Foundation for Climate and Atmospheric Science
• Canadian Foundation for Innovation
• BC Knowledge Development Fund
• BC Ministry of Water, Land and Air Protection
• Parks Canada

MC2 => MM5 Conversion
• Converter developed by RWDI
• Conversion from MC2 output to pseudo-MM5 format
• Purpose: allow MCIP to process MC2 data
• MC2 polar stereographic projection on pressure levels
• Interpolation (inverse-squared distance) onto a uniformly spaced MM5 grid in Lambert Conic Conformal projection, and sigma levels
• Mapping and/or recalculation of parameters required by CMAQ not included in the MC2 output
• Room for improvement in maintaining mass conservation (partially performed by MCIP)
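The inverse-squared-distance interpolation mentioned above can be sketched as follows. This is a minimal illustration, not the RWDI converter: the function name, the use of all source points rather than a neighbour subset, and the assumption that source and target coordinates are already expressed in a common projected plane (for example, km) are all hypothetical choices made for the example.

```python
def idw_interpolate(src_points, src_values, target, power=2.0, eps=1e-12):
    """Inverse-distance-weighted estimate of a field at `target`.

    src_points: list of (x, y) source coordinates in a common plane.
    src_values: field values at those points.
    power=2 gives inverse-squared-distance weights, w = 1 / d**2.
    """
    weighted_sum = 0.0
    weight_total = 0.0
    for (x, y), value in zip(src_points, src_values):
        d2 = (x - target[0]) ** 2 + (y - target[1]) ** 2
        if d2 < eps:
            # Target coincides with a source point: return its value directly.
            return value
        weight = 1.0 / d2 ** (power / 2.0)
        weighted_sum += weight * value
        weight_total += weight
    return weighted_sum / weight_total


if __name__ == "__main__":
    # Hypothetical source points and values, arbitrary units.
    points = [(0.0, 0.0), (2.0, 0.0), (0.0, 2.0), (2.0, 2.0)]
    values = [10.0, 20.0, 30.0, 40.0]
    print(idw_interpolate(points, values, (1.0, 1.0)))
```

Weights of this kind reproduce a constant field exactly but do not by themselves conserve mass, which is in line with the slide's remark that mass conservation is left partly to MCIP.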
