The Tradeoff between Data Utility and Disclosure Risk

The Trade-off between Data Utility and Disclosure Risk (using a GA Synthetic Data Generator) Yingrui Chen, Jennifer Taub, Mark Elliot The University of Manchester

Acknowledgements • Gillian Raab (Edinburgh) • Anne-Sophie Charest (Laval) • Cong Chen (Public Health England) • Christine M. O'Keefe (CSIRO) • Michelle Pistner Nixon (Penn State) • Joshua Snoke (RAND) • Aleksandra Slavković (Penn State) • Duncan Smith (University of Manchester) • Joe Sakshaug (IAB)

Outline • Measuring Utility • Measuring Risk • The trade off • NB: Focus on structured categorical data

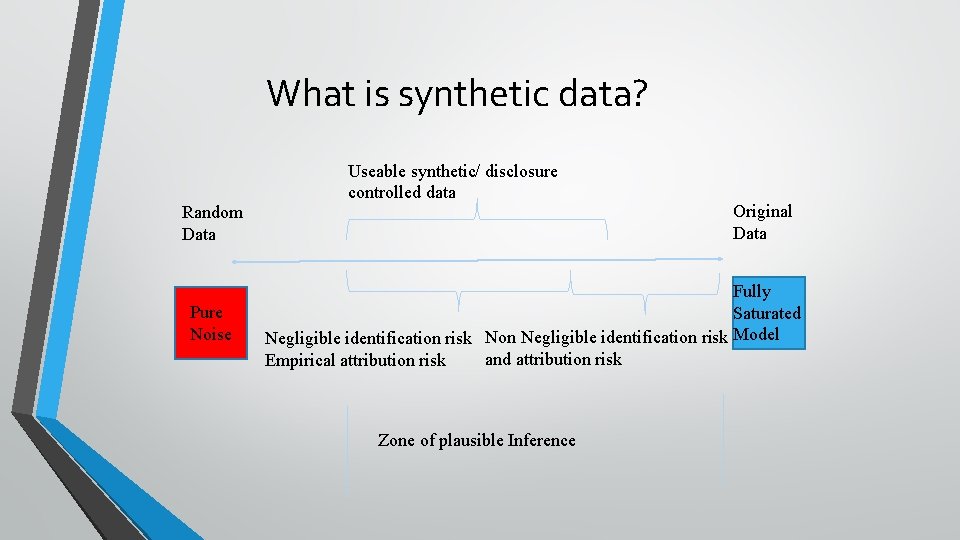

What is synthetic data? [Figure: a spectrum from random data (pure noise), through useable synthetic / disclosure-controlled data, to the original data (fully saturated). Labelled regions: negligible identification risk; non-negligible identification risk; model and attribution risk; empirical attribution risk; zone of plausible inference.]

Utility

Information Utility • Purdam and Elliot (2007): “The loss of analytical validity as occurring when a disclosure control method has changed a dataset to the point at which a user reaches a different conclusion from the same analysis” (p. 1102) • Every statistical property can be considered an element of utility • Utility is treated as the objective of the optimising program • Objectives should be measurable and comparable

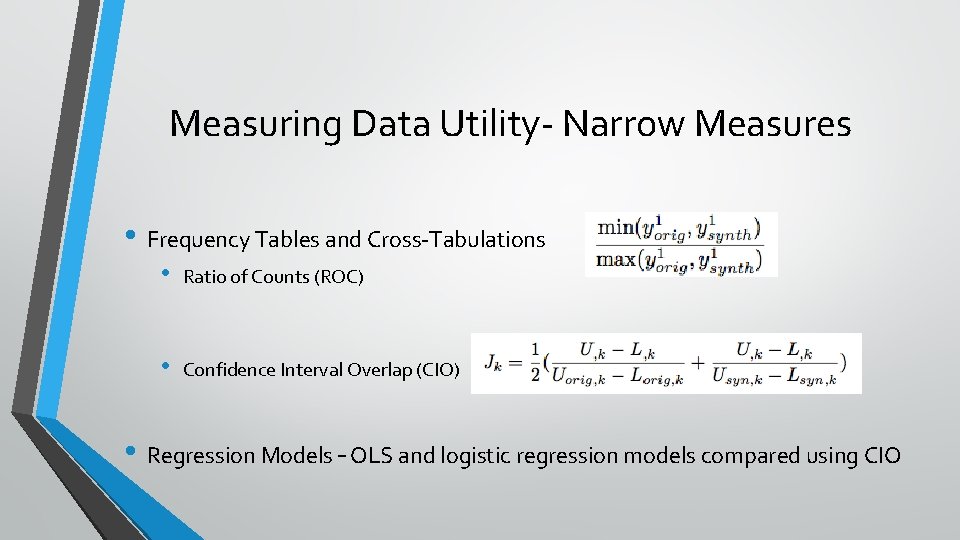

Measuring Data Utility: Narrow Measures • Frequency Tables and Cross-Tabulations • Ratio of Counts (ROC) • Confidence Interval Overlap (CIO) • Regression Models: OLS and logistic regression models compared using CIO
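
As a rough illustration of the two narrow measures named above, the sketch below computes a Ratio of Counts and a Confidence Interval Overlap. It assumes the commonly used definitions: ROC as the per-category ratio min(count)/max(count) averaged over categories, and CIO as the average share of each interval covered by their intersection. The function names and input shapes are hypothetical, not taken from the slides.

```python
from collections import Counter


def ratio_of_counts(original, synthetic):
    """Mean over categories of min(count) / max(count).

    Assumes both inputs are non-empty sequences of category labels.
    1.0 means the two frequency tables are identical.
    """
    orig_counts = Counter(original)
    synth_counts = Counter(synthetic)
    categories = set(orig_counts) | set(synth_counts)
    ratios = []
    for cat in categories:
        o, s = orig_counts[cat], synth_counts[cat]
        # max(o, s) > 0 because cat appears in at least one dataset
        ratios.append(min(o, s) / max(o, s))
    return sum(ratios) / len(ratios)


def ci_overlap(orig_ci, synth_ci):
    """Average share of each interval covered by the intersection.

    Each argument is a (lower, upper) pair with upper > lower.
    The result is 1.0 for identical intervals and negative when
    the intervals do not overlap at all.
    """
    (lo_o, up_o), (lo_s, up_s) = orig_ci, synth_ci
    intersection = min(up_o, up_s) - max(lo_o, lo_s)
    return 0.5 * (intersection / (up_o - lo_o) + intersection / (up_s - lo_s))
```

For a cross-tabulation, the same `ratio_of_counts` function can be applied to tuples of category labels, one tuple per record.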

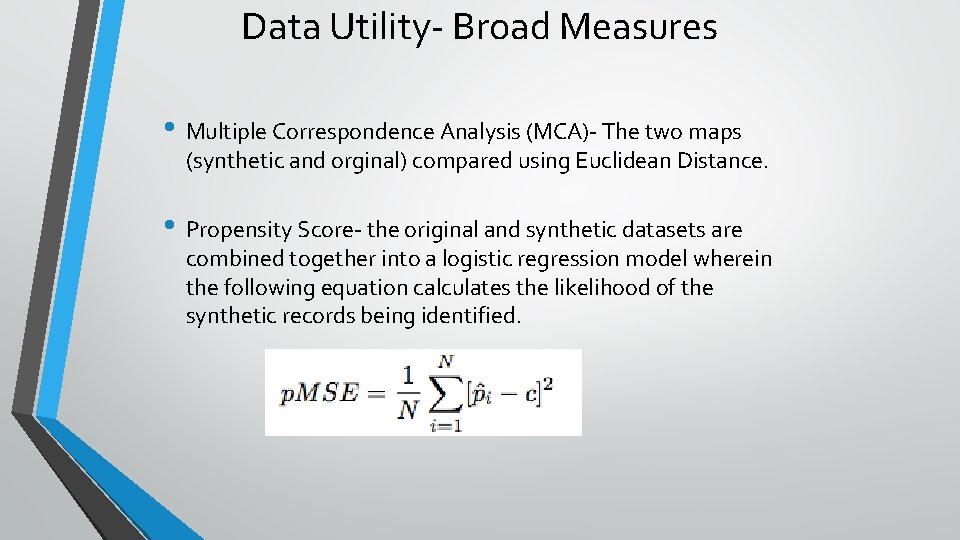

Data Utility: Broad Measures • Multiple Correspondence Analysis (MCA): the two maps (synthetic and original) are compared using Euclidean distance. • Propensity score: the original and synthetic datasets are combined and a logistic regression model is fitted to the combined data, wherein the following equation gives the likelihood of a record being identified as synthetic.
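
A minimal sketch of the propensity-score idea follows, using the standard propensity mean squared error, pMSE = (1/N) Σ (p_i − c)², where p_i is the estimated probability that record i is synthetic and c is the share of synthetic records in the combined data. To keep the example free of external libraries it does not fit a logistic regression with a chosen set of terms; instead it takes each record's propensity to be the share of synthetic records in that record's cell, which is what a fully saturated model on categorical variables would fit. That substitution, and the function name, are assumptions for illustration only.

```python
from collections import Counter


def pmse_saturated(original, synthetic):
    """pMSE with cell-wise propensity scores (saturated-model stand-in).

    Both inputs are lists of tuples, one tuple of category labels per record.
    Returns 0.0 when every cell has the same synthetic share as the
    combined data, i.e. the two datasets are indistinguishable by cell.
    """
    orig_cells = Counter(original)
    synth_cells = Counter(synthetic)
    n_total = len(original) + len(synthetic)
    c = len(synthetic) / n_total  # overall share of synthetic records
    total = 0.0
    for cell in set(orig_cells) | set(synth_cells):
        n_cell = orig_cells[cell] + synth_cells[cell]
        p = synth_cells[cell] / n_cell  # propensity score for this cell
        total += n_cell * (p - c) ** 2
    return total / n_total
```

Because a saturated model can separate sparse cells perfectly, this variant tends to report higher pMSE values than a main-effects logistic model would on the same data.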

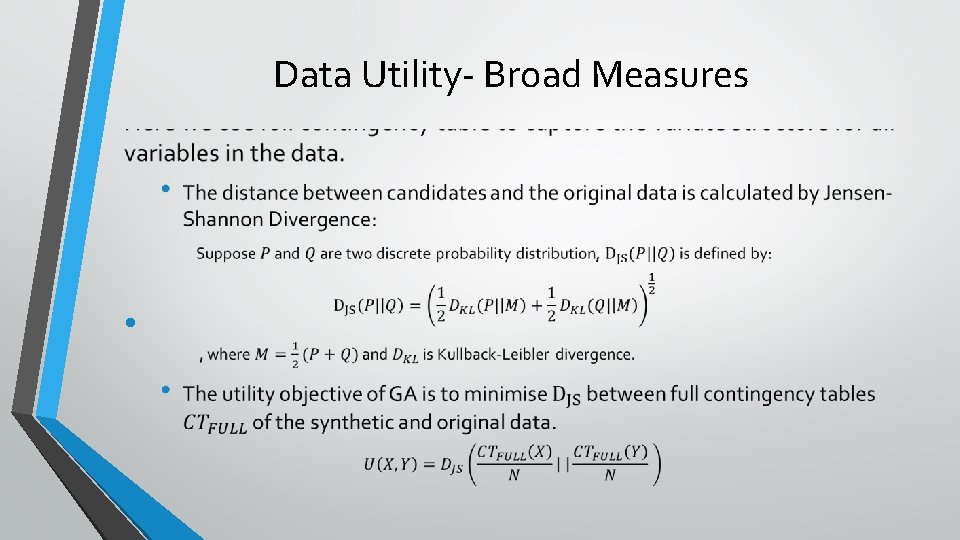


Measuring Risk

Disclosure Risk • Identification • A dataset carries identification risk if a data subject can be re-identified from it. • Attribution • A dataset carries attribution risk if sensitive information about any population unit can be inferred from it, either deterministically or probabilistically.

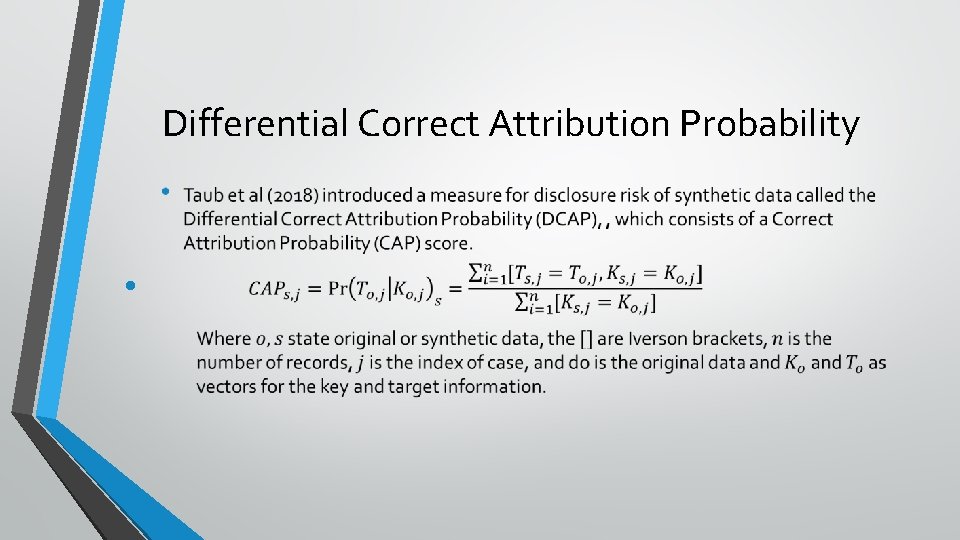

Differential Correct Attribution Probability (DCAP)

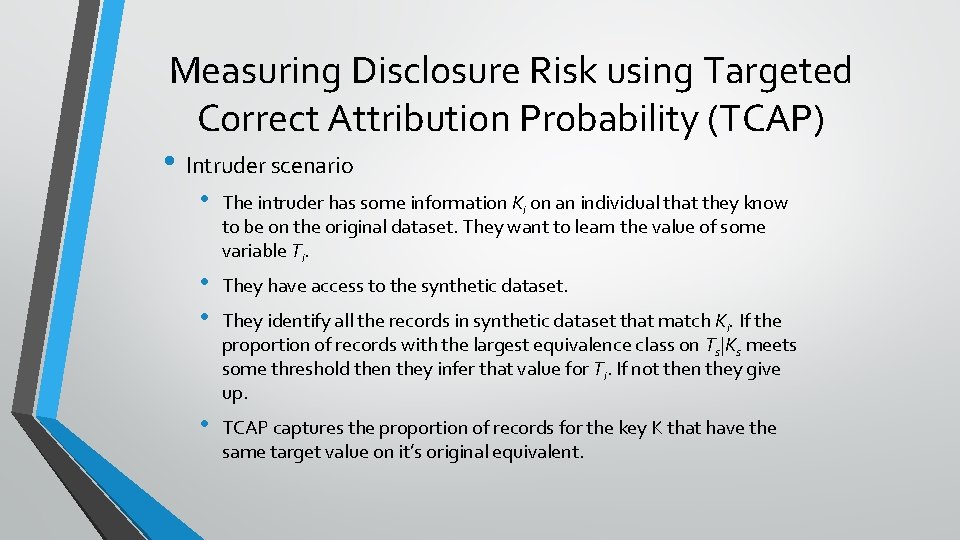

Measuring Disclosure Risk using Targeted Correct Attribution Probability (TCAP) • Intruder scenario • The intruder has some information K_i on an individual that they know to be in the original dataset. • They want to learn the value of some variable T_i. • They have access to the synthetic dataset. • They identify all the records in the synthetic dataset that match K_i. • If the proportion of those records falling in the largest equivalence class on T_s|K_s meets some threshold, they infer that value for T_i. If not, they give up. • TCAP captures the proportion of records for the key K that have the same target value as their original equivalent.
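
The intruder scenario above can be sketched in a few lines. This is an illustration under stated assumptions rather than the authors' implementation: records are tuples, `key_idx` and `target_idx` are hypothetical parameters giving the positions of the key and target variables, the default threshold of 1.0 is an assumption, and the score is taken over the original records for which the intruder actually makes an inference.

```python
from collections import Counter, defaultdict


def tcap(original, synthetic, key_idx, target_idx, threshold=1.0):
    """Share of attempted inferences that recover the original target value."""
    # Group the synthetic target values by key combination.
    by_key = defaultdict(Counter)
    for rec in synthetic:
        key = tuple(rec[i] for i in key_idx)
        by_key[key][rec[target_idx]] += 1

    # The intruder only infers a value when the largest target class
    # within a key's equivalence class meets the threshold.
    inferred = {}
    for key, targets in by_key.items():
        value, count = targets.most_common(1)[0]
        if count / sum(targets.values()) >= threshold:
            inferred[key] = value

    # Compare each inference with the true value in the original data.
    matched = correct = 0
    for rec in original:
        key = tuple(rec[i] for i in key_idx)
        if key in inferred:
            matched += 1
            correct += inferred[key] == rec[target_idx]

    # Assumption: with no attempted inference, report zero risk.
    return correct / matched if matched else 0.0
```

Lowering `threshold` lets the intruder guess more often, which usually raises the number of matched records while lowering the share of correct inferences.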

Using GAs to capture the Trade Off

Genetic Algorithms (GAs) • Natural Computing: computational systems inspired by natural systems • Biological system: simulating the process of organisms surviving with limited resources and predators • Evolutionary Computing: uses the principles of natural evolution and genotypic variation to solve complicated optimisation problems • Genetic Algorithms: simulate the process of natural evolution, including natural selection, crossover and mutation.

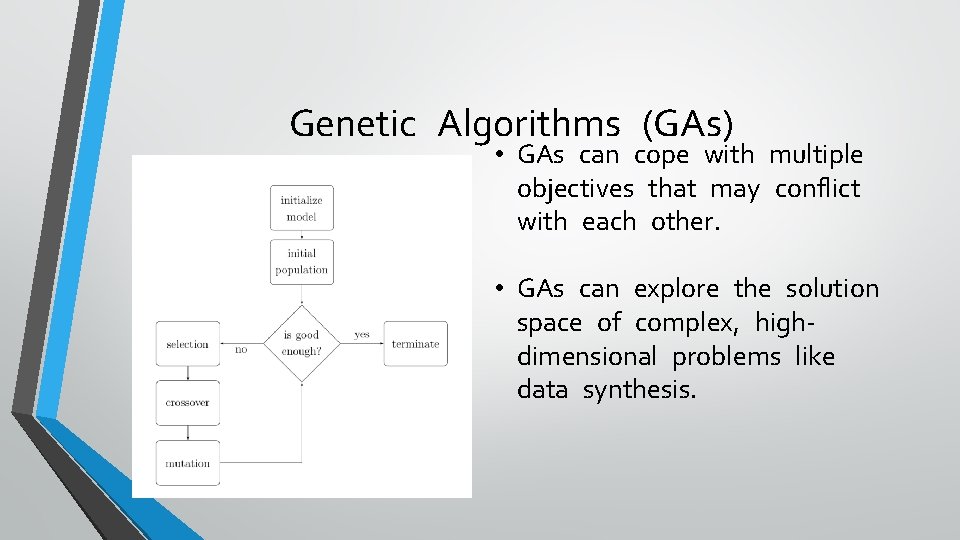

Genetic Algorithms (GAs) • GAs can cope with multiple objectives that may conflict with each other. • GAs can explore the solution space of complex, high-dimensional problems like data synthesis.

Model Design • Initial Population • 100 candidates that are mutated from the original data, so they are high in utility (and risk). • Selection Operator • Deterministic tournament selection with tournament size t = 2, i.e. 2 candidates are randomly selected (with replacement) into each tournament and only the winner enters the crossover operator.
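
The selection step described above can be sketched as follows. The slides do not show how candidates are scored, so `fitness` here is a hypothetical single-number scoring function supplied by the caller; with several objectives, some rule for comparing two candidates would take its place.

```python
import random


def tournament_select(population, fitness, rng, tournament_size=2):
    """Deterministic tournament: draw contestants with replacement,
    and the fittest contestant always wins."""
    contestants = [rng.choice(population) for _ in range(tournament_size)]
    return max(contestants, key=fitness)


# Usage: pick two parents for the crossover operator.
rng = random.Random(42)
population = [1, 3, 5]  # stand-ins for candidate datasets
parents = [tournament_select(population, fitness=lambda c: c, rng=rng)
           for _ in range(2)]
```

Because the draw is with replacement, a candidate can face itself in a tournament, in which case it wins by default.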

Model Design • Crossover • Whole-Case Parallelised Crossover: applied to every case in the candidate; each case is selected with the crossover rate (0.1 in this paper) and, if selected, is swapped with the corresponding case in the paired candidate. • Mutation Operator • Uniform mutation: every single element/cell in the candidate has a chance (0.001 in this paper) to mutate.
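
A minimal sketch of the two operators, assuming a candidate is a list of cases (rows) and each case is a list of categorical values. The slides do not say how a mutated cell gets its new value, so drawing it uniformly from that variable's list of categories (`domains`, a hypothetical parameter) is an assumption; the draw may return the value already present.

```python
import random


def whole_case_crossover(parent_a, parent_b, rate, rng):
    """Swap whole cases between two equally sized candidates.

    Each case position is swapped independently with probability `rate`.
    """
    child_a = [list(case) for case in parent_a]
    child_b = [list(case) for case in parent_b]
    for i in range(len(child_a)):
        if rng.random() < rate:
            child_a[i], child_b[i] = child_b[i], child_a[i]
    return child_a, child_b


def uniform_mutation(candidate, domains, rate, rng):
    """Give every cell an independent chance `rate` of being redrawn.

    domains[j] lists the allowed categories for variable j (assumed).
    """
    mutated = [list(case) for case in candidate]
    for case in mutated:
        for j in range(len(case)):
            if rng.random() < rate:
                case[j] = rng.choice(domains[j])
    return mutated
```

With the rates quoted on the slide, a call would look like `whole_case_crossover(a, b, rate=0.1, rng=random.Random(1))` followed by `uniform_mutation(child, domains, rate=0.001, rng=random.Random(1))`.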

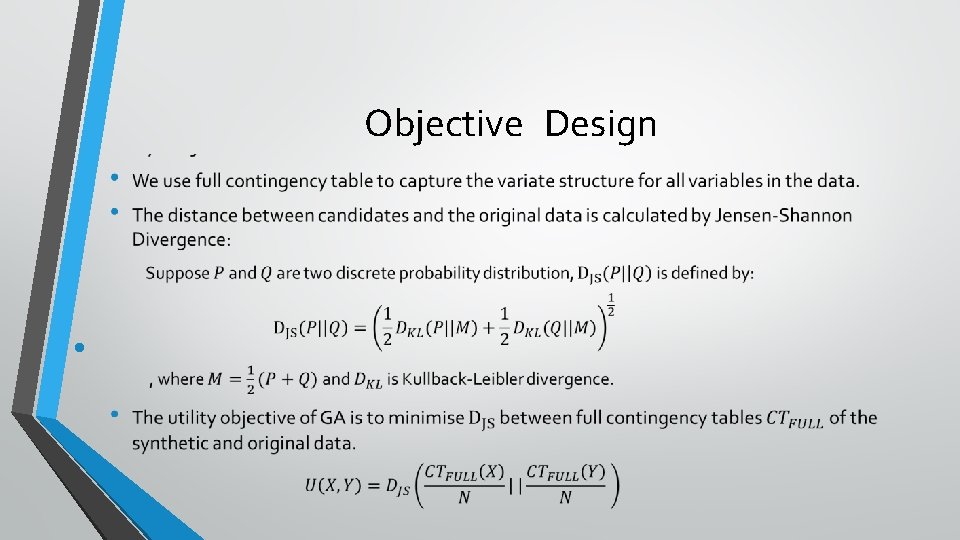

Objective Design

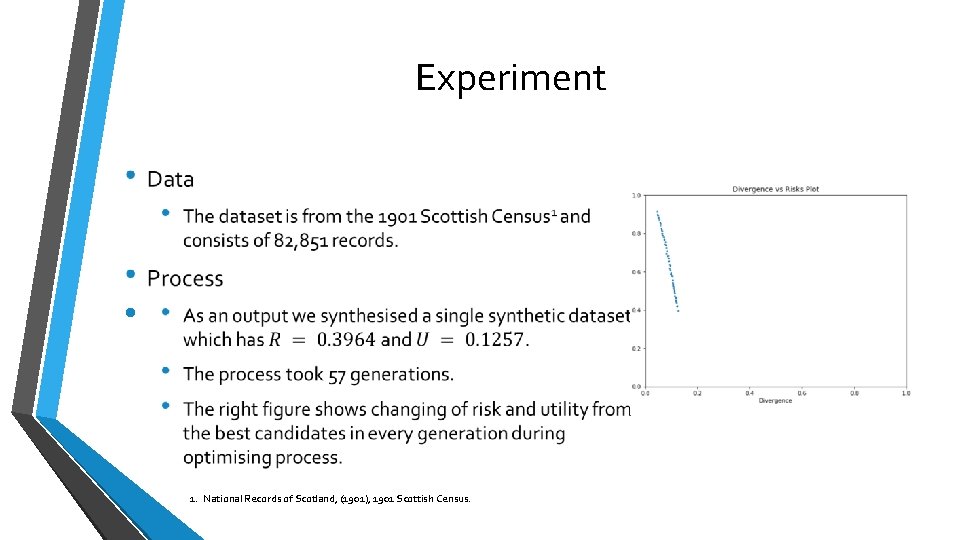

Experiment • National Records of Scotland (1901), 1901 Scottish Census.

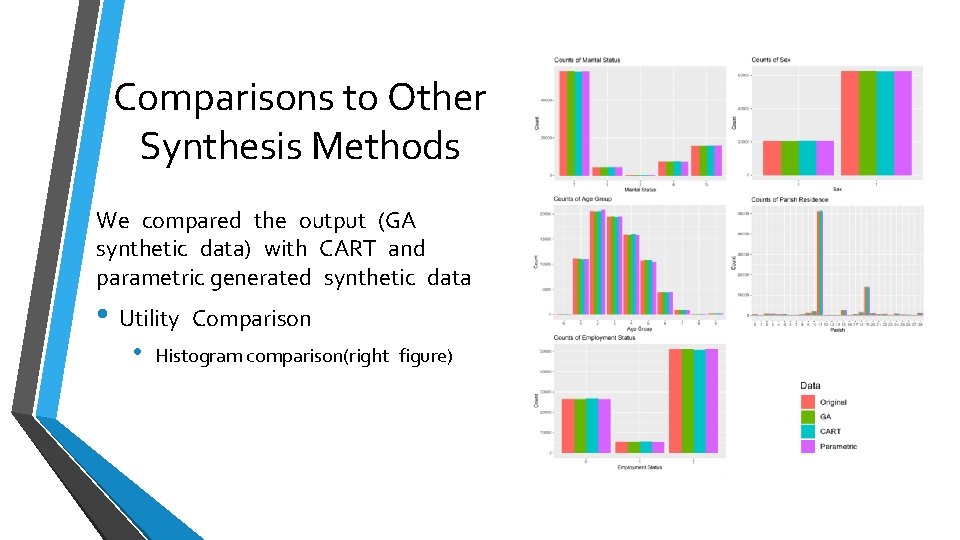

Comparisons to Other Synthesis Methods • We compared the output (GA synthetic data) with CART-generated and parametrically generated synthetic data. • Utility Comparison • Histogram comparison (right figure)

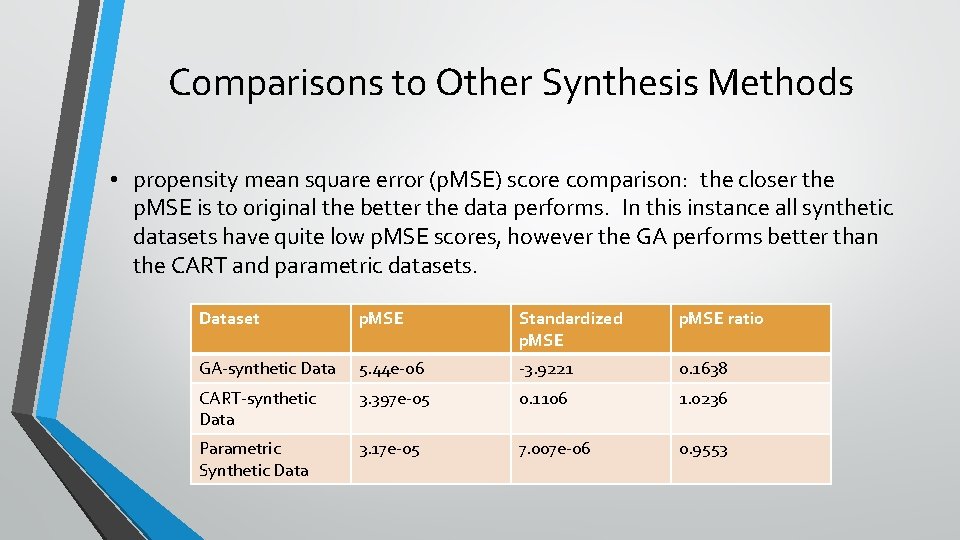

Comparisons to Other Synthesis Methods • Propensity mean squared error (p.MSE) score comparison: the closer the p.MSE is to 0, the better the data performs. In this instance all synthetic datasets have quite low p.MSE scores; however, the GA performs better than the CART and parametric datasets.

| Dataset | p.MSE | Standardized | p.MSE ratio |
|---|---|---|---|
| GA-synthetic Data | 5.44e-06 | -3.9221 | 0.1638 |
| CART-synthetic Data | 3.397e-05 | 0.1106 | 1.0236 |
| Parametric Synthetic Data | 3.17e-05 | 7.007e-06 | 0.9553 |

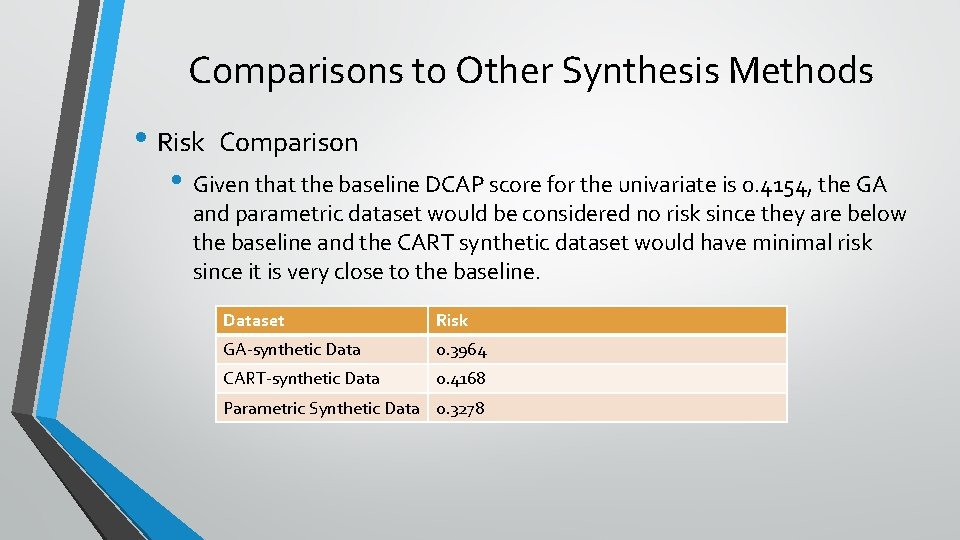

Comparisons to Other Synthesis Methods • Risk Comparison • Given that the univariate baseline DCAP score is 0.4154, the GA and parametric datasets would be considered no risk, since they are below the baseline, and the CART synthetic dataset would have minimal risk, since it is very close to the baseline.

| Dataset | Risk |
|---|---|
| GA-synthetic Data | 0.3964 |
| CART-synthetic Data | 0.4168 |
| Parametric Synthetic Data | 0.3278 |

Concluding remarks • GAs are a viable alternative to standard synthesisers. • GAs allow disclosure risk and information utility to be included in the same generation framework when producing synthetic data. • Current and Future work • Synthetic data challenge: watch out for a call! • Keen to have some DP synthetic datasets this time. • Bringing attribute and identification disclosure into a common framework • Better general measures: • Earth mover's distance • Sample size equivalence

References • Taub, J., Elliot, M. and Sakshaug, J. (2020, accepted) “The impact of synthetic data generation on data utility”. Transactions on Data Privacy. • Taub, J., Elliot, M., Pampaka, M. and Smith, D. (2018) “Differential Correct Attribution Probability for Synthetic Data: An Exploration”. In J. Domingo-Ferrer and F. Montes (eds), Privacy in Statistical Databases (LNCS, volume 11126), 122-137. • Chen, Y., Elliot, M. and Smith, D. (2018) “The Application of Genetic Algorithms to Data Synthesis: A Comparison of Three Crossover Methods”. In J. Domingo-Ferrer and F. Montes (eds), Privacy in Statistical Databases (LNCS, volume 11126), 160-171. • Taub, J., Elliot, M., Raab, G., Charest, A-S., Chen, C., Pistner, M., Snoke, J. and Slavković, A. (2019) “Creating the best Risk-Utility Profile: The Synthetic Data Challenge”.
