
THE STRUCTURE OF THE UNOBSERVED. Ricardo Silva (ricardo@stats.ucl.ac.uk), Department of Statistical Science and CSML. HEP Seminar, February 2013

Shameless Advertisement: http://www.csml.ucl.ac.uk/

Outline • In the next 50 minutes or so, I’ll attempt to provide an overview of some of my lines of work • The main unifying theme: how can assumptions about variables we never observe help us infer important facts about the variables we do observe?

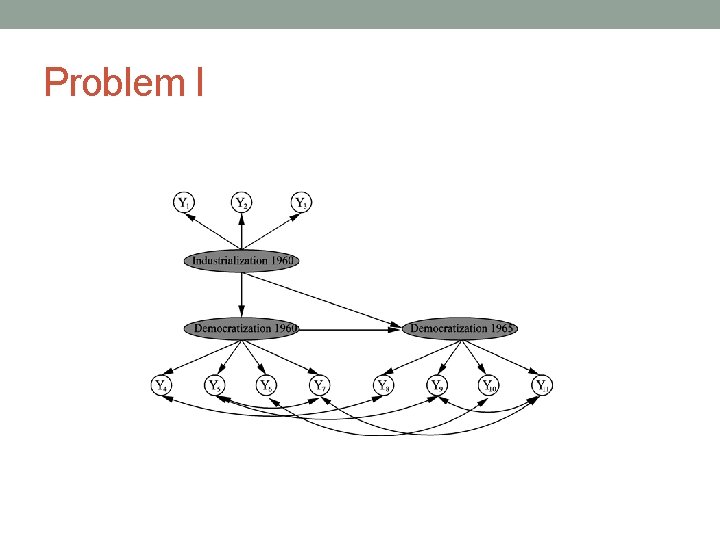

Problem I • Suppose we have measurements which indicate some signal about unobservable variables • Which assumptions justify postulating hidden variable explanations? • What can we get out of them? (Figure: a questionnaire for “Country: Freedonia” with items 1. GNP per capita; 2. Energy consumption per capita; 3. % Labor force in industry; 4. Ratings on freedom of press; 5. Freedom of political opposition; 6. Fairness of elections; 7. Effectiveness of legislature)

Problem I • Smoothing measurements • What implications would this have for structure discovery?

Problem II • Prediction problems with network information (Figure: book features(Bi) and political inclination(Bi); comic credit xkcd.com)

Problem II • There are many reasons why people can be linked • One interpretation is to postulate common causes as the source of the links • What implications would this have for prediction?

Problem III • The joy of questionnaires: what is the actual information content?

Problem III • How do we define the information content of such data? • Given this, how do we “compress” the information with some guidance on the losses? • What implications does this have for the design of measurements?

Problem I

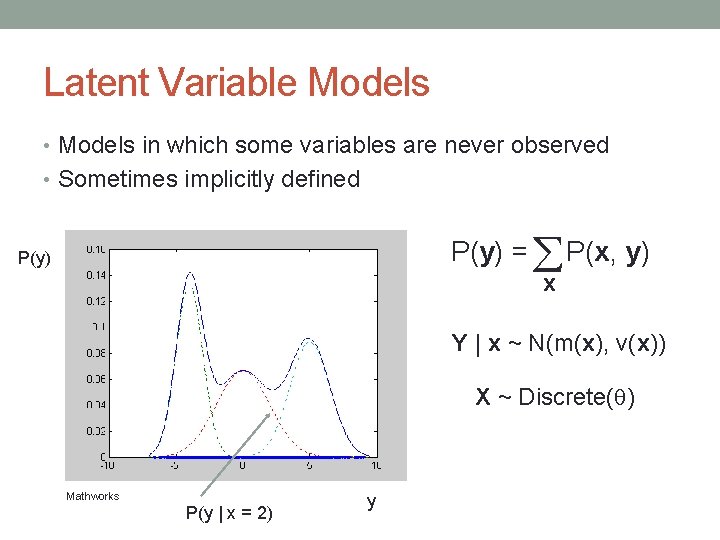

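Latent Variable Models • Models in which some variables are never observed • Sometimes implicitly defined: $P(y) = \sum_x P(x, y)$, e.g., $X \sim \text{Discrete}(\pi)$, $Y \mid x \sim N(m(x), v(x))$ (Figure, source MathWorks: a mixture density, with the component $P(y \mid x = 2)$ plotted against $y$)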
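To make the implicit definition concrete, here is a minimal sketch of the marginal $P(y) = \sum_x P(x)\,P(y \mid x)$ for a discrete latent $X$ and Gaussian components. The mixing weights, means $m(x)$ and variances $v(x)$ below are hypothetical values chosen only for illustration; the check simply confirms that summing out $X$ leaves a proper density over $y$.

```python
import math

def normal_pdf(y: float, mean: float, var: float) -> float:
    """Density of N(mean, var) evaluated at y."""
    return math.exp(-0.5 * (y - mean) ** 2 / var) / math.sqrt(2.0 * math.pi * var)

def marginal_density(y: float, weights, means, variances) -> float:
    """P(y) = sum_x P(x) P(y | x): the latent X is summed out."""
    return sum(w * normal_pdf(y, m, v) for w, m, v in zip(weights, means, variances))

# Hypothetical three-component mixture (illustrative values only).
weights = [0.3, 0.5, 0.2]       # P(X = x) for x = 1, 2, 3
means = [-2.0, 0.0, 3.0]        # m(x)
variances = [1.0, 0.5, 2.0]     # v(x)

# Integrate P(y) over a wide grid with the trapezoid rule; it should be ~1.
step = 0.01
grid = [-20.0 + step * k for k in range(4001)]
values = [marginal_density(y, weights, means, variances) for y in grid]
total = step * (sum(values) - 0.5 * (values[0] + values[-1]))
print(f"integral of P(y) ~ {total:.4f}")
```

Only $Y$ would ever be observed here; the component labels $X$ exist only inside the model.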

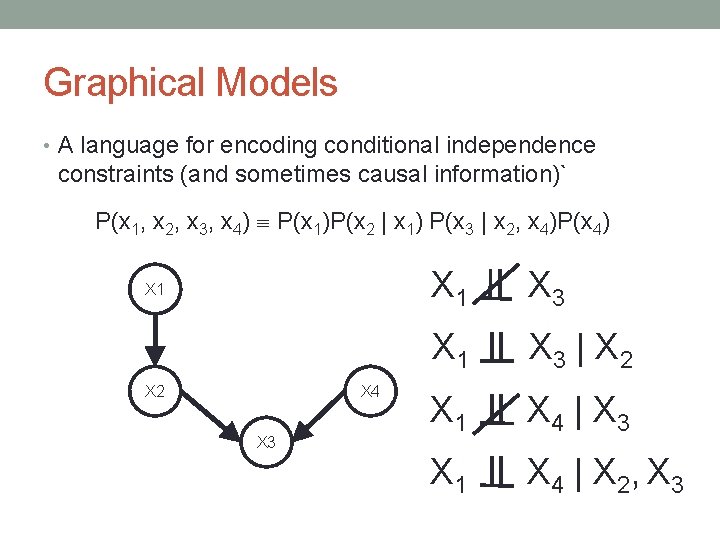

Graphical Models • A language for encoding conditional independence constraints (and sometimes causal information) • $P(x_1, x_2, x_3, x_4) = P(x_1)P(x_2 \mid x_1)P(x_3 \mid x_2, x_4)P(x_4)$, i.e., the graph $X_1 \to X_2 \to X_3 \leftarrow X_4$ • $X_1 \perp X_3 \mid X_2$ • $X_1 \not\perp X_4 \mid X_3$ • $X_1 \perp X_4 \mid X_2, X_3$
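One way to see these constraints numerically is a sketch that assumes a linear-Gaussian parametrization of $X_1 \to X_2 \to X_3 \leftarrow X_4$ (the coefficients and noise variances are hypothetical). In a Gaussian model, conditional independence corresponds to a zero partial correlation, which we read off the inverse of the relevant covariance submatrix.

```python
import numpy as np

# Hypothetical linear-Gaussian version of X1 -> X2 -> X3 <- X4 (indices 0..3 = X1..X4).
# B[i, j] is the coefficient of X_j in the equation for X_i.
B = np.zeros((4, 4))
B[1, 0] = 0.8    # X2 depends on X1
B[2, 1] = 1.2    # X3 depends on X2
B[2, 3] = -0.7   # X3 depends on X4
noise_var = np.diag([1.0, 0.5, 0.3, 1.0])

# X = B X + U  =>  X = (I - B)^{-1} U, so Cov(X) = A D A^T with A = (I - B)^{-1}.
A = np.linalg.inv(np.eye(4) - B)
Sigma = A @ noise_var @ A.T

def partial_corr(S, i, j, given):
    """Partial correlation of variables i and j given the variables in `given`."""
    idx = [i, j] + list(given)
    P = np.linalg.inv(S[np.ix_(idx, idx)])   # precision matrix of the subset
    return -P[0, 1] / np.sqrt(P[0, 0] * P[1, 1])

print(partial_corr(Sigma, 0, 2, [1]))      # X1 indep. X3 given X2: ~0
print(partial_corr(Sigma, 0, 3, [2]))      # X1, X4 dependent given the collider X3
print(partial_corr(Sigma, 0, 3, [1, 2]))   # X1 indep. X4 given X2, X3: ~0
```

Conditioning on the collider $X_3$ alone opens the path between $X_1$ and $X_4$; adding $X_2$ blocks it again.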

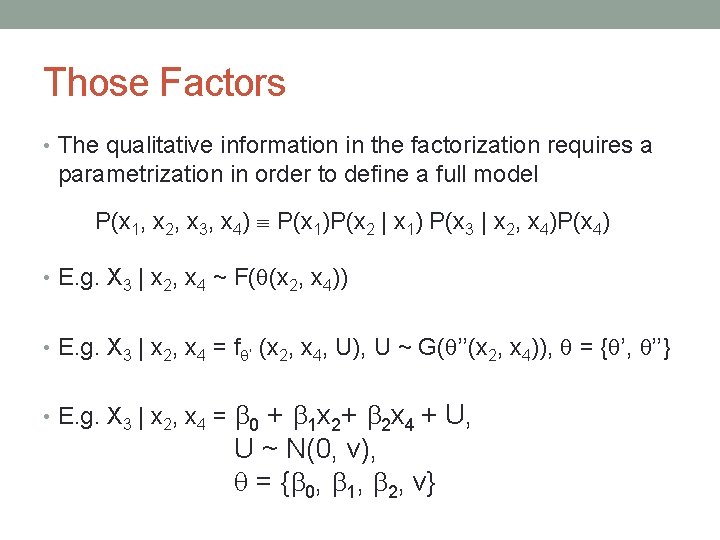

Those Factors • The qualitative information in the factorization $P(x_1, x_2, x_3, x_4) = P(x_1)P(x_2 \mid x_1)P(x_3 \mid x_2, x_4)P(x_4)$ requires a parametrization in order to define a full model • E.g., $X_3 \mid x_2, x_4 \sim F(\theta(x_2, x_4))$ • E.g., $X_3 \mid x_2, x_4 = f_{\theta'}(x_2, x_4, U)$, $U \sim G(\theta''(x_2, x_4))$, $\theta = \{\theta', \theta''\}$ • E.g., $X_3 \mid x_2, x_4 = \theta_0 + \theta_1 x_2 + \theta_2 x_4 + U$, $U \sim N(0, v)$, $\theta = \{\theta_0, \theta_1, \theta_2, v\}$
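The last parametrization can be exercised with a short sketch: ancestral sampling that follows the factorization, using the linear-Gaussian factor for $X_3$ and hypothetical linear-Gaussian choices for the other factors. Least squares on $(1, x_2, x_4)$ then recovers $\theta_0, \theta_1, \theta_2$, and the residual variance estimates $v$. All numeric values are assumptions for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 200_000

# Hypothetical parameters theta = {theta0, theta1, theta2, v} for P(x3 | x2, x4).
theta0, theta1, theta2, v = 0.5, 1.5, -2.0, 0.25

# Ancestral sampling in the order of P(x1) P(x2 | x1) P(x3 | x2, x4) P(x4).
# The factors for x1, x2 and x4 are also hypothetical linear-Gaussian choices.
x1 = rng.normal(0.0, 1.0, n)
x2 = 0.8 * x1 + rng.normal(0.0, 1.0, n)
x4 = rng.normal(0.0, 1.0, n)
x3 = theta0 + theta1 * x2 + theta2 * x4 + rng.normal(0.0, np.sqrt(v), n)

# Regress x3 on (1, x2, x4): the coefficients estimate theta0..theta2.
design = np.column_stack([np.ones(n), x2, x4])
coef, *_ = np.linalg.lstsq(design, x3, rcond=None)
v_hat = np.mean((x3 - design @ coef) ** 2)   # residual variance estimates v
print(coef, v_hat)
```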

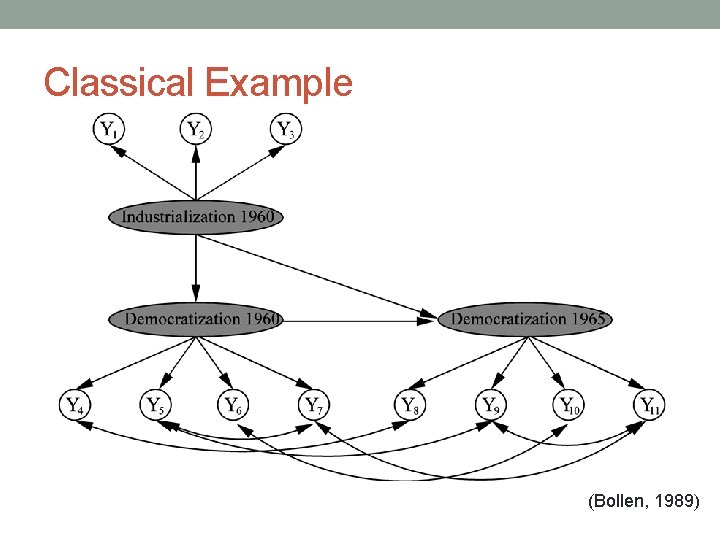

Classical Example (Bollen, 1989)

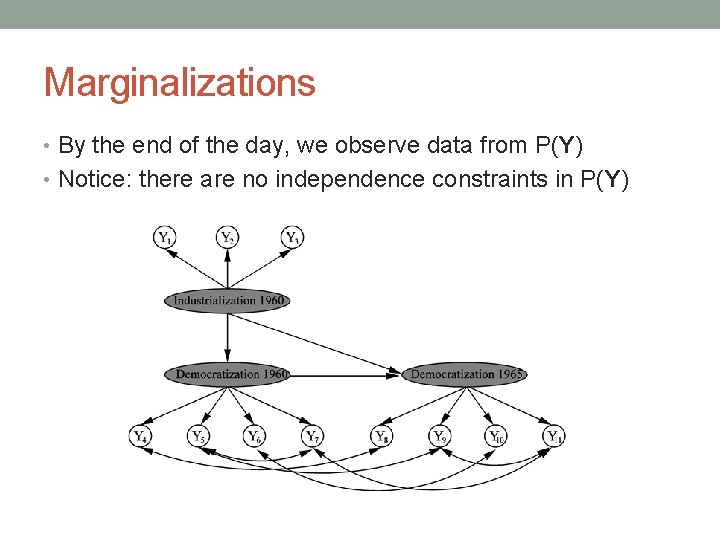

Marginalizations • At the end of the day, we only observe data from P(Y) • Notice: there are no independence constraints in P(Y)

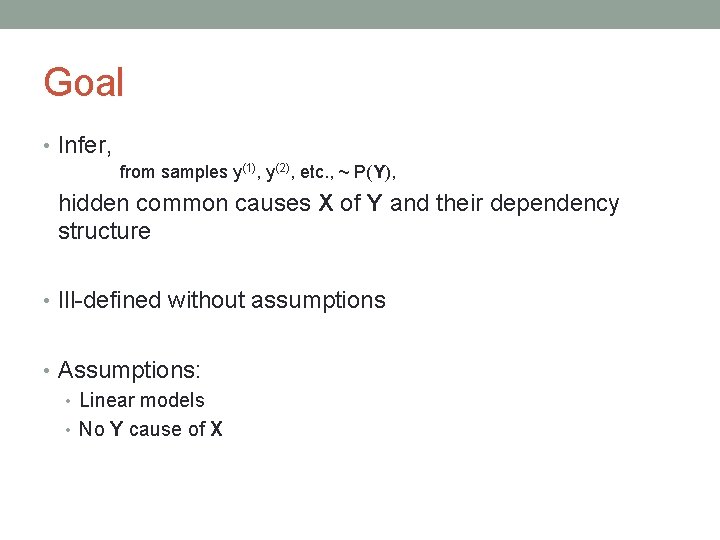

Goal • Infer, from samples $y^{(1)}, y^{(2)}, \dots \sim P(Y)$, the hidden common causes $X$ of $Y$ and their dependency structure • Ill-defined without assumptions • Assumptions: • Linear models • No $Y$ is a cause of any $X$

Outcome • Ideally: • Given a reasonably large sample, reconstruct the whole dependency structure of the graphical model • Realistically speaking: • There can be very many structures compatible with P(Y) • So we return some sort of “equivalence class” instead • If you are lucky, it is an informative class • Statistical limitations also apply

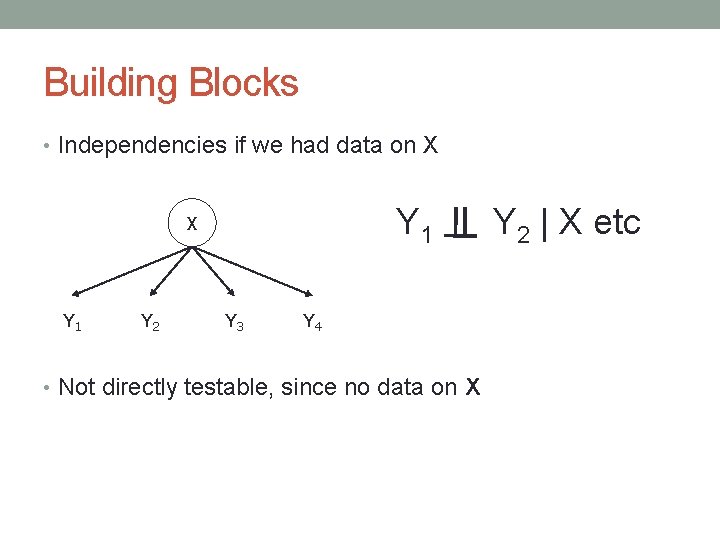

Building Blocks • Independencies we could test if we had data on X (graph: X with children $Y_1, Y_2, Y_3, Y_4$): $Y_1 \perp Y_2 \mid X$, etc. • Not directly testable, since there is no data on X

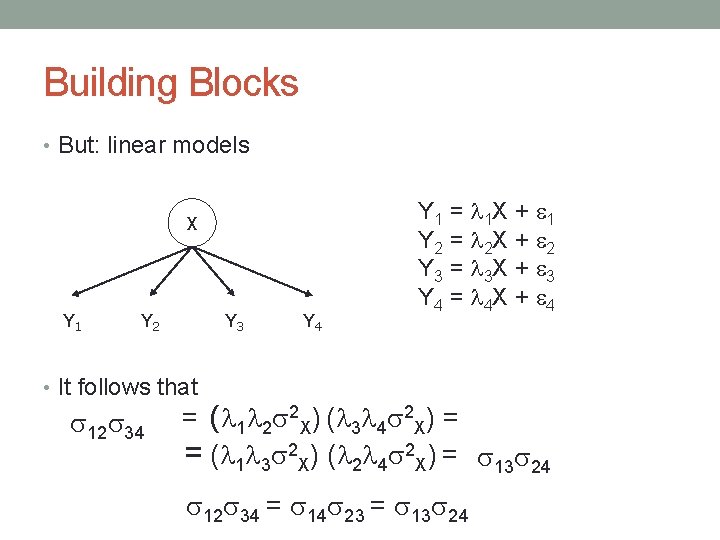

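Building Blocks • But: linear models, with X the common parent of $Y_1, \dots, Y_4$: $Y_1 = \lambda_1 X + \epsilon_1$, $Y_2 = \lambda_2 X + \epsilon_2$, $Y_3 = \lambda_3 X + \epsilon_3$, $Y_4 = \lambda_4 X + \epsilon_4$ • Writing $\sigma_{ij}$ for the covariance of $Y_i$ and $Y_j$, it follows that $\sigma_{12}\sigma_{34} = (\lambda_1\lambda_2\sigma^2_X)(\lambda_3\lambda_4\sigma^2_X) = \lambda_1\lambda_2\lambda_3\lambda_4\sigma^4_X = (\lambda_1\lambda_3\sigma^2_X)(\lambda_2\lambda_4\sigma^2_X) = \sigma_{13}\sigma_{24}$ • Hence $\sigma_{12}\sigma_{34} = \sigma_{14}\sigma_{23} = \sigma_{13}\sigma_{24}$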
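The tetrad equalities can be checked directly on the implied covariance matrix. This sketch assumes hypothetical loadings $\lambda_i$, latent variance $\sigma^2_X$ and independent error variances; under the one-factor model, $\mathrm{Cov}(Y) = \sigma^2_X \lambda\lambda^\top + \mathrm{diag}(\text{errors})$, and all three products equal $\lambda_1\lambda_2\lambda_3\lambda_4\sigma^4_X$.

```python
import numpy as np

# Hypothetical one-factor model: loadings lambda_i, Var(X), independent error variances.
lam = np.array([0.9, 1.4, -0.6, 2.0])
var_x = 1.7
err_var = np.array([0.5, 1.0, 0.3, 0.8])

# Y_i = lambda_i X + eps_i  =>  Cov(Y) = var_x * lam lam^T + diag(err_var).
Sigma = var_x * np.outer(lam, lam) + np.diag(err_var)

def s(i, j):
    """sigma_ij, using the slide's 1-based indices."""
    return Sigma[i - 1, j - 1]

t1 = s(1, 2) * s(3, 4)
t2 = s(1, 4) * s(2, 3)
t3 = s(1, 3) * s(2, 4)
print(t1, t2, t3)   # all three products coincide
```

Note that the error variances only enter the diagonal, which is why the off-diagonal products do not depend on them.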

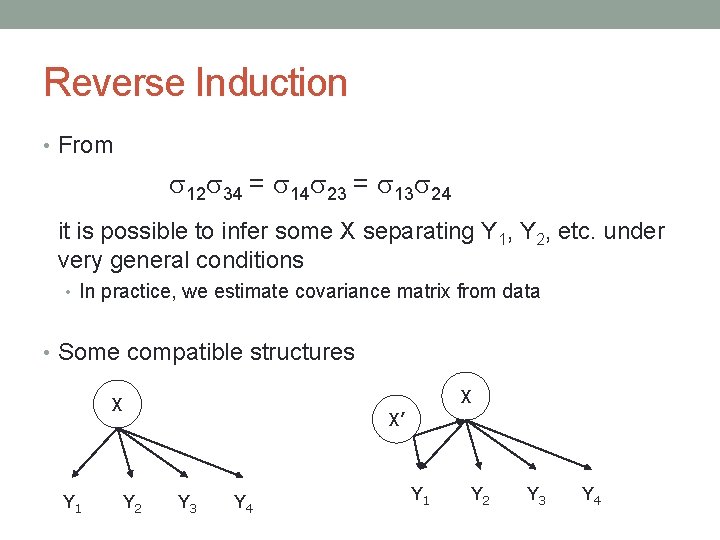

Reverse Induction • From $\sigma_{12}\sigma_{34} = \sigma_{14}\sigma_{23} = \sigma_{13}\sigma_{24}$ it is possible to infer some $X$ separating $Y_1$, $Y_2$, etc., under very general conditions • In practice, we estimate the covariance matrix from data • (Figure: some compatible structures over $Y_1, \dots, Y_4$, involving latent variables $X$ and $X'$)
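With an estimated covariance matrix the tetrad differences are small but never exactly zero, which is where statistical error enters. A minimal sketch, assuming simulated one-factor data with hypothetical loadings and unit error variances; a real procedure would need a proper statistical test for vanishing tetrads rather than eyeballing these numbers.

```python
import numpy as np

def tetrad_differences(S):
    """The two independent tetrad differences for variables 0..3 of covariance S."""
    t12_34 = S[0, 1] * S[2, 3]
    t14_23 = S[0, 3] * S[1, 2]
    t13_24 = S[0, 2] * S[1, 3]
    return t12_34 - t14_23, t14_23 - t13_24

rng = np.random.default_rng(1)
n = 200_000
lam = np.array([0.9, 1.4, -0.6, 2.0])          # hypothetical loadings
x = rng.normal(0.0, 1.0, n)                     # the unobserved common cause X
Y = np.outer(x, lam) + rng.normal(0.0, 1.0, (n, 4))

S = np.cov(Y, rowvar=False)                     # estimated covariance matrix
d1, d2 = tetrad_differences(S)
print(d1, d2)   # close to zero, but not exactly: sampling error
```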

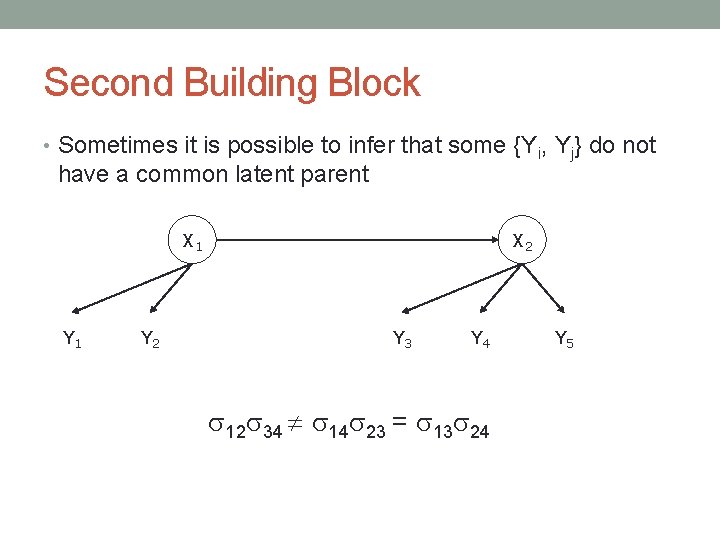

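Second Building Block • Sometimes it is possible to infer that some $\{Y_i, Y_j\}$ do not have a common latent parent • Example constraint pattern: $\sigma_{12}\sigma_{34} \neq \sigma_{14}\sigma_{23} = \sigma_{13}\sigma_{24}$ (Figure: latent variables $X_1$ and $X_2$ with observed children among $Y_1, \dots, Y_5$)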
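The inequality pattern can be reproduced with a hypothetical two-factor model, $X_1 \to Y_1, Y_2$ and $X_2 \to Y_3, Y_4$, where the latents are correlated but not perfectly. The sketch below (all values assumed) shows that the two "cross" tetrads still agree while $\sigma_{12}\sigma_{34}$ differs, so no single latent can separate all four indicators.

```python
import numpy as np

# Hypothetical two-factor model: X1 -> Y1, Y2 and X2 -> Y3, Y4, Corr(X1, X2) = 0.6.
lam = np.array([1.0, 0.8, 1.2, 0.7])
parent = [0, 0, 1, 1]                          # latent parent index of each Y
cov_x = np.array([[1.0, 0.6], [0.6, 1.0]])
err_var = np.array([0.4, 0.5, 0.3, 0.6])

# Loading matrix L: Y = L X + eps, so Cov(Y) = L Cov(X) L^T + diag(err_var).
L = np.zeros((4, 2))
L[np.arange(4), parent] = lam
Sigma = L @ cov_x @ L.T + np.diag(err_var)

t12_34 = Sigma[0, 1] * Sigma[2, 3]
t14_23 = Sigma[0, 3] * Sigma[1, 2]
t13_24 = Sigma[0, 2] * Sigma[1, 3]
# sigma12*sigma34 != sigma14*sigma23 = sigma13*sigma24 (unless |Corr(X1, X2)| = 1)
print(t12_34, t14_23, t13_24)
```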

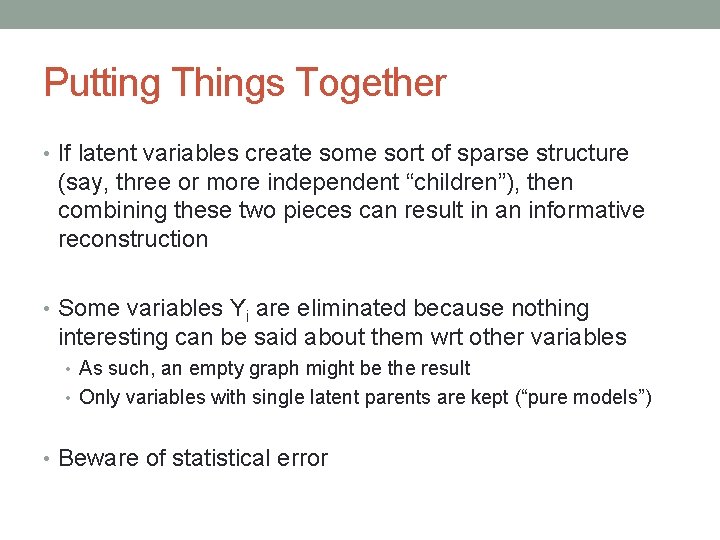

Putting Things Together • If latent variables create some sort of sparse structure (say, three or more independent “children” each), then combining these two pieces can result in an informative reconstruction • Some variables Yi are eliminated because nothing interesting can be said about them with respect to the other variables • As such, an empty graph might be the result • Only variables with single latent parents are kept (“pure models”) • Beware of statistical error

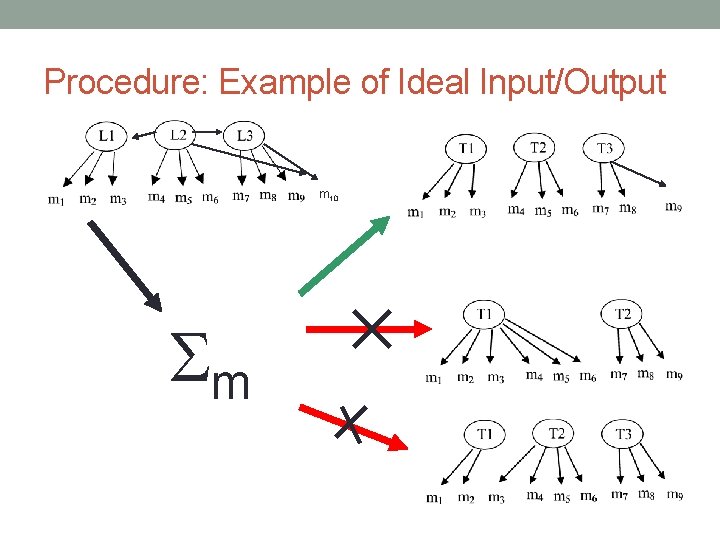

Procedure: Example of Ideal Input/Output

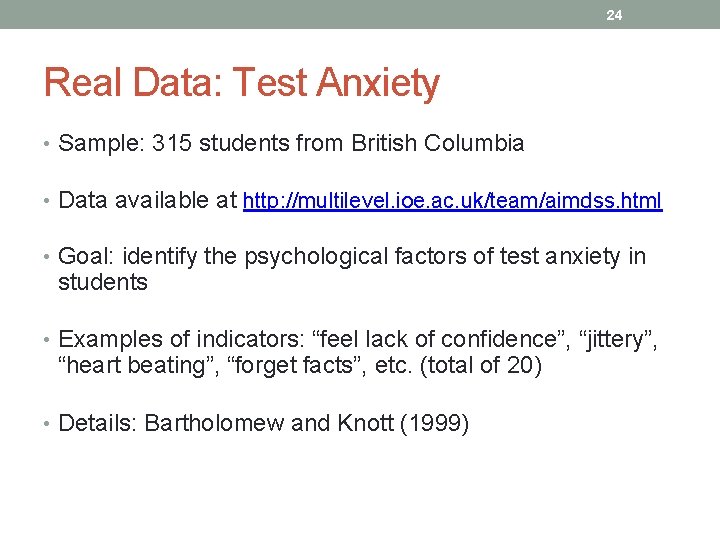

Real Data: Test Anxiety • Sample: 315 students from British Columbia • Data available at http://multilevel.ioe.ac.uk/team/aimdss.html • Goal: identify the psychological factors of test anxiety in students • Examples of indicators: “feel lack of confidence”, “jittery”, “heart beating”, “forget facts”, etc. (total of 20) • Details: Bartholomew and Knott (1999)

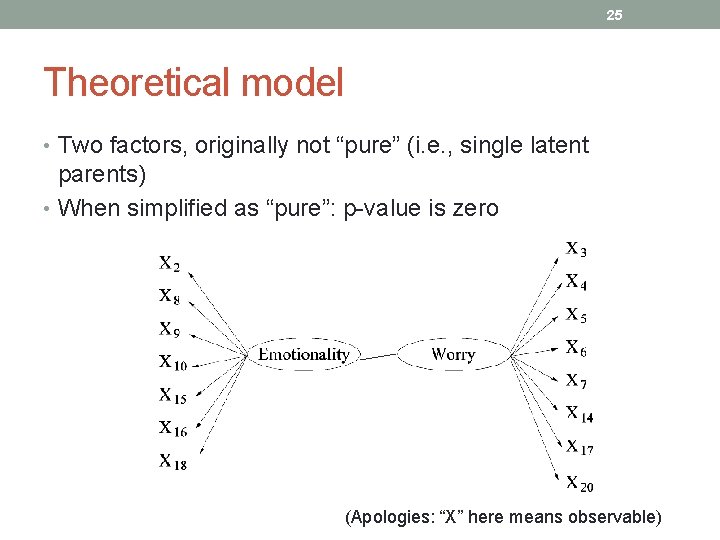

Theoretical model • Two factors, originally not “pure” (“pure” meaning that each indicator has a single latent parent) • When simplified into a “pure” model: p-value is zero • (Apologies: “X” here means observable)

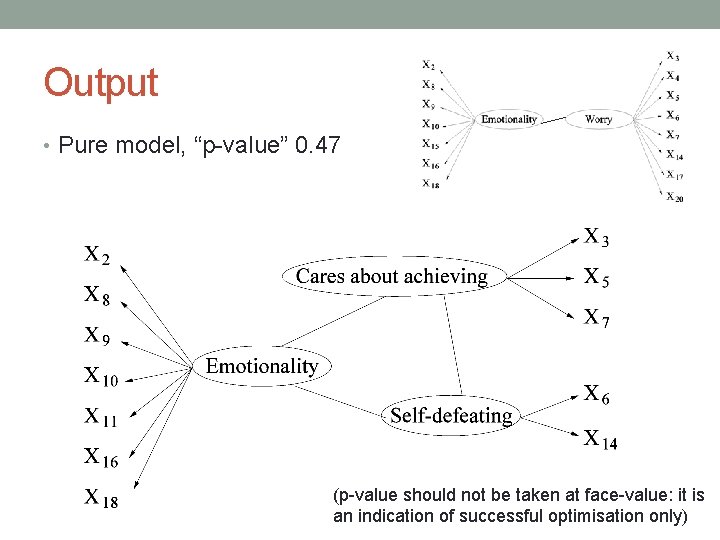

Output • Pure model, “p-value” 0.47 (this p-value should not be taken at face value: it is only an indication of successful optimisation)

Problem II

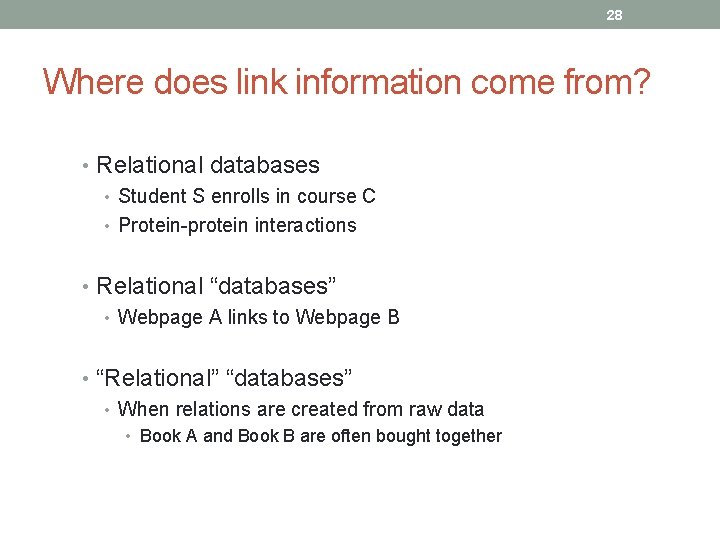

Where does link information come from? • Relational databases • Student S enrolls in course C • Protein-protein interactions • Relational “databases” • Webpage A links to Webpage B • “Relational” “databases” • When relations are created from raw data • Book A and Book B are often bought together

Where does the link information go? • The link analysis problems • Predicting links • Using links as data • Relations as data • “Linked webpages are likely to present similar content” • “Political books that are bought together often have the same political inclination”

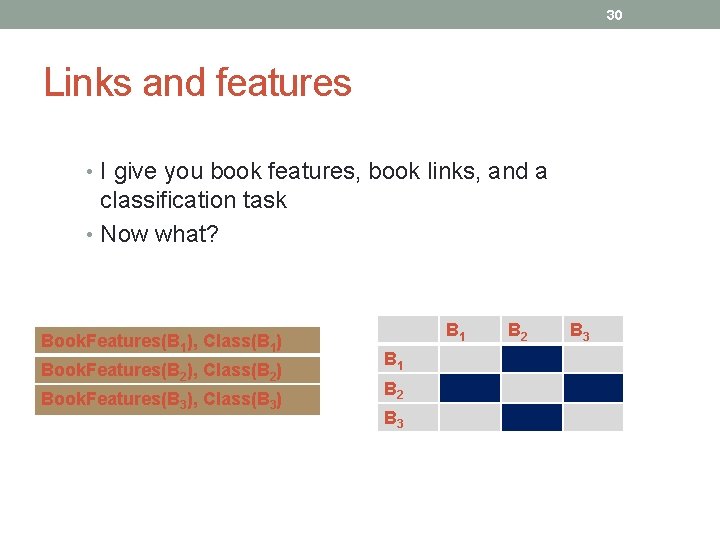

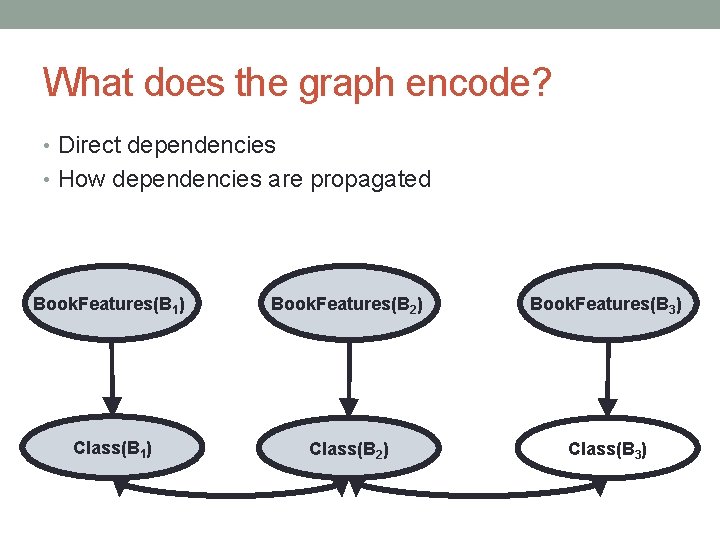

Links and features • I give you book features, book links, and a classification task • Now what? (Figure: books B1, B2, B3, each with BookFeatures(Bi) and Class(Bi), connected by links)

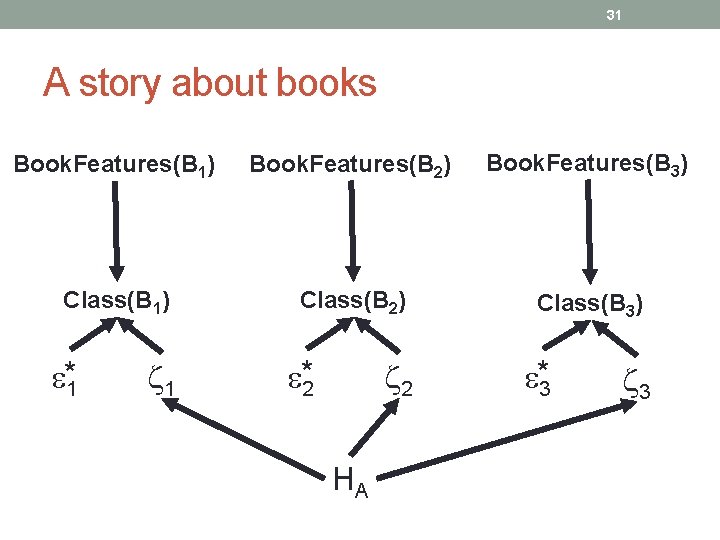

A story about books (Figure: BookFeatures(B1), BookFeatures(B2), BookFeatures(B3) and Class(B1), Class(B2), Class(B3), with per-book error terms and a hidden common cause HA)

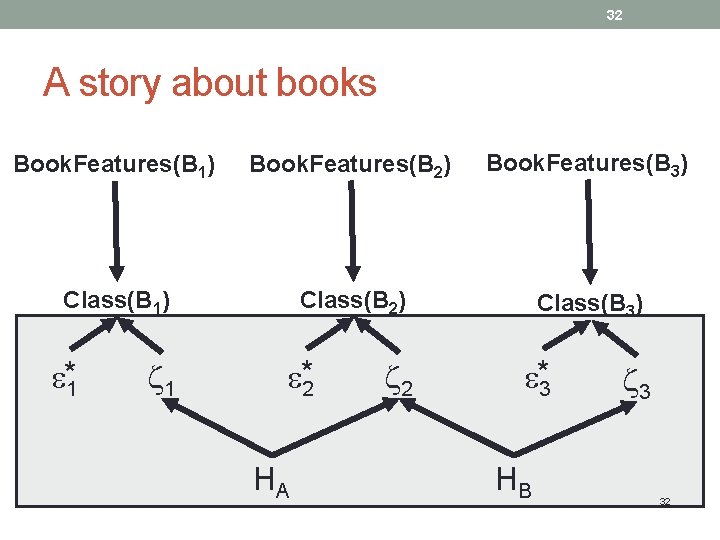

A story about books (Figure: the same books, now with two hidden common causes, HA and HB)

A model for integrating link data • Hypothesis: • The links between my books are useful indicators of such hidden common causes • The link matrix serves as a surrogate for the unknown structure

Example: Political Books database • A network of books about recent US politics sold by the online bookseller Amazon.com • Valdis Krebs, http://www.orgnet.com/ • Relations: frequent co-purchasing of books by the same buyers • Political inclination factors as the hidden common causes

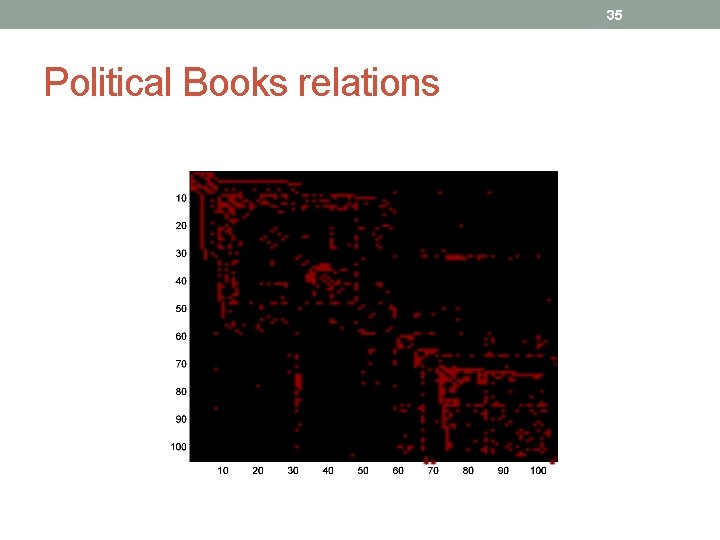

Political Books relations

Political Books database • Features: • I collected the Amazon.com front page for each of the books • Bag-of-words • (tf-idf features, normalized to unit length) • Task: • Binary classification: “liberal” or “not-liberal” books • 43 liberal books out of 105
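For readers unfamiliar with the feature construction, here is a minimal sketch of bag-of-words tf-idf vectors normalized to unit Euclidean length. The weighting used (raw term count times $\log(N / \mathrm{df})$) is an assumption, since several tf-idf variants exist and the exact one is not stated; the example documents are hypothetical stand-ins for book front pages.

```python
import math
from collections import Counter

def tfidf_unit_vectors(docs):
    """Bag-of-words tf-idf vectors, each normalized to unit Euclidean length."""
    tokenised = [doc.lower().split() for doc in docs]
    vocab = sorted({w for toks in tokenised for w in toks})
    n_docs = len(docs)
    # Document frequency: in how many documents each word appears.
    df = Counter(w for toks in tokenised for w in set(toks))
    idf = {w: math.log(n_docs / df[w]) for w in vocab}
    vectors = []
    for toks in tokenised:
        tf = Counter(toks)
        vec = [tf[w] * idf[w] for w in vocab]
        norm = math.sqrt(sum(x * x for x in vec))
        # Leave all-zero vectors as they are to avoid dividing by zero.
        vectors.append([x / norm for x in vec] if norm > 0 else vec)
    return vocab, vectors

docs = [  # hypothetical front-page snippets
    "the liberal agenda",
    "the conservative agenda",
    "the war on terror",
]
vocab, vectors = tfidf_unit_vectors(docs)
for row in vectors:
    print([round(x, 3) for x in row])
```

Words that occur in every document (here "the") get zero weight, and normalization keeps long and short pages on the same scale.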

What does the graph encode? • Direct dependencies • How dependencies are propagated (Figure: graph over BookFeatures(B1), BookFeatures(B2), BookFeatures(B3) and Class(B1), Class(B2), Class(B3))

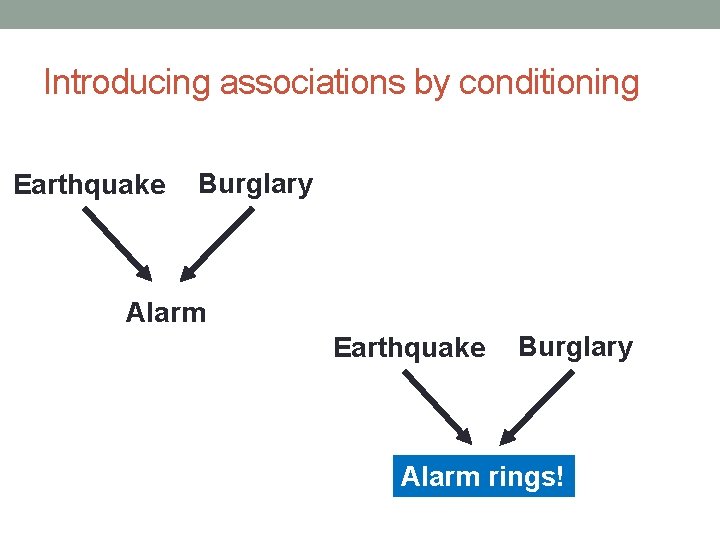

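Introducing associations by conditioning (Figure: Earthquake → Alarm rings! ← Burglary) • Earthquake and Burglary are marginally independent, but become dependent once we condition on the alarm ringing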
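The "explaining away" effect can be computed by brute-force enumeration. This sketch assumes hypothetical probabilities for the three-node network; it shows that, once the alarm rings, learning that an earthquake happened lowers the probability of a burglary, and learning that none happened raises it.

```python
from itertools import product

# Hypothetical probabilities for Earthquake -> Alarm <- Burglary.
p_e, p_b = 0.01, 0.02
p_alarm = {  # P(alarm rings | earthquake, burglary)
    (0, 0): 0.001, (0, 1): 0.9, (1, 0): 0.3, (1, 1): 0.95,
}

def joint(e, b, a):
    """P(E = e, B = b, A = a) from the factorization P(e) P(b) P(a | e, b)."""
    pe = p_e if e else 1 - p_e
    pb = p_b if b else 1 - p_b
    pa = p_alarm[(e, b)] if a else 1 - p_alarm[(e, b)]
    return pe * pb * pa

def prob_burglary(alarm, earthquake=None):
    """P(B = 1 | A = alarm [, E = earthquake]) by enumerating (e, b)."""
    num = den = 0.0
    for e, b in product([0, 1], repeat=2):
        if earthquake is not None and e != earthquake:
            continue
        p = joint(e, b, alarm)
        den += p
        num += p * b
    return num / den

print(prob_burglary(alarm=1))                 # burglary is quite likely
print(prob_burglary(alarm=1, earthquake=1))   # the earthquake "explains away" the alarm
print(prob_burglary(alarm=1, earthquake=0))   # no earthquake: burglary even more likely
```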

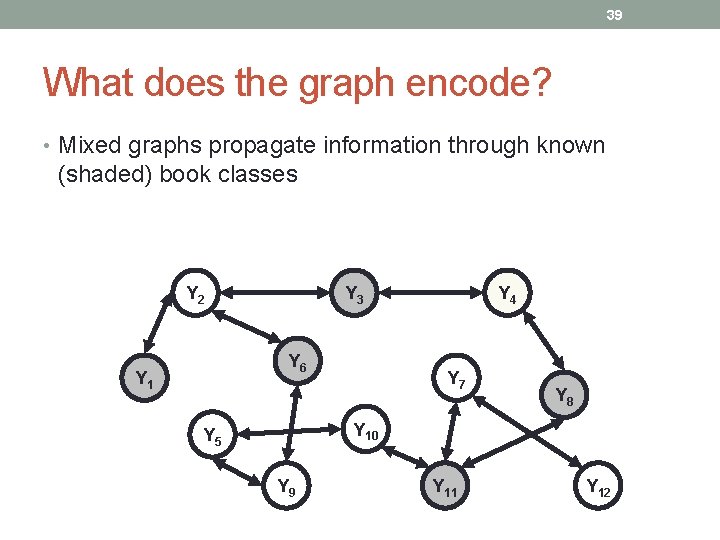

What does the graph encode? • Mixed graphs propagate information through known (shaded) book classes (Figure: mixed graph over $Y_1, \dots, Y_{12}$)

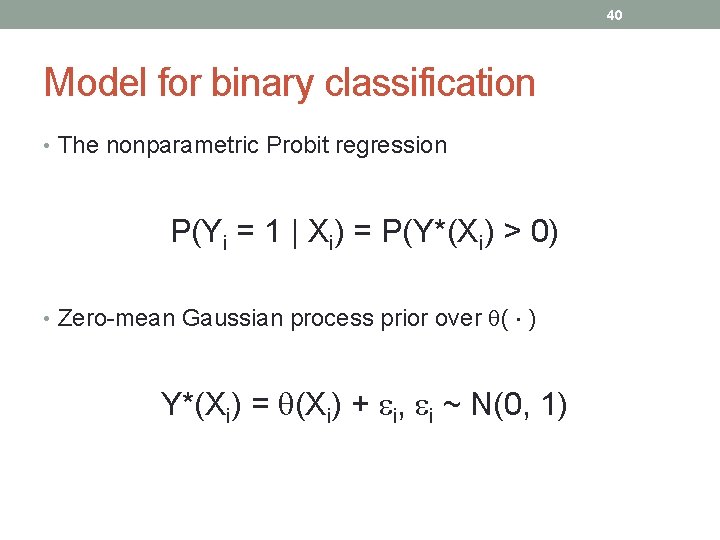

Model for binary classification • Nonparametric probit regression: $P(Y_i = 1 \mid X_i) = P(Y^\star(X_i) > 0)$, with $Y^\star(X_i) = f(X_i) + \epsilon_i$, $\epsilon_i \sim N(0, 1)$ • Zero-mean Gaussian process prior over $f(\cdot)$
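Because $\epsilon_i \sim N(0, 1)$, the latent-threshold form gives $P(Y_i = 1 \mid f) = \Phi(f(X_i))$, the standard normal CDF. The sketch below draws one function from a GP prior (the squared-exponential kernel and the inputs are assumptions for illustration) and checks the probit identity against a Monte Carlo estimate of $P(f(X_i) + \epsilon_i > 0)$.

```python
import math
import numpy as np

def std_normal_cdf(z):
    """Phi(z), the standard normal CDF."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

rng = np.random.default_rng(0)

# Hypothetical inputs and squared-exponential kernel for the zero-mean GP prior over f.
X = np.linspace(-2.0, 2.0, 5)
K = np.exp(-0.5 * (X[:, None] - X[None, :]) ** 2) + 1e-9 * np.eye(len(X))
f = rng.multivariate_normal(np.zeros(len(X)), K)   # one draw of f at the inputs

# Y*(X_i) = f(X_i) + eps_i with eps_i ~ N(0, 1), so P(Y_i = 1 | f) = Phi(f(X_i)).
exact = np.array([std_normal_cdf(fi) for fi in f])
eps = rng.normal(0.0, 1.0, (100_000, len(X)))
monte_carlo = np.mean(f + eps > 0, axis=0)
print(np.round(exact, 3), np.round(monte_carlo, 3))
```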

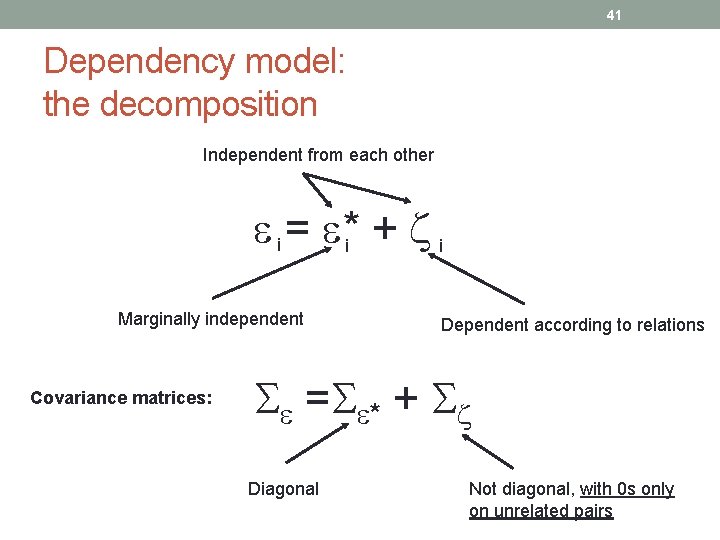

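Dependency model: the decomposition • $\epsilon_i = \epsilon^\star_i + \xi_i$, with $\epsilon^\star$ and $\xi$ independent of each other • The $\epsilon^\star_i$ are marginally independent; the $\xi_i$ are dependent according to the relations • Covariance matrices: $\Sigma_\epsilon = \Sigma^\star + \Sigma_\xi$, where $\Sigma^\star$ is diagonal and $\Sigma_\xi$ is not diagonal, with 0s on the unrelated pairs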

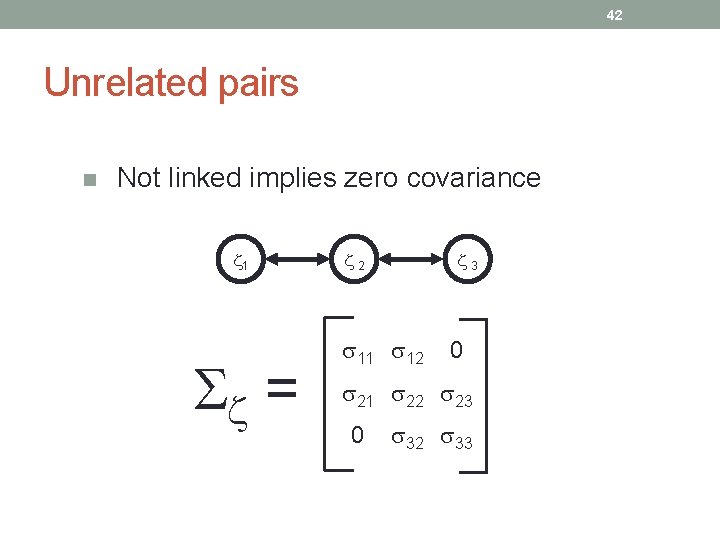

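Unrelated pairs • Not linked implies zero covariance, e.g., for relations $1 \leftrightarrow 2 \leftrightarrow 3$: $\Sigma_\xi = \begin{pmatrix} \sigma_{11} & \sigma_{12} & 0 \\ \sigma_{21} & \sigma_{22} & \sigma_{23} \\ 0 & \sigma_{32} & \sigma_{33} \end{pmatrix}$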

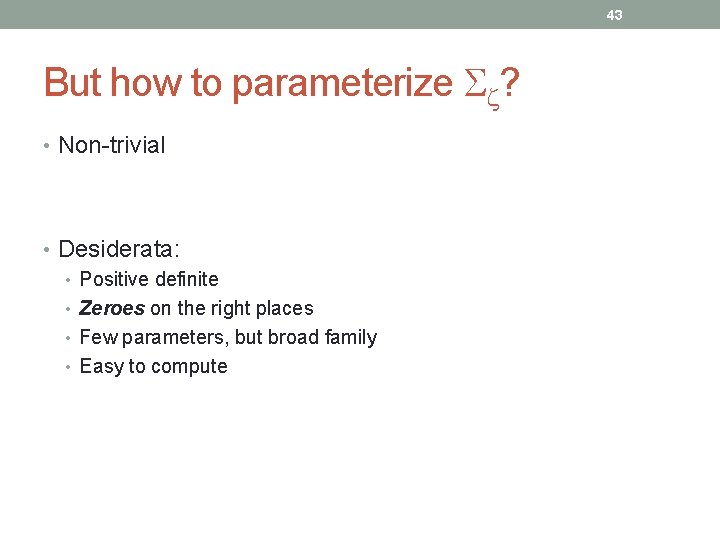

But how to parameterize $\Sigma_\xi$? • Non-trivial • Desiderata: • Positive definite • Zeros in the right places • Few parameters, but a broad family • Easy to compute

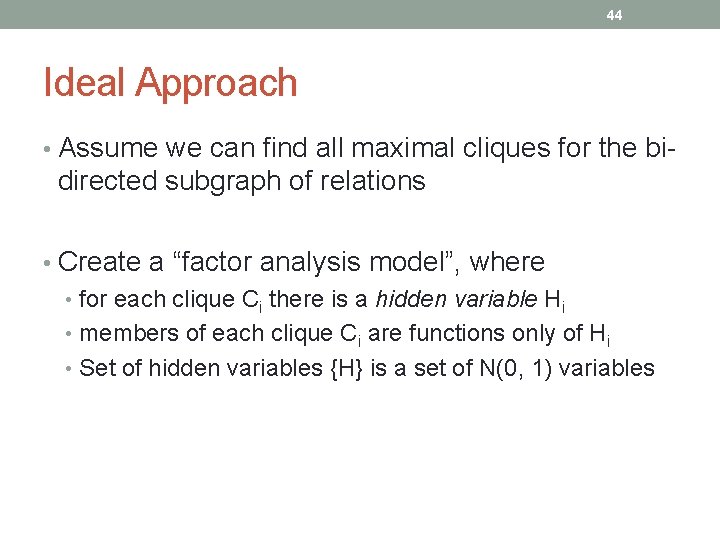

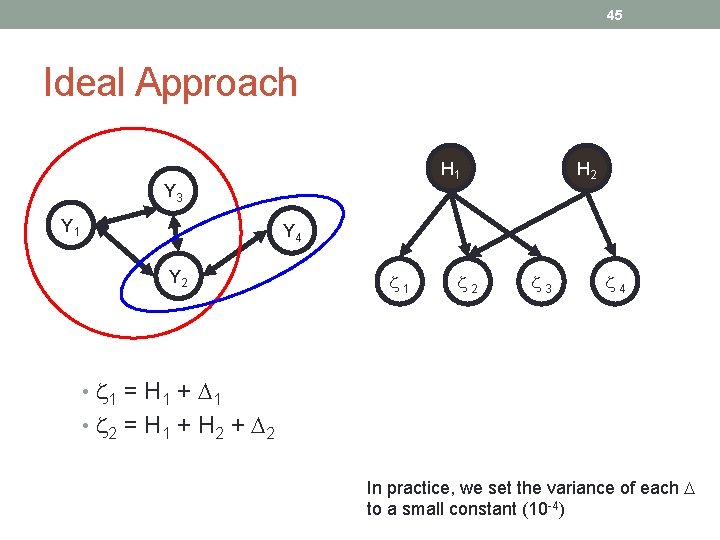

Ideal Approach • Assume we can find all maximal cliques of the bi-directed subgraph of relations • Create a “factor analysis model”, where • for each clique Ci there is a hidden variable Hi • the members of each clique Ci are functions of Hi, and each member depends only on the hidden variables of the cliques it belongs to • The set of hidden variables {H} is a set of N(0, 1) variables

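Ideal Approach (Figure: hidden variables $H_1$, $H_2$ over the error terms $\xi_1, \dots, \xi_4$ of $Y_1, \dots, Y_4$) • $\xi_1 = H_1 + \delta_1$ • $\xi_2 = H_1 + H_2 + \delta_2$ • In practice, we set the variance of each $\delta_i$ to a small constant ($10^{-4}$)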

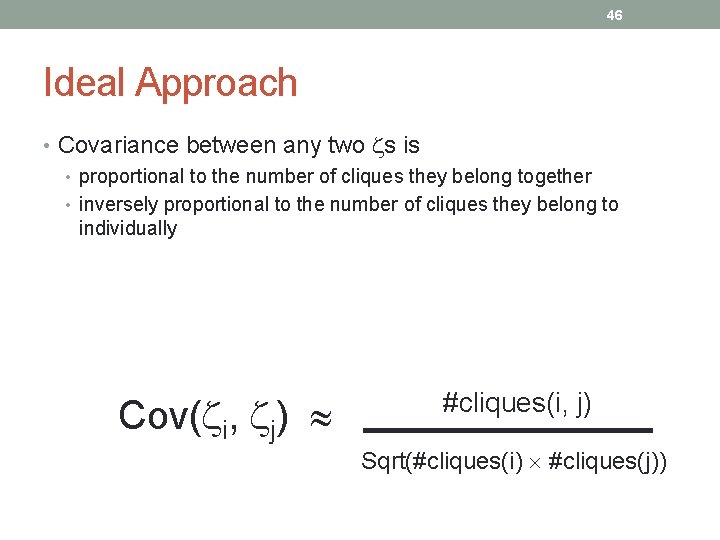

Ideal Approach • The covariance between any two $\xi$s is • proportional to the number of cliques they belong to together • inversely proportional to the square root of the product of the numbers of cliques each belongs to individually: $\mathrm{Cov}(\xi_i, \xi_j) \propto \dfrac{\#\text{cliques}(i, j)}{\sqrt{\#\text{cliques}(i)\,\#\text{cliques}(j)}}$
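A small sketch of the clique construction, assuming independent $H_c \sim N(0, 1)$ and a small $\delta$ variance of $10^{-4}$ as stated above. The equations $\xi_2 = H_1 + H_2 + \delta_2$ add the $H$s without scaling, in which case it is the correlation that follows the formula; this sketch instead assumes each $\xi_i$ is scaled by $1/\sqrt{\#\text{cliques}(i)}$ so that the covariance itself matches. The helper name `clique_covariance` is hypothetical. The result is positive definite and has zeros exactly on unlinked pairs.

```python
import numpy as np

def clique_covariance(n, cliques, delta_var=1e-4):
    """Covariance of xi = A H + delta, with one independent H_c ~ N(0, 1) per clique.

    Assumption: xi_i sums the H_c of the cliques containing i, scaled by
    1/sqrt(#cliques(i)), so Cov(xi_i, xi_j) = #cliques(i, j) / sqrt(#cliques(i) #cliques(j)).
    """
    A = np.zeros((n, len(cliques)))
    for c, members in enumerate(cliques):
        A[list(members), c] = 1.0
    counts = A.sum(axis=1)                              # #cliques(i)
    A = A / np.sqrt(np.maximum(counts, 1.0))[:, None]   # avoid dividing by zero
    return A @ A.T + delta_var * np.eye(n)

# Hypothetical chain of relations 1 - 2 - 3 (0-based 0 - 1 - 2): cliques {0, 1}, {1, 2}.
Sigma_xi = clique_covariance(3, [{0, 1}, {1, 2}])
print(np.round(Sigma_xi, 4))
```

Positive definiteness comes for free from the $A A^\top + \text{diagonal}$ form, which is one reason a factor-analysis construction meets the desiderata listed earlier.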

In reality • Finding cliques is not tractable in general • In practice, we introduce some approximations to this procedure

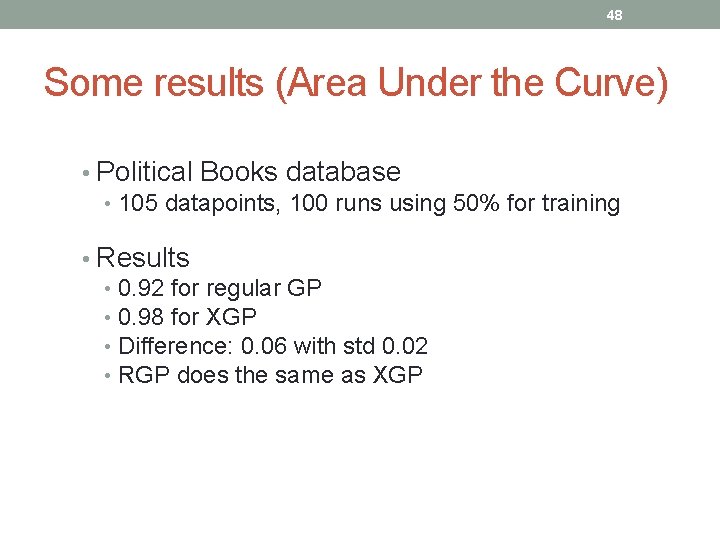

Some results (Area Under the Curve) • Political Books database • 105 datapoints, 100 runs using 50% for training • Results: • 0.92 for regular GP • 0.98 for XGP • Difference: 0.06 with std 0.02 • RGP performs the same as XGP

Problem III

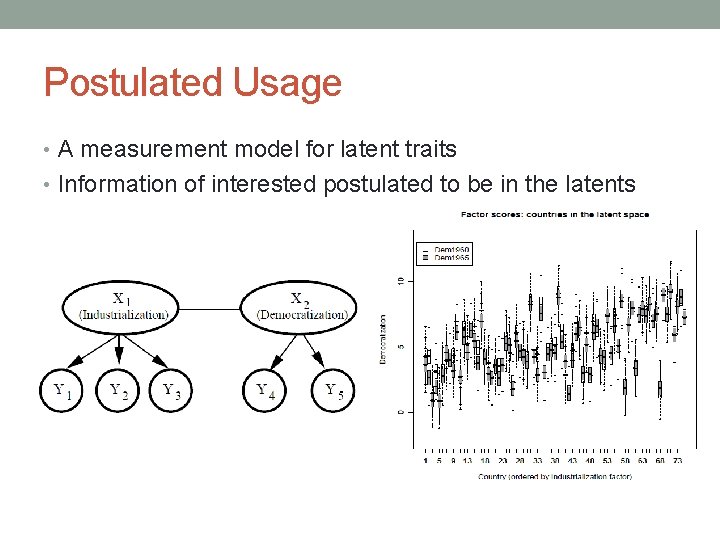

Postulated Usage • A measurement model for latent traits • The information of interest is postulated to be in the latent variables

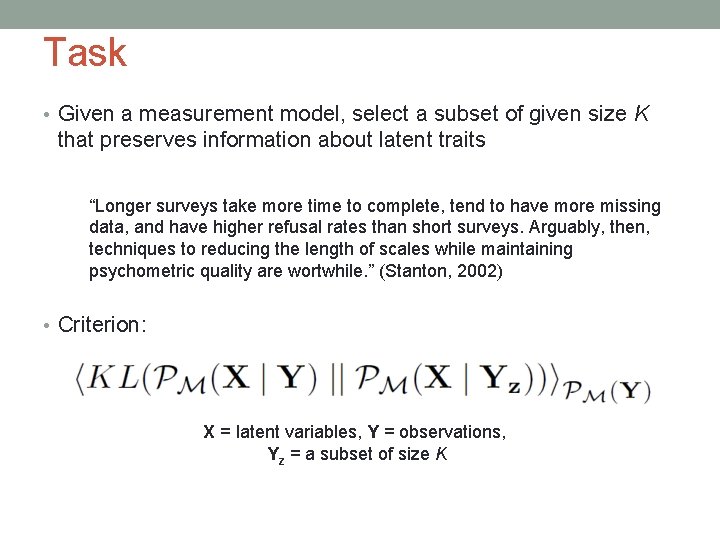

Task • Given a measurement model, select a subset of given size K that preserves information about the latent traits • “Longer surveys take more time to complete, tend to have more missing data, and have higher refusal rates than short surveys. Arguably, then, techniques to reducing the length of scales while maintaining psychometric quality are worthwhile.” (Stanton, 2002) • Criterion: X = latent variables, Y = observations, Yz = a subset of size K

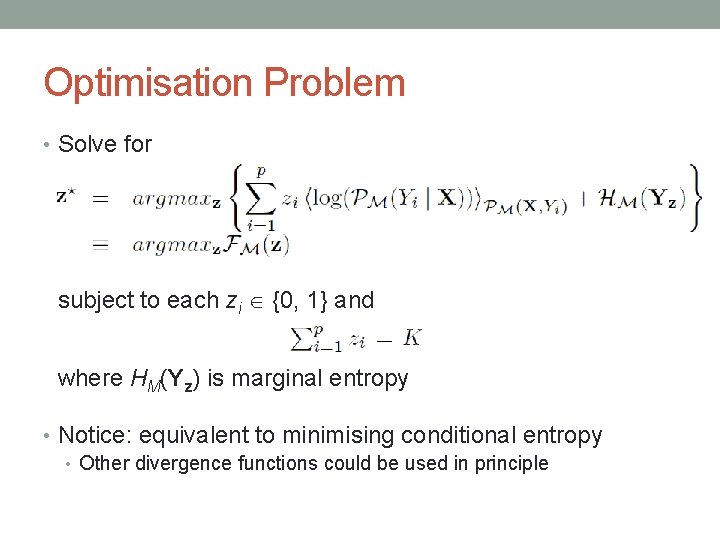

Optimisation Problem • Solve for $z = (z_1, \dots, z_n)$ subject to each $z_i \in \{0, 1\}$ and $\sum_i z_i = K$, where $H_M(Y_z)$ is the marginal entropy of $Y_z$ • Notice: equivalent to minimising the conditional entropy $H(X \mid Y_z)$ • Other divergence functions could be used in principle

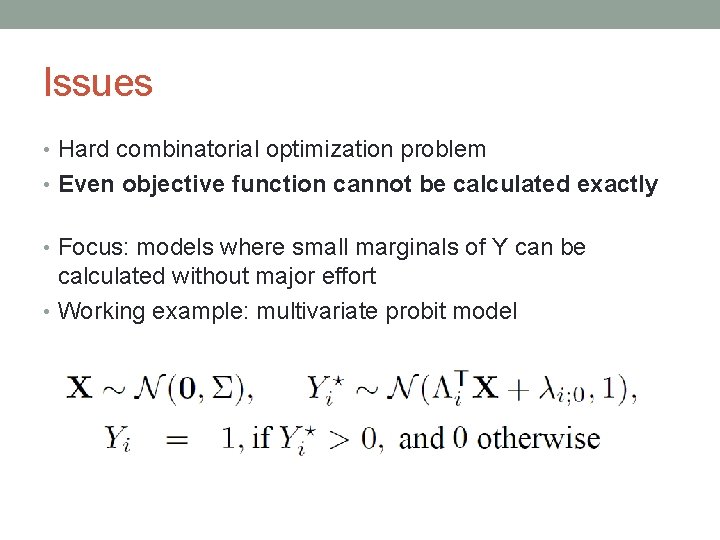

Issues • Hard combinatorial optimization problem • Even the objective function cannot be calculated exactly • Focus: models where small marginals of Y can be calculated without major effort • Working example: multivariate probit model

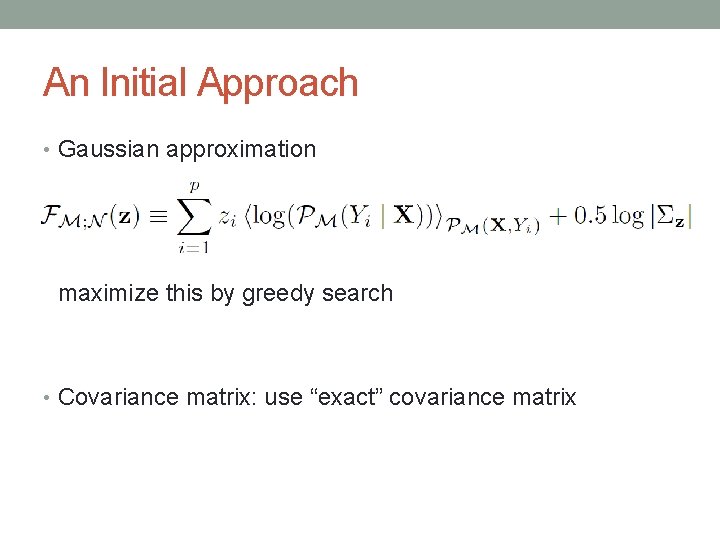

An Initial Approach • Use a Gaussian approximation of the marginal entropy, and maximize the resulting objective by greedy search • Covariance matrix: use the “exact” covariance matrix
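A greedy search of this kind might look like the sketch below. The exact objective did not survive extraction, so this assumes it is $H_M(Y_z) - \sum_{i \in z} H(Y_i \mid X)$ (the mutual information between $X$ and $Y_z$ when items are conditionally independent given $X$), with $H_M$ replaced by the Gaussian entropy $\tfrac12 \log\det(2\pi e\,\Sigma_{zz})$. The function names and the per-item conditional entropies are hypothetical inputs.

```python
import numpy as np

def gaussian_entropy(S):
    """Entropy of a Gaussian with covariance S: 0.5 * log det(2 pi e S)."""
    _, logdet = np.linalg.slogdet(2.0 * np.pi * np.e * S)
    return 0.5 * logdet

def greedy_select(Sigma, cond_entropy, K):
    """Greedily pick K items maximising H_gauss(Y_z) - sum_{i in z} H(Y_i | X).

    Sigma: covariance matrix of Y under the model (the "exact" covariance).
    cond_entropy: numpy array of assumed per-item conditional entropies H(Y_i | X).
    """
    selected = []
    for _ in range(K):
        best, best_score = None, -np.inf
        for i in range(Sigma.shape[0]):
            if i in selected:
                continue
            z = selected + [i]
            score = gaussian_entropy(Sigma[np.ix_(z, z)]) - cond_entropy[z].sum()
            if score > best_score:
                best, best_score = i, score
        selected.append(best)
    return selected

# Toy usage: independent items with equal conditional entropies, so the
# greedy search simply prefers the items with the largest variances.
print(greedy_select(np.diag([1.0, 4.0, 2.0, 3.0]), np.zeros(4), 2))   # [1, 3]
```

Each greedy step costs one small determinant per candidate, which is what makes the Gaussian approximation attractive compared with evaluating probit marginals over all subsets.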

Alternatives • More complex approximations • But how far can we intertwine the approximation with the optimisation procedure? • i.e., use a single global approximation that can be quickly calculated for any choice of z

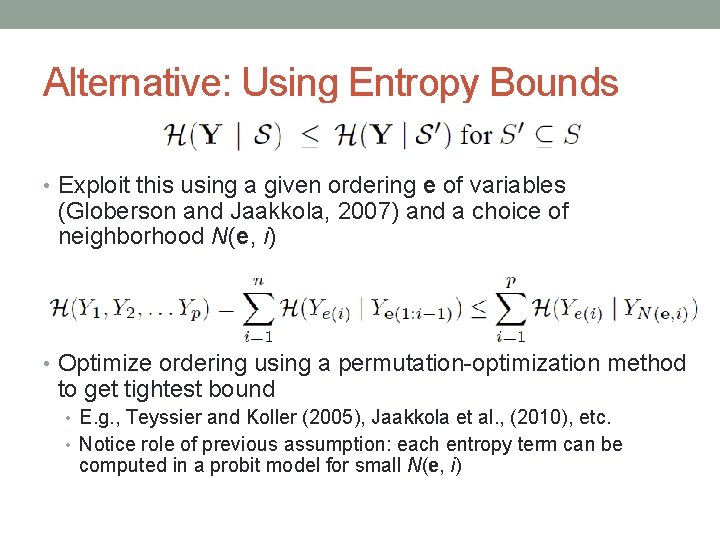

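Alternative: Using Entropy Bounds • By the chain rule, $H(Y) = \sum_i H(Y_{e(i)} \mid Y_{e(1)}, \dots, Y_{e(i-1)})$ for any ordering $e$; since conditioning on fewer variables cannot decrease entropy, $H(Y) \le \sum_i H(Y_{e(i)} \mid Y_{N(e, i)})$ whenever each $N(e, i)$ is a subset of the preceding variables • Exploit this using a given ordering e of variables (Globerson and Jaakkola, 2007) and a choice of neighborhood N(e, i) • Optimize the ordering using a permutation-optimization method to get the tightest bound • E.g., Teyssier and Koller (2005), Jaakkola et al. (2010), etc. • Notice the role of the previous assumption: each entropy term can be computed in a probit model for small N(e, i)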
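The bound can be checked on a toy discrete distribution. This sketch uses a random joint table over four binary variables (hypothetical, standing in for the probit model) and hypothetical neighbourhoods of at most one predecessor each. Conditioning on all predecessors recovers the exact entropy through the chain rule; conditioning only on $N(e, i)$ gives an upper bound.

```python
import numpy as np

def entropy(p):
    """Shannon entropy (in nats) of an array of probabilities."""
    p = np.asarray(p, dtype=float).ravel()
    p = p[p > 0]
    return float(-np.sum(p * np.log(p)))

def cond_entropy(joint, target, given):
    """H(Y_target | Y_given) = H(Y_target, Y_given) - H(Y_given), from a joint table."""
    axes = range(joint.ndim)
    both = set(given) | {target}
    h_both = entropy(joint.sum(axis=tuple(a for a in axes if a not in both)))
    h_given = entropy(joint.sum(axis=tuple(a for a in axes if a not in given)))
    return h_both - h_given

rng = np.random.default_rng(0)
joint = rng.random((2, 2, 2, 2))
joint /= joint.sum()                       # hypothetical joint over binary Y1..Y4

order = [0, 1, 2, 3]                       # ordering e
neigh = {0: [], 1: [0], 2: [1], 3: [1]}    # N(e, i): subsets of the predecessors

exact = entropy(joint)
bound = sum(cond_entropy(joint, v, neigh[v]) for v in order)
full = sum(cond_entropy(joint, v, order[:k]) for k, v in enumerate(order))
print(exact, bound, full)   # full equals exact (chain rule); bound >= exact
```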

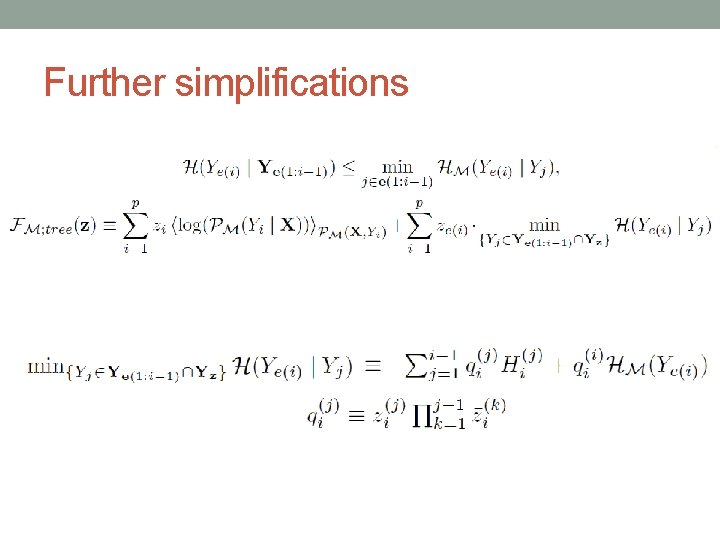

Further simplifications

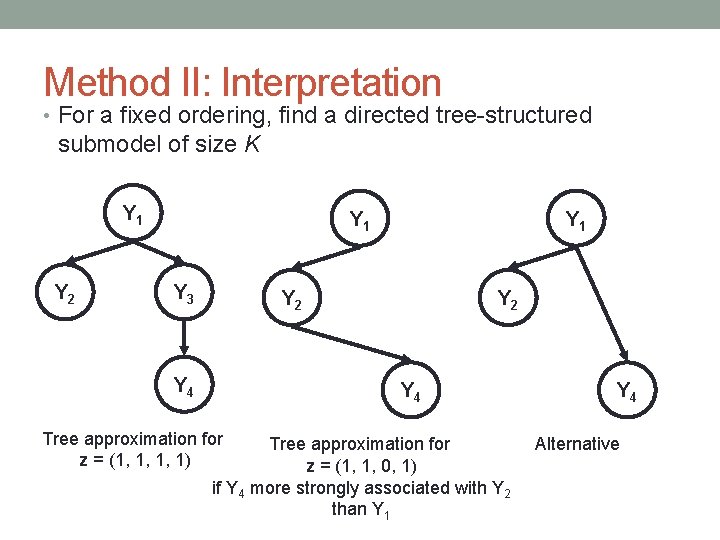

Method II: Interpretation • For a fixed ordering, find a directed tree-structured submodel of size K (Figure: “Tree approximation for z = (1, 1, 1, 1)” over $Y_1, \dots, Y_4$; “Tree approximation for z = (1, 1, 0, 1)”, where $Y_4$ takes $Y_2$ as its parent if $Y_4$ is more strongly associated with $Y_2$ than with $Y_1$, and $Y_1$ otherwise, labelled “Alternative”)
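To illustrate the tree interpretation, here is a Gaussian stand-in (an assumption; the working example in the talk is a probit model). For a fixed ordering and selection $z$, each selected variable takes as its single parent the preceding selected variable with the largest absolute correlation, which for unit-variance Gaussians is the one with the largest mutual information. The correlation matrix and function names are hypothetical; with $z = (1, 1, 0, 1)$, $Y_4$ attaches to $Y_2$ because it is more strongly correlated with it than with $Y_1$.

```python
import numpy as np

def tree_parents(corr, order, z):
    """For a fixed ordering, give each selected variable at most one parent.

    The parent is the preceding *selected* variable with the largest |correlation|;
    the first selected variable in the ordering is a root (parent None).
    """
    parents, chosen = {}, []
    for v in order:
        if not z[v]:
            continue
        parents[v] = max(chosen, key=lambda u: abs(corr[v, u])) if chosen else None
        chosen.append(v)
    return parents

def gaussian_tree_bound(corr, parents):
    """Upper bound sum_i H(Y_i | Y_parent(i)) for unit-variance Gaussians, in nats."""
    h = 0.0
    for v, u in parents.items():
        rho2 = corr[v, u] ** 2 if u is not None else 0.0
        h += 0.5 * np.log(2 * np.pi * np.e * (1 - rho2))
    return h

# Hypothetical correlations: Y4 (index 3) is closer to Y2 (index 1) than to Y1 (index 0).
corr = np.array([[1.0, 0.5, 0.4, 0.3],
                 [0.5, 1.0, 0.2, 0.7],
                 [0.4, 0.2, 1.0, 0.1],
                 [0.3, 0.7, 0.1, 1.0]])
parents = tree_parents(corr, order=[0, 1, 2, 3], z=[1, 1, 0, 1])
print(parents)                              # {0: None, 1: 0, 3: 1}
print(gaussian_tree_bound(corr, parents))
```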

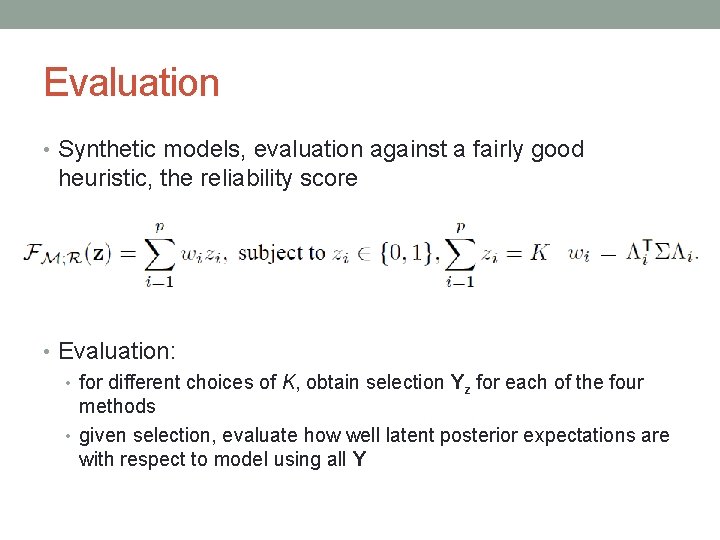

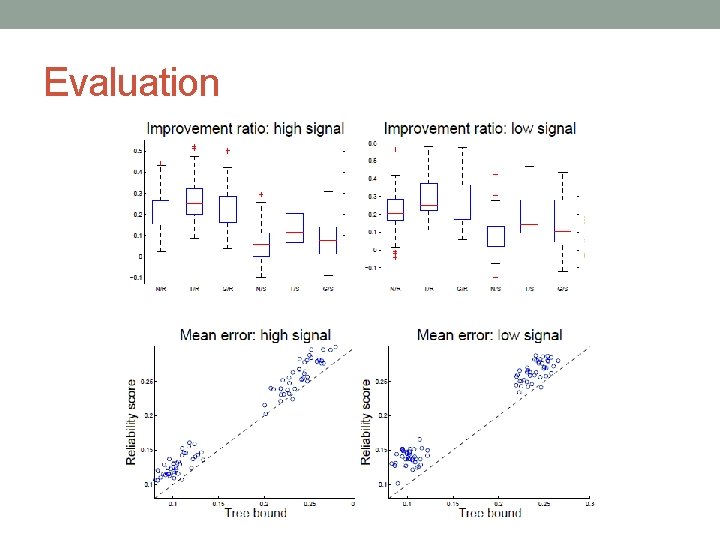

Evaluation • Synthetic models, evaluated against a fairly good heuristic, the reliability score • Evaluation: • for different choices of K, obtain a selection Yz from each of the four methods • given the selection, evaluate how close the latent posterior expectations are to those of the model that uses all of Y

Evaluation

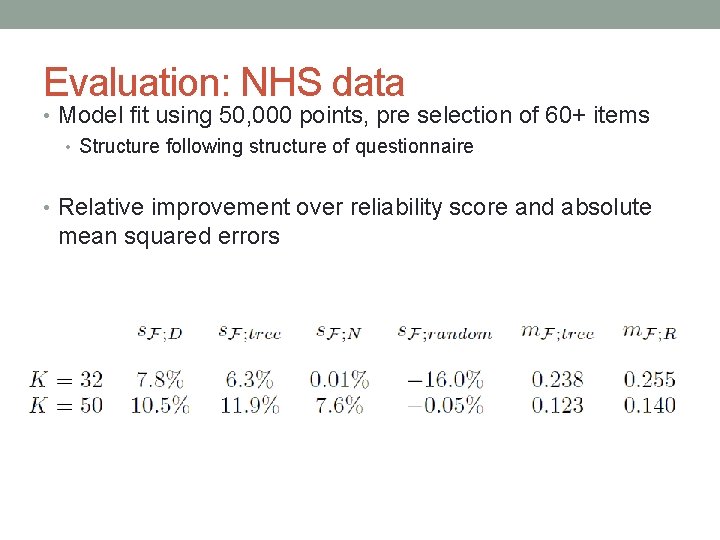

Evaluation: NHS data • Model fit using 50,000 points, after a preselection of 60+ items • Model structure follows the structure of the questionnaire • Relative improvement over the reliability score, and absolute mean squared errors

Conclusion

Take-home messages • Postulating latent structure has implications of all sorts • A framework for smoothing data and assessing causal relationships • A source of dependency across data points • A way of quantifying trade-offs between information content and the cost of collecting data • Many computational, information-theoretical, and statistical problems arise from there, providing several non-trivial research questions • http://www.csml.ucl.ac.uk/

Thank you • Silva, R. (2011). “Thinning measurement models and questionnaire design”. Advances in Neural Information Processing Systems 24, NIPS 2011. • Silva, R., Chu, W. and Ghahramani, Z. (2007). “Hidden common cause relations in relational learning”. Advances in Neural Information Processing Systems, NIPS 2007. • Silva, R., Scheines, R., Glymour, C. and Spirtes, P. (2006). “Learning the structure of linear latent variable models”. Journal of Machine Learning Research 7, 191-246.
