The Stable Marriage Problem

Original author: Prof. S. C. Tsai (蔡錫鈞), NCTU. Revised by Chuang-Chieh Lin and Chih-Chieh Hung.

A partner from NCTU ADSL (Advanced Database System Lab)
- Chih-Chieh Hung (洪智傑)
- Bachelor's degree, Department of Applied Mathematics, National Chung-Hsing University (1996-2000).
- Master's degree, Department of Computer Science and Information Engineering, NCTU (2003-2005).
- First-year Ph.D. student, Department of Computer Science and Information Engineering, NCTU (since 2005); soon to become a Ph.D. candidate.
- (Slide image: his master's diploma.)

- Research topics: data mining; mobile data management; data management on sensor networks.
- Advisor: Professor Wen-Chih Peng (彭文志).
- Personal state: the board master of the CSexam and Math forums on Jupiter BBS; single, but he has a girlfriend now.

Consider a society with $n$ men (denoted by capital letters) and $n$ women (denoted by lowercase letters). A marriage $M$ is a one-to-one correspondence between the men and the women. Each person has a preference list of the members of the opposite sex, ordered by decreasing desirability.

A marriage is said to be unstable if there exist two married couples X-x and Y-y such that X desires y more than x, and y desires X more than Y. The pair X-y is then said to be "dissatisfied." A marriage $M$ is called a stable marriage if there is no dissatisfied pair.

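Assume a monogamous, heterosexual society. For example, let $n = 4$ with the following preference lists (most desirable first):

- A: a b c d
- B: b a c d
- C: a d c b
- D: d c a b
- a: A B C D
- b: D C B A
- c: A B C D
- d: C D A B

Consider the marriage M: A-a, B-b, C-c, D-d. Then C-d is dissatisfied. Why?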
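To make the definition concrete, here is a minimal Python sketch that lists every dissatisfied pair of a given marriage. The function name `dissatisfied_pairs` and the encoding of each preference list as a string (most desirable first) are illustrative choices, not from the slides. On the example above it answers the "Why?": C ranks d above his wife c, and d ranks C above her husband D.

```python
# Preference lists from the n = 4 example; each string is ordered from
# most to least desirable.
MEN = {"A": "abcd", "B": "bacd", "C": "adcb", "D": "dcab"}
WOMEN = {"a": "ABCD", "b": "DCBA", "c": "ABCD", "d": "CDAB"}


def dissatisfied_pairs(wife, men_prefs, women_prefs):
    """Return every pair (X, y) such that X prefers y to his wife and
    y prefers X to her husband.  `wife` maps each man to his partner."""
    husband = {w: m for m, w in wife.items()}
    pairs = []
    for X, x in wife.items():
        prefs = men_prefs[X]
        # Women that X ranks strictly above his current wife x.
        for y in prefs[: prefs.index(x)]:
            Y = husband[y]
            # A lower index means more desirable on y's list.
            if women_prefs[y].index(X) < women_prefs[y].index(Y):
                pairs.append((X, y))
    return pairs


M = {"A": "a", "B": "b", "C": "c", "D": "d"}
print(dissatisfied_pairs(M, MEN, WOMEN))  # [('C', 'd')]
```

A marriage is stable exactly when this function returns an empty list.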

Proposal Algorithm: assume that the men are numbered in some arbitrary order. The lowest-numbered unmarried man X proposes to the most desirable woman on his list who has not already rejected him; call her x.

The woman x accepts the proposal if she is currently unmarried, or if her current mate Y is less desirable to her than X (in which case Y is jilted and reverts to the unmarried state). The algorithm repeats this process, terminating when every person is married. (This algorithm is used by hospitals in North America in the match program that assigns medical graduates to residency positions.)
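The two steps above can be sketched in Python as follows. This is a minimal illustration, assuming the same string encoding of preference lists as in the example and numbering the men in dictionary insertion order; `proposal_algorithm` is a hypothetical name.

```python
MEN = {"A": "abcd", "B": "bacd", "C": "adcb", "D": "dcab"}
WOMEN = {"a": "ABCD", "b": "DCBA", "c": "ABCD", "d": "CDAB"}


def proposal_algorithm(men_prefs, women_prefs):
    """Run the Proposal Algorithm; return (wife, number_of_proposals).

    Men are numbered in the (insertion) order of `men_prefs`.
    """
    men = list(men_prefs)
    # rank[w][m]: position of man m on woman w's list (0 = most desirable).
    rank = {w: {m: i for i, m in enumerate(p)} for w, p in women_prefs.items()}
    next_choice = {m: 0 for m in men}  # index of the next woman m will try
    wife, husband = {}, {}
    proposals = 0
    while len(wife) < len(men):
        # The lowest-numbered unmarried man proposes.
        X = next(m for m in men if m not in wife)
        x = men_prefs[X][next_choice[X]]
        next_choice[X] += 1  # x is crossed off; if she rejects or jilts X, he moves on
        proposals += 1
        Y = husband.get(x)
        if Y is None or rank[x][X] < rank[x][Y]:
            if Y is not None:
                del wife[Y]  # Y is jilted and becomes unmarried again
            husband[x], wife[X] = X, x
        # Otherwise x rejects X.
    return wife, proposals


wife, proposals = proposal_algorithm(MEN, WOMEN)
print(wife, proposals)  # {'A': 'a', 'B': 'b', 'C': 'd', 'D': 'c'} 6
```

On the $n = 4$ example, C is rejected by a and D is rejected by d, so six proposals produce the stable marriage A-a, B-b, C-d, D-c.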

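Does it always terminate with a stable marriage?
- An unmarried man always has at least one woman available to propose to: once a woman receives a proposal she stays married for the rest of the algorithm, so while some man is unmarried, some woman has never been proposed to and therefore has not rejected him.
- At each step the proposer eliminates one woman from his list, and the total size of the lists is $n^2$. Thus the algorithm makes at most $n^2$ proposals; i.e., it always terminates.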

Claim: the final marriage $M$ is stable. Proof by contradiction: let X-y be a dissatisfied pair, where in $M$ they are paired as X-x and Y-y. Since X prefers y to x, he must have proposed to y before getting married to x.

Since y either rejected X or accepted him only to jilt him later, each of her mates thereafter (including Y) must be more desirable to her than X. Therefore y prefers Y to X, contradicting the assumption that X-y is dissatisfied.
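Both claims (at most $n^2$ proposals, and a stable result) can be spot-checked empirically. The sketch below is an illustration under stated assumptions, not a proof: people are numbered $0, \ldots, n-1$, preference lists are generated uniformly at random with Python's `random.Random.sample`, and the helper names `is_stable` and `random_instance` are hypothetical.

```python
import random


def proposal_algorithm(men_prefs, women_prefs):
    """People are 0..n-1; prefs[i] lists partners, best first.
    Returns (wife, proposals) where wife[m] is man m's partner."""
    n = len(men_prefs)
    rank = [[0] * n for _ in range(n)]
    for w, prefs in enumerate(women_prefs):
        for pos, m in enumerate(prefs):
            rank[w][m] = pos
    next_choice = [0] * n
    wife, husband = [None] * n, [None] * n
    proposals = 0
    while None in wife:
        X = wife.index(None)  # lowest-numbered unmarried man
        x = men_prefs[X][next_choice[X]]
        next_choice[X] += 1
        proposals += 1
        Y = husband[x]
        if Y is None or rank[x][X] < rank[x][Y]:
            if Y is not None:
                wife[Y] = None  # Y is jilted
            husband[x], wife[X] = X, x
    return wife, proposals


def is_stable(wife, men_prefs, women_prefs):
    """True iff no man X and woman y prefer each other to their partners."""
    husband = {w: m for m, w in enumerate(wife)}
    for X, x in enumerate(wife):
        for y in men_prefs[X][: men_prefs[X].index(x)]:
            if women_prefs[y].index(X) < women_prefs[y].index(husband[y]):
                return False
    return True


def random_instance(n, rng):
    """Independent, uniformly random preference lists for both sides."""
    men = [rng.sample(range(n), n) for _ in range(n)]
    women = [rng.sample(range(n), n) for _ in range(n)]
    return men, women


rng = random.Random(2024)
for _ in range(200):
    n = rng.randint(1, 12)
    men, women = random_instance(n, rng)
    wife, proposals = proposal_algorithm(men, women)
    assert proposals <= n * n       # termination bound
    assert is_stable(wife, men, women)  # stability claim
print("all checks passed")
```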

Goal: perform an average-case analysis of this (deterministic) algorithm. For this analysis, we assume that the men's lists are chosen independently and uniformly at random; the women's lists can be arbitrary but must be fixed in advance.

Let $T_P$ denote the number of proposals made during the execution of the Proposal Algorithm. The running time is proportional to $T_P$, but $T_P$ seems difficult to analyze directly.

Principle of Deferred Decisions: the idea is to assume that the entire set of random choices is not made in advance. At each step of the process, we fix only the random choices that must be revealed to the algorithm. We use this principle to simplify the average-case analysis of the Proposal Algorithm.

Suppose that the men do not know their lists to start with. Each time a man has to make a proposal, he picks a woman uniformly at random from the set of women he has not already proposed to, and proposes to her. The only dependency that remains is that the random choice of a woman at any step depends on the set of proposals made so far by the current proposer.
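A minimal Python sketch of the deferred-decisions version, assuming people are numbered $0, \ldots, n-1$ and the women's lists are given in advance; the function name `proposal_deferred` is hypothetical. No list is ever built for a man: each proposal reveals only the next entry of his random list.

```python
import random


def proposal_deferred(women_prefs, rng):
    """Proposal Algorithm with deferred decisions (men's lists unrevealed).

    women_prefs[w] is woman w's fixed list of men 0..n-1, best first.
    Each time man X must propose, he draws a woman uniformly at random
    from those he has not yet proposed to.  Returns (wife, T_P).
    """
    n = len(women_prefs)
    rank = [[0] * n for _ in range(n)]
    for w, prefs in enumerate(women_prefs):
        for pos, m in enumerate(prefs):
            rank[w][m] = pos
    untried = [list(range(n)) for _ in range(n)]  # women X has not proposed to
    wife, husband = [None] * n, [None] * n
    proposals = 0
    while None in wife:
        X = wife.index(None)  # lowest-numbered unmarried man
        # Reveal only the next entry of X's random preference list.
        x = untried[X].pop(rng.randrange(len(untried[X])))
        proposals += 1
        Y = husband[x]
        if Y is None or rank[x][X] < rank[x][Y]:
            if Y is not None:
                wife[Y] = None  # Y is jilted
            husband[x], wife[X] = X, x
    return wife, proposals


rng = random.Random(1)
n = 20
women = [rng.sample(range(n), n) for _ in range(n)]
wife, t_p = proposal_deferred(women, rng)
print("T_P =", t_p)
```

The dependency the slide mentions is visible in `untried[X]`: the distribution of X's next choice depends on which women he has already tried.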

However, we can eliminate this dependency by modifying the algorithm: a man chooses a woman uniformly at random from the set of all $n$ women, including those to whom he has already proposed. He forgets the fact that these women have already rejected him. Call this new version the Amnesiac Algorithm.

Note that a man making a proposal to a woman who has already rejected him will be rejected again. Thus the output of the Amnesiac Algorithm is exactly the same as that of the original Proposal Algorithm. The only difference is that the Amnesiac Algorithm makes some wasted proposals.

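Let $T_A$ denote the number of proposals made by the Amnesiac Algorithm. Since the Amnesiac Algorithm makes every proposal of the Proposal Algorithm plus some wasted ones, $T_P > m$ implies $T_A > m$, so
$$\Pr[T_P > m] \le \Pr[T_A > m] \quad \text{for all } m,$$
i.e., $T_A$ stochastically dominates $T_P$.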

It therefore suffices to find an upper bound on the tail of the distribution of $T_A$. A benefit of analyzing $T_A$ is that we need only count the total number of proposals made, without regard to the name of the proposer at each stage, because each proposal is made uniformly and independently to one of the $n$ women.

The algorithm terminates with a stable marriage once every woman has received at least one proposal (a woman who has been proposed to stays married, and when all $n$ women are married, so are all $n$ men). Hence bounding $T_A$ is a special case of the coupon collector's problem.
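The following Python sketch of the Amnesiac Algorithm illustrates the last three paragraphs at once. It is a minimal illustration with hypothetical names (`amnesiac`), people numbered $0, \ldots, n-1$, and random fixed women's lists. It checks that a repeat proposal is never accepted, counts the wasted ones, and confirms that the run stops exactly when the last never-proposed-to woman is drawn, i.e., $T_A$ is a coupon-collector time.

```python
import random


def amnesiac(women_prefs, rng):
    """Amnesiac Algorithm: each proposal goes to a uniformly random woman
    among all n, even one who has already rejected the proposer.
    Returns (wife, drawn, wasted): `drawn` is the sequence of women
    proposed to (so T_A = len(drawn)), `wasted` counts repeat proposals."""
    n = len(women_prefs)
    rank = [[0] * n for _ in range(n)]
    for w, prefs in enumerate(women_prefs):
        for pos, m in enumerate(prefs):
            rank[w][m] = pos
    proposed = [set() for _ in range(n)]  # women each man has proposed to
    wife, husband = [None] * n, [None] * n
    drawn, wasted = [], 0
    while None in wife:
        X = wife.index(None)  # lowest-numbered unmarried man
        x = rng.randrange(n)  # uniform over all n women: X forgets rejections
        drawn.append(x)
        repeat = x in proposed[X]
        proposed[X].add(x)
        Y = husband[x]
        accepted = Y is None or rank[x][X] < rank[x][Y]
        assert not (repeat and accepted), "a repeat proposal is always rejected"
        if repeat:
            wasted += 1
        if accepted:
            if Y is not None:
                wife[Y] = None  # Y is jilted
            husband[x], wife[X] = X, x
    return wife, drawn, wasted


rng = random.Random(42)
n = 30
women = [rng.sample(range(n), n) for _ in range(n)]
wife, drawn, wasted = amnesiac(women, rng)
t_a = len(drawn)
# The last proposal goes to the last woman who had never been proposed to.
assert len(set(drawn)) == n and len(set(drawn[:-1])) == n - 1
print("T_A =", t_a, " first-time proposals =", t_a - wasted)
```

Deleting the wasted proposals leaves a run of the deferred-decisions algorithm, so `t_a - wasted` plays the role of $T_P$ and never exceeds `t_a`.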


Theorem ([MR95, page 57]): for any constant $c \in \mathbb{R}$ and $m = n \ln n + cn$,
$$\lim_{n \to \infty} \Pr[T_A > m] = 1 - e^{-e^{-c}},$$
which tends to 0 as $c \to \infty$.
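A quick Monte Carlo check of the theorem, as a sketch: since $T_A$ is a coupon-collector time, we simulate uniform draws from $n$ women directly. The choices $n = 100$, 1000 trials, and the seed are arbitrary, and the function names are hypothetical. For finite $n$ the empirical tail should only be close to the limit, not equal to it.

```python
import math
import random


def coupon_collector(n, rng):
    """Number of uniform draws from {0, ..., n-1} until every value has appeared."""
    seen, draws = set(), 0
    while len(seen) < n:
        seen.add(rng.randrange(n))
        draws += 1
    return draws


def tail_estimate(n, c, trials, rng):
    """Empirical Pr[T_A > n ln n + c n]."""
    m = n * math.log(n) + c * n
    return sum(coupon_collector(n, rng) > m for _ in range(trials)) / trials


rng = random.Random(7)
for c in (-1.0, 0.0, 1.0, 2.0):
    est = tail_estimate(100, c, 1000, rng)
    limit = 1 - math.exp(-math.exp(-c))
    print(f"c={c:+.1f}  empirical {est:.3f}  limit {limit:.3f}")
```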


The Coupon Collector's Problem

Input: $n$ types of coupons. At each trial, a coupon type is chosen uniformly at random, and the random choices are mutually independent.

Output: the minimum number of trials required to collect at least one coupon of each type.

You may regard this problem as the "Hello Kitty Collector's Problem." Let $X$ be the random variable defined as the number of trials required to collect at least one coupon of each type. Let $C_1, C_2, \ldots, C_X$ denote the sequence of trials, where $C_i \in \{1, \ldots, n\}$ is the type of the coupon drawn in the $i$-th trial.

Call the $i$-th trial $C_i$ a success if the type $C_i$ was not drawn in any of the first $i - 1$ selections. Clearly, $C_1$ and $C_X$ are always successes. We divide the sequence into epochs, where epoch $i$ begins with the trial following the $i$-th success and ends with the trial on which we obtain the $(i+1)$-st success.
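The epoch decomposition can be made concrete with a small Python sketch (hypothetical helper names `epochs` and `collect`; types drawn from $\{1, \ldots, n\}$ as in the definition). It splits one simulated run into epoch lengths $X_0, \ldots, X_{n-1}$, which must add up to $X$.

```python
import random


def epochs(draws, n):
    """Split a coupon-collector run into epochs.

    Epoch i (0 <= i <= n-1) starts right after the i-th success and ends
    with the (i+1)-st success, where a success is a never-seen type.
    Returns the list of epoch lengths [X_0, ..., X_{n-1}].
    """
    seen, lengths, current = set(), [], 0
    for c in draws:
        current += 1
        if c not in seen:  # a success closes the current epoch
            seen.add(c)
            lengths.append(current)
            current = 0
        if len(seen) == n:
            break
    return lengths


def collect(n, rng):
    """Draw uniformly from {1, ..., n} until all n types have appeared."""
    draws, seen = [], set()
    while len(seen) < n:
        c = rng.randint(1, n)
        draws.append(c)
        seen.add(c)
    return draws


rng = random.Random(3)
run = collect(6, rng)
lengths = epochs(run, 6)
print(len(run), lengths)  # X, then the epoch lengths X_0, ..., X_5
```

For instance, the run 1, 1, 2, 1, 3 with $n = 3$ splits into epochs of lengths 1, 2, 2.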

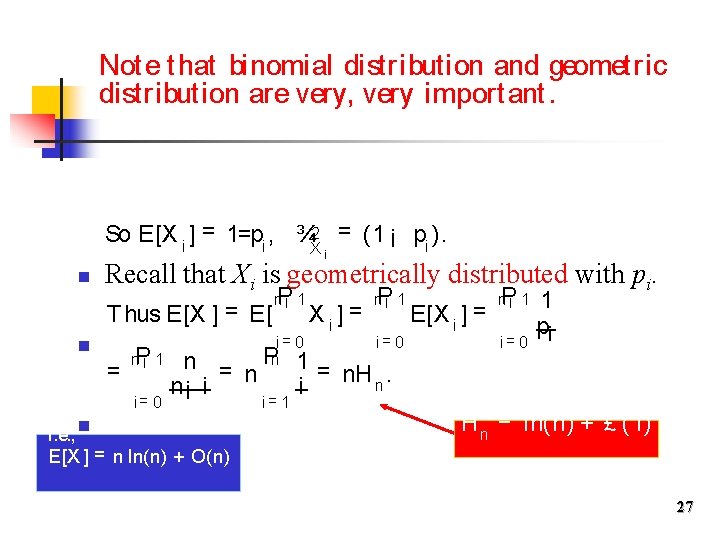

What kind of probability distribution does $X_i$ have? Define the random variable $X_i$, for $0 \le i \le n-1$, to be the number of trials in the $i$-th epoch, so that $X = \sum_{i=0}^{n-1} X_i$. Let $p_i$ denote the probability of success on any trial of the $i$-th epoch. This is the probability of drawing one of the $n - i$ remaining coupon types, so $p_i = \frac{n-i}{n}$.

Note that the binomial distribution and the geometric distribution are very, very important. Recall that $X_i$ is geometrically distributed with parameter $p_i$, so
$$E[X_i] = \frac{1}{p_i}, \qquad \sigma_{X_i}^2 = \frac{1 - p_i}{p_i^2}.$$
Thus
$$E[X] = E\Big[\sum_{i=0}^{n-1} X_i\Big] = \sum_{i=0}^{n-1} E[X_i] = \sum_{i=0}^{n-1} \frac{1}{p_i} = \sum_{i=0}^{n-1} \frac{n}{n-i} = n \sum_{i=1}^{n} \frac{1}{i} = n H_n,$$
i.e., $E[X] = n \ln n + O(n)$, since $H_n = \ln n + \Theta(1)$.
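A short numerical check of $E[X] = n H_n$, as a sketch with arbitrary choices ($n = 50$, 2000 simulated runs, a fixed seed) and hypothetical function names. It computes $\sum_i 1/p_i$ directly, compares it with $n H_n$ and $n \ln n$, and compares both with a simulated mean.

```python
import math
import random


def expected_trials(n):
    """E[X] = sum_{i=0}^{n-1} 1/p_i with p_i = (n - i)/n."""
    return sum(n / (n - i) for i in range(n))


def harmonic(n):
    """H_n = 1 + 1/2 + ... + 1/n."""
    return sum(1 / j for j in range(1, n + 1))


def simulate_mean(n, trials, rng):
    """Average number of uniform draws needed to see all n coupon types."""
    total = 0
    for _ in range(trials):
        seen = set()
        while len(seen) < n:
            seen.add(rng.randrange(n))
            total += 1
    return total / trials


n = 50
print(expected_trials(n), n * harmonic(n), n * math.log(n))
print(simulate_mean(n, 2000, random.Random(5)))
```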

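Since the $X_i$'s are independent,
$$\sigma_X^2 = \sum_{i=0}^{n-1} \sigma_{X_i}^2 = \sum_{i=0}^{n-1} \frac{ni}{(n-i)^2} = \sum_{i'=1}^{n} \frac{n(n-i')}{i'^2} = n^2 \sum_{i'=1}^{n} \frac{1}{i'^2} - n H_n \approx \frac{\pi^2}{6} n^2,$$
where we used $\sum_{i'=1}^{\infty} 1/i'^2 = \pi^2/6$.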
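The variance derivation can be checked numerically. This sketch (hypothetical function names) sums the geometric variances $(1 - p_i)/p_i^2$ directly, compares the result with the closed form $n^2 \sum 1/i'^2 - n H_n$, and prints the asymptotic value $\pi^2 n^2/6$ for comparison.

```python
import math


def variance_sum(n):
    """sigma_X^2 as the sum of geometric variances (1 - p_i) / p_i**2."""
    total = 0.0
    for i in range(n):
        p = (n - i) / n
        total += (1 - p) / p**2
    return total


def variance_closed_form(n):
    """n^2 * sum_{j=1}^n 1/j^2 - n * H_n."""
    squares = sum(1 / j**2 for j in range(1, n + 1))
    harmonic = sum(1 / j for j in range(1, n + 1))
    return n * n * squares - n * harmonic


for n in (10, 100, 1000):
    print(n, variance_sum(n), variance_closed_form(n), math.pi**2 / 6 * n * n)
```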

Exercise: use Chebyshev's inequality to find an upper bound on the probability that $X > \beta n \ln n$, for a constant $\beta > 1$. Try to prove that
$$\Pr[X \ge \beta n \ln n] \le O\Big(\frac{1}{\beta^2 \ln^2 n}\Big).$$
(You might need the result $n \ln n \le n H_n \le n \ln n + n$.)

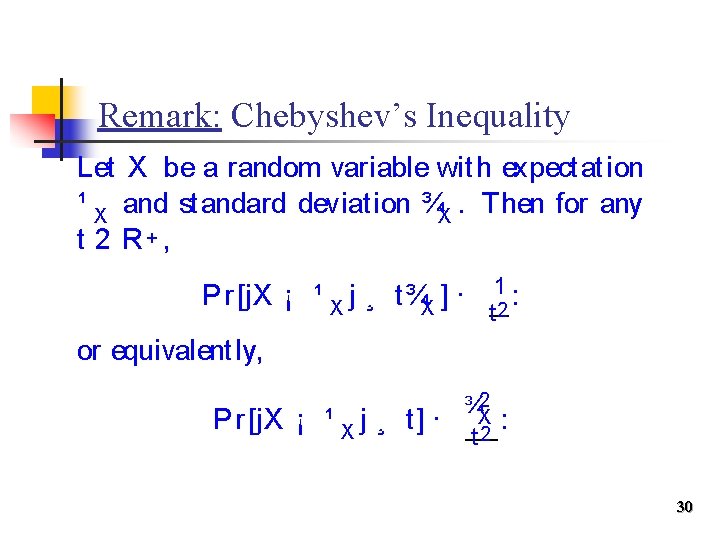

Remark (Chebyshev's inequality): let $X$ be a random variable with expectation $\mu_X$ and standard deviation $\sigma_X$. Then for any $t \in \mathbb{R}^+$,
$$\Pr[|X - \mu_X| \ge t \sigma_X] \le \frac{1}{t^2},$$
or equivalently,
$$\Pr[|X - \mu_X| \ge t] \le \frac{\sigma_X^2}{t^2}.$$
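To see how weak the Chebyshev route from the exercise is, here is a sketch that evaluates the bound numerically using the exact mean $n H_n$ and the exact variance derived above. The function name `chebyshev_tail` and the choice $\beta = 2$ are illustrative; the bound only shrinks like $1/\ln^2 n$.

```python
import math


def chebyshev_tail(n, beta):
    """Chebyshev upper bound on Pr[X >= beta * n ln n].

    Uses Pr[X >= t] <= Pr[|X - mu| >= t - mu] <= sigma^2 / (t - mu)^2,
    valid when t = beta * n ln n exceeds mu = n * H_n.
    """
    h = sum(1 / j for j in range(1, n + 1))
    mu = n * h
    var = n * n * sum(1 / j**2 for j in range(1, n + 1)) - n * h
    t = beta * n * math.log(n)
    if t <= mu:
        raise ValueError("beta * n ln n must exceed E[X] = n H_n")
    return var / (t - mu) ** 2


for n in (100, 10_000, 1_000_000):
    print(n, chebyshev_tail(n, 2.0))
```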

Our next goal is to derive sharper estimates of the typical value of $X$. We will show that the value of $X$ is unlikely to deviate far from its expectation; that is, $X$ is sharply concentrated around its expected value.


Let $\xi_i^r$ denote the event that coupon type $i$ is not collected in the first $r$ trials. Then
$$\Pr[\xi_i^r] = \Big(1 - \frac{1}{n}\Big)^r \le e^{-r/n}.$$
For $r = \beta n \ln n$ with $\beta > 1$, we have $e^{-r/n} = n^{-\beta}$, so
$$\Pr[X > r] = \Pr\Big[\bigcup_{i=1}^{n} \xi_i^r\Big] \le \sum_{i=1}^{n} \Pr[\xi_i^r] \le \sum_{i=1}^{n} n^{-\beta} = n^{-(\beta - 1)}.$$
This is still only polynomially small.
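The chain of inequalities can be evaluated numerically. In this sketch we round $r$ up to an integer number of trials (an assumption, so that $e^{-r/n} \le n^{-\beta}$ holds exactly), use $n = 50$ and $\beta = 2$, and compare the bound with a simulated tail; the function names are hypothetical.

```python
import math
import random


def union_bound_chain(n, beta):
    """For r = ceil(beta n ln n) trials, return the three quantities in
    Pr[X > r] <= n (1 - 1/n)^r <= n e^{-r/n} <= n^{-(beta - 1)}."""
    r = math.ceil(beta * n * math.log(n))
    return n * (1 - 1 / n) ** r, n * math.exp(-r / n), n ** (-(beta - 1))


def empirical_tail(n, beta, trials, rng):
    """Fraction of simulated coupon-collector runs needing more than r trials."""
    r = math.ceil(beta * n * math.log(n))
    hits = 0
    for _ in range(trials):
        seen, draws = set(), 0
        while len(seen) < n:
            seen.add(rng.randrange(n))
            draws += 1
        if draws > r:
            hits += 1
    return hits / trials


print(union_bound_chain(50, 2.0))
print(empirical_tail(50, 2.0, 2000, random.Random(9)))
```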

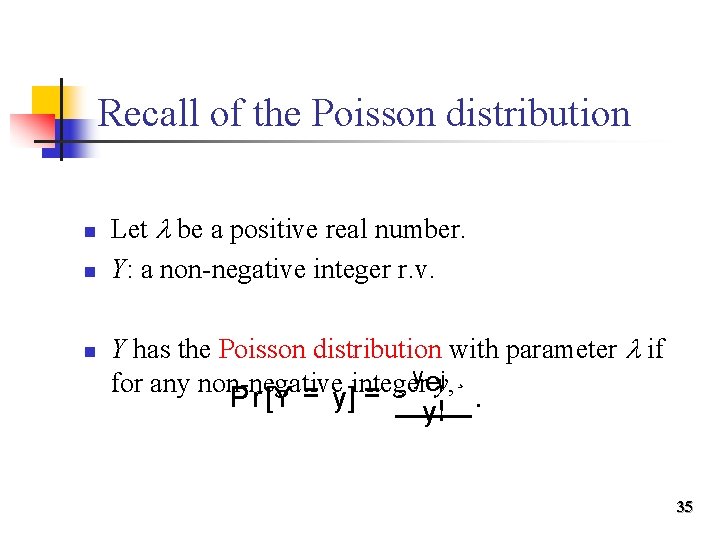

So, is that it? Is the analysis good enough? Not yet! Let us consider the following heuristic argument, which will help to establish some intuition.

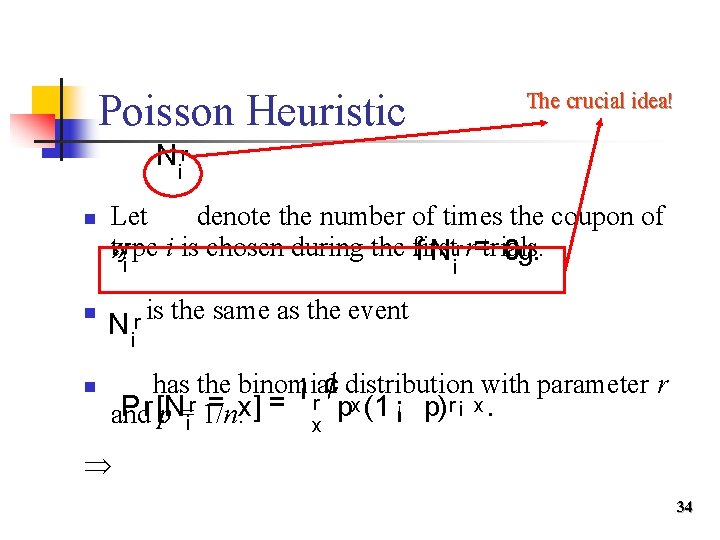

Poisson Heuristic: the crucial idea! Let $N_i^r$ denote the number of times the coupon of type $i$ is chosen during the first $r$ trials. The event $\xi_i^r$ is the same as the event $\{N_i^r = 0\}$. $N_i^r$ has the binomial distribution with parameters $r$ and $p = 1/n$:
$$\Pr[N_i^r = x] = \binom{r}{x} p^x (1 - p)^{r - x}.$$

Recall the Poisson distribution: let $\lambda$ be a positive real number. A non-negative integer random variable $Y$ has the Poisson distribution with parameter $\lambda$ if, for any non-negative integer $y$,
$$\Pr[Y = y] = \frac{\lambda^y e^{-\lambda}}{y!}.$$

When $p$ is small and $r \to \infty$, the Poisson distribution with $\lambda = rp$ is a good approximation to the binomial distribution with parameters $r$ and $p$. Since $p = 1/n$, we approximate $N_i^r$ by the Poisson distribution with parameter $\lambda = r/n$. Thus
$$\Pr[\xi_i^r] = \Pr[N_i^r = 0] \approx \frac{\lambda^0 e^{-\lambda}}{0!} = e^{-r/n}.$$
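A small Python comparison of the two distributions, as a sketch with illustrative values $n = 100$ and $r = 300$ (so $\lambda = 3$). The Poisson probabilities are computed in log space with `math.lgamma` to avoid overflow; the function names are hypothetical.

```python
import math


def binomial_pmf(x, r, p):
    """Pr[N = x] for N ~ Binomial(r, p)."""
    return math.comb(r, x) * p**x * (1 - p) ** (r - x)


def poisson_pmf(x, lam):
    """Pr[Y = x] for Y ~ Poisson(lam), computed in log space to avoid overflow."""
    return math.exp(x * math.log(lam) - lam - math.lgamma(x + 1))


n, r = 100, 300  # p = 1/n, so lambda = r/n = 3
p, lam = 1 / n, r / n
for x in range(6):
    print(x, round(binomial_pmf(x, r, p), 5), round(poisson_pmf(x, lam), 5))
print("Pr[N = 0] =", (1 - p) ** r, " e^(-r/n) =", math.exp(-r / n))
```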


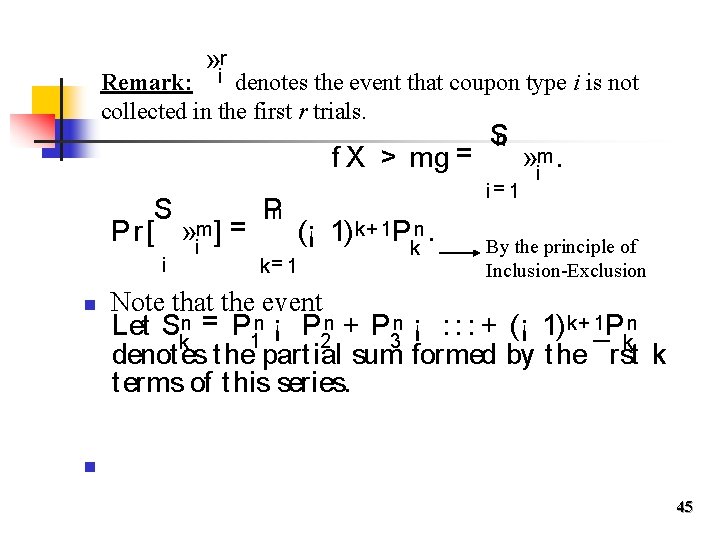

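Claim: the events $\xi_i^r$, for $1 \le i \le n$, are almost independent; i.e., for any index set $\{j_1, \ldots, j_k\}$ not containing $i$,
$$\Pr\Big[\xi_i^r \,\Big|\, \bigcap_{l=1}^{k} \xi_{j_l}^r\Big] \approx \Pr[\xi_i^r].$$
Proof:
$$\Pr\Big[\xi_i^r \,\Big|\, \bigcap_{l=1}^{k} \xi_{j_l}^r\Big] = \frac{\Pr\big[\xi_i^r \cap \bigcap_{l=1}^{k} \xi_{j_l}^r\big]}{\Pr\big[\bigcap_{l=1}^{k} \xi_{j_l}^r\big]} = \frac{\big(1 - \frac{k+1}{n}\big)^r}{\big(1 - \frac{k}{n}\big)^r} \approx \frac{e^{-r(k+1)/n}}{e^{-rk/n}} = e^{-r/n}.$$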
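The exact ratio in the proof can be evaluated directly. This sketch (hypothetical name `conditional_miss`, illustrative $n = 1000$, $r = 3000$) shows that conditioning on $k$ other types being missed lowers $\Pr[\xi_i^r]$ only slightly, which is the sense in which the events are "almost independent."

```python
import math


def conditional_miss(n, r, k):
    """Exact Pr[xi_i^r | xi_{j_1}^r, ..., xi_{j_k}^r] for k other types j_l != i."""
    return ((1 - (k + 1) / n) / (1 - k / n)) ** r


n, r = 1000, 3000
print("Pr[xi_i^r] =", (1 - 1 / n) ** r, " e^(-r/n) =", math.exp(-r / n))
for k in (1, 5, 20):
    print("k =", k, " conditional:", conditional_miss(n, r, k))
```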


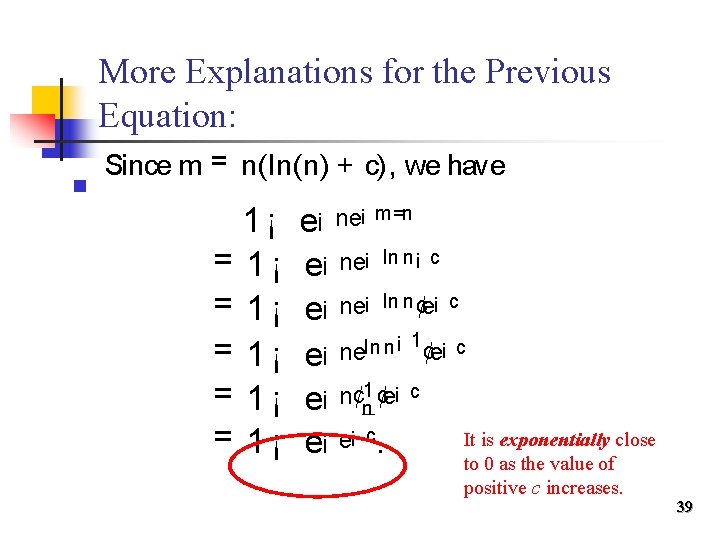

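Remark: $\Pr[\xi_i^r] \approx e^{-r/n}$. Treating the events as independent,
$$\Pr\Big[\bigcap_{i=1}^{n} \neg\xi_i^m\Big] \approx \big(1 - e^{-m/n}\big)^n \approx e^{-n e^{-m/n}}.$$
Let $m = n(\ln n + c)$ for any constant $c$. Then
$$\Pr[X > m] = \Pr\Big[\bigcup_{i=1}^{n} \xi_i^m\Big] = 1 - \Pr\Big[\bigcap_{i=1}^{n} \neg\xi_i^m\Big] \approx 1 - e^{-n e^{-m/n}} = 1 - e^{-e^{-c}}.$$
This is close to 0 for large positive $c$ and close to 1 for large negative $c$.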

More explanation for the previous equation: since $m = n(\ln n + c)$, we have
$$1 - e^{-n e^{-m/n}} = 1 - e^{-n e^{-\ln n - c}} = 1 - e^{-n \cdot e^{-\ln n} \cdot e^{-c}} = 1 - e^{-n \cdot n^{-1} \cdot e^{-c}} = 1 - e^{-e^{-c}}.$$
It is exponentially close to 0 as the value of positive $c$ increases.
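A numerical look at the heuristic, as a sketch with hypothetical names: `f(c)` is the limit $1 - e^{-e^{-c}}$, and `heuristic(n, c)` evaluates the "independent" estimate $1 - (1 - e^{-m/n})^n$ with $m = n(\ln n + c)$. The values illustrate the behavior for large positive and large negative $c$.

```python
import math


def f(c):
    """Limit 1 - exp(-exp(-c)) predicted by the Poisson heuristic."""
    return 1 - math.exp(-math.exp(-c))


def heuristic(n, c):
    """1 - (1 - e^{-m/n})^n with m = n (ln n + c): the 'independent' estimate."""
    m = n * (math.log(n) + c)
    return 1 - (1 - math.exp(-m / n)) ** n


for c in (-3, -1, 0, 1, 3, 5):
    print(c, round(f(c), 6), round(heuristic(10**6, c), 6))
```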

The Power of the Poisson Heuristic: it gives a quick back-of-the-envelope estimate of probabilistic quantities, which hopefully provides some insight into their true behavior. The Poisson heuristic can help us carry out the analysis more effectively.

But... it is not rigorous enough, since it only approximates $N_i^r$. We can convert the previous argument into a rigorous proof using the Boole-Bonferroni inequalities (yet the analysis will be more complex).

Are you ready to be rigorous? Tighten your seat belt!

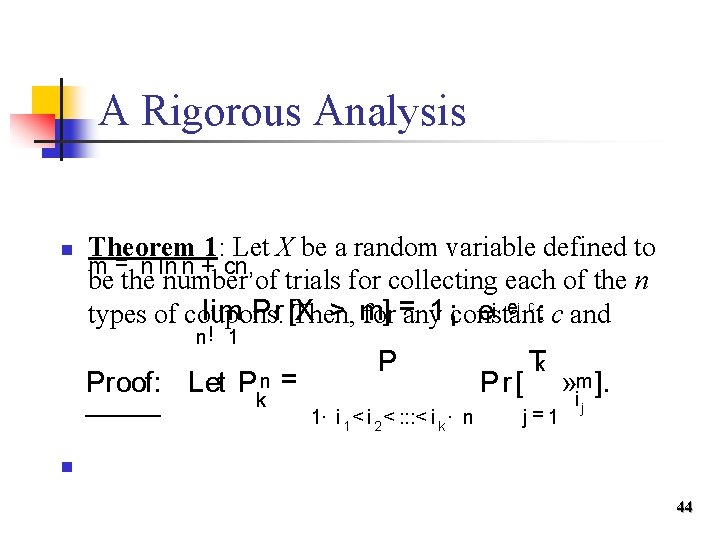

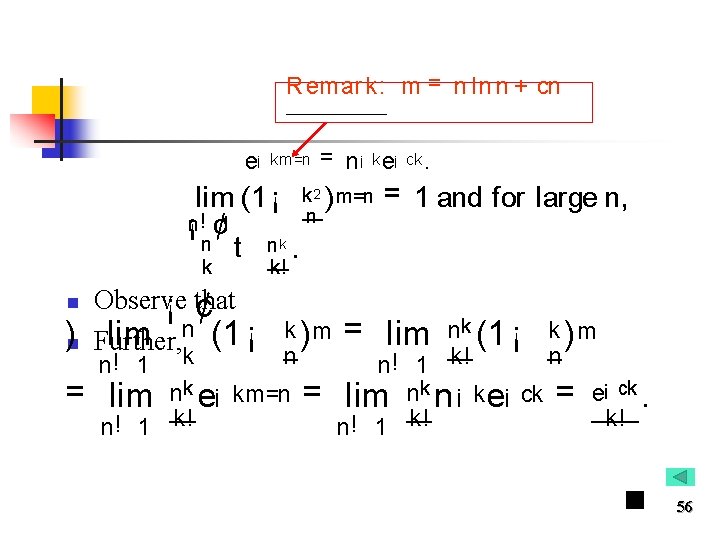

A Rigorous Analysis

Theorem 1: Let $X$ be the random variable defined as the number of trials for collecting each of the $n$ types of coupons. Then, for any constant $c \in \mathbb{R}$ and $m = n \ln n + cn$,
$$\lim_{n \to \infty} \Pr[X > m] = 1 - e^{-e^{-c}}.$$
Proof: let
$$P_k^n = \sum_{1 \le i_1 < i_2 < \cdots < i_k \le n} \Pr\Big[\bigcap_{j=1}^{k} \xi_{i_j}^m\Big].$$

Remark: $\xi_i^r$ denotes the event that coupon type $i$ is not collected in the first $r$ trials. Note that the event $\{X > m\} = \bigcup_{i=1}^{n} \xi_i^m$. By the principle of inclusion-exclusion,
$$\Pr\Big[\bigcup_{i=1}^{n} \xi_i^m\Big] = \sum_{k=1}^{n} (-1)^{k+1} P_k^n.$$
Let $S_k^n = P_1^n - P_2^n + P_3^n - \cdots + (-1)^{k+1} P_k^n$ denote the partial sum formed by the first $k$ terms of this series.
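Because all $k$-wise intersections have probability $(1 - k/n)^m$, the inclusion-exclusion series gives $\Pr[X > m]$ exactly. The sketch below (hypothetical name `prob_x_greater`) evaluates it with `fractions.Fraction` to avoid cancellation error, checks it on tiny cases that can be worked by hand, and compares $n = 50$, $c = 0$ with the limit $1 - e^{-1}$.

```python
import math
from fractions import Fraction


def prob_x_greater(n, m):
    """Exact Pr[X > m] = sum_{k=1}^n (-1)^{k+1} C(n,k) (1 - k/n)^m."""
    return sum(
        (-1) ** (k + 1) * math.comb(n, k) * Fraction(n - k, n) ** m
        for k in range(1, n + 1)
    )


print(prob_x_greater(3, 3))  # 7/9: three draws from 3 types miss a type
n, c = 50, 0.0
m = math.floor(n * math.log(n) + c * n)  # X > n ln n + cn  iff  X > m
print(float(prob_x_greater(n, m)), 1 - math.exp(-math.exp(-c)))
```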


We have
$$S_{2k}^n \le \Pr\Big[\bigcup_{i=1}^{n} \xi_i^m\Big] \le S_{2k+1}^n$$
by the Boole-Bonferroni inequalities: let $Y_1, \ldots, Y_n$ be arbitrary events.
1. For odd $k$: $\Pr\big[\bigcup_{i=1}^{n} Y_i\big] \le \sum_{j=1}^{k} (-1)^{j+1} \sum_{i_1 < i_2 < \cdots < i_j} \Pr\big[\bigcap_{r=1}^{j} Y_{i_r}\big]$.
2. For even $k$: $\Pr\big[\bigcup_{i=1}^{n} Y_i\big] \ge \sum_{j=1}^{k} (-1)^{j+1} \sum_{i_1 < i_2 < \cdots < i_j} \Pr\big[\bigcap_{r=1}^{j} Y_{i_r}\big]$.
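The bracketing property can be observed directly. This sketch (hypothetical name `partial_sums`, illustrative $n = 10$, $m = 20$) computes every partial sum $S_k^n$ exactly with `fractions.Fraction`; odd partial sums lie above $\Pr[X > m]$ and even ones below, as the Boole-Bonferroni inequalities guarantee.

```python
import math
from fractions import Fraction


def partial_sums(n, m):
    """S_1^n, ..., S_n^n with P_k^n = C(n,k) (1 - k/n)^m (exact fractions)."""
    sums, s = [], Fraction(0)
    for k in range(1, n + 1):
        s += (-1) ** (k + 1) * math.comb(n, k) * Fraction(n - k, n) ** m
        sums.append(s)
    return sums


n, m = 10, 20
S = partial_sums(n, m)
exact = S[-1]  # the full inclusion-exclusion sum equals Pr[X > m]
for k, s in enumerate(S, start=1):
    side = "upper" if k % 2 == 1 else "lower"
    print(k, side, float(s))
```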


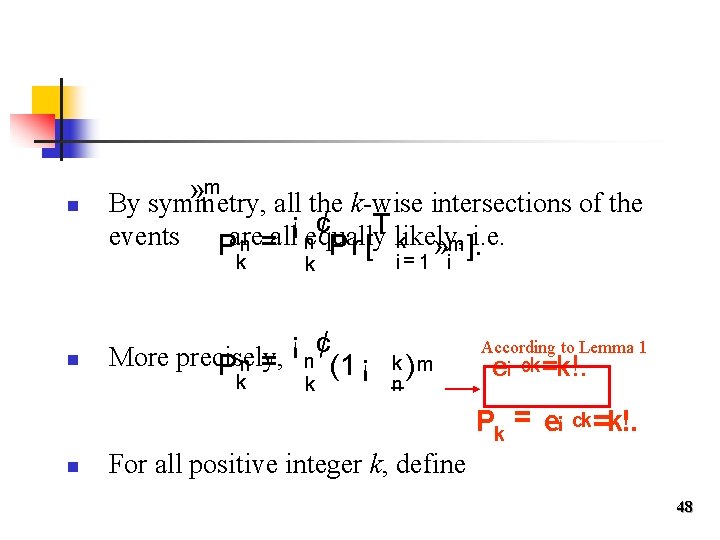

Illustration for the Boole-Bonferroni inequalities (figure; labels: $\Pr[\bigcup_{i=1}^{k} Y_i]$ and $\Pr[\bigcup_{i=1}^{n} Y_i]$, horizontal axis $k$).

By symmetry, all the $k$-wise intersections of the events $\xi_i^m$ are equally likely, i.e.,
$$P_k^n = \binom{n}{k} \Pr\Big[\bigcap_{i=1}^{k} \xi_i^m\Big].$$
More precisely, $P_k^n = \binom{n}{k}\big(1 - \frac{k}{n}\big)^m$. For every positive integer $k$, define $P_k = e^{-ck}/k!$. According to Lemma 1, $\lim_{n \to \infty} P_k^n = P_k$.

Define the partial sum of the $P_j$'s as
$$S_k = \sum_{j=1}^{k} (-1)^{j+1} P_j = \sum_{j=1}^{k} (-1)^{j+1} \frac{e^{-cj}}{j!},$$
and let $f(c) = 1 - e^{-e^{-c}}$. Hint: consider the first $k$ terms of the power series expansion of $g(x) = 1 - e^{-x}$, noting that $f(c) = g(e^{-c})$. Thus $\lim_{k \to \infty} S_k = f(c)$; i.e., for all $\epsilon > 0$, there exists $k^*$ such that for $k > k^*$, $|S_k - f(c)| < \epsilon$.
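A quick numerical view of $S_k \to f(c)$, as a sketch with hypothetical names: the partial sums of the series for $1 - e^{-x}$ at $x = e^{-c}$ settle on $f(c)$ after a modest number of terms.

```python
import math


def S(k, c):
    """S_k = sum_{j=1}^k (-1)^{j+1} e^{-cj} / j!."""
    return sum((-1) ** (j + 1) * math.exp(-c * j) / math.factorial(j) for j in range(1, k + 1))


def f(c):
    """f(c) = 1 - e^{-e^{-c}}."""
    return 1 - math.exp(-math.exp(-c))


for c in (-1.0, 0.0, 2.0):
    print(c, [round(S(k, c), 6) for k in (1, 2, 3, 5, 10, 20)], round(f(c), 6))
```

For $c = 0$ the terms $1/j!$ decrease, so consecutive partial sums bracket the limit, e.g. $S_2 = 0.5 \le f(0) \approx 0.632 \le S_3 \approx 0.667$.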

Remark: $S_k^n = P_1^n - P_2^n + P_3^n - \cdots + (-1)^{k+1} P_k^n$ and $S_k = \sum_{j=1}^{k} (-1)^{j+1} P_j = \sum_{j=1}^{k} (-1)^{j+1} \frac{e^{-cj}}{j!}$.

Since $\lim_{n \to \infty} P_k^n = P_k$, we have $\lim_{n \to \infty} S_k^n = S_k$. Thus for all $\epsilon > 0$ and $k > k^*$, when $n$ is sufficiently large, $|S_k^n - S_k| < \epsilon$. Hence, for all $\epsilon > 0$, $k > k^*$, and large enough $n$, we have $|S_k^n - S_k| < \epsilon$ and $|S_k - f(c)| < \epsilon$, which implies that
$$|S_k^n - f(c)| < 2\epsilon \quad \text{and} \quad |S_{2k}^n - S_{2k+1}^n| < 4\epsilon.$$

Remark:
(1) $S_{2k}^n \le \Pr\big[\bigcup_i \xi_i^m\big] \le S_{2k+1}^n$;
(2) $|S_k^n - f(c)| < 2\epsilon$ and $|S_{2k}^n - S_{2k+1}^n| < 4\epsilon$.

Using this bracketing property of the partial sums, we have that for any $\epsilon > 0$ and $n$ sufficiently large,
$$\Big|\Pr\Big[\bigcup_i \xi_i^m\Big] - f(c)\Big| < 4\epsilon.$$
Therefore
$$\lim_{n \to \infty} \Pr\Big[\bigcup_i \xi_i^m\Big] = f(c) = 1 - e^{-e^{-c}}. \qquad \blacksquare$$

Thank you.


References
- [MR95] Rajeev Motwani and Prabhakar Raghavan. Randomized Algorithms. Cambridge University Press, 1995.
- [MU05] Michael Mitzenmacher and Eli Upfal. Probability and Computing: Randomized Algorithms and Probabilistic Analysis. Cambridge University Press, 2005.

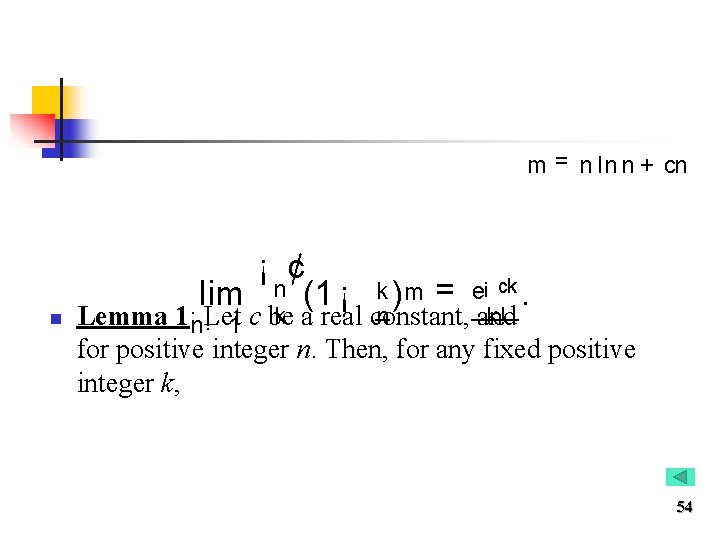

Lemma 1: Let $c$ be a real constant, and let $m = n \ln n + cn$ for positive integer $n$. Then, for any fixed positive integer $k$,
$$\lim_{n \to \infty} \binom{n}{k}\Big(1 - \frac{k}{n}\Big)^m = \frac{e^{-ck}}{k!}.$$

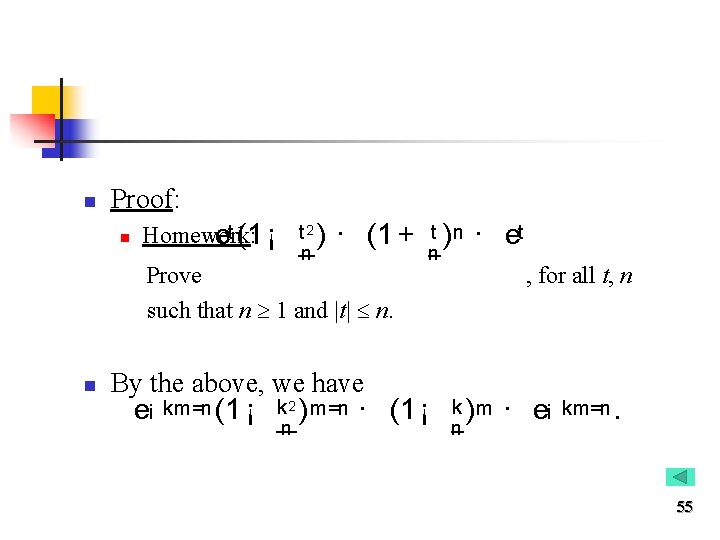

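Proof: Homework: prove that
$$e^t \Big(1 - \frac{t^2}{n}\Big) \le \Big(1 + \frac{t}{n}\Big)^n \le e^t$$
for all $t$ such that $n \ge 1$ and $|t| \le n$. Applying this with $t = -k$ and raising to the power $m/n$, we have
$$e^{-km/n}\Big(1 - \frac{k^2}{n}\Big)^{m/n} \le \Big(1 - \frac{k}{n}\Big)^m \le e^{-km/n}.$$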

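Remark: $m = n \ln n + cn$. Observe that $e^{-km/n} = n^{-k} e^{-ck}$, $\lim_{n \to \infty} \big(1 - \frac{k^2}{n}\big)^{m/n} = 1$, and for large $n$, $\binom{n}{k} \approx \frac{n^k}{k!}$. Further,
$$\lim_{n \to \infty} \binom{n}{k}\Big(1 - \frac{k}{n}\Big)^m = \lim_{n \to \infty} \frac{n^k}{k!}\Big(1 - \frac{k}{n}\Big)^m = \lim_{n \to \infty} \frac{n^k}{k!} e^{-km/n} = \lim_{n \to \infty} \frac{n^k}{k!} n^{-k} e^{-ck} = \frac{e^{-ck}}{k!}.$$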
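A numerical sanity check of Lemma 1, not a proof: with illustrative values $k = 3$ and $c = 0.5$ (and $m = n \ln n + cn$ left non-integer, as in the lemma), the ratio of the two sides approaches 1 as $n$ grows. The function names are hypothetical.

```python
import math


def lemma_lhs(n, k, c):
    """C(n,k) (1 - k/n)^m with m = n ln n + c n (m need not be an integer)."""
    m = n * math.log(n) + c * n
    return math.comb(n, k) * (1 - k / n) ** m


def lemma_rhs(k, c):
    """The claimed limit e^{-ck} / k!."""
    return math.exp(-c * k) / math.factorial(k)


k, c = 3, 0.5
for n in (10**3, 10**4, 10**6):
    print(n, lemma_lhs(n, k, c), lemma_rhs(k, c))
```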

Principle of Inclusion-Exclusion: let $Y_1, Y_2, \ldots, Y_n$ be arbitrary events. Then
$$\Pr\Big[\bigcup_{i=1}^{n} Y_i\Big] = \sum_{i} \Pr[Y_i] - \sum_{i<j} \Pr[Y_i \cap Y_j] + \sum_{i<j<k} \Pr[Y_i \cap Y_j \cap Y_k] - \cdots + (-1)^{l+1} \sum_{i_1 < i_2 < \cdots < i_l} \Pr\Big[\bigcap_{r=1}^{l} Y_{i_r}\Big] + \cdots$$
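The principle can be checked by brute force on a finite uniform probability space. In this sketch the sample space $\{0, \ldots, 9\}$ and the three events are hypothetical examples; events are represented as sets of outcomes, and both functions return exact fractions.

```python
from fractions import Fraction
from itertools import combinations


def union_prob_direct(events, size):
    """Pr[Y_1 or ... or Y_n] on a uniform space of `size` outcomes."""
    return Fraction(len(set().union(*events)), size)


def union_prob_inclusion_exclusion(events, size):
    """The same probability via the alternating sum over all intersections."""
    total = Fraction(0)
    for j in range(1, len(events) + 1):
        for combo in combinations(events, j):
            inter = set.intersection(*combo)
            total += (-1) ** (j + 1) * Fraction(len(inter), size)
    return total


# Hypothetical example: a uniform space {0, ..., 9} and three arbitrary events.
Y = [{0, 1, 2, 3}, {2, 3, 4, 5}, {3, 5, 7}]
print(union_prob_direct(Y, 10), union_prob_inclusion_exclusion(Y, 10))  # 7/10 7/10
```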

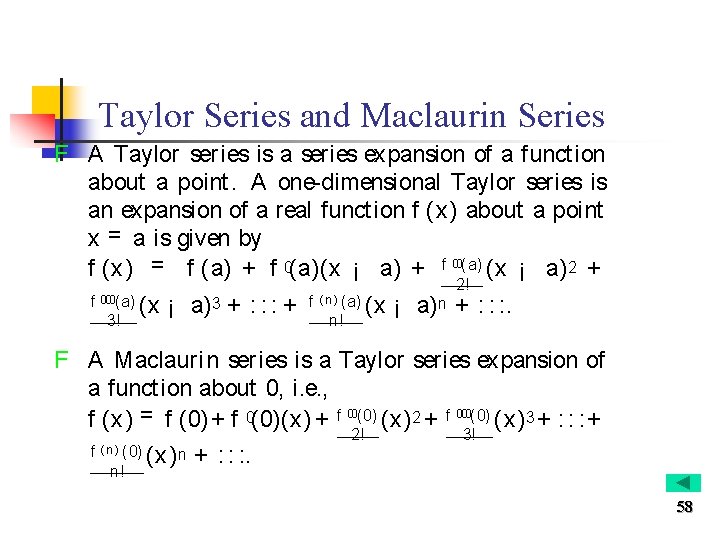

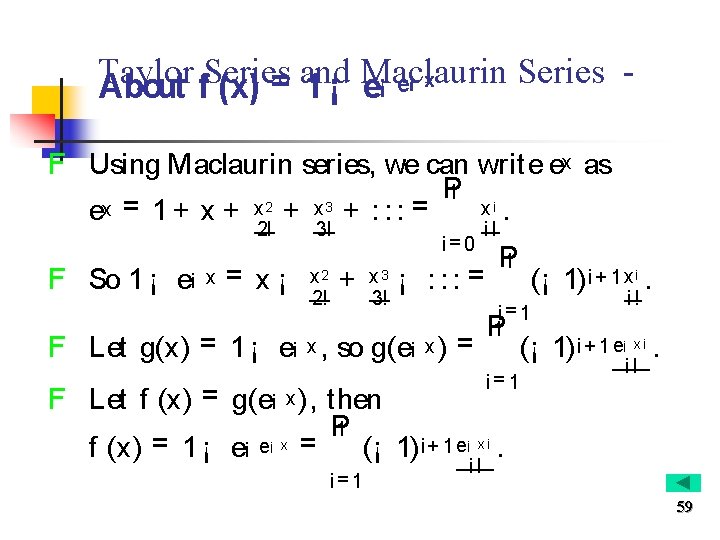

Taylor Series and Maclaurin Series

A Taylor series is a series expansion of a function about a point. The one-dimensional Taylor series of a real function $f(x)$ about a point $x = a$ is given by
$$f(x) = f(a) + f'(a)(x - a) + \frac{f''(a)}{2!}(x - a)^2 + \frac{f'''(a)}{3!}(x - a)^3 + \cdots + \frac{f^{(n)}(a)}{n!}(x - a)^n + \cdots.$$
A Maclaurin series is a Taylor series expansion of a function about 0, i.e.,
$$f(x) = f(0) + f'(0)x + \frac{f''(0)}{2!}x^2 + \frac{f'''(0)}{3!}x^3 + \cdots + \frac{f^{(n)}(0)}{n!}x^n + \cdots.$$

About $f(x) = 1 - e^{-e^{-x}}$:

Using the Maclaurin series, we can write $e^x$ as
$$e^x = 1 + x + \frac{x^2}{2!} + \frac{x^3}{3!} + \cdots = \sum_{i=0}^{\infty} \frac{x^i}{i!}.$$
So
$$1 - e^{-x} = x - \frac{x^2}{2!} + \frac{x^3}{3!} - \cdots = \sum_{i=1}^{\infty} (-1)^{i+1} \frac{x^i}{i!}.$$
Let $g(x) = 1 - e^{-x}$, so $g(e^{-x}) = \sum_{i=1}^{\infty} (-1)^{i+1} \frac{e^{-xi}}{i!}$. Let $f(x) = g(e^{-x})$; then
$$f(x) = 1 - e^{-e^{-x}} = \sum_{i=1}^{\infty} (-1)^{i+1} \frac{e^{-xi}}{i!}.$$
