The SLAC Cluster

Chuck Boeheim, Assistant Director, SLAC Computing Services

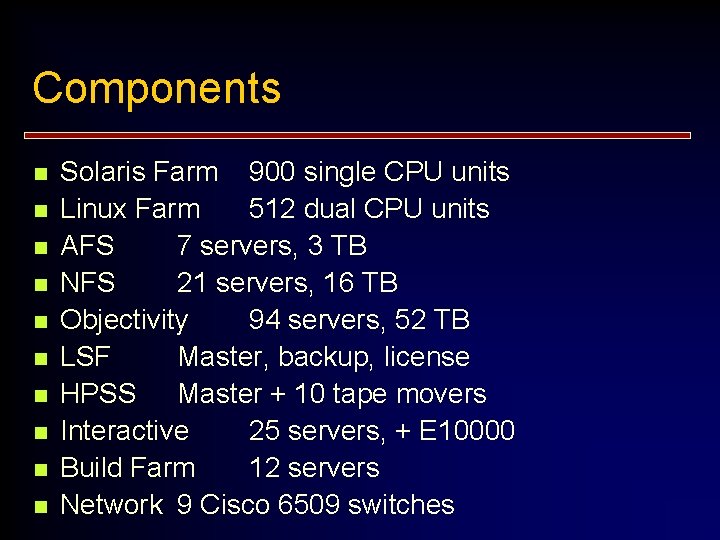

Components

- Solaris Farm: 900 single-CPU units
- Linux Farm: 512 dual-CPU units
- AFS: 7 servers, 3 TB
- NFS: 21 servers, 16 TB
- Objectivity: 94 servers, 52 TB
- LSF: master, backup, license
- HPSS: master + 10 tape movers
- Interactive: 25 servers + E10000
- Build Farm: 12 servers
- Network: 9 Cisco 6509 switches

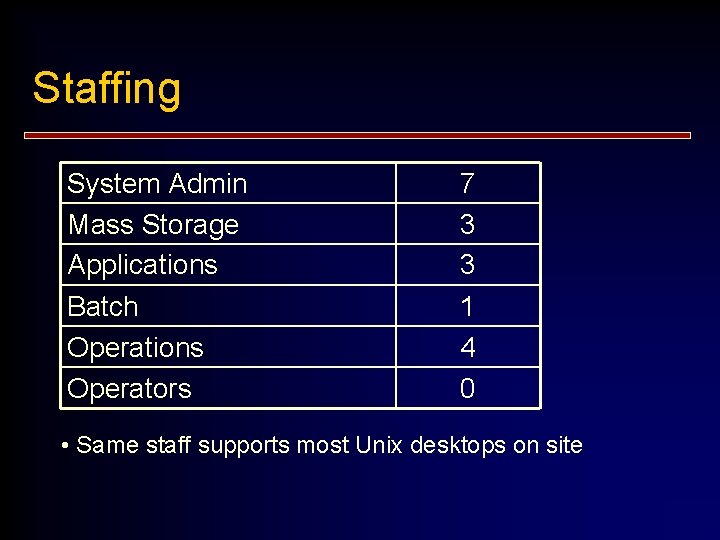

Staffing

- System Admin: 7
- Mass Storage: 3
- Applications: 3
- Batch: 1
- Operations: 4
- Operators: 0

The same staff supports most Unix desktops on site.

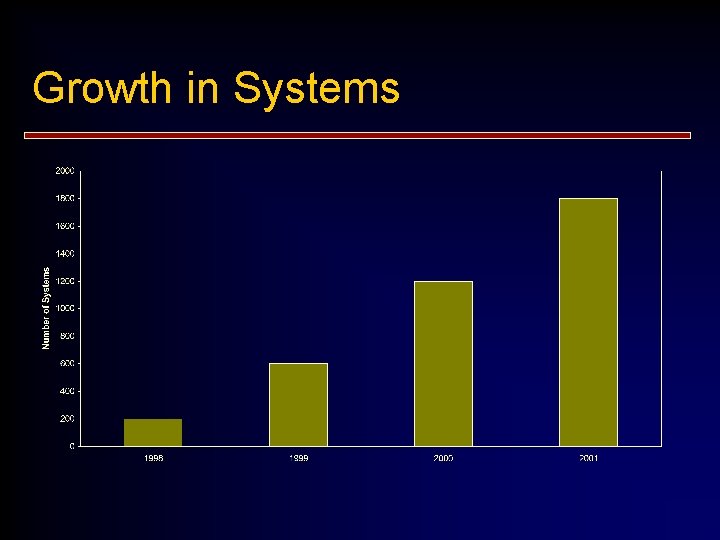

Growth in Systems

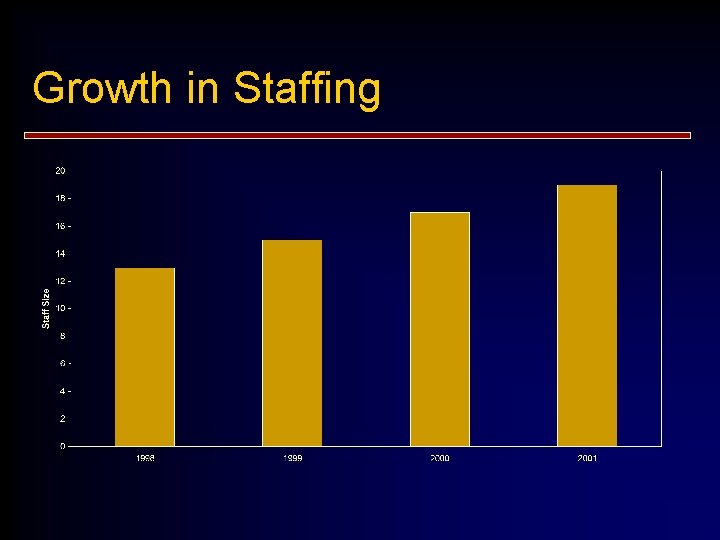

Growth in Staffing

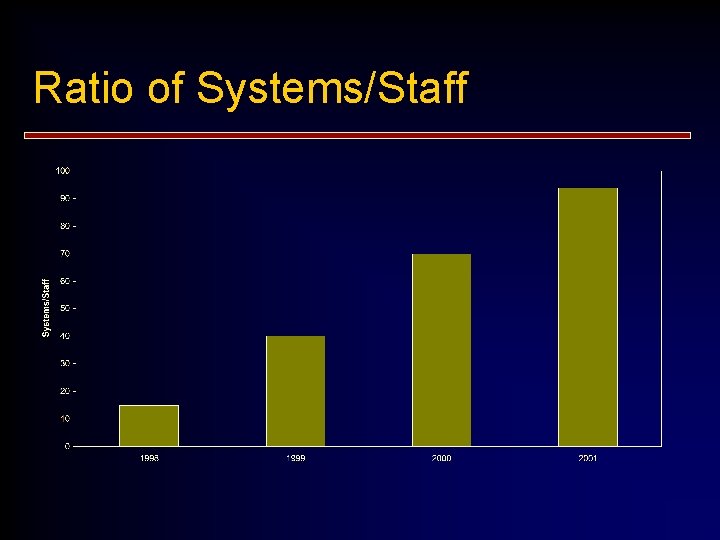

Ratio of Systems/Staff
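As a rough cross-check, the current ratio can be tallied from the counts on the Components and Staffing slides. This is a minimal sketch and not the data behind the chart. It assumes that the LSF entry means three hosts (master, backup, license), that HPSS means one master plus ten tape movers, that the E10000 counts as one system, and that the six staffing labels pair with the six numbers in order. It leaves out the network switches and the Unix desktops that the same staff also supports.

```python
# Machine counts taken from the Components slide.
# Assumptions (not stated in the slides): LSF = 3 hosts,
# HPSS = 1 master + 10 tape movers, Interactive = 25 servers + 1 E10000.
# Network switches are not counted as systems here.
systems = {
    "Solaris farm": 900,
    "Linux farm": 512,
    "AFS": 7,
    "NFS": 21,
    "Objectivity": 94,
    "LSF": 3,
    "HPSS": 11,
    "Interactive": 26,
    "Build farm": 12,
}

# Head counts taken from the Staffing slide (label/number pairing assumed).
staff = {
    "System Admin": 7,
    "Mass Storage": 3,
    "Applications": 3,
    "Batch": 1,
    "Operations": 4,
    "Operators": 0,
}

total_systems = sum(systems.values())
total_staff = sum(staff.values())
ratio = total_systems / total_staff

print(f"{total_systems} systems / {total_staff} staff = {ratio:.0f} systems per person")
```

Under these assumptions the tally comes to 1586 systems for 18 staff, or roughly 88 systems per person.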

Physical

- Installation
  - Racking, power, cooling, seismic, network
  - Burn-in, DOAs
- Maintenance
  - Replacement burn-in
  - Divergence from original models
  - Locating a machine
- Remote power management
- Remote console management

Networking

- Gb to servers
- 100 Mb to farm nodes
- Speed matching (problems) at switches
- Network glitches and storms
- Network monitoring

System Admin

- Network install (256 machines in < 1 hr)
- Patch management
- Power Up/Down
- Nightly maintenance
- System Ranger (monitor)
- Report summarization
- “A Cluster is a large Error Amplifier”
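The "error amplifier" point and the need for report summarization can be illustrated with a small sketch: one misconfiguration repeated across a farm produces hundreds of near-identical messages, so grouping them by normalized text makes the report readable. This is not the tool used at SLAC. The function name `summarize`, the host names, and the message formats are hypothetical.

```python
from collections import defaultdict
import re


def summarize(reports):
    """Group (host, message) pairs by normalized message text.

    Returns a list of (host_count, message) tuples, most widespread first.
    """
    groups = defaultdict(set)
    for host, message in reports:
        # Replace digit runs so that messages differing only in a PID or
        # a counter fall into the same group.
        key = re.sub(r"\d+", "N", message.strip())
        groups[key].add(host)
    # Sort by descending host count, ties broken alphabetically by message.
    return sorted(
        ((len(hosts), key) for key, hosts in groups.items()),
        key=lambda item: (-item[0], item[1]),
    )


if __name__ == "__main__":
    # Hypothetical nightly reports: one broken script repeated on 256 nodes,
    # plus a single unrelated warning.
    reports = [
        (f"node{i:03d}", f"cron[{1000 + i}]: /usr/local/bin/nightly: not found")
        for i in range(256)
    ]
    reports.append(("node007", "disk /tmp 98% full"))
    for count, message in summarize(reports):
        print(f"{count:4d} hosts: {message}")
```

With this grouping, 257 raw lines collapse to two summary lines, and the problem affecting the whole farm sorts to the top.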

User Application Issues

- Workload scheduling
  - Startup effects
  - Distribution vs Hot Spots
- System and Network Limits
  - File descriptors
  - Memory
  - Cache contention
  - NIS, DNS, AMD
- Job Scheduling
- Test Beds
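The file descriptor limit is one of the system limits a job can check for itself before it starts. The sketch below uses Python's standard `resource` module, which is available on Unix only. The helper `fd_headroom` and the figure of 500 files are hypothetical and serve only as illustration.

```python
import resource


def fd_headroom(files_needed):
    """Return descriptors left under the soft limit, or None if unlimited."""
    soft, _hard = resource.getrlimit(resource.RLIMIT_NOFILE)
    if soft == resource.RLIM_INFINITY:
        return None
    return soft - files_needed


soft, hard = resource.getrlimit(resource.RLIMIT_NOFILE)
print(f"open-file limit: soft={soft}, hard={hard}")

# A negative result would mean the job is expected to run out of descriptors.
print("headroom for a job opening 500 files:", fd_headroom(500))
```

A batch wrapper could refuse to start, or raise the soft limit toward the hard limit with `resource.setrlimit`, when the headroom is negative.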
