The Portland Group, Inc.
Brent Leback
brent.leback@pgroup.com
www.pgroup.com
HPC User Forum, Broomfield, CO, September 2009

High Level Languages for Clusters

- Many failures in this area, academically and commercially
  - Lack of supply? Lack of standards? Bad/buggy implementations? Lack of generality?
  - Lack of performance?
- CAF is headed for the Fortran Standard (?) (!)
  - Is it a good idea? Is it mature enough to standardize? Will anyone in attendance use it?
  - Given our experience with HPF, PGI will be conservative on this front

Performance Across Platforms: PGI Unified Binary

- PGI Unified Binary has been available since 2005
  - A single x64 binary including optimized code sequences for multiple target processor cores
  - The -tp switch specifies the target processor type; a number of AMD and Intel processor families are currently supported
  - Especially important to ISVs
  - AVX support is in progress
- PGI Unified Binary now supports accelerated/non-accelerated binaries
  - A single x64 binary recognizes the existence of a GPU and runs PGI accelerated versions there if available
  - The -ta switch specifies the target accelerator; currently only -ta=nvidia is supported
  - Use -ta=nvidia,host to generate code for both cases
- Target processor and target accelerator switches can be used together. Today, Intel 64, AMD64, + NVIDIA is the full gamut.
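The idea of one binary carrying several code paths and choosing among them at run time can be sketched in plain C. This is only a conceptual illustration, not PGI's actual mechanism: the names `saxpy_fn`, `saxpy_host`, `saxpy_accel`, `accelerator_present`, and `select_saxpy` are all hypothetical, and the "accelerated" path simply reuses the host loop so the sketch stays runnable anywhere.

```c
#include <assert.h>
#include <stddef.h>

/* Signature shared by every code path bundled into the binary. */
typedef void (*saxpy_fn)(float a, float *x, const float *y, size_t n);

/* Baseline host version: the fallback that always exists. */
static void saxpy_host(float a, float *x, const float *y, size_t n) {
    assert(n == 0 || (x != NULL && y != NULL));
    for (size_t i = 0; i < n; ++i)
        x[i] = a * x[i] + y[i];
}

/* Stand-in for an accelerated version (hypothetical). A real one would
   launch a GPU kernel; here it reuses the host loop. */
static void saxpy_accel(float a, float *x, const float *y, size_t n) {
    saxpy_host(a, x, y, n);
}

/* Hypothetical probe. A real runtime would query the driver; this
   sketch always reports that no accelerator was found. */
static int accelerator_present(void) {
    return 0;
}

/* Choose a code path based on the hardware found at run time. */
saxpy_fn select_saxpy(void) {
    return accelerator_present() ? saxpy_accel : saxpy_host;
}
```

A caller would obtain the function once with `select_saxpy()` and then invoke it like any other routine. The point of the design is that the caller's source, and the build that produced it, do not change with the hardware.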

The “Full Gamut” Isn’t Very Full

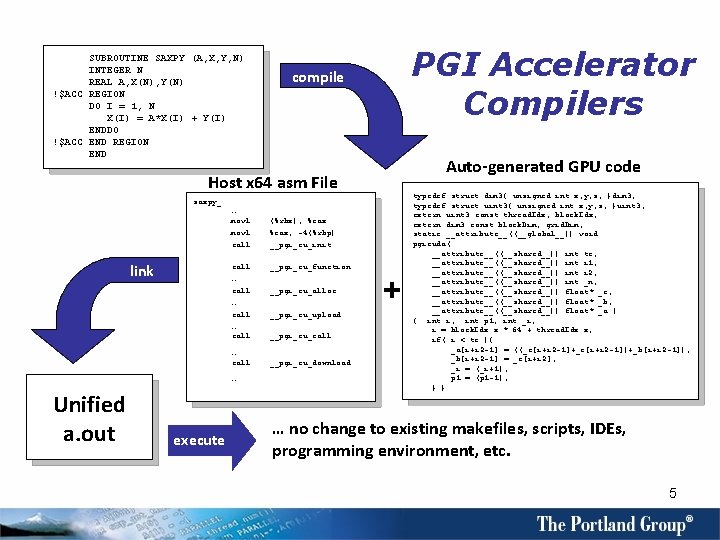

PGI Accelerator Compilers

    SUBROUTINE SAXPY (A, X, Y, N)
    INTEGER N
    REAL A, X(N), Y(N)
    !$ACC REGION
    DO I = 1, N
      X(I) = A*X(I) + Y(I)
    ENDDO
    !$ACC END REGION
    END

compile → Host x64 asm file + auto-generated GPU code → link → Unified a.out → execute

Host x64 asm file:

    saxpy_:
        …
        movl    (%rbx), %eax
        movl    %eax, -4(%rbp)
        call    __pgi_cu_init
        . . .
        call    __pgi_cu_function
        …
        call    __pgi_cu_alloc
        …
        call    __pgi_cu_upload
        …
        call    __pgi_cu_call
        …
        call    __pgi_cu_download
        …

Auto-generated GPU code:

    typedef struct dim3{ unsigned int x, y, z; }dim3;
    typedef struct uint3{ unsigned int x, y, z; }uint3;
    extern uint3 const threadIdx, blockIdx;
    extern dim3 const blockDim, gridDim;
    static __attribute__((__global__)) void pgicuda(
        __attribute__((__shared__)) int tc,
        __attribute__((__shared__)) int i1,
        __attribute__((__shared__)) int i2,
        __attribute__((__shared__)) int _n,
        __attribute__((__shared__)) float* _c,
        __attribute__((__shared__)) float* _b,
        __attribute__((__shared__)) float* _a )
    {
        int i; int p1; int _i;
        i = blockIdx.x * 64 + threadIdx.x;
        if( i < tc ){
            _a[i+i2-1] = ((_c[i+i2-1]+_c[i+i2-1])+_b[i+i2-1]);
            _b[i+i2-1] = _c[i+i2];
            _i = (_i+1);
            p1 = (p1-1);
        }
    }

… no change to existing makefiles, scripts, IDEs, programming environment, etc.
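The generated kernel above derives each thread's loop index from its block number times 64 plus its thread number, and guards the body with a trip-count test. That mapping can be emulated on the host in plain C, as a sketch under assumptions: the names `BLOCK_SIZE`, `blocks_for`, and `saxpy_emulated` are hypothetical, the ceiling-division grid size is an assumption (the slide does not show the launch configuration), and the loop body is the SAXPY statement from the Fortran source, not the body of the kernel listing.

```c
#include <assert.h>

enum { BLOCK_SIZE = 64 };  /* matches the constant 64 in the generated kernel */

/* Number of blocks needed to cover tc iterations (ceiling division).
   Assumed launch policy; the slide does not show the real one. */
int blocks_for(int tc) {
    assert(tc >= 0);
    return (tc + BLOCK_SIZE - 1) / BLOCK_SIZE;
}

/* Host-side emulation of the launch: one inner iteration per GPU thread. */
void saxpy_emulated(float a, float *x, const float *y, int n) {
    int grid = blocks_for(n);
    for (int block = 0; block < grid; ++block) {
        for (int thread = 0; thread < BLOCK_SIZE; ++thread) {
            /* Same index arithmetic as the kernel's block/thread formula. */
            int i = block * BLOCK_SIZE + thread;
            /* Guard: threads past the trip count do nothing. */
            if (i < n)
                x[i] = a * x[i] + y[i];
        }
    }
}
```

With `n = 100` the emulation uses two blocks of 64 threads, and the guard keeps the last 28 threads of the second block from touching memory past the end of the arrays.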

Supporting Heterogeneous Cores: PGI Accelerator Model

- Minimal changes to the language – directives/pragmas, in the same vein as vector or OpenMP parallel directives. As simple as:

      !$ACC REGION
      <your Fortran kernel here>
      !$ACC END REGION

- Minimal library calls – usually none
- Standard x64 toolchain – no changes to makefiles, linkers, build process, standard libraries, other tools
- Not a “platform” – binaries will execute on any compatible x64+GPU hardware system
- Performance feedback – learn from and leverage the success of vectorizing compilers in the 1970s and 1980s
- Incremental program migration – put migration decisions in the hands of developers
- PGI Unified Binary Technology – ensures continued portability to non GPU-enabled targets
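Since the model covers C pragmas as well as Fortran directives, the same SAXPY loop might look as follows in C. This is a minimal sketch, assuming the C spelling of the directive is `#pragma acc region` applied to a structured block; check the specification at www.pgroup.com/accelerate before relying on it. The `restrict` qualifiers are an assumption added so the compiler can see that the arrays do not overlap.

```c
#include <assert.h>

/* SAXPY as on the slide: x = a*x + y. */
void saxpy(float a, float *restrict x, const float *restrict y, int n) {
    assert(n >= 0);
    /* Assumed C form of !$ACC REGION ... !$ACC END REGION. */
#pragma acc region
    {
        for (int i = 0; i < n; ++i)
            x[i] = a * x[i] + y[i];
    }
}
```

A compiler that does not recognize the pragma ignores it (possibly with a warning) and runs the loop on the host, which is what makes incremental migration possible: the directive is an annotation on otherwise ordinary code.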

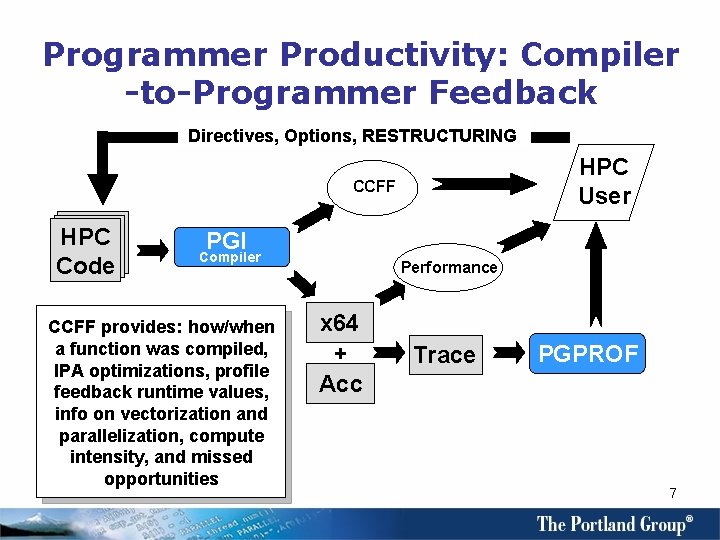

Programmer Productivity: Compiler-to-Programmer Feedback

CCFF provides: how/when a function was compiled, IPA optimizations, profile feedback runtime values, info on vectorization and parallelization, compute intensity, and missed opportunities

[Diagram labels: HPC User; Directives, Options, RESTRUCTURING; HPC Code; PGI Compiler; CCFF; x64 + Acc; Trace; PGPROF; Performance]

Supporting Third-Parties

- PGI 9.0 supports OpenMP 3.0 for Fortran, C/C++.
  - OpenMP 3.0 Tasks supported in all languages
  - OpenMP runtime overhead as measured by the EPCC benchmark is lower than our competition
- PGI is currently working with the OpenMP committee to investigate the support of an accelerator programming model as part of OpenMP and/or other standards bodies.
  - Michael Wolfe is our OpenMP representative
- IMSL and NAG are already supported with PGI compilers; we're enabling them to migrate incrementally to heterogeneous manycore.
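For readers unfamiliar with OpenMP 3.0 tasks, the usual textbook illustration is a recursive Fibonacci in C. It is a generic sketch of the `task` and `taskwait` constructs, not PGI-specific code, and the function names `fib` and `fib_parallel` are chosen here for illustration.

```c
#include <assert.h>

/* Recursive Fibonacci; each recursive call becomes an OpenMP task. */
static long fib(int n) {
    long a, b;
    assert(n >= 0);
    if (n < 2)
        return n;
    /* a and b must be shared so the child tasks can write the results. */
#pragma omp task shared(a)
    a = fib(n - 1);
#pragma omp task shared(b)
    b = fib(n - 2);
    /* Wait for both child tasks before combining their results. */
#pragma omp taskwait
    return a + b;
}

/* One thread seeds the task tree; the rest of the team executes tasks. */
long fib_parallel(int n) {
    long result = 0;
#pragma omp parallel
    {
#pragma omp single
        result = fib(n);
    }
    return result;
}
```

Built without OpenMP enabled, the pragmas are ignored and the code runs serially with the same result. Enabling OpenMP takes a compiler switch, `-mp` with the PGI compilers or `-fopenmp` with GCC. A task per call is far too fine-grained for real use, so production code would add a cutoff below which it recurses serially.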

Availability and Additional Information

- PGI Accelerator Programming Model – is supported for x64+NVIDIA Linux targets in the PGI 9.0 Fortran and C compilers, available now
- PGI CUDA Fortran – supporting explicit programming of x64+NVIDIA targets will be available in a production release of the PGI Fortran 95/03 compiler currently scheduled for release in November, 2009
- Other GPU and Accelerator Targets – are being studied by PGI, and may be supported in the future as the necessary low-level software infrastructure (e.g. OpenCL) becomes more widely available
- Further Information – see www.pgroup.com/accelerate for a detailed specification of the PGI Accelerator model, an FAQ, and related articles and white papers
- CCFF – The Common Compiler Feedback Format, is described at www.pgroup.com/resources/ccff.htm
