The Page Rank Citation Ranking Bringing Order to

The Page. Rank Citation Ranking “Bringing Order to the Web”

Google Design Goals Scale up to work with much larger collections – In 1997, older search engines were breaking down due to manipulations by advertisers, etc. Exhibit high precision among top-ranked documents – Many documents are kind-of relevant – For the Web, relevant should be just the very best documents Provide data sets for academic research on search engines

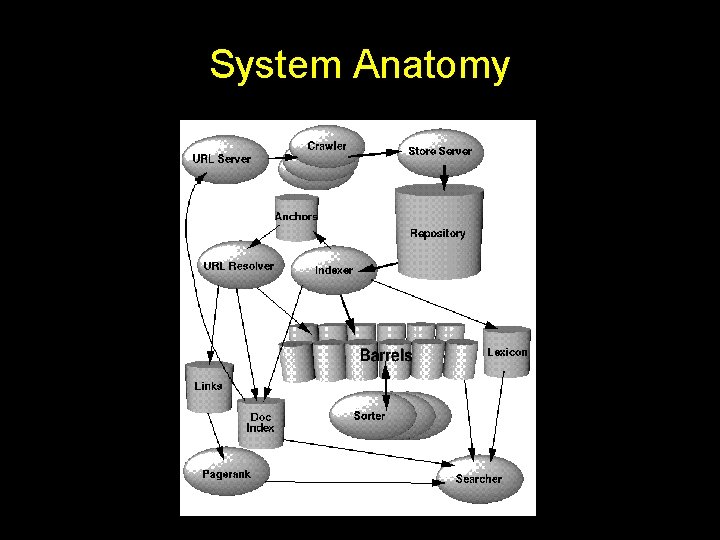

System Anatomy

Basic Concept Bibliometrics – Use citation patterns like for academic research – But Web documents are not reviewed – Web documents do not have the same cost of production/publishing – Programs can easily generate pages pointing to another page – Very valuable to attempt to game the system

Page. Rank Design Page. Rank – Rank importance of pages – Each incoming link raises importance – Importance increment related to rank of source page – Importance increment normalized for number of links on source page A high ranking results from – Lots of links from low ranked pages – Fewer links from highly ranked pages

Ranking Process Initialize Page. Rank values based on heuristics Initial values do not change results, only time to converge Repeat until the rankings converge: For each incoming link L Page. Rank = Page. Rank + Rank ( Source (L) ) #Links ( Source (L) ) Model Web user with some chance of jumping to random location Page. Rank = c * Page. Rank

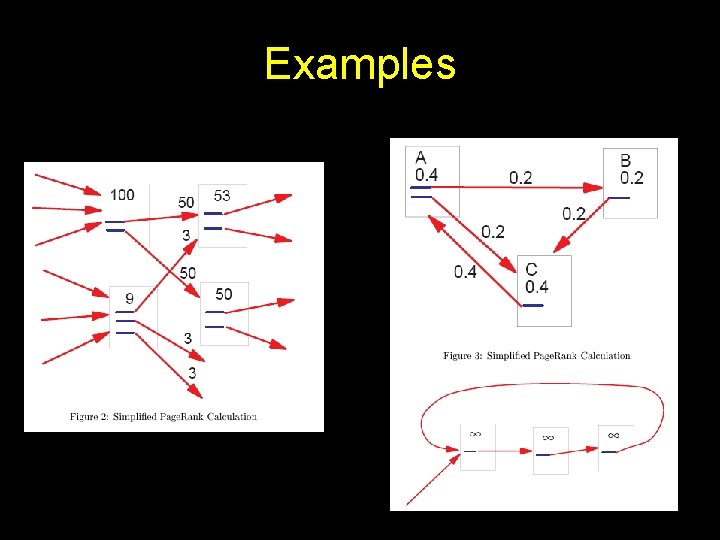

Examples

Problem with Subgraphs The Web is composed of many independent graphs – Links among themselves but no links in/out of the set – This results in ranking between independent graphs to be difficult Solution – Introduce a decay factor – This also increases the rate of convergence

Problem with Dangling Links Many links go to pages with no outgoing links – Influence the distribution of “rank” • Don’t know how to push their rank back into system during iteration – They are to pages that • Have no links • Have not been downloaded • Are not in a form that the system can identify – There are lots of them Solution – Remove “dead-ends” during convergence – Compute ranks of these after convergence

Implementation Web issues – Infinitely large Web sites, pages, and URLs – Much broken HTML – Web is constantly changing Page. Rank Implementation – Each URL assigned integer ID – Dangling links iteratively pruned from graph • Few iterations get rid of most – Generate guess at ranking • Does not affect outcome (much), just how fast it converges – Iterate rank computation until convergence – Add dangling links back in – Iterate rank computation again for the same number of times that it took for dangling links to be removed

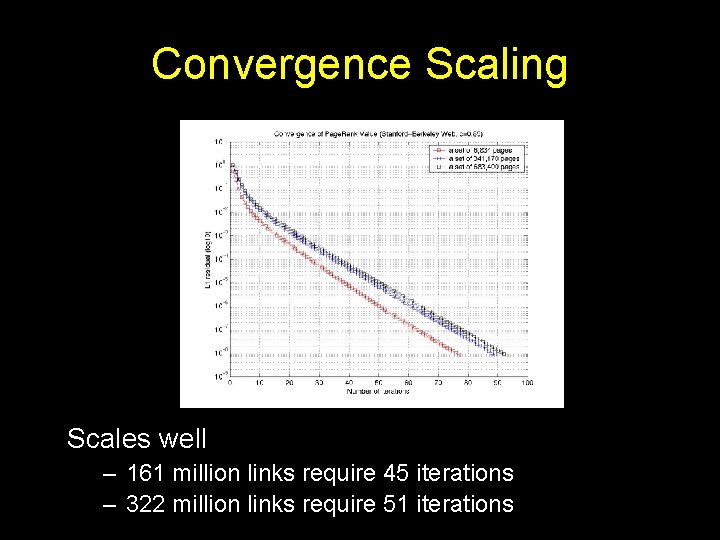

Convergence Scaling Scales well – 161 million links require 45 iterations – 322 million links require 51 iterations

Searching with Page. Rank Google search – Uses a variety of factors • • Standard IR measures Proximity Anchor Text Page. Rank – Page. Rank most valuable for underspecified queries (e. g. few terms, lots of results) – “Spam pages” given no/low Page. Rank to reduce their effect on resulting weights.

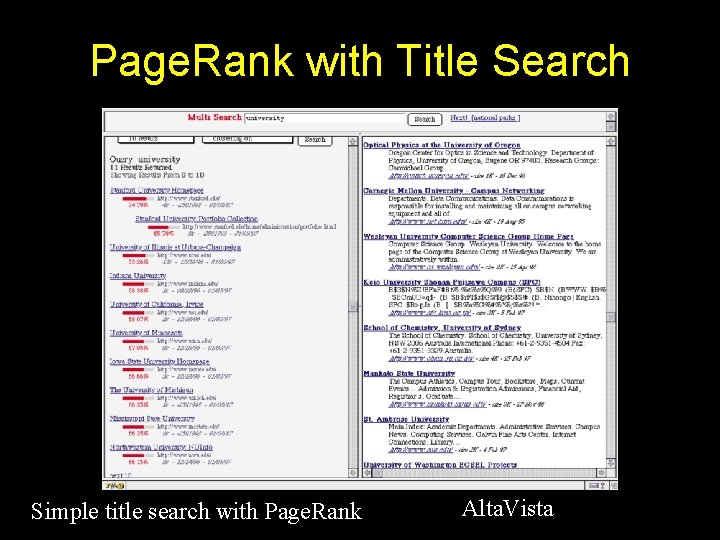

Page. Rank with Title Search Simple title search with Page. Rank Alta. Vista

Page. Rank Applications Estimating Web. Traffic – Because it models a random Web surfer Backlink Predictor – Like to estimate number of backlinks to identify important pages • Use CPU/bandwidth to increase precision – Use incomplete data to rank pages to generate order of importance for crawling User Navigation – Browser can annotate link based on Page. Rank to provide user clue as to destination’s value

- Slides: 14