
The OptIPuter Project: Eliminating Bandwidth as a Barrier to Collaboration and Analysis
DARPA Microsystems Technology Office, Arlington, VA, December 13, 2002
Dr. Larry Smarr
Director, California Institute for Telecommunications and Information Technologies
Harry E. Gruber Professor, Dept. of Computer Science and Engineering, Jacobs School of Engineering, UCSD

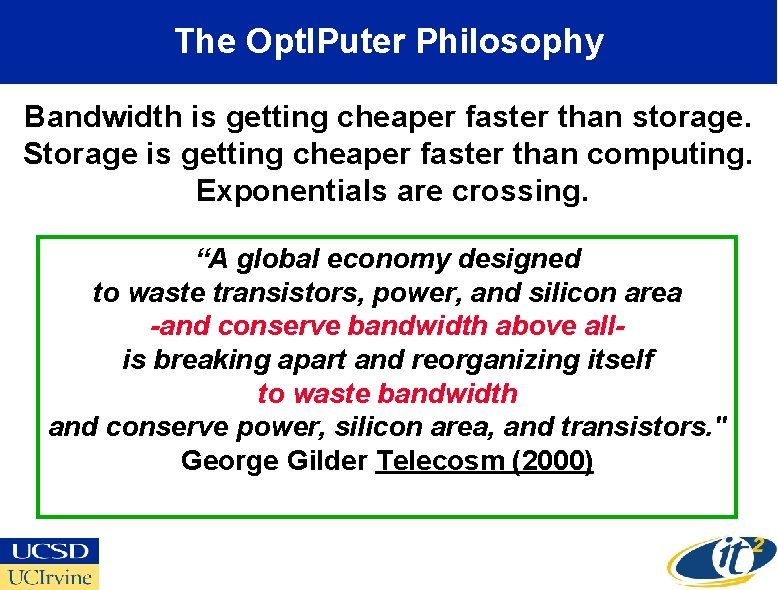

Abstract
The OptIPuter is a radical distributed visualization, teleimmersion, data mining, and computing architecture. The National Science Foundation recently awarded a six-campus research consortium a five-year Large Information Technology Research grant to construct working prototypes of the OptIPuter on campus, regional, national, and international scales. The OptIPuter project is driven by application leadership from two scientific communities, the U.S. National Science Foundation's EarthScope and the National Institutes of Health's Biomedical Informatics Research Network (BIRN), both of which are beginning to produce a flood of large 3D data objects (e.g., 3D brain images or SAR terrain datasets) that are stored in distributed federated data repositories. Essentially, the OptIPuter is a "virtual metacomputer" in which the individual "processors" are widely distributed Linux PC clusters; the "backplane" is provided by Internet Protocol (IP) delivered over multiple dedicated 1-10 Gbps optical wavelengths; and the "mass storage systems" are large distributed scientific data repositories, fed by scientific instruments that act as OptIPuter peripheral devices operating in near real time. Collaboration, visualization, and teleimmersion tools are provided on tiled mono or stereo super-high-definition screens directly connected to the OptIPuter to enable distributed analysis and decision making. The OptIPuter project aims to re-optimize the entire Grid stack of software abstractions, learning how, as George Gilder suggests, to "waste" bandwidth and storage in order to conserve increasingly "scarce" high-end computing and people time in this new world of inverted values.

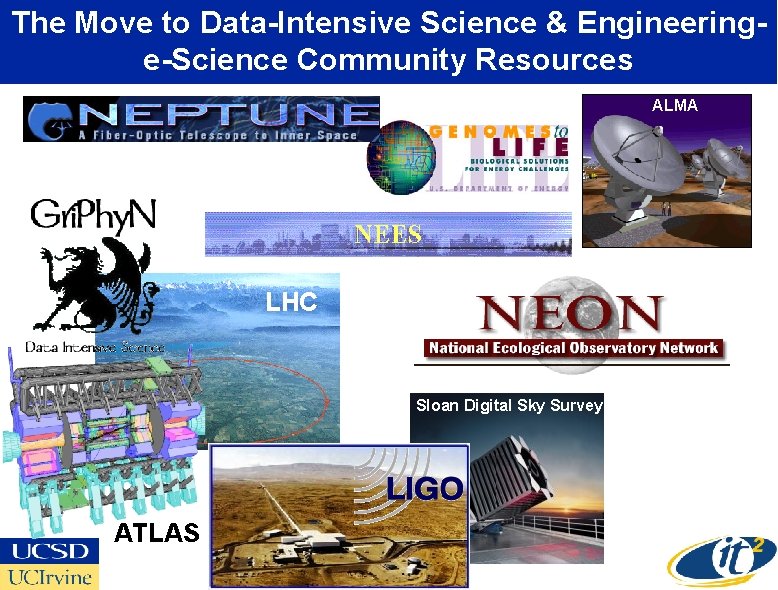

The Move to Data-Intensive Science & Engineering
e-Science Community Resources: ALMA, LHC, Sloan Digital Sky Survey, ATLAS

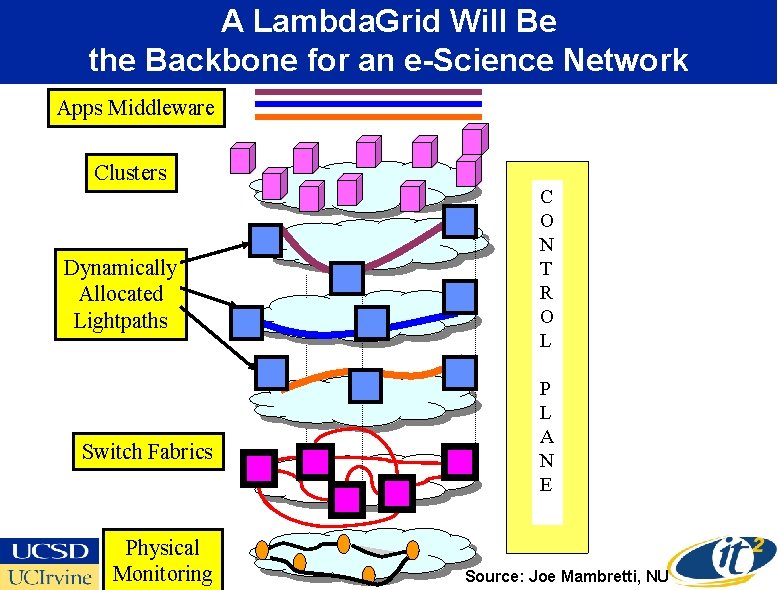

A LambdaGrid Will Be the Backbone for an e-Science Network
(Diagram: layered stack of Apps, Middleware, Clusters, Dynamically Allocated Lightpaths, Switch Fabrics, and Physical, together with Monitoring and a Control Plane)
Source: Joe Mambretti, NU

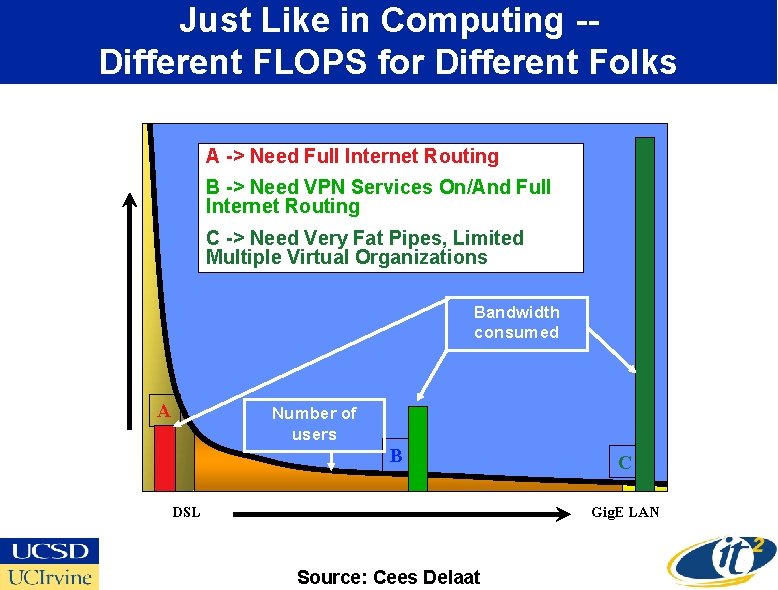

Just Like in Computing: Different FLOPS for Different Folks
• A: Need Full Internet Routing
• B: Need VPN Services On/And Full Internet Routing
• C: Need Very Fat Pipes, Limited Multiple Virtual Organizations
(Figure: number of users vs. bandwidth consumed for classes A, B, and C, ranging from DSL to GigE LAN)
Source: Cees de Laat

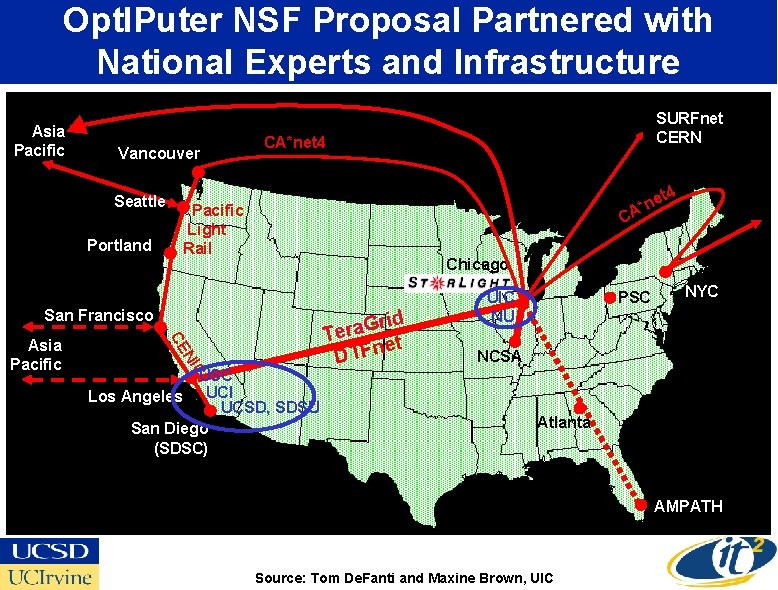

OptIPuter NSF Proposal Partnered with National Experts and Infrastructure
(Map of partner sites and networks: Vancouver, Seattle, Portland, San Francisco, Los Angeles (USC), UCI, San Diego (UCSD, SDSU, SDSC), Chicago (UIC, NU), NCSA, PSC, NYC, Atlanta; CA*net 4, Pacific Light Rail, TeraGrid DTFnet, SURFnet, CERN, AMPATH, Asia Pacific)
Source: Tom DeFanti and Maxine Brown, UIC

The OptIPuter is an Experimental Network Research Project
• Driven by Large Neuroscience and Earth Science Data
• Multiple Lambdas Linking Clusters and Storage
  – LambdaGrid Software Stack
  – Integration with PC Clusters
  – Interactive Collaborative Volume Visualization
  – Lambda Peer-to-Peer Storage With Optimized Storewidth
  – Enhance Security Mechanisms
  – Rethink TCP/IP Protocols
• NSF Large Information Technology Research Proposal
  – UCSD and UIC Lead Campuses (Larry Smarr, PI)
  – USC, UCI, SDSU, NW Partnering Campuses
  – Industrial Partners: IBM, Telcordia/SAIC, Chiaro Networks
  – $13.5 Million Over Five Years

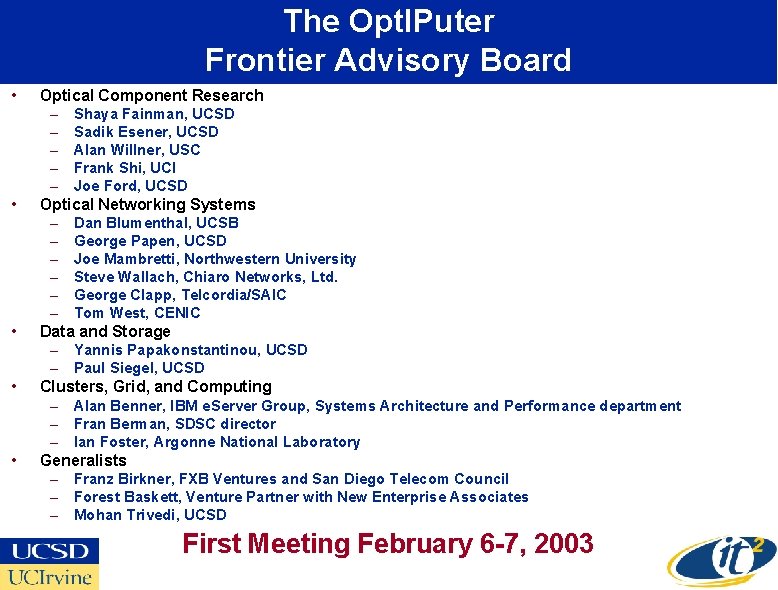

The OptIPuter Frontier Advisory Board
• Optical Component Research
  – Shaya Fainman, UCSD
  – Sadik Esener, UCSD
  – Alan Willner, USC
  – Frank Shi, UCI
  – Joe Ford, UCSD
• Optical Networking Systems
  – Dan Blumenthal, UCSB
  – George Papen, UCSD
  – Joe Mambretti, Northwestern University
  – Steve Wallach, Chiaro Networks, Ltd.
  – George Clapp, Telcordia/SAIC
  – Tom West, CENIC
• Data and Storage
  – Yannis Papakonstantinou, UCSD
  – Paul Siegel, UCSD
• Clusters, Grid, and Computing
  – Alan Benner, IBM eServer Group, Systems Architecture and Performance Department
  – Fran Berman, SDSC Director
  – Ian Foster, Argonne National Laboratory
• Generalists
  – Franz Birkner, FXB Ventures and San Diego Telecom Council
  – Forest Baskett, Venture Partner with New Enterprise Associates
  – Mohan Trivedi, UCSD
First Meeting: February 6-7, 2003

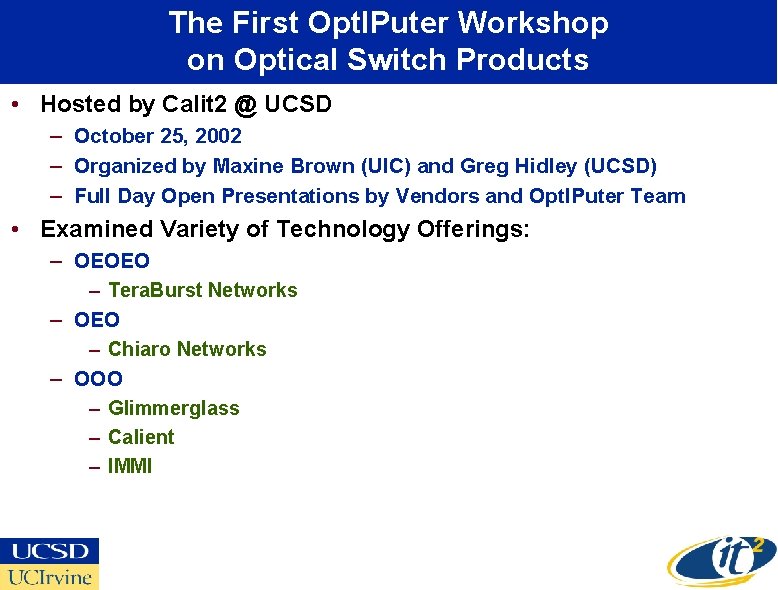

The First OptIPuter Workshop on Optical Switch Products
• Hosted by Calit2 @ UCSD
  – October 25, 2002
  – Organized by Maxine Brown (UIC) and Greg Hidley (UCSD)
  – Full Day of Open Presentations by Vendors and the OptIPuter Team
• Examined a Variety of Technology Offerings:
  – OEOEO: TeraBurst Networks
  – OEO: Chiaro Networks
  – OOO: Glimmerglass, Calient, IMMI

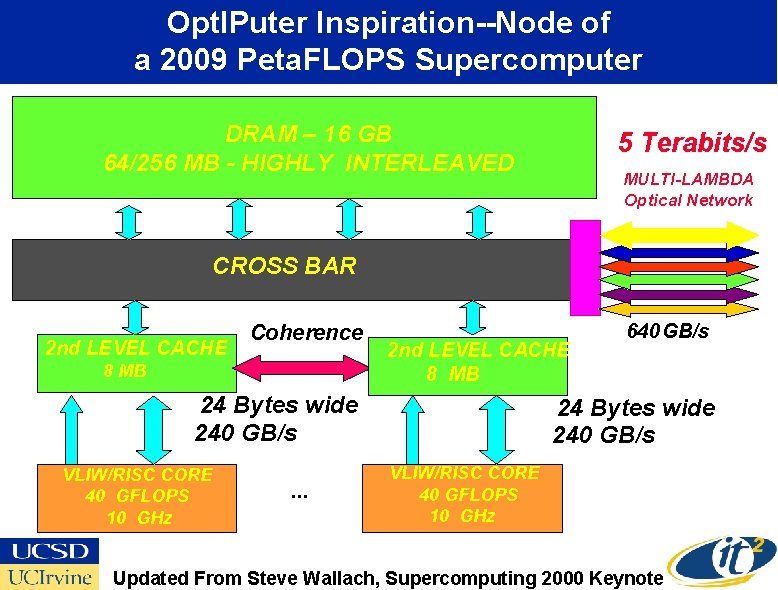

OptIPuter Inspiration: Node of a 2009 PetaFLOPS Supercomputer
(Diagram: multiple VLIW/RISC cores, each 10 GHz and 40 GFLOPS, connected over 24-byte-wide, 240 GB/s paths to 8 MB coherent 2nd-level caches; a 640 GB/s crossbar to highly interleaved 16 GB DRAM; and a 5 Terabits/s multi-lambda optical network)
Updated From Steve Wallach, Supercomputing 2000 Keynote
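
As a quick consistency check on the node figures, the sketch below multiplies the slide's numbers out. It assumes (the slide does not say so) that each 24-byte path moves one word per 10 GHz clock; under that assumption the 240 GB/s figure follows directly, and 40 GFLOPS at 10 GHz means 4 floating-point operations per cycle.

```python
"""Back-of-envelope check of per-core figures from the 2009 PetaFLOPS node slide."""

CLOCK_HZ = 10e9          # 10 GHz VLIW/RISC core (from the slide)
CORE_FLOPS = 40e9        # 40 GFLOPS per core (from the slide)
PATH_WIDTH_BYTES = 24    # 24-byte-wide cache path (from the slide)


def flops_per_cycle(flops: float, clock_hz: float) -> float:
    """Floating-point operations a core must complete every clock cycle."""
    return flops / clock_hz


def path_bandwidth_bytes(width_bytes: int, clock_hz: float) -> float:
    """Bandwidth of a path that transfers width_bytes once per cycle (an assumption)."""
    return width_bytes * clock_hz


if __name__ == "__main__":
    fpc = flops_per_cycle(CORE_FLOPS, CLOCK_HZ)
    bw = path_bandwidth_bytes(PATH_WIDTH_BYTES, CLOCK_HZ)
    print(f"FLOPs per cycle: {fpc:.0f}")                   # 4
    print(f"Cache path bandwidth: {bw / 1e9:.0f} GB/s")   # 240
    print(f"Bytes per FLOP: {bw / CORE_FLOPS:.0f}")        # 6
```

Under the same assumption, each core can pull about 6 bytes from its cache per floating-point operation.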

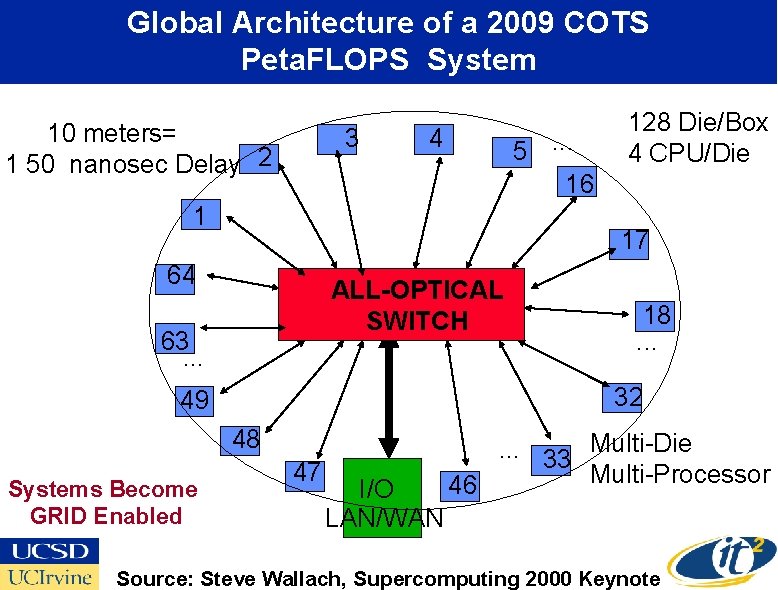

Global Architecture of a 2009 COTS PetaFLOPS System
(Diagram: 64 multi-die multiprocessor boxes, numbered 1 to 64, arranged around an ALL-OPTICAL SWITCH; 128 die per box and 4 CPUs per die; 10 meters = 50 nanosec delay; I/O to LAN/WAN)
Systems Become GRID Enabled
Source: Steve Wallach, Supercomputing 2000 Keynote
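
The slide's "10 meters = 50 nanosec" figure is consistent with light travelling through glass at roughly two-thirds of its vacuum speed. The sketch below reproduces that number and the peak rate implied by the box counts. The refractive index of 1.5 and the reuse of the node slide's 40 GFLOPS per CPU are assumptions, not figures stated on this slide.

```python
"""Rough figures for the 2009 COTS PetaFLOPS system slide (assumptions noted)."""

C_VACUUM_M_PER_S = 299_792_458.0
FIBER_INDEX = 1.5        # ASSUMED refractive index of the fiber core

BOXES = 64               # from the slide
DIE_PER_BOX = 128        # from the slide
CPUS_PER_DIE = 4         # from the slide
GFLOPS_PER_CPU = 40.0    # ASSUMED, borrowed from the node slide


def fiber_delay_ns(length_m: float, index: float = FIBER_INDEX) -> float:
    """One-way propagation delay through length_m of fiber, in nanoseconds."""
    return length_m * index / C_VACUUM_M_PER_S * 1e9


def total_cpus() -> int:
    """CPU count implied by the box, die, and per-die figures."""
    return BOXES * DIE_PER_BOX * CPUS_PER_DIE


def peak_pflops() -> float:
    """Aggregate peak if every CPU reaches GFLOPS_PER_CPU."""
    return total_cpus() * GFLOPS_PER_CPU / 1e6


if __name__ == "__main__":
    print(f"10 m of fiber: {fiber_delay_ns(10):.0f} ns")   # 50 ns
    print(f"CPUs: {total_cpus():,}")                       # 32,768
    print(f"Peak: {peak_pflops():.2f} PFLOPS")             # 1.31 PFLOPS
```

Under those assumptions the system has 32,768 CPUs and a peak of about 1.3 PFLOPS, consistent with the PetaFLOPS label.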

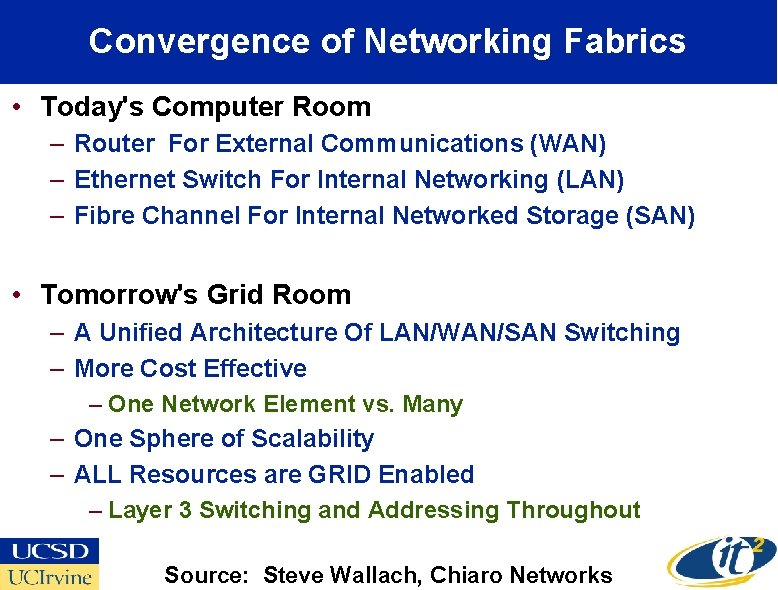

Convergence of Networking Fabrics
• Today's Computer Room
  – Router For External Communications (WAN)
  – Ethernet Switch For Internal Networking (LAN)
  – Fibre Channel For Internal Networked Storage (SAN)
• Tomorrow's Grid Room
  – A Unified Architecture Of LAN/WAN/SAN Switching
  – More Cost Effective
  – One Network Element vs. Many
  – One Sphere of Scalability
  – ALL Resources are GRID Enabled
  – Layer 3 Switching and Addressing Throughout
Source: Steve Wallach, Chiaro Networks

The OptIPuter Philosophy
Bandwidth is getting cheaper faster than storage. Storage is getting cheaper faster than computing. Exponentials are crossing.
“A global economy designed to waste transistors, power, and silicon area, and conserve bandwidth above all, is breaking apart and reorganizing itself to waste bandwidth and conserve power, silicon area, and transistors.”
George Gilder, Telecosm (2000)
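
The claim that "exponentials are crossing" can be made concrete with a toy model. If each resource's unit price halves at a fixed interval (T_A months for resource A, T_B for resource B), the ratio of the two prices is itself an exponential, r(t) = r0 * 2^(-t (1/T_A - 1/T_B)). A resource that starts out r0 times more expensive but falls faster becomes the cheaper one at t* = log2(r0) / (1/T_A - 1/T_B). The starting ratio and halving times below are hypothetical placeholders, not measured values.

```python
"""Toy model of two exponentially falling costs (hypothetical parameters)."""
import math


def relative_cost(t_months: float, ratio0: float, halving_a: float, halving_b: float) -> float:
    """Unit cost of resource A divided by unit cost of resource B after t_months,
    if A's price halves every halving_a months and B's every halving_b months."""
    return ratio0 * 2 ** (-t_months * (1 / halving_a - 1 / halving_b))


def crossover_months(ratio0: float, halving_a: float, halving_b: float) -> float:
    """Months until A's relative cost falls to 1 (A becomes the cheaper resource).
    Requires ratio0 >= 1 and A's price to fall faster than B's."""
    if halving_a >= halving_b:
        raise ValueError("resource A must get cheaper faster than resource B")
    if ratio0 < 1:
        raise ValueError("resource A is already cheaper than resource B")
    return math.log2(ratio0) / (1 / halving_a - 1 / halving_b)


if __name__ == "__main__":
    # HYPOTHETICAL inputs: A starts 8x as expensive and halves every 9 months;
    # B halves every 18 months.
    t = crossover_months(8.0, 9.0, 18.0)
    print(f"Crossover after about {t:.0f} months")                         # 54
    print(f"Relative cost then: {relative_cost(t, 8.0, 9.0, 18.0):.2f}")   # 1.00
```

Past the crossover, designs that "waste" the faster-falling resource to save the slower one become the economical choice, which is the inversion Gilder describes.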

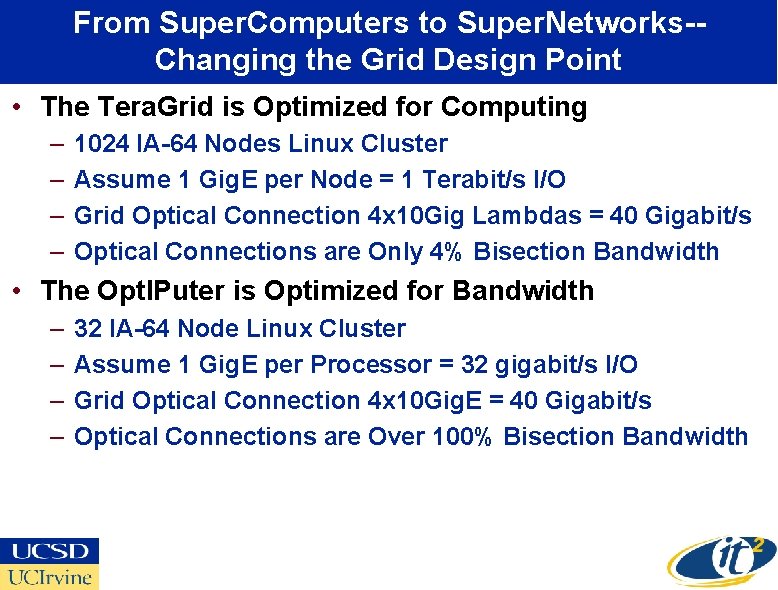

From SuperComputers to SuperNetworks: Changing the Grid Design Point
• The TeraGrid is Optimized for Computing
  – 1024 IA-64 Node Linux Cluster
  – Assume 1 GigE per Node = 1 Terabit/s I/O
  – Grid Optical Connection 4 x 10 Gig Lambdas = 40 Gigabit/s
  – Optical Connections are Only 4% Bisection Bandwidth
• The OptIPuter is Optimized for Bandwidth
  – 32 IA-64 Node Linux Cluster
  – Assume 1 GigE per Processor = 32 Gigabit/s I/O
  – Grid Optical Connection 4 x 10 GigE = 40 Gigabit/s
  – Optical Connections are Over 100% Bisection Bandwidth
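
The percentages follow from dividing the external optical bandwidth by the aggregate node I/O. The sketch treats each cluster node as having one GigE interface (the slide says "per Node" for the TeraGrid and "per Processor" for the OptIPuter) and counts 1024 Gbps as roughly 1 Terabit/s.

```python
"""Ratio of external optical bandwidth to aggregate node I/O, per the slide's assumptions."""


def optical_to_node_io(nodes: int, gbps_per_node: float,
                       lambdas: int, gbps_per_lambda: float) -> float:
    """External optical bandwidth divided by total node I/O bandwidth."""
    return (lambdas * gbps_per_lambda) / (nodes * gbps_per_node)


if __name__ == "__main__":
    teragrid = optical_to_node_io(nodes=1024, gbps_per_node=1.0, lambdas=4, gbps_per_lambda=10.0)
    optiputer = optical_to_node_io(nodes=32, gbps_per_node=1.0, lambdas=4, gbps_per_lambda=10.0)
    print(f"TeraGrid:  {teragrid:.1%}")   # 3.9%
    print(f"OptIPuter: {optiputer:.0%}")  # 125%
```

The TeraGrid ratio, 40/1024, rounds to the slide's 4%; the OptIPuter ratio, 40/32, is the 125% behind "Over 100%".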

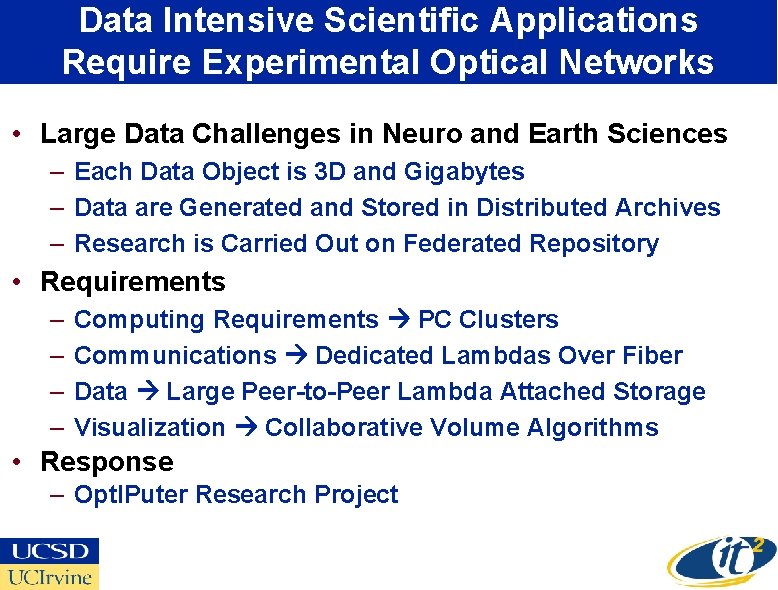

Data Intensive Scientific Applications Require Experimental Optical Networks
• Large Data Challenges in Neuro and Earth Sciences
  – Each Data Object is 3D and Gigabytes
  – Data are Generated and Stored in Distributed Archives
  – Research is Carried Out on Federated Repository
• Requirements
  – Computing: PC Clusters
  – Communications: Dedicated Lambdas Over Fiber
  – Data: Large Peer-to-Peer Lambda Attached Storage
  – Visualization: Collaborative Volume Algorithms
• Response
  – OptIPuter Research Project
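
To see why gigabyte-scale 3D data objects call for dedicated lambdas, the sketch computes idealized transfer times for one object. The 10 GB object size and the assumption of full line rate are hypothetical; real protocols add overhead, which the `efficiency` parameter can model.

```python
"""Idealized time to move one large 3D data object over a dedicated lambda."""


def transfer_seconds(size_gb: float, link_gbps: float, efficiency: float = 1.0) -> float:
    """Seconds to send size_gb decimal gigabytes at link_gbps, scaled by an
    assumed protocol efficiency (1.0 = full line rate, no overhead)."""
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in (0, 1]")
    return size_gb * 8e9 / (link_gbps * 1e9 * efficiency)


if __name__ == "__main__":
    object_gb = 10.0  # HYPOTHETICAL object size; the slide says only "Gigabytes"
    for gbps in (1.0, 10.0, 40.0):
        print(f"{gbps:>4.0f} Gbps: {transfer_seconds(object_gb, gbps):5.1f} s")
```

Moving from a single GigE path to four 10 Gbps lambdas shrinks the idealized time for such an object from 80 s to 2 s.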

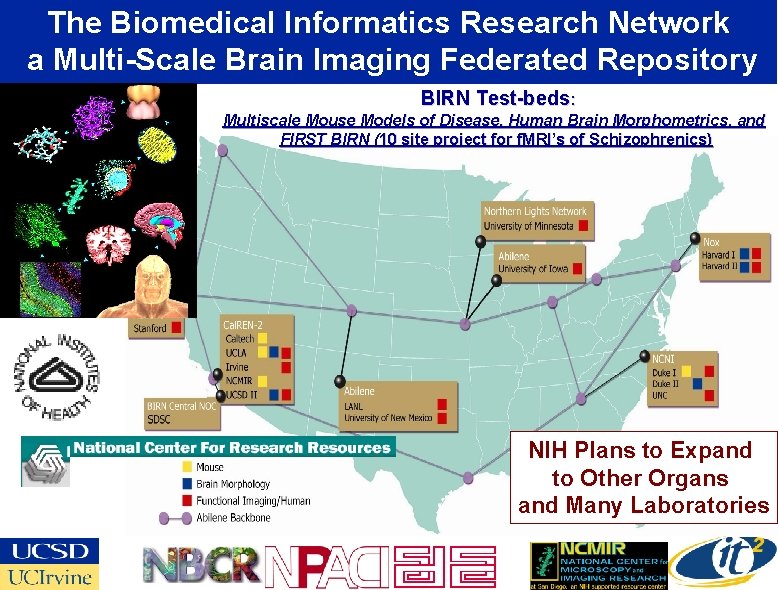

The Biomedical Informatics Research Network: A Multi-Scale Brain Imaging Federated Repository
• BIRN Test-beds: Multiscale Mouse Models of Disease, Human Brain Morphometrics, and FIRST BIRN (10-site project for fMRIs of Schizophrenics)
• NIH Plans to Expand to Other Organs and Many Laboratories

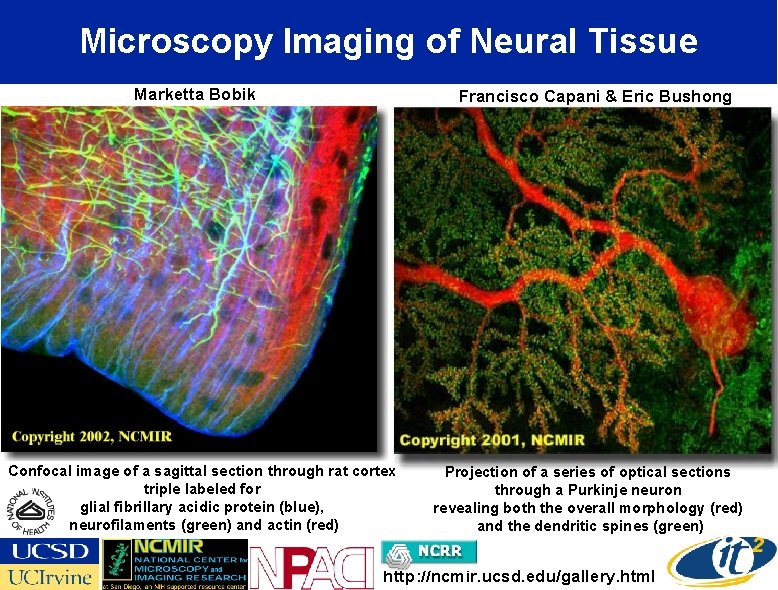

Microscopy Imaging of Neural Tissue
Marketta Bobik, Francisco Capani & Eric Bushong
• Confocal image of a sagittal section through rat cortex triple labeled for glial fibrillary acidic protein (blue), neurofilaments (green), and actin (red)
• Projection of a series of optical sections through a Purkinje neuron revealing both the overall morphology (red) and the dendritic spines (green)
http://ncmir.ucsd.edu/gallery.html

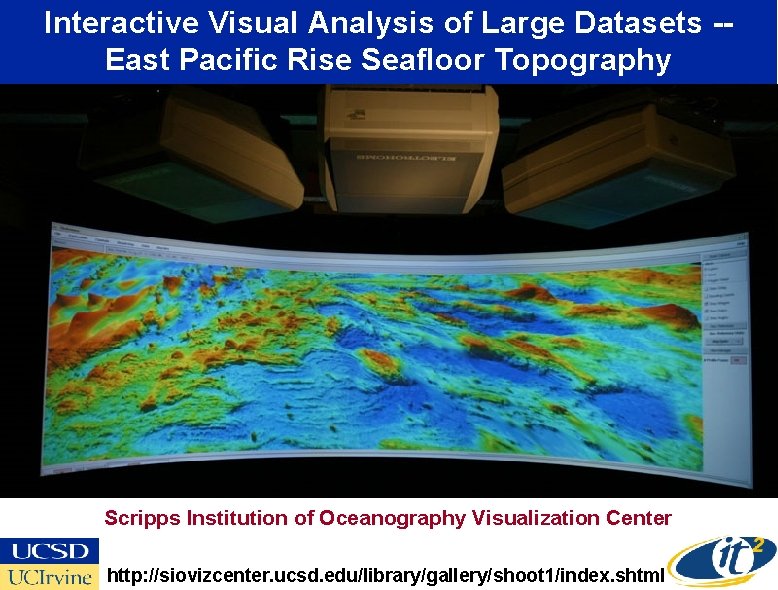

Interactive Visual Analysis of Large Datasets: East Pacific Rise Seafloor Topography
Scripps Institution of Oceanography Visualization Center
http://siovizcenter.ucsd.edu/library/gallery/shoot1/index.shtml

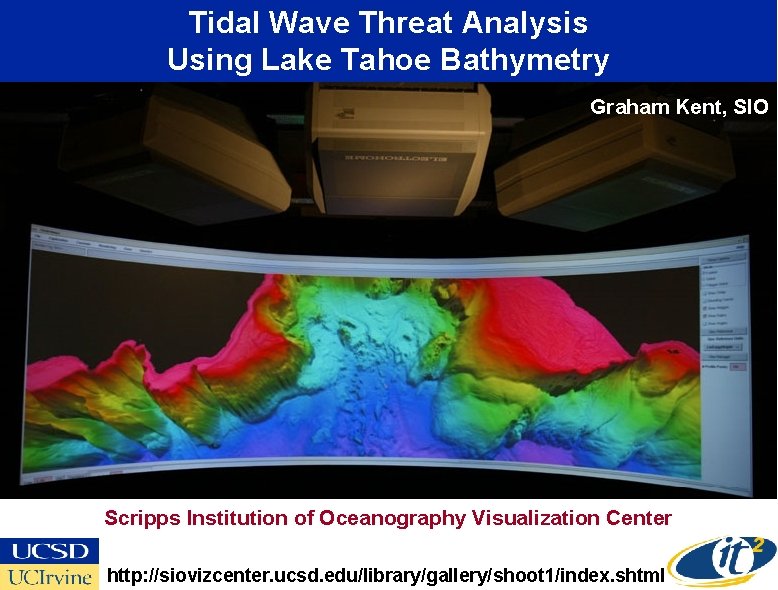

Tidal Wave Threat Analysis Using Lake Tahoe Bathymetry
Graham Kent, SIO
Scripps Institution of Oceanography Visualization Center
http://siovizcenter.ucsd.edu/library/gallery/shoot1/index.shtml

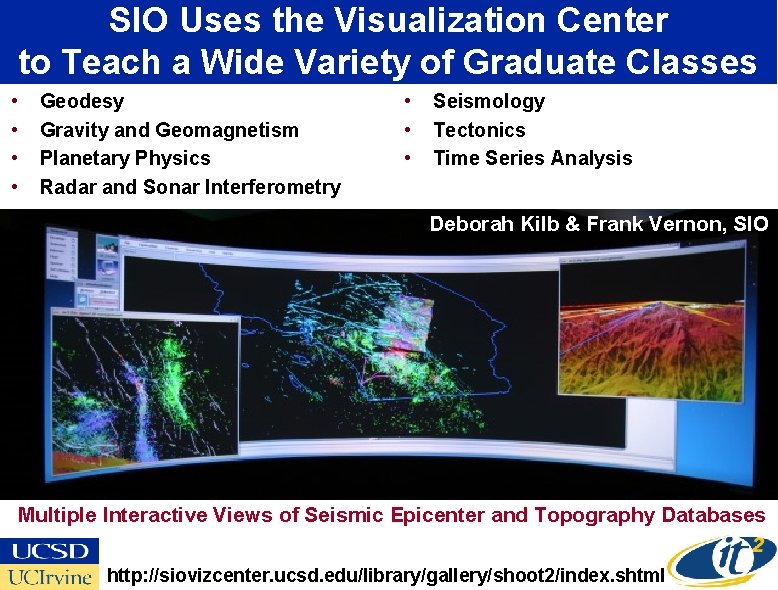

SIO Uses the Visualization Center to Teach a Wide Variety of Graduate Classes
• Geodesy
• Gravity and Geomagnetism
• Planetary Physics
• Radar and Sonar Interferometry
• Seismology
• Tectonics
• Time Series Analysis
Deborah Kilb & Frank Vernon, SIO: Multiple Interactive Views of Seismic Epicenter and Topography Databases
http://siovizcenter.ucsd.edu/library/gallery/shoot2/index.shtml

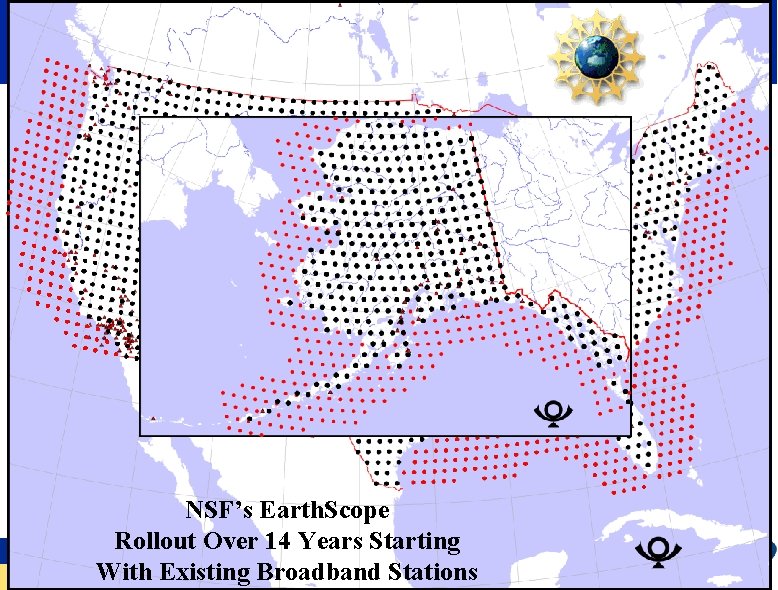

NSF's EarthScope Rollout Over 14 Years Starting With Existing Broadband Stations

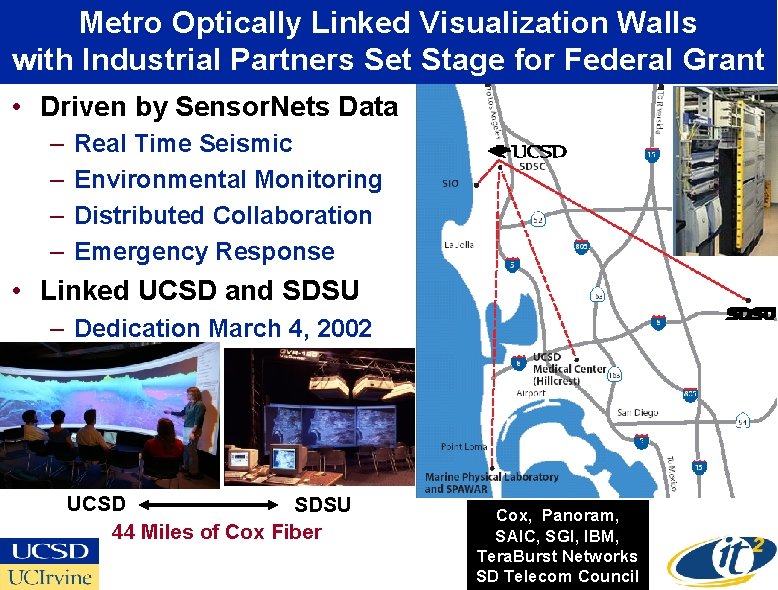

Metro Optically Linked Visualization Walls with Industrial Partners Set Stage for Federal Grant
• Driven by SensorNets Data
  – Real Time Seismic
  – Environmental Monitoring
  – Distributed Collaboration
  – Emergency Response
• Linked UCSD and SDSU
  – Dedication March 4, 2002
(Diagram: Linking Control Rooms at UCSD and SDSU over 44 Miles of Cox Fiber)
Partners: Cox, Panoram, SAIC, SGI, IBM, TeraBurst Networks, SD Telecom Council

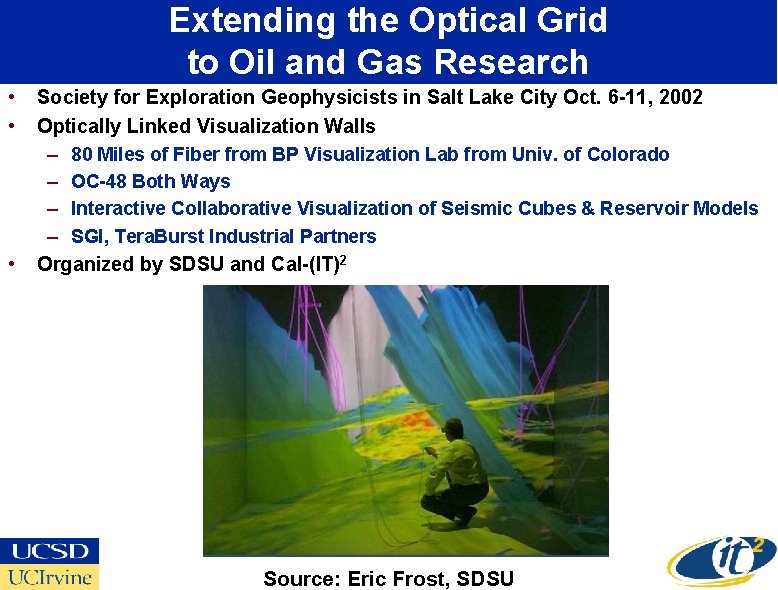

Extending the Optical Grid to Oil and Gas Research
• Society of Exploration Geophysicists in Salt Lake City, Oct. 6-11, 2002
• Optically Linked Visualization Walls
  – 80 Miles of Fiber from the BP Visualization Lab at Univ. of Colorado
  – OC-48 Both Ways
  – Interactive Collaborative Visualization of Seismic Cubes & Reservoir Models
  – SGI, TeraBurst Industrial Partners
• Organized by SDSU and Cal-(IT)2
Source: Eric Frost, SDSU
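
For scale, SONET OC-n rates are n multiples of the 51.84 Mbps OC-1 rate, so "OC-48 Both Ways" means roughly 2.5 Gbps in each direction. The sketch below computes that figure and an idealized time to send one seismic cube. The 5 GB cube size is hypothetical, and usable payload throughput is somewhat below the line rate because of SONET overhead.

```python
"""SONET OC-n line rates: n multiples of the 51.84 Mbps OC-1 rate."""

OC1_MBPS = 51.84


def oc_line_rate_mbps(n: int) -> float:
    """Gross SONET line rate for OC-n in Mbps (includes SONET overhead)."""
    return n * OC1_MBPS


if __name__ == "__main__":
    rate = oc_line_rate_mbps(48)
    print(f"OC-48 line rate: {rate:.2f} Mbps per direction")  # 2488.32
    cube_gb = 5.0  # HYPOTHETICAL seismic cube size in decimal gigabytes
    print(f"{cube_gb} GB cube at line rate: {cube_gb * 8e3 / rate:.1f} s")
```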

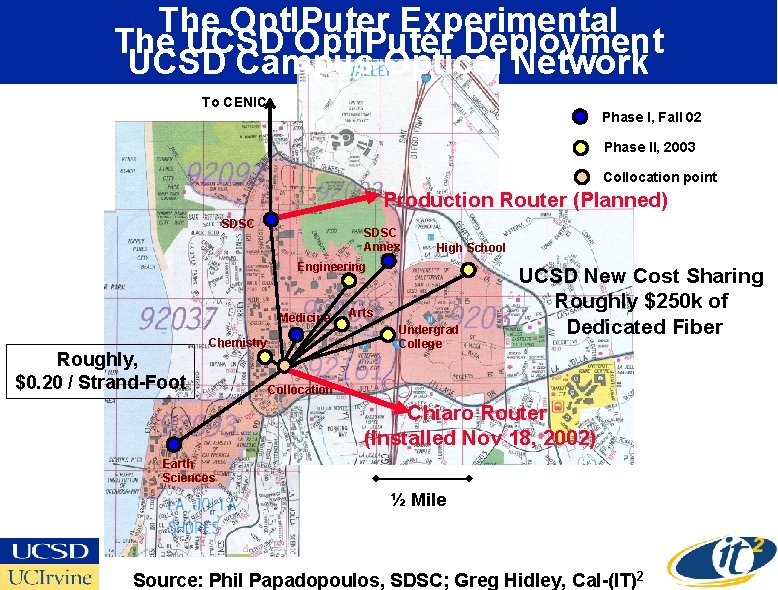

The UCSD OptIPuter Deployment: UCSD Campus Optical Network
(Map: Phase I, Fall 02 and Phase II, 2003 fiber runs linking SDSC, SDSC Annex, JSOE Engineering, CRCA Arts, SOM Medicine, Chemistry, Phys. Sci, Keck, Preuss High School, 6th College, Undergrad College, Node M, and SIO Earth Sciences; collocation point with a Chiaro Router (Installed Nov 18, 2002) and a planned Production Router to CENIC; scale bar ½ Mile)
• Roughly $0.20 / Strand-Foot
• UCSD New Cost Sharing: Roughly $250k of Dedicated Fiber
Source: Phil Papadopoulos, SDSC; Greg Hidley, Cal-(IT)2
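
Dividing the slide's rough cost-sharing figure by its rough unit price gives the amount of fiber involved. The sketch assumes a flat $0.20 per strand-foot applies to the whole $250k, which the slide does not state. Strand-feet count each fiber strand separately, so a 12-strand cable run of 1,000 feet is 12,000 strand-feet.

```python
"""How much dedicated fiber the slide's rough budget buys (flat-price assumption)."""

FEET_PER_MILE = 5280


def strand_feet(budget_dollars: float, dollars_per_strand_foot: float) -> float:
    """Strand-feet purchasable at a flat per-strand-foot price."""
    return budget_dollars / dollars_per_strand_foot


if __name__ == "__main__":
    feet = strand_feet(250_000, 0.20)
    print(f"{feet:,.0f} strand-feet, about {feet / FEET_PER_MILE:.0f} strand-miles")
```

Under that assumption the budget corresponds to about 1.25 million strand-feet, or roughly 237 strand-miles.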

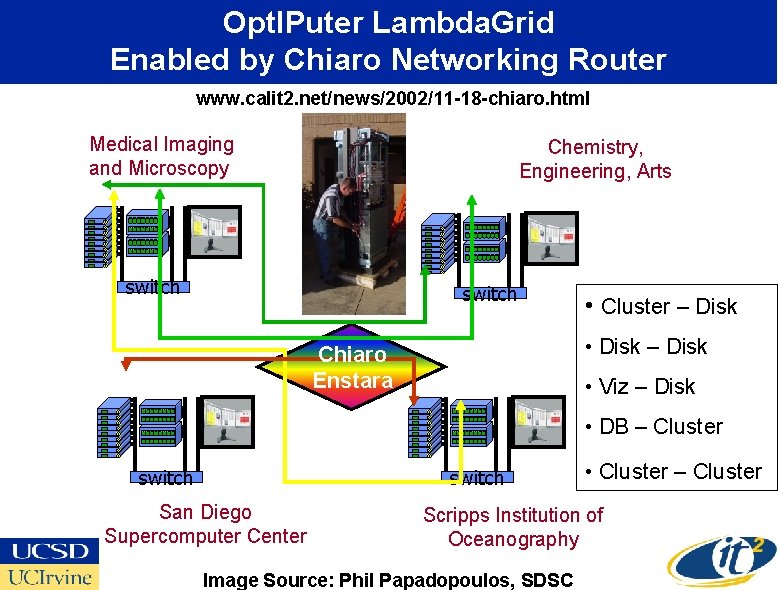

OptIPuter LambdaGrid Enabled by Chiaro Networking Router
www.calit2.net/news/2002/11-18-chiaro.html
(Diagram: a Chiaro Enstara router linking switches at Medical Imaging and Microscopy; Chemistry, Engineering, Arts; San Diego Supercomputer Center; and Scripps Institution of Oceanography; traffic types: Cluster-Disk, Disk-Disk, Viz-Disk, DB-Cluster, Cluster-Cluster)
Image Source: Phil Papadopoulos, SDSC

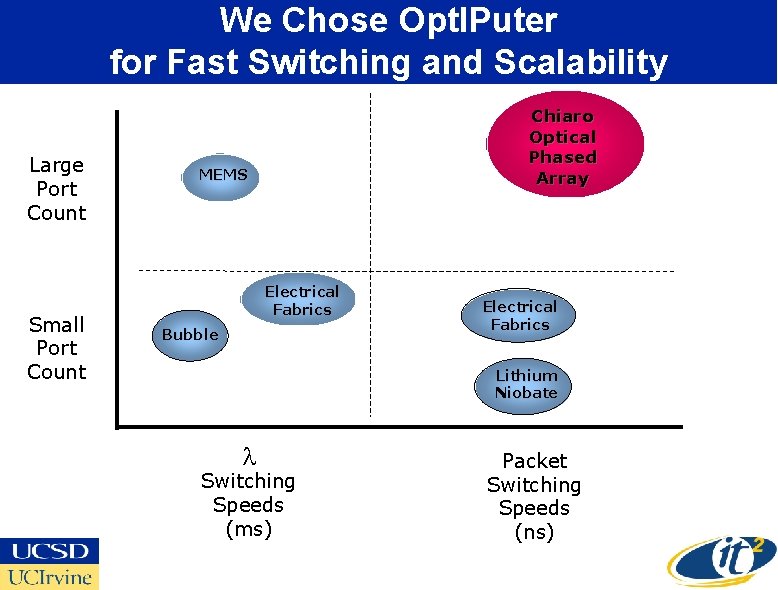

We Chose OptIPuter for Fast Switching and Scalability
(Chart: port count, small to large, vs. switching speed, from λ switching speeds (ms) to packet switching speeds (ns); technologies shown: Chiaro Optical Phased Array, MEMS, Bubble, Lithium Niobate, Electrical Fabrics)

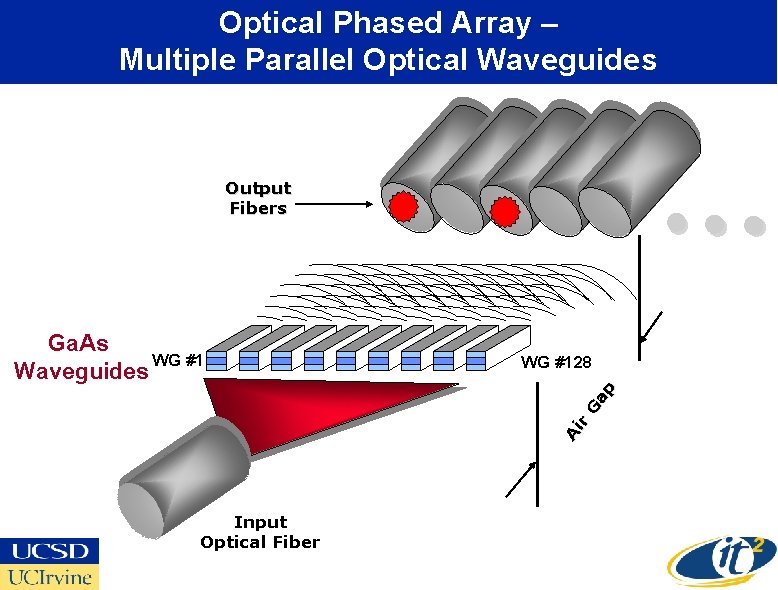

Optical Phased Array: Multiple Parallel Optical Waveguides
(Diagram: light from an input optical fiber passes through GaAs waveguides WG #1 through WG #128, crosses an air gap, and is directed to the output fibers)
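
The steering principle behind an optical phased array can be sketched with the textbook uniform linear array model. If each of N waveguides delays its light by a constant phase step Δφ relative to its neighbour, the far-field main lobe points where k·d·sin θ = Δφ, with k = 2π/λ and d the waveguide pitch. This is a generic model, not Chiaro's published design. Only the 128-waveguide count comes from the slide; the 1.55 µm wavelength and 4 µm pitch are hypothetical.

```python
"""Uniform linear phased-array model (generic textbook sketch, hypothetical parameters)."""
import cmath
import math

N_WAVEGUIDES = 128       # WG #1 .. WG #128, from the slide
WAVELENGTH_M = 1.55e-6   # ASSUMED operating wavelength
PITCH_M = 4e-6           # HYPOTHETICAL waveguide spacing


def steering_angle(phase_step: float, wavelength: float = WAVELENGTH_M,
                   pitch: float = PITCH_M) -> float:
    """Main-lobe angle (radians) for a phase step of phase_step radians per waveguide."""
    return math.asin(phase_step * wavelength / (2 * math.pi * pitch))


def array_factor(theta: float, phase_step: float, n: int = N_WAVEGUIDES,
                 wavelength: float = WAVELENGTH_M, pitch: float = PITCH_M) -> float:
    """Far-field amplitude at angle theta: the sum of n unit emitters, where
    waveguide m is delayed by m * phase_step. Equals n on the main lobe."""
    k = 2 * math.pi / wavelength
    psi = k * pitch * math.sin(theta) - phase_step
    return abs(sum(cmath.exp(1j * m * psi) for m in range(n)))


if __name__ == "__main__":
    step = math.pi / 4
    theta = steering_angle(step)
    print(f"Steered to {math.degrees(theta):.2f} degrees")
    print(f"Amplitude on target: {array_factor(theta, step):.1f} (max {N_WAVEGUIDES})")
    print(f"Amplitude at broadside: {array_factor(0.0, step):.3f}")
```

Changing only the phase step re-points the beam, with no moving parts. Because the assumed pitch is larger than the wavelength, this array also produces grating lobes at other angles, which the sketch does not examine.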

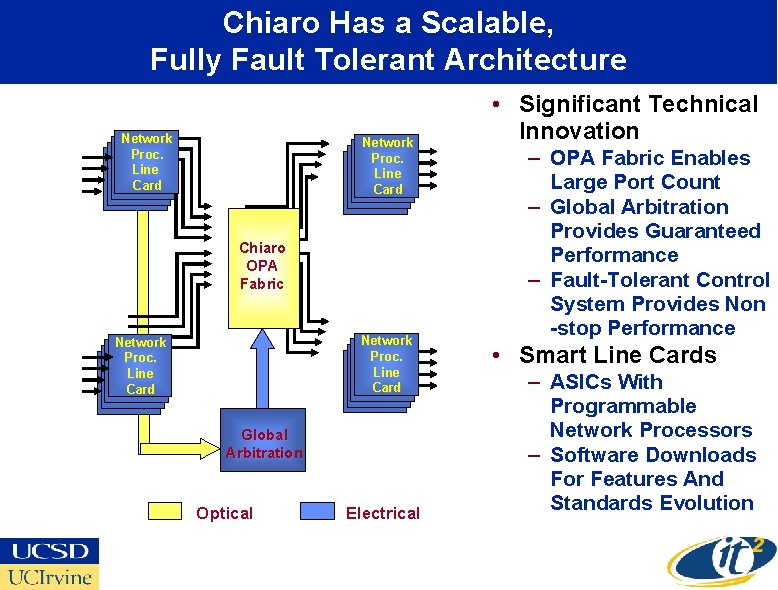

Chiaro Has a Scalable, Fully Fault Tolerant Architecture
(Diagram: network processor line cards on each side of the Chiaro OPA fabric, coordinated by global arbitration; optical and electrical paths marked)
• Significant Technical Innovation
  – OPA Fabric Enables Large Port Count
  – Global Arbitration Provides Guaranteed Performance
  – Fault-Tolerant Control System Provides Non-stop Performance
• Smart Line Cards
  – ASICs With Programmable Network Processors
  – Software Downloads For Features And Standards Evolution

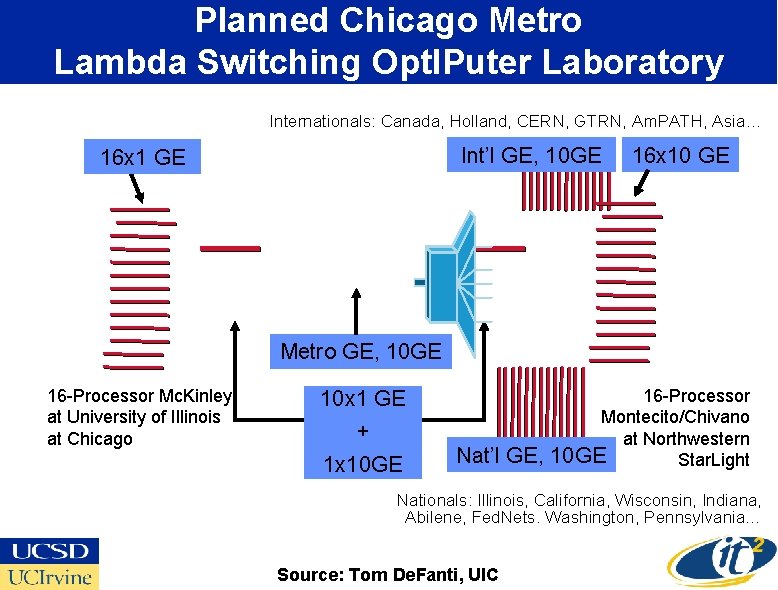

Planned Chicago Metro Lambda Switching OptIPuter Laboratory
(Diagram: StarLight hub with 16 x 10 GE, connecting
  – Internationals (GE, 10 GE): Canada, Holland, CERN, GTRN, AMPATH, Asia…
  – Nationals (GE, 10 GE): Illinois, California, Wisconsin, Indiana, Abilene, FedNets, Washington, Pennsylvania…
  – Metro (GE, 10 GE): a 16-Processor McKinley at University of Illinois at Chicago (10 x 1 GE + 1 x 10 GE) and a 16-Processor Montecito/Chivano at Northwestern (10 GE))
Source: Tom DeFanti, UIC

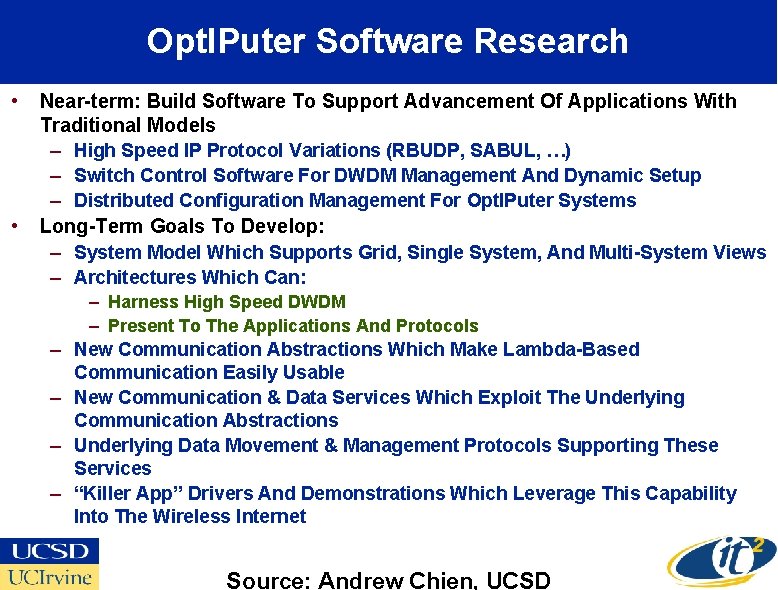

OptIPuter Software Research
• Near-term: Build Software To Support Advancement Of Applications With Traditional Models
  – High Speed IP Protocol Variations (RBUDP, SABUL, …)
  – Switch Control Software For DWDM Management And Dynamic Setup
  – Distributed Configuration Management For OptIPuter Systems
• Long-Term Goals To Develop:
  – System Model Which Supports Grid, Single System, And Multi-System Views
  – Architectures Which Can:
    – Harness High Speed DWDM
    – Present To The Applications And Protocols New Communication Abstractions Which Make Lambda-Based Communication Easily Usable
    – New Communication & Data Services Which Exploit The Underlying Communication Abstractions
    – Underlying Data Movement & Management Protocols Supporting These Services
  – "Killer App" Drivers And Demonstrations Which Leverage This Capability Into The Wireless Internet
Source: Andrew Chien, UCSD
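
Dynamic lightpath setup ultimately means deciding which wavelength to light on each fiber hop. One classic heuristic from the optical-networking literature is first-fit wavelength assignment. The sketch below shows it under the assumption of no wavelength conversion, so a single lambda must be free on every hop. It illustrates the general problem, not the OptIPuter's switch control software, and the node names form a hypothetical topology borrowed from campus sites mentioned in this talk.

```python
"""First-fit wavelength assignment on a small, HYPOTHETICAL topology."""
from typing import Dict, FrozenSet, List, Optional, Set

Link = FrozenSet[str]


def links_on(path: List[str]) -> List[Link]:
    """Undirected fiber links traversed by a path of node names."""
    return [frozenset(hop) for hop in zip(path, path[1:])]


def assign_lambda(path: List[str], in_use: Dict[Link, Set[int]],
                  num_lambdas: int) -> Optional[int]:
    """Reserve the lowest-numbered wavelength free on every link of the path.

    Assumes no wavelength conversion, so one lambda must be free end to end.
    Returns the lambda index, or None if the request is blocked."""
    links = links_on(path)
    for lam in range(num_lambdas):
        if all(lam not in in_use.get(link, set()) for link in links):
            for link in links:
                in_use.setdefault(link, set()).add(lam)
            return lam
    return None


def release_lambda(path: List[str], lam: int, in_use: Dict[Link, Set[int]]) -> None:
    """Tear down a lightpath, freeing its wavelength on every link."""
    for link in links_on(path):
        in_use.get(link, set()).discard(lam)


if __name__ == "__main__":
    state: Dict[Link, Set[int]] = {}
    print(assign_lambda(["SDSC", "JSOE", "SIO"], state, num_lambdas=2))  # 0
    print(assign_lambda(["JSOE", "SIO"], state, num_lambdas=2))          # 1
    print(assign_lambda(["SDSC", "JSOE", "SIO"], state, num_lambdas=2))  # None
```

The third request is blocked even though the SDSC-JSOE hop still has lambda 1 free, which is the wavelength-continuity effect that OEO switching or smarter assignment policies try to relieve.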

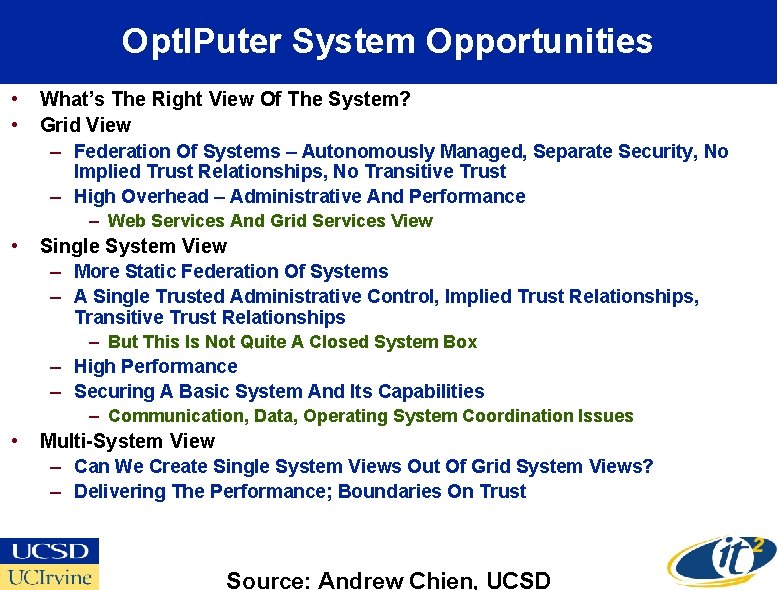

OptIPuter System Opportunities
• What's The Right View Of The System?
• Grid View
  – Federation Of Systems: Autonomously Managed, Separate Security, No Implied Trust Relationships, No Transitive Trust
  – High Overhead: Administrative And Performance
  – Web Services And Grid Services View
• Single System View
  – More Static Federation Of Systems
  – A Single Trusted Administrative Control, Implied Trust Relationships, Transitive Trust Relationships
  – But This Is Not Quite A Closed System Box
  – High Performance
  – Securing A Basic System And Its Capabilities
  – Communication, Data, Operating System Coordination Issues
• Multi-System View
  – Can We Create Single System Views Out Of Grid System Views?
  – Delivering The Performance; Boundaries On Trust
Source: Andrew Chien, UCSD

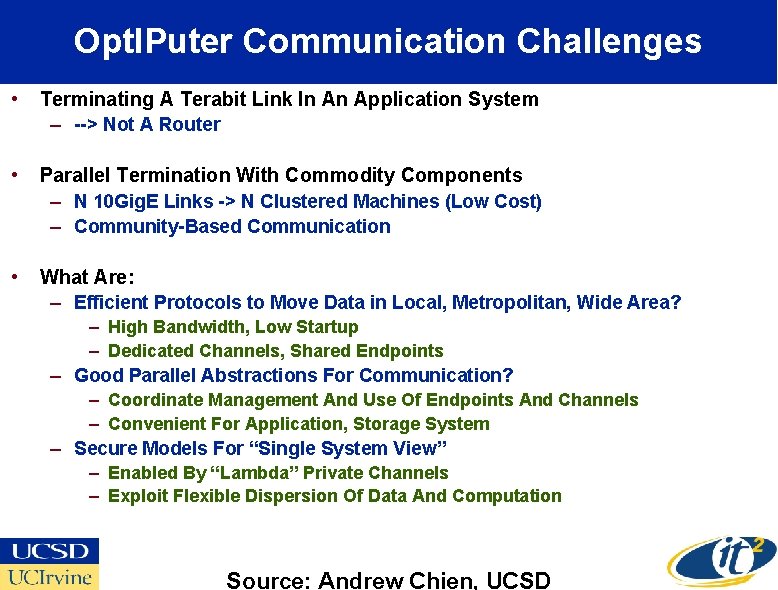

OptIPuter Communication Challenges
• Terminating A Terabit Link In An Application System
  – Not A Router
• Parallel Termination With Commodity Components
  – N 10 GigE Links -> N Clustered Machines (Low Cost)
  – Community-Based Communication
• What Are:
  – Efficient Protocols to Move Data in Local, Metropolitan, Wide Area?
    – High Bandwidth, Low Startup
    – Dedicated Channels, Shared Endpoints
  – Good Parallel Abstractions For Communication?
    – Coordinate Management And Use Of Endpoints And Channels
    – Convenient For Application, Storage System
  – Secure Models For "Single System View"
    – Enabled By "Lambda" Private Channels
    – Exploit Flexible Dispersion Of Data And Computation
Source: Andrew Chien, UCSD
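
Terminating a terabit link as "N 10 GigE links -> N clustered machines" implies both a sizing rule and a way to split the data stream. The sketch below uses round-robin striping, a simple choice assumed here rather than one the slide specifies, and it optimistically assumes each machine can sustain a full 10 Gbps.

```python
"""Parallel termination: spread one fat link over N commodity endpoints (sketch)."""
import math
from typing import Dict, List


def endpoints_needed(link_gbps: float, per_endpoint_gbps: float = 10.0) -> int:
    """Commodity endpoints required if each can sink per_endpoint_gbps (assumed sustained)."""
    return math.ceil(link_gbps / per_endpoint_gbps)


def stripe(num_blocks: int, num_endpoints: int) -> Dict[int, List[int]]:
    """Round-robin assignment of data block indices to endpoints."""
    plan: Dict[int, List[int]] = {e: [] for e in range(num_endpoints)}
    for block in range(num_blocks):
        plan[block % num_endpoints].append(block)
    return plan


if __name__ == "__main__":
    print(endpoints_needed(1000.0))   # 100
    print(stripe(10, 4))              # {0: [0, 4, 8], 1: [1, 5, 9], 2: [2, 6], 3: [3, 7]}
```

A 1 Tbps link needs 100 such endpoints; round-robin keeps their loads within one block of each other, but the application must then reassemble the blocks in order.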

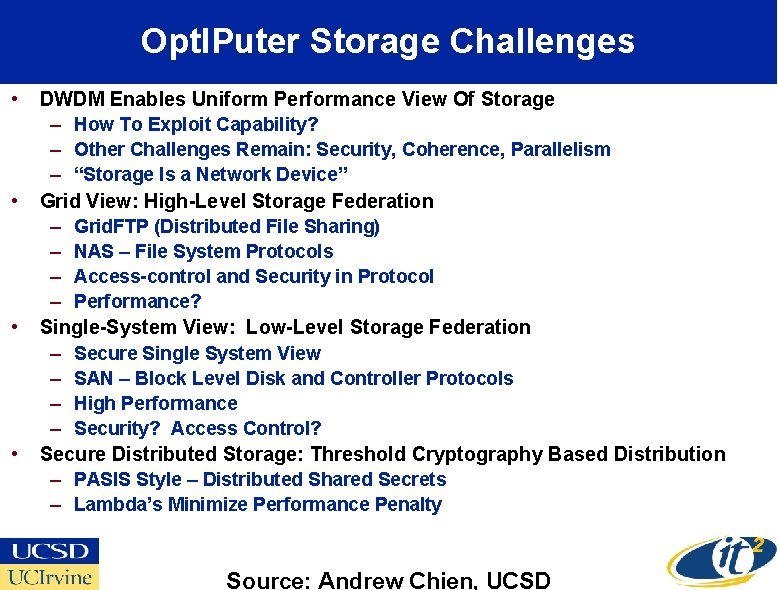

OptIPuter Storage Challenges
• DWDM Enables Uniform Performance View Of Storage
  – How To Exploit Capability?
  – Other Challenges Remain: Security, Coherence, Parallelism
  – "Storage Is a Network Device"
• Grid View: High-Level Storage Federation
  – GridFTP (Distributed File Sharing)
  – NAS: File System Protocols
  – Access-control and Security in Protocol
  – Performance?
• Single-System View: Low-Level Storage Federation
  – Secure Single System View
  – SAN: Block Level Disk and Controller Protocols
  – High Performance
  – Security? Access Control?
• Secure Distributed Storage: Threshold Cryptography Based Distribution
  – PASIS Style
  – Distributed Shared Secrets
  – Lambdas Minimize Performance Penalty
Source: Andrew Chien, UCSD
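
Threshold-based distribution splits data into n shares such that any k of them reconstruct it and fewer reveal nothing. Shamir's secret sharing is the classic example; the sketch below implements it over a prime field. It is a generic illustration, not PASIS code, and it shares a single integer rather than a file; the field size is an assumption chosen for convenience. Each Shamir share is as large as the secret, so storing n shares multiplies storage and transfer volume by n.

```python
"""Shamir k-of-n secret sharing over a prime field (generic illustration)."""
import secrets
from typing import List, Tuple

PRIME = 2**127 - 1  # a Mersenne prime; secrets must lie in [0, PRIME)

Share = Tuple[int, int]


def split(secret: int, n: int, k: int) -> List[Share]:
    """Split secret into n shares; any k of them recover it."""
    if not 0 <= secret < PRIME:
        raise ValueError("secret must lie in [0, PRIME)")
    if not 1 <= k <= n:
        raise ValueError("need 1 <= k <= n")
    # Random polynomial of degree k-1 whose constant term is the secret.
    coeffs = [secret] + [secrets.randbelow(PRIME) for _ in range(k - 1)]
    shares = []
    for x in range(1, n + 1):
        y = 0
        for c in reversed(coeffs):  # Horner's rule, mod PRIME
            y = (y * x + c) % PRIME
        shares.append((x, y))
    return shares


def combine(shares: List[Share]) -> int:
    """Recover the secret by Lagrange interpolation at x = 0 (needs >= k distinct shares)."""
    secret = 0
    for i, (xi, yi) in enumerate(shares):
        num, den = 1, 1
        for j, (xj, _) in enumerate(shares):
            if i != j:
                num = num * (-xj) % PRIME
                den = den * (xi - xj) % PRIME
        secret = (secret + yi * num * pow(den, -1, PRIME)) % PRIME  # Python 3.8+
    return secret


if __name__ == "__main__":
    shares = split(123456789, n=5, k=3)
    print(combine(shares[:3]))                          # 123456789
    print(combine([shares[4], shares[1], shares[3]]))   # 123456789
```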

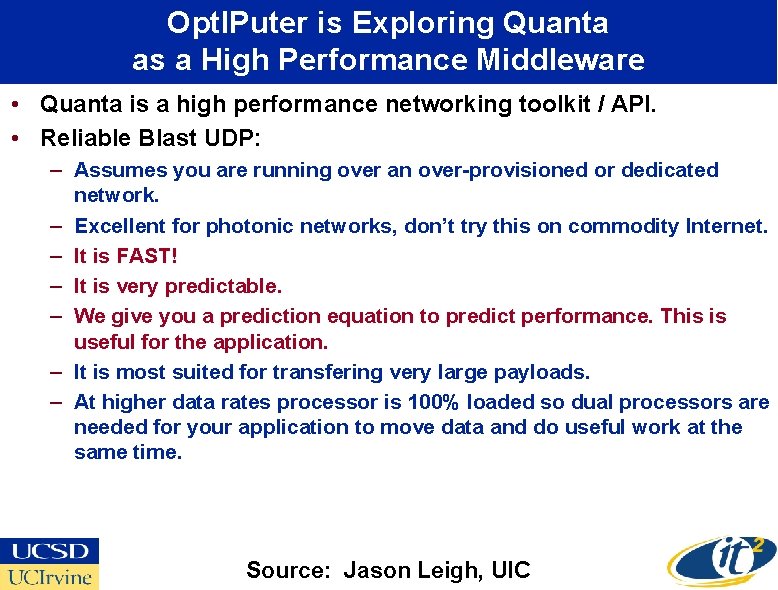

OptIPuter is Exploring Quanta as a High Performance Middleware
• Quanta is a high performance networking toolkit / API.
• Reliable Blast UDP:
  – Assumes you are running over an over-provisioned or dedicated network.
  – Excellent for photonic networks; don't try this on the commodity Internet.
  – It is FAST!
  – It is very predictable.
  – We give you a prediction equation to predict performance. This is useful for the application.
  – It is most suited for transferring very large payloads.
  – At higher data rates the processor is 100% loaded, so dual processors are needed for your application to move data and do useful work at the same time.
Source: Jason Leigh, UIC
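
The slide mentions a prediction equation without reproducing it, so the function below is not that equation. It is a deliberately crude model, assumed here for illustration, that shows why blast-style transfers favour very large payloads: the fixed round-trip cost is amortized as the payload grows.

```python
"""Crude, illustrative throughput estimate for a blast-style UDP transfer.

NOT the RBUDP prediction equation mentioned on the slide; the model and
every parameter are assumptions for illustration."""


def estimated_throughput_gbps(payload_gb: float, send_rate_gbps: float,
                              rtt_s: float, loss: float = 0.0) -> float:
    """Payload bits divided by (blast time inflated for re-sends + one RTT).

    Assumes a lost fraction `loss` of datagrams must be re-sent, giving on
    average 1 / (1 - loss) transmissions per datagram, plus one round trip for
    the final acknowledgement. Ignores per-round RTTs and host limits."""
    if not 0 <= loss < 1:
        raise ValueError("loss must be in [0, 1)")
    bits = payload_gb * 8                        # gigabits
    blast_s = bits / (send_rate_gbps * (1 - loss))
    return bits / (blast_s + rtt_s)


if __name__ == "__main__":
    for size in (0.1, 1.0, 10.0):  # HYPOTHETICAL payloads in GB, 1 Gbps, 100 ms RTT
        print(f"{size:>5} GB: {estimated_throughput_gbps(size, 1.0, 0.1):.3f} Gbps")
```

With a hypothetical 1 Gbps send rate and 100 ms RTT, the estimate rises from about 0.89 Gbps for a 0.1 GB payload to about 0.999 Gbps for 10 GB.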

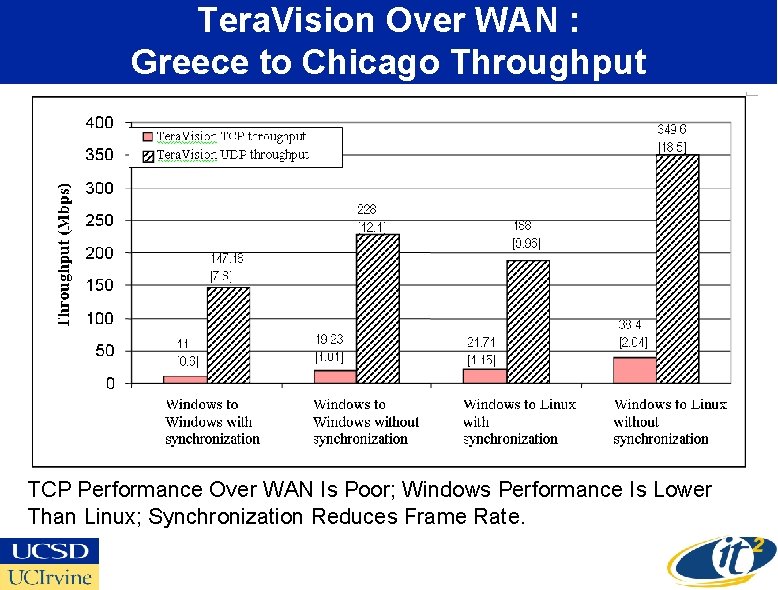

TeraVision Over WAN: Greece to Chicago Throughput
• TCP Performance Over WAN Is Poor
• Windows Performance Is Lower Than Linux
• Synchronization Reduces Frame Rate
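
One well-known reason TCP underperforms on long paths (the slide does not say which factors applied in this test) is that a sender can have at most one window of unacknowledged data in flight per round trip. Without the window-scale option, TCP's window is capped at 65,535 bytes. The sketch computes that ceiling and the window needed to fill a 1 Gbps path; the 150 ms round-trip time is a hypothetical figure, not a measurement from this experiment.

```python
"""Window-limited TCP throughput and the bandwidth-delay product (sketch)."""


def window_limited_mbps(window_bytes: int, rtt_ms: float) -> float:
    """Upper bound on TCP throughput: one window of data per round trip."""
    return window_bytes * 8 / (rtt_ms / 1000) / 1e6


def bdp_bytes(link_mbps: float, rtt_ms: float) -> float:
    """Bytes in flight needed to keep a link of link_mbps full over rtt_ms."""
    return link_mbps * 1e6 * (rtt_ms / 1000) / 8


if __name__ == "__main__":
    rtt = 150.0  # HYPOTHETICAL Greece-Chicago round-trip time in ms
    print(f"64 KB window: {window_limited_mbps(65_535, rtt):.1f} Mbps")
    print(f"Window to fill 1 Gbps: {bdp_bytes(1000, rtt) / 1e6:.2f} MB")
```

At that RTT, an unscaled window caps a single TCP stream at about 3.5 Mbps, while filling 1 Gbps would need roughly 18.75 MB in flight.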

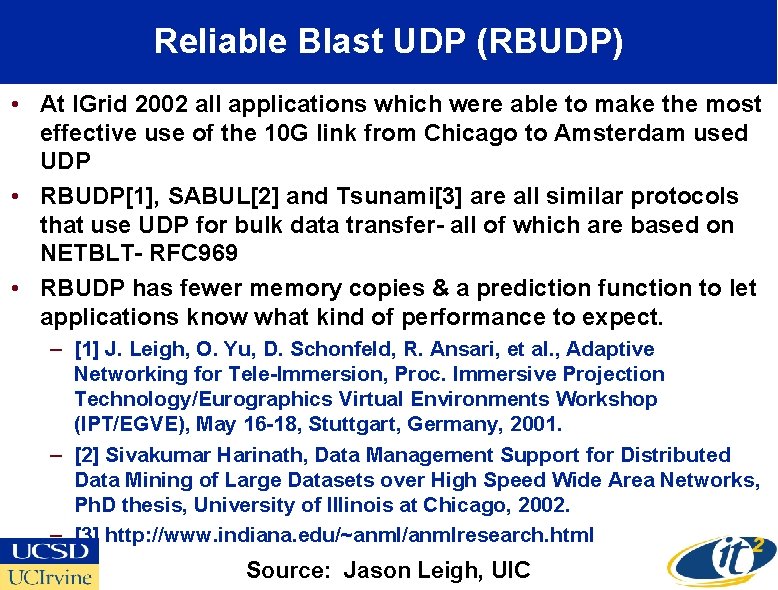

Reliable Blast UDP (RBUDP)
• At iGrid 2002, all applications that were able to make the most effective use of the 10G link from Chicago to Amsterdam used UDP
• RBUDP [1], SABUL [2], and Tsunami [3] are similar protocols that use UDP for bulk data transfer, all of which are based on NETBLT (RFC 969)
• RBUDP has fewer memory copies and a prediction function to let applications know what kind of performance to expect
[1] J. Leigh, O. Yu, D. Schonfeld, R. Ansari, et al., "Adaptive Networking for Tele-Immersion," Proc. Immersive Projection Technology/Eurographics Virtual Environments Workshop (IPT/EGVE), Stuttgart, Germany, May 16-18, 2001.
[2] Sivakumar Harinath, "Data Management Support for Distributed Data Mining of Large Datasets over High Speed Wide Area Networks," Ph.D. thesis, University of Illinois at Chicago, 2002.
[3] http://www.indiana.edu/~anml/anmlresearch.html
Source: Jason Leigh, UIC
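
RBUDP is usually described as a blast-and-repair loop: the sender blasts all datagrams over UDP at a chosen rate, signals the end of the blast over a TCP connection, and the receiver replies over TCP with a bitmap of the datagrams it is missing; the sender then re-blasts only those, repeating until nothing is missing. The simulation below models that loop with independent random loss. The loss model and parameters are assumptions, and the code moves no real packets.

```python
"""Simulation of an RBUDP-style blast/bitmap loop (illustrative assumptions)."""
import random
from typing import List


def blast_rounds(num_datagrams: int, loss_rate: float, seed: int = 0) -> List[int]:
    """Return the number of datagrams blasted in each round until all arrive."""
    if not 0 <= loss_rate < 1:
        raise ValueError("loss_rate must be in [0, 1)")
    rng = random.Random(seed)
    missing = list(range(num_datagrams))  # receiver's bitmap, kept as a list of gaps
    rounds: List[int] = []
    while missing:
        rounds.append(len(missing))  # UDP blast of every still-missing datagram
        # Each datagram is lost independently with probability loss_rate; the
        # survivors are removed, leaving the gaps the receiver reports over TCP.
        missing = [d for d in missing if rng.random() < loss_rate]
    return rounds


if __name__ == "__main__":
    print(blast_rounds(10_000, 0.0))   # [10000]
    print(blast_rounds(10_000, 0.05, seed=1))
```

On a dedicated, lightly loaded lambda the loss rate is low, so the first blast carries almost everything and later rounds are short.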

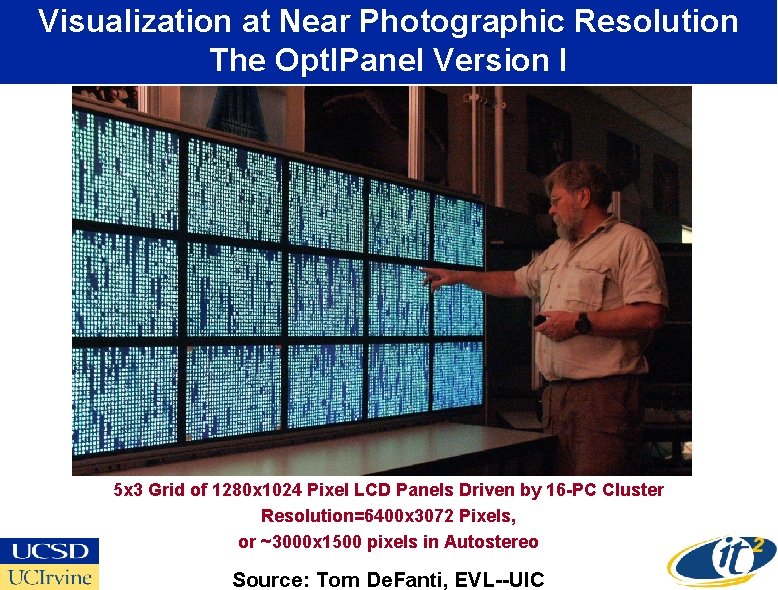

Visualization at Near Photographic Resolution: The OptIPanel Version I
• 5 x 3 Grid of 1280 x 1024 Pixel LCD Panels Driven by a 16-PC Cluster
• Resolution = 6400 x 3072 Pixels, or ~3000 x 1500 Pixels in Autostereo
Source: Tom DeFanti, EVL, UIC
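
The panel grid times the panel size gives the wall's resolution, and the same numbers show why such walls need lambda-scale links if pixels are streamed rather than rendered locally. The 24-bit color depth and 30 frames per second below are assumptions for illustration; the sketch ignores bezels and compression.

```python
"""Tiled-display resolution and an uncompressed-stream estimate (sketch)."""
from typing import Tuple


def tiled_resolution(cols: int, rows: int, panel_w: int, panel_h: int) -> Tuple[int, int]:
    """Total pixel dimensions of a cols x rows wall of identical panels (bezels ignored)."""
    return cols * panel_w, rows * panel_h


def stream_gbps(width: int, height: int, bits_per_pixel: int = 24, fps: float = 30.0) -> float:
    """Bandwidth to refresh every pixel uncompressed at fps frames per second."""
    return width * height * bits_per_pixel * fps / 1e9


if __name__ == "__main__":
    w, h = tiled_resolution(5, 3, 1280, 1024)
    print(f"{w} x {h} = {w * h / 1e6:.1f} Mpixels")   # 6400 x 3072 = 19.7 Mpixels
    print(f"Uncompressed at 24 bpp, 30 fps: {stream_gbps(w, h):.1f} Gbps")
```

Under those assumptions a full-wall uncompressed stream needs about 14 Gbps, more than a single 10 Gbps lambda.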

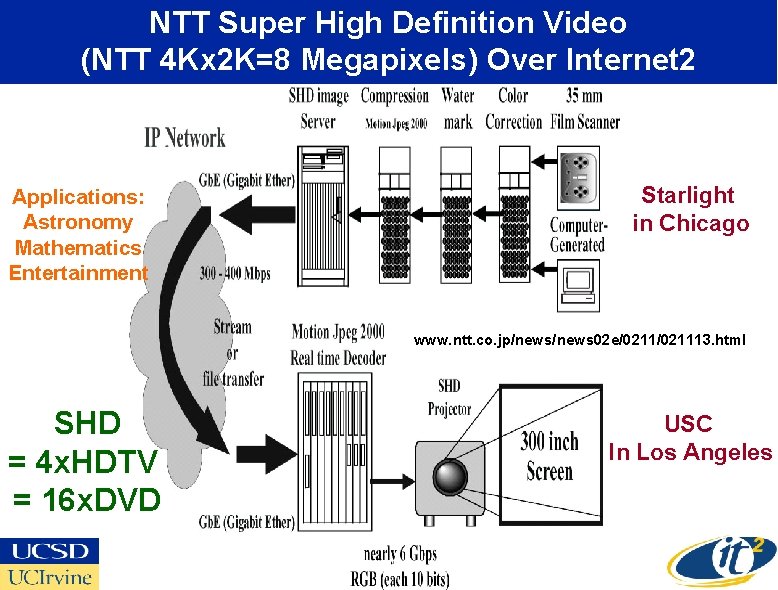

NTT Super High Definition Video (NTT 4K x 2K = 8 Megapixels) Over Internet2
• Applications: Astronomy, Mathematics, Entertainment
• Between StarLight in Chicago and USC in Los Angeles
• SHD = 4 x HDTV = 16 x DVD
www.ntt.co.jp/news02e/021113.html

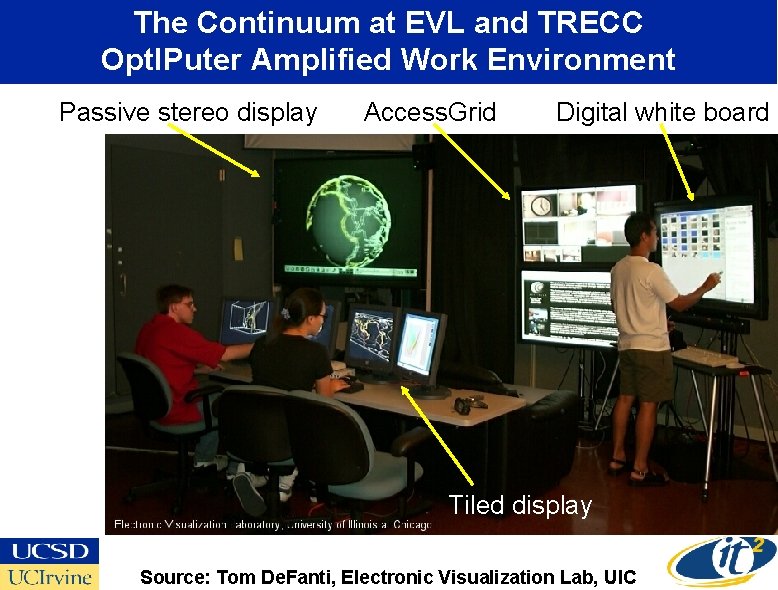

The Continuum at EVL and TRECC: OptIPuter Amplified Work Environment
(Components: passive stereo display, AccessGrid, digital whiteboard, tiled display)
Source: Tom DeFanti, Electronic Visualization Lab, UIC

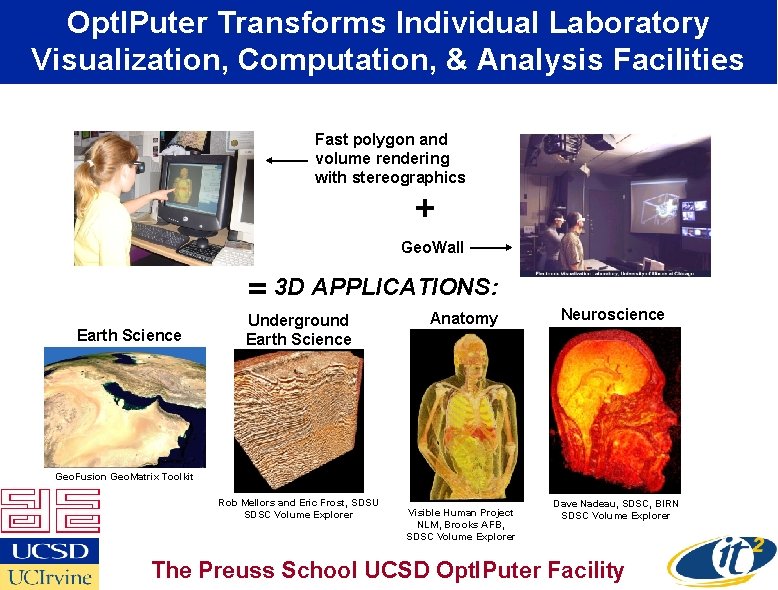

OptIPuter Transforms Individual Laboratory Visualization, Computation, & Analysis Facilities
• Fast polygon and volume rendering with stereographics + GeoWall = 3D
• APPLICATIONS: Earth Science, Underground Earth Science, Anatomy, Neuroscience
• Tools and credits:
  – GeoFusion GeoMatrix Toolkit (Rob Mellors and Eric Frost, SDSU)
  – SDSC Volume Explorer
  – Visible Human Project (NLM, Brooks AFB, SDSC), SDSC Volume Explorer
  – Dave Nadeau, SDSC, BIRN: SDSC Volume Explorer
• The Preuss School UCSD OptIPuter Facility

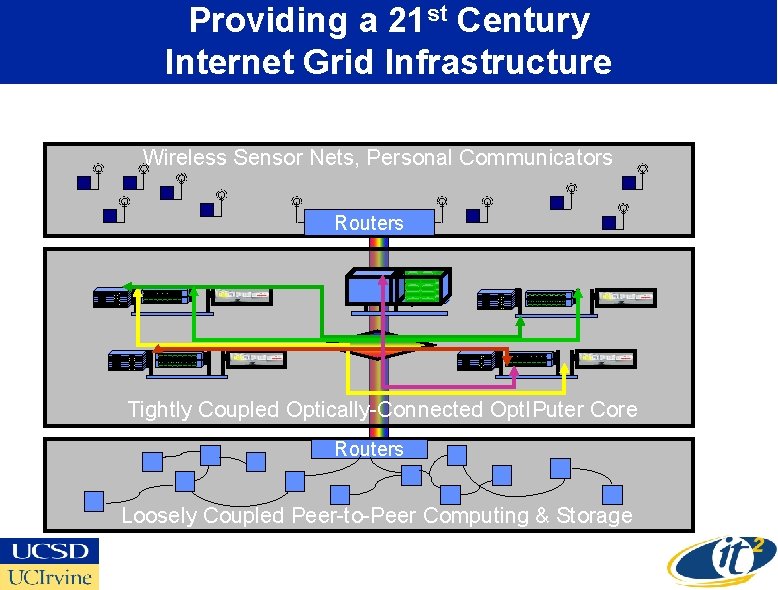

Providing a 21st Century Internet Grid Infrastructure
(Diagram layers: Wireless Sensor Nets and Personal Communicators; Routers; Tightly Coupled, Optically-Connected OptIPuter Core; Routers; Loosely Coupled Peer-to-Peer Computing & Storage)
