
The Netflix Challenge: Parallel Collaborative Filtering
James Jolly, Ben Murrell
CS 387: Parallel Programming with MPI
Dr. Fikret Ercal

What is Netflix?
• subscription-based movie rental
• online frontend
• over 100,000 movies to pick from
• 8 M subscribers
• 2007 net income: $67 M

What is the Netflix Prize?
• an attempt to increase Cinematch accuracy
• predict how users will rate unseen movies
• $1 M for a 10% improvement

The contest dataset…
• contains 100,480,507 ratings
• from 480,189 users
• for 17,770 movies

Why is it hard?
• user tastes are difficult to model in general
• movies are tough to classify
• large volume of data

Sounds like a job for collaborative filtering!
• infer relationships between users
• leverage them to make predictions

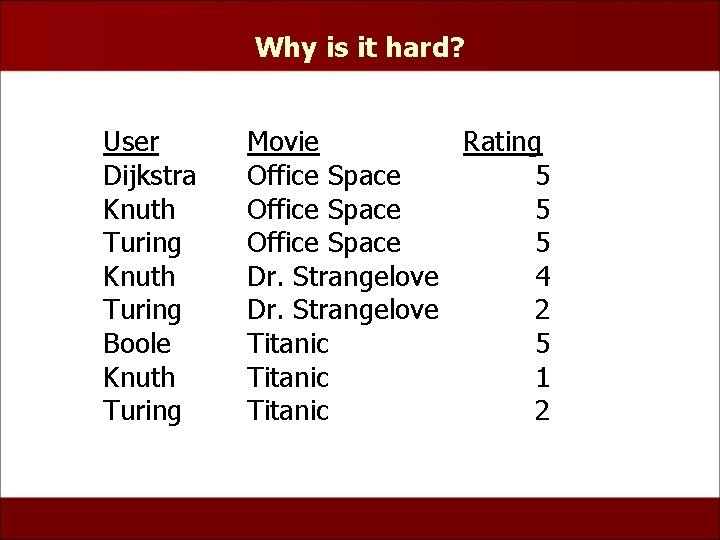

Why is it hard?

| User | Movie | Rating |
|---|---|---|
| Dijkstra | Office Space | 5 |
| Knuth | Dr. Strangelove | 4 |
| Turing | Dr. Strangelove | 2 |
| Boole | Titanic | 5 |
| Knuth | Titanic | 1 |
| Turing | Titanic | 2 |

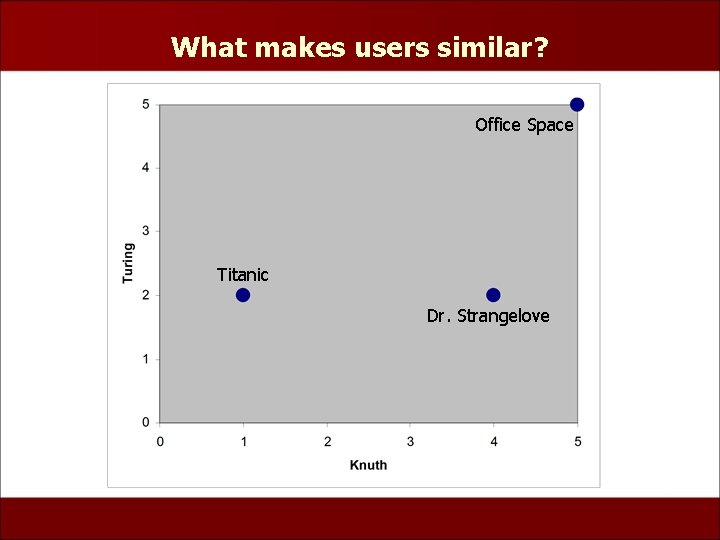

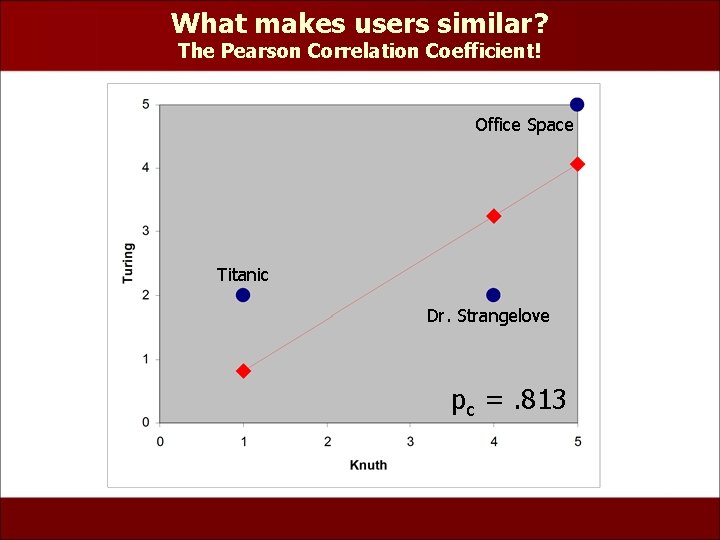

What makes users similar?
(Plot labels: Office Space, Titanic, Dr. Strangelove)

What makes users similar? The Pearson Correlation Coefficient!
(Plot labels: Office Space, Titanic, Dr. Strangelove)
pc = 0.813
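A minimal sketch of the Pearson correlation between two users, computed only over the movies both have rated. The function name `pearson`, the dict layout, and the sample ratings are assumptions made for illustration; they are not taken from the slides, so the printed value is not expected to match the 0.813 shown above.

```python
from math import sqrt

def pearson(ratings_a, ratings_b):
    """Pearson correlation over the movies both users have rated.

    Each argument is a dict mapping movie title -> rating.
    Returns 0.0 when the correlation is undefined (fewer than two
    shared movies, or one user gave the same rating to all of them).
    """
    shared = sorted(set(ratings_a) & set(ratings_b))
    n = len(shared)
    if n < 2:
        return 0.0
    a = [ratings_a[m] for m in shared]
    b = [ratings_b[m] for m in shared]
    mean_a = sum(a) / n
    mean_b = sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    if var_a == 0 or var_b == 0:
        return 0.0
    return cov / sqrt(var_a * var_b)

# Hypothetical ratings, made up for illustration only.
user_x = {"Office Space": 5, "Titanic": 2, "Dr. Strangelove": 4}
user_y = {"Office Space": 4, "Titanic": 1, "Dr. Strangelove": 5}
print(round(pearson(user_x, user_y), 3))
```

Returning 0.0 for the undefined cases is one possible convention; treating such pairs as "no information" keeps them from influencing later predictions.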

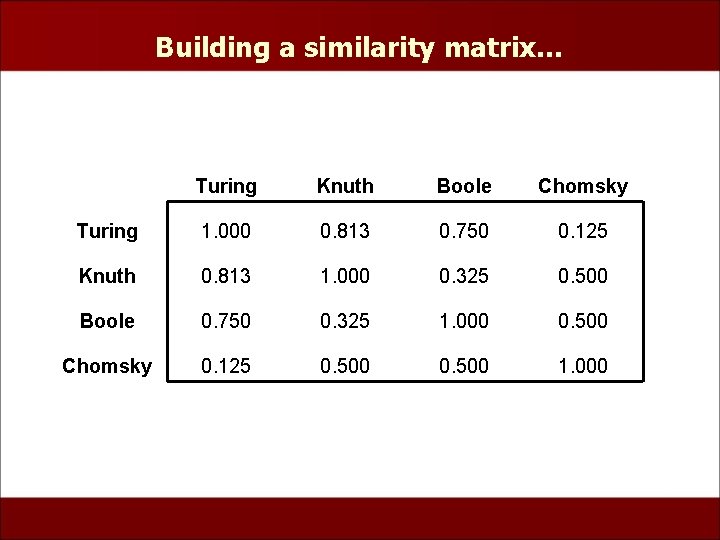

Building a similarity matrix…

| | Turing | Knuth | Boole | Chomsky |
|---|---|---|---|---|
| Turing | 1.000 | 0.813 | 0.750 | 0.125 |
| Knuth | 0.813 | 1.000 | 0.325 | 0.500 |
| Boole | 0.750 | 0.325 | 1.000 | 0.500 |
| Chomsky | 0.125 | 0.500 | 0.500 | 1.000 |

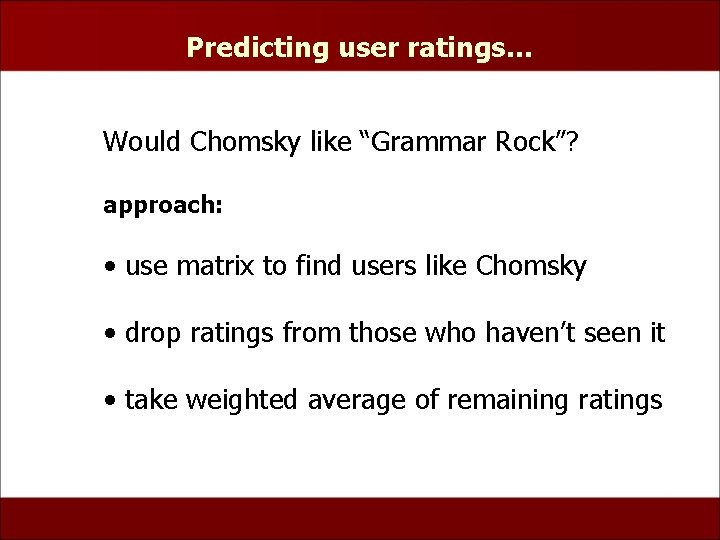

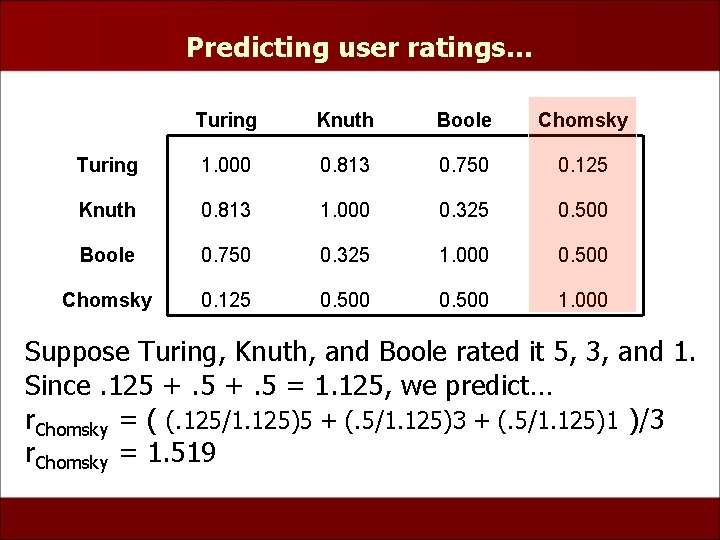

Predicting user ratings…
Would Chomsky like “Grammar Rock”?
approach:
• use the matrix to find users like Chomsky
• drop those who haven’t seen it
• take a weighted average of the remaining ratings

Predicting user ratings…

| | Turing | Knuth | Boole | Chomsky |
|---|---|---|---|---|
| Turing | 1.000 | 0.813 | 0.750 | 0.125 |
| Knuth | 0.813 | 1.000 | 0.325 | 0.500 |
| Boole | 0.750 | 0.325 | 1.000 | 0.500 |
| Chomsky | 0.125 | 0.500 | 0.500 | 1.000 |

Suppose Turing, Knuth, and Boole rated it 5, 3, and 1. Since 0.125 + 0.5 + 0.5 = 1.125, we predict…

$$r_{\text{Chomsky}} = \frac{0.125}{1.125}\cdot 5 + \frac{0.5}{1.125}\cdot 3 + \frac{0.5}{1.125}\cdot 1 \approx 2.333$$
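The weighted-average step can be sketched as below, using the similarities and ratings from this example. The function name `predict` and the dict layout are assumptions for illustration. The sketch normalizes the weights so they sum to 1 and does not divide further by the number of neighbors; it also assumes non-negative similarities.

```python
def predict(similarities, ratings):
    """Similarity-weighted average of neighbor ratings.

    similarities: neighbor -> similarity to the target user
    ratings:      neighbor -> rating of the target movie
                  (only users who have rated it appear here)
    Returns None when no rated neighbor carries any weight.
    """
    seen = [u for u in similarities if u in ratings]
    total = sum(similarities[u] for u in seen)
    if total == 0:
        return None
    return sum(similarities[u] * ratings[u] for u in seen) / total

# Chomsky's row of the similarity matrix and the three known ratings.
chomsky_sims = {"Turing": 0.125, "Knuth": 0.500, "Boole": 0.500}
grammar_rock = {"Turing": 5, "Knuth": 3, "Boole": 1}
print(round(predict(chomsky_sims, grammar_rock), 3))  # 2.333
```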

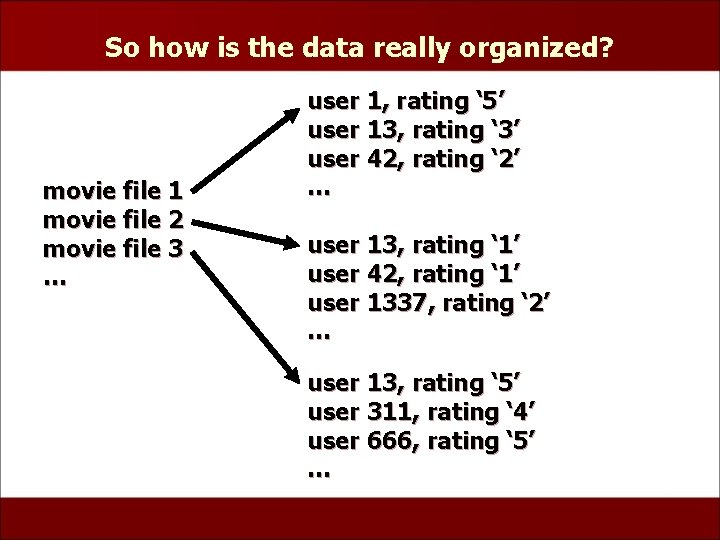

So how is the data really organized?

| movie file 1 | movie file 2 | movie file 3 | … |
|---|---|---|---|
| user 1, rating '5' | user 13, rating '1' | user 13, rating '5' | |
| user 13, rating '3' | user 42, rating '1' | user 311, rating '4' | |
| user 42, rating '2' | user 1337, rating '2' | user 666, rating '5' | |
| … | … | … | |

Training Data
• 17,770 text files (one for each movie)
• > 2 GB
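Comparing users requires each user's ratings in one place, while the files group ratings by movie. A minimal sketch of that inversion follows, using the sample entries from the data-organization slide. The in-memory layout (a dict of `(user, rating)` tuples per movie) and the name `invert` are assumptions; the actual on-disk file format is not shown here.

```python
from collections import defaultdict

def invert(movie_files):
    """Turn movie -> [(user, rating), ...] into user -> {movie: rating}."""
    by_user = defaultdict(dict)
    for movie, entries in movie_files.items():
        for user, rating in entries:
            by_user[user][movie] = rating
    return dict(by_user)

# Sample entries for movie files 1 to 3, already parsed into tuples.
movie_files = {
    1: [(1, 5), (13, 3), (42, 2)],
    2: [(13, 1), (42, 1), (1337, 2)],
    3: [(13, 5), (311, 4), (666, 5)],
}
by_user = invert(movie_files)
print(by_user[13])  # {1: 3, 2: 1, 3: 5}
```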

Parallelization
Two-step process:
• Learning Step
• Prediction Step
Concerns:
• Data Distribution
• Task Distribution

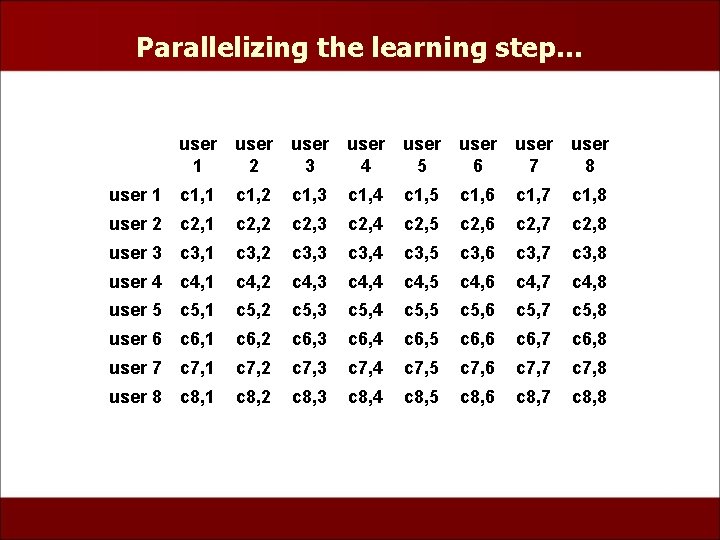

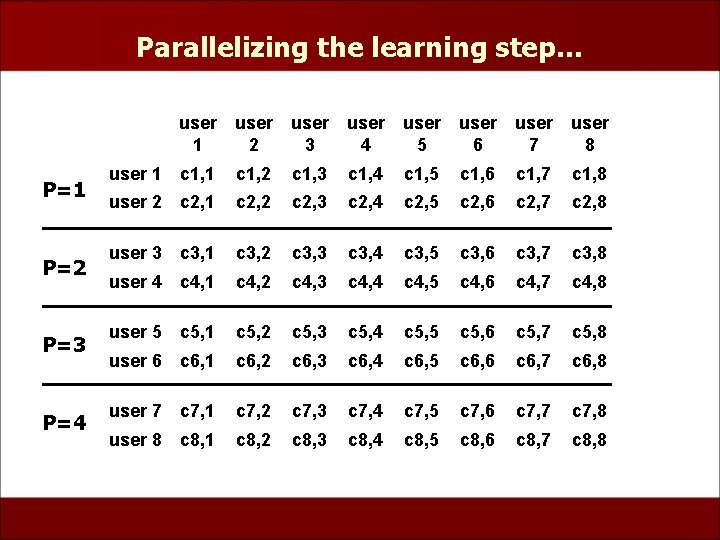

Parallelizing the learning step…

| | user 1 | user 2 | user 3 | user 4 | user 5 | user 6 | user 7 | user 8 |
|---|---|---|---|---|---|---|---|---|
| user 1 | c1,1 | c1,2 | c1,3 | c1,4 | c1,5 | c1,6 | c1,7 | c1,8 |
| user 2 | c2,1 | c2,2 | c2,3 | c2,4 | c2,5 | c2,6 | c2,7 | c2,8 |
| user 3 | c3,1 | c3,2 | c3,3 | c3,4 | c3,5 | c3,6 | c3,7 | c3,8 |
| user 4 | c4,1 | c4,2 | c4,3 | c4,4 | c4,5 | c4,6 | c4,7 | c4,8 |
| user 5 | c5,1 | c5,2 | c5,3 | c5,4 | c5,5 | c5,6 | c5,7 | c5,8 |
| user 6 | c6,1 | c6,2 | c6,3 | c6,4 | c6,5 | c6,6 | c6,7 | c6,8 |
| user 7 | c7,1 | c7,2 | c7,3 | c7,4 | c7,5 | c7,6 | c7,7 | c7,8 |
| user 8 | c8,1 | c8,2 | c8,3 | c8,4 | c8,5 | c8,6 | c8,7 | c8,8 |

Parallelizing the learning step…
Processors: P=1, P=2, P=3, P=4

| | user 1 | user 2 | user 3 | user 4 | user 5 | user 6 | user 7 | user 8 |
|---|---|---|---|---|---|---|---|---|
| user 1 | c1,1 | c1,2 | c1,3 | c1,4 | c1,5 | c1,6 | c1,7 | c1,8 |
| user 2 | c2,1 | c2,2 | c2,3 | c2,4 | c2,5 | c2,6 | c2,7 | c2,8 |
| user 3 | c3,1 | c3,2 | c3,3 | c3,4 | c3,5 | c3,6 | c3,7 | c3,8 |
| user 4 | c4,1 | c4,2 | c4,3 | c4,4 | c4,5 | c4,6 | c4,7 | c4,8 |
| user 5 | c5,1 | c5,2 | c5,3 | c5,4 | c5,5 | c5,6 | c5,7 | c5,8 |
| user 6 | c6,1 | c6,2 | c6,3 | c6,4 | c6,5 | c6,6 | c6,7 | c6,8 |
| user 7 | c7,1 | c7,2 | c7,3 | c7,4 | c7,5 | c7,6 | c7,7 | c7,8 |
| user 8 | c8,1 | c8,2 | c8,3 | c8,4 | c8,5 | c8,6 | c8,7 | c8,8 |


Parallelizing the learning step…
• store data as user[movie] = rating
• each processor holds all rating data for n/p users
• calculate each ci,j
• the calculation requires message passing (only 1/p of the correlations can be calculated locally within a node)
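The 1/p figure can be checked with a small simulation. This sketch assumes a block distribution of users over ranks (the slides do not say which distribution is used) and counts the entries ci,j whose two users sit on the same rank; the names `owner` and `local_fraction` are hypothetical.

```python
def owner(user, n, p):
    """Rank holding `user` (0-based) under a block distribution of n users."""
    block = -(-n // p)  # ceiling division: users per rank
    return user // block

def local_fraction(n, p):
    """Fraction of the n*n entries c[i][j] whose two users share a rank."""
    local = sum(
        1
        for i in range(n)
        for j in range(n)
        if owner(i, n, p) == owner(j, n, p)
    )
    return local / (n * n)

# 8 users on 4 processors, as in the matrix above.
print(local_fraction(8, 4))  # 0.25
```

Every other entry needs ratings held by a different rank, which is where the message passing comes from.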

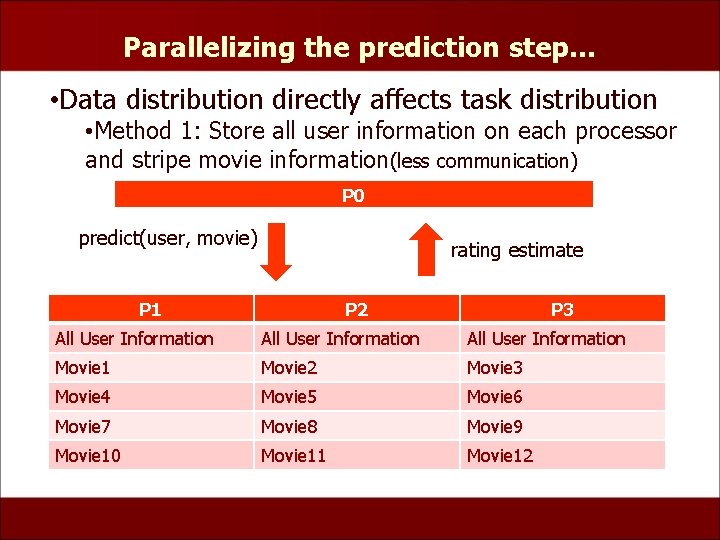

Parallelizing the prediction step…
• Data distribution directly affects task distribution
• Method 1: Store all user information on each processor and stripe movie information (less communication)
(Diagram labels: predict(user, movie) → rating estimate; processors P0, P1, P2, P3; each holds All User Information plus a subset of Movies 1-12)
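One way to picture Method 1 is a fixed mapping from movie to rank, so that a predict(user, movie) request can be routed to the single processor holding that movie's ratings. The round-robin layout below is an assumption (the diagram does not make the exact assignment clear), and `stripe` and `owner_of` are hypothetical names.

```python
def stripe(num_movies, num_procs):
    """Assign movie ids 1..num_movies to ranks round-robin (assumed layout)."""
    layout = {rank: [] for rank in range(num_procs)}
    for movie in range(1, num_movies + 1):
        layout[(movie - 1) % num_procs].append(movie)
    return layout

def owner_of(movie, num_procs):
    """Rank that holds the ratings for `movie` under the same layout."""
    return (movie - 1) % num_procs

layout = stripe(12, 4)
print(layout[0])       # [1, 5, 9]
print(owner_of(7, 4))  # 2
```

Because every rank already has all user information, the owning rank can produce the estimate without consulting the others.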

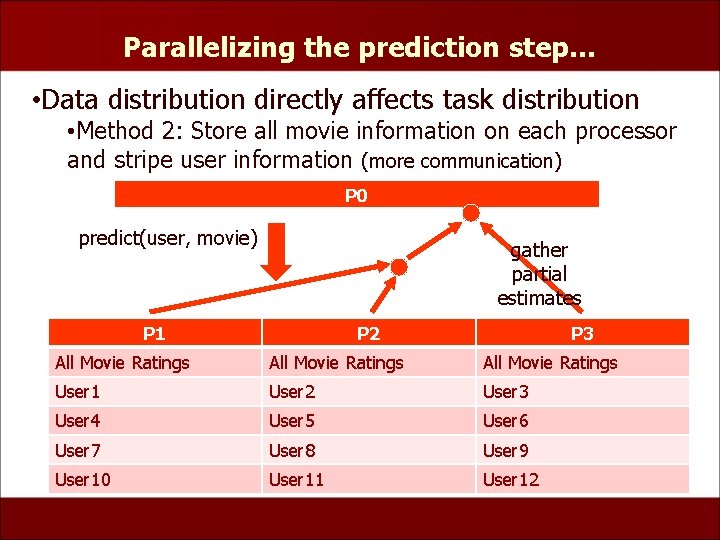

Parallelizing the prediction step…
• Data distribution directly affects task distribution
• Method 2: Store all movie information on each processor and stripe user information (more communication)
(Diagram labels: predict(user, movie) → gather partial estimates; processors P0, P1, P2, P3; each holds All Movie Ratings plus a subset of Users 1-12)
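With users striped across processors, each rank can only contribute a partial estimate, and the pieces have to be gathered. A minimal sketch, assuming each rank reports a (weighted rating sum, weight sum) pair that is then added up; in an MPI program this would likely map onto a sum reduction, but the code below only simulates the ranks with plain Python lists. The names `partial` and `combine` and the split of users over two ranks are hypothetical.

```python
def partial(sims, ratings):
    """(weighted rating sum, weight sum) over the users held by one rank."""
    seen = [u for u in sims if u in ratings]
    return (
        sum(sims[u] * ratings[u] for u in seen),
        sum(sims[u] for u in seen),
    )

def combine(partials):
    """Merge per-rank partial sums into one weighted-average estimate."""
    num = sum(p[0] for p in partials)
    den = sum(p[1] for p in partials)
    return num / den if den else None

# Simulated ranks: rank 0 holds Turing and Knuth, rank 1 holds Boole.
rank0 = partial({"Turing": 0.125, "Knuth": 0.500}, {"Turing": 5, "Knuth": 3})
rank1 = partial({"Boole": 0.500}, {"Boole": 1})
print(round(combine([rank0, rank1]), 3))  # 2.333
```

Sending two numbers per rank keeps the gathered message small regardless of how many users each rank holds.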

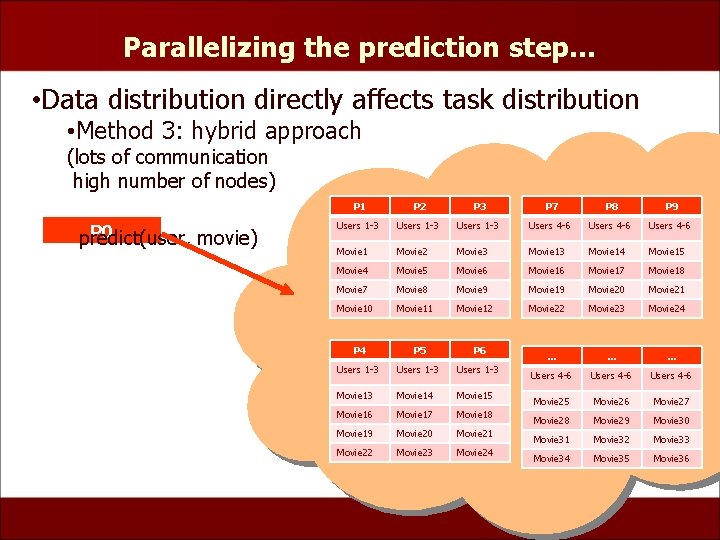

Parallelizing the prediction step…
• Data distribution directly affects task distribution
• Method 3: hybrid approach (lots of communication, high number of nodes)
(Diagram labels: predict(user, movie); processors labeled P0 to P9; each holds a block of users, such as Users 1-3 or Users 4-6, together with a block of movies drawn from Movies 1-36 …)

Our Present Implementation
• operates on a trimmed-down dataset
• stripes movie information and stores the similarity matrix on each processor
• this won’t scale well!
• storing all movie information on each node would be optimal, but nic.mst.edu can’t handle it

In summary…
• tackling the Netflix Prize requires lots of data handling
• we are working toward an implementation that can operate on the entire training set
• simple collaborative filtering should get us close to the old Cinematch performance
