The Motivation: Statements by Prof Raj Reddy
• Information will be read by both humans and machines, more so by machines.
• If you are not in Google, you are not there!
What does Google do?

The Google
• It removes the stop words
• It stems
• It does not disambiguate
• It makes you wonder why you did such a beautiful translation

The Machine Translation
• Often follows the law of diminishing returns (asymptotic)
• Requires huge human, material and computer resources
• Assumes that the user is either unaware of the context or has no intelligence
• Almost impossible to attain perfect human-like translation
• Lexical, syntactic and semantic errors

Our Experience • The Migrant workers in India pick up the local alien language in less than a month • The Butler English • Learning by Experience and usage

What is a Good Translation
• A translation is good if the user does not get irritated when reading the translated text
• Intelligent human beings have more resistance to irritation
• If we design a Machine Translation system that assumes intelligent users, then the resources and time required would be significantly less
• Intelligent users would be more tolerant of syntactic and semantic errors

Good Enough Translation
• Lexical errors are easy to handle, since bilingual dictionaries can be built easily
• Mike Shamos’s concept of Universal Dictionary and Disambiguation
• Collocation frequencies have been exploited by us in Automatic Summarization; their manifestation is the phrase dictionary
• Add to this simple aligned corpora of human-translated, frequently used sentences
• Mine the sentences for new phrases and mine the phrases for new words
• Use the Wikipedia approach to enhance the learning
• First prototype built by Hemant, Madhavi, Raj and me for Hindi
• Later extended to Kannada and Tamil by Rashmi, Sravan, Sheik, Anand, Vivek and Vinodini
• Now we have the ability to make EBMT good enough in 30 days

Universal Dictionary • Mike Shamos’s contribution to UDL • A collection of dictionaries in various languages. • Contains many European languages. • Given a word in English we can get the meaning of the word in various languages at one click • A total of five Indian languages were added to the Universal dictionary: • Kannada, Telugu, Tamil, Malayalam, Hindi • Microsoft Access Database.

Good Enough Translation

Example Based Machine Translation A good enough Translation • Requires: –

Problem statement
Aim:
• To obtain a “good enough” translation
Constraints:
• Limited data
• Limited processing time

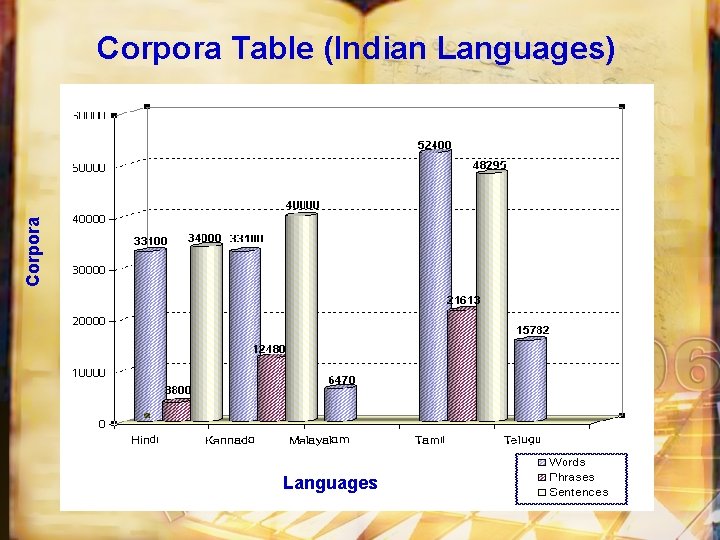

Corpora Table (Indian Languages)

| Language | Words | Phrases | Sentences |
|---|---|---|---|
| Hindi | 33947 | 3800 | 34000 |
| Kannada | 33000 | 12480 | 40000 |
| Malayalam | 6470 | - | - |
| Tamil | 52400 | 21613 | 48295 |
| Telugu | 15782 | - | - |

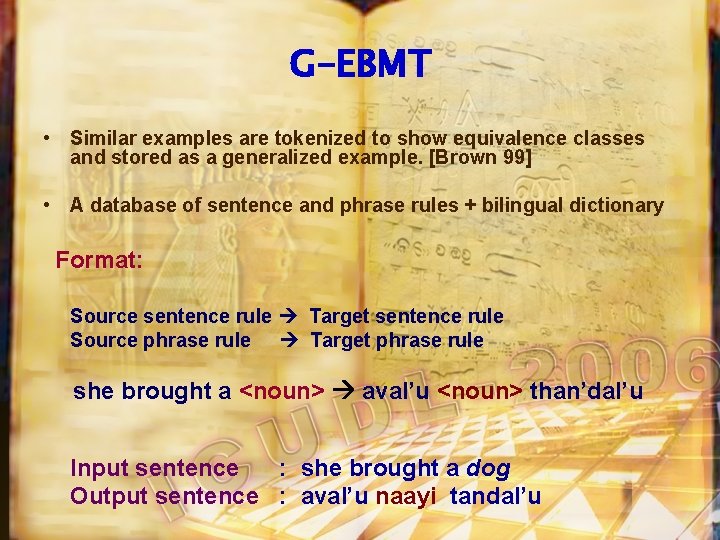

G-EBMT
• Similar examples are tokenized to show equivalence classes and stored as a generalized example [Brown 99].
• A database of sentence and phrase rules, plus a bilingual dictionary.
Format: source sentence rule → target sentence rule; source phrase rule → target phrase rule
Example rule: she brought a <noun> → aval’u <noun> than’dal’u
Input sentence: she brought a dog
Output sentence: aval’u naayi tandal’u
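The rule lookup described on this slide can be sketched as a small template matcher. This is only an illustration under stated assumptions: the names `SENTENCE_RULES`, `NOUN_DICT` and `translate_with_rules` are hypothetical, the `<noun>` slot is assumed to capture exactly one word, and the transliteration uses plain apostrophes. It is not the authors' implementation.

```python
import re

# Hypothetical rule store: generalized source template -> target template.
SENTENCE_RULES = {
    "she brought a <noun>": "aval'u <noun> tandal'u",
}

# Hypothetical bilingual dictionary used to fill the <noun> slot.
NOUN_DICT = {"dog": "naayi"}


def translate_with_rules(sentence):
    """Return a translation if a generalized rule matches, else None."""
    text = sentence.strip().lower()
    for source, target in SENTENCE_RULES.items():
        # Turn the template into a regex; the <noun> slot captures one word.
        pattern = re.escape(source).replace(re.escape("<noun>"), r"(\w+)")
        match = re.fullmatch(pattern, text)
        if match and match.group(1) in NOUN_DICT:
            return target.replace("<noun>", NOUN_DICT[match.group(1)])
    return None
```

Returning `None` when nothing matches leaves room for a back-off strategy when the example base has no suitable rule.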

Word Order Free: A feature of Indian languages
Root words take different forms according to their meaning in the sentence, i.e. the sequential order of the words in the sentence is not important, unlike in English. For example, “I am going home” and “Home going I am” may mean the same thing in Indian languages.

The Great War of Mahabharat took place between the Pandavas and the Kauravas.

Krishna told Yudhisthira that Drona would finish his entire army if not checked. The only way to check Drona was to make him lay down his arms.

That was possible only when Drona was told that his son Ashwathama had died. Telling a lie is not a good practice even in wars. Krishna came up with an idea, Yudhisthira agreed reluctantly.

Bhima killed an elephant named ‘Ashwathama’. Then he loudly announced for all to hear, ‘Ashwathama Hathah Kunjarah’ (Ashwathama, an elephant, is killed).

Lord Krishna blows his conch and makes the word Kunjarah (elephant) inaudible in the battlefield.

Drona turned to Yudhisthira and asked if that was true. Yudhisthira said, “Ashwathama is killed”, and added in a low voice, “an elephant”.

Dronacharya hears only the first two words, ‘Ashwathama Hathah’, and presumes that his son Ashwathama has been killed.

He gives up his weapons and sits in prayer. Dhristadymna takes advantage of the opportunity and kills Dronacharya.

This story illustrates that Indian languages like Sanskrit are word-order-free languages. Hence a good lexical corpus would help in producing a nearly good enough translation.

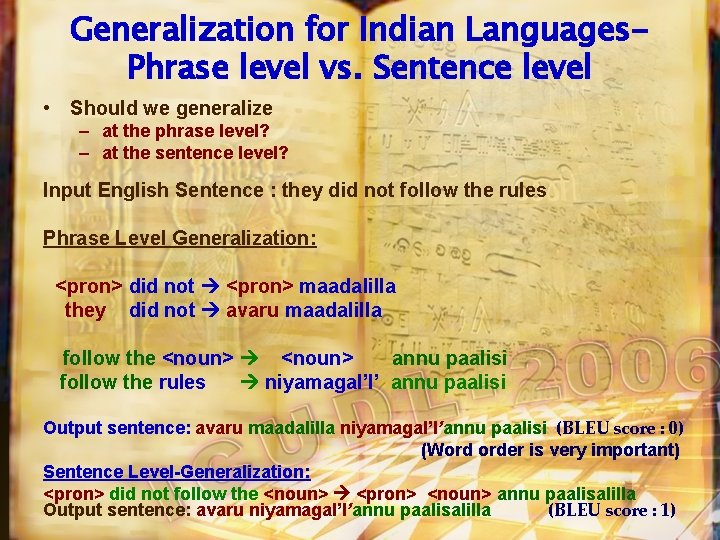

Generalization for Indian Languages: Phrase level vs. Sentence level
• Should we generalize at the phrase level or at the sentence level?
Input English sentence: they did not follow the rules
Phrase-level generalization:
<pron> did not → <pron> maadalilla
they did not → avaru maadalilla
follow the <noun> → <noun> annu paalisi
follow the rules → niyamagal’l’ annu paalisi
Output sentence: avaru maadalilla niyamagal’l’annu paalisi (BLEU score: 0; word order is very important)
Sentence-level generalization:
<pron> did not follow the <noun> → <pron> <noun> annu paalisalilla
Output sentence: avaru niyamagal’l’annu paalisalilla (BLEU score: 1)

Motivation for Linguistic Rules
What happens if the input sentence doesn’t match any of the rules? When will this happen? What do we do if it does?
WHEN: It will surely happen, since we can’t have an infinite set of examples.
WHAT TO DO: The most obvious thing is to fall back on word-to-word translation, which is not a good idea as we will need to rearrange the words.
Can we add linguistic rules?

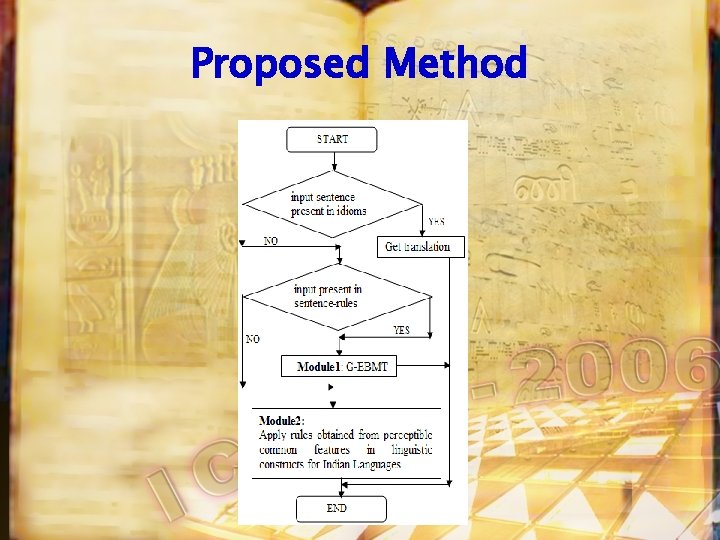

Proposed Method

Managing Idioms
• The meaning of an idiom cannot be inferred from the meanings of the words that make it up
• Idioms are stored separately in a file in the following format:
bite the hand that feeds → un'd'a manege erad'u bage
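A separate idiom store can be consulted before any word-level processing. The sketch below assumes one idiom per line with a tab between the English idiom and its translation; the slide does not state the actual file layout, and `load_idioms` and `find_idiom` are hypothetical names.

```python
def load_idioms(lines, sep="\t"):
    """Parse 'english idiom<sep>translation' lines into a dictionary."""
    idioms = {}
    for line in lines:
        line = line.strip()
        if not line or sep not in line:
            continue  # skip blank or malformed lines
        source, target = line.split(sep, 1)
        idioms[source.strip().lower()] = target.strip()
    return idioms


def find_idiom(sentence, idioms):
    """Return (idiom, translation) for the longest idiom found, else None."""
    # Pad with spaces so that only whole-word sequences can match.
    text = " " + " ".join(sentence.lower().split()) + " "
    for source in sorted(idioms, key=len, reverse=True):
        if " " + source + " " in text:
            return source, idioms[source]
    return None
```

Trying the longest idioms first avoids a short idiom shadowing a longer one that contains it.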

Applying Language Specific Rules

Stage 1: Tagger and Stemmer (1)
Input sentence: he is playing in my house
Output of the tagger*: he_PP is_VBZ play_VBG in_IN my_PP$ house_NN
• PP: personal pronoun
• VBZ: verb, 3rd person singular present
• VBG: verb, gerund or present participle
• IN: preposition or subordinating conjunction
• PP$: possessive pronoun
• NN: noun, singular or mass
* Helmut Schmid, “Probabilistic Part-of-Speech Tagging using Decision Trees”, Proceedings of International Conference on New Methods in Language Processing, September 1994.

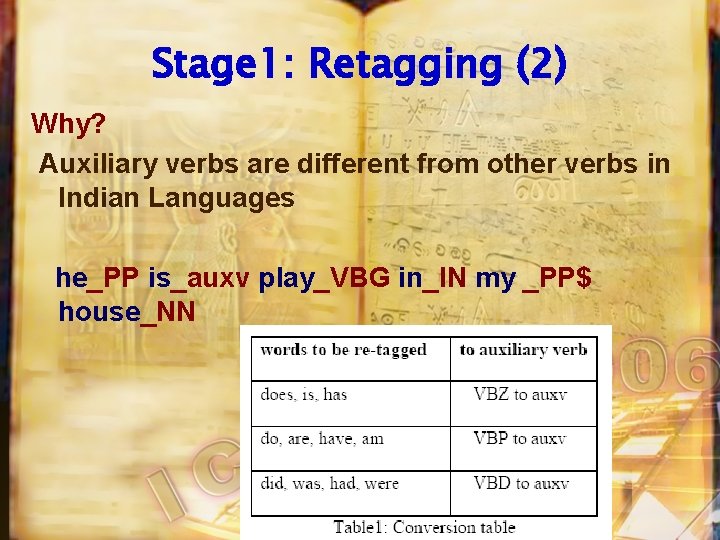

Stage 1: Retagging (2)
Why? Auxiliary verbs are different from other verbs in Indian languages.
he_PP is_auxv play_VBG in_IN my_PP$ house_NN
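The retagging step can be sketched as a pass over (word, tag) pairs. The `AUXILIARIES` set and the `retag` function are assumptions made for illustration; the slides do not list which words the system treats as auxiliaries.

```python
# Hypothetical list of auxiliary verbs; the actual list is not given in the slides.
AUXILIARIES = {"is", "am", "are", "was", "were", "has", "have", "had",
               "do", "does", "did"}


def retag(tagged):
    """Replace the tag of auxiliary verbs with 'auxv'; keep other tokens as-is."""
    result = []
    for word, tag in tagged:
        if word.lower() in AUXILIARIES and tag.startswith("V"):
            result.append((word, "auxv"))
        else:
            result.append((word, tag))
    return result
```

Applied to the tagger output for "he is playing in my house", only `is_VBZ` changes, becoming `is_auxv`.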

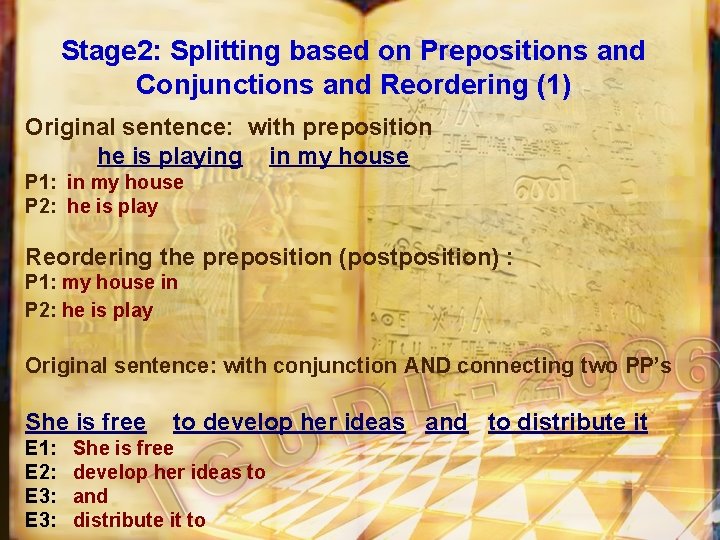

Stage 2: Splitting based on Prepositions and Conjunctions and Reordering (1)
Original sentence with a preposition: he is playing in my house
P1: in my house
P2: he is play
Reordering the preposition (as a postposition):
P1: my house in
P2: he is play
Original sentence with the conjunction AND connecting two PPs: She is free to develop her ideas and to distribute it
E1: She is free
E2: develop her ideas to
E3: and distribute it to

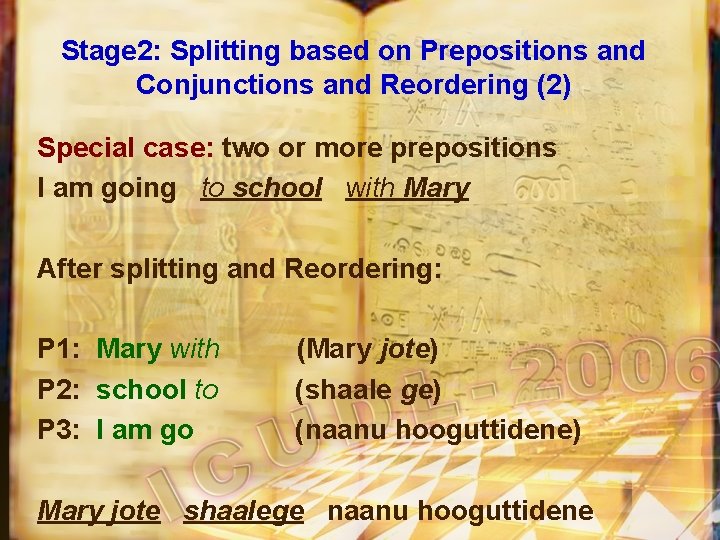

Stage 2: Splitting based on Prepositions and Conjunctions and Reordering (2)
Special case: two or more prepositions
I am going to school with Mary
After splitting and reordering:
P1: Mary with (Mary jote)
P2: school to (shaale ge)
P3: I am go (naanu hooguttidene)
Result: Mary jote shaalege naanu hooguttidene
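The preposition handling of Stage 2 can be sketched as follows. The function name `split_and_reorder` is hypothetical, the input is assumed to be already tagged and stemmed, and the conjunction case is deliberately left out. The ordering (prepositional phrases in reverse order, main clause last) is inferred from the two examples on the slides.

```python
def split_and_reorder(tagged):
    """Split at prepositions (tag IN) and turn each into a postposition.

    Returns a list of parts: prepositional phrases in reverse order,
    each with its preposition moved to the end, then the main clause.
    """
    parts, current = [], []
    for word, tag in tagged:
        if tag == "IN":
            parts.append(current)      # close the part before the preposition
            current = [(word, tag)]    # the preposition starts a new part
        else:
            current.append((word, tag))
    parts.append(current)

    main, phrases = parts[0], parts[1:]
    reordered = [phrase[1:] + phrase[:1] for phrase in reversed(phrases)]
    return [part for part in reordered + [main] if part]
```

For "I am go to school with Mary" this yields the parts "Mary with", "school to" and "I am go", in that order.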

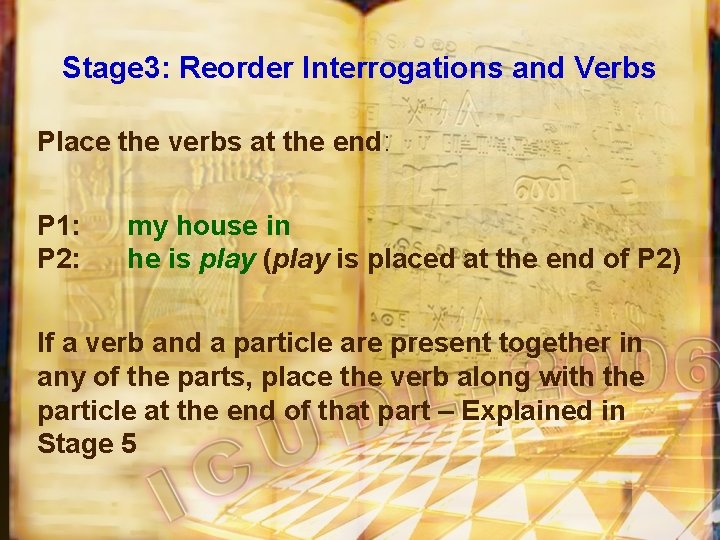

Stage 3: Reorder Interrogations and Verbs
Place the verbs at the end:
P1: my house in
P2: he is play (play is placed at the end of P2)
If a verb and a particle are present together in any of the parts, place the verb along with the particle at the end of that part (explained in Stage 5).

Stage 4: Reorder Auxiliary/Modal verbs
P1: my house in
P2: he play is (is is placed at the end of P2)
Special case: He is not playing in my house
E1: my house in
E2: he play is not
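Stages 3 and 4 together amount to a stable reordering inside each part: other words first, then verbs, then auxiliary or modal verbs, then "not". The sketch below assumes the `auxv` tag from the retagging step and the Penn Treebank tag `MD` for modals; `reorder_part` is a hypothetical name, and interrogatives and verb-particle pairs are not handled.

```python
def reorder_part(tagged):
    """Stable reordering: other words, verbs, auxiliaries/modals, then 'not'."""
    def rank(token):
        word, tag = token
        if word.lower() == "not":
            return 3
        if tag in ("auxv", "MD"):
            return 2
        if tag.startswith("V"):
            return 1
        return 0

    # sorted() is stable, so words of equal rank keep their original order.
    return sorted(tagged, key=rank)
```

Because the sort is stable, a part without verbs, such as "my house in", comes out unchanged.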

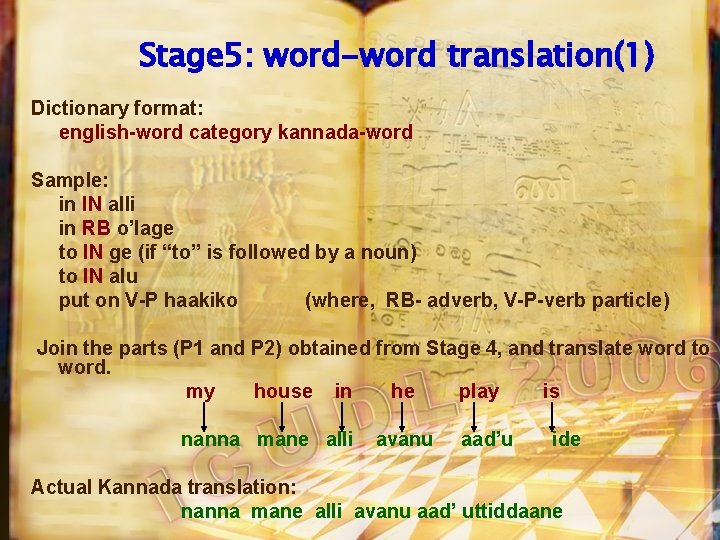

Stage 5: Word-to-word translation (1)
Dictionary format: english-word category kannada-word
Sample:
in IN alli
in RB o’lage
to IN ge (if “to” is followed by a noun)
to IN alu
put on V-P haakiko
(where RB: adverb, V-P: verb particle)
Join the parts (P1 and P2) obtained from Stage 4 and translate word by word:
my house in he play is
nanna mane alli avanu aad’u ide
Actual Kannada translation: nanna mane alli avanu aad’uttiddaane

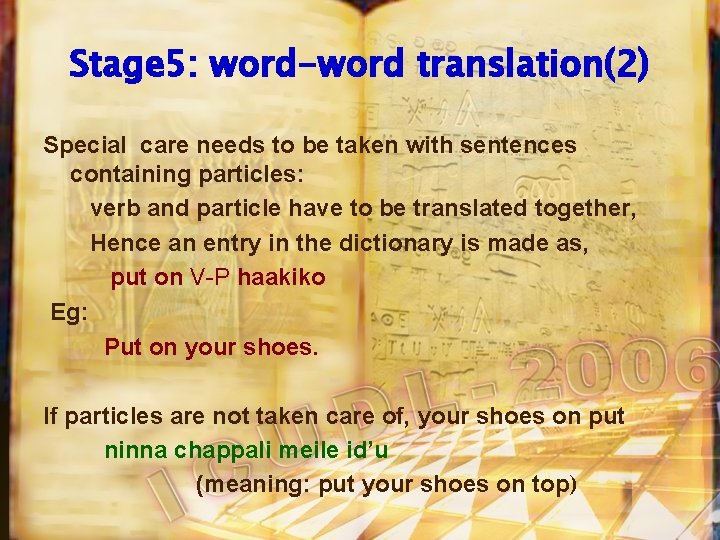

Stage 5: Word-to-word translation (2)
Special care needs to be taken with sentences containing particles: the verb and particle have to be translated together. Hence an entry is made in the dictionary as:
put on V-P haakiko
Example: Put on your shoes.
If particles are not taken care of, the result is:
your shoes on put
ninna chappali meile id’u (meaning: put your shoes on top)
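The dictionary lookup of Stage 5, including the verb-particle case, can be sketched as below. The dictionary is keyed by (english-word, category) as in the sample format. The entries for "my", "house", "he", "play" and "is" are read off the word-to-word example, but the categories attached to them are assumptions, and `translate_words` is a hypothetical name. The context rule for "to" is omitted.

```python
# (english-word, category) -> kannada-word; categories for the last five
# entries are assumed for illustration.
DICTIONARY = {
    ("in", "IN"): "alli",
    ("in", "RB"): "o'lage",
    ("put on", "V-P"): "haakiko",
    ("my", "PP$"): "nanna",
    ("house", "NN"): "mane",
    ("he", "PP"): "avanu",
    ("play", "VBG"): "aad'u",
    ("is", "auxv"): "ide",
}


def translate_words(tagged):
    """Translate word by word, trying verb + particle pairs as one unit first."""
    out, i = [], 0
    while i < len(tagged):
        word, tag = tagged[i]
        if i + 1 < len(tagged):
            pair = (word.lower() + " " + tagged[i + 1][0].lower(), "V-P")
            if pair in DICTIONARY:
                out.append(DICTIONARY[pair])
                i += 2
                continue
        # Unknown words are passed through unchanged.
        out.append(DICTIONARY.get((word.lower(), tag), word))
        i += 1
    return " ".join(out)
```

Looking up the two-word unit before the single word is what keeps "put on" from being translated as two unrelated words.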

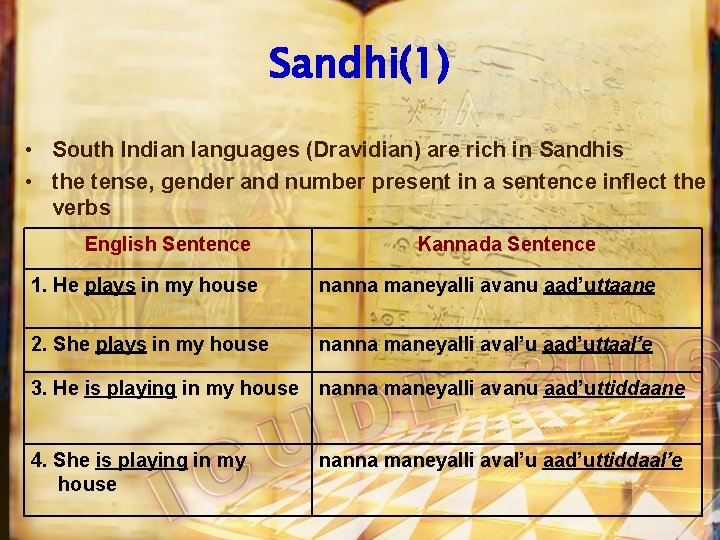

Sandhi(1)
• South Indian languages (Dravidian) are rich in sandhis
• The tense, gender and number present in a sentence inflect the verbs

| | English Sentence | Kannada Sentence |
|---|---|---|
| 1 | He plays in my house | nanna maneyalli avanu aad’uttaane |
| 2 | She plays in my house | nanna maneyalli aval’u aad’uttaal’e |
| 3 | He is playing in my house | nanna maneyalli avanu aad’uttiddaane |
| 4 | She is playing in my house | nanna maneyalli aval’u aad’uttiddaal’e |

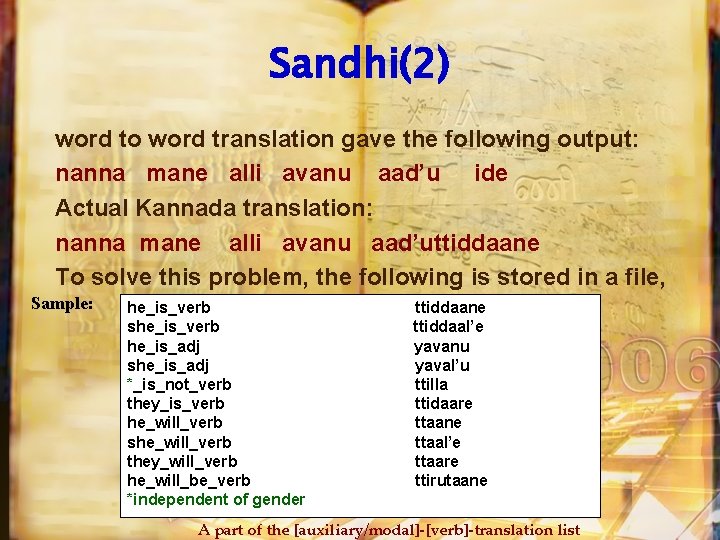

Sandhi(2)
Word-to-word translation gave the following output: nanna mane alli avanu aad’u ide
Actual Kannada translation: nanna mane alli avanu aad’uttiddaane
To solve this problem, the following is stored in a file. Sample (a part of the [auxiliary/modal]-[verb]-translation list):

| Sequence | Suffix |
|---|---|
| he_is_verb | ttiddaane |
| she_is_verb | ttiddaal’e |
| he_is_adj | yavanu |
| she_is_adj | yaval’u |
| *_is_not_verb | ttilla |
| they_is_verb | ttidaare |
| he_will_verb | ttaane |
| she_will_verb | ttaal’e |
| they_will_verb | ttaare |
| he_will_be_verb | ttirutaane |

* independent of gender

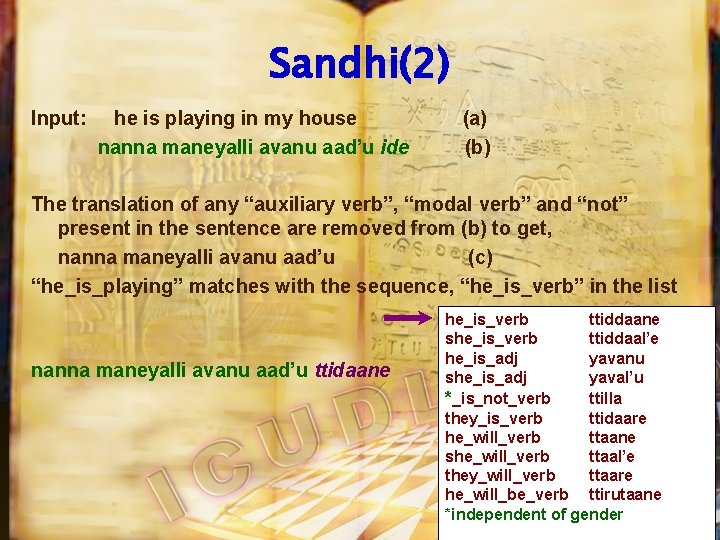

Sandhi(2)
Input: he is playing in my house (a)
Word-to-word output: nanna maneyalli avanu aad’u ide (b)
The translations of any auxiliary verb, modal verb and “not” present in the sentence are removed from (b) to get:
nanna maneyalli avanu aad’u (c)
“he_is_playing” matches the sequence “he_is_verb” in the list, so the corresponding suffix is attached to (c):
nanna maneyalli avanu aad’u ttiddaane

| Sequence | Suffix |
|---|---|
| he_is_verb | ttiddaane |
| she_is_verb | ttiddaal’e |
| he_is_adj | yavanu |
| she_is_adj | yaval’u |
| *_is_not_verb | ttilla |
| they_is_verb | ttidaare |
| he_will_verb | ttaane |
| she_will_verb | ttaal’e |
| they_will_verb | ttaare |
| he_will_be_verb | ttirutaane |

* independent of gender
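The suffix lookup can be sketched as a dictionary keyed by the subject, auxiliary and category sequence, with `*` as a wildcard subject. Only some rows of the list are reproduced, and `apply_sandhi` is a hypothetical name. How the real system builds the key, and whether the wildcard output is correct for every subject, are assumptions.

```python
# A few rows of the [auxiliary/modal]-[verb]-translation list from the slide.
SUFFIXES = {
    "he_is_verb": "ttiddaane",
    "she_is_verb": "ttiddaal'e",
    "*_is_not_verb": "ttilla",
    "they_is_verb": "ttidaare",
    "he_will_verb": "ttaane",
    "she_will_verb": "ttaal'e",
}


def apply_sandhi(subject, auxiliaries, stem, category="verb"):
    """Attach the suffix for subject + auxiliaries to the translated stem.

    Falls back to the gender-independent '*' entry, then to the bare stem.
    """
    tail = "_".join(list(auxiliaries) + [category])
    for key in (subject.lower() + "_" + tail, "*_" + tail):
        if key in SUFFIXES:
            return stem + SUFFIXES[key]
    return stem
```

For example, `apply_sandhi("he", ["is"], "aad'u")` joins the stem and the `he_is_verb` suffix into a single word, matching sentence 3 in the Sandhi(1) table.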

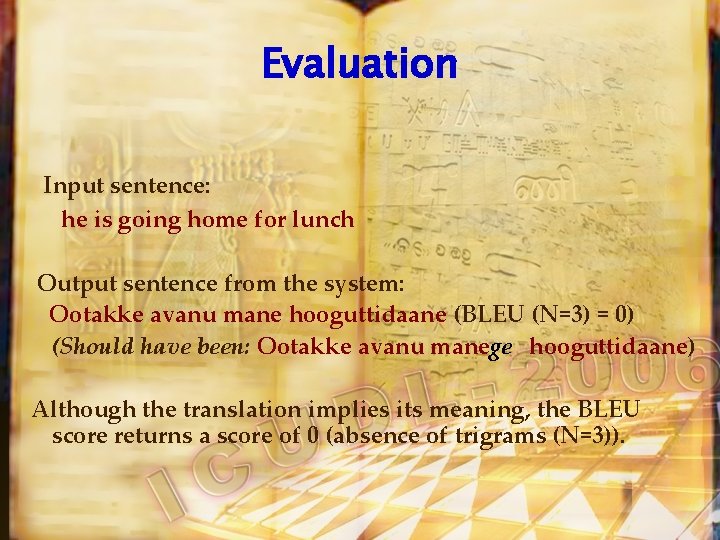

Evaluation
Input sentence: he is going home for lunch
Output sentence from the system: Ootakke avanu mane hooguttidaane (BLEU (N=3) = 0)
(Should have been: Ootakke avanu manege hooguttidaane)
Although the translation conveys the meaning, BLEU returns a score of 0 because no trigram (N=3) of the output matches the reference.

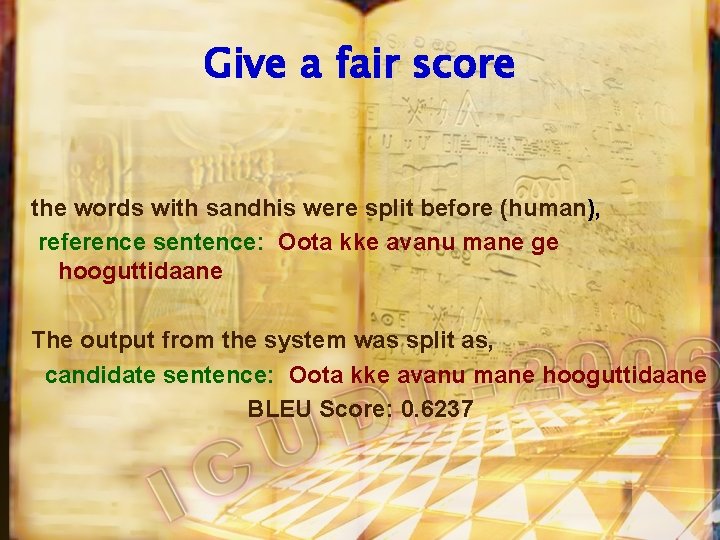

To give a fair score, the words with sandhis were split before scoring.
Reference sentence (human): Oota kke avanu mane ge hooguttidaane
Candidate sentence (the output from the system, split): Oota kke avanu mane hooguttidaane
BLEU score: 0.6237
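The effect of splitting sandhis can be checked with a small sentence-level BLEU. This is a simplified sketch with uniform weights up to N=3, a single reference, the standard brevity penalty and no smoothing. The exact BLEU variant used for the slides is not stated, so this function is not expected to reproduce the reported figure.

```python
import math
from collections import Counter


def ngrams(tokens, n):
    """Count the n-grams of a token list."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def bleu(candidate, reference, max_n=3):
    """Unsmoothed sentence-level BLEU against a single reference."""
    cand, ref = candidate.split(), reference.split()
    log_precisions = []
    for n in range(1, max_n + 1):
        cand_counts, ref_counts = ngrams(cand, n), ngrams(ref, n)
        # Clipped overlap: each n-gram counts at most as often as in the reference.
        overlap = sum(min(c, ref_counts[g]) for g, c in cand_counts.items())
        total = sum(cand_counts.values())
        if total == 0 or overlap == 0:
            return 0.0  # any zero precision makes the geometric mean zero
        log_precisions.append(math.log(overlap / total))
    brevity = 1.0 if len(cand) > len(ref) else math.exp(1 - len(ref) / len(cand))
    return brevity * math.exp(sum(log_precisions) / max_n)
```

With the unsplit pair the function returns 0 because no trigram matches; with the split pair every n-gram order has at least one match, so the score is greater than zero.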

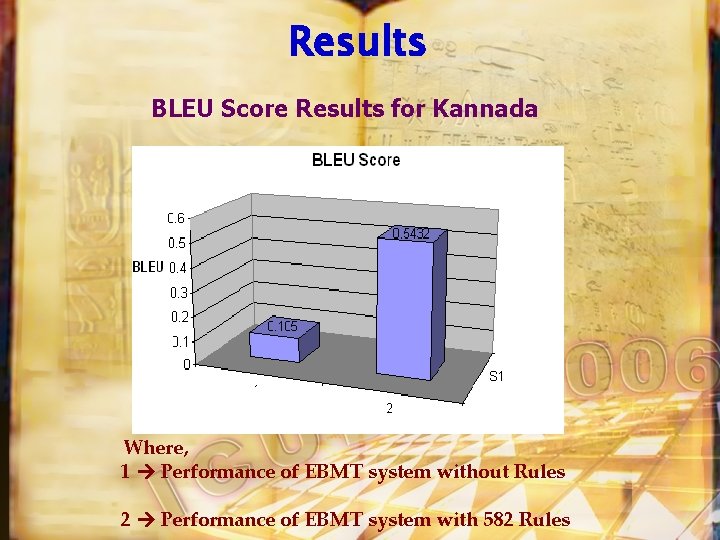

Results: BLEU score results for Kannada, where
1: Performance of EBMT system without rules
2: Performance of EBMT system with 582 rules

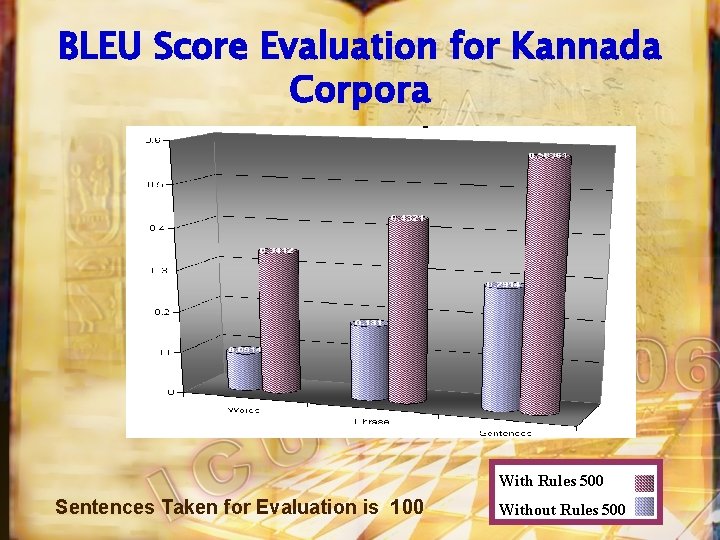

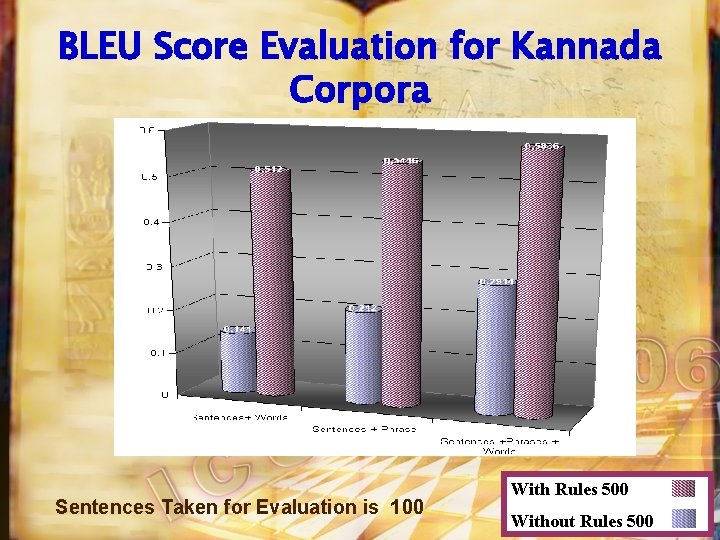

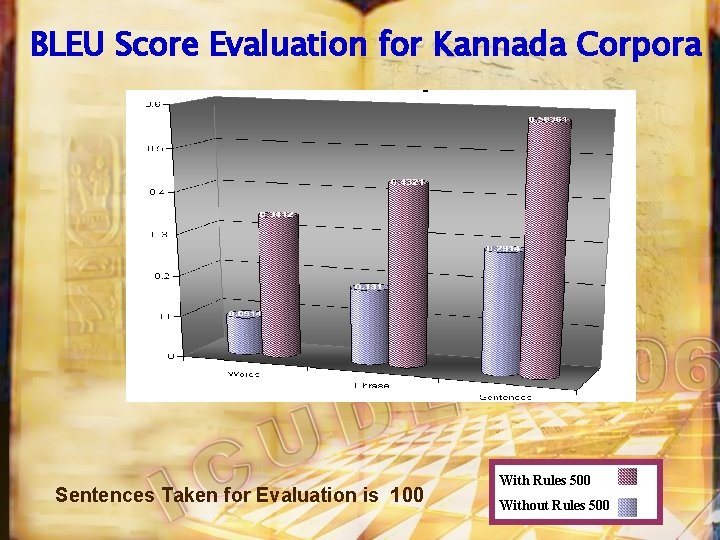

Figure: BLEU Score Evaluation for Kannada Corpora. Sentences taken for evaluation: 100. Series: With Rules (500), Without Rules (500).

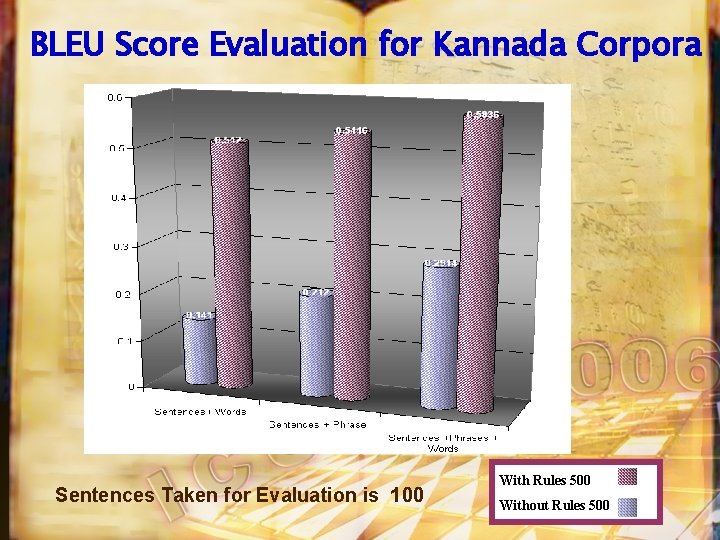

Figure: BLEU Score Evaluation for Kannada Corpora. Sentences taken for evaluation: 100. Series: With Rules (500), Without Rules (500).

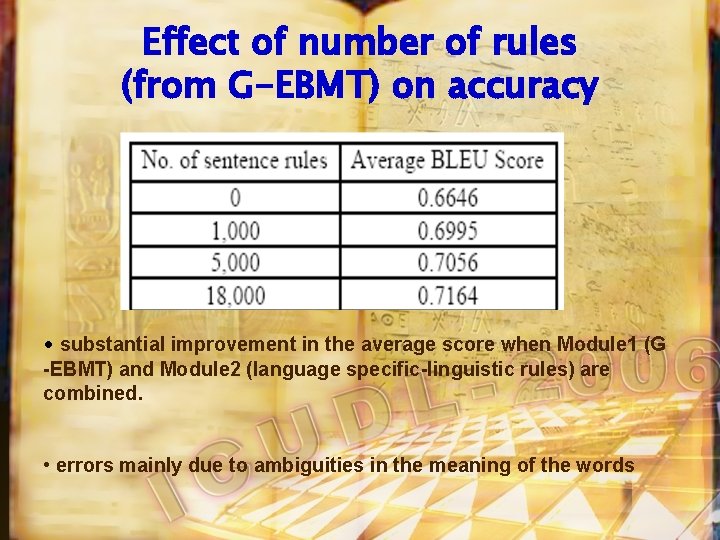

Effect of number of rules (from G-EBMT) on accuracy
• Substantial improvement in the average score when Module 1 (G-EBMT) and Module 2 (language-specific linguistic rules) are combined.
• Errors are mainly due to ambiguities in the meaning of the words.

How can we improve further • Wikipedia approach • Human Evaluation rather than BLEU • Use linguistic expertise to generate more Linguistic rules • We are also using data mining rules to infer rules from the corpora

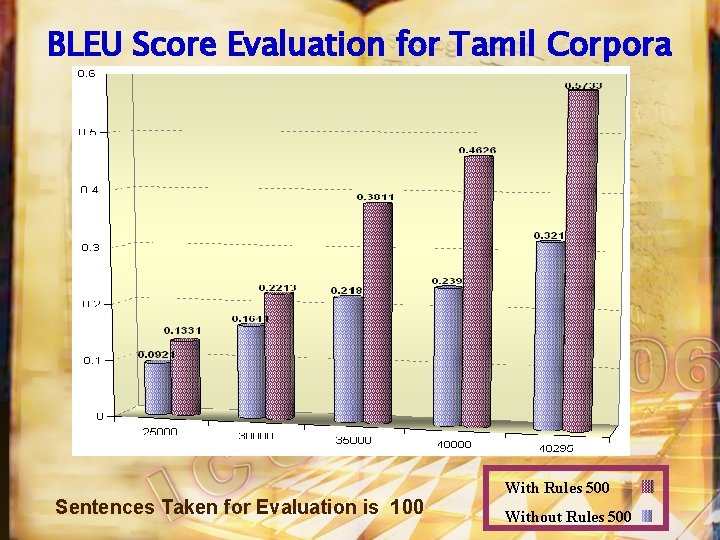

Figure: BLEU Score Evaluation for Tamil Corpora. Sentences taken for evaluation: 100. Series: With Rules (500), Without Rules (500).

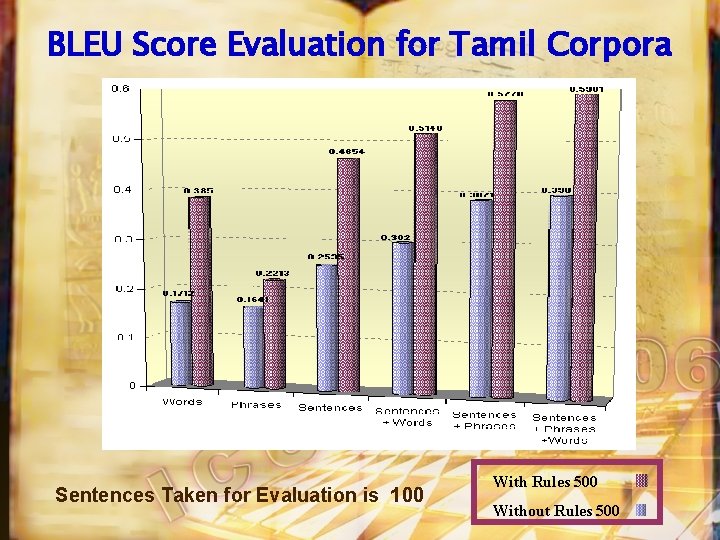

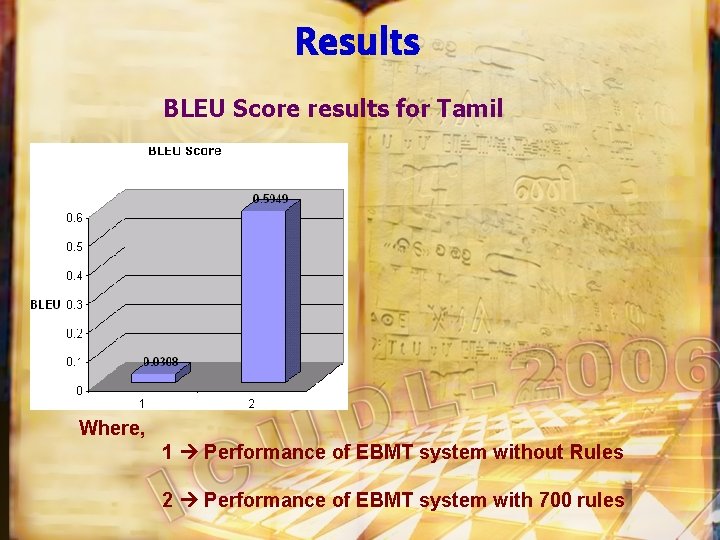

Results: BLEU score results for Tamil, where
1: Performance of EBMT system without rules
2: Performance of EBMT system with 700 rules

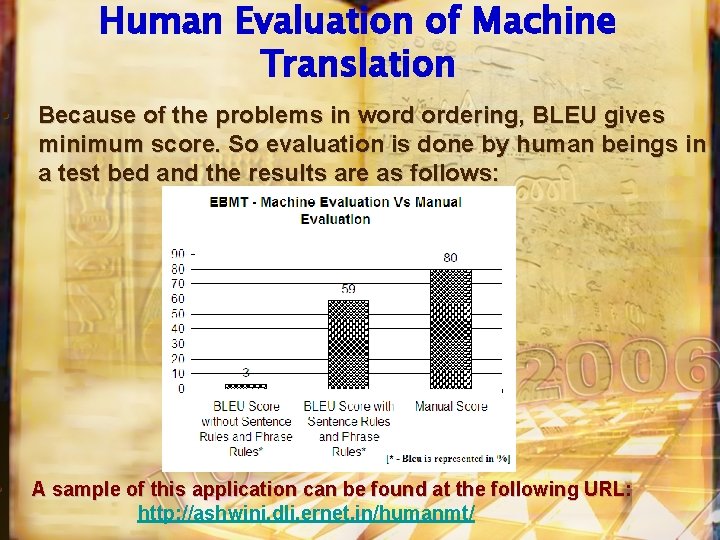

Human Evaluation of Machine Translation
• Because of the problems in word ordering, BLEU gives very low scores. So evaluation is done by human beings in a test bed, and the results are as follows:
• A sample of this application can be found at the following URL: http://ashwini.dli.ernet.in/humanmt/

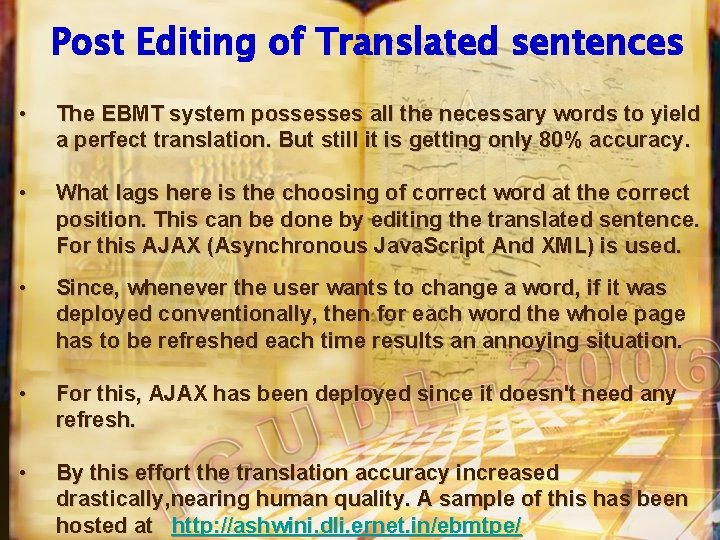

Post Editing of Translated Sentences
• The EBMT system possesses all the words necessary to yield a perfect translation, yet it reaches only 80% accuracy.
• What is lacking is the choice of the correct word at the correct position. This can be fixed by editing the translated sentence. AJAX (Asynchronous JavaScript And XML) is used for this.
• With a conventional deployment, the whole page would have to be refreshed every time the user changes a word, which is annoying.
• AJAX has been deployed because it does not need a page refresh.
• With this effort the translation accuracy increased drastically, nearing human quality. A sample has been hosted at http://ashwini.dli.ernet.in/ebmtpe/

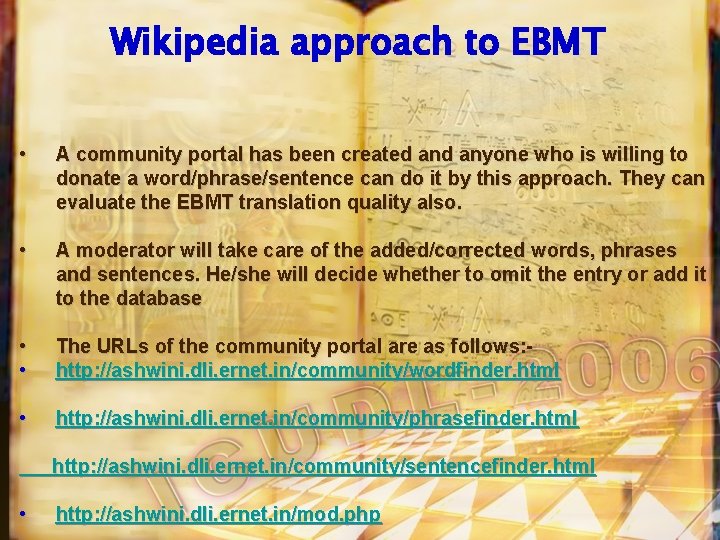

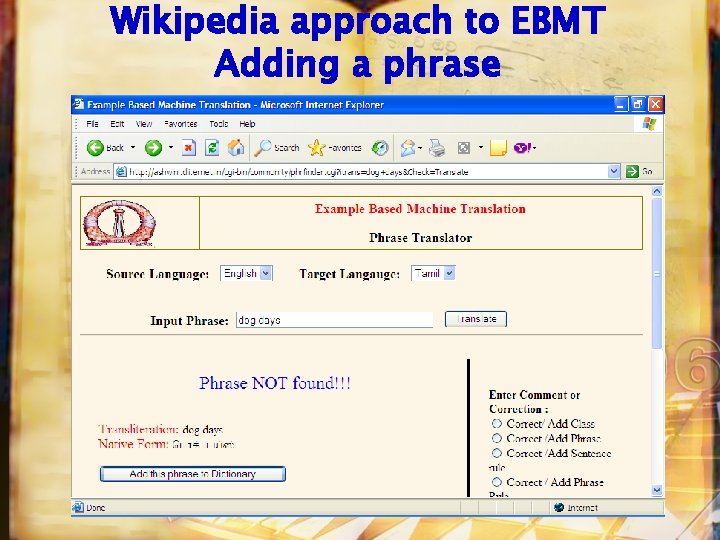

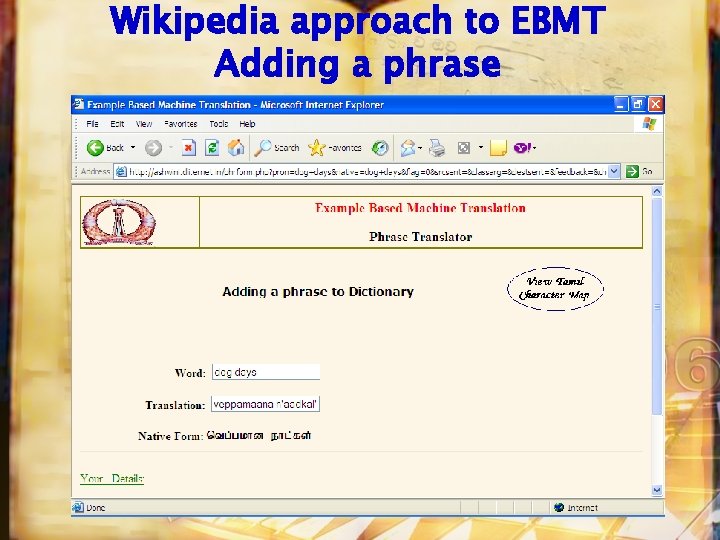

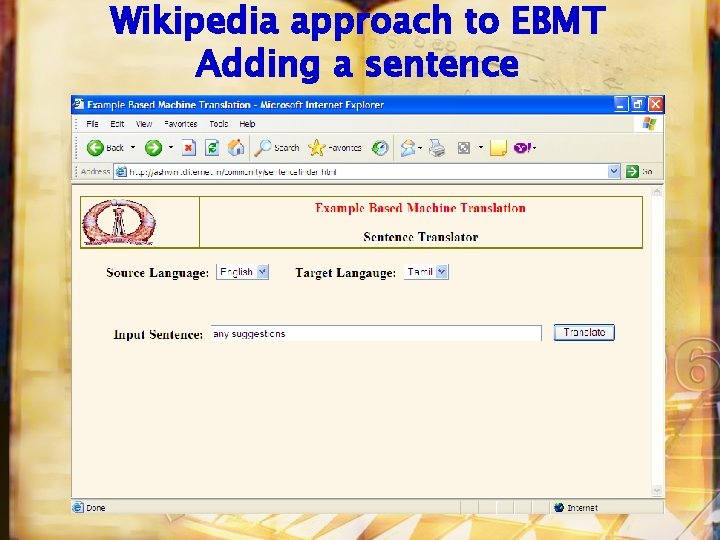

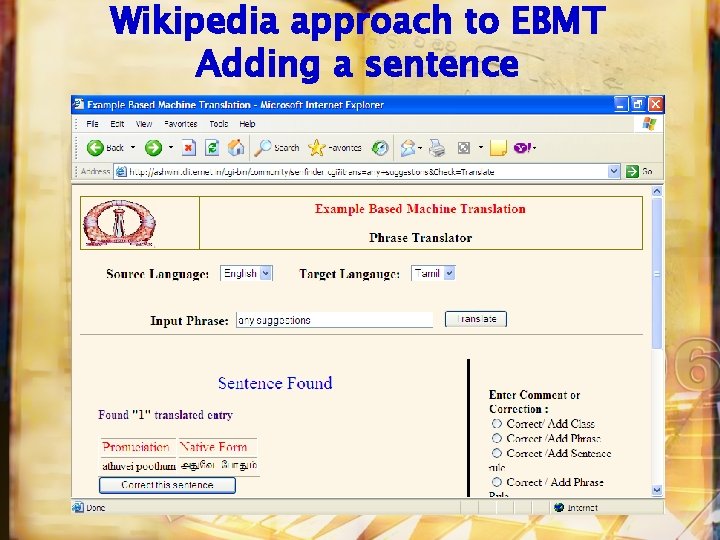

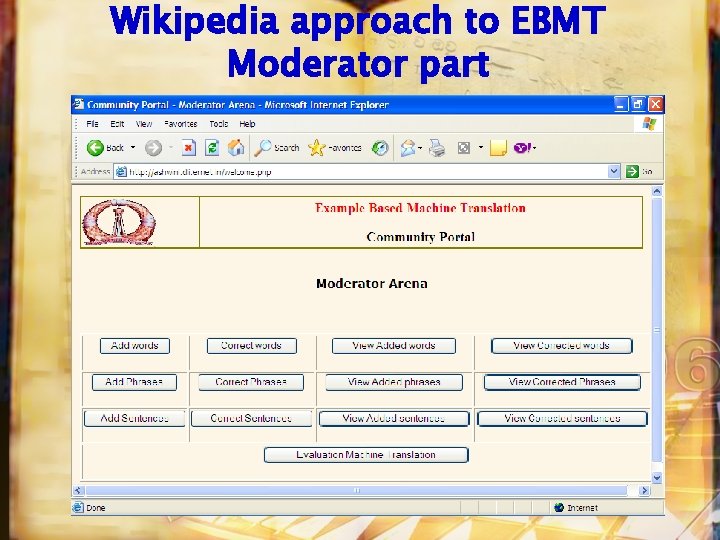

Wikipedia approach to EBMT
• A community portal has been created. Anyone who is willing to donate a word, phrase or sentence can do so through it. Contributors can also evaluate the EBMT translation quality.
• A moderator takes care of the added or corrected words, phrases and sentences, and decides whether to omit an entry or add it to the database.
• The URLs of the community portal are as follows:
• http://ashwini.dli.ernet.in/community/wordfinder.html
• http://ashwini.dli.ernet.in/community/phrasefinder.html
• http://ashwini.dli.ernet.in/community/sentencefinder.html
• http://ashwini.dli.ernet.in/mod.php

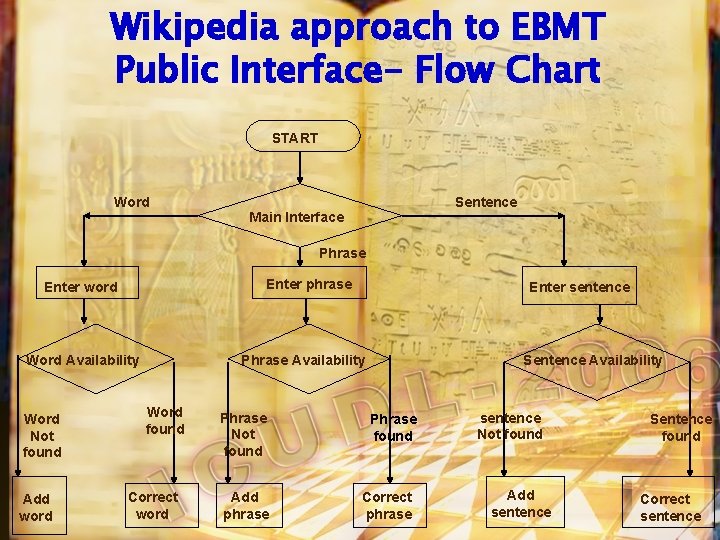

Wikipedia approach to EBMT: Public Interface Flow Chart
START → Main Interface → Word, Phrase or Sentence
• Word: Enter word → Word Availability → Word found: Correct word; Word not found: Add word
• Phrase: Enter phrase → Phrase Availability → Phrase found: Correct phrase; Phrase not found: Add phrase
• Sentence: Enter sentence → Sentence Availability → Sentence found: Correct sentence; Sentence not found: Add sentence

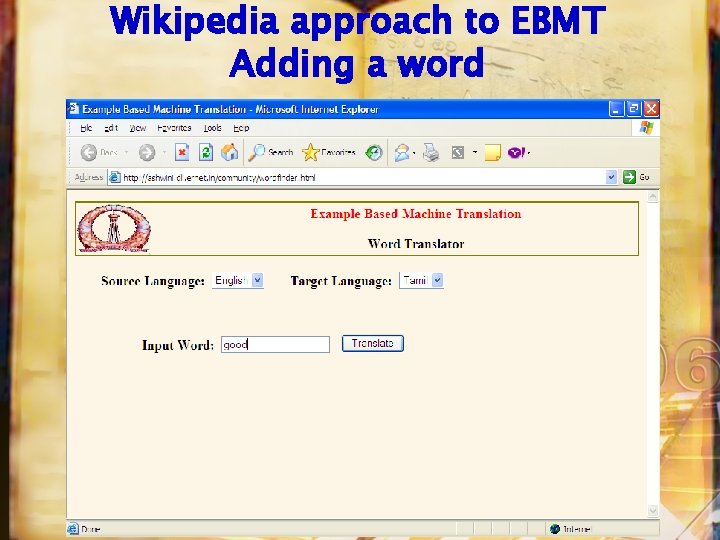

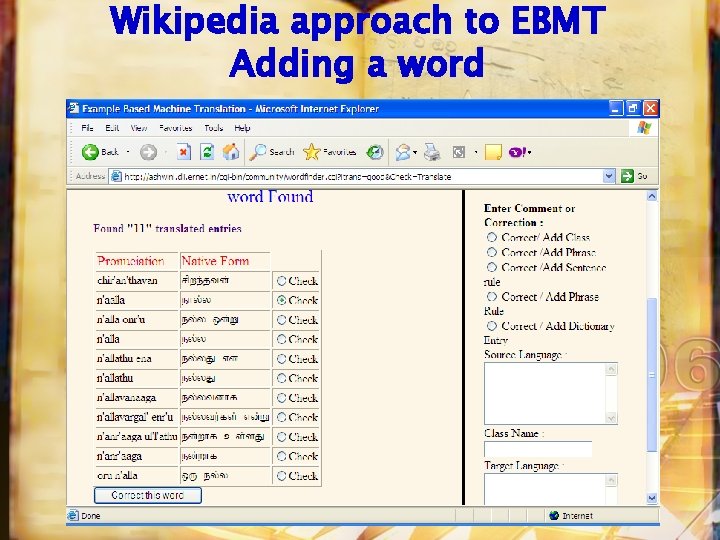

Wikipedia approach to EBMT Adding a word

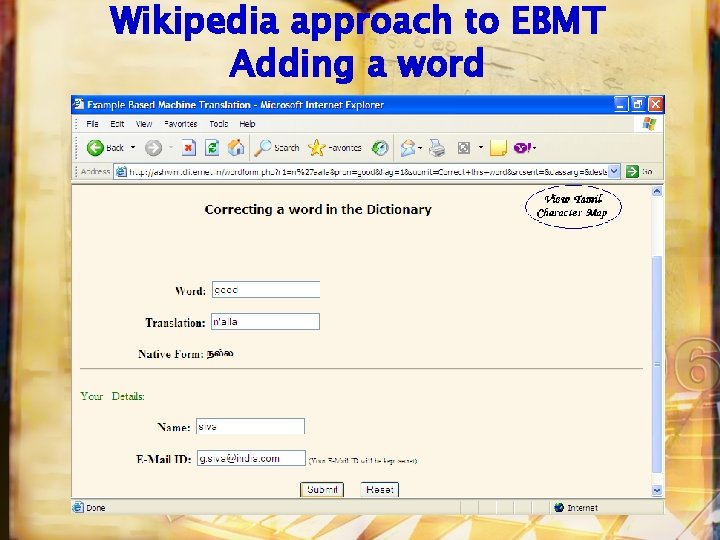

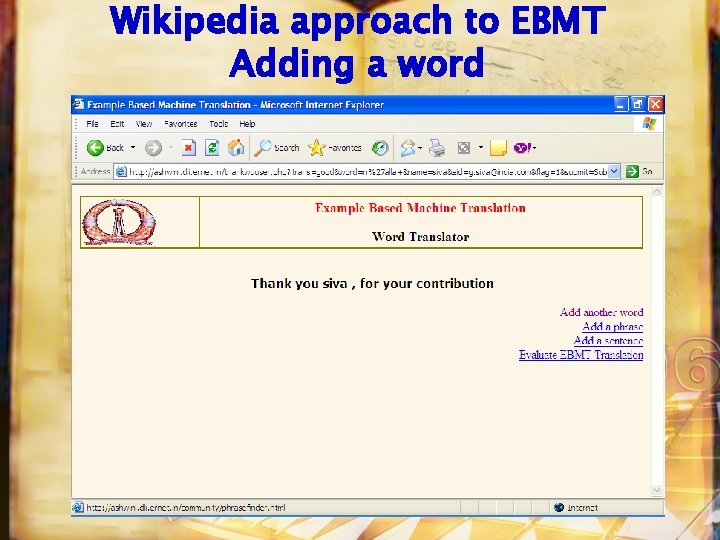

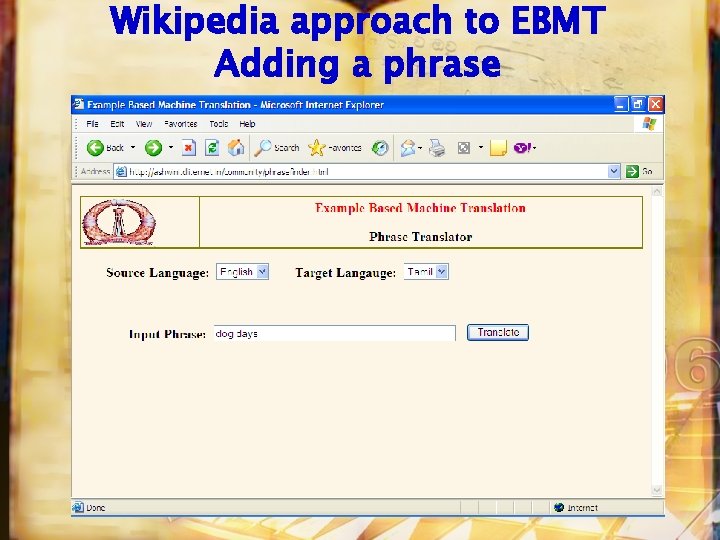

Wikipedia approach to EBMT Adding a phrase

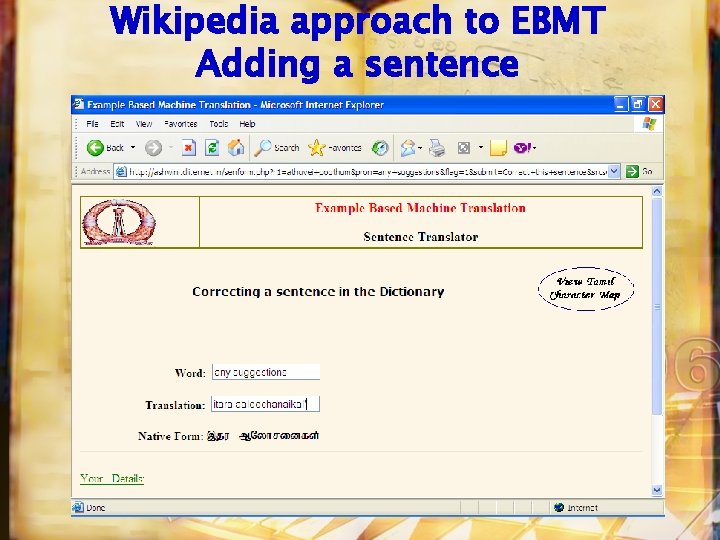

Wikipedia approach to EBMT Adding a sentence

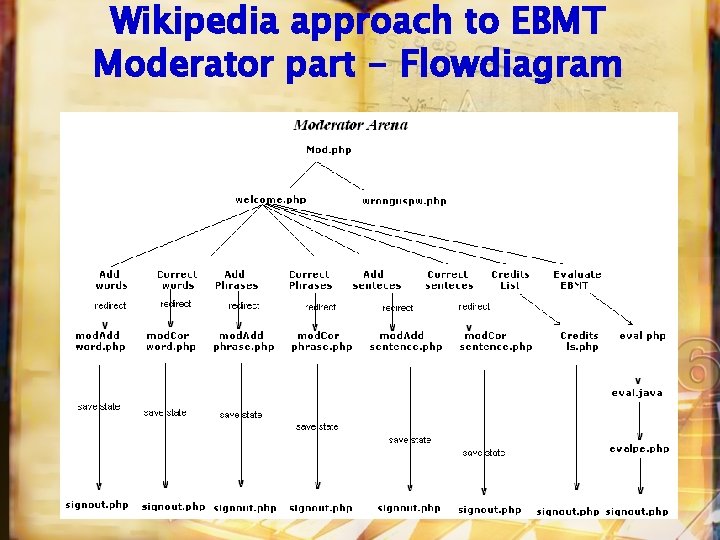

Wikipedia approach to EBMT Moderator part - Flowdiagram

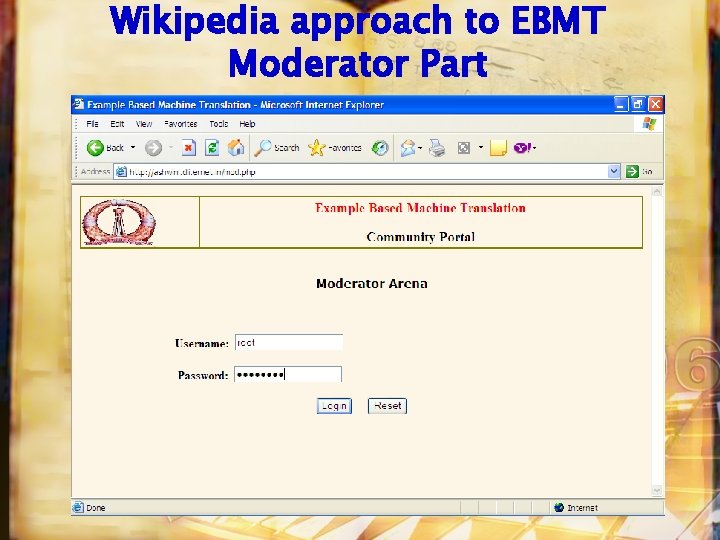

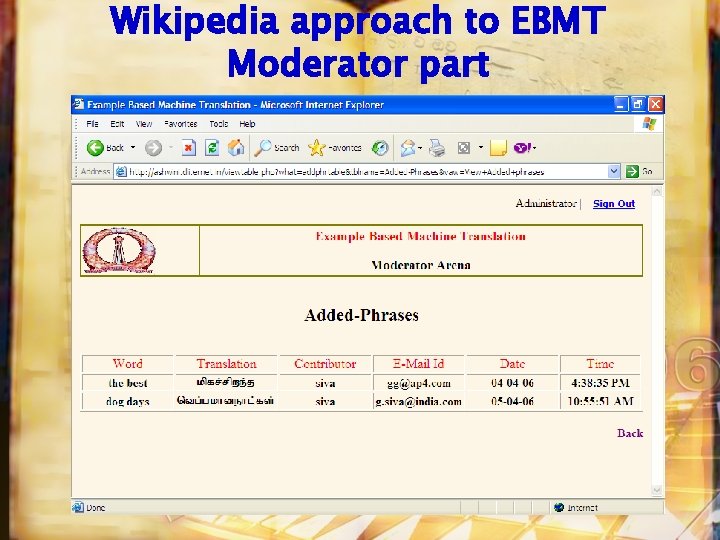

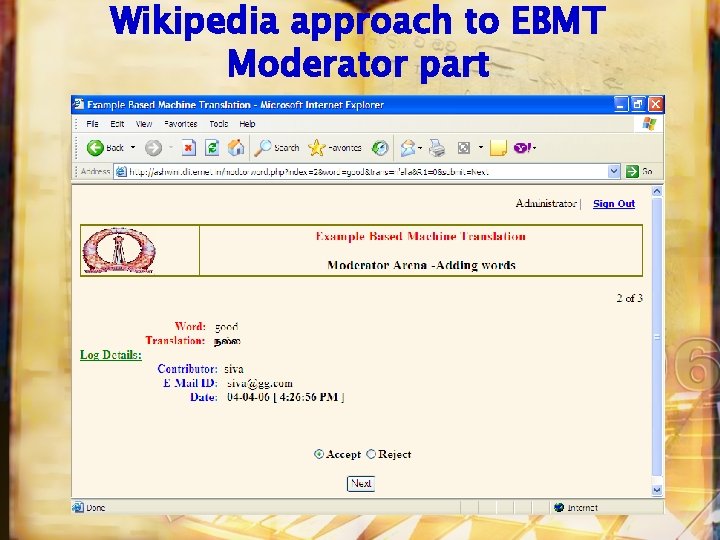

Wikipedia approach to EBMT Moderator Part

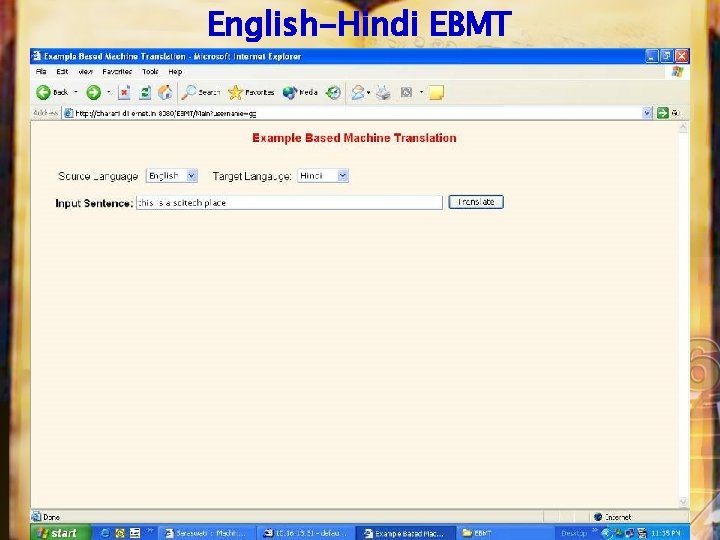

English-Hindi EBMT

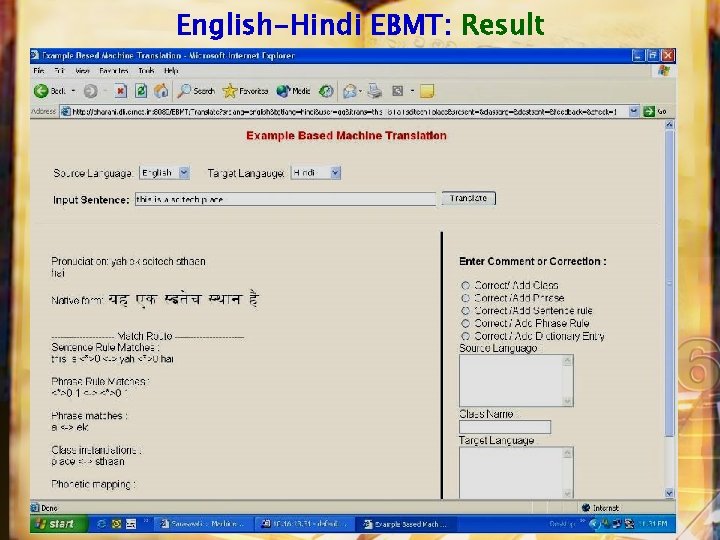

English-Hindi EBMT: Result

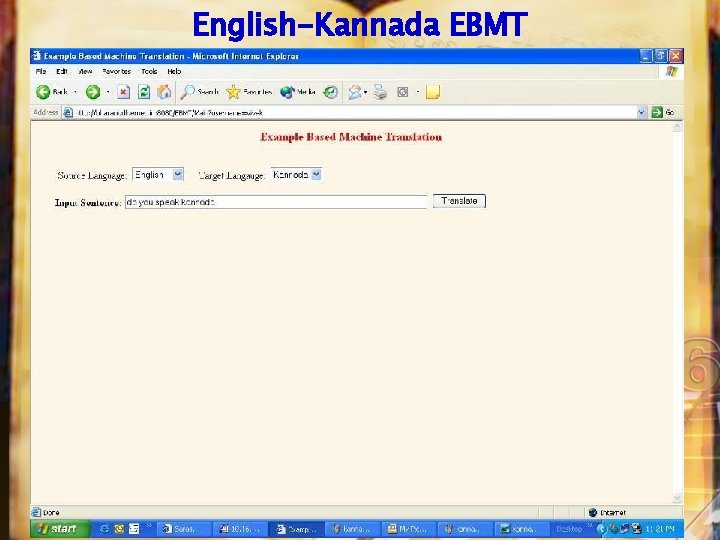

English-Kannada EBMT

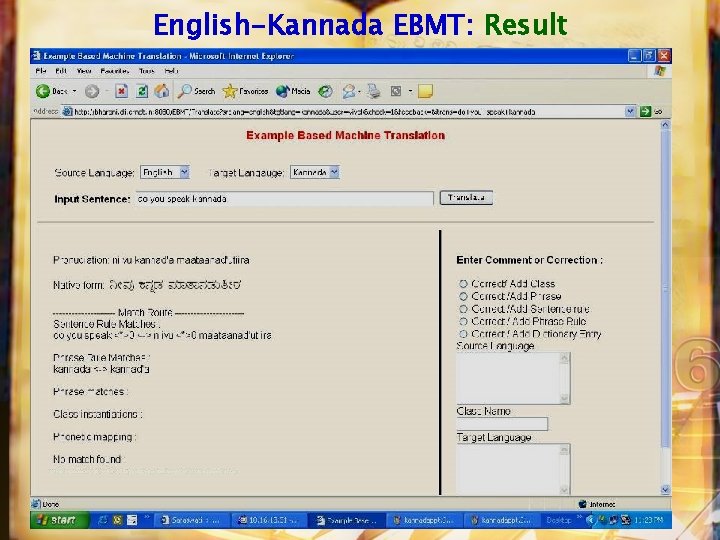

English-Kannada EBMT: Result

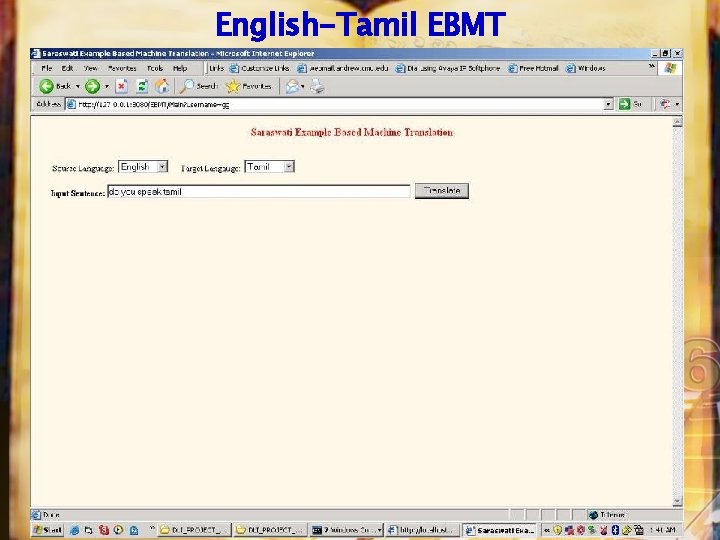

English-Tamil EBMT

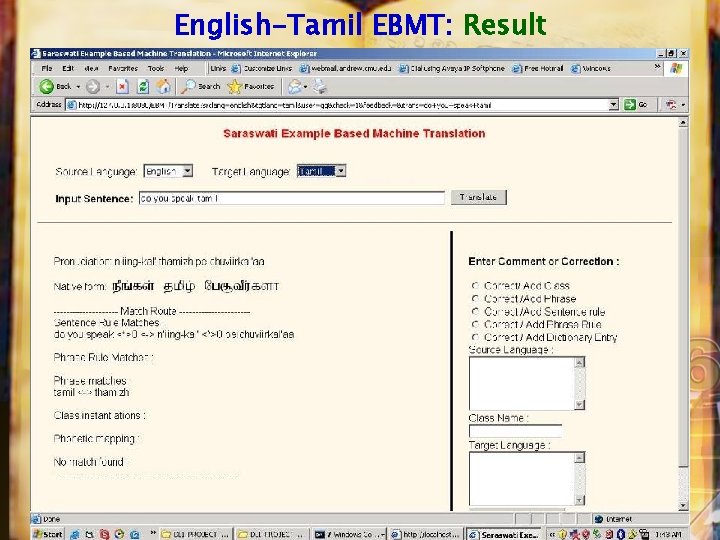

English-Tamil EBMT: Result

Conclusion
• Many problems in Indian languages are looking more and more intractable, like the legendary Indian language OCR!
• What is successful in English and European languages need not be successful for Indian languages
• A whole new way of thinking may be needed
• Overall, UDL will turn out to be a very fertile ground for research: there are many unsolved problems for which we do not even know the directions
• Good Enough Technologies are part of the new way of thinking

The Websites to watch
• http://www.new.dli.ernet.in/
• http://www.dli.ernet.in/
• http://dli.iiit.ac.in/
• http://swati.dli.ernet.in/om
• http://bharani.dli.ernet.in/ebmt/
• http://revati.dli.ernet.in/SearchTamil.html

Acknowledgements • Prof Raj Reddy for his great vision and Guidance • Madhavi, Hemant, Eric, Krishna, Kiran, Srini, Sravan, Sheik, Mini, Rashmi, Pradeepa, Tina, Malar, Anand, Jiju, Ravi, Vamshi, Vivek, Vinodini and Kishore

It happens only in India
